1. Introduction

The segmentation of customers according to their customer lifetime value (CLV) enables companies to adequately build long-term relationships with customers and effectively manage investments into marketing tools. CLV contributes to solving a number of problems such as decisions related to addressing, retaining and acquiring customers, or issues concerning a company’s long-term value [1]. Many different CLV models were devised in recent decades and, at the same time, the development of ICT gave rise to e-commerce, which is a fast-growing retail market in Europe and the USA [2]. An important part of e-commerce is online shopping, which offers retail sales directly to consumers. Companies engaged in e-commerce have high data availability due to the interactions of customers with their websites and other Internet-based services. The high level of competition, especially in online shopping, drives companies to spend their financial resources on marketing activities as efficiently as possible; implementing a CLV model that uses available historical data to estimate customer value can help with this. However, in their effort to introduce CLV as a decision-making basis for marketing management, companies operating an online store face the issue of selecting the appropriate CLV model that would be suitable for their kind of business.

The aim of this article is to empirically compare the predictive ability and quality of selected CLV models used in the online shopping environment on the basis of statistical metrics. In addition, the article has a practically oriented aim: to help companies involved in online shopping make a decision concerning the selection and the application of the selected CLV model. Other recommendations concerning the implementation of a particular model are also introduced. Based on managerial issues, this article proposes one research question:

Which of the compared models for calculating CLV has a good predictive performance of CLV in the non-contractual environment of e-commerce?

Good predictive performance is observed when a model achieves stable, high-quality prediction results across all datasets used, as judged by the evaluation metrics, and outperforms the other models compared in this study. An implicit restriction is the number of different models selected for comparison, see Section 3.1. On the other hand, the selected models represent very different approaches to CLV modeling, as shown below.

There are two main reasons for performing such research. Firstly, there exists a significant number of reviews comparing the theoretical aspects of selected CLV models based on results from secondary sources, e.g., [3,4,5,6,7,8,9,10,11]. However, very few of the comparative papers are directly based on original empirical research that connects the theoretical and the practical level, which means applying given models to a specific area. There are only two examples of this type of comparative paper [12,13]. The situation is evidently unsatisfactory in terms of connecting the theoretical and the practical level. This was the principal reason for the authors to carry out their own wider comparative study of selected CLV models that could be used in the field of e-commerce, including online shopping.

The second reason for performing this study is methodological. The need for an empirical comparison of models arises because CLV research applies, in particular, design science research. The research methodology thus builds on the principles applied in design science [14,15]. Design science designs and investigates artifacts (e.g., models) that can be regularly tested in different contextual conditions, and thus generates a knowledge base that will affect any similar artifacts in the future. This knowledge also helps to theorize in the area [15,16]. Therefore, this article complements artifact design by evaluating and comparing the selected models on real-world data. The need for such research is emphasized both by articles from the marketing area, e.g., [3,11,17,18], and by methodological articles dealing with design science research, e.g., [14,16,19]. Compared with theoretically oriented and review articles, such research brings the following types of benefits:

Better comparable results of the deployment of selected models. Theoretically orientated studies build on secondary sources, most often on the results of the validation of the proposed model in the original article. The problem with studies built on secondary sources rather than comparative empirical research is that the conclusions about the behavior of a model and its comparison with another model are based on the use of entirely different datasets and conditions. This brings up the issue of relevant generalizations based on different results.

New findings for the empirical process of building an information base on individual models. For example, Gupta et al. [11] consider persistence models, e.g., the Vector Autoregressive model (VAR), as very appropriate for CLV calculation; however, they add that there are very few examples using these models because the demands for data are high. Only the introduction of other applications, for example applying a particular model to new datasets, can enhance the debate about the appropriateness or limits of a particular model in comparison to others, and extend it further by a discussion about the areas of usability.

Up to now, larger numbers of selected models have only been compared on a single dataset [12,13], which allows the results to be compared but limits their generalization beyond that dataset. In this regard, the presented article is unique because it offers a comparison of the models on six large datasets of selected online stores. The EP/NBD and MC models selected for this study are based on different approaches to modeling. According to [11], these models are classified as probability and econometric approaches to modeling CLV. Furthermore, compared to the studies [12,13], this article offers a different view based on the non-contractual relation typical of online shopping as a part of e-commerce business.

This article connects the theoretical and the practical level by discussing the results acquired from the comparative analysis, which can help companies arrive at a decision on selecting the CLV model suitable for their online shopping conditions, and implement that model. The reliability of the selected CLV models is empirically compared using six datasets from medium to large Czech and Slovak online stores. The results of the comparison are used as the basis for a discussion of individual models, their suitability, and managerial and implementation aspects in the sense of their robustness and accuracy of prediction. As a result, companies running medium- and large-sized e-commerce will not need to carry out their own extensive experiments with different CLV models. That was also the practical motivation for this article because the online shopping industry lacks more extensive comparative analyses of CLV models carried out on up-to-date empirical data using more than one dataset.

The article is structured in the following way: Section 2 introduces the theoretical basis of CLV. An explorative analysis of the datasets used, as well as the method of carrying out a comparative analysis of selected CLV models, can be found in Section 3. This section also mentions the selection of CLV models for comparison and an examination of relevant literature concerning the selected models. The results obtained from the comparative analysis are presented in Section 4 and then discussed in Section 5.

2. Background

Customers are central to all marketing activities of a company because not only do they generate income, but they increase the company’s market value as well. Marketing emphasizes the interconnection of all processes and activities that create, communicate and provide values for customers, including customer relationship management [20].

In the past two decades, the field of customer relationship management (CRM) went through a significant transformation thanks to information and communication technologies (mainly database and analytical technology). When analyzing customer feedback, companies no longer have to rely only on the aggregated results of quantitative and qualitative research (e.g., questionnaires, focus groups), but they can use their own customer data and concentrate on selected groups or individual customers. This was achieved thanks to the new possibilities of storing and processing available data about individual customers.

This progress enabled a departure from the established patterns, such as brand equity, transaction and product centricity, and a shift towards a customer-centric approach in relationship management [21,22], in which the customer is a valuable intangible asset of the company [23,24,25,26]. The aim of CRM activities is mainly to retain current customers, build a long-term relationship, and gain new customers [27]. The CLV approach plays an important role in that process, as it enables companies to segment customers and identify those who bring the company the largest profit over time [28]. Concurrently, it makes it possible to choose suitable strategies for activities within the company’s CRM.

There are a number of slightly different definitions of CLV; see the comparison of definitions in articles [7,29,30]. A generally accepted definition of CLV is the present value of future net cash flows [31] associated with a particular customer [22].
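As an illustration only, the definition above is commonly written in the following form. The symbols are introduced here and are not taken from the article: $CF_{i,t}$ is the net cash flow from customer $i$ in period $t$, $d$ is an assumed constant per-period discount rate, and $T$ is an assumed planning horizon.

$$\mathrm{CLV}_i = \sum_{t=1}^{T} \frac{CF_{i,t}}{(1+d)^{t}}$$

For example, a customer expected to bring a net cash flow of 10 in each of two periods, discounted at $d = 0.1$, would have $\mathrm{CLV} = 10/1.1 + 10/1.21 \approx 17.36$.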

Companies also use other indicators, such as Customer Profitability (CP). CP refers to the revenues, minus the costs connected to maintaining a mutual relationship, generated during a selected period [31,32,33]. In other words, this is a contemporary or retrospective view [3] as opposed to CLV, which offers a look ahead. For this reason, the use of CLV is more suitable (better than the historical CP analysis) for strategic and tactical marketing planning [3,7,32,34].

The CLV approach forms a bridge between marketing and financial metrics, which means that marketing activities are always related to financial metrics, allowing space for optimization and management [35]. CLV shows the way in which (changes in) customer behavior (e.g., increased purchase, retention) can influence future profitability [6]. The relevancy of CLV applications is leveraged mainly by customer behavior impacting retention [36], customer-level attributes impacting customer loyalty (e.g., age and gender) [37], and national cultural dimensions affecting the drivers of purchase, frequency and contribution margin [17]. All of these (and other) components used for appropriate CLV models with available data constitute both direct and indirect influences on CLV calculations. The main researched applications of CLV are aimed at the business-to-consumer context while the business-to-business applications are focused on customer asset management [38].

Closely connected to CLV is the Customer Equity (CE) indicator, which is used mainly for calculating a company’s long-term value. It is usually defined as the sum of the CLV of all current customers of the company [22], or it can be the sum of the CLV of all current and potential customers [39,40,41,42]. In this article, CE will be understood according to [22] above, but for the sake of completeness, it should be added that earlier articles, in particular, understand CE also as the average CLV minus acquisition costs [43,44]. Unlike CLV, this definition takes acquisition costs into account [7].

The past three decades saw the introduction of a vast number of different models and approaches to calculating CLV designed for various types of companies, businesses or chosen management views. One of the possible and often mentioned divisions of CLV models according to the customer-company relationship is into contractual relations (lost for good, retention), semi-contractual relations and non-contractual relations (always a share, migration) [7].

Only two studies were found in the Web of Science that empirically compare a greater number of selected models for the calculation of CLV, that is, that compare the predictive capabilities of selected CLV models on a single dataset on the basis of statistical metrics. Donkers et al. [12] analyzed a dataset from an insurance company with contractual settings and concluded that simple profit regression models achieve the best performance. Batislam et al. [13] used a dataset from a grocery retailer repeatedly focusing on store cards and their usage as the drivers of higher purchase frequency by customers. The results confirm the better performance of their own modified Beta Geometric/NBD model (BG/NBD) customized to the specified business settings in comparison with Pareto/NBD and original BG/NBD models. It can be stated that even simple models achieve excellent prediction results despite the more complex models being expected to capture the depth of relationship developments better. Similarly, it can be expected that modified models or those designed for specific conditions and environment will produce better predictions in relevant cases than more complex, universally applicable models (achieving consistently good results in various situations).

This article focuses on non-contractual relations typical for e-commerce companies engaged in online shopping. Such companies usually have at their disposal an extensive database concerning their customers, which they use for internal purposes (e.g., financial management, marketing). This kind of online retail market, focusing on selling to end customers, has been growing continuously and it can thus be expected that the number of Internet-based services such as online stores will increase. The same applies to the competitive pressure put on them. In Europe alone, estimated total online sales grew by 15.4% in 2016 compared to 2015, reaching €530 billion [45], with much room for further growth as only 18% of companies are selling online. Every online shopper in Europe was expected to spend $1330 in 2015 compared to $1816 in the USA [45]; for a comparison see also other surveys [45,46]. The focus on e-commerce companies engaged in online shopping is therefore very topical in both the local and the global context.

3. Methodology and Data Collection

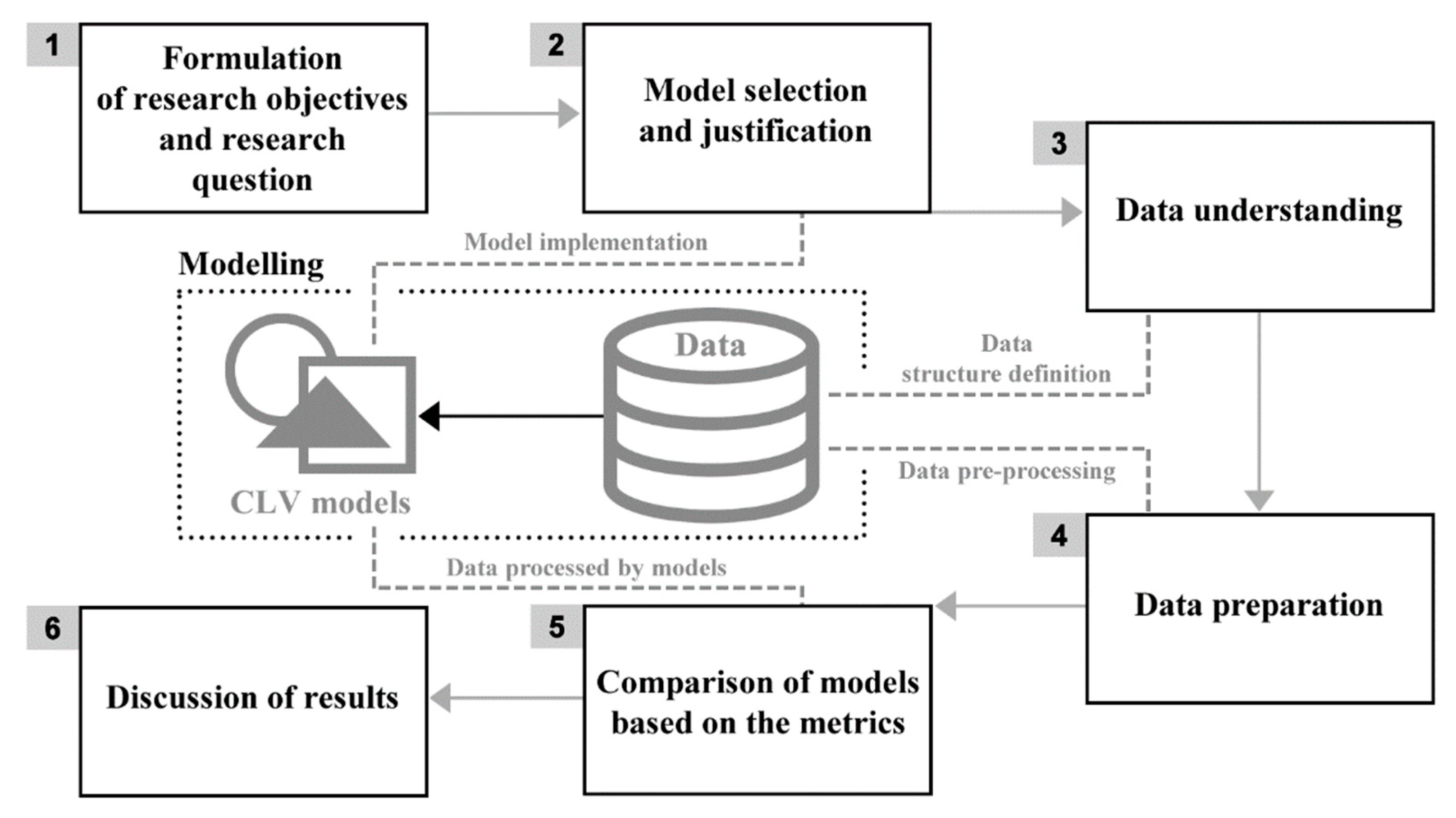

The research methodology consists of six phases, which are illustrated in Figure 1. The initial phase of the research set the research objectives and formulated the research question. It also justified the need and appropriateness of the proposed research, see Section 1 and Section 2. The second phase included the selection and justification of choice of CLV models suitable for use by e-commerce companies engaged in online shopping. In this stage, the implementation of the selected models was also performed according to the described models set out in Section 3.1. In the third phase, data requirements were defined, based on the selected models. On this basis, it was possible to determine what data, in what form and for what period would be needed in order to perform the research, see Section 3.2. In the fourth phase, data was collected from various e-commerce companies in the required structure. Further, the acquired datasets that met the specified requirements were pre-processed for the needs of the individual models. The data pre-processing is described in Section 3.2. Section 3.3 describes the datasets from various e-commerce companies.

The fifth phase of the research compared the selected CLV models based on statistical metrics listed in Section 3.4. This section also describes how to perform the comparison, including the definition of a training and testing period. In the last, sixth phase of the research, the research question is answered first in Section 4, and then the obtained results are subjected to a wider discussion including relevant managerial implications in Section 5.

3.2. Data Collection and Pre-Processing

The models selected in Section 3.1 require specific data features and structure. To perform a successful comparison, a definition of required data was published in a publicly available call for data. Several medium- and large-sized online stores from the Czech Republic and Slovakia were asked to participate in this research and provide the data. The minimal data according to these requirements included purchase-level identification of the customer, date, purchase status, purchase delivery country and region information in the format of a postcode, purchase marketing source, revenue, shipping costs, item quantity and net profit, along with a unique purchase identifier. These requirements included an anonymization of all identifiers and values in order not to disclose any personally identifiable information. A minimum purchase range of 2 years and thousands of unique customers was required. Only six of the eleven datasets obtained met the criteria for the comparative analysis.

Data pre-processing included (i) descriptive analysis, (ii) data cleaning and (iii) selection of a feature subset from the datasets for individual models. On the basis of the output of the descriptive analysis and in cooperation with the given e-commerce company, the identified outliers were removed. All datasets were cleaned to include only purchases from the same country as determined by the most frequent common country. Individual datasets were trimmed to whole weeks at the beginning and the end because the week is a suitable basic unit for the prediction that can be used by all models. All datasets were aggregated on week level with aggregation details described below. From these data, the datasets for individual models were then created for comparison. For the MC and EP/NBD models, it was necessary to determine the recency and frequency of orders so that both models had identical default data. In the case of the MC model, it was necessary to determine the profitability drivers common to all available datasets. The focus was on the region, time since last purchase, marketing traffic sources, average day of order, month of order, order rank, and delivery price.
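The week trimming and the derivation of recency and frequency described above can be sketched as follows. This is a minimal illustration under stated assumptions, not the authors' implementation: the tuple layout `(customer_id, purchase_date, profit)` is hypothetical, the data range is inferred from the earliest and latest purchase dates, frequency is taken as the number of distinct weeks with a purchase, and recency as the number of weeks between the last purchase week and the end of the data.

```python
from datetime import date, timedelta


def monday_of(d):
    """Return the Monday of the week that contains date d."""
    return d - timedelta(days=d.weekday())


def trim_to_whole_weeks(purchases):
    """Drop purchases that fall into an incomplete first or last week.

    purchases: list of (customer_id, purchase_date, profit) tuples (assumed layout).
    Returns (kept_purchases, first_monday, last_sunday).
    """
    first = min(p[1] for p in purchases)
    last = max(p[1] for p in purchases)
    # First whole week starts on the first Monday on or after the earliest date.
    start = first if first.weekday() == 0 else monday_of(first) + timedelta(days=7)
    # Last whole week ends on the last Sunday on or before the latest date.
    end = last if last.weekday() == 6 else monday_of(last) - timedelta(days=1)
    kept = [p for p in purchases if start <= p[1] <= end]
    return kept, start, end


def week_number(d, start):
    """1-based week index counted from the first whole week."""
    return (monday_of(d) - start).days // 7 + 1


def recency_frequency(purchases, start, end):
    """Return {customer_id: (recency_in_weeks, frequency_in_weeks)} (assumed definitions)."""
    n_weeks = ((end - start).days + 1) // 7
    weeks = {}
    for customer_id, d, _profit in purchases:
        weeks.setdefault(customer_id, set()).add(week_number(d, start))
    return {c: (n_weeks - max(w), len(w)) for c, w in weeks.items()}
```

Other definitions of recency and frequency are possible; the point of the sketch is only that both are computed once, on the week level, so that every model receives identical inputs.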

The selected models were ordered by the number of features considered as input: Status Quo, EP/NBD and MC, where all models share a minimal dataset, and the last uses additional data from the complete processed dataset. Table 1 presents the dataset by showing randomly selected rows. Letters A–F are used to denote individual online store datasets. Records are based on each customer’s weekly purchases, where the customer identifier customer_id is unique to each dataset, and weeks (shown as week_number) correspond to the dataset’s data range, counting from number 1. Monday_date characterises a specific week. Profit_EUR is the amount of gross profit attributed to these purchases. As several purchases could be made during one week, avg_purchase_day gives the average of the individual transaction weekdays (1 = Monday). Traffic sources are attributed to the most frequent or first source within the week: channel_poe stands for the paid (P), owned (O) and earned (E) media categorization according to [65], while channel_type and medium_source give detailed information about the traffic source. All other purchase information is aggregated: item_quantity is the quantity of all products purchased, transaction_shipping is the amount spent on shipping, and transaction_revenue_EUR is the total amount spent by a customer including VAT. Regional information about the purchase delivery address was compressed into zip_firstchar, the first character of the postcode. There are only ten unique postcode first characters in the Czech Republic and Slovakia, so this categorical variable provides sufficient detail for the decision tree in the MC model.
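The weekly aggregation of one customer's transactions into a single record can be sketched as below. The output keys follow the column names described above; the input keys (`date`, `profit_eur`, `channel_type`, `item_quantity`, `postcode`) are hypothetical, ties between equally frequent traffic sources are resolved in favour of the first one seen, and the postcode is taken from the first transaction of the week. These choices are assumptions made for illustration.

```python
from collections import Counter
from datetime import date


def aggregate_week(transactions):
    """Collapse one customer's transactions from a single week into one record.

    transactions: non-empty list of dicts in chronological order (assumed layout).
    """
    weekdays = [t["date"].isoweekday() for t in transactions]  # 1 = Monday
    channels = Counter(t["channel_type"] for t in transactions)
    return {
        "Profit_EUR": sum(t["profit_eur"] for t in transactions),
        "avg_purchase_day": sum(weekdays) / len(weekdays),
        # Most frequent source; on a tie the first source encountered wins.
        "channel_type": channels.most_common(1)[0][0],
        "item_quantity": sum(t["item_quantity"] for t in transactions),
        # Assumption: the delivery region of the first purchase represents the week.
        "zip_firstchar": transactions[0]["postcode"][0],
    }
```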

To summarize the above, the models use the same minimal dataset and the same data concerning recency and frequency of orders. The MC model also uses additional data required as input parameters. All of the selected models also have the same output, i.e., purpose, despite different calculation methods. This purpose is calculating customer lifetime value. Another unifying element of the comparative analysis is using the same evaluation metrics when comparing all outputs from the models with reality. On this basis, this paper can be considered a relevant comparative analysis.

3.3. Description of Datasets

Six datasets that met the criteria defined in Section 3.2 are analyzed in this section. The required data concerning the total number of customers available can be seen in Table 2. In some cases, data for the entire time of the online shop’s existence were available, while in other cases they were not; compare Table 2 with the detailed online store information below. The datasets used for this analysis are very recent, covering the years between 2008 and 2016 and ranging from 151 to 381 weeks of data among the online stores. The next part briefly summarises the business verticals of the datasets. All these companies agreed to participate in this research on the condition of anonymity and with a prohibition on passing the dataset to any third party.

Aggregate results of the descriptive analysis are given in Table 2, which provides summary information about the individual datasets of the online stores presented.

The company operating online store A focuses on the narrow area of games of all sorts, including board games. In addition to the online shop, they also have several stores in the Czech Republic. Revenues during the year are dominated by a strong Christmas season. In their marketing activities, they target mainly younger customers. The current (2016) total number of customers is nearly 15,000, and altogether they have made almost 20,000 orders.

The company running online store B focuses on sports equipment. In its field, this store is among the biggest in the Czech Republic. Apart from the online shop, they have several stores as well as activities outside the Czech Republic. Sales are again dominated by the Christmas season and several other times of the year. Annual revenues are in the order of tens of millions of euros. They currently (2016) have over 90,000 customers and 136,000 orders in the online store alone.

The company operating online store C focuses on health products. Apart from their online shop they also run a store in Slovakia. They have a strong year-over-year sales growth. At present (2016) they have over 50,000 customers, and nearly 110,000 orders made via the online store.

The company operating online store D focuses primarily on winter and adrenaline sports. They run a store complementary to the online shop. In their marketing activities, they target younger customers. They have a steady year-over-year sales growth, with annual sales in the higher millions of euros. They have over 73,000 customers at present and almost 120,000 orders.

Online store E focuses on erotic and health accessories. It is one of the biggest erotic shops with a strong brand in the Czech Republic. Apart from the online shop, they also run stores. They have a well-functioning community and higher millions of euros in their annual revenues. They have 43,000 customers at present (2016), and nearly 63,000 orders made via the online store.

The last company, online store F, focuses on health and beauty products, especially cosmetics. They have several branches used mainly for goods delivery. The company has experienced strong year-over-year growth since 2007, with ongoing expansion also to other European countries. Due to the product portfolio, the company is impacted by a strong Christmas season. With almost 800,000 customers and 2.4 million orders in the last six years, this is the largest of our datasets.

3.4. Evaluation Metrics

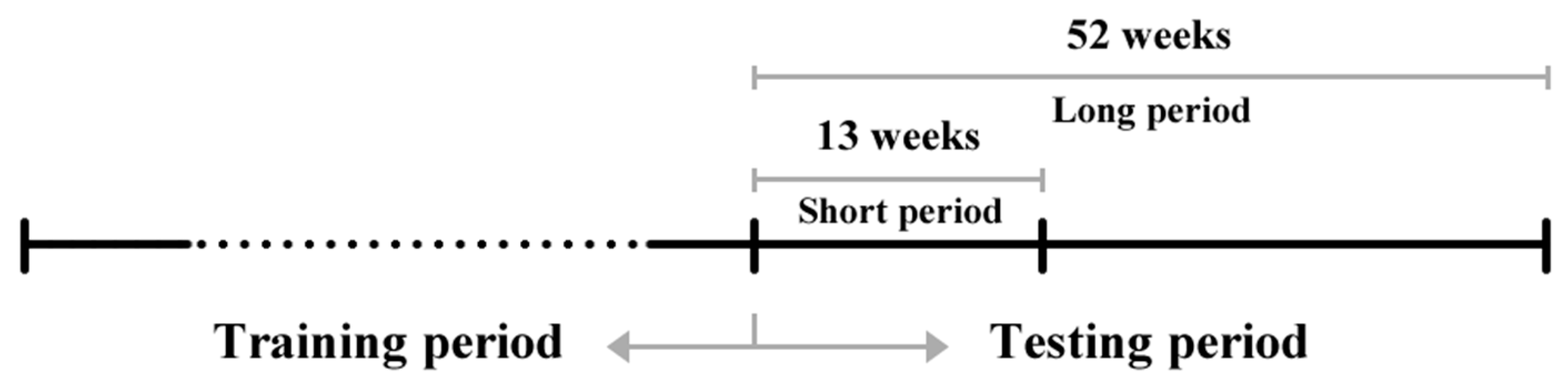

After the pre-processing and data exploration, the following procedure was established for the comparison of the individual models. First, the training and testing periods to carry out the prediction were defined. Two testing periods were selected for the prediction: long (52 weeks) and short (13 weeks), see Figure 2. In order to make the most of the available data, the last 52 weeks from each dataset were separated, and the data were used for the evaluation of the models’ prediction performance in both long and short testing periods. The remaining data (the total number of weeks without the final 52 weeks) serve as the training period, whose length differs between datasets.

Data in the testing period were then cleansed of newcomer customers, as the prediction was compared with reality only for the customers who were acquired before the testing period. Short- and long-term comparisons were made from two perspectives, at the individual and at the aggregated customer base level, in accordance with [12].
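The split into training and testing periods, including the removal of newcomer customers, can be sketched as follows. The record layout `(customer_id, week_number, profit)` is hypothetical, and the sketch assumes that the short testing period consists of the first 13 weeks of the 52-week holdout; the text and Figure 2 are the authority on the actual layout.

```python
def split_periods(records, n_weeks, long_len=52, short_len=13):
    """Split weekly records into training, short-test and long-test sets.

    records: list of (customer_id, week_number, profit) with 1-based weeks (assumed layout).
    n_weeks: total number of weeks in the dataset.
    """
    train_end = n_weeks - long_len
    train = [r for r in records if r[1] <= train_end]
    # Only customers acquired before the testing period are evaluated.
    known = {r[0] for r in train}
    test_long = [r for r in records if r[1] > train_end and r[0] in known]
    # Assumption: the short period is the first `short_len` weeks of the holdout.
    test_short = [r for r in test_long if r[1] <= train_end + short_len]
    return train, test_short, test_long
```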

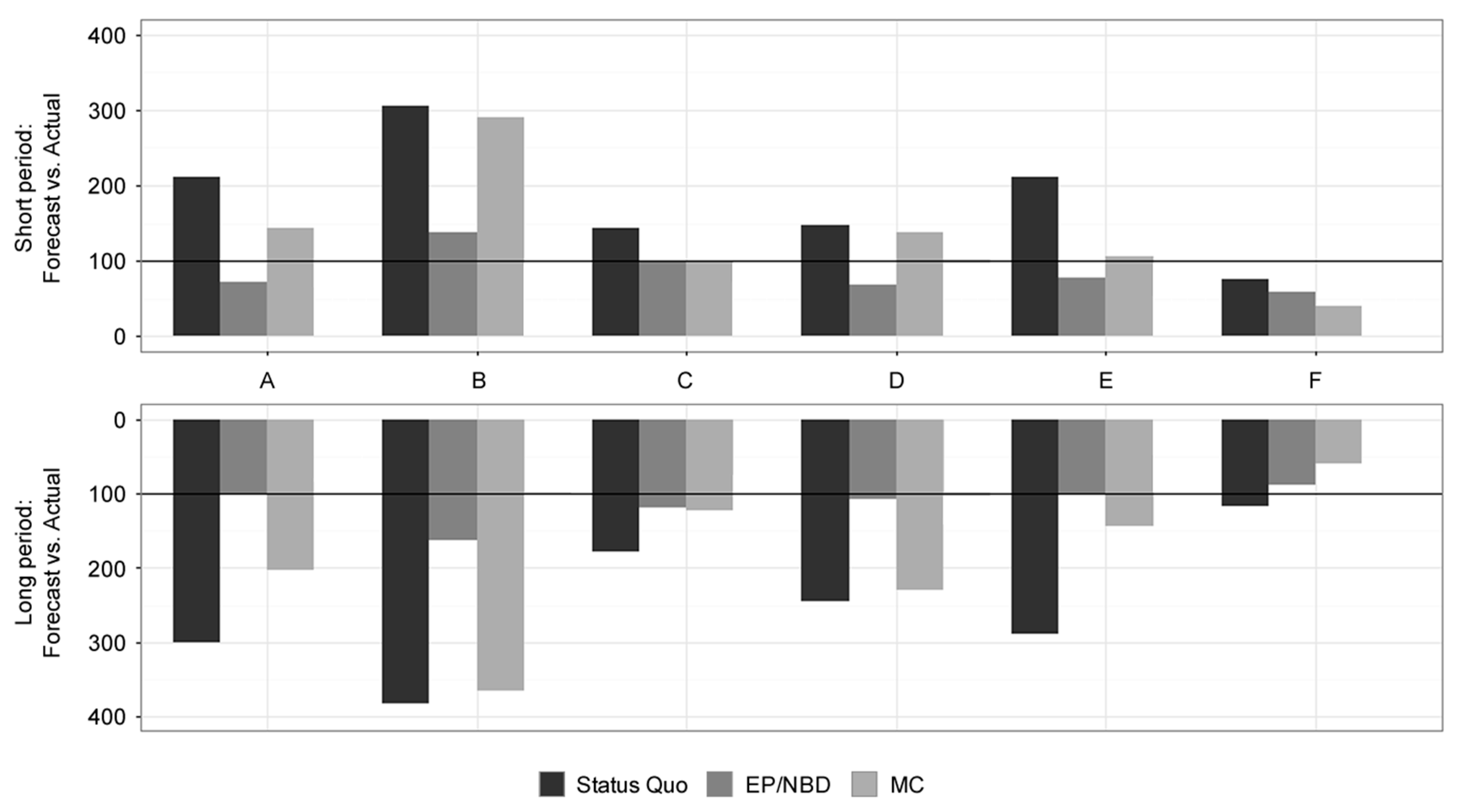

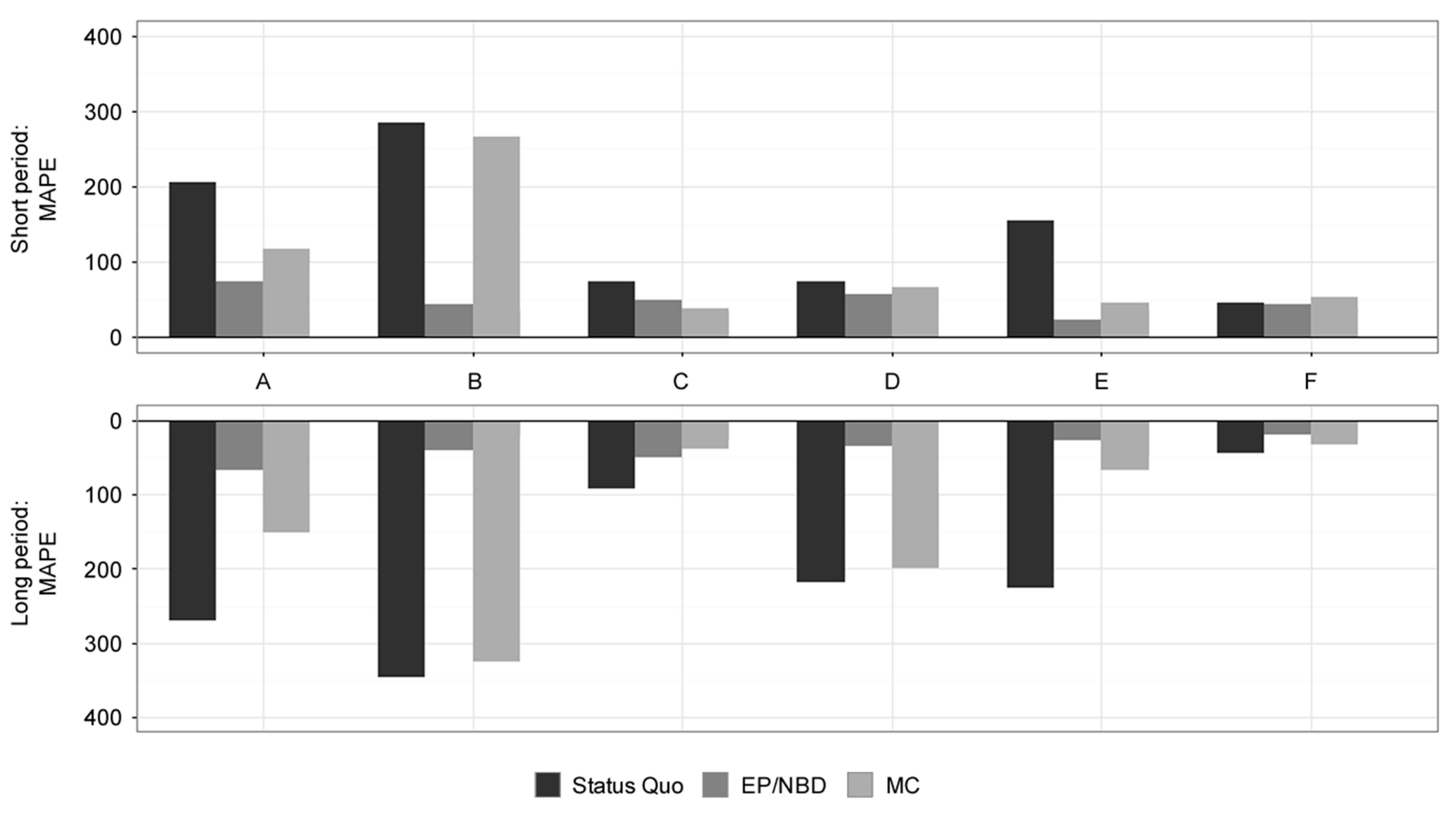

The customer base level offers an overall perspective and compares the real situation with the prediction of CLV for all the customers together. The performance of the models for the whole short and long periods (Forecast vs Actual metric) was evaluated, and the weekly model performance was also assessed using the mean absolute percentage error (MAPE).

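The Forecast vs Actual metric is defined as

$$\text{Forecast vs Actual} = \frac{\sum_{t=p}^{h} F_t}{\sum_{t=p}^{h} A_t}$$

where $A_t$ is the sum of actual profits over all customers in time $t$, $F_t$ is the sum of forecast profits over all customers in time $t$; $p$ is the threshold of the prediction and $h$ is its horizon.

MAPE also compares the forecasts with actual values and uses the formula

$$\text{MAPE} = \frac{1}{h - p + 1} \sum_{t=p}^{h} \left| \frac{A_t - F_t}{A_t} \right|$$

where $(h - p + 1)$ is the length of the testing period.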
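The two customer-base metrics can be sketched in a few lines. The sketch assumes that Forecast vs Actual is the ratio of the summed weekly forecasts to the summed weekly actual profits over the testing period (consistent with results being reported as a percentage of actual profit) and that MAPE averages the weekly absolute percentage errors; the function and argument names are illustrative.

```python
def forecast_vs_actual(actual, forecast):
    """Ratio of total forecast profit to total actual profit over the testing weeks."""
    return sum(forecast) / sum(actual)


def mape(actual, forecast):
    """Mean absolute percentage error over the testing weeks.

    Requires every weekly actual value to be non-zero.
    """
    errors = [abs(a - f) / abs(a) for a, f in zip(actual, forecast)]
    return sum(errors) / len(errors)
```

A value of `forecast_vs_actual` below 1 indicates undervaluation of the customer base and a value above 1 indicates overestimation. Weekly errors of opposite sign can cancel out in this ratio but not in MAPE, which is why the two metrics can rank models differently.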

Another perspective compares the prediction at individual customer level. The original intention was to construct the MAPE metric as well; however, this metric would use the actual value of profit in the denominator, which is often equal to zero (for customers who did not make any purchase during the testing period).

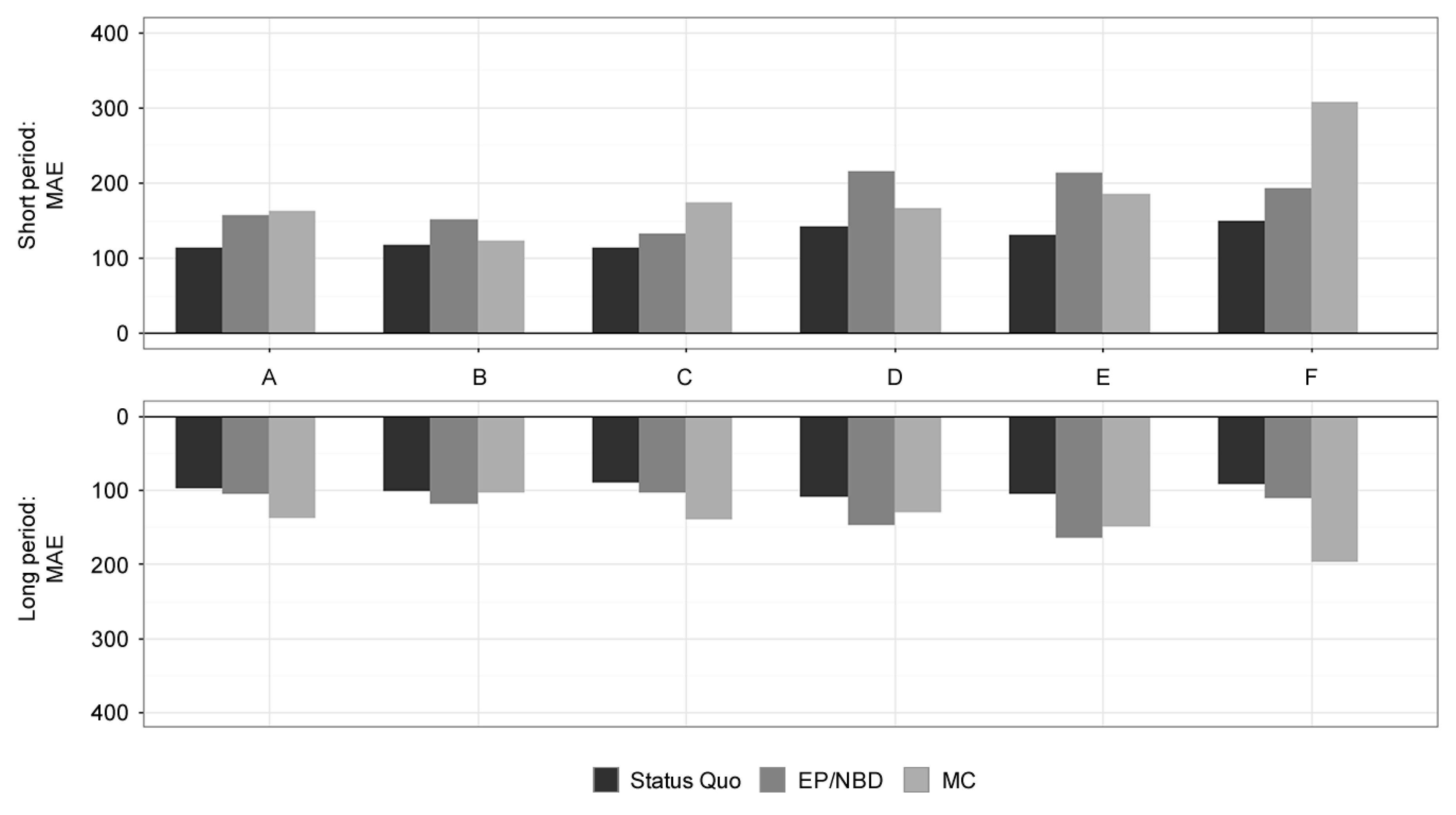

Therefore, the mean absolute error (MAE) was used instead, which is defined as

$$\text{MAE} = \frac{1}{n} \sum_{i=1}^{n} \left| A_i - F_i \right|$$

where $A_i$ is the sum of actual profits from the $i$-th customer over the whole testing period, $F_i$ is the sum of forecasted profits from the $i$-th customer over the entire testing period, and $n$ is the number of customers.
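A minimal sketch of the customer-level MAE follows, assuming two equally long sequences of per-customer actual and forecast profits (the names are illustrative). Unlike a percentage error, it remains defined for customers whose actual profit in the testing period is zero.

```python
def mae(actual, forecast):
    """Mean absolute error between per-customer actual and forecast profits."""
    return sum(abs(a - f) for a, f in zip(actual, forecast)) / len(actual)


# Two of the four customers made no purchase in the testing period;
# a percentage error would divide by zero for them, MAE does not.
example = mae([0.0, 10.0, 0.0, 30.0], [2.0, 8.0, 0.0, 20.0])
```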

Although MAE in the percentage of the average actual profit would be preferable, it seemed reasonable to use the same metric as [

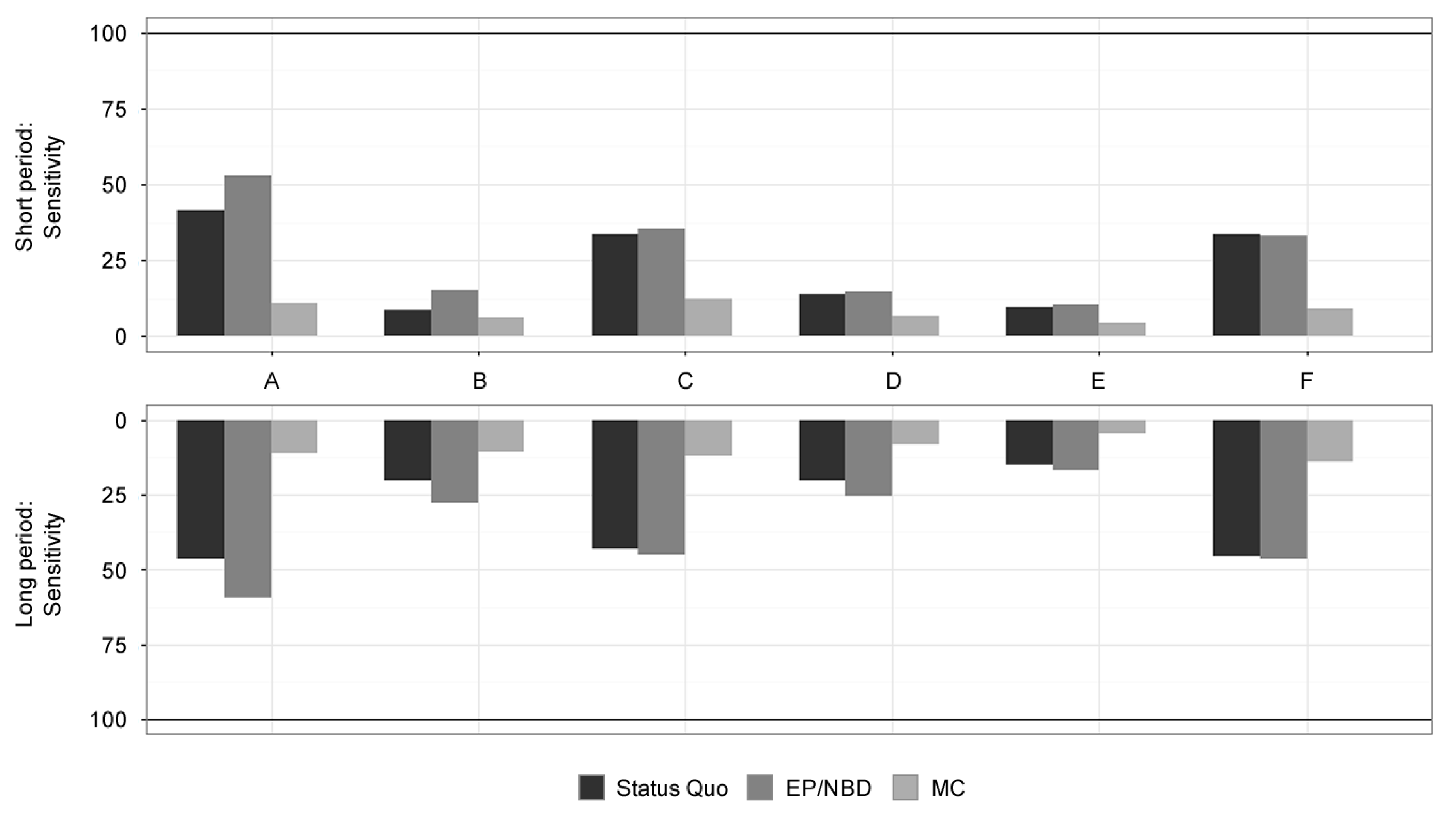

12] for the sake of comparison: MAE in the percentage of average CLV. The reason for this decision is the possibility of broader theorizing about the results of various studies in case the researchers used the same statistical or evaluation metrics. The success rate of selecting the most profitable customers was also evaluated. Using the individual models, 10% of the most profitable customers were selected in the short and the long period and compared with the actual top 10%. To compare the performance of this classification, sensitivity (sometimes called true positive rate or recall) is computed as

where

TP (true positives) is the number of customers assigned correctly to the top 10% class, and

FN (false negatives) is the number of customers that were not assigned to the top 10% by the CLV calculation but which actually belonged to this class.
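The top-10% selection and its sensitivity can be sketched as below, assuming sensitivity is TP / (TP + FN) as the definitions of TP and FN suggest. The dictionaries map a hypothetical customer identifier to actual or predicted profit; the rounding of the class size and the handling of ties (input order) are assumptions of this sketch.

```python
def top_share(values, share=0.10):
    """Return the set of customer ids with the highest values.

    values: dict mapping customer_id -> profit. Ties keep input order (assumption).
    """
    k = max(1, round(len(values) * share))
    ranked = sorted(values, key=lambda c: values[c], reverse=True)
    return set(ranked[:k])


def sensitivity(actual, forecast, share=0.10):
    """Share of the actual top customers that the forecast also places in the top class."""
    actual_top = top_share(actual, share)
    predicted_top = top_share(forecast, share)
    tp = len(actual_top & predicted_top)   # correctly assigned to the top class
    fn = len(actual_top - predicted_top)   # actual top customers that were missed
    return tp / (tp + fn)
```

Because both classes have the same size, a random selection would be expected to reach a sensitivity of about 10%, which gives a natural baseline for reading the results.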

4. Results

This article aims to empirically compare the predictive ability and quality of selected CLV models on the basis of statistical metrics. This part presents the results of each model for every dataset by the selected performance metrics of Forecast vs Actual, MAE on a customer level, MAPE on a weekly basis, and sensitivity for identifying 10% of the most profitable customers. All the results are compared both for a short-term prediction period of 13 weeks and a long-term prediction of 52 weeks of calculated CLV. Evaluation metrics overview and comparison methodology are presented in Section 3.4. Further discussion and implications of the results are presented in Section 5. A visual comparison of results can be found in Appendix A (Figure A1, Figure A2, Figure A3 and Figure A4).

When interpreting the results, it is important to emphasize that the quality of some results reflects the complexity of the prediction subject: the models aim not only at estimating purchase probability, purchase frequency and purchase value; the final predicted variable is the profit over time.

It can be stated that undervaluation of the customer base offers better insights and possible applications than overestimation. As the results in Table 3 indicate, the best model for predicting customer base value for the short-term period is the Status Quo model, reaching an average 91% of the actual profits. However, it appears extremely inconsistent in predictions as judged by the standard deviation and high overvaluation of the profit value for the majority of datasets except the dataset F. The EP/NBD model shows a solid performance with 62% of actual profit and good standard deviation. The worst model by short-term predictive performance is the MC model with a strong undervaluation of 54% of actual profit and the highest standard deviation from the researched models.

For the long-term period, results in Table 3 reveal that the best model for predicting customer base value is the EP/NBD model with 93% of actual profit. Very stable results regarding standard deviation are negatively impacted only by the fact that it overestimates the value for all datasets except for online store F. The MC model has excellent performance, reaching 82% of actual profit, but the inconsistency as seen by standard deviation and the comparison with short-term results leave quite poor conclusions for this model. The worst model in the long-term results is the Status Quo model, due to its overestimation of customer base value reaching 138% of actual profit on average.

Weekly predictions for the short-term period shown in Table 4 are well covered by the EP/NBD model, reaching MAPE of 43% with very low standard deviation. Status Quo and MC models performed poorly with high standard deviation and MAPE of 59% and 63%, respectively.

In the long-term period, the EP/NBD model performed very well with MAPE of 21%, as shown in Table 4. With this model, MAPE was better in the long-term period than in the short-term period, while the standard deviation was, understandably, worse. The MC and Status Quo models achieved bad results similar to their short-term performance with MAPE of 50% and 64%, respectively, and also with high variance of results.

Table 5 summarises the results for mean absolute error (MAE). The lowest errors in the short-term period can be observed for the Status Quo model (MAE of 147% on average) with results almost comparable to the EP/NBD model (MAE of 191% on average). The MC model performs poorly (MAE of 294%) mainly because of its bad performance on dataset F. All models have a relatively low standard deviation, which can be seen as a good indicator of their quality.

For the long-term period, both Status Quo and EP/NBD models perform very well with MAE of 93% and 114%, respectively, as shown in Table 5. Worse results were displayed by the EP/NBD model for dataset E with MAE of 165%. Both of these models performed very well working on the largest dataset F with the Status Quo model reaching MAE of 92% and the EP/NBD model achieving MAE of 112%. The MC model does not perform that well (MAE of 190% on average, with the best result of 104% for dataset B). All models deliver consistent results as seen by the low standard deviations.

Table 6 summarises the results for the sensitivity of selecting 10% of the most profitable customers. Short-term period results indicate that the MC model, with a sensitivity of 8.95%, did not even beat the random selection baseline of 10%. The best models for selecting the most valuable customers in the short-term period are the EP/NBD and Status Quo models, with a sensitivity of 32% and 31%, respectively.

Long-term period results of sensitivity reveal EP/NBD and Status Quo as the two best models for the selection of highly valuable customers, achieving a sensitivity of 43% and 41%, respectively, and having reasonable standard deviations of 22% and 18%, respectively, see

Table 6 for more details. For datasets A, C and F, robust and similar performance was achieved by both of these models, with the EP/NBD model showing a slight improvement in sensitivity for dataset C (shift from 43% to 45%) and dataset F (shift from 45% to 46%) and a strong improvement in sensitivity for dataset A (shift from 47% to 59%). The MC model still performs poorly, with a sensitivity of 13% and no exceptional results for any dataset. All the models show better sensitivity results in comparison with the short-term period.
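
The sensitivity metric discussed above can be sketched as the overlap between the customers ranked in the top 10% by predicted value and those ranked in the top 10% by actual value. This is a minimal illustration under that assumption; the function name, the tie handling and the sample data are hypothetical.

```python
def top_share_sensitivity(actual, predicted, share=0.10):
    """Percentage of the truly most profitable customers that the model
    also places in its own top `share` of customers.

    Assumption: ties are broken by input order.
    """
    n = len(actual)
    if n == 0 or n != len(predicted):
        raise ValueError("inputs must be non-empty and of equal length")
    k = max(1, round(n * share))
    top_actual = set(sorted(range(n), key=lambda i: actual[i], reverse=True)[:k])
    top_predicted = set(sorted(range(n), key=lambda i: predicted[i], reverse=True)[:k])
    return 100.0 * len(top_actual & top_predicted) / k


# Hypothetical data: 20 customers, so the top 10% contains 2 customers.
actual = [float(i) for i in range(20)]   # customers 19 and 18 are truly the best
predicted = list(actual)
predicted[5] = 100.0                     # the model wrongly ranks customer 5 first
sensitivity = top_share_sensitivity(actual, predicted)
```

A random selection of 10% of the customers would be expected to hit about 10% of the truly most profitable ones, which is the baseline against which the models are judged.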

A conclusion can be drawn from all these results to answer the research question from

Section 1: Which of the compared models for calculating CLV has a good predictive performance of CLV in the non-contractual environment of e-commerce? The results described in this section demonstrate that the EP/NBD model has consistently outperformed the other selected models in a majority of evaluation metrics and can thus be considered good and stable for online shopping within the e-commerce business. Its predictive power was recognised both in short- and long-term periods and also when comparing individual predictions with overall customer base value prediction.

5. Discussion and Implications

Section 2 introduced the main conclusions of the articles [

12,

13]. Like this article, they base their conclusions on their empirical comparison of selected models. In contrast, there exists a significant number of reviews comparing theoretical aspects of selected CLV models based on results from secondary sources that build on different datasets and focus on various types of environment and applications. This article compares models representing different approaches to modeling CLV and also compares other models than those selected for comparison with the articles above [

12,

13]—both studies deal with different environments and use a single dataset for the evaluation of selected models.

The results in

Section 4 offer new insights into the performance of two complex models and a very simple one, considering six different datasets typical for online shopping. All selected models use the same minimal dataset and also the same data concerning recency and frequency of orders. The MC model then uses additional data required as input parameters. All models selected for the comparative analysis also have in common the fact that despite different calculation methods and input parameters, they all have the same output, or purpose: calculating customer lifetime value. That makes this a relevant comparative analysis of CLV calculation results. Here follows a discussion of the results of each model and possible explanations of its performance.

The EP/NBD model performed consistently well both in individual predictions and for the overall customer base. The slightly worse long-term MAE results for datasets D and E correlate with the lower repurchase frequency of these retailers’ customers. This observation is consistent with the behavior of the spending submodel used in the EP/NBD model. Whenever a customer has a low number of total transactions (possibly a new customer with a single order), the Gamma-Gamma spending submodel predicts their lifetime average order value predominantly from the rest of the customer population. With more orders completed, increasingly more weight is attributed to the customer’s own purchase behavior, and the rest of the population plays a minor role. Therefore, if a dataset contains only a few customers with repeated purchases, the spending model will rely more heavily on population estimates and the EP/NBD model results will be driven mainly by the Pareto/NBD submodel (Recency and Frequency).
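
The shift of weight from the population to the individual customer can be made concrete with a short sketch. It uses the conditional expectation of the standard Gamma-Gamma formulation, in which the predicted average order value is a weighted average of the population mean p·γ/(q − 1) and the customer's observed mean m_x, with weight p·x/(p·x + q − 1) on the observed mean. Whether the article uses exactly this parametrisation is an assumption, and the parameter values below are hypothetical, not fitted to any of the datasets.

```python
def individual_weight(x, p, q):
    """Weight placed on the customer's own observed mean order value."""
    return p * x / (p * x + q - 1.0)


def expected_avg_order_value(x, m_x, p, q, gamma):
    """Conditional expected average order value under the Gamma-Gamma model.

    x     : number of observed transactions of the customer
    m_x   : observed mean order value of the customer
    p, q, gamma : model parameters (hypothetical values in this sketch)
    """
    if q <= 1:
        raise ValueError("the population mean exists only for q > 1")
    population_mean = p * gamma / (q - 1.0)
    w = individual_weight(x, p, q)
    return w * m_x + (1.0 - w) * population_mean


# Hypothetical parameters giving a population mean of 40.
P, Q, GAMMA = 2.0, 11.0, 200.0
one_order = expected_avg_order_value(x=1, m_x=100.0, p=P, q=Q, gamma=GAMMA)
ten_orders = expected_avg_order_value(x=10, m_x=100.0, p=P, q=Q, gamma=GAMMA)
```

With these illustrative parameters, a customer with a single order of 100 is pulled strongly towards the population mean of 40, while a customer with ten orders averaging 100 keeps a prediction much closer to their own history.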

The Status Quo model overestimates customer base profit value for the majority of datasets, except for C and F, as seen in

Table 3. Recency and frequency analysis of the training period reveals that both datasets C and F contain a very high share of customers with low profitability and low purchase frequency, with two completed transactions at most. This customer cluster does not impact the results of the rest of the datasets to such an extent. Also observed was an undervaluation of customer-level profit prediction for segments of customers with interpurchase times higher than the threshold of 52 weeks chosen for the Status Quo model. This effect was mainly observed for datasets A, B and E, which include approximately 30% of the customers with a second-transaction repurchase interval longer than 52 weeks. For customer segments with high purchase frequency, the model overestimates the predicted profit because it neglects dropout signals.

These results indicate that the Status Quo model could be beneficial in situations with low or predictable dropout rates, with a stronger likelihood of higher purchase frequency and low variability in product margin—compare with [

12]. The methodology for the Status Quo model is the simplest and most understandable, yet confusing regarding the description of the underlying customer behavior. However, in comparison with other models used in this research, it must be concluded that the features of the Status Quo model are not suitable for datasets with long training time periods and large datasets with high customer heterogeneity, including spontaneous purchase behavior that undergoes significant changes over time.

The results of the MC model appear to be very erratic across all the datasets and evaluation metrics. There was a high standard deviation for customer base level prediction (see

Table 3) and weekly trends (see

Table 4). From this analysis of the MC model results, it can be concluded that the performance relies heavily on the quality of the initial subgroups found in the first part of the model execution. These subgroups are estimated by a decision tree, which could benefit from as much customer level data as possible. According to the methodology described in

Section 3.2, it was possible to use only attributes (profitability drivers) available for all datasets. The quality of subgroups corresponds with the weekly results and performance of the Markov chain submodel and implies the need for such customer attributes. It could be recommended to determine more profitability drivers and maintain a thorough analysis of input variables for this model. A remarkable exception to this conclusion is found in dataset E, for which clear subgroups were initially identified in the CART submodel, yet the results of classification of the most profitable customers in

Table 6 demonstrate weak performance with a sensitivity of only 4%, while random selection would roughly result in 10% sensitivity. The underperformance of MC customer classification compared to random selection was unanticipated.

It can be summarised that the EP/NBD model performed very well on all selected datasets and achieved stable results both at the customer level and for the whole customer base metrics. The Status Quo model demonstrated strong results in the selection of the top 10% of the most profitable customers and had low individual customer level error rates, which could be seen as a good result for this naïve model construction. Its results were negatively influenced mainly by the large overvaluation of the customer base for the majority of datasets in both the short- and long-term periods. The MC model produced the worst results on all levels: poor performance on an individual customer level, in the selection of the top 10% of the most profitable customers, and even when considering the overall customer base value.

Seasonality (e.g., the Christmas period) constitutes a problem for all datasets containing predominantly seasonal purchase behavior. It has a strong impact mainly on datasets B and D with the EP/NBD model, and this impact can be associated with the fact that the training period ended in late November, i.e., in the midst of the Christmas season for online stores. On the other hand, as all datasets included at least three years of data (dataset B missing just one month), this can be seen as equal input conditions for model training and the following comparison.