1. Introduction

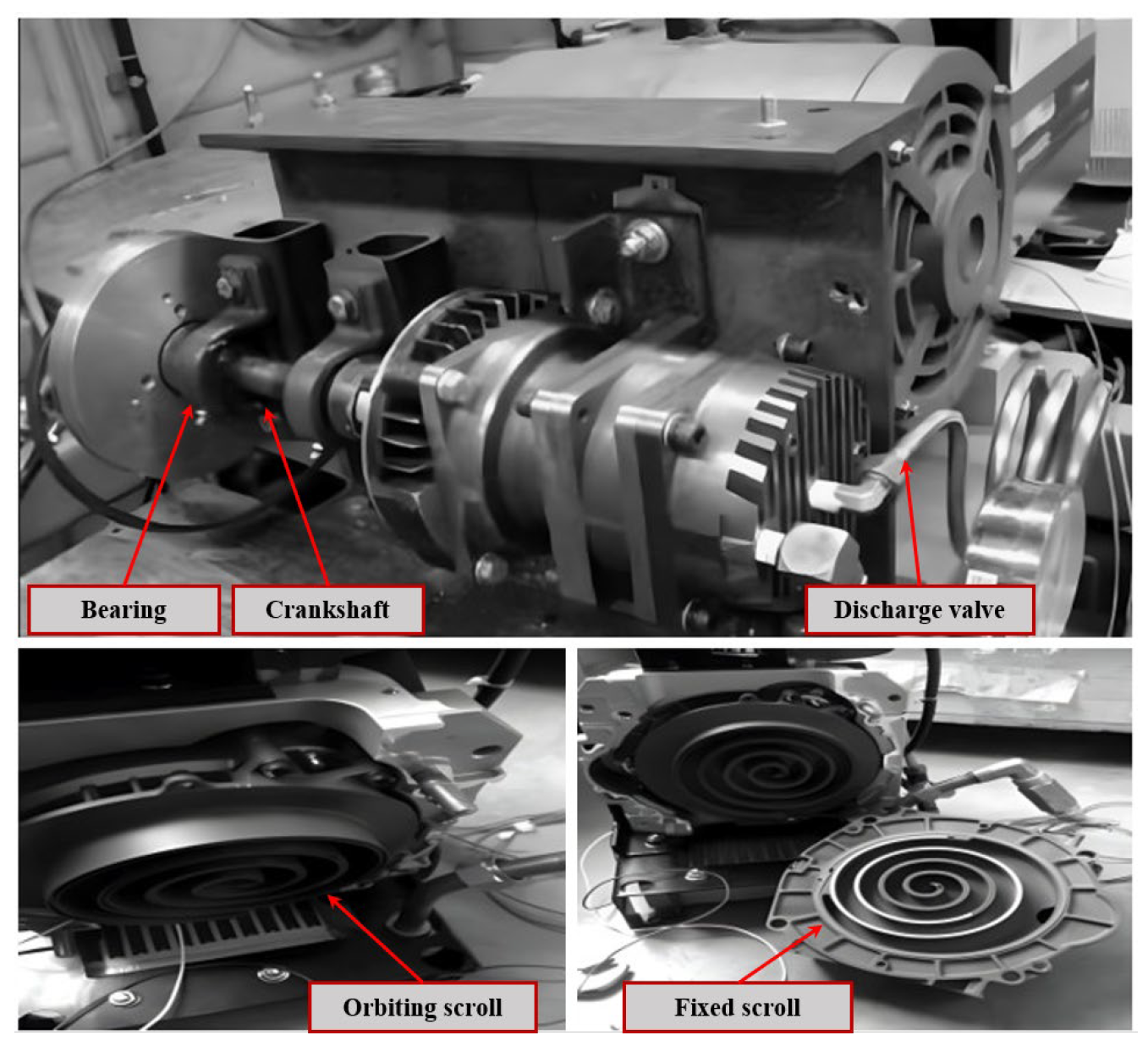

Scroll compressors, recognized for their environmental friendliness, high efficiency, and energy conservation, are extensively utilized in critical sectors such as food processing, refrigeration, and transportation [1,2,3]. These devices are essential for maintaining system reliability and operational efficiency, directly impacting the overall performance of the machinery [4,5]. High-end scroll compressors are typically subjected to rigorous conditions, operating at high speeds and pressures, which expose them to an increased risk of faults [6]. Such malfunctions can not only disrupt operations but may also lead to catastrophic failures [7]. Consequently, precise and effective fault diagnosis in scroll compressors is crucial for ensuring industrial safety and functionality.

In recent years, fault diagnosis methods have been categorized into three main types: model-based detection methods [8], diagnostics based on expert knowledge [9], and data-driven approaches [10]. Although model-based and expert knowledge-based methods were extensively applied in the past, they have shown significant limitations in complex industrial environments. They depend heavily on accurate mechanistic models and expert insight, which are difficult to construct for complex, nonlinear fault conditions; they lack adaptive learning capabilities; and they are costly to maintain [11]. In contrast, data-driven methods, which do not rely on specific mechanistic models or expert knowledge, have gained prominence. The rapid development of modern machinery, sensor technology, and the Industrial Internet of Things (IIoT) has facilitated real-time collection and storage of data from rotating machinery, significantly enhancing the potential of these methods to exploit big data for fault prediction and analysis [12].

Traditional machine learning algorithms such as support vector machines [13,14], extreme learning machines [15,16], and K-means [17,18,19] have been widely applied in fault diagnostics with considerable success. However, such methods depend heavily on manual feature extraction, which limits their ability to capture the complex features present in fault data. Moreover, their shallow structures make them less effective in processing high-dimensional, complex nonlinear data. For instance, Fu et al. [20] introduced an ensemble empirical mode decomposition (EEMD)-based diagnostic method capable of effectively diagnosing faults under stochastic noise, noted for its speed, low error rate, and stable performance. However, because EEMD requires many iterations and decomposes slowly, Sun et al. [21] proposed a fault feature extraction method that combines empirical mode decomposition (EMD) with an improved Chebyshev distance, which has been validated experimentally. Chen et al. [22] proposed an intelligent fault diagnosis model based on a multi-core support vector machine (MSVM) optimized using a chaotic particle swarm optimization (CPSO) algorithm. This model demonstrated good generalization capability and diagnostic accuracy. Similarly, Ma et al. [23] introduced a fault classification and diagnosis method based on a back-propagation (BP) neural network ensemble. In this method, multiple sub-BP networks were employed for separate diagnoses, which increased the accuracy of fault diagnosis from 95% to 99.5%. However, because of the shallow network structure and insufficient feature extraction capability of traditional machine learning algorithms, deeper micro-features contained in fault data could not be explored and extracted [24]. This limitation hindered further improvement of diagnostic accuracy.

Compared to traditional machine learning methods, fault diagnosis methods based on deep learning eliminate the need for manual feature extraction: they autonomously extract valuable information from raw data and subsequently perform classification [25]. Notably, significant progress has been made in intelligent fault diagnosis using convolutional neural networks (CNNs) [26]. For example, Dong et al. proposed a CNN model with a compound attention mechanism for identifying faults in supersonic aircraft [27]. Additionally, Zhao et al. designed an adaptive intra- and inter-class CNN model that effectively identifies gear faults even under varying speed conditions [28]. However, CNN models primarily capture local features in signals and struggle to establish global dependencies, so deeper global information in complex fault data may be overlooked. This limits their ability to extract fault information and to generalize robustly, especially when dealing with small sample sizes [29].

He et al. [30] proposed a feature-enhanced continuous learning method that allows a diagnostic model to continuously and adaptively acquire knowledge of new fault types, effectively mitigating the forgetting of features and enhancing the model’s fault detection capabilities. Similarly, Seimert et al. [31] developed a diagnostic system based on a Bayesian classifier capable of classifying and diagnosing healthy and damaged bearings. To address unknown fault types that may arise in practical applications, Yang et al. [32] proposed a multi-head deep neural network (DNN) based on a sparse autoencoder. This network learns shared encoded representations for unsupervised reconstruction and supervised classification of monitoring data, enabling the diagnosis of known defects and the detection of unknown ones. Furthermore, Lei et al. [33] introduced an intelligent fault diagnosis method based on statistical analysis, an improved distance evaluation technique, and an adaptive neuro-fuzzy inference system (ANFIS) to identify different fault categories and severities. Liu et al. [34] proposed a new algorithm, the extended autocorrelation function, to capture weak pulse signals in rotating machinery at low signal-to-noise ratios. They further proposed an improved symplectic geometric reconstruction data augmentation method, which offers higher accuracy and better convergence in the fault diagnosis of imbalanced hydraulic pump data [35]. Moreover, Deng et al. [36] developed an end-to-end time series forecasting method known as D-former for predicting the remaining useful life (RUL) of rolling bearings. This method is specifically designed to extract degradation features directly from the original signal. In addition, Jin et al. [37] introduced a novel fault diagnosis method named the multi-layer adaptive convolutional neural network (MACNN) to tackle deep learning models’ heavy reliance on consistent feature distributions across training and testing datasets. The MACNN utilizes multi-scale convolution modules to extract low-frequency features and effectively address classification problems.

The development of automated fault identification models typically relies on large-scale standardized datasets. However, in practical applications, acquiring well-labeled fault data is both time-consuming and labor-intensive, and the resulting scarcity of fault data often leads to model overfitting. To address this issue, data augmentation techniques have emerged as an effective solution. For instance, Taylor and Nitschke [38] significantly improved the detection performance of CNNs. Similarly, Fernandez et al. [39] increased the number of minority class samples by creating synthetic samples between existing samples of the minority class, ensuring that the model does not overlook these minority samples during training. Furthermore, He et al. [40] increased the number of minority class samples using adaptive synthetic techniques, thereby improving the model’s focus and classification performance on difficult-to-classify data points and enhancing overall accuracy. Jiao et al. [41] proposed a self-paced fault adaptive diagnosis (SFAD) method based on a self-training mechanism and a target prediction matrix constraint, achieving model adaptation using only unlabelled target data. Additionally, Hu et al. [42] employed relocation techniques to simulate data under different rotational speeds and workloads, effectively increasing the sample size. Moreover, Yang et al. [43] proposed a diagnostic model using a polynomial kernel-induced maximum mean discrepancy (PK-MMD) distance metric to identify the health status of locomotive bearings.

However, traditional data augmentation methods often lead to model overfitting and fail to address edge cases of the data distribution. To overcome these limitations, a data augmentation method based on generative adversarial networks (GANs) has been proposed [44]. This method leverages GANs to generate new synthetic samples that exhibit high variability while remaining statistically similar to real data. It effectively increases data volume, particularly when minority class data are scarce, avoids the overfitting associated with simple sample repetition, and better explores the edge regions of the data distribution, thereby improving the model’s generalization to uncommon scenarios. Jiao et al. [45] proposed a self-training reinforced adversarial adaptation (SRAA) diagnostic method based on a dual classifier difference metric. Similarly, Zhao et al. [46] introduced a deep residual shrinkage network to enhance feature learning from high-noise vibration signals, achieving high fault diagnosis accuracy. Additionally, Zhang et al. [47] utilized multiple learning modules and a gradient penalty mechanism to significantly improve the stability of the generative model and the quality of generated data. Despite the demonstrated potential of GANs in bearing fault diagnosis, their limitations cannot be overlooked. First, current GAN-based research mainly focuses on processing one-dimensional signals and may neglect the difficulty of extracting complex, deep features from one-dimensional data, potentially leading to the loss of critical information and degrading the quality of generated data. Second, GAN training frequently encounters vanishing gradients. Although the Wasserstein GAN (WGAN) [48] alleviates this problem by introducing a Lipschitz constraint, its capability to fit complex data samples remains limited, which impacts model efficacy and can waste computational resources.

In recent years, machine learning and deep learning methods have made significant progress in the field of fault detection, with CNNs and recurrent neural networks (RNNs) excelling in feature extraction and pattern recognition. However, these methods are limited in handling global features and long-range dependencies. Transformer models, by contrast, have become an important tool in fields such as computer vision and natural language processing owing to their powerful global feature extraction capabilities [49,50]. Their application to mechanical equipment fault identification has become increasingly widespread, and some scholars have used them for modeling and detecting mechanical equipment faults [51]. For instance, Tang et al. developed a Transformer model specifically designed for bearing fault identification under variable conditions [52], while Ding et al. introduced a time-frequency analysis-integrated Transformer for detecting faults in rotating machinery [53]. Furthermore, Zhao et al. proposed an Adaptive Threshold and Coordinate Attention Tree Neural Network (ATCATN) for hierarchical health monitoring, which accurately identifies the location and size of faults in aircraft engine bearings, even under severe noise interference [54]. Despite their excellence in capturing global features, Transformer models rely heavily on large datasets and consume substantial computational resources. The technical challenges are threefold: first, the multi-head self-attention mechanism requires extensive matrix multiplications, significantly increasing the demand for computational resources; second, the traditional convolution kernels used for feature extraction also entail high computational costs; third, although reducing feature dimensions through pooling layers helps make the model lightweight, it often sacrifices detailed data features. Therefore, developing efficient, lightweight self-attention mechanisms and convolution operations is crucial. Such methods not only reduce reliance on computational resources but also downsample features while retaining critical information, supporting model deployment in resource-constrained environments.

Fault diagnosis for scroll compressors is still in the early stages of development, with current technologies struggling to meet the demands for both efficiency and accuracy, particularly in complex operational environments. Existing methods predominantly rely on traditional feature engineering, which faces significant challenges in capturing the intricate and often subtle fault patterns present in scroll compressors. To address these challenges, this study introduces an innovative fault diagnosis method based on an enhanced design of two-dimensional convolutional neural networks (2D CNNs). In this preliminary research, experiments were conducted under ideal conditions to validate the method’s effectiveness. The results demonstrate improved diagnostic accuracy and robustness, providing a solid foundation for future studies. These future investigations will explore the method’s performance in more complex and challenging conditions. The key innovations of this approach include:

- (1) Multiscale Feature Extraction: The application of the Short-Time Fourier Transform (STFT) converts one-dimensional time-series signals into two-dimensional images. This transformation allows the model to perform feature extraction at multiple scales, thereby effectively capturing minor variations in the signal. By utilizing the extensive spectral information contained in two-dimensional images, the model achieves a comprehensive understanding and analysis of mechanical equipment operation. Consequently, this leads to higher fault detection rates in practical applications and ensures the accuracy and reliability of diagnostic results.

- (2) Hybrid Architectural Design: The integration of the Swin Transformer’s window attention mechanism, the Global Attention Mechanism (GAM), and the shallow 2D convolutional feature extraction branch of ResNet significantly enhances the model’s generalization ability and sensitivity to data. Moreover, this hybrid architecture optimizes the feature extraction process, thereby improving the model’s stability and accuracy in handling complex data. Additionally, it reduces computational cost, thus increasing the model’s adaptability and performance in diverse data environments.

- (3) Deep Feature Fusion: The model integrates global spatial and local features extracted by various branch networks using pooling technology. This multilevel feature fusion enables the model to more effectively integrate and express information from different data scales, thereby greatly enhancing its expressiveness and robustness. As a result, the application of deep feature fusion allows the model to exhibit higher adaptability and diagnostic precision when confronted with complex and variable fault signals, significantly improving the reliability and efficiency of fault diagnosis.

2. Preliminaries

2.1. GAM Module

CNNs have been extensively applied across various fields, particularly in computer vision, and are highly regarded by researchers for their exceptional performance. Moreover, convolutional attention mechanisms have provided CNNs with a straightforward and efficient method for feedforward attention processing. Considerable research has been conducted on the performance improvements brought by attention mechanisms in image classification tasks. However, some challenges remain.

For instance, the SENet [55] model may suffer from inefficiencies when suppressing features unrelated to the target task, consuming significant resources or time for pixel-level processing operations, which impacts the overall efficiency of model training. To address this, the CBAM [56] model implements both spatial and channel attention mechanisms. The channel attention mechanism compresses the feature map along the spatial dimensions, while the spatial attention mechanism compresses along the channel dimension, producing new features. The BAM [57] model operates the two attention mechanisms in parallel but overlooks the interaction between channel and spatial attention. The GAM [58] model, on the other hand, enhances the interactions across spatial and channel dimensions, enabling the capture of critical feature information across all three dimensions (channel, height, and width).

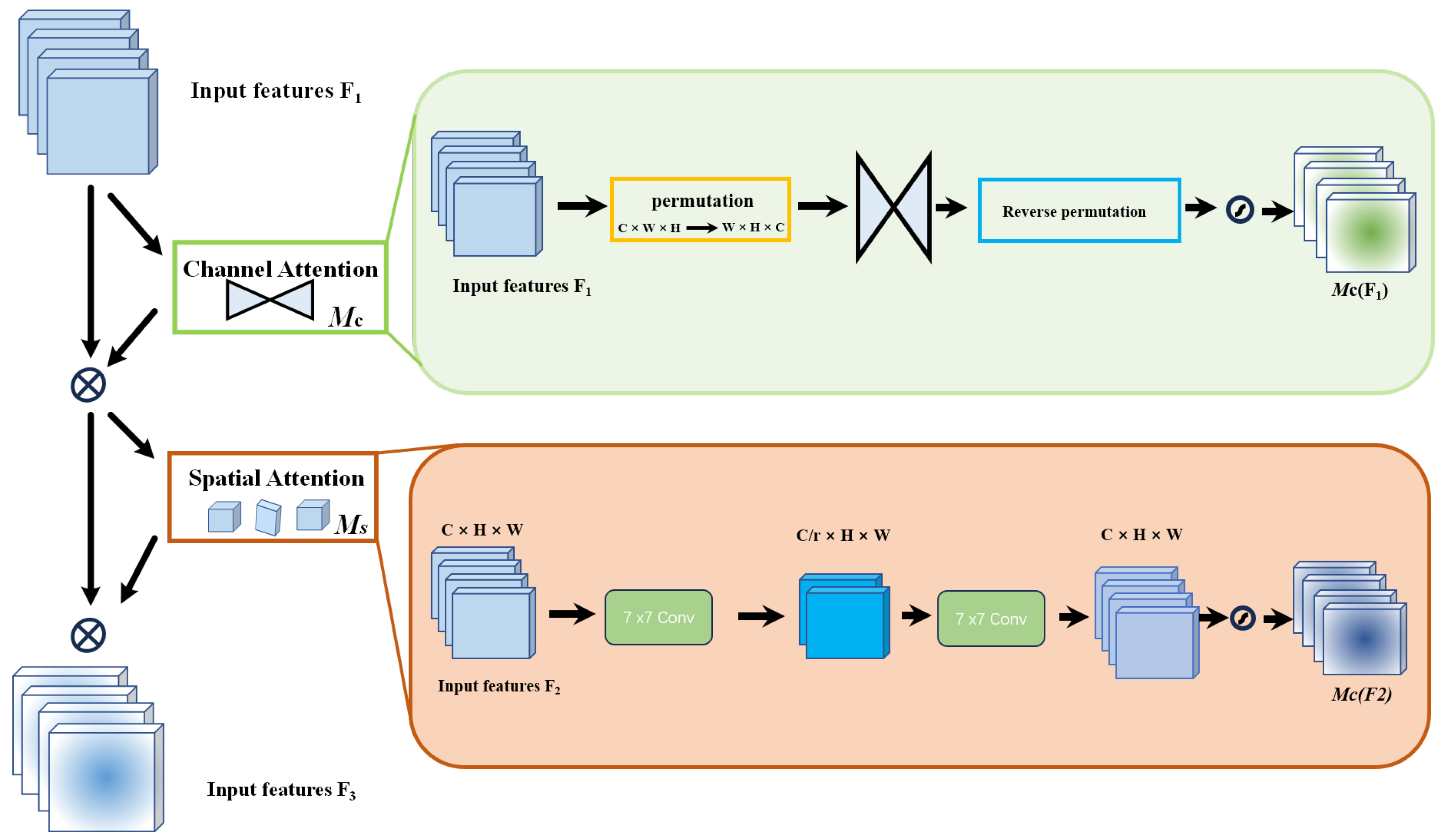

Figure 1 illustrates the structure of the GAM module.

The GAM is designed to enhance neural network performance by combining channel and spatial attention. The channel attention component is implemented as a two-layer multi-layer perceptron (MLP) with a nonlinear activation: a linear transformation reduces the channel dimensionality, the activation is applied, and a second linear transformation maps the features back to their original dimensions, isolating key channel-specific information. The complementary spatial attention component uses two convolutional layers with batch normalization to accentuate spatial details and stabilize training. The two attention maps are applied in sequence to the input features, producing an attention-weighted representation that emphasizes relevant features at different scales and locations. Consequently, essential global features are captured, improving the model’s efficiency and accuracy in complex tasks such as classification and detection.
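The channel attention path described above can be illustrated with a minimal sketch in plain Python. It is not the authors' implementation: the reduction ratio `R`, the random weights, the ReLU activation, and the helper name `channel_attention` are all assumptions chosen for illustration, and the spatial attention branch (two convolutions with batch normalization) is omitted for brevity.

```python
import math
import random


def sigmoid(value):
    return 1.0 / (1.0 + math.exp(-value))


def channel_attention(feature, w_reduce, w_restore):
    """Gate a feature map of shape [C][H][W] with a two-layer MLP over channels.

    w_reduce:  [C_r][C] weights of the dimension-reducing linear layer
    w_restore: [C][C_r] weights of the dimension-restoring linear layer
    """
    channels = len(feature)
    height, width = len(feature[0]), len(feature[0][0])
    out = [[[0.0] * width for _ in range(height)] for _ in range(channels)]
    for i in range(height):
        for j in range(width):
            # Channel vector at one spatial position.
            vec = [feature[c][i][j] for c in range(channels)]
            # Linear reduction followed by ReLU (assumed activation).
            hidden = [max(0.0, sum(w * v for w, v in zip(row, vec)))
                      for row in w_reduce]
            # Linear restoration to C channels, then a sigmoid gate in (0, 1).
            gate = [sigmoid(sum(w * h for w, h in zip(row, hidden)))
                    for row in w_restore]
            for c in range(channels):
                out[c][i][j] = feature[c][i][j] * gate[c]
    return out


random.seed(0)
C, H, W, R = 4, 3, 3, 2  # R is a hypothetical reduction ratio
feature = [[[random.uniform(-1, 1) for _ in range(W)] for _ in range(H)]
           for _ in range(C)]
w_reduce = [[random.uniform(-1, 1) for _ in range(C)] for _ in range(C // R)]
w_restore = [[random.uniform(-1, 1) for _ in range(C // R)] for _ in range(C)]
gated = channel_attention(feature, w_reduce, w_restore)
```

Because the gate lies strictly between 0 and 1, the output keeps the shape and sign of the input while rescaling each channel, which is the sense in which the module re-weights rather than replaces features.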

2.2. ResNet Model

As one of the most iconic architectures in the field of convolutional neural networks, ResNet [59] is highly regarded for its introduction of residual structures, which have significantly enhanced network performance. The residual structure addresses the degradation and vanishing-gradient problems that arise as neural networks deepen. In a residual module, the input is added directly to the output of the module’s stacked layers through an identity shortcut, so that information passes to the next layer with minimal loss. Deeper residual networks are built by stacking multiple such modules, each of which only needs to fit the residual between the desired mapping and its input. The structure of a residual module is defined as follows:

$$y = F(x, \{W_i\}) + x \tag{1}$$

In the formula, $x$ and $y$ represent the input and output vectors of the residual module, respectively. The term $F(x, \{W_i\})$ denotes the trainable residual mapping. For Equation (1) to hold, the dimensions of $x$ and $F(x, \{W_i\})$ must coincide. If the dimensionality is altered, for example by pooling, a linear projection $W_s$ must be applied in the shortcut connection to ensure dimensional consistency. The corresponding formula is as follows:

$$y = F(x, \{W_i\}) + W_s x \tag{2}$$

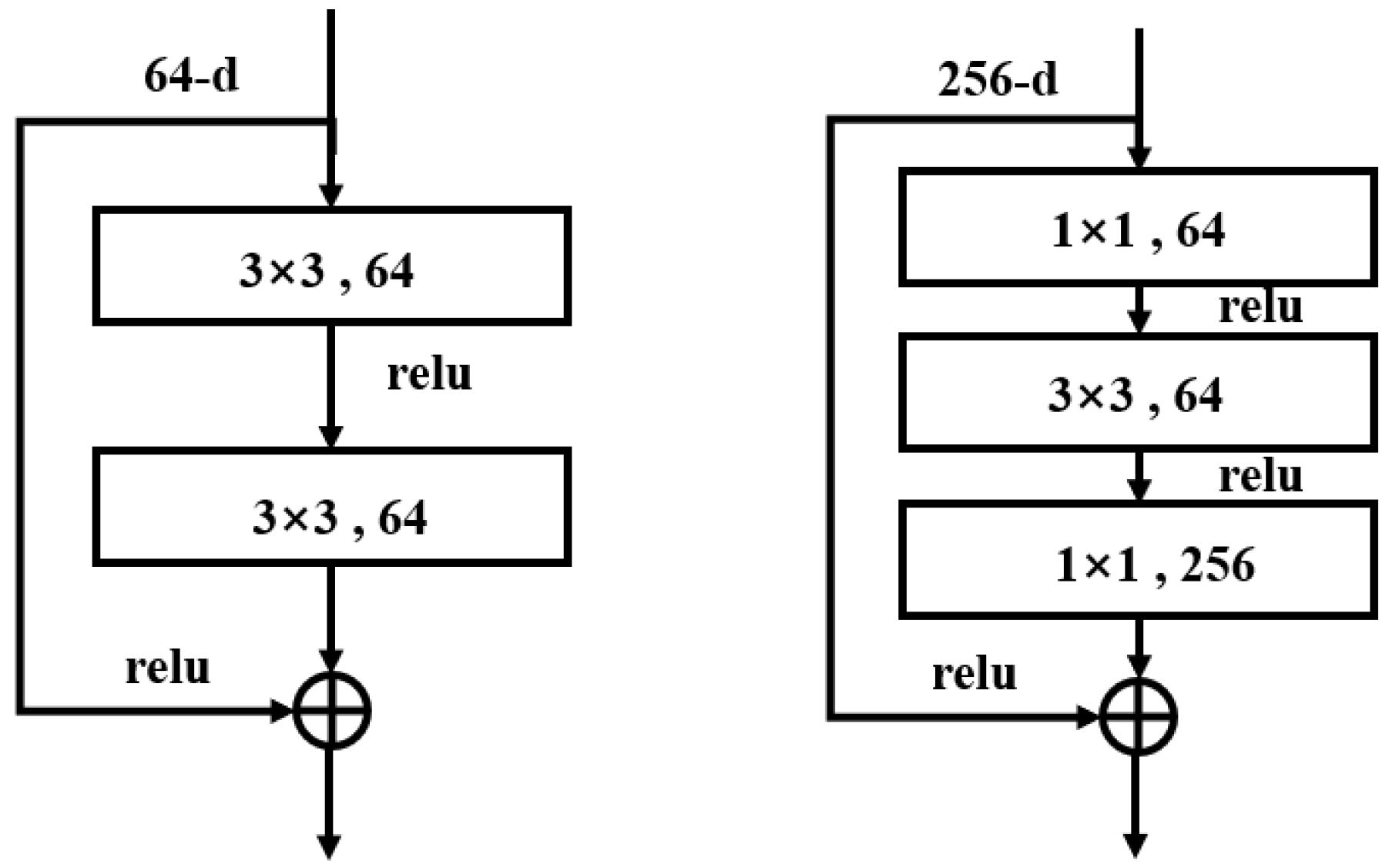

The basic structure of the residual module is shown in Figure 2. The residual module may employ a bottleneck block structure, which uses a 1 × 1 convolution to reduce the dimensionality of the feature maps, a 3 × 3 convolution to extract features, and another 1 × 1 convolution to restore the dimensions. This design reduces computational complexity while maintaining network depth, thereby enhancing model efficiency. Alternatively, a basic block structure consisting of two 3 × 3 convolutional layers is used. This basic block is typically employed in shallower ResNet models, where consecutive 3 × 3 convolutions extract features while preserving the dimensions of the feature maps. Overall, the ResNet architecture enhances traditional CNNs by adding residual connections, significantly boosting network performance while keeping complexity low.
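The shortcut arithmetic of a residual module can be made concrete with a small sketch. This is an illustration under stated assumptions rather than the network used in the paper: the residual mapping F is reduced to two hypothetical fully connected layers with a ReLU between them (instead of convolutions), and the names `residual_block`, `w1`, `w2`, and `w_s` are introduced only for this example.

```python
def linear(weights, x):
    """Multiply a weight matrix [out][in] by a vector x."""
    return [sum(w * v for w, v in zip(row, x)) for row in weights]


def relu(x):
    return [max(0.0, v) for v in x]


def residual_block(x, w1, w2, w_s=None):
    """Compute y = F(x) + shortcut(x), with F(x) = W2 * relu(W1 * x).

    With w_s=None the shortcut is the identity (input and output sizes match);
    otherwise w_s is a linear projection that matches the dimensions.
    """
    residual = linear(w2, relu(linear(w1, x)))
    shortcut = x if w_s is None else linear(w_s, x)
    if len(shortcut) != len(residual):
        raise ValueError("shortcut and residual dimensions must match")
    return [r + s for r, s in zip(residual, shortcut)]


x = [1.0, -2.0, 0.5]

# Identity shortcut: if the residual mapping outputs zero, y equals x.
zeros = [[0.0] * 3 for _ in range(3)]
y_identity = residual_block(x, zeros, zeros)

# Projection shortcut: F maps 3 -> 2 features, so x is projected to 2 features.
w1 = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0]]
w2 = [[1.0, 0.0], [0.0, 1.0]]
w_s = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0]]
y_projected = residual_block(x, w1, w2, w_s)
```

The first call shows why stacking such modules is safe: a module whose residual mapping has learned nothing simply passes its input through unchanged.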

2.3. Swin Transformer Model

The Swin Transformer is a hierarchical vision architecture built on the Transformer, the neural network architecture introduced by Google researchers in 2017 that relies solely on the attention mechanism and discards traditional recurrent and convolutional structures. Transformer-based architectures have been extensively applied in artificial intelligence scenarios including machine translation, object detection, and audio processing. The fully attention-based design enables parallel processing of input data, significantly accelerating training. The multi-head attention mechanism allows the model to attend concurrently to multiple positions within a sequence, enhancing its comprehension of complex contexts. The Swin Transformer, proposed by Microsoft Research in 2021, computes self-attention within local windows that are shifted between successive layers, which keeps the computational cost manageable for images. Its structure is also highly scalable: more layers or heads can be added to tackle more complex tasks, making it well suited to large-scale datasets. Because long-range dependencies are captured effectively, the architecture adapts to a wide range of tasks, from text processing to computer vision.

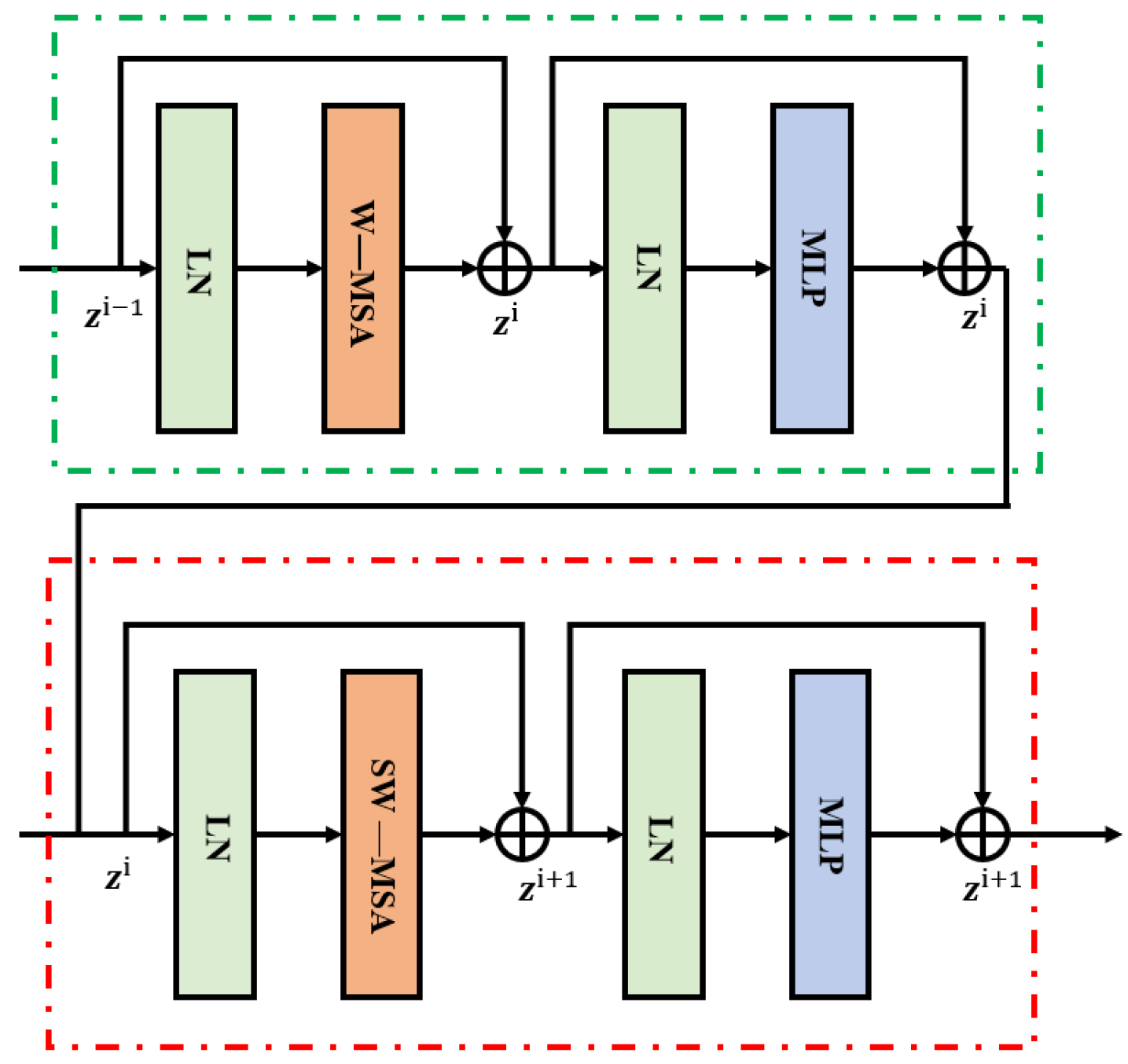

Figure 3 illustrates the key components of the Swin Transformer model, including Windowed Multi-Head Self-Attention (W-MSA), Shifted Windowed Multi-Head Self-Attention (SW-MSA), MLP, and Layer Normalization (LN). The input part of the model consists of a positional encoding layer and an embedding layer, while the output part mainly includes a linear layer and a SoftMax layer. At the core of the model, both the encoder and decoder comprise N layers, each consisting of multi-head attention layers, feedforward fully connected layers, normalization layers, and residual connections. The multi-head attention layers are composed of multiple attention heads, which attend to different representation subspaces in parallel.

The core feature of the Swin Transformer is its self-attention mechanism, which transforms outputs by leveraging the internal correlations of data sequences. This mechanism involves Queries, Keys, and Values, each represented as vectors. The Query identifies key information within the input data, the Key represents the basic information of the data, and the Value contains the specific data corresponding to the Key. The self-attention mechanism computes the scaled dot product of feature vectors to measure their similarity. By emphasizing crucial information and ignoring irrelevant content, it captures long-range dependencies while reducing reliance on external information. The corresponding computations can be represented as follows, where $W_q$, $W_k$, and $W_v$ are the weight matrices for Queries, Keys, and Values, respectively:

$$Q = XW_q, \quad K = XW_k, \quad V = XW_v$$

$$\mathrm{Attention}(Q, K, V) = \mathrm{softmax}\left(\frac{QK^{T}}{\sqrt{\mathrm{dim}}}\right)V$$

In the Swin Transformer model, the Query (Q), Key (K), and Value (V) matrices are generated by multiplying the input data X with the corresponding weight matrices Wq, Wk, and Wv. Here, dim represents the dimensionality of the Q, K, and V matrices and is used to scale the calculations, ensuring stability. The self-attention mechanism enables the model to focus on the important information within the input features. However, a single self-attention mechanism can only learn information within a single informational space. To more comprehensively understand the key information in the input sequence, the Swin Transformer model introduces the multi-head attention mechanism. This mechanism employs multiple self-attention layers in parallel, allowing the model to extract and focus on critical features from different informational spaces simultaneously, thereby obtaining richer and more integrated feature representations.
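As a minimal sketch of the scaled dot-product computation described above, the following plain-Python example builds Q, K, and V from an input X and applies the softmax-weighted sum. The toy input, the identity weight matrices, and the function names are assumptions for illustration only; a practical model would use a tensor library and learned weights.

```python
import math


def matmul(a, b):
    """Multiply matrix a [m][k] by matrix b [k][n]."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)]
            for row in a]


def softmax(row):
    peak = max(row)  # subtract the maximum for numerical stability
    exps = [math.exp(v - peak) for v in row]
    total = sum(exps)
    return [e / total for e in exps]


def self_attention(x, w_q, w_k, w_v):
    """Return (output, attention weights) for one self-attention head."""
    q, k, v = matmul(x, w_q), matmul(x, w_k), matmul(x, w_v)
    dim = len(q[0])
    k_transposed = [list(col) for col in zip(*k)]
    scores = matmul(q, k_transposed)
    # Scale by sqrt(dim) before the softmax, as in the text.
    weights = [softmax([s / math.sqrt(dim) for s in row]) for row in scores]
    return matmul(weights, v), weights


x = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]  # 3 tokens with 2 features each
identity = [[1.0, 0.0], [0.0, 1.0]]       # hypothetical weight matrices
output, weights = self_attention(x, identity, identity, identity)
```

Each row of `weights` sums to one, so every output token is a convex combination of the Value vectors; tokens whose Keys align with the Query receive the larger share.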

The specific implementation process is as follows: the input is divided into multiple parts, each part is processed separately using different weight matrices, and then these results are combined to form the final output. This approach enhances the model’s ability to understand the input data from multiple dimensions. The multi-head attention mechanism is computed as follows:

$$h_i = \mathrm{Attention}\left(XW_q^{i}, XW_k^{i}, XW_v^{i}\right), \quad i = 1, \dots, n$$

$$\mathrm{MultiHead}(X) = \mathrm{Concat}(h_1, h_2, \dots, h_n)$$

In the multi-head attention mechanism, the input X is multiplied by the weight matrices Wq, Wk, and Wv to generate the Query Q, Key K, and Value V matrices for each attention head. Here, n represents the total number of attention heads. The results of each head, hi, are computed and then combined through concatenation (Concat) to form the final output. This network structure is designed to process multiple informational dimensions in parallel, thereby enhancing the model’s representational capacity and information capture efficiency. The network structure is illustrated as follows.
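The head-wise computation and concatenation can be sketched as follows. This is an illustrative example, not the paper's configuration: each head is given its own hypothetical weight triple, the two toy heads each look at a single input feature, and the output projection that many implementations apply after concatenation is left out because the text does not mention it.

```python
import math


def matmul(a, b):
    """Multiply matrix a [m][k] by matrix b [k][n]."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)]
            for row in a]


def softmax(row):
    peak = max(row)
    exps = [math.exp(v - peak) for v in row]
    total = sum(exps)
    return [e / total for e in exps]


def attention(q, k, v):
    """Scaled dot-product attention for one head."""
    dim = len(q[0])
    scores = matmul(q, [list(col) for col in zip(*k)])
    weights = [softmax([s / math.sqrt(dim) for s in row]) for row in scores]
    return matmul(weights, v)


def multi_head(x, heads):
    """heads is a list of (w_q, w_k, w_v) triples, one per attention head."""
    outputs = [attention(matmul(x, w_q), matmul(x, w_k), matmul(x, w_v))
               for w_q, w_k, w_v in heads]
    # Concat: join the head outputs along the feature axis, token by token.
    return [[value for head in outputs for value in head[t]]
            for t in range(len(x))]


x = [[1.0, 0.0], [0.0, 1.0]]  # 2 tokens with 2 features each
head_a = ([[1.0], [0.0]], [[1.0], [0.0]], [[1.0], [0.0]])  # uses feature 0
head_b = ([[0.0], [1.0]], [[0.0], [1.0]], [[0.0], [1.0]])  # uses feature 1
combined = multi_head(x, [head_a, head_b])
```

Here n = 2 heads each produce one output feature per token, so the concatenated result again has two features per token, with each column reflecting a different projection of the input.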

3. Network Structure

Based on the foundational theories discussed in Section 2, this section describes in detail the compressor fault diagnosis method based on the Swin Transformer–Shallow ResNet–GAM network (SSG-Net).

3.1. Construction of the SSG-Net Model

This paper presents a multi-channel global attention mechanism-based model for compressor fault detection.

Figure 4 illustrates the architecture of the proposed multi-channel SSG-Net model, which consists of three channels, each dedicated to extracting distinct feature information.

The first channel employs a sliding window attention mechanism based on the Swin Transformer to extract local features from fault images. By calculating attention within each window, this mechanism captures relationships between features in every region of the image. Additionally, the overlap between adjacent windows ensures continuity of local information, enabling the model to capture feature dependencies in the time-frequency diagram more accurately and to extract critical global information from the signal. However, this method faces certain limitations in extracting fine-grained temporal details from shallow features.
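To make the windowing concrete, the sketch below partitions a small grid into windows and then repeats the partition after a cyclic shift, which is how the standard Swin Transformer links neighbouring windows between successive layers. The 4 × 4 grid, the window size of 2, and the shift of 1 are hypothetical values; the paper's actual window configuration is not stated here, so this should be read as an illustration of the general idea.

```python
def window_partition(grid, window):
    """Split an H x W grid into window x window tiles, in row-major order."""
    height, width = len(grid), len(grid[0])
    if height % window or width % window:
        raise ValueError("grid size must be divisible by the window size")
    tiles = []
    for top in range(0, height, window):
        for left in range(0, width, window):
            tiles.append([row[left:left + window]
                          for row in grid[top:top + window]])
    return tiles


def cyclic_shift(grid, shift):
    """Roll the grid up and to the left by `shift` cells."""
    rows = grid[shift:] + grid[:shift]
    return [row[shift:] + row[:shift] for row in rows]


# A 4 x 4 grid whose cells are numbered 0..15 so positions are easy to track.
grid = [[r * 4 + c for c in range(4)] for r in range(4)]

regular = window_partition(grid, 2)                   # W-MSA style windows
shifted = window_partition(cyclic_shift(grid, 1), 2)  # SW-MSA style windows
```

The first regular window holds cells 0, 1, 4, and 5, whereas the first shifted window holds cells 5, 6, 9, and 10, one from each of the four regular windows. Attention computed inside the shifted window therefore exchanges information across the original window boundaries.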

Next, the second channel utilizes a shallow ResNet as the backbone network for feature extraction. The residual structure enables the model to build deeper networks, which enhances its feature extraction capabilities. Additionally, the alternating use of convolutional layers and batch normalization layers ensures stability during training, leading to the effective extraction of high-level feature representations. By retaining more spatial details and low-level features, the model is provided with finer-grained input features, thus improving the overall feature representation capability.

Finally, the third channel employs a 2D CNN convolution-pooling network built on the global attention mechanism (GAM). GAM computes attention weights across the entire image, prioritizing the key features of fault images. Within this network, the combination of channel attention and spatial attention enables the model to focus on essential features and capture global relationships. Consequently, this feature enhancement method significantly improves the model’s fault detection capabilities.

In summary, the multi-channel design of the SSG-Net model, which integrates sliding window attention, global attention mechanisms, and residual networks, offers a comprehensive approach to capturing both local and global features. This design effectively provides robust fault detection for compressors.

After feature extraction, the SSG-Net model integrates the features from the three channels, encompassing local, global, and multi-scale information. The model combines the feature outputs of each module, incorporating long-range dependencies, channel attention, spatial attention, and local feature extraction, so that all available information is used. Consequently, the model can capture the global features of the entire image without neglecting local details, which enhances fault detection accuracy. Ultimately, the combined features are input into a SoftMax classifier for fault classification.
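A minimal sketch of the fusion and classification step is given below. The text does not specify the exact fusion operator, so this example assumes global average pooling of each branch followed by concatenation and a single linear layer with SoftMax; the branch feature maps, the weights, and the two fault classes are hypothetical.

```python
import math


def global_average_pool(feature_map):
    """Reduce a [C][H][W] feature map to a length-C vector of channel means."""
    return [sum(map(sum, channel)) / (len(channel) * len(channel[0]))
            for channel in feature_map]


def softmax(logits):
    peak = max(logits)
    exps = [math.exp(v - peak) for v in logits]
    total = sum(exps)
    return [e / total for e in exps]


def fuse_and_classify(branch_maps, weights, bias):
    """Pool each branch, concatenate the vectors, and apply linear + SoftMax."""
    fused = [v for fmap in branch_maps for v in global_average_pool(fmap)]
    logits = [sum(w * f for w, f in zip(row, fused)) + b
              for row, b in zip(weights, bias)]
    return fused, softmax(logits)


# Toy outputs of the three branches (channel counts chosen arbitrarily).
swin_map = [[[1.0, 3.0], [5.0, 7.0]]]
resnet_map = [[[2.0, 2.0], [2.0, 2.0]], [[0.0, 4.0], [0.0, 4.0]]]
gam_map = [[[1.0, 1.0], [1.0, 1.0]]]

weights = [[0.1, 0.0, 0.0, 0.0],   # class 0 reads only the first feature
           [0.0, 0.1, 0.1, 0.1]]   # class 1 reads the remaining features
bias = [0.0, 0.0]
fused, probabilities = fuse_and_classify(
    [swin_map, resnet_map, gam_map], weights, bias)
```

Concatenation keeps the contribution of every branch visible to the classifier, which then learns how much weight to give local, multi-scale, and global evidence for each fault class.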

3.2. SSG-Net Detection Framework

The core steps of the SSG-Net model are raw data preprocessing, feature extraction, and classification. Raw data alone typically do not provide sufficient information for effective feature extraction, nor do they meet the input requirements of deep learning models. Specifically, one-dimensional vibration signals describe amplitude over time but lack explicit frequency information. Therefore, a Short-Time Fourier Transform (STFT) is employed to convert these signals into two-dimensional time-frequency images. This transformation gives a clear representation of how the signal’s spectral content varies over time, significantly enriching the data available for subsequent feature extraction.

The preprocessing step begins with the application of STFT to the one-dimensional vibration signals. The STFT decomposes the signal into its constituent frequencies over short time windows, producing a time-frequency representation. This representation captures how the signal’s frequency content changes over time, providing a detailed view of both transient and steady-state components. The resulting two-dimensional images, known as spectrograms, contain rich information crucial for accurate fault detection.
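The STFT step can be sketched with the standard library alone. The example below slides a Hann window over a signal and takes a direct discrete Fourier transform of each segment; the window length, hop size, sampling rate, and test tone are hypothetical choices, and a practical pipeline would normally rely on an FFT-based routine from a signal processing library instead.

```python
import cmath
import math


def stft_magnitude(signal, window_size, hop):
    """Return a magnitude spectrogram as a list of frames.

    Each frame holds window_size // 2 + 1 magnitudes (non-negative frequencies).
    """
    # Periodic Hann window to reduce spectral leakage at the segment edges.
    window = [0.5 - 0.5 * math.cos(2 * math.pi * n / window_size)
              for n in range(window_size)]
    frames = []
    for start in range(0, len(signal) - window_size + 1, hop):
        segment = [signal[start + n] * window[n] for n in range(window_size)]
        spectrum = []
        for k in range(window_size // 2 + 1):
            coefficient = sum(
                segment[n] * cmath.exp(-2j * math.pi * k * n / window_size)
                for n in range(window_size))
            spectrum.append(abs(coefficient))
        frames.append(spectrum)
    return frames


sampling_rate = 64  # Hz, hypothetical
tone = 8            # Hz, hypothetical test frequency
signal = [math.sin(2 * math.pi * tone * t / sampling_rate) for t in range(256)]

spectrogram = stft_magnitude(signal, window_size=32, hop=16)
peak_bin = max(range(len(spectrogram[0])), key=lambda k: spectrogram[0][k])
peak_frequency = peak_bin * sampling_rate / 32
```

With a 32-sample window at 64 Hz, each frequency bin spans 2 Hz, so the 8 Hz tone is expected to peak in bin 4 of every frame. Stacking the frames side by side gives the two-dimensional time-frequency image that the network consumes.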

In the feature extraction phase, traditional single-channel models may encounter significant challenges when learning from long-sequence data. These models often struggle to fully integrate local and global features, potentially leading to information loss and reduced prediction accuracy. To overcome these limitations, a novel architecture with multiple feature extraction pathways has been introduced. This model comprises three distinct branch networks, each designed to capture different aspects of the data. First, the Local Feature Extraction Branch focuses on fine-grained local details within the time-frequency images, using convolutional layers with small receptive fields to capture subtle variations and transient features. Second, the Multi-Scale Feature Extraction Branch employs a range of receptive fields to capture features at various scales, ensuring a comprehensive representation that includes both fine and coarse details. Finally, the Global Feature Extraction Branch aims to capture long-range dependencies and broader contextual information by utilizing larger receptive fields. These three branches work in parallel to extract a rich set of features from the input data, which are then passed through attention mechanisms, including channel and spatial attention, to enhance the most relevant aspects while suppressing noise and redundancy.

Furthermore, a C-pooling fusion technique is applied to compressor fault diagnosis, ensuring a more effective solution for capturing comprehensive feature information. The specific structure of this model is depicted in Figure 5.

3.3. Data Preprocessing

Data augmentation plays a crucial role in generating different views of positive and negative sample pairs, enabling robust representations to be learned by the model. The experiments demonstrate that appropriate data augmentation can enhance the model’s ability to learn from a limited amount of data. Although data augmentation methods are well-established in computer vision, they are less common in vibration signal analysis.

To expand the training data and improve the model’s robustness, several time-domain signal augmentation methods were introduced. These include masking, gain adjustment, shifting, fading, fade-out, horizontal flipping, and vertical flipping. Masking selectively applies processing effects to certain areas of the signal, simulating missing or corrupted data. Gain adjustment scales the signal’s amplitude, analogous to changing brightness or contrast in an image. Shifting moves the signal forward or backward in time, creating temporal variations. Fading logarithmically increases or decreases the amplitude over a random interval, while fade-out gradually weakens or strengthens the signal at random points. Horizontal flipping reverses the time sequence of the signal, and vertical flipping inverts the sign of the amplitude values, which corresponds to a 180° phase shift. These augmentation techniques collectively enhance the diversity and representativeness of the training data, leading to more robust feature learning and improved fault diagnosis performance.
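Several of the augmentations listed above can be sketched in NumPy as shown below. The function names, parameter ranges (for example the gain range 0.5 to 1.5 and the 10% maximum mask width), the use of zero-filling for masking, and the circular shift are assumptions made for illustration; the paper does not specify these details, and the separate fade-out variant is omitted.

```python
import numpy as np


def mask(x, rng, max_frac=0.1):
    """Zero out one random contiguous segment (simulates missing data)."""
    y = x.copy()
    width = int(rng.integers(1, max(2, int(len(x) * max_frac))))
    start = int(rng.integers(0, len(x) - width + 1))
    y[start:start + width] = 0.0
    return y


def gain(x, rng, low=0.5, high=1.5):
    """Scale the whole signal by one random factor."""
    return x * rng.uniform(low, high)


def shift(x, rng):
    """Circularly shift the signal in time by a random offset."""
    return np.roll(x, int(rng.integers(1, len(x))))


def fade(x, rng):
    """Apply a logarithmic fade-in or fade-out over a random interval."""
    y = x.copy()
    start = int(rng.integers(0, len(x) // 2))
    stop = int(rng.integers(start + 2, len(x) + 1))
    ramp = np.logspace(-1, 0, stop - start)  # rises from 0.1 to 1.0
    if rng.random() < 0.5:
        ramp = ramp[::-1]  # falling ramp instead of rising
    y[start:stop] *= ramp
    return y


def hflip(x, rng=None):
    """Reverse the time order of the samples."""
    return x[::-1].copy()


def vflip(x, rng=None):
    """Invert the sign of every sample."""
    return -x


AUGMENTATIONS = {
    "mask": mask, "gain": gain, "shift": shift,
    "fade": fade, "hflip": hflip, "vflip": vflip,
}

rng = np.random.default_rng(0)
t = np.linspace(0.0, 1.0, 1000, endpoint=False)
x = np.sin(2 * np.pi * 5 * t)
views = {name: fn(x, rng) for name, fn in AUGMENTATIONS.items()}
```

Every function takes and returns a one-dimensional array of the same length, so the augmented signals can be passed to the same STFT step as the originals.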

Figure 6 illustrates the effects of these signal augmentation methods on the experimental dataset. In each training batch, six augmentation methods were selected and applied to the time-series data. Notably, even though multiple versions of the time-series data were generated, the original batch size was maintained, ensuring data consistency and comparability. This approach not only effectively enhances the model’s performance but also increases the scientific validity of the experiment.
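One way to apply augmentations per batch while keeping the batch size unchanged is sketched below. The selection policy (one randomly chosen augmentation per signal, replacing the original in place) is an assumption for illustration, as are the helper name `augment_batch` and the small augmentation pool; the text above does not specify how the methods are combined within a batch.

```python
import numpy as np


def augment_batch(batch, augmentations, rng):
    """Apply one randomly chosen augmentation to each signal in the batch.

    Each signal is replaced by its augmented version, so the output has
    exactly the same shape as the input.
    """
    out = np.empty_like(batch)
    for i, x in enumerate(batch):
        idx = int(rng.integers(len(augmentations)))
        out[i] = augmentations[idx](x, rng)
    return out


# Hypothetical pool of augmentations, each mapping (signal, rng) -> signal.
pool = [
    lambda x, rng: x * rng.uniform(0.5, 1.5),                 # gain
    lambda x, rng: np.roll(x, int(rng.integers(1, len(x)))),  # shift
    lambda x, rng: x[::-1],                                   # horizontal flip
    lambda x, rng: -x,                                        # vertical flip
]

rng = np.random.default_rng(0)
batch = rng.standard_normal((32, 1024))  # 32 signals of 1024 samples
augmented = augment_batch(batch, pool, rng)
print(augmented.shape)
```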

The output spectrum of the Short-Time Fourier Transform (STFT) can be represented as a two-dimensional matrix, with time along the horizontal axis and frequency along the vertical axis. The matrix elements denote the signal’s power or amplitude at the corresponding time and frequency. This two-dimensional image, known as a spectrogram, clearly displays the evolution of the signal over time and frequency. By transforming one-dimensional time-series signals into two-dimensional time-frequency images using STFT, the model can better understand and analyze both the frequency and time-domain characteristics of the signals. This transformation provides rich information for subsequent detection tasks. As depicted in Figure 7, the time-frequency spectrogram obtained through STFT serves as the input for model training.
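Before a spectrogram matrix is used as an image input, its values are commonly rescaled. The sketch below converts a magnitude matrix to a decibel scale and normalizes it to the range [0, 1]. The decibel conversion, the min-max normalization, and the function name `spectrogram_to_image` are assumptions for illustration; the paper does not state which scaling was applied.

```python
import numpy as np


def spectrogram_to_image(magnitude, eps=1e-10):
    """Map a (freq, time) magnitude matrix to a [0, 1] image.

    Power is converted to decibels so that weak components remain
    visible, then min-max normalized. eps avoids log of zero.
    """
    power_db = 10.0 * np.log10(np.asarray(magnitude, dtype=float) ** 2 + eps)
    lo, hi = power_db.min(), power_db.max()
    if hi - lo < 1e-12:
        # A constant matrix carries no contrast; return an all-zero image.
        return np.zeros_like(power_db)
    return (power_db - lo) / (hi - lo)


# Hypothetical magnitude matrix: 129 frequency bins by 15 time frames.
rng = np.random.default_rng(0)
magnitude = np.abs(rng.standard_normal((129, 15)))
image = spectrogram_to_image(magnitude)
print(image.shape)
```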

5. Conclusions

To overcome the limitations of traditional data-driven and model-driven methods in compressor fault detection, a novel deep feature learning framework, SSG-Net, has been introduced. By incorporating STFT, Swin Transformer, Shallow ResNet, and the GAM global attention mechanism, this framework effectively captures both time-frequency and local spatial features, significantly enhancing fault signal recognition. Moreover, the Swin Transformer’s multi-scale feature extraction, when combined with the GAM mechanism’s global attention, substantially improves the model’s robustness and accelerates its convergence, enabling highly efficient compressor fault detection. Experimental results demonstrate that SSG-Net consistently surpasses existing models on both the scroll compressor dataset and a widely used public dataset, especially in terms of recognition accuracy and convergence speed. Consequently, these strengths establish SSG-Net as a highly competitive approach for practical applications.

In future research, the method’s robust feature extraction and generalization capabilities can be further leveraged to improve fault diagnosis across diverse scenarios, such as varying operating conditions, small-sample learning, and life prediction. However, the current approach can identify only the fault categories present in the training set and has a reduced ability to detect or manage unknown faults. To address these shortcomings and better align with the complexities of real-world applications, future studies should focus on testing the model under more challenging conditions, including noisy environments, cross-domain data, and imbalanced datasets.

Furthermore, to mitigate the challenges associated with small-sample learning, transfer learning and few-shot learning techniques should be explored. These approaches could enable the model to adapt swiftly to new working conditions or fault types using minimal data. Additionally, Generative Adversarial Networks (GANs) could be utilized to generate synthetic fault samples, enriching the training dataset and further enhancing the model’s generalization capabilities. By implementing these advancements, the method is expected to provide more comprehensive and reliable fault diagnosis, offering stronger support for equipment maintenance and fault management in complex and dynamic industrial environments.