3.1. Data Preprocessing

The goal of data preprocessing is to convert the original check-in records to the data, which can be calculated using the machine learning technique. Although lots of useful features exist (e.g., category and review of POI) in each check-in record, the crucial information including user ID, POI ID, check-in timestamps, and location (i.e., latitude and longitude) is considered as the input features to the following modules. Similar to the majority of previous research, in this paper, the technique of Word2Vec is applied to represent users and POIs using vectors that are capable of capturing the differences among users and POIs [

5]. Based on the mechanism of Word2Vec, each word which has a unique id ranged from 0 to the number of words in the bag will be converted to a specific vector according to the id. The vectors, which are the embeddings representing the corresponding words, are usually initialized based on the Gaussian distribution and updated iteratively within the training processing. Similar to Word2Vec, the users and POIs are given unique ids that link to the corresponding embeddings (i.e., vectors) where the User/POI embeddings will be updated within the processing of fitting individual sequential check-ins. In general, the vectors are stored in a matrix, and the line number of the matrix is utilized to extract the corresponding vector (i.e., the representation of the user or POI). Therefore, in this paper, each user or POI is labeled as a unique number starting from 0 instead of using a complex character string (e.g., 4c4f4d8d24edc9b62a5a77bb). The individual temporal check-in records are described as a numerical sequence which is shown in

Figure 1.

Due to the consideration of

sequential check-ins and

geographical influence, the temporal check-ins and the related geographical location should be extracted from the original datasets. For the influence of

sequential check-ins, the timestamps are used to sort the corresponding check-in records for constructing the individual sequential check-ins of each user instead of being an explicit feature input to the model. Different from traditional recommender systems (i.e., book and movie), the next POI visit is not only dependent on the user preference but also considering the latest visited POIs. In other words, a user cannot visit multiple restaurants in a short time while it is normal for a user to go shopping among several malls. Therefore, the visiting pattern (i.e., the latest temporal visited POIs) not only describes the typical lifestyle but also represents individual preferences. To this end, the 1-stride fixed-size sliding window is utilized to split the individual check-in sequences into independent samples where the last POI of samples is the actual next visited POI. The previous POIs are considered as a visiting pattern. These samples considered as the real training instances will be transformed to the GAN. For the

geographical influence, the distance between the last visited POI and the next POI is also a significant reference for users to accept the recommendations. In other words, there exists a trade-off between the distance and the user preference if the time is limited. Therefore, we consider the latitude and longitude as a two-dimensional vector to capture the significance of distance and preference to a specific user. In our previous work [

30], we conduct experiments to show that, if the POI embedding is updated without the geographical coordinate information, the POI embeddings cannot be related to the geographical context. Therefore, the geographical influence is significant to the performance of POI recommendations.

Based on the aforementioned steps, the output of data preprocessing can be categorized into two parts: (1) the real training samples for the following adversarial learning; (2) the input of generator and discriminator. For the input of generator and discriminator, the features of each sample are integrated into an input hub which is a module to structuring data, including the user ID, temporal check-ins (i.e., visiting pattern), and geographical locations to the corresponding POI.

3.2. Generative Adversarial Network

The generative adversarial network is the crucial module of our proposed POI recommender system, where the goal of GAN is to relieve the sparsity of check-in data and improve the performance of POI recommendations. To relieving the sparsity of check-in data, the generator is capable of capturing the actual distribution of individual sequential check-in records and synthesizing plausible samples, which can supplement training data. For improving the performance of POI recommendations, compared to the single model, the adversarial learning between a generator and a discriminator can further promote the generalization ability of each model and improve the overall performance. In the following paragraphs, we will introduce the generator, the discriminator, and the adversarial learning in detail.

Generator:

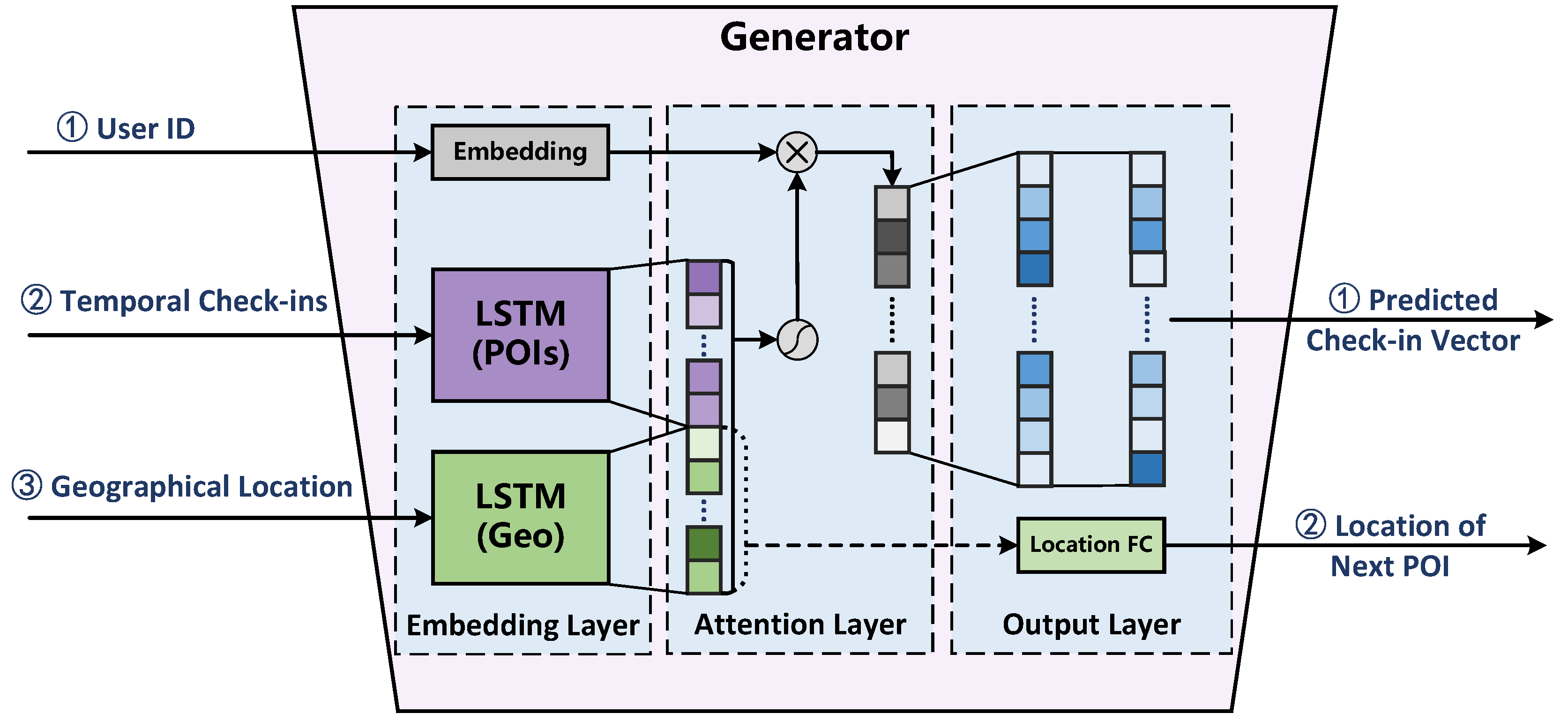

Figure 2 illustrates the structure of proposed generator in the framework where the generator comprises an embedding layer, an attention layer, and an output layer. The input of the generator consists of User ID, temporal check-ins, and geographical locations. The User ID is used to obtain the embedding of a specific user. The temporal check-ins are a list of visited POI IDs for the corresponding user using a three-size sliding window. The geographical locations are the latitudes and longitudes of the corresponding POIs in the temporal check-ins. In detail, the embedding layer is utilized to describe the set of users

, and represent the set of POIs

using the corresponding vectors where the embeddings describe the preferences of users and the characteristics of POIs. Furthermore, an LSTM is leveraged to fold the sequential check-ins

of user

while another LSTM is used to fit the corresponding sequential geographical locations

. Therefore, the output of the embedding layer is the embedding of a user (i.e.,

), the latent feature of corresponding temporal check-ins

, and the latent feature of corresponding geographical location

. The attention layer is leveraged to dynamically adjust the representation of user embeddings based on the latest temporal check-ins and geographical locations. In general, the user embedding

is capable of describing the preference of

i-th user based on her historical check-ins. However, in POI recommender systems, the final choice of a user is not only dependent on the preference but also relevant to the previously visited POI and the current geographical location. In addition, the preference is more specific to a user than the sequential check-ins and geographical locations. Therefore, instead of concatenating the user embedding and the latent features of temporal check-ins and geographical influence (i.e., contextual information), we utilize the latent features of contextual information to calculate the parameter of attention vector to finely tuning the user embedding that is capable of describing the actual preference based on the contexts. The output of the attention layer is based on the following equation:

The output layer (i.e., a fully connected layer) is leveraged to transform the latent features of the previous layer into meaningful outputs. In the proposed generator, the multi-task strategy is used to improve the performance of fitting actual distribution of observed check-in data. Besides the predicted check-in vector, which is calculated based on a two-fully-connected layer, the next POI location is predicted based on a two-fully-connected layer and the latent feature of geographical location

. The predicted check-in vector and the next POI location can be calculated based on the following equations, respectively:

where

is considered as a fake check-in preference (i.e., a fake sample) for the user

according to the current temporal check-ins and geographical location.

Discriminator:

Figure 3 illustrates the proposed discriminator in the framework where the discriminator also comprises an embedding layer, an attention layer, and an output layer. Similar to the structure of the proposed generator, the discriminator also exploits the user ID, the temporal check-ins, and the geographical location to generate the dynamical user preference (i.e., an embedding vector) based on the latest historical check-in records. However, the goal of the discriminator is to distinguish the real samples (i.e., actual check-in records) and the fake samples (i.e., predicted check-in vectors) for helping the generator improve the performance of capturing the actual user preference based on the contextual information. Therefore, besides the user ID, the temporal check-ins, the geographical location, and the check-in vectors, including the actual records and the generated ones, are transformed into a latent vector using a fully connected layer in the embedding layer. The transformed vector, which has the same dimension with the dynamical user preference, represents the user preference based on the current check-in vector. In the attention layer, the generated and transformed user preferences are concatenated into a long vector, which is considered as the output of the attention layer. For the output layer, the discriminator transforms the output of attention layer into a single value where the value is equal to 0 if the generator produces the check-in vector while the value is equal to 1 if the check-in vector is an actual check-in record:

where

is the output of attention layer in the discriminator.

Adversarial Learning: The core of the generative adversarial network is the adversarial game between the generator and the discriminator. In other words, promoting the quality of generated check-in records will improve the performance of distinguishing real or fake samples to the discriminator while the improvement of discriminator will also promote the performance of capturing the actual distribution of user preference to the generator. Therefore, different from traditional models, the generator and the discriminator have individual objective functions. The objective function of the generator consists of the prediction loss, the geographical location loss, and the generation loss. The prediction loss is calculated based on the following equation:

where the binary variables

is the actual check-in record on the

j-th POI in

V for the current user (i.e., User ID), where 0 means that the user has never been to the

j-th POI and 1 means that the user has been to the

j-th POI. The variables

are the predicted check-in record on the

j-th POI in

V. The geographical location loss is calculated as below:

where

and

are the normalized latitude and longitude of actual check-in POI while

and

are the normalized latitude and longitude of predicted check-in POI. The generation loss is based on the performance of distinguishing the generated check-in records to the discriminator:

where

represents the result of discriminator whether the predicted check-in vector

(i.e., the fake sample) is considered as a real or fake sample given a specific user

u and a temporal check-in records

. In general, the generator is updated based on

, which directly shows the quality of produced fake samples. In this paper, due to the sparsity of actual check-in vector based on an individual and specific temporal check-in records,

is used to accelerate the convergence of capturing the actual distribution to the generator. In addition, due to the significance of geographical influence in POI recommender systems,

is utilized to take the distance from the previous visited POI to the next recommended POI into account. Therefore, the objective function of the generator is calculated based on the following equation:

where

is the parameter of generator while

and

is the predefined weight of

and

, respectively.

The objective function of discriminator is similar to that of general binary classification, which can be calculated as below:

where

and

are the real and predicted check-in vector given a specific user

u and temporal check-in records

, respectively. Note that we exploit the general adversarial setting in this paper where the generator and the discriminator will be alternatively updated once based on the corresponding objective function until both of them converge.

The GANs are trained to be good at understanding the known information. In our proposed model, the user and POI embedding are used to capture the characteristics of each user and POI. Due to the sparsity of check-in records, it is hard for the model to train the embeddings sufficiently. Therefore, the GANs are useful for the model to capture the embeddings of users and POIs well. In addition, the GANs can be good at providing appropriate prediction. Even without the consideration of and , the generator in this paper is trained to provide the next POI. In detail, the input of the generator is the latest three visited POI (i.e., 3-size sliding window) which describe the current situation of the corresponding user, while the output of the generator is the next POI which would be interested for the user in the current situation (i.e., pattern). Therefore, the structure of GANs provides the effective strategy for our model to capture the user’s preference based on the contextual check-ins.