1. Introduction

To efficiently transmit high-quality video content over limited bandwidth, HEVC [1] was developed by the Joint Collaborative Team on Video Coding (JCT-VC), which was established by the ITU-T Video Coding Experts Group (VCEG) and the ISO/IEC Moving Picture Experts Group (MPEG). Because it includes many advanced techniques, such as advanced motion vector prediction modes for inter coding and various angular prediction modes for intra coding, it achieves very high coding efficiency. In particular, a rate-distortion (RD) optimization process [2] over the various intra prediction modes provides significantly higher coding performance. However, to determine the optimum intra prediction mode, the RD optimization process must run the full encoding chain, including transform, quantization, inverse quantization, inverse transform, and entropy coding, for each mode. After the encoding results are compared, the mode with the best RD performance is selected among all candidate modes. As a result, the mode decision process with RD optimization places a very high computational burden on HEVC encoders.
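The exhaustive RD-optimized decision described above can be sketched as follows. This is a minimal illustration, not the HEVC reference encoder: it assumes the usual Lagrangian cost J = D + λ·R, and the `encode_with_mode` callback, the `lam` value, and the toy per-mode numbers are hypothetical stand-ins for the full transform, quantization, reconstruction, and entropy coding chain.

```python
from typing import Callable, Iterable, Tuple


def rd_mode_decision(
    modes: Iterable[int],
    encode_with_mode: Callable[[int], Tuple[float, float]],
    lam: float,
) -> Tuple[int, float]:
    """Return (best_mode, best_cost) found by an exhaustive RD search.

    encode_with_mode(mode) is a hypothetical callback that runs the whole
    encoding chain for one mode and returns (distortion, rate_in_bits).
    """
    best_mode, best_cost = -1, float("inf")
    for mode in modes:
        distortion, rate = encode_with_mode(mode)  # the expensive step
        cost = distortion + lam * rate             # J = D + lambda * R
        if cost < best_cost:
            best_mode, best_cost = mode, cost
    return best_mode, best_cost


# Made-up results for three candidate modes: mode -> (distortion, bits).
toy_results = {0: (120.0, 10.0), 1: (100.0, 20.0), 26: (90.0, 60.0)}
best_mode, best_cost = rd_mode_decision(
    toy_results, toy_results.__getitem__, lam=1.0
)
```

Because `encode_with_mode` is called once per candidate, the cost of the decision grows linearly with the number of modes tested, which is why reducing the candidate list directly reduces encoding time.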

3D video coding uses a multiview video plus depth (MVD) format, which consists of a texture image and its corresponding depth map, to reduce 3D data size. Figure 1 shows an example of the MVD format in the Newspaper test sequence. A texture image represents the brightness of an object, whereas a depth map represents the distance between an object and the camera as a grey-scale image. In general, the depth map is used to generate virtual texture views at arbitrary viewpoints, based on a depth image-based rendering (DIBR) technique [3]. Building on the high coding performance of the HEVC standard, 3D-HEVC [4] was developed by the Joint Collaborative Team on 3D Video Coding Extension Development (JCT-3V) to efficiently compress this MVD format. High coding performance is obtained by exploiting the high correlation between the texture image and the depth map in MVD, but the additional depth coding greatly increases the encoding complexity. In particular, the intra prediction mode decision processes with RD optimization in both texture and depth coding are very complex. In addition, 3D-HEVC adopted several advanced prediction methods for efficient depth intra coding, such as a depth modeling mode (DMM) [5], generic segment-wise DC coding (SDC) [6], and a depth intra skip mode (DIS) [7], which add further complexity.

Many fast encoding algorithms have been developed to reduce the complexity of intra coding [8,9,10,11,12,13,14,15] and inter coding [16,17,18] in 3D-HEVC. In particular, there are two research categories for reducing the encoding complexity of the mode decision process in depth intra coding [8,9,10,11,12,13,14,15]. The first category optimizes the advanced depth prediction methods and adaptively skips them. Most fast algorithms in this category were developed to simplify DMM, because it requires much more complicated operations than SDC and DIS. For example, the optimum DMM wedgelet is determined through an exhaustive search process [5]. The fast algorithms proposed in [8,9] adaptively skip this full DMM search in flat and smooth regions. The fast algorithm proposed in [10] reduces the set of wedgelet candidates based on the corresponding texture information. In [11], some wedgelet partitions are skipped based on the result of rough mode decision (RMD). The fast algorithm proposed in [12] reduces the encoding complexity by employing a simplified edge detector. The gradient-based mode filter in [13] is applied to the borders of encoded blocks and determines the best positions to reduce the DMM-related mode decision process. The fast algorithm proposed in [14] selectively skips unnecessary DMM processes based on a simple edge classification.

The second category reduces the number of candidate modes in the original mode decision process, which comprise the planar, DC, and 33 angular prediction modes. Unlike the texture image, the depth map mainly contains homogeneous regions and sharp edges at object boundaries. In general, the homogeneous areas are compressed with the DC and planar modes. The DC mode uses an average value of adjacent pixels in the prediction, whereas the planar mode employs a weighted average. The sharp edges are usually compressed with the horizontal and vertical modes, which do not need interpolation filtering. Based on this observation, a fast conventional HEVC intra mode decision and adaptive DMM search method (FHEVCI+ADMMS), which was recently developed for fast intra mode decision [15], uses only the planar, DC, horizontal, and vertical modes in the mode decision process, instead of all 35 modes. In addition, when the optimum mode among these four modes is the planar or DC mode, DMM is skipped. Even though this method is very simple, it significantly reduces the encoding complexity by reducing the number of candidate modes in the mode decision process, with negligible coding loss. However, our analysis showed that there is still room for improvement in simplifying the original mode decision process.
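The FHEVCI+ADMMS rule summarized above can be sketched as follows. This is a hedged illustration of the description in this section, not the implementation from [15]: the `rd_cost` callback and the example cost values are hypothetical, and the mode indices (planar 0, DC 1, horizontal 10, vertical 26) follow the HEVC intra mode numbering.

```python
from typing import Callable, Tuple

PLANAR, DC, HORIZONTAL, VERTICAL = 0, 1, 10, 26  # HEVC intra mode indices
REDUCED_CANDIDATES = (PLANAR, DC, HORIZONTAL, VERTICAL)


def reduced_mode_decision(rd_cost: Callable[[int], float]) -> Tuple[int, bool]:
    """Pick the best of the four candidates and decide whether to search DMM.

    rd_cost(mode) is a hypothetical callback returning the RD cost of a mode.
    Returns (best_mode, run_dmm_search).
    """
    best_mode = min(REDUCED_CANDIDATES, key=rd_cost)
    # A planar or DC winner suggests a smooth block, so the DMM search is skipped.
    run_dmm_search = best_mode not in (PLANAR, DC)
    return best_mode, run_dmm_search


# Made-up RD costs for a flat block and for a block with a vertical edge.
flat_block = {PLANAR: 40.0, DC: 35.0, HORIZONTAL: 80.0, VERTICAL: 90.0}
edge_block = {PLANAR: 70.0, DC: 75.0, HORIZONTAL: 60.0, VERTICAL: 30.0}
flat_decision = reduced_mode_decision(flat_block.__getitem__)
edge_decision = reduced_mode_decision(edge_block.__getitem__)
```

In this sketch the RD cost is still evaluated four times per block, which is the remaining cost that a further reduction of the candidate list would target.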

In addition, since the advanced depth prediction methods, such as DMM, SDC, and DIS, each have a disabling flag, they can be turned off in real-time applications. In contrast, there is no flag that can adaptively enable or disable some of the original intra prediction modes. Hence, research on the second category is very important. In this paper, we performed a mode analysis on depth coding and then generated a mode pattern table based on the analysis. The proposed fast intra mode decision method adaptively reduces the number of candidate modes in the original mode decision process by employing the mode pattern table. Experimental results show that the proposed method outperforms the FHEVCI+ADMMS method in terms of complexity reduction.

This paper is organized as follows. Section 2 explains the original intra mode decision method in 3D-HEVC and the FHEVCI+ADMMS method in detail. Section 3 shows the results of the mode analysis and proposes our fast depth intra mode decision method. Section 4 discusses experimental results, including the coding performance and the encoding complexity. Section 5 summarizes this study.

4. Results

The proposed method was implemented on top of the reference software 3D-HTM 14.0. We used eight JCT-3V test sequences with resolutions of 1024 × 768 and 1920 × 1088.

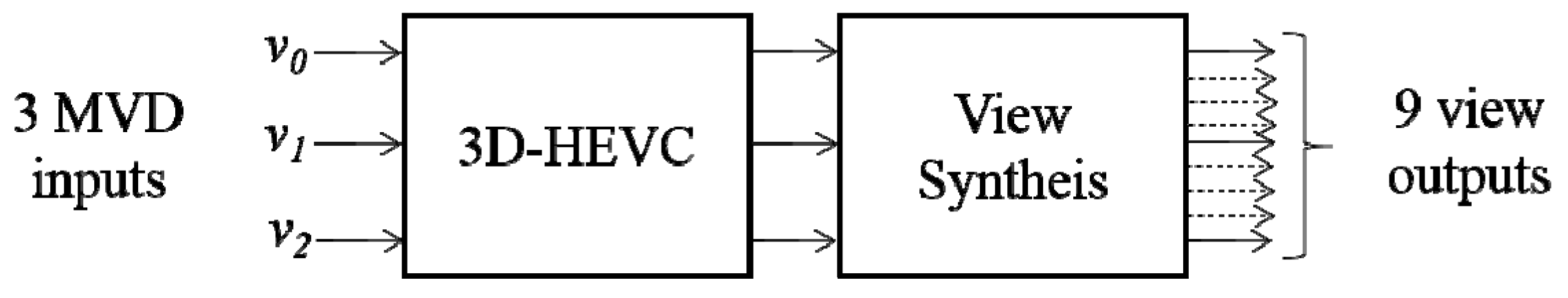

Table 3 shows the sequence information. The three view numbers represent the indexes of the left, center, and right views, and the MVD data for these views is input to 3D-HEVC, as displayed in Figure 8. 3D-HEVC compresses these three views with a P-I-P prediction structure, as shown in Figure 9. The center view is encoded as an I view, which is called the base view. This base view can be decoded with HEVC because it does not use inter-view prediction. On the other hand, both the left and right views are encoded as P views using inter-view prediction. Hence, they are able to use the already encoded views as references in the inter-view prediction. The arrows in Figure 9 show the prediction direction from the reference view to the target view to be compressed. The view synthesis generates six synthesized views by using the three decoded texture images and depth maps, based on a three-view configuration in 3D video coding. All coding parameters followed the all-intra setting in the common test conditions (CTC) of JCT-3V [20]. The coding performance was measured with the Bjontegaard delta bitrate (BDBR) and Bjontegaard delta PSNR (BDPSNR) [21], in percent and dB, respectively, and the complexity reduction (CR) was measured with the encoding time as follows:

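$$\mathrm{CR} = \frac{ET(\text{reference}) - ET(\text{proposed})}{ET(\text{reference})} \times 100\ (\%) \tag{1}$$

where ET(reference) and ET(proposed) represent the encoding times of the reference software and the proposed software, respectively.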
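As a small worked example of the complexity reduction measure, the sketch below assumes CR is the relative encoding time saving with respect to the reference software, expressed in percent. The function name and the timing values are illustrative only and are not measurements from this study.

```python
def complexity_reduction(et_reference: float, et_proposed: float) -> float:
    """Relative encoding time saving in percent (assumed form of CR)."""
    if et_reference <= 0:
        raise ValueError("reference encoding time must be positive")
    return (et_reference - et_proposed) / et_reference * 100.0


# Illustrative timings in seconds (not taken from the experiments).
cr_example = complexity_reduction(et_reference=1000.0, et_proposed=750.0)
```

The same function gives CR(O) when it is fed the overall texture-plus-depth encoding times and CR(D) when it is fed the depth encoding times only.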

Table 4 shows the overall performance of the FHEVCI+ADMMS method [15] and the proposed method. BDBR(D) denotes the performance in terms of the average PSNR of the three decoded views over the total coding bitrate of the texture images and the depth maps, and BDBR(S) denotes the performance in terms of the average PSNR of the six synthesized views over the total bitrate [20]. CR(O) was computed with the overall encoding time of the texture images and depth maps in Equation (1), whereas CR(D) was calculated with the depth encoding time only. Avg. indicates the average performance over all the test sequences. Both the FHEVCI+ADMMS method and the proposed method increase the bitrate by only about 0.1% and 0.6% on average, in terms of the decoded and synthesized PSNRs, respectively, which is a very small coding loss. In terms of complexity reduction, the proposed method reduces the encoding time by about 10 percentage points more than the FHEVCI+ADMMS method, on average. Specifically, the proposed method reduces the overall and depth encoding times by 34.42% and 39.27% on average, respectively, whereas the FHEVCI+ADMMS method reduces them by only 23.95% and 27.38%. In addition, the proposed method achieves better results than the FHEVCI+ADMMS method in all the test sequences. The FHEVCI+ADMMS method always evaluates four prediction modes (planar, DC, horizontal, and vertical) in the original mode decision process with RD optimization, whereas the proposed method tests only one or two modes, based on the mode pattern table. Hence, a higher encoding time reduction can be achieved.

Table 5 shows the detailed information of both methods. Bits and PSNR were measured with the total coding bitrate and the PSNR of the six synthesized views. Avg. indicates the BDBR and BDPSNR performance in each test sequence. As shown in Table 5, even though both methods use a small number of candidate modes in the mode decision, the coding degradation is very small at all the QPs. This means that the planar, DC, horizontal, and vertical modes are sufficient to determine the optimum mode for depth coding. It also demonstrates that the proposed method further reduces the number of candidate modes among these four modes without significant coding loss. In addition, Table 6 shows the mode decision accuracy of the proposed method, in percent, for QPs of 39 and 42. This accuracy indicates how often the optimum mode determined by the proposed method is the same as that determined by the original method, among the four modes. As shown in Table 6, the accuracy is very high for all the test sequences. This indicates that the number of candidate modes can be efficiently reduced based on the mode pattern table in Table 1.
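The mode decision accuracy discussed above can be illustrated with a short sketch. It assumes the accuracy is the percentage of blocks for which the fast method selects the same mode as the original decision; the counting unit, the function name, and the example mode lists are assumptions for illustration and are not data from Table 6.

```python
from typing import Sequence


def mode_decision_accuracy(
    original_modes: Sequence[int], fast_modes: Sequence[int]
) -> float:
    """Percentage of blocks where the fast decision matches the original one."""
    if len(original_modes) != len(fast_modes):
        raise ValueError("both sequences must cover the same blocks")
    if not original_modes:
        raise ValueError("at least one block is required")
    matches = sum(o == f for o, f in zip(original_modes, fast_modes))
    return 100.0 * matches / len(original_modes)


# Made-up per-block decisions (0 planar, 1 DC, 10 horizontal, 26 vertical).
original = [0, 1, 26, 10, 1, 0, 26, 0]
fast = [0, 1, 26, 1, 1, 0, 26, 0]
accuracy = mode_decision_accuracy(original, fast)
```

A mismatch does not necessarily cause a large coding loss, since the mode chosen instead may have an RD cost close to the optimum; the accuracy only measures agreement with the original decision.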