Improving the Reader’s Attention and Focus through an AI-Driven Interactive and User-Aware Virtual Assistant for Handheld Devices

Abstract

Featured Application

1. Introduction

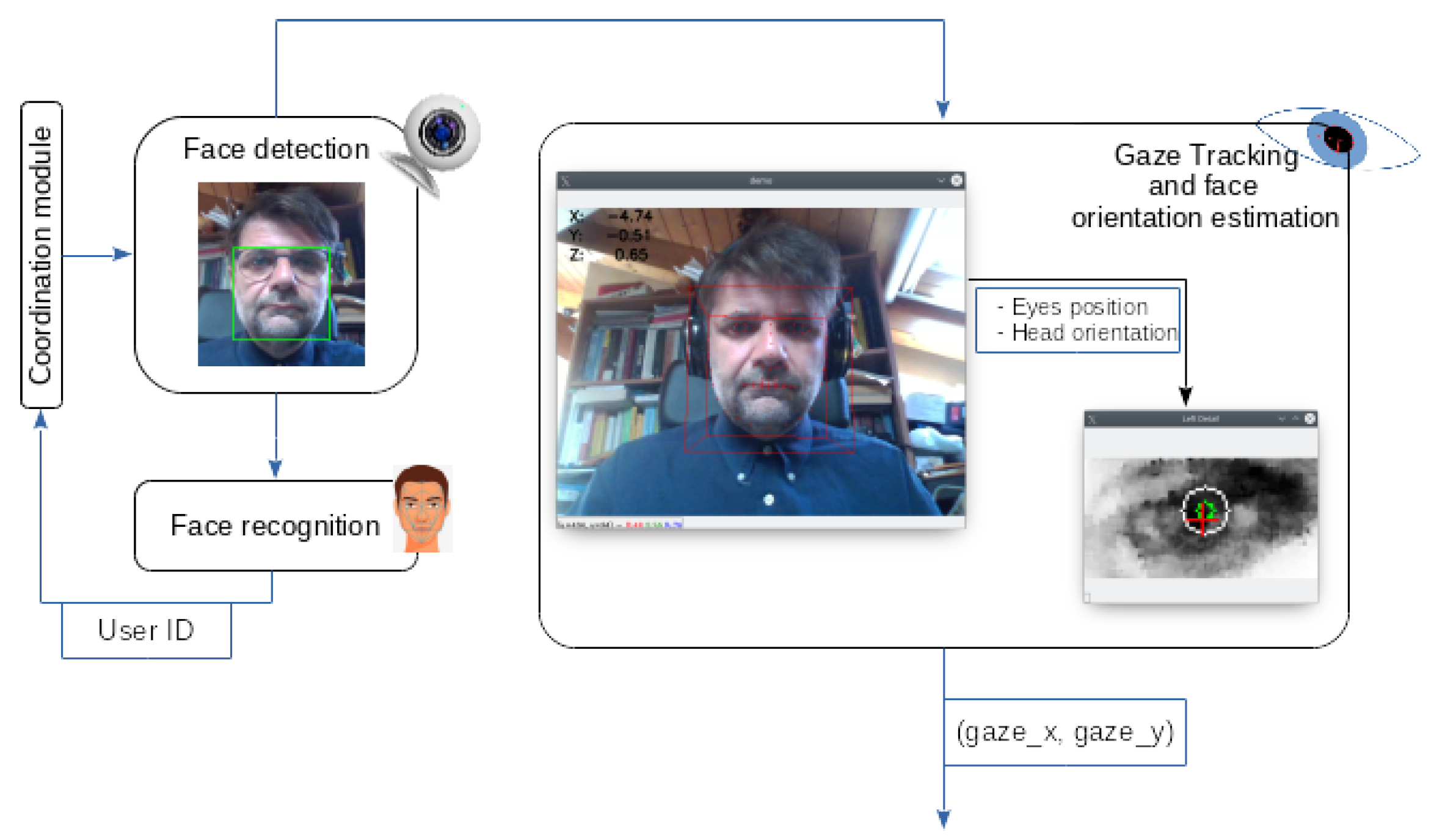

- We describe the modular software platform TFTH, which enables the visual observation of the user, the online analysis of the acquired data, the generation of different human-like gestural and spoken feedback, and the connection to other “smart assistant” services. The platform runs entirely on the device; that is, the processing needed to observe the user, analyze the data and produce the feedback is performed locally without accessing remote resources.

- We describe a coaching application, called the Mobile Interactive Coaching Agent (MICA), which leverages the services of the TFTH platform to monitor users while they read text or attend to another screen-based cognitive task, and helps them keep their attention on the task at hand.
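The observe, analyze and feedback cycle described in the two contributions above can be illustrated with a minimal sketch. It is not the TFTH or MICA implementation: the class name `AttentionCoach`, the per-frame boolean input, the threshold `max_off_frames` and the cue text are all hypothetical and chosen only for illustration. The sketch assumes that some local vision module already yields, for each camera frame, whether the user is looking at the screen.

```python
from dataclasses import dataclass, field
from typing import Callable, Iterable, List


@dataclass
class AttentionCoach:
    """Hypothetical coaching loop: cue the user after sustained inattention."""

    give_feedback: Callable[[str], None]  # e.g. local speech synthesis or a gesture
    max_off_frames: int = 30  # assumed threshold, not taken from the paper
    _off_frames: int = field(default=0, init=False)

    def on_frame(self, looking_at_screen: bool) -> None:
        """Update the inattention counter with one analyzed camera frame."""
        if looking_at_screen:
            self._off_frames = 0
            return
        self._off_frames += 1
        # Fire exactly once per episode, when the threshold is first reached.
        if self._off_frames == self.max_off_frames:
            self.give_feedback("Let's get back to the text.")


def run(frames: Iterable[bool], coach: AttentionCoach) -> None:
    """Feed a sequence of per-frame observations to the coach."""
    for looking in frames:
        coach.on_frame(looking)


# Usage: collect cues in a list instead of speaking them.
cues: List[str] = []
coach = AttentionCoach(give_feedback=cues.append, max_off_frames=3)
run([True, False, False, False, False, True, False, False], coach)
print(cues)
```

Passing the feedback channel in as a callable keeps the decision logic independent of how the cue is delivered, which mirrors the modular separation the platform description suggests. Everything in the sketch runs locally, with no remote calls.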

2. Related Work

- The proposed solution operates locally on a handheld device and does not require remote (e.g., cloud-based) services for its core functions, such as face recognition, face detection, eye tracking, speech recognition and speech synthesis;

- The proposed solution does not require specialized hardware, such as eye gaze trackers, stereo cameras or dedicated processors (e.g., Intel Movidius) for data analysis.

- Does the MICA improve the user’s visual attention when reading from a handheld digital device?

3. Materials and Methods

3.1. The TFTH Architecture

3.2. The Mobile Interactive Coaching Agent

3.3. Experimental Set-up

- The total reading time for the entire excerpt;

- The number of distraction events detected during the trial;

- The total reading time during which the user was distracted (i.e., the cumulative distraction time during the reading session).
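As an illustration of how the three measures listed above could be derived from a session log, the following sketch computes them from timestamped attention samples. The log format (a list of `(timestamp, attentive)` pairs), the function name `reading_metrics` and the rule that each interval is attributed to the state at its start are assumptions made for this example, not details taken from the experimental set-up.

```python
from typing import List, Tuple

Sample = Tuple[float, bool]  # (timestamp in seconds, user attentive?)


def reading_metrics(samples: List[Sample]) -> Tuple[float, int, float]:
    """Return (total reading time, distraction events, cumulative distraction time)."""
    if len(samples) < 2:
        return 0.0, 0, 0.0
    total_time = samples[-1][0] - samples[0][0]
    events = 0
    distracted_time = 0.0
    previously_attentive = True
    for (t0, attentive), (t1, _) in zip(samples, samples[1:]):
        if not attentive:
            # Each interval is attributed to the state observed at its start.
            distracted_time += t1 - t0
            if previously_attentive:
                events += 1  # a new distraction episode begins here
        previously_attentive = attentive
    return total_time, events, distracted_time


# Hypothetical log: two distraction episodes lasting 2 s and 1 s.
log = [(0.0, True), (1.0, True), (2.0, False), (3.0, False),
       (4.0, True), (5.0, False), (6.0, True)]
total, events, distracted = reading_metrics(log)
print(total, events, distracted)
```

Consecutive distracted samples are merged into a single event, so the event count reflects episodes rather than individual frames.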

4. Results

5. Discussion

6. Limitations and Future Research

7. Conclusions

Author Contributions

Funding

Informed Consent Statement

Data Availability Statement

Conflicts of Interest

| Device | CPU | RAM Capacity | Frame Rate (fps) |
|---|---|---|---|
| Tablet | A12X, 8 cores, 2.48 GHz | 4 GB | 24 |
| Tablet | Kirin 659, 8 cores, 2.36 GHz | 3 GB | 15 |
| Raspberry Pi 3B+ | Cortex A53, 4 cores, 1.4 GHz | 1 GB | 10 |
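To put the frame rates above in perspective, the short sketch below converts each rate into the average time available to process one frame. It assumes the frame-rate column is expressed in frames per second. The device labels and the helper `frame_budget_ms` are introduced here for illustration only.

```python
# Frame rates copied from the table; the unit (frames per second) is assumed.
frame_rates_fps = {
    "Tablet (A12X)": 24,
    "Tablet (Kirin 659)": 15,
    "Raspberry Pi 3B+": 10,
}


def frame_budget_ms(fps: float) -> float:
    """Average time per frame, in milliseconds, at a given frame rate."""
    return 1000.0 / fps


budgets = {device: round(frame_budget_ms(fps), 1)
           for device, fps in frame_rates_fps.items()}
for device, budget in budgets.items():
    print(f"{device}: about {budget} ms per frame")
```

Under this assumption, the slowest device listed processes a frame roughly every 100 ms on average.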

While reading, the MICA made you more: □ Distracted □ Focused □ Indifferent

| Distracted | Focused | Indifferent |
|---|---|---|
| 0% | 90% | 10% |

© 2022 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Iannizzotto, G.; Nucita, A.; Lo Bello, L. Improving the Reader’s Attention and Focus through an AI-Driven Interactive and User-Aware Virtual Assistant for Handheld Devices. Appl. Syst. Innov. 2022, 5, 92. https://doi.org/10.3390/asi5050092
