1. Introduction

Activity recognition is a vital technology that has been used, or has the potential to be used, in a wide range of applications, including transportation mode recognition [1], indoor positioning, navigation, location-based services, health monitoring, context-aware behaviors, targeted advertising, and mobile social networks [2]. Recent years have witnessed a significant increase in the variety of consumer devices, which are equipped not only with traditional sensors such as GPS, camera, Wi-Fi, and Bluetooth but also with newer sensors such as the accelerometer, gyroscope, and barometer. These sensors can capture the intensity and duration of an activity and can even sense the activity context. This can help consumers assess their activity levels and change their behavior to keep fit and healthy.

Equipped with a variety of sensors, smartphones are more attractive for activity recognition than on-body devices because they are ubiquitous, easy to use, and do not disturb users’ normal activities [1]. The use of these built-in smartphone sensors to sense user activity has been explored in the literature. GPS has been widely used for transportation mode classification and daily movement inference [3,4]. It provides relatively fine-grained location information and the speed of movement, making it possible to infer whether a user is driving or walking. Combined with a street graph or map, higher-level activities can be inferred. Sohn et al. [5] demonstrated the feasibility of using coarse-grained GSM data to recognize high-level user activities. By utilizing the fluctuation information between the radio and the cell tower, it is possible to distinguish whether a person is driving, walking, or remaining in one place. Similar to GSM radio, Wi-Fi and Bluetooth radios can also be applied to activity recognition [6,7]. The microphone and camera are useful for activity recognition because they offer acoustic and visual information, respectively [8]. Recently, there has been significant interest in using accelerometer data for activity recognition [9,10,11], mainly because the accelerometer works both indoors and outdoors and needs no extra infrastructure. Similarly, the gyroscope and magnetometer can enhance accelerometer-based activity recognition by detecting changes in the smartphone’s orientation [12,13]. It is expected that, in the near future, more types of sensors (e.g., air quality sensors) will be integrated into smartphones, providing richer context information.

A key component of activity recognition is the classification algorithm. Lee et al. [11] used hierarchical artificial neural networks (ANNs) to recognize six daily activities (lying, standing, walking, going upstairs, going downstairs, and driving) from accelerometer signals. Lara et al. [14] developed a system called Centinela that recognizes five activities (walking, running, sitting, ascending, and descending) by combining acceleration data with vital signs. They showed that an accuracy of up to 95.7% can be reached by using the additive logistic regression algorithm. Zhu and Sheng [15] proposed a two-step approach that fuses motion data and location information. In the first step, two neural networks were used to classify basic activities, followed by a hidden Markov model to account for sequential constraints. Then, Bayes’ theorem was used to fuse location information and update the activities classified from the motion data. Kouris and Koutsouris [16] compared functional trees, naive Bayes, support vector machines, and C4.5 for physical activity recognition (standing, walking, jogging, running, cycling, and stairs) and concluded that functional trees outperformed the other classifiers. Pei et al. [12] used the least-squares support vector machine to recognize eight common motion states (static, standing with hand swinging, normal walking while holding the phone in hand, normal walking with hand swinging, fast walking, U-turning, going upstairs, and going downstairs). Lester et al. [17] presented a hybrid approach that combines generative and discriminative techniques to recognize different activities (e.g., sitting, standing, walking, jogging, riding a bicycle, and driving a car) by using wearable multi-sensor boards. Hidden Markov models were used to capture the temporal regularities and smoothness of activities. Hu et al. [18] presented a framework that uses skip-chain conditional random fields (CRFs) to recognize concurrent and interleaving activities. Liao et al. [19] applied hierarchical CRFs to extract and label a person’s activities and significant places based on GPS data and high-level context information.

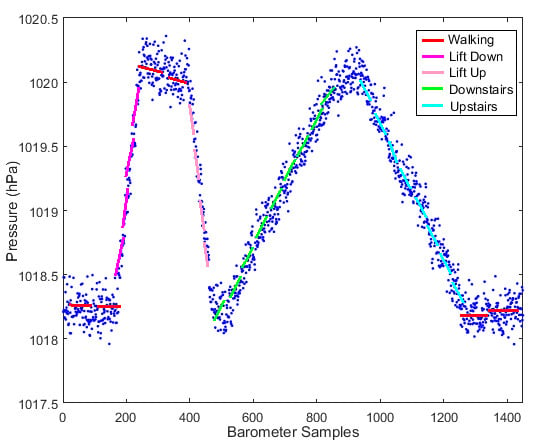

This study focuses on locomotion activity (also known as motion state) recognition. Although much research has been done in this field, some critical issues still need to be explored. Most existing motion-based recognition methods use only accelerometers and extract features from accelerometer readings. However, when only acceleration-based features are used, it is difficult to differentiate vertical motion states from horizontal ones. This is especially true for user-independent classification. Generally, the acceleration characteristics of Walking and Upstairs/Downstairs differ for the same person but may be very similar across different users. The advent of barometers built into smartphones promises an effective solution to this problem. Barometer data have been used for floor-level indoor positioning in [20,21]. In this paper, we investigate the use of barometer data for motion state recognition. This paper makes two main contributions: first, we propose a novel feature, called the pressure derivative, obtained from barometer readings to distinguish vertical motion states in a user-independent manner; second, we develop a method that incorporates the motion state history into the classification of motion states and improves the classification performance.
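As a rough illustration of why a pressure-based feature can separate vertical from horizontal motion, the sketch below estimates the rate of change of barometric pressure over a short window as the slope of a least-squares line. The formal definition of the pressure derivative is given in Section 3 and may differ from this; the function name `pressure_slope`, the 1 Hz sampling rate, and the synthetic readings are assumptions made purely for illustration.

```python
import numpy as np

def pressure_slope(pressure_hpa, sample_rate_hz):
    """Least-squares slope of pressure over one window, in hPa per second."""
    p = np.asarray(pressure_hpa, dtype=float)
    t = np.arange(p.size) / sample_rate_hz  # sample times in seconds
    slope, _intercept = np.polyfit(t, p, 1)
    return slope

# Synthetic 5-sample windows at 1 Hz (illustrative values, not measured data).
level = [1013.25, 1013.25, 1013.25, 1013.25, 1013.25]     # moving on a flat floor
climbing = [1013.25, 1013.21, 1013.17, 1013.13, 1013.09]  # pressure falls while ascending

flat_slope = pressure_slope(level, 1.0)    # close to zero
up_slope = pressure_slope(climbing, 1.0)   # negative: pressure decreases with height
```

In this toy example the sign of the slope indicates the direction of vertical motion, while a slope near zero is consistent with horizontal motion or standing still, regardless of how the individual user walks.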

The remainder of this paper is organized as follows. Section 2 introduces the key steps of our motion state recognition algorithm and defines the motion states of interest. Section 3 presents the partitioning method, the definition of the proposed feature, and the sequential forward feature selection method based on the least-squares support vector machine (LS-SVM). In Section 4, six commonly used classifiers are briefly introduced, followed by a description of how motion state history and people’s motion characteristics can be used to improve classification accuracy. In Section 5, we evaluate the usefulness of the proposed feature and the motion state recognition accuracy with and without historical information, and then analyze the influence of window size and smartphone pose on the classification accuracy. Section 6 concludes this paper and discusses the limitations of the study.

2. Overview of Motion State Recognition

The main steps of motion state recognition are shown in Figure 1. First, a variety of sensor data are collected, which can be done by developing a device-specific program or by using a commercial application. The raw data are then filtered during the preprocessing phase to remove random noise. Next, partitioning divides the continuous stream of sensor data into smaller time segments from which statistical, time-domain, and frequency-domain features can be extracted. However, more features do not necessarily mean higher classification accuracy, so it is important to use effective methods (e.g., filters and wrappers) to select the most appropriate features. Once the relevant features have been selected, a classifier can be trained and then used to classify new unlabeled data.
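The partitioning and feature-extraction steps can be sketched as follows. This is a minimal illustration under stated assumptions, not the implementation used in this paper: the helper names `sliding_windows` and `window_features`, the 2 s window with 50% overlap, the 50 Hz sampling rate, and the three features (mean, standard deviation, dominant frequency) are all chosen only for the example.

```python
import numpy as np

def sliding_windows(signal, window_size, step):
    """Split a 1-D signal into fixed-length windows; a trailing partial window is dropped."""
    x = np.asarray(signal, dtype=float)
    starts = range(0, x.size - window_size + 1, step)
    return np.array([x[s:s + window_size] for s in starts])

def window_features(window, sample_rate_hz):
    """Return (mean, standard deviation, dominant frequency in Hz) for one window."""
    mean = window.mean()
    std = window.std()
    # Remove the mean so the DC component does not dominate the spectrum.
    spectrum = np.abs(np.fft.rfft(window - mean))
    freqs = np.fft.rfftfreq(window.size, d=1.0 / sample_rate_hz)
    dominant = freqs[np.argmax(spectrum)]
    return mean, std, dominant

# Synthetic accelerometer magnitude: gravity plus a 2 Hz oscillation sampled at 50 Hz.
fs = 50.0
t = np.arange(200) / fs                                   # 4 s of samples
acc = 9.81 + 1.5 * np.sin(2 * np.pi * 2.0 * t)

windows = sliding_windows(acc, window_size=100, step=50)  # 2 s windows, 50% overlap
features = np.array([window_features(w, fs) for w in windows])
```

Each row of `features` describes one window and could be passed to a feature-selection step and then to a classifier. The window size is a design parameter whose influence on accuracy is analyzed in Section 5.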

Figure 1. Flowchart of motion state recognition.

In this paper, we define seven types of motion states, namely Still, Walking, Running, Downstairs, Upstairs, DownElevator, and UpElevator, as shown in Table 1.

Table 1. Types of motion states.

| No. | Motion States | Definition |
|---|---|---|
| M1 | Still | The user carries a phone without any movement. |
| M2 | Walking | The user is walking with a phone. |
| M3 | Running | Horizontal running. |
| M4 | Downstairs | Going down stairs. |
| M5 | Upstairs | Going up stairs. |
| M6 | DownElevator | Taking an elevator downward. |
| M7 | UpElevator | Taking an elevator upward. |

Generally, the accelerometer outputs vary with the pose of the phone, leading to a large variance in the features even when the user remains in the same motion state. Therefore, we consider three common poses, as shown in Table 2.

Table 2. Pose types.

| No. | Poses | Definition |
|---|---|---|
| P1 | Pocket | The phone is put in the trouser pocket. |
| P2 | Holding | The user keeps a phone in his or her hand without swinging. |
| P3 | Swinging | The user moves with a phone swinging in his or her hand. |

6. Conclusions

We presented a method for user-independent motion state recognition using the sensors built into modern smartphones. A feature called the pressure derivative was proposed to distinguish vertical motion states from horizontal ones. We also showed that the performance of commonly used classifiers for motion state recognition can be further improved by considering state history and people’s motion characteristics. In addition, the influence of both the window size and the smartphone pose on the classification accuracy was analyzed.

However, several limitations remain. Although the proposed pressure derivative is useful for recognizing vertical motion states, there is a delay before Downstairs/Upstairs can be accurately recognized when users transition from the Walking state. The same holds when users transition from Still to DownElevator/UpElevator. This is because barometer readings become useful for vertical motion state classification only after users have traveled a certain vertical distance. As a result, the classification accuracies of vertical motion states are relatively low compared with those of horizontal motion states. This problem is expected to be addressed by considering information from other sensors or the environment. For instance, when a user enters an elevator, the Wi-Fi signal strength decreases significantly, which can be used to help judge whether the user is in an elevator. In addition, we do not consider less common poses. For example, a person in the Still state might swing the phone in his or her hand as in the Walking state. This kind of uncommon pose may be addressed by considering the change of Wi-Fi fingerprints when users stay in Wi-Fi-covered areas. Finally, state-to-state and pose-to-pose transitions are not considered in this study.
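The recognition delay for vertical motion states can be made concrete with a back-of-the-envelope calculation. The sketch below uses the standard international barometric formula to convert a pressure change into a height change and then into a time. The detectable pressure change of 0.1 hPa and the vertical speed of 0.3 m/s on stairs are hypothetical values chosen for illustration, not measurements from this study, and the function names are likewise assumptions.

```python
def pressure_to_altitude_m(pressure_hpa, sea_level_hpa=1013.25):
    """Altitude in metres from pressure via the international barometric formula."""
    return 44330.0 * (1.0 - (pressure_hpa / sea_level_hpa) ** (1.0 / 5.255))

def detection_delay_s(min_pressure_change_hpa, vertical_speed_m_s,
                      base_pressure_hpa=1013.25):
    """Time needed to climb far enough for the pressure to drop by the given amount."""
    # Height gained when the pressure falls by the minimum detectable change.
    height_m = (pressure_to_altitude_m(base_pressure_hpa - min_pressure_change_hpa)
                - pressure_to_altitude_m(base_pressure_hpa))
    return height_m / vertical_speed_m_s

# Hypothetical values: 0.1 hPa detectable change, 0.3 m/s vertical speed on stairs.
delay = detection_delay_s(min_pressure_change_hpa=0.1, vertical_speed_m_s=0.3)
```

Under these assumed values, the user must climb roughly 0.8 m, which takes a little under 3 s, before the pressure change becomes distinguishable. This is consistent with the delay described above, and a faster vertical speed (as in an elevator) would shorten it.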

In future work, we anticipate that much more complex activities like studying at home and shopping can be recognized so as to better understand people’s living behaviors and provide contextual services. We will also investigate the role of motion state recognition in improving localization accuracy in indoor environments.