1. Introduction

Detecting fire smoke at an early stage has drawn considerable attention recently because of its importance to social security and economic development. Conventional point smoke detectors are effective for indoor applications, but they have difficulty detecting smoke in large outdoor areas. This is because point detectors typically detect the presence of certain particles generated by smoke and fire through ionization [1], photometry [2], or smoke temperature [3,4]. They require close proximity to the fire and smoke, so they are not effective in open spaces. Because particles must reach the sensors to activate an alarm, many sensors are needed to cover a large area, which is not cost effective.

Video-based fire smoke detection using cameras is of great interest for large and open spaces [5]. Closed-circuit television (CCTV) surveillance systems are now widely installed in many public areas. These systems can provide early fire smoke detection if reliable fire detection software is installed. Gottuk et al. [6] test three commercially available video-based fire detection systems against conventional spot systems in a shipboard scenario, and the video-based systems are found to be more effective in flame detection. These systems are economically viable because CCTV cameras are already available for traffic monitoring [7] and surveillance [8] applications. Braovic et al. [9] propose an expert system for fast segmentation and classification of regions in natural landscape images that is suitable for real-time automatic wildfire monitoring and surveillance systems. In most outdoor scenarios, smoke is visible before fire. This motivates us to investigate detecting smoke, in the absence or presence of flame, from a single frame of video.

There are several technical challenges in video-based fire smoke detection. First, it is observed to be inferior to particle-sampling-based detectors in terms of false alarm rate, mainly because of the variability in smoke density, scene illumination and interfering objects. Second, smoke and fire are difficult to model, and most existing image processing methods do not characterize smoke well [10]. Current fire detection algorithms use static and motion information in video to detect flames [11,12,13,14,15]. Many efforts have been made to reduce the false alarm rate and the missed detection rate.

Table 1 shows some static and dynamic features used in these approaches. As shown, the most used features are color, texture, energy and dynamic features.

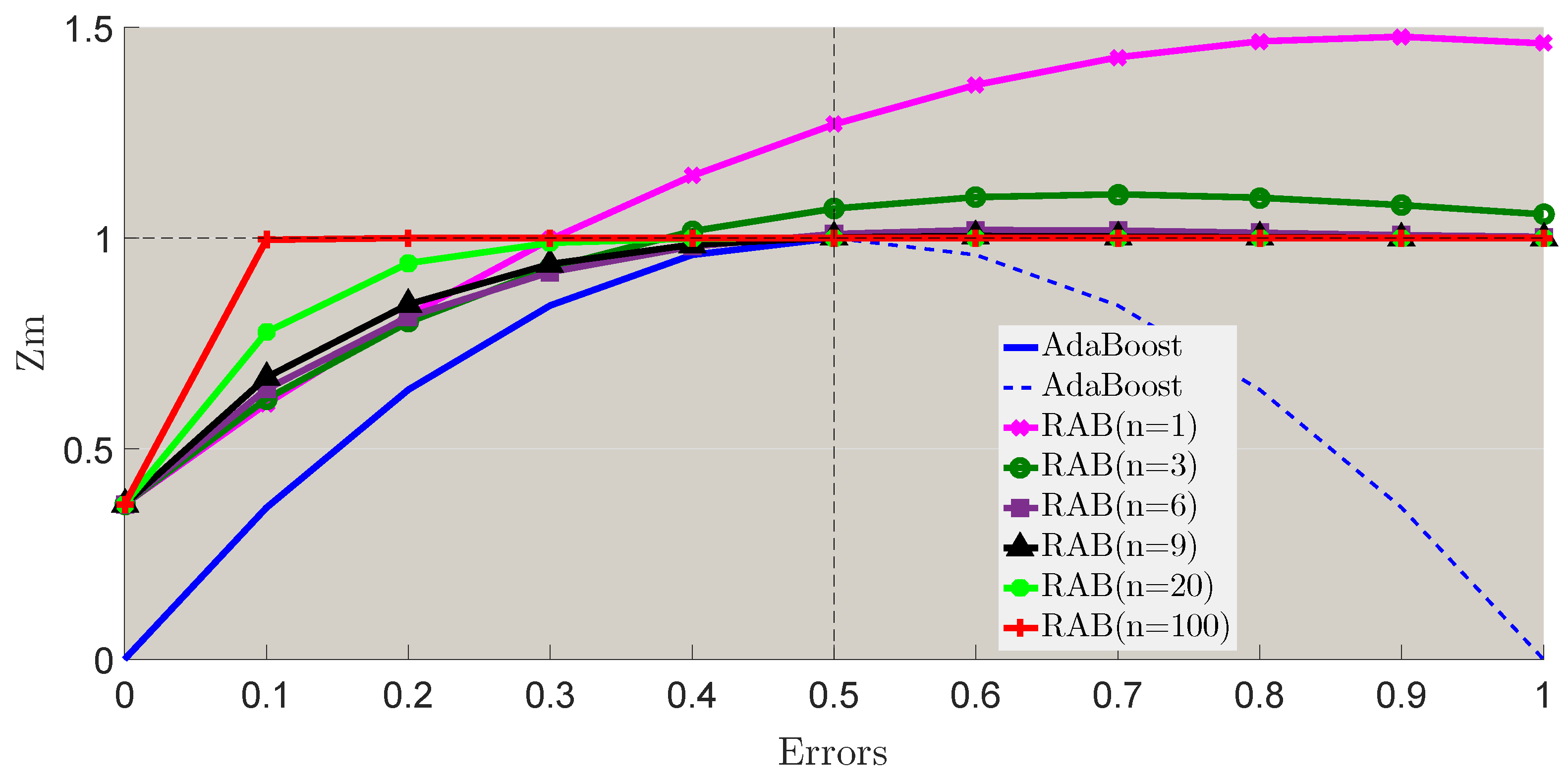

For classification, some researchers [15,16,18,19,20,21,22] use thresholds or parameters derived from analysis of the extracted features, which is less time-consuming but not adaptive in complex environments. Other researchers [30,31] use the well-known AdaBoost to train models and classify. AdaBoost-based component classifiers can mitigate the overfitting problem with relatively high precision. Each weak classifier among the component classifiers is a learner that returns a hypothesis which roughly outperforms random guessing. The weight update of the component classifiers at every step depends mainly on the latest errors:

$$\alpha_t = \frac{1}{2}\ln\left(\frac{1-\epsilon_t}{\epsilon_t}\right)$$

where $\alpha_t$ gives the weight of the $t$-th component classifier and $\epsilon_t$ is its error. The weights $\alpha_t$ should be positive, so the error $\epsilon_t$ is required to be less than 0.5. AdaBoost also requires that the error not be much less than 0.5, so that boosting remains meaningful for these cascaded classifiers. Therefore, a series of proper base classifiers must be selected and the best parameters set prudently for every base classifier, which is also time-consuming.
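The role of the 0.5 error bound can be illustrated numerically. The sketch below assumes the standard AdaBoost weight formula, $\alpha = \frac{1}{2}\ln((1-\epsilon)/\epsilon)$; the function name `adaboost_classifier_weight` is hypothetical and is not taken from the paper.

```python
import math


def adaboost_classifier_weight(error: float) -> float:
    """Weight of a component classifier under the standard AdaBoost rule.

    Assumption: alpha = 0.5 * ln((1 - error) / error), with `error` the
    weighted training error of the component classifier.
    """
    if not 0.0 < error < 1.0:
        raise ValueError("error must lie strictly between 0 and 1")
    return 0.5 * math.log((1.0 - error) / error)


if __name__ == "__main__":
    # The weight is positive only when the error is below 0.5.
    for err in (0.1, 0.3, 0.49, 0.5, 0.6):
        print(f"error={err:.2f} -> weight={adaboost_classifier_weight(err):+.3f}")
```

A classifier with error above 0.5 receives a negative weight, and one with error very close to 0 receives a very large weight that dominates the ensemble, which is why the choice of base classifier parameters matters.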

Deep learning has also been considered for fire smoke detection. In [32], a binary classifier is first trained from scratch using annotated patches, and learning and classification are then performed with a cascaded convolutional neural network (CNN) fire detector. Muhammad et al. [33] propose an adaptive prioritization mechanism for fire smoke detection, in which a CNN and the internet of multimedia things (IoMT) for disaster management are used in an early fire detection framework. Through deep learning, features can be learned automatically. However, these deep learning methods for fire smoke detection are still limited to learning static features. Wu et al. [34] combine a deep learning method with a conventional feature extraction method to recognize fire smoke areas. A CNN is trained in the Caffe framework to obtain a Caffemodel with static features. This deep learning approach works well to a certain extent, but in most situations the dynamic features are not trained directly.

As for fire smoke classification, thresholds or parameters from extracted features are usually used in classification which is less time-consuming. However, it is not adaptive to deal with complex environments [

15,

16,

18,

19,

20,

21,

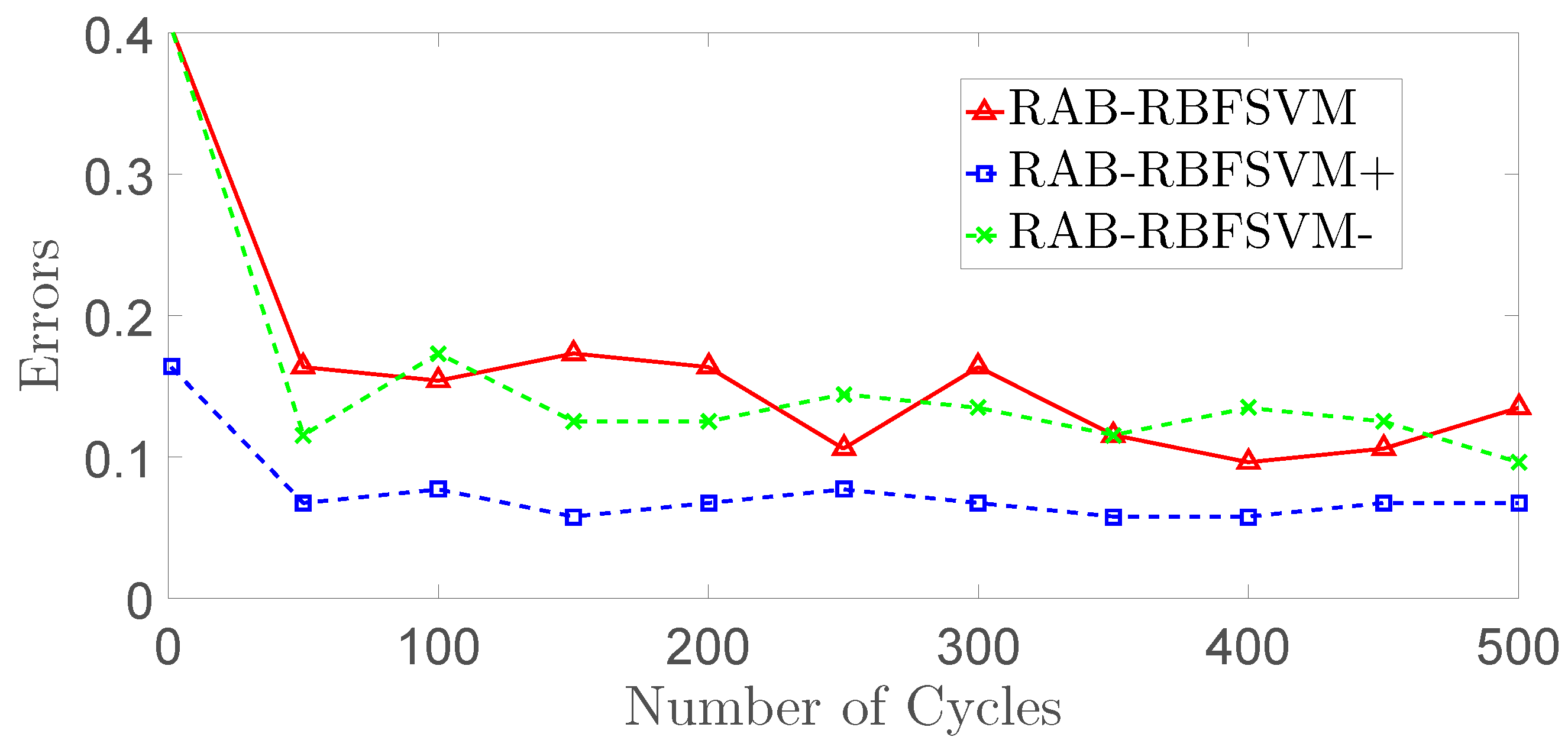

22]. SVM and AdaBoost have been considered for classifier in [

23,

24,

25,

26,

27,

28,

29]. SVM can achieve good accuracy with a sufficiently large training set. Some kernel functions can make it possible to solve the nonlinear problem in high dimensional space [

35,

36,

37,

38]. One popular kernel used in SVM is RBF (RBFSVM). RBFSVM determines the number and location of the centers and the weight values automatically [

39]. It uses a regularization parameter, C, to balance the model complexity and accuracy. Another parameter known as Gaussian width,

, can be used to avoid the over-fitting problem. However, it is difficult to select proper values of C and

. AdaBoost based component classifiers can solve the overfitting problem with relatively high precision. One weak classifier of component classifiers is a learner which can return a hypothesis that roughly outperform random guessing.
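To make the effect of the Gaussian width on an RBF kernel concrete, the following minimal sketch evaluates the kernel for one pair of points at several widths. It assumes the common convention $K(x, y) = \exp(-\|x - y\|^2 / (2\sigma^2))$; the paper's exact parameterization is not stated here, and `rbf_kernel` is a hypothetical helper name.

```python
import math
from typing import Sequence


def rbf_kernel(x: Sequence[float], y: Sequence[float], sigma: float) -> float:
    """Gaussian RBF kernel, assuming K = exp(-||x - y||^2 / (2 * sigma^2))."""
    if sigma <= 0.0:
        raise ValueError("sigma must be positive")
    if len(x) != len(y):
        raise ValueError("x and y must have the same length")
    squared_distance = sum((a - b) ** 2 for a, b in zip(x, y))
    return math.exp(-squared_distance / (2.0 * sigma ** 2))


if __name__ == "__main__":
    x, y = [0.0, 0.0], [1.0, 1.0]
    # A small width makes distant points look dissimilar (sharper, more
    # flexible decision boundaries); a large width smooths the boundary.
    for sigma in (0.5, 1.0, 2.0):
        print(f"sigma={sigma}: K={rbf_kernel(x, y, sigma):.4f}")
```

A very small width lets the classifier fit individual training samples, which tends toward over-fitting, while a very large width tends toward under-fitting; this is the trade-off that makes the width hard to choose.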

In this paper, static features (texture, wavelet, color, HOG and irregularity) and dynamic features (motion direction, change of motion direction and motion speed) are extracted and trained in different combinations. A robust AdaBoost (RAB) classifier is designed for detection. It overcomes the weight update problem and resolves the dilemma between high accuracy and over-fitting. RBFSVM (Radial Basis Function Support Vector Machine) [35] is chosen as the base classifier, with some improvements made to guarantee the validity and accuracy of the boosting function. An effective algorithm for locating the original fire position is also introduced for practical fire smoke detection.

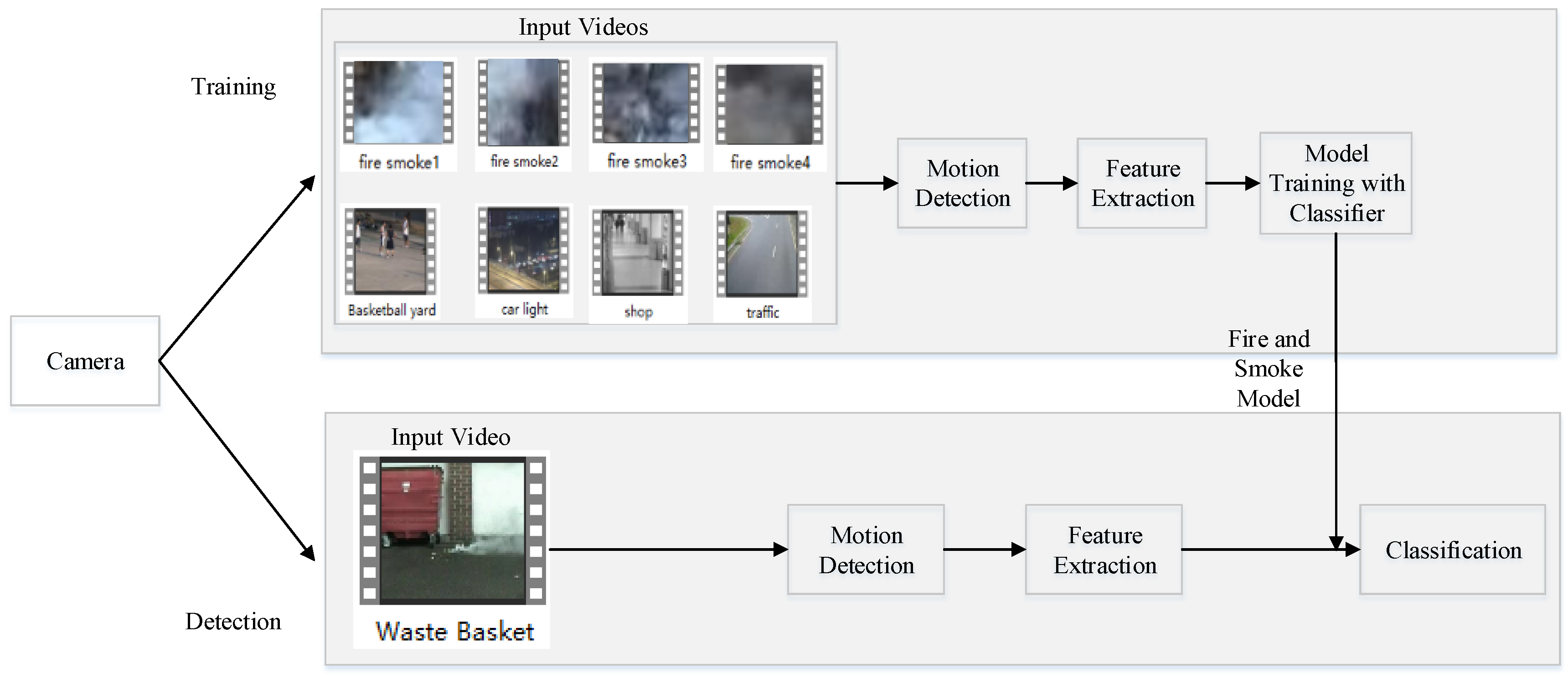

The remainder of this article is organized as follows. Video-based fire smoke detection using cameras is described in Section 2, including static feature extraction in Section 2.1 and dynamic feature extraction in Section 2.2. The proposed Robust AdaBoost classifier for fire smoke detection is presented in Section 3. The performance of the proposed method is evaluated by extensive experiments in Section 4. The paper is concluded in Section 5.

2. Features Extraction

Fire and smoke training data usually come from pre-collected image datasets [40]. Because this type of data is difficult to collect, these sets are limited, and most of them include other random information besides fire smoke, as shown in Figure 1. In [29,34], image datasets are used for static feature extraction, and threshold values are set for classifying dynamic features in real-time video detection. Although this approach works well to some extent, it is difficult to set a single threshold value that works for every situation.

In this paper, different smoke and non-smoke videos captured by cameras are used as training samples. The motion regions of all video sequences are used for static feature extraction, and dynamic features are extracted from the correlations among successive frames. The training videos are collected and preprocessed so that they contain only fire smoke as moving objects.

The Robust Orthonormal Subspace Learning (ROSL) [41] method is used to segment foregrounds from images. It decomposes the observed video matrix as

$$M = A + E \tag{2}$$

where $M$ denotes the observed matrix of the video, $A$ is the low-rank background matrix, and $E$ is the foreground matrix. This method represents $A$ under an ordinary orthonormal subspace $D$ with coefficients $\alpha$, that is, $A = D\alpha$. The dimension $k$ of the subspace is set as $k = \beta_1 r$, where $r$ is the rank of $A$ and $\beta_1$ is a constant with $\beta_1 > 1$. $E$ is the sparse matrix which represents the foregrounds. Foreground segmentation results are shown in Figure 2. Motion regions are selected frame by frame. To select the sample data that we need, motion regions that are tiny (possibly created by minute jitter) or too large are eliminated.
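The size-based elimination of motion regions can be sketched as a simple area filter. The bounding-box representation and the `min_area` and `max_area` thresholds below are illustrative assumptions; the text does not give concrete values.

```python
from typing import List, Tuple

# A motion region as a bounding box: (x, y, width, height) in pixels.
Region = Tuple[int, int, int, int]


def filter_motion_regions(
    regions: List[Region], min_area: int, max_area: int
) -> List[Region]:
    """Keep regions whose area lies within [min_area, max_area].

    Tiny regions (e.g. caused by camera jitter) and overly large regions
    are discarded. Both thresholds are hypothetical tuning parameters.
    """
    if min_area > max_area:
        raise ValueError("min_area must not exceed max_area")
    return [r for r in regions if min_area <= r[2] * r[3] <= max_area]


if __name__ == "__main__":
    candidates = [(0, 0, 2, 2), (10, 10, 30, 40), (0, 0, 640, 480)]
    print(filter_motion_regions(candidates, min_area=100, max_area=50_000))
```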

2.1. Static Features Extraction

Color, texture and energy in the wavelet domain are three commonly used static features, as shown in Table 1. Here we consider three other static features, namely Edge Orientation Histogram (EOH) [42], irregularity and sparsity, and three dynamic features, namely motion direction, change of motion direction and motion speed.

2.1.1. Color, Texture and Energy

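Color moments are measures that can be used to differentiate images. Stricker and Orengo [43] propose three central moments of an image color distribution: mean, standard deviation and skewness. Here we use the HSV (Hue, Saturation, and Value) color space and define the three moments for each of the three color channels. With $p_{ij}$ the value of the $i$th color channel at the $j$th image pixel and $N$ the number of pixels, the mean $E_i$, standard deviation $\sigma_i$ and skewness $s_i$ are:

$$E_i = \frac{1}{N}\sum_{j=1}^{N} p_{ij}, \qquad \sigma_i = \sqrt{\frac{1}{N}\sum_{j=1}^{N}\left(p_{ij} - E_i\right)^2}, \qquad s_i = \sqrt[3]{\frac{1}{N}\sum_{j=1}^{N}\left(p_{ij} - E_i\right)^3}$$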
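As an illustration, the three color moments of one channel can be computed as in the sketch below. It assumes the Stricker and Orengo convention, in which the standard deviation is the population form and the skewness is the signed cube root of the third central moment; `color_moments` is a hypothetical helper name, and the channel is passed as a flat list of pixel values.

```python
import math
from typing import Sequence, Tuple


def color_moments(channel: Sequence[float]) -> Tuple[float, float, float]:
    """Return (mean, standard deviation, skewness) of one color channel."""
    n = len(channel)
    if n == 0:
        raise ValueError("channel must contain at least one pixel")
    mean = sum(channel) / n
    second = sum((p - mean) ** 2 for p in channel) / n
    third = sum((p - mean) ** 3 for p in channel) / n
    std = math.sqrt(second)
    # Signed cube root, so that negative skew is preserved.
    skew = math.copysign(abs(third) ** (1.0 / 3.0), third)
    return mean, std, skew


if __name__ == "__main__":
    hue = [0.0, 0.0, 3.0]
    print(color_moments(hue))
```

Applying the function to each of the H, S and V channels yields a nine-dimensional color descriptor.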

The LBP feature has been used to capture spatial characteristics of an image, as reported in [22,44]. LBP features are extracted as the texture descriptor of smoke in this study. When smoke is present in a region, the edges of the background are blurred, so the high-frequency energy of this region decreases. A Gabor wavelet is used to obtain three high-frequency components in the horizontal (HL), vertical (LH) and diagonal (HH) directions. The energy of the image in a region is defined in terms of the high-frequency wavelet coefficients of each pixel in the region and the entire wavelet coefficients of the same pixel.

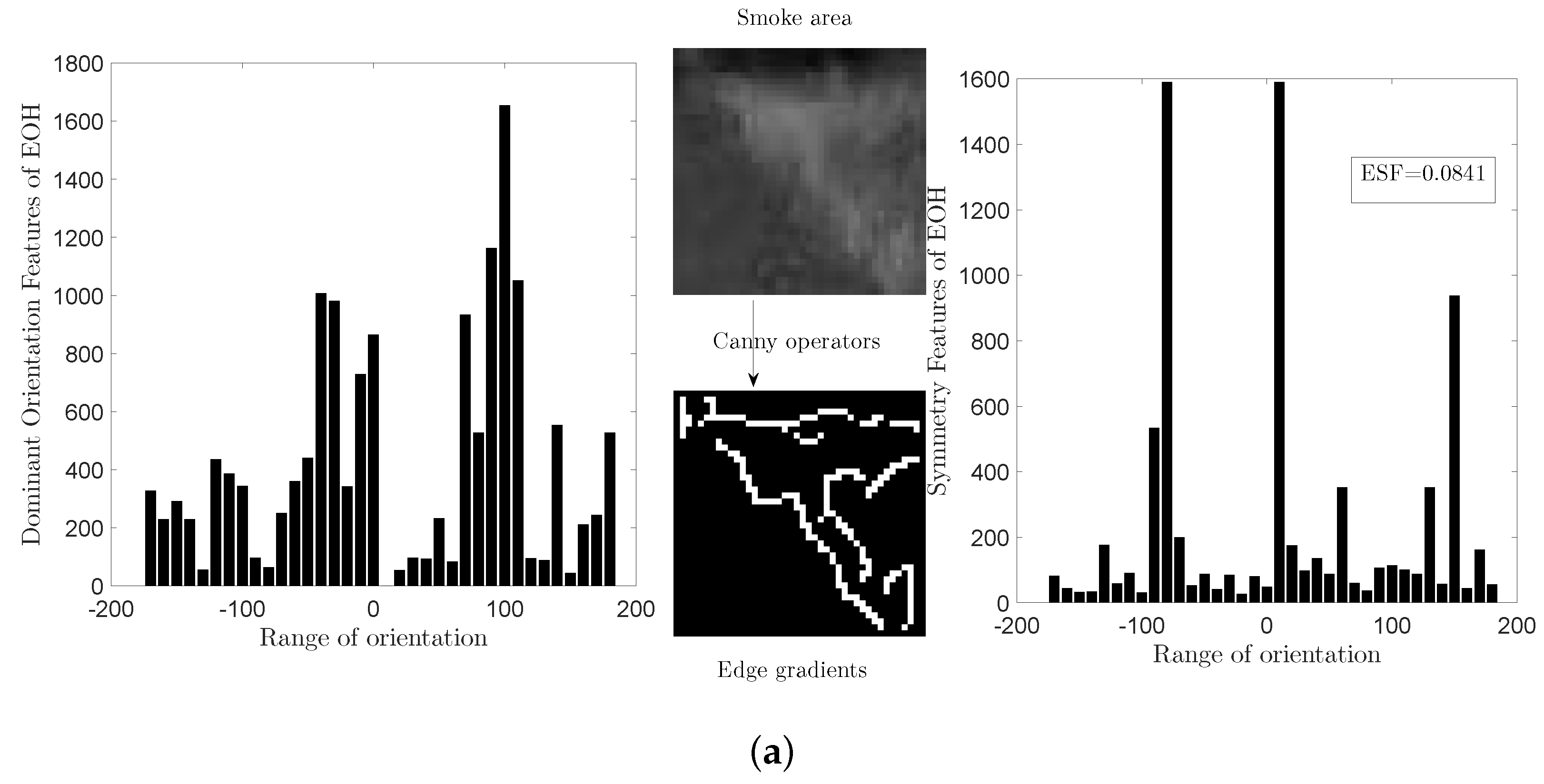

2.1.2. Edge Orientation Histogram

Gradients of an image play an important role in object detection. The Scale Invariant Feature Transform (SIFT) [45] achieves impressive performance on image registration and object detection. As a variant of SIFT, Histograms of Oriented Gradients (HOG) [46] are used for human detection. HOG can capture local gradient-orientation structures within an image, but it is computed on a dense, uniformly spaced grid over the image. Therefore, a high-dimensional feature vector is produced to describe each detection window, which is not suitable for real-time applications like fire smoke detection. Levi and Weiss [42] propose the Edge Orientation Histogram (EOH) method, which measures the response of linear edge detectors at different subareas of the input image.

In this paper, we use the Canny operator to generate the image edges and the gradient, which contains a horizontal component $G_x$ and a vertical component $G_y$. The edge gradient $(G_x, G_y)$ is then converted into polar coordinates:

$$m = \sqrt{G_x^2 + G_y^2}, \qquad \theta = \arctan\left(\frac{G_y}{G_x}\right)$$

where $m$ is the magnitude and $\theta$ denotes the orientation.
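The conversion of a gradient to polar form can be sketched as follows. The sketch uses `math.atan2` rather than a plain arctangent so that the quadrant is resolved and a zero horizontal component does not cause a division by zero; mapping the angle to degrees in [0, 360) is an assumption made here for convenience.

```python
import math
from typing import Tuple


def gradient_to_polar(gx: float, gy: float) -> Tuple[float, float]:
    """Convert a gradient (gx, gy) to (magnitude, orientation in degrees).

    The orientation is wrapped into [0, 360) as an illustrative convention.
    """
    magnitude = math.hypot(gx, gy)
    orientation = math.degrees(math.atan2(gy, gx)) % 360.0
    return magnitude, orientation


if __name__ == "__main__":
    print(gradient_to_polar(3.0, 4.0))
    print(gradient_to_polar(0.0, -1.0))
```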

The edge orientations are mapped to a 360° range divided into intervals of 10°. The numbers of edge orientations in these 36 angle ranges are counted using two angle threshold matrices. For each angle range $k$, the EOH of a region $R$ is obtained by summing all the gradient magnitudes whose orientations belong to this range:

$$E_k(R) = \sum_{\substack{(x,y)\in R \\ \theta(x,y)\,\in\,\text{range } k}} m(x,y)$$
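A minimal sketch of this accumulation is given below. It assumes orientations in degrees, 36 bins of 10° starting at 0°, and flat lists of per-pixel magnitudes and orientations; `edge_orientation_histogram` is a hypothetical name.

```python
from typing import List, Sequence


def edge_orientation_histogram(
    magnitudes: Sequence[float],
    orientations_deg: Sequence[float],
    bin_width: float = 10.0,
) -> List[float]:
    """Sum gradient magnitudes per orientation bin over one region."""
    if len(magnitudes) != len(orientations_deg):
        raise ValueError("magnitudes and orientations must have equal length")
    n_bins = int(round(360.0 / bin_width))
    histogram = [0.0] * n_bins
    for magnitude, theta in zip(magnitudes, orientations_deg):
        k = int((theta % 360.0) // bin_width)
        # Guard against floating-point wrap-around landing exactly on 360.
        k = min(k, n_bins - 1)
        histogram[k] += magnitude
    return histogram


if __name__ == "__main__":
    hist = edge_orientation_histogram([1.0, 2.0, 3.0], [5.0, 15.0, 9.9])
    print(hist[:3])
```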

The EOH features can be extracted with two different methods: Dominant Orientation Features (DOF) and Symmetry Features (SF) [42]. To find the dominant edge orientation in a specific area, rather than the ratio between two different orientations, we define a slightly different set of features that measures the ratio between a single orientation and the others:

$$B_k(R) = \frac{E_k(R)}{\sum_{i=1}^{36} E_i(R) + \epsilon} \tag{7}$$

where $E_k(R)$ is the EOH of region $R$ in angle range $k$, $B_k(R)$ records the ratio of every single orientation from the first to the 36th angle range, and $\epsilon$ is a tiny positive value to avoid division by zero. According to Equation (7), the dominant orientation is located in the angle range with the largest $B_k(R)$.
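The dominant orientation feature can be sketched as a normalization of the histogram followed by an arg-max. The sketch assumes the Levi and Weiss form, in which each bin is divided by the sum over all bins plus a small constant; the function names and the default `eps` are illustrative.

```python
from typing import List, Sequence


def dominant_orientation_features(
    histogram: Sequence[float], eps: float = 1e-6
) -> List[float]:
    """Ratio of each orientation bin to the total edge energy (plus eps)."""
    total = sum(histogram)
    return [value / (total + eps) for value in histogram]


def dominant_orientation_bin(histogram: Sequence[float], eps: float = 1e-6) -> int:
    """Index of the bin with the largest ratio."""
    if len(histogram) == 0:
        raise ValueError("histogram must not be empty")
    ratios = dominant_orientation_features(histogram, eps)
    return max(range(len(ratios)), key=ratios.__getitem__)


if __name__ == "__main__":
    hist = [0.0] * 36
    hist[3] = 5.0
    hist[10] = 1.0
    print(dominant_orientation_bin(hist))
```

The small constant keeps the ratios well defined for regions that contain no edges at all, in which case every ratio is zero.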

According to [42], we define the symmetry features for each angle range (Equation (8)) and then normalize them (Equation (9)). The features compare rectangles of the same size that are positioned at opposite sides of the symmetry axes. The symmetry features can be used not only to find symmetry but also to find places where symmetry is absent. From Equation (9), a small value means that the image is oriented more in the given angle range than in its opposite-side range.

The symmetry value of the entire detected region is then computed from these features. It is small when the detected motion region is symmetric.

Figure 3 shows the two different EOH features (DOF and SF) of smoke and car images. In this paper, we concatenate these two EOH features to characterize the edge features.

2.1.3. Irregularity and Sparsity

Fire smoke has no regular shape as it diffuses. This irregularity feature is defined in terms of the edge perimeter $c$ of the detected region and its area $s$.

The sparsity feature is mainly intended for light smoke, as described in [22]. We extract the sparse foreground with ROSL [41], where $E$ in Equation (2) is the foreground matrix. Figure 4 shows the extracted sparse smoke without backgrounds, that is, the foreground motion region.

The sparsity value is calculated as

$$\text{sparsity} = \frac{\|E_M\|_0}{|E_M|}$$

where $E_M$ is the foreground matrix of region $M$, $|E_M|$ is the size of $E_M$, and the $\ell_0$ norm counts the number of non-zero pixels in the foreground.
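The sparsity computation can be sketched as the fraction of non-zero foreground pixels in a region. Representing the region as a list of rows and dividing the non-zero count by the total pixel count are assumptions made for this sketch.

```python
from typing import Sequence


def sparsity(region: Sequence[Sequence[float]]) -> float:
    """Fraction of non-zero pixels in a foreground region.

    The numerator plays the role of the L0 norm (count of non-zero
    entries); the denominator is the number of pixels in the region.
    """
    total = sum(len(row) for row in region)
    if total == 0:
        raise ValueError("region must contain at least one pixel")
    non_zero = sum(1 for row in region for value in row if value != 0)
    return non_zero / total


if __name__ == "__main__":
    foreground = [[0, 12, 0, 0], [0, 0, 7, 0]]
    print(sparsity(foreground))
```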

2.2. Dynamic Features Extraction

Fire smoke has special dynamic features that provide information for recognition. Motion direction, change of direction and motion speed are extracted as three dynamic features. Before extracting these features, we need to find the locations of the detected motion region in consecutive frames. The centroid of the current motion region is calculated first. Because a moving object has a similar location and shape in two adjacent frames, its two motion regions have the maximum overlap ratio and the shortest distance.

Figure 5 shows the mechanism for finding the locations of two corresponding motion regions. In Figure 5, A, B and C are the centroids of the motion regions in three successive frames, and A1 and C1 are the two overlapping regions. Region A has the maximum overlap ratio with the currently detected region B among the regions of the previous frame, while region C has the maximum overlap ratio with region B among the regions of the next frame. The overlap ratio is calculated from the areas of the regions shown in the figure.
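Matching a region to its counterpart in a neighboring frame by maximum overlap can be sketched as below. The exact overlap formula is not recoverable from the text, so this sketch assumes intersection over union on axis-aligned bounding boxes; both that choice and the helper names are illustrative.

```python
from typing import Optional, Sequence, Tuple

# Axis-aligned box: (x_min, y_min, x_max, y_max).
Box = Tuple[float, float, float, float]


def overlap_ratio(a: Box, b: Box) -> float:
    """Intersection area divided by union area (an assumed definition)."""
    width = min(a[2], b[2]) - max(a[0], b[0])
    height = min(a[3], b[3]) - max(a[1], b[1])
    if width <= 0 or height <= 0:
        return 0.0
    intersection = width * height
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    return intersection / (area_a + area_b - intersection)


def best_match(current: Box, candidates: Sequence[Box]) -> Optional[int]:
    """Index of the candidate with maximum overlap, or None if none overlap."""
    if not candidates:
        return None
    scores = [overlap_ratio(current, c) for c in candidates]
    best = max(range(len(scores)), key=scores.__getitem__)
    return best if scores[best] > 0.0 else None


if __name__ == "__main__":
    region_b = (0.0, 0.0, 2.0, 2.0)
    previous_frame = [(5.0, 5.0, 6.0, 6.0), (1.0, 1.0, 3.0, 3.0)]
    print(best_match(region_b, previous_frame))
```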

From Figure 5, motion region B is detected moving from A to C over three consecutive frames. Hence, its motion direction can be calculated from the orientations of $\overrightarrow{AB}$ and $\overrightarrow{BC}$. Defining the horizontal component of the displacement as $d_x$ and its vertical component as $d_y$, the motion direction of region B is calculated as

$$\theta = \arctan\left(\frac{d_y}{d_x}\right)$$
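The direction computation can be sketched from two centroids. The sketch uses `math.atan2` so that all four quadrants are distinguished and a zero horizontal displacement is handled; which pair of centroids is used (for example A and C in Figure 5) and the [0, 360) degree convention are assumptions. In image coordinates the vertical axis usually points downward, so angles are measured accordingly.

```python
import math
from typing import Tuple

Point = Tuple[float, float]


def motion_direction(start: Point, end: Point) -> float:
    """Direction of the displacement from `start` to `end`, in degrees [0, 360)."""
    dx = end[0] - start[0]
    dy = end[1] - start[1]
    if dx == 0 and dy == 0:
        raise ValueError("direction is undefined for zero displacement")
    return math.degrees(math.atan2(dy, dx)) % 360.0


if __name__ == "__main__":
    centroid_a, centroid_c = (10.0, 20.0), (12.0, 14.0)
    print(motion_direction(centroid_a, centroid_c))
```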

As smoke moves slowly and its motion is not obvious between two adjacent frames, we use a statistical measurement: the motion direction feature of a motion region in a given frame is defined as the mean of the directions of the corresponding motion regions over a window of frames before and after it, instead of the direction between two adjacent frames. With the frame interval used here, the feature cannot be calculated for the first four and the last four frames. Each motion region can be processed by following the scheme above, and the change of motion direction in a frame is obtained in the same way.

Another dynamic feature is the motion speed, which is easily obtained from the distance between two corresponding motion regions in two consecutive frames and the frame rate. As with the motion direction, the motion speed is then calculated over the same window of frames.
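A minimal sketch of the speed feature follows. It assumes that the speed between two consecutive frames is the centroid distance multiplied by the frame rate (pixels per second), and that the windowed value is the plain mean of the per-frame speeds; both are assumptions, and the function names are hypothetical.

```python
import math
from typing import Sequence, Tuple

Point = Tuple[float, float]


def motion_speed(previous: Point, current: Point, frame_rate: float) -> float:
    """Centroid displacement per second between two consecutive frames."""
    if frame_rate <= 0:
        raise ValueError("frame_rate must be positive")
    distance = math.hypot(current[0] - previous[0], current[1] - previous[1])
    return distance * frame_rate


def mean_motion_speed(centroids: Sequence[Point], frame_rate: float) -> float:
    """Mean speed over a window of consecutive centroids."""
    if len(centroids) < 2:
        raise ValueError("at least two centroids are required")
    speeds = [
        motion_speed(a, b, frame_rate) for a, b in zip(centroids, centroids[1:])
    ]
    return sum(speeds) / len(speeds)


if __name__ == "__main__":
    track = [(0.0, 0.0), (3.0, 4.0), (6.0, 8.0)]
    print(mean_motion_speed(track, frame_rate=25.0))
```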

Combining different features can enhance robustness. According to [24], features can be concatenated to form a single feature vector in cascade fusion:

$$F = [f_1, f_2, \dots, f_n]$$

where $f_i$ represents each individual feature of one image.
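Cascade fusion by concatenation can be sketched in a few lines. The feature names and dimensions in the usage example are placeholders, not the values used in the paper.

```python
from typing import List, Sequence


def cascade_fusion(*features: Sequence[float]) -> List[float]:
    """Concatenate individual feature vectors into one feature vector."""
    fused: List[float] = []
    for feature in features:
        fused.extend(feature)
    return fused


if __name__ == "__main__":
    color = [0.4, 0.1, 0.02]    # placeholder color moments
    direction = [87.5]          # placeholder motion direction
    speed = [12.0]              # placeholder motion speed
    print(cascade_fusion(color, direction, speed))
```

Because the individual features have different scales, a per-dimension normalization is commonly applied before training a kernel-based classifier on the fused vector.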

Figure 6 shows the framework of fire smoke detection in this paper, including motion region detection, feature extraction, sample training and fire smoke classification. For the classifier, we propose a robust AdaBoost method in the following section.