1. Introduction

Planetary rovers have different design requirements and configuration standards depending on task type and function. As a key subsystem, the navigation system gives the rover the ability to sense its environment. By carrying external sensing modules (e.g., vision, lidar), the rover can acquire the structural features of the surrounding environment. In addition, internal sensing modules (e.g., inertial measurement unit, odometer) can be used to obtain the rover’s position relative to the environment. Finally, by fusing multiple sensing modes, the rover can establish a global perception of the environment. This approach has been validated and applied in practical missions such as Spirit and Opportunity [1,2].

As detection tasks become more complex, the detection time grows and the corresponding detection distance is also extended. This requires the rover to have accurate localization and environment reconstruction capabilities that provide accurate input to the planning system, which can then optimize the traverse path to avoid obstacles and maximize the value of the mission. Obtaining high-precision, large-scale terrain sensing information in an unknown, complex, and dynamic environment such as a planetary surface is therefore particularly important. It greatly affects the success or failure of the rover mission and has become a research hot spot in this field [3,4,5].

Research on terrain perception can be analyzed from three aspects: sensor selection, algorithms, and applications [6]. First, in terms of sensor selection, early systems relied on vision and lidar, and the field has gradually moved toward multisensor fusion. In 2007, Olson [7] achieved high-precision interframe matching in the vicinity of the rover by bundle adjustment over a large number of images taken in orbit and during the rover traverse, and realized long-distance terrain reconstruction using wide-baseline binocular vision, which offered a possible solution for long-distance rover navigation on Mars. In 2011, Barfoot’s [8] team at the University of Toronto applied SLAM (Simultaneous Localization and Mapping) technology to planetary exploration and achieved accurate global terrain estimation through lidar with odometry compensation. The sparse feature method and batch alignment algorithm proposed in that work effectively addressed the robustness problems of feature association and measurement outliers. Carrio et al. [9] proposed a SLAM terrain fusion estimation method based on visual odometry, an IMU, and wheel odometry. The innovation of the method is that it reconstructs the terrain through multisensor fusion and improves the estimation accuracy by predicting the non-systematic error caused by wheel-terrain interaction with an odometry error model based on a Gaussian process. Shaukat et al. [10] also proposed a fusion strategy for vision and lidar and verified its advantages in terms of range, flexibility, and precision.

Second, in terms of algorithms, methods have gradually evolved from triangulation and filtering-based optimization to machine learning and biologically inspired approaches. Li et al. [11] proposed a topographic estimate for the Spirit rover near Gusev Crater based on bundle adjustment and generated a digital elevation map based on orthomaps. In 2013, the MRPTA (Micro-Rover Platform with Tooling Arm) project initiated by the Canadian Space Agency [12] realized terrain construction with a minimal sensor configuration, proposed a local grid representation centered on the rover, and used a map manager to fuse and manage measurements taken at different times. All of these works helped to achieve a globally optimized terrain representation. Later, Bajpai et al. [13] from the University of Surrey proposed a monocular planetary simultaneous localization and mapping technique (PM-SLAM). The method is inspired by the biological detection of semantic features and proposes a visual saliency model. The model first gives a method for generating mixed marked features based on point descriptions and then uses these features to estimate the terrain state. Test results in multiple scenarios showed that the method effectively improves the robustness of visual perception. Meanwhile, with the continuing development of deep learning and convolutional neural networks, more and more such algorithms are being applied to rover missions [14].

Finally, in terms of applications, the focus has gradually evolved from basic terrain estimation to geographical environment modeling and the detection of scientific attributes. The overall trend still favors multisensor fusion terrain estimation that does not rely on external environmental conditions. In 2017, Gonzalez et al. [15], from MIT, proposed a machine learning-based slip detection method that relies only on the internal sensing module, to address the slip that rovers may encounter during planetary exploration. On the one hand, since the method uses only the existing internal sensing devices, the complexity of the system does not increase. On the other hand, it improves adaptability to lighting conditions. The authors also compared the detection performance of supervised and unsupervised learning methods during experimental verification. Recently, research on estimating the surface structure of planets has made progress and has gradually extended to the next stage, namely multiattribute terrain estimation. This new form of estimation is concerned not only with whether the ground is flat but also with fusing attributes such as hardness and material. Deng’s [16] team, from the Harbin Institute of Technology, proposed using the equivalent stiffness to characterize the pressure-bearing characteristics of the terrain and the friction angle to characterize its shear characteristics. They also showed that the wheel-soil interaction mechanics model and the contact model for calculating the force between the wheel and a rock are equivalent, and proposed a digital elevation map with physical properties.

In addition, terrain reconstruction is a research hot spot in the fields of ground robots and autonomous driving. As early as 2002, Professor Sebastian Thrun from Carnegie Mellon University gave a literature survey of robotic mapping and compared a variety of probabilistic implementation methods. This representative work has supported subsequent technical development in the field [17]. Bresson et al. [18] surveyed the development of SLAM technology and analyzed current trends in autonomous driving. Ye and Borenstein [19], from the University of Michigan, used 2D lidar to estimate the elevation map and certainty map of the terrain and proposed the certainty-assisted spatial filter, which effectively identifies corrupted pixels in elevation maps through physical constraints on motion and spatial continuity. After that, Wolf et al. [20] combined terrain reconstruction with classification and proposed three-dimensional terrain estimation based on a hidden Markov model. They also distinguished navigable from non-navigable areas, which provides more in-depth information for later path planning and, in a certain sense, semantic capability. Gingras et al. [21] performed unstructured surface reconstruction using a lidar with a 360-degree field of view. By analyzing the surface and extracting the navigable space, they represented the safely traversable region with a compressed irregular triangular mesh. This compact terrain representation reduces the computational complexity and ensures reliable operation of the platform.

Although the sensing modes and terrain reconstruction methods above have achieved good precision and remarkable results in practical applications, it is still necessary to consider how to maintain terrain perception after accidents (failure or partial failure) in uncertain external environments. Many factors may cause such accidents, for example, an excessive vibration frequency during the soft landing or changes in environmental conditions such as light and temperature. Therefore, how to build more robust terrain perception on top of the existing terrain reconstruction capability is the next difficult problem to be solved.

Based on the above analysis, this paper focuses on the situation in which the visual sensing unit cannot work normally because the illumination conditions change, and discusses a feasible method for accurate terrain reconstruction that uses active ranging information under motion uncertainty. As early as 1989, Hebert et al. [22] first proposed using the locus algorithm to construct terrain representations in spherical polar coordinate space, and realized effective registration of data from different detection positions based on feature matching and iconic matching. Building on this, Krotkov [23] considered the problem of shadow occlusion of the target. Then, in 2001, Whitaker [24] constructed a height function representation of the terrain from multiple dense range maps and gave an optimal terrain estimate by seeking the maximum a posteriori probability over the test set and the prior data set. His work also verified that results from multiple range maps are much better than those from any single range map, and that the method is robust to laser range finder noise. After that, Whitaker and Gregor [25] used noisy multi-viewpoint range information to estimate the surface configuration and gave the likelihood expression of the sensor model to construct the three-dimensional surface. By optimizing the likelihood, an unbiased estimator was obtained. Compared with earlier height measurement and point matching approaches, this method offered a new idea for terrain estimation. Cremean and Murray [26] from Caltech proposed a 2.5D digital elevation map construction method suitable for high-speed operation in highly unstructured outdoor environments. First, they presented a complete uncertainty analysis of the range sensor error. Then, they transformed each measurement into a probability density function through the uncertainty model and selected the update region near the median of that probability density function. Finally, the terrain estimate was continuously updated with a Kalman filter, which offered new ideas for local terrain estimation, update methods, and online implementation. Ishigami et al. [27] proposed a new fan-shaped reference grid for transforming range data into terrain, which yields an elevation map in cylindrical coordinates. This transformation gives a scaled terrain representation: the reconstruction is refined near the rover and becomes sparser with increasing distance from it. Building on this earlier research, Fankhauser [28], from ETH, proposed a probabilistic terrain estimation method for quadruped robots under uncertain localization, which also considers the influence of sensor measurement error and platform motion estimation error. This method achieves high-precision terrain estimation based on kinematic and inertial measurements. A three-dimensional covariance representation of the terrain was also proposed, and the error propagation relationship of the map update was derived. For the planetary rover environment, this paper focuses on a multipoint-distance-based, vibration/gyroscope-coupled elevation terrain construction method, so as to improve the robustness of the rover to environmental changes and to support subsequent motion planning and 3D-aware semantic field construction. The remainder of this paper is organized as follows.

Section 2 focuses on the terrain reconstruction method based on uncertainty analysis. Section 3 analyzes the depth distance sensor system and the noise error considered in the verification process. Section 4 compares and analyzes the rationality and correctness of the algorithms on the ROS simulation platform and in a real test environment. Finally, the conclusion is presented in Section 5.

2. Uncertainty-Based Terrain Mapping

2.1. Coordinate System Definition

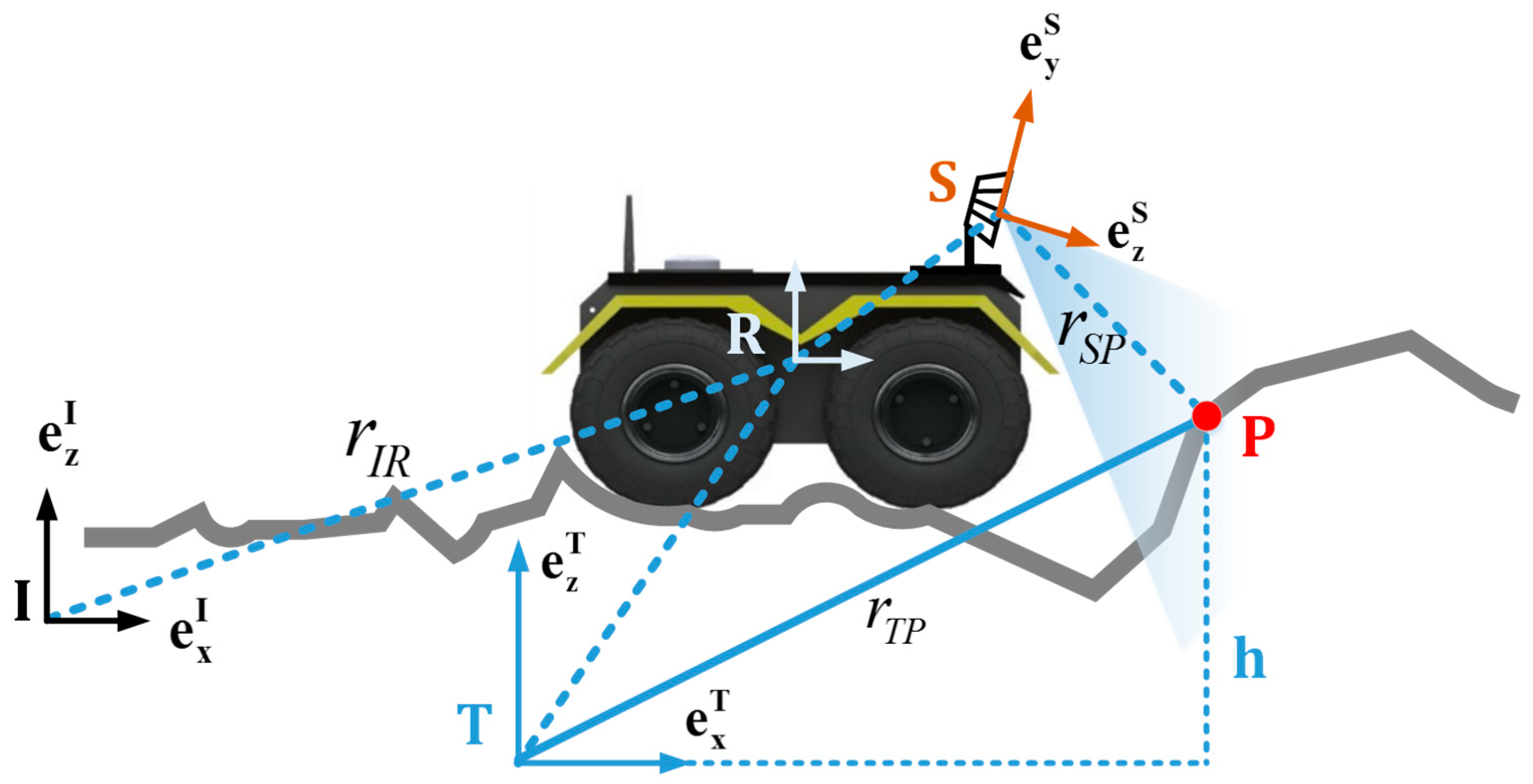

This paper defines four coordinate systems: the inertial coordinate system I, the terrain coordinate system T, the rover body coordinate system R, and the sensor coordinate system S. The inertial coordinate system is fixed in inertial space, the rover body coordinate system is fixed at the centroid of the rover, and the sensor coordinate system is fixed at the centroid of the sensor body, as shown in Figure 1. The transformation between coordinate systems is given by a transformation matrix consisting of a three-dimensional translation and a three-dimensional rotation ϕ. The sensor is rigidly mounted on the rover and calibrated before the task, so the transformation between the rover body coordinate system and the sensor coordinate system is known. Similarly, a transformation is defined between the inertial coordinate system and the rover body coordinate system. Since the pose of the rover relative to the inertial coordinate system is highly uncertain at different moments, the covariance matrix of the pose at each moment is given synchronously:

When the rover advances over unknown terrain, its three-dimensional attitude can be described by pitch, yaw, and roll. This paper assumes that the terrain is fixed relative to the inertial coordinate system. The transformation between the rover body and inertial coordinate systems can be described by the following formula.

where one term describes a rotation of the inertial coordinate system around the Z-axis into an intermediate coordinate system, and the other describes the pitch and roll transformations from the intermediate coordinate system to the rover body coordinate system.

To simplify the subsequent derivation and calculation, the Z-axis of the inertial coordinate system is set perpendicular to the ground, and the Z-axis of the terrain coordinate system is always parallel to it. The rotation between these two coordinate systems therefore has only one degree of freedom, namely yaw around the Z-axis. Correspondingly, the rotation between the terrain coordinate system and the rover body coordinate system has only the two degrees of freedom of pitch and roll, which reduces the dimensionality of the coordinate transformations.
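The yaw-only and pitch/roll-only split described above can be illustrated with a short sketch. It assumes a Z-Y-X (yaw, pitch, roll) Euler convention and right-handed frames, which the text does not state explicitly; the function names are hypothetical and chosen only for this illustration.

```python
import math

def rot_z(yaw):
    """Rotation about the Z-axis by the yaw angle (radians)."""
    c, s = math.cos(yaw), math.sin(yaw)
    return [[c, -s, 0.0], [s, c, 0.0], [0.0, 0.0, 1.0]]

def rot_y(pitch):
    """Rotation about the Y-axis by the pitch angle (radians)."""
    c, s = math.cos(pitch), math.sin(pitch)
    return [[c, 0.0, s], [0.0, 1.0, 0.0], [-s, 0.0, c]]

def rot_x(roll):
    """Rotation about the X-axis by the roll angle (radians)."""
    c, s = math.cos(roll), math.sin(roll)
    return [[1.0, 0.0, 0.0], [0.0, c, -s], [0.0, s, c]]

def matmul(a, b):
    """Product of two 3 x 3 matrices stored as nested lists."""
    return [[sum(a[i][k] * b[k][j] for k in range(3)) for j in range(3)]
            for i in range(3)]

def rotation_inertial_terrain(yaw):
    # Assumption: frames I and T differ by yaw only (one degree of freedom).
    return rot_z(yaw)

def rotation_terrain_rover(pitch, roll):
    # Assumption: frames T and R differ by pitch and roll only (two degrees of freedom).
    return matmul(rot_y(pitch), rot_x(roll))

def rotation_inertial_rover(yaw, pitch, roll):
    # Full attitude as the composition of the two reduced rotations.
    return matmul(rotation_inertial_terrain(yaw), rotation_terrain_rover(pitch, roll))
```

Under this convention the third column of `rotation_inertial_terrain(yaw)` is always (0, 0, 1), which is the statement that the Z-axes of the inertial and terrain coordinate systems stay parallel.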

2.2. Distance Information Associated with Terrain

Each measurement yields a different number of sampled points, depending on the sensing ability of the sensing unit and the task requirements. For simplicity, a single point is analyzed here. As shown in Figure 1, point P is the measured point, and its coordinates in the terrain coordinate system give the height estimate at that point. This paper approximates the height estimate by a Gaussian probability distribution with a mean and a variance. As can be seen from Figure 1, point P is measured in the sensor coordinate system, and the transformation from the sensor coordinate system to the terrain coordinate system gives the following formula.

where the height component is extracted from the three-dimensional coordinates of point P. The height estimate therefore depends directly on the transformation matrix and the sensor measurement, which correspond to the error sources analyzed above. Taking the first derivative of the above equation yields the Jacobian matrices corresponding to these errors:

The Jacobian matrix with respect to the sensor measurement:

The Jacobian matrix with respect to the rotation of the sensor coordinate system:

where the mapping corresponding to the rotation matrix is defined as detailed in the literature [29]. Substituting the Jacobian matrices into the following equation gives the propagated variance of the height estimate:

The first term is the propagated sensor noise, which is determined by the nature of the sensor itself. Its covariance is obtained from the noise model, which is detailed in the sensor model analysis of Section 3. The second term is the error propagated through the transformation between coordinate systems. Note that this transformation consists of two parts, translation and rotation. Because of the way the terrain coordinate system is defined in Section 2.1, the effect of translation can be ignored here.
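The two-term propagation described above can be sketched numerically. This is a minimal illustration under stated assumptions, not the paper's exact Jacobians: the height is taken as the third component of the transformed point, h = e_z^T (C r_S + t), so the sensor Jacobian is simply the third row of the rotation matrix C. The rotation Jacobian `J_rot` is a hypothetical input that is passed in rather than derived, and all numerical values are illustrative.

```python
def quad_form(jac, sigma):
    """Return jac * sigma * jac^T for a row vector jac and a square matrix sigma."""
    n = len(jac)
    return sum(jac[i] * sigma[i][j] * jac[j] for i in range(n) for j in range(n))

def height_variance(C, sigma_sensor, J_rot=None, sigma_rot=None):
    """First-order variance of the height estimate.

    C            : 3 x 3 rotation from the sensor frame to the terrain frame
    sigma_sensor : 3 x 3 covariance of the sensor measurement (sensor frame)
    J_rot        : optional 1 x 3 Jacobian of the height w.r.t. the rotation (assumed given)
    sigma_rot    : optional 3 x 3 covariance of the rotation
    """
    J_sensor = C[2]  # derivative of e_z^T (C r + t) with respect to r
    variance = quad_form(J_sensor, sigma_sensor)       # first term: sensor noise
    if J_rot is not None and sigma_rot is not None:
        variance += quad_form(J_rot, sigma_rot)        # second term: rotation uncertainty
    return variance

identity = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]
sensor_cov = [[0.01, 0.0, 0.0], [0.0, 0.01, 0.0], [0.0, 0.0, 0.04]]
print(height_variance(identity, sensor_cov))
```

With the sensor axes aligned to the terrain frame, only the range noise along Z contributes to the first term. Tilting the sensor mixes the lateral noise into the height variance through the third row of C.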

At this point, the noise of the sensor-based height measurement has been estimated, and each measurement update yields a corresponding height estimate. The next step is to fuse the newly obtained height measurement with the existing elevation map. Because the height at a map point has no complex dynamics, the state transition is trivial and only a measurement update is needed for that point. Every point in the terrain map that is measured is therefore updated with each sensor measurement, whereas a point that receives no update keeps its previous value. A fusion scheme based on Kalman filtering is given here.

First, the simplified discrete Kalman filter equations are given:

For rover terrain estimation, the state is the scalar height of each measurement point, so the filter quantities reduce to scalars: the state corresponds to the height estimate of the current measurement point, the observation covariance corresponds to the variance of that height estimate, the observation corresponds to the existing height value, and the error covariance corresponds to the associated variance. Substituting into Equations (8)–(10) gives the following.

Substituting Equation (11) into Equation (12), the following is obtained.

Then, substituting Equation (11) into Equation (13), the following is obtained.

Thus, the fusion of the newly measured height with the existing elevation map estimate is obtained, where the superscript k − 1 on the upper left denotes the estimate before the update and k denotes the updated estimate.
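The per-point fusion can be sketched as a scalar Kalman update. The sketch assumes the standard one-dimensional form with an identity observation model. The names `fuse_height` and `update_map`, and the dictionary-based map, are hypothetical choices for illustration and are not taken from the paper.

```python
def fuse_height(h_prior, var_prior, h_meas, var_meas):
    """Scalar Kalman update of one elevation cell.

    h_prior, var_prior : existing height estimate and its variance
    h_meas, var_meas   : new height measurement and its variance
    """
    gain = var_prior / (var_prior + var_meas)
    h_post = h_prior + gain * (h_meas - h_prior)
    var_post = (1.0 - gain) * var_prior
    return h_post, var_post

def update_map(elevation_map, measurements):
    """Fuse new measurements into a map stored as {cell: (height, variance)}.

    Cells without a new measurement keep their previous value.
    Cells observed for the first time are initialized from the measurement.
    """
    for cell, (h_meas, var_meas) in measurements.items():
        if cell in elevation_map:
            h_prior, var_prior = elevation_map[cell]
            elevation_map[cell] = fuse_height(h_prior, var_prior, h_meas, var_meas)
        else:
            elevation_map[cell] = (h_meas, var_meas)
    return elevation_map

terrain = {(0, 0): (1.0, 0.04), (0, 1): (0.5, 0.01)}
update_map(terrain, {(0, 0): (1.2, 0.04), (1, 1): (0.3, 0.09)})
print(terrain)
```

When the prior and the measurement have equal variance, the fused height is their average and the variance is halved, so repeated observations of a cell steadily reduce its uncertainty.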

2.3. Motion Information Associated with Terrain

In addition to the noise of the sensor itself, the motion of the rover also introduces errors. Unlike conventional terrain estimation, this paper attaches the terrain coordinate system to the moving rover body rather than to the inertial coordinate system, so the terrain is updated whenever the rover moves. As the previous section shows, the mean and variance of every point would in general have to be updated according to the motion uncertainty, which brings a heavy computational load. This section therefore extends the structure of the elevation map with a spatial covariance matrix for each point of the real terrain, so that the three-dimensional uncertainty of each point is available. According to the previous definition, the terrain coordinate system is associated with the current rover pose. When a point in the grid receives a new measurement update, its covariance is set as [30]

where the height estimation variance is given by the calculation in the previous section, and the other two values approximate the uncertainty in the horizontal directions. They are calculated by

where the calculation uses the side length of the square grid cell. Therefore, even if no sensor measurement update is received at the current time, the covariance of the point is still updated because of the relative motion of the rover between the two moments, which ensures the robustness of the system to motion noise. The terrain association based on motion information is derived below. As Figure 2 shows, the representation of the point in the terrain coordinate system at time k is given by the following equation.

Transforming it into the terrain coordinate system at time k + 1 gives

In this way, the value of the measurement point in the terrain coordinate system can be given at each moment. However, this means that the new estimate at each moment must be integrated with the previous result, which introduces errors and computational complexity. If the terrain coordinate systems at time k and time k + 1 can be kept consistent, this impact is avoided, and the terrain map data need not be added or deleted, which is convenient in practice. Assumptions can therefore be made on the translation and rotation, i.e.,

It can be seen from the above formula that the terrain coordinate systems at time k and time k + 1 share the same reference coordinate system, and Equation (20) can be expanded further.

Unifying it to the terrain coordinate system at time k + 1 gives

In the same way, the expansion of Equation (21) is obtained.

Unifying it to the terrain coordinate system at time k + 1 gives

Therefore, combining Equations (19), (23), and (25) leads to the following conclusion.

That is, the terrain coordinate systems at time k and time k + 1 are aligned: the value of point P in the terrain coordinate system at time k is equal to its value in the terrain coordinate system at time k + 1. For the dynamic process, the terrain representation reference is thus unified, which simplifies the registration and fusion of large amounts of data.

Combined with Equation (19), the covariance transfer relationship due to motion at different times can be obtained.

The position estimate of point P at time k + 1 is correlated with the estimate of point P at time k and with the transformation of the rover body coordinate system from time k to time k + 1, so the first derivative of Equation (19) can be used to obtain the corresponding Jacobian matrices.

Error propagation to time k + 1 caused by the observation at time k:

Combined with Equation (21), the following is obtained.

Error propagation caused by the translation of the rover body coordinate system from time k to time k + 1:

Error propagation caused by the rotation of the rover body coordinate system from time k to time k + 1:

These Jacobian matrices alone are not sufficient to solve the covariance at time k + 1. The uncertainty caused by the motion estimation error from k to k + 1, that is, the covariances of the translation and the rotation, must also be known. It is expressed as follows.

According to the previously defined coordinate system relationship, the Z-axis of the rover body coordinate system is aligned with the Z-axis of the inertial coordinate system, so the resulting attitude uncertainty depends only on the yaw angle. The covariance matrix of the rover pose at time k can therefore be obtained through dimensionality reduction, i.e.,

where the covariance is expressed with respect to the aligned rover body coordinate system.
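The motion-induced covariance transfer described in this section can be sketched as a sum of first-order terms of the form J Σ Jᵀ. The sketch below is illustrative only and makes several assumptions that are not taken from the paper: the point and translation Jacobians are identities (aligned frames), the rotation Jacobian is the small-angle cross-product matrix of the point, and the rotation covariance is reduced to yaw only, as stated above. All function names are hypothetical.

```python
def matmul(a, b):
    """Matrix product for nested-list matrices."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]

def transpose(a):
    return [list(col) for col in zip(*a)]

def congruence(jac, sigma):
    """Return jac * sigma * jac^T."""
    return matmul(matmul(jac, sigma), transpose(jac))

def add(a, b):
    return [[x + y for x, y in zip(ra, rb)] for ra, rb in zip(a, b)]

def skew(p):
    """Cross-product matrix of p, so that skew(p) * v equals p x v."""
    x, y, z = p
    return [[0.0, -z, y], [z, 0.0, -x], [-y, x, 0.0]]

IDENTITY = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]

def propagate_point_covariance(point, sigma_point, sigma_trans, var_yaw):
    """Covariance of a map point at time k + 1 from its covariance at time k.

    sigma_point : 3 x 3 covariance of the point at time k
    sigma_trans : 3 x 3 covariance of the rover translation from k to k + 1
    var_yaw     : variance of the yaw change from k to k + 1 (yaw-only attitude uncertainty)
    """
    sigma_rot = [[0.0, 0.0, 0.0], [0.0, 0.0, 0.0], [0.0, 0.0, var_yaw]]
    total = congruence(IDENTITY, sigma_point)                  # observation at time k
    total = add(total, congruence(IDENTITY, sigma_trans))      # translation uncertainty
    total = add(total, congruence(skew(point), sigma_rot))     # yaw uncertainty
    return total
```

Because only yaw is uncertain in this sketch, the rotation term inflates the horizontal variances in proportion to the squared horizontal distance of the point from the rover and leaves the height variance unchanged.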

2.4. Covariance Solving Based on 3D Vibration/Gyro Detection

A Gaussian random model is used to approximate the motion process. Since no external sensing unit is used for rover localization in this paper, a localization mode based on the triaxial vibration tactile sensing unit and the gyroscope is adopted. The covariances required in the previous section are derived on the basis of this mode.

2.4.1. Solving Position Information Based on Vibration and Gyroscope Information

The experiment uses the output of a three-axis vibration tactile sensor, namely the amplitude and frequency at the measurement point, which can be converted into an acceleration signal that serves as the input. The output of the single-axis gyroscope is the angular acceleration around the Z-axis. To reduce the error caused by nonlinearity, the sensor connection point is taken as the coordinate origin, and the interval between consecutive measurements is taken as the time step. So there is

Since the rover is relatively stable and slow during travel, its displacement change from time k to time k + 1 is obtained by

Similarly, based on the gyroscope input, the yaw angle change from k to k + 1 can be obtained, i.e.,
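The integration step from sensor inputs to displacement and yaw change can be sketched as follows. This is a simplified illustration under explicit assumptions: the acceleration is constant over the sampling interval, and the gyroscope reading has already been converted to a yaw rate. The function names and numerical values are hypothetical.

```python
def displacement_step(velocity, acceleration, dt):
    """Displacement and updated velocity over one interval dt.

    Assumes constant acceleration on each axis during the interval:
    displacement = v * dt + 0.5 * a * dt^2, new velocity = v + a * dt.
    """
    displacement = tuple(v * dt + 0.5 * a * dt * dt
                         for v, a in zip(velocity, acceleration))
    new_velocity = tuple(v + a * dt for v, a in zip(velocity, acceleration))
    return displacement, new_velocity

def yaw_step(yaw_rate, dt):
    """Yaw change over one interval, assuming a constant yaw rate."""
    return yaw_rate * dt

# One step from time k to time k + 1 with illustrative values.
step, velocity = displacement_step((0.1, 0.0, 0.0), (0.2, 0.0, 0.0), 0.5)
delta_yaw = yaw_step(0.1, 0.5)
print(step, velocity, delta_yaw)
```

A short interval keeps the constant-acceleration approximation reasonable for a slow, stable rover, which matches the motivation for choosing a small measurement interval.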

2.4.2. The Position Relationship between Time k and Time k + 1

In the actual sampling process, uncertainty is present, so a Gaussian noise vector is assumed; therefore

Substituting Equations (37) and (39) into (40), we have

Taking the first derivative of the above equation gives the Jacobian matrices with respect to the state and the noise.

The covariance transfer relationship can then be written as
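A covariance transfer built from a state Jacobian and a noise Jacobian commonly takes the first-order form P' = F P Fᵀ + G Q Gᵀ. The sketch below assumes that standard form and, purely for illustration, a one-axis position/velocity state driven by acceleration noise. The matrices F, G, and Q shown here are assumptions and are not the paper's matrices.

```python
def matmul(a, b):
    """Matrix product for nested-list matrices of compatible shapes."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]

def transpose(a):
    return [list(col) for col in zip(*a)]

def add(a, b):
    return [[x + y for x, y in zip(ra, rb)] for ra, rb in zip(a, b)]

def propagate_covariance(P, F, G, Q):
    """First-order covariance transfer: F P F^T + G Q G^T.

    P : state covariance at time k
    F : Jacobian of the motion model with respect to the state
    G : Jacobian of the motion model with respect to the noise
    Q : covariance of the Gaussian noise vector
    """
    state_term = matmul(matmul(F, P), transpose(F))
    noise_term = matmul(matmul(G, Q), transpose(G))
    return add(state_term, noise_term)

# Illustrative one-axis model: state = [position, velocity], noise = acceleration.
dt = 1.0
F = [[1.0, dt], [0.0, 1.0]]
G = [[0.5 * dt * dt], [dt]]
Q = [[0.04]]
P = [[0.01, 0.0], [0.0, 0.01]]
print(propagate_covariance(P, F, G, Q))
```

The first term carries the existing uncertainty forward through the motion model, and the second injects the new uncertainty contributed by the noisy input during the interval.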

In turn, the transformation of the rover from time k to time k + 1 can be obtained, that is,

At the same time, the displacement and rotation angle values are decomposed.

Substituting into Equation (45), the following equation is obtained.

Then, the corresponding covariance can be obtained.

As can be seen from Equation (44),

Substituting into Equation (49) gives

where

The transition of the rover from k to k + 1 is determined by translation and rotation, so the covariance can be given by

Therefore, combining Equations (51) and (54) gives the first of the two required covariances. The last quantity to be solved is the second one. By the definition of the coordinate systems, only the orientation changes, and the first derivative retains only the z-axis component, so the following is obtained:

In summary, combining Equations (14)–(16) and (28) yields the uncertainty-aware terrain update from time k to time k + 1 for point P, and hence for every point in the terrain.