Hough Transform and Clustering for a 3-D Building Reconstruction with Tomographic SAR Point Clouds

Abstract

1. Introduction

2. Framework of the TomoSAR 3-D Building Reconstruction

3. 3-D Building Reconstruction from TomoSAR Point Clouds

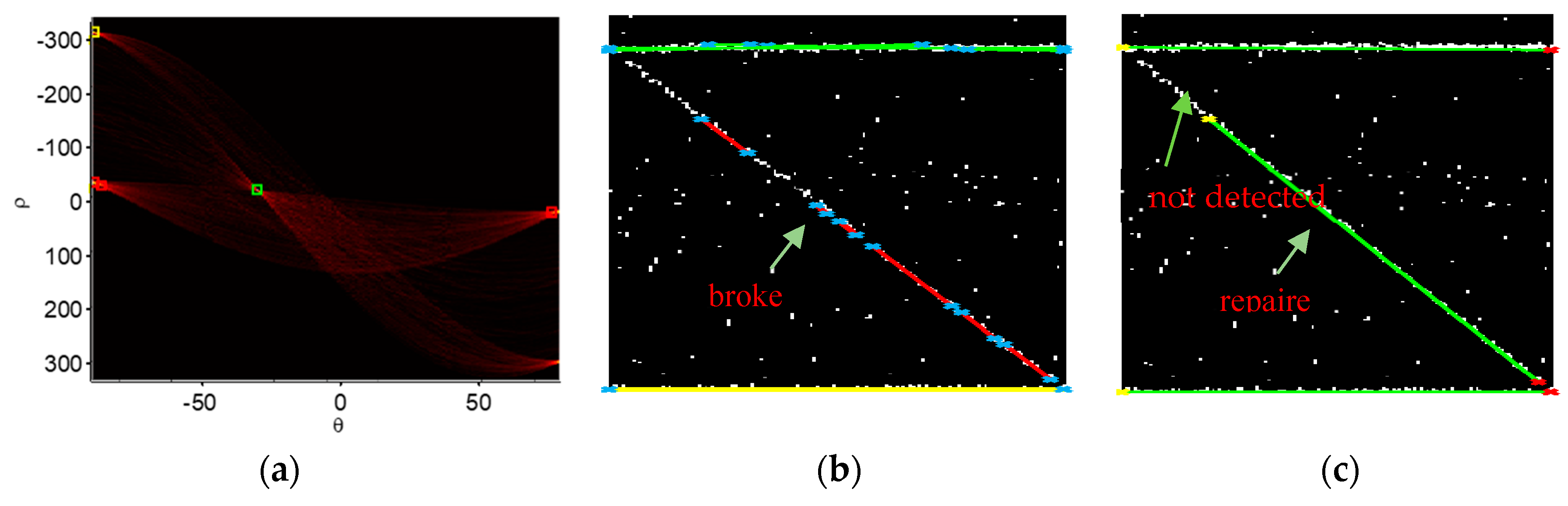

3.1. Hough Transform in the Tomographic Plane of 3-D Point Clouds

3.2. Unsupervised and Supervised Clustering

4. Simulations and Experiments

4.1. Simulation Parameters

4.2. CS-Based TomoSAR Imaging

4.3. Hough Transform Line Detection and Point Cloud Clustering

5. Conclusions

Author Contributions

Funding

Conflicts of Interest

References

1. Sun, Y. 3D Building Reconstruction from Spaceborne TomoSAR Point Cloud. Master’s Thesis, Technical University of Munich, Munich, Germany, 2016; p. 114.

2. Lee, H.; Ji, Y.; Han, H. Experiments on a Ground-Based Tomographic Synthetic Aperture Radar. Remote Sens. 2016, 8, 667.

3. Wu, Y. Concept of Multidimensional Space Joint-Observation SAR. J. Radars 2013, 2, 135–142.

4. Zhu, X.X.; Bamler, R. Very High Resolution Spaceborne SAR Tomography in Urban Environment. IEEE Trans. Geosci. Remote Sens. 2010, 48, 4296–4308.

5. Zhou, S.; Li, Y.; Zhang, F.; Chen, L.; Bu, X. Automatic Regularization of TomoSAR Point Clouds for Buildings Using Neural Networks. Sensors 2019, 19, 3748.

6. Ferro, A.; Brunner, D.; Bruzzone, L. Automatic Detection and Reconstruction of Building Radar Footprints from Single VHR SAR Images. IEEE Trans. Geosci. Remote Sens. 2013, 51, 935–952.

7. Zhu, X.X.; Bamler, R. Superresolving SAR Tomography for Multidimensional Imaging of Urban Areas: Compressive Sensing-Based TomoSAR Inversion. IEEE Signal Process. Mag. 2014, 31, 51–58.

8. Guo, R.; Wang, F.; Zang, B.; Jing, G.; Xing, M. High-Rise Building 3D Reconstruction with the Wrapped Interferometric Phase. Sensors 2019, 19, 1439.

9. Frey, O.; Meier, E. 3-D Time-Domain SAR Imaging of a Forest Using Airborne Multibaseline Data at L- and P-Bands. IEEE Trans. Geosci. Remote Sens. 2011, 49, 3660–3664.

10. Lombardini, F.; Cai, F. Temporal Decorrelation-Robust SAR Tomography. IEEE Trans. Geosci. Remote Sens. 2014, 52, 5412–5421.

11. Aguilera, E.; Nannini, M.; Reigber, A. A Data-Adaptive Compressed Sensing Approach to Polarimetric SAR Tomography of Forested Areas. IEEE Geosci. Remote Sens. Lett. 2013, 10, 543–547.

12. Li, S.; Yang, J.; Chen, W.; Ma, X. Overview of Radar Imaging Technique and Application Based on Compressive Sensing Theory. J. Electron. Inf. Technol. (Dianzi Yu Xinxi Xuebao) 2016, 38, 495–508.

13. Ma, P.; Lin, H.; Lan, H.; Chen, F. On the Performance of Reweighted L1 Minimization for Tomographic SAR Imaging. IEEE Geosci. Remote Sens. Lett. 2015, 12, 895–899.

14. Wang, Y.; Zhu, X.X.; Bamler, R. An Efficient Tomographic Inversion Approach for Urban Mapping Using Meter Resolution SAR Image Stacks. IEEE Geosci. Remote Sens. Lett. 2014, 11, 1250–1254.

15. Budillon, A.; Ferraioli, G.; Schirinzi, G. Localization Performance of Multiple Scatterers in Compressive Sampling SAR Tomography: Results on COSMO-SkyMed Data. IEEE J. Sel. Top. Appl. Earth Obs. Remote Sens. 2014, 7, 2902–2910.

16. Aguilera, E.; Nannini, M.; Reigber, A. Wavelet-Based Compressed Sensing for SAR Tomography of Forested Areas. IEEE Trans. Geosci. Remote Sens. 2013, 51, 5283–5295.

17. Xing, S.Q.; Li, Y.Z.; Dai, D.H.; Wang, X.S. Three-Dimensional Reconstruction of Man-Made Objects Using Polarimetric Tomographic SAR. IEEE Trans. Geosci. Remote Sens. 2013, 51, 3694–3705.

18. Tropp, J.A.; Gilbert, A.C. Signal Recovery from Random Measurements via Orthogonal Matching Pursuit. IEEE Trans. Inf. Theory 2007, 53, 4655–4666.

19. Zhu, X.X.; Adam, N.; Brcic, R.; Bamler, R. Space-Borne High Resolution SAR Tomography: Experiments in Urban Environment Using TS-X Data. 2009 Jt. Urban Remote Sens. Event 2009, 2, 1–8.

20. Zhu, X.X.; Bamler, R. Tomographic SAR Inversion by L1-Norm Regularization: The Compressive Sensing Approach. IEEE Trans. Geosci. Remote Sens. 2010, 48, 3839–3846.

21. Liang, L.; Li, X.; Ferro-Famil, L.; Guo, H.; Zhang, L.; Wu, W. Urban Area Tomography Using a Sparse Representation Based Two-Dimensional Spectral Analysis Technique. Remote Sens. 2018, 10, 109.

22. Shahzad, M.; Zhu, X.X. Automatic Detection and Reconstruction of 2-D/3-D Building Shapes from Spaceborne TomoSAR Point Clouds. IEEE Trans. Geosci. Remote Sens. 2016, 54, 1292–1310.

23. Gao, Z.; Ma, W.; Huang, S.; Hua, P.; Lan, C. Deep Learning for Super-Resolution in a Field Emission Scanning Electron Microscope. AI 2020, in press.

24. Song, A.; Choi, J.; Han, Y.; Kim, Y. Change Detection in Hyperspectral Images Using Recurrent 3D Fully Convolutional Networks. Remote Sens. 2018, 10, 1827.

25. Duan, D.; Xie, M.; Mo, Q.; Han, Z.; Wan, Y. An Improved Hough Transform for Line Detection. Int. Conf. Comput. Appl. Syst. Model. (ICCASM) 2010, 2, 354–357.

26. Zhu, X.X.; Wang, Y.; Montazeri, S.; Ge, N. A Review of Ten-Year Advances of Multi-Baseline SAR Interferometry Using TerraSAR-X Data. Remote Sens. 2018, 10, 1374.

27. Chen, F.; Wu, Y.; Zhang, Y.; Parcharidis, I.; Ma, P.; Xiao, R.; Xu, J.; Zhou, W.; Tang, P.; Foumelis, M. Surface Motion and Structural Instability Monitoring of Ming Dynasty City Walls by Two-Step Tomo-PSInSAR Approach in Nanjing City, China. Remote Sens. 2017, 9, 371.

28. Peng, X.; Wang, C.; Li, X.; Du, Y.; Fu, H.; Yang, Z.; Xie, Q. Three-Dimensional Structure Inversion of Buildings with Nonparametric Iterative Adaptive Approach Using SAR Tomography. Remote Sens. 2018, 10, 1004.

29. Zhao, J.; Wu, J.; Ding, X.; Wang, M. Elevation Extraction and Deformation Monitoring by Multitemporal InSAR of Lupu Bridge in Shanghai. Remote Sens. 2017, 9, 897.

| No. | Start Pixel | End Pixel | θ (°) | ρ (pixels) | Group Mark (Color) |
|---|---|---|---|---|---|
| 1 | (29,84) | (43,111) | −27 | −13 | red |
| 2 | (64,153) | (67,160) | −27 | −13 | red |
| 3 | (71,166) | (76,177) | −27 | −13 | red |
| 4 | (81,186) | (105,234) | −27 | −13 | red |
| 5 | (108,239) | (118,260) | −27 | −13 | red |
| 6 | (121,265) | (135,293) | −27 | −13 | red |
| 7 | (1,27) | (139,27) | −90 | −26 | green |
| 8 | (1,301) | (139,301) | −90 | −300 | yellow |
| 9 | (31,24) | (44,24) | −87 | −21 | green |
| 10 | (49,25) | (105,27) | −87 | −21 | green |
| 11 | (110,28) | (139,29) | −87 | −21 | green |
| 12 | (1,29) | (96,24) | 87 | 28 | green |
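As a consistency check on the table above, the angle and distance columns (θ in degrees, ρ in pixels) can be recomputed from the segment endpoints with the Hough normal form ρ = x cos θ + y sin θ. The sketch below assumes, as an inference from the numbers rather than something the text states, that each endpoint is a 1-based (x, y) pixel index and that ρ is measured from the first pixel, i.e. after subtracting 1 from each coordinate. Under that assumption every endpoint reproduces its tabulated ρ to within half a pixel, which is what a 1-pixel ρ bin would allow; using the raw indices instead leaves errors of a pixel or more.

```python
import math

# Endpoints (x, y) and Hough parameters (theta in degrees, rho in pixels)
# transcribed from the line-segment table (segments 1-12).
SEGMENTS = [
    ((29, 84), (43, 111), -27, -13),
    ((64, 153), (67, 160), -27, -13),
    ((71, 166), (76, 177), -27, -13),
    ((81, 186), (105, 234), -27, -13),
    ((108, 239), (118, 260), -27, -13),
    ((121, 265), (135, 293), -27, -13),
    ((1, 27), (139, 27), -90, -26),
    ((1, 301), (139, 301), -90, -300),
    ((31, 24), (44, 24), -87, -21),
    ((49, 25), (105, 27), -87, -21),
    ((110, 28), (139, 29), -87, -21),
    ((1, 29), (96, 24), 87, 28),
]


def rho_of(point, theta_deg, offset=1):
    """Normal-form distance rho = x*cos(theta) + y*sin(theta).

    `offset` is subtracted from both coordinates; offset=1 encodes the
    assumption that rho is measured from the first pixel of 1-based indices.
    """
    x, y = point[0] - offset, point[1] - offset
    t = math.radians(theta_deg)
    return x * math.cos(t) + y * math.sin(t)


def max_rho_error(segments, offset=1):
    """Largest |recomputed rho - tabulated rho| over all segment endpoints."""
    worst = 0.0
    for start, end, theta, rho in segments:
        for point in (start, end):
            worst = max(worst, abs(rho_of(point, theta, offset) - rho))
    return worst


print(f"{max_rho_error(SEGMENTS):.2f}")            # below 0.5 pixel
print(f"{max_rho_error(SEGMENTS, offset=0):.2f}")  # raw indices: above 1 pixel
```

The offset parameter makes the coordinate convention explicit, so the same check can be rerun if the authors' actual convention turns out to differ.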

| Parameter | Value |
|---|---|
| Wavelength | 0.0311 m |
| Incidence angle | 33.1284° |
| Center range | 603638.971 m |
| Bandwidth | 150 MHz |
| Range resolution | 1 m |
| Number of images | 24 |
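The tabulated values are mutually consistent: a 150 MHz bandwidth gives a slant-range resolution of c/(2B) ≈ 1 m, and a 0.0311 m wavelength corresponds to an X-band carrier near 9.64 GHz. The short check below reproduces both numbers and also projects the slant-range resolution onto the ground with the flat-terrain relation ρ_g = ρ_r / sin θ_inc; that ground-range figure is an added illustration, not a value given in the table.

```python
import math

C = 299_792_458.0  # speed of light in vacuum (m/s)

# Simulation parameters from the table above.
WAVELENGTH_M = 0.0311
INCIDENCE_DEG = 33.1284
BANDWIDTH_HZ = 150e6


def slant_range_resolution(bandwidth_hz):
    """Slant-range resolution c / (2B) of a pulse with bandwidth B."""
    return C / (2.0 * bandwidth_hz)


def ground_range_resolution(slant_res_m, incidence_deg):
    """Flat-terrain projection of the slant-range resolution onto the ground."""
    return slant_res_m / math.sin(math.radians(incidence_deg))


slant_res = slant_range_resolution(BANDWIDTH_HZ)
ground_res = ground_range_resolution(slant_res, INCIDENCE_DEG)
carrier_hz = C / WAVELENGTH_M

print(f"slant-range resolution : {slant_res:.3f} m")
print(f"ground-range resolution: {ground_res:.2f} m")
print(f"carrier frequency      : {carrier_hz / 1e9:.2f} GHz")
```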

| No. | Baseline Length (m) | Inclination (°) | No. | Baseline Length (m) | Inclination (°) |
|---|---|---|---|---|---|
| 0 | 0 | any | 12 | 792.207 | 32.3227 |
| 1 | 35.712 | 33.9964 | 13 | 800.280 | 33.77537 |
| 2 | 97.540 | 33.4859 | 14 | 814.724 | 33.5181 |
| 3 | 126.987 | 33.6439 | 15 | 849.129 | 32.7626 |
| 4 | 141.886 | 33.6147 | 16 | 905.792 | 34.0288 |
| 5 | 157.613 | 32.9129 | 17 | 913.376 | 32.1973 |
| 6 | 278.498 | 33.4394 | 18 | 915.736 | 33.0059 |
| 7 | 421.761 | 32.4708 | 19 | 957.167 | 32.8915 |
| 8 | 485.376 | 33.5405 | 20 | 957.507 | 33.6594 |
| 9 | 546.882 | 32.1921 | 21 | 959.492 | 33.7188 |
| 10 | 632.359 | 32.6822 | 22 | 964.889 | 32.5021 |
| 11 | 655.741 | 32.2207 | 23 | 970.593 | 33.1079 |
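The baseline table determines the elevation resolution of the 24-image stack. The sketch below computes perpendicular baselines and the standard Rayleigh elevation resolution ρ_s = λ r / (2 Δb), where Δb is the span of the perpendicular baselines. It assumes, which the table does not state, that each inclination is measured from the horizontal, so the component orthogonal to the line of sight is B cos(θ_inc − α). Because every inclination lies within about 1° of the 33.1284° incidence angle, the perpendicular baselines are essentially the tabulated lengths, and the resolution comes out at roughly 9.7 m.

```python
import math

WAVELENGTH_M = 0.0311
CENTER_RANGE_M = 603638.971
INCIDENCE_DEG = 33.1284

# (baseline length in m, inclination in degrees) for images 0-23, from the
# table above. Image 0 is the reference (length 0, "any" inclination -> 0.0).
BASELINES = [
    (0.0, 0.0), (35.712, 33.9964), (97.540, 33.4859), (126.987, 33.6439),
    (141.886, 33.6147), (157.613, 32.9129), (278.498, 33.4394),
    (421.761, 32.4708), (485.376, 33.5405), (546.882, 32.1921),
    (632.359, 32.6822), (655.741, 32.2207), (792.207, 32.3227),
    (800.280, 33.77537), (814.724, 33.5181), (849.129, 32.7626),
    (905.792, 34.0288), (913.376, 32.1973), (915.736, 33.0059),
    (957.167, 32.8915), (957.507, 33.6594), (959.492, 33.7188),
    (964.889, 32.5021), (970.593, 33.1079),
]


def perpendicular_baseline(length_m, inclination_deg, incidence_deg):
    """Baseline component orthogonal to the line of sight, assuming the
    inclination is measured from the horizontal (an assumption)."""
    return length_m * math.cos(math.radians(incidence_deg - inclination_deg))


def rayleigh_elevation_resolution(wavelength_m, range_m, b_perp):
    """Elevation resolution lambda * r / (2 * span of perpendicular baselines)."""
    span = max(b_perp) - min(b_perp)
    return wavelength_m * range_m / (2.0 * span)


b_perp = [perpendicular_baseline(b, a, INCIDENCE_DEG) for b, a in BASELINES]
rho_s = rayleigh_elevation_resolution(WAVELENGTH_M, CENTER_RANGE_M, b_perp)
print(f"{len(b_perp)} images, baseline span {max(b_perp) - min(b_perp):.1f} m")
print(f"Rayleigh elevation resolution: {rho_s:.2f} m")
```

Since the baselines are unevenly spaced, this Rayleigh figure describes the aperture as a whole; compressive-sensing inversion of the kind named in Section 4.2 is typically used precisely to resolve scatterers closer than this limit.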

| Group | Slope | Intercept (pixels) |
|---|---|---|
| 1 | 1.96 | 28.63 |
| 2 | 0.00 | 26.00 |
| 3 | 0.00 | 300.00 |
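The three-row table can be reproduced from the Hough parameters of the line-segment table by rewriting ρ = x cos θ + y sin θ as y = kx + b, with k = −cos θ / sin θ and b = ρ / sin θ (in the same pixel frame as ρ). For θ = −27° and ρ = −13 this gives k ≈ 1.96 and b ≈ 28.63, and θ = −90° with ρ = −26 or −300 gives horizontal lines at b = 26 and b = 300. The sketch below performs that conversion and then groups the twelve segments greedily by line orientation and intercept. The grouping rule and its 10° / 10-pixel thresholds are hypothetical choices for illustration, not the unsupervised or supervised clustering of Section 3.2; with them, the labels happen to match the red, green and yellow group marks in the table.

```python
import math

# (theta_deg, rho_px) for segments 1-12, from the line-segment table.
HOUGH_LINES = [(-27, -13)] * 6 + [
    (-90, -26), (-90, -300), (-87, -21), (-87, -21), (-87, -21), (87, 28),
]


def to_slope_intercept(theta_deg, rho):
    """Rewrite rho = x*cos(theta) + y*sin(theta) as y = k*x + b."""
    t = math.radians(theta_deg)
    s = math.sin(t)
    if abs(s) < 1e-9:
        raise ValueError("near-vertical line: slope is undefined")
    return -math.cos(t) / s, rho / s


def direction_deg(theta_deg):
    """Orientation of the line itself (normal angle + 90), folded into [0, 180)."""
    return (theta_deg + 90.0) % 180.0


def angle_gap(a, b):
    """Smallest difference between two undirected orientations, in degrees."""
    return abs((a - b + 90.0) % 180.0 - 90.0)


def group_lines(lines, max_angle=10.0, max_offset=10.0):
    """Greedy grouping (hypothetical thresholds): join the first group whose
    seed line has a similar orientation and y-intercept, else start a new one."""
    seeds, labels = [], []
    for theta, rho in lines:
        d = direction_deg(theta)
        _, b = to_slope_intercept(theta, rho)
        for gid, (d0, b0) in enumerate(seeds):
            if angle_gap(d, d0) <= max_angle and abs(b - b0) <= max_offset:
                labels.append(gid)
                break
        else:
            seeds.append((d, b))
            labels.append(len(seeds) - 1)
    return labels


for theta, rho in [(-27, -13), (-90, -26), (-90, -300)]:
    k, b = to_slope_intercept(theta, rho)
    print(f"theta={theta:4d}, rho={rho:5d} -> k={k:.2f}, b={b:.2f}")
print(group_lines(HOUGH_LINES))  # group index per segment, in table order
```

The y-intercept is used as the offset measure only because it matches the table; for steep lines it changes quickly with small angle errors, so comparing perpendicular distances would be a more robust test in general.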

| Iterations | Method of Reference [5] | Our Method |
|---|---|---|
| 300 | 30.89 | 30.42 |
| 400 | 30.81 | 28.94 |
| 500 | 30.79 | 28.56 |
| 900 | 30.79 | 28.52 |

| Iterations | Method of Reference [5] | Our Method |
|---|---|---|
| 300 | 39.63 | 33.52 |
| 400 | 30.58 | 28.20 |
| 420 | 31.46 | 28.48 |
| 430 | 31.46 | 28.00 |

© 2019 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Liu, H.; Pang, L.; Li, F.; Guo, Z. Hough Transform and Clustering for a 3-D Building Reconstruction with Tomographic SAR Point Clouds. Sensors 2019, 19, 5378. https://doi.org/10.3390/s19245378