3.1. Source Entropy Analysis

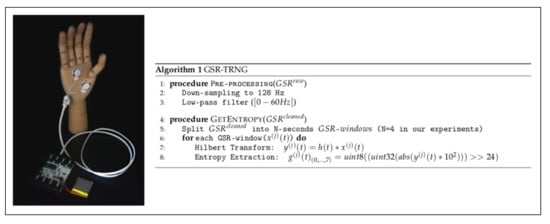

A cryptographic Random Bit Generator (RBG) is composed of three components: (1) an entropy source; (2) an algorithm responsible for storing and providing bits to the target application; and (3) the procedure for combining the first two components. In a nutshell, the entropy source model consists of an analogue noise source (in our case, the GSR signal, which is first cleaned with the procedure Pre-Processing in Algorithm 1) and a digitisation algorithm (procedure GetEntropy specified in Algorithm 1 and defined by Equations (1) and (2)).

For testing the entropy of RBGs, the NIST SP 800-90B recommendation proposes ten estimators for calculating the min-entropy, including the Markov and LZ78Y estimates, among others [58]. The final estimate is the minimum value over all of these tests. To evaluate the entropy quality of the GSR signal, a file of 25 million 1s and 0s was generated using the third dataset. In most tests (see Table 1), the entropy value was close to the optimal value (1), and even in the worst case it remained very high (0.935). In this particular case, the t-tuple test sets the min-entropy value. This test evaluates the frequency of pairs, triples, and so on, and estimates the entropy per sample based on these frequencies [58]. From all the above, we can conclude that the GSR signal, together with the proposed digitisation algorithm, seems appropriate for cryptographic solutions.
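To illustrate how one of these estimators works, the sketch below implements the Most Common Value estimate, the simplest of the SP 800-90B estimators, and the final "minimum over all estimators" step. It is not the t-tuple, Markov or LZ78Y estimator discussed above, and the function names `mcv_min_entropy` and `overall_min_entropy` are hypothetical; the constant 2.576 is the usual 99% confidence multiplier.

```python
import math
from collections import Counter


def mcv_min_entropy(samples):
    """Most Common Value estimate of the min-entropy per sample (in bits)."""
    n = len(samples)
    if n < 2:
        raise ValueError("need at least two samples")
    # Relative frequency of the most common symbol.
    p_hat = Counter(samples).most_common(1)[0][1] / n
    # Upper end of a 99% confidence interval on that probability.
    p_upper = min(1.0, p_hat + 2.576 * math.sqrt(p_hat * (1.0 - p_hat) / (n - 1)))
    return -math.log2(p_upper)


def overall_min_entropy(estimates):
    """The final figure is the most pessimistic (smallest) estimate."""
    return min(estimates)
```

For a perfectly balanced binary sequence the estimate stays slightly below 1 bit per sample, because the confidence bound is deliberately conservative.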

On some occasions, estimating the entropy from a single very long sequence can overestimate it, because correlated sequences might be generated after a restart. If this is the case, an attacker could force multiple restarts of the entropy source to create a situation that is advantageous to them. The “restart” test is defined in the NIST SP 800-90B specification to evaluate this issue. To generate data for this test, the GSR source was restarted repeatedly and, after each restart, a run of consecutive values was recorded. In our case, we used the third dataset, in which the subjects were shown 20 different videos. Therefore, in our experiments, the reset of the physiological signal was simulated by exposing the subject to a different stimulation (video). Furthermore, to be even more confident, we repeated the test five times (i.e., from File-1 to File-5). As shown in Table 2, the five tests were passed successfully and confirmed that 0.94 was not an overestimate of the min-entropy.

3.2. Randomness Analysis

In Algorithm 1, we included an entropy distillation process (procedure GetEntropy) to produce randomness. After the entropy analysis, we needed to assess the randomness quality of the bits generated by the GSR-TRNG. For a first visual inspection, Figure 3 shows an 8-bit greyscale image (512 × 512) of values generated by our TRNG. No anomalous patterns were detected, and the image looks like one generated by any other strong cryptographic random number generator. To analyse the randomness in depth, several test batteries are commonly used (ENT [59], DIEHARDER [53] and NIST [54]). These tests require an input file of several hundred million bits. In our particular case, we generated a file of 30 MBytes by joining the GSR signals (signals of 84 subjects in total) of the three datasets introduced in Section 2.1.
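A visual inspection of the kind shown in Figure 3 can be reproduced roughly as follows. This is a minimal sketch, assuming the generator output is available as a byte string; the helper name `bytes_to_pgm` is hypothetical, and the binary PGM format is used only because it needs no external library.

```python
def bytes_to_pgm(data, width=512, height=512):
    """Pack the first width*height bytes into a binary PGM (P5) greyscale image."""
    needed = width * height
    if len(data) < needed:
        raise ValueError("not enough bytes for the requested image size")
    # P5 header: magic number, dimensions, maximum grey value (8 bits per pixel).
    header = f"P5\n{width} {height}\n255\n".encode("ascii")
    return header + bytes(data[:needed])
```

Writing the returned bytes to a file opened in binary mode gives an image that most viewers can open; visible stripes or blocks would hint at structure in the bitstream.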

The ENT suite [59], which is not intended for cryptographic applications, is one of the test batteries usually run first to discard weak or faulty generators without the need for additional testing. Table 3 shows the results after analysing the 30 MByte file mentioned above. The entropy and compression results indicate that the file was extremely dense in terms of information (randomness). As for the chi-square test, which is very sensitive in detecting weak generators, the results give no grounds to suspect non-randomness. The arithmetic mean value confirmed that the proportions of ones and zeros were equal (i.e., there was no bias in the output). The serial correlation coefficient showed the high unpredictability of the bitstream: there was a low dependence between a particular bit and its predecessors.

To check for biases in the behaviour of individual subjects’ signals, we performed an additional experiment in which the signals were analysed separately. Using the signals of the 37 subjects of the AMIGOS dataset, we generated a binary file of 800 KB for each subject. Each of these files was analysed with the ENT suite. Figure 4 shows the results of the chi-square test. As shown in the figure, most values were within one standard deviation of the optimal value (256). We can, therefore, conclude that the different subjects behaved similarly. In other words, there were no significant differences between the bitstreams generated from the GSR signals of the different subjects.
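The statistics discussed above can be approximated with a few lines of code. The sketch below computes byte-level versions of four of them (entropy, chi-square, arithmetic mean and lag-1 serial correlation); it is an illustration under our own assumptions, not the ENT implementation, and the function name `byte_statistics` is hypothetical. In particular, the serial correlation here is a plain Pearson coefficient between consecutive bytes.

```python
import math
from collections import Counter


def byte_statistics(data):
    """Return entropy (bits/byte), chi-square, mean and lag-1 correlation."""
    n = len(data)
    if n < 2:
        raise ValueError("need at least two bytes")
    counts = Counter(data)
    # Shannon entropy of the empirical byte distribution (8.0 is the maximum).
    entropy = -sum((c / n) * math.log2(c / n) for c in counts.values())
    # Chi-square statistic against a uniform distribution over 256 values.
    expected = n / 256
    chi_square = sum((counts.get(v, 0) - expected) ** 2 / expected
                     for v in range(256))
    mean = sum(data) / n  # 127.5 for unbiased bytes
    # Pearson correlation between each byte and its successor.
    x, y = data[:-1], data[1:]
    mx, my = sum(x) / (n - 1), sum(y) / (n - 1)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    var_x = sum((a - mx) ** 2 for a in x)
    var_y = sum((b - my) ** 2 for b in y)
    denom = math.sqrt(var_x * var_y)
    correlation = cov / denom if denom else 0.0
    return {"entropy": entropy, "chi_square": chi_square,
            "mean": mean, "serial_correlation": correlation}
```

A deliberately non-random input such as a repeating ramp of byte values scores perfectly on entropy, chi-square and mean but shows a serial correlation close to 1, which is why the statistics are read together rather than in isolation.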

DIEHARDER [53] (a modern version of the Diehard battery) and NIST [54] are much more demanding test batteries than ENT. NIST was designed to test RNGs intended for cybersecurity solutions. DIEHARD consists of 15 tests, and the results obtained are summarised with a p-value in Table 4a. In detail, all the p-values were within the interval [0.025, 0.975]; note that, due to the large number of p-values calculated, it would not be uncommon for some of them to fall outside this range. Apart from lying within this interval (0.05 significance level), the critical condition for considering the file under analysis random is that these p-values follow a uniform distribution. We tested this hypothesis using a Kolmogorov–Smirnov test, which indicated that the p-values are consistent with a uniform distribution at the 5% significance level. Therefore, we can conclude (with 95% confidence) that the analysed bitstream (30 MByte file) showed no anomalous behaviour and that all the DIEHARD tests were passed successfully.

As mentioned above, NIST is often used in the context of cybersecurity and for the formal verification of RNG designs. The NIST suite is made up of 15 tests, which examine bits, m-bit blocks or m-bit parts. Regarding the interpretation of the results, the first value is the p-value of the uniformity test applied to the p-values obtained with a given test; the value in brackets represents the proportion of sequences passing the corresponding test. The following equation gives the minimum number of sequences (except for the random excursion test) that must pass each test:

$$K\left(1 - \alpha - 3\sqrt{\frac{\alpha(1-\alpha)}{K}}\right),$$

where $\alpha$ is the significance level and $K$ is the number of sequences tested. In our particular case, $\alpha = 0.01$ and $K = 100$; thus, the minimum pass rate was 96. From the results in Table 4b, all the tests passed the uniformity test (p-values in the interval 0.01–0.99) and the proportion of passing sequences was above the mentioned threshold. Furthermore, the Kolmogorov–Smirnov test confirmed the uniformity of all p-values (15 tests) at the 1% significance level. From all this, we can conclude that the bits generated by the TRNG based on GSR signals behaved as a random variable.
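The two checks described above can be sketched as follows. The pass threshold assumes the usual NIST proportion bound, $K(1 - \alpha - 3\sqrt{\alpha(1-\alpha)/K})$, rounded down, which reproduces the figure of 96 for $\alpha = 0.01$ and $K = 100$. The second function computes only the Kolmogorov–Smirnov statistic against the uniform distribution on [0, 1]; the decision still requires comparing it with a critical value for the chosen significance level. Both function names are hypothetical.

```python
import math


def min_passing_sequences(alpha, k):
    """Minimum number of sequences (out of k) that must pass a test."""
    p_hat = 1.0 - alpha
    lower_bound = p_hat - 3.0 * math.sqrt(p_hat * alpha / k)
    # Rounded down, which matches the threshold of 96 quoted in the text.
    return math.floor(k * lower_bound)


def ks_statistic_uniform(p_values):
    """Largest gap between the empirical CDF and the uniform CDF on [0, 1]."""
    xs = sorted(p_values)
    n = len(xs)
    if n == 0:
        raise ValueError("need at least one p-value")
    # The empirical CDF jumps from i/n to (i+1)/n at each sorted point.
    return max(max((i + 1) / n - x, x - i / n) for i, x in enumerate(xs))
```

A small statistic means the p-values are spread evenly over the unit interval, which is what is expected from a generator with no detectable defects.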

As an additional experiment, we analysed whether there was any relationship between the random numbers generated from each user’s GSR signal. If this were the case, it would be very advantageous for an attacker, who could exploit the knowledge of one GSR signal (e.g., User-A) to predict the values of another (e.g., User-B). To assess this, using the 38 users of Dataset 3, we created a file of 800 KB for each user. Next, we grouped the data of each file into words of different sizes ($m = 8$, 16, 32 and 64 bits). For each of these word sizes, we computed the Hamming distance between all pairwise combinations of the files. We show the results obtained in Figure 5.

If there is no relation between the users (GSR signals), the calculated Hamming distance should follow a binomial distribution $B(m, p)$, with mean $mp$ and variance $mp(1-p)$, where $m$ is the size of the words and $p = 0.5$, as zeros and ones are equally likely. In our experiments (see Figure 5), as expected, the experimental values were almost identical to the theoretical ones (i.e., a mean Hamming distance of $m/2$ = 4, 8, 16 and 32, respectively). Therefore, an adversary gains essentially no advantage in predicting the values of one user from knowledge of other users’ signals.
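The comparison between users can be sketched as below, assuming each user's output is available as a byte string and that word sizes are multiples of 8 bits. The function names `hamming_distances` and `binomial_mean_std` are hypothetical; the reference values are the mean and standard deviation of a binomial distribution with $p = 0.5$.

```python
import math


def hamming_distances(a, b, word_bits):
    """Hamming distance between corresponding word_bits-wide words of a and b."""
    if word_bits <= 0 or word_bits % 8:
        raise ValueError("word_bits must be a positive multiple of 8")
    step = word_bits // 8
    # Only compare complete words present in both streams.
    usable = min(len(a), len(b)) // step * step
    distances = []
    for i in range(0, usable, step):
        wa = int.from_bytes(a[i:i + step], "big")
        wb = int.from_bytes(b[i:i + step], "big")
        distances.append(bin(wa ^ wb).count("1"))  # count differing bits
    return distances


def binomial_mean_std(m, p=0.5):
    """Mean and standard deviation expected for independent m-bit words."""
    return m * p, math.sqrt(m * p * (1.0 - p))
```

For two unrelated streams, the average of the measured distances should sit close to the binomial mean for the chosen word size; a clear shift would suggest a dependence between the two signals.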

Apart from the randomness tests, and as a final test, we analysed the TRNG as if it were used as a generator of a ciphering sequence ($s$) to encrypt a plaintext ($m$): $c = m \oplus s$. In particular, five different images (256 × 256 greyscale images), chosen randomly from the Internet, were used as inputs for the experiment. As for the ciphering sequence, bits were grouped into bytes and then arranged into a matrix of the same size as the input images. As a first look, Figure 6 shows the histogram of one of the tested images and its histogram after encryption. As expected, the encryption made the histogram uniform. Note that, if $s$ (image with random values) follows a uniform distribution, and $s$ and $m$ are chosen independently of each other, the resulting value is uniformly distributed, since we combine them with the bitwise XOR operation. This uniform distribution at the output prevents an attacker from extracting any information about the original plaintext (an image from the Internet in our example). NPCR and UACI tests are commonly used to evaluate the strength of an image encryption technique against differential attacks [60]. In short, the first assesses the number of changing pixels and the second evaluates the changes in intensity, in both cases between two encrypted images whose plain images differ by one bit. In Table 5, we summarise the results of these tests for the five examined images. Considering the thresholds given in [61], the NPCR and UACI tests were passed successfully at the 0.05 significance level.
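The encryption step and the two metrics can be sketched as follows, assuming that the combination is a bytewise XOR and that images are handled as flat sequences of 8-bit pixels. The function names are hypothetical, and the NPCR and UACI definitions are the commonly used ones (percentage of differing pixels, and mean absolute intensity difference normalised by 255). How the two cipher images are produced is left to the caller.

```python
def xor_encrypt(plain, keystream):
    """XOR each plaintext byte with the corresponding keystream byte."""
    if len(keystream) < len(plain):
        raise ValueError("keystream is shorter than the plaintext")
    return bytes(m ^ s for m, s in zip(plain, keystream))


def npcr(c1, c2):
    """Percentage of pixel positions whose values differ between two images."""
    if len(c1) != len(c2) or not c1:
        raise ValueError("images must be non-empty and of equal size")
    return 100.0 * sum(a != b for a, b in zip(c1, c2)) / len(c1)


def uaci(c1, c2):
    """Average absolute intensity difference, as a percentage of 255."""
    if len(c1) != len(c2) or not c1:
        raise ValueError("images must be non-empty and of equal size")
    return 100.0 * sum(abs(a - b) for a, b in zip(c1, c2)) / (255 * len(c1))
```

Because XOR is its own inverse, applying `xor_encrypt` again with the same keystream recovers the plaintext. For two independent, uniformly random 8-bit images, the expected values are about 99.61% for NPCR and 33.46% for UACI.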