Accuracy of an Affordable Smartphone-Based Teledermoscopy System for Color Measurements in Canine Skin

Abstract

1. Introduction

2. Materials and Methods

2.1. Optical System

2.2. Laboratory Measurements

- (1)

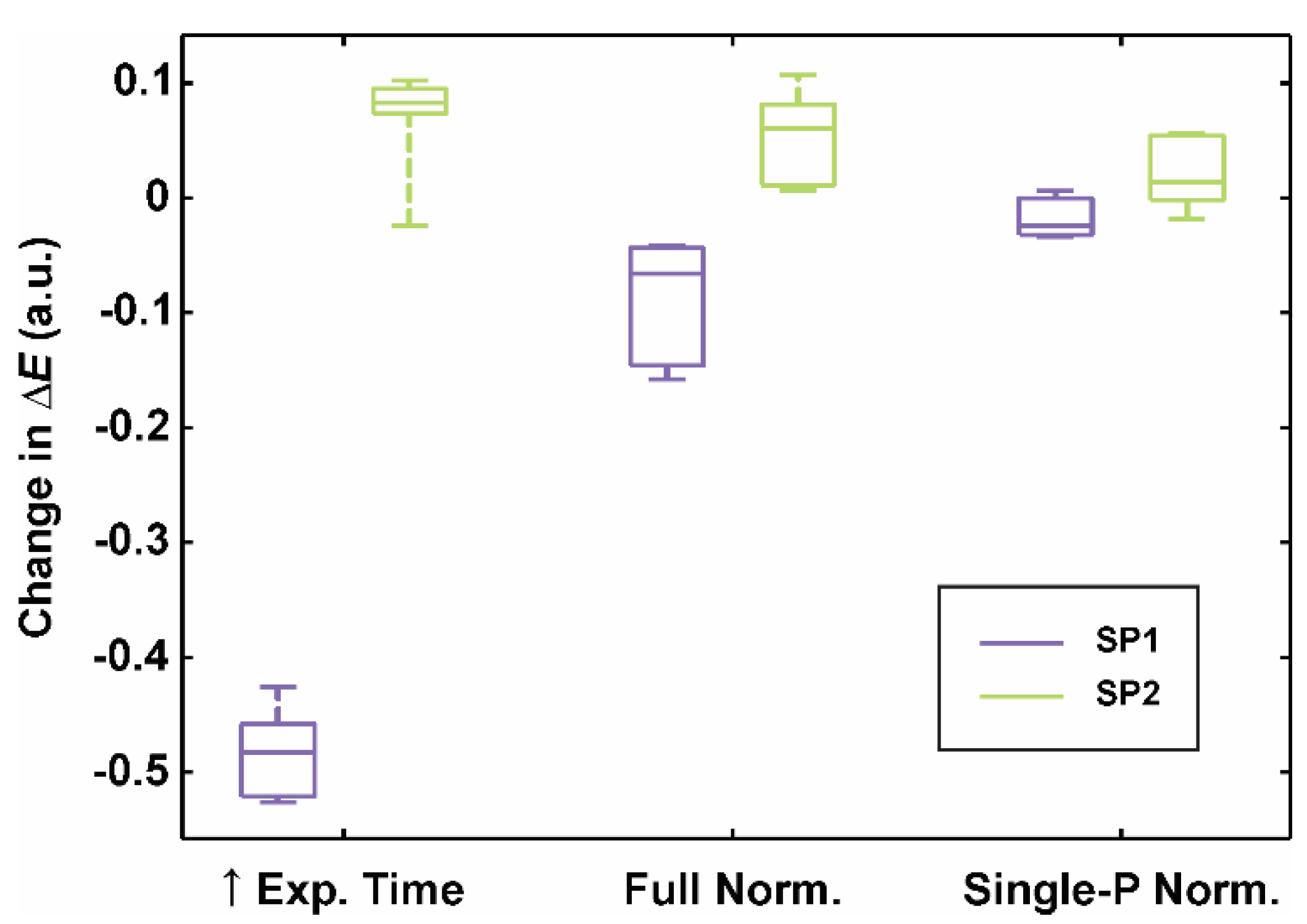

- Longer exposure time. If the exposure time is not locked on the brightest CCT’s patch (e.g., patch 19), the camera sensor’s capacity limit can be reached, leading to saturation. We acquired additional six full-range CCT image sets (Sfull1–6) with the exposure time locked on the slightly gray patch (full-range CCT: patch 20, neutral 8 (.23 *), L* = 81.3) and a transformation (regression) model was built in the same manner as described before (Figure 3a). Finally, the retrieved color differences were calculated and compared to the calibration on the image sets Ifull/skin1–6.

- (2) Full normalization (Figure 3b) was done across the whole lightness range based on the grayscale patches (No. 19–24). Because guaranteeing color constancy by acquiring all 24 patches for each clinical measurement could be burdensome and time-consuming, we expedited the color calibration procedure by introducing an initial transformation from the RGB color space of the subsequent image sets (Ifull 2–6) to that of the first image set (Ifull 1): RGB1 = g(RGB2–6), where g was a quadratic polynomial. The six grayscale patch images served to determine the function’s coefficients by fitting a regression model. Finally, RGB values were converted to the CIELAB color space based on the known model f1 (Figure 3a).

- (3) Single-point normalization (Figure 3c) further expedited the color calibration procedure, since the normalization was implemented with only the brightest patch (No. 19) instead of the six grayscale patches. As before, the transformation to the CIELAB color space was based on the known model f1 from the image set Ifull 1.
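The two normalization variants described above can be illustrated with a minimal sketch. It rests on several assumptions that the text does not state: g is fitted independently for each RGB channel, the single-point variant is implemented as a per-channel multiplicative gain, and the patch values are synthetic numbers chosen only for illustration (they are not measurements from this study). The function names are hypothetical.

```python
import numpy as np


def fit_full_normalization(gray_ref, gray_new):
    """Fit one quadratic polynomial per channel mapping gray_new to gray_ref.

    Both inputs have shape (6, 3): six grayscale patches (No. 19-24), RGB.
    Assumption: channels are treated independently (no cross terms).
    """
    gray_ref = np.asarray(gray_ref, dtype=float)
    gray_new = np.asarray(gray_new, dtype=float)
    # np.polyfit returns coefficients ordered from the highest power down.
    return [np.polyfit(gray_new[:, ch], gray_ref[:, ch], deg=2) for ch in range(3)]


def apply_full_normalization(coeffs, rgb):
    """Apply the per-channel quadratics to an (..., 3) RGB array."""
    rgb = np.asarray(rgb, dtype=float)
    out = np.empty_like(rgb)
    for ch in range(3):
        out[..., ch] = np.polyval(coeffs[ch], rgb[..., ch])
    return out


def single_point_normalization(white_ref, white_new, rgb):
    """Scale each channel so the brightest patch (No. 19) matches the reference.

    Assumption: a simple multiplicative gain per channel.
    """
    gain = np.asarray(white_ref, dtype=float) / np.asarray(white_new, dtype=float)
    return np.asarray(rgb, dtype=float) * gain


# Synthetic grayscale patches of a later image set (row 0 = brightest patch).
gray_new = np.column_stack([np.linspace(0.95, 0.20, 6)] * 3) * np.array([1.00, 0.97, 1.03])
# Synthetic reference values of the first image set, related by a known quadratic.
gray_ref = 0.10 * gray_new**2 + 0.85 * gray_new + 0.02

coeffs = fit_full_normalization(gray_ref, gray_new)
full_corrected = apply_full_normalization(coeffs, gray_new)
single_corrected = single_point_normalization(gray_ref[0], gray_new[0], gray_new)

# Largest residual per method on the six grayscale patches.
print(np.abs(full_corrected - gray_ref).max())
print(np.abs(single_corrected - gray_ref).max())
```

In this toy setup the quadratic fit reproduces the reference values across the whole lightness range, whereas the single-point gain is exact only at the brightest patch. The normalized RGB values would then be passed to the CIELAB transformation model f1, which is not reproduced here.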

2.3. Clinical Measurements

3. Results

4. Discussion

5. Conclusions

Author Contributions

Funding

Acknowledgments

Conflicts of Interest


| Color Calibration | SP1 | SP2 | SP1–SP2 |
|---|---|---|---|
| Full-range CCT (CIE76) | 6.45 (2.52–13.19) | 6.60 (1.66–12.80) | 3.91 (0.36–9.30) |
| Full-range CCT (CIE94) | 3.96 (1.51–9.25) | 4.46 (1.02–9.75) | 2.60 (0.36–7.28) |
| Skin-range CCT (CIE76) | 3.19 (0.93–9.04) | 1.76 (0.47–3.65) | 2.01 (0.34–7.18) |
| Skin-range CCT (CIE94) | 1.74 (0.40–3.64) | 1.16 (0.25–3.10) | 1.05 (0.13–3.08) |

| Color Calibration | CCT–SP1 | CCT–SP2 | SP1–SP2 |
|---|---|---|---|
| 1. Without (RGB) L | 0.40 ± 0.93 | 0.60 ± 1.16 | 0.29 ± 0.40 |
| 2. Full Norm. (sRGB) L | 0.04 ± 0.06 | 0.04 ± 0.09 | 0.03 ± 0.04 |
| 3. Single-P Norm. (sRGB) L | 0.04 ± 0.06 | 0.04 ± 0.10 | 0.03 ± 0.04 |
| 4. Single-P Norm. (sRGB) C | / | / | 0.02 ± 0.01 |

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2020 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Cugmas, B.; Štruc, E. Accuracy of an Affordable Smartphone-Based Teledermoscopy System for Color Measurements in Canine Skin. Sensors 2020, 20, 6234. https://doi.org/10.3390/s20216234
