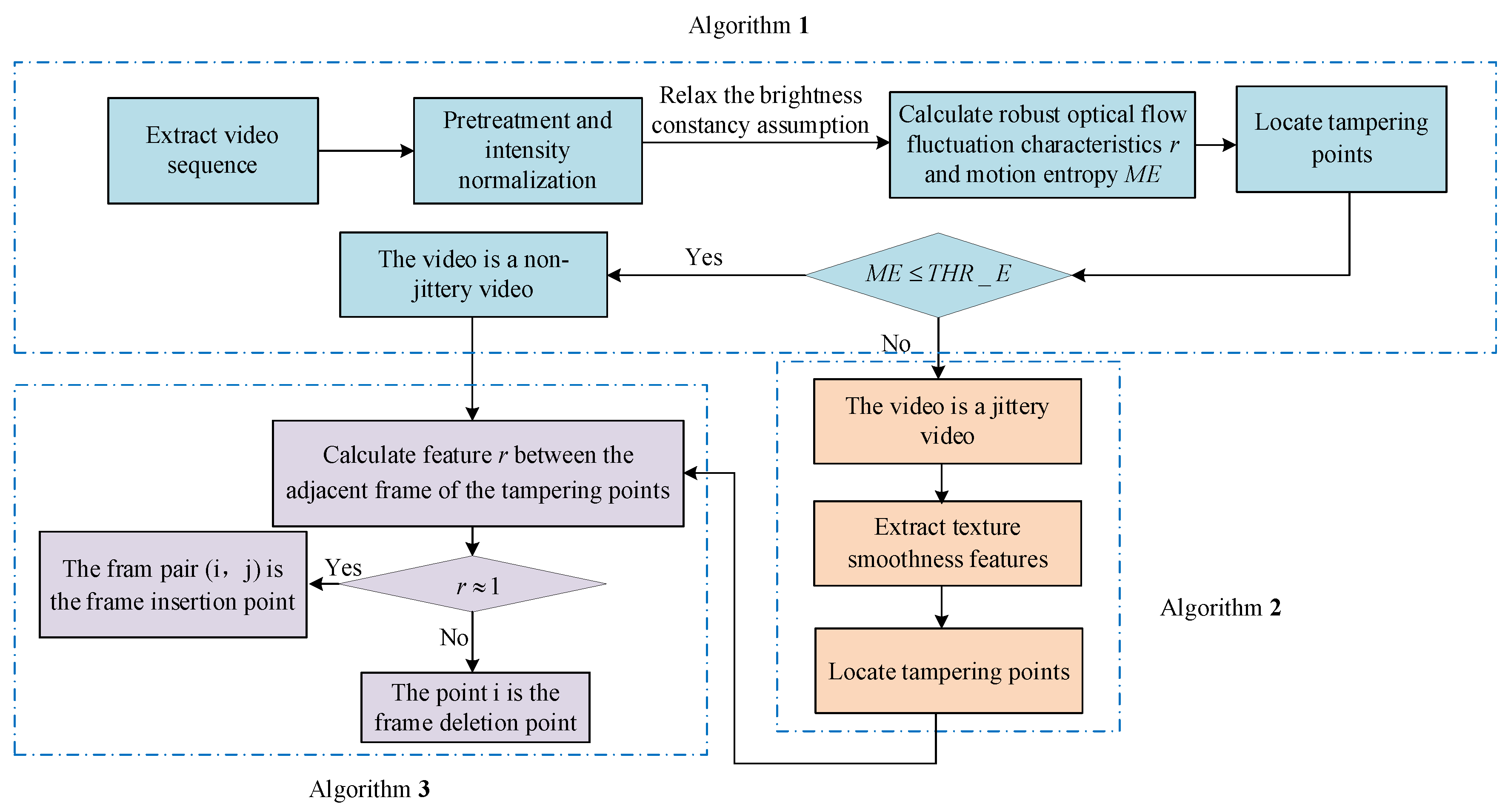

In this section, we conduct extensive experiments in diverse and realistic forensic setups to evaluate the performance of the proposed detection framework. We first introduce the experimental data, then describe the parameter setup and evaluation criteria. Finally, we present the experimental results and compare the proposed method with four existing state-of-the-art algorithms in terms of detection accuracy and robustness.

6.3. Experimental Results

Figure 7 shows the detection result of frame deletion forgery for a video with jitter noise and illumination noise. Figure 7a shows the result of Algorithm 1: the fluctuation feature sequence has peaks at frames 91, 99, and 118. The calculated motion entropy is 0.672, which indicates that the video is jittery. To reduce the side effect of the video jitter, we re-test the jittery video with Algorithm 2, which uses the texture changes fraction feature. The result of this double-checking is shown in Figure 7b, where the tampering point is 118. Finally, we judge the type of tampering with Algorithm 3: frame 118 is a frame deletion forgery point, and the peak pair (91, 99) is a false detection.
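The double-checking step described above can be illustrated with a minimal sketch. It assumes that a first-pass peak is kept only when the texture changes fraction sequence also has a peak at the same frame, and it uses a hypothetical jitter threshold of 0.6 (this section reports motion entropies of 0.643 to 0.752 for jittery videos and 0.453 to 0.486 for stable ones, but does not state the cutoff). The names `confirm_peaks`, `JITTER_THRESHOLD`, and `tolerance` are illustrative and are not taken from Algorithms 1 to 3.

```python
from typing import List

# Hypothetical cutoff: chosen only so that the entropy values quoted in this
# section fall on the expected side; the actual threshold is not given here.
JITTER_THRESHOLD = 0.6


def confirm_peaks(fluct_peaks: List[int], tc_peaks: List[int],
                  motion_entropy: float, tolerance: int = 0) -> List[int]:
    """Return candidate tampering points after optional double-checking."""
    if motion_entropy < JITTER_THRESHOLD:
        # Stable video: accept the first-pass peaks unchanged.
        return sorted(fluct_peaks)
    # Jittery video: keep a first-pass peak only if a texture-change peak
    # lies within `tolerance` frames of it (assumed matching rule).
    return sorted(p for p in fluct_peaks
                  if any(abs(p - q) <= tolerance for q in tc_peaks))


# Values reported for Figure 7: first-pass peaks 91, 99, 118; re-test peak 118.
print(confirm_peaks([91, 99, 118], [118], 0.672))  # [118]
```

With the Figure 7 values, the peaks at 91 and 99 are discarded as jitter-induced and only frame 118 is passed on for the tampering-type judgment.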

Following the test in Figure 7, Figure 8 shows the detection result for multiple tampering of the same video. Figure 8a shows the result of Algorithm 1: the fluctuation feature sequence has peaks at frames 91, 99, 118, 150, and 180. Moreover, the motion entropy is 0.752, which indicates that the video is jittery. To eliminate the effect of the video jitter, the video is re-tested with Algorithm 2. The re-testing result is shown in Figure 8; it locates the tampering points at frames 118, 150, and 180, so the peak pair (91, 99) is a false detection. Finally, we judge the type of tampering with Algorithm 3: frame 118 is a frame deletion forgery point, and the point pair (150, 180) marks a frame insertion forgery.

Figure 9 shows the detection result for an untampered video with jitter noise and illumination noise. Figure 9a shows the result of Algorithm 1: the fluctuation feature sequence has a peak pair (22, 70). The motion entropy is 0.643, which indicates that the video is jittery. To eliminate the effect of the video jitter, the video is re-tested with Algorithm 2, which uses the texture changes fraction. The re-testing result is shown in Figure 9b: the texture changes fraction sequence has no peaks. Based on these test results, we judge that the video is original and has not been tampered with.

Figure 10 shows the frame replacement forgery detection result for a video with illumination noise. The feature sequence has a peak pair (51, 93). The calculated motion entropy is 0.453, which indicates that the video is not jittery. We then judge the type of tampering: the fluctuation feature r between frames 50 and 94 is 1.0046, which shows that the frame pair (50, 94) is very similar. Therefore, the peak pair (51, 93) is the location of the video insertion forgery.
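The judgment applied to a peak pair can be sketched as follows. The sketch assumes that the frames just outside the pair are compared and that a value of r close to 1 means the two frames are very similar, as the reported values 1.0046 (frames 50 and 94) and 0.9844 (frames 44 and 58) suggest. The tolerance of 0.05 and the names `boundary_frames` and `is_inserted_segment` are hypothetical and are not part of Algorithm 3 as published.

```python
from typing import Tuple


def boundary_frames(peak_pair: Tuple[int, int]) -> Tuple[int, int]:
    """Frames immediately before the first peak and after the second peak."""
    first, second = peak_pair
    return first - 1, second + 1


def is_inserted_segment(r_boundary: float, tol: float = 0.05) -> bool:
    """True when r between the boundary frames is close to 1.

    `tol` is an assumed tolerance; the section only reports that values of
    1.0046 and 0.9844 were treated as indicating very similar frames.
    """
    return abs(r_boundary - 1.0) <= tol


# Figure 10: peak pair (51, 93), r between frames 50 and 94 is 1.0046.
print(boundary_frames((51, 93)))    # (50, 94)
print(is_inserted_segment(1.0046))  # True
```

The intuition is that when the frames on either side of a suspected segment still match each other, the segment between them was added to an otherwise continuous sequence.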

Figure 11 shows the detection result of frame deletion forgery for a video with illumination noise. The fluctuation feature r has a prominent peak at the frame deletion point 56. The motion entropy is 0.486, which suggests that the video is not jittery. Finally, we judge the type of tampering and conclude that frame 56 is the location of the video deletion forgery.

Figure 12 shows the detection result of frame copy-move forgery for a video with illumination noise, obtained with Algorithm 1: the fluctuation feature sequence has a peak pair (45, 57). The calculated motion entropy is 0.482, which indicates that the video is not jittery. Finally, we judge the type of tampering: the fluctuation feature r between frames 44 and 58 is 0.9844. Therefore, the peak pair (45, 57) is the location of the video insertion forgery.

According to the performance evaluation criteria, we compare the proposed algorithm with four state-of-the-art video tamper detection algorithms [3,6,9,37]. Table 3 describes the parameters of the comparison methods. The proposed method and the comparison methods use the same dataset, and the comparison results are shown in Table 4.

Compared with the methods reported in [3,6,9,37], the proposed method shows high robustness and high accuracy. The results indicate that the proposed method can effectively detect and localize all inter-frame forgeries in videos with illumination noise and jitter noise. In a real-life scenario, the forensic investigator has no control over the environment in which the video was captured or over the parameters used by the forger. The investigator must perform detection in the complete absence of any information about the noise, the motion level, and the form of the forgery operation applied to the captured video. Therefore, the most suitable forgery detection method is one that is practical for real-life video scenes, such as videos with brightness variance, videos with significant jitter, and videos with various motion levels. Furthermore, our method not only locates the forgery precisely but also estimates the type of each forgery at the tampered positions when multiple forgeries are present.

For [3,6], the features that the detection methods are based on become invalid when illumination changes are added to the image sequence, so the detection results are poor. For [9], the detection performance improves, mainly because the Zernike moment feature avoids the effect of brightness intensity. However, experiments show that its detection performance decreases significantly on jittery video, so the detection results are still poor. For [37], the test results also improve, mainly because the multi-channel feature avoids missed detections. However, experimental results show that this method performs poorly on minor frame deletion forgery, so it is not as stable as the proposed method.

Prior video tampering detection methods are not suitable for jittery videos or videos with dynamic brightness changes. The detection method of [13], which is based on motion residual, works well for most motion levels, such as high and medium motion, but it is not suitable for the slowest-motion video. The inter-frame difference decreases as the motion level of the video decreases, so the extracted motion residual feature becomes weak. The relocated I-frame, however, is not affected by the motion level of the video, so it is mistakenly identified as a tampered frame. Therefore, the method of [13] is not suitable for the lowest-motion video. Our proposed method uses inconsistencies in two features, an enhanced feature and the texture changes fraction, to detect tampering in real-life videos. The former is insensitive to the motion level of the video, and the latter can describe the subtle inter-frame differences of the lowest-motion video. Therefore, our method is also suitable for the lowest-motion video.

To reduce the effect of illumination noise and jitter noise, we use a robust optical flow detection method based on relaxing the brightness consistency assumption and on intensity normalization, which reduce the influence of significant and small brightness changes, respectively. In addition, we use motion entropy to sense whether the video is jittery and use the texture changes fraction TC for double-checking, which reduces the false detections caused by video jitter. Experiments show that the proposed detection method has strong robustness and high accuracy for videos of complex scenes.