1. Introduction

In recent years, navigation systems have become increasingly important due to their accessibility and functionality, allowing users to access, from their mobile devices, information about places and their locations in diverse scenarios. Navigation systems are categorized as outdoor and indoor. Outdoor navigation systems use the Global Positioning System (GPS), public maps, or satellite images. However, such data are unsuitable for indoor navigation systems operating inside buildings or small semi-open areas, such as schools, hospitals, and shopping malls. Because the standardized technology of outdoor systems is incompatible with, and has low accuracy in, indoor environments [1], the development of indoor navigation systems has been the subject of diverse research works [2,3,4,5]. This has resulted in a variety of technologies, methods, and infrastructure for their implementation, making it difficult to establish a standard for developing these systems. Indoor navigation systems are highly beneficial for locating and extracting information about points of interest within buildings, especially when users are visiting the location for the first time, the indoor signs are unclear, or no staff are available to provide directions.

Most works on indoor navigation focus on developing positioning methods based on wireless networks (IR, sensors, ultrasound, WLANs, UWB, Bluetooth, RFID) [2,4,6,7,8]; on Augmented Reality (AR), without providing contextual information [3,9,10,11]; or on methods that provide contextual information about the environment, without offering indoor navigation [5,12,13,14]. Thus, such works require a suitable infrastructure for indoor position tracking and must be adapted to specific environments. In recent years, Augmented Reality [3,15] and Semantic Web [16] technologies have grown in relevance, separately, as means of enriching navigation systems. These technologies provide the user with more interactive navigation experiences and enhance how information is consumed and presented. While AR offers capabilities for tracking the user’s location and presenting augmented content, the Semantic Web offers capabilities for querying, sharing, and linking public information available on the Web through a platform-independent model. Combining both technologies for indoor navigation would enhance the user experience by linking available indoor place information to navigation paths, the navigation environment, and points of interest. However, to the best of our knowledge, no existing works exploit AR and the Semantic Web for developing indoor navigation systems.

Therefore, this paper presents a methodology for developing an indoor navigation system based on AR and the Semantic Web. Combined, these technologies allow the system to display navigation content and environment information, resembling the functionality of outdoor navigation systems, without requiring additional infrastructure. The proposed methodology consists of four modules that communicate with each other, establishing alignments for the real and virtual spatial representations, the structure of contextual information, the generation of routes, and the presentation of augmented content for reaching a destination point.

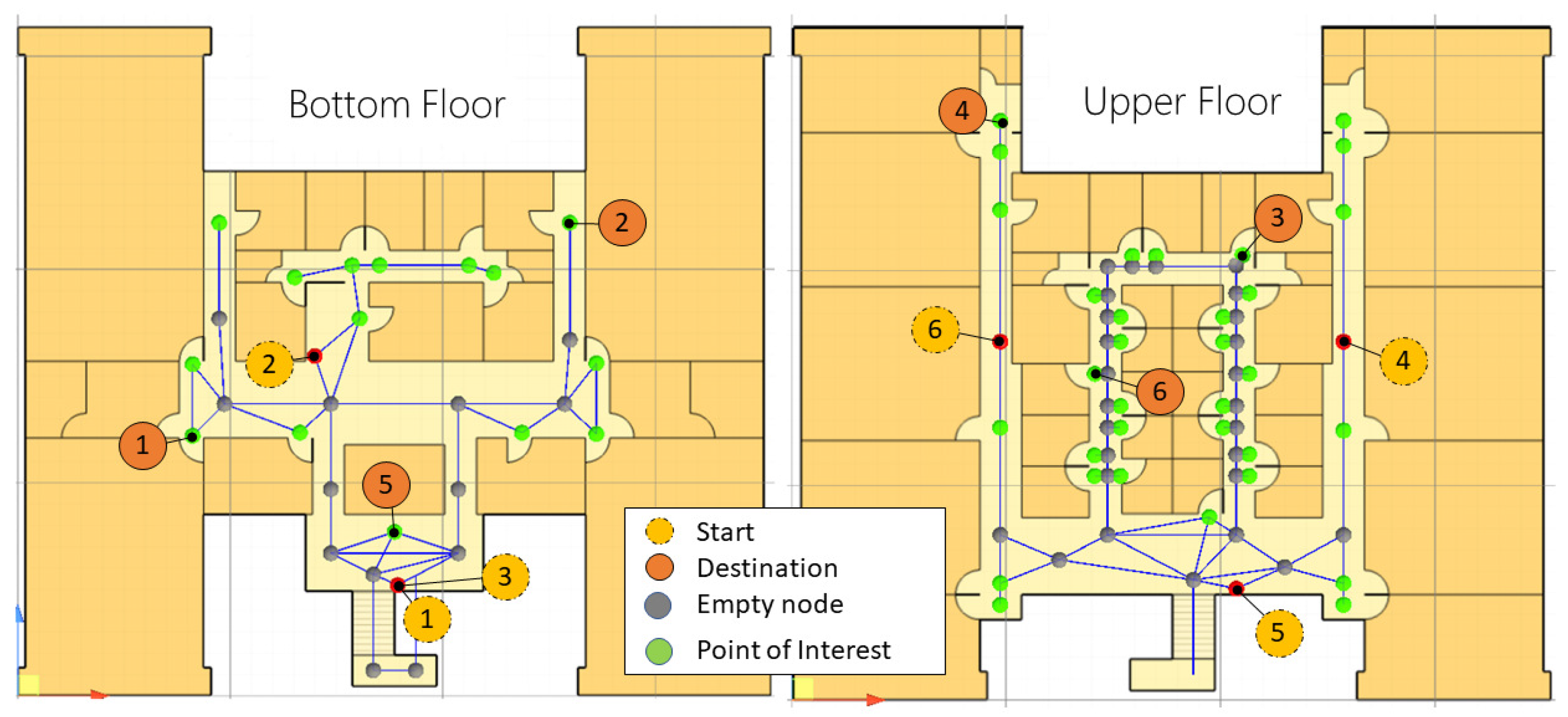

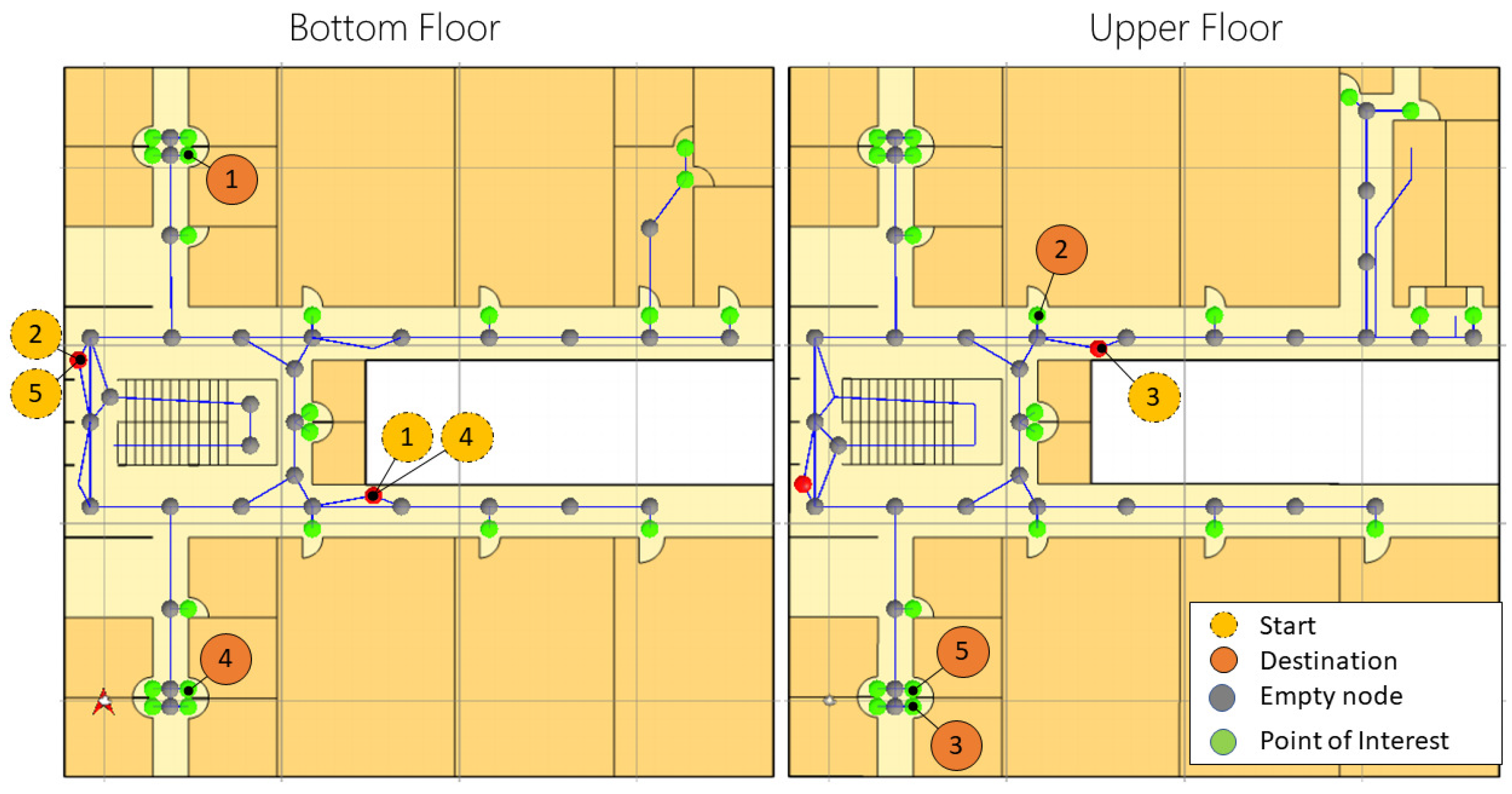

Academic environments are suitable for navigation systems because of their high number of external visitors and the availability of public information. Thus, the proposed methodology was tested by implementing a prototype system for mobile devices in the academic context, where the points of interest are offices, laboratories, and classrooms. In particular, two buildings (from independent institutions) were modeled through a graph-based spatial model (routes, places) with points of interest distributed across different floors. Moreover, an ontology was defined and implemented to support a knowledge base (KB) containing information about the academic or administrative staff associated with the points of interest. Such a KB helps disseminate information through the Web and provides contextual information as virtual objects during navigation. Both scenarios were evaluated using different navigation tasks and participants.

The contributions of this paper can be summarized as follows:

The design of a methodology for indoor navigation systems incorporating Augmented Reality and Semantic Web technologies.

The implementation and availability of a functional indoor navigation prototype application and ontology for academic environments.

This paper is organized as follows. Section 2 describes related work on the development of indoor navigation systems using AR and Semantic Web technologies. Section 3 describes the proposed methodology and its modules. Next, Section 4 presents the implementation process. Section 5 describes the experiments and results, stating the evaluation criteria and study cases. Afterward, Section 6 discusses the prototype’s performance, and finally, Section 7 states the conclusions and further work.

3. Methodology Design

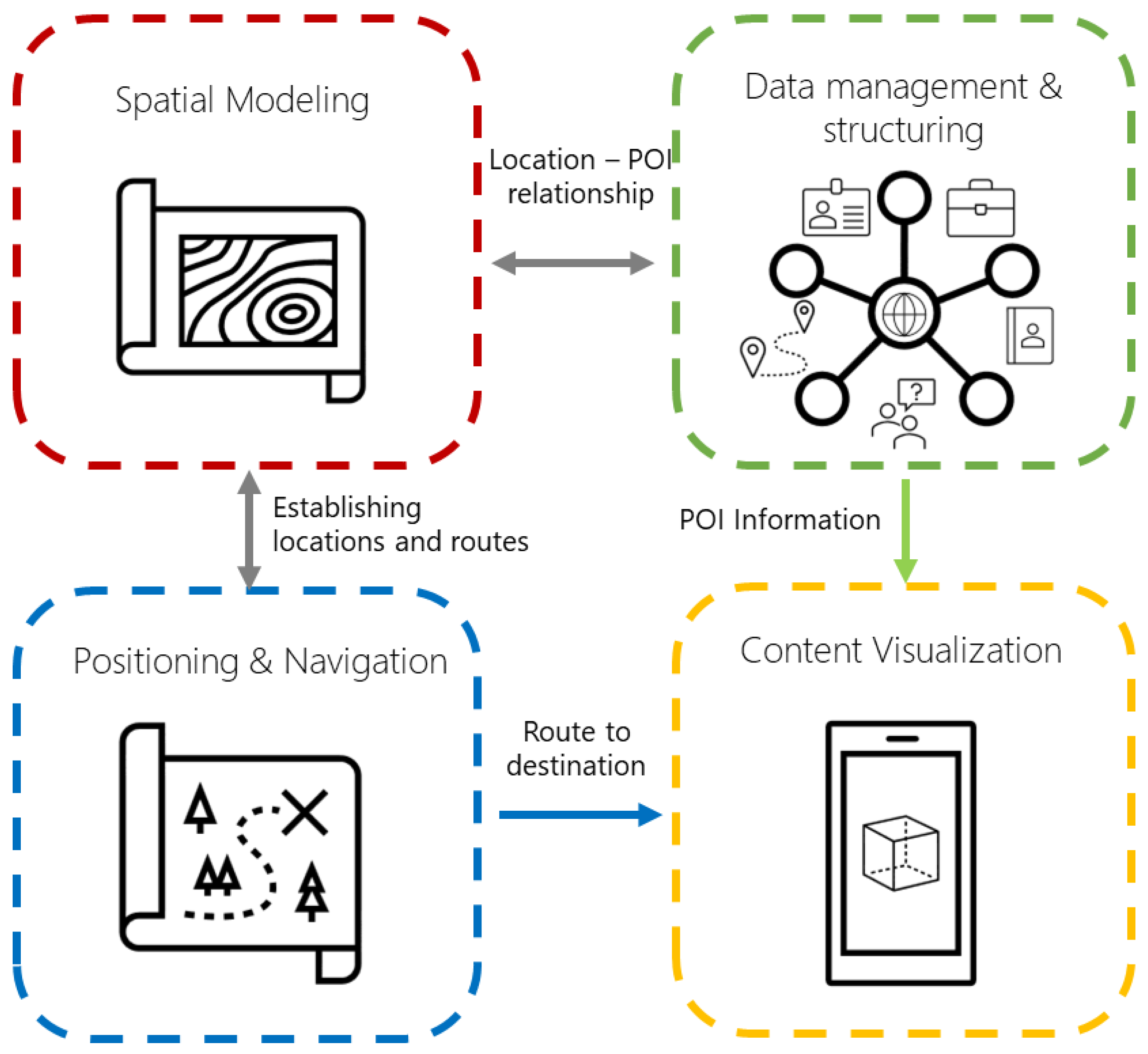

This section presents the proposed methodology for developing indoor navigation systems for mobile devices. It integrates AR and Semantic Web technologies as means for navigation and for consuming contextual information. Following the components identified in the related work on indoor navigation systems, the proposal is based on four modules, presented in the order in which the methodology is carried out: (a) Spatial modeling, for representing places, points of interest, and routes; (b) Data management and structuring, for representing and consuming contextual data; (c) Positioning and navigation, for tracking the user’s position and finding paths; and (d) Content visualization, for displaying navigation routes and point-of-interest information through AR elements.

Figure 1 summarizes the modules of the proposed architecture. Although the following subsections present general design descriptions of the modules in the methodology (without focusing on specific programming tools), the next section presents particular details (strategies, tools, recommendations) for a system implementation following such modules.

3.1. Spatial Modeling

The objective of this module is to create a spatial model that computationally represents a building. The model uses a coordinate system to locate the points of interest, which helps in generating navigation routes to guide the user. This coordinate system requires three axes to represent a multistory building, and it must also account for the orientation of the mobile device so that virtual content is presented according to the user’s position and orientation.

In other words, a spatial model $m$ is composed of a set of points $P$ and a subset of points of interest $P_{I} \subseteq P$. In this regard, a point $p \in P$ takes the form $p = (x, y, z)$, where $x \in X$, $y \in Y$, and $z \in Z$, and $X$, $Y$, $Z$ define the sets of valid coordinates for the modeled scenario. Additionally, a rotation can be applied to the model about the $y$ axis, keeping the $y$ axis fixed.
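As a minimal sketch of this definition, the Python code below stores a spatial model as a set of $(x, y, z)$ points with a subset of points of interest, and applies a rotation about the vertical $y$ axis, which leaves every $y$ (floor) coordinate unchanged. The names `SpatialModel`, `rotate_about_y`, and `rotate_model` are hypothetical, and the sign convention is the standard right-handed rotation matrix, an assumption that may differ from the engine used in the implementation.

```python
import math
from dataclasses import dataclass, field

Point = tuple[float, float, float]  # (x, y, z); y is the vertical (floor) axis


@dataclass
class SpatialModel:
    """Hypothetical container: all points P and the points of interest P_I."""
    points: set[Point]
    pois: set[Point] = field(default_factory=set)

    def __post_init__(self) -> None:
        # Points of interest must be a subset of the model's points.
        if not self.pois <= self.points:
            raise ValueError("every point of interest must belong to the model")


def rotate_about_y(p: Point, theta: float) -> Point:
    """Rotate p by theta radians about the y axis; y itself is unchanged."""
    x, y, z = p
    c, s = math.cos(theta), math.sin(theta)
    return (c * x + s * z, y, -s * x + c * z)


def rotate_model(m: SpatialModel, theta: float) -> SpatialModel:
    """Apply the same rotation to every point, so P_I stays a subset of P."""
    def rot(p: Point) -> Point:
        return rotate_about_y(p, theta)
    return SpatialModel({rot(p) for p in m.points}, {rot(p) for p in m.pois})
```

Such a rotation could, for instance, align a model built from a floor plan with the building’s real orientation without altering floor levels; this use is illustrative rather than stated in the text.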

3.2. Data Management and Structuring

This module aims to create a Semantic Web knowledge base (KB) that stores information about the points of interest in the spatial model. The KB is structured and supported by an ontology that models the navigation environment and the information presented to the user at navigation time. Consuming data through the KB benefits the application because of its well-defined structure, its flexibility, and its association with real-world entities (which allows more intuitive queries). According to the Semantic Web guidelines, the ontology must represent all concepts of the domain of interest in a clear and well-defined form. Moreover, the ontology must reuse existing ontologies or accepted vocabularies, such as the iLOC ontology [26], ifcOWL [29], FOAF [30], and DBpedia [31], to mention a few. A new ontology can then be extended and adapted to the indoor navigation environment at hand. Therefore, the entities of the navigation environment, the points of interest, the spatial model, and any other contextual information can be represented in the KB.

For practical reasons, most KBs are structured and represented according to the Resource Description Framework (RDF) [32] as a directed edge-labeled graph [33]. Formally, RDF is a data model (whose main component is the RDF triple) that defines elements such as Internationalized Resource Identifiers (IRIs) [34], which identify resources globally on the Web; blank nodes, special components used for grouping data (acting as parent nodes); and literals, which represent strings or datatype values. Thus, given infinite sets of IRIs $I$, blank nodes $B$, and literals $L$, an RDF triple takes the form $(s, r, o) \in (I \cup B) \times I \times (I \cup B \cup L)$, where $s$ is the subject, $r$ the property (predicate), and $o$ the object. For example, entities such as points of interest, identified by IRIs $s \in I$, are expected to be uniquely associated with their corresponding point in the spatial model by means of some property $r \in I$. Other properties in the ontology then help define additional information about these entities and enrich the points in the spatial model (this information is used as contextual information during navigation). Note that the KB is queried at navigation time to present the user with information about the indoor navigation environment (at the beginning), contextual information while traversing the route, and information upon arriving at points of interest (virtual landmarks).
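To make the triple definition concrete, the following Python sketch models the three term sets and checks the domain constraint $(I \cup B) \times I \times (I \cup B \cup L)$. It is an illustration only: the classes are hypothetical stand-ins for what an RDF library would provide, the `arNav` namespace is the one used in Listing 1, and the node identifier "12" is an invented example value.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class IRI:
    value: str


@dataclass(frozen=True)
class BNode:
    label: str


@dataclass(frozen=True)
class Literal:
    value: str
    datatype: str = "http://www.w3.org/2001/XMLSchema#string"


def is_valid_triple(s, r, o) -> bool:
    """Subject in I or B, property in I, object in I, B, or L."""
    return (isinstance(s, (IRI, BNode))
            and isinstance(r, IRI)
            and isinstance(o, (IRI, BNode, Literal)))


ARNAV = "http://www.indoornav.com/arNav#"

# Hypothetical triple linking a point of interest to a spatial-model node id.
lab_node = (IRI(ARNAV + "Research_Lab"), IRI(ARNAV + "nodeID"), Literal("12"))
```

A literal can never be a subject and only an IRI can be a property, which is what lets every point-of-interest entity be addressed globally and linked unambiguously to a node.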

3.3. Positioning and Navigation

After modeling the coordinate system and the KB with the point of interest (POI) information, this module aims to obtain the user’s position within the spatial model and to guide navigation along a computed route. To this end, a positioning method is required that tracks the user’s movements in two phases:

Establishing the initial location. This phase establishes the user’s initial position within the spatial model, presenting the whole navigation environment and the virtual objects relative to that position. Thus, an initial position can be defined as a point $p_0 = (x_0, y_0, z_0)$ of the spatial model, which can be established in multiple ways, as previously mentioned: for example, through marker detection [15], Lbeacons and sensors [21], and WiFi fingerprinting [27], to mention a few.

Tracking the user’s movements. Once the initial position is established, the user’s position is tracked and updated so that all virtual objects involved in navigation are placed correctly (displayed in positions relative to the tracked movements). Thus, as the user moves through the building, their position is commonly updated from a point $p_t$ to an adjacent point $p_{t+1}$ using the mobile device’s sensors (e.g., WiFi, Bluetooth, compass) [21,22,23] or computer vision [24,28], to mention a few.
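One simple way to realize the update from a point to an adjacent point is to snap the continuously tracked coordinates to the nearest of the current point and its neighbours. The Python sketch below assumes that the tracker (sensors or computer vision) already yields a position in model coordinates; the function name, the dictionary-based graph, and the snapping rule itself are illustrative assumptions, not the method of the cited works.

```python
import math

Point = tuple[float, float, float]


def update_position(current: str,
                    tracked: Point,
                    coords: dict[str, Point],
                    adjacency: dict[str, list[str]]) -> str:
    """Hypothetical snapping rule: return whichever of the current node and
    its adjacent nodes lies closest to the position reported by the tracker."""
    candidates = [current, *adjacency.get(current, [])]
    return min(candidates, key=lambda n: math.dist(coords[n], tracked))
```

Restricting the candidates to adjacent nodes keeps the user from "jumping" across walls to a node that is close in space but not reachable along a corridor.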

A positioning and tracking strategy using the mobile device’s motion sensors and visual features in the environment is defined later (Section 4). The navigation function, in turn, aims to help users reach a point of interest from a starting position. A pathfinding algorithm is applied to the spatial model to create the shortest navigation route between the two points. In other words, the function $\mathit{path}(p_0, p_d, m)$, where $p_0$ is the initial position, $p_d$ a point of interest, and $m$ a spatial model, returns a succession of points $\langle p_0, p_1, \ldots, p_d \rangle$ in $m$ to travel from $p_0$ to $p_d$. For example, if the spatial model is represented by a graph [20,22,28], a pathfinding algorithm [35] such as A* or Dijkstra’s algorithm can be applied.
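Since the text names A* as one option, the following Python sketch runs A* over a graph-based spatial model and returns the succession of node identifiers from the start to the destination, as the pathfinding function above does. It assumes that edge costs are straight-line distances between node coordinates and uses the same distance as the heuristic; the dictionary-based graph layout and the function name are hypothetical.

```python
import heapq
import math

Point = tuple[float, float, float]


def a_star(start: str, goal: str,
           coords: dict[str, Point],
           adjacency: dict[str, list[str]]) -> list[str]:
    """Shortest path from start to goal (edge cost = Euclidean distance).
    Returns an empty list if the goal is unreachable."""
    def h(n: str) -> float:
        return math.dist(coords[n], coords[goal])

    g = {start: 0.0}               # best known cost from start
    parent: dict[str, str] = {}    # predecessor on the best known path
    frontier = [(h(start), start)]
    closed: set[str] = set()
    while frontier:
        _, node = heapq.heappop(frontier)
        if node == goal:
            path = [node]
            while node in parent:  # walk predecessors back to the start
                node = parent[node]
                path.append(node)
            return path[::-1]
        if node in closed:
            continue               # stale queue entry
        closed.add(node)
        for nxt in adjacency.get(node, []):
            cost = g[node] + math.dist(coords[node], coords[nxt])
            if cost < g.get(nxt, math.inf):
                g[nxt] = cost
                parent[nxt] = node
                heapq.heappush(frontier, (cost + h(nxt), nxt))
    return []
```

Because the straight-line distance never overestimates the remaining corridor length (and satisfies the triangle inequality), the heuristic is admissible and consistent, so the first time the destination is popped the path is a shortest one. Dijkstra’s algorithm is the special case with a zero heuristic.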

3.4. Content Visualization

This final module aims to present the information about the points of interest stored in the KB and to visualize navigation routes through virtual objects (using AR and the components described above), choosing the best way to present such content without hindering its consumption. To this end, two components are proposed:

Data presentation. This component presents the information about the points of interest through non-augmented interfaces. The goal is to provide an interface for reading and viewing such information concisely. Moreover, it allows the user to set up navigation by selecting a destination.

Navigation guidance. In contrast, navigation guidance is provided by augmented objects visible through the mobile device’s screen, so that the user can follow the guidance while still watching their step. Based on the tracked user position, such augmented objects stay fixed at the points of the computed route so that the user can follow it. Moreover, additional augmented objects can be displayed during navigation, such as points of interest discovered within a given radius of the user’s position (contextual information) or a notice when the destination is reached.
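The radius-based discovery of nearby points of interest can be sketched as a simple distance filter. The Python code below is a hypothetical illustration: it measures horizontal distance in the x-z plane, treats points whose y coordinate is within `same_floor_tol` units of the user’s as being on the same floor, and returns matches nearest first. The tolerance, the function name, and the dictionary layout are assumptions, not values from the text.

```python
import math

Point = tuple[float, float, float]


def nearby_pois(user: Point, pois: dict[str, Point], radius: float,
                same_floor_tol: float = 1.0) -> list[str]:
    """Names of POIs within `radius` (horizontal distance) of the user,
    restricted to roughly the same floor, sorted nearest first."""
    ux, uy, uz = user
    hits = []
    for name, (x, y, z) in pois.items():
        if abs(y - uy) > same_floor_tol:
            continue  # ignore POIs on other floors
        d = math.hypot(x - ux, z - uz)
        if d <= radius:
            hits.append((d, name))
    return [name for _, name in sorted(hits)]
```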

4. Methodology Implementation

This section presents a prototype implementation (as a mobile device system) based on the proposed methodology. This indoor navigation system aims to support users in finding an office, laboratory, or classroom, or in finding a place according to a staff member (e.g., professor x assigned to office y). Although the proposed methodology can be implemented with different tools, the prototype was developed with Unity 3D (a development engine that is compatible with AR frameworks and handles coordinate systems for placing virtual objects) and designed for scenarios related to academic spaces. Thus, the ontology and navigation paths were designed to cover the needs of students and guests in such spaces. Although this implementation can be reused for modeling other types of buildings (such as hospitals, shopping malls, and museums), the spatial model and the KB must be developed and adapted to the scenario and data. The implementation details for each module of the methodology are described next. The project’s repository is available online (https://github.com/RepoRodhe, accessed on 7 June 2021).

4.1. Spatial Model

To capture the relationships between locations, the spatial model is represented as a graph with several nodes and points of interest distributed over different building floors. The process is as follows:

Digitalization. Scale plans of the building are digitized and imported into Unity3D, where they serve as a reference for placing the graph’s nodes and for visualizing routes. To import the plans, their scale (pixels per unit) must be defined; in this sense, 1 unit in Unity3D represents approximately 1 m in the real environment (digitized plans and scale examples are presented later in the experiments section). Moreover, to keep the images homogeneous (without measurement annotations, indications, etc.), it is recommended to import the plans using a design software tool such as SketchUp [36].

Graph creation. Within the system, nodes refer to points of interest or landmarks within buildings and store information that aids in extracting information from the KB (ready for Semantic Web usage). Edges, on the other hand, represent corridors and help in creating and visualizing navigation paths. Native Unity3D objects were used to represent the graph’s nodes and edges. Thus, each node has its corresponding location within the engine’s native coordinate system, using three axes to enable multi-floor positioning. In turn, each node contains an array of adjacent nodes (a GameObject array in C#), which represents the edges of the graph. Although the methodology does not limit the number of floors of a building, the implemented prototype uses a two-floor spatial model for each of the two tested scenarios (presented later in the experiments). It is worth mentioning that Unity3D’s performance is affected mainly by the objects visible at any given time [37]; thus, many objects can be used to model any building.
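The following Python sketch mirrors this representation outside Unity3D: each node keeps a position in engine units and a list of adjacent nodes (the counterpart of the GameObject array), and a helper maps a pixel on a digitized plan to engine units given the plan’s pixels-per-unit scale. All names, the choice to store the floor height in y, and the mapping of the image’s vertical axis to z are illustrative assumptions; real plans may also need that image axis flipped.

```python
from dataclasses import dataclass, field


@dataclass(eq=False)  # identity equality: nodes reference each other cyclically
class Node:
    """Hypothetical counterpart of a graph-node GameObject."""
    name: str
    position: tuple[float, float, float]
    adjacent: list["Node"] = field(default_factory=list, repr=False)


def plan_to_units(px: float, py: float, pixels_per_unit: float,
                  floor_height: float) -> tuple[float, float, float]:
    """Map a plan pixel to (x, y, z) engine units (1 unit ~ 1 m);
    y holds the floor height, the plan's vertical axis becomes z."""
    return (px / pixels_per_unit, floor_height, py / pixels_per_unit)


def connect(a: Node, b: Node) -> None:
    """Corridors are walkable both ways, so store the edge on both nodes."""
    a.adjacent.append(b)
    b.adjacent.append(a)
```

Usage: with a plan digitized at 20 pixels per unit, the pixel (200, 100) on the ground floor becomes `plan_to_units(200, 100, 20, 0.0)`, that is, the engine position (10.0, 0.0, 5.0).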

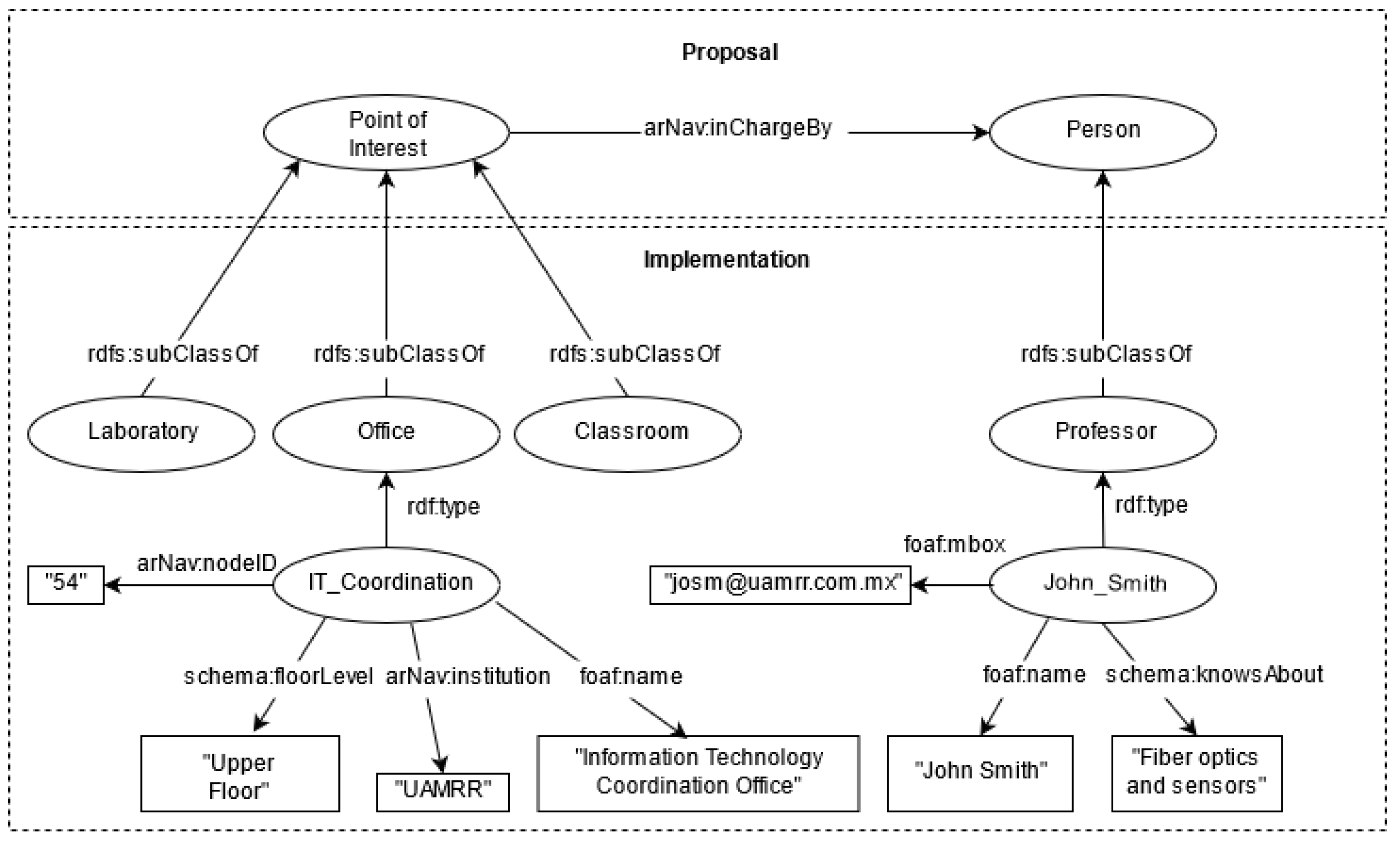

4.2. Data Structuring

For the construction and implementation of the ontology, a hierarchy of concepts was designed to store useful information for the user about the points of interest. An ontology for describing point-of-interest information was defined, based on (and aligned with) the iLOC ontology [26] (for publishing indoor location and navigation data). However, since the prototype’s domain is the academic context, the ontology is enriched with classes representing offices, laboratories, and classrooms, storing information about the teaching staff associated with each location, their activities, academic specialties, and contact information. Particular details of the construction, population, and consumption of the KB (supported by the ontology) are as follows:

The Protégé tool [38] was used to build the ontology, maintaining a class hierarchy to reduce redundant properties, in compliance with the Linked Data principles [39]. The scheme of the proposed and implemented ontology is shown in Figure 2. In this scheme, arNav is the prefix of the application, Proposal denotes the minimal part of the ontology that works in any environment, and Implementation gives particular definitions for the domain at hand, in this case the academic domain. Note that some labels and properties (alignments, equivalences) are not shown to keep the figure legible.

Once the ontology was defined, the information for creating the KB can be entered manually (RDF files) or through a system assistant. For the implemented system, the strategy applied was to load information from a spreadsheet file into the ontology using the Protégé plugin Cellfie (https://github.com/protegeproject/cellfie-plugin, accessed on 7 June 2021), which allows the creation of classes, entities, attributes, and relationships through the specification of rules. Note that information from multiple navigation scenarios can be stored in the same KB. In this sense, the KB for the two cases in the experiments contains 1347 RDF triples (81 points of interest out of a total of 149 entities).
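Cellfie uses its own rule language inside Protégé; the Python sketch below only illustrates the general idea of applying one mapping rule to each spreadsheet row to produce triples. The column names (`id`, `class`, `name`, `node`), the class name `Laboratory`, and the use of `arNav:nodeID` as the linking property are assumptions loosely based on Listing 1, and literals and IRIs are both kept as plain strings for brevity.

```python
import csv
import io

ARNAV = "http://www.indoornav.com/arNav#"
FOAF = "http://xmlns.com/foaf/0.1/"
RDF_TYPE = "http://www.w3.org/1999/02/22-rdf-syntax-ns#type"


def rows_to_triples(sheet: str) -> list[tuple[str, str, str]]:
    """Apply one fixed (hypothetical) mapping rule to every spreadsheet row:
    'id' names the individual, 'class' gives its type, 'name' becomes
    foaf:name, and 'node' links it to a spatial-model node."""
    triples = []
    for row in csv.DictReader(io.StringIO(sheet)):
        subject = ARNAV + row["id"]
        triples.append((subject, RDF_TYPE, ARNAV + row["class"]))
        triples.append((subject, FOAF + "name", row["name"]))
        triples.append((subject, ARNAV + "nodeID", row["node"]))
    return triples
```

Keeping the mapping in a rule rather than in hand-written RDF is what allows staff or room data maintained in a spreadsheet to be reloaded into the KB whenever it changes.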

The client-server model was used to keep the KB updated and to reduce the mobile device’s processing load for information consumption. For this purpose, the Virtuoso Open-Source Edition server [40] was used, since it supports RDF/OWL storage and offers a SPARQL endpoint for consumption. For testing purposes, the prototype was configured on a local network using a 2.4 GHz WiFi connection; the SPARQL queries were submitted from the mobile device to the server as HTTP requests using Unity3D’s native networking library, and the JSON responses were then parsed for display in the prototype interface. Five different SPARQL queries are performed through the menus and interfaces offered by the prototype, extracting the following information: (1) information linking the nodes with the points of interest of the navigation environment, (2) information about all points of interest by category, (3) information about all academic staff, (4) detailed information about a selected point of interest, and (5) detailed information about a person. Listing 1 presents an example SPARQL query that retrieves all the information about a point of interest of a certain institution and the staff members related to that location. The information retrieved by such a query is later used by the next module for visualizing content.

Listing 1. SPARQL query example.

```sparql
PREFIX arNav: <http://www.indoornav.com/arNav#>
PREFIX foaf: <http://xmlns.com/foaf/0.1/>
# schema.org namespace (assumed) for the sch: terms used below
PREFIX sch: <http://schema.org/>

SELECT * WHERE {
  # Mandatory data: node, name, floor, and owning institution of the POI
  arNav:Research_Lab arNav:nodeID ?id;
                     foaf:name ?name;
                     sch:floorLevel ?floor;
                     arNav:institution "UAT".
  # Staff member in charge of the location, if any
  OPTIONAL {
    arNav:Research_Lab arNav:inChargeBy ?person.
    ?person foaf:firstName ?firstName;
            arNav:fatherName ?lastName;
            arNav:extension ?extension.
    OPTIONAL { ?person foaf:title ?nameTitle. }
  }
  OPTIONAL { arNav:Research_Lab sch:description ?desc. }
  OPTIONAL { arNav:Research_Lab arNav:areas ?areas. }
}
```
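The prototype submits queries such as Listing 1 from C# through Unity3D’s networking library. As a language-neutral illustration of the same exchange, the Python sketch below builds an HTTP GET request carrying the query in the `query` parameter (as in the SPARQL 1.1 Protocol), asks for the SPARQL 1.1 JSON results format, and flattens the `results.bindings` array into one dictionary per solution. The endpoint address is an assumption (Virtuoso commonly serves `/sparql` on port 8890), and the function names are hypothetical.

```python
import json
import urllib.parse
import urllib.request


def build_request(endpoint: str, query: str) -> urllib.request.Request:
    """GET request asking a SPARQL endpoint for JSON-formatted results."""
    url = endpoint + "?" + urllib.parse.urlencode({"query": query})
    return urllib.request.Request(
        url, headers={"Accept": "application/sparql-results+json"})


def parse_bindings(body: str) -> list[dict[str, str]]:
    """Flatten SPARQL 1.1 JSON results into one dict per solution.
    Variables left unbound by an OPTIONAL block are absent from that dict."""
    data = json.loads(body)
    return [{var: cell["value"] for var, cell in binding.items()}
            for binding in data["results"]["bindings"]]


def run_query(endpoint: str, query: str,
              timeout: float = 5.0) -> list[dict[str, str]]:
    """Send the query and return the parsed solutions (needs a live server)."""
    with urllib.request.urlopen(build_request(endpoint, query),
                                timeout=timeout) as resp:
        return parse_bindings(resp.read().decode("utf-8"))
```

Because OPTIONAL variables such as `?nameTitle` may be missing from a solution, client code should read them with a default (for example, `row.get("nameTitle", "")`) rather than assume every key is present.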

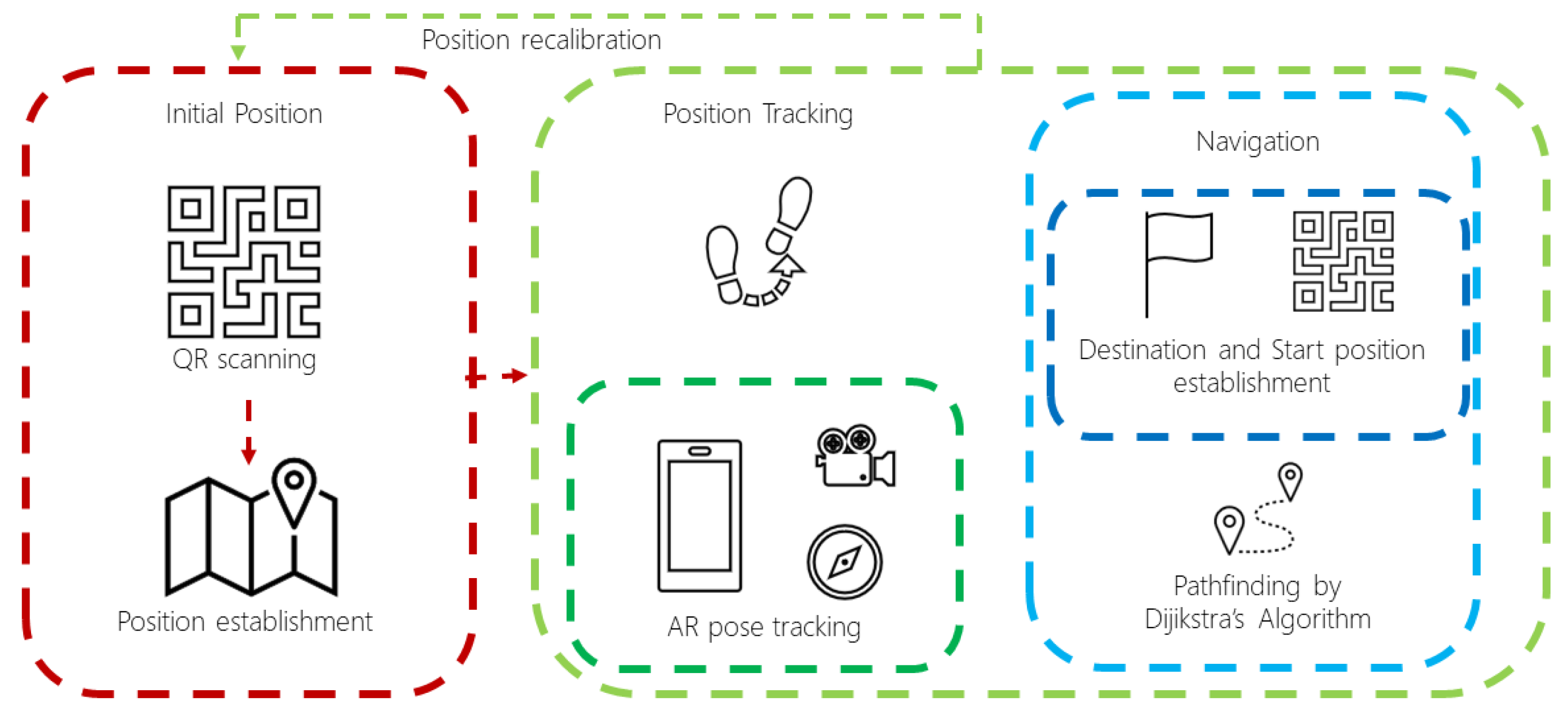

4.3. Positioning and Navigation

For the implementation of the positioning function, the AR Foundation framework [41], offered for the Unity3D engine, was used. It integrates functionality from other AR frameworks on the market, such as Google’s ARCore [42] and Apple’s ARKit [43], so a mobile device compatible with either of these two frameworks (as listed on their respective websites) is required to use the AR functions through AR Foundation. Thus, positioning and navigation are addressed as follows:

Initial position. To establish the user’s initial position within the spatial model, QR codes were used to store information about the building and the location in the Unity3D coordinate system. The ZXing library [44] was used to decode the QR codes. It is worth mentioning that if the user’s position is lost, a QR code must be scanned again (recalibration/repositioning), after which navigation resumes automatically.

Tracking. Once the initial position is established, the user’s location is tracked using the pose tracking component (TrackedPoseDriver) from the Unity Engine Spatial Tracking library, attached to the AR Camera. This component captures visually distinctive features in the environment to serve as anchors and tracks the device’s movements and rotations relative to the position of the detected features. It is also supported by the mobile phone’s sensors (gyroscope and accelerometer). The detected movement changes are then processed by the ARSessionOrigin component, provided by the AR Foundation framework, to translate the movements into the Unity Engine coordinate system.

Navigation. For navigation, the graph denoting the spatial model is represented as an adjacency matrix (built from each node’s array of adjacent nodes), and Dijkstra’s pathfinding algorithm [35] is then applied to find the shortest route to the destination.

The interaction of the components in the positioning and navigation implementation is summarized in Figure 3.
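The two navigation steps described above, converting each node’s adjacency array into a matrix and running Dijkstra’s algorithm on it, can be sketched in Python as follows. Edge weights are assumed to be Euclidean distances between node positions (the text does not state the weighting), and the function names are hypothetical. The O(V²) matrix version is shown because it matches the adjacency-matrix representation; a priority-queue version scales better for large graphs.

```python
import math


def adjacency_matrix(coords, adjacency):
    """Build an n x n weight matrix from each node's adjacent-nodes array;
    math.inf marks node pairs with no corridor between them."""
    names = list(coords)
    index = {n: i for i, n in enumerate(names)}
    w = [[math.inf] * len(names) for _ in names]
    for n, neighbours in adjacency.items():
        for m in neighbours:
            w[index[n]][index[m]] = math.dist(coords[n], coords[m])
    return names, w


def dijkstra(names, w, start, goal):
    """Classic O(V^2) Dijkstra on a weight matrix; returns the node path,
    or an empty list when the goal cannot be reached."""
    n = len(names)
    s, t = names.index(start), names.index(goal)
    dist = [math.inf] * n
    prev = [None] * n
    done = [False] * n
    dist[s] = 0.0
    for _ in range(n):
        # Pick the unfinished node with the smallest tentative distance.
        u = min((i for i in range(n) if not done[i]), key=lambda i: dist[i])
        if dist[u] == math.inf:
            break  # every remaining node is unreachable
        done[u] = True
        for v in range(n):
            if not done[v] and dist[u] + w[u][v] < dist[v]:
                dist[v] = dist[u] + w[u][v]
                prev[v] = u
    if dist[t] == math.inf:
        return []
    path, u = [], t
    while u is not None:  # follow predecessors back to the start
        path.append(names[u])
        u = prev[u]
    return path[::-1]
```

The returned list of node names is exactly the sequence of positions that a route renderer would need to connect.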

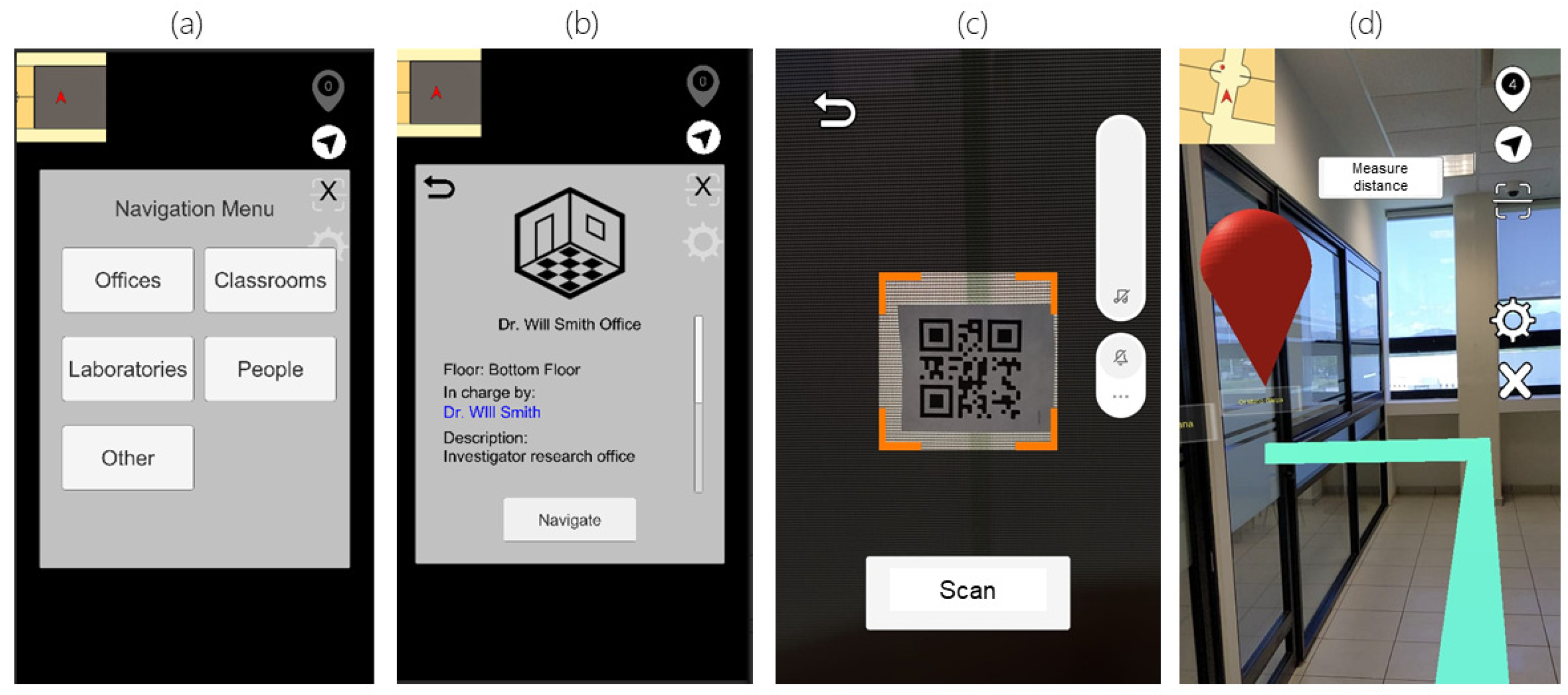

4.4. Visualizing Content

Taking advantage of the AR framework capabilities to display content, the user can visualize the virtual navigation guidance and the points of interest information stored in the KB through different interfaces.

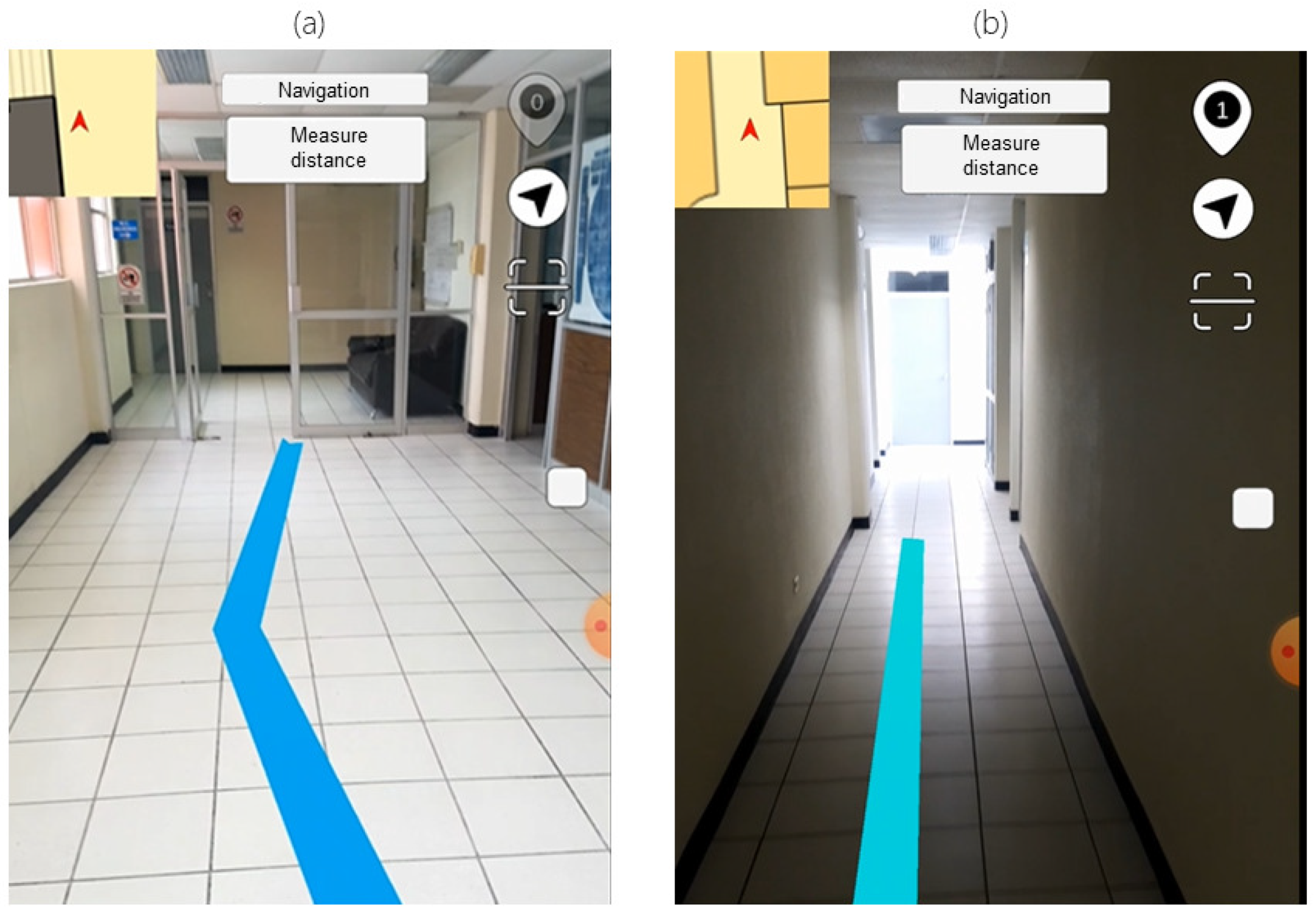

The visualization starts by scanning a QR code, which loads the corresponding data for the building where the user is located. Afterward, a menu is displayed that categorizes the entries in the ontology according to the stored classes (laboratories, offices, and classrooms). At this step, the user can select a point of interest to view its stored information and set its location as the destination to start navigation. This action prompts the user to scan a QR code to establish the initial location, after which the shortest route between the two locations is calculated (using Dijkstra’s algorithm). The generated route is displayed as an augmented virtual line (LineRenderer) that connects all the nodes involved in the route and can be viewed through the mobile device’s screen. Examples of all the implemented interfaces are displayed in Figure 4.

6. Discussion

This section discusses the features, results, and limitations presented by the navigation system based on the proposed methodology, according to the evaluation criteria and the prepared navigation scenarios.

6.1. Implementation

As previously mentioned, the implemented prototype is a mobile application system whose main components are AR for content display and the Semantic Web for data management. Even though other tools could be incorporated into the prototype, the purpose of each module in the methodology is fulfilled without incorporating external elements for positioning or sensing. However, different tools will be considered for a future version of the system.

6.2. Position Setting

Establishing the initial position by scanning QR codes performed largely well, with an accuracy close to 100% in both cases where the prototype was tested. Issues occurred in three instances in total, placing the user at wrong coordinates within the system and causing the AR elements to be displayed in the wrong location, since their position is relative to the user’s initial location. In these cases, while the QR code was being scanned, the system falsely registered several changes in the detected feature points, causing the initial positioning to be incorrect. The main reason for this issue is the way AR establishes and tracks the user’s position, which depends on the environment’s characteristic features (e.g., objects’ edges and textures) to register the user’s movement [47]. These feature points are obtained through algorithms (SIFT, FAST, BRISK) that process the pixels of the captured images under conditions such as color differences among the pixels around a central point, and they are therefore affected by the illumination and monotony of the environment. The current solution to this issue is to re-scan the QR code to fix the location.

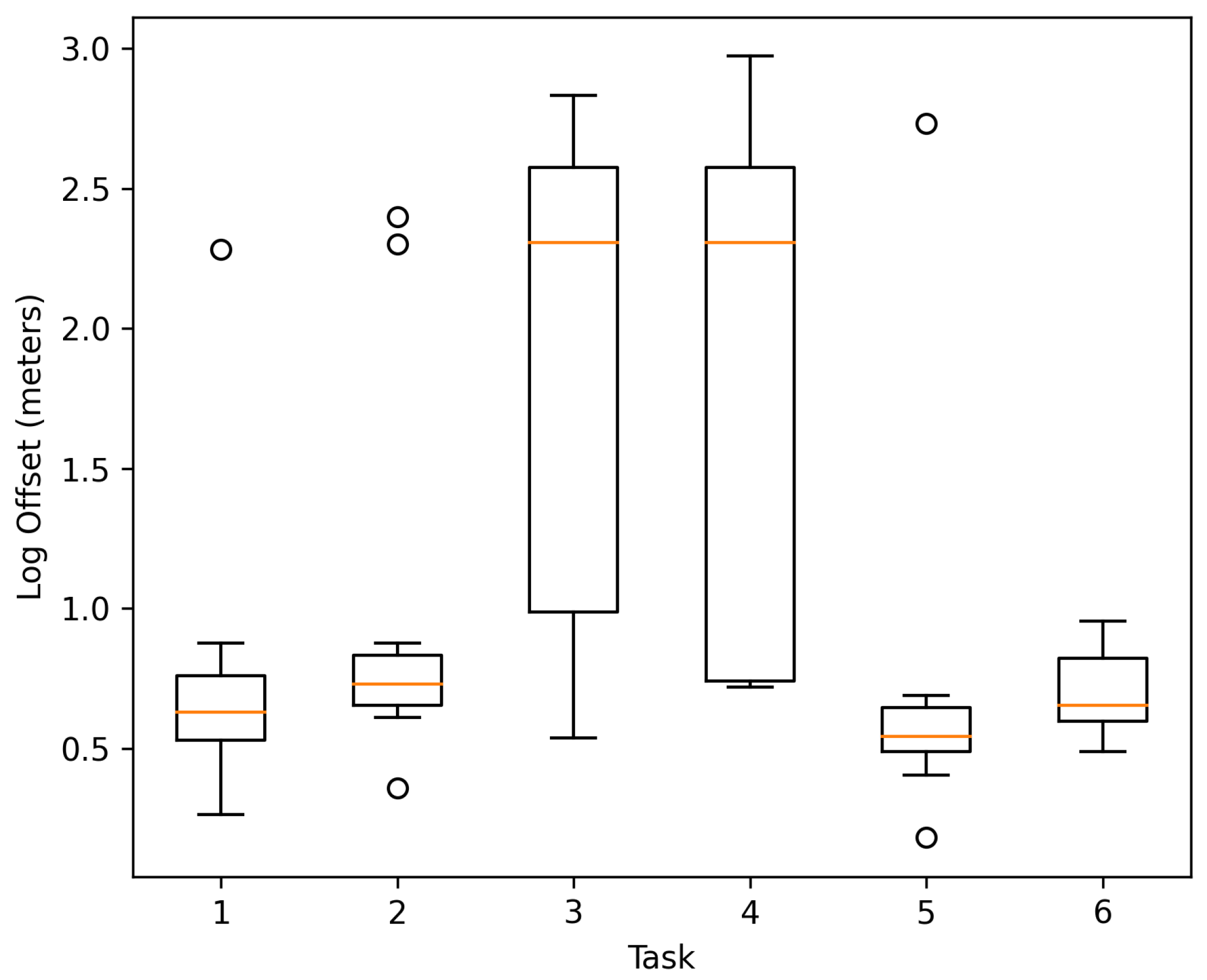

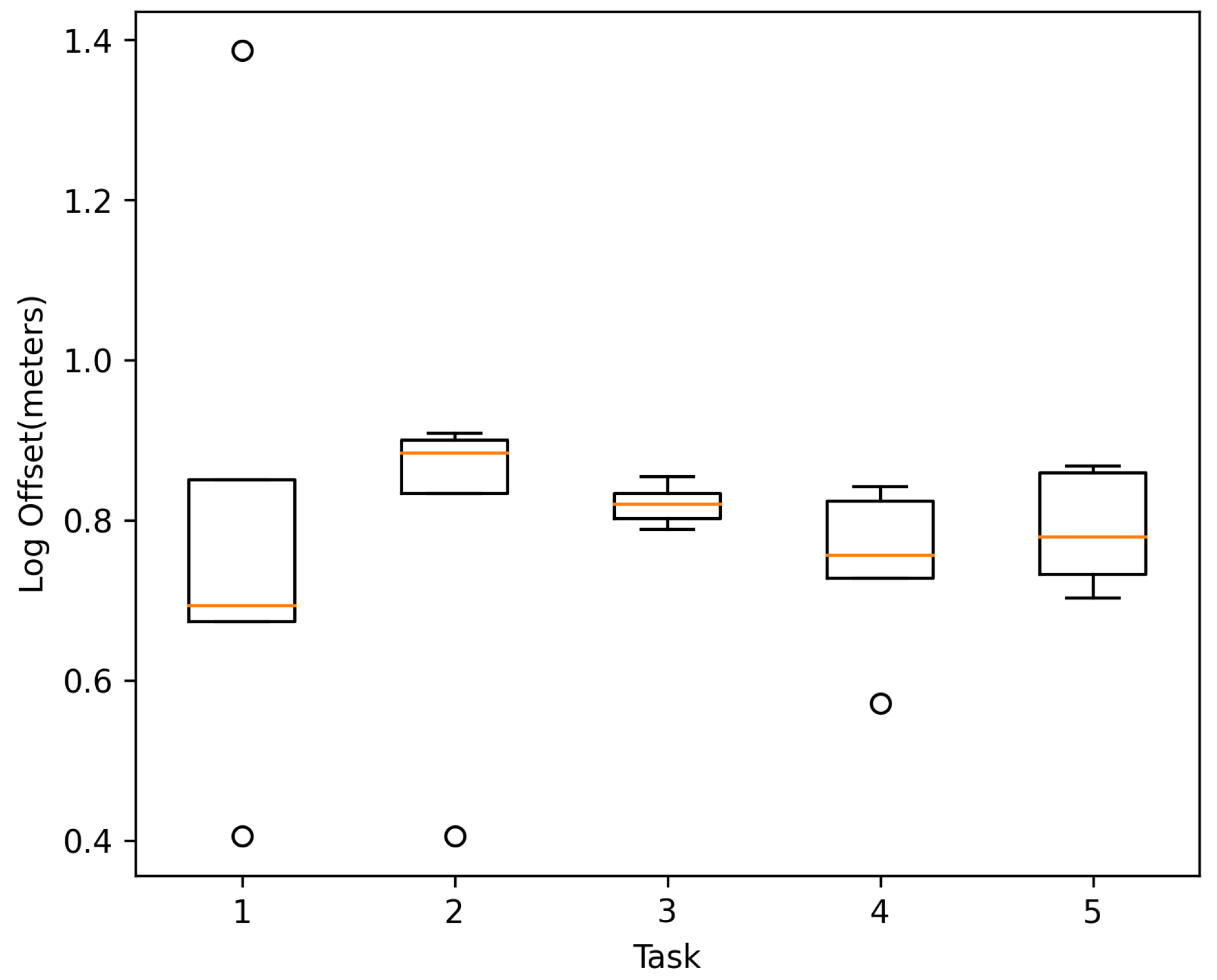

6.3. Distance Offset

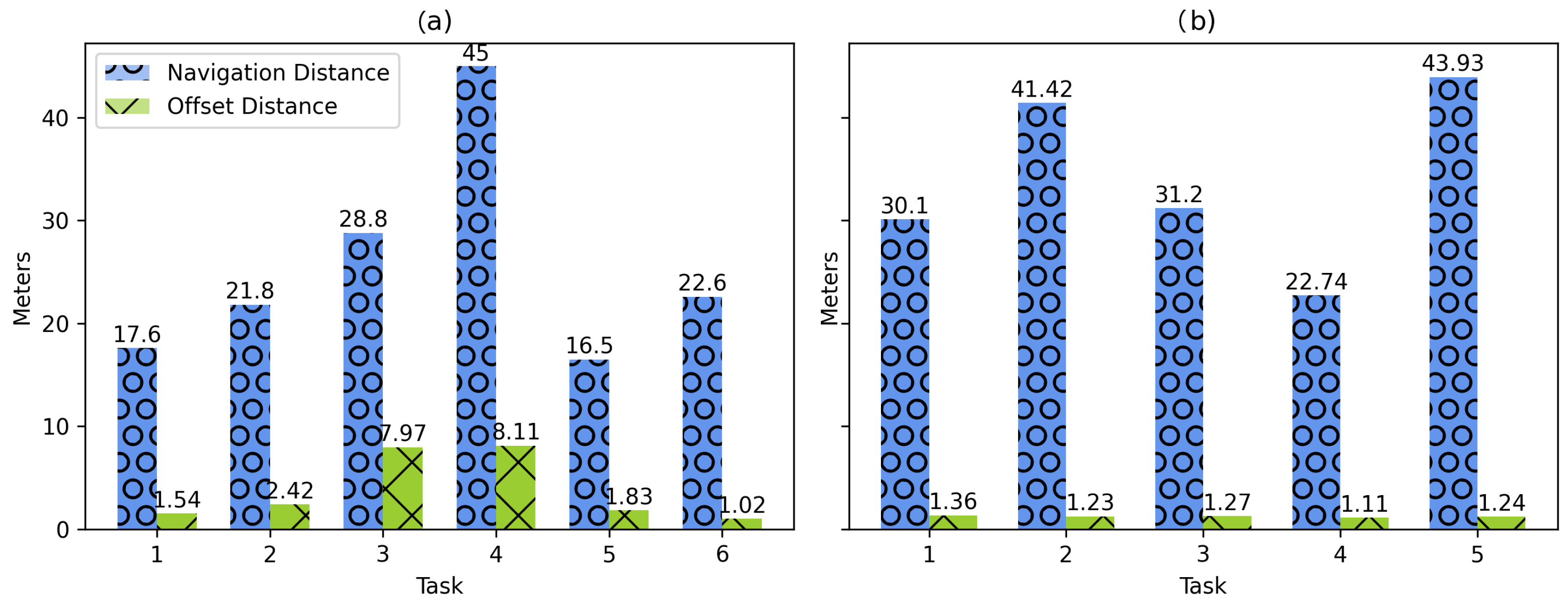

During the experiments, the system’s distance offset was recorded to measure the tracking functionality. According to the results obtained, navigation to targets 3 and 4 in Case 1 presented the largest distance offsets, affecting the visualization of the AR content. Although it was initially assumed that there is a relationship between navigation distance and offset distance, Figure 16 shows that this is not the case: navigation to points of interest over relatively long distances presented offsets similar to those of short-distance routes (as also observed in the results of Case 2). In this sense, illumination, shadows, and differences in texture negatively impact computer vision-aided positioning and tracking systems [48]. Therefore, corridors need a good level of illumination, and users should avoid pointing the mobile device’s camera at walls or objects of monotonous colors, so that enough characteristic features can be detected in the environment. This issue is shown in Figure 17.

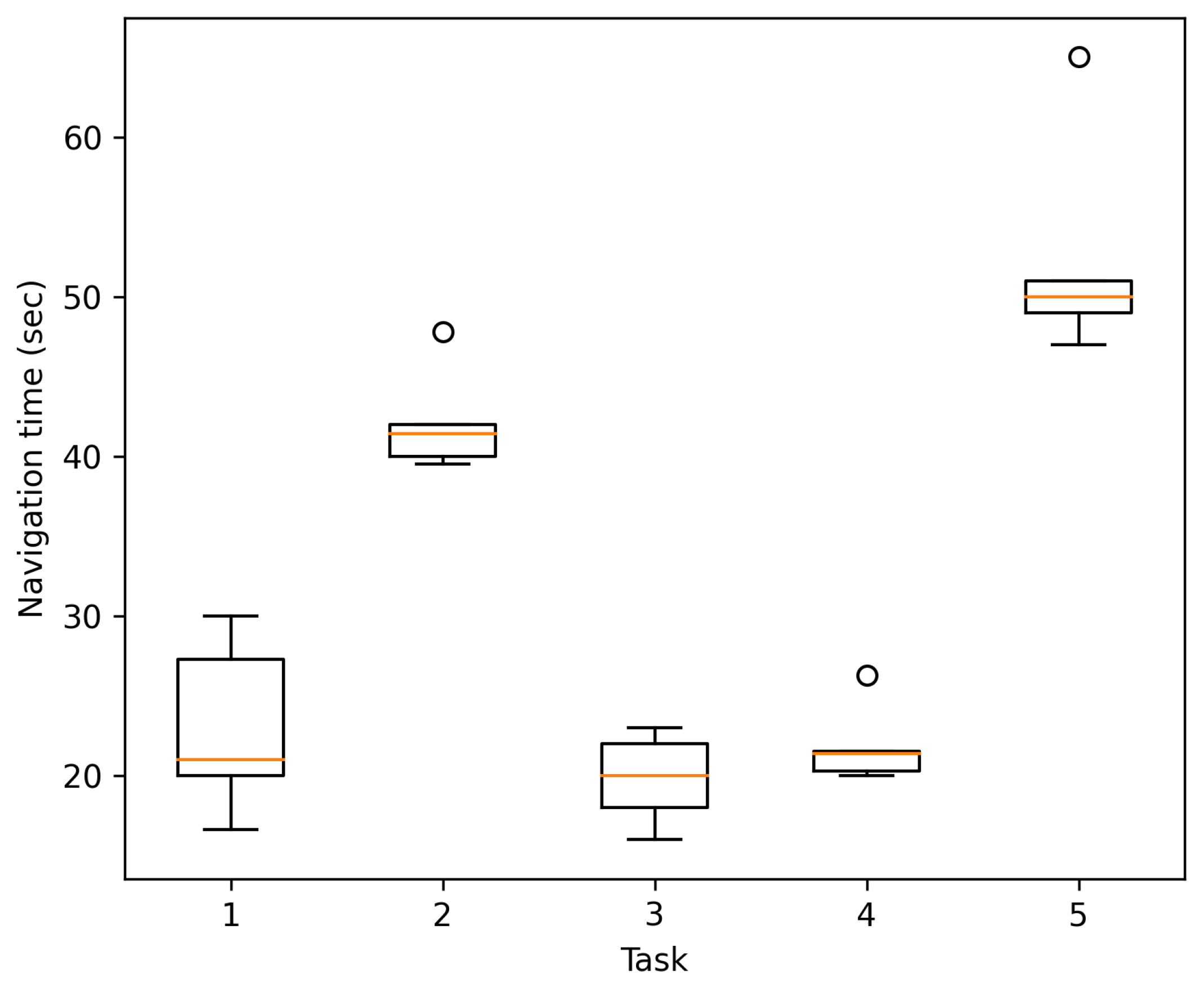

6.4. Navigation Time

For Case 1, navigation times are compared for participants with and without the navigation system across the six navigation tasks. The results showed faster navigation times in four of the six tasks when the system was used. Navigation time is mainly affected by factors such as prior knowledge of the environment or the AR guide display issues encountered during the experiment, as shown in Figure 7 and Figure 8. For example, in task 6, the point of interest has no landmark to help identify the destination, so users without the system had to ask other people for help to find the navigation target, which justifies the development of this research work. In the tasks where the system was slower than navigating without it, the targets were relatively easy to locate (signs indicated their location) or the users knew the location beforehand, allowing them to navigate more confidently and faster.

On the other hand, although Case 2 does not include navigation times for participants not using the system, it was possible to collect the navigation time, offset distances, and positioning scans to test the feasibility of the proposed method. Even though the testing conditions differed between the two cases (e.g., tasks, participants, scenario dimensions, corridor widths), the participants’ speeds had medians of 0.63 m/s and 1.03 m/s for Cases 1 and 2, respectively. These speed results provide an initial idea of the system’s behavior for further, fine-grained evaluations of general indoor navigation systems with varying participant ages, physical limitations, and possible routes, to mention a few.
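Assuming that each participant’s speed is the route length divided by the navigation time (the text does not spell out the formula), median values like those above could be computed as in the following Python sketch. The measurements in it are invented placeholders, not data from the study.

```python
from statistics import median


def walking_speeds(records: list[tuple[float, float]]) -> list[float]:
    """records: (route length in m, navigation time in s) per task.
    Entries with a non-positive time are skipped."""
    return [length / seconds for length, seconds in records if seconds > 0]


# Hypothetical measurements, not the study's data.
case = [(30.0, 50.0), (45.0, 60.0), (20.0, 40.0)]
speeds = walking_speeds(case)
print(f"median speed: {median(speeds):.2f} m/s")
```

The median is less sensitive than the mean to a single task in which a participant stopped for a long time (for example, to re-scan a QR code).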

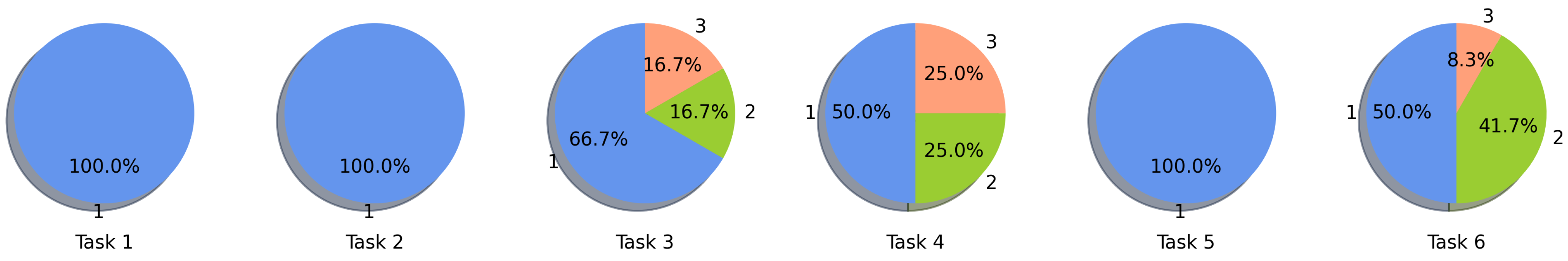

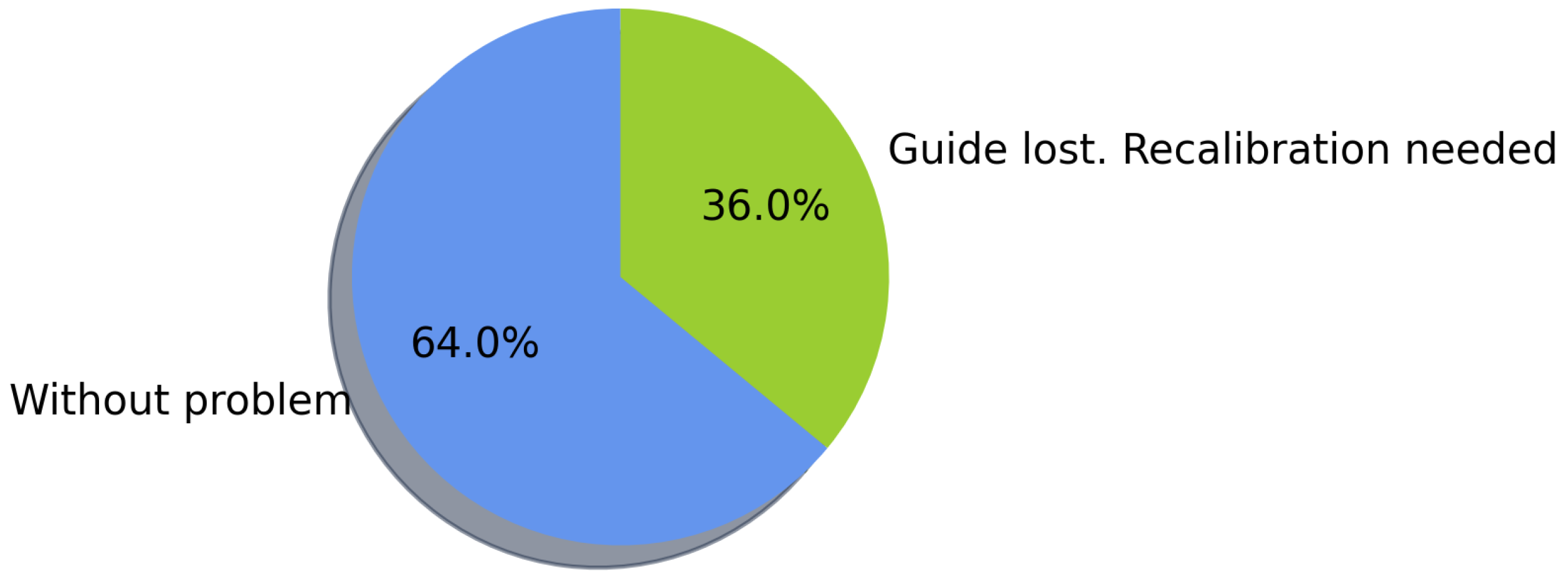

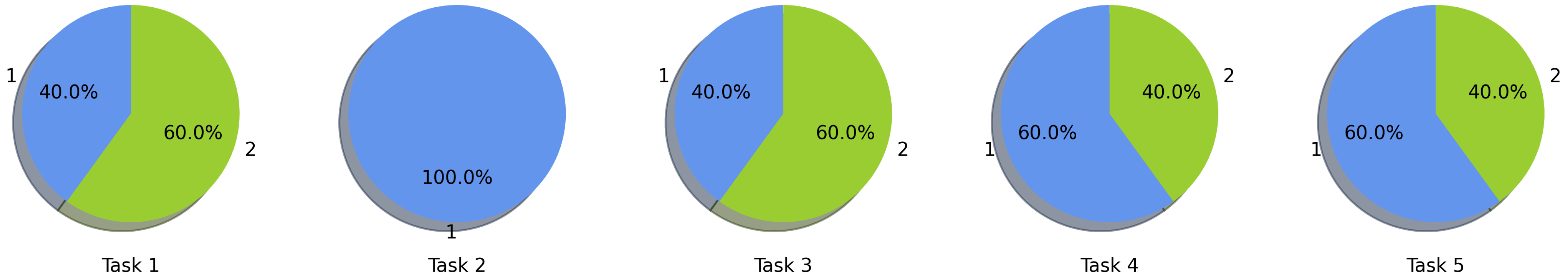

6.5. AR Display Issues

Regarding the AR content presentation, on average, 70.9% of the navigation tasks in both implementation cases presented no visualization problems with the augmented objects used to display the navigation route, so no recalibration was needed to complete the navigation (a QR code scan was only required for the initial positioning). In the tasks with visualization problems, the route displayed on the screen did not match the building’s corridors, confusing the user. These visualization issues were mainly due to position tracking and distance offset, caused by the situations discussed above regarding feature points detected in the environment, and thus required a system calibration. Since a positioning calibration may be required anywhere in the building to restore the correct position, the QR codes were placed at the main locations of the buildings, each covering a radius of 7 m.

6.6. User Feedback

As part of the navigation tests, 27 participants shared their opinions by answering a survey. In general, most users expressed a favorable impression of the system, finding the AR sufficiently interactive for indoor navigation and the information provided by the KB helpful. In Case 1, since the university currently lacks a database from which the contact information of the academic staff can be extracted, users appreciated having such information at hand and even learning about the research and teaching activities the staff perform, which benefits them when seeking an advisor for research projects. Overall, users’ opinions depended on their experience with the application, being more favorable when they encountered only minor AR display difficulties or when locating an unfamiliar point of interest. For example, new users found the system very useful for getting to know the institution and its professors better, especially when looking for someone in particular. On the other hand, more advanced students viewed the availability of the information provided by the system favorably but considered that they could navigate the environment faster without it. Even so, several of these users mentioned that they were unfamiliar with many of the professors and their offices despite having been at the institution for four years.

6.7. Data Model

Whereas existing navigation works use traditional database solutions, a KB supported by an ontology provides semantic and structural advantages for managing data in navigation environments with varying features. For example, teachers and staff play different roles in the academic domain, so modeling their data with a relational database can be complex. It requires join and union operators to check whether components are available (i.e., to handle null values), which complicates query definition [49]. In contrast, the ontology provides properties to define environment features, and contextual information can be queried more directly (as presented in Listing 1). A relational design would instead require multiple tables and foreign keys. Moreover, a KB allows the points of interest to be specialized into classes. This enables entity disambiguation and the definition of hierarchies (e.g., a classroom that is part of a department located on a given floor). For example, if a laboratory is called “Alan Turing” and a professor has the same name, the ontology distinguishes the two entities by their classes. Conversely, the KB can associate different names (synonyms) with the same entity. For example, “Lab-1” is also known as the “Alan Turing” laboratory. Another advantage is that the KB follows platform-independent standards, which facilitates data sharing and consumption [32]. Although inference has not yet been exploited in this work, rules could be defined to take further advantage of the KB in a future version of the system.
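The following minimal Python sketch makes the disambiguation, synonym, and hierarchy arguments above concrete. It stores a toy KB as a set of (subject, predicate, object) triples and answers lookups over it. It is not the paper's ontology or its Listing 1 query. The `ex:` IRIs, the `ex:partOf` property, and the use of `skos:altLabel` for synonyms are assumptions made for illustration. In the deployed system, the same lookups would be expressed as SPARQL graph patterns served by the triple store.

```python
"""Toy in-memory triple set: class-based disambiguation, synonyms, and a
part-of hierarchy. All IRIs and the ex:partOf property are hypothetical."""

from typing import Iterator, Optional

Triple = tuple[str, str, str]

# Hypothetical KB: a laboratory and a professor share the label "Alan Turing".
TRIPLES: set[Triple] = {
    ("ex:lab1", "rdf:type", "ex:Laboratory"),
    ("ex:lab1", "rdfs:label", "Alan Turing"),
    ("ex:lab1", "skos:altLabel", "Lab-1"),       # synonym of the same entity
    ("ex:lab1", "ex:partOf", "ex:csDept"),
    ("ex:csDept", "rdf:type", "ex:Department"),
    ("ex:csDept", "ex:partOf", "ex:floor2"),
    ("ex:floor2", "rdf:type", "ex:Floor"),
    ("ex:prof7", "rdf:type", "ex:Professor"),
    ("ex:prof7", "rdfs:label", "Alan Turing"),
}


def objects(s: str, p: str) -> Iterator[str]:
    """Yield every object o such that (s, p, o) is in the KB."""
    return (o for (s2, p2, o) in TRIPLES if s2 == s and p2 == p)


def find(name: str, cls: Optional[str] = None) -> set[str]:
    """Entities whose preferred or alternative label equals `name`,
    optionally restricted to instances of the class `cls`."""
    hits = {s for (s, p, o) in TRIPLES
            if p in ("rdfs:label", "skos:altLabel") and o == name}
    if cls is not None:
        hits = {s for s in hits if cls in set(objects(s, "rdf:type"))}
    return hits


def containers(entity: str) -> list[str]:
    """Follow ex:partOf transitively (e.g., lab -> department -> floor).
    Assumes each entity has at most one parent and there are no cycles."""
    chain: list[str] = []
    parents = list(objects(entity, "ex:partOf"))
    while parents:
        entity = parents[0]
        chain.append(entity)
        parents = list(objects(entity, "ex:partOf"))
    return chain
```

For instance, `find("Alan Turing")` returns both entities, while `find("Alan Turing", "ex:Laboratory")` returns only `ex:lab1`. `find("Lab-1")` resolves the synonym to the same `ex:lab1`, and `containers("ex:lab1")` yields `['ex:csDept', 'ex:floor2']`.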

6.8. Data Storage and Consumption

Although several RDF data stores could be used to implement the proposed methodology, Virtuoso was initially chosen because it offers a free version and is widely used in projects such as DBpedia [31]. It also fulfills the tasks required to store the KB and serve SPARQL queries. Furthermore, various studies report competitive throughput, query latency, and query response time for Virtuoso compared with other RDF stores [50,51,52]. Nevertheless, an analysis of the response time and response size of the exchanges between the mobile device and the server was conducted to capture the information-consumption performance of the prototype. To this end, the five SPARQL queries mentioned in Section 4.2 were submitted to the Virtuoso server within a local wireless network. Each query was submitted 31 times using random points of interest and persons to obtain an average performance. As a result, the number of returned RDF triples varied across responses. The response times and sizes are shown in Figure 18 on a logarithmic scale. Response times ranged from 18 to 965 ms, with an average of 100 ms. In general, the query that collects the initial data for the system has the highest average response time (144 ms). The size of the responses is strongly linked to the number of triples returned by the server. It varies even among queries of the same type because the points of interest and persons in the KB contain different amounts of information. Response sizes ranged from 0.381 Kb to 14.559 Kb, and the initial data query again produced the largest responses, with an average of 13.35 Kb.
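The measurement procedure described above can be sketched as a small timing harness. This Python sketch assumes that Virtuoso's SPARQL endpoint is reachable over HTTP and that queries are sent with GET, as defined by the SPARQL 1.1 Protocol. The endpoint URL (including port 8890, Virtuoso's usual default), the `poi_query` template, and the helper names are hypothetical; they are not the five queries of Section 4.2. Sizes are measured in bytes of the response body, which depends on the requested result format.

```python
"""Sketch of a response-time/size measurement loop: each query type is sent
`runs` times (31 in the experiment) with a possibly different entity each
time. Endpoint, template, and names are illustrative assumptions."""

import statistics
import time
import urllib.parse
import urllib.request
from typing import Callable

# Hypothetical endpoint on the local network.
ENDPOINT = "http://localhost:8890/sparql"


def poi_query(entity_iri: str) -> str:
    """Hypothetical template: all properties of one entity."""
    return f"SELECT ?p ?o WHERE {{ <{entity_iri}> ?p ?o }}"


def http_sender(query: str) -> bytes:
    """Send one SPARQL query via HTTP GET and return the raw body."""
    url = ENDPOINT + "?" + urllib.parse.urlencode({"query": query})
    req = urllib.request.Request(
        url, headers={"Accept": "application/sparql-results+json"})
    with urllib.request.urlopen(req, timeout=10) as resp:
        return resp.read()


def measure(send: Callable[[str], bytes],
            make_query: Callable[[], str],
            runs: int = 31) -> dict[str, float]:
    """Time `runs` submissions; only the send call is timed."""
    times_ms: list[float] = []
    sizes: list[int] = []
    for _ in range(runs):
        query = make_query()  # e.g., same template, random entity
        start = time.perf_counter()
        body = send(query)
        times_ms.append((time.perf_counter() - start) * 1000.0)
        sizes.append(len(body))
    return {
        "runs": runs,
        "mean_ms": statistics.mean(times_ms),
        "min_ms": min(times_ms),
        "max_ms": max(times_ms),
        "mean_bytes": statistics.mean(sizes),
    }
```

A run against a live server might look like `measure(http_sender, lambda: poi_query(random.choice(poi_iris)))`, where `poi_iris` is a hypothetical list of entity IRIs. Passing a query factory rather than a fixed string lets each of the 31 submissions target a different random entity, as in the experiment. The sender is injectable, so the loop can also be exercised offline with a stub.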

6.9. Limitations

Because AR is used as the positioning method, a device compatible with the AR framework is required [53]. Moreover, because AR tracking relies on visual features, the correct operation of the system depends on the illumination conditions and on the extraction of feature points from the environment. In addition, this technology makes intensive use of the device's processor and camera, which drains the battery quickly [54]. Finally, the KB is handled through a client-server model, so the system requires a connection to the server to obtain the points of interest of the environment and their information.

6.10. Application Usage

An indoor navigation system is advantageous in several situations because it gives users more independence when looking for specific locations in unfamiliar environments. Indoors, several situations can make navigation without a system difficult. For example, there may not be enough signs to guide users through the corridors, the available maps may be outdated, or there may be no one nearby to give clear directions. Although some errors occurred when the virtual navigation guide was displayed, users could recalibrate their position and then reach their destination. Thus, with the implemented system, searching for indoor locations becomes a more independent activity. The system also provides contextual, up-to-date information on the locations of places by updating the nodes and linking them to the KB. In this regard, one of the contributions of the methodology is to provide information on points of interest through a KB that follows Semantic Web standards. Users and applications can therefore consume this information independently of the platform.
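The node-to-KB linking mentioned above can be illustrated with a minimal, hypothetical Python sketch. Each navigation node stores only its position and an optional IRI of a KB entity, and contextual information is resolved through that link when it is displayed. The `NavNode` and `KnowledgeBase` classes and the coordinate convention are assumptions, as is the in-memory dictionary standing in for SPARQL responses. None of this is the authors' implementation.

```python
"""Hypothetical sketch: navigation nodes hold geometry plus an optional KB
link; contextual information is looked up through the link on demand."""

from dataclasses import dataclass
from typing import Optional


@dataclass
class NavNode:
    node_id: str
    position: tuple[float, float, float]  # local AR coordinates (assumed metres)
    kb_iri: Optional[str] = None          # linked KB entity, if any


class KnowledgeBase:
    """Stand-in for the server-side KB; a real client would issue SPARQL."""

    def __init__(self) -> None:
        self._facts: dict[str, dict[str, str]] = {}

    def update(self, iri: str, **props: str) -> None:
        """Add or overwrite properties of an entity."""
        self._facts.setdefault(iri, {}).update(props)

    def lookup(self, iri: str) -> dict[str, str]:
        """Return a copy of the entity's properties (empty if unknown)."""
        return dict(self._facts.get(iri, {}))


def describe(node: NavNode, kb: KnowledgeBase) -> dict[str, str]:
    """Contextual info for a node, read from the KB at display time;
    empty if the node is not linked to any entity."""
    return kb.lookup(node.kb_iri) if node.kb_iri else {}
```

Because `describe` reads from the KB each time, updating a record (e.g., a new office occupant via `kb.update("ex:office12", occupant="...")`) changes what is shown at the linked node. The node's position and the route graph stay untouched.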

7. Conclusions

This paper presented a novel methodology for developing indoor navigation systems that integrates AR and Semantic Web technologies to visualize routes and consume contextual information about the environment. Based on this design, a prototype system was implemented for testing purposes. The system was tested on two case studies in academic environments. For these case studies, an ontology (and a KB) was designed and implemented to support the information of this domain. Moreover, the floor plans, routes, locations, and points of interest were modeled for each case study. The results of the navigation experiments show that the effectiveness of AR (and computer vision) as the positioning method depends heavily on detecting features of the environment and on its illumination conditions. These factors determine how well the user's movements are tracked. The positive feedback from the users who participated in the experiments and the response of the system in the two modeled scenarios demonstrated the feasibility of the proposed methodology. Navigation to points of interest whose location was unknown to the users became easier and faster. As future work, we plan to explore other position-tracking methods (such as WiFi or Bluetooth wireless networks) to reduce localization problems using the currently available infrastructure. In addition, we intend to take further advantage of AR and the Semantic Web to offer a wider variety of ways to present contextual information during navigation without cluttering the user interface. We also intend to apply the approach to environments other than academic institutions.