1. Introduction

In an environment with intense ionizing radiation, energetic particles can easily damage the electronic and optical components of image sensors [1], causing the captured digital images to be highly degraded. These images are essential for subsequent professional analysis and for advanced computer vision tasks, e.g., image classification [2], object detection [3], and semantic segmentation [4]. Although shielding measures such as covering sensors with lead boxes [5] can improve radiation resistance to a certain extent, they sharply increase the volume and workload of the perception machines or raise the costs of alternative sensors. Therefore, it is wise to remove the strong noise from captured radiation scene images quickly on micro-chips while retaining as much texture as possible, i.e., to focus on effective and robust denoising algorithms.

Few researchers have paid attention to denoising algorithms for radiation scene images, whose noise has complex shapes and dense distributions. Wang et al. [6] proposed an improved median filtering method combining adaptive thresholds and wavelet transformations to reduce nuclear radiation noise. Zhang et al. [7] used adaptive segmentation and fast median filtering to remove nuclear radiation noise. Yang et al. [8] combined the frame difference method with interpolation algorithms to restore nuclear radiation images. These real-time denoising methods focus on nuclear radiation noise removal, but they struggle to handle the very strong noise caused by extremely high radiation doses.

Traditionally, a captured degraded digital image Y can be modeled as Y = Y′ + N [9,10]. Assuming that the degradation factor is additive noise N, a clean image Y′ can be restored through Y′ = Y − N. We carefully analyzed nuclear radiation images and found that the noise in the images is also additive. Therefore, in addition to the above existing denoising methods for radiation scenes, general denoising algorithms focused on additive noise removal may also perform well on radiation scene images. The existing general image denoising methods fall into two categories: image prior-based methods [11,12,13,14,15] and discriminative learning methods [16,17,18,19,20,21].

Image prior-based methods use prior information about natural images for noise removal, e.g., local smoothness, non-local self-similarity, and sparsity. The Block Matching and 3D filtering (BM3D) algorithm [11] utilized the non-local self-similarity prior of natural images, and it was one of the state-of-the-art (SOTA) image prior-based methods. In a different way, Dong et al. used the sparsity prior of natural images and proposed the Nonlocally Centralized Sparse Representation (NCSR) algorithm [12] for noise removal. Gu et al. proposed a denoising algorithm named Weighted Nuclear Norm Minimization (WNNM) [15], combining the Non-local Means method (NLM) [13] with Low-rank Representation (LRR) [14]. Though theoretically clear, these SOTA image prior-based methods are time consuming due to their multiple iterations. Moreover, their numerous hyperparameters, e.g., the size of sliding windows, the number of image blocks, and the assumed noise variances, vary from one denoising scene to another. Therefore, general image prior-based methods are not suitable for the denoising task in radiation scenes, which requires high denoising efficiency.

Discriminative learning methods use hard constraints between noised-and-clean image pairs to remove complex noise without specific mathematical definitions. A landmark of discriminative learning methods was Denoising Convolutional Neural Networks (DnCNN) [16], which applied Convolutional Neural Networks (CNN), residual learning, and batch normalization techniques to remove Additive White Gaussian Noise (AWGN) for the first time. On the basis of DnCNN, the Fast and Flexible Denoising Network (FFDNet) [17] adopted the learned noise level map as a part of the network input to improve the denoising effect. After that, the Convolutional Blind Denoising Network (CBDNet) [18] used a 5-layer Fully Convolutional Network (FCN) to adaptively obtain the noise level map, which was hugely different from FFDNet and greatly enhanced the blind denoising ability. Recently, the Batch Renormalization Denoising Network (BRDNet) [19] adopted dilated convolutions and batch renormalization to achieve a balance between training efficiency and model complexity. In addition, the Attention-guided Denoising Network (ADNet) [20] applied an attention block at the end of a lightweight backbone and obtained the best denoising results. Benefiting from convolutional feature extractors, Graphics Processing Unit (GPU) computing, and end-to-end training, the CNN-based denoising methods perform better than traditional image prior-based methods in terms of efficiency and usability, so they are suitable for nuclear radiation scenes with complex noise.

However, the fast CNN-based denoising networks struggle to balance model complexity against the denoising effect. Moreover, these CNN-based methods pay little attention to texture retention, so their results tend to be over-smoothed. To solve the texture problem, Details Retraining CNN (DRCNN) [21] added a texture learning unit on the basis of DnCNN, and its promising qualitative results showed that the retraining strategy was useful. Nevertheless, the network structure and optimization method of DRCNN were so simple that its noise learning ability was limited. In short, there is still a lack of image restoration networks with both high denoising efficiency and good texture retention.

Taking both speed and information retention into account for the strong nuclear radiation noise removal task, we design a lightweight CNN-based denoising network composed of a Noise Learning Unit (NLU) and a Texture Learning Unit (TLU). The NLU adopts the effective non-local idea from traditional image prior-based methods and uses the popular residual learning technique from CNN-based methods in a novel way. To be more specific, the backbone of the NLU bilinearly consists of a Multi-scale Kernel Module (MKM) and a Residual Module (RM), obtaining non-local information and high-level texture information, respectively. Moreover, both the MKM and RM have few channels, and their bottoms have Receptive Field Blocks (RFB) and Attention Blocks (AB) to expand receptive fields and enhance convolutional features. In addition, the TLU, a small sub-network, is placed at the end of the NLU. It uses an independent loss for optimization and learns detailed texture features simply and effectively. The entire network uses the Mish activation function to obtain good nonlinearity. At the same time, asymmetric convolutions are applied throughout the whole network to greatly reduce the number of model parameters. The main contributions of our work are as follows.

We designed an extremely lightweight denoising network that not only effectively and efficiently removes complex and strong nuclear radiation noise, but also carefully retains texture details.

We applied useful tricks from other computer vision tasks, such as multi-scale kernel convolution, receptive field blocks, Mish activation, and asymmetric convolution, to image denoising for the first time. Detailed experiments showed that these techniques benefit image restoration.

The network has good generalization and performs well in other denoising tasks. Compared with the six popular CNN-based denoising methods in removing synthetic Gaussian noises, text noises, and impulse noises, the proposed method still has the highest quantitative metrics.
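As a rough illustration of two of the tricks listed above, the following sketch evaluates the Mish activation, x · tanh(ln(1 + e^x)), and compares the weight count of a square k × k convolution with that of an asymmetric 1 × k plus k × 1 pair. The helper names, the channel width of 32, and the assumption that the asymmetric pair keeps the same channel count between its two layers are illustrative choices, not details taken from the paper.

```python
import math


def mish(x: float) -> float:
    """Mish activation: x * tanh(softplus(x)), softplus(x) = ln(1 + e^x)."""
    # Numerically stable softplus: max(x, 0) + log1p(exp(-|x|)).
    softplus = max(x, 0.0) + math.log1p(math.exp(-abs(x)))
    return x * math.tanh(softplus)


def conv_params(c_in: int, c_out: int, kh: int, kw: int) -> int:
    """Number of weights in one convolution layer (bias ignored)."""
    return c_in * c_out * kh * kw


def asymmetric_pair_params(c_in: int, c_out: int, k: int) -> int:
    """Weights of a 1 x k conv followed by a k x 1 conv.

    Assumption: the intermediate feature map keeps c_out channels.
    """
    return conv_params(c_in, c_out, 1, k) + conv_params(c_out, c_out, k, 1)


channels = 32  # hypothetical channel width, chosen only for the example
square = conv_params(channels, channels, 3, 3)
asymmetric = asymmetric_pair_params(channels, channels, 3)
print("3x3 weights:", square)
print("1x3 + 3x1 weights:", asymmetric)
print("ratio:", asymmetric / square)
print("mish(1.0):", mish(1.0))
```

Under these assumptions the asymmetric pair needs 2k weights per channel pair instead of k², so the saving grows with the kernel size.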

The rest of this paper is organized as follows. Section 2 analyzes the nuclear radiation noise. Section 3 introduces the methodology, including the overall network framework, detailed structures of the sub-networks, and the adopted deep learning tricks. Section 4 introduces the experiments and Section 5 analyzes the results. Section 6 performs detailed discussions and Section 7 draws the conclusion.

2. Analysis of Nuclear Radiation Noises

We analyzed the noise of the nuclear radiation scene images to guide our research method. The studied noised images were captured by special robots in a real nuclear emergency accident. Note that these images are all polluted by nuclear radiation noises, and there is no original clean image without pollution.

The analysis idea comes from studies of real noise removal [9,10,18], in which a clean ground truth image is obtained by averaging noised photographs captured with the same lens. Figure 1 shows the averaged results of multiple frames and the denoised results from the NLM [13] for three challenging nuclear radiation scenes.

It can be seen from Figure 1a that the nuclear radiation noise has irregular shapes and distributions, which is quite challenging. As shown in Figure 1e, the NLM, which works well for AWGN, hardly removes nuclear radiation noise, indicating that the noise should not be simply defined as a kind of Gaussian noise or impulse noise, and that traditional image prior-based methods struggle with this denoising task. It is worth noticing that, in Figure 1b–d, averaging frames does have an obvious denoising effect on the nuclear radiation scene images, and as the number of averaged frames increases, the mean images tend to become cleaner. These intuitive results suggest that the nuclear radiation noise behaves like additive noise, for which the averaging operation greatly reduces the noise variance [9].
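The variance argument can be illustrated with a small simulation. The sketch below assumes zero-mean, independent Gaussian noise as a stand-in for additive noise (real nuclear radiation noise is not Gaussian, as noted above), and the image size, noise level, and function names are hypothetical. For such noise, averaging k frames should shrink the residual variance to roughly σ²/k.

```python
import numpy as np

rng = np.random.default_rng(0)

# Hypothetical clean scene Y' with values in [0, 1].
clean = rng.uniform(0.0, 1.0, size=(48, 64))
sigma = 0.2  # assumed noise standard deviation


def averaged_frame(k: int) -> np.ndarray:
    """Average k noisy frames Y = Y' + N of the same static scene."""
    noise = rng.normal(0.0, sigma, size=(k,) + clean.shape)
    return (clean[None, ...] + noise).mean(axis=0)


def residual_variance(k: int) -> float:
    """Variance of the noise left after averaging k frames."""
    return float(np.var(averaged_frame(k) - clean))


for k in (1, 10, 100):
    # For independent additive noise the expected value is sigma**2 / k.
    print(k, residual_variance(k), sigma ** 2 / k)
```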

In order to find more solid evidence that the nuclear radiation noise is additive, we analyzed the relationship between the averaging number and the quantitative metrics, as shown in Figure 2. The ground truth images were obtained by averaging 150 frames, and the metrics are peak signal-to-noise ratio (PSNR) and structural similarity (SSIM).

It can be seen from Figure 2 that, as the averaging number increases, the PSNR and SSIM metrics between the randomly selected noised frame and the averaged result tend to be higher, and the averaged result becomes closer to the clean ground truth. Meanwhile, it can be seen in Figure 2 that, when the averaging number is 100, the PSNR value reaches 41.08 dB and the SSIM value reaches 0.95. The three cues indicate that the nuclear radiation noise is almost additive, and that the clean ground truth images obtained by averaging 150 frames are reliable. Therefore, we can make a nuclear radiation dataset composed of noise-and-clean image pairs and handle the difficult denoising task with general CNN-based methods.

4. Experiments

In this section, we first introduce the experimental datasets, including our nuclear radiation dataset and two popular public datasets for synthetic noise removal. Then training and testing details of our experiments are listed. Finally, we introduce the evaluation strategies, including objective evaluation and subjective evaluation.

4.1. Nuclear Radiation Dataset

Our method aims at removing strong noise in radiation scenes based on CNNs, so we built a nuclear radiation dataset composed of noise-and-clean image pairs. The original noised images in this dataset, sized 640 × 480, were all captured by the cameras on a special robot in a nuclear emergency accident. There are 37 scenes in the dataset, and each scene has 150 noised images and 1 clean image. Note that each clean image is obtained by averaging the 150 noised images. Finally, 2960 image pairs are used for training, 370 for validation, and 370 for testing, following the commonly used dataset splitting ratio of 8:1:1.
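The 8:1:1 split can be sketched as follows. The text does not say whether the pairs are shuffled randomly or split by scene, so the random shuffle, the seed, and the function name here are assumptions. The total of 3700 pairs is simply the sum of the three stated subset sizes.

```python
import random


def split_pairs(pairs, ratios=(8, 1, 1), seed=0):
    """Shuffle image pairs and split them by the given integer ratios.

    Assumption: a plain random shuffle over all pairs; the actual
    selection procedure is not described in the text.
    """
    items = list(pairs)
    random.Random(seed).shuffle(items)
    total = sum(ratios)
    n_train = len(items) * ratios[0] // total
    n_val = len(items) * ratios[1] // total
    train = items[:n_train]
    val = items[n_train:n_train + n_val]
    test = items[n_train + n_val:]
    return train, val, test


# Integer indices stand in for (noised, clean) image pairs.
train, val, test = split_pairs(range(3700))
print(len(train), len(val), len(test))
```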

Figure 7 shows four challenging scenes of our nuclear radiation dataset. As shown in Figure 7, the residual noise maps in the third row have no obvious regularity in terms of color, shape, and distribution, while the clean images in the second row have much better perceptual quality than the original noised images in the first row, indicating that the nuclear radiation dataset is applicable.

4.2. Public Synthetic Noise Datasets

In order to verify the generalization ability of our network, we carried out experiments on public synthetic noise datasets for three synthetic noise removal tasks, namely Gaussian noise, text noise, and impulse noise.

The training set is the widely used Pristine image dataset [36], which contains 3859 color photographs. The validation set and the testing set are the widely used McMaster [13] dataset and the Kodak [37] dataset, which contain 18 and 24 high quality images, respectively.

The synthetic noises are added online, and they have the same mean value of 0. The noise variances in the specific denoising experiments are 25, 50, 75.
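A minimal sketch of adding zero-mean Gaussian noise online, i.e., drawing a fresh noise realization every time a training sample is requested. It assumes that the listed values 25, 50, and 75 are noise levels on the 0 to 255 intensity scale, as is common in the denoising literature. If they are literally variances, the standard deviation would be their square root. The function name and the decision not to clip the result are also assumptions.

```python
import numpy as np


def add_gaussian_noise(img: np.ndarray, level: float,
                       rng: np.random.Generator) -> np.ndarray:
    """Add zero-mean Gaussian noise to an image normalized to [0, 1].

    `level` is interpreted as a standard deviation on the 0-255 scale
    (an assumption). The output is not clipped.
    """
    noise = rng.normal(0.0, level / 255.0, size=img.shape)
    return (img + noise).astype(np.float32)


rng = np.random.default_rng(0)
clean = np.full((64, 64, 3), 0.5, dtype=np.float32)  # hypothetical patch

# "Online" noise: each call produces a different noisy version.
noisy_a = add_gaussian_noise(clean, 25, rng)
noisy_b = add_gaussian_noise(clean, 25, rng)
print(float((noisy_a - clean).mean()), float((noisy_a - clean).std()))
```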

4.3. Training and Testing Details

We use the same training and testing strategies in all denoising experiments to ensure fairness:

The training sets are all image patches cropped from training image pairs with a window size of 50 × 50 and a stride of 40, while the validation and testing sets are image pairs at their original sizes.
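The patch cropping can be sketched as a sliding window. The function name is hypothetical, and the sketch assumes that windows which would extend past the image border are dropped rather than padded. For a 640 × 480 image this gives 15 × 11 = 165 patches.

```python
import numpy as np


def extract_patches(img: np.ndarray, size: int = 50,
                    stride: int = 40) -> np.ndarray:
    """Crop size x size patches on a regular grid with the given stride.

    Assumption: windows that do not fit entirely inside the image are
    skipped, so a strip at the right and bottom borders may be unused.
    """
    height, width = img.shape[:2]
    patches = [
        img[top:top + size, left:left + size]
        for top in range(0, height - size + 1, stride)
        for left in range(0, width - size + 1, stride)
    ]
    return np.stack(patches)


# Hypothetical 640 x 480 RGB frame (height 480, width 640).
frame = np.zeros((480, 640, 3), dtype=np.uint8)
patches = extract_patches(frame)
print(patches.shape)
```

To keep a noised patch aligned with its clean counterpart, the same grid would be applied to both images of a pair.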

In the training stage, the default batch size is 128, and the image patches X are normalized by X/255 and stored as Float32. In addition, the optimizer is Adam [38] with an initial learning rate of 0.001, and the networks are initialized with the Kaiming method [2]. Validation is performed and recorded at the end of each epoch.

In the testing stage, the batch size is 1, and the inference platform is a TITAN XP. Note that all networks are trained for 50 epochs, and we choose the models with the highest PSNR on the validation set for testing.

4.4. Evaluation Metrics

We adopted three commonly used evaluation metrics for image restoration tasks: peak signal-to-noise ratio (PSNR), structural similarity (SSIM) [39], and mean opinion scores (MOS) [28].

The unit of PSNR is decibels (dB), and its calculation formula is:

$$\mathrm{PSNR}(x, y) = 10 \log_{10} \frac{\mathrm{MAX}^2}{\mathrm{MSE}(x, y)}$$

where x and y are the input images, here the noised image Y and the denoised result Y1, respectively; MAX is the maximum grayscale value of the images; and MSE(x, y) is the mean square error between the two images. Since the input data of the network X has been normalized by X/255, we set MAX = 1. It can be seen from the formula that the larger the PSNR value, the smaller the mean square error (MSE) between the noised image and the denoised result, i.e., the less the distortion of the reconstructed image.
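A minimal NumPy sketch of the PSNR computation for images normalized to [0, 1], using the standard definition 10 · log10(MAX² / MSE). The function name and the handling of identical images (returning infinity) are choices made for this example.

```python
import numpy as np


def psnr(x: np.ndarray, y: np.ndarray, max_val: float = 1.0) -> float:
    """Peak signal-to-noise ratio in dB between two same-sized images."""
    diff = x.astype(np.float64) - y.astype(np.float64)
    mse = float(np.mean(diff ** 2))
    if mse == 0.0:
        # Identical images: PSNR is unbounded.
        return float("inf")
    return float(10.0 * np.log10(max_val ** 2 / mse))


# A constant error of 0.1 gives MSE = 0.01, i.e. 10 * log10(1 / 0.01) dB.
reference = np.zeros((4, 4))
shifted = np.full((4, 4), 0.1)
print(psnr(reference, shifted))
```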

The mean pixel value of an image denotes the estimate of brightness, while the standard deviation denotes the estimate of contrast, and covariance is a measure of structural similarity. Therefore, the SSIM metric combines the information from the three estimators and comprehensively evaluates the effect of image restoration. For the two given images, their structural similarity SSIM is defined as:

$$\mathrm{SSIM}(x, y) = \frac{(2\mu_x \mu_y + c_1)(2\sigma_{xy} + c_2)}{(\mu_x^2 + \mu_y^2 + c_1)(\sigma_x^2 + \sigma_y^2 + c_2)}$$

where x and y are the input images; μx and σx² are the mean and variance of x, respectively; μy and σy² are the mean and variance of y, respectively; σxy is the covariance between x and y; c1 and c2 are two constants that maintain the stability of the calculation, and we set c1 = 0.01² and c2 = 0.03². Unlike PSNR, which is calculated over the entire image, SSIM is calculated on image patches with a sliding window of size m × m (we set m = 11). The ultimate global SSIM value is the mean of the local SSIM values from all patches. SSIM is also a good quantitative metric to represent the quality of image restoration.
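The windowed SSIM computation described above can be sketched with NumPy as follows. The sketch assumes a single-channel image, a uniformly weighted window moved one pixel at a time, and population (biased) variance and covariance. Widely used SSIM implementations often use a Gaussian-weighted window instead, and the text does not specify the weighting, so values may differ slightly from those reported.

```python
import numpy as np
from numpy.lib.stride_tricks import sliding_window_view


def ssim(x: np.ndarray, y: np.ndarray, m: int = 11,
         c1: float = 0.01 ** 2, c2: float = 0.03 ** 2) -> float:
    """Mean of local SSIM values over all m x m windows (2-D inputs)."""
    x = x.astype(np.float64)
    y = y.astype(np.float64)
    wx = sliding_window_view(x, (m, m))  # (H - m + 1, W - m + 1, m, m)
    wy = sliding_window_view(y, (m, m))
    mu_x = wx.mean(axis=(-2, -1))
    mu_y = wy.mean(axis=(-2, -1))
    var_x = wx.var(axis=(-2, -1))
    var_y = wy.var(axis=(-2, -1))
    cov_xy = (wx * wy).mean(axis=(-2, -1)) - mu_x * mu_y
    local = ((2 * mu_x * mu_y + c1) * (2 * cov_xy + c2)) / (
        (mu_x ** 2 + mu_y ** 2 + c1) * (var_x + var_y + c2)
    )
    return float(local.mean())


rng = np.random.default_rng(0)
image = rng.uniform(0.0, 1.0, size=(64, 64))  # hypothetical image in [0, 1]
degraded = np.clip(image + rng.normal(0.0, 0.1, size=image.shape), 0.0, 1.0)
print(ssim(image, image), ssim(image, degraded))
```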

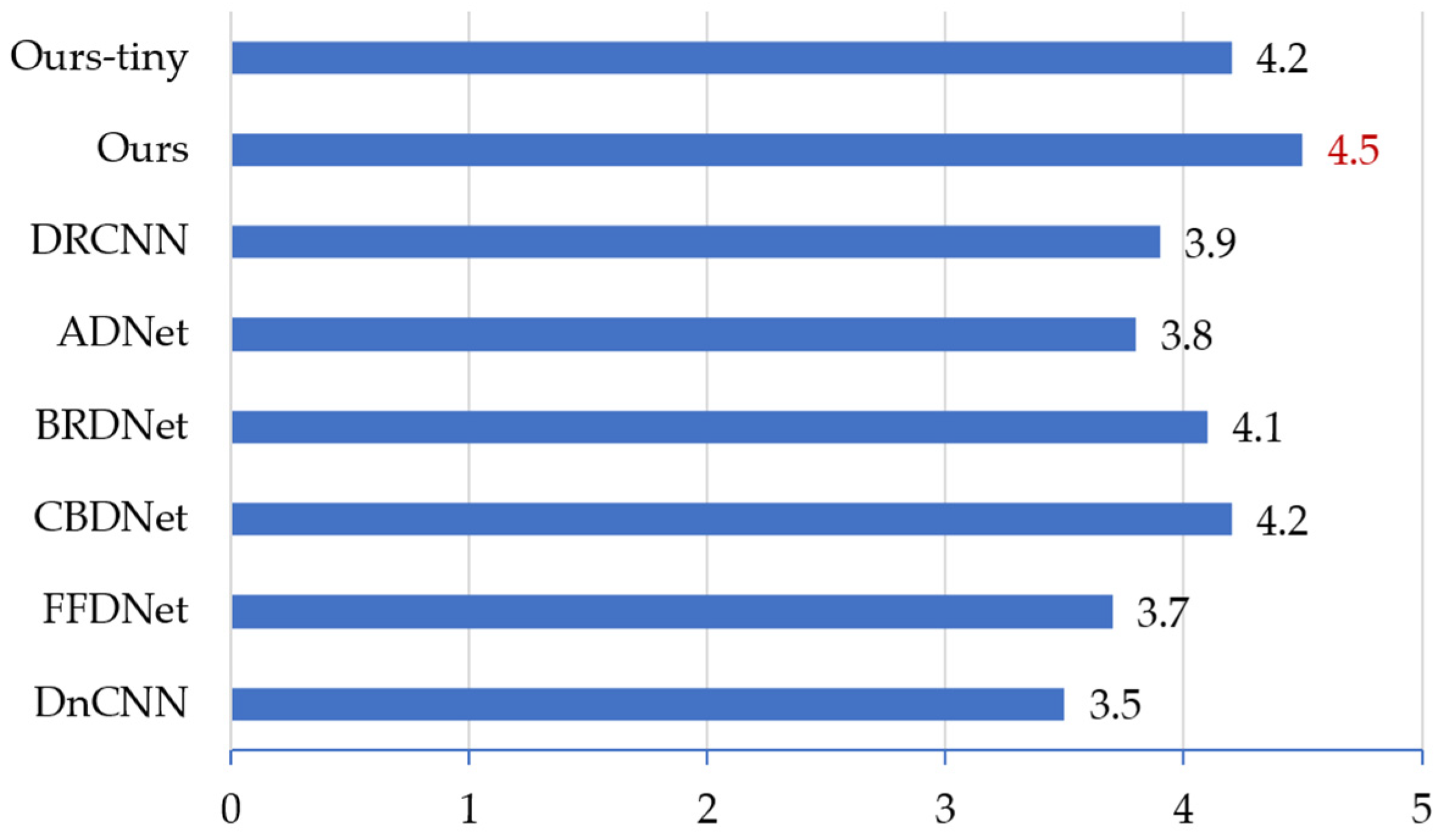

In addition, we used mean opinion scores (MOS) to quantify the perceptual satisfaction of the restored images. Specifically, we asked 20 volunteers of different ages and occupations to score the images on a scale from 0 to 5. The ultimate MOS of an image is the mean of the scores from all the volunteers.

7. Conclusions

In order to remove the complex and strong noise in radiation scene images, we designed a lightweight network composed of a noise learning unit (NLU) and a texture learning unit (TLU). The proposed network applied multi-scale kernel convolution, receptive field blocks, Mish activation, and asymmetric convolution to denoising tasks for the first time, and these tricks provided substantive improvements.

Compared with 12 denoising methods on our nuclear radiation dataset, including 6 traditional image prior-based methods and 6 recent CNN-based methods, the proposed method achieves real-time FPS with the highest PSNR and SSIM metrics.

In addition, compared with the six CNN-based methods, our network had the highest MOS score and the best perceptual effects on our nuclear radiation dataset, and obtained the highest quantitative metrics on the public Kodak dataset for removing synthetic Gaussian noise, text noise, and impulse noise.

Therefore, the strategy of using the complexity of the network structure in exchange for the miniaturization of the model is effective, and the proposed method effectively addresses the problem of strong noise removal in nuclear radiation scenes.