Figure 1.

Sample of a degraded image. (a) presents an original image, (b) demonstrates the AWGN image, and (c) shows the resultant image.
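
The degradation in the caption above can be illustrated with a minimal sketch. It assumes 8-bit intensities on a 0-255 scale, clipping after the noise is added, and a flat toy image; the helper names `add_awgn` and `psnr` are hypothetical and are not taken from the paper.

```python
import math
import random

def add_awgn(pixels, sigma, seed=None):
    """Add zero-mean Gaussian noise (std = sigma, 0-255 scale) and clip to [0, 255]."""
    rng = random.Random(seed)
    return [min(255.0, max(0.0, p + rng.gauss(0.0, sigma))) for p in pixels]

def psnr(reference, test, peak=255.0):
    """Peak signal-to-noise ratio in dB between two equal-length pixel lists."""
    mse = sum((r - t) ** 2 for r, t in zip(reference, test)) / len(reference)
    return math.inf if mse == 0 else 10.0 * math.log10(peak ** 2 / mse)

# Toy data: a flat mid-gray 64x64 image flattened into a list (an assumption).
clean = [128.0] * (64 * 64)
for sigma in (5, 25, 50, 100):
    noisy = add_awgn(clean, sigma, seed=0)
    print(f"sigma={sigma:3d}  PSNR={psnr(clean, noisy):.2f} dB")
```

The printed PSNR falls as sigma grows. The absolute values depend on the image content and the intensity scaling, so they are not expected to reproduce the figures reported in the tables.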

Figure 2.

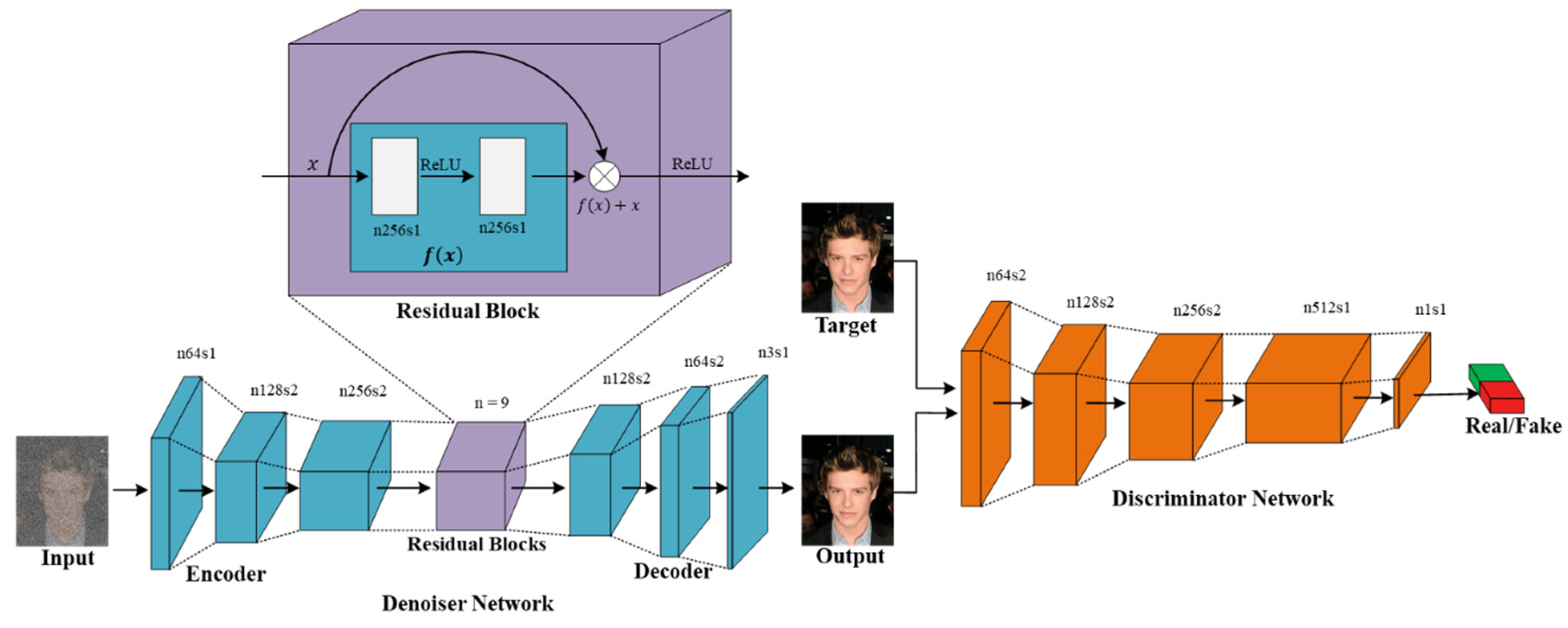

AGDN framework. AGDN consists of a denoiser and a discriminator. The denoiser aims to construct noise-free images from the given noisy images. It uses an encoder-decoder configuration with three convolution layers (one of stride 1 followed by two down-sampling layers of stride 2), nine residual blocks, two up-sampling transposed convolution layers of stride 2, and one convolutional layer of stride 1. The discriminator consists of convolution, batch-normalization, and leaky ReLU layers, and its output is used to differentiate the constructed images from the real images.

Figure 3.

Noise-free images constructed from the corresponding noisy images using different loss functions. The first, second, and third rows show results for noise levels of sigma 25, 50, and 100, respectively. (a) Input noisy image, (b) result of L2 alone, (c) result of L2 and adversarial loss, (d) result of L1 alone, (e) result of the proposed AGDN loss function, and (f) target image.

Figure 4.

Different approaches for image denoising tasks.

Figure 5.

The different network structures for the image generation network. The left one is the encoder-decoder structure, where first the image is encoded to some latent space, and then decoded for target image reconstruction. The right one is the U-NET structure, where the encoder and decoder are connected with skip-connections.

Figure 6.

Sample results of the image denoising task using different methods and network structures. The first row shows the input images at different noise levels. The second row shows the results produced by the RLID method using the U-NET structure. The third row presents the U-NET structure results via the DID method. The fourth row shows the encoder-decoder structure results using the RLID method. The fifth row presents the encoder-decoder structure results via the DID method. The sixth row shows the results of the proposed AGDN.

Figure 7.

First sample results of image denoising on the Partial-CelebA dataset. The columns, from first to last, correspond to noise levels of sigma 5, 25, 50, and 100, respectively. The first row shows the input images; the second to last rows present the results of Dn-CNN, ID-MSE-WGAN, FFDNet, the perceptually inspired method, and the proposed AGDN, respectively.

Figure 8.

Second sample results of image denoising on the Partial-CelebA dataset. The columns, from first to last, correspond to noise levels of sigma 5, 25, 50, and 100, respectively. The first row shows the input images; the second to last rows present the results of Dn-CNN, ID-MSE-WGAN, FFDNet, the perceptually inspired method, and the proposed AGDN, respectively.

Figure 9.

Third sample results of image denoising on the Partial-CelebA dataset. The columns, from first to last, correspond to noise levels of sigma 5, 25, 50, and 100, respectively. The first row shows the input images; the second to last rows present the results of Dn-CNN, ID-MSE-WGAN, FFDNet, the perceptually inspired method, and the proposed AGDN, respectively.

Figure 10.

Fourth sample results of image denoising on the Partial-CelebA dataset. The columns, from first to last, correspond to noise levels of sigma 5, 25, 50, and 100, respectively. The first row shows the input images; the second to last rows present the results of Dn-CNN, ID-MSE-WGAN, FFDNet, the perceptually inspired method, and the proposed AGDN, respectively.

Figure 11.

First example results of image denoising on the DIV2K dataset. The columns, from first to last, correspond to noise levels of sigma 5, 25, 50, and 100, respectively. The first row shows the input images; the second to last rows present the results of Dn-CNN, ID-MSE-WGAN, FFDNet, the perceptually inspired method, and the proposed AGDN, respectively.

Figure 12.

Second example results of image denoising on the DIV2K dataset. The columns, from first to last, correspond to noise levels of sigma 5, 25, 50, and 100, respectively. The first row shows the input images; the second to last rows present the results of Dn-CNN, ID-MSE-WGAN, FFDNet, the perceptually inspired method, and the proposed AGDN, respectively.

Figure 13.

Third example results of image denoising on the DIV2K dataset. The columns, from first to last, correspond to noise levels of sigma 5, 25, 50, and 100, respectively. The first row shows the input images; the second to last rows present the results of Dn-CNN, ID-MSE-WGAN, FFDNet, the perceptually inspired method, and the proposed AGDN, respectively.

Figure 14.

Fourth example results of image denoising on the DIV2K dataset. The columns, from first to last, correspond to noise levels of sigma 5, 25, 50, and 100, respectively. The first row shows the input images; the second to last rows present the results of Dn-CNN, ID-MSE-WGAN, FFDNet, the perceptually inspired method, and the proposed AGDN, respectively.

Table 1.

Comparison of the proposed and state-of-the-art methods.

| Methods | | Advantages | Disadvantages |
|---|---|---|---|
| Model-Based Methods | Priors of Sparsity [15,16], Non-local Self-Similarity (NSS) [17,18,19,20] | | |
| Discriminative Learning-based Methods | NOISE2NOISE [21], NOISE2VOID [22] | | |
| | Dn-CNN [23], ID-MSE-WGAN [45] | | |
| | FFDNet [24] | | |
| | The perceptually inspired denoising method [46] | | |
| | The proposed AGDN | Fast inference time; constructs sharp, pleasing, and target-oriented images for all levels of noise; uses residual blocks for a deeper network | |

Table 2.

Denoiser network of AGDN.

| | Padding | Kernel Size | Operation | Feature Maps | Stride | Non-Linearity |
|---|---|---|---|---|---|---|
| Encoder | 3 | 7 | Convolution | 64 | 1 | ReLU |
| | 1 | 3 | Convolution | 128 | 2 | ReLU |
| | 1 | 3 | Convolution | 256 | 2 | ReLU |
| Residual Blocks | 1 | 3 | Residual block | 256 | 1 | ReLU |
| | 1 | 3 | Residual block | 256 | 1 | ReLU |
| | 1 | 3 | Residual block | 256 | 1 | ReLU |
| | 1 | 3 | Residual block | 256 | 1 | ReLU |
| | 1 | 3 | Residual block | 256 | 1 | ReLU |
| | 1 | 3 | Residual block | 256 | 1 | ReLU |
| | 1 | 3 | Residual block | 256 | 1 | ReLU |
| | 1 | 3 | Residual block | 256 | 1 | ReLU |
| | 1 | 3 | Residual block | 256 | 1 | ReLU |
| Decoder | 1 | 3 | Deconvolution | 128 | 2 | ReLU |
| | 1 | 3 | Deconvolution | 256 | 2 | ReLU |
| | 3 | 7 | Convolution | 256 | 1 | Tanh |
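
To make the padding, kernel, and stride columns of the denoiser table concrete, the following sketch traces the spatial size of a feature map through the encoder and decoder rows using the standard convolution and transposed-convolution size formulas. The 256-pixel input size and the `output_padding` value are assumptions (neither appears in the table), and the function names are hypothetical.

```python
def conv_out(size, kernel, stride, padding):
    """Output size of a convolution along one spatial dimension."""
    return (size + 2 * padding - kernel) // stride + 1

def deconv_out(size, kernel, stride, padding, output_padding=0):
    """Output size of a transposed convolution along one spatial dimension."""
    return (size - 1) * stride - 2 * padding + kernel + output_padding

# (operation, kernel, stride, padding), one tuple per encoder/decoder row.
ENCODER = [("conv", 7, 1, 3), ("conv", 3, 2, 1), ("conv", 3, 2, 1)]
DECODER = [("deconv", 3, 2, 1), ("deconv", 3, 2, 1), ("conv", 7, 1, 3)]

def trace(size, output_padding=1):
    """Return the spatial size after each encoder and decoder layer.

    The nine residual blocks (kernel 3, stride 1, padding 1) keep the size
    unchanged, so they are omitted from the trace.
    """
    sizes = [size]
    for op, k, s, p in ENCODER + DECODER:
        if op == "conv":
            size = conv_out(size, k, s, p)
        else:
            size = deconv_out(size, k, s, p, output_padding)
        sizes.append(size)
    return sizes

print(trace(256))                    # [256, 256, 128, 64, 128, 256, 256]
print(trace(256, output_padding=0))  # [256, 256, 128, 64, 127, 253, 253]
```

Under these assumptions the two stride-2 convolutions reduce the resolution by a factor of four, and the two stride-2 transposed convolutions restore it only when an output padding of 1 is used; with zero output padding the reconstructed image would be slightly smaller than the input.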

Table 3.

Residual block network.

| Padding | Kernel Size | Operation | Feature Maps | Stride | Non-Linearity | Dropout |
|---|---|---|---|---|---|---|
| 1 | 3 | Convolution | 256 | 1 | ReLU | 0.5 |
| 1 | 3 | Convolution | 256 | 1 | - | - |

Table 4.

Discriminator network.

| Padding | Kernel Size | Operation | Feature Maps | Stride | Non-Linearity |
|---|---|---|---|---|---|
| 1 | 4 | Convolution | 64 | 2 | LeakyReLU |
| 1 | 4 | Convolution | 128 | 2 | LeakyReLU |
| 1 | 4 | Convolution | 256 | 2 | LeakyReLU |
| 1 | 4 | Convolution | 512 | 1 | LeakyReLU |
| 1 | 4 | Convolution | 1 | 1 | Sigmoid |
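
The layer settings in the discriminator table determine both the size of its output map and how large an input region each output value sees. The sketch below computes both from the kernel, stride, and padding columns alone; the 256-pixel input size is an assumption, and the function names are hypothetical.

```python
# (kernel, stride, padding), one tuple per row of the discriminator table.
DISCRIMINATOR = [(4, 2, 1), (4, 2, 1), (4, 2, 1), (4, 1, 1), (4, 1, 1)]

def output_size(size, layers):
    """Spatial size of the final feature map for a square input of `size` pixels."""
    for kernel, stride, padding in layers:
        size = (size + 2 * padding - kernel) // stride + 1
    return size

def receptive_field(layers):
    """Side length of the input patch that influences one output value."""
    rf = 1
    # Walk from the last layer back to the input, growing the patch at each step.
    for kernel, stride, _ in reversed(layers):
        rf = (rf - 1) * stride + kernel
    return rf

print(output_size(256, DISCRIMINATOR))  # 30
print(receptive_field(DISCRIMINATOR))   # 70
```

With these settings a 256 x 256 input would yield a 30 x 30 map of sigmoid outputs, each covering a 70 x 70 input patch, so the discriminator scores local patches rather than producing a single value per image.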

Table 5.

Quantitative results for the noise level of sigma 25 compared with different loss functions. Bold results show good scores.

| | PSNR (dB) | SSIM | UQI | VIF | FID |
|---|---|---|---|---|---|
| Input | 17.48 | 0.5725 | 0.8173 | 0.2384 | 106.4 |
| L2 | 27.15 | 0.9142 | 0.9457 | 0.4765 | 44.99 |
| L2 + Adv_loss | 26.22 | 0.9028 | 0.9381 | 0.4546 | 48.07 |
| L1 | 25.62 | 0.8924 | 0.9371 | 0.4355 | 51.49 |
| Proposed | 27.41 | 0.9184 | 0.9483 | 0.4879 | 42.73 |

Table 6.

Quantitative results for the noise level of sigma 50 compared with different loss functions. Bold results show good scores.

| | PSNR (dB) | SSIM | UQI | VIF | FID |
|---|---|---|---|---|---|
| Input | 14.66 | 0.5570 | 0.7618 | 0.2089 | 131.0 |
| L2 | 26.48 | 0.9130 | 0.9432 | 0.4835 | 43.41 |
| L2 + Adv_loss | 26.35 | 0.9082 | 0.9427 | 0.4801 | 45.31 |
| L1 | 25.41 | 0.8858 | 0.9180 | 0.4257 | 53.64 |
| Proposed | 26.54 | 0.9148 | 0.9437 | 0.4850 | 42.24 |

Table 7.

Quantitative results for the noise level of sigma 100 compared with different loss functions. Bold results show good scores.

| | PSNR (dB) | SSIM | UQI | VIF | FID |
|---|---|---|---|---|---|
| Input | 12.74 | 0.3792 | 0.7204 | 0.1104 | 252.9 |
| L2 | 24.39 | 0.8503 | 0.9243 | 0.4259 | 80.11 |
| L2 + Adv_loss | 24.18 | 0.8442 | 0.9257 | 0.4169 | 84.82 |
| L1 | 24.83 | 0.8840 | 0.9261 | 0.4421 | 59.53 |
| Proposed | 24.90 | 0.8850 | 0.9251 | 0.4434 | 59.33 |

Table 8.

Quantitative results of different methods with primary two configurations of generating the model on several noise levels. Bold results show good scores.

| Method/Noise Level | σ = 5 | σ = 25 | σ = 50 | σ = 100 | Average |
|---|---|---|---|---|---|
| PSNR (dB) | | | | | |
| U-NET-RLID | 31.65 | 26.92 | 25.54 | 21.49 | 26.40 |
| U-NET-DID | 31.28 | 27.23 | 26.06 | 22.67 | 26.81 |
| Enc-Dec-RLID | 31.67 | 27.45 | 26.85 | 24.79 | 27.69 |
| Enc-Dec-DID | 28.75 | 25.62 | 25.40 | 24.83 | 26.15 |
| Proposed AGDN | 28.85 | 27.41 | 26.54 | 24.90 | 26.92 |
| SSIM | | | | | |
| U-NET-RLID | 0.9638 | 0.9097 | 0.8199 | 0.6502 | 0.8359 |
| U-NET-DID | 0.9605 | 0.9076 | 0.8734 | 0.7361 | 0.8694 |
| Enc-Dec-RLID | 0.9604 | 0.9014 | 0.9165 | 0.8482 | 0.9066 |
| Enc-Dec-DID | 0.9571 | 0.8924 | 0.8857 | 0.8840 | 0.9048 |
| Proposed AGDN | 0.9570 | 0.9184 | 0.9148 | 0.8850 | 0.9188 |
| UQI | | | | | |
| U-NET-RLID | 0.9650 | 0.9418 | 0.9306 | 0.8967 | 0.9335 |
| U-NET-DID | 0.9633 | 0.9459 | 0.9359 | 0.9122 | 0.9393 |
| Enc-Dec-RLID | 0.9675 | 0.9488 | 0.9366 | 0.9266 | 0.9449 |
| Enc-Dec-DID | 0.9557 | 0.9371 | 0.9179 | 0.9261 | 0.9342 |
| Proposed AGDN | 0.9565 | 0.9483 | 0.9437 | 0.9251 | 0.9434 |
| VIF | | | | | |
| U-NET-RLID | 0.6275 | 0.4750 | 0.3857 | 0.2560 | 0.4360 |
| U-NET-DID | 0.6186 | 0.4674 | 0.4183 | 0.2997 | 0.4510 |
| Enc-Dec-RLID | 0.6273 | 0.4735 | 0.4906 | 0.4233 | 0.5037 |
| Enc-Dec-DID | 0.6055 | 0.4355 | 0.4256 | 0.4421 | 0.4772 |
| Proposed AGDN | 0.6062 | 0.4879 | 0.4850 | 0.4434 | 0.5056 |
| FID | | | | | |
| U-NET-RLID | 20.55 | 45.34 | 79.58 | 133.8 | 69.82 |
| U-NET-DID | 20.86 | 44.65 | 61.60 | 107.5 | 58.65 |
| Enc-Dec-RLID | 19.77 | 46.63 | 42.26 | 88.91 | 49.39 |
| Enc-Dec-DID | 23.40 | 51.49 | 53.64 | 59.53 | 47.01 |
| Proposed AGDN | 23.79 | 42.73 | 44.24 | 59.33 | 42.52 |
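
The Average column appears to be the arithmetic mean of the four per-noise-level scores. A short check on the Proposed AGDN rows is sketched below, assuming the four score columns correspond to noise levels of sigma 5, 25, 50, and 100 as in the figure captions; the tolerance and the helper name `matches_reported` are illustrative choices, not part of the paper.

```python
# Four per-noise-level scores, the reported average, and the size of the last
# printed digit, copied from the "Proposed AGDN" rows of the table.
AGDN_ROWS = {
    "PSNR": ([28.85, 27.41, 26.54, 24.90], 26.92, 0.01),
    "SSIM": ([0.9570, 0.9184, 0.9148, 0.8850], 0.9188, 0.0001),
    "UQI": ([0.9565, 0.9483, 0.9437, 0.9251], 0.9434, 0.0001),
    "VIF": ([0.6062, 0.4879, 0.4850, 0.4434], 0.5056, 0.0001),
    "FID": ([23.79, 42.73, 44.24, 59.33], 42.52, 0.01),
}

def matches_reported(scores, reported, unit):
    """True if the mean agrees with the reported value to roughly half a unit
    of the last printed digit (0.6 leaves slack for floating-point error)."""
    return abs(sum(scores) / len(scores) - reported) <= 0.6 * unit

for metric, (scores, reported, unit) in AGDN_ROWS.items():
    mean = sum(scores) / len(scores)
    ok = matches_reported(scores, reported, unit)
    print(f"{metric}: mean={mean:.4f} reported={reported} consistent={ok}")
```

Higher is better for PSNR, SSIM, UQI, and VIF, whereas lower is better for FID, so the averages should be compared across methods with the direction of each metric in mind.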

Table 9.

Quantitative results of baseline methods with the proposed method on several noise levels. Bold results show good scores.

| Method/Noise Level | σ = 5 | σ = 25 | σ = 50 | σ = 100 | Average |
|---|---|---|---|---|---|
| PSNR (dB) | | | | | |
| Noisy Input | 28.64 | 17.48 | 14.66 | 12.74 | 18.38 |
| Dn-CNN | 31.23 | 25.78 | 24.77 | 20.26 | 25.51 |
| ID-MSE-WGAN | 31.30 | 26.12 | 25.15 | 24.23 | 26.70 |
| FFDNet | 28.66 | 26.95 | 26.30 | 24.39 | 26.58 |
| Perceptually Inspired | 31.28 | 26.63 | 25.86 | 22.37 | 26.53 |
| Proposed AGDN | 28.85 | 27.41 | 26.54 | 24.90 | 26.92 |
| SSIM | | | | | |
| Noisy Input | 0.9653 | 0.5725 | 0.5570 | 0.3792 | 0.6185 |
| Dn-CNN | 0.9594 | 0.8554 | 0.7805 | 0.5862 | 0.7953 |
| ID-MSE-WGAN | 0.9657 | 0.9020 | 0.8890 | 0.8492 | 0.9014 |
| FFDNet | 0.9583 | 0.9130 | 0.9120 | 0.8503 | 0.9084 |
| Perceptually Inspired | 0.9605 | 0.9076 | 0.8734 | 0.7361 | 0.8694 |
| Proposed AGDN | 0.9570 | 0.9184 | 0.9148 | 0.8850 | 0.9188 |
| UQI | | | | | |
| Noisy Input | 0.9265 | 0.8173 | 0.7618 | 0.7204 | 0.8065 |
| Dn-CNN | 0.9672 | 0.9390 | 0.9265 | 0.8804 | 0.9282 |
| ID-MSE-WGAN | 0.9677 | 0.9358 | 0.9261 | 0.9276 | 0.9393 |
| FFDNet | 0.9555 | 0.9480 | 0.9429 | 0.9243 | 0.9426 |
| Perceptually Inspired | 0.9633 | 0.9459 | 0.9359 | 0.9122 | 0.9393 |
| Proposed AGDN | 0.9565 | 0.9483 | 0.9437 | 0.9251 | 0.9434 |
| VIF | | | | | |
| Noisy Input | 0.7016 | 0.2384 | 0.2089 | 0.1104 | 0.3148 |
| Dn-CNN | 0.6221 | 0.4258 | 0.3546 | 0.2232 | 0.4064 |
| ID-MSE-WGAN | 0.6275 | 0.4605 | 0.4801 | 0.4243 | 0.4981 |
| FFDNet | 0.6123 | 0.4821 | 0.4972 | 0.4249 | 0.5041 |
| Perceptually Inspired | 0.6186 | 0.4674 | 0.4183 | 0.2997 | 0.4510 |
| Proposed AGDN | 0.6062 | 0.4879 | 0.4850 | 0.4434 | 0.5056 |
| FID | | | | | |
| Noisy Input | 19.78 | 106.43 | 131.0 | 252.9 | 127.5 |
| Dn-CNN | 20.46 | 88.12 | 124.2 | 228.5 | 115.3 |
| ID-MSE-WGAN | 19.70 | 47.93 | 45.62 | 87.61 | 50.21 |
| FFDNet | 22.03 | 42.99 | 44.40 | 80.11 | 47.38 |
| Perceptually Inspired | 20.86 | 44.65 | 61.60 | 107.5 | 58.65 |
| Proposed AGDN | 23.79 | 42.73 | 44.24 | 59.33 | 42.52 |

Table 10.

Quantitative results of baseline methods with the proposed method on the DIV2K dataset of multiple noise levels. Bold results show good scores.

| Method/Noise Level | σ = 5 | σ = 25 | σ = 50 | σ = 100 | Average |
|---|---|---|---|---|---|
| PSNR (dB) | | | | | |
| Noisy Input | 29.57 | 18.09 | 15.00 | 13.14 | 18.95 |
| Dn-CNN | 33.32 | 25.61 | 22.92 | 20.66 | 25.62 |
| ID-MSE-WGAN | 32.50 | 25.27 | 22.71 | 20.43 | 25.23 |
| FFDNet | 25.90 | 24.39 | 21.97 | 19.15 | 22.85 |
| Perceptually Inspired | 32.54 | 26.27 | 23.41 | 21.15 | 25.84 |
| Proposed AGDN | 32.88 | 26.01 | 23.48 | 21.52 | 25.97 |
| SSIM | | | | | |
| Noisy Input | 0.9521 | 0.7248 | 0.5399 | 0.3612 | 0.6445 |
| Dn-CNN | 0.9719 | 0.8599 | 0.7683 | 0.6217 | 0.8055 |
| ID-MSE-WGAN | 0.9670 | 0.8466 | 0.7573 | 0.6347 | 0.8014 |
| FFDNet | 0.9294 | 0.8618 | 0.7786 | 0.6637 | 0.8084 |
| Perceptually Inspired | 0.9643 | 0.8653 | 0.7868 | 0.6725 | 0.8222 |
| Proposed AGDN | 0.9673 | 0.8709 | 0.7897 | 0.6815 | 0.8274 |
| UQI | | | | | |
| Noisy Input | 0.9508 | 0.8382 | 0.7825 | 0.7440 | 0.8289 |
| Dn-CNN | 0.9740 | 0.9493 | 0.9312 | 0.9008 | 0.9388 |
| ID-MSE-WGAN | 0.9707 | 0.9340 | 0.9164 | 0.8973 | 0.9296 |
| FFDNet | 0.9362 | 0.9271 | 0.9046 | 0.8715 | 0.9099 |
| Perceptually Inspired | 0.9686 | 0.9414 | 0.9295 | 0.9112 | 0.9377 |
| Proposed AGDN | 0.9715 | 0.9472 | 0.9285 | 0.9135 | 0.9402 |
| VIF | | | | | |
| Noisy Input | 0.7931 | 0.4121 | 0.2500 | 0.1272 | 0.3956 |
| Dn-CNN | 0.7851 | 0.4638 | 0.3390 | 0.2219 | 0.4525 |
| ID-MSE-WGAN | 0.7889 | 0.4655 | 0.3361 | 0.2263 | 0.4542 |
| FFDNet | 0.6253 | 0.4615 | 0.3458 | 0.2491 | 0.4204 |
| Perceptually Inspired | 0.7598 | 0.4787 | 0.3516 | 0.2408 | 0.4577 |
| Proposed AGDN | 0.7890 | 0.4696 | 0.3479 | 0.2524 | 0.4647 |
| FID | | | | | |
| Noisy Input | 11.33 | 87.80 | 157.0 | 225.1 | 120.3 |
| Dn-CNN | 11.63 | 70.64 | 120.4 | 179.1 | 95.44 |
| ID-MSE-WGAN | 11.21 | 67.80 | 118.9 | 174.3 | 93.05 |
| FFDNet | 24.52 | 69.06 | 108.0 | 152.5 | 88.52 |
| Perceptually Inspired | 12.90 | 68.73 | 112.8 | 158.2 | 88.16 |
| Proposed AGDN | 15.11 | 68.29 | 107.1 | 150.7 | 85.30 |