1. Introduction

Semantic segmentation is a basic computer vision topic, wherein an explicit category label is assigned to each pixel of an input image, which can be utilized in many applications, such as automotive driving, medical imaging, and video surveillance [

1,

2,

3,

4]. In vehicle-mounted scenes, objects usually appear at multiple scales, because there are various objects, and varying locations or distances of these objects; this is one of the great challenges in semantic segmentation. In general, convolutional neural networks (CNNs) are inherently limited by the design of the neurons at each layer, where the receptive field is restricted to constant regions, and the representation ability of multi-scale features is limited. Extensive efforts [

5,

6,

7,

8] have been made to acquire multi-scale feature representation. The pioneering work of multi-scale feature representation involves constructing an image pyramid (

Figure 1a), where small object details and long-range context can be obtained from large-scale and small-scale inputs, respectively [

7,

9,

10]. However, this process takes a lot of time. In order to mitigate this problem, other researchers extract features hierarchically by an encoder–decoder structure (

Figure 1b) [

11,

12,

13]. Because there are different neuron receptive fields in different layers, the features extracted from various layers of an encoder implicitly contain different scale information. However, each layer of an encoder transmits the same feature maps to the corresponding layer of a decoder, which results in double calculation. A more efficient method builds a spatial pyramid feature extraction module (

Figure 1c), such as a pyramid pooling module [

8] or atrous spatial pyramid pooling (ASPP) module [

5]. Leveraging one of them enables the output features of a large-scale backbone network to be passed to different receptive field branches and transformed into multi-scale features. Furthermore, an enhanced feature representation is obtained by merging all branch features. Nevertheless, these approaches usually apply complicated backbone networks (such as VGG [

14] or ResNet-101 [

15]). Therefore, their computational overhead is huge.

A semantic segmentation network used in a vehicle-mounted scene not only needs enough accuracy, but it must be able to operate in real time. In the past decade, many model compression methods have been proposed, such as network pruning [

16], singular value decomposition (SVD) [

17], and low-rank factorization [

18]. However, these methods are generally used in well-trained, complicated backbone networks, and they are rarely applied to lightweight networks. Some real-time semantic segmentation methods build a network more suitable for mobile devices by using a lightweight backbone network that is composed of deep separable convolution [

19,

20,

21,

22,

23,

24,

25]. They significantly reduce network parameters by compressing feature channels and reducing layers. However, parameter reduction is usually accompanied by decreasing segmentation accuracy. The final mean intersection over union (MIoU) score of these models notably drops to 71% or even lower, which limits their practical application. In contrast, other methods use ResNet-18 [

15] as a backbone network to obtain high accuracy [

26,

27,

28,

29,

30,

31], but they also have relatively more parameters and computations. It is a great challenge to strike a good trade-off between model accuracy and efficiency. To illustrate this actuality,

Figure 2 presents the accuracy (mIoU) and inference speed (frames per second (fps)) obtained by several state-of-the art methods and our proposed method on the Cityscapes test dataset.

Many state-of-the-art semantic segmentation methods illustrate that spatial details and high-level semantic context information are the keys to obtaining high accuracy [

27,

32]. With the deepening of a network, the resolution of the feature map will decrease, and more spatial details will be lost. Dilated convolution is a general technique for preserving spatial details, which generates high-resolution feature maps without increasing parameters. Some state-of-the-art methods apply dilated convolutions at the last several stages of their networks to construct the dilated FCN [

5,

33]. However, the dilated FCN makes the network wider and computationally intensive. When compared with the original FCN [

34], 23 residual blocks in the dilated FCN (based on ResNet-101 [

15]) require four times more computational resources and memory, while three residual blocks take 16 times more resources [

35]. This is clearly not suitable for real-time semantic segmentation networks. Designing additional shallow and wide sub-networks to extract spatial details is a simple and effective solution to this. BiSeNet [

27] and ICNet [

30] are representative approaches. They extend an image pyramid structure to a multi-branch structure, and extract spatial details and context semantics in parallel. Nevertheless, an additional sub-network not only brings redundant parameters, but it also slows down the network to a certain extent. Generally speaking, semantic contextual information is more concentrated in the higher levels of a network. For this reason, many context extraction modules, such as ASPP [

5], PPM [

8], and attention module [

33], have been proposed to connect to the tail of a network. However, some studies have shown that their effectiveness depends on complicated backbone networks with a large channel capacity [

19].

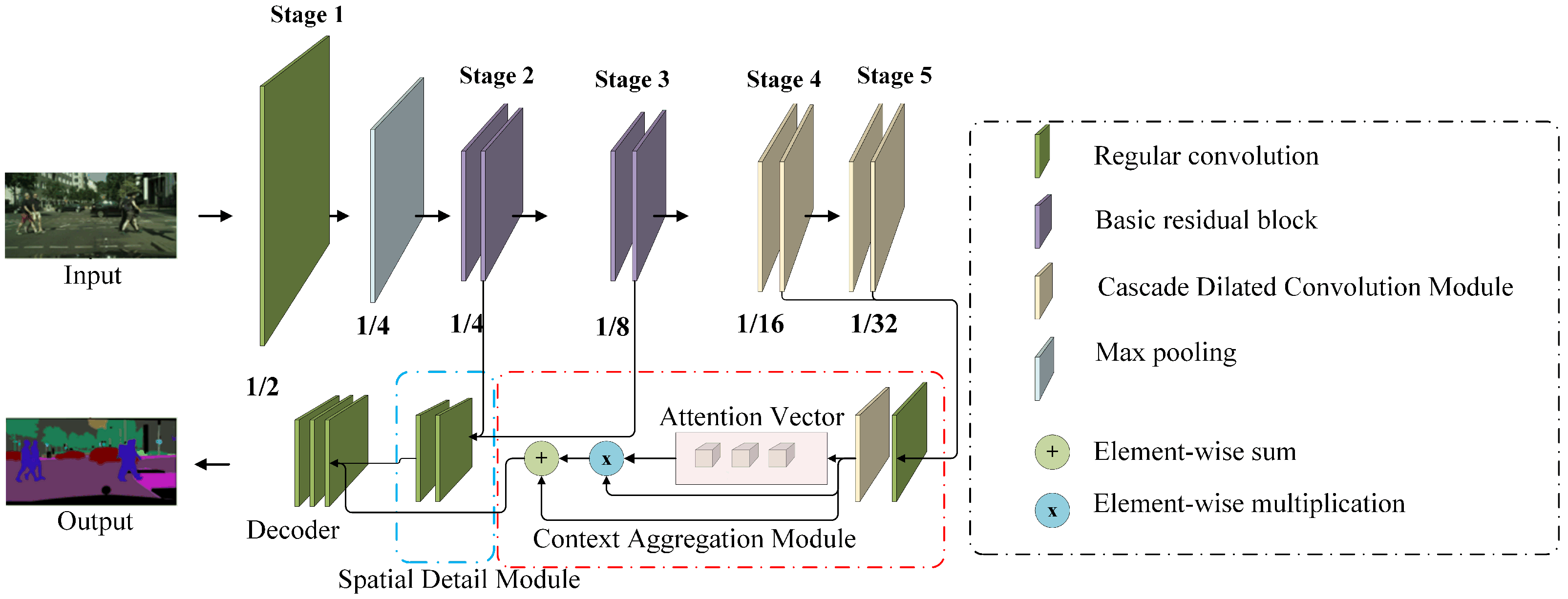

In this paper, we propose a multi-layer and multi-scale feature aggregation network (MMFANet) for semantic segmentation in vehicle-mounted scenes. Different from previous approaches that only focus on the accuracy or efficiency of the model, we aim to improve model efficiency while simultaneously ensuring high segmentation accuracy on multi-scale objects. In pursuit of better accuracy, we adopt the following design. Firstly, a cascaded dilated convolution module (CDCM) is designed to enable the backbone network to perform multi-scale feature extraction layer-by-layer. Subsequently, a context aggregation module (CAM), which is composed of a channel attention mechanism and CDCM, can adaptively encode the multi-scale context information and channel context information under a larger receptive field. Thus, the CAM guides the learning process more precisely. Next, a spatial detail module (SDM) is designed to ease the transmitting of low-frequency information from low layers to high layers. The SDM only contains two standard convolutions, and it does not require an additional sub-network or adopt a dilated FCN to generate high-resolution features. Finally, a decoder is adopted to jointly learn spatial detail and multi-scale context information, and generates final segmentation results. In order to improve the model efficiency, we optimized ResNet-18 [

15]. We use a CDCM with lower feature channel capacity to replace the residual blocks of the last two stages of ResNet-18 (ResNet is divided into five stages according to the resolution of output feature maps). The maximum feature channel number of our backbone network in each layer is no more than 256, which maintains the computation efficiency and simultaneously guarantees adequate information during propagation. The modified lightweight backbone ResNet-18 is named MSResNet-18 in this paper. The lower channel capacity of MSResNet-18 avoids a large number of channel calculations.

Figure 1d illustrates the proposed network architecture. Without any ImageNet pretraining, MMFANet obtains 79.3% MIoU on the Cityscapes test set, and 68.1% MIoU on the CamVid test set, with only 5.5 M parameters. Meanwhile, it processes an image of 1536 × 768 resolutions at a speed of 58.5 FPS on a single NVIDIA GTX 1080Ti card. the experimental results demonstrate that our method achieves a better trade-off between model accuracy and computational complexity. The contributions of this paper are listed below:

A cascade dilated convolution module (CDCM) is proposed, and it is used as a building block of the backbone network to efficiently extract multi-scale features layer by layer. By controlling the channel capacity of the module, we build a more efficient and lightweight backbone network, i.e., MSResNet-18.

A lightweight feature aggregation framework (consisting of an SDM and CAM) is proposed to fuse the features of each stage of our backbone network. Therefore, it can asymptotically refine segmentation results in a coarse level for better accuracy.

The design of MMFANet guarantees high segmentation accuracy while significantly improving network efficiency. When compared with state-of-the-art methods, MMFANet can obtain a better trade-off between model accuracy and efficiency. We release our source code for the benefit of other researchers and further advancement in this field. The Pytorch source code used for this research is available at GitHub:

https://github.com/GitHubLiaoYong/MMFANet (accessed on 1 July 2020).

4. Experiments

We use a modified ResNet-18 [

15] model MSResNet-18 for real-time semantic segmentation tasks. We evaluate the proposed MMFANet on road driving datasets Cistyscapes [

47] and CamVid [

48]. First, the dataset and implementation protocol are introduced. Next, we performed ablation experiments for each part of the proposed method. All of the ablation experiments are carried out on the Cityscapes validation set. Finally, we compare the accuracy, speed, and model complexity of the proposed method with other state-of-the-art semantic segmentation methods on the above two datasets.

4.1. Datasets and Implementation Protocol

Cityscapes: Cityscapes is a collection of high-resolution images that were taken from a driver’s perspective on a clear day. It consists of a training set of 1975 images, a validation set of 500 images, and a testing set of 1525 images, all of which have a resolution of 2048 × 1024. These images were taken from 50 cities, with the shooting time distributed across seasons. The dataset is divided into 30 semantic categories. Following previous works [

11,

49], we use only 19 common semantic categories for training and evaluation in our experiments. More than 20,000 images with coarse annotations are also provided. In our experiments, we only use images with fine annotations.

CamVid: CamVid is also an urban street scene dataset related to autonomous vehicles. It contains 701 images, with 367, 101, and 233 images for training, validation, and testing, respectively. These images have a resolution of 960 × 720, and they belong to 32 classes. We refer to the index used in [

5] to change the number of classes to 11. Following prior works [

11,

49], we randomly sub-sample all images to a 360 × 480 resolution to evaluate model performance.

Implementation details: our implementation is based on a public platform Pytorch (version 1.7.0). We use 5 GTX1080Ti cards under CUDA 9.0 and cuDNN V7 to train our model. For the loss function, the output resolution of our network is 1/8 of the input image and, thus, we upsample the final output with the same size as the input. Note that the different datasets use different loss functions. The standard cross-entropy loss is used for the CamVid dataset; for the Cityscapes dataset, we use the online hard example mining (OHEM) loss function because the Cityscapes scenes are more complex. The objective function is optimized using small batch stochastic gradient descent (SGD), the batch size is 10, the momentum decay is 0.9, and the weight decay is 0.025.

Learning rate strategy: similar to [

5], we adopt a poly learning rate strategy, where the initial learning rate is multiplied by

, and power = 0.9. Because the MSResNet-18 is not pre-trained on ImageNet [

50], when experimenting on the Cityscapes and CamVid datasets, we set the maximum number of epochs to 1000 and 400, respectively.

Data augmentation: we use random horizontal flipping for all datasets, average subtraction, and random resizing between 0.75 and 2. Finally, images and labels in Cityscapes and CamVid are randomly cropped to 1024 × 1024 and 480 × 360 for training. Data augmentation was done using PyTorch’s data enhancement tool, which randomly enhanced the data during the training process. We did not generate any new data.

4.2. Ablation Study

In this subsection, we will study, in detail, the impact of each component on the proposed network. The following experiments are conducted with MSResNet-18 as the backbone when there are no special instructions. We evaluate our method on the Cityscapes validation dataset. We refer to the structure of FCN32s [

34], and directly upsample the final output of the backbone network 32 times to the same size as the input image, and use the result of FCN32s as our baseline.

Table 2 shows the experimental results.

4.2.1. Ablation of the CDCM

The purpose of the CDCM is to extract multi-scale features while capturing a larger receptive field and improving the efficiency of ResNet-18, as mentioned in

Section 3.2. It can be seen from

Table 2 that, after replacing the residual blocks of the last two stages of ResNet-18 with the CDCM, the number of parameters is reduced by 8.5 M and the accuracy is improved from 62.31% to 64.88%. Furthermore, our MSResNet-18 achieves better accuracy than MobileNetV2, which adopts deep separable convolution to reduce the number of parameters.

To compare with the ESP [

24] module, we construct a new model, called ESPResNet-18, where the CDCM in the MSResNet-18 is replaced by the ESP module. For fair comparison, the number of input feature channels of the ESP module is set as the same as that for the CDCM. We observe that ESPResNet-18 achieves comparable or slightly better performance in terms of both the number of parameters and FLOPs. However, the segmentation accuracy of ESPResNet-18 reduced to 61.56%, which is far from satisfying. The experimental results show the effectiveness and efficiency of the CDCM. After verifying the effectiveness of the CDCM, we set up another set of experiments to explore the dilated rate setting of the CDCM in MSResNet-18. We set up three different combinations of dilated ratios:

(named

,

, and

, respectively).

Table 3 shows the experimental results. We can see that, by replacing standard convolution with a dilated convolution group D3, the performance improves from 62.72% to 64.88%. In the case of the same computational complexity and number of parameters,

can achieve higher segmentation precision than

; this is in agreement with the conclusion presented in [

40] because the hybrid dilated convolution (HDC) mode can alleviate the gridding artifact problem. Therefore, we choose

as the final architecture of our network.

4.2.2. Ablation of the CAM

The CAM combines the CDCM and channel-wise attention to gather spatial context and channel context information. We add the CAM to the baseline in order to analyze the impact of the CAM on network performance. At the same time, to compare with current multi-scale modules (i.e., the ASPP module and PPM), we also replace the CAM with these two modules and train the model under the same experimental conditions. The number of input feature channels of ASPP and PPM is 256, because the final feature map of MSResNet-18 only contains 256 channels. The experimental results are shown in

Table 4. After adding the CAM to the baseline network, the performance improved from 64.88% to 77.37%. Hence, the CAM can obtain a stronger feature representation with only 0.9 M parameters and 1.4 GFLOPs. The comparison experiment with ASPP module shows that the CAM not only reduces the number of parameters by 1.5 M, but it also improves the MIoU by 3.43%. In addition, comparative experiments with the PPM show that, although the PPM achieves excellent performance on model efficiency, it sacrifices accuracy. These results indicate that, for our model, the combination of spatial context and channel attention in the CAM is a more accurate approach than the current multi-scale modules that only consider spatial context. We also note that the CAM has slightly more FLOPs than the ASPP module and PPM. When considering the trade-off between accuracy and complexity, the CAM remains a better choice.

4.2.3. Ablation of the SDM

We select different feature maps as the input of the SDM to evaluate the quality of various low-frequency features combinations. All of the methods based on the SDM outperform the baseline method, as shown in

Table 5. We observe that module c (the final architecture of the SDM) achieves comparable or slightly better performance in terms of both accuracy and efficiency than module a and module b. We attribute the superiority of module c to the fact that the output features of stage 2 and stage 3 of backbone network contain different scale information. The combination of these features is beneficial to the segmentation of various-scale objects in vehicle-mounted scenes. From the results presented in

Table 5, we also find that the FLOPs of module a are about twice that of modules b, c, and d. The reason for this is that the resolution of module a’s feature map is 1/4 of the input image. Such a high-resolution feature map leads to intensive computation. In addition, the accuracy of module d, where a straightforward fusion of stage 2 and stage 3 features is implemented by channel concatenation, yields the least MIoU boost. This indicates that such fusion may improve the recognition performance by only a limited degree, because it may introduce noise. In contrast, our SDM achieves a 12.83% increase in MIoU at a small cost of adding 0.3 M parameters and 4.6 GFLOPs. The significant improvement in baseline performance is due to two main reasons. First, spatial detail information that is gathered by the SDM can help the network output more refined prediction.

Figure 8 shows some segmentation results. Obviously, MMFANet can obtain the most refined segmentation results (such as boundaries and contours). Second, before the SDM is added to the baseline model, the feature map is directly upsampled 32 times to obtain the segmentation result. An excessive upsampling step size leads to rough prediction. We also notice that, after adding the SDM, the FLOPs of MMFANet increase to some extent because high-resolution features require more computational cost. However, when considering the trade-off between model accuracy and complexity, a slight increase in the amount of calculation is acceptable. After adding the SDM and CAM simultaneously, the upsampling step size is reduced from 16 to 8. This improves the performance from 77.71% to 79.16%, as shown in

Table 4.

4.3. Comparison with State-Of-The-Art Methods

Table 6 reports the model accuracy and complexity of MMFANet based on ResNet-18 and MSResNet-18. As can be seen, MMFANet-1 achieves a 2.1% higher MIoU improvement than MMFANet-2 under the premise of reducing the parameters by 8 M. Furthermore, the FLOPs of MFFANet-1 are reduced by 11.2 G when the resolution is 1536 × 768. This proves that MSResNet-18 can obtain larger receptive fields and robust multi-scale features in a more efficient way, which is beneficial in improving the performance of semantic segmentation.

Previous lightweight methods, such as ENet [

49], SegNet [

11], ESPNet [

24], DABNet [

19], and LEDNet [

20] achieve excellent performance on model efficiency. However, these methods sacrifice too much segmentation accuracy for efficiency. In contrast, the proposed MMFANet, which obtains 79.3% MIoU and yields a real-time speed of 58.5 FPS on a 1536 × 768 resolution image, makes an obvious improvement in both accuracy and efficiency. At the same time, when compared with recent state-of-the-art real-time semantic segmentation networks that are based on ResNet-18 (such as BiSeNet2 [

27] and SwiftNet [

26]), MMFANet also achieves higher accuracy with fewer parameters (the number of parameters is reduced by at least 53.38%). Our model also achieves better performance than some early non-real-time semantic segmentation networks (based on large-scale backbone), such as DeepLabV2 [

5] and FCN8s [

34], as shown in the top rows of

Table 6. These results indicate that the proposed model can make full use of the features of all levels in the backbone network.

4.3.1. Speed Analysis

In practical applications, speed determines whether a semantic segmentation network can produce practical effects. It is difficult to make a fair comparison because different methods use different hardware and software environments to test speed. In this experiment, we use a single 1080Ti GPU card as hardware and PyTorch as the software environment. In this process, we do not use any testing techniques or any accelerated optimization technique, such as multi-scale inputs or TensorRT implementation. We report the speed of our network at different input resolutions. MMFANet-1 can process 1024 × 2048 images at a speed of 33.5 FPS, as shown in

Table 7. Because dilated convolution is not efficiently optimized, when the image has a low resolution, the speed of MMFANet-1 is slower than that of MMFANet-2.

4.3.2. Performance Analysis on an Edge Device

We run a speed test on a Samsung S20 phone to test the proposed network’s performance on edge devices. When compared with professional deep learning computing platforms, smart phones have some gaps in computing performance. To this end, we compare the computing power of the Samsung S20 phone and Nvidia TX2; the specific data are shown in

Table 8. As can be observed, the performance of the Samsung S20 is worse than that of the professional Nvidia TX2 device.

Table 9 compares the speed of some real-time semantic segmentation models with the presented approach. Our code runs under float32 and it does not have some optimizations, such as TensorRT. The test code is based on PyTorch-Mobile. It can be seen that the model that is proposed in this paper can achieve a speed of about 10.3 FPS when the image resolution is 224 × 224. Moreover, the proposed method is faster than some methods that are based on custom modules, such as DABNet [

19], LEDNet [

20], and ESPNet [

24]. The main reason for this may be that these networks only subsample the features eight times in order to retain more spatial details, resulting in too large of a feature resolution.

From

Table 9 ut can be seen that the MSResNet-18 (MMFANet-1) proposed in this paper can achieve faster speed on mobile devices than ResNet-18 (MMFANet-2). This is the opposite of what was found in the server GPU test (

Table 6). The reason for this phenomenon is that server GPUs have greater parallelism and may not be as sensitive to feature channel capacity as mobile devices. At the same time, we can also see that the MMFANet-1 that is based on MSResNet-18 is faster than some real-time semantic segmentation networks based on ResNet-18, such as SwiftNet and BiSeNet. The above experimental results verify that our optimization method of direct channel reduction can make the backbone network run faster on mobile devices.

4.4. CamVid

We conduct experiments on the CamVid dataset to verify the generalization of the proposed approach. Training and test images both have a 360 × 480 resolution.

Table 10 shows the results for each class. It can be seen that the proposed method can achieve higher accuracy, even with lower-resolution images. Therefore, it can be inferred that our method has good generalizability.