1. Introduction

As an effective way of revealing human intentions, gaze tracking technology has been widely applied in many areas, including marketing, ergonomics, rehabilitation robots and virtual reality [1,2]. Gaze tracking systems can be divided into remote and head-mounted gaze trackers (HMGT) [3]. The remote gaze tracker is typically placed at a fixed location, such as a desktop, and uses a camera to capture images of the user’s eyes and face. The HMGT is fixed to the user’s head and includes a scene camera to capture the view of the scene and an eye camera to observe eye movement. Because it allows users to move freely, the HMGT is more flexible and better suited to tasks such as human–computer interaction in a real 3D environment. Therefore, the HMGT has received extensive attention from researchers in recent years.

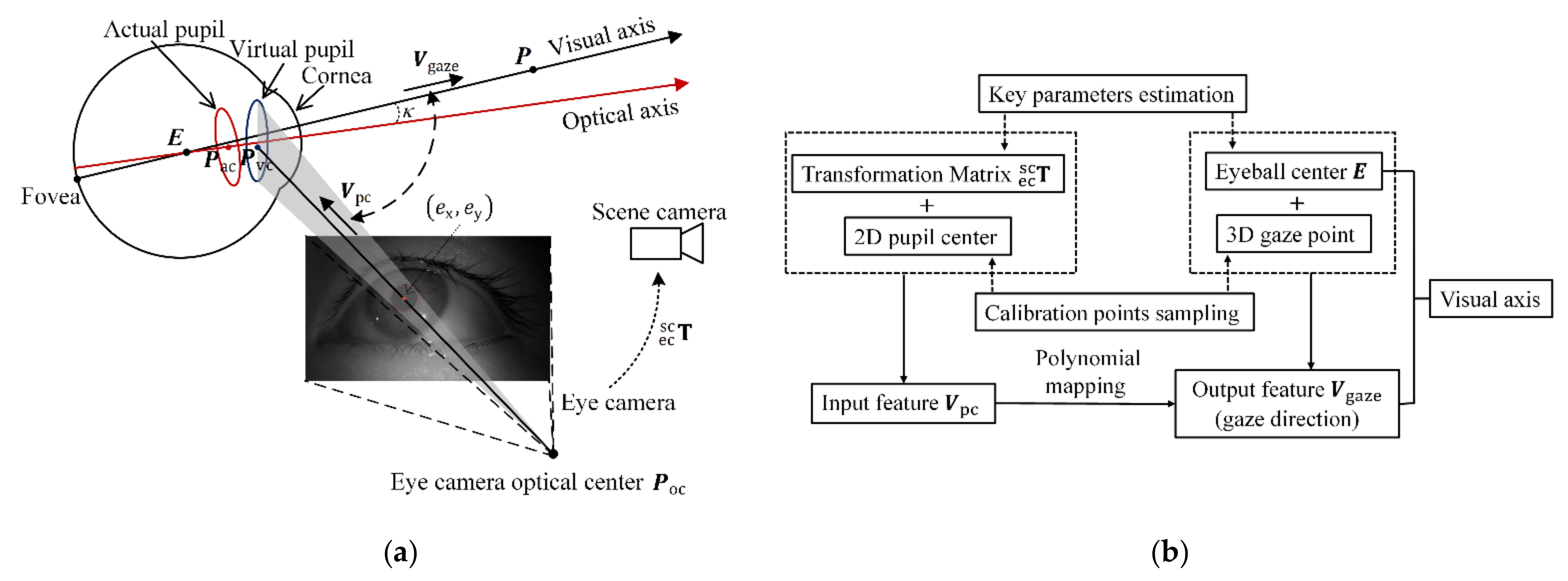

The problem of 3D gaze estimation can be viewed as inferring visual axes from eye images captured by cameras. Gaze estimation methods are typically either model-based or regression-based. Model-based methods use features extracted from eye images to build a geometric eye model and calculate the visual axis. Traditional model-based methods employ multiple eye cameras and infrared light sources to calculate the optical axis, and then calculate the angle Kappa between the optical axis and the visual axis with single-point calibration [4,5]. Their main merits are rapid calibration and robustness against system drift (the slippage of the HMGT), but the complex camera and lighting setup limits their application. As an HMGT with a simple camera setting can be developed more conveniently, it has broader application prospects. Some studies use the inverse projection law to calculate the pupil pose with a simple camera setting. The contour-based method in [6] designs a 3D eye model fitting method that computes a unique solution by fitting a set of eye images, but its gaze estimation accuracy is relatively low because of the corneal refraction of the pupil. The method in [7] models the corneal refraction by assuming the physiological parameters of the eyeball, but its performance is unstable because these parameters vary from person to person. In addition, it is challenging for these methods to extract the pupil contour accurately from the eye image because of occlusion by eyelids and eyelashes. Therefore, it is difficult for model-based methods to obtain high-accuracy visual axes with a simple camera setting.

In contrast, regression-based methods usually adopt a single eye camera. The key idea is to establish a regression model that fits the mapping between eye image features and gaze points in the scene camera coordinate system [8,9]. This kind of method has two sources of error, namely parallax error and extrapolation error. Noticeable extrapolation error may occur because of underfitting caused by an improper regression model or calibration point sampling strategy. The parallax error is caused by the spatial displacement between the eyeball and the scene camera [10]. For instance, all points on the same visual axis produce the same eye image features, but their coordinates in the scene camera coordinate system differ, which leads to a one-to-many relationship.
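The one-to-many relationship behind the parallax error can be illustrated with a small numerical sketch. The pinhole intrinsics, the eye-to-camera offset and the helper names below are illustrative assumptions, not values from this paper. Two points on the same visual axis, at depths of 0.3 m and 0.5 m, are projected into a scene camera that is displaced from the eyeball center.

```python
def project(point, focal_px=900.0, cx=640.0, cy=360.0):
    """Pinhole projection of a 3D point (scene camera frame, metres) to pixels.

    focal_px, cx and cy are illustrative values for a 1280 x 720 image,
    not calibrated intrinsics from the paper.
    """
    x, y, z = point
    return (focal_px * x / z + cx, focal_px * y / z + cy)


# Hypothetical geometry: the eyeball center is offset by 3 cm along x and
# 2 cm along y from the scene camera optical center (camera frame, metres).
EYE_CENTER = (0.03, 0.02, 0.0)
GAZE_DIR = (0.0, 0.0, 1.0)  # visual axis pointing straight ahead


def point_on_visual_axis(depth):
    """Return the 3D point at the given distance along the visual axis."""
    return tuple(c + depth * d for c, d in zip(EYE_CENTER, GAZE_DIR))


near_px = project(point_on_visual_axis(0.3))
far_px = project(point_on_visual_axis(0.5))
print("pixel at 0.3 m:", near_px)
print("pixel at 0.5 m:", far_px)
```

Under these assumptions the two points land roughly 36 pixels apart horizontally in the scene image, although the eye looks in exactly the same direction for both. A regression from eye features to scene image coordinates cannot distinguish them.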

To reduce extrapolation error, different mapping functions have been investigated, of which polynomial regression is the most common. The method in [11] compares different polynomial functions and chooses the best performer to estimate the gaze point. However, polynomials of order higher than two cannot reduce extrapolation errors significantly [12]. In [1,13], Gaussian process regression is investigated as an alternative mapping function, but its accuracy is unstable. To improve the estimation accuracy of gaze depth, some methods employ an MLP neural network to estimate the depth from pupil centers or pupillary distance [14,15], but gaze estimation models based on neural networks require more training data, which makes the calibration procedure more burdensome.
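As a minimal sketch of the polynomial mapping discussed above, the following fits a second-order polynomial from a 2D pupil center to one output coordinate by least squares. The feature set, the synthetic calibration grid and all function names are assumptions made for illustration, and the solver is a plain normal-equation solve rather than any method from the cited works.

```python
def poly2_features(u, v):
    """Second-order polynomial terms of a 2D pupil center (u, v)."""
    return [1.0, u, v, u * v, u * u, v * v]


def solve_linear(a, b):
    """Solve a x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    m = [row[:] + [rhs] for row, rhs in zip(a, b)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    x = [0.0] * n
    for r in range(n - 1, -1, -1):
        tail = sum(m[r][c] * x[c] for c in range(r + 1, n))
        x[r] = (m[r][n] - tail) / m[r][r]
    return x


def fit_poly2(pupil_points, targets):
    """Least-squares fit via the normal equations (A^T A) w = A^T y."""
    rows = [poly2_features(u, v) for u, v in pupil_points]
    k = len(rows[0])
    ata = [[sum(r[i] * r[j] for r in rows) for j in range(k)] for i in range(k)]
    aty = [sum(r[i] * y for r, y in zip(rows, targets)) for i in range(k)]
    return solve_linear(ata, aty)


def predict(weights, u, v):
    return sum(w * f for w, f in zip(weights, poly2_features(u, v)))


# Synthetic calibration data: a 3 x 3 grid of normalized pupil centers and
# targets generated from made-up "true" weights (not data from the paper).
TRUE_WEIGHTS = [0.5, 2.0, -1.0, 0.3, 0.1, -0.2]
pupil_points = [(u, v) for u in (-1.0, 0.0, 1.0) for v in (-1.0, 0.0, 1.0)]
targets = [predict(TRUE_WEIGHTS, u, v) for u, v in pupil_points]

weights = fit_poly2(pupil_points, targets)
print(weights)
```

In practice one such fit would be made per output coordinate. With noise-free synthetic targets the fit recovers the generating weights, while real calibration data would leave a residual.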

To prevent parallax error, some methods determine the depth of the 3D gaze point by analyzing scene information. The method in [16] uses SLAM to extract environmental information. The 3D gaze point is then estimated from the correspondence between the triangles containing 2D gaze points in the scene camera image and the triangles containing 3D gaze points in the real world. In [17], SFM (Structure from Motion) is used to estimate the 3D gaze point by looking at the same place from two different head positions. However, the performance of these methods degrades when only sparse feature points can be acquired from the scene image. A more common approach is to calculate the visual axes of both eyes and intersect them to get the 3D gaze point. The method in [18] sets calibration points on a screen at a fixed depth and requires the user to keep the head still, then employs a polynomial function to fit the mapping between the 2D pupil center and the 3D gaze point. The visual axis is determined by the fixed eyeball center and the estimated gaze point on the screen. As an improvement, the method in [19] requires two additional calibration points outside the mapping surface, so that a more precise position of the eyeball center can be calculated by triangulation. In [9], the calibration data are collected by staring at a fixed point while rotating the head, the position of the eyeball center is set to an estimated initial value, and a loss function based on the angular error of the visual axis is employed to optimize the parameters. All of the above methods infer the visual axis by calculating the eyeball center and the direction of the line of sight. However, the eyeball centers are usually estimated with data-fitting methods, which can be sample dependent and generalize poorly.
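Intersecting the two visual axes can be sketched as follows. Because two estimated axes rarely meet exactly in 3D, a common choice, assumed here and not necessarily the one used in the cited works, is the midpoint of the shortest segment between the two lines. The function name and the example geometry are hypothetical.

```python
def gaze_point_from_two_axes(p1, d1, p2, d2, eps=1e-12):
    """Midpoint of the shortest segment between the lines p1 + t*d1 and p2 + s*d2.

    p1, p2: eyeball centers; d1, d2: visual axis directions (any length).
    """
    def dot(a, b):
        return sum(x * y for x, y in zip(a, b))

    w = [x - y for x, y in zip(p1, p2)]
    a, b, c = dot(d1, d1), dot(d1, d2), dot(d2, d2)
    d, e = dot(d1, w), dot(d2, w)
    denom = a * c - b * b
    if denom < eps:
        raise ValueError("visual axes are (nearly) parallel")
    t = (b * e - c * d) / denom  # parameter of the closest point on axis 1
    s = (a * e - b * d) / denom  # parameter of the closest point on axis 2
    q1 = [p + t * v for p, v in zip(p1, d1)]
    q2 = [p + s * v for p, v in zip(p2, d2)]
    return tuple((x + y) / 2.0 for x, y in zip(q1, q2))


# Hypothetical example: eyes 6 cm apart, both looking at the same target.
left_eye = (-0.03, 0.0, 0.0)
right_eye = (0.03, 0.0, 0.0)
target = (0.10, 0.05, 0.50)
left_dir = tuple(t - e for t, e in zip(target, left_eye))
right_dir = tuple(t - e for t, e in zip(target, right_eye))

gaze_point = gaze_point_from_two_axes(left_eye, left_dir, right_eye, right_dir)
print(gaze_point)
```

When the two axes truly intersect, as in this example, the midpoint coincides with the target. With noisy axes it gives a compromise point between them.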

In summary, existing regression-based paradigms face three main issues. The first is how to formulate an appropriate regression model. Most paradigms use the image pupil center and the gaze point as input and output features [9,19]. However, this may lead to inadequate fitting performance and appreciable extrapolation errors because of the complexity of the human visual system. The second is how to define a proper calibration point distribution over the whole field of view. Existing paradigms sample the calibration points over an arbitrarily chosen field of view [9,20]. However, accuracy degrades significantly when the gaze direction is outside the calibration range because of extrapolation error. The third is the lack of an elegant recalibration strategy. The mapping between input and output features changes as the HMGT slips. Without an efficient recalibration strategy, the user must repeat the burdensome primary calibration procedure to rectify the parameters of the gaze estimation model [21,22].

To address these issues, this paper proposes a hybrid gaze estimation method with real-time head pose tracking. On the one hand, it uses a geometric model of the human eye to analyze the parameters that influence the pose of the visual axis and estimates the key parameters, the eyeball center and the camera optical center, in the head frame. On the other hand, it employs a polynomial regression model to calculate the direction vector of the visual axis. The main contributions of this paper are summarized as follows:

- (1) A novel hybrid 3D gaze estimation method is proposed to achieve higher gaze estimation accuracy than the state-of-the-art methods. The two key parameters, the eyeball center and the camera optical center, are estimated in the head frame with a geometry-based method, so that a mapping between two direction features can be established to calculate the direction of the visual axis. As the direction features are formulated with the accurately estimated parameters, the complexity of the mapping is reduced and a better fitting performance can be achieved.

- (2) A calibration point sampling strategy is proposed to improve the uniformity of the training set for fitting the polynomial mapping and to prevent appreciable extrapolation errors. By estimating the pose of the eyeball coordinate system, the calibration points are retrieved with uniform angular intervals over the human field of view for symbol recognition.

- (3) An efficient recalibration method is proposed to reduce the burden of recovering gaze estimation performance when slippage occurs. A rotation vector is introduced into the algorithm, and an iterative strategy is employed to find the optimal solution for the rotation vector and the new regression parameters. With an updated eyeball coordinate system, a single extra recalibration point is enough for the algorithm to achieve gaze estimation accuracy comparable to that of the primary calibration.

The rest of the paper is organized as follows. Section 2 describes the proposed methods for primary calibration and recalibration. Section 3 presents the experimental results. Section 4 is the discussion, and Section 5 is the conclusion.

3. Experiment and Results

To verify the effectiveness of the proposed method, the HMGT shown in Figure 2 is developed. This HMGT has two eye cameras (30 fps, 1280 × 720 pixels) to capture eye movement, two scene cameras (30 fps, 1280 × 720 pixels) to capture the scene view and a 6D pose tracker (Tracker-0) to capture head movement. Before the experiment, the intrinsic parameters of the eye cameras and scene cameras are calibrated with the MATLAB toolbox. The transformation matrix between the eye cameras, scene cameras and Tracker-0 is estimated by the method proposed in Section 2.2. Five subjects participate in the experiment. First, each subject calibrates the eyeball coordinate system with the methods proposed in Section 2.3 and Section 2.5. Then, the calibration points and test points for regression model fitting are sampled in the union eyeball coordinate system. To evaluate the effect of calibration depth on gaze estimation performance and to compare different methods, calibration points are placed on three planes at distances of 0.3 m, 0.4 m and 0.5 m from the eyeball center. At each depth, 42 calibration points and 30 test points are sampled with uniform angular intervals of the visual axis. Their positions are calculated by intersecting the pre-defined visual axes with the calibration plane.
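The sampling step can be sketched as below: visual axes are defined at uniform angular intervals and intersected with a plane at a given depth in the eyeball coordinate system. The 7 × 6 layout, the angle ranges, the angle convention and the assumption that the plane is perpendicular to the forward (z) axis are all illustrative choices. The text specifies only the number of points and the uniform angular spacing.

```python
import math


def calibration_points(depth, h_angles_deg, v_angles_deg):
    """Intersect pre-defined visual axes with the plane z = depth.

    Each axis starts at the eyeball center (the origin) and is defined by a
    horizontal angle alpha and a vertical angle beta (assumed convention).
    """
    points = []
    for beta_deg in v_angles_deg:
        for alpha_deg in h_angles_deg:
            alpha = math.radians(alpha_deg)
            beta = math.radians(beta_deg)
            direction = (
                math.sin(alpha) * math.cos(beta),
                math.sin(beta),
                math.cos(alpha) * math.cos(beta),
            )
            scale = depth / direction[2]  # stretch the ray until it reaches the plane
            points.append((direction[0] * scale, direction[1] * scale, depth))
    return points


# Hypothetical angle grids giving 7 x 6 = 42 points at uniform intervals.
H_ANGLES = [-30, -20, -10, 0, 10, 20, 30]
V_ANGLES = [-12.5, -7.5, -2.5, 2.5, 7.5, 12.5]

grid = calibration_points(0.5, H_ANGLES, V_ANGLES)
print(len(grid), grid[0])
```

Because the angles are uniform, the points are not evenly spaced on the plane: the spacing grows toward the edge of the field of view.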

As shown in Figure 6, two 6D pose trackers, Tracker-4 and Tracker-5, are fixed to the arm base and the end effector, respectively. The robot arm can move the end effector, which carries a marker, to a predefined location in the eyeball coordinate system with real-time head pose tracking. The 2D pupil center in the eye image is detected in real time by the algorithm investigated in [27]. When the subject gazes at the marker, the 2D pupil center and the position of the marker are collected synchronously. Data collection is implemented in C++. To verify the effectiveness of the recalibration method, all subjects wear the HMGT twice and perform the entire calibration in each wearing. The gaze estimation model is implemented in MATLAB with the collected data. Data acquired in the first wearing are used to evaluate the gaze accuracy of the primary calibration method, and data acquired in the second wearing are used to evaluate the gaze accuracy of the recalibration method and to compare different methods. The common criterion for evaluating gaze estimation performance is the angular error between the estimated visual axis and the real visual axis. However, it is improper to compare different methods using the angular error of a visual axis derived from the estimated eyeball center and the gaze point, because the estimated eyeball centers in different methods usually have different error distributions. Therefore, a more reasonable evaluation criterion, the scene angular error (SAE), is defined as

$$\mathrm{SAE} = \arccos\left(\frac{\mathbf{v}_{r} \cdot \mathbf{v}_{e}}{\lVert \mathbf{v}_{r} \rVert \, \lVert \mathbf{v}_{e} \rVert}\right)$$

where $\mathbf{v}_{r}$ is the direction vector from the scene camera optical center to the real gaze point and $\mathbf{v}_{e}$ is the direction vector from the scene camera optical center to the estimated gaze point. The estimated gaze point is the intersection of the estimated visual axes of the two eyes.
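A scene angular error of this kind can be computed as the angle between the two direction vectors described above. The sketch below assumes the standard angle-between-vectors definition and uses hypothetical names. It evaluates the angle with `atan2` of the cross-product norm and the dot product, which is mathematically equivalent to the arccosine form but better conditioned for the small angles typical of gaze errors.

```python
import math


def scene_angular_error_deg(camera_center, real_point, estimated_point):
    """Angle in degrees between the rays from the scene camera optical
    center to the real and to the estimated gaze point."""
    v_real = [p - c for p, c in zip(real_point, camera_center)]
    v_est = [p - c for p, c in zip(estimated_point, camera_center)]
    dot = sum(a * b for a, b in zip(v_real, v_est))
    cross = (
        v_real[1] * v_est[2] - v_real[2] * v_est[1],
        v_real[2] * v_est[0] - v_real[0] * v_est[2],
        v_real[0] * v_est[1] - v_real[1] * v_est[0],
    )
    cross_norm = math.sqrt(sum(c * c for c in cross))
    return math.degrees(math.atan2(cross_norm, dot))


# Hypothetical check: an estimate displaced so that the two rays differ by 2 degrees.
camera = (0.0, 0.0, 0.0)
real = (0.0, 0.0, 0.5)
estimated = (0.0, 0.5 * math.tan(math.radians(2.0)), 0.5)
sae = scene_angular_error_deg(camera, real, estimated)
print(sae)
```

Measuring the angle from the scene camera optical center, rather than from an estimated eyeball center, keeps the reference point identical for every method being compared.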

3.1. Evaluation of Primary Calibration Method

The gaze estimation performance of the primary calibration method with training sets at different depths is shown in Figure 7a. Each situation achieves better performance than the other situations at its corresponding calibration plane. For example, the method achieves the best gaze estimation performance at a depth of 0.3 m when the training set is also sampled at 0.3 m. In addition, the mean and standard deviation of the error in situation 1 are significantly higher, while there is no significant difference between situation 2 and situation 3 (paired t-test). This may be caused by extrapolation error. Because the union eyeball coordinate system is used, the field of view covered by the calibration points differs slightly between depths owing to the depth-dependent parallax between the single-eye and union-eye visual systems (see Figure 7b). As the depth of the calibration plane increases, the parallax becomes smaller, and so does the difference in gaze estimation performance between situations.
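For readers unfamiliar with the paired t-test used in these comparisons, the following sketch computes the paired t statistic for two conditions measured on the same five subjects. The per-subject errors are made-up numbers for illustration only and are not data from this study. The critical value 2.776 is the two-tailed 5% threshold of the t distribution with 4 degrees of freedom.

```python
import math


def paired_t_statistic(sample_a, sample_b):
    """t statistic of the paired differences a_i - b_i (n - 1 degrees of freedom)."""
    diffs = [a - b for a, b in zip(sample_a, sample_b)]
    n = len(diffs)
    mean = sum(diffs) / n
    variance = sum((d - mean) ** 2 for d in diffs) / (n - 1)  # sample variance
    return mean / math.sqrt(variance / n)


# Made-up mean errors (degrees) for five subjects in two conditions.
errors_a = [1.30, 1.42, 1.25, 1.38, 1.33]
errors_b = [1.28, 1.45, 1.27, 1.35, 1.36]

T_CRITICAL_DF4 = 2.776  # two-tailed, alpha = 0.05, 4 degrees of freedom
t_value = paired_t_statistic(errors_a, errors_b)
significant = abs(t_value) > T_CRITICAL_DF4
print(t_value, significant)
```

With these illustrative numbers the statistic is far below the critical value, so the difference between the two conditions would not be judged significant.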

3.2. Evaluation of Recalibration Method

The proposed recalibration method re-estimates the transformation matrix between the eyeball and scene camera coordinate systems with the proposed geometry-based method and uses calibration points to rectify the parameters of the gaze estimation model. To reveal the influence of the number of calibration points on gaze estimation performance in recalibration, two strategies are implemented and compared. One uses a single calibration point, and the other uses all calibration points at a depth of 0.5 m. As mentioned in Section 2.5, the positions of the calibration points on the calibration plane are determined by the horizontal and vertical rotation angles of the eyeball. Without loss of generality, the calibration point whose polar coordinate is closest to (0,0) is selected to verify the single-point strategy. As shown in Figure 8, the mean and standard deviation of the error in situation 1 (single calibration point) are slightly higher than in the other two situations, but their overall gaze accuracy is comparable (paired t-test).

3.3. Comparison with Other Methods

To compare our proposed method with other methods, we implemented and evaluated the following baseline methods.

The method in [9] formulates a constrained nonlinear optimization to calculate the eyeball center and the regression parameters that map the eye image features to the gaze vector. The initial position of the eyeball center is assumed from the 2D pupil center and the scene camera intrinsic matrix, and the search range of the eyeball center is constrained. This method needs two calibration planes, and the training sets at depths of 0.3 m and 0.5 m are used for calculation.

The method based on mapping surfaces [19] maps the eye image feature to a 3D gaze point on a certain plane. Two calibration surfaces at different depths therefore correspond to two different regression mapping functions. For a particular eye image, two 3D gaze points on different planes can be calculated, and the visual axis is obtained by connecting the two points. This method also needs two calibration planes, and the training sets at depths of 0.3 m and 0.5 m are used for calculation.
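The last step of the mapping-surface baseline, connecting two predicted gaze points to obtain a visual axis, can be sketched as follows. The two points stand in for the outputs of the two regression functions. Their values and the helper names are hypothetical.

```python
import math


def visual_axis_from_two_points(near_point, far_point):
    """Return (origin, unit direction) of the line through two 3D gaze points."""
    diff = [f - n for n, f in zip(near_point, far_point)]
    norm = math.sqrt(sum(d * d for d in diff))
    if norm == 0.0:
        raise ValueError("the two gaze points coincide")
    return tuple(near_point), tuple(d / norm for d in diff)


def point_at_depth(origin, direction, depth):
    """Point on the axis whose z coordinate equals the requested depth."""
    t = (depth - origin[2]) / direction[2]
    return tuple(o + t * d for o, d in zip(origin, direction))


# Hypothetical regression outputs on the 0.3 m and 0.5 m calibration planes.
gaze_on_near_plane = (0.03, 0.01, 0.3)
gaze_on_far_plane = (0.05, 0.02, 0.5)

origin, direction = visual_axis_from_two_points(gaze_on_near_plane, gaze_on_far_plane)
gaze_at_04 = point_at_depth(origin, direction, 0.4)
print(direction, gaze_at_04)
```

Any error in either predicted point tilts the whole axis, which is one reason this baseline depends on accurate fits at both calibration depths.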

In comparison, our proposed primary calibration method and recalibration method use the training set at a depth of 0.5 m. As shown in Figure 9, the proposed primary calibration method achieves the lowest mean error, followed by the proposed recalibration method. There is no significant difference between their overall gaze estimation performance (paired t-test). In addition, the mean error of the method with nonlinear optimization is slightly lower than that of the method with two mapping surfaces. Compared with the method with nonlinear optimization, the proposed primary calibration and recalibration methods improve accuracy by 35 percent (from a mean error of 2.00 degrees to 1.31 degrees) and 30 percent (from a mean error of 2.00 degrees to 1.41 degrees), respectively.
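The quoted improvements follow from the relative reduction in mean error, as the short check below shows. The function name is only illustrative, and the error values are the ones reported in the text.

```python
def relative_improvement_percent(baseline_error, new_error):
    """Relative error reduction with respect to the baseline, in percent."""
    return (baseline_error - new_error) / baseline_error * 100.0


primary_gain = relative_improvement_percent(2.00, 1.31)
recalibration_gain = relative_improvement_percent(2.00, 1.41)
print(primary_gain, recalibration_gain)
```

The two values are about 34.5% and 29.5%, which the text reports as 35 percent and 30 percent.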

The scene angular error at each of the 90 test points for the different methods is illustrated in Figure 10. The error at each test point is the average over all subjects. The primary calibration and recalibration methods obtain better accuracy than the baseline method at 81% of the test points. Although the proposed method is less accurate than the baseline at a few points, the error at these points is relatively low (below 2.4 degrees), which is acceptable. In terms of time cost, the baseline method takes 168 s on average, while the proposed primary calibration and recalibration methods take 114 s and 32 s, respectively. Therefore, the proposed methods achieve better accuracy with less time spent on calibration.

4. Discussion

As revealed by the comparison of different methods, the proposed gaze estimation method achieves better performance than the state-of-the-art methods. The main reason is that the eyeball and camera coordinate systems are estimated accurately in advance, so they can be used as prior knowledge to simplify the mapping in the regression model. When slippage occurs, the proposed recalibration strategy uses the old regression parameters as initial values to optimize the new regression parameters with the re-estimated eyeball coordinate system. That is why recalibration with a single calibration point achieves performance comparable to the primary calibration. As a limitation, both the proposed calibration and recalibration methods require a calibration procedure to estimate the transformation matrix between the eyeball and scene camera coordinate systems, but this procedure is simple and takes little time (approximately 30 s).

To compare the proposed method with other methods that need multiple calibration depths, a robot arm is used in our experiments to sample calibration points at different depths. However, the proposed method does not require multiple calibration depths, so the robot arm is not necessary for practical use. For instance, a combination of a display screen and trackers can be used to sample calibration points at a certain depth, which is more convenient. Note that the 6D pose tracker helps adjust the positions of the calibration points as the user’s head moves. This is user friendly because there is no need to keep the head still when sampling calibration points. These benefits come at a cost: the main disadvantage of the proposed method is that the 6D pose tracker is necessary for the calibration procedure. However, head pose tracking based on the 6D pose tracker is beneficial for human–machine interaction because the estimated visual axis can be transformed into the world coordinate system.