1. Introduction

In recent years, the development of heterogeneous fifth-generation (5G) networks has driven the rapid evolution of modern technologies and has changed people’s daily lives by enabling demanding, resource-intensive applications (e.g., virtual/augmented reality (VR/AR), the internet of vehicles, mobile healthcare, cloud gaming, face/fingerprint recognition, industrial robotics, video streaming analysis, and autonomous driving) [1,2,3]. These applications generate a vast amount of data and require fast response times and large resource capacities. Consequently, resource-constrained IoT devices bear a significant burden in handling these heavy computational demands and completing requests quickly. However, these devices are limited in battery life, computing capability, and storage capacity, and cannot efficiently perform a large number of intensive tasks [4]. Therefore, it is crucial to offload the tasks to more powerful remote computing infrastructures.

In conventional cloud computing, mobile cloud computing (MCC) is a prominent model that supports computational offloading for mobile devices [5]. By taking advantage of the enormous computing capabilities of MCC over a wide area network (WAN), user devices can send requests to powerful global cloud servers and use their rich computational resources for task processing. However, the long distance between the devices and the core network in MCC not only causes high transmission delays, data losses, and significant energy consumption, but also limits the context-awareness of applications [6]. As a result, MCC is unable to meet the requirements of delay-sensitive and real-time applications in heterogeneous IoT environments.

To cope with these challenges, the European Telecommunications Standards Institute (ETSI) proposed multi-access edge computing (MEC) [7], formerly mobile edge computing, a network architecture that brings servers with cloud computing properties closer to the IoT devices by deploying them at the edge of the network [8]. Unlike the centralized MCC, MEC deploys a dense, decentralized peer-to-peer network in which the edge servers are distributed. MEC provides an ultra-low-latency, high-bandwidth environment that IoT applications can leverage. Moreover, MEC enhances the quality of experience (QoE) and meets quality of service (QoS) requirements; for example, it provides a low execution time and reasonable energy consumption. Additionally, MEC supports modern 5G applications, mitigates traffic burdens, and reduces bottlenecks in backhaul networks. As a result, MEC efficiently supports IoT devices in task offloading, particularly for heavy and latency-sensitive tasks [9,10].

Despite the potential of MEC, several issues and challenges remain. First, the storage capacities of edge servers are limited, which causes unbalanced loads and congestion among edge servers when numerous requests from IoT devices arrive. Second, because of the randomness and variability of the networks, along with the long execution time of intensive applications on edge servers, the failure rate of offloaded tasks rises significantly [11,12]. Third, allocating resources for task offloading and developing effective and accurate offloading decision methods are critical challenges in MEC. Furthermore, in heterogeneous IoT environments, the incoming streams of offloaded tasks from delay-sensitive and intensive applications need to be processed flexibly. Several approaches to resolving these issues are discussed below.

One such approach is known as the mobile edge orchestrator (MEO), which is an orchestration embedded in MEC and which has been defined by ETSI [

13]. The MEO has a broad perspective of the edge computing system and manages the available computing resources, network conditions, and the properties of the application [

14,

15]. Moreover, the MEO selects the appropriate mobile edge hosts for processing the applications based on constraints such as latency and inspects the available capacities of virtualization infrastructures for resource allocation [

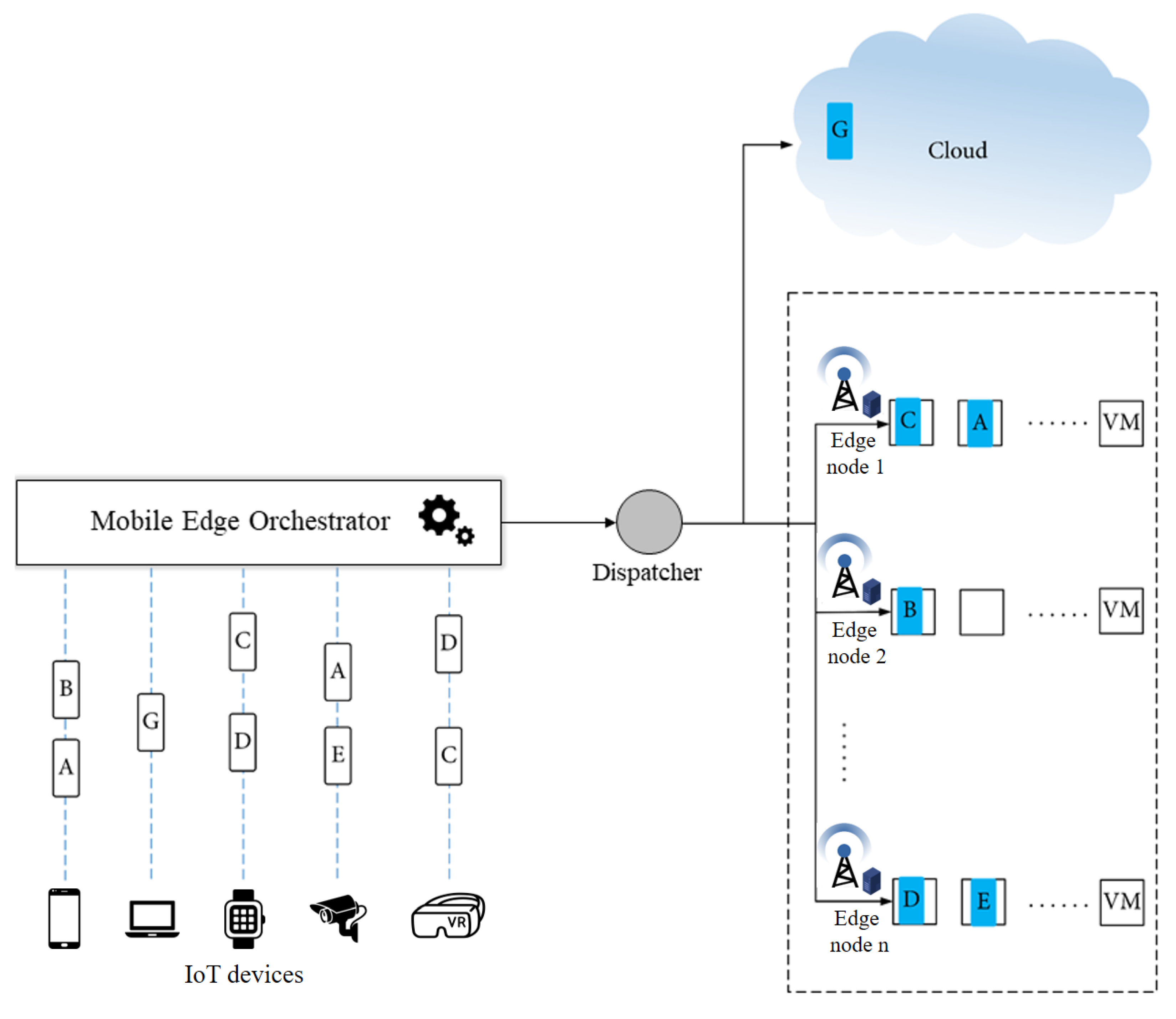

14]. Specifically, the MEO takes responsibility as a decision-maker in the network. Therefore, the target servers (e.g., the local edge server, the cloud server, and the remote edge server) for task offloading are efficiently decided by the MEO. The flow of offloaded tasks through an MEO and a dispatcher is illustrated in

Figure 1. By achieving the topology of the network and analyzing the constraints, the MEO chooses the suitable target servers to execute tasks in the virtual machines (VMs) of the corresponding edge servers.

Recently, fuzzy logic has emerged as a reasonable alternative for handling orchestration issues such as task offloading and resource allocation in edge computing networks. Edge computing networks, such as fog computing and cloudlet computing in general and the MEC network in particular, are rapidly changing, uncertain networks. Fuzzy logic is well suited to coping with changing parameters, for instance, central processing unit (CPU) utilization on a VM, which changes regularly with the number of tasks being executed, or the bandwidth fluctuations that frequently occur when the number of users increases [16,17]. The reasons for this are briefly described as follows. First, because the fuzzy-logic-based approach has a lower computational complexity than other decision-making algorithms, it is effective for solving online and real-time problems without the need for detailed mathematical models [18]. Second, to support the heterogeneity of devices and the unpredictability of environments, fuzzy logic sets rules that are based on well-understood principles and on imprecise information provided in a high-level, human-understandable format [16], and takes multiple network parameters (e.g., task size, network latency, and server computational resources) into consideration [19]. Third, fuzzy logic supports multi-criteria decision analysis to determine the suitable servers to which IoT devices should offload their tasks [20]. Therefore, a fuzzy-assisted MEO supports task offloading by deciding where to offload the incoming requests from clients. Considerable work has studied task offloading using the fuzzy logic approach. The authors of [21] proposed a cooperative fuzzy-based task offloading scheme for mobile devices, edge servers, and a cloud server in the MEC network. The authors of [22] formulated the appropriate selection of security services as a fuzzy-based multi-criteria decision-making problem. A novel fuzzy-logic-based task offloading collaboration among user devices, edge servers, and a centralized cloud server for an MEC small-cell network was studied in [23]. Nevertheless, these studies did not examine how to find the best neighboring edge server to which the user device should offload the task, particularly when the network is crowded with many IoT devices sending requests.

On the other hand, machine learning (ML) methods have been extensively integrated into heterogeneous 5G networks [

24]. Among the ML-based approaches, such as supervised learning, unsupervised learning, and reinforcement learning, the algorithm of reinforcement learning is highly appropriate for handling problems with dynamically changing systems in a wireless network [

25]. Moreover, reinforcement learning has lately become a promising technique for making offloading decisions [

26], as well as performing resource allocations [

27], in real-time. Reinforcement learning supports the MEO in the selection of suitable resources for applications by means of its useful features, such as its ability to learn without input knowledge and sequential decision-making within an up-to-date environment [

28]. Additionally, Q-Learning and state-action-reward-state-action (SARSA) are two commonly used model-free reinforcement learning techniques with different exploration policies and similar exploitation policies [

29]. A comparison of learning algorithms, such as GQ, R learning, actor-critic, Q-learning, and SARSA, on the arcade learning environment in [

30] has shown that SARSA performs the most effectively in gaining the reward in comparison to other learning algorithms. Furthermore, a number of studies [

31,

32,

33,

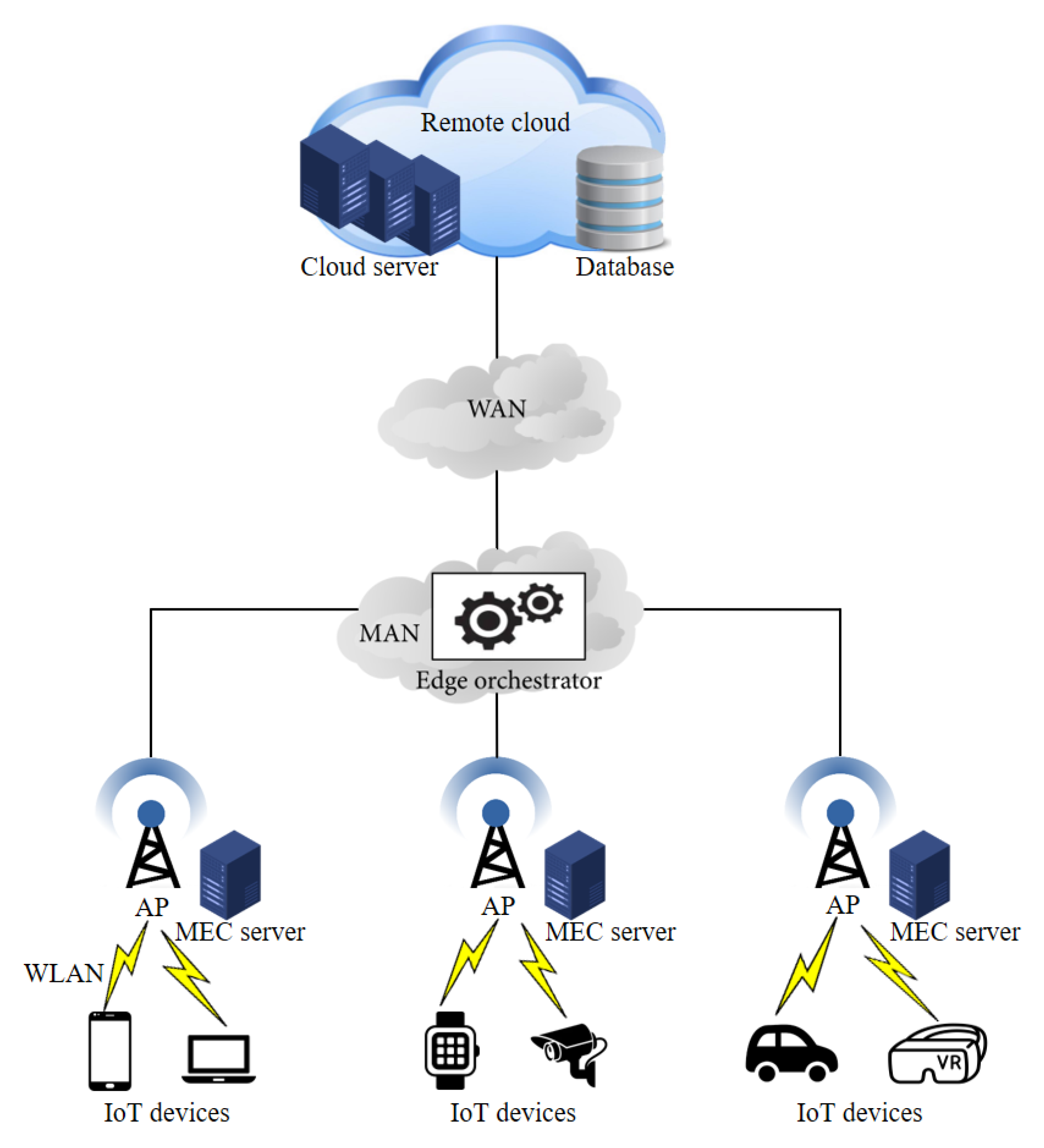

34] on task offloading using the Q-Learning and SARSA techniques have been conducted to enhance the overall performance of the system (i.e., latency and energy consumption minimization, utility and resource optimization). However, these studies did not take advantage of the benefits of using neighboring edge nodes to serve offloaded tasks when the local edge servers have run out of computational resources. In this work, we model an MEC environment as a multi-tier system corresponding to the communication networks at different capacities, such as a WAN, a metropolitan area network (MAN), and a local area network (LAN). The system comprises thousands of IoT devices that continuously send a dynamic flow of requests for offloading. The main novelty of our work is to consider the best neighboring edge servers in which computational resources are excess for task offloading. We take advantage of learning through the experiences of SARSA to find the best neighboring edge servers. As a result, the load balancing among the edge servers is balanced, and the number of failed tasks is significantly reduced when the system receives the dynamic flow of requests from IoT devices. Moreover, we consider the use of an MEO as a decision-maker for task offloading in our system. In SARSA learning, the MEO takes responsibility as an agent, which decides the action. The key contributions of this paper are summarized as follows:

We aim to improve the rate of successfully executing offloaded tasks and to minimize the processing latency by determining the server at which the task should be offloaded, such as a cloud server, local edge server, or the best neighboring edge server, via a decision-maker.

We define the MEO as a decision-maker for flexible task offloading in the system. The MEO manages the topology of the network and decides where the task will be executed. The MEO performs allocations in the MAN of the network.

A collaboration algorithm between the fuzzy logic and SARSA techniques is proposed for optimizing the offloading decisions, which we call the Fu-SARSA algorithm. Fu-SARSA includes two phases: (i) the fuzzy logic phase and (ii) the SARSA phase. The fuzzy logic phase determines whether the task should be offloaded to a cloud server, local edge server, or neighboring edge server. If the MEO chooses the neighboring edge server to execute that task, the choice of the best neighboring edge server is considered in the SARSA phase.

To model the incoming task requests, we consider four groups of applications: healthcare, AR, infotainment, and compute-intensive applications. They differ in characteristics such as task length, delay sensitivity, and resource consumption. We compare our results against four competing algorithms, considering typical performance metrics such as the task failure rate, service time, and VM utilization.

Performance evaluations demonstrate the effectiveness of Fu-SARSA, which showed better results compared to the other algorithms.
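The two-phase structure described in the contributions above can be sketched as follows. This is only a minimal illustration of the control flow, not the implementation used in this paper: the names `Target`, `fu_sarsa_decide`, `stub_fuzzy`, and `stub_sarsa` are hypothetical, and the two stub functions merely stand in for the fuzzy logic phase and the SARSA phase.

```python
from enum import Enum
from typing import Callable, Optional, Sequence, Tuple


class Target(Enum):
    LOCAL_EDGE = "local_edge"
    NEIGHBOR_EDGE = "neighbor_edge"
    CLOUD = "cloud"


def fu_sarsa_decide(
    task: dict,
    fuzzy_phase: Callable[[dict], Target],
    sarsa_phase: Callable[[dict, Sequence[str]], str],
    neighbors: Sequence[str],
) -> Tuple[Target, Optional[str]]:
    """Return the target server type and, if applicable, the chosen neighbor."""
    # Phase (i): decide between cloud, local edge, and neighboring edge.
    target = fuzzy_phase(task)
    if target is Target.NEIGHBOR_EDGE:
        # Phase (ii): consulted only when a neighboring edge server is chosen.
        return target, sarsa_phase(task, neighbors)
    return target, None


# Hypothetical stand-ins for the two phases, for illustration only.
def stub_fuzzy(task: dict) -> Target:
    return Target.NEIGHBOR_EDGE if task["local_busy"] else Target.LOCAL_EDGE


def stub_sarsa(task: dict, neighbors: Sequence[str]) -> str:
    return neighbors[0]


decision = fu_sarsa_decide(
    {"local_busy": True}, stub_fuzzy, stub_sarsa, ["edge-2", "edge-3"]
)
```

Passing the two phases in as callables keeps the dispatch logic separate from how each phase reaches its decision.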

The rest of this paper is organized as follows. Section 2 reviews the related work on task offloading. In Section 3, we introduce the model of our proposed system and give an overview of the Fu-SARSA algorithm. We describe the first and second phases of the Fu-SARSA algorithm (i.e., the fuzzy logic phase and the SARSA phase) in Section 4 and Section 5, respectively. Section 6 presents the simulation results of our proposal. Finally, we conclude the paper and discuss future research directions in Section 7.

2. Related Work

Task offloading and resource allocation are key features of heterogeneous IoT networks. Based on previous studies, offloading decisions can be classified by three main goals: minimizing the latency [35,36], minimizing the energy consumption [35,37,38,39,40], and maximizing the utility of the system [41,42,43]. The authors of [35] proposed an MEC-assisted task offloading technique to improve latency and energy consumption by applying a hybrid of the grey wolf optimizer (GWO) and particle swarm optimization (PSO). Sub-carriers, power, and bandwidth were taken into consideration for offloading to minimize energy consumption. Shu et al. [36] introduced an efficient task offloading scheme to decrease the total completion time for processing IoT applications by jointly considering the dependency of sub-tasks and the contention between edge devices. In a study by Kuang et al. [37], the energy consumption and total execution delay were optimized using partial offloading scheduling and resource allocation, while also considering the transmission power constraints in the MEC network. The offloading scheduling and task offloading decision issues were solved using flow shop scheduling theory, whereas the suboptimal power allocation with partial offloading was obtained by applying the convex optimization method. Huynh et al. [38] formulated an optimization problem as a mixed-integer nonlinear program of NP-hard complexity to minimize the total energy consumption and task processing time in the MEC network. The original problem was split into two subproblems, resource allocation and computation offloading decisions, which were solved using a particle swarm optimization approach. By considering the total energy of both file transmission and task computing, the authors of [39] introduced an optimization problem for efficient task offloading to optimize the energy consumption in the MEC-enabled heterogeneous 5G network. Incorporating the various characteristics of the 5G heterogeneous network, an energy-efficient collaborative algorithm between radio resource allocation and computing offloading was designed. Khorsand et al. [40] formulated an efficient task offloading algorithm using the Technique for Order of Preference by Similarity to Ideal Solution (TOPSIS) and best-worst method (BWM) methodologies in order to determine the important cloud scheduling decision. The authors of [41] formulated joint task offloading and load balancing as a mixed-integer nonlinear optimization problem to maximize the system utility in a vehicular edge computing (VEC) environment. The optimization problem was divided into two subproblems: the VEC server selection problem and the problem of determining the offloading ratio and computation resources. Lyu et al. [42] jointly optimized the heuristic offloading decision, communication resources, and computational resources to maximize the system utility while satisfying the QoS of the system. The study of Tran et al. [43] combined the resource allocation and task offloading decisions to maximize the system utility in the MEC network. To solve the task offloading problem, a novel heuristic algorithm was introduced to obtain a suboptimal solution in polynomial time, whereas convex and quasi-convex optimization methods were applied to handle the resource allocation problem. Offloading can also be classified into two categories: binary or full offloading [35,38,39,40,42,43] and partial offloading [36,37,41]. In full offloading, a task is either processed locally or offloaded to the servers as a whole, whereas in partial offloading, part of the task is processed locally and the other parts can be offloaded to the edge servers or a cloud server for execution.

To handle unpredictable environments with multi-criteria decision-making in heterogeneous IoT environments, fuzzy logic has been applied in recent research [21,23,44]. Hossain et al. [23] proposed a fuzzy-assisted task offloading scheme among user devices, edge servers, and a cloud server in the MEC network, using one fuzzy logic stage with five fuzzy input variables. The authors of [21] proposed a cooperative task offloading mechanism among mobile devices, edge servers, and a cloud server, using eight fuzzy input parameters to achieve better performance with respect to processing time, VM utilization, WAN delay, and WLAN delay. Basic et al. [44] proposed an edge offloading and optimal node selection algorithm, applying fuzzy handoff control and considering several crisp inputs, such as processor speed, bandwidth, and latency capabilities. To maximize the QoE of the system, An et al. [28] leveraged the advantages of both fuzzy logic and deep reinforcement learning (Q-learning) mechanisms for efficient task offloading in the vehicular fog computing environment. Moreover, deep learning methods, for example, in [28,29,45,46,47,48,49], have emerged as a potential means of efficient task offloading in modern networks. The authors of [45] formulated the computation offloading problem by jointly applying Q-learning-based reinforcement learning and a deep neural network (DNN) to obtain the optimal policy and value functions for applications in the MEC network. Tang et al. [46] proposed a full task offloading scheme for delay-sensitive applications to minimize the expected long-term cost by combining three techniques: dueling deep Q-network (DQN), long short-term memory (LSTM), and double-DQN. The authors of [47] proposed a task offloading algorithm with low-latency communications, using the deep reinforcement learning technique to optimize the throughput of the user vehicles in highly dynamic vehicular networks. Jeong et al. [48] proposed a flexible task offloading decision method and took the time-varying channel into consideration to minimize the total latency of the application among edge servers in the MEC environment. To optimize the total processing time, the problem was modeled as a Markov decision process (MDP), and to solve the MDP, they designed a model-free reinforcement learning algorithm. To block attacks from privacy attackers with prior knowledge, the authors of [49] proposed an offloading and privacy model to evaluate the energy and time consumption and privacy losses for intelligent autonomous transport systems. Taking the risk related to location privacy into consideration, a deep reinforcement learning method was applied and a privacy-oriented offloading policy was formalized to solve these problems. Alfakih et al. [29] applied the SARSA-based reinforcement learning method for task offloading and resource allocation to optimize the energy consumption and processing time of user devices in an MEC network. However, most of these studies did not consider the best neighboring edge server for task offloading in cases where all VMs in the local edge node are in use. Moreover, to ensure the QoS and QoE of the system, the task throw rate is considered in our study.

6. Performance Evaluation

For the network simulation, we used EdgeCloudSim [65], a realistic simulator that supports multi-tier edge computing. To attain a more realistic simulation environment, EdgeCloudSim was used to perform an empirical study of the WLAN and WAN using real-life properties. Furthermore, the MAN delay was obtained using a single-server queue model with Markov-modulated Poisson process (MMPP) arrivals. The numbers of VMs per edge server and per cloud server were 8 and 4, respectively. The simulation parameters for the MEC network are presented in Table 5.
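To illustrate the MAN delay model mentioned above, the following is a minimal sketch of a single-server FIFO queue fed by a two-state MMPP. It is not EdgeCloudSim's code: the function names, the two-state restriction, the exponential service times, and every rate value are assumptions chosen only for illustration.

```python
import random
from typing import List, Tuple


def mmpp_arrivals(
    rates: Tuple[float, float],
    switch_rates: Tuple[float, float],
    horizon: float,
    rng: random.Random,
) -> List[float]:
    """Arrival times of a two-state MMPP on [0, horizon)."""
    t, state, arrivals = 0.0, 0, []
    while True:
        # Competing exponentials: the next event is either an arrival
        # (rate rates[state]) or a state switch (rate switch_rates[state]).
        total = rates[state] + switch_rates[state]
        t += rng.expovariate(total)
        if t >= horizon:
            return arrivals
        if rng.random() < rates[state] / total:
            arrivals.append(t)
        else:
            state = 1 - state


def queue_delays(
    arrivals: List[float], service_rate: float, rng: random.Random
) -> List[float]:
    """Delay (waiting plus service) of each task in a FIFO single-server queue."""
    delays, server_free_at = [], 0.0
    for t in arrivals:
        start = max(t, server_free_at)  # wait if the server is still busy
        server_free_at = start + rng.expovariate(service_rate)
        delays.append(server_free_at - t)
    return delays


rng = random.Random(0)
# Hypothetical rates: a quiet state (5 tasks/s) and a bursty state (20 tasks/s).
arrivals = mmpp_arrivals((5.0, 20.0), (0.5, 0.5), horizon=10.0, rng=rng)
delays = queue_delays(arrivals, service_rate=30.0, rng=rng)
```

The modulating chain switches the arrival rate between a quiet and a bursty state, which is what distinguishes MMPP arrivals from a plain Poisson stream.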

To evaluate this proposal, we studied numerous scenarios with different numbers of IoT devices. Specifically, the minimum and maximum numbers of IoT devices were 250 and 2500, respectively, and consecutive scenarios differed by 250 devices. In a real-world generic edge computing environment, IoT devices run various types of applications. To decide on the application types, we considered the most studied edge computing use cases in recent research. We used four typical types of applications for more realistic simulations: healthcare, augmented reality (AR), infotainment, and compute-intensive applications. Specifically, first, a health application that uses a foot-mounted inertial sensor to analyze users’ walking patterns was studied in [66]. Second, the authors of [67] proposed an AR application on Google Glass, a head-mounted intelligent device worn as computing eyewear. Third, Guo et al. [68] proposed vehicular infotainment systems for driving safety, privacy protection, and security. Finally, to optimize the delay and energy consumption, compute-intensive services were proposed in [69]. The characteristics of these application types are described in Table 6. The usage percentage of an application is the portion of IoT devices generating this application. The incoming tasks were distributed over time based on the task interval indicator; for example, the MEO would receive a request for a healthcare application every 3 s. The delay sensitivity indicator measured the delay sensitivity of the task. The AR application was treated as delay-sensitive since its delay sensitivity value was 0.9, whereas the compute-intensive application was delay-tolerant, with a very low delay sensitivity value of 0.15. The IoT devices ran applications during the active period and rested during the idle period. The percentage of VM utilization on edge or cloud servers depended on the length of the task.
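As a small illustration of the scenario setup described above, the sketch below enumerates the device counts and records only the application values stated in the text. The names `device_counts`, `AppProfile`, and `APPS` are hypothetical, and fields that the text does not state are left as `None` rather than guessed.

```python
from dataclasses import dataclass
from typing import Optional

# Scenarios from 250 to 2500 IoT devices in steps of 250.
device_counts = list(range(250, 2501, 250))


@dataclass(frozen=True)
class AppProfile:
    name: str
    task_interval_s: Optional[float] = None    # time between requests
    delay_sensitivity: Optional[float] = None  # higher means more delay-sensitive


# Only the values quoted in the text are filled in.
APPS = [
    AppProfile("healthcare", task_interval_s=3.0),
    AppProfile("augmented_reality", delay_sensitivity=0.9),
    AppProfile("infotainment"),
    AppProfile("compute_intensive", delay_sensitivity=0.15),
]
```

This gives ten scenarios in total, one for each multiple of 250 devices up to 2500.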

To verify its performance, we compared our proposal with four other task-offloading approaches: utilization, online workload balancing (OWB), a fuzzy-based competitor, and a hybrid approach. The utilization approach used a local VM utilization threshold to decide whether a task should be offloaded to the remote edge server or to a centralized cloud. The OWB approach preferred to offload the task to the edge server with the lowest VM utilization in the network. The fuzzy-based competitor [70] utilized four crisp input variables (i.e., task length, network demand, delay sensitivity, and VM utilization) and one FLS process. In this approach, the task could be offloaded to one of the following three server types: a local edge server, a neighboring edge server, or a cloud server. Finally, the hybrid approach analyzed the WAN bandwidth and local VM utilization to offload the task to either the local edge server or a cloud server. In our work, we evaluated several criteria in the simulation results, such as the task failure rate, the percentage of VM utilization, the service time required to complete the applications, and the network delay. The utilization, OWB, fuzzy-based competitor, and hybrid approaches are abbreviated as util, owb, fu-comp, and hybrid, respectively, in the evaluation figures. We compared them to our proposed algorithm, Fu-SARSA, and studied their performance. The average results over all applications for the main criteria are compared in Figure 9. Moreover, the task failure rates of the approaches for the four application types are depicted in Figure 10, whereas the service times for the different application types are shown in Figure 11. In this paper, we study each criterion on the basis of the simulation results.
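The three threshold-style baselines described above can be sketched roughly as follows. This is a hedged illustration rather than the competitors' actual code: the function names, the threshold parameters, and the direction of each comparison are assumptions, since the text gives only the inputs that each approach considers.

```python
from typing import Dict


def utilization_select(local_vm_util: float, util_threshold: float) -> str:
    """Utilization baseline: compare local VM utilization against a threshold."""
    # Assumption: a heavily loaded edge pushes the task to the cloud.
    return "cloud" if local_vm_util > util_threshold else "edge"


def owb_select(edge_vm_util: Dict[str, float]) -> str:
    """OWB baseline: pick the edge server with the lowest VM utilization."""
    return min(edge_vm_util, key=edge_vm_util.get)


def hybrid_select(
    wan_bandwidth: float,
    local_vm_util: float,
    bw_threshold: float,
    util_threshold: float,
) -> str:
    """Hybrid baseline: use WAN bandwidth and local VM utilization together."""
    # Assumption: go to the cloud only if the WAN is good and the edge is busy.
    if wan_bandwidth > bw_threshold and local_vm_util > util_threshold:
        return "cloud"
    return "local_edge"


# Hypothetical utilization percentages for three edge servers.
chosen = owb_select({"edge-1": 85.0, "edge-2": 40.0, "edge-3": 62.5})
```

Each baseline looks only at the current value of one or two parameters, whereas Fu-SARSA combines the fuzzy logic phase with the SARSA phase to choose among neighboring edge servers.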

The main aim of our proposed algorithm, Fu-SARSA, was to reduce the task failure rate. The average percentage of failed tasks for all applications is presented in Figure 9a. The performances of all approaches were similar when the number of IoT devices was below 1500. The network became congested at 1750 IoT devices and reached peak congestion between 2000 and 2500 devices. Compared to the other competitors, our proposal, Fu-SARSA, showed the best efficiency when the network was overloaded. Due to the network losses on MAN resources, many tasks were not successfully offloaded in the other approaches. The utilization and hybrid approaches exhibited the worst results because these techniques only consider the threshold of the MAN bandwidth or VM utilization. In a real-world 5G environment, applications need to adapt to flexible changes in network parameters. Across the different application types, the failed task percentage of Fu-SARSA was much lower than that of any other algorithm. In particular, Fu-SARSA worked best for healthcare and AR applications, as shown in Figure 10a,b, because these applications required little CPU capacity, so the load among servers remained balanced when Fu-SARSA was operating in the network. For the heavy tasks, such as the compute-intensive and infotainment applications, both Fu-SARSA and the fuzzy-based competitor performed well in reducing the task failure rate, as depicted in Figure 10c,d, especially for the scenario with 2500 IoT devices.

Figure 9d represents the average VM utilization of edge servers. As many heavy tasks from 2500 devices were successfully offloaded using the Fu-SARSA approach, a larger amount of CPU resources was used to process the tasks.

Service time is an important criterion for evaluating the effectiveness of the system. In the heterogeneous 5G network, the service time required to complete the application should be as short as possible to satisfy the QoS of the system. Figure 9b represents the average service time for the tasks across all types of applications. The service time of a task is the sum of the processing time and the network delay. Our proposal, Fu-SARSA, provided the best results in terms of the service time in comparison with the other approaches, because it took the network conditions and the properties of the incoming task into account; hence, the best decisions, such as the choice of the best neighboring edge server, were made. When the system load was high and the number of IoT devices was more than 1250, the utilization and hybrid approaches showed poor performance. In the worst-case scenario, approximately 6 s were needed to complete the task. The OWB approach preferred to offload the task to the VM with the lowest resource capacity and the fuzzy-based competitor approach considered the network parameters for an offloading decision; therefore, they showed better results and processed the task faster. As a result, the average network delay of these algorithms was higher than that of other algorithms when the MAN and WAN resources became congested, as depicted in Figure 9c. Concerning the average service time for the different application types, Fu-SARSA worked well for heavy computational tasks, as shown in Figure 11c,d. However, more service time was needed for the healthcare and AR applications in cases with many IoT devices, for instance, from 2000 to 2500 devices. As explained above, Fu-SARSA worked best for healthcare and AR applications, since it provided a significantly lower task failure rate, especially when the network was overloaded. The network delay for these applications was longer; therefore, the average service time was higher, as illustrated in Figure 11a,b.
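The two headline metrics discussed above can be computed from per-task records as in the following sketch. The `TaskRecord` type and the `summarize` helper are hypothetical names, and averaging the service time over completed tasks only is an assumption; the text states only that service time is the sum of processing time and network delay.

```python
from dataclasses import dataclass
from typing import Sequence, Tuple


@dataclass
class TaskRecord:
    processing_time_s: float
    network_delay_s: float
    failed: bool


def service_time(task: TaskRecord) -> float:
    """Service time = processing time + network delay."""
    return task.processing_time_s + task.network_delay_s


def summarize(records: Sequence[TaskRecord]) -> Tuple[float, float]:
    """Return (failed-task percentage, average service time of completed tasks)."""
    completed = [r for r in records if not r.failed]
    failure_pct = 100.0 * (len(records) - len(completed)) / len(records)
    if not completed:
        return failure_pct, float("nan")
    avg_service = sum(service_time(r) for r in completed) / len(completed)
    return failure_pct, avg_service


# Hypothetical records: two completed tasks and two failed ones.
records = [
    TaskRecord(1.0, 0.5, False),
    TaskRecord(2.0, 1.0, False),
    TaskRecord(0.0, 0.0, True),
    TaskRecord(0.0, 0.0, True),
]
failure_pct, avg_service = summarize(records)
```

Under this assumption, a lower failure rate can coincide with a higher average service time, because tasks that would otherwise have failed are completed with long network delays and enter the average.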

As Fu-SARSA applies SARSA-based reinforcement learning, we investigated the convergence of the algorithm at some typical learning rates, abbreviated as lr in Figure 12a. The reward of the system was evaluated over successive episodes. Four learning rate values were taken into account: 0.01, 0.005, 0.001, and 0.0001. The algorithm worked best with a learning rate of 0.001, converging fastest in comparison to the other learning rates. When the learning rate was greater than 0.001, more episodes were needed to converge. When the learning rate was lower (i.e., lr = 0.0001), the performance worsened, with a longer time needed to reach stable values. On the other hand, to attain better offloading decisions with the on-policy reinforcement learning technique, the value of the epsilon variable should be considered. Since the agent chose the best action with a probability of 1 − ε, epsilon was selected as a small number, close to 0. For this reason, we investigated five epsilon values of 0.1, 0.2, 0.3, 0.4, and 0.5 in terms of the average task failure percentage when the network was overloaded. Three scenarios, involving 2000, 2250, and 2500 IoT devices, were studied to evaluate the different epsilon values, as depicted in Figure 12b. Compared to other values, the epsilon value of 0.1 was the most effective in terms of the task offloading performance and was demonstrated to be compatible with the ε-greedy policy.
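For readers unfamiliar with the roles of the learning rate and epsilon, the textbook tabular SARSA update with ε-greedy action selection can be sketched as follows. This is a generic illustration, not the Fu-SARSA implementation: the tabular Q representation, the state and action labels, the reward value, and the discount factor `gamma` are assumptions, while the learning rate of 0.001 and the epsilon of 0.1 are the best-performing values reported above.

```python
import random
from collections import defaultdict
from typing import DefaultDict, Hashable, Sequence, Tuple

QTable = DefaultDict[Tuple[Hashable, Hashable], float]


def epsilon_greedy(
    q: QTable, state: Hashable, actions: Sequence[Hashable],
    epsilon: float, rng: random.Random,
) -> Hashable:
    """Explore with probability epsilon, otherwise take the greedy action."""
    if rng.random() < epsilon:
        return rng.choice(list(actions))
    return max(actions, key=lambda a: q[(state, a)])


def sarsa_update(
    q: QTable, s: Hashable, a: Hashable, reward: float,
    s_next: Hashable, a_next: Hashable, lr: float, gamma: float,
) -> None:
    """On-policy update: Q(s,a) += lr * (r + gamma * Q(s',a') - Q(s,a))."""
    td_target = reward + gamma * q[(s_next, a_next)]
    q[(s, a)] += lr * (td_target - q[(s, a)])


rng = random.Random(0)
q: QTable = defaultdict(float)
neighbors = ["edge-2", "edge-3", "edge-4"]  # hypothetical candidate servers

a = epsilon_greedy(q, "s0", neighbors, epsilon=0.1, rng=rng)
a_next = epsilon_greedy(q, "s1", neighbors, epsilon=0.1, rng=rng)
# Hypothetical reward of 1.0 for a successfully executed task; gamma is assumed.
sarsa_update(q, "s0", a, reward=1.0, s_next="s1", a_next=a_next, lr=0.001, gamma=0.9)
```

SARSA is on-policy because the update uses the action `a_next` actually selected by the ε-greedy policy, rather than the maximum over actions as in Q-learning.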