2. Methods

This systematic review is reported in accordance with the Preferred Reporting Items for Systematic Reviews and Meta-Analyses (PRISMA) guidelines [37]; the related PRISMA 2020 checklist is provided as Supplementary Material (Supplementary File S1). The review protocol was registered in the International Prospective Register of Systematic Reviews (PROSPERO) (registration ID: 319508; date of registration: 20 March 2022). The record was updated whenever the design of the work changed.

2.1. Eligibility Criteria

All empirical studies published between 2013 and 2022, written in English, and focusing on education through XR technologies in cranial neurosurgery were eligible for inclusion in the present systematic review (Table 1).

2.2. Types of Studies

The systematic review includes original, experimental, peer-reviewed studies, regardless of publication status. Non-empirical studies presenting XR techniques (e.g., pipelines, systems, know-how) without any user studies for validation purposes are excluded.

2.3. Types of Population

No restrictions to inclusion were made based on the training level or experience of participants. This means that test subjects recruited in the included user studies can be medical students (MSs), residents, or experienced neurosurgeons.

2.4. Type of Intervention

Only articles focusing on the use of extended reality applications for cranial neurosurgical education were considered for inclusion in this review. Studies addressing the use of XR within other contexts such as patient education, informed consent, preoperative planning, or intraoperative navigation were systematically excluded. This review focused on commercial, off-the-shelf stereoscopic displays; hence, all studies introducing or employing custom devices that are not available on the market were excluded.

2.5. Types of Comparators

There were no restrictions with respect to the type of comparator. This means that control cases can include between-subject conditions, which compare members of the same population, as well as within-subject conditions, which quantify changes in UP/UX for each test subject, and longitudinal studies, which focus more on the long-term impact of the proposed application.

2.6. Types of Outcome Measures

The main outcomes of interest were measures of performance and usability assessed objectively and subjectively by users interacting with different systems.

2.7. Databases and Search Strategy

Five electronic databases covering medicine and technology were searched: PubMed, Scopus, and Web of Science for medicine, and IEEE Xplore and ACM Digital Library for engineering and technology. To ensure reproducibility of our findings, a detailed description of the steps that went into the creation of our search strategy (Supplementary File S2), as well as of the query (or queries) applied in each engine (Supplementary File S3), is provided. The search was run in February 2022, was limited to the last decade (2013–2022), and only included papers written in English.

2.8. Study Selection

The search yielded a total of 784 papers across databases. Once retrieved, the records were uploaded onto Rayyan [38], where manual deduplication was performed, leaving 352 articles. Two articles were written in languages other than English, with no translation available, and were therefore excluded. The remaining 350 records were then screened by two independent and blinded reviewers (V.G.E. and A.I.), first by title and subsequently by abstract. In the final step, the full texts of the 50 remaining articles were retrieved and separated into three groups of 16 or 17 articles, and two groups were assigned to each of three blinded and independent reviewers (A.I., V.G.E., and M.G.). This way, each group of articles was reviewed by two different reviewers. After each step, conflicts were resolved through discussion between the involved parties and consultation with a fourth reviewer (M.R.). The entire process yielded a total of 31 studies deemed eligible for definitive inclusion in this review, as illustrated in the PRISMA flow chart shown in Figure 2.
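The record counts and the reviewer allocation described above can be checked with a short sketch. It is illustrative only: the stage labels are our own shorthand, and which pair of reviewers received which group is an assumption, since the text only states that each reviewer received two groups and each group was read by two reviewers.

```python
from itertools import combinations

# Record counts reported in Section 2.8 (stage labels are our own shorthand).
flow = [
    ("retrieved", 784),
    ("after deduplication", 352),
    ("after language exclusion", 350),
    ("full-text assessment", 50),
    ("included", 31),
]


def removed_per_step(stages):
    """Return (from_stage, to_stage, n_removed) for each pair of consecutive stages."""
    return [
        (a_name, b_name, a_n - b_n)
        for (a_name, a_n), (b_name, b_n) in zip(stages, stages[1:])
    ]


def split_into_groups(n_items, n_groups):
    """Return group sizes that differ by at most one and sum to n_items."""
    base, extra = divmod(n_items, n_groups)
    return [base + 1 if i < extra else base for i in range(n_groups)]


reviewers = ["A.I.", "V.G.E.", "M.G."]
group_sizes = split_into_groups(50, len(reviewers))

# One distinct reviewer pair per group (the pairing order is an assumption).
pairs = list(combinations(reviewers, 2))
assignment = dict(enumerate(pairs))  # group index -> pair of reviewers

# Number of groups each reviewer ends up reading.
workload = {r: sum(r in pair for pair in pairs) for r in reviewers}

for step in removed_per_step(flow):
    print(step)
print(group_sizes, workload)
```

With three reviewers there are exactly three possible pairs, which is why three groups are enough for every article to receive two independent readings while each reviewer handles two groups.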

2.9. Data Extraction

Data from selected records were extracted using a predefined template including (1) general information, i.e., title, first author, journal, publication year, location, etc.; (2) population characteristics; (3) intervention characteristics, i.e., applicability and end-use of the technology, specific domain of application, kind of device used, use of haptic devices, combination with other models; (4) study characteristics and comparators, i.e., study design (cross-sectional, case-control, or cohort studies) and controls; (5) outcomes, i.e., subjective measures, surveys, objective performance metrics; and (6) results and conclusions, i.e., positive or negative results and short summary of the final study outcome.
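As a rough illustration of how such a template could be encoded, the sketch below mirrors the six categories as a Python dataclass. The class name, field names, and types are hypothetical and are not taken from the actual extraction sheet; the year check simply reuses the eligibility window from Section 2.1.

```python
from dataclasses import dataclass, field, asdict
from typing import List, Optional

# Eligibility window stated in Section 2.1.
FIRST_YEAR, LAST_YEAR = 2013, 2022


@dataclass
class ExtractionRecord:
    """One row of a hypothetical extraction sheet; all field names are illustrative."""

    # (1) General information
    title: str
    first_author: str
    journal: str
    publication_year: int
    location: str
    # (2) Population characteristics
    population: str = ""
    # (3) Intervention characteristics
    end_use: str = ""
    application_domain: str = ""
    device: str = ""
    uses_haptics: Optional[bool] = None  # None means "not reported"
    combined_models: List[str] = field(default_factory=list)
    # (4) Study characteristics and comparators
    study_design: str = ""
    controls: str = ""
    # (5) Outcomes
    subjective_measures: List[str] = field(default_factory=list)
    objective_metrics: List[str] = field(default_factory=list)
    # (6) Results and conclusions
    positive_result: Optional[bool] = None
    summary: str = ""

    def __post_init__(self) -> None:
        # Reject records published outside the eligibility window.
        if not FIRST_YEAR <= self.publication_year <= LAST_YEAR:
            raise ValueError(
                f"publication year {self.publication_year} is outside "
                f"{FIRST_YEAR}-{LAST_YEAR}"
            )


# Placeholder values only; this is not a study from the review.
example = ExtractionRecord(
    title="Placeholder title",
    first_author="Placeholder",
    journal="Placeholder journal",
    publication_year=2020,
    location="Placeholder",
    device="HMD",
    study_design="cohort",
)
row = asdict(example)  # plain dict, e.g. for writing to a spreadsheet or CSV
```

Keeping unreported items as `None` or empty values, rather than guessing, makes gaps in the source articles visible at synthesis time.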

2.10. Data Synthesis and Risk of Bias Assessment

Although all included studies revolve around the use of XR in cranial neurosurgical education, the procedures simulated in these articles are diverse, ranging from tumor resections to ventriculostomies and trigeminal rhizotomies. This variability in domain of application, together with the heterogeneity among both the devices used and the study designs, hinders the performance of a meta-analysis. Consequently, we chose to adhere to a narrative synthesis of the data to describe the body of evidence gathered around the topic of interest, report trends, and highlight the gaps within the literature. Since this systematic review focuses on the use of XR in neurosurgical education specifically, we chose the Newcastle–Ottawa Scale-Education (NOS-E) [39] as a risk of bias assessment tool tailored to assess medical research quality. According to it, a score from 0 to 6 was allocated to each study based on a specific set of items.

5. Discussion

This systematic review explored the use of XR in cranial neurosurgical education, in order to detect trends and uncover knowledge gaps within the research area. We found that the increasing volume of research on the application of XR in the field of neurosurgery does not necessarily coincide with equivalent geographical contributions. On the contrary, research on the topic seems to be mainly localized in North America (especially Canada and the USA), where most of the devices employed in present studies are manufactured. Although off-the-shelf XR systems, and especially HMDs, may be accessible anywhere in the world, access to state-of-the-art advanced simulation technologies, which were represented in the majority (74%) of the studies included in this review, is limited in developing countries [76,77]. This is concerning, as developing countries are oftentimes pointed out as an important beneficiary of XR technologies [21]. Nevertheless, we can compare the overall spread, market size, and pool of potential users between devices such as NeuroVR and ImmersiveTouch, which are built for the specific purpose of surgical simulation, and other commercial HMDs such as the HoloLens and HTC VIVE. It is clear that by adopting more easily available and widespread technologies in the implementation of XR educational applications, the digital divide as well as the economic barriers currently challenging research in developing countries can be partially overcome.

One way of compensating for these geographical limitations is to outsource technologies, know-how, and expertise in order to gain access to a diversified network of resources including, but not limited to, XR technologies, test subjects, and financial assets. Distributing such resources across multiple countries (or continents), especially in developing nations, opens up the possibility of overcoming socioeconomic barriers which, at times, prevent talented neurosurgeons and trainees from gaining access to useful tools for their own improvement, learning, and skill assessment. Additionally, disparities between different areas of the globe when it comes to education in neurosurgery can be addressed by developing XR-based applications that are readily accessible on the market, easy to use, and require low maintenance to operate [21,22].

Interestingly, we found that only eight studies (26%) employed HMDs as a type of holographic display, while 23 (74%) employed static monitors with a fixed point of view. While the latter enable better control of environmental variables and user interaction, and ease the development of XR systems by removing the need to register virtual on real imagery, the former allow participants more freedom of movement, a higher quality visual perception of the virtual environment (bigger field of view, three degrees of freedom head movements, higher pixel density, adjustment for vision limitations, etc.), and a more natural interaction with varying degrees of augmentation (i.e., the amount of virtual imagery superimposed on real imagery). Throughout the literature presented in this review, static monitors were found to be more commonly used in conjunction with haptic devices and more often associated with a more thorough UP assessment, involving a greater number of metrics, than HMDs. Although HMDs are less thoroughly investigated within this area, we argue that more studies presenting educational applications on commercial HMDs would supplement and strengthen the foundations of research presented in the existing literature involving devices such as the NeuroVR and the ImmersiveTouch. Moreover, because of their low cost, we believe that promoting the use of HMDs in particular could potentially enable departments all over the world, especially in developing countries, to carry out research on cranial neurosurgical practices by overcoming socioeconomic challenges related to the resources needed to purchase, maintain, and install more complex and advanced XR systems, such as NeuroVR. A quick comparison between advantages and disadvantages of the two technologies (fixed monitors and HMDs) is presented in Table 5.

A relevant challenge in designing user tests that yield meaningful impact in this specific field of research is that of participant recruitment; as with any other medical specialty, it is important to have a sufficiently large dataset to analyze by involving a significant number of users, especially when carrying out between-subjects tests (e.g., residents vs. experienced neurosurgeons). Only seven of the 31 (23%) studies presented in this review collected data from 50 participants or more, and the overall average across all studies amounted to fewer than 31 participants; when only considering medical students, neurosurgical residents, and interns, who are the primary user base for such educational applications, the average drops to slightly above 24, with only five studies (16%) collecting data from 50 or more participants of these categories. This observation highlights the need for recruiting larger pools of test subjects. Again, developing XR applications that are scalable and accessible on widespread commercial devices, such as HMDs, could help increase both the cohort sizes and the overall number of studies, strengthening the evidence base surrounding this topic.
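The proportions quoted in this and the preceding paragraph all share the same denominator of 31 included studies, and can be reproduced with a few lines. This is only an arithmetic check; the dictionary labels are our own shorthand, not categories defined in the review.

```python
TOTAL_STUDIES = 31

# Study counts quoted in the Discussion (labels are our own shorthand).
counts = {
    "HMD-based": 8,
    "static monitor": 23,
    ">= 50 participants": 7,
    ">= 50 trainee participants": 5,
}


def percent_of_total(count, total=TOTAL_STUDIES):
    """Share of `total` as a percentage rounded to the nearest integer."""
    return round(100 * count / total)


percentages = {label: percent_of_total(n) for label, n in counts.items()}

for label, pct in percentages.items():
    print(f"{label}: {counts[label]}/{TOTAL_STUDIES} = {pct}%")
```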

In the present review, we arbitrarily divided neurosurgical education into assessment of skills, training or practicing, and acquisition of procedural knowledge. Studies considered here were then categorized according to their main subject of focus among these three aspects, although it is worth clarifying that not all papers address a single specific aspect of education and not all of them do so in the same way. An example is the range of approaches researchers can take in skill assessment: an XR system can be developed with the specific aim of enabling self-assessment and appreciation by the users themselves, or evaluation and selection by experienced neurosurgeons. The boundaries between these arbitrary labels can thus at times be blurry; nevertheless, we can still draw conclusions from the overall distribution of papers across different groups. Specifically, studies on procedural knowledge acquisition are the least common, focus on a variety of medical practices, and cover a total of six different devices (Table 2); this in turn suggests that more research addressing this particular aspect of education through longitudinal experiments is needed, with the aim of observing learning curves in test subjects when acquiring new skills.

Moreover, UX, despite being a component of major importance in the development of educational applications, has so far been addressed in a limited and non-scalable manner. As mentioned in previous sections, fewer than half of the papers presented here address the topic, and only one of them employs standardized, validated questionnaires to assess different measures of UX which, despite not being specifically related to the kind of procedure considered (ventriculostomy), allow for an easier interpretation and comparison of the proposed results with other past and future studies. Other publications present a varied and novel set of custom questionnaire items which delve to a certain extent into procedure-related aspects, or more generally assess a heterogeneous combination of UX factors, such as usefulness and realism. Although such experimental designs can yield meaningful results that can be used to estimate the impact of the study on the research field, a comparison with related work, as well as bias avoidance and formal validation of the chosen approach, can be challenging. This, in turn, may partially undermine the quality of the proposed conclusions, especially for those papers in which UX questionnaire items were proposed by the authors without any theoretical foundation. Of these types of survey items, task difficulty and consistency with real practices are particularly relevant when developing XR-based educational applications; however, only a few studies (n = 4 in both cases) include them when evaluating the related proposed applications. Possible reasons for this are existing challenges in objectively defining task difficulty, and partial redundancies in perceived realism, which is at times addressed with more or less specificity, even multiple times in a single survey. In order to address this issue, standardized questionnaires (e.g., SUS, NASA TLX, and others) need to be consistently employed and, in future research, novel questionnaires that are more specific to the field of neurosurgery need to be developed.

Compared to UX, UP is the subject of most attention throughout the 31 papers presented in this review. User performance is a broad term that refers to relevant aspects of performed surgical simulations, such as eye–hand coordination, manual dexterity, success metrics, and kinematic coordination, to name a few. Most often, metrics of UP are assessed by experienced neurosurgeons or by test subjects themselves after the surgical simulation, which, despite any attempt at avoiding bias, can still be influenced by human factors and therefore lead to inaccuracies in assessment. Additionally, by involving human actors in the measurement and judgment of surgical performance, comparison of results across multiple studies (and even within longitudinal ones) may be biased, hence lowering the overall quality of the proposed conclusions.

A number of studies have dealt with this issue by employing metrics that are computed automatically, and oftentimes in real time, by the same system that the simulation is performed with; in particular, those focusing on more established types of procedure (i.e., tumor resection, ventriculostomy, aneurysm clipping) present a more structured and “advanced” set of metrics when compared to procedures considered in fewer studies. Possible explanations for this trend are the larger amount of research revolving around XR-based neurosurgical education within certain types of surgical practices, and also the lack of any type of optical tracking in the NeuroVR and ImmersiveTouch systems, which in turn partially enables an easier automatic assessment of UP. We can speculate that, in such cases as trigeminal rhizotomy, endoscopy, and cauterization, the adoption of detailed objective UP metrics that are assessed automatically is still underdeveloped and therefore future research could compensate for that. More generally, future research on XR applications to neurosurgical education could focus on the replacement of humans with artificial intelligence (AI) systems in the measuring of UP. When comparing different subject groups, we show that not all UP metrics seem to distinguish experienced neurosurgeons from residents and medical students. Knowing which metrics to use in order to accurately assess skills can be a challenge, which is where machine learning comes into play. In future research, such an approach could aid in assessing performance by looking at overall performance instead of specific metrics. This has already been attempted by two studies included in this review [59,70], with the aim of accurately assigning users to their corresponding expertise level.

An essential contributing factor to the quality of the learning experience is the sense of realism as experienced by users when performing surgical simulations in an XR environment. Realism in this context refers to the fidelity of imagery, interaction, and context of an XR application, and quantifies how closely resembling a real-life scenario the visual (and tactile) stimuli are in the mind of the users. The more realistic the simulation is, the higher the quality of the education is [

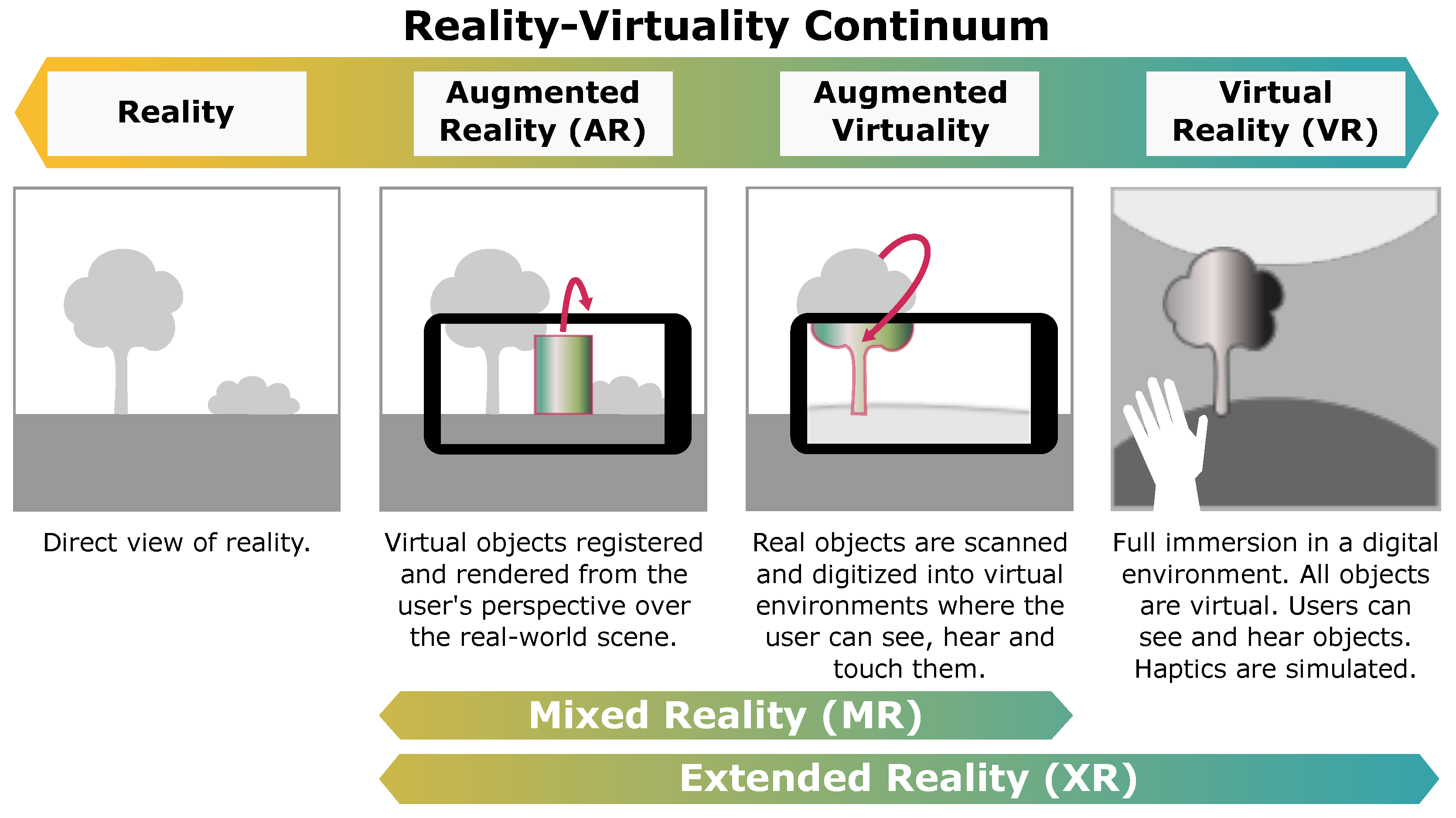

78]. This is currently addressed in present research through non-standardized questionnaires which, despite covering a broad range of facets within realism itself, have so far recorded inconclusive results on the matter. It is, in fact, unclear whether, when comparing subject groups with different levels of expertise (e.g., residents vs. experienced neurosurgeons vs. medical students), less experienced users find the simulation environment more realistic than experienced users, or vice versa. Significant impact may in this context be brought about with the use of surgical phantoms in simulated surgeries, a practice that has already been explored much in previous research—including that within the field of cranial neurosurgery—with solid conclusions stating their usefulness. By employing such phantoms in educational applications, thus implementing so-called augmented virtuality (AV), perceived realism of the experience can arguably benefit from the registration of the physical object with the virtual counterpart (also known as digital twin).

With the present systematic review, we aimed at shedding light on the plethora of recent research on the topic of extended reality applied to cranial neurosurgery. As shown in multiple studies [79,80,81], such technology can have a significant impact on the quality of education, understood as training and practicing, skill assessment, and procedural knowledge acquisition. Not only does it enable immersive, realistic simulation of surgical practices in any place and at any time, but it also breaks the boundaries of traditional teaching by expanding it with detailed 3D models of the anatomy, haptic force feedback, automatic performance measurements, and more. While extensive research has already been carried out in other surgical specialties on the topic (including spinal neurosurgery), the specific field of cranial neurosurgery is still in its infancy when it comes to applying XR technologies to educational applications. Nevertheless, studies introduced here present solid results supporting the potential usefulness of such an approach, including when compared to or complementing traditional tutoring and teaching. In this perspective, less experienced users generally appreciate it more than their more experienced counterparts, with only a single study suggesting no difference between subject groups. Assessment of learning curves among trainees such as neurosurgical residents and medical students, as well as comparisons with those of experienced surgeons, show that in surgeries with a lesser degree of variability in their operative conditions (such as ventriculostomy), the performance improvement over time tends to be greater than that in other surgeries (in particular, tumor resection).

Ventriculostomy is typically uniform in its course; thus, successful outcomes of this procedure rely heavily on anatomical knowledge and experience, unlike tumor resection surgeries, where monotony is practically absent and the skills and dexterity of the surgeon play a much bigger role. Hence, improvements in tumor resection performance are hard to observe on the modest follow-up timeline provided by most longitudinal studies. In fact, it was found that UP improvements in tumor resection were either non-significant or much more subtle [58,59] as compared to those witnessed for ventriculostomy [19,65]. Along the same lines, in both tumor resection studies [58,59], more training sessions (four and five, respectively) were required to detect even the small improvements in UP, as compared to studies reporting on ventriculostomy procedures, where a single training session was sufficient to significantly improve UP both immediately [19,65] and at a 47-day average follow-up [19]. In summary, the findings described seem to indicate that some surgical procedures may require longer training durations as compared to others, and that studies considering such procedures ought to expand their timeline in order for the desired outcomes to be correctly appreciated.

In our opinion, the true value of this innovative technology ultimately lies in the improvement of both quality of patient care and operative outcomes. Although only a small number of studies (n = 3) examined how training in XR settings carries over to operator performance on live patients [19,62,69], the results obtained thus far all proved promising, with increased overall success rates in favor of XR-trained residents. This provides insight into the effectiveness of XR simulation in improving patient outcomes, and highlights the need for taking the research on this topic one step further with a more patient-centered focus and a larger pool of both participants and patients.

5.1. Limitations

As for the limitations of this review, an exclusively user-centered approach was adopted to study the impact of this technology on the field of interest, while system-related performance metrics, constrained to the capabilities of the technologies employed, were not considered. Additionally, we only performed a narrative synthesis of the major trends in current research, while meta-analysis of the data was not attempted due to the high heterogeneity with regard to populations, comparators, and outcome metrics.

5.2. Future Perspective

Finally, we suggest that research employing diversified XR technologies (such as HMDs) to monitor mid- to long-term improvements in trainee surgical simulation performance through longitudinal within-subjects studies could help confirm conclusions presented in previous work, both in the field of cranial neurosurgery and in the broader field of education in medicine. Moreover, we propose that an accurate analysis of biomechanical factors, measured automatically by capturing body and hand movements in real time during surgical simulation, could improve the evaluation of users’ dexterity and eye–hand coordination when performing specific surgical procedures. Through a comparison of trainee skills with those of experienced neurosurgeons, appreciation of learning curves in an extended timespan would be enabled and, ultimately, access to high-level education would be open to residents and medical students all over the world. Eventually, detailed and precise computation of performance metrics via the implementation of AI in XR systems would decrease the need for expert assessment when monitoring trainee improvements, thus facilitating the acquisition of procedural knowledge related to cranial neurosurgical procedures.