1. Introduction

Human activity recognition (HAR) has received considerable attention in recent years for its applications in smart homes, fall detection for the elderly, sports training, medical rehabilitation, and misbehavior recognition [1,2]. For example, by analyzing the movements of elderly people living alone, a fall can be detected in time to seek help from family members. Fitness enthusiasts can obtain their own exercise data through step counting and exercise-status recognition, which supports scientific exercise and fitness management. Doctors can diagnose knee diseases through gait analysis, and during rehabilitation the plan can be adjusted based on the movement data of patients with lower limb diseases. HAR technologies can be divided into two categories: camera-based and sensor-based [3,4]. The camera-based method places a camera in the person's surroundings and extracts activity features from the video stream. Although this approach captures the details of human action visually, it raises privacy issues, and its performance depends on the quality of the background illumination. In contrast, the sensor-based approach is unaffected by the surrounding environment, promises higher accuracy, and does not raise privacy concerns for users. Sensor-based approaches are therefore more suitable for human activity recognition [5]. In this paper, we focus on sensor-based HAR.

In existing studies, researchers placed a smart device on the waist [6], in a pants pocket [7], or on the wrist [8], and used the device's inertial measurement unit (IMU) to collect human activity data. The IMU, which includes an accelerometer and a gyroscope, measures the acceleration and angular rate of the human body. First, the sensor data are preprocessed for noise reduction and normalization. Then, feature extraction and classification are performed to complete the human activity classification. Conventional machine learning algorithms such as support vector machines (SVM), random forests (RF), and hidden Markov models (HMM) have made significant progress on HAR. In [9], a k-nearest neighbor (KNN) model was used to classify human actions, but some similar activities could not be distinguished. The authors of [10] compared three classifiers, KNN, SVM, and RF, and found that RF achieved the highest accuracy. Wang et al. [11] used an extreme learning machine to recognize eight activities and achieved an accuracy of 70%. Duc et al. [12] designed an SVM-based HAR system that extracts 248 features to recognize six activities. Although these methods achieve good results on some datasets, extracting hand-crafted features requires human expertise, which limits the achievable accuracy [6].

Unlike traditional machine learning, deep learning (DL) can process preprocessed IMU data without hand-crafted feature extraction and is widely used in activity recognition [13,14]. In recent years, many HAR methods based on convolutional neural networks (CNN), which extract features automatically, have been proposed [15,16,17]. In [18], a deep CNN was proposed for effective HAR. Ignatov [15] proposed a CNN model for local feature extraction, combined with statistical features that capture the global properties of the sensor time series. The recognition accuracy of this method on the public WISDM dataset is 93.32%. Huang et al. [17] proposed a two-stage end-to-end CNN to address the low recognition accuracy for going upstairs and going downstairs. The model was tested on WISDM and, compared with a single-stage CNN, improved the recognition accuracy of both activities. Alemayoh et al. [6] proposed a double-channel CNN that identifies human behavior from the accelerometer and gyroscope of a smartphone strapped to the waist. The accuracy reached 97.08%, but the network may overlook temporal features. Qi et al. [19] proposed a fast and robust deep CNN to identify 12 complex human activities recorded with a smartphone, reaching an accuracy of 94.18% in their experiment.

However, activity recognition is a classification problem on time series. A CNN has difficulty extracting long-term dependencies within a time series, which limits further improvement of model performance. To address this, the long short-term memory (LSTM) network has been widely used in HAR because of its strength in extracting long-term dependencies within time series [20,21]. Mohib et al. [22] proposed a stacked LSTM network for recognizing human behaviors from smartphone data, with an accuracy of 93.13%. Zhao et al. [23] proposed a residual bidirectional LSTM (BiLSTM) for HAR, in which residual connections between the stacked cells help avoid the vanishing gradient problem. Alawneh et al. [24] compared LSTM and BiLSTM models on a sensor-based HAR dataset, and the results showed that the BiLSTM outperforms the LSTM in recognition accuracy.

Much recent work on HAR has focused on hybrid models that combine a CNN with a recurrent neural network (RNN). Nafea et al. [25] proposed a model that uses a CNN with varying kernel dimensions together with a BiLSTM to obtain features at different resolutions. It achieves high accuracy on the WISDM and UCI datasets. Nan et al. [26] proposed a multichannel CNN-LSTM network for smartphone-based HAR in elderly people. Fifty-three elderly people participated in the experiment, and the results showed that the proposed network performed better than CNN and CNN-LSTM. In [27], four hybrid deep learning models composed of CNN and RNN were studied for recognizing complex activities. The experimental results show that CNN-BiGRU performs better than several other models.

In addition, some papers have introduced self-attention to HAR. Abdel et al. [28] proposed a dual-channel network composed of a convolutional residual network, an LSTM, and an attention mechanism. The accuracy of the proposed network on WISDM reached 98.9%. Mahmud et al. [29] employed self-attention to identify human activities, and the F1 score of the model is 96%. Although many DL studies have achieved great success in HAR, their performance is limited because they do not exploit both the spatial and the temporal information of the sensor data. Some networks [6,25,29] are also relatively complex, which makes them difficult to run on devices with limited computing resources and memory. To solve these problems, we propose a new DL model that cascades a residual network with a BiLSTM. First, we use residually connected convolutions (ResNet) [30] to extract the spatial features of the sensor data. Then, we use a BiLSTM to obtain the forward and backward dependencies of the feature sequence.

The primary contributions of this work are as follows:

- (1)

A new model, combining the ResNet with BiLSTM, is proposed to capture the spatial and temporal feature of sensor data. The rationality of this model is explained from the perspective of human lower limb movement and the corresponding IMU signal.

- (2)

We introduce the BiLSTM into ResNet to extract the forward and backward dependencies of feature sequence which is useful to improve the performance of the network. We analyze the impact of model parameters on classification accuracy. The optimal network parameters are selected through experiments.

- (3)

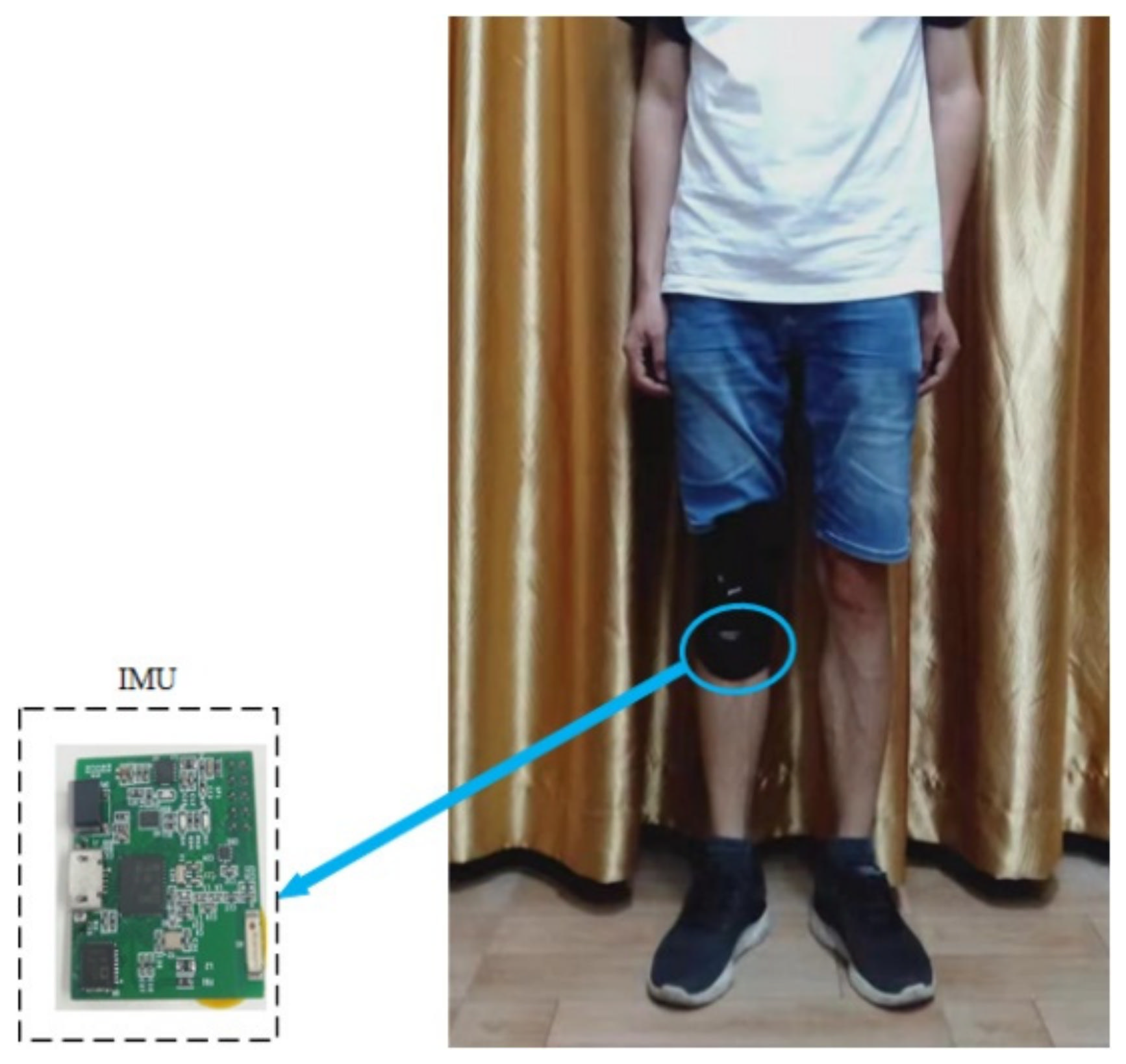

An HAR dataset, in which the human activity data are collected by a self-developed IMU board, was made. The IMU board is attached to human shank to collect the activity data of the human lower limbs. Our model performs well on this dataset. The proposed model was also tested on both the WISDM and PAMA2 HAR datasets and outperforms existing solutions.

The rest of this paper is organized as follows. In Section 2, the proposed model is described. Section 3 describes the collection of sensor data, the public HAR datasets, the experimental setup, and the experimental results and discussion. Section 4 concludes the paper.

2. Proposed Approach

With the widespread use of MEMS IMUs in smartphones and wearable systems, HAR is gradually shifting from image-based to sensor-based methods.

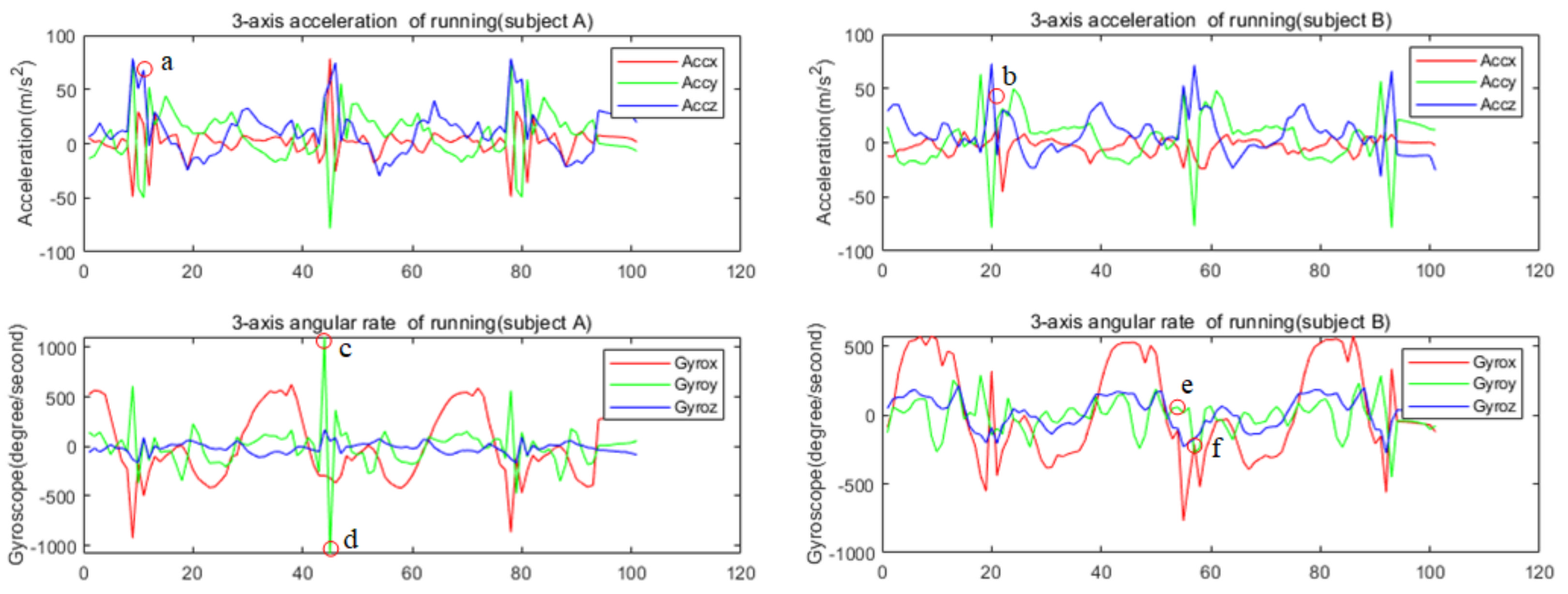

Figure 1 shows the signals of IMUs attached to the shanks of different people while running. A traditional HAR method first calculates features of the IMU signal over a period, such as the mean, variance, and maximum value of the sensor data in a sliding window, or the correlation coefficient between different signal channels. The calculated features are then either compared against preset thresholds to judge the activity category or fed into a machine learning model for training and classification. For the same activity, however, movements differ between people and even for one person at different times, so the calculated features vary considerably, and the features that traditional methods calculate for different actions tend to overlap. As shown in Figure 1, the signal amplitudes at certain points (c, d, e, f) in the running cycle clearly differ between the two people, which leads to large differences in the hand-crafted features. It is therefore hard to recognize human activity using such features, and more powerful feature extraction methods are needed.
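To make the traditional pipeline concrete, the following minimal NumPy sketch computes the hand-crafted features named above (mean, variance, maximum, and an inter-channel correlation coefficient) over sliding windows. The window length, step, channel count, and random stand-in data are illustrative assumptions, not values from this paper.

```python
import numpy as np

def sliding_windows(signal, window, step):
    """Split a (samples, channels) array into overlapping windows."""
    starts = range(0, signal.shape[0] - window + 1, step)
    return np.stack([signal[s:s + window] for s in starts])

def handcrafted_features(windows):
    """Per-window mean, variance, and maximum of every channel, plus the
    correlation coefficient between the first two channels."""
    mean = windows.mean(axis=1)
    var = windows.var(axis=1)
    peak = windows.max(axis=1)
    corr = np.array([np.corrcoef(w[:, 0], w[:, 1])[0, 1] for w in windows])
    return np.concatenate([mean, var, peak, corr[:, None]], axis=1)

# Hypothetical 6-channel IMU recording (3-axis accelerometer + 3-axis gyroscope);
# random numbers stand in for real sensor data.
rng = np.random.default_rng(0)
imu = rng.normal(size=(500, 6))

windows = sliding_windows(imu, window=100, step=50)  # assumed window and step
features = handcrafted_features(windows)
print(windows.shape, features.shape)
```

Each row of `features` would then be compared with thresholds or passed to a classifier such as KNN, SVM, or RF. Because these statistics depend directly on signal amplitude, two people performing the same activity can produce quite different rows, which is the overlap problem discussed above.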

ResNet [30] is an important improved CNN model with powerful local spatial feature extraction capability and is widely used in image recognition. It can also be used to extract the local features between different channels of the IMU signal within a small sampling segment, that is, the local spatial features of the IMU signal. However, human motion, especially the motion of the lower limbs, is non-rigid. Within a short period, the movement of the lower limbs causes some irregular changes in the IMU signal. For example, in Figure 1 there is an irregular spike at point a for subject A, whereas the signal is smooth at point b for subject B. Extracting only the spatial features of the IMU signal may therefore easily lead to false recognition. Over a longer period, the sensor signal is relatively smooth and periodic because human gait is stable and periodic. We can exploit the dependence of the sensor signal over a long time to improve the recognition accuracy. Therefore, we use LSTM to extract the long-term dependency of the IMU signal.

BiLSTM is a special LSTM that can extract both forward and backward dependencies in time sequences [23]. Based on the above analysis of the characteristics of human limb movement and the IMU signal, we propose a new model that merges ResNet and BiLSTM. The architecture of the proposed model is presented in Figure 2. As shown in the figure, the input data are first processed by the residual block to extract local spatial features. The flattened features are then fed into the BiLSTM, which is followed by a dropout layer to avoid overfitting. After a dense layer, a Softmax layer at the end of the model yields a probability distribution over the classes.
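The final stages of this pipeline (dropout, dense layer, Softmax) can be sketched in a few lines of NumPy. This is an illustrative sketch only: the feature size, number of classes, dropout rate, and random weights are hypothetical placeholders, and a random array stands in for the BiLSTM output.

```python
import numpy as np

def dropout(x, rate, rng, training=True):
    """Inverted dropout: zero a fraction `rate` of units and rescale the rest."""
    if not training or rate == 0.0:
        return x
    keep = rng.random(x.shape) >= rate
    return x * keep / (1.0 - rate)

def softmax(logits):
    """Numerically stable softmax over the last axis."""
    shifted = logits - logits.max(axis=-1, keepdims=True)
    exp = np.exp(shifted)
    return exp / exp.sum(axis=-1, keepdims=True)

rng = np.random.default_rng(0)
n_features, n_classes = 128, 6                    # hypothetical sizes
bilstm_out = rng.normal(size=(4, n_features))     # stand-in for BiLSTM output (batch of 4)
W = rng.normal(scale=0.1, size=(n_features, n_classes))  # dense-layer weights (untrained)
b = np.zeros(n_classes)

# At inference time dropout is disabled, so it passes the features through unchanged.
hidden = dropout(bilstm_out, rate=0.5, rng=rng, training=False)
probs = softmax(hidden @ W + b)                   # one probability distribution per window
predicted = probs.argmax(axis=1)                  # index of the most likely activity
```

Dropout only alters the forward pass during training, where it randomly silences units so that the dense layer cannot rely on any single feature.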

2.1. Spatial Feature Extraction Based on ResNet

Because lower limb movements differ between individuals and human movements are complex, the hand-crafted features extracted for different human activities tend to overlap. Such features are difficult to separate with thresholds or a machine learning model, and manual features that are easy to distinguish are difficult to design. Traditional methods that rely on manual features therefore recognize human activities poorly. A CNN model can automatically extract the local spatial features of the sensor signal by learning from many samples. This powerful feature extraction capability improves the accuracy of HAR, and CNNs have been widely used in sensor-based HAR. However, increasing the number of convolutional layers in the model results in accuracy saturation. ResNet was proposed to solve this problem, and the residual module can also improve performance in a shallow network [30]. As shown in Figure 2, the residual module is composed of two convolutional layers connected in sequence, with a parallel skip link. To obtain the spatial features across the different channels of the sensor signal, a two-dimensional convolutional residual network is used. The first convolutional layer has 32 kernels of size 2 × 2, and the stride of the convolution window is 2. A batch normalization (BN) layer is added to this convolutional layer to speed up training and to reduce covariate shift. The BN layer is followed by the ReLU activation function, which helps avoid vanishing gradients. The second convolutional layer has the same parameters except that its stride is 1. So that the shortcut branch matches the output dimension of the two convolutional layers, the same two-dimensional convolution is applied to the original input in the parallel skip link. Let the input of the residual block be $x$; the output $y$ can be expressed as

$$y = F(x) + H(x) \tag{1}$$

where $F(x)$ represents the mapping learned by the stacked convolutional layers and $H(x)$ represents the mapping learned by the shortcut connection. With the shortcut connection, the residual network tends to learn identity mappings once the network reaches its optimal performance, so the added layers at least do not degrade performance, while the additional parameters give the model greater fitting potential. The model parameters are described later.
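The residual block described in this subsection, whose output is the sum of a stacked-convolution branch and a convolutional shortcut branch, can be sketched with a naive NumPy convolution. Several details here are assumptions rather than facts from the paper: the input window size (128 samples by 6 channels), no padding on the stride-2 convolutions, bottom/right zero padding on the stride-1 convolution, BN and ReLU after both stacked convolutions, and per-sample statistics standing in for batch statistics.

```python
import numpy as np

def conv2d(x, kernels, stride, pad_same=False):
    """Naive 2-D convolution. x: (H, W, C_in); kernels: (kh, kw, C_in, C_out)."""
    kh, kw, _, c_out = kernels.shape
    if pad_same:  # assumed padding: bottom/right zeros so a stride-1 conv keeps H and W
        x = np.pad(x, ((0, kh - 1), (0, kw - 1), (0, 0)))
    h_out = (x.shape[0] - kh) // stride + 1
    w_out = (x.shape[1] - kw) // stride + 1
    out = np.zeros((h_out, w_out, c_out))
    for i in range(h_out):
        for j in range(w_out):
            patch = x[i * stride:i * stride + kh, j * stride:j * stride + kw, :]
            out[i, j] = np.tensordot(patch, kernels, axes=3)
    return out

def batch_norm(x, eps=1e-5):
    """Simplified BN: normalize each channel of one sample, no learned scale/shift."""
    mean = x.mean(axis=(0, 1), keepdims=True)
    var = x.var(axis=(0, 1), keepdims=True)
    return (x - mean) / np.sqrt(var + eps)

def relu(x):
    return np.maximum(x, 0.0)

def residual_block(x, k1, k2, k_skip):
    """Sum of the stacked-convolution branch and the convolutional shortcut branch."""
    f = relu(batch_norm(conv2d(x, k1, stride=2)))                 # first conv, stride 2
    f = relu(batch_norm(conv2d(f, k2, stride=1, pad_same=True)))  # second conv, stride 1
    h = conv2d(x, k_skip, stride=2)                               # shortcut matches f's shape
    return f + h

rng = np.random.default_rng(0)
x = rng.normal(size=(128, 6, 1))                  # hypothetical window: 128 samples x 6 channels
k1 = rng.normal(scale=0.1, size=(2, 2, 1, 32))    # 32 kernels of size 2 x 2
k2 = rng.normal(scale=0.1, size=(2, 2, 32, 32))
k_skip = rng.normal(scale=0.1, size=(2, 2, 1, 32))

y = residual_block(x, k1, k2, k_skip)
print(y.shape)
```

The stride-2 convolution on the shortcut is what lets the two branches be added element-wise: without it, the shortcut would keep the input's size while the stacked branch has been downsampled.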

2.2. BiLSTM Layer

As mentioned above, extracting only the local spatial features of the sensor signal is not enough for HAR. An RNN can capture temporal information from time sequences. However, Bengio et al. [31] showed that RNNs can retain information only over short time spans because of the vanishing and exploding gradient problem. LSTM is a special type of RNN whose memory cells solve the problem of long-term dependence in time series [32]. In this study, we use a variant of LSTM, the BiLSTM, to analyze the sequence of local spatial features and capture the long-term regularity of the sensor signal.

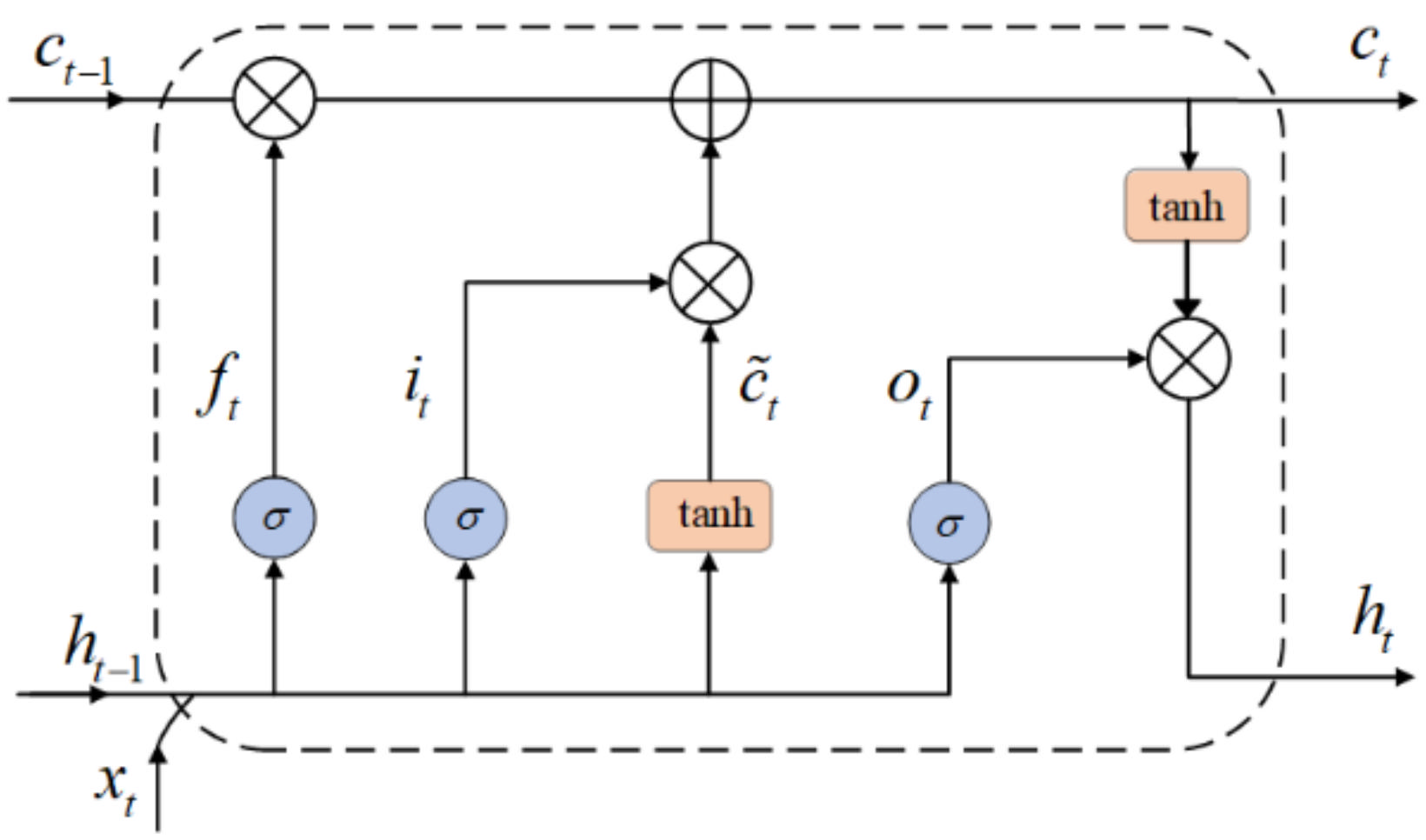

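Figure 3 shows the structure of an LSTM cell.

LSTM is implemented through three gates: the input gate, the forget gate, and the output gate. An LSTM unit can be defined and explained as follows, where $W$ and $U$ are the weight matrices and $b$ is the bias term:

$$i_t = \sigma(W_i \cdot x_t + U_i \cdot h_{t-1} + b_i) \tag{2}$$

where $i_t$ is the input gate at time $t$, $\cdot$ is the matrix multiplication, $\sigma$ is the sigmoid function, $x_t$ is the input data at time $t$, and $h_{t-1}$ is the output of the previous LSTM unit. The input gate determines which information in the previous unit needs to be updated.

$$f_t = \sigma(W_f \cdot x_t + U_f \cdot h_{t-1} + b_f) \tag{3}$$

where $f_t$ represents the forget gate, which calculates the importance of the information and forgets some old information.

$$\tilde{c}_t = \tanh(W_c \cdot x_t + U_c \cdot h_{t-1} + b_c) \tag{4}$$

$$c_t = f_t \odot c_{t-1} + i_t \odot \tilde{c}_t \tag{5}$$

The candidate state $\tilde{c}_t$ is calculated with the tanh function as depicted in Equation (4). Then, the present cell state $c_t$ is computed as expressed in Equation (5), where $\odot$ denotes element-wise multiplication.

$$o_t = \sigma(W_o \cdot x_t + U_o \cdot h_{t-1} + b_o) \tag{6}$$

$$h_t = o_t \odot \tanh(c_t) \tag{7}$$

In Equation (6), the output gate $o_t$ is calculated. In Equation (7), $h_t$ is the output of the LSTM unit.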
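The gate computations described above can be illustrated with a single LSTM step in NumPy. This is a sketch of the standard LSTM formulation with separate input weights (`W_*`), recurrent weights (`U_*`), and biases (`b_*`) per gate. The parameter names, layer sizes, and random initialization are assumptions for illustration and do not come from the paper's implementation.

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def lstm_step(x_t, h_prev, c_prev, p):
    """One LSTM time step. p maps names such as 'W_i', 'U_i', 'b_i' to arrays."""
    i_t = sigmoid(p["W_i"] @ x_t + p["U_i"] @ h_prev + p["b_i"])    # input gate
    f_t = sigmoid(p["W_f"] @ x_t + p["U_f"] @ h_prev + p["b_f"])    # forget gate
    c_hat = np.tanh(p["W_c"] @ x_t + p["U_c"] @ h_prev + p["b_c"])  # candidate state
    c_t = f_t * c_prev + i_t * c_hat                                # new cell state
    o_t = sigmoid(p["W_o"] @ x_t + p["U_o"] @ h_prev + p["b_o"])    # output gate
    h_t = o_t * np.tanh(c_t)                                        # unit output
    return h_t, c_t

def init_params(n_in, n_hidden, rng):
    """Small random weights and zero biases for the four gate computations."""
    p = {}
    for gate in "ifco":
        p[f"W_{gate}"] = rng.normal(scale=0.1, size=(n_hidden, n_in))
        p[f"U_{gate}"] = rng.normal(scale=0.1, size=(n_hidden, n_hidden))
        p[f"b_{gate}"] = np.zeros(n_hidden)
    return p

rng = np.random.default_rng(0)
params = init_params(n_in=6, n_hidden=8, rng=rng)   # hypothetical sizes
sequence = rng.normal(size=(20, 6))                 # 20 time steps of 6 features

h = np.zeros(8)
c = np.zeros(8)
for x_t in sequence:                                # state is carried across time steps
    h, c = lstm_step(x_t, h, c, params)
```

Because the cell state is updated additively (old state scaled by the forget gate plus gated candidate), information can persist across many steps, which is what gives the LSTM its long-term memory.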

A baseline LSTM predicts the current human activity based only on preceding data, so some information may be lost because the data are considered in only one direction. The BiLSTM is made up of two LSTM layers running in opposite directions. As shown in Figure 4, the output of the BiLSTM is determined jointly by the LSTM in the forward layer and the LSTM in the backward layer. In the BiLSTM, the output layer $y_t$ is expressed as follows [33]:

$$y_t = [\overrightarrow{h_t}, \overleftarrow{h_t}] \tag{8}$$

where $\overrightarrow{h_t}$ and $\overleftarrow{h_t}$ represent the forward and backward results of the LSTM units. The output $y_t$ is formed by concatenating the outputs of these two LSTM units.
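The concatenation of forward and backward LSTM outputs can be sketched as follows. The code runs one LSTM over the sequence in time order, runs a second LSTM over the reversed sequence, re-aligns the backward outputs to the original time order, and concatenates the two per time step. The stacked weight layout, layer sizes, and random weights are illustrative assumptions.

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def run_lstm(sequence, W, U, b):
    """Single-direction LSTM returning all hidden states, shape (T, n_hidden).
    W: (4*n_hidden, n_in), U: (4*n_hidden, n_hidden), b: (4*n_hidden,),
    with rows stacked in the order input, forget, candidate, output."""
    n_hidden = U.shape[1]
    h = np.zeros(n_hidden)
    c = np.zeros(n_hidden)
    outputs = []
    for x_t in sequence:
        i, f, g, o = np.split(W @ x_t + U @ h + b, 4)
        c = sigmoid(f) * c + sigmoid(i) * np.tanh(g)
        h = sigmoid(o) * np.tanh(c)
        outputs.append(h)
    return np.stack(outputs)

def bilstm(sequence, fwd, bwd):
    """Concatenate forward and backward hidden states at every time step."""
    h_fwd = run_lstm(sequence, *fwd)
    h_bwd = run_lstm(sequence[::-1], *bwd)[::-1]   # flip back to original time order
    return np.concatenate([h_fwd, h_bwd], axis=1)

def init(n_in, n_hidden, rng):
    """Small random weights and zero biases for one direction."""
    return (rng.normal(scale=0.1, size=(4 * n_hidden, n_in)),
            rng.normal(scale=0.1, size=(4 * n_hidden, n_hidden)),
            np.zeros(4 * n_hidden))

rng = np.random.default_rng(0)
seq = rng.normal(size=(20, 6))                     # hypothetical feature sequence
fwd, bwd = init(6, 8, rng), init(6, 8, rng)        # independent weights per direction

y = bilstm(seq, fwd, bwd)
print(y.shape)
```

At each time step the first half of the output summarizes everything before that step and the second half summarizes everything after it, so the classifier sees context from both directions.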