Real-Time Recognition and Detection of Bactrocera minax (Diptera: Trypetidae) Grooming Behavior Using Body Region Localization and Improved C3D Network

Abstract

1. Introduction

2. Materials and Methods

2.1. Experimental Materials

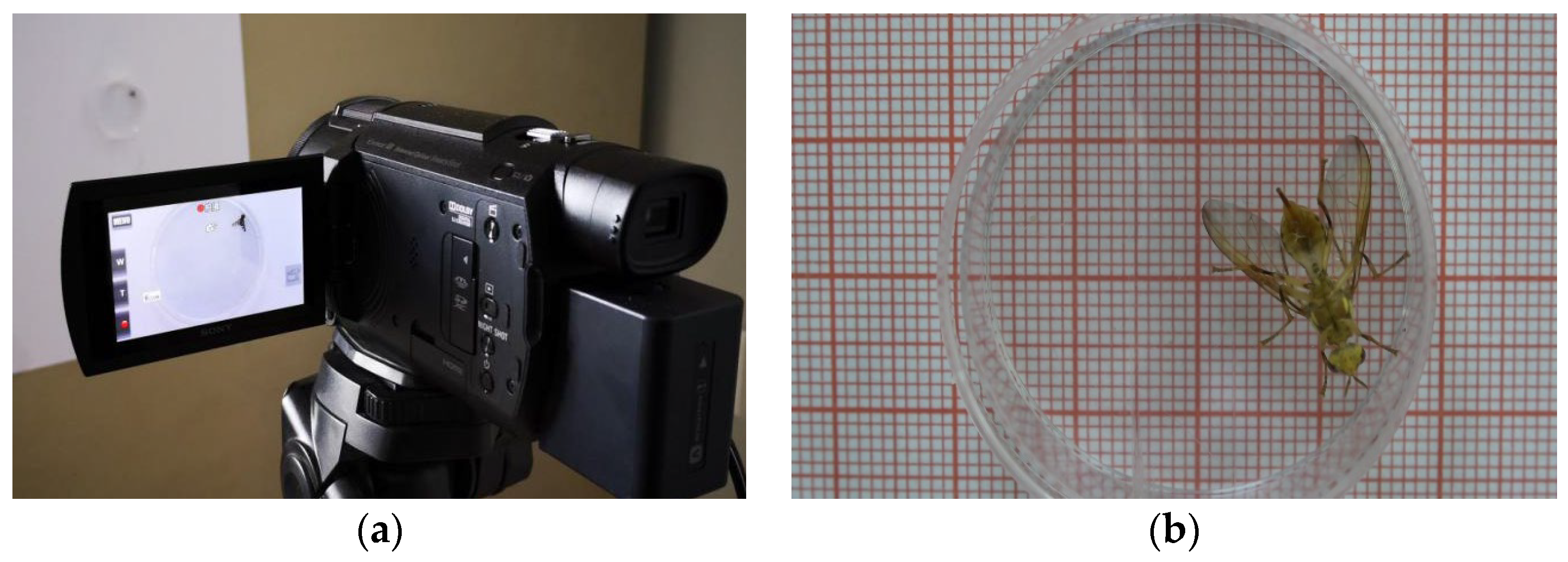

2.1.1. Experimental Equipment and Environment

2.1.2. Experimental Data Acquisition

2.2. Experimental Methods

2.2.1. General Flow Chart of the Methodology

2.2.2. Target Region Localization

2.2.3. Target ROI Acquisition

2.2.4. Training Set Generation

2.2.5. Improved C3D Network Training

2.2.6. Identifying the Behavior of Bactrocera minax in Consecutive Frames

3. Results

3.1. Input Data Pre-Processing

3.2. Three-Dimensional Neural Network Training Parameter Setting

3.3. Continuous Frame Target Region Interception

3.4. Statistical Results of Grooming Behavior Identification and Detection of Bactrocera minax

3.5. Comparison between Methods

4. Discussion

5. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest

| Type of Behavior | Quantity | Type of Behavior | Quantity |
|---|---|---|---|
| Head grooming | 269 | Hind leg grooming | 272 |
| Foreleg grooming | 266 | Wing grooming | 266 |
| Fore-mid leg grooming | 298 | Resting and walking | 266 |
| Mid-hind leg grooming | 259 | | |

| Threshold | Grooming Behavior ROI Stability Rate | Body Coverage Rate of Walking Behavior | Threshold | Grooming Behavior ROI Stability Rate | Body Coverage Rate of Walking Behavior |
|---|---|---|---|---|---|
| 10 | 95.8% | 100% | 110 | 100% | 95.8% |
| 20 | 98.0% | 100% | 120 | 99.9% | 95.2% |
| 30 | 98.6% | 100% | 130 | 100% | 92.8% |
| 40 | 97.9% | 100% | 140 | 100% | 87.4% |
| 50 | 99.5% | 100% | 150 | 100% | 87.7% |
| 60 | 99.9% | 100% | 160 | 100% | 83.2% |
| 70 | 99.9% | 100% | 170 | 100% | 83.8% |
| 80 | 100% | 99.4% | 180 | 100% | 83.2% |
| 90 | 100% | 98.6% | 190 | 100% | 73.3% |
| 100 | 100% | 98.8% | 200 | 100% | 61.9% |

| Walking Behavior Training Set Type | Accuracy |
|---|---|
| With ROI cropping | 95.8% |
| Without ROI cropping | 89.7% |

| Behavior Category | Precision | Recall | F1 Score |
|---|---|---|---|
| Stationary | 0.99393572 | 1 | 0.99695864 |
| Foreleg grooming | 0.9390681 | 0.92907801 | 0.93404635 |
| Fore-mid leg grooming | 0.93997965 | 0.97777778 | 0.95850622 |
| Head grooming | 0.99920319 | 0.86363636 | 0.92648689 |
| Hind leg grooming | 0.95076401 | 0.94594595 | 0.94834886 |
| Mid-hind leg grooming | 0.82645503 | 0.98611111 | 0.89925158 |
| Wing grooming | 0.79351032 | 0.80298507 | 0.79821958 |
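
The F1 scores in the table above are consistent with the usual harmonic mean of precision and recall, F1 = 2PR / (P + R). As a quick check, the following minimal sketch recomputes them from the tabulated precision and recall values. The helper name `f1_score` and the dictionary layout are illustrative assumptions, not part of the authors' code.

```python
def f1_score(precision: float, recall: float) -> float:
    """Harmonic mean of precision and recall; returns 0.0 when both are zero."""
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


# (precision, recall, reported F1) copied from the table above.
reported = {
    "Stationary": (0.99393572, 1.0, 0.99695864),
    "Foreleg grooming": (0.9390681, 0.92907801, 0.93404635),
    "Fore-mid leg grooming": (0.93997965, 0.97777778, 0.95850622),
    "Head grooming": (0.99920319, 0.86363636, 0.92648689),
    "Hind leg grooming": (0.95076401, 0.94594595, 0.94834886),
    "Mid-hind leg grooming": (0.82645503, 0.98611111, 0.89925158),
    "Wing grooming": (0.79351032, 0.80298507, 0.79821958),
}

for behavior, (p, r, f1_reported) in reported.items():
    f1 = f1_score(p, r)
    # The recomputed and reported values should differ only by rounding.
    print(f"{behavior:22s} recomputed={f1:.6f} reported={f1_reported:.6f}")
```

Read this way, wing grooming has both the lowest precision and the lowest recall, while head grooming combines very high precision with comparatively low recall.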

| Video Number | Number of Behaviors Recorded Using Manual Observation | Number of Behaviors Accurately Recorded Using Our Method | Degree of Difference |
|---|---|---|---|
| 1 | 76 | 67 | 11.84% |
| 2 | 36 | 31 | 13.88% |
| 3 | 47 | 43 | 8.51% |
| 4 | 67 | 60 | 10.44% |
| 5 | 81 | 73 | 9.87% |
| 6 | 39 | 35 | 10.25% |
| 7 | 99 | 88 | 11.11% |
| 8 | 104 | 88 | 15.38% |
| 9 | 62 | 55 | 11.29% |
| 10 | 67 | 60 | 10.44% |
| 11 | 116 | 95 | 18.10% |
| 12 | 48 | 41 | 14.58% |
| 13 | 82 | 72 | 12.19% |
| 14 | 56 | 48 | 14.28% |
| 15 | 95 | 86 | 9.47% |
| Average | 71.6 | 62.8 | 12.11% |
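
The "Degree of Difference" column appears to be the relative shortfall of the automatic count against the manual count, (manual - detected) / manual. That definition is inferred from the tabulated numbers rather than stated here, so the sketch below should be read as an assumption-based check. The function name `degree_of_difference` is hypothetical.

```python
from statistics import mean


def degree_of_difference(manual: int, detected: int) -> float:
    """Relative shortfall of the automatic count, in percent (assumed definition)."""
    if manual <= 0:
        raise ValueError("manual count must be positive")
    return 100.0 * (manual - detected) / manual


# (manual count, count recorded by the method) for videos 1-15, from the table above.
counts = [
    (76, 67), (36, 31), (47, 43), (67, 60), (81, 73),
    (39, 35), (99, 88), (104, 88), (62, 55), (67, 60),
    (116, 95), (48, 41), (82, 72), (56, 48), (95, 86),
]

per_video = [degree_of_difference(m, d) for m, d in counts]
average = mean(per_video)  # mean of the per-video percentages

for video, pct in enumerate(per_video, start=1):
    print(f"video {video:2d}: {pct:.2f}%")
print(f"average: {average:.2f}%")
```

Under this assumed definition the per-video values agree with the table to within 0.01 percentage points. A few tabulated entries (for example 13.88% for video 2, where 5/36 rounds to 13.89%) look truncated rather than rounded.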

| Experimental Method | Grooming Behavior Accuracy | Detection Speed (Frames/s) |
|---|---|---|
| Temporal Context | 93.43% | 10.3 |
| DeepLabCut | 92.55% | 7.8 |
| C3D (without ROI cropping) | 76.82% | 21.8 |
| C3D (with ROI cropping) | 86.11% | 21.4 |
| Our method | 93.46% | 20.3 |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2023 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Sun, Y.; Zhan, W.; Dong, T.; Guo, Y.; Liu, H.; Gui, L.; Zhang, Z. Real-Time Recognition and Detection of Bactrocera minax (Diptera: Trypetidae) Grooming Behavior Using Body Region Localization and Improved C3D Network. Sensors 2023, 23, 6442. https://doi.org/10.3390/s23146442