1. Introduction

As modern sensor technologies advance and automation continues to rise, the shift from traditional preventive maintenance (PM) to condition-based predictive maintenance (CBPM) marks a significant evolution in aviation management. Traditional PM relies on routine inspections and repairs following a predetermined schedule, often leading to unnecessary maintenance activities and associated costs. In contrast, CBPM leverages advanced technologies such as sensors and data analytics to monitor real-time equipment conditions. This proactive approach allows organizations to make informed decisions by predicting potential failures or maintenance needs, optimizing schedules, minimizing downtime, and reducing overall costs [1,2]. The transition to CBPM represents a more efficient and proactive strategy, enhancing the reliability and performance of critical assets while maximizing operational efficiency.

Central to the CBPM methodology is the prediction of the remaining useful life (RUL), a challenging task that has attracted considerable interest from the research community in recent years. RUL prediction is difficult because of the complex nature of the underlying systems and the dynamic operational conditions they endure. For example, turbofan engines exhibit non-linear degradation patterns over time, and the relationship between sensor readings and the health state of the engine is often intricate. The degradation of turbofan engines can also be highly sensitive to operational profiles: small variations in operating conditions can lead to different rates of degradation. Developing a model that is robust to these variations and accurately captures the sensitivity to different profiles is a challenging aspect of RUL prediction. The objective of RUL prediction is to accurately estimate the time span between the current moment and the projected end of a system’s operational life cycle. This estimation serves as a crucial input for subsequent maintenance scheduling, enabling proactive and timely maintenance actions.

Conventional methods for estimating RUL encompass two main approaches: physics-based methods and statistics-based methods. Physics-based methods employ mathematical tools such as differential equations to model the degradation process of a system, offering insights into the physical mechanisms governing its deterioration [3,4,5,6,7,8,9,10]. On the other hand, statistics-based methods rely on probabilistic models, such as the Bayesian hidden Markov model (HMM), to approximate the underlying degradation process [11,12,13,14,15,16]. Nevertheless, these conventional methods either depend on prior knowledge of system degradation mechanics or rest on probabilistic assumptions about the underlying statistical degradation processes. The inherent complexity of real-world degradation processes poses a significant challenge in accurately modeling them. Consequently, the application of these methods in real-world CBPM systems may lead to suboptimal prediction performance and less effective decisions in maintenance scheduling.

To overcome the limitations of traditional physics-based and statistics-based methods, researchers are redirecting their focus towards the adoption of artificial intelligence and machine learning (AI/ML) techniques for predicting the RUL. This strategic shift has been prompted by the demonstrated successes of AI/ML applications in diverse domains, including but not limited to cybersecurity [17,18], engineering [19,20], and geology [21,22]. The growing prevalence of data and the continuous advancements in computational power further underscore the potential of AI/ML in increasing the accuracy of RUL prediction. This trend offers a promising avenue for overcoming the inherent limitations associated with traditional methodologies. Following this trend, our study introduces the STAR framework, an AI/ML-driven approach that combines a two-stage attention mechanism and integrates a hierarchical encoder–decoder structure for predicting the RUL.

The rest of the paper is structured as follows: Section 2 reviews related work in AI/ML-based RUL prediction. Section 3 describes the STAR model architecture. Section 4 covers the experimental details, presents the results, and analyzes them. Finally, Section 5 concludes the paper.

2. Related Literature

Deep learning (DL) is the most widely employed AI/ML approach, demonstrating remarkable success across diverse disciplines and delivering substantial performance enhancements when compared with traditional methods [23]. Recurrent neural networks (RNNs) and convolutional neural networks (CNNs) are widely employed DL methodologies for RUL prediction, leveraging their abilities in capturing temporal patterns and spatial features in multidimensional time series data. Peng et al. [24] proposed a method that combines a one-dimensional CNN with fully convolutional layers (1-FCCNN) and a long short-term memory (LSTM) network to predict the RUL for turbofan engines. Remadna et al. [25] developed a hybrid approach for RUL estimation combining CNNs and bidirectional LSTM (BiLSTM) networks to extract spatial and temporal features sequentially. Hong et al. [26] and Nair et al. [27] developed LSTM models, achieving heightened accuracy while addressing challenges of dimensionality and interpretability using dimensionality reduction and Shapley additive explanation (SHAP) techniques [28]. Rosa et al. [29] introduced a generic fault prognosis framework employing LSTM-based autoencoder feature learning methods, emphasizing the semi-supervised extrapolation of reconstruction errors to address imbalanced data in an industrial context. Ji et al. [30] proposed a hybrid model for accurate airplane engine failure prediction, integrating principal component analysis (PCA) for feature extraction and BiLSTM for learning the relationship between the sensor data and RUL. Peng et al. [31] introduced a dual-channel LSTM neural network model for predicting the RUL of machinery, addressing challenges related to noise impact in complex operations and diverse abnormal environments. Their proposed method adaptively selects and processes time features, incorporates first-order time feature information extraction using LSTM, and employs a momentum-smoothing module to enhance the accuracy of RUL predictions. Similarly, Zhao et al. [32] designed a double-channel hybrid prediction model for efficient RUL prediction in industrial engineering, combining a CNN and BiLSTM network to address drawbacks in spatial and temporal feature extraction. Wang et al. [33] addressed challenges in RUL prediction by introducing a novel fusion model, B-LSTM, combining a broad learning system (BLS) for feature extraction and LSTM for processing time series information. Yu et al. [34] presented a sensor-based data-driven scheme for system RUL estimation, incorporating a bidirectional RNN-based autoencoder and a similarity-based curve matching technique. Their approach involves converting high-dimensional multi-sensor readings into a one-dimensional health index (HI) through unsupervised training, allowing for effective early-stage RUL estimation by comparing the test HI curve with pre-built degradation patterns.

While RNNs and CNNs have demonstrated effectiveness in RUL estimation, they come with certain limitations. RNNs, due to their sequential nature, may suffer from slow training and prediction speeds, particularly when dealing with long sequences of time series data. The vanishing gradient problem in RNNs can impede their ability to capture dependencies across extended time intervals, potentially leading to the inadequate modeling of degradation patterns. Additionally, RNNs may struggle with incorporating contextual information from distant time steps, limiting their effectiveness in capturing complex temporal relationships. On the other hand, CNNs, designed for spatial feature extraction, may overlook temporal dependencies crucial in RUL prediction tasks, potentially leading to suboptimal performance.

The Transformer architecture [35], initially introduced for natural language processing tasks, represents a paradigm shift in sequence modeling. Unlike traditional models like RNNs and CNNs, Transformers rely on a self-attention mechanism, enabling the model to weigh the importance of different elements in a sequence dynamically. This attention mechanism allows Transformers to capture long-range dependencies efficiently, overcoming the vanishing gradient problem associated with RNNs. Moreover, Transformers support the parallelization of computation, making them inherently more scalable than sequential models like RNNs. The self-attention mechanism in Transformers also addresses the challenges faced by CNNs in capturing temporal dependencies in sequential data, as it does not rely on fixed receptive fields.

Within the realm of RUL prediction, numerous studies have introduced customized Transformer architectures tailored specifically for RUL estimation. By utilizing a Transformer encoder as the central component, Mo et al. [36] presented a method for predicting the RUL in industrial equipment and systems. The proposed model tackles constraints found in RNNs and CNNs, providing adaptability to capture both short- and long-term dependencies, facilitate parallel computation, and integrate local contexts through the inclusion of a gated convolutional unit. Introducing the dynamic length Transformer (DLformer), Ren et al. [37] proposed an adaptive sequence representation approach, acknowledging that individual time series may require different sequence lengths for accurate prediction. The DLformer achieves significant gains in inference speed, up to 90%, while maintaining a minimal degradation of less than 5% in model accuracy across multiple datasets. Zhang et al. [38] introduced an enhanced Transformer network tailored for multi-sensor signals to improve the decision-making process for preventive maintenance in industrial systems. Addressing the limitations of existing Transformer models, the proposed model incorporates the Trend Augmentation Module (TAM) and Time-Feature Attention Module (TFAM) into the traditional Transformer architecture, demonstrating superior performance in various numerical experiments.

Li et al. [39] introduced an approach to enhance the RUL prediction accuracy using a novel encoder–decoder architecture with Gated Recurrent Units (GRUs) and a dual attention mechanism. Integrating domain knowledge into the attention mechanism, their proposed method simultaneously emphasizes critical sensor data through knowledge attention and extracts essential features across multiple time steps using time attention. Peng et al. [40] developed a multiscale temporal convolutional Transformer (MTCT) for RUL prediction. The unique features of MTCT include a convolutional self-attention mechanism incorporating dilated causal convolution for improved global and local modeling and a temporal convolutional network attention module for enhanced local representation learning. Xiang et al. [41] introduced the Bayesian Gated-Transformer (BGT) model, a novel approach for RUL prediction with a focus on reliability and quantified uncertainty. Rooted in the Transformer architecture and incorporating a gated mechanism, the BGT model effectively quantifies both epistemic and aleatory uncertainties and provides risk-aware RUL predictions. Most recently, Fan et al. [42] introduced the BiLSTM-DAE-Transformer framework for RUL prediction, utilizing the Transformer’s encoder as the framework’s backbone and integrating it with a self-supervised denoising autoencoder that employs BiLSTM for enhanced feature extraction.

Although Transformer-based methods for RUL prediction outperform traditional RNNs and CNNs, they are not without their limitations. Firstly, in the application of the self-attention mechanism to time series sensor readings for RUL prediction, these methods emphasize the weights of distinct time steps while overlooking the significance of individual sensors within the data stream, an aspect critical for comprehensive prediction performance. Secondly, in the utilization of temporal self-attention, these methods treat sensor readings within a single time step as tokens. However, a single time step reading usually carries little semantic meaning. Consequently, attention over individual time steps alone proves inadequate for capturing the nuanced local semantic information requisite for RUL prediction. Inspired by recent advances in multivariate time series prediction, particularly those aimed at improving accuracy through the incorporation of both temporal and variable attention [43,44,45], we introduce the STAR framework to tackle these challenges. The proposed framework integrates a two-stage attention mechanism, sequentially capturing temporal and sensor-specific attentions, and incorporates a hierarchical encoder–decoder structure designed to encapsulate temporal information across various time scales. As demonstrated later in our numerical experiments, the proposed STAR framework consistently outperforms existing RUL prediction models. This superior performance has significant implications for maintenance scheduling, enabling more effective and timely proactive maintenance actions within the context of CBPM.

The studies conducted in [46,47] share some similarities with our current research. Notably, they also integrate sensor-wise attention into the prediction process. However, these approaches treat temporal attention and sensor-wise variable attention as independent entities. In other words, they generate two copies of the input sensor readings: one for computing temporal attention and the other for calculating sensor-wise variable attention. Subsequently, a fusion layer is employed to combine these two forms of attention. In contrast to their methodology, our approach utilizes a two-stage attention mechanism that captures temporal attention and sensor-wise variable attention sequentially, with the output of the first stage feeding the second. This two-stage attention strategy is designed to provide a nuanced understanding of both temporal dynamics and individual sensor contributions for more comprehensive prediction capabilities.

The main contributions of this work are as follows:

We introduce a two-stage attention mechanism that sequentially captures both temporal attention and sensor-wise variable attention, distinguishing our approach from existing methods that handle these attentions separately and independently. This marks the first successful application of such a mechanism to turbofan engine RUL prediction. Our experimental results substantiate the effectiveness of our approach, showcasing superior prediction accuracy compared to existing methods.

We propose a hierarchical encoder–decoder framework to capture temporal information across various time scales. While multiscale prediction has shown superior performance in numerous computer vision and time series classification tasks [45,48], our work marks the first successful implementation of multiscale prediction in RUL prediction. Additionally, our encoder–decoder architecture diverges from existing Transformer-based RUL prediction models, which typically incorporate only a Transformer encoder in their prediction modeling. Our numerical experiments reveal that the inclusion of the encoder–decoder architecture leads to notable improvements in prediction performance.

3. Methodology

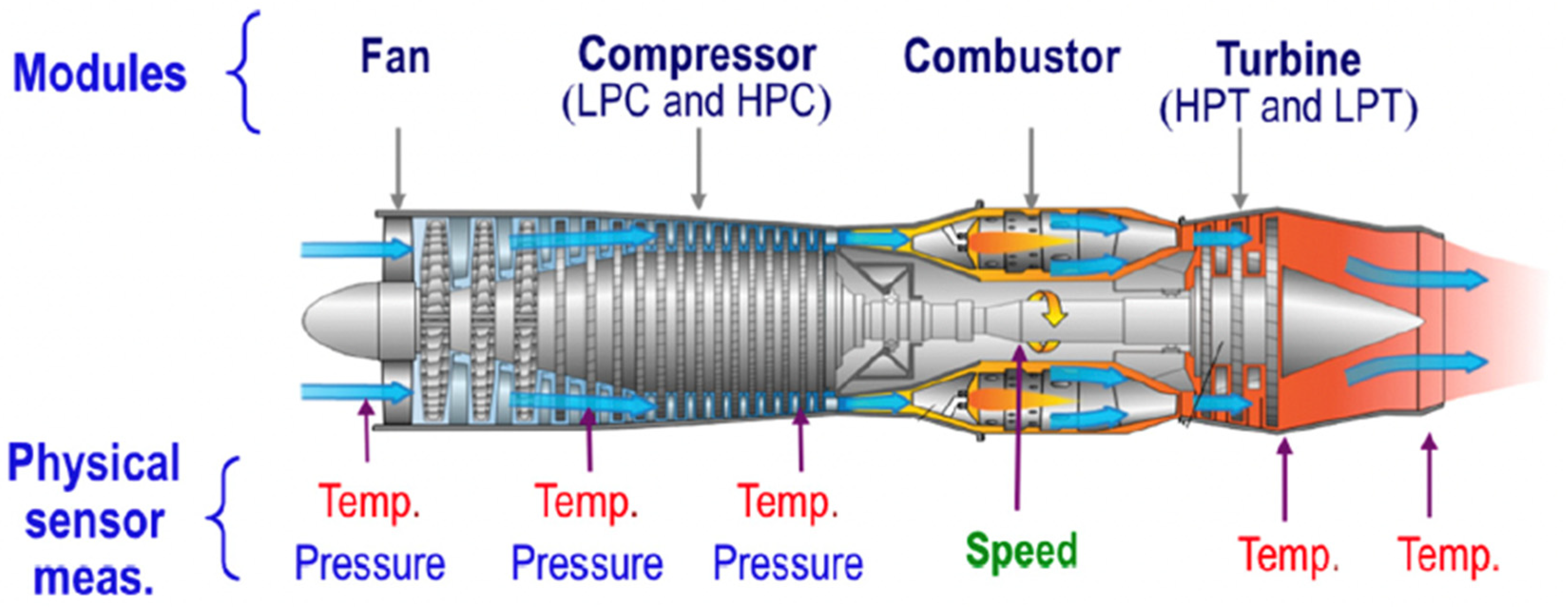

Our study is dedicated to predicting the RUL of a turbofan engine based on historical multivariate time series sensor readings denoted as $X \in \mathbb{R}^{T \times D}$, where $T$ represents the number of time steps in the input data, and $D$ is the number of onboard sensors. The proposed STAR framework, illustrated in Figure 1, comprises five key components:

Dimension-wise segmentation and embedding (Section 3.1): each sensor’s univariate time series is segmented into $N$ disjoint patches with length $L$. To embed individual patches, a combination of an affine transformation and positional embedding is utilized [35].

Encoder (Section 3.2): adapting the traditional Transformer encoder [35], we introduce a modification that integrates a two-stage attention mechanism to capture both temporal and sensor-wise attentions.

Patch merging (Section 3.3): merging neighboring patches for each sensor in the temporal domain facilitates the creation of a coarser patch segmentation, enabling the capture of multiscale temporal information.

Decoder (Section 3.4): refining the conventional Transformer decoder [35], our modification introduces a two-stage attention mechanism aimed at capturing both temporal and sensor-wise attentions.

Prediction layer (Section 3.5): the final RUL prediction is achieved by concatenating information across different time scales through the use of a multi-layer perceptron (MLP).

Figure 1. Overall structure of the proposed STAR framework.


As depicted in Figure 1, our proposed framework takes multivariate time series sensor readings as the input. The model then processes the patched input obtained through the dimension-wise segmentation of these multivariate time series. This patched input undergoes positional embedding and enters a two-stage attention-based encoder for feature encoding. It is important to note that the output of this first encoder represents the patched input at the finest time scale. This output then proceeds to a two-stage attention-based decoder block for prediction at the finest scale, while a patch merging block generates a coarser representation in the time scale.

The subsequent subsections elaborate on each of the above five components.

3.1. Dimension-Wise Segmentation and Embedding

The original development of the Transformer architecture focused on natural language processing tasks like neural machine translation [35,49]. Consequently, when applied to time series prediction tasks, the conventional approach treats each time step in the time series data as a token, akin to the treatment of words in natural language processing tasks. However, the information contained in a single time step is often limited, potentially resulting in suboptimal performance for time series prediction tasks. Inspired by the recent success of using Transformers in computer vision tasks, where input image data are segmented into small patches, researchers in time series prediction have adopted a similar segmentation procedure, leading to enhanced performance in time series prediction tasks [43,44,45]. In line with this approach, we employ a similar segmentation procedure in our work for RUL prediction.

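The dimension-wise segmentation splits each sensor time series reading into $N$ smaller disjoint patches with length $L$, as shown in the top left of Figure 1. Each segmentation is denoted as $x_{i,d} \in \mathbb{R}^{L}$ ($1 \le i \le N$, $1 \le d \le D$, with $i$ indexing patches and $d$ indexing the $D$ sensors) and embedded with an affine transformation and positional encoding:

$$h_{i,d} = E\,x_{i,d} + E^{\mathrm{pos}}_{i,d},$$

where $E \in \mathbb{R}^{d_{model} \times L}$ is a learnable matrix for embedding and $E^{\mathrm{pos}}_{i,d} \in \mathbb{R}^{d_{model}}$ denotes the learnable positional encoding for each patch. As a result, the information of the original patch $x_{i,d}$ is embedded into a $d_{model}$-dimensional space.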
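The segmentation and embedding step can be illustrated with a minimal NumPy sketch. It is not the authors' implementation: the function name `segment_and_embed`, the array sizes, and the random initialization that stands in for the learnable embedding matrix and positional encoding are all assumptions made for illustration, and the sketch assumes the number of time steps is a multiple of the patch length.

```python
import numpy as np

def segment_and_embed(x, patch_len, d_model, rng):
    """Split each sensor's series into disjoint patches and embed them.

    x: array of shape (T, D) with T time steps and D sensors.
    Returns the raw patches (N, D, patch_len) and embeddings (N, D, d_model).
    """
    T, D = x.shape
    assert T % patch_len == 0, "this sketch assumes T is a multiple of patch_len"
    N = T // patch_len

    # (T, D) -> (N, patch_len, D) -> (N, D, patch_len): one patch per (i, d).
    patches = x.reshape(N, patch_len, D).transpose(0, 2, 1)

    # Random stand-ins for the learnable parameters (assumption).
    E = rng.normal(scale=0.02, size=(d_model, patch_len))   # embedding matrix
    E_pos = rng.normal(scale=0.02, size=(N, D, d_model))    # positional term

    # Linear map of every patch plus its positional encoding.
    h = np.einsum("ml,ndl->ndm", E, patches) + E_pos
    return patches, h

rng = np.random.default_rng(0)
x = rng.normal(size=(32, 14))        # 32 time steps, 14 sensors (illustrative)
patches, h = segment_and_embed(x, patch_len=4, d_model=16, rng=rng)
print(patches.shape, h.shape)        # (8, 14, 4) (8, 14, 16)
```

Each of the 8 patches per sensor becomes one token, so later attention layers operate on short patch sequences rather than on individual time steps.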

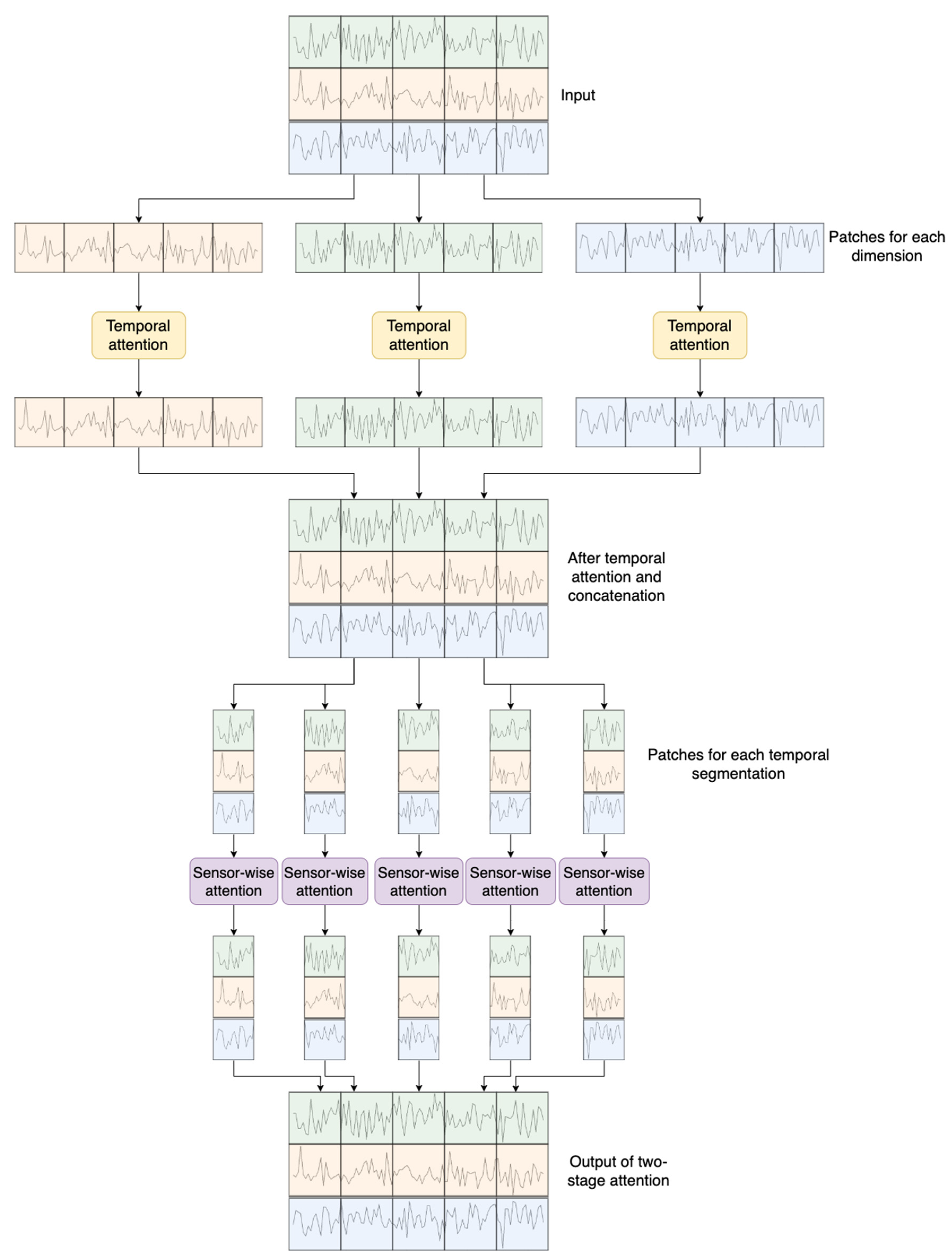

3.2. Two-Stage Attention-Based Encoder

Denote $H \in \mathbb{R}^{N \times D \times d_{model}}$ as the embedded inputs, which act as the input for the encoder, as depicted at the top of Figure 2.

The input is initially partitioned into $D$ distinct fragments, one per sensor. Each fragment $H_{:,d} \in \mathbb{R}^{N \times d_{model}}$ is then fed into the temporal attention calculation block, closely resembling the conventional multi-head self-attention (MSA) [35], as depicted in Figure 3a. This block is responsible for capturing temporal dependencies within each sensor’s readings.

MSA is a critical mechanism in the Transformer architecture, particularly beneficial for tasks involving sequential data processing. In the original Transformer formulation, the self-attention mechanism is enhanced by introducing multiple attention heads. This extension allows the model to attend to different positions in the input sequence simultaneously and learn diverse relationships between elements.

The standard self-attention mechanism computes attention scores using the following equation for a single attention head:

$$\mathrm{Attention}(Q, K, V) = \mathrm{softmax}\!\left(\frac{QK^{\top}}{\sqrt{d_k}}\right)V$$

Here, $Q$, $K$, and $V$ denote the query, key, and value matrices, respectively. The softmax operation normalizes the attention scores, and $\sqrt{d_k}$, with $d_k$ the dimension of the keys, is a scaling factor to control the magnitude of the scores. The resulting attention weights are then multiplied by the value matrix to obtain the weighted sum.
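As a concrete illustration of single-head scaled dot-product attention, the following NumPy sketch computes the attention weights and the weighted sum for randomly generated query, key, and value matrices. The function names and the matrix sizes are illustrative assumptions, not part of the STAR implementation.

```python
import numpy as np

def softmax(z, axis=-1):
    """Numerically stable softmax along `axis`."""
    z = z - z.max(axis=axis, keepdims=True)
    e = np.exp(z)
    return e / e.sum(axis=axis, keepdims=True)

def scaled_dot_product_attention(Q, K, V):
    """Single-head attention: softmax(Q K^T / sqrt(d_k)) V."""
    d_k = K.shape[-1]
    scores = Q @ np.swapaxes(K, -1, -2) / np.sqrt(d_k)
    weights = softmax(scores, axis=-1)   # each row sums to 1
    return weights @ V, weights

rng = np.random.default_rng(0)
n_tokens, d_k = 8, 16                    # illustrative sizes
Q = rng.normal(size=(n_tokens, d_k))
K = rng.normal(size=(n_tokens, d_k))
V = rng.normal(size=(n_tokens, d_k))
out, weights = scaled_dot_product_attention(Q, K, V)
print(out.shape, weights.shape)          # (8, 16) (8, 8)
```

Every row of `weights` is a probability distribution over the input tokens, so each output row is a convex combination of the rows of `V`.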

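In the multi-head attention mechanism, the process is parallelized across $h$ attention heads, each with distinct learned linear projections of the input $Q$, $K$, and $V$ matrices. The final output is obtained by concatenating the outputs from all attention heads with a linear transformation:

$$\mathrm{MSA}(Q, K, V) = \mathrm{Concat}(\mathrm{head}_1, \dots, \mathrm{head}_h)\,W^{O}, \qquad \mathrm{head}_j = \mathrm{Attention}(QW^{Q}_j, KW^{K}_j, VW^{V}_j)$$

Here, $W^{O}$ is a learned linear transformation matrix applied to the concatenated outputs, and $W^{Q}_j$, $W^{K}_j$, $W^{V}_j$ are the learned projections of head $j$. Then, the temporal attention block can be expressed as follows:

$$\hat{Z}^{time}_{:,d} = \mathrm{LayerNorm}\big(H_{:,d} + \mathrm{MSA}(H_{:,d}, H_{:,d}, H_{:,d})\big)$$

$$Z^{time}_{:,d} = \mathrm{LayerNorm}\big(\hat{Z}^{time}_{:,d} + \mathrm{FFN}(\hat{Z}^{time}_{:,d})\big)$$

where $H_{:,d}$ is the embedded patch sequence of sensor $d$, $\mathrm{LayerNorm}$ denotes layer normalization, and $\mathrm{FFN}$ represents a feedforward network. These procedures are widely utilized in network architectures based on Transformers [35,44,46]. Following the temporal attention block, $Z^{time}$ is subsequently fed into the sensor-wise attention block, depicted in Figure 3b, to capture sensor-wise attention. The computation within the sensor-wise attention block is analogous to that of the temporal attention block, utilizing the input $Z^{time}_{i,:}$, the representations of all sensors at patch position $i$. This mechanism allows the model to attend to important sensors and capture relevant features in the context of the temporal sequence.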
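The sequential nature of the two stages can be sketched as follows. This is a simplified NumPy illustration under several assumptions: a single attention head replaces multi-head attention, layer normalization has no learnable scale or shift, the feedforward network is a two-layer ReLU network, and all weights are random stand-ins. The names `attention_block`, `init_params`, and `two_stage_attention` are hypothetical.

```python
import numpy as np

def softmax(z):
    z = z - z.max(axis=-1, keepdims=True)
    e = np.exp(z)
    return e / e.sum(axis=-1, keepdims=True)

def layer_norm(x, eps=1e-5):
    # Normalization over the feature axis; learnable scale/shift omitted.
    mu = x.mean(axis=-1, keepdims=True)
    var = x.var(axis=-1, keepdims=True)
    return (x - mu) / np.sqrt(var + eps)

def attention_block(x, p):
    """Self-attention over axis -2, then a feedforward net, each followed
    by a residual connection and layer normalization (single head)."""
    Q, K, V = x @ p["Wq"], x @ p["Wk"], x @ p["Wv"]
    scores = Q @ np.swapaxes(K, -1, -2) / np.sqrt(Q.shape[-1])
    a = layer_norm(x + softmax(scores) @ V)
    ffn = np.maximum(a @ p["W1"], 0.0) @ p["W2"]       # ReLU feedforward
    return layer_norm(a + ffn)

def init_params(rng, d_model, d_ff):
    # Random stand-ins for learned weights (assumption).
    shapes = {"Wq": (d_model, d_model), "Wk": (d_model, d_model),
              "Wv": (d_model, d_model), "W1": (d_model, d_ff),
              "W2": (d_ff, d_model)}
    return {k: rng.normal(scale=0.1, size=s) for k, s in shapes.items()}

def two_stage_attention(h, p_time, p_sensor):
    """h: (N, D, d_model) with N patches and D sensors."""
    # Stage 1: temporal attention across the N patches of each sensor.
    z = attention_block(np.swapaxes(h, 0, 1), p_time)       # (D, N, d_model)
    # Stage 2: sensor-wise attention across the D sensors of each patch.
    return attention_block(np.swapaxes(z, 0, 1), p_sensor)  # (N, D, d_model)

rng = np.random.default_rng(0)
N, D, d_model = 8, 14, 16                                   # illustrative sizes
h = rng.normal(size=(N, D, d_model))
out = two_stage_attention(h, init_params(rng, d_model, 32),
                          init_params(rng, d_model, 32))
print(out.shape)                                            # (8, 14, 16)
```

The only difference between the two stages in this sketch is which axis plays the role of the sequence: swapping the patch and sensor axes lets the same block attend over time first and over sensors second, with the second stage consuming the output of the first.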

3.3. Patch Merging

As illustrated in Figure 1, the output of the two-stage attention-based encoder at scale $s$, denoted as $Z^{enc,s}$, undergoes processing in the patch merging block to generate coarser patches, facilitating multiscale predictions. Specifically, in the patch merging block (see Figure 4), adjacent patches for each sensor are combined in the time domain, creating a coarser patch segmentation. These resultant coarser patches serve as the input for the subsequent layer/scale ($s+1$) in the encoder. This hierarchical structure enables the model to capture temporal information across different time scales, enhancing its predictive capabilities.

The concatenated coarser patch undergoes an affine transformation to maintain the dimensionality at $d_{model}$. The procedure is summarized by the equation below:

$$\hat{Z}^{enc,s+1}_{i,d} = M\,\big[\,Z^{enc,s}_{2i-1,d} \,\Vert\, Z^{enc,s}_{2i,d}\,\big]$$

Here, $[\,\cdot \,\Vert\, \cdot\,]$ denotes concatenation of two adjacent patches and $M \in \mathbb{R}^{d_{model} \times 2d_{model}}$ represents a learnable matrix employed for dimensionality preservation during the patch merging process.
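A minimal NumPy sketch of patch merging is given below. It assumes that patches are merged in non-overlapping pairs and that the number of patches is even; the function name `merge_patches`, the array sizes, and the random matrix standing in for the learnable merging matrix are illustrative assumptions.

```python
import numpy as np

def merge_patches(z, M):
    """Merge neighboring patch pairs along time and project back to d_model.

    z: (N, D, d_model) encoder output at the current scale (N even here).
    M: (d_model, 2 * d_model) stand-in for the learnable merging matrix.
    Returns an array of shape (N // 2, D, d_model).
    """
    N, D, d_model = z.shape
    assert N % 2 == 0, "this sketch assumes an even number of patches"
    # Concatenate patch 2i and patch 2i + 1 (0-based) on the feature axis.
    pairs = np.concatenate([z[0::2], z[1::2]], axis=-1)   # (N//2, D, 2*d_model)
    # Linear projection restores the feature dimension to d_model.
    return pairs @ M.T

rng = np.random.default_rng(0)
z = rng.normal(size=(8, 14, 16))          # 8 patches, 14 sensors, d_model = 16
M = rng.normal(scale=0.1, size=(16, 32))
coarse = merge_patches(z, M)
print(coarse.shape)                       # (4, 14, 16)
```

Applying the function repeatedly halves the number of patches at every scale while keeping the feature dimension fixed, which is what allows the same attention blocks to be reused at each level of the hierarchy.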

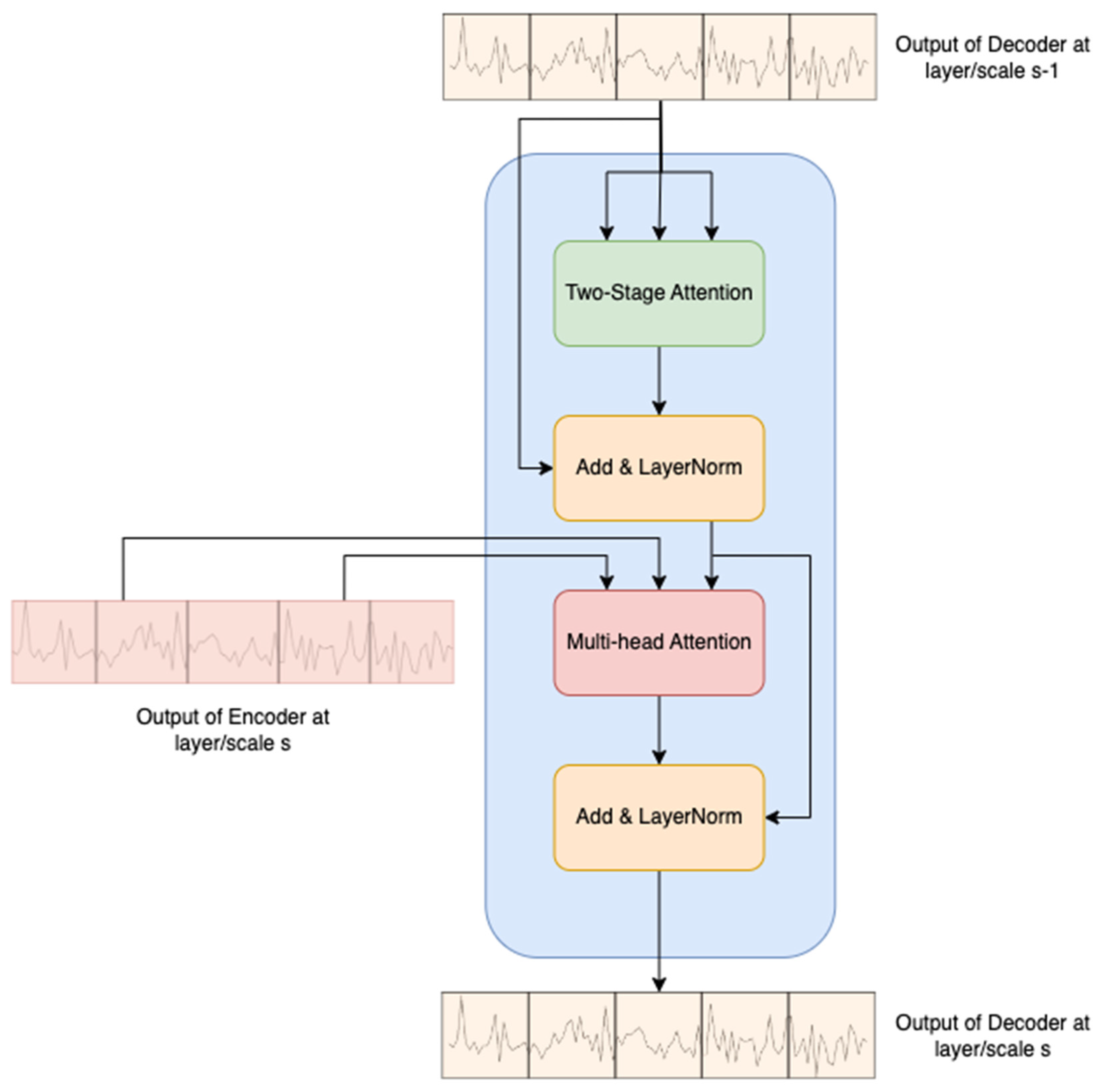

3.4. Two-Stage Attention-Based Decoder

At layer/scale $s$, the inputs of the two-stage attention-based decoder are $Z^{enc,s}$ and $Z^{dec,s-1}$, where $Z^{enc,s}$ is the output of the encoder at the current scale and $Z^{dec,s-1}$ is the output of the decoder from the previous layer/scale $s-1$. The decoder architecture closely resembles that of the original Transformer network, with the modification of replacing the masked multi-head self-attention (MMSA) with a two-stage attention mechanism, as illustrated in Figure 5.

In the decoder process, the output of the decoder at the previous layer $Z^{dec,s-1}$ undergoes the two-stage attention block, followed by a residual connection and layer normalization. Subsequently, the result provides the queries for the MSA block, while the output of the encoder in the current layer $Z^{enc,s}$ serves as the keys and values. This modification enhances the decoder’s ability to capture both temporal and sensor-wise attention, contributing to improved RUL prediction accuracy. It is important to note that the input of the decoder at the initial layer/scale comprises a fixed positional encoding defined by trigonometric functions, as introduced by Vaswani et al. [35].
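Two ingredients of the decoder can be sketched in NumPy: the fixed sinusoidal positional encoding of Vaswani et al. that seeds the first decoder layer, and the attention step in which decoder states supply the queries and the encoder output supplies the keys and values. The two-stage self-attention, residual connections, and layer normalization are left out for brevity, attention is single-head, and the shapes, weight initialization, and function names are assumptions for illustration.

```python
import numpy as np

def softmax(z):
    z = z - z.max(axis=-1, keepdims=True)
    e = np.exp(z)
    return e / e.sum(axis=-1, keepdims=True)

def sinusoidal_encoding(n_pos, d_model):
    """Fixed positional encoding: sin on even features, cos on odd ones."""
    assert d_model % 2 == 0, "this sketch assumes an even d_model"
    pos = np.arange(n_pos)[:, None]
    two_i = np.arange(0, d_model, 2)[None, :]
    angles = pos / np.power(10000.0, two_i / d_model)
    pe = np.zeros((n_pos, d_model))
    pe[:, 0::2] = np.sin(angles)
    pe[:, 1::2] = np.cos(angles)
    return pe

def cross_attention(dec, enc, Wq, Wk, Wv):
    """Queries from the decoder state, keys and values from the encoder."""
    Q, K, V = dec @ Wq, enc @ Wk, enc @ Wv
    scores = Q @ np.swapaxes(K, -1, -2) / np.sqrt(Q.shape[-1])
    return softmax(scores) @ V

rng = np.random.default_rng(0)
D, n_enc, n_dec, d_model = 14, 8, 4, 16          # illustrative sizes
enc_out = rng.normal(size=(D, n_enc, d_model))   # stand-in encoder output
# Initial decoder input: the same fixed encoding repeated for every sensor.
dec_in = np.broadcast_to(sinusoidal_encoding(n_dec, d_model),
                         (D, n_dec, d_model))
Wq, Wk, Wv = (rng.normal(scale=0.1, size=(d_model, d_model)) for _ in range(3))
dec_out = cross_attention(dec_in, enc_out, Wq, Wk, Wv)
print(dec_out.shape)                             # (14, 4, 16)
```

Because the initial decoder input is a fixed encoding rather than a learned or data-dependent tensor, all information about the engine reaches the decoder through the keys and values taken from the encoder.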

3.5. Prediction Layer

As depicted in the right-hand part of Figure 1, the outputs of the decoders at different layers/scales are fed into separate MLPs to further embed the information, enhancing the model’s ability to capture intricate patterns for RUL prediction. The outputs from these individual MLP blocks are then concatenated and passed into another MLP to make the final prediction. This hierarchical embedding and fusion process enables the model to capture both local and global dependencies, contributing to improved accuracy in predicting the RUL of turbofan engines.
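The fusion of multiscale decoder outputs can be sketched as follows. The sketch assumes that each decoder output is flattened before entering its scale-specific MLP, that every MLP has one hidden ReLU layer, and that three scales are used; these choices, the layer sizes, the random weights, and the names `init_mlp`, `mlp`, and `predict_rul` are illustrative assumptions rather than details given in the text.

```python
import numpy as np

def init_mlp(rng, d_in, d_hidden, d_out):
    # Random stand-ins for learned weights; biases start at zero.
    return (rng.normal(scale=0.1, size=(d_in, d_hidden)), np.zeros(d_hidden),
            rng.normal(scale=0.1, size=(d_hidden, d_out)), np.zeros(d_out))

def mlp(x, W1, b1, W2, b2):
    """Two-layer perceptron with a ReLU hidden layer."""
    return np.maximum(x @ W1 + b1, 0.0) @ W2 + b2

def predict_rul(decoder_outputs, scale_mlps, fusion_mlp):
    # One MLP per scale embeds the (flattened) decoder output of that scale.
    feats = [mlp(z.reshape(-1), *p)
             for z, p in zip(decoder_outputs, scale_mlps)]
    # Concatenate the per-scale features and map them to a single value.
    fused = np.concatenate(feats)
    return float(mlp(fused, *fusion_mlp)[0])

rng = np.random.default_rng(0)
D, d_model, d_feat = 14, 16, 8                   # illustrative sizes
# Stand-in decoder outputs at three scales with 8, 4, and 2 patches.
decoder_outputs = [rng.normal(size=(n, D, d_model)) for n in (8, 4, 2)]
scale_mlps = [init_mlp(rng, z.size, 32, d_feat) for z in decoder_outputs]
fusion_mlp = init_mlp(rng, d_feat * len(decoder_outputs), 32, 1)
rul = predict_rul(decoder_outputs, scale_mlps, fusion_mlp)
print(rul)                                       # a single scalar RUL estimate
```

With untrained random weights the printed value is meaningless; the sketch only shows how features from fine and coarse scales are combined into one scalar prediction.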

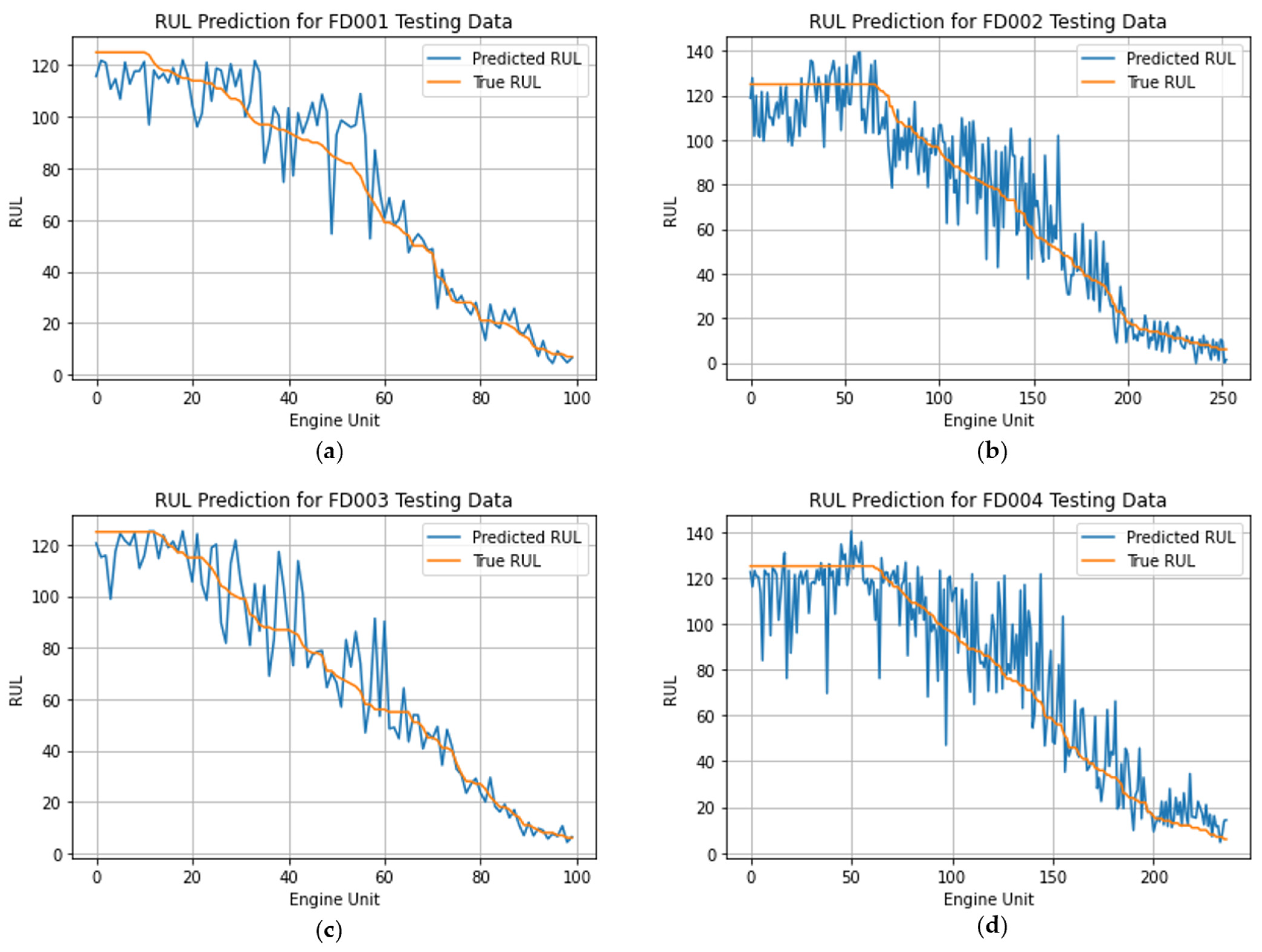

5. Conclusions

This paper presents an innovative STAR framework designed for predicting the RUL of turbofan engines. Leveraging a two-stage attention mechanism, our proposed model adeptly captures both temporal and sensor-wise variable attention. By utilizing a hierarchical encoder–decoder structure to integrate multiscale information, the model produces hierarchical predictions, demonstrating superior performance in predicting the RUL. Using the CMAPSS dataset, we illustrate the importance of incorporating both temporal attention and sensor-wise variable attention for RUL prediction through a series of numerical experiments. Notably, the STAR framework demonstrates clear improvements in both the RMSE and Score metrics for the challenging FD002 dataset, outperforming the state-of-the-art models by 12% and 15% in terms of the RMSE and Score, respectively. Additionally, for the FD001 dataset, our STAR framework outperforms existing algorithms by 4% and 10% in terms of the two metrics. The results highlight the promising potential of the STAR framework in achieving accurate and reliable RUL predictions, thereby contributing to advancements in prognostics for the health management of aircraft engines.

Despite the superior performance demonstrated by the proposed method in predicting the RUL, it is important to note that the model inherently lacks the ability to explain why it identifies a piece of equipment as approaching failure. Therefore, a promising area for future research involves incorporating Explainable Artificial Intelligence (XAI) methods, such as SHAP and LIME, to unravel the prediction logic of the model. This enhancement has the potential to increase the applicability of the prediction model in practical scenarios, particularly within the context of CBPM. Additionally, the current STAR framework employs an attention mechanism whose computational complexity is quadratic in the length of the input sequence. While this design proves effective for typical input lengths, it might become impractical when dealing with long input time series, which could contain more information than shorter sequences. Hence, an intriguing avenue for future research involves the development of methodologies specifically tailored for RUL prediction using longer multivariate time series as inputs.