1. Introduction

Supersonic impinging jets (SIJs) play a pivotal role in numerous engineering applications, ranging from industrial processes such as cold-spray manufacturing [1] to aerospace systems such as vertical take-off and landing thrusters [2]. Understanding the complex flow phenomena associated with SIJs can therefore guide the optimization of these applications.

Despite decades of extensive experimental research in this field [2–8], high-speed cameras and particle image velocimetry still cannot reach the megahertz sampling frequencies required to resolve the highly transient shock structures of the SIJ configuration, which arise from interactions with the compressible jet shear layer [9–12]. Consequently, computational fluid dynamics (CFD) simulations have been used to augment experimental SIJ research for more than 20 years, demonstrating that, for SIJ configurations, steady mean results from the Reynolds-averaged Navier–Stokes (RANS) methodology can agree well overall with physical measurements [5,13]. Li et al. [1] even extended their validated mean-flow results with a Lagrangian particle-motion module to study gas–particle interactions during the steady cold-spray process. Recognizing the highly transient character of SIJs, Dauptain and co-workers [14] applied the inherently unsteady large-eddy simulation (LES) formulation to the SIJ configuration of Henderson et al. [6], establishing that, for an explicit third-order solver on an unstructured mesh, more than 20M cells are required to ensure grid convergence. The advantages of LES over RANS for SIJ simulations were further highlighted by Chan et al. [15], even though both methods were found to capture the mean flow features. While useful in supplementing experiments, CFD simulations have historically been unable to deliver high-fidelity results at a tractable cost. However, advances in highly scalable high-performance computing (HPC) resources, together with the development of accurate and computationally efficient CFD solvers, now make comprehensive numerical studies of the SIJ configuration feasible, as shown by the recent computationally intensive yet insightful studies in References [16,17], the latter of which also includes heat transfer effects.

CFD solvers for transient compressible flows such as the SIJ configuration fall into three general categories: commercial, in-house, and open-source. Typically well validated and widely used for engineering problems, commercial CFD solvers such as ANSYS Fluent [18,19] and Simcenter STAR-CCM+ [20,21] offer direct troubleshooting support, user-friendly graphical user interfaces, and solver runtime stability. They have, however, two main drawbacks. First, most commercial CFD software suites do not expose their underlying source code, so users cannot directly modify the algorithms or inspect the mathematics and logic and must instead rely on the documentation and the word of the developers. Second, the cost of even a basic solver license can be prohibitive, and licenses are increasingly locked to a fixed CPU core count, which is restrictive for high-fidelity simulations.

The traditional route to circumvent the limitations of commercial CFD solvers, especially in the academic community, is to develop in-house, purpose-built CFD codes [1,5,10,14,17,22]. Setting aside the time and effort such developments require, which are usually daunting and highly specialized, the monetary cost of in-house CFD solvers is often minimal. Nonetheless, they are typically exclusive to their developers, inconsistently documented and supported, and validated for only specific configurations. Furthermore, legacy solvers that are not regularly maintained may face scalability issues on constantly evolving HPC systems.

Compared to commercial and in-house CFD solvers, open-source CFD solvers have historically been the least common, mostly due to a lack of validation studies and existing literature [23]. However, since the introduction of the OpenFOAM (Open Field Operation And Manipulation) framework in 2004 [24], with its built-in libraries and support for user modifications [25–27], open-source solvers have gained much wider acceptance. To date, among the various open-source CFD solvers available, the OpenFOAM framework [28] remains a popular choice for compressible, turbulent SIJ simulations [15,16,25], owing to its flexibility, extensibility, and robustness [29–32].

For the above reasons, this paper considers large-eddy simulations of SIJs using the rhoCentralFoam solver within the OpenFOAM framework. In particular, the focus is on SIJs with a separation distance (i.e., the distance between the jet nozzle and the wall) of less than two nozzle diameters, a scenario that remains underrepresented in the existing computational literature apart from studies such as References [1,15]. Such close separation distances are of particular interest because the stability (or lack thereof) of the standoff shock, located just above the wall, is very sensitive to both the nozzle pressure ratio and the separation distance [33]. This sensitivity applies not only to the magnitude of the unsteadiness but also to its modes, such as the typical jittering of the standoff shock and the more abrupt, larger jumps in its location [34].

The remainder of this paper is structured as follows. The next section describes the methodology, including the mesh topology, initial and boundary conditions, CFD schemes and models, and HPC configurations. The results are then discussed in terms of grid convergence, effects of mesh resolution, simulation accuracy, and solver scalability on the given HPC architectures. Conclusions are drawn at the end.

4. Conclusions

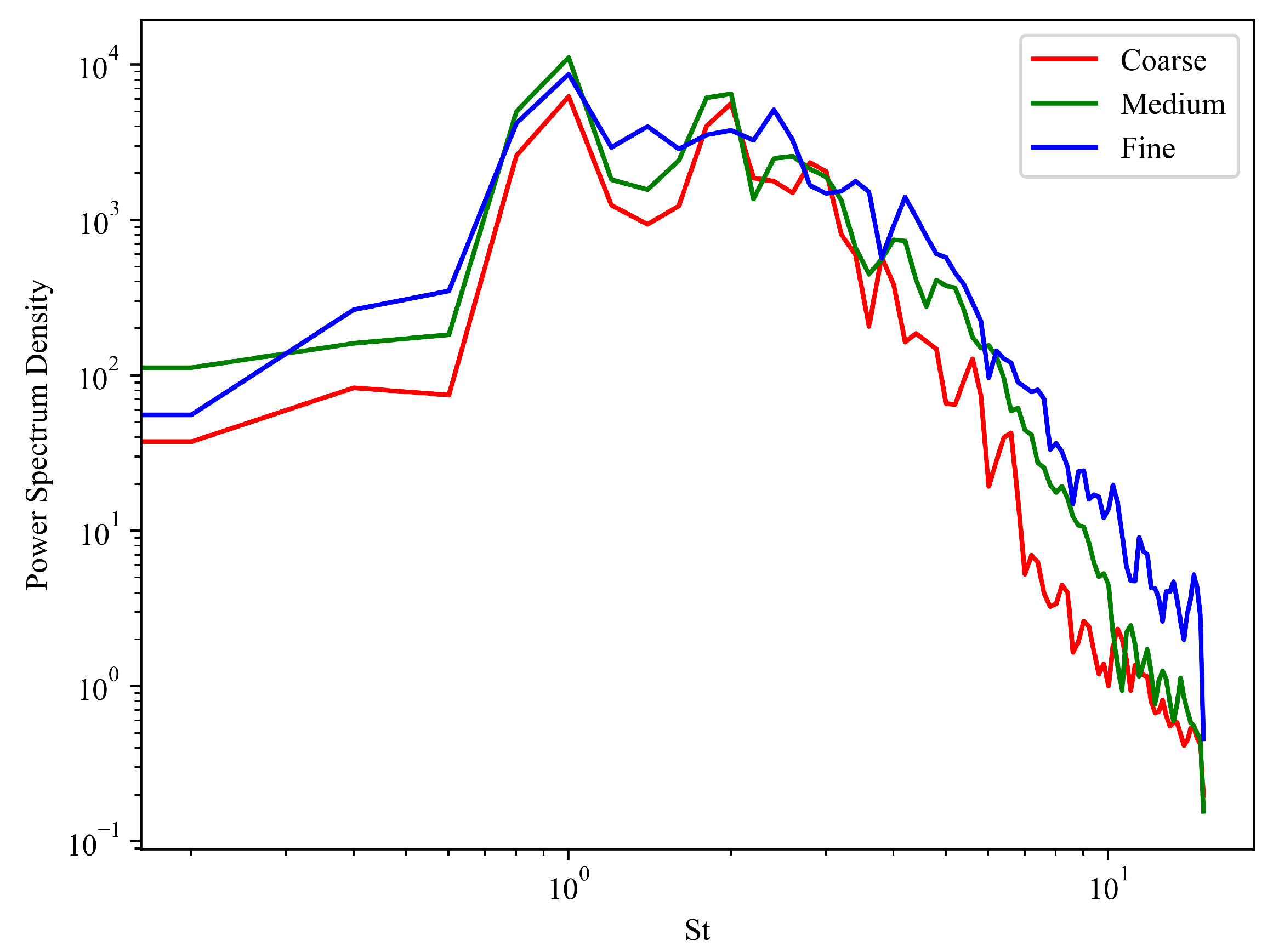

In this work, the initial flow development and the subsequent quasi-steady structures and behavior of an underexpanded supersonic jet impinging directly on a flat plate at a low separation distance of less than two nozzle diameters were simulated with large-eddy simulation using rhoCentralFoam, a density-based compressible solver of the OpenFOAM framework. Three levels of mesh resolution were evaluated to confirm grid convergence, and the results were validated against experimental schlieren results. Overall, all three meshes were found to be sufficient for bulk flow properties, such as the time-averaged centerline Mach number profile and the standoff shock location. However, differences in the resolved vortices, especially those along the jet shear layer, were observed among the three meshes and shown to affect the transient flow structures. These discrepancies in turn affect the shear-layer/tail-shock interactions, which have cascading effects on time-varying behaviors such as standoff shock oscillations. To quantify the differences in the unsteady results of the three cases, a power spectral density analysis was conducted on the pressure signal at a probe point within the shear layer. As expected, the energy dissipation was found to be highest for the coarse mesh and to decrease with increasing mesh resolution.
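The probe-based spectral comparison can be illustrated with a short sketch. The conclusions do not state which estimator was used; the code below assumes a Welch-style averaged periodogram (50%-overlapping, Hann-windowed segments) written directly with NumPy. The function name `welch_psd`, the segment length, and the 1 MHz sampling rate in the usage are illustrative assumptions, not settings from this study.

```python
import numpy as np


def welch_psd(p, fs, nperseg=1000):
    """One-sided power spectral density of a probe pressure signal.

    Sketch of Welch's method (assumed here, not taken from the study):
    the signal is split into 50%-overlapping segments, each segment is
    Hann-windowed, and the segment periodograms are averaged.

    p       : 1-D sequence of pressure samples (Pa)
    fs      : sampling frequency (Hz)
    nperseg : samples per segment (even); resolution is df = fs / nperseg
    Returns (frequencies in Hz, PSD in Pa^2/Hz).
    """
    if nperseg % 2:
        raise ValueError("nperseg must be even")
    p = np.asarray(p, dtype=float)
    p = p - p.mean()                       # remove mean pressure (DC offset)
    step = nperseg // 2                    # 50% overlap between segments
    starts = range(0, len(p) - nperseg + 1, step)
    if len(starts) == 0:
        raise ValueError("signal is shorter than one segment")

    window = np.hanning(nperseg)
    scale = fs * np.sum(window ** 2)       # density scaling -> Pa^2/Hz
    psd = np.zeros(nperseg // 2 + 1)
    for s in starts:
        spectrum = np.fft.rfft(p[s:s + nperseg] * window)
        psd += np.abs(spectrum) ** 2 / scale
    psd /= len(starts)                     # average over segments
    psd[1:-1] *= 2.0                       # fold in negative frequencies (not DC/Nyquist)
    freqs = np.fft.rfftfreq(nperseg, d=1.0 / fs)
    return freqs, psd


if __name__ == "__main__":
    # Synthetic stand-in for a shear-layer probe: 20 kHz tone plus noise.
    fs = 1.0e6                             # assumed 1 MHz sampling rate
    t = np.arange(20000) / fs
    rng = np.random.default_rng(0)
    p = 1.0e5 + 5.0 * np.sin(2 * np.pi * 20e3 * t) + rng.normal(0.0, 1.0, t.size)
    f, P = welch_psd(p, fs)
    print("dominant frequency [Hz]:", f[np.argmax(P)])
```

Overlaying such spectra for the three meshes on log-log axes is one way to see where each mesh loses high-frequency energy; the segment length trades frequency resolution against the variance of the estimate.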

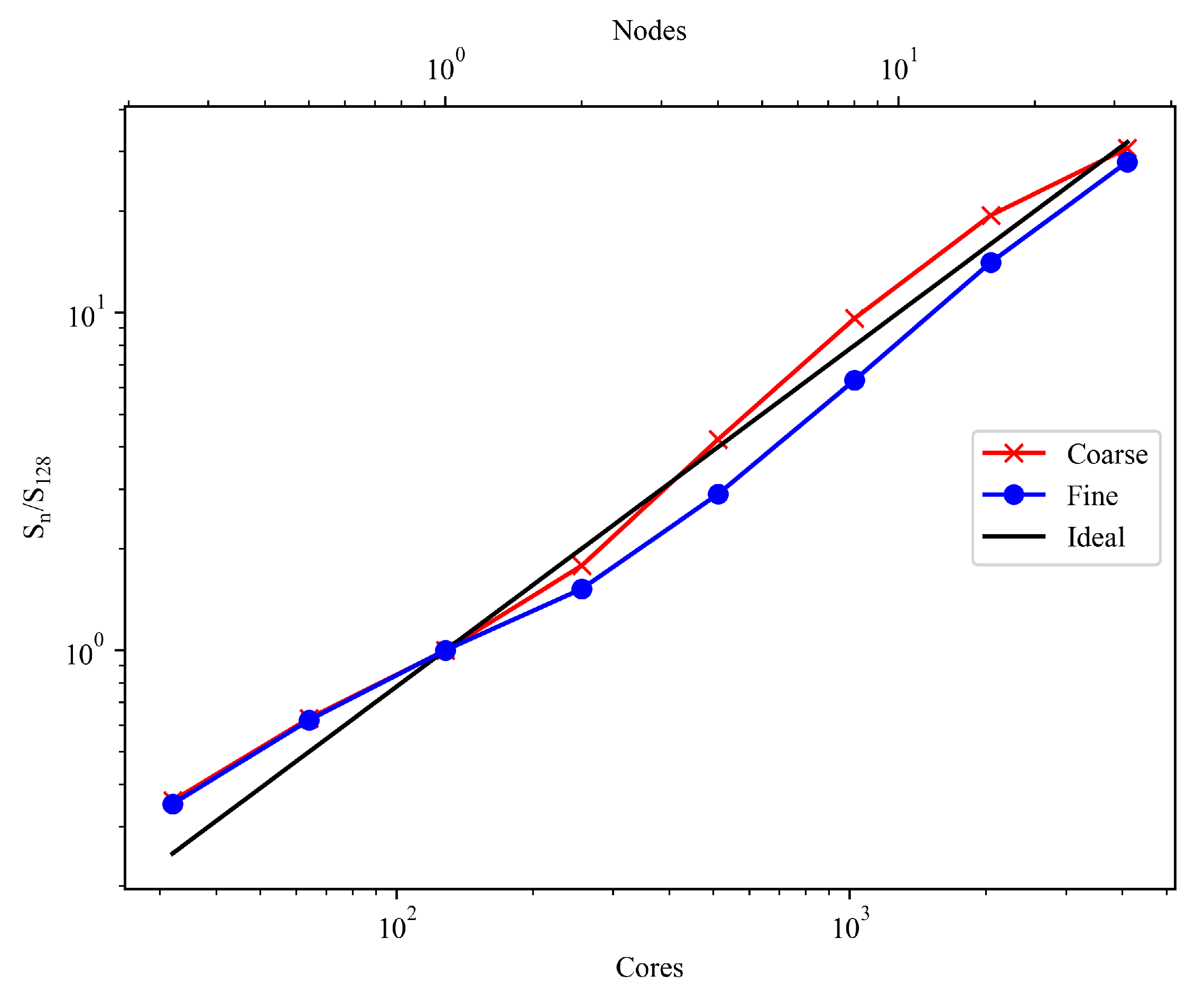

That the same three meshes all represent the mean SIJ profiles yet differ in their unsteady features highlights that the adequacy of a mesh resolution depends on the simulation objectives, namely whether only net effects are of interest or temporal phenomena must be properly captured. To guide this consideration for rhoCentralFoam, computational scaling analyses were conducted on the three cases, showing that the solver exhibits good weak scaling on current HPC architecture. Hence, reasonable estimates of the trade-off between computational resources and compute time can be made for a given mesh resolution. Excellent strong scaling was also demonstrated, with an approximately linear trend up to 2048 cores (16 nodes) and 4096 cores (32 nodes) for the ∼8.5M and ∼41M cell cases, respectively.
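The weak- and strong-scaling statements rest on standard parallel-performance metrics. A minimal sketch of those conventional definitions follows; the function names and the timing values in the usage are hypothetical placeholders, not measurements from this study, and the study's own metric may be defined differently (for example, per time step rather than total wall time).

```python
def strong_scaling(cores, wall_times):
    """Strong scaling: fixed mesh, increasing core count.

    Returns (cores, speedup, parallel efficiency) relative to the first
    (smallest) run. Efficiency 1.0 corresponds to ideal linear scaling.
    """
    base_cores, base_time = cores[0], wall_times[0]
    results = []
    for n, t in zip(cores, wall_times):
        speedup = base_time / t
        efficiency = speedup / (n / base_cores)
        results.append((n, speedup, efficiency))
    return results


def weak_scaling_efficiency(wall_times):
    """Weak scaling: cells per core held fixed while the mesh grows.

    Ideal behaviour is a constant wall time, so efficiency = t_ref / t.
    """
    base_time = wall_times[0]
    return [base_time / t for t in wall_times]


if __name__ == "__main__":
    # Hypothetical wall-clock times (s) for a fixed number of time steps.
    cores = [256, 512, 1024, 2048]
    times = [800.0, 405.0, 206.0, 106.0]
    for n, s, e in strong_scaling(cores, times):
        print(f"{n:5d} cores  speedup {s:5.2f}  efficiency {e:5.1%}")
    print(weak_scaling_efficiency([120.0, 124.0, 131.0]))
```

An "approximately linear" strong-scaling trend corresponds to efficiencies that stay close to 1.0 as the core count grows; the point where efficiency begins to fall off marks the practical parallel limit for a given mesh.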

As in previous studies that have considered optimal computational scaling for OpenFOAM simulations [32,45], the discussion so far has centered on a “cells-per-core” guideline. However, given the internode communication bottlenecks of the OpenFOAM architecture [46,47] and the recent significant increases in cores per node in HPC CPUs, we suggest revising this guideline to a “cells-per-node” basis, with particular attention to the speed and architecture of the interconnect. This shift implies that an HPC system intended to support a large number of OpenFOAM workloads should not only maximize the core count per compute node but also ensure that the interconnect backbone is sufficient and not a limiting factor. With these considerations in place, future studies on the physics of highly transient supersonic impinging jets with low separation distances, which will presumably require large datasets from high-fidelity simulations, can be conducted more effectively.
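The difference between the two guidelines comes down to simple arithmetic. The sketch below uses the approximate cell and node counts quoted above and assumes 128 cores per node (implied by 2048 cores on 16 nodes and 4096 cores on 32 nodes). The helper names and the target of 1.3M cells per node are hypothetical illustrations, not recommendations from this study.

```python
import math


def decomposition_load(cells, nodes, cores_per_node):
    """Return the per-core and per-node cell loads of a domain decomposition."""
    cores = nodes * cores_per_node
    return {
        "cores": cores,
        "cells_per_core": cells / cores,
        "cells_per_node": cells / nodes,
    }


def nodes_for_target(cells, target_cells_per_node):
    """Smallest node count keeping the per-node load at or below the target.

    Note that this rule sizes the job by node count only; it does not
    depend on how many cores each node has.
    """
    return max(1, math.ceil(cells / target_cells_per_node))


if __name__ == "__main__":
    CORES_PER_NODE = 128                   # inferred from the core/node counts quoted above
    for label, cells, nodes in [("~8.5M case", 8.5e6, 16), ("~41M case", 41e6, 32)]:
        print(label, decomposition_load(cells, nodes, CORES_PER_NODE))
    TARGET_CELLS_PER_NODE = 1.3e6          # hypothetical tuning value
    print(nodes_for_target(41e6, TARGET_CELLS_PER_NODE))
```

For the two quoted decompositions this gives roughly 4,150 and 10,000 cells per core, but about 0.53M and 1.28M cells per node. Under a fixed cells-per-core rule, moving to nodes with twice as many cores would halve the node count and double the load that each node, and its interconnect link, must handle; a cells-per-node rule holds that per-node load fixed instead.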