1. Introduction

The COVID-19 pandemic has caused critical economic and social disruption all over the world [1,2], leading to a significant transformation of work and research-related activities [3,4]. While the regulations to follow “work from home” and, similarly, “research from home” [5] come from the governmental level, academia and industry research organizations have faced several challenges in managing their traditional workflows. Restrictions on physical contact and social isolation have massively affected the research projects related to the use of Extended Reality (XR) and immersive environments, where in-lab experiments with the presence of a moderator and a participant in one space accounted for more than 70% of the overall user study methods [6,7]. Other research activities, for instance those based on mobile devices, have faced similar complications and require additional effort to conduct.

XR technologies, which include augmented, mixed, and virtual realities (AR, MR, and VR), are a hot topic of HCI (human-computer interaction) research among academic and industrial practitioners. XR technologies offer substantial benefits in the industrial context by blurring the boundaries between what is real and what is virtual, thus extending the possibilities of traditional technology applications and supporting industrial tasks. Cumulative evidence has demonstrated the benefits of XR for industrial needs: XR has shown increased performance in facilitating collaboration and planning in construction projects [8,9,10,11] and immersive training of novice maintenance technicians [12], as well as the support of the whole product lifecycle in the manufacturing field [13,14,15]. Furthermore, the flexibility of XR to facilitate remote coordination and communication is even more pronounced during the pandemic [16,17].

Due to the above-mentioned advantages, large international corporations, such as KONE [18], perform their own research activities to investigate the application of novel technologies to their field. Such studies are usually conducted either internally or in collaboration with academia, targeting the company’s novice and expert employees from different countries. The lockdown and travel restrictions caused a notable disruption in industrial and academic research activities, especially with topics that involve practical experiments including XR or mobile devices [19,20,21]. To continue their work activities, industrial researchers were forced to rapidly shift towards adopting remote practices and methods.

Remote usability testing and user studies are not novel concepts for the research world [22,23]. The effects of remote setups and related challenges have been investigated in academia since the early 2000s, due to potential benefits in cost and resource reduction [24]. The existing studies on remote testing demonstrated no significant variance between physically facilitated tests and remote tests; however, several challenges, such as research validity and lack of control, were also highlighted [25]. In XR research, remote studies have also been experimented with, targeting the growing group of HMD (head-mounted display) owners [26,27]. Nevertheless, there is neither clear guidance on how to conduct remote studies, nor an extensive body of research on which to base decisions when designing a remote experiment. Furthermore, there is limited knowledge of conducting remote user testing in the industrial context, where the target user group is limited to experts and company employees. To fill this gap and demonstrate the practicalities of conducting remote user studies in the industrial context, our article details five industrial use cases, performed by a large international corporation in the field of manufacturing and maintenance during the pandemic restrictions. Our hypothesis is that it is possible to conduct user studies with remote and hybrid setups. The hypothesis is confirmed with the five use cases of user studies, which were successfully carried out during the pandemic. Two out of five studies were performed in collaboration with academia, based on the framework of remote academia-industry collaboration [28]. All five use cases provide a unique perspective on the practicalities of remote user studies, detailing how to approach the preparation phase and the remote processes of handling the experiments and safety aspects related to COVID-19 restrictions. The article, therefore, answers the following research question:

RQ: How can VR user studies be conducted with remote and hybrid setups in the industrial context?

The results of the remote case studies are presented in three categories to support the future implementation of remote experiments by industrial and academic practitioners: (1) insights that are especially important because of the pandemic, (2) insights that are especially important because of remote and hybrid setups, and (3) insights that are especially important because of the industrial context. In addition, we share (4) insights regarding conducting remote and hybrid interviews. The results are further discussed from the perspective of (5) general insights on user testing, and things related to (6) testing new, fascinating technology. Furthermore, we describe (7) hygiene and safety protocols for conducting VR user tests during a pandemic.

3. Case Descriptions

We organized remote user sessions in five different industrial use cases during the 2020–2021 pandemic. All cases concerned collaboration in VR. The total number of organized user test sessions was 22, and several pre-sessions were organized in addition to this. Altogether, we involved subject matter experts from eight countries: Finland, India, China, Germany, Indonesia, Malaysia, the United States, and the United Arab Emirates. Some participants attended user tests in more than one use case, and, therefore, the total number of persons involved as participants is somewhat lower than the sum of all participants.

Table 1 gives a summary of all cases. Case 1 and Case 2 were KONE internal studies, and Case 3 and Case 5 were part of Human Optimized XR (HUMOR) [50] project activities in collaboration with Tampere University and KONE. Case 4 was part of the KEKO Smart Building Ecosystem project [51] activities at KONE.

The terminology used in the literature for participants and researchers organizing user testing varies. For clarity, we have summarized the terminology used in this paper in Table 2.

3.1. Case 1: Collaborative VR for Maintainability Review

Case description: In this project, we investigated the benefits of multi-user virtual reality for a collaborative maintainability review during an early product development phase [52]. We used two commercially available VR environments: DesignSpace [53] and Glue [54]. Both environments also had support for desktop participants. The VR devices used were the Samsung Odyssey (facilitator), the HP Reverb (participant), and the HTC Vive (participant, observers); see Figure 1.

In this project, a Lean start-up methodology was used [55]. Collaboration methods, tasks, and VR environments were developed in iterative sprints. In total, six user test sessions were conducted. Three sessions were held in Glue and another three in DesignSpace (see Figure 2).

Pandemic restrictions: This project started in February 2020, and the generic introduction of VR environments for some of the users was done face-to-face in the office premises before the restrictions started. In March 2020, the pandemic situation worsened in the Uusimaa area, Finland, where this research took place, and restrictions were in place to prohibit entering the office premises. The company allowed a limited number of co-workers to meet in person if needed. Thus, all user tests were conducted remotely from people’s homes (as shown in Figure 1). The project ended in July 2020.

Test setup: All sessions started with a Teams call, where the participants were introduced to the VR task, and an on-site assistant instructed how to start the VR environment. The facilitator explained how the hand controllers, menus, etc. work, and showed videos and screenshots from VR during the starting session. The Teams session was also used when practical help was needed with the VR devices or the VR environment. In tests with Glue, there was a remote facilitator inside the VR wearing an HMD, 1–2 HMD participants, and 1–2 desktop participants depending on the session; the total number of participants was three in each session. In tests with DesignSpace, there was a remote facilitator inside the VR wearing an HMD and 2 HMD participants, 1 desktop participant, and 1–2 observers (HMD). The same three participants attended all iterative sessions (

Figure 3).

Case-specific challenges: In order to include necessary subject matter experts despite the pandemic restrictions, one of the HMD participants was sent a VR set to their home and was instructed remotely via Teams on its use. The participant had no previous experience of setting up a VR environment (e.g., calibrating the play area and updating the VR software). The participant was a 60+-year-old expert on industrial maintenance and maintenance methods development, with hands-on experience in the field.

3.2. Case 2: Multi-User VR in Global Department-to-Department Collaboration

Case description: One separate user test session was conducted to evaluate multi-user VR collaboration in a global department-to-department setting. In this session, the Glue environment [

54] was used (see

Figure 4).

The session focused on identifying the possibilities of VR for global collaboration and potential use situations and use cases. The participants had different professional backgrounds: maintenance methods development, technical documentation, and learning and development. Participants were from two different countries with different levels of previous VR experience. This work was KONE’s internal development activity related to the Human Optimized XR (HUMOR) project. The results of this user test have not been published elsewhere, but the results were taken into account in the planning of the following cases.

Pandemic restrictions: This test was carried out in July 2020. Company rules restricted access to the office premises, but a limited number of co-workers were allowed to meet in person if needed.

Test setup: In this session, we had a remote HMD facilitator inside the VR, three HMD participants, and three desktop participants. All participants were in different locations, except for one desktop participant who was in the same physical location as one HMD participant. The desktop participant also acted as an on-site assistant to ensure the physical safety of the HMD user in the same location (Figure 5).

Case-specific challenges: Because of restrictions on entering the offices, it was difficult to recruit industrial subject matter experts. In order to involve necessary subject matter expertise, one of the HMD participants was sent a VR set to their home and was instructed remotely via Teams on its use. The participant had no previous experience of setting up a VR environment (e.g., calibrating the play area and updating the VR software). The participant was ~35 years old, with expertise in industrial maintenance, maintenance method development, and hands-on expertise from the field.

3.3. Case 3: VR for Maintenance Method Development and Documentation Creation

Case description: The aim of the study was to analyze the subjective perceptions and usefulness of a single-user VR system in accordance with maintenance and technical documentation industrial tasks. The study was designed in collaboration between academic and industry researchers. The test sessions were conducted at the company’s premises in Finland and were facilitated and observed by industry researchers. In total, there were one pre-test, six on-site user tests, and one remote user test facilitated for a participant located in the USA.

Pandemic restrictions: At the time of the evaluation, the Finnish government recommended adhering to remote work practices [

56]. Hence, the evaluation was designed to ease remote observation. The user test setting consisted of cameras located in the VR room, a speaker, and a video live-stream via Teams.

Test setup: The evaluation was conducted using a mix of remote observation and on-site facilitation. The remote observation was conducted using a Teams meeting. For the pre-test and six on-site user tests, one facilitator and one participant were in the VR room (Figure 6). However, the evaluation for the participant in the USA was conducted entirely remotely. For each session, at least one remote observer was present in the Teams call. The audio in the session was transmitted via Teams using a good quality speakerphone in the VR room. The on-site facilitator set up a USB camera to live-stream the whole procedure, from setting up the VR room until the end of the user test session. In addition, during the VR evaluation, the participants’ VR point-of-view was live-streamed simultaneously with the VR room camera view. The Teams call was recorded to capture the facilitation and participant’s actions for later analysis. Lastly, a GoPro Hero 3 camera was set up to record the room setup as an offline backup (Figure 7). The test procedure was conducted using an HTC Vive Pro headset with hand controllers.

Case-specific challenges: The hardware setup was more troublesome than expected. During the first evaluation, the VR point-of-view was streamed over Teams, however, the VR room view was not. This hindered the remote observation as observers could not see the user’s actions outside of VR. For that reason, the cameras and audio setup were changed to afford the VR room view simultaneously with the VR point-of-view (

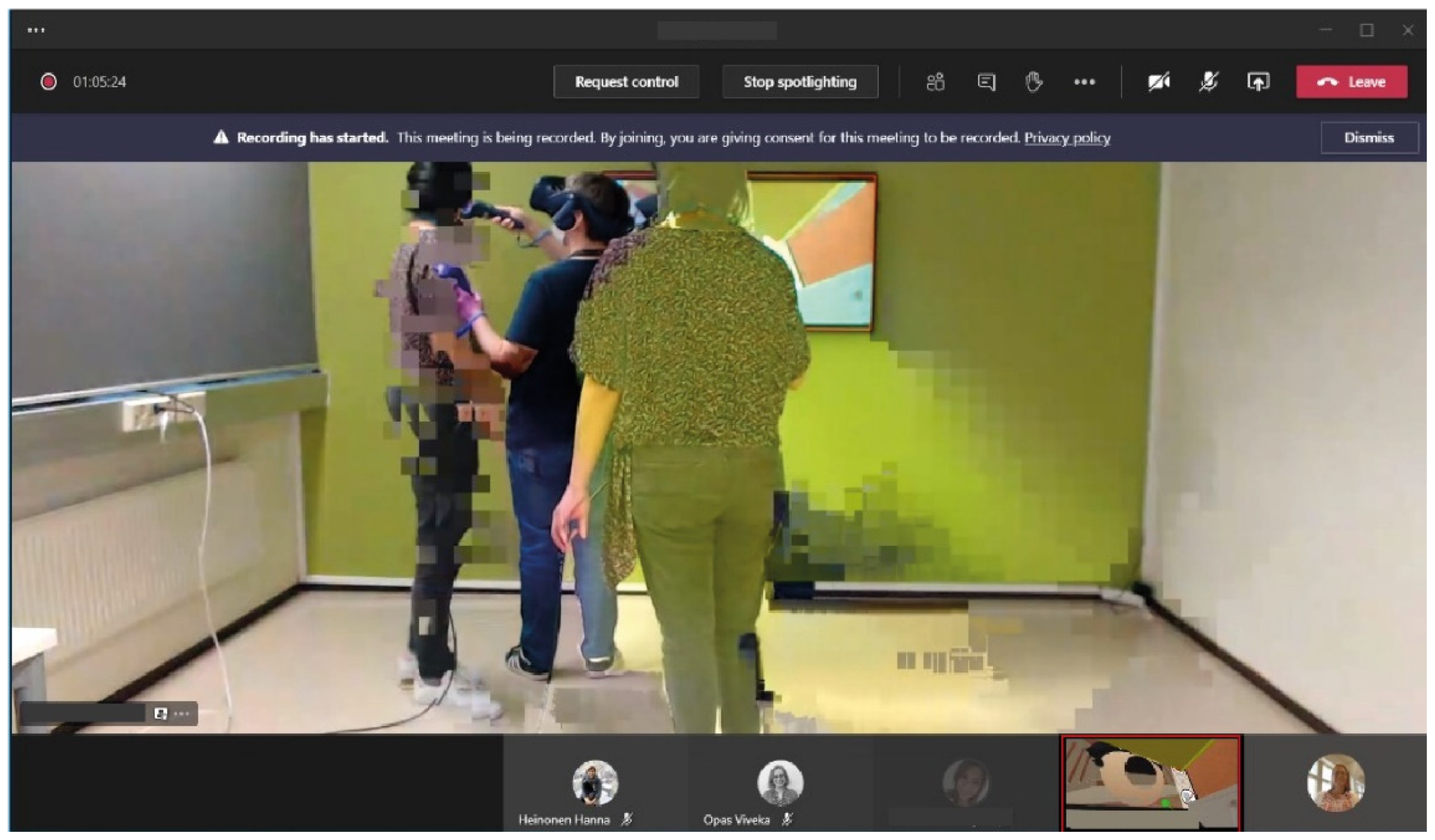

Figure 8). In addition, since observers were set up remotely, the on-site facilitator’s duties became more demanding. The facilitators disinfected, set up, facilitated, and managed after-test practicalities by themselves. In practice, this meant that even though the evaluations lasted on average close to two and half hours, the facilitator needed one hour for preparation and two for after-test practicalities. There were network issues during the user tests which affected the observation. In

Figure 9, the facilitator is visible on the screen twice, and the image is pixelated.
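The facilitator workload reported for Case 3 can be turned into a rough scheduling estimate. The following is a minimal sketch that uses only the three durations stated above; the 8-hour working day and the function names are illustrative assumptions, not part of the study, and the estimate does not account for ventilation breaks or other pandemic-related overhead.

```python
# Durations in hours, taken from the Case 3 description.
PREPARATION_H = 1.0   # preparation before the session
SESSION_H = 2.5       # average evaluation length ("close to two and a half hours")
AFTER_TEST_H = 2.0    # after-test practicalities

# Hypothetical assumption, not from the study: an 8-hour working day.
WORKDAY_H = 8.0


def facilitator_hours_per_test() -> float:
    """Total facilitator time needed for a single user test."""
    return PREPARATION_H + SESSION_H + AFTER_TEST_H


def tests_per_workday(workday_h: float = WORKDAY_H) -> int:
    """Number of complete tests that fit into a working day of the given length."""
    return int(workday_h // facilitator_hours_per_test())


if __name__ == "__main__":
    print(f"Facilitator time per test: {facilitator_hours_per_test()} h")
    print(f"Tests per {WORKDAY_H}-hour day: {tests_per_workday()}")
```

Under these assumptions, a single test occupies a lone facilitator for about five and a half hours, so only one complete test fits into a standard working day.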

3.4. Case 4: Multi-User VR for Collaboration in Complex Machine Room Planning

Case description: The use of a multi-user VR in complex elevator machine room planning of high-rise building projects was tested. The study aimed to find out the benefits of multi-user VR from the user and business perspective as well as how to optimally apply the technology in the process. Four real-life high-rise building projects in Indonesia, Malaysia, the United States, and the United Arab Emirates were studied. There were nine HMD participants who were members from the selected projects and company personnel from Finland. There were no participants from the Malaysian site because a COVID-19 national lockdown prevented them from accessing the VR facility. Three remote cross-country user tests were organized. Each test involved participants from two to three different countries. Facilitation was conducted remotely, and the remote facilitator was in the virtual environment with the HMD participants. After each test, a group interview was held to gather feedback from the participants. In this study, four VR headsets were used: the HTC VIVE, HTC VIVE Pro, Oculus Rift S, and HP Reverb.

Pandemic restrictions: Movement restrictions in all testing countries were in effect, which restricted the use of the office and the number of people in one meeting room. International travel was prohibited. Access to VR facilities was limited. Participants were required to wear a mask at all times and wash their hands with soap and use hand sanitizer regularly.

Test setup: Before every user test, remote onboarding sessions were held to instruct the on-site assistant and familiarize HMD participants with the VR environment. In each testing session, 2–3 testing sites in different countries were involved. Each site had 1–3 HMD participants and 1 on-site assistant (

Figure 10). Only one HMD participant at each site was in the VR environment at a time. They took turns to perform the assigned tasks. The whole session was remotely facilitated, and the communication was done via Teams. Each testing session focused on one machine room project so that its participants could experience the use of VR in their actual workflow. The VR view of the HMD participant, who was working daily on the examined project, was shared on Teams. Each site also had their camera on in the Teams video call for remote observation (

Figure 11). Moreover, the remote facilitator juggled being in the VR environment for any requested VR task-related assistance with observing the HMD participants through their video on Teams. Furthermore, each testing session also had 1–2 desktop participants and remote observers who joined the online Teams meeting (

Figure 12). The desktop participants did not perform many tasks for the testing but rather assisted with elevator technology related questions.

In one instance of user testing, the on-site assistant was not available in one of the testing locations. The number of HMD participants was 1–3 for each testing location. The HMD participants took turns, and others participated via a large display on the wall. In one of the sessions, one HMD user also acted as an interpreter.

Case-specific challenges: Remote coordination between two different testing sites in one session was challenging since the remote facilitator did not have control over the test arrangement. The remote facilitator juggled being inside VR and observing what was occurring on Teams, making the facilitation demanding. Moreover, the on-site assistant was not available for one of the sessions. This added to the workload of the remote facilitator, who had to perform troubleshooting when technical difficulties occurred. Moreover, an unstable internet connection affected the synchronization to the cloud-based VR environment, causing differences in what users in different countries saw (

Figure 13). This issue also created a delay in the communication on Teams. The situation became more challenging due to the language barrier. One user test was also cancelled due to a total lockdown in that country.

3.5. Case 5: Collaborative Multi-User VR for Maintenance Method Development and Documentation Creation

Case description: In this study, we analyzed a collaborative multi-user virtual environment for maintenance method development and documentation creation. The aim of the study was to analyze the subjective perceptions and usefulness of a multi-user asymmetric VR system in accordance with industrial tasks. Asymmetric VR settings refer to the use of immersive and non-immersive platforms simultaneously, in this case by HMD and Teams participants. The study consisted of five user test sessions. For each session, two HMD participants and two Teams participants were present, with 20 participants in total. The two HMD participants joined the virtual environment simultaneously while the two Teams participants, from India, China, or the USA, joined the session using a Teams call (

Figure 14). Teams participants engaged in the VR session by viewing it through the point of view of one of the HMD participants, referred to as HMDe, and interacted with voice commands via Teams (

Figure 15). The test procedure was conducted using two HTC Vive Pro headsets with hand controllers.

Pandemic restrictions: At the time of the evaluation, the Finnish government recommended adhering to remote work practices [

56]. In addition, there were similar restrictions in place in India and the USA. The guidelines in China allowed the participants to work at the company facilities.

Test setup: Due to the COVID-19 situation, the sessions were designed to facilitate remote participation. For each session, two on-site HMD and two Teams participants were present. The facilitation consisted of two on-site facilitators, one chat facilitator, and remote observers. For the on-site sessions, there was one on-site facilitator and one HMD participant per location (see Figure 16).

The on-site facilitator kept a two-meter distance from the HMD participant during the whole evaluation. The invitations for the on-site sessions (HMD participants) included the COVID-19 safety guidelines and required participants to read and agree with the participation guidelines upon acceptance of the invite. To facilitate remote participation, a series of cameras were placed in the two on-site testing locations to transmit and record the session in Teams (Figure 14 and Figure 15). In addition, the HMDe’s view was transmitted during the evaluation. The Teams session was recorded and stored for later analysis.

Case-specific challenges: This user test was conducted during one of the strictest pandemic-restriction periods in Finland [

56]. Thus, approval to conduct the test was required from the company’s human resources and upper management from all sites involved in the test prior to the evaluation. Subsequently, the researchers corroborated the participants’ right to decline their participation. One on-site participant declined and one Teams participant postponed their participation. Other technical practicalities of the evaluation regarded the general setup. For instance, the audio in one of the on-site evaluation rooms was set up using a speaker to accommodate the HMDe participant and the on-site facilitator. Conversely, the audio from the other evaluation room was set up directly in the VR headset to afford only the HMD participant. Lastly, due to the varying roles of the remote participants, the facilitation had to be adjusted to suit the purpose. During the pre-test, we realized that the facilitation roles were cumbersome. Initially, only the two on-site facilitators were included in the evaluation script; however, with this setup, the Teams participants were left without technical support. For this reason, after the pre-test we decided to include a chat facilitator to supplement the remote facilitation and to provide technical support. In addition, we faced challenges communicating the varying range of roles and facilitators involved in the evaluation. This was mainly because the communication channels differed from on-site to remote facilitation; however, ultimately this evaluation included both types of facilitation at once.

3.6. Safety and Hygiene for On-Site VR Tests during the Pandemic

The safety protocols were enforced before, during, and after the user tests. The facilitator and participants needed to wash their hands and use hand sanitizer before entering the test room. A sanitization table was set up at the entrance of the testing room, featuring FFP2 face masks, hand sanitizer, and surgical gloves for the participants and facilitators. People who could not wear a mask or use hand sanitizer did not participate in the test. In addition, a UVC light was used to disinfect the headset and hand controllers, and the equipment was wiped with disinfecting wipes. To keep the devices sanitized, disposable VR hygiene covers were fitted after the equipment had been disinfected.

The facilitator and participant wore FFP2 face masks and surgical gloves throughout the duration of the user test. The participants were instructed on the use of the VR equipment with a training video along with instructions from the facilitator, so each participant put the VR equipment on by themselves. Due to the practicalities of VR facilitation, the protocols also included guidelines to prevent physical contact between the facilitator and the participant. If the participant came too close to a physical obstacle, the facilitator would warn the participant by saying “stop” and instruct them in which direction to move. The facilitator was advised to approach the participant only in an emergency, e.g., if the participant felt dizzy and was at risk of falling or fainting. Fortunately, no such event occurred during the user tests. Lastly, remote participation was enabled: the testing session was streamed via a USB camera in the testing room using Teams. Participation consent forms were shared with the participants via Teams, and participants signed the form by adding their name to the Teams chat.

5. Results

The combined results of the five user tests can be categorized into the following groups: general user test arrangements, insights that are important because of new and fascinating technologies, insights that are important due to a pandemic, insights that are important because of the remote setup, insights that are important because of the industrial context, and insights regarding remote interviews. In this section we also give recommendations for safety and hygiene protocols for VR user testing.

5.1. Insights that are Especially Important Due to a Pandemic

In a pandemic, it is important to maintain social distancing (2 m), to disinfect devices and the premises between users, and to have enough time between sessions for ventilation (Case 3, Case 4, Case 5). Considering all preparations, disinfecting, and restrictions, in the ideal case only one test per day should be scheduled (Case 3). The room used for testing should be large enough to accommodate social distancing (Case 3, Case 4, Case 5).

Under normal conditions, the practice is that the on-site assistant or facilitator helps with putting on the VR equipment. Because of the 2 m distance rule, this is not possible during a pandemic, and therefore good instructions for (first-time) users are extremely important. Video instructions displayed on a large display proved to be efficient (Case 3, Case 4, Case 5).

Due to social distancing, travel limitations, and restricted gatherings and contact, it is important to enable remote participation and remote observation (Case 1, Case 2, Case 3, Case 4, Case 5). With VR testing, this is best supported with hybrid setups, where both the VR view and room view are shared over Teams (or another video conferencing app) for remote participants and observers (Case 1, Case 2, Case 3, Case 4, Case 5).

In Case 1 and Case 2, the participants were sent the VR set and a compatible laptop. What is remarkable is that these two participants were far from the tech-savvy type, both having a mechanical engineering and field maintenance background, with very little experience of VR. They had never set up VR glasses themselves, and only participated in some facilitated VR sessions before. Furthermore, the VR set they were using, the HP Reverb, was a new device for them. In these cases, they had a Teams video call with a VR expert. The VR expert went through the setup procedure step-by-step with them, and with the video connection the VR expert was able to see everything that happened at the remote location, for example, which lights were active on the hand controllers (Case 1, Case 2).

Due to the changing conditions and changing rules, the development was done in iterative sprints. Therefore, we had a slightly different setup in Case 1, which made comparisons difficult. This was the first case and the pandemic restrictions were new. It is advisable to plan carefully and be prepared for the worst-case scenario. In later cases, we planned the experiments so that changing restrictions did not have an effect on executing the user tests (Case 2, Case 3, Case 4, Case 5).

When face masks are required, facial expressions are not fully visible to observers and, therefore, are difficult to interpret. Face masks also muffle the voices of the participants. Therefore, good quality audio is essential. Furthermore, the think-aloud method is very important to understand the participants and their actions. When a mask was used, the verbal expression of feelings became important in addition to thoughts, and this was explained to the participants (Case 3, Case 4, Case 5).

5.2. Insights that are Especially Important Because of Remote and Hybrid Setups

It is good to have Teams or a similar video collaboration tool to support user test arrangements if done remotely, even if the tests are carried out in VR. It can be at least used in the orientation before logging in to VR (Case 1, Case 2, Case 3, Case 4, Case 5).

It is always advisable to record the user test session for later reference, but when observing user tests remotely, a live video feed is also essential. The integrated laptop camera is designed for “face-to-face” video conferencing, and it is difficult to position the laptop so that the user test environment and participants can be seen from that camera feed. Therefore, we have noted that it is useful to have an external USB camera, which can be easily positioned to cover the whole user testing area (Case 1, Case 3, Case 4, Case 5). An additional USB webcam is also useful if there is a need to introduce the use of new equipment, or if there are some practical issues like setting up the VR equipment for participants (Case 1, Case 2).

In Case 1, we asked the participants to fill in a questionnaire after the session. One person never filled in the questionnaire, and as it was anonymous, we did not know whom to remind. Thereafter, in all other user tests, we closed the session only after the participants had answered the questionnaire, and we could be sure that everyone filled out the questionnaire without any problems (Case 3, Case 4, Case 5). Thus, we recommend this practice.

In some user test sessions, the participant was alone in a remote location (Case 1, Case 2, Case 4, Case 5). This sometimes led to a chaotic situation without the on-site assistant (Case 4). Hence, it is recommended to have at least one on-site assistant at each testing location, if possible (Case 1, Case 4).

Moreover, the physical view and VR view of each testing site should be enabled. This helps the remote facilitator comprehend the circumstances of the testing site and determine the exact guidance needed for all questions raised. This is especially important if there is no on-site facilitator in the remote location. The remote observers benefit from multiple views as well (Case 3, Case 4, Case 5).

Instructions (a comprehensive user test plan) should always be available and communicated well in advance. Everybody can then monitor the progress and prepare for their upcoming tasks. This is especially important in the remote setup because of limited communication possibilities during sessions. If the researchers are physically in the same room, one may whisper and discreetly ask a colleague something without disturbing the session (Case 1, Case 3, Case 4, Case 5).

It is preferable to have instructions easily available for on-site participants and directly in the Teams chat box for remote participants. This approach provides immediate access and eliminates the need for additional hardware and software manipulation (Case 1, Case 3, Case 4, Case 5).

If the facilitation is done remotely, the setup needs to be very carefully tested. A full pre-test session or “dress rehearsal” is recommended, where the whole test workflow with all the roles is tested. The test setup needs to be identical to the actual user test to discover any issues with the setup or workflow. We also recommend having someone with a technical background on site for at least the pre-test to quickly solve any technical issues with, for example, connectivity (Case 2, Case 3, Case 5).

The use of face masks impedes the observation of participants’ facial expressions and muffles their voices. This is especially problematic when using the think-aloud method and observing remotely, where the camera angle is not always optimal. Therefore, we recommend using several cameras, if possible, to overcome this and instructing the users to speak clearly (Case 3, Case 4, Case 5). The audio and camera settings and the network should be tested during the pre-test and prior to every user test with the remote observers (Case 3, Case 4).

The peculiarities of the remote observers’ computer setup, internet connection, software knowledge, and other variables might affect the evaluation setup. If the roles and responsibilities regarding technical support during the test session are not clear, it could cause confusion. Furthermore, when someone starts to solve technical issues, they are not able to concentrate on their actual role, e.g., facilitation/observation (Case 3, Case 4). On the other hand, when there is a dedicated person to help with the technical issues and the software being used, everyone is able to concentrate on the actual task and their own roles (Case 1, Case 2, Case 4). Therefore, it is advisable to have an extra technical support facilitator participating in the user test sessions if possible, or at least have roles and responsibilities agreed upon for such situations where technical support might be needed (Case 1, Case 3, Case 4).

In the case of hybrid on-site and remote facilitation, the evaluation procedure should be supported with online tools. For instance, the VR tasks were administered as notes in the VR environment so that HMD and non-HMD participants could read them. However, the remote tasks were administered by the chat facilitator in the chat window following the task list. In addition, the whole procedure was supplemented with an online presentation shared over Teams. The slide deck followed the sections described in the user test plan and helped all participants have a visual example of the upcoming items in the evaluation (Case 5).

In addition, at the end of 2020, Teams added automatic transcription for recordings, which aided in note-taking (Case 4 and Case 5).

5.3. Insights That Are Especially Important Because of the Industrial Context

In the industrial context, some on-site facilitators and assistants are company employees, not researchers. Therefore, they do not necessarily have experience with facilitation or research methodology. Thus, there is a strong need for detailed instructions and a pre-test session. In particular, the role of the facilitator and on-site assistant needs to be clearly defined, communicated, and tested in the pre-test session. Otherwise, this might lead to the on-site assistant taking a stronger role or misunderstanding their role and the remote facilitator losing control over the test environment and facilitation (Case 4). Similarly, if the interpreter is not a professional interpreter, the role needs to be made clear so that the opinions and the views of the interpreter are not mixed with those of the participants (Case 4).

Recruiting participants is trickier in the industrial context, where certain expertise is needed, than, for example, in contexts where the general usability of an application is being tested. In the latter case, the background of the participant does not matter that much, and it is common practice in academia to recruit students from campus. In our industrial case, it was important to have real subject matter experts attending the user tests. However, due to the pandemic restrictions, some were not able to participate (Case 3 and Case 4). In Case 1 and Case 2, we enabled the participation of important subject matter experts by sending VR devices to their homes and instructing them remotely on the setup.

In industrial research, it is important that tasks in the user test mimic the actual work or, even better, real tasks. This way, the participants understand the need for research input as it is directly related to their own work. Furthermore, this way the collected feedback is focused on improving the industrial work.

A good introduction to user testing is needed as people often come in a hurry from other work duties. Therefore, we have learned that a good practice is to have refreshments available at the beginning and allow participants to relax and have a small break while explaining the purpose and context to get them onboarded into testing. Again, this is very different from recruiting students from campus. Of course, remote setups made it difficult to offer refreshments, but in some locations the remote assistant was able to organize it.

5.4. Insights Regarding Conducting Remote and Hybrid Interviews

In a hybrid interview setting, many factors could affect how interviewees behave in the session. The different nature of being remote and on-site is among the most influential. On-site interviewees tend to dominate the discussion as the interview is conducted in a face-to-face format. Remote interviewees might find it hard to keep up with the conversation. Hence, they may find that their role is less significant in the discussion. Moreover, cultural and personal differences may also affect how active people are in the remote interview. Some are more open to discussion on-site while some might find it easier to communicate remotely. The language barrier could also have a significant impact as some may not be able to keep up with the discussion in a foreign language (Case 4, Case 5). Therefore, it is important that the interviewer proactively engages everyone throughout the session. Using a non-professional interpreter may lead to a situation where the interpreter dominates the discussion over the participants (Case 4).

Every interviewee should be given a chance to speak and asked or encouraged by the interviewer to express their opinion in all questions. One may hesitate to answer as they deem their responses repetitive to others, which might not be the case. Tackling this assumption by asking everyone to express their views can result in a more fruitful discussion. In addition, the interviewer can address a question to a specific participant to initiate the conversation (Case 4, Case 5).

The note-taking procedure is usually cumbersome during in-person interviews as interviewers usually rely on physical notes. In contrast, remote interviews improved the review and note-taking procedure during the interviews (Case 4, Case 5). This is because in remote interviews, interviewers had easy access to digital notes. In addition, remote interviews over platforms such as Teams allowed the interviewers to have access to multiple monitors. Essentially, the interviewer set up the online interview on one screen and the notes on another.

In addition, video conference tools expanded the ways to share ideas. In some cases, the interviewers and interviewees used the chat box to type the discussion (Case 4, Case 5). This was especially observed in interviews where the participants preferred and felt more confident writing rather than speaking in a foreign language. Similarly, video conference software enabled the interviewers and interviewees to reinforce the communication using visual tools. For instance, some interviewees used the screen-sharing tool to share slide decks or images to support the discussion (Case 3, Case 4, Case 5). In other cases, the interview was supplemented with applications, such as Miro, to brainstorm and describe new ideas (Case 4).

In all interview sessions, it was observed that the use of cameras to see facial expressions enhanced the engagement in the conversation. Likewise, seeing facial expressions and hand gestures eased the interpretation of the discussion in later analysis. The use of a camera was particularly beneficial in the case of group interviews where the interviewees reflected on their colleagues’ answers. In addition, all the interviewees benefited from seeing the interviewer and other participants on the live stream (Case 4, Case 5).

5.5. Recommendations for On-Site Safety and Hygiene Protocols for VR User Testing

On-site safety and hygiene protocols provided comfort to on-site facilitators and participants as they were assured that the researchers were compliant with the local regulations. Similarly, the researchers adhered to company regulations and thus worked closely with managers and human resource representatives. Clear and concise safety and hygiene guidelines helped us to communicate safety protocols and demonstrate to the company authorities and participants that the user tests would follow official regulations and guidelines.

As the pandemic situation and official guidelines changed, we had to iteratively change our procedures.

Figure 17 presents the ultimate on-site safety measures that we used during the pandemic in VR user tests. Furthermore, the safety measures taken by the on-site facilitators and participants are presented in Figure 18.

5.6. Insights That Are Especially Important Because of Novel and Fascinating Technologies

When testing novel, exciting technologies, such as VR, test participants might get excited and attempt things outside of the scope of the test plan. In Case 2, we ran out of time due to this and did not collect all the feedback during the session as planned. Therefore, some of the feedback was collected afterwards via email. Our recommendation is that if there are people who have not used the equipment or technology before, enough time should be reserved for users to explore the environment and its features before the actual user test. The same recommendation applies to all exciting technologies (Case 4, Case 5).

As mentioned earlier, it is sometimes advisable to have a separate introduction and familiarization session before the user test.

5.7. General Comments Regarding User Test Arrangements

Especially in the case of complex evaluation setups, such as Case 5 with the combined in-person and remote evaluation, it is imperative to script a comprehensive user test plan. In the plan, all the evaluation steps should be clearly specified, and action points assigned to specific people: participants, facilitators, and observers. If possible, the script should also include estimated times per section so that the evaluation remains on track. Prior to the evaluation, the facilitators, assistants, and observers should have a briefing meeting to review the test plan. During the evaluation, the facilitators should ensure that the participants comprehend the procedure, roles, and tasks.

User test sessions should be scheduled in a staggered manner, with preferably only one or two tests per day. This will give the facilitator and observers time to finalize notes and prepare for the next tests in an orderly manner. This is especially important when the facilitators and observers have other work duties alongside the user tests, as is often the case in industry research activities. This is also important during a pandemic to have enough ventilation time for test facilities between test sessions, and to reserve time for disinfecting procedures.

When conducting Case 1, we had to adapt to the changing situations and setups on an ad hoc basis, which made the tests challenging. Therefore, a pre-test practice was used for Case 2, Case 3, Case 4, and Case 5. The pre-test worked as a “dress rehearsal” where any complications and difficulties were noticed, and no ad hoc fixes or adjustments were needed in the actual user tests. In most cases, one pre-test was enough to fine-tune testing procedures, but in Case 5, for example, several pre-tests were needed before the setup was clear and manageable.

Recording the user test sessions is highly recommended. Researchers can later conduct further analysis of the verbal and non-verbal feedback from the users, which is difficult to capture thoroughly while facilitating the session. The audio and camera settings should be tested during the pre-test and prior to every session.

It is a good practice to have a video conferencing connection with recording (e.g., Teams session) of all organizers and participants throughout the whole session, from the beginning until submission of questionnaires or whatever is the last part of the test session. This ensures that everyone is part of the whole end-to-end process and as much information as possible is gathered (Case 1, Case 2, Case 3, Case 4, Case 5). For research and note taking purposes, it is good to also record the preparations (e.g., setting up VR gear and cameras) (Case 3, Case 4, Case 5).

In earlier work, we had introduction + user test + interview sessions that took close to 2.5 h, and it proved to be too long and intensive for the users. We noticed that a separate introduction and training session reduced the intensity of actual user testing and allowed more time to be spent on each task and on discussion arising from the think-aloud method during the session. In Case 1 and Case 4, we had a separate 0.5–1.5 h introduction and training session for the VR. Thereafter, within 1–3 days, we had the actual VR collaboration user test with work-role-related tasks. In both cases, the user test and discussion lasted 1.5–2 h. However, in Case 4, each user spent only 20–30 min in an intensive VR environment as their role was rotated. In Case 5, the training session and user test were held in succession, and the whole session with the training, user test, surveys (before and after the user test), and group interview took up to 2 h and 45 min, but it included a break and the schedule was not tight. As our cases were to test the industrial use of collaborative VR rather than general usability, familiarization helped users to shift their focus and provide more relevant comments on their work role and tasks. Such results were achieved with relaxed scheduling throughout all cases.

6. Discussion

Even though arranging user tests during a pandemic with all the restrictions is challenging, with good planning and proper setups it is possible, as this article demonstrates. Yet there are some differences and limitations in remote user testing compared to face-to-face testing sessions. For example, on-site people often continue the discussion in an informal manner after finishing the user test tasks. This happened, for example, in Case 3 and Case 5 with some on-site participants. In many remote setups, the possibility for this kind of discussion is missing and valuable comments are lost.

Some people are shy and/or anxious to speak when several people are listening, and prefer to share their views in one-to-one discussions. Some participants also noted that they felt nervous because of the remote observers (Case 3, Case 5). Thus, remote observers should remain as unobtrusive as possible, so the participant acts as naturally as possible (see, e.g., [57]). We also highlight the importance of good facilitation and encourage getting everyone deeply involved.

In remote and mixed setups, it is important to have clear roles for organizers and to have enough researchers involved. In the ideal case, there is a dedicated person for resolving technical problems. An on-site facilitator should be able to concentrate on the persons that are physically located at the site; therefore, it is advisable to have a dedicated chat facilitator, or similar role, to narrate and facilitate remote participants.

In an on-site session, it is natural and easy for observers to move a little when needed to have a better view of the participants during the session. In remote observation this is not possible. Thus, we recommend having several cameras, a movable camera, and an on-site assistant who can move the camera. In addition, there is always a risk of network issues during remote sessions, and, therefore, a recording of the session and an offline camera as backup are encouraged.

It is always important to plan user tests properly, but when implementing a remote user test, the planning becomes even more important. Ad hoc changes are easier to manage in on-site sessions. The remote setup is especially demanding when several remote locations are involved, and when non-research-oriented remote assistants are used. Thus, good planning with back-up plans for possible incidents is needed.

One good practice is to hold a pre-test (“dress rehearsal”) in which the whole user test procedure and setup is tested. The roles of all participants should be clear and well defined. This is especially important when utilizing industrial employees as on-site assistants or interpreters.

During a pandemic, the unexpected sometimes happens: new restrictions are announced, people are quarantined, or for some reason on-site participation is cancelled at the last minute (Case 4, Case 5). Location-independent remote participation reduces the risk of cancellations and rescheduling. In Case 1 and Case 2, the test devices (VR equipment) were sent to some participants’ homes to enable their participation.

The role of hygiene and safety in user testing during a pandemic cannot be stressed enough. During more normal times, the facilitator is responsible for safe working practices, such as making sure that the participants wear the required personal protective equipment (PPE). During a pandemic, the role of safety is increased with requirements for wearing masks, disinfecting the equipment, social distancing, etc. This might be challenging remotely and requires careful planning. Additionally, if the remote participant is alone in the remote location, physical safety must be taken care of for the HMD participant. Researchers [30, 31] have noted that a video-based approach enables the researcher to understand exactly the steps of the participant during the evaluation. Thus, having a camera view of the remote location is highly recommended in remote and mixed (on-site and remote) evaluations (Case 3, Case 4, Case 5).

Even though the pandemic has made many areas of life challenging, there are also good practices that we have learned that can be used to enhance post-pandemic global collaboration. We started planning Case 1 in the pre-pandemic era, but as the pandemic hit, we adjusted our plans to accommodate the new conditions. With learnings from each of the cases, our processes and the execution of the remote and hybrid user tests became smoother, and many of these ideas can be applied to the post-pandemic world. By working in remote and hybrid setups, we can involve a global user base in the testing, save on travel costs, and encourage sustainability.