Artificial Intelligence in Digital Marketing: Towards an Analytical Framework for Revealing and Mitigating Bias

Abstract

1. Introduction

2. Literature Review

2.1. Artificial Intelligence and Bias in Marketing

2.2. Relevant Methods, Models, and Frameworks

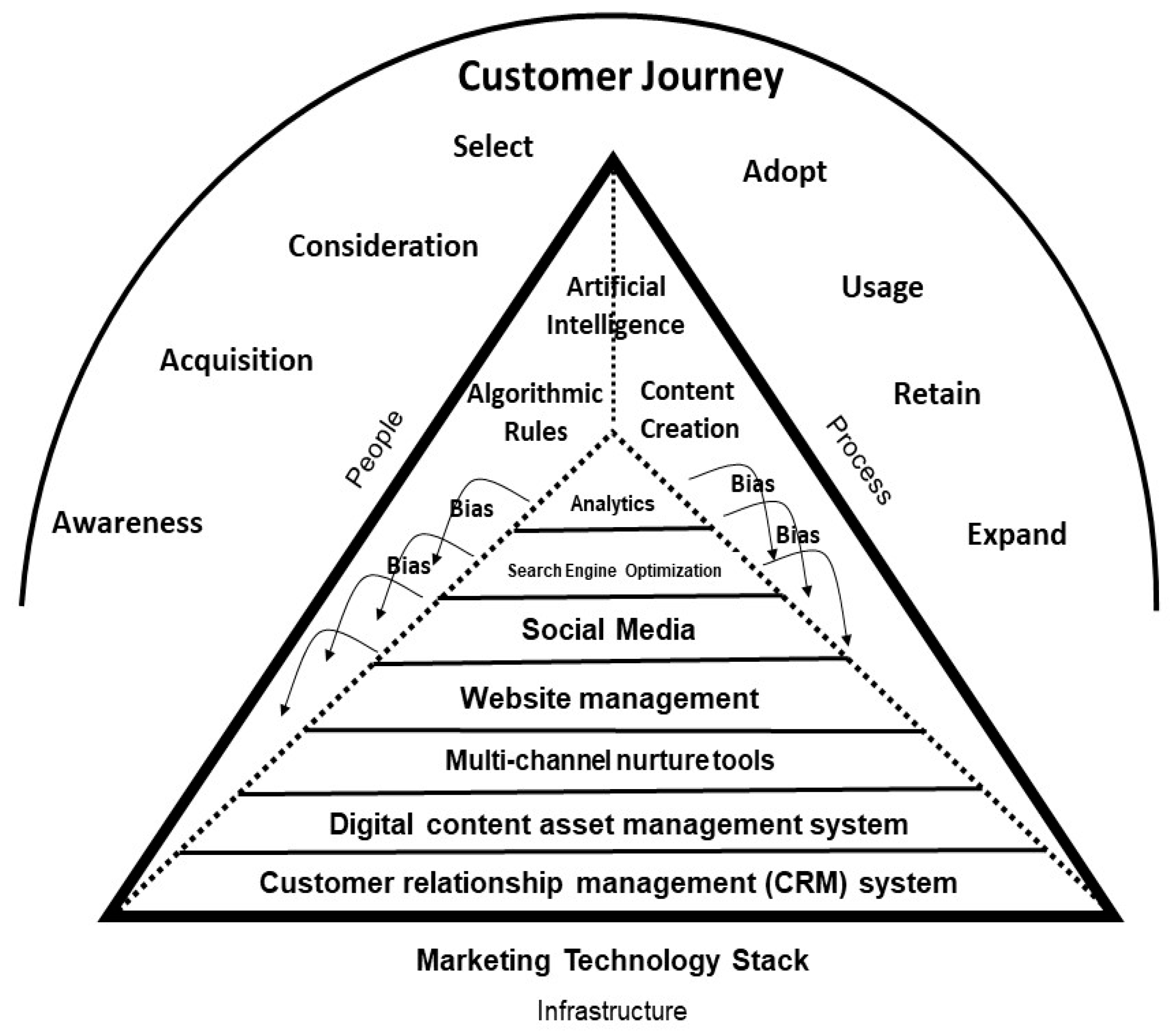

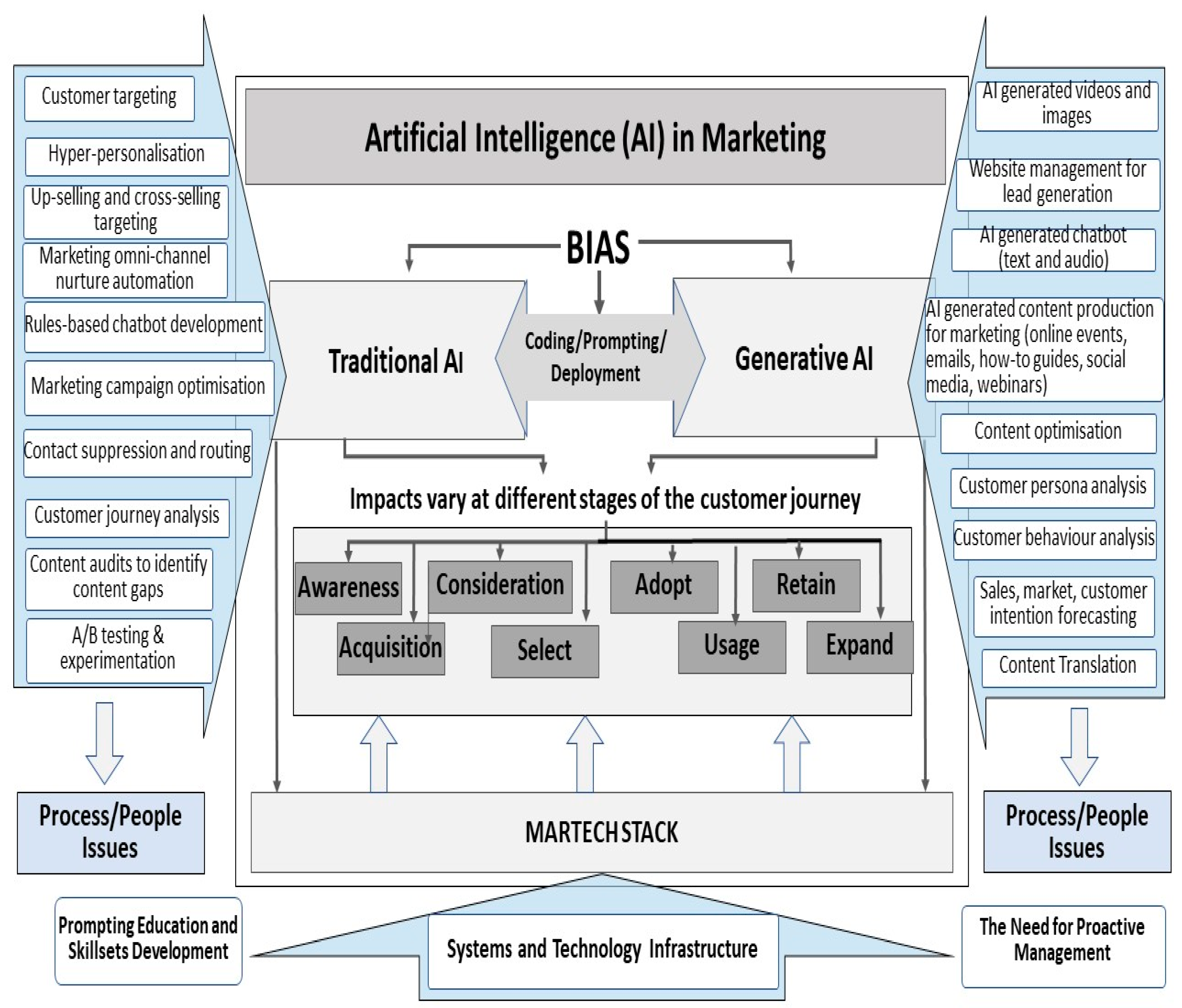

2.3. Provisional Conceptual Framework

3. Research Method

3.1. The Case Study Approach, Data Collection and Research Philosophy

3.2. Data Analysis and Validation

4. Results

4.1. RQ1. What Are the Current and Perceived Bias Issues in Coding, Prompting and Deployment of AI in Digital Marketing?

4.2. RQ2. What Framework Can Be Developed to Provide Guidance for Practitioners, for Revealing and Mitigating Bias in AI Deployment in Digital Marketing?

4.2.1. PCF Review

4.2.2. Towards an Analytical Framework for Revealing and Mitigating Bias

5. Discussion

6. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest

Appendix A

| | Awareness | Acquisition | Consideration | Select | Adopt | Usage | Retain | Expand |
|---|---|---|---|---|---|---|---|---|
| Gen AI | Content produced for advertising: images, videos, text and audio [14,18,38]. R04 “internal CSC AI”. R05 “Gen-AI CSC internal tool”. R05 “Translation of copy through AI, usage of AI service to generate voice-over in language for video localization”. | Content produced for acquisition stage: whitepapers, eBooks, etc. [14,18,38]. Online events (i.e., webinars): full content production, tailoring content [6]. R04 “internal CSC AI”. R05 “Gen-AI CSC internal tool”. | Content produced for consideration stage: whitepapers, eBooks, etc. AI Chatbots: text, audio [14]. R04 “internal CSC AI”. R05 “Gen-AI CSC internal tool”. R05 “Translation of copy through AI, usage of AI service to generate voice-over in language for video localization”. | Content produced for Select stage: Guided experiences and free trials [14,18,38]. Inbound qualification services: contact us and chatbots [75,76]. Marketplace to buy software [77]. R04 “internal CSC AI”. R05 “Gen-AI CSC internal tool”. | Personalised generated content at scale. Content produced for usage, retain and expand stage: emails, how-to guides, webinars, etc. [14,18,38]. A/B testing on email and content wording and structure [15]. R04 “internal CSC AI”. R05 “Gen-AI CSC internal tool”. R05 “Translation of copy through AI, usage of AI service to generate voice-over in language for video localization”. | | | |
| Trd AI | Using Target Account Lists to target certain companies and personas [11,78,79]. R02 “Persona rules”. R03 “hyper-personalization tools, advanced A/B testing methodologies, customer journey analysis”. | R01 “CSC leverages AI and machine learning to deliver personalized customer experiences”. Using Target Account Lists to target certain companies [11,78,79]. Webinars: segmenting event audiences, geofencing [6]. | AI Chatbots: routing rules/suppression rules [76]. Using Target Account Lists to target certain companies [11,78,79]. R05 “use industry-standard tools” (embedded AI). | Contact us and inbound qualification services [75]. R05 “use industry-standard tools” (embedded AI). | R01 “CSC leverages AI and machine learning to deliver personalized customer experiences”. Using Target Account Lists to target upselling and cross-selling software to specific companies and personas [11,78,79]. Nurture emails and webcast routing rules [2,80]. R02 “using Marketo for marketing nurture automation”. R05 “use industry-standard tools” (embedded AI). R03 “hyper-personalization tools, advanced A/B testing methodologies, customer journey analysis”. | | | |

| | Search Engine Optimisation | Social Media | Website | Multi-Channel Nurture Tools | Digital Asset Management (DAM) | Customer Relationship Management (CRM) | Analytics |
|---|---|---|---|---|---|---|---|
| Gen AI | R03 “content optimization for SEO”. Content generation with SEO keywords (optimised organic ranking) [14,18,38]. R04 “internal CSC AI”. R05 “Gen-AI CSC internal tool”. | R02 “Generative AI creates a breadth of banners to be used in social channels”. R04 “Creating social posts for customer references for some events”. R03 “Social for awareness and content distribution”. Paid social personalised generated content [14,18,38]. Social media content generated from social listening [81]. R04 “internal CSC AI”. R05 “Gen-AI CSC internal tool”. | R03 “website management for lead generation”. Personalised generated content [14,18,38]. Software reviews: automate and analyse customer feedback [82]. R05 “investment to use Adobe Experience Manager (embedded AI)”. R04 “internal CSC AI”. R05 “Gen-AI CSC internal tool”. | R03 “channel nurture tools for email nurture and omni channel strategy”. Personalised generated content [14,18,38]. A/B testing on email and content wording and structure [15]. R04 “internal CSC AI”. R05 “Gen-AI CSC internal tool”. | Personalised generated content [14,18,38]. R02 “Generative AI supports the content localization process.” Localisation of content [14]. Generating descriptions for accessible content [22]. R05 “investment to use Opal (embedded AI)”. R03 “DAM for content management”. R05 “Translation of copy through AI, usage of AI service to generate voice-over in language for video localization”. | Generate predictive analytics: customer behaviour [83,84]. R03 “CRM for lead management”. | Generate forecasts [85,86,87]. R03 “Analytics for reporting”. |
| Trd AI | Targeting rules. A/B testing on keywords. Metadata matching rules. [75] R01 “CSC leverages AI and machine learning to deliver personalized customer experiences”. | Social listening targeting rules [81]. R05 “use industry-standard tools” (embedded AI: Sprinklr social media software). | Personalisation rules and A/B testing on website. [11,78] R02 “Persona rules”. R03 “hyper-personalization tools, advanced A/B testing methodologies, customer journey analysis”. R01 “CSC leverages AI and machine learning to deliver personalized customer experiences”. | R02 “using Marketo for marketing nurture automation”. Nurture & promotional emails: data profiling, segmentation, rules, scoring (i.e., by product based on interaction) [2,80]. R03 “channel nurture tools for email nurture and omni channel strategy”. | Automating tagging and categorising content [14]. R06 “using AI as part of content audits to identify content gaps”. R01 “our marketing department leverages AI to translate content and deliver content to the right personas”. R05 “internal CSC Machine translation”. | R01 “our marketing department leverages AI to identify target accounts”. Contact suppression rules. Data modelling algorithms. Contact routing rules. Contact scoring rules. [2,80] R05 “internal CSC Machine translation”. R03 “CRM for lead management”. | R01 “CSC leverages AI and machine learning to optimize campaign performance”. R02 “Persona rules”. R03 “hyper-personalization tools, advanced A/B testing methodologies, customer journey analysis”. Analysis of customer data [78]. Dependent on data maturity: large database required [86]. First-party & third-party data targeting [87]. R01 “our marketing department leverages AI to identify target accounts and optimize campaign programs effectively”. R05 “internal CSC Machine translation”. |

References

1. Li, R. Artificial Intelligence Revolution: How AI Will Change Our Society, Economy, and Culture; Skyhorse: New York, NY, USA, 2020.

2. Roetzer, P.; Kaput, M. Marketing Artificial Intelligence: AI, Marketing, and the Future of Business; BenBella Books: Dallas, TX, USA, 2022.

3. Gerhard, T. Bias: Considerations for research practice. Am. J. Health-Syst. Pharm. 2008, 65, 2159–2168.

4. Schwartz, R.; Vassilev, A.; Greene, K.; Perine, L.; Burt, A.; Hall, P. Towards a Standard for Identifying and Managing Bias in Artificial Intelligence; NIST Special Publication 1270; US Department of Commerce, National Institute of Standards and Technology: Gaithersburg, MD, USA, 2022.

5. Stamford, C. Gartner Survey Finds 63% of Marketing Leaders Plan to Invest in Generative AI in the Next 24 Months. 2023. Available online: https://www.gartner.com/en/newsroom/press-releases/2023-08-23-gartner-survey-finds-63-percent-of-marketing-leaders-plan-to-invest-in-generative-ai-in-the-next-24-months (accessed on 23 March 2024).

6. Buczek, L.; Holder-Browne, A.; Maddox, M.; Kurtzman, W.; Jimenez, D.; Rajagopal, S.; Lall, R.; White, S.; Wallace, D. IDC FutureScape: Worldwide Chief Marketing Officer 2024 Predictions. October 2023. IDC FutureScape. Available online: https://www.idc.com/getdoc.jsp?containerId=US50107023 (accessed on 12 October 2024).

7. Rethlefsen, M.L.; Page, M.J. PRISMA 2020 and PRISMA-S: Common questions on tracking records and the flow diagram. J. Med. Libr. Assoc. 2022, 110, 253.

8. Mousavi, Z.; Varahram, S.; Ettefagh, M.; Sadeghi, M.; Feng, W.; Bayat, M. A digital twin-based framework for damage detection of a floating wind turbine structure under various loading conditions based on deep learning approach. Ocean Eng. 2024, 292, 116563.

9. Crockett, L. “All AI’s Are Psychopaths”? Reckoning and Judgment in the Quest for Genuine AI; Augsburg University: Minneapolis, MN, USA, 2020.

10. Heimo, O.I.; Kimppa, K.K. No Worries-the AI Is Dumb (for Now). Tethics 2019, 5, 1–8.

11. Earley, S. Using the customer journey to optimise the marketing technology stack. Appl. Mark. Anal. 2021, 6, 190–210.

12. Buolamwini, J.; Gebru, T. Gender shades: Intersectional accuracy disparities in commercial gender classification. In Proceedings of the Conference on Fairness, Accountability and Transparency, New York, NY, USA, 23–24 February 2018; pp. 77–91.

13. Ziakis, C.; Vlachopoulou, M. Artificial Intelligence in Digital Marketing: Insights from a Comprehensive Review. Information 2023, 14, 664.

14. Haleem, A.; Javaid, M.; Qadri, M.A.; Singh, R.P.; Suman, R. Artificial intelligence (AI) applications for marketing: A literature-based study. Int. J. Intell. Netw. 2022, 3, 119–132.

15. Nair, K.; Gupta, R. Application of AI technology in modern digital marketing environment. World J. Entrep. Manag. Sustain. Dev. 2021, 17, 318–328.

16. Brenner, M. The Ultimate Guide to a Content Marketing Strategy That Delivers ROI. Marketing Insider Group. 2023. Available online: https://marketinginsidergroup.com/content-marketing/content-marketing-strategy-roi-ultimate-guide/ (accessed on 5 January 2024).

17. Kotzsch, R. Reduce Marketing Costs by Reducing Your Translation Costs. Smart Brief. 2023. Available online: https://www.smartbrief.com/original/reduce-marketing-costs-by-reducing-your-translation-costs (accessed on 7 November 2023).

18. Rudan, N. 6 Ways Marketers Are Using Generative AI: Is It Really Saving Time? Databox. 2023. Available online: https://databox.com/how-are-marketers-using-gen-ai (accessed on 8 February 2024).

19. Scott, D.M. The New Rules of Marketing and PR: How to Use Content Marketing, Podcasting, Social Media, AI, Live Video, and Newsjacking to Reach Buyers Directly; John Wiley & Sons: London, UK, 2022.

20. Sun, L.; Wei, M.; Sun, Y.; Suh, Y.J.; Shen, L.; Yang, S. Smiling women pitching down: Auditing representational and presentational gender biases in image-generative AI. J. Comput.-Mediat. Commun. 2024, 29, 45–53.

21. Henning, T.M. Don’t Just “Google It”: Argumentation and Racist Search Engines. Fem. Philos. Q. 2022, 8, 2.

22. Tang, R.; Du, M.; Li, Y.; Liu, Z.; Zou, N.; Hu, X. Mitigating Gender Bias in Captioning Systems. In Proceedings of the IW3C2 Proceedings from International World Wide Web Conference Committee, New York, NY, USA, 3 June 2021.

23. Tatman, R. Gender and Dialect Bias in YouTube’s Automatic Captions. In Proceedings of the First ACL Workshop on Ethics in Natural Language Processing. Association for Computational Linguistics, Valencia, Spain, 18 April 2017.

24. Koenecke, A.; Nam, A.; Lake, W.; Goel, S. Racial disparities in automated speech recognition. In Proceedings of the National Academy of Sciences, Washington, DC, USA, 23 March 2020.

25. Conick, H. Read This Story to Learn How Behavioral Economics Can Improve Marketing. AMA. 2023. Available online: https://www.ama.org/publications/MarketingNews/Pages/read-story-learn-how-behavioral-economics-can-improve-marketing.aspx (accessed on 6 November 2023).

26. Smith, M.; Conrad, S. Algorithmic Bias: A Threat to Digital Marketing Ethical Practices. J. Bus. Ethics 2020, 163, 623–634.

27. Diakopoulos, N. Accountability in algorithmic decision making. Commun. ACM 2016, 59, 56–62.

28. Karimi-Mamaghan, M.; Mohammadi, M.; Meyer, P.; Mohammad, A.; Karimi-Mamaghan, A.; Talbi, E. Machine learning at the service of meta-heuristics for solving combinatorial optimization problems: A state-of-the-art. Eur. J. Oper. Res. 2022, 296, 393–422.

29. Lipton, Z. The mythos of model interpretability. Acmqueue 2016, 5, 1–27.

30. Metz, C.; Thompson, S. What to Know About Tech Companies Using A.I. to Teach Their Own A.I. 2024. Available online: https://www.nytimes.com/2024/04/06/technology/ai-data-tech-companies.html (accessed on 10 February 2024).

31. Kaminski, N. Women in Tech: Why Are Only 10% of Software Developers Female? 2023. Available online: https://jetrockets.com/blog/women-in-tech-why-are-only-10-of-software-developers-female (accessed on 6 February 2024).

32. Vailshery, L. Software Developers: Distribution by Gender 2022. 2022. Available online: https://www.statista.com/statistics/1126823/worldwide-developer-gender/ (accessed on 2 March 2024).

33. Yalkin, C.; Özbilgin, M. Neo-colonial hierarchies of knowledge in marketing: Toxic field and illusion. Mark. Theory 2022, 22, 191–209.

34. Palmer, L. Traditional AI vs GenAI: Amplified Risks and Challenges in Governance Explained. 2023. Available online: https://www.drlisa.ai/post/traditional-ai-vs-genai-amplified-risks-and-challenges-in-ai-governance-explained (accessed on 10 November 2023).

35. Huang, M.H.; Rust, R.T. A strategic framework for artificial intelligence in marketing. J. Acad. Mark. Sci. 2021, 49, 30–50.

36. Buch, I.; Thakkar, M. AI in Advertising. 2023. Available online: https://www.researchgate.net/publication/357268694_AI_in_Advertising (accessed on 8 February 2024).

37. Yu, Y. The Role and Influence of Artificial Intelligence on Advertising Industry. Adv. Soc. Sci. Educ. Humanit. Res. 2021, 631, 190–194.

38. Nesterenko, V.; Olefirenko, O. The impact of AI development on the development of marketing communications. Mark. Menedžment Innovacij 2023, 14, 169–181.

39. Brynjolfsson, E.; Rock, D.; Syverson, C. Artificial intelligence and the modern productivity paradox. Econ. Artif. Intell. Agenda 2019, 23, 23–57.

40. Jarrahi, M.; Askay, D.; Eshraghi, A.; Smith, P. Artificial intelligence and knowledge management: A partnership between human and AI. Bus. Horiz. 2023, 66, 87–99.

41. Jones, P.; Wynn, M. Artificial Intelligence and Corporate Digital Responsibility. J. Artif. Intell. Mach. Learn. Data Sci. 2023, 1, 50–58.

42. Dwivedi, Y.K.; Kshetri, N.; Hughes, L.; Slade, E.L.; Jeyaraj, A.; Kar, A.K.; Baabdullah, A.M.; Koohang, A.; Raghavan, V.; Ahuja, M.; et al. “So what if ChatGPT wrote it?” Multidisciplinary perspectives on opportunities, challenges and implications of generative conversational AI for research, practice and policy. Int. J. Inf. Manag. 2023, 71, 102642.

43. Nadeem, A.; Abedin, B.; Marjanovic, O. Gender bias in AI: A review of contributing factors and mitigating strategies. In Proceedings of the Australasian Conference on Information Systems, Wellington, New Zealand, 1 December 2020.

44. Varona, D.; Suárez, J.L. Discrimination, Bias, Fairness, and Trustworthy AI. Appl. Sci. 2022, 12, 5826.

45. Shachar, C.; Gerke, S. Prevention of bias and discrimination in clinical practice algorithms. JAMA 2023, 4, 283–284.

46. Dinesh, C. Mitigating AI Bias: Strategies for Ethical and Fair Algorithms. 21 November 2023. LinkedIn. Available online: https://www.linkedin.com/pulse/mitigating-ai-bias-strategies-ethical-fair-algorithms-dinesh-c-qeg2c/ (accessed on 12 January 2024).

47. Kaur, A. Mitigating Bias in AI and Ensuring Responsible AI. No Date. Leena AI. Available online: https://leena.ai/blog/mitigating-bias-in-ai/#:~:text=Strategies%20for%20Identifying%20and%20Addressing%20Bias%20in%20AI%20Systems&text=One%20crucial%20approach%20is%20diverse,more%20equitable%20and%20unbiased%20decisions (accessed on 14 January 2025).

48. Lemon, K.N.; Verhoef, P.C. Understanding customer experience throughout the customer journey. J. Mark. 2016, 80, 69–96.

49. Moorman, C.; Soli, J.; Seals, M. How the Pandemic Changed Marketing Channels. Harvard Business Review. 2023. Available online: https://hbr.org/2023/08/how-the-pandemic-changed-marketing-channels (accessed on 10 February 2024).

50. Micheaux, A.; Bosio, B. Customer Journey Mapping as a New Way to Teach Data-Driven Marketing as a Service. J. Mark. Educ. 2019, 41, 127–140.

51. Purmonen, A.; Jaakkola, E.; Terho, H. B2B customer journeys: Conceptualization and an integrative framework. Ind. Mark. Manag. 2023, 113, 74–87.

52. Strong, F. B2B Sales Cycles Require 27 Interactions both Digital and Human [Study]. 2022. Available online: https://www.swordandthescript.com/2022/05/b2b-sales-interactions/ (accessed on 14 February 2024).

53. Santosh, M. Artificial Intelligence and Digital Marketing: An Overview. Int. J. Eng. Sci. Humanit. 2024, 14, 118–122.

54. Jabareen, Y. Building a conceptual framework: Philosophy, definitions, and procedure. Int. J. Qual. Methods 2009, 8, 49–62.

55. Dowling, K.; Guhl, D.; Klapper, D.; Spann, M.; Stich, L.; Yegoryan, N. Behavioral biases in marketing. J. Acad. Mark. Sci. 2020, 48, 449–477.

56. Schwandt, T. Constructivist, interpretivist approaches to human inquiry. Handb. Qual. Res. 1994, 1, 118–137.

57. Flick, U.; von Kardorff, E.; Steinke, I. Qualitative Forschung. Ein Handbuch; Qualitative Research. A Handbook; Rowohlt Taschenbuch: Hamburg, Germany, 2013.

58. Holliday, A. Doing & Writing (3e)-Qualitative Research; SAGE Publications: London, UK, 2006.

59. Thomas, D.R. A General Inductive Approach for Analyzing Qualitative Evaluation Data. Am. J. Eval. 2006, 27, 237–246.

60. Saunders, M.; Lewis, P.; Thornhill, A. Research Methods for Business Students, 3rd ed.; Pearson Education Limited: London, UK, 2023.

61. Creswell, J.W.; Creswell, J.D. Research Design: Qualitative, Quantitative, and Mixed Methods Approaches; Sage: London, UK, 2018.

62. Islam, M.; Aldaihani, F. Justification for Adopting Qualitative Research Method, Research Approaches, Sampling Strategy, Sample Size, Interview Method, Saturation, and Data Analysis. J. Int. Bus. Manag. 2022, 5, 1–11.

63. Guest, G.; Bunce, A.; Johnson, L. How Many Interviews Are Enough? An Experiment with Data Saturation and Variability. Field Methods 2006, 18, 59–82.

64. Ritchie, J.; Lewis, J.; Elam, G. Designing and selecting samples. In Qualitative Research Practice: A Guide for Social Science Students and Researchers; Ritchie, J., Lewis, J., Eds.; Sage Publications Ltd.: London, UK, 2003; pp. 77–108.

65. Lee, N.; Lings, I. Doing Business Research: A Guide to Theory and Practice; Sage Publications Ltd.: London, UK, 2008.

66. Cauteruccio, F. Investigating the emotional experiences in eSports spectatorship: The case of League of Legends. Inf. Process. Manag. 2023, 60, 103516.

67. Terry, G.; Hayfield, N.; Clarke, V.; Braun, V. Thematic analysis. SAGE Handb. Qual. Res. Psychol. 2017, 2, 17–37.

68. Webb, C. Analysing qualitative data: Computerized and other approaches. J. Adv. Nurs. 2001, 29, 323–330.

69. Mason, J. ‘Re-using’ qualitative data: On the merits of an investigative epistemology. Sociol. Res. Online 2007, 12, 39–42.

70. Smith, J.; Firth, J. Qualitative data analysis: The framework approach. Nurse Res. 2011, 18, 42–48.

71. Onwuegbuzie, A.J.; Leech, N.L.; Collins, K.M. Qualitative analysis techniques for the review of the literature. Qual. Rep. 2012, 17, 17–28.

72. Gray, D. Doing Research in the Business World; Sage Publications Ltd.: London, UK, 2019; pp. 1–896.

73. Yin, R.K. Case Study Research and Applications: Design and Methods, 6th ed.; Sage Publications Ltd.: London, UK, 2018.

74. Flyvbjerg, B. Five misunderstandings about case-study research. Qual. Inq. 2006, 12, 219–245.

75. Arsenijevic, U.; Jovic, M. Artificial intelligence marketing: Chatbots. In Proceedings of the 2019 International Conference on Artificial Intelligence: Applications and Innovations, Hersonissos, Greece, 24–26 May 2019; pp. 19–193.

76. Rakib, S.; Rabbi, S.N. Ai in Digital Marketing. Bachelor’s Thesis, Seinajoki University, Seinäjoki, Finland, 2024.

77. Permana, B. The Strategy for Developing a Marketplace Promotion Model Based on Artificial Intelligence (AI) to Improve Online Marketing in Indonesia. Int. J. Soc. Sci. Bus. 2024, 8, 190–197.

78. Flavin, S.; Heller, J. A Technology Blueprint for Personalization at Scale; McKinsey: New York, NY, USA, 2019.

79. Meda, K. Biased Advertising: Identifying & Addressing the Problem. 2023. Available online: https://kortx.io/news/biased-advertising/ (accessed on 7 February 2024).

80. Nygård, R. AI-Assisted Lead Scoring. Master’s Thesis, Åbo Akademi University, Turku, Finland, 2019.

81. Hayes, J.L.; Britt, B.C.; Evans, W.; Rush, S.W.; Towery, N.A.; Adamson, A.C. Can Social Media Listening Platforms’ Artificial Intelligence Be Trusted? Examining the Accuracy of Crimson Hexagon’s AI-Driven Analyses. J. Advert. 2020, 50, 81–91.

82. Lumoa. What Is the Role of AI in Customer Feedback Analysis? 2024. Available online: https://www.lumoa.me/blog/artificial-intelligence-customer-feedback-analysis/ (accessed on 6 March 2024).

83. Ghadage, A.; Yi, D.; Coghill, G.; Pang, W. Multi-stage bias mitigation for individual fairness in algorithmic decisions. In IAPR Workshop on Artificial Neural Networks in Pattern Recognition; Springer International Publishing: New York, NY, USA, 2022.

84. Zhang, W.; Weiss, J.C. Fairness with censorship and group constraints. Knowl. Inf. Syst. 2023, 65, 2571–2594.

85. Welsch, A. Humans and AI: Designing Collaboration for A Better Future. 2024. Available online: https://intelligencebriefing.substack.com/p/human-ai-collaboration-for-adaptive-processes?utm_source=publication-search (accessed on 22 February 2024).

86. Lee, E. The Data Maturity Model: Master Your Data in 5 Easy Stages. 2024. Available online: https://kortxprod.wpenginepowered.com/news/data-maturity-model/ (accessed on 6 February 2024).

87. Wilson, R. A Guide to Take Your Third-Party Data to the Next Level. 2024. Available online: https://kortxprod.wpenginepowered.com/news/third-party-data/ (accessed on 6 February 2024).

| Stages | Awareness | Acquisition | Consideration | Select | Adopt | Usage | Retain | Expand |
|---|---|---|---|---|---|---|---|---|
| Phase | Pre-Sale Stage | Pre-Sale Stage | Pre-Sale Stage | Sale | Post-Sale Stage | Post-Sale Stage | Post-Sale Stage | Post-Sale Stage |
| Content | Content with messaging for awareness | Content with messaging for acquiring | Content with messaging for consideration | Content that is used for final sale/selection | Content with messaging on how to adopt the new purchase | Content with messaging on how to use the new purchase | Content that is used for customer loyalty and retention | Content to expand customers into purchasing other products |
| Channels | Channels that grab awareness: Brand (TV, Billboards, etc.) Paid Media Social Media Organic Search Website Pages Events | Channels that acquire: Account-based marketing Software Reviews Paid Media Emails Organic Search Website Pages Events | Channels that further consideration: Outbound Tele-sales Software Reviews Paid Media Emails Organic Search Website Pages Events | Channels that encourage selection: Free Trials Inbound tele-sales Marketplace websites Events Partners | Channels that encourage adoption: Emails Community Websites Learning Modules | Channels that encourage usage: Emails Community Websites Learning Modules Outbound Tele-sales | Channels that retain customer: Customer Success Events Community Websites | Channels that encourage expansion: Emails Website Outbound Tele-sales |

| Respondent Code | Job Profile | Years of Experience | Knowledge of AI |
|---|---|---|---|
| R01 | Strategic Marketing Project Manager | 3 Years | |
| R02 | Marketing Program Lead | 13 Years | |
| R03 | Content Marketing Lead | 12 Years | |
| R04 | Integrated Marketing Program Management | 10 Years | |
| R05 | Marketing Localization Strategy Lead | 15 Years | |
| R06 | Marketing Content Operations | 16 Years | |

| Coding | Prompting | Deployment |
|---|---|---|
| C1. Machine learning heuristics (quick, approximate solutions) drive AI speed and scalability, but often at the expense of accuracy and fairness [28]. Transparency and accountability are limited due to the proprietary nature of these algorithms, raising ethical concerns [29]. C2. Only 8–10% of software developers are female, and this imbalance can encode biases into algorithms, often unintentionally [31,32]. C3. Assumptions made by predominantly male developers can lead to unfair outcomes, particularly in culturally sensitive applications where debiasing efforts remain insufficient [20]. The European Union’s AI Act mandates debiasing, but loopholes allow companies to circumvent regulations based on production location, perpetuating inequalities and sustaining market dominance by former colonial powers [33]. C4. There are no global regulatory rules for AI; different countries, continents and political and economic unions are employing different approaches [4,27]. | P1. Generative AI learning from the users’ preferences. This can include any bias from the prompter who does not understand a culture but is generating content for their market; or any bias from the prompter who assumes their target audience characteristics (gender, age, location etc.) [R01, R02, R04]. P2. Marketers themselves can unintentionally corrupt AI models through adversarial attacks, altering input data, such as text or images, to mislead algorithms. These subtle manipulations compromise machine-learning models for all users [34]. P3. Lack of understanding and knowledge for correctly prompting an AI. “The art of prompting” is not something currently taught and so marketeers are having to use their own knowledge or research to learn how to prompt. To be aware of bias propagation they must currently use their own “moral compass” [R01, R02, R04, R05]. | D1. No identified failsafe in generative AI usage to flag biased prompts or inputs [R01, R02, R03, R04, R05, R06]. D2. Further training is required that is focused specifically on marketing use cases and projects. This includes prompting guidance or training and should be a continuous learning experience [R01, R02, R03, R04, R05, R06]. D3. Inconsistency of laws regarding AI and its usage allows Eurocentric marketing practices to occur. Those who are not culturally or linguistically fluent work on localized projects [R04, R05]. Eurocentric marketing practices are prevalent within large companies, where decisions are made on behalf of other markets by people who may not be aware of cultural norms and differences [21,33]. D4. Further Eurocentric focus can result from incomplete data integrity for research profiles. Persona research may just be done on one or two markets, adding bias into findings [R02]. D5. Usage of historical data for current data-driven decision making: such data for software buyers can be skewed by gender, age, demographics etc., and then used for current marketing where purchaser profiles are evolving to new demographics [R01, R03]. |

Please Now Rank the Value of Using AI in Digital Marketing (1 = Highest Ranked, 10 = Lowest Ranked)

| Respondent | 1st | 2nd | 3rd | 4th | 5th | 6th | 7th | 8th | 9th | 10th |
|---|---|---|---|---|---|---|---|---|---|---|
| R01 | Increased Conversion Rates | Increased Output | Work on Higher Value Activities | Improved Supplier Performance | Increased Visibility of Data | Higher Quality Output | Reduced Workload | Reduced Risk | Improved Brand Adherence | Increased Control |
| R02 | Work on Higher Value Activities | Improved Supplier Performance | Reduced Workload | Increased Output | Increased Conversion Rates | Higher Quality Output | Reduced Risk | Increased Control | Increased Visibility of Data | Improved Brand Adherence |
| R03 | Increased Output | Work on Higher Value Activities | Improved Supplier Performance | Reduced Workload | Higher Quality Output | Increased Conversion Rates | Increased Visibility of Data | Reduced Risk | Increased Control | Improved Brand Adherence |
| R04 | Reduced Workload | Increased Output | Improved Brand Adherence | Work on Higher Value Activities | Improved Supplier Performance | Increased Control | Higher Quality Output | Increased Visibility of Data | Increased Conversion Rates | Reduced Risk |
| R05 | Increased Output | Increased Conversion Rates | Work on Higher Value Activities | Reduced Workload | Increased Visibility of Data | Higher Quality Output | Improved Supplier Performance | Increased Control | Reduced Risk | Improved Brand Adherence |
| R06 | Work on Higher Value Activities | Increased Visibility of Data | Reduced Workload | Increased Conversion Rates | Improved Brand Adherence | Increased Control | Higher Quality Output | Increased Output | Reduced Risk | Improved Supplier Performance |
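One way a reader might summarise the six respondents' rankings is to average the position each benefit received. The sketch below is illustrative only: mean rank is an assumed aggregation, not a method attributed to the authors, and the names `RANKINGS`, `rank_sums` and `mean_ranks` are hypothetical. The data are transcribed from the ranking table.

```python
from collections import defaultdict

# Rankings transcribed from the table: list index 0 is 1st place, index 9 is 10th.
RANKINGS = {
    "R01": ["Increased Conversion Rates", "Increased Output", "Work on Higher Value Activities",
            "Improved Supplier Performance", "Increased Visibility of Data", "Higher Quality Output",
            "Reduced Workload", "Reduced Risk", "Improved Brand Adherence", "Increased Control"],
    "R02": ["Work on Higher Value Activities", "Improved Supplier Performance", "Reduced Workload",
            "Increased Output", "Increased Conversion Rates", "Higher Quality Output",
            "Reduced Risk", "Increased Control", "Increased Visibility of Data", "Improved Brand Adherence"],
    "R03": ["Increased Output", "Work on Higher Value Activities", "Improved Supplier Performance",
            "Reduced Workload", "Higher Quality Output", "Increased Conversion Rates",
            "Increased Visibility of Data", "Reduced Risk", "Increased Control", "Improved Brand Adherence"],
    "R04": ["Reduced Workload", "Increased Output", "Improved Brand Adherence",
            "Work on Higher Value Activities", "Improved Supplier Performance", "Increased Control",
            "Higher Quality Output", "Increased Visibility of Data", "Increased Conversion Rates", "Reduced Risk"],
    "R05": ["Increased Output", "Increased Conversion Rates", "Work on Higher Value Activities",
            "Reduced Workload", "Increased Visibility of Data", "Higher Quality Output",
            "Improved Supplier Performance", "Increased Control", "Reduced Risk", "Improved Brand Adherence"],
    "R06": ["Work on Higher Value Activities", "Increased Visibility of Data", "Reduced Workload",
            "Increased Conversion Rates", "Improved Brand Adherence", "Increased Control",
            "Higher Quality Output", "Increased Output", "Reduced Risk", "Improved Supplier Performance"],
}


def rank_sums(rankings):
    """Sum the 1-based position each benefit received across all respondents."""
    totals = defaultdict(int)
    for ordered in rankings.values():
        for position, benefit in enumerate(ordered, start=1):
            totals[benefit] += position
    return dict(totals)


def mean_ranks(rankings):
    """Return (benefit, mean rank) pairs, best (lowest) mean rank first."""
    n = len(rankings)
    pairs = [(benefit, total / n) for benefit, total in rank_sums(rankings).items()]
    # Ties are broken alphabetically so the ordering is deterministic.
    return sorted(pairs, key=lambda pair: (pair[1], pair[0]))


if __name__ == "__main__":
    for benefit, mean in mean_ranks(RANKINGS):
        print(f"{mean:5.2f}  {benefit}")
```

With only six respondents, a mean rank is a rough descriptive summary and should not be read as statistically meaningful.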

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).


Reed, C.; Wynn, M.; Bown, R. Artificial Intelligence in Digital Marketing: Towards an Analytical Framework for Revealing and Mitigating Bias. Big Data Cogn. Comput. 2025, 9, 40. https://doi.org/10.3390/bdcc9020040
