1. Introduction

In the last few years, cybercrime, which accounts for a 67% increase in the incidents of security breaches, has been one of the most challenging problems that national security systems have had to deal with worldwide [1]. Deepfakes (i.e., realistic-looking fake media generated by deep-learning algorithms) are widely used to swap faces or objects in video and other digital content. Because this artificial intelligence-synthesized content appears online in a wide variety of applications and formats (i.e., audio, image and video), it can make the legitimacy of media significantly harder to determine.

Considering the speed, ease of use, and reach of social media, persuasive deepfakes can rapidly influence millions of people, destroy the lives of their victims and have a negative impact on society in general [1]. Deepfake media are generated with a wide range of intentions and motivations, from revenge porn to political fake news. Rana Ayyub, an investigative journalist in India, became a target of this practice when a deepfake sex video showing her face on another woman’s body was circulated on the Internet in April 2018 [2]. Deepfakes have also been used to falsify satellite images with non-existent landscape features for malicious purposes [3].

Deepfakery has numerous legitimate applications in video compositing and portrait transfiguration, especially in identity protection, where it can replace faces in photographs with ones from a collection of stock images. Cyber-attackers, however, who also use strategies other than deepfakery, constantly aim to penetrate identification or authentication systems to gain illegitimate access. Identifying deepfake media with forensic methods remains an immense challenge, since attackers study newly published detection methods and immediately account for them in the next generation of deepfake generation methods. With the massive usage of the Internet and social media, and billions of images available online, there has been an immense loss of trust among social media users. Deepfakes are a significant threat to our society and to digital evidence in courts. It is therefore highly important to develop state-of-the-art techniques to identify deepfake media under criminal investigation.

As demonstrated in Table 1 (inspired by the figure presented in [1]), tampering of evidence, scams and frauds (i.e., fake news), digital kidnapping associated with ransomware blackmailing, revenge porn and political sabotage are among the types of deepfake activities with the highest level of intention to mislead [1].

The first deepfake content published on the Internet was a celebrity pornographic video created by a Reddit user (named deepfake) in 2017. The Generative Adversarial Network (GAN) was first introduced in 2014 and used for image-enhancement purposes only [4]. However, since the first published deepfake media, it has been unavoidable for deepfake and GAN technology to be put to malicious uses. In 2017, GANs were used to generate new facial images for malicious uses for the first time [5]. Following that, there has been constant development of other deepfake-based applications such as FakeApp and FaceSwap. In 2019, Deepnude was developed and provided undressed videos of the input data [6].

The widespread strategies used to manipulate multimedia files can be broadly categorized into copy–move, splicing, deepfake, and resampling [7]. Copy–move, splicing and resampling involve, respectively, repositioning the contents of a photo, overlapping different regions of multiple photos into a new one, and manipulating the scale and position of components of a photo. The final goal is to deceive the viewer into believing that the photograph contains more components than were initially present. Deepfake media, however, leveraging powerful machine-learning (ML) techniques, have significantly improved the manipulation of the contents. Deepfake can be considered a type of splicing, where a person’s face, sound, or actions in media are swapped with those of a fake target [8]. A wide set of cybercrime activities are usually associated with this type of manipulation technique, and while spreading deepfakes is easy, correcting the record and avoiding them is harder [9].

Consequently, it is becoming harder for machine-learning techniques to identify convolutional traces of deepfake generation algorithms, as this requires frequency-specific anomaly analysis. The most basic algorithms used to train models for deepfake detection, such as Support Vector Machine (SVM), Convolutional Neural Network (CNN), and Recurrent Neural Network (RNN), are now being coupled with multi-attentional [10] or ensemble [11] methods to increase performance and address the weaknesses of other methods. As proposed by [12], implementing an ensemble of standard and attention-based data-augmented detection networks can avoid the generalization issue of previous approaches. As such, it is of high importance to identify the most suitable algorithms for the backbone layers in multi-attentional and ensembled architectures. As generation of deepfake media only started in 2017, academic writing on the problem is meager [13]. Most of the developed and published methods are focused on deepfake videos. The main difference between deepfake video- and image-detection methods is that video-detection methods can leverage spatial features [14], spatio-temporal anomalies [15] and supervised domain [16] to draw a conclusion on the whole video by aggregating the inferred output both in time and across multiple faces. Deepfake image-detection techniques, however, have access to one face image only and mostly leverage pixel- [17] and noise-level analysis [18] to identify the traces of the manipulation method.

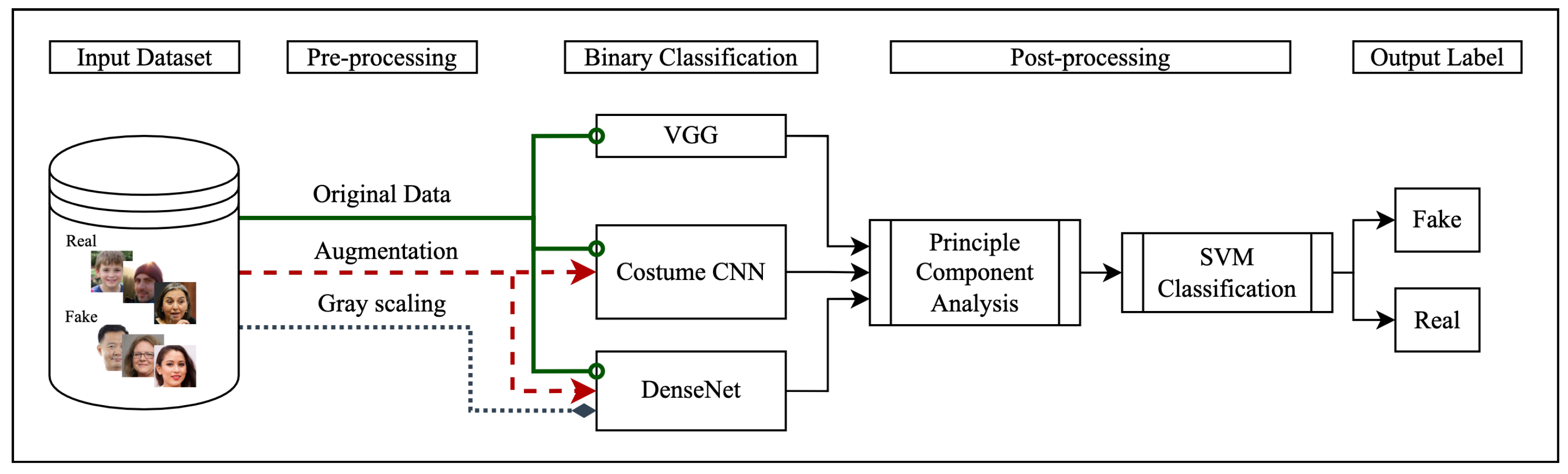

Therefore, identifying the most reliable methods for face-image forgery detection that rely on convolutional neural networks (CNNs) as the backbone for a binary classification task could provide valuable insight into future directions for the development of deepfake-detection techniques. The overall approach taken in this work is illustrated in Figure 1.

DenseNet has shown significant promise in the field of facial recognition. As an extension of the Residual CNN (ResNet) architecture, DenseNet addresses the low-supervision problem of its counterparts by connecting layers to one another within dense blocks. Inside a dense block, each layer receives the concatenated feature maps of all preceding layers, and transition layers (essentially batch normalization, convolution, and average pooling) sit between consecutive dense blocks. As such, gradients from the initial input and loss function are shared by all the layers. This design reduces the number of required parameters and feature maps, and consequently provides a less computationally expensive model. Therefore, we decided to test DenseNet’s capabilities and compare it with other neural network architectures.
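The effect of dense connectivity on the number of feature maps can be illustrated with simple channel arithmetic. The sketch below is illustrative only: the growth rate of 32, the 64 stem channels, the compression factor of 0.5 and the block sizes (6, 12, 24, 16) are assumptions taken from the DenseNet-121 configuration of the original DenseNet paper, not settings reported in this work, and the function names are hypothetical.

```python
def dense_block_channels(in_channels, num_layers, growth_rate):
    """Channels leaving a dense block: every layer appends `growth_rate` feature maps."""
    return in_channels + num_layers * growth_rate


def transition_channels(in_channels, compression=0.5):
    """Channels leaving a transition layer, whose 1x1 convolution compresses the maps."""
    return int(in_channels * compression)


def final_feature_width(block_sizes, growth_rate=32, stem_channels=64, compression=0.5):
    """Follow the channel count through alternating dense blocks and transitions."""
    channels = stem_channels
    for index, num_layers in enumerate(block_sizes):
        channels = dense_block_channels(channels, num_layers, growth_rate)
        # A transition layer follows every dense block except the last one.
        if index < len(block_sizes) - 1:
            channels = transition_channels(channels, compression)
    return channels


# Assumed DenseNet-121 block sizes; other variants only change this tuple.
print(final_feature_width((6, 12, 24, 16)))  # 1024
```

Because each layer adds only a small, fixed number of feature maps and reuses all earlier ones, the network stays comparatively narrow, which is the source of the parameter savings described above.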

VGG-19, an architecture that has been widely used to extract the features of detected face frames [19], was chosen for comparison with the DenseNet architecture. The VGG approach eases the face-annotation process by forming a large training dataset from online knowledge sources, which is then used to train deep CNNs for the task of face recognition. The resulting model is evaluated on face-recognition benchmarks to analyze how efficiently it generates facial features. During this process, VGG-19 is trained as a classifier with a sigmoid activation function in the output layer, producing a vector representation of facial features (face embedding) that is used to fine-tune the model. The fine-tuning process separates classes by Euclidean distance using a triplet loss function, which compares the embeddings of similar and different faces through learned score vectors. The CNN architecture in VGG-19 combines convolutional kernels with ReLU activation, followed by max-pooling layers and fully connected classifiers.

Finally, we have implemented a Custom CNN architecture to evaluate the performance of previously described algorithms and analyze the effectiveness of dropout, padding, augmentation and grayscale analysis on model performance.

This study aims to provide an in-depth analysis of the described algorithms, structures and mechanisms that could be leveraged in the implementation of an ensembled multi-attentional network to identify deepfake media. The result of this work contributes to the nascent literature on deepfakery by providing a comparative study of effective algorithms for deepfake detection on facial images, with a view to their possible use in digital forensics for criminal investigations.

The rest of this paper is organized as follows. Section 2 provides a literature review of the algorithms and datasets that are widely used for deepfake detection. Section 3 provides details on the analysis methods and configurations of the compared algorithms, as well as details on the tested dataset. Section 4 provides the results of the comparative analysis. Finally, Section 5 concludes with implications, limitations, and suggestions for future research.

3. Approach

Our proposed method for deepfake detection on images is shown in Figure 1. We have taken two different classification procedures in this work. As shown in both Figure 1 and Figure 3, input data goes through the same procedure with the same architecture; however, Figure 3 demonstrates a second round of analysis with an additional post-processing classification step that has been added to the last output layer of the analyzed models. The second round of analysis with additional post-processing was performed to analyze the effects of principal component analysis on the task of deepfake classification. Further details about the post-processing step are described in the final paragraphs of the evaluation subsection of this section.

3.1. Implementation

The input dataset is labeled and divided into two categories, real and fake. It is augmented for training purposes using the following specifications:

Rotation range of 20 for DenseNet and no rotation for the Custom CNN

Scaling factor of 1/255 was used for coefficient reduction

Shear range of 0.2 to randomly apply shearing transformations

Zoom range of 0.2 to randomly zoom inside pictures

Randomized images using horizontal and vertical flipping

After augmentation, the face images are classified as either fake or real using three different models: Custom CNN, VGG, and DenseNet. We defined two classes for our binary classification task: 0 denotes the real group (e.g., normal, validation, and disguised face images) and 1 denotes the fake group (e.g., impersonator face images).

The “Real and Fake Face-Detection” dataset was used to train the three models at a learning rate of 0.001 and for 10 epochs. The test accuracy was then calculated using the test set. We applied data augmentation to flip all original images horizontally and vertically, hence a three-fold increase of the dataset size (original image + horizontally flipped image + vertically flipped image).
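The three-fold increase obtained from flipping can be sketched as follows. This is a minimal illustration rather than the pipeline used in this work: images are represented as hypothetical nested lists of pixel values, and the function names are our own.

```python
def flip_horizontal(image):
    """Mirror an image left to right by reversing every row."""
    return [list(reversed(row)) for row in image]


def flip_vertical(image):
    """Mirror an image top to bottom by reversing the row order."""
    return [list(row) for row in reversed(image)]


def augment_with_flips(dataset):
    """Return each original plus its two flipped copies; labels are unchanged."""
    augmented = []
    for image, label in dataset:
        augmented.append((image, label))
        augmented.append((flip_horizontal(image), label))
        augmented.append((flip_vertical(image), label))
    return augmented


# Hypothetical 2x3 "images"; labels follow the convention 0 = real, 1 = fake.
toy_dataset = [
    ([[1, 2, 3], [4, 5, 6]], 0),
    ([[7, 8, 9], [0, 1, 2]], 1),
]
augmented = augment_with_flips(toy_dataset)
print(len(toy_dataset), "->", len(augmented))  # 2 -> 6
```

Flipping leaves the class of a face image untouched, which is why each flipped copy simply inherits the label of its original.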

The Custom CNN architecture included six convolution layers (Conv2D), each paired with batch normalization, max pooling and dropout layers. Rectified Linear Unit (ReLU) and sigmoid activation functions were applied for the input and output layers, respectively. Dropout was applied to each layer to minimize over-fitting, and padding was applied to the kernel to allow for a more accurate analysis of images. The Custom CNN architecture was trained and validated on the original and augmented datasets with a 1/255 scaling factor. Data augmentation was performed to observe the effects of data aggregation on model performance and to promote the generalizability of the findings. The augmentation process included a horizontal flip, a 0.2 zoom range and a shear range of 0.2, along with the rescaling factor, which prevents differences in image quality from influencing model behavior during classification, since not all the images had the same pixel-level quality.

Following a similar approach to [56], the VGG-19 model used in this work comprises 16 convolutional layers, three fully connected layers, five max-pooling layers and one SoftMax layer, modeled on the architectures in [56]. VGG-19 has been pretrained on a wide variety of object categories, which leads to its ability to learn rich feature representations. VGG-19 has demonstrated that it can provide a high accuracy level when classifying partial faces. This architecture reaches its highest accuracy when its size is increased [57]; therefore, we applied a high-end configuration by adding a dense layer after the last layer block, which provides the facial features, and a further dense layer as the output layer with a sigmoid activation function to fine-tune the model for the task of deepfake detection.

The DenseNet architecture used in this work is Keras’s DenseNet-264 architecture with an additional dense layer as the last output layer. This architecture starts with a 7 × 7 stride-2 convolutional layer followed by a 3 × 3 stride-2 max-pooling layer. It also includes four dense blocks paired with batch normalization and the ReLU activation function for the input layers, and the sigmoid activation function for the output layer. Furthermore, between consecutive dense blocks there are transition layers that include a 1 × 1 convolutional layer along with a 2 × 2 average pooling layer. The last dense block is followed by a classification layer that leverages the feature maps of all layers of the network for the task of classification, which we have coupled with a dense layer with the sigmoid activation function as the output layer. This model was trained on 100,000 images and validated on 20,000 images. It was also trained and validated on the original, grayscale and augmented datasets with a 1/255 scaling factor. We aimed to add to the diversity of the training data by augmenting the data for the DenseNet architecture with a horizontal flip and a rotation range of 20, along with the same rescaling procedure that was applied for the Custom CNN architecture. Because the pixel-level resolution of grayscale and color images differs, we also measured the importance of color for model behavior in classifying data into the fake and real categories by training the DenseNet architecture on grayscale-only data. The VGG architecture, however, was only trained and tested on the original dataset. All the analyzed models in this work are used as they were designed, with an additional custom dense layer with a sigmoid activation function. The rationale behind adding this layer to all models was to provide an activation suited to binary classification: the sigmoid produces a probability output in the range of 0 to 1 that can easily and automatically be converted to crisp class values.
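The conversion from a sigmoid output to a crisp class value can be sketched as follows. The decision threshold of 0.5 and the example scores are assumptions made for illustration; they are not values reported in this work.

```python
import math


def sigmoid(logit):
    """Map a real-valued score to a probability between 0 and 1 (numerically stable)."""
    if logit >= 0:
        return 1.0 / (1.0 + math.exp(-logit))
    exp_logit = math.exp(logit)
    return exp_logit / (1.0 + exp_logit)


def to_class(probability, threshold=0.5):
    """Convert a probability to a crisp label: 1 = fake, 0 = real."""
    return 1 if probability >= threshold else 0


# Hypothetical raw scores produced by the final dense unit for three images.
logits = [-2.0, 0.0, 3.0]
probabilities = [sigmoid(z) for z in logits]
labels = [to_class(p) for p in probabilities]
print(labels)  # [0, 1, 1]
```

Moving the threshold away from 0.5 trades false positives against false negatives, which is what the threshold-based measures in the evaluation subsection summarize.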

3.2. Evaluation

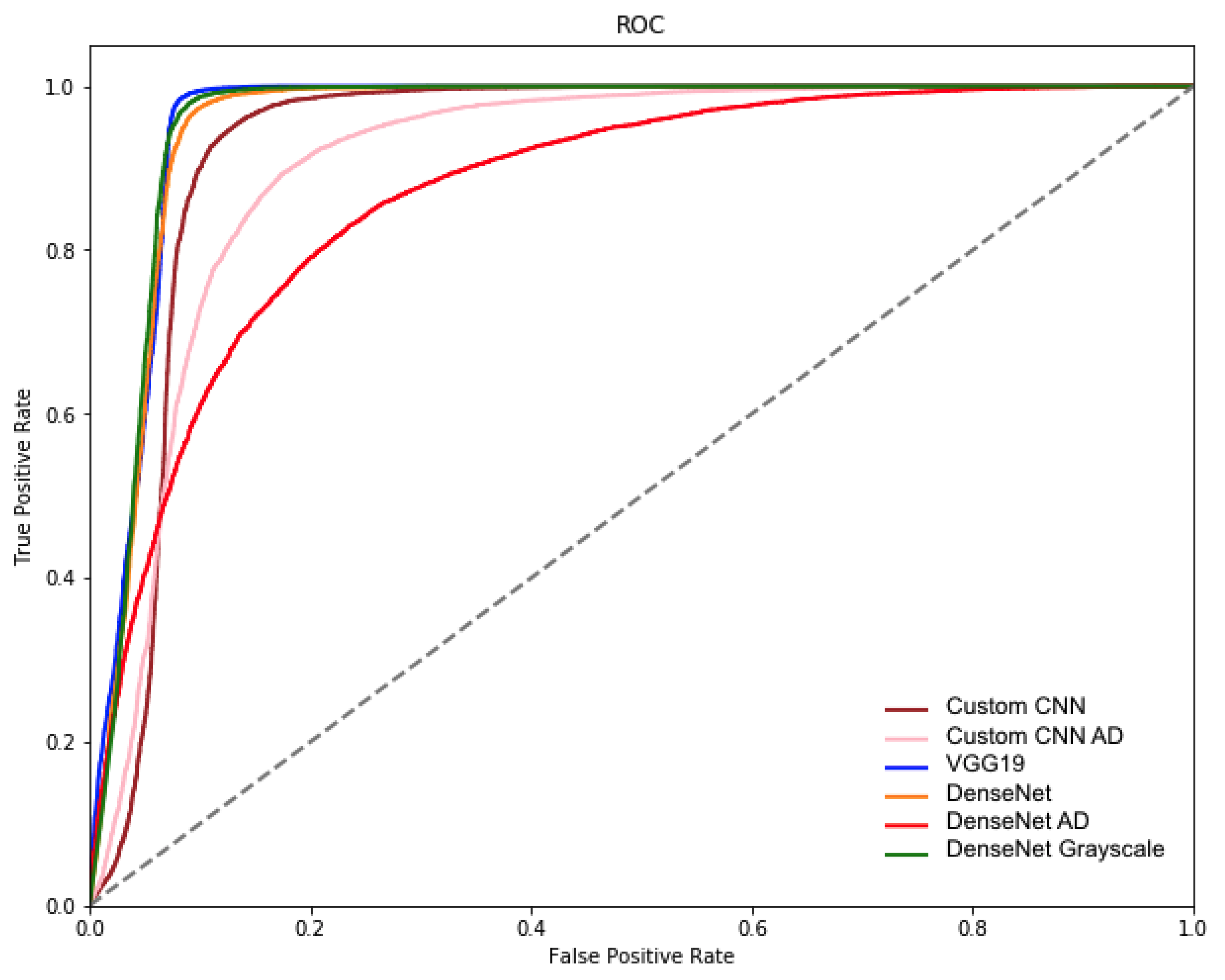

The performance of the described models is assessed with accuracy, precision, recall, F1-score, average precision (AP) and area under the ROC curve.

Accuracy, simply put, indicates how often the model prediction matches the target or actual value (fake vs. real), that is, how many correct predictions the model made among all the predictions it made. Equation (1) gives the formula used to calculate accuracy, where TPR stands for the number of true (correct) predictions and TOPR stands for the total number of predictions made by the model.
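Written out from the definitions above, and assuming the usual ratio of correct to total predictions, Equation (1) takes the form:

```latex
\text{Accuracy} = \frac{TPR}{TOPR} \tag{1}
```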

Precision, on the other hand, measures how many of the samples that the model labeled as positive are truly positive according to the target label. Equation (2) gives this ratio, the proportion of positive identifications by the model that were actually correct. TP in Equation (2) stands for the number of true positives and FP stands for the number of false positives.
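In terms of these counts, and assuming the standard definition of precision, Equation (2) can be written as:

```latex
\text{Precision} = \frac{TP}{TP + FP} \tag{2}
```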

Recall is the proportion of actual positives that the model identified correctly. Equation (3) gives this ratio, where TP is the number of true positives and FN is the number of false negatives. Recall is intuitively the ability of the classifier to find all the positive samples.
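With the same notation, and assuming the standard definition of recall, Equation (3) can be written as:

```latex
\text{Recall} = \frac{TP}{TP + FN} \tag{3}
```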

The F1-score takes both precision and recall into account and balances them, indicating the model’s ability to identify positive samples without producing many false positives or false negatives. The F1-score can be interpreted as the harmonic mean of precision and recall. For the task of deepfake classification, the F1-score is a better measure to assess model performance, since both classes are of importance and the relative contributions of precision and recall to the F1-score are equal. Equation (4) demonstrates how the F1-score is calculated.
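Accuracy, precision, recall and the F1-score can all be computed directly from confusion counts. The following is a minimal sketch and not the evaluation code used in this work: the function names and the toy labels are hypothetical, and the F1-score is computed in its standard harmonic-mean form, 2PR / (P + R), which is assumed here to be the content of Equation (4).

```python
def confusion_counts(y_true, y_pred):
    """Count true/false positives and negatives for labels 1 = fake, 0 = real."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)
    tn = sum(1 for t, p in pairs if t == 0 and p == 0)
    return tp, fp, fn, tn


def classification_metrics(y_true, y_pred):
    """Return accuracy, precision, recall and F1; empty denominators yield 0.0."""
    tp, fp, fn, tn = confusion_counts(y_true, y_pred)
    accuracy = (tp + tn) / len(y_true)  # correct predictions / total predictions
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    denominator = precision + recall
    f1 = 2 * precision * recall / denominator if denominator else 0.0
    return {"accuracy": accuracy, "precision": precision, "recall": recall, "f1": f1}


# Hypothetical labels for eight images (not results from this study).
y_true = [1, 1, 1, 0, 0, 0, 1, 0]
y_pred = [1, 0, 1, 0, 1, 0, 1, 0]
metrics = classification_metrics(y_true, y_pred)
print(metrics)
```

In this toy case there are three true positives, one false positive, one false negative and three true negatives, so all four measures equal 0.75.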

Average Precision (AP) was used as an aggregation function for the task of object detection to summarize the precision–recall curve as the weighted mean of the precision achieved at each threshold, with the increase in recall from the previous threshold used as the weight, based on Equation (5), where Rn and Pn are the recall and precision at the nth threshold [58].
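The weighted sum described above, i.e., the sum over thresholds of (Rn − Rn−1) · Pn, can be sketched in a few lines. This is an illustrative implementation under the assumption that thresholds are placed at each distinct score, from highest to lowest; the function name and the example scores are hypothetical.

```python
def average_precision(y_true, scores):
    """AP = sum over thresholds of (R_n - R_{n-1}) * P_n, one threshold per distinct score."""
    total_positives = sum(y_true)
    if total_positives == 0:
        raise ValueError("average precision is undefined without positive samples")
    ranked = sorted(zip(scores, y_true), key=lambda pair: pair[0], reverse=True)
    ap = 0.0
    true_positives = 0
    predicted_positives = 0
    previous_recall = 0.0
    i = 0
    while i < len(ranked):
        threshold = ranked[i][0]
        # Admit every sample whose score ties with the current threshold.
        while i < len(ranked) and ranked[i][0] == threshold:
            true_positives += ranked[i][1]
            predicted_positives += 1
            i += 1
        precision = true_positives / predicted_positives
        recall = true_positives / total_positives
        ap += (recall - previous_recall) * precision
        previous_recall = recall
    return ap


# Hypothetical labels (1 = fake) and predicted probabilities of being fake.
y_true = [1, 0, 1, 1, 0]
scores = [0.9, 0.8, 0.7, 0.4, 0.2]
print(round(average_precision(y_true, scores), 4))  # 0.8056
```

Only thresholds at which recall increases contribute to the sum, so a model that ranks all fake images above all real ones reaches an AP of 1.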

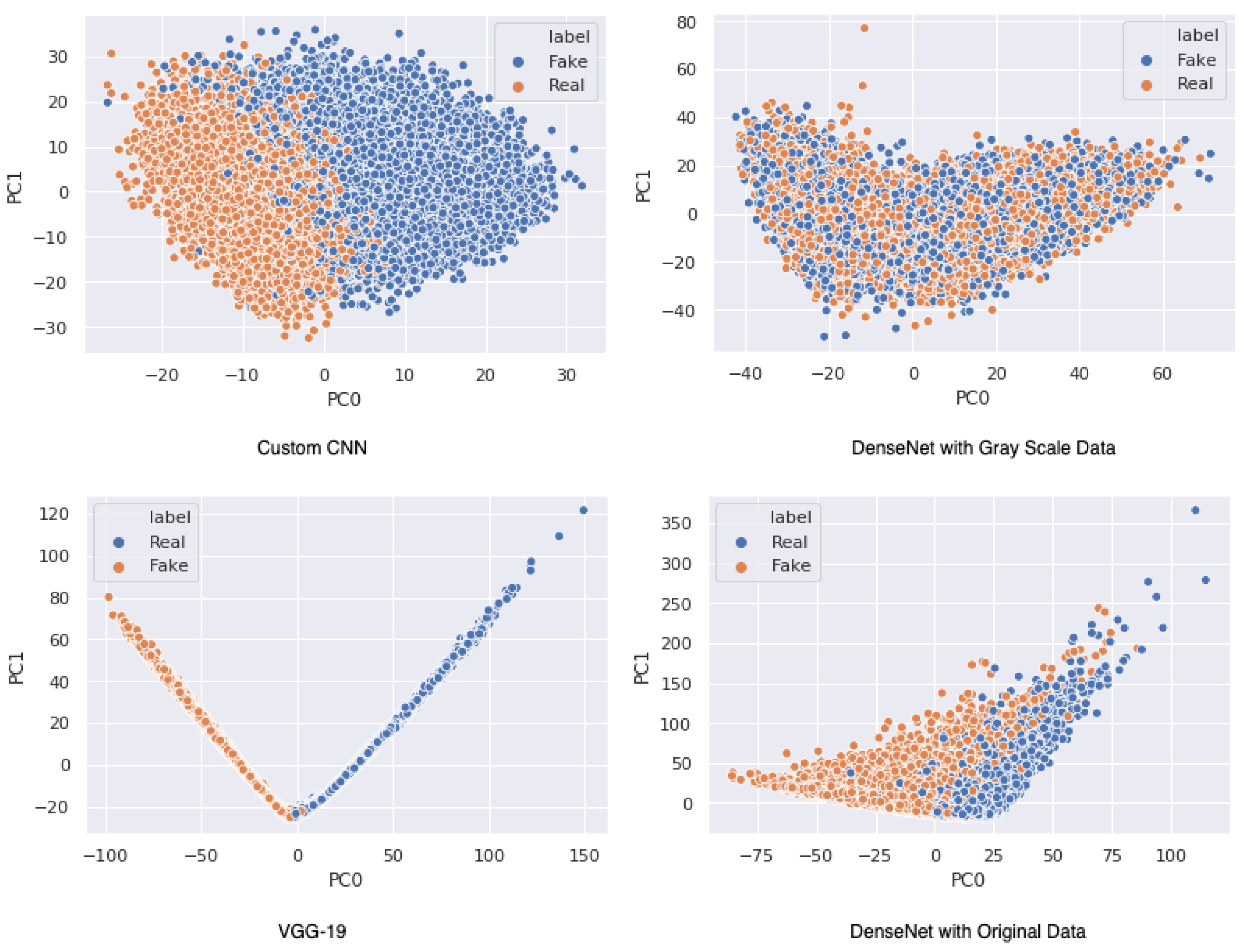

Finally, as shown in Figure 3, the output vectors of the final hidden layer of the analyzed architectures were extracted and treated as a representation of the images. Dimensions of the vectors for the Custom CNN, VGG-19 and DenseNet architectures were 512, 2048 and 1024, respectively. Principal Component Analysis (PCA) was then performed to reduce these vectors to their 50 most dominant principal components. The resulting vectors from the PCA were fed into a support vector machine (SVM) to classify them into the two classes of real and fake.
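The dimensionality-reduction step can be sketched with NumPy as follows. This is a minimal illustration under stated assumptions, not the implementation used in this work: PCA is computed through a singular value decomposition, the embeddings are random stand-ins for the extracted vectors, and the function name is hypothetical. The subsequent SVM step is not shown.

```python
import numpy as np


def pca_project(features, n_components):
    """Project row vectors onto their leading principal components via SVD."""
    centered = features - features.mean(axis=0)
    # Rows of vt are the principal directions, ordered by decreasing variance.
    _, _, vt = np.linalg.svd(centered, full_matrices=False)
    return centered @ vt[:n_components].T


rng = np.random.default_rng(0)
# Random stand-in for extracted embeddings: 200 images with 512 dimensions each.
embeddings = rng.normal(size=(200, 512))
reduced = pca_project(embeddings, n_components=50)
print(reduced.shape)  # (200, 50)
```

Each row of `reduced` is the 50-dimensional representation of one image, which is the form of input that would then be passed to the SVM classifier.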

5. Conclusions and Future Work

The results of our work demonstrated that deep-learning architectures are reliable and accurate at distinguishing fake vs. real images; however, detection of the minimal inaccuracies and misclassifications remains a critical area of research. Recent efforts have focused on improving the algorithms that create deepfakes by adding specially designed noise to digital photographs or videos that is not visible to human eyes and can fool face-detection algorithms [61]. The results of our work indicate that VGG-19 performed best, taking accuracy, F1-score, precision, AUC-ROC and PCA-SVM measures into account. DenseNet had a slightly better performance in terms of AP, and the results from the Custom CNN trained on original data were satisfactory too. This suggests that aggregation of the results from multiple models, i.e., ensemble or multi-attention approaches, can be more robust in distinguishing deepfake media.

Future work could also leverage unsupervised clustering methods such as auto-encoders to analyze their effectiveness on the task of deepfake classification and provide a better interpretation of the CNN algorithms designed in this work. Classification methods could also be developed that would examine images/videos uploaded by social media users and flag them before they are posted on the Internet, to avoid the spread of misinformation [62]. We plan to further improve performance with deep-learning algorithms as well as to explore the application of steganography, steganalysis and cryptography in the identification and classification of genuine and disguised face images [63]. Future work must not only include collecting and experimenting with different disguised classifiers, but also develop training data that can improve the performance of the implemented architectures, as suggested by [33]. The authors plan to explore the use of information pellets in the development of an ensemble framework. As suggested in [64], using a patch-based fuzzy rough set feature-selection strategy can preserve the discrimination ability of original patches. Such an implementation can assist in anomaly detection for the task of deepfake detection. By integrating the local-to-global feature-learning method with a multi-attention and ensemble-modeling (holistic, feature-based, noise-level, steganographic) approach, we believe we can achieve performance superior to the current state-of-the-art methods. Considering the limitations of the Eff-YNet network developed by [55], which has an advantage in examining visual differences within individual frames, analyzing EfficientNet performance on the deepfake image datasets used in this work can be another direction for future work, as it may identify another suitable baseline model for ensembled approaches.