A Model-Driven Engineering Approach to AI-Powered Healthcare Platforms

Generative AI in Developing Countries: Adoption Dynamics in Vietnamese Local Government

New Concept of Digital Learning Space for Health Professional Students: Quantitative Research Analysis on Perceptions

Visual Harmony Between Avatar Appearance and On-Avatar Text: Effects on Self-Expression Fit and Interpersonal Perception in Social VR

C-STEER: A Dynamic Sentiment-Aware Framework for Fake News Detection with Lifecycle Emotional Evolution

Journal Description

Informatics is an international, peer-reviewed, open access journal on information and communication technologies, human–computer interaction, and social informatics, and is published monthly online by MDPI.

- Open Access: free for readers, with article processing charges (APC) paid by authors or their institutions.

- High Visibility: indexed within Scopus, ESCI (Web of Science), dblp, and other databases.

- Journal Rank: CiteScore - Q1 (Communication)

- Rapid Publication: manuscripts are peer-reviewed and a first decision is provided to authors approximately 32.1 days after submission; acceptance to publication is undertaken in 4.2 days (median values for papers published in this journal in the second half of 2025).

- Recognition of Reviewers: reviewers who provide timely, thorough peer-review reports receive vouchers entitling them to a discount on the APC of their next publication in any MDPI journal, in appreciation of the work done.

- Journal Cluster of Information Systems and Technology: Analytics, Applied System Innovation, Cryptography, Data, Digital, Informatics, Information, Journal of Cybersecurity and Privacy and Multimedia.

Impact Factor: 2.8 (2024); 5-Year Impact Factor: 3.1 (2024)

Latest Articles

Augmented Reality as a Tool for 5G Learning: Interactive Visualization of NSA/SA Architectures and Network Components

Informatics 2026, 13(4), 58; https://doi.org/10.3390/informatics13040058 - 3 Apr 2026

Abstract

The rapid advancement of digital and mobile technologies has reshaped the educational landscape, fostering the adoption of interactive and learner-centered methodologies. Among these, immersive technologies such as Augmented Reality (AR), when coupled with next-generation wireless communication systems, hold the potential to revolutionize knowledge acquisition and student engagement. In this paper, we present the design and development of an AR-based educational tool specifically oriented to teaching concepts of fifth-generation (5G) mobile networks. The tool provides a real-time interactive visualization of 3D network components on mobile devices, enabling learners to explore 5G NSA/SA architectures in an accessible manner with real-world environments through mobile devices and their integrated cameras. The application was developed using Blender for 3D modeling and Unity as the rendering engine, incorporating the Vuforia SDK for marker-based AR tracking, and it was deployed on the Android operating system. Unlike traditional static approaches, the proposed solution enables learners to explore complex network architectures and key functionalities of 5G in an interactive and accessible manner. To assess its perceived effectiveness, quantitative surveys were conducted with both university and high school students, focusing on usability, engagement, and perceived learning outcomes. Results indicate that the tool is user-friendly, enhances motivation, and supports conceptual understanding of 5G technologies, as perceived by participants. These findings highlight the potential of AR, supported by advanced wireless networks, as a pedagogical strategy to improve STEM education and foster technological literacy in the era of digital transformation.

Open Access Article

A Multimodal Vision–Language Framework for Intelligent Detection and Semantic Interpretation of Urban Waste

by

Verda Misimi Jonuzi and Igor Mishkovski

Informatics 2026, 13(4), 57; https://doi.org/10.3390/informatics13040057 - 3 Apr 2026

Abstract

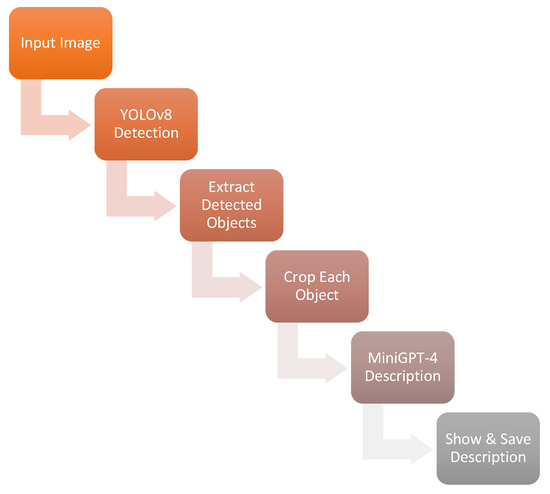

Urban waste management remains a significant challenge for achieving environmental sustainability and advancing smart city infrastructures. This study proposes a multimodal vision–language framework that integrates real-time object detection with automated semantic interpretation and structured semantic analysis for intelligent urban waste monitoring. A custom dataset including 2247 manually annotated images was constructed from publicly available sources (TrashNet and TACO), enabling robust multi-class detection across six waste categories. Two state-of-the-art object detection models, YOLOv8m and YOLOv10m, were trained and evaluated using a fixed 70/15/15 train–validation–test split. Under this configuration, YOLOv8m achieved a mAP@50 of 90.5% and a mAP@50–95 of 87.1%, slightly outperforming YOLOv10m (89.5% and 86.0%, respectively). Moreover, YOLOv8m demonstrated superior inference efficiency, reaching 120 FPS compared to 105 FPS for YOLOv10m. To obtain a more reliable estimate of performance stability across data partitions, stratified 5-Fold Cross-Validation was conducted. YOLOv8m achieved an average Precision of 0.9324 and an average mAP@50–95 of 0.9315 ± 0.0575 across folds, suggesting generally stable performance across data partitions, while also revealing variability associated with dataset heterogeneity. Beyond object detection, the framework integrates MiniGPT-4 to generate context-aware textual descriptions of detected waste items, thereby enhancing semantic interpretability and user engagement. Furthermore, GPT-5 Vision is incorporated as a structured auxiliary semantic classification and category-suggestion module that analyzes object crops and multi-class scenes, producing constrained JSON-formatted outputs that include category labels, concise descriptions, and recyclability indicators. 
Overall, the proposed YOLOv8–MiniGPT-4–GPT-5 Vision pipeline shows that combining accurate real-time detection with multimodal semantic reasoning can improve interpretability and support interactive, semantically enriched waste analysis in smart-city and environmental monitoring scenarios.
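
The constrained JSON output mentioned in this abstract lends itself to a mechanical check before the result is used downstream. The following is a minimal Python sketch under stated assumptions: the field names (`category`, `description`, `recyclable`) and the category list (the six TrashNet class names) are hypothetical and are not taken from the paper.

```python
import json

# Assumed for illustration only: these field names and category labels are
# hypothetical and do not come from the paper.
ALLOWED_CATEGORIES = {"cardboard", "glass", "metal", "paper", "plastic", "trash"}
REQUIRED_FIELDS = {"category": str, "description": str, "recyclable": bool}


def parse_waste_record(raw: str) -> dict:
    """Parse one model response and reject anything outside the assumed schema."""
    record = json.loads(raw)  # malformed JSON raises json.JSONDecodeError (a ValueError)
    if not isinstance(record, dict):
        raise ValueError("expected a JSON object")
    for field, expected_type in REQUIRED_FIELDS.items():
        if not isinstance(record.get(field), expected_type):
            raise ValueError(f"field {field!r} is missing or not {expected_type.__name__}")
    if record["category"] not in ALLOWED_CATEGORIES:
        raise ValueError(f"unknown category: {record['category']!r}")
    return record


sample = '{"category": "glass", "description": "Clear bottle", "recyclable": true}'
record = parse_waste_record(sample)
print(record["category"], record["recyclable"])
```

Rejecting unknown categories outright, rather than mapping them to a default, keeps any drift in the model's output visible to whoever operates the pipeline.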

(This article belongs to the Section Machine Learning)

Open Access Article

Assessment Validity in the Age of Generative AI: A Natural Experiment

by

Håvar Brattli, Alexander Utne and Matthew Lynch

Informatics 2026, 13(4), 56; https://doi.org/10.3390/informatics13040056 - 3 Apr 2026

Abstract

Universities play a dual role as sites of learning and as institutions that certify student competence through assessment. The rapid diffusion of generative artificial intelligence (GenAI) challenges this certification function by altering the conditions under which assessment evidence is produced. When powerful AI tools are widely available, grades may increasingly reflect a combination of individual understanding and external cognitive support rather than solely independent competence. This study examines how changes in assessment format interact with GenAI availability to reshape observable performance outcomes in higher education. Using exam grade data from a compulsory undergraduate course delivered over five years (2021–2025; N = 1066), the study exploits a naturally occurring change in assessment conditions as a natural experiment. From 2021 to 2024, the course was assessed using an AI-permissive take-home examination, while in 2025 the assessment shifted to an AI-restricted, supervised in-person examination. Course content, intended learning outcomes, grading criteria, examiner continuity, and the structural design of the examination tasks remained stable across cohorts. The results reveal a pronounced shift in grade distributions coinciding with the format change. Failure rates increased sharply in 2025, mid-range grades declined, and the proportion of top grades remained largely unchanged. Statistical analysis indicates a significant association between examination period and grade outcomes (χ²(5, N = 1066) = 60.62, p < 0.001), with a small-to-moderate effect size (Cramér’s V = 0.24), driven primarily by the increase in failing grades. These findings suggest that AI-permissive and AI-restricted assessment formats may not be measurement-equivalent under conditions of widespread GenAI use.
The results raise concerns about construct validity and the credibility of grades as signals of independent competence, while also highlighting tensions between certification credibility and assessment authenticity.
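
The reported effect size can be reproduced from the chi-square statistic and sample size given in this abstract, using the standard definition of Cramér's V. The sketch below is a rough Python check. It assumes a 2 × 6 contingency table (two examination periods by six grade categories), a shape inferred from the five degrees of freedom and not stated in the abstract.

```python
import math


def cramers_v(chi2: float, n: int, n_rows: int, n_cols: int) -> float:
    """Cramér's V = sqrt(chi2 / (n * min(rows - 1, cols - 1)))."""
    k = min(n_rows - 1, n_cols - 1)
    if n <= 0 or k <= 0:
        raise ValueError("need n > 0 and at least a 2 x 2 table")
    return math.sqrt(chi2 / (n * k))


# chi2 and n are the values reported in the abstract. The 2 x 6 table shape is
# an assumption consistent with df = (2 - 1) * (6 - 1) = 5.
v = cramers_v(chi2=60.62, n=1066, n_rows=2, n_cols=6)
print(round(v, 2))  # 0.24
```

Under that assumption the computed value rounds to the 0.24 reported in the abstract.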

(This article belongs to the Special Issue Generative AI in Higher Education: Applications, Implications, and Future Directions)

Open Access Review

Toward Network-Managed 5G Fixed Wireless Access: Technologies, Challenges, and Future Directions

by

Asri Wulandari, Muhammad Suryanegara and Dadang Gunawan

Informatics 2026, 13(4), 55; https://doi.org/10.3390/informatics13040055 - 3 Apr 2026

Abstract

The increasing digitalization of industrial ecosystems under the Industrial Revolution 4.0 has intensified the demand for fast, reliable, and inclusive broadband connectivity. The expansion of 5G technology led by data-driven services addresses the growing demand for high-capacity, low-latency communication through Fixed Wireless Access (FWA) as a cost-effective broadband solution. FWA is a wireless broadband access technology that provides high-speed connectivity to fixed locations using 5G New Radio (NR) infrastructure instead of physical fiber networks, while reducing deployment time and infrastructure investment. This review examines the technical challenges, economic and business implications, and comparative performance of 5G FWA relative to other broadband technologies. It also examines the implementation of Enhanced Telecom Operations Map (eTOM) in several telecommunication network functions. The analysis indicates that successful 5G FWA implementation requires not only technical optimization, but also the adoption of standardized, scalable, and AI-driven network management practices. Emphasis is placed on the role of the eTOM as a structured framework for aligning technical, operational, and organizational processes in FWA deployment. This review highlights how eTOM can support readiness assessment, process harmonization, and lifecycle management to ensure consistent and efficient service delivery. This study provides a comprehensive reference for researchers and industry stakeholders in developing sustainable and future-ready 5G FWA networks.

Full article

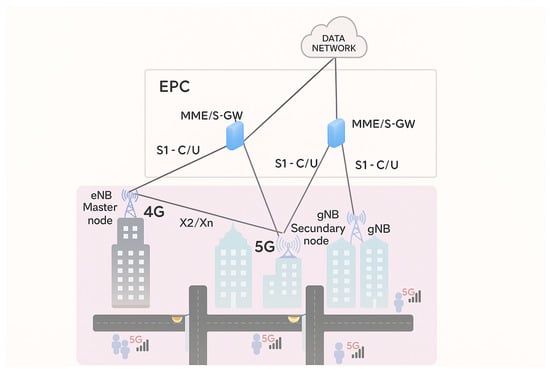

Figure 1

Open Access Article

Cybersecurity Challenges in Hospitals: International Incident Reports Analysis and Expert Validation

by

Grigori Rogge and Sabine Bohnet-Joschko

Informatics 2026, 13(4), 54; https://doi.org/10.3390/informatics13040054 - 2 Apr 2026

Abstract

►▼

Show Figures

The healthcare sector is undergoing a digital transformation that improves the quality of care, increases efficiency, and enhances connectivity. With digitalization comes an increase in cyber threats. Hospitals are among the primary targets of cybercriminals. Adequate protective measures require knowledge and analysis of

[...] Read more.

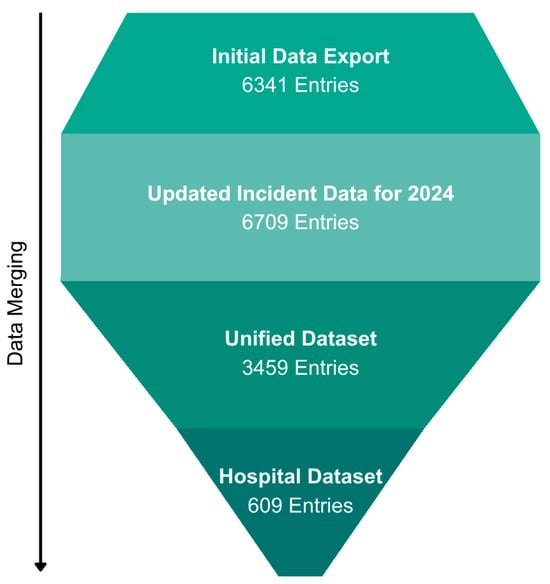

The healthcare sector is undergoing a digital transformation that improves the quality of care, increases efficiency, and enhances connectivity. With digitalization comes an increase in cyber threats. Hospitals are among the primary targets of cybercriminals. Adequate protective measures require knowledge and analysis of frequently occurring incidents. This study aimed to identify types of cyber risks and to evaluate factors influencing incident occurrence using a mixed-methods approach. Data on cyber incidents and data breaches from 2021 to 2024 were consolidated from five publicly accessible international datasets into a single unified dataset with 3459 entries and analyzed with a focus on hospital incidents. Results showed that hacking, especially involving ransomware, poses a key security risk in hospitals. The results were then discussed in four focus groups with 14 IT experts from hospitals. They highlighted threats and potential conflicts arising from the integration of new technologies, including the escalation of external risks as hacking activities become more organized and professionalized. The need for openly accessible and understandable data on hospital cyber risks, as well as for collaborative exchange among institutions, was emphasized. The study identifies gaps in current knowledge regarding the integration of technology into hospital networks, suggesting directions for future research.

Open Access Article

Quality Assessment of Generative AI in Cybersecurity Certification

by

Vanessa G. Félix, Rodolfo Ostos, Luis J. Mena, Homero Toral-Cruz, Alberto Ochoa-Brust, Pablo Velarde-Alvarado, Apolinar González-Potes, Ramón A. Félix-Cuadras, José A. León-Borges and Rafael Martínez-Peláez

Informatics 2026, 13(4), 53; https://doi.org/10.3390/informatics13040053 - 30 Mar 2026

Abstract

Generative Artificial Intelligence (GenAI), particularly Large Language Models (LLMs), is rapidly changing how higher education approaches teaching, learning, and assessment. In cybersecurity education, professional certification exams are key for measuring competence and helping professionals find better job offers, but there is little research on how GenAI systems perform in these exam settings. This study looks at how three popular LLMs, ChatGPT-5, Gemini-2.5 Pro, and Copilot-2.5 Pro, handle 183 practice questions from the CompTIA Security+ certification. The study used a two-phase evaluation: a domain-based assessment and a full-length practice exam that mirrors real certification tests. The researchers measured model performance with accuracy scores, chi-square tests for statistical differences, and an error taxonomy to spot patterns of mistakes important for education. All three GenAI systems scored above the passing mark, and there were no significant differences between them. Still, the error analysis showed ongoing conceptual and classification mistakes that did not show up in the overall accuracy scores. Our results show that GenAI systems can pass structured certification tests, but accuracy by itself does not fully measure professional skills. The study points out important issues for the reliability and validity of AI-based assessments in higher education and stresses the need for more realistic, concept-focused ways to evaluate GenAI in cybersecurity education.

(This article belongs to the Special Issue Generative AI in Higher Education: Applications, Implications, and Future Directions)

Open Access Article

Tax Professionals’ Perceptions, Compliance Costs, and Compliance Intentions Under Indonesia’s Core Tax Administration System

by

Prianto Budi Saptono, Gustofan Mahmud, Ismail Khozen, Arfah Habib Saragih, Wulandari Kartika Sari, Adang Hendrawan and Milla Sepliana Setyowati

Informatics 2026, 13(4), 52; https://doi.org/10.3390/informatics13040052 - 27 Mar 2026

Abstract

This study provides an early evaluation of the effectiveness of the Core Tax Administration System, a digital taxation platform introduced to integrate all tax administration processes in Indonesia into a single system. To conduct this evaluation, the study integrates two of the most established frameworks in the information systems literature, namely the DeLone and McLean Information Systems Success Model and the Technology Acceptance Model. Tax professionals are involved in the evaluation process because they are the primary users of the system and possess advanced knowledge of taxation. Structural equation modeling is employed as the analytical technique. The results indicate that system usage generates individual-level benefits by reducing perceived compliance costs, which in turn translate into organizational-level outcomes in the form of increased tax compliance intentions. However, the non-linear effect analysis reveals that this relationship is not entirely linear but follows an inverted U-shaped pattern. This finding suggests that over time, highly routine system usage may reduce professional vigilance by fostering excessive reliance on automated features and superficial processing. Such dependence can weaken perceived efficiency gains and diminish intrinsic motivation for careful and accurate reporting, highlighting the importance of balancing efficiency with system design features that support professional judgment and vigilance.

Open Access Review

Ethics in Artificial Intelligence: A Cross-Sectoral Review of 2019–2025

by

Charalampos M. Liapis, Nikos Fazakis, Sotiris Kotsiantis and Yannis Dimakopoulos

Informatics 2026, 13(4), 51; https://doi.org/10.3390/informatics13040051 - 27 Mar 2026

Abstract

Artificial Intelligence (AI) has transitioned from a specialized research area to a ubiquitous socio-technical infrastructure influencing sectors from healthcare and law to manufacturing and defense. In tandem with its transformative promise, AI has created an exponentially expanding ethics literature questioning fairness, transparency, accountability, and justice. This review synthesizes publications and key policy developments between 2019 and 2025, bringing sectoral discourses together with cross-cutting frameworks. Grounded in a systematic scoping review methodology, we frame the field along four meta-dimensions: trust and transparency, bias and fairness, governance and regulation, and justice, while we investigate their expression across diverse sectors. Special attention is dedicated to healthcare (patient trust and algorithmic bias), education (integrity and authorship), media (misinformation), law (accountability), and the industrial sector (data integrity, intellectual property protection, and environmental safety). We ground abstract principles in concrete case studies to illustrate real-world harms and mitigation strategies. Furthermore, we incorporate pluralistic ethics (e.g., Ubuntu, Islamic perspectives), environmental ethics, and emerging challenges posed by Generative AI and neuro-AI interfaces. To bridge theory and practice, we propose an operational governance framework for organizations. We contend that success involves transitioning from principles toward ethics-by-design, pluralistic governance, sustainability, and adaptive oversight. This review is intended for scholars, practitioners, and policymakers who need a comprehensive and actionable framework for navigating the complex landscape of AI ethics.

Open Access Article

Data Mining to Identify Factors Associated with University Student Retention

by

Yuri Reina Marín, Lenin Quiñones Huatangari, Judith Nathaly Alva Tuesta, Omer Cruz Caro, Jorge Luis Maicelo Guevara, Einstein Sánchez Bardales and River Chávez Santos

Informatics 2026, 13(4), 50; https://doi.org/10.3390/informatics13040050 - 27 Mar 2026

Abstract

Student retention has become a major challenge for higher education institutions due to the influence that academic, socioeconomic, family, and motivational factors exert on students’ academic continuity. In this context, understanding the determinants that explain university persistence is essential for designing effective retention strategies. Based on the analysis of factors related to motivation, commitment, attitude, academic integration, and social and economic conditions, retention patterns were examined in a population of 532 university students, of whom 57.7% showed high retention, 38.2% medium retention, and 4.1% low retention. To identify the factors with the greatest influence on academic continuity, educational data mining techniques and supervised classification models were applied and evaluated using stratified 10-fold cross-validation. Tree-based ensemble models showed the most consistent predictive performance, with Random Forest achieving the best results (accuracy = 0.729 ± 0.058; F1-macro = 0.636 ± 0.136). Model interpretability was examined through SHAP analysis, which revealed that transportation conditions (0.249), task completion (0.170), absence of work obligations (0.168), and course completion (0.164) were the most influential predictors in the classification of retention levels. In addition, sensitivity analysis indicated that academic commitment accounts for 41.6% of the predictive impact, followed by motivation (23.5%). These findings demonstrate that student retention is shaped by the interaction of academic, motivational, and contextual factors and provide practical implications for the development of early warning systems, personalized tutoring programs, psychosocial support initiatives, and financial assistance policies aimed at strengthening university retention.
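
The gap between accuracy (0.729) and macro-averaged F1 (0.636) in this abstract is consistent with the unbalanced class shares it reports (57.7% / 38.2% / 4.1%), because macro-F1 weights every class equally regardless of size. The pure-Python sketch below illustrates the effect on ten made-up labels. The labels are invented for illustration and are not the study's data.

```python
def accuracy(y_true, y_pred):
    """Fraction of predictions that match the true label."""
    return sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)


def macro_f1(y_true, y_pred):
    """Unweighted mean of per-class F1 scores, F1 = 2TP / (2TP + FP + FN)."""
    labels = sorted(set(y_true) | set(y_pred))
    scores = []
    for label in labels:
        tp = sum(t == label and p == label for t, p in zip(y_true, y_pred))
        fp = sum(t != label and p == label for t, p in zip(y_true, y_pred))
        fn = sum(t == label and p != label for t, p in zip(y_true, y_pred))
        denom = 2 * tp + fp + fn
        scores.append(2 * tp / denom if denom else 0.0)
    return sum(scores) / len(scores)


# Toy, invented labels: the rare "low" class is never predicted correctly.
y_true = ["high"] * 6 + ["medium"] * 3 + ["low"]
y_pred = ["high"] * 6 + ["medium", "medium", "high"] + ["medium"]

print(round(accuracy(y_true, y_pred), 2))  # 0.8
print(round(macro_f1(y_true, y_pred), 2))  # 0.53
```

A single misclassified minority sample leaves accuracy at 0.8 but pulls macro-F1 down to about 0.53, which is why the two metrics are usually reported together for unbalanced targets.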

Open Access Article

A Discrete-Form Double-Integration-Enhanced Recurrent Neural Network for Stewart Platform Control with Time-Varying Disturbance Suppression

by

Yueyang Ma, Yang Shi and Chao Jiang

Informatics 2026, 13(4), 49; https://doi.org/10.3390/informatics13040049 - 25 Mar 2026

Abstract

The discrete-form control of the Stewart platform is essential for digital implementation in intelligent manufacturing and robotic systems under the context of Industry 4.0, yet its performance is often degraded by unavoidable discrete disturbances. This challenge motivates the development of algorithms with strong disturbance suppression capability. To address this issue, a continuous-form double-integration-enhanced recurrent neural network (CF-DIE-RNN) algorithm incorporating a novel double-integration-enhanced design concept is first developed to improve robustness against time-varying disturbances. For digital hardware applications, a discrete-form double-integration-enhanced RNN (DF-DIE-RNN) algorithm is then constructed by discretizing the CF-DIE-RNN algorithm using a general four-step discretization formula and a one-step forward difference formula based on Taylor expansion. Rigorous theoretical analysis establishes the convergence properties of the proposed algorithm and characterizes its steady-state residual bounds under different disturbance types, revealing its capability to suppress discrete quadratic time-varying disturbances. Numerical and simulation experiments demonstrate that the DF-DIE-RNN algorithm achieves superior disturbance suppression and more accurate trajectory tracking than existing discrete-form RNN algorithms, confirming its effectiveness for discrete-form Stewart platform control.
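
For orientation, the one-step forward difference mentioned in this abstract follows from a first-order Taylor expansion. The derivation below is a generic sketch: the state symbol $x$, the sampling period $\tau$, and the index $k$ are assumed notation, and the paper's general four-step formula is not given in the abstract, so it is not reproduced here.

```latex
% Assumed notation: x(t) is a smooth state trajectory, \tau > 0 the sampling
% period, and t_k = k\tau the k-th sampling instant.
\begin{align}
x(t_{k+1}) &= x(t_k) + \tau\,\dot{x}(t_k) + \mathcal{O}(\tau^{2}), \\
\dot{x}(t_k) &= \frac{x(t_{k+1}) - x(t_k)}{\tau} + \mathcal{O}(\tau).
\end{align}
```

Rearranging the first line gives the second, so replacing a derivative with this difference introduces a truncation error of order $\tau$.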

(This article belongs to the Section Industry 4.0)

Open Access Article

Generative AI-Assisted Automation of Clinical Data Processing: A Methodological Framework for Streamlining Behavioral Research Workflows

by

Marta Lilia Eraña-Díaz, Alejandra Rosales-Lagarde, Iván Arango-de-Montis and José Alejandro Velázquez-Monzón

Informatics 2026, 13(4), 48; https://doi.org/10.3390/informatics13040048 - 25 Mar 2026

Abstract

This article presents a methodological framework for automating clinical data processing workflows using Generative Artificial Intelligence (AI) as an interactive co-developer. We demonstrate how Large Language Models (LLMs), specifically ChatGPT and Claude, can assist researchers in designing, implementing, and deploying complete ETL (Extract, Transform, Load) pipelines without requiring advanced programming or DevOps expertise. Using a dataset of 102 participants from a nonverbal expression study as a proof-of-concept, we show how AI-assisted automation transforms FaceReader video analysis outputs during the Cyberball paradigm into structured, analysis-ready datasets through containerized workflows orchestrated via Docker and n8n. The resulting framework successfully processes all 102 datasets, generating machine learning outputs to validate pipeline execution stability (rather than clinical predictivity), and deploys interactive visualization dashboards, tasks that would normally require significant manual effort and specialized technical expertise. This work establishes a replicable methodology for integrating Generative AI into research data management workflows, with implications for accelerating scientific discovery across behavioral and medical research domains.

Open Access Article

Concurrent Prediction of Length of Stay, Mortality, and Total Charges in Patients with Acute Lymphoblastic Leukemia Using Continuous Machine Learning

by

Jiahui Ma, Elizabeth Johnson, Bradley M. Whitaker, Faraz Dadgostari, Hansjorg Schwertz and Bernadette McCrory

Informatics 2026, 13(4), 47; https://doi.org/10.3390/informatics13040047 - 24 Mar 2026

Abstract

Acute lymphoblastic leukemia (ALL) presents significant clinical challenges due to its genetic complexity and high relapse rates. While outcomes like length of stay (LOS), mortality, and total charges (TCs) are critical quality indicators, most existing models rely on static data and separate outcome modeling. This study utilized the HCUP National Inpatient Sample (NIS) to develop a dynamic, concurrent prediction model for prolonged LOS and mortality (PLOSM), alongside a framework for TCs. By integrating temporally updated patient information, the concurrent approach outperformed single-outcome models. Within the first seven days of hospitalization, the model achieved accuracy and precision above 90%, with recall and F1-scores exceeding 80%. Key predictors of these outcomes included age, race, insurance type, financial indicators, and elective surgery status. Notably, both prolonged LOS and mortality were significant drivers of TCs. By bridging predictive modeling and real-time clinical data, this framework enables data-driven decision-making to optimize patient management, enhance safety, and mitigate the financial burden of ALL care.

Open Access Systematic Review

Reimagining Traditional Workspaces Through Digitalisation and Hybrid Perspective: A Systematic Review

by

Ayogeboh Epizitone and Smangele Pretty Moyane

Informatics 2026, 13(4), 46; https://doi.org/10.3390/informatics13040046 - 24 Mar 2026

Abstract

Workspace digitalisation presents a transformative shift from traditional, physically bounded offices to virtual, technology-enabled environments. Digital technologies like cloud computing, artificial intelligence, and the Internet of Things enable remote collaboration, data accessibility, and operational efficiency, thereby accelerating this transformation. Digital workspaces transcend geographical limitations, enabling a more flexible, inclusive, and adaptive work culture. They offer better work–life balance, with flexible options, reduced commuting time, and increased personal autonomy and control over commitments, compared to traditional workspaces. Despite these benefits, digitalisation creates cybersecurity, data privacy, and digital divide issues, where unequal access to digital tools and skills can exacerbate social and economic inequalities. The lack of physical interaction affects team cohesion and company culture. Hence, this paper explores these phenomena to uncover their implications and consider possible strategies to optimise workspace digitalisation, providing a comprehensive systematic review of extant literature within the study context, offering pragmatic insights and recommendations for workspaces. This study has found workspace digitalisation to be a complex, multifaceted phenomenon that provides flexibility, efficiency, and innovation, but also poses challenges that must be carefully managed. It postulates that as technology and work progress, a hybrid model that blends digital and traditional workspaces would be suited to each organisation’s needs and goals.

(This article belongs to the Section Social Informatics and Digital Humanities)

Open Access Review

Data Foundations for Medical AI: Provenance, Reliability and Limitations of Russian Clinical NLP Resources

by

Arsenii Litvinov, Lev Malishevskii, Evgeny Karpulevich, Iaroslav Bespalov, Yaroslav Nedumov, Sergey Zhdanov, Ivan Oseledets, Evgeniy Shlyakhto and Arutyun Avetisyan

Informatics 2026, 13(3), 45; https://doi.org/10.3390/informatics13030045 - 20 Mar 2026

Abstract

Russian-language resources for medical natural language processing (NLP) are expanding rapidly; however, their fragmentation, uneven curation, and limited clinical reliability hinder the development of safe machine learning systems for prognosis, prevention, and precision medicine. We provide the first systematic survey of Russian medical NLP datasets and analyze their suitability for clinically meaningful tasks as defined by the MedHELM taxonomy. We additionally perform expert clinical validation of three representative public corpora—RuMedPrimeData (real outpatient notes), MedSyn (synthetic clinical notes), and RuMedNLI (translated natural language inference)—assessing clinical plausibility, diagnosis accuracy, and logical consistency. Experts identified substantial reliability issues: across randomly sampled subsets of each corpus, only approximately 20% of RuMedPrimeData records, fewer than 15% of MedSyn records, and approximately 55% of RuMedNLI pairs met essential quality criteria, which can hinder downstream ML systems built on these data. To support robust applications—ranging from medical chatbots and triage assistants to predictive and preventive models—we outline practical requirements for high-quality datasets: coordinated, expert-validated, machine-readable corpora aligned with clinical guidelines and insurance logic, standardized de-identification, and transparent provenance. Strengthening these data foundations will enable the development of reliable, reproducible, and clinically relevant AI systems suitable for real-world healthcare applications.

(This article belongs to the Special Issue From Data to Evidence: Transformative AI for Real-World Data)

Open Access Article

Machine Learning and Generative AI in Administrative Processes in Peru: Administrative Efficiency in the National Public Sector

by

Miluska Odely Rodriguez Saavedra, Juliana Mery Bautista Lopez, Wilian Quispe Nina, Antonio Víctor Morales Gonzales, Iván Cuentas Galindo, Luis Miguel Campos Ascuña, Anthony Stefano Saenz Colana, Robinson Bernardino Almanza Cabe, Paola Gabriela Lujan Tito and Sharon Veronika Liendo Teran

Informatics 2026, 13(3), 44; https://doi.org/10.3390/informatics13030044 - 19 Mar 2026

Abstract

Public organizations in Peru have committed substantial resources to artificial intelligence over recent years, yet evidence on whether these investments produce measurable returns has remained scarce. This study evaluated the causal impact of AI adoption on administrative efficiency across 20 Peruvian national public organizations, using a quasi-experimental design combining Difference-in-Differences with Propensity Score Matching, complemented by XGBoost version 1.7.6, Random Forest, GPT-4, and SHAP explainability analysis. The sample comprised 428 civil servants across treatment and control organizations. Results showed significant efficiency gains as perceived by civil servants through validated Likert instruments: work absenteeism decreased by 9.4%, processing times by 8.7%, and administrative costs by 18.2%, all at p < 0.001 with Cohen’s d ranging from 0.55 to 0.90. The convergence between DiD and PSM estimates supports a causal reading of these effects. Four of five hypotheses were supported. AI delivered comparable efficiency gains regardless of institutional complexity, so H2 was not confirmed. Digital infrastructure significantly moderated AI effectiveness (H3: r = 0.198, p = 0.004). Higher resistance to change was significantly associated with lower efficiency outcomes (H5: r = −0.256, p < 0.001), reinforcing the role of proactive change management as a positive moderator of AI effectiveness. SHAP analysis revealed that training investment, specialized IT personnel, and resistance management together explained 51% of predictive importance, outweighing structural variables such as budget size or geographic location. These findings provide the first systematic causal evidence on AI efficiency in Peruvian public administration and offer actionable benchmarks for comparable middle-income public sectors.

Open Access Article

Artificial Intelligence in Literature Review Synthesis: A Step-by-Step Methodological Approach for Researchers and Academics

by

Matolwandile M. Mtotywa, Jeri-Lee J. Mowers, Wavhudi Ndou, Thabang V. Q. Moleko and Matsobane J. Ledwaba

Informatics 2026, 13(3), 43; https://doi.org/10.3390/informatics13030043 - 13 Mar 2026

Cited by 1

Abstract

The integration of artificial intelligence (AI) in literature reviews aims to transform research by potentially automating processes, enhancing rigour, and improving quality. The study proposes a structured step-by-step approach to integrate AI tools into the literature review synthesis process. The developed methodological approach has five steps. The first step, planning and readiness, involves scoping, understanding practices, and defining boundaries of AI use. Next is selecting AI tools and aligning their capabilities with the literature needs through a matrix. The third step focuses on using AI to conduct the review, followed by validation and cross-referencing of AI-generated results. The final step is disclosing AI use in line with ethical and reporting standards. The approach is demonstrated through five scenarios: emerging or fragmented literature, large or saturated fields, interdisciplinary domains, methodologically diverse studies, and under-researched topics. This approach is designed to enhance transparency, potentially reduce bias, and support reproducibility by aligning AI functions with research goals. It also addresses ethical considerations and promotes human–AI collaboration. For researchers and academics, it aims to provide a practical roadmap for the responsible adoption of AI in literature reviews, supporting efficiency, ethical tool use, transparency, and the balance between machine assistance and academic judgment.

Open Access Article

Voice, Text, or Embodied AI Avatar? Effects of Generative AI Interface Modalities in VR Museums

by

Pakinee Ariya, Perasuk Worragin, Songpon Khanchai, Darin Poollapalin and Phichete Julrode

Informatics 2026, 13(3), 42; https://doi.org/10.3390/informatics13030042 - 11 Mar 2026

Abstract

Virtual museums delivered through immersive virtual reality (VR) function as information environments where users access interpretive content while navigating spatially. With the integration of generative artificial intelligence (AI), conversational assistants can dynamically mediate information interaction; however, evidence remains limited regarding how different AI interface representations affect user experience. This study compares three generative AI interface modalities in a VR virtual museum: voice only, voice with synchronized text, and voice with an embodied AI avatar. A controlled experiment with 75 participants examined their effects on user engagement, perceived information quality, and subjective cognitive workload while holding informational content constant. The results indicate that the voice-and-text modality produced the highest perceived information quality, whereas the embodied AI avatar modality yielded the highest user engagement. No significant differences were observed in cognitive workload across modalities. These findings suggest that AI interface modalities play complementary roles in VR-based information interaction and provide design guidance for selecting appropriate AI representations in immersive information systems.

(This article belongs to the Special Issue Real-World Applications and Prototyping of Information Systems for Extended Reality (VR, AR, and MR))

Open Access Review

Dr. Google vs. Dr. ChatGPT in Online Health Self-Consultation: A Scoping Review of Accuracy, Bias, and Actionability (2023–2025)

by

Magdalena Trillo-Domínguez, Juan Ignacio Martin-Neira and María Dolores Olvera-Lobo

Informatics 2026, 13(3), 41; https://doi.org/10.3390/informatics13030041 - 5 Mar 2026

Abstract

The rapid adoption of generative artificial intelligence (AI) systems has transformed health information seeking, raising questions about their role as intermediaries in non-professional health self-consultation. This study compares Google Search and ChatGPT as paradigmatic models of algorithmic mediation of health information, focusing on accuracy, biases, information quality and potential harms. A scoping review was conducted following the PRISMA-ScR framework. Empirical studies published between 2023 and 2025 were retrieved from PubMed/MEDLINE, Web of Science (WoS) and Scopus. After screening and eligibility assessment, 63 original empirical studies were included. The results indicate that ChatGPT consistently outperforms Google Search in terms of factual accuracy and information quality, achieving moderate to high DISCERN scores (4–5 out of 5) and showing moderate to strong correlations with expert clinical evaluations. Users also tend to value ChatGPT responses positively due to their clarity, coherence and perceived empathy. However, these advantages coexist with significant structural limitations. Hallucinations are reported in an estimated 31–45% of references, source provenance remains opaque, linguistic complexity is high, and actionability is limited, with only around 40% of responses providing clearly actionable guidance. In contrast, Google Search offers greater source traceability and verifiability, but at the cost of fragmented information and higher exposure to commercial content. The review identifies critical research gaps related to behavioural impacts, critical health literacy, equity of access, professional integration and vulnerable contexts. Overall, the findings highlight the need for hybrid human–AI models, professional mediation and critical AI literacy to ensure safe, equitable and trustworthy use of generative AI in public health communication.

Open Access Article

Organizational Characteristics Associated with Health Information Systems Adoption in Local Health Departments During the COVID-19 Pandemic

by

Nardeen Shafik, Gulzar H. Shah, Timothy C. McCall, Bettye A. Apenteng, Mansoor Abro and William A. Mase

Informatics 2026, 13(3), 40; https://doi.org/10.3390/informatics13030040 - 4 Mar 2026

Abstract

Background: The COVID-19 pandemic revealed persistent gaps in local health department (LHD) health informatics capacity. This study examines organizational characteristics of LHDs associated with the adoption of six health information systems: electronic case reporting (eCR), electronic disease reporting systems (EDRS), electronic health records (EHR), electronic lab reporting (ELR), health information exchange (HIE), and immunization registries (IR). Methods: We used a mixed-methods design, including multinomial or binary logistic regression analyses of quantitative data from the 2022 NACCHO National Profile of Local Health Departments (n = 441) and thematic analysis of semi-structured interviews with five LHD staff members. Results: About half (49.9%) of LHDs had implemented eCR, while higher proportions had implemented EDRS (78.0%), EHR (62.4%), ELR (57.2%), HIE (92.6%), and IR (92.6%). Workforce size was associated with the implementation of eCR, EHR, and IR. The number of vacant staff positions was associated with lower odds of IR implementation; compared with medium-sized LHDs, both small and large LHDs had higher odds of IR implementation. Shared-governance LHDs had higher odds of adopting ELR and HIE than state-governed LHDs. Qualitative themes highlighted challenges, including staff burnout, high turnover, pay inequities, role ambiguity, political pressures, rapid changes in informatics, and interoperability problems. Conclusions: Findings underscore the need to improve LHD workforce capacity and governance structures to support a resilient public health informatics infrastructure.

Open Access Article

Integrating Agentic Artificial Intelligence to Automate International Classification of Diseases, Tenth Revision, Medical Coding

by

Kitti Akkhawatthanakun, Lalita Narupiyakul, Konlakorn Wongpatikaseree, Narit Hnoohom, Chakkrit Termritthikun and Paisarn Muneesawang

Informatics 2026, 13(3), 39; https://doi.org/10.3390/informatics13030039 - 4 Mar 2026

Abstract

Automating ICD-10 coding from discharge summaries remains demanding because coders analyze clinical narratives while justifying decisions. This study compares three automation patterns: PLM-ICD as a standalone deep learning system emitting 15 codes per case, LLM-only generation with full autonomy, and a hybrid approach where PLM-ICD drafts candidates for an agentic LLM audit to accept or reject. All strategies were evaluated on 19,801 MIMIC-IV summaries using four LLMs spanning compact (Qwen2.5-3B-Instruct, Llama-3.2-3B-Instruct, Phi-4-mini-instruct) to large-scale (Sonnet-4.5). Precision guided evaluation because coders still supply any missing diagnoses. PLM-ICD alone reached 55.8% precision while always surfacing 15 suggestions. LLM-only generation lagged severely (1.5–34.6% precision) and produced inconsistent output sizes. The agentic audit delivered the best trade-off: compact LLMs reviewed the 15 candidates, discarded weak evidence, and returned 2–8 high-confidence codes. Llama-3.2-3B-Instruct, for example, improved from 1.5% as a generator to 55.1% as a verifier while trimming false positives by 73%. These results show that positioning LLMs as quality controllers, rather than primary generators, yields reliable support for clinical coding teams, while formal recall/F1 reporting remains future work for fully autonomous implementations.

(This article belongs to the Special Issue Health Data Management in the Age of AI)

Topics

Topic in

Computers, Informatics, Information, Logistics, Mathematics, Algorithms

Decision Science Applications and Models (DSAM)

Topic Editors: Daniel Riera Terrén, Angel A. Juan, Majsa Ammuriova, Laura Calvet

Deadline: 30 June 2026

Topic in

AI, Algorithms, BDCC, Computers, Data, Future Internet, Informatics, Information, MAKE, Publications, Smart Cities

Learning to Live with Gen-AI

Topic Editors: Antony Bryant, Paolo Bellavista, Kenji Suzuki, Horacio Saggion, Roberto Montemanni, Andreas Holzinger, Min Chen

Deadline: 31 August 2026

Topic in

World, Informatics, Information

The Applications of Artificial Intelligence in Tourism

Topic Editors: Angelica Lo Duca, Jose Berengueres

Deadline: 30 September 2026

Topic in

Applied Sciences, Electronics, Informatics, JCP, Future Internet, Mathematics, Sensors, Remote Sensing

Recent Advances in Artificial Intelligence for Security and Security for Artificial Intelligence

Topic Editors: Tao Zhang, Xiangyun Tang, Jiacheng Wang, Chuan Zhang, Jiqiang Liu

Deadline: 28 February 2027

Special Issues

Special Issue in

Informatics

Health Data Management in the Age of AI

Guest Editors: Brenda Scholtz, Hanlie Smuts

Deadline: 30 May 2026

Special Issue in

Informatics

Machine Learning in Social Media Analysis

Guest Editors: Kellyton Brito, Vinícius Vieira, Pablo Sampaio

Deadline: 30 June 2026

Special Issue in

Informatics

Revolutionizing Agriculture and Natural Resource Management with Artificial Intelligence Approaches

Guest Editors: Sarawut Ninsawat, Jaturong Som-ard

Deadline: 31 July 2026

Special Issue in

Informatics

From Data to Evidence: Transformative AI for Real-World Data

Guest Editors: Jiang Bian, Yu Huang

Deadline: 31 July 2026