1. Introduction

Sensors are present in much of the equipment used in everyday life, including the mobile devices currently applied in ambient assisted living (AAL) systems, such as smartphones, smartwatches, smart wristbands, tablets and medical sensors. In these devices, sensors are commonly used to improve and support people’s activities or experiences. A variety of sensors allow the acquisition of various types of data, which can then be used for different tasks. While sensors may be classified according to the type of data they manage and their application purposes, data acquisition is highly dependent on the user’s environment and the application purpose.

The main objective of this paper is to present a comprehensive review of sensor data fusion techniques that may be employed with off-the-shelf mobile devices for the recognition of activities of daily living (ADLs). We present a classification of the sensors available in mobile devices and review multi-sensor devices and data fusion techniques.

The identification of ADLs using sensors available in off-the-shelf mobile devices is one of the most interesting goals for AAL solutions, as it can be used to monitor and learn a user’s lifestyle. Built on off-the-shelf mobile devices, these solutions may improve the user’s quality of life and health by promoting behavioural changes, such as reducing smoking or controlling other addictive habits. This paper does not address the identification of ADLs for personal health or well-being, as this application ecosystem is far wider and deserves more focused research; it will therefore be addressed in future work.

AAL has been an important area for research and development due to population ageing and the need to solve the societal and economic problems that arise with an ageing society. Among other areas, AAL systems employ technologies for supporting personal health and social care solutions. These systems mainly focus on elderly people and persons with some type of disability, aiming to improve their quality of life and manage their degree of independent living [1,2]. The pervasive use of mobile devices that incorporate different sensors, allowing the acquisition of data related to physiological processes, makes these devices a common choice for AAL systems, not only because they can combine the data captured by their sensors with personal information, such as the user’s texting habits or browsing history, but also because they can combine it with other information, such as the user’s location and environment. These data may be processed either on the device or sent to a server using communication technologies for later processing [3], which requires a high level of quality of service (QoS) to achieve interoperability, usability, accuracy and security [2]. The concept of AAL also includes the use of sophisticated intelligent sensor networks combined with ubiquitous computing applications, with new concepts, products and services for P4-medicine (preventive, participatory, predictive and personalized). Holzinger et al. [4] present a new approach that uses big data to move from smart health towards the smart hospital concept, with the goal of supporting health assistants in facilitating a healthier life, wellness and wellbeing for the overall population.

Sensors can be classified into several categories according to different criteria, which include the environmental analysis and the type of data acquired. The number and type of sensors available in an off-the-shelf mobile device are limited by a number of factors, which include the reduced processing capacity and battery life, the size and design of the device, and the placement of the mobile device during data acquisition. The number and type of available sensors depend on the selected mobile platform, with variations imposed by the manufacturer, operating system and model. Furthermore, the number and type of sensors available differ between Android platforms [5] and iOS platforms [6].

Off-the-shelf mobile devices may include an accelerometer, a magnetometer, an ambient air temperature sensor, a light sensor (e.g., photometer), a humidity sensor (e.g., hygrometer), an air pressure sensor (e.g., barometer), a Global Positioning System (GPS) receiver, a gravity sensor, a gyroscope, a fingerprint sensor, a rotational sensor, an orientation sensor, a microphone, a digital camera and a proximity sensor. These sensors may be organized into different categories, which we present in Section 2, where we define a new classification of these sensors. Jointly with this classification, the suitability of these sensors for the recognition of ADLs in mobile systems is also evaluated.

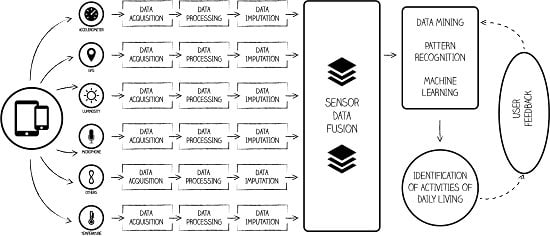

The recognition of ADLs includes a number of different stages, namely data acquisition, data processing, data imputation, sensor data fusion and data mining, the last of which consists of the application of machine learning or pattern recognition techniques (Figure 1).

As shown in Figure 1, the process for recognizing ADLs is executed in different stages, starting with data acquisition by the sensors. Next, data processing is executed, which includes the validation and/or correction of the acquired data. When data acquisition fails, data correction procedures should be performed; the correction consists of estimating the missing or inconsistent values with sensor data imputation techniques. Valid data may then be sent to the data fusion module, which consolidates the data collected from several of the available sensors. After data fusion, ADLs can be identified using several methods, such as data mining, pattern recognition or machine learning techniques. Eventually, the system may require the user’s validation or feedback, either at an initial stage or at random times, and this validation may be used to improve, train and fine-tune the algorithms that handle the consolidated data.
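The stages above can be sketched end to end in a few lines. The following Python example is a minimal illustration, not a reference implementation: the plausibility ranges, the linear-interpolation imputation, the summary features and the threshold rule standing in for the data mining stage are all hypothetical choices made for the example.

```python
from statistics import mean, pstdev

def validate(samples, low, high):
    # Data processing: readings that are missing or outside the plausible
    # range [low, high] are marked as None (hypothetical validation rule).
    return [v if v is not None and low <= v <= high else None for v in samples]

def impute_linear(samples):
    # Data imputation: fill None gaps by linear interpolation between the
    # nearest valid neighbours; edge gaps copy the nearest valid value.
    valid = [i for i, v in enumerate(samples) if v is not None]
    if not valid:
        raise ValueError("no valid samples to impute from")
    out = list(samples)
    for i, v in enumerate(samples):
        if v is not None:
            continue
        left = max((j for j in valid if j < i), default=None)
        right = min((j for j in valid if j > i), default=None)
        if left is None:
            out[i] = samples[right]
        elif right is None:
            out[i] = samples[left]
        else:
            w = (i - left) / (right - left)
            out[i] = samples[left] + w * (samples[right] - samples[left])
    return out

def fuse_features(acc, gyro):
    # Feature-level fusion: one vector summarising both sensors.
    return {"acc_mean": mean(acc), "acc_std": pstdev(acc), "gyro_mean": mean(gyro)}

def classify(features, std_threshold=0.5):
    # Placeholder for the data mining stage (hypothetical threshold rule).
    return "moving" if features["acc_std"] > std_threshold else "still"

def recognize(acc_raw, gyro_raw):
    # Acceleration magnitudes in m/s^2, angular rate magnitudes in rad/s.
    acc = impute_linear(validate(acc_raw, 0.0, 80.0))
    gyro = impute_linear(validate(gyro_raw, 0.0, 35.0))
    return classify(fuse_features(acc, gyro))

print(recognize([9.8, None, 9.8, 9.8], [0.0, 0.1, 0.0, 500.0]))  # still
print(recognize([8.0, 12.0, 8.0, 12.0], [1.0, 1.2, 0.9, 1.1]))   # moving
```

The user-feedback loop shown in Figure 1 is omitted; in practice it would be used to retune the validation ranges and the classifier.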

Figure 1.

Schema of a multi-sensor mobile system to recognize activities of daily living.


Due to the processing, memory and battery constraints of mobile devices, the algorithms must be selected carefully; otherwise, the use of resource-greedy algorithms will render the solution unusable and unlikely to be adopted by users.

The remaining sections of this paper are organized as follows: Section 2 proposes a new classification for the sensors embedded in off-the-shelf mobile devices and identifies different techniques related to data acquisition, data processing and data imputation; Section 3 is focused on the review of data fusion techniques for off-the-shelf mobile devices; some applications of mobile sensor fusion techniques are presented in Section 4; and in Section 5, the conclusions of this review are presented.

3. Sensor Data Fusion in Mobile Devices

Data fusion is a critical step in the integration of the data collected by multiple sensors. The main objective of the data fusion process is to increase the reliability of the decisions made using the data collected from the sensors, e.g., to increase the reliability of an ADL identification algorithm running on an off-the-shelf mobile device. If a single stream of data cannot eliminate uncertainty from the output, data fusion uses data from several sources with the goal of decreasing the uncertainty level of the output. Consequently, data fusion increases the robustness of a system for the recognition of ADLs, reducing the effects of incorrect data captured by the sensors [81] or helping to compute solutions when the collected data are not usable for a specific task.
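To make the claim about decreasing uncertainty concrete, the sketch below fuses independent scalar estimates of the same quantity by inverse-variance weighting, which is the minimum-variance linear combination when the errors are unbiased and independent. The two readings and their variances are hypothetical; the point is that the fused variance is smaller than the variance of either input.

```python
def fuse_inverse_variance(estimates):
    """Fuse independent, unbiased scalar estimates given as (value, variance).

    Each estimate is weighted by 1/variance; the fused variance is
    1 / sum(1/variance), which is never larger than the smallest input variance.
    """
    if not estimates:
        raise ValueError("need at least one estimate")
    weights = [1.0 / var for _, var in estimates]
    total = sum(weights)
    value = sum(w * x for w, (x, _) in zip(weights, estimates)) / total
    return value, 1.0 / total

# Two sensors measuring the same distance (m) with equal variance 4.0 m^2.
print(fuse_inverse_variance([(10.0, 4.0), (12.0, 4.0)]))  # (11.0, 2.0)
```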

A mobile application implemented by Ma et al. [82] was tested on a Google Nexus 4 and uses the accelerometer, gyroscope, magnetometer and GPS receiver to evaluate each sensor’s accuracy, precision, maximum sampling frequency, sampling period, jitter and energy consumption. The test results show that the built-in accelerometer and gyroscope data have a standard deviation of approximately 0.1 to 0.8 units between the measured value and the real value, the compass data deviate by approximately three degrees at the normal sampling rate, and the GPS receiver data have a deviation lower than 10 m. Thus, one of the working hypotheses of this research is that the data collected by mobile sensors may be fused to achieve greater precision towards a common goal.

Data fusion may be performed by mobile applications that access the sensor data as a background process, process the data and either show the results in a readable format or pass the results or the data to a central repository or central processing machine for further processing.

Durrant-Whyte et al. [83] described a decentralized data fusion system, which consists of a network of sensor nodes, each one with its own processing facility. This distributed system does not require any central fusion or central communication facility and uses Kalman filters to perform the data fusion. Other decentralized data fusion systems have also been developed, improving some of the techniques and the sensors used [84].
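One way to see how a decentralized Kalman-type system can avoid a central fusion node is the information form of the filter, in which each node's measurement contributes additively to an information vector and an information matrix. The sketch below is a simplified, hypothetical illustration for a static scalar state and a fully connected network, where every node receives every contribution exactly once; it is not the algorithm of [83], and deployments with arbitrary topologies must additionally avoid counting the same information twice.

```python
from dataclasses import dataclass, field

@dataclass
class Node:
    prior_mean: float
    prior_var: float
    received: list = field(default_factory=list)  # (info_vector, info_matrix) pairs

    @staticmethod
    def local_contribution(z, r):
        # Information form of a scalar measurement z = x + noise, noise variance r.
        return (z / r, 1.0 / r)

    def receive(self, contribution):
        self.received.append(contribution)

    def estimate(self):
        # Prior information plus the sum of all received contributions.
        y = self.prior_mean / self.prior_var + sum(i for i, _ in self.received)
        Y = 1.0 / self.prior_var + sum(I for _, I in self.received)
        return y / Y, 1.0 / Y

def decentralized_fuse(measurements, prior_mean=0.0, prior_var=1e6):
    """measurements: one (z, r) pair per node. Returns each node's local estimate."""
    nodes = [Node(prior_mean, prior_var) for _ in measurements]
    contributions = [Node.local_contribution(z, r) for z, r in measurements]
    # Fully connected broadcast: every node receives every contribution once.
    for node in nodes:
        for c in contributions:
            node.receive(c)
    return [node.estimate() for node in nodes]

for m, v in decentralized_fuse([(10.0, 4.0), (12.0, 4.0), (11.0, 2.0)]):
    print(round(m, 3), round(v, 3))
```

Because addition is order-independent, every node reaches the same estimate without any node acting as a fusion centre.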

The categories of data fusion methods have already been discussed by several authors [85,86,87]. According to these authors, data fusion methods may be categorized as probabilistic, statistic, knowledge base theory and evidence reasoning methods. Firstly, probabilistic methods include Bayesian analysis of sensor values with Bayesian networks, state-space models, maximum likelihood methods, possibility theory, evidential reasoning and, more specifically, evidence theory, KNN and least squares-based estimation methods, e.g., Kalman filtering, optimal theory, regularization and uncertainty ellipsoids. Secondly, statistic methods include cross-covariance, covariance intersection and other robust statistics. Thirdly, knowledge base theory methods include intelligent aggregation methods, such as ANN, genetic algorithms and fuzzy logic. Finally, evidence reasoning methods include Dempster-Shafer, evidence theory and recursive operators.
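As an illustration of the evidence reasoning family, the sketch below implements Dempster's rule of combination for two mass functions over the same frame of discernment. The activity labels and mass values are hypothetical. Assigning mass to the whole frame is how each source expresses its own degree of uncertainty, and the normalization step discards the mass on which the two sources conflict.

```python
from itertools import product

def dempster_combine(m1, m2):
    """Dempster's rule for two mass functions.

    Keys are frozensets of hypotheses, values are masses summing to 1.
    """
    combined = {}
    conflict = 0.0
    for (a, wa), (b, wb) in product(m1.items(), m2.items()):
        inter = a & b
        if inter:
            combined[inter] = combined.get(inter, 0.0) + wa * wb
        else:
            conflict += wa * wb  # mass assigned to contradictory hypotheses
    if conflict >= 1.0:
        raise ValueError("sources are in total conflict")
    return {k: v / (1.0 - conflict) for k, v in combined.items()}

FRAME = frozenset({"walk", "run", "sit"})
accel = {frozenset({"walk"}): 0.6, frozenset({"walk", "run"}): 0.3, FRAME: 0.1}
gps_speed = {frozenset({"run"}): 0.5, FRAME: 0.5}

for hypothesis, mass in dempster_combine(accel, gps_speed).items():
    print(sorted(hypothesis), round(mass, 3))
```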

Depending on the research purpose of the data fusion, these methods have the advantages and disadvantages presented in Table 5. Data fusion methods are influenced by the constraints imposed by the preceding stages of data acquisition, data processing and data imputation. Their advantages and disadvantages also depend on the environmental scenario and on choosing the correct method for each research scenario.

Table 5.

Advantages and disadvantages of the sensor data fusion methods.


| Methods | Advantages | Disadvantages |
|---|---|---|
| Probabilistic methods | Provide methods for model estimation; allow unsupervised classification; estimate the state of variables; reduce errors in the fused location estimate; increase the amount of data without changing its structure or the algorithm; produce a fused covariance matrix that better reflects the expected location error. | Require a priori probabilistic knowledge of information that is not always available or realistic; classification depends on the starting point; unsuitable for large-scale systems; require a priori knowledge of the uncertainties’ covariance matrices related to the system model and its measurements. |
| Statistic methods | Accuracy improves from the reduction of the prediction error; high accuracy compared with other local estimators; robust with respect to unknown cross-covariance. | Complex and difficult computation is required to obtain the cross-covariance; complexity and larger computational burden. |
| Knowledge base theory methods | Allow the inclusion of uncertainty and imprecision; easy to implement; learning ability; robust to noisy data and able to represent complex functions. | Knowledge extraction requires the intervention of human experts (e.g., physicians), which takes time and/or may give rise to interpretation bias; difficulty in determining the adequate size of the hidden layer; inability to explain decisions; lack of transparency of data. |
| Evidence reasoning methods | Assign a degree of uncertainty to each source. | Require assigning a degree of evidence to all concepts. |

In [88], data fusion methods are divided into six categories: data in–data out, data in–feature out, feature in–feature out, feature in–decision out, decision in–decision out and data in–decision out.

Performing the data fusion process in real time can be difficult because of the large amount of data that may need to be fused. Ko et al. [88] proposed a framework that uses dynamic time warping (DTW) as the core recognizer to perform online temporal fusion on either the raw data or the features. DTW is a general time alignment and similarity measure for two temporal sequences. Compared to hidden Markov models (HMMs), the training and recognition procedures in DTW are potentially much simpler and faster, allowing online temporal fusion to be performed efficiently and accurately in real time.
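The sketch below shows the classic dynamic-programming formulation of DTW and its use as a nearest-template recognizer, which is the role DTW plays as a core recognizer. It is a generic offline version for one-dimensional sequences; the online, multi-sensor fusion framework of Ko et al. [88] is not reproduced here, and the activity templates are hypothetical.

```python
import math

def dtw_distance(a, b):
    """Classic O(len(a) * len(b)) DTW with absolute-difference local cost."""
    n, m = len(a), len(b)
    d = [[math.inf] * (m + 1) for _ in range(n + 1)]
    d[0][0] = 0.0
    for i in range(1, n + 1):
        for j in range(1, m + 1):
            cost = abs(a[i - 1] - b[j - 1])
            # Extend the cheapest of the three admissible warping steps.
            d[i][j] = cost + min(d[i - 1][j], d[i][j - 1], d[i - 1][j - 1])
    return d[n][m]

def nearest_template(sequence, templates):
    """Return the label whose template has the smallest DTW distance."""
    return min(templates, key=lambda label: dtw_distance(sequence, templates[label]))

templates = {"walk": [0, 1, 0, 1, 0], "still": [0, 0, 0, 0, 0]}  # hypothetical
print(nearest_template([0, 1, 1, 0, 1, 0], templates))  # walk
```

The warping lets a sequence that is locally stretched in time (the repeated `1` above) still match its template exactly, which is what makes DTW attractive for activities performed at varying speeds.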

The most widely used method for data fusion is the Kalman filter, which was developed for linear systems and later improved into a dynamically-weighted recursive least-squares algorithm [89]. However, as the sensor data are not linear, the authors of [89] used the extended Kalman filter, which linearizes the system dynamics and the measurement function around the expected state and then applies the Kalman filter as usual. A three-axis magnetometer and a three-axis accelerometer are used for the estimation of several movements [89].
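For the linear case, a Kalman filter that fuses a gyroscope and an accelerometer can be written with a scalar state. In the sketch below, which is a hypothetical example rather than the filter of [89], the state is a tilt angle; the prediction step integrates the gyroscope rate and the correction step uses an angle derived from the accelerometer. The noise parameters `q` and `r` are assumed values. An extended Kalman filter follows the same predict/correct cycle, but linearizes non-linear models around the current estimate.

```python
def kalman_tilt(gyro_rates, accel_angles, dt, q=0.001, r=0.03, theta0=0.0, p0=1.0):
    """Scalar linear Kalman filter for a tilt angle (rad).

    gyro_rates: angular rates (rad/s) used as the control input.
    accel_angles: tilt angles (rad) derived from the accelerometer.
    q, r: assumed process and measurement noise variances.
    """
    theta, p = theta0, p0
    history = []
    for omega, z in zip(gyro_rates, accel_angles):
        # Predict: propagate the angle with the gyroscope; uncertainty grows by q.
        theta = theta + omega * dt
        p = p + q
        # Correct: blend in the accelerometer angle using the Kalman gain.
        k = p / (p + r)
        theta = theta + k * (z - theta)
        p = (1.0 - k) * p
        history.append(theta)
    return history

# Device held still at 0.2 rad; starting from a wrong initial guess of 0 rad.
estimates = kalman_tilt([0.0] * 50, [0.2] * 50, dt=0.01)
print(round(estimates[0], 3), round(estimates[-1], 3))
```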

Other systems employ variants of the Kalman filter to reduce noise and improve the detection of movements. Zhao et al. [90] use the Rao-Blackwellization unscented Kalman filter (RBUKF) to fuse the sensor data of a GPS receiver, one gyroscope and one compass to improve the precision of the localization. The authors compare the RBUKF algorithm to the extended Kalman filter (EKF) and the unscented Kalman filter (UKF), stating that the RBUKF algorithm improves the tracking accuracy and reduces computational complexity.

Walter et al. [91] created a system for car navigation by fusing sensor data on an Android smartphone, using the embedded sensors (i.e., gyroscope) and data from the car (i.e., speed information) to support navigation via GPS. The developed system employs a controller area network (CAN)-bus-to-Bluetooth adapter to establish a wireless connection between the smartphone and the CAN-bus of the car. According to the authors of [91], the mobile application fuses the sensors’ data and implements a strapdown algorithm and an error-state Kalman filter with good accuracy.

Another application for location inference was built using the CASanDRA mobile OSGi (Open Services Gateway Initiative) framework, with a LocationFusion enabler that fuses the data acquired by all of the available sensors (i.e., GPS, Bluetooth and WiFi) [92].

Mobile devices allow the development of context-aware applications, and these applications often use a framework for context information management. In [93], a mobile device-oriented framework for context information management is proposed to solve the problems of context shortage and communication inefficiency. The main functional components of this framework are the data collector, the context processor, the context manager and the local context consumer. The data collector acquires the data from internal or external sensors. Then, the context processor and the context manager extract and manage the context. Finally, the local context consumer hosts the context-aware applications and provides appropriate services to the mobile users. The authors claim that this framework is able to run real-time applications with quick data access, power efficiency, personal privacy protection, data fusion of internal and external sensors and simplicity of use.
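The four components described above can be sketched as a simple pipeline with a publish/subscribe context manager. This is a hypothetical Python illustration of the architecture in [93], not its actual interface: the sensor read functions, the context keys and the 1000 lux indoor/outdoor threshold are assumptions.

```python
from typing import Callable, Dict, List

class DataCollector:
    """Acquires raw readings from internal or external sensors."""
    def __init__(self, sources: Dict[str, Callable[[], float]]):
        self.sources = sources  # sensor name -> read function (hypothetical)

    def collect(self) -> Dict[str, float]:
        return {name: read() for name, read in self.sources.items()}

class ContextProcessor:
    """Extracts coarse context from raw readings (hypothetical rules)."""
    def extract(self, raw: Dict[str, float]) -> Dict[str, str]:
        ctx = {}
        if "light" in raw:  # illuminance in lux
            ctx["environment"] = "indoor" if raw["light"] < 1000 else "outdoor"
        if "speed" in raw:  # speed in m/s
            ctx["mobility"] = "moving" if raw["speed"] > 0.5 else "stationary"
        return ctx

class ContextManager:
    """Stores the current context and notifies subscribed consumers."""
    def __init__(self):
        self.current: Dict[str, str] = {}
        self.subscribers: List[Callable[[Dict[str, str]], None]] = []

    def subscribe(self, callback: Callable[[Dict[str, str]], None]) -> None:
        self.subscribers.append(callback)

    def update(self, ctx: Dict[str, str]) -> None:
        self.current.update(ctx)
        for notify in self.subscribers:
            notify(dict(self.current))

class LocalContextConsumer:
    """Stands in for a context-aware application on the device."""
    def __init__(self):
        self.last = None

    def on_context(self, ctx: Dict[str, str]) -> None:
        self.last = ctx

collector = DataCollector({"light": lambda: 200.0, "speed": lambda: 1.2})
processor, manager, app = ContextProcessor(), ContextManager(), LocalContextConsumer()
manager.subscribe(app.on_context)
manager.update(processor.extract(collector.collect()))
print(app.last)  # {'environment': 'indoor', 'mobility': 'moving'}
```

Decoupling the consumer through subscriptions means new context-aware applications can be added without changing how data are collected or processed.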

Blum et al. [94] use the GPS receiver, compass and gyroscope embedded in the Apple iPhone 4 (iOS) (Apple, Cupertino, CA, USA), the iPhone 4s (iOS) (Apple, Cupertino, CA, USA) and the Samsung Galaxy Nexus (Android) (Samsung, Seoul, Korea) to measure location in augmented reality situations. Blum et al. analysed the position of the smartphone during data collection, testing three different orientation/body position combinations under varying environmental conditions, and obtained location errors of 10 to 30 m (with a GPS receiver) and compass errors of around 10 to 30°, with high standard deviations for both.

The work in [95] focuses on the problems of data fusion and of the placement and positioning of fixed and mobile sensors, presenting a two-tiered model. The algorithm combines the data collected by fixed sensors and mobile sensors, obtaining good results. Because the positioning of the sensors is very important, the study implements various optimization models, ending with the creation of a new model for positioning sensors according to their types. The authors also study other models that consider the simultaneous deployment of different types of sensors, making use of more detailed sensor readings and allowing for dependencies among sensor readings.

Haala and Böhm [96] created a low-cost system using an off-the-shelf mobile device with several embedded sensors. The data collected by a GPS receiver and a digital compass are combined with a 3D CAD model of a region to provide data related to urban environments, detecting the exact location of a building in a captured image and the orientation of the image.

Another application where data fusion is required is the recognition of physical activity. The sensors most commonly used for the recognition of physical activity include the accelerometer, the gyroscope and the magnetic sensor. In [26], the data acquired with these sensors are fused with a combined algorithm composed of Fisher’s discriminant ratio criterion and the J3 criterion for feature selection [97]. The collection of data related to the physical activity performed is very relevant for analysing the lifestyle and physiological characteristics of people [98].
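Fisher's discriminant ratio scores a single feature by how far apart two class means are relative to the spread within each class. The sketch below computes it for two activity classes and ranks features accordingly; the J3 criterion used in [26] is not shown, and the feature names and values are hypothetical.

```python
from statistics import mean, pvariance

def fisher_ratio(class_a, class_b):
    """Fisher's discriminant ratio of one feature for two classes:
    (mean_a - mean_b)^2 / (var_a + var_b)."""
    denom = pvariance(class_a) + pvariance(class_b)
    diff2 = (mean(class_a) - mean(class_b)) ** 2
    if denom == 0:
        return float("inf") if diff2 > 0 else 0.0
    return diff2 / denom

def rank_features(features_a, features_b):
    """features_x maps feature name -> list of that feature's values for one class.
    Returns feature names sorted from most to least discriminative."""
    scores = {name: fisher_ratio(features_a[name], features_b[name]) for name in features_a}
    return sorted(scores, key=scores.get, reverse=True)

walking = {"acc_std": [2.0, 2.2, 1.8], "gyro_mean": [0.5, 0.6, 0.4]}
sitting = {"acc_std": [0.1, 0.2, 0.15], "gyro_mean": [0.45, 0.55, 0.5]}
print(rank_features(walking, sitting))  # ['acc_std', 'gyro_mean']
```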

Some other applications analyse the user’s lifestyle by fusing the data collected by the sensors embedded in mobile devices. Yi et al. [99] presented a system architecture and a design flow for remote user physiological data and movement detection using wearable sensor data fusion.

In the medical scope, Stopczynski et al. [27] combined low-cost wireless EEG sensors with smartphone sensors, creating 3D EEG imaging that describes the activity of the brain. Glenn and Monteith [100] implemented several algorithms for the analysis of mental status using smartphone sensors. These algorithms fuse the data acquired from all of the sensors with other data obtained over the Internet to mitigate the limitations of mobile sensor data.

The system proposed in [101] makes use of biosensors for different measurements, such as a surface plasmon resonance (SPR) biosensor, a smart implantable biosensor, a carbon nanotube (CNT)-based biosensor, textile sensors and enzyme-based biosensors, combined with smartphone-embedded sensors, and applies various techniques to fuse the data and to filter the inputs in order to reduce the effects of the position of the device.

Social networks are widely used and promote context-aware applications, as data can be collected with the user’s mobile device sensors to adapt mobile applications to the user’s specific lifestyle [102]. Context-aware applications are an important research topic; Andò [103] proposed an architecture to adapt context-aware applications to a real environment, using position, inertial and environmental sensors (e.g., temperature, humidity, gas leakage or smoke). The authors claim this system is very useful for the management of hazardous situations and also for supervising various physical activities, fusing the data in a specific architecture.

Other authors [104] have studied this topic with mobile web browsers, using accelerometer and positional data and implementing techniques to identify different ADLs. These systems capture the data while the user is accessing the Internet from a mobile device, analysing the movements and the distance travelled during that time and classifying the ADLs performed.

Steed and Julier [105] created the concept of behaviour-aware sensor fusion that uses the redirected pointing technique and the yaw fix technique to increase the usability and speed of interaction in exemplar mixed-reality interaction tasks.

In [106], vision sensors (e.g., a camera) are combined with accelerometer data to apply the depth from focus (DFF) method, previously limited to high-precision camera systems, for the detection of movements in augmented reality systems. Vision systems are relevant, as the information obtained can identify a moving object more accurately. Some mobile devices integrate RFID readers or cameras to fetch related information about objects and initiate further actions.

Rahman et al. [107] use a spatial-geometric approach, instead of RFID-based solutions, for interacting with indoor physical objects and artefacts. The approach fuses the data captured by an infrared (IR) camera with accelerometer data: the IR cameras are used to calculate the 3D position of the mobile phone users, and the accelerometer in the phone provides its tilt and orientation information. For the detection of movement, they used geometrical methods, improving the detection of objects in a defined space.

Grunerbl et al. [108] report fusing data from acoustic, accelerometer and GPS sensors. The extraction of features from acoustic sensors uses low-level descriptors, such as root-mean-square (RMS) frame energy, mel-frequency cepstral coefficients (MFCC), pitch frequency, harmonic-to-noise ratio (HNR) and zero-crossing rate (ZCR). They apply the naive Bayes classifier and other pattern recognition methods and report good results in several situations of daily life.
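Two of the low-level acoustic descriptors listed above are simple enough to compute directly per frame. The sketch below splits a signal into overlapping frames and computes RMS frame energy and zero-crossing rate; the frame length, hop size and test tone are hypothetical, and MFCC, pitch and HNR would normally come from a dedicated signal processing library.

```python
import math

def frames(signal, frame_len, hop):
    # Overlapping frames; a trailing partial frame is dropped.
    return [signal[i:i + frame_len] for i in range(0, len(signal) - frame_len + 1, hop)]

def rms_energy(frame):
    return math.sqrt(sum(x * x for x in frame) / len(frame))

def zero_crossing_rate(frame):
    # Fraction of consecutive sample pairs whose signs differ (0 counts as positive).
    if len(frame) < 2:
        raise ValueError("frame needs at least two samples")
    crossings = sum(1 for a, b in zip(frame, frame[1:]) if (a >= 0) != (b >= 0))
    return crossings / (len(frame) - 1)

# Hypothetical 0.1 s of a 440 Hz tone sampled at 8 kHz.
fs = 8000
tone = [math.sin(2 * math.pi * 440 * n / fs) for n in range(800)]
features = [(rms_energy(f), zero_crossing_rate(f)) for f in frames(tone, 200, 100)]
print(len(features), [round(r, 2) for r, _ in features[:3]])
```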

Gil et al. [109] present other systems to perform sensor fusion, such as LifeMap, which is a smartphone-based context provider for location-based services, as well as the Joint Directors of Laboratories (JDL) model and the waterfall IF model, which define various levels of abstraction for sensor fusion techniques. Gil et al. also present the inContexto system, which makes use of embedded sensors, such as the accelerometer, digital compass, gyroscope, GPS, microphone and camera, applying several filters and techniques to recognize physical actions performed by users, such as walking, running, standing and sitting, and also to retrieve context information from the user.

In [110], a sensor fusion-based wireless walking-in-place (WIP) interaction technique is presented, creating a human walking detection algorithm based on fusing data from both the acceleration and magnetic sensors integrated in a smartphone. The proposed algorithm handles possible data loss and random delay in the wireless communication environment, resulting in reduced wireless communication load and computation overhead. The algorithm was implemented for mobile devices equipped with magnetic, accelerometer and rotation (gyroscope) sensors. During the tests, the smartphone was attached to the user’s leg. After some tests, the authors decided to implement the algorithm with two smartphones and a magnetometer positioned on the user’s body, combining magnetic sensor-based walking-in-place and acceleration-based walking-in-place in order to avoid relying on a specific model (e.g., a gait model) or on accumulated historical data. However, the acceleration-based technique does not work correctly for slow walking, and the magnetic sensor-based technique does not support normal or fast walking speeds.

Related to the analysis of walking, activities and movements, several studies have employed the GPS receiver combined with other sensors, such as the accelerometer, magnetometer and rotation sensors, achieving good accuracy with fusion algorithms such as the naive and oracle methods [111], machine learning methods and kinematic models [112] and other models adapted to mobile devices [91]. The framework implemented by Tsai et al. [113] detects physical activity by fusing data from several sensors embedded in the mobile device and by using crowdsourcing; it builds on methods that merge classical statistical detection and estimation theory, and uses value fusion and decision fusion of human sensor data and physical sensor data.

In [114], Lee and Chung created a system with a data fusion approach based on several discrete data types (e.g., eye features, bio-signal variation, in-vehicle temperature or vehicle speed), implemented on an Android smartphone, allowing high resolution and flexibility. The study involves different sensors, including video, ECG, photoplethysmography, temperature and a three-axis accelerometer, which are assigned as input sources to an inference analysis framework. A fuzzy Bayesian network includes the eye features, the bio-signal extraction and the feature measurement method.

In [81], a sensor weighted network classifier (SWNC) model is proposed, which is composed of three classification levels. A set of binary activity classifiers constitutes the first classification level of the proposed model. The second classification level is defined by node classifiers, which are decision-making models. The decisions of several base classifiers are combined through a class-dependent weighting fusion scheme, and the weighted decisions obtained by each node classifier are then fused in the proposed model. Finally, the last classification level has a structure similar to that of the second classification level. Independently of the level of noise imposed, when the mobile device stays in a static position during the data acquisition process, a performance of up to 60% can be achieved with the proposed method.
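The class-dependent weighting idea can be illustrated with a weighted vote in which each base classifier has a separate weight per class, for example its precision for that class on validation data. The sketch below is a hypothetical simplification, not the SWNC model of [81]; it shows how such weights let one reliable classifier outvote a majority of less reliable ones.

```python
def weighted_decision_fusion(decisions, weights):
    """decisions: one predicted label per base classifier.
    weights: one dict per classifier mapping label -> weight for that label
    (class-dependent, e.g. per-class precision on validation data)."""
    if len(decisions) != len(weights):
        raise ValueError("one weight table per classifier is required")
    scores = {}
    for label, w in zip(decisions, weights):
        scores[label] = scores.get(label, 0.0) + w.get(label, 0.0)
    return max(scores, key=scores.get)

# Hypothetical per-class weights for three base classifiers.
weights = [
    {"walk": 0.9, "run": 0.4},   # reliable when it says "walk"
    {"walk": 0.5, "run": 0.3},
    {"walk": 0.6, "run": 0.35},
]
print(weighted_decision_fusion(["walk", "run", "run"], weights))  # walk
```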

Chen et al. [115] and Sashima et al. [116] created client-server platforms that monitor the activities in a space, requiring a constant connection to a server. This causes high energy consumption and reduces the capability of the mobile devices to detect the activities performed.

Another important measurement with smartphone sensors is the orientation of the mobile device. Ayub et al. [117] implemented the DNRF (drift and noise removal filter) with a sensor fusion of gyroscope, magnetometer and accelerometer data to minimize the drift and noise in the output orientation.
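The DNRF of [117] is not detailed here, so the sketch below uses a different and simpler technique, a complementary filter, to illustrate how gyroscope and accelerometer data can be fused to limit drift in an orientation angle. The integrated gyroscope rate provides short-term accuracy, and the gravity direction measured by the accelerometer pulls the estimate back over the long term; the blending factor `alpha` and the gyroscope bias are assumed values. A magnetometer-derived heading can correct yaw in the same way.

```python
import math

def roll_from_accel(ay, az):
    # Roll angle (rad) from the gravity direction; valid when the device
    # is not undergoing significant linear acceleration.
    return math.atan2(ay, az)

def complementary_filter(gyro_rates, accel_samples, dt, alpha=0.98, angle0=0.0):
    """Blend the integrated gyroscope rate (short term) with the
    accelerometer roll angle (long term); alpha is an assumed value."""
    angle = angle0
    history = []
    for omega, (ay, az) in zip(gyro_rates, accel_samples):
        angle = alpha * (angle + omega * dt) + (1.0 - alpha) * roll_from_accel(ay, az)
        history.append(angle)
    return history

# Device lying flat for 10 s; the gyroscope has a constant bias of 0.01 rad/s.
dt, n = 0.01, 1000
fused = complementary_filter([0.01] * n, [(0.0, 9.81)] * n, dt)
gyro_only = 0.01 * dt * n  # pure integration drifts to about 0.1 rad
print(round(fused[-1], 4), gyro_only)
```

With these values the fused angle settles at about 0.005 rad instead of drifting without bound, which is the behaviour an orientation filter is meant to provide.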

Other systems have been implemented for gesture recognition. Zhu et al. [118] proposed a high-accuracy human gesture recognition system based on multiple motion sensor fusion. The method reduces the energy overhead resulting from frequent sensor sampling and data processing with a highly energy-efficient very large-scale integration (VLSI) architecture. The system achieved an average accuracy over 10 gestures of 93.98% for the user-independent case and 96.14% for the user-dependent case.

As presented in Section 2.4, decentralized systems, cloud-based systems or server-side systems are used for data processing, and data fusion is the next stage. In this context, van de Ven et al. [119] presented the Complete Ambient Assisted Living Experiment (CAALYX) system, which provides continuous monitoring of people’s health. It includes software installed on the mobile phone that uses data fusion for decision support to trigger additional measurements, classify health conditions or schedule future observations.

In [120], Chen et al. presented a decentralized data fusion and active sensing (D²FAS) algorithm for mobile sensors to actively explore the road network to gather and assimilate the most informative data for predicting the traffic phenomenon.

Zhao et al. [121,122] developed the COUPON (Cooperative Framework for Building Sensing Maps in Mobile Opportunistic Networks) framework, a novel cooperative sensing and data forwarding framework to build sensing maps that satisfy a specific sensing quality with low delay and energy consumption. This framework implements two cooperative forwarding schemes by leveraging data fusion: epidemic routing with fusion (ERF) and binary spray-and-wait with fusion (BSWF). It considers that packets are spatiotemporally correlated in the forwarding process and derives the dissemination law of correlated packets. The work demonstrates that the cooperative sensing scheme can reduce the number of samplings by 93% compared to the non-cooperative scheme; ERF can reduce the transmission overhead by 78% compared to epidemic routing (ER); and BSWF can increase the delivery ratio by 16% and reduce the delivery delay and transmission overhead by 5% and 32%, respectively, compared to binary spray-and-wait (BSW).

In [123], a new type of sensor node with modular and reconfigurable characteristics is described, composed of a main board, with a processor, FR (Frequency Response) circuits and a power supply, as well as an expansion board. A software component was created to integrate all of the sensor data, proposing an adaptive processing mechanism.

In [124], the C-SPINE (Collaborative-Signal Processing in Node Environment) framework is proposed, which uses multi-sensor data fusion among CBSNs (Collaborative Body Sensor Networks) to enable joint data analysis, such as filtering, time-dependent data integration and classification. Based on a multi-sensor data fusion schema, it performs automatic detection of handshakes between two individuals and captures possible heart rate-based emotional reactions due to individuals meeting.

A wireless, wearable, multi-sensor system for locomotion mode recognition is described in [125], with three inertial measurement units and eight force sensors, measuring both kinematic and dynamic signals of the human gait. The system uses a linear discriminant analysis classifier, obtaining good results for motion mode recognition during the stance phase, during the swing phase and for sit-to-stand transition recognition.

Chen [126] developed an algorithm for data fusion to track both non-manoeuvring and manoeuvring targets with mobile sensors deployed in a wireless sensor network (WSN). It applies the gating technique to solve the problem of mobile-sensor data fusion tracking (MSDF) for targets. In the WSN, an adaptive filter (Kalman filter) is also used, together with a data association technique denoted as one-step conditional maximum likelihood.
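Gating discards measurements that are statistically too far from a track's prediction before data association. The sketch below is a generic validation gate based on the squared Mahalanobis distance of the innovation, followed by selection of the most likely gated measurement; it is an assumption-based illustration, not Chen's exact formulation. The predicted measurement `z_pred` and innovation covariance `S` (equal to H P Hᵀ + R) are assumed to come from the tracker's Kalman prediction, and `gamma = 9.21` is the 99% chi-square quantile for two degrees of freedom.

```python
def inverse_2x2(m):
    (a, b), (c, d) = m
    det = a * d - b * c
    if det == 0:
        raise ValueError("singular innovation covariance")
    return ((d / det, -b / det), (-c / det, a / det))

def mahalanobis_sq(nu, s_inv):
    # nu^T S^-1 nu for a 2-D innovation vector nu.
    x, y = nu
    return x * (s_inv[0][0] * x + s_inv[0][1] * y) + y * (s_inv[1][0] * x + s_inv[1][1] * y)

def gate_and_associate(candidates, z_pred, S, gamma=9.21):
    """Keep measurements inside the validation gate d^2 <= gamma and return
    (index, d^2) of the most likely one, or (None, None) if none pass."""
    s_inv = inverse_2x2(S)
    best, best_d2 = None, None
    for idx, z in enumerate(candidates):
        d2 = mahalanobis_sq((z[0] - z_pred[0], z[1] - z_pred[1]), s_inv)
        # With a shared Gaussian S, the smallest d^2 is the largest likelihood.
        if d2 <= gamma and (best_d2 is None or d2 < best_d2):
            best, best_d2 = idx, d2
    return best, best_d2

S = ((2.0, 0.0), (0.0, 2.0))
print(gate_and_associate([(10.0, 0.0), (1.0, 1.0), (0.5, 0.0)], (0.0, 0.0), S))  # (2, 0.125)
```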

Other studies have focused on sensor fusion for indoor navigation [127,128]. Saeedi et al. [127] propose a context-aware personal navigation system (PNS) for outdoor personal navigation using a smartphone. It uses low-cost sensors in a multi-level fusion scheme to improve the accuracy and robustness of the context-aware navigation system [127]. The system addresses several challenges, such as context acquisition, context understanding and context-aware application adaptation, and it is mainly used to recognize people's activities [127]. It uses the accelerometer, gyroscope and magnetometer sensors and the GPS receiver available in off-the-shelf mobile devices to detect and recognize the motion and orientation of the device, as well as the location and context of the data acquisition [127]. The system includes a feature-level fusion scheme to recognize context information, which is applied after the data are processed and the signal's features are extracted [127].

Bhuiyan et al. [128] evaluate the performance of several multi-sensor data fusion methods based on a Bayesian framework. A Bayesian framework consists of two steps [128]: prediction and correction. In the prediction stage, the current state is predicted from the previous state and the system dynamics [128]. In the correction stage, the prediction is updated with the new measurements [128]. In [128], the authors studied different combinations of system models and data fusion methods, such as a linear system with the Kalman filter and a non-linear system with the extended Kalman filter, and implemented several sensor data fusion systems with good results.
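The prediction/correction cycle described above can be sketched for its simplest linear case: a scalar Kalman filter with a random-walk state model that fuses two sensors of different noise levels by applying one correction per sensor. The noise values (q, r_a, r_b), the initial state and the sequential-update structure are assumptions for illustration, not the configurations evaluated in [128].

```python
from typing import Sequence, Tuple


def kf_predict(x: float, p: float, q: float) -> Tuple[float, float]:
    """Prediction: random-walk model x_k = x_{k-1} + w, with w ~ N(0, q)."""
    return x, p + q


def kf_correct(x: float, p: float, z: float, r: float) -> Tuple[float, float]:
    """Correction: blend the prediction with a measurement z ~ N(x, r)."""
    k = p / (p + r)  # Kalman gain: trust in the measurement relative to the prediction
    return x + k * (z - x), (1.0 - k) * p


def run(meas_a: Sequence[float], meas_b: Sequence[float], q: float = 0.01,
        r_a: float = 1.0, r_b: float = 4.0, x0: float = 0.0,
        p0: float = 100.0) -> Tuple[float, float]:
    """Fuse two synchronized measurement streams; returns the final estimate and variance."""
    x, p = x0, p0
    for z_a, z_b in zip(meas_a, meas_b):
        x, p = kf_predict(x, p, q)
        x, p = kf_correct(x, p, z_a, r_a)  # fuse sensor A (less noisy)
        x, p = kf_correct(x, p, z_b, r_b)  # fuse sensor B (noisier, smaller gain)
    return x, p


x, p = run([5.0] * 20, [5.0] * 20)
print(x, p)
```

Applying the corrections one after another is equivalent to a single update with both measurements when their noises are independent, and it keeps every operation scalar, which suits low-power devices.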

In [129], a lightweight, high-level fusion algorithm to detect the daily activities that an individual performs is presented. The proposed algorithm is designed to allow the implementation of a context-aware application installed on a mobile device, working with minimum computational cost. The quality of the estimation of the ADLs depends on the presence of biometric information and on the position and number of available inertial sensors. With the proposed algorithm, the best estimation obtained for continuous physical activities is approximately 90%.
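The fusion rule of [129] is not specified here; a common lightweight form of high-level (decision-level) fusion is a weighted sum of the class probabilities reported by each available source. This also shows why the result depends on which sensors are present: a missing source simply drops out of the sum. The source names, weights and probabilities below are hypothetical.

```python
from typing import Dict, Optional

Probs = Dict[str, float]


def fuse_decisions(sources: Dict[str, Optional[Probs]], weights: Dict[str, float]) -> str:
    """Weighted sum of per-source class probabilities; unavailable sources are skipped."""
    totals: Dict[str, float] = {}
    for name, probs in sources.items():
        if probs is None:  # source unavailable, e.g. no biometric sensor worn
            continue
        w = weights.get(name, 1.0)
        for activity, prob in probs.items():
            totals[activity] = totals.get(activity, 0.0) + w * prob
    if not totals:
        raise ValueError("no source available for fusion")
    return max(totals, key=totals.get)


weights = {"wrist_imu": 1.0, "hip_imu": 2.0, "heart_rate": 1.5}
inertial_only = {"wrist_imu": {"walking": 0.6, "running": 0.4},
                 "hip_imu": {"walking": 0.3, "running": 0.7},
                 "heart_rate": None}
with_biometrics = dict(inertial_only, heart_rate={"walking": 0.9, "running": 0.1})
print(fuse_decisions(inertial_only, weights))    # running
print(fuse_decisions(with_biometrics, weights))  # walking
```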

The CHRONIOUS system was developed for smart devices as a decision support system that integrates a classification system with two parallel classifiers: an expert system (rule-based system) and a supervised classifier, such as an SVM, random forests, an ANN (e.g., the multi-layer perceptron), decision trees or naive Bayes [130]. Other systems for the recognition of ADLs have also been implemented using other classifiers during the data fusion process, e.g., decision tables.

Martín et al. [131] evaluated the accuracy, computational cost and memory footprint of the classifiers mentioned above, working with different sensor data and different optimizations. The system implementing these classifiers uses data from different sensors, such as the accelerometer, gravity, linear acceleration, magnetometer and gyroscope sensors, with good results [131]. Other systems were implemented using lightweight methods for sensor data fusion, e.g., the KNOWME system [132], which implements the autoregressive-correlated Gaussian model for data classification and data fusion.

Other research studies focused on compensating for the ego-motion of the camera by fusing the output of the Viola and Jones face detector with inertial sensor data, reporting good results [133].

In [134], sensor fusion is used for error detection, fusing the ECG with the blood pressure signal, the blood pressure with the body temperature and the acceleration data with the ECG.
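One simple way such cross-signal fusion can expose errors is a redundancy check: the ECG and the blood pressure waveform both carry the heart rhythm, so the rates derived from each should agree. The sketch below assumes that beat timestamps (ECG R-peaks and blood pressure pulse peaks) have already been detected; the function names and the 10% relative tolerance are hypothetical and are not taken from [134].

```python
from typing import Sequence


def rate_bpm(beat_times_s: Sequence[float]) -> float:
    """Mean rate in beats per minute from beat timestamps in seconds."""
    if len(beat_times_s) < 2:
        raise ValueError("need at least two beats")
    intervals = [b - a for a, b in zip(beat_times_s, beat_times_s[1:])]
    return 60.0 / (sum(intervals) / len(intervals))


def rates_disagree(ecg_r_peaks: Sequence[float], bp_pulse_peaks: Sequence[float],
                   tolerance: float = 0.10) -> bool:
    """True when the ECG- and blood-pressure-derived rates differ by more than `tolerance`."""
    hr_ecg = rate_bpm(ecg_r_peaks)
    hr_bp = rate_bpm(bp_pulse_peaks)
    return abs(hr_ecg - hr_bp) / hr_ecg > tolerance


ecg = [0.0, 1.0, 2.0, 3.0]      # 60 bpm
bp_ok = [0.2, 1.2, 2.2, 3.2]    # 60 bpm, delayed by the pulse transit time
bp_bad = [0.2, 0.7, 1.2, 1.7]   # 120 bpm, e.g. a motion artefact counted as beats
print(rates_disagree(ecg, bp_ok), rates_disagree(ecg, bp_bad))  # False True
```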

In [135], Jin et al. proposed a robust dead-reckoning (DR) pedestrian tracking system for use with off-the-shelf sensors. They formulate robust tracking as a generalized maximum a posteriori (MAP) sensor fusion problem and then, under certain assumptions, narrow it to a simple computation procedure, reducing the average tracking error by up to 73.7% compared with traditional DR tracking methods.
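Under Gaussian assumptions a MAP fusion problem has a closed-form solution, which illustrates how such a formulation can collapse into a cheap procedure. The sketch below is a simplified, assumed illustration, not the formulation of [135]: a dead-reckoning step propagates the position from step length and heading, and the result, treated as a Gaussian prior with an isotropic variance, is fused with an absolute position fix. For a Gaussian prior and a Gaussian measurement, the MAP estimate is the inverse-variance weighted mean.

```python
import math
from typing import Tuple

Point = Tuple[float, float]


def dr_step(pos: Point, step_length_m: float, heading_rad: float) -> Point:
    """Dead-reckoning propagation; heading is measured from the x axis (assumed convention)."""
    return (pos[0] + step_length_m * math.cos(heading_rad),
            pos[1] + step_length_m * math.sin(heading_rad))


def map_fuse(prior: Point, prior_var: float, fix: Point, fix_var: float) -> Tuple[Point, float]:
    """MAP estimate for prior N(prior, prior_var*I) and fix z ~ N(x, fix_var*I).

    The posterior is Gaussian, so its mode is the inverse-variance weighted mean.
    """
    w = prior_var / (prior_var + fix_var)  # weight given to the absolute fix
    mean = (prior[0] + w * (fix[0] - prior[0]), prior[1] + w * (fix[1] - prior[1]))
    return mean, prior_var * fix_var / (prior_var + fix_var)


pos = dr_step((0.0, 0.0), 0.7, 0.0)             # (0.7, 0.0)
est, var = map_fuse(pos, 1.0, (1.0, 0.0), 1.0)
print(est, var)                                  # approximately (0.85, 0.0) and 0.5
```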

Table 6. Examples of sensor data fusion methods.

| Sensors | Methods | Achievements |
|---|---|---|
| Accelerometer; Gyroscope; Magnetometer; Compass; GPS receiver; Bluetooth; Wi-Fi; digital camera; microphone; RFID readers; IR camera | DR pedestrian tracking system; Autoregressive-Correlated Gaussian Model; CASanDRA mobile OSGi framework; Genetic Algorithms; Fuzzy Logic; Dempster-Shafer; Evidence Theory; Recursive Operators; DTW framework; CHRONIOUS system; SVM; Random Forests; ANN; Decision Trees; Naive Bayes; Decision Tables; Bayesian analysis | The use of several sensors reduces the noise effects; these methods also evaluated the accuracy of sensor data fusion; the data fusion may be performed with mobile applications that access the sensors' data as a background process, process the data and show the results in a readable format |
| Accelerometer; Gyroscope; Magnetometer; Compass; GPS receiver; Bluetooth; Wi-Fi; digital camera; microphone; low-cost wireless EEG sensors; RFID readers; IR camera | Kalman Filtering; C-SPINE framework; DNRF method; SWNC model; GATING technique; COUPON framework; CAALYX system; high energy-efficient very large-scale integration (VLSI) architecture; sensor-fusion-based wireless walking-in-place (WIP) interaction technique; J3 criterion; DFF method; ERF method; BSWF method; inContexto system; RMS frame energy; MFCC method; pitch frequency; HNR method; ZCR method; KNN; Least squares-based estimation methods; Optimal Theory; Regularization; Uncertainty Ellipsoids | These methods allow complex processing of the large amount of data acquired, because the data are processed centrally in a server-side system; using data from several sources decreases the uncertainty level of the output; performing the data fusion process in real time can be difficult because of the large amount of data that may need to be fused; the data fusion may be performed with mobile applications that access the sensors' data as a background process, process the data and show the results in a readable format or pass the results or the data to a central repository or central processing machine for further processing |
| Gyroscope; Compass; Magnetometer; GPS receiver | Kalman Filtering; Bayesian analysis | Mainly useful for context-aware localization systems; several recognizer algorithms were defined to perform online temporal fusion on either the raw data or the features |
| ECG and others | Kalman Filtering | Using data from several sources decreases the uncertainty level of the output; several recognizer algorithms were defined to perform online temporal fusion on either the raw data or the features |

In [136], Grünerbl et al. developed a smartphone-based system for recognizing states and state changes in bipolar disorder patients. They implemented an optimized state change detection and developed several fusion methods with different strategies, such as logical AND, logical OR and their own weighted fusion, obtaining results with good accuracy.
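The fusion strategies mentioned above can be contrasted on binary state-change decisions produced by individual sensing modalities. In the sketch below, the modality names, weights and the 0.5 threshold are hypothetical, and the weighted rule is a generic weighted vote rather than the specific weighted fusion of [136].

```python
from typing import Dict


def fuse_and(votes: Dict[str, bool]) -> bool:
    """Report a state change only if every modality detects one (few false alarms)."""
    return all(votes.values())


def fuse_or(votes: Dict[str, bool]) -> bool:
    """Report a state change if any modality detects one (few missed changes)."""
    return any(votes.values())


def fuse_weighted(votes: Dict[str, bool], weights: Dict[str, float],
                  threshold: float = 0.5) -> bool:
    """Report a change if the weight share of agreeing modalities reaches the threshold."""
    total = sum(weights[m] for m in votes)
    score = sum(weights[m] for m, detected in votes.items() if detected)
    return score / total >= threshold


weights = {"gps": 0.5, "acceleration": 0.2, "voice": 0.3}
votes = {"gps": True, "acceleration": False, "voice": True}
print(fuse_and(votes), fuse_or(votes), fuse_weighted(votes, weights))  # False True True
```

AND favours precision and OR favours recall; a weighted vote sits between them and lets more reliable modalities dominate.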

Table 6 summarizes the sensor data fusion techniques, their achievements and the sensors used. The table also shows that different methods can be applied to different types of data collected by different sensors.

In conclusion, sensor fusion techniques for mobile devices are similar to those employed with other external sensors, because the applications usually involve embedded and external sensors at the same time. The Kalman filter is the most commonly used technique, but these devices call for techniques with low processing requirements. Some research applications need a high processing capacity; in these cases, the mobile device is used only to capture the data, which are then sent to a server for later processing. The most important criterion for choosing a data fusion method should therefore be the limited capacity of mobile devices, as these devices make it possible to collect physiological data anywhere and at any time at low cost.

5. Conclusions

Data acquisition, data processing and data imputation on each sensor data stream, followed by multi-sensor data fusion, form the proposed roadmap to achieve a concrete task, which, as discussed in this paper, is the identification of activities of daily living. While these steps pose little challenge in fixed computational environments, where resources are virtually unlimited, executing them on off-the-shelf mobile devices raises a new type of challenge due to the restrictions of the computational environment.

The use of mobile sensors requires a set of techniques to classify and process the acquired data, so that they become usable by software components and the execution of specific tasks can be automated. Data pre-processing and data cleaning are performed at the start of the characterization of the sensors' data. The collected data may contain inconsistent and/or unusable values, normally called environmental noise, and the application of filters helps remove them. The most commonly used filters are the Kalman filter and its variants, as these methods are often reported to have good accuracy. It must be noted, however, that the measured accuracy of a Kalman filter depends on a correct definition of the important features of the collected data. Therefore, and because it is difficult to assess the accuracy of one particular type of Kalman filter, especially for the identification of activities of daily living, it is advisable to explore different sensor data fusion techniques. The main methods for identifying the different features of the sensor signal are machine learning and pattern recognition techniques.

Sensor fusion methods are normally classified into four large groups: probabilistic methods, statistical methods, knowledge-based theory methods and evidence reasoning methods. Although several methods have been studied and discussed in this paper, the choice of the best method for each purpose depends on the quantity and types of sensors used, on the diversity in the representation of the data, on the calibration of the sensors, on the limited interoperability of the sensors, on the constraints in the statistical models and, finally, on the limitations of the implemented algorithms.
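As an example of the evidence reasoning group, Dempster's rule of combination (Dempster-Shafer theory, listed in Table 6) fuses belief masses from two independent sources: masses are multiplied, products that fall on an empty intersection are counted as conflict K, and the remaining masses are renormalized by 1 - K. The activity labels and mass values below are hypothetical, e.g. an accelerometer-based and a GPS speed-based recognizer.

```python
from itertools import product
from typing import Dict, FrozenSet

Mass = Dict[FrozenSet[str], float]


def dempster_combine(m1: Mass, m2: Mass) -> Mass:
    """Dempster's rule: multiply masses, keep non-empty intersections, renormalize by 1 - K."""
    fused: Mass = {}
    conflict = 0.0  # K: total mass assigned to empty intersections
    for (a, wa), (b, wb) in product(m1.items(), m2.items()):
        inter = a & b
        if inter:
            fused[inter] = fused.get(inter, 0.0) + wa * wb
        else:
            conflict += wa * wb
    if 1.0 - conflict <= 1e-12:
        raise ValueError("sources are in total conflict")
    return {s: w / (1.0 - conflict) for s, w in fused.items()}


THETA = frozenset({"walking", "running", "sitting"})  # frame of discernment
W = frozenset({"walking"})
R = frozenset({"running"})
WR = frozenset({"walking", "running"})
m_acc = {W: 0.6, WR: 0.3, THETA: 0.1}
m_gps = {R: 0.5, WR: 0.4, THETA: 0.1}
fused = dempster_combine(m_acc, m_gps)
print(round(fused[W], 3), round(fused[R], 3), round(fused[WR], 3))
```

Unlike a Bayesian update, mass can stay on sets such as {walking, running}, so a source can express ignorance instead of being forced to split its belief.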

Sensors available in off-the-shelf mobile devices can support the implementation of sensor fusion techniques and improve the reliability of the algorithms created for these devices. However, mobile devices have limited processing capacity, memory and battery autonomy. Nevertheless, sensor data fusion may be performed by mobile applications that access the sensors' data as a background task, process the collected data and either show the results to the user in a readable format or send the results or the data to a central repository or central processing machine.

The sensor and multi-sensor data fusion techniques presented in this paper serve different purposes, including the detection/identification of activities of daily living and other medical applications. For off-the-shelf mobile devices, the positioning of the device during the data acquisition process is an additional challenge in itself, as the accuracy, precision and usability of the obtained data also depend on the sensors' location. Therefore, these systems must be expected to fail sometimes solely because of poor mobile device positioning.

Several research studies have addressed sensor fusion techniques applied to the sensors available in off-the-shelf mobile devices, but most of them only detect basic activities, such as walking. Due to the large market of mobile devices, e.g., smartphones, tablets or smartwatches, ambient assisted living applications on these platforms have become relevant for a variety of purposes, including telemedicine, monitoring of elderly people, monitoring of sport performance and other medical, recreational, fitness or leisure activities. Moreover, the identification of a wide range of activities of daily living is a milestone in the process of building a personal digital life coach.

The applicability of sensor data fusion techniques for mobile platforms is therefore dependent on the variable characteristics of the mobile platform itself, as these are very diverse in nature and features, from local storage capability to local processing power, battery life or types of communication protocols. Nevertheless, experimenting with the algorithms and techniques described previously, so as to adapt them to a set of usage scenarios and a class of mobile devices, will render these techniques usable in most mobile platforms without major impairments.