Abstract

Compressed sensing (CS) theory has attracted widespread attention in recent years and has been widely used in signal and image processing, for example in underdetermined blind source separation (UBSS) and magnetic resonance imaging (MRI). As the core step of CS, sparse signal reconstruction aims to recover the original signal accurately and efficiently from an underdetermined linear system of equations (ULSE). For this problem, we propose a new algorithm called the weighted regularized smoothed $\ell_0$-norm minimization algorithm (WReSL0). Within the framework of this algorithm, we make three contributions: (1) we propose a new smoothed function called the compound inverse proportional function (CIPF); (2) we propose a new weighted function; and (3) we derive and construct a new regularization form. In this algorithm, the weighted function and the new smoothed function are combined as the sparsity-promoting objective, and the new regularization form enhances de-noising performance. Simulation experiments on both a real signal and real images show that the proposed WReSL0 algorithm outperforms other popular approaches, such as SL0, BPDN, NSL0, and $L_p$-RLS, and achieves better performance when it is used for UBSS.

1. Introduction

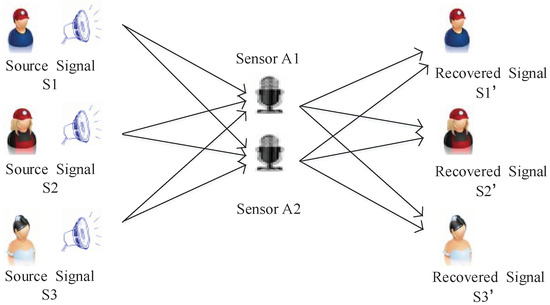

The problem that UBSS [1,2] needs to address is how to separate multiple source signals using a small number of sensors. The essence of this problem is to find the optimal solution of an underdetermined linear system of equations (ULSE). Fortunately, as a new undersampling technique, compressed sensing (CS) [3,4,5] is an effective way to solve the ULSE, which makes it possible to apply CS to UBSS.

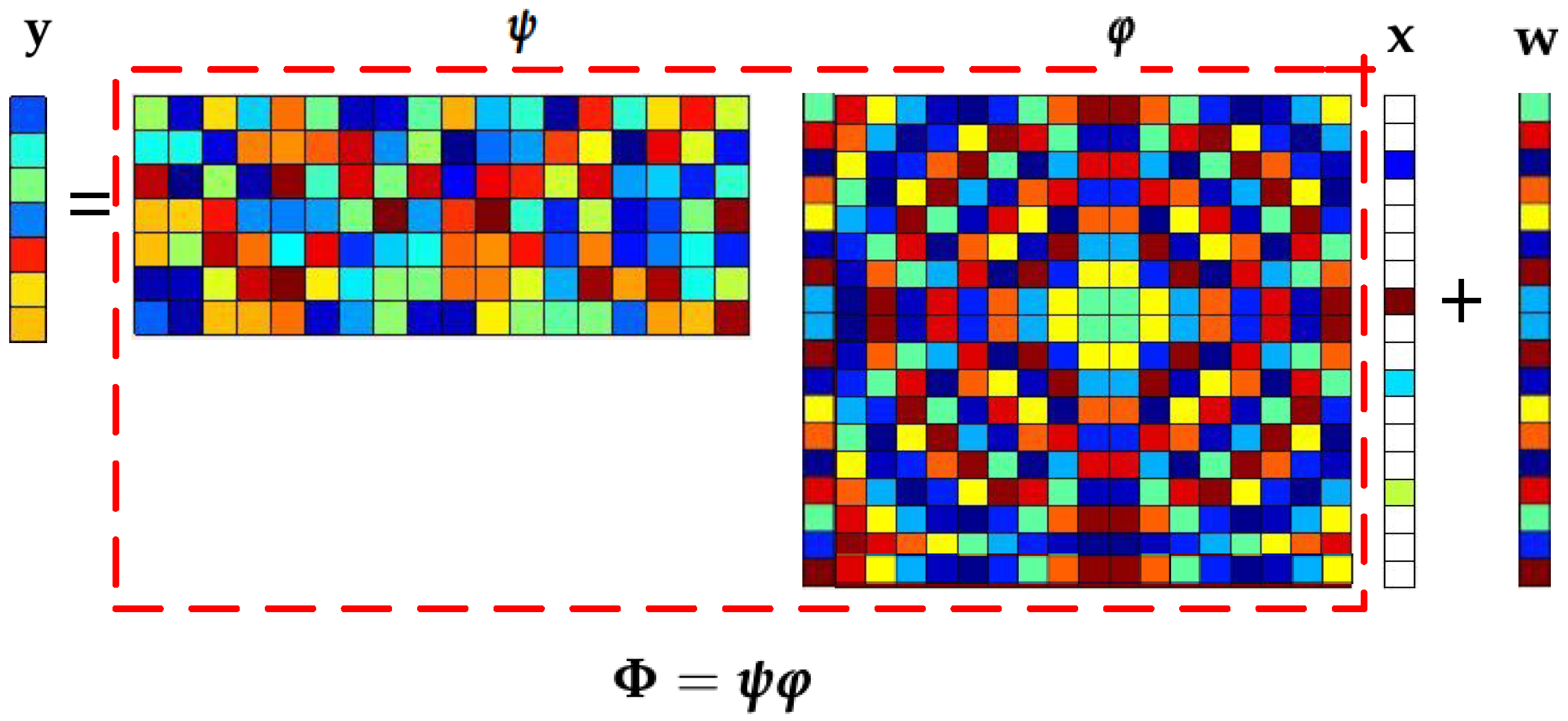

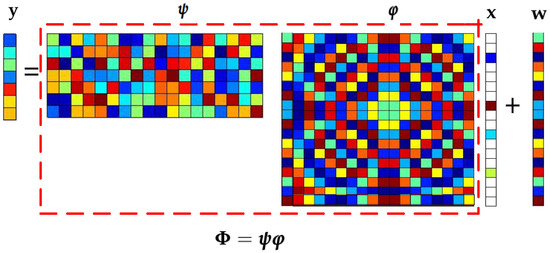

The model of CS is shown in Figure 1. According to this figure, it can be seen that CS boils down to the form

$$y = \Phi x + b, \qquad (1)$$

where $\Phi \in \mathbb{R}^{m \times n}$ is a sensing matrix with $m \ll n$, which can be further represented as the product of a random matrix and a sparse basis matrix, $x \in \mathbb{R}^{n}$ is the sparse signal, and $y \in \mathbb{R}^{m}$ is the vector of measurements. Moreover, $b \in \mathbb{R}^{m}$ denotes the additive noise.

Figure 1.

Frame of compressed sensing (CS).

To solve the ULSE in Equation (1), we try to recover the sparse signal $x$ from the given $\{y, \Phi\}$ by CS. According to CS, this problem is transformed into solving the $\ell_0$-norm minimization problem

$$\min_{x} \|x\|_0 \quad \text{s.t.} \quad \|y - \Phi x\|_2 \le \epsilon, \qquad (2)$$

where $\epsilon$ denotes the error. This approach is supported by theory [6]. Based on this theory, in the noiseless case, it is proven that the sparsest solution is indeed the real signal when $x$ is sufficiently sparse and $\Phi$ satisfies the restricted isometry property (RIP) [7]:

$$(1 - \delta_K)\|x\|_2^2 \le \|\Phi x\|_2^2 \le (1 + \delta_K)\|x\|_2^2, \qquad (3)$$

where $K$ is the sparsity of signal $x$ and $\delta_K \in (0, 1)$ is a constant. In Equation (2), the $\ell_0$-norm is nonsmooth, which leads to an NP-hard problem. In practice, two alternative approaches are usually employed to solve the problem [8]:

- Greedy search by using the known sparsity as a constraint;

- The relaxation method for the $\ell_0$-norm problem.

For greedy search, the main methods are based on greedy matching pursuit (GMP) algorithms, such as orthogonal matching pursuit (OMP) [9,10], stage-wise orthogonal matching pursuit (StOMP) [11], regularized orthogonal matching pursuit (ROMP) [12], compressive sampling matching pursuit (CoSaMP) [13], generalized orthogonal matching pursuit (GOMP) [14,15], and subspace pursuit (SP) [16,17] algorithms. The objective function of these algorithms is given by:

$$\hat{x} = \arg\min_{x} \|y - \Phi x\|_2^2 \quad \text{s.t.} \quad \|x\|_0 \le K. \qquad (4)$$

As shown in the above equation, the features of GMP algorithms can be summarized as:

- Using sparsity as prior information;

- Using the least squares error as the iterative criterion.

The advantage of GMP algorithms is their low computational complexity; however, their reconstruction accuracy is not high in the noisy case.
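The two features listed above (sparsity as prior information, least squares error as the iterative criterion) can be illustrated with a minimal sketch of orthogonal matching pursuit. The function name `omp`, the variable names, and the small random test problem are illustrative assumptions, not the implementation used in the cited works.

```python
import numpy as np

def omp(Phi, y, K):
    """Minimal orthogonal matching pursuit: estimate a K-sparse x from y ~ Phi @ x."""
    n = Phi.shape[1]
    residual = y.copy()
    support = []
    x = np.zeros(n)
    for _ in range(K):
        # Pick the column most correlated with the current residual.
        correlations = np.abs(Phi.T @ residual)
        correlations[support] = 0.0  # never reselect a chosen column
        support.append(int(np.argmax(correlations)))
        # Least squares fit restricted to the selected columns.
        coef, *_ = np.linalg.lstsq(Phi[:, support], y, rcond=None)
        residual = y - Phi[:, support] @ coef
    x[support] = coef
    return x

# Hypothetical noiseless test problem: 40 measurements, length-100 signal, 3 nonzeros.
rng = np.random.default_rng(0)
m, n, K = 40, 100, 3
Phi = rng.standard_normal((m, n)) / np.sqrt(m)
x_true = np.zeros(n)
x_true[[5, 37, 80]] = [1.5, -2.0, 1.0]
y = Phi @ x_true
x_hat = omp(Phi, y, K)
```

The sparsity `K` enters only as the number of iterations, and each iteration is a plain least squares problem, which is why such methods are cheap but carry no explicit mechanism for handling measurement noise.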

At present, the relaxation method for the $\ell_0$-norm problem is widely used. Relaxation methods fall into two main categories: constraint-type algorithms and regularization methods. Constraint-type algorithms can be further divided into $\ell_1$-norm minimization methods and smoothed $\ell_0$-norm minimization methods. The representative algorithm of the former is the basis pursuit (BP) algorithm [18], and that of the latter is the smoothed $\ell_0$-norm minimization (SL0) algorithm. For the SL0 algorithm, the objective function can be expressed as:

where is a smoothed function, which approximates the -norm when . Compared with or , a small is selected to make the function close to -norm [8]; therefore, are closer to the optimal solution.

Based on the idea of approximation, Mohimani used a Gauss function to approximate the $\ell_0$-norm [19], which is described as:

According to the equation, we can know:

when $\sigma$ is a small enough positive value, the Gauss function is almost equal to the $\ell_0$-norm. Furthermore, the Gauss function is smooth and differentiable; hence, it can be optimized by methods such as gradient descent (GD). Zhao proposed another smoothed function, the hyperbolic tangent (tanh) [20],

With the same $\sigma$, this smoothed function approximates the $\ell_0$-norm more closely than the Gauss function used in [19]; hence, it performs better in sparse signal recovery. Indeed, a large number of simulation experiments confirmed this view.
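The behavior of the two smoothed functions can be checked numerically. The sketch below assumes the commonly cited kernels $1 - e^{-x_i^2/(2\sigma^2)}$ (Gauss) and $\tanh(x_i^2/(2\sigma^2))$ (tanh), summed over the entries; the exact scaling of the equations in this paper may differ, and the function names and test vector are hypothetical.

```python
import numpy as np

def l0_gauss(x, sigma):
    # Each entry contributes 1 - exp(-x_i^2 / (2 sigma^2)): about 0 for x_i = 0, about 1 otherwise.
    return float(np.sum(1.0 - np.exp(-x**2 / (2.0 * sigma**2))))

def l0_tanh(x, sigma):
    # Each entry contributes tanh(x_i^2 / (2 sigma^2)).
    return float(np.sum(np.tanh(x**2 / (2.0 * sigma**2))))

x = np.array([0.0, 0.0, 0.5, -1.2, 0.0, 2.0])  # three nonzero entries
for sigma in (1.0, 0.1, 0.01):
    print(sigma, l0_gauss(x, sigma), l0_tanh(x, sigma))
```

Both estimates approach the true count of nonzero entries as $\sigma$ shrinks. For a fixed $\sigma$, the tanh kernel is never smaller than the Gauss kernel at the same argument, since $\tanh(t) - (1 - e^{-t}) = u(1-u)^2/(1+u^2) \ge 0$ with $u = e^{-t}$, so it sits closer to 1 on nonzero entries.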

Another relaxation method is the regularization method. For CS, sparse signal recovery in the noise case is a very practical and unavoidable problem. Fortunately, the regularization method makes the solution of this problem possible [21,22]. The regularization method can be described as a “relaxation” approach that tries to solve the following unconstrained recovery problem:

where is the parameter that balances the trade-off between the deviation term and the sparsity regularizer . The sparse prior information is enforced via the regularizer , and a proper is crucial to the success of the sparse signal recovery task: it should favor sparse solutions and make sure the problem can be solved efficiently in the meantime.

For regularization, various sparsity regularizers have been proposed as relaxations of the $\ell_0$-norm. The most popular regularizers are the convex $\ell_1$-norm [22,23] and the nonconvex $\ell_p$-norm to the power $p$ [24,25]. In the noiseless case, the $\ell_1$-norm is equivalent to the $\ell_0$-norm, and the $\ell_1$-norm is the only norm with both sparsity and convexity. Hence, it can be optimized by convex optimization methods. However, according to [8], in the noisy case, the $\ell_1$-norm is not exactly equivalent to the $\ell_0$-norm, so the effect of promoting sparsity is not obvious. Compared to the $\ell_1$-norm, the nonconvex $\ell_p$-norm to the power $p$ makes a closer approximation to the $\ell_0$-norm; therefore, $\ell_p$-norm minimization has a better sparse recovery performance [8].

In view of the above, this paper proposes a compound inverse proportional function (CIPF) as a new smoothed function, together with a new weighted function to promote sparsity. For the noisy case, a new regularization form is derived and constructed to enhance de-noising performance. Simulation experiments verify the superior performance of this algorithm in signal and image recovery, and it achieves good results when applied to UBSS.

This paper is organized as follows: Section 2 introduces the main work of this paper. The steps of the WReSL0 algorithm and the selection of related parameters are described in Section 3. Experimental results are presented in Section 4 to evaluate the performance of our approach. Section 5 verifies the effect of the proposed weighted regularized smoothed $\ell_0$-norm minimization (WReSL0) algorithm in UBSS. Section 6 concludes this paper.

2. Main Work of This Paper

In this paper, based on the in Equation (9), we propose a new objective function, which is given by:

According to this equation, we not only propose a smoothed function approximating the $\ell_0$-norm, but also propose a weighted function to promote sparsity. This section focuses on these two functions.

2.1. New Smoothed Function: CIPF

According to [26], some properties of the smoothed functions are summarized in the following:

Property: Let and, define for any . The function f has the property, if:

- (a) f is real analytic on for some ;

- (b) , , where is some constant;

- (c) f is convex on ;

- (d) ;

- (e) .

It follows immediately from this property that the smoothed function converges to the $\ell_0$-norm as $\sigma \to 0$, i.e.,

Based on this property, this paper proposes a new smoothed function model called CIPF, which satisfies the property and better approximates the $\ell_0$-norm. The smoothed function model is given as:

In Equation (12), denotes a regularization factor, which is a large constant. Based on experiments, this factor is set to 10, which gave good simulation results. represents a smoothed factor; the smaller it is, the closer the proposed model is to the $\ell_0$-norm. Obviously, or approximately is satisfied. Let:

where for small values of , and the approximation tends to equality when .

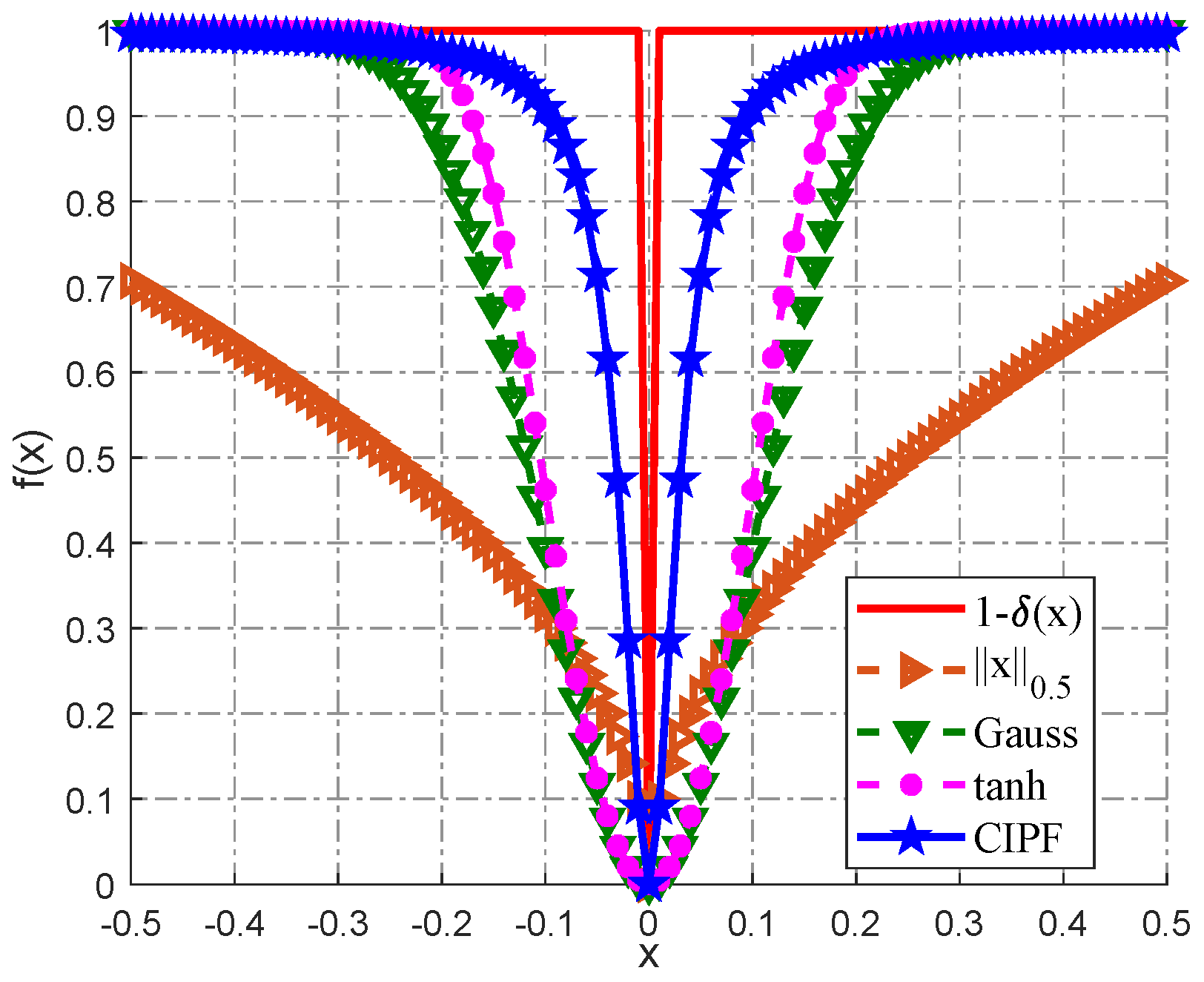

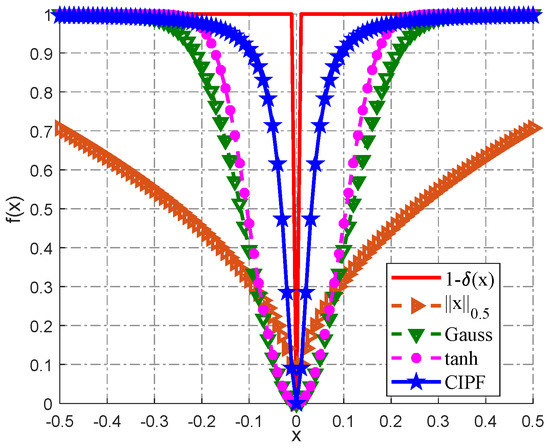

Figure 2 shows how well the CIPF model approximates the $\ell_0$-norm. Among the plotted functions, the CIPF model gives the closest approximation.

Figure 2.

Different functions used in the literature to approximate the $\ell_0$-norm; some of them are plotted in this figure, and the $\ell_0$-norm is displayed for comparison. CIPF, compound inverse proportional function.

In conclusion, the merits of the CIPF model can be summarized as follows:

- It closely approximates the $\ell_0$-norm;

- It is simpler in form than the Gauss and tanh function models.

These merits make it possible to reduce the computational complexity on the premise of ensuring the accuracy of sparse signal reconstruction, which is of practical significance for sparse signal reconstruction.

2.2. New Weighted Function

Candès et al. [27] proposed the weighted $\ell_1$-norm minimization method, which employs the weighted norm to enhance the sparsity of the solution. They provided an analytical result on the improvement in sparse recovery obtained by incorporating the weighted function into the objective function. Pant et al. [28] applied a weighted smoothed $\ell_0$-norm minimization method, which uses a similar weighted function to promote sparsity. The two weighted functions can be summarized as follows:

- Candès et al.: ;

- Pant et al.: , is a small enough positive constant.

From the two weighted functions, we can observe that a large signal entry $x_i$ is weighted with a small $w_i$, whereas a small signal entry $x_i$ is weighted with a large $w_i$. The large $w_i$ force the solution to concentrate on the indices where $w_i$ is small, and by construction, these correspond precisely to the indices where $x_i$ is nonzero.
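The inverse relation between entry magnitude and weight can be seen in a small numerical sketch. It assumes inverse-magnitude weights of the form $1/(|x_i| + \epsilon)$, with $\epsilon = 0$ standing in for the undamped variant and $\epsilon > 0$ for the damped one; the function name, the symbol `eps`, and the sample values are hypothetical and are not taken from [27] or [28].

```python
import numpy as np

def inverse_magnitude_weights(x, eps=0.0):
    # eps = 0 gives w_i = 1/|x_i|, which is unbounded at zero entries;
    # eps > 0 caps every weight at 1/eps.
    with np.errstate(divide="ignore"):
        return 1.0 / (np.abs(x) + eps)

x = np.array([2.0, 0.5, 1e-3, 0.0])            # entries sorted from large to small
w_plain = inverse_magnitude_weights(x)          # last weight is infinite
w_damped = inverse_magnitude_weights(x, eps=1e-2)
print(w_plain)
print(w_damped)
```

The undamped weights blow up at zero entries, while the damped weights stay bounded at the cost of a constant that has to be chosen by hand.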

Combined with the above idea, we propose a new weighted function, which is given by:

As for the weighted function of Candès et al., when a signal entry is zero or close to zero, the weight becomes very large, which is not suitable for numerical computation. Although Pant et al. noticed this problem and modified the weighted function to avoid it, the choice of the constant depends on experience. The proposed weighted function avoids both problems while preserving the behavior described above: a small signal entry is weighted with a large value and a large signal entry is weighted with a small value, which brings the weighted large and small entries closer together. In this way, the direction of optimization is kept as consistent as possible, and the optimization process tends to be more effective. Therefore, the proposed weighted function can have a better effect.

3. New Algorithm for CS: WReSL0

3.1. WReSL0 Algorithm and Its Steps

Here, in order to analyze the problem more clearly, we rewrite Equation (10) as follows:

where ( is a unit vector) is a differentiable smoothed accumulated function. The weighted function . Therefore, we can obtain the gradient of CIPF, which is written as:

Solving the ULSE amounts to solving the optimization problem in Equation (10). Many methods are available for this problem, such as split Bregman methods [29,30,31], FISTA [32], alternating direction methods [33], and gradient descent (GD) [34]. In order to reduce the computational complexity, this paper adopts the GD method to optimize the proposed objective function.

Given , a small target value , and a sufficiently large initial value , after referring to the annealing mechanism in simulated annealing [35], this paper proposes a monotonically-decreasing sequence , which is generated as:

where , is a constant that is larger than one, and T is the maximum number of iterations. Using such a monotonically decreasing sequence avoids the case where too small a $\sigma$ leads to a local optimum.
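A decreasing sequence of this kind can be sketched as follows. The code assumes a geometric schedule that moves from a large initial value to a small target value in T steps with a constant ratio larger than one, as is common in SL0-type methods; it is not a reproduction of Equation (17), and the names `sigma_schedule`, `sigma_max`, `sigma_min`, and `rho` as well as the numeric values are hypothetical.

```python
import numpy as np

def sigma_schedule(sigma_max, sigma_min, T):
    """Geometric sequence of T values decreasing from sigma_max to sigma_min."""
    rho = (sigma_max / sigma_min) ** (1.0 / (T - 1))  # constant ratio, larger than one
    return sigma_max / rho ** np.arange(T)

sigmas = sigma_schedule(sigma_max=4.0, sigma_min=0.01, T=30)
print(sigmas[:3], sigmas[-1])
```

Starting with a large value keeps the early objective smooth, and the solution found at each value serves as the starting point for the next, smaller one.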

Similar to SL0, WReSL0 also consists of two nested iterations: the external loop, which begins with a sufficiently large value of , i.e, , responsible for the gradually decreasing strategy in Equation (17), and the internal loop, which for each value of , finds the maximizer of on .

According to the GD algorithm, the internal loop consists of the gradient descent step, which is given by:

where and denotes a step size factor. This part is similar to SL0, followed by solving the problem:

where denotes the optimal solution. By regularization, this form can be converted to another form as follows,

where is the regularization parameter, which is adapted to balance the fit of the solution to the data y and the approximation of the solution to the maximizer of . Weighted least squares (WLS) can be used to solve this problem, and the solution is:

By calculation, Equation (21) is equivalent to:

where and are both identity matrices of size and , respectively. Therefore, we can obtain:

According to the above analysis and derivation, we can get:

The initial value of the internal loop is the maximizer of obtained for . To increase the speed, the internal loop is repeated a fixed and small number of times (L). In other words, we do not wait for the GD method to converge in the internal loop.
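The equivalence between the two closed forms of the regularized update can be verified numerically. The sketch assumes the regularized subproblem $\min_x \|x - \hat{x}\|_2^2 + \lambda^{-1}\|y - \Phi x\|_2^2$, where $\hat{x}$ is the point produced by the descent step; the exact placement of $\lambda$ in the paper's equations may differ, and all sizes and values below are hypothetical.

```python
import numpy as np

rng = np.random.default_rng(0)
m, n = 20, 50                       # hypothetical sizes with m < n
Phi = rng.standard_normal((m, n))
y = rng.standard_normal(m)
x_gd = rng.standard_normal(n)       # stand-in for the output of the descent step
lam = 0.1                           # hypothetical regularization parameter

# Form 1: n-by-n normal equations of the assumed subproblem.
x_a = np.linalg.solve(lam * np.eye(n) + Phi.T @ Phi, lam * x_gd + Phi.T @ y)

# Form 2: equivalent correction step that only needs an m-by-m solve.
x_b = x_gd - Phi.T @ np.linalg.solve(lam * np.eye(m) + Phi @ Phi.T, Phi @ x_gd - y)

print(np.allclose(x_a, x_b))
```

The second form is the cheaper one in the underdetermined setting because the linear system has size m rather than n, and as `lam` tends to zero it reduces to the usual projection onto the solution set of the noiseless constraint.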

According to the explanation above, the steps of the proposed WReSL0 algorithm are summarized in Table 1. As for , it can be shown that function remains convex in the region where the largest magnitude of the component of is less than . As the algorithm starts at the original value , the above choice of ensures that the optimization starts in a convex region. This greatly facilitates the convergence of the WReSL0 algorithm.

Table 1.

Weighted regularized smoothed $\ell_0$-norm minimization (WReSL0) algorithm using the GD method.

3.2. Selection of Parameters

The selection of parameters and will affect the performance of the WReSL0 algorithm; thus, this section discusses the selection of these two parameters.

3.2.1. Selection of Parameter

According to the algorithm, each iteration consists of a descent step , followed by a projection step. If for some values of i, we have , then the algorithm does not change the value of in that descent step; however, it might be changed in the projection step. If we are looking for a suitably large , a suitable choice is to make the algorithm force all those values of satisfying toward zero. Therefore, we can get:

and:

By calculation, we can obtain:

According to the above derivation, we have come to the conclusion that . Therefore, we can set .

3.2.2. Selection of Parameter

According to Equation (17), the descending sequence of is generated by (it is obtained through simplification of Equation (17)). Parameter and parameter should be appropriately selected. The selection of and is discussed below.

For the initial value of , i.e., , here, let ; suppose there is a constant b, in order to make the algorithm converge quickly; let parameter satisfy:

From the equation, we can see that constant b satisfies ; thus , and here, we define constant b as . Hence, .

For the final value , when , . That is, the smaller , the more can reflect the sparsity of signal , but at the same time, it is also more sensitive to noise; therefore, the value should not be too small. Combining [19], we choose .

4. Performance Simulation and Analysis

The numerical simulation platform is MATLAB 2017b, which is installed on a computer with a Windows 10, 64-bit operating system. The CPU of the simulation computer is the Intel (R) Core (TM) i5-3230M, and the frequency is 2.6 GHz. In this section, the performance of the WReSL0 algorithm is verified by signal and image recovery in the noise case.

Here, some state-of-the-art algorithms are selected for comparison. The parameters are selected to obtain the best performance for each algorithm: for the BPDN algorithm [36], the regularization parameter ; for the SL0 algorithm [19], the initial value of smoothed factor , the final value of smoothed factor , scale factor is set as step size , and the attenuation factor ; for the NSL0 algorithm [20], the initial value of smoothed factor , the final value of smoothed factor , the step size , and the attenuation factor ; for the $L_p$-RLS algorithm [24], the number of iterations , the norm initial value , the norm final value , the initial value of regularization factor , the final value of regularization factor , and the algorithm termination threshold ; for the WReSL0 algorithm, the initial value of smoothed factor , the final value of smoothed factor , the iterations , the step size , and the regularization parameter . All experiments are based on 100 trials.

4.1. Signal Recovery Performance in the Noise Case

In this part, we discuss signal recovery performance in the noise case. We add noise to the measurement vector ; moreover, , is randomly formed and follows the Gaussian distribution of . For signal recovery under noise conditions, we evaluate the performance of algorithms by the normalized mean squared error (NMSE) and the CPU running time (CRT). NMSE is defined as . CRT is measured with and . In order to analyze the de-noising performance of the WReSL0 algorithm in context closer to the real situation, we constructed a certain signal as an experimental object in the experiments in this section. The signal is given by:

where , , , and . Hz; Hz; Hz; and Hz. Here, is a sequence with , and is sampling interval with the value of . is the sampling frequency with the value of 800 Hz. The object that needs to be reconstructed can be expressed as:

where is a sparse signal in the frequency domain, and it is the Fourier transform expression of , . Here, let , . Moreover, can be represented as ; here, is a randn matrix generated by a Gaussian distribution, and is a sparse basis matrix generated by Fourier transform. Here, can be given by Fourier , and is a unit matrix. This target signal is sparse in Fourier space; hence, the signal can be recovered from given by CS recovery methods.
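The NMSE metric used in this section can be computed with a few lines of code. The sketch assumes the squared-norm form $\|x - \hat{x}\|_2^2 / \|x\|_2^2$, which is one common convention; the definition used in this paper may omit the squares. The function names are hypothetical, and `np.abs` is used so that complex Fourier coefficients are handled as well.

```python
import numpy as np

def nmse(x_true, x_hat):
    """Assumed form: ||x_true - x_hat||_2^2 / ||x_true||_2^2."""
    return float(np.sum(np.abs(x_true - x_hat) ** 2) / np.sum(np.abs(x_true) ** 2))

def average_nmse(pairs):
    """Mean NMSE over several (x_true, x_hat) trials."""
    return float(np.mean([nmse(x, xh) for x, xh in pairs]))

x = np.array([1.0, 2.0, 3.0])
print(nmse(x, x), nmse(x, np.zeros(3)))
```

A perfect reconstruction gives zero, and returning the all-zero vector gives one, which makes values across different signal lengths comparable.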

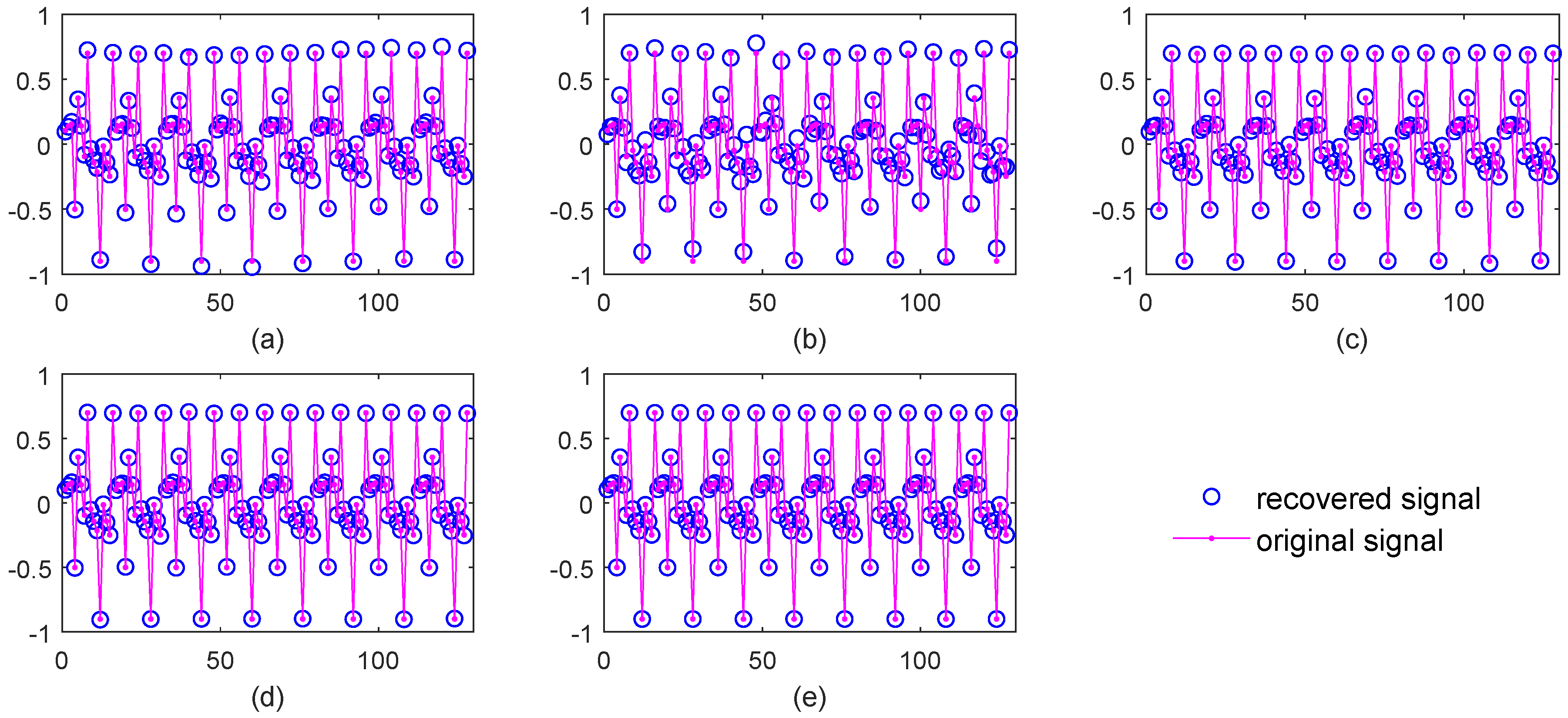

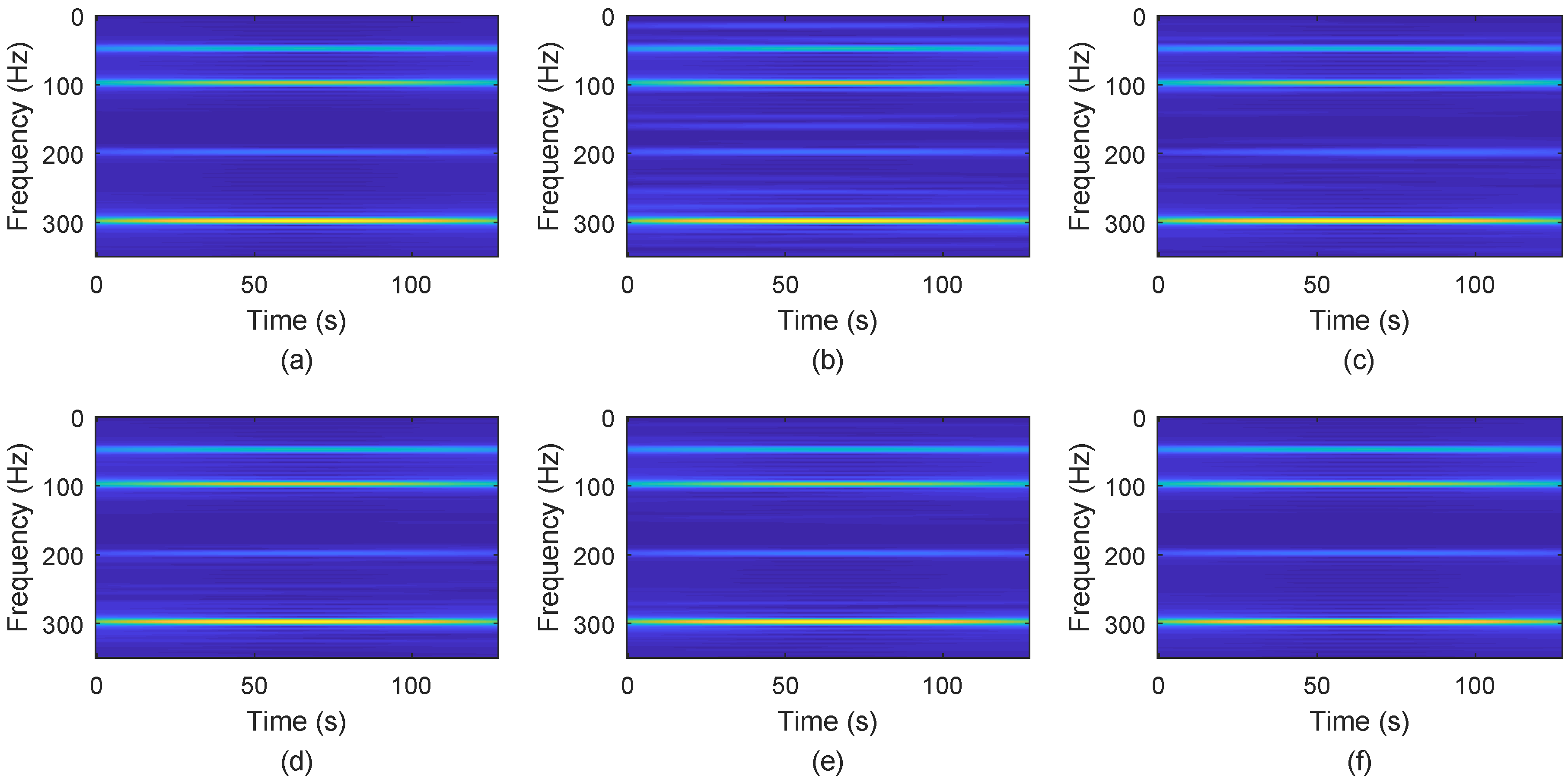

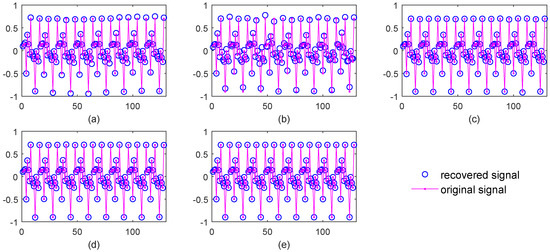

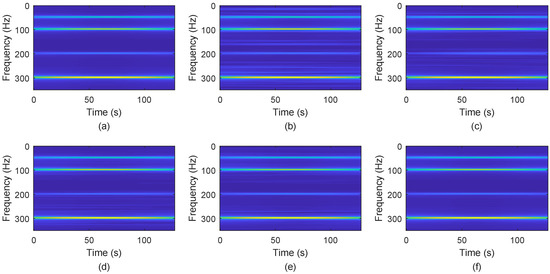

Figure 3 shows the signal recovery effect. Obviously, BPDN and SL0 do not perform well, while NSL0, L-RLS and the proposed WReSL0 perform quite well. This verifies that the regularization mechanism has a good de-noising effect. Figure 4 shows the frequency spectrum of the recovered signal by the selected algorithms. The spectrum of the signal recovered by our proposed WReSL0 algorithm is almost the same as the original signal, while other algorithms fail to achieve this effect.

Figure 3.

Signal recovery effect by BPDN, SL0, NSL0, L-RLS, and weighted regularized smoothed $\ell_0$-norm minimization (WReSL0) when noise intensity = 0.2. (a) signal recovery by the BPDN algorithm; (b) signal recovery by the SL0 algorithm; (c) signal recovery by the NSL0 algorithm; (d) signal recovery by the L-RLS algorithm; (e) signal recovery by the WReSL0 algorithm.

Figure 4.

Frequency spectrum analysis of the original signal and the signal recovered by BPDN, SL0, NSL0, L-RLS, and WReSL0 when noise intensity = 0.2. (a) original signal; (b) signal recovery by the BPDN algorithm; (c) signal recovery by the SL0 algorithm; (d) signal recovery by the NSL0 algorithm; (e) signal recovery by the L-RLS algorithm; (f) signal recovery by the WReSL0 algorithm.

Table 2 shows the CRT of all algorithms. The signal length n varies over the sequence [170, 220, 270, 320, 370, 420, 470, 520]. From the table, for any n, SL0 has the shortest computation time, followed by WReSL0, NSL0, and L-RLS, and BPDN has the longest computation time. The BPDN algorithm is generally implemented by quadratic programming, whose computational complexity is very high, resulting in a large increase in the overall computation time of the algorithm. Furthermore, in L-RLS, the iterative process adopts the conjugate gradient method with high complexity, while NSL0 and WReSL0 do not. Compared with NSL0, WReSL0 achieves a clearly shorter computation time.

Table 2.

Signal CPU running time (CRT) analysis for BPDN, SL0, NSL0, L-RLS, and the proposed WReSL0 with signal length changes according to the sequence [170, 220, 270, 320, 370, 420, 470, 520] when .

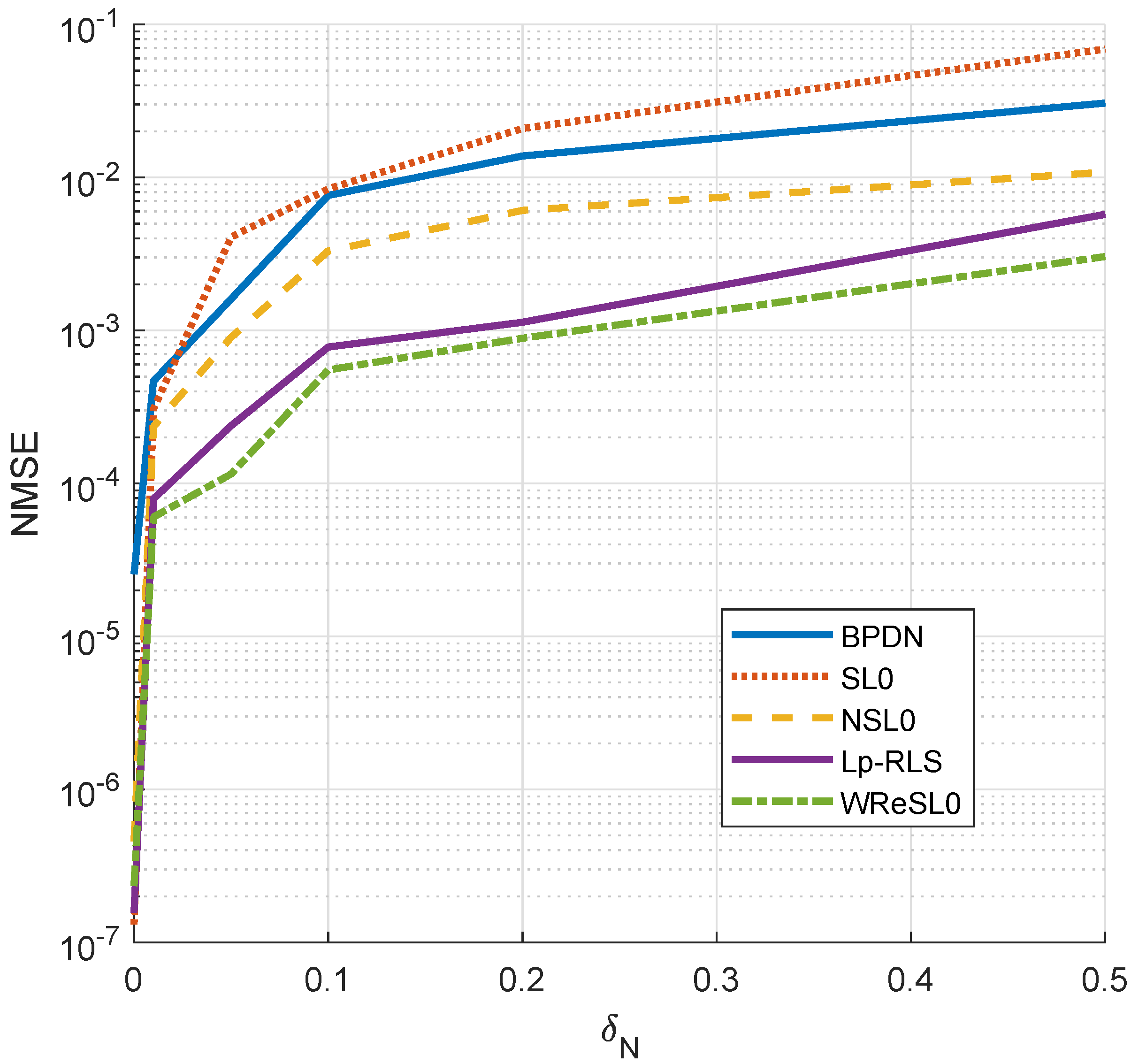

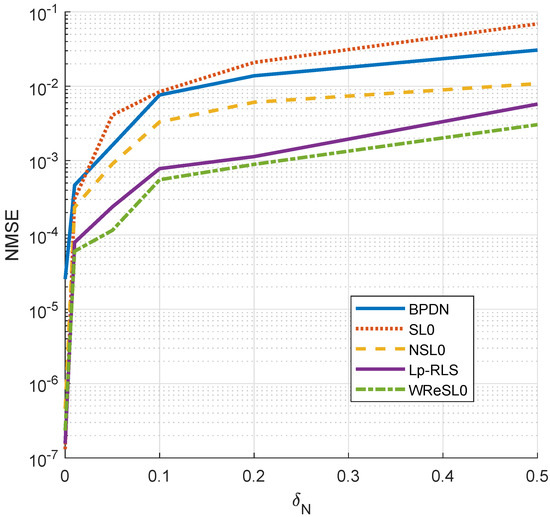

The performance of each algorithm under different noise intensities is shown in Figure 5. When , SL0 outperforms other algorithms, but with the increase of , the effect of SL0 becomes worse and worse. This result further illustrates that the traditional constrained sparse recovery algorithm is not robust to noise. BPDN, NSL0, L-RLS, and WReSL0 all apply the regularization mechanism, and they are indeed superior to SL0 in the noisy case. Among them, the proposed WReSL0 has the best de-noising performance.

Figure 5.

NMSE analysis by BPDN, SL0, NSL0, L-RLS, and WReSL0 when noise intensity changes according to the sequence [0, 0.1, 0.2, 0.3, 0.4, 0.5].

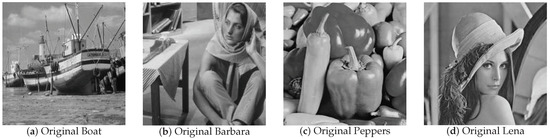

4.2. Image Recovery Performance in the Noise Case

Real images are considered to be approximately sparse under some proper basis, such as the DCT basis, DWT basis, etc. Here, we choose the DWT basis to recover these images. We compare the recovery performances based on the four real images in Figure 6: boat, Barbara, peppers, and Lena. The size of these images is ; the compression ratio (CR; defined as ) is 0.5; and the noise equals 0.01. We still choose SL0, BPDN, NSL0, and L-RLS to make comparisons. For image recovery, the object of image processing is given by:

Figure 6.

Original images: (a) boat; (b) Barbara; (c) peppers; (d) Lena.

Here, , , are matrices, and among these, , . In order to meet the basic requirements of CS, we perform the following processing:

where , , are the column vectors of , , , respectively. , obeys the Gaussian distribution .

To assess image recovery, we evaluate it by the peak signal-to-noise ratio (PSNR) and the structural similarity index (SSIM). PSNR is defined as:

where , and SSIM is defined as:

Among these, is the mean of image p, is the mean of image q, is the variance of image p, is the variance of image q, and is the covariance between image p and image q. Parameters and , for which , and L is the dynamic range of pixel values. The range of SSIM is , and when these two images are the same, SSIM equals one.
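The two image metrics can be sketched as below. The PSNR assumes 8-bit images with a peak value of 255, and the SSIM is computed once over the whole image from the means, variances, and covariance described above, with the usual default constants $k_1 = 0.01$ and $k_2 = 0.03$; these constants and the single-window simplification are assumptions, since practical SSIM implementations usually average the index over local windows.

```python
import numpy as np

def psnr(p, q, peak=255.0):
    """Peak signal-to-noise ratio in dB between images p and q."""
    mse = np.mean((p.astype(float) - q.astype(float)) ** 2)
    return float("inf") if mse == 0 else float(10.0 * np.log10(peak**2 / mse))

def ssim_global(p, q, L=255.0, k1=0.01, k2=0.03):
    """Single-window SSIM computed from global image statistics."""
    p = p.astype(float)
    q = q.astype(float)
    c1, c2 = (k1 * L) ** 2, (k2 * L) ** 2
    mu_p, mu_q = p.mean(), q.mean()
    var_p, var_q = p.var(), q.var()
    cov = np.mean((p - mu_p) * (q - mu_q))
    numerator = (2 * mu_p * mu_q + c1) * (2 * cov + c2)
    denominator = (mu_p**2 + mu_q**2 + c1) * (var_p + var_q + c2)
    return float(numerator / denominator)

a = np.arange(64, dtype=float).reshape(8, 8)   # hypothetical 8x8 test image
print(psnr(a, a + 10.0), ssim_global(a, a))
```

A uniform offset of 10 gray levels gives a mean squared error of 100, so the PSNR depends only on that offset, while the SSIM of an image with itself equals one.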

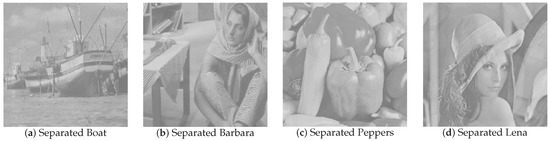

Figure 7 shows the recovery effect for the four test images with noise intensity 0.01. For boat and Barbara, the images recovered by SL0 and BPDN have obvious water ripples, while the images recovered by the other algorithms do not. Similarly, for peppers and Lena, the images recovered by SL0 and BPDN are blurred compared with those recovered by the other algorithms. The NSL0, L-RLS, and WReSL0 algorithms are all effective at noisy image recovery, and their visual recovery effects are very similar. In order to further compare the algorithms, we analyze the PSNR and SSIM of the recovered images, and the results are shown in Table 3 and Table 4. According to these results, L-RLS performs better than NSL0, and WReSL0 outperforms L-RLS. Hence, the WReSL0 proposed in this paper is superior to the other selected algorithms in image processing.

Figure 7.

Image recovery effect by the BPDN, SL0, NSL0, L-RLS, and WReSL0 algorithms with noise intensity = 0.01. In (a–d), from left to right, are: image recovered by the BPDN, SL0, NSL0, L-RLS, and WReSL0 algorithms.

Table 3.

PSNR and SSIM analysis of recovered images (boat and Barbara) by SL0, BPDN, NSL0, L-RLS, and WReSL0.

Table 4.

PSNR and SSIM analysis of recovered images (peppers and Lena) by SL0, BPDN, NSL0, L-RLS, and WReSL0.

5. Application in Underdetermined Blind Source Separation

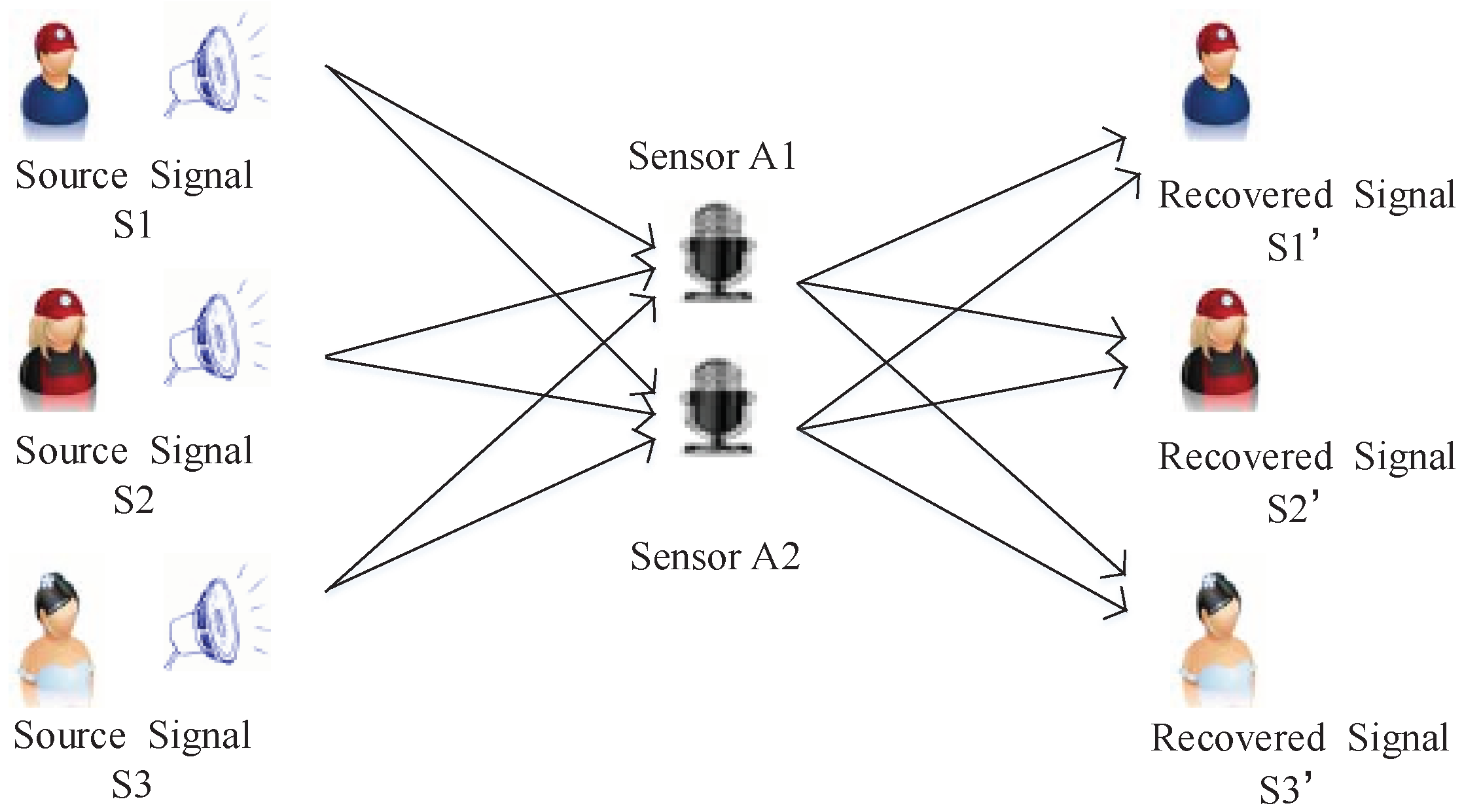

The problem of UBSS stems from the cocktail party problem, which is shown in Figure 8. Suppose the source signal matrix , the mixed matrix (Sensors) is () matrix, the Gaussian noise is generated by Gaussian distribution, and the observed mixed signal matrix ; therefore, the general mathematical model of UBSS can be summarized as:

Figure 8.

Schematic diagram of cocktail party signal mixing.

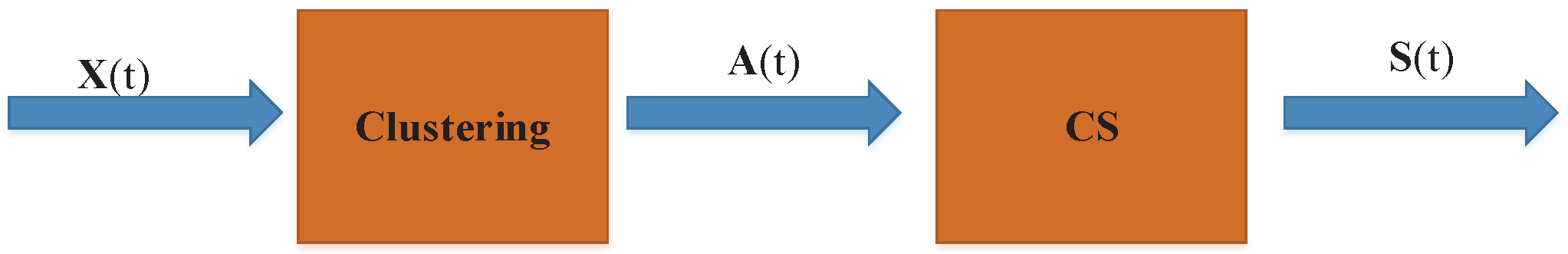

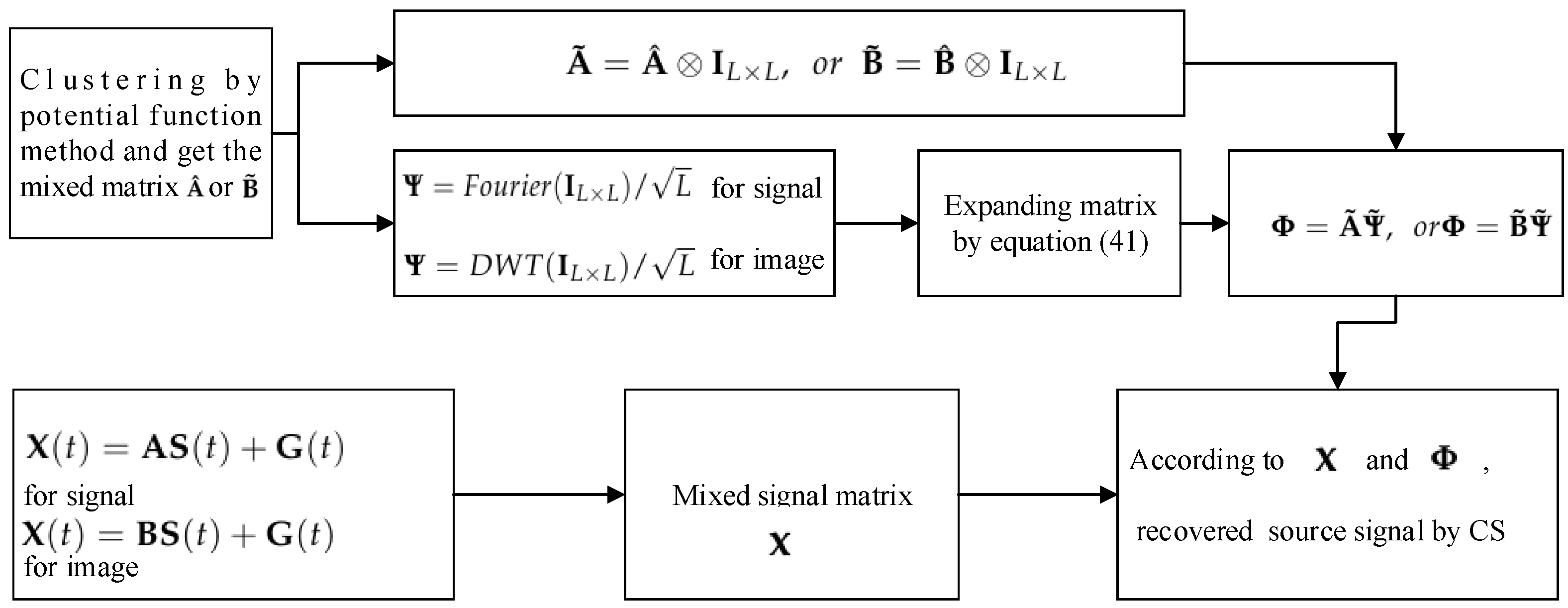

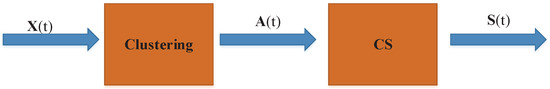

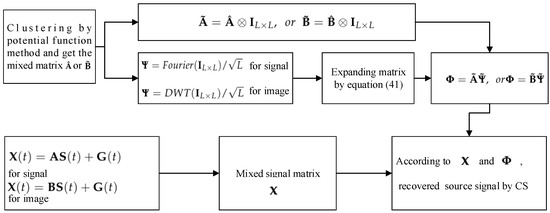

In fact, each signal has L data collected; therefore, , , , and , and can be represented as ( obeys ). The purpose of UBSS is to use the mixed signal matrix to obtain an estimate of the source signal matrix . In fact, this amounts to solving an underdetermined linear system of equations (ULSE). This problem can be solved by the two-step method shown in Figure 9.

Figure 9.

Schematic diagram of two-step method for UBSS.

As Figure 9 shows, we first estimate the mixed matrix by a clustering method and then use CS to separate the signals, so as to restore the original signals.

5.1. Process Analysis of CS Applied to UBSS

5.1.1. Solving the Mixed Matrix by the Potential Function Method

In this section, we choose the potential function method to solve the mixed matrix . To verify the performance of the proposed WReSL0 algorithm better, we choose four simulated signals and four real images to organize experiments in this section.

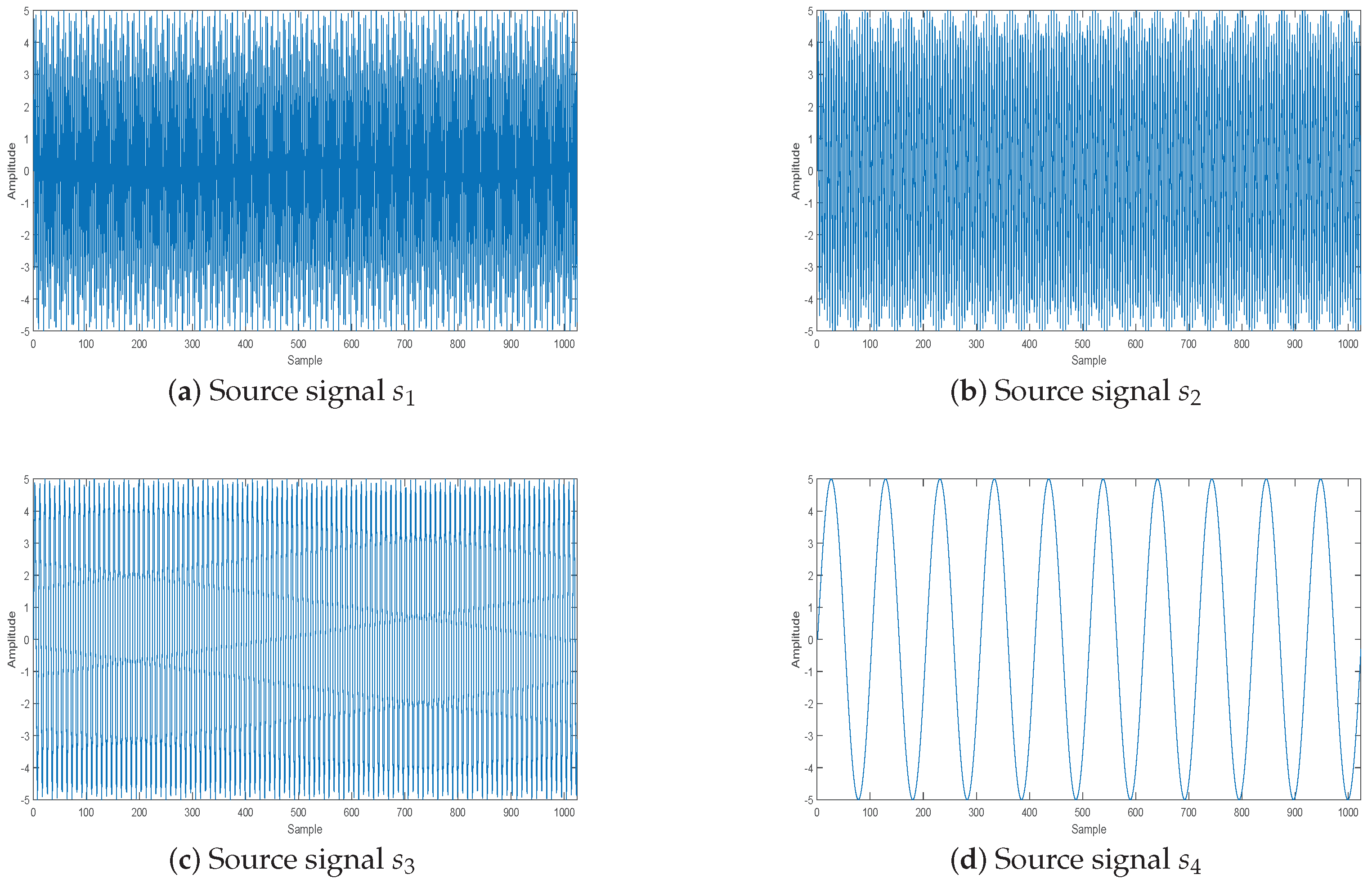

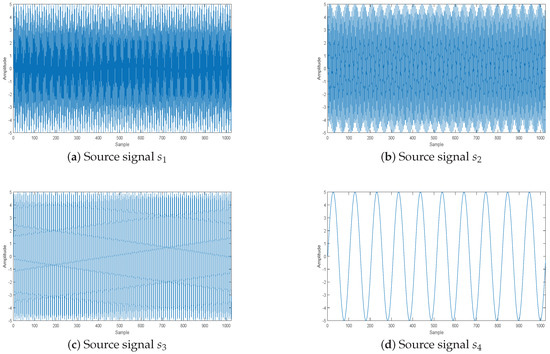

Suppose there are four source signals, which are:

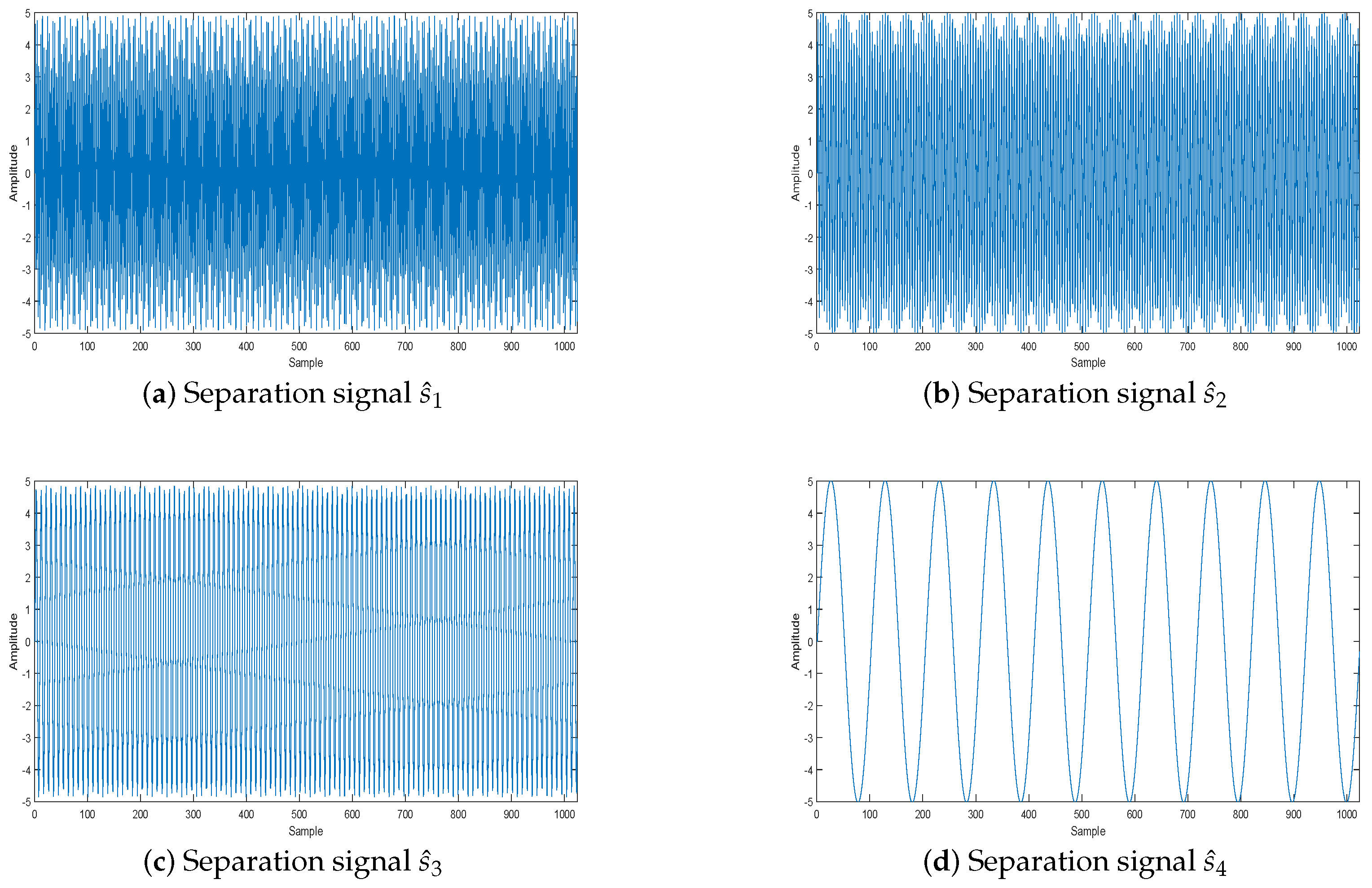

where Hz, Hz, Hz, and Hz. The length of each source signal is 1024, and the sample frequency is 1024 Hz. These four signals are shown in Figure 10.

Figure 10.

Source signal.

The four source images are the classic standard test images: boat, Barbara, peppers, and Lena, which are in Figure 6.

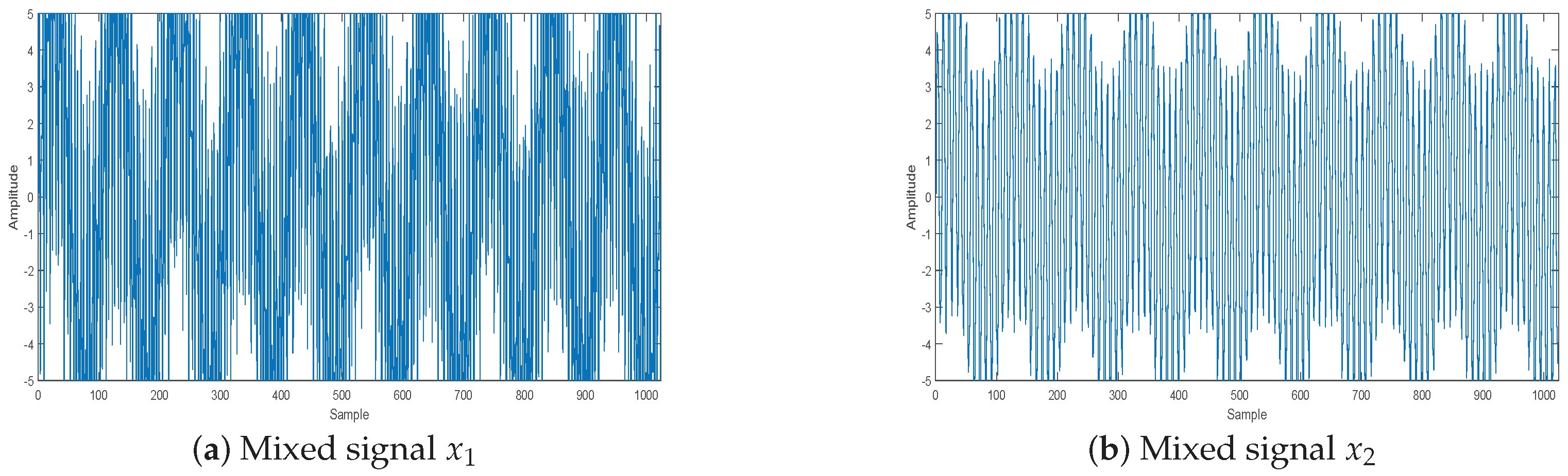

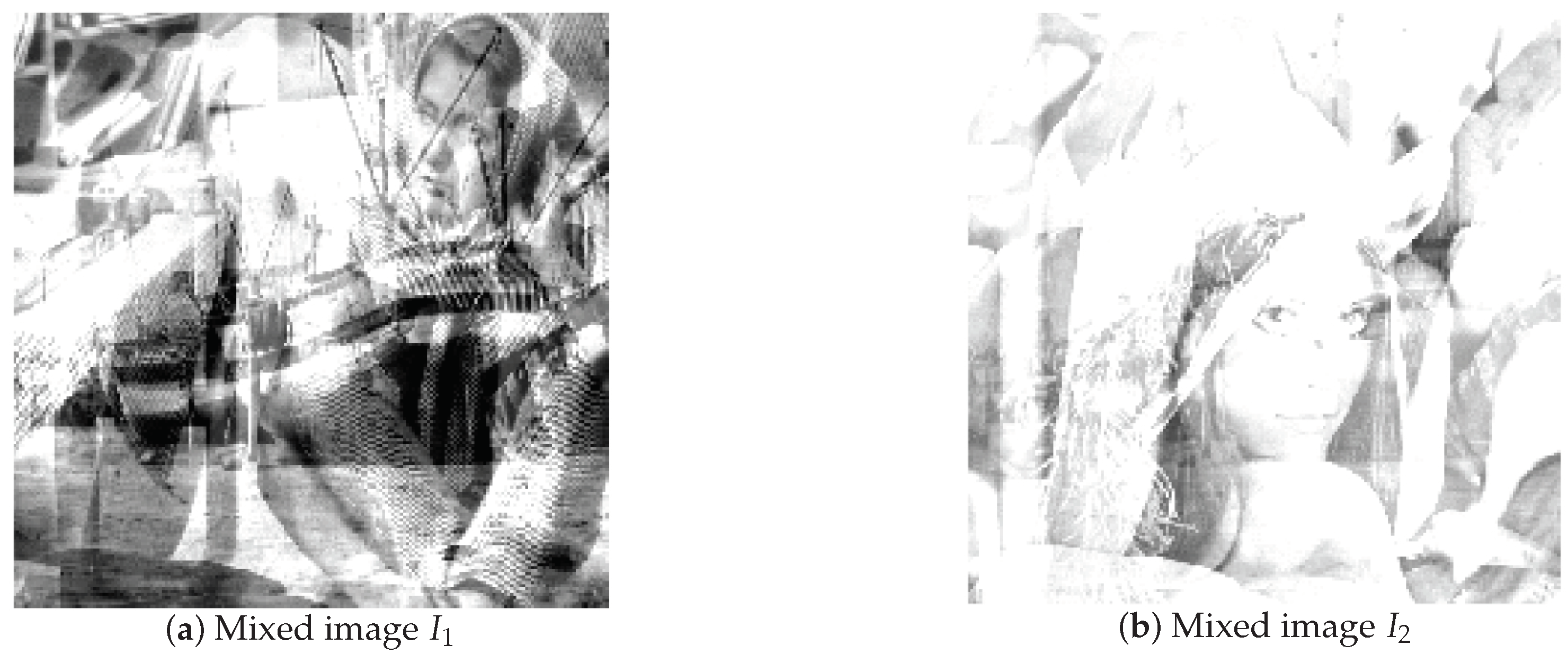

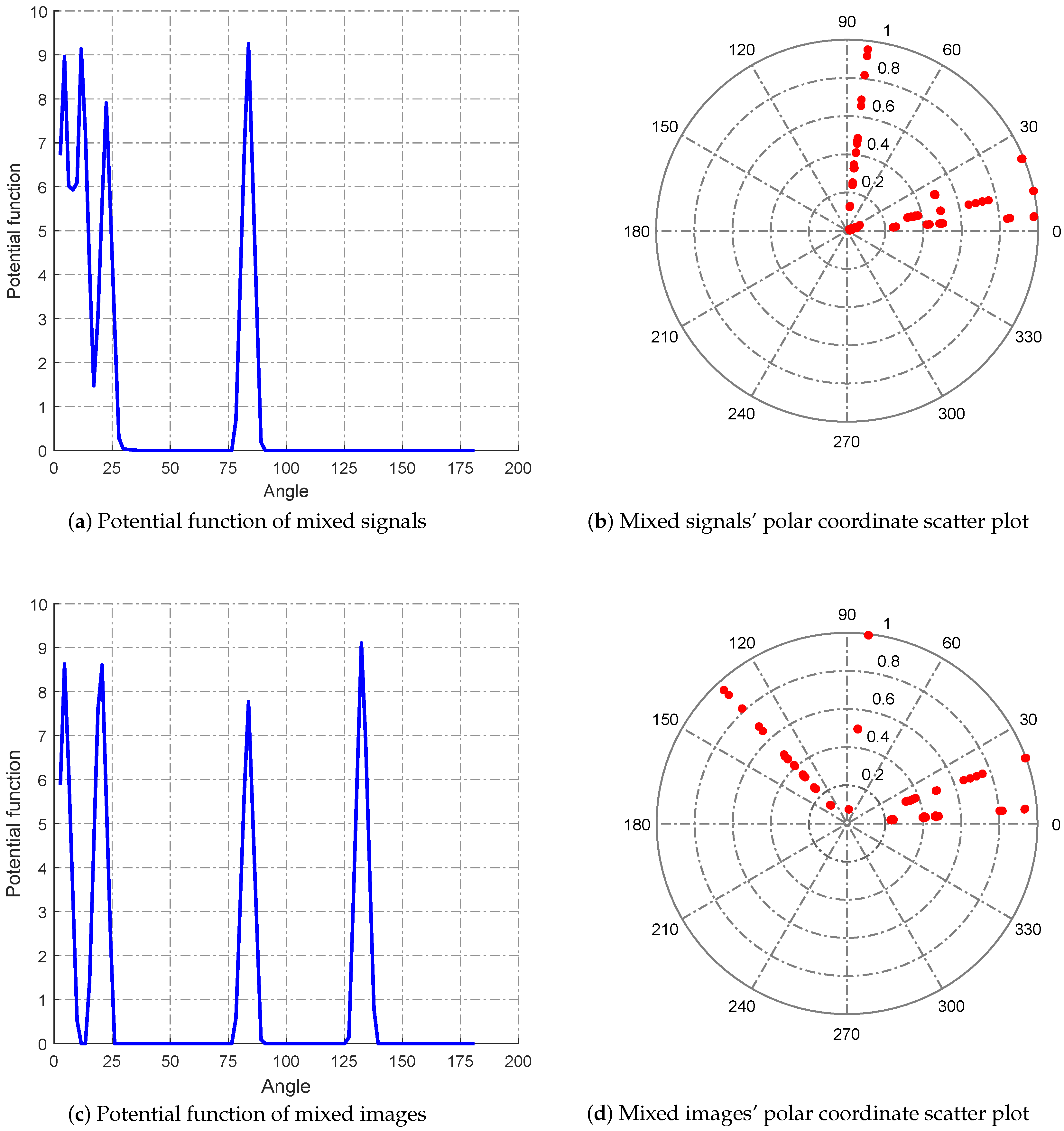

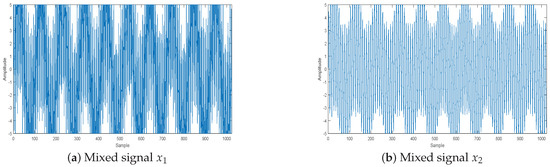

Suppose there are two sensors that receive signals and another two sensors that receive images. Mixed matrices and are set as:

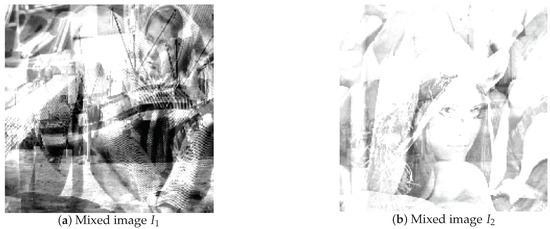

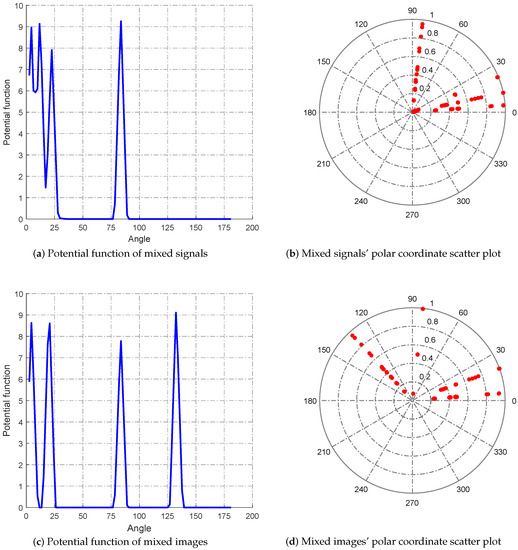

By this mixed matrix and added Gaussian noise (), we can get the two mixed signals, which are shown in Figure 11, and the two mixed images, which are shown in Figure 12. Then, we can get the estimated mixed matrix and by clustering by the potential function method [37]. As shown in Figure 13, the potential function method can cluster well. By clustering, we get the estimated values of and , as follows:

Figure 11.

Mixed signal by sensors.

Figure 12.

Mixed image by sensors.

Figure 13.

Clustering analysis.

By calculation, the error of solving the mixed matrix is and . This error is much smaller than that of the classical k-means and fuzzy c-means methods, thus laying a foundation for the reconstruction by compressed sensing.

5.1.2. Using CS to Separate Source Signals

The next problem is to get from known and . Here, we solve this problem by CS. The solution process is similar to the image reconstruction process. The difference is that the sparse basis used here is the Fourier basis. Then, we apply the proposed WReSL0 algorithm to this process. First, we transform the obtained into column vectors:

Then, we use the Fourier (for the sparse signal) or DWT (for the image) basis for sparse representation and extend the matrix and the estimated mixed matrix to obtain the sensing matrix.

For this equation, ⊗ denotes the Kronecker product sign, represents the Fourier transform, and DWT represents the discrete wavelet transform. Therefore, the CS-UBSS model can be described as:

where is the Fourier transform or DWT of , so is a sparse signal. As for UBSS in the images, firstly, each image matrix needs to be transformed into a row vector, then the four row vectors form a matrix . At the same time, the sparse basis in Equation (40) needs to be replaced by DWT.
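The role of the Kronecker product in turning the matrix mixing model into a single vector equation can be checked numerically. The sketch uses the standard identity $\mathrm{vec}(AS) = (I_L \otimes A)\,\mathrm{vec}(S)$ for column-wise stacking; whether the paper stacks columns or rows is not recoverable here, so the stacking order is an assumption. The sizes (two sensors, four sources) follow the experiment above, while the signal length of six and the random values are hypothetical.

```python
import numpy as np

rng = np.random.default_rng(1)
m, n, L = 2, 4, 6                    # sensors, sources, samples per signal
A = rng.standard_normal((m, n))      # mixing matrix
S = rng.standard_normal((n, L))      # source signals, one per row
X = A @ S                            # noiseless mixtures

def vec(M):
    # Stack the columns of M into one long vector (column-major order).
    return M.reshape(-1, order="F")

big_A = np.kron(np.eye(L), A)        # block-diagonal extension of A, shape (m*L, n*L)
print(big_A.shape, np.allclose(big_A @ vec(S), vec(X)))
```

After this step the separation problem has the same form as the single-vector CS model, so a sparse basis can be applied to the stacked source vector and any CS recovery algorithm can be used.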

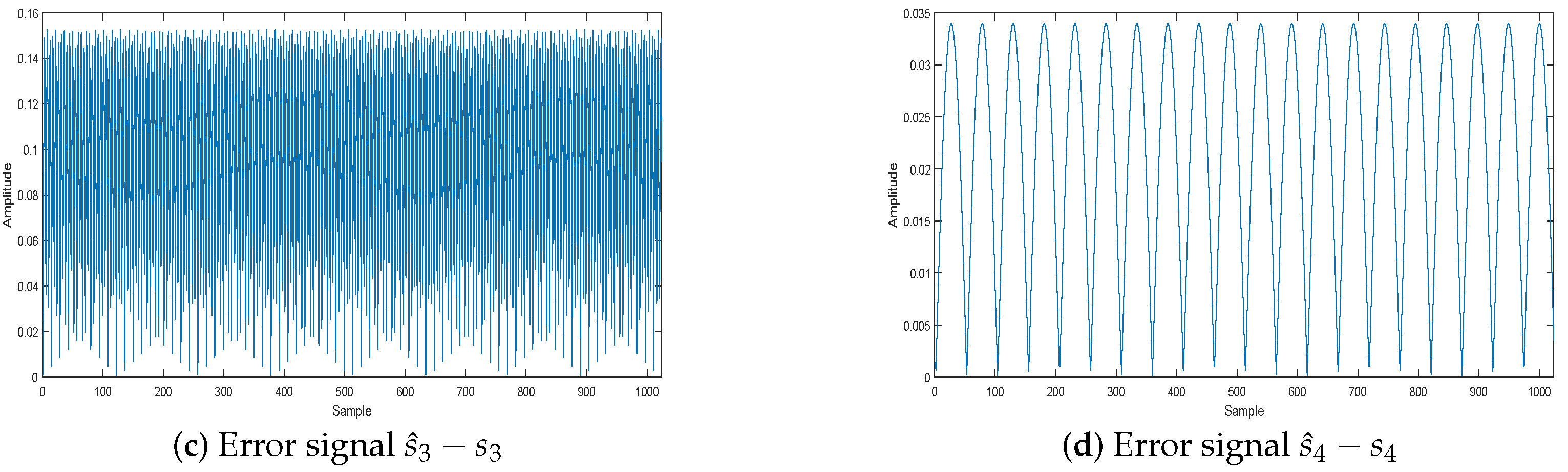

Then, we can recover the source signal by CS. In summary, the above can be described as the flowchart in Figure 14.

Figure 14.

Flowchart of UBSS by CS.

5.2. Performance Analysis of the WReSL0 Algorithm Applied to UBSS

5.2.1. The Effect of the WReSL0 Algorithm Applied to UBSS

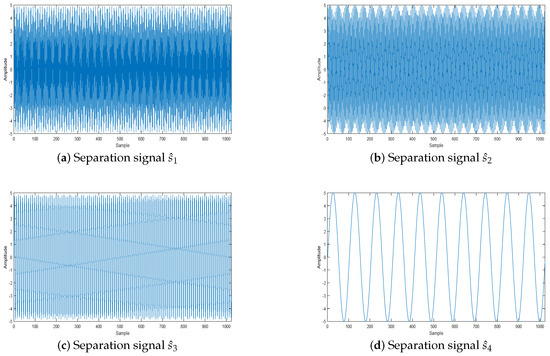

In this section, we evaluate the effect of the WReSL0 algorithm applied to UBSS by the separation of signals and spectrum analysis.

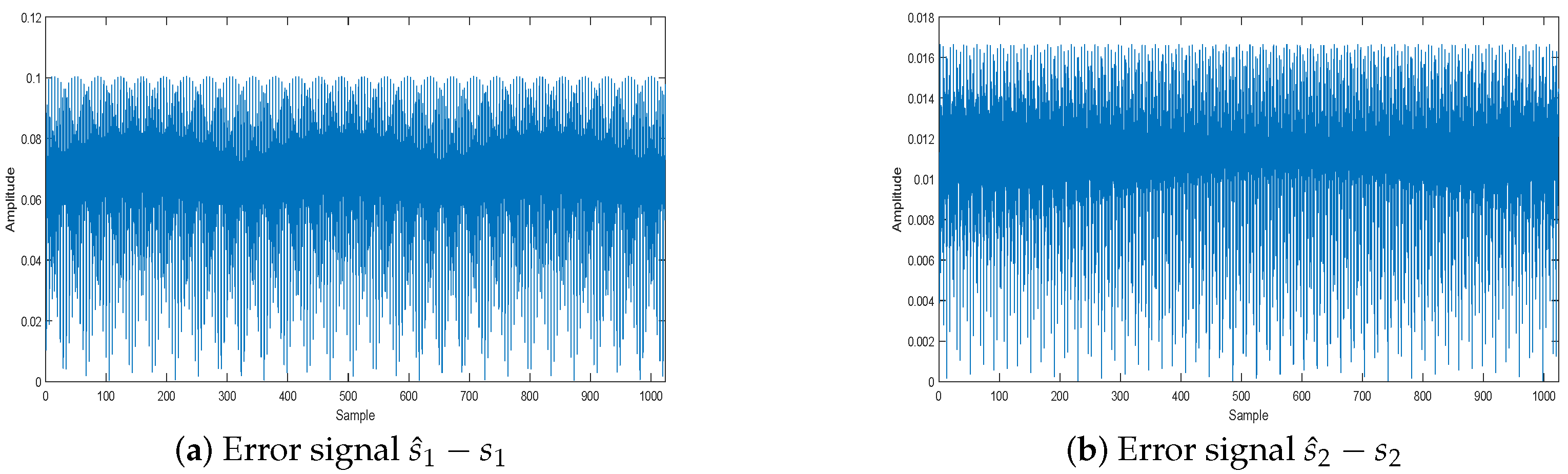

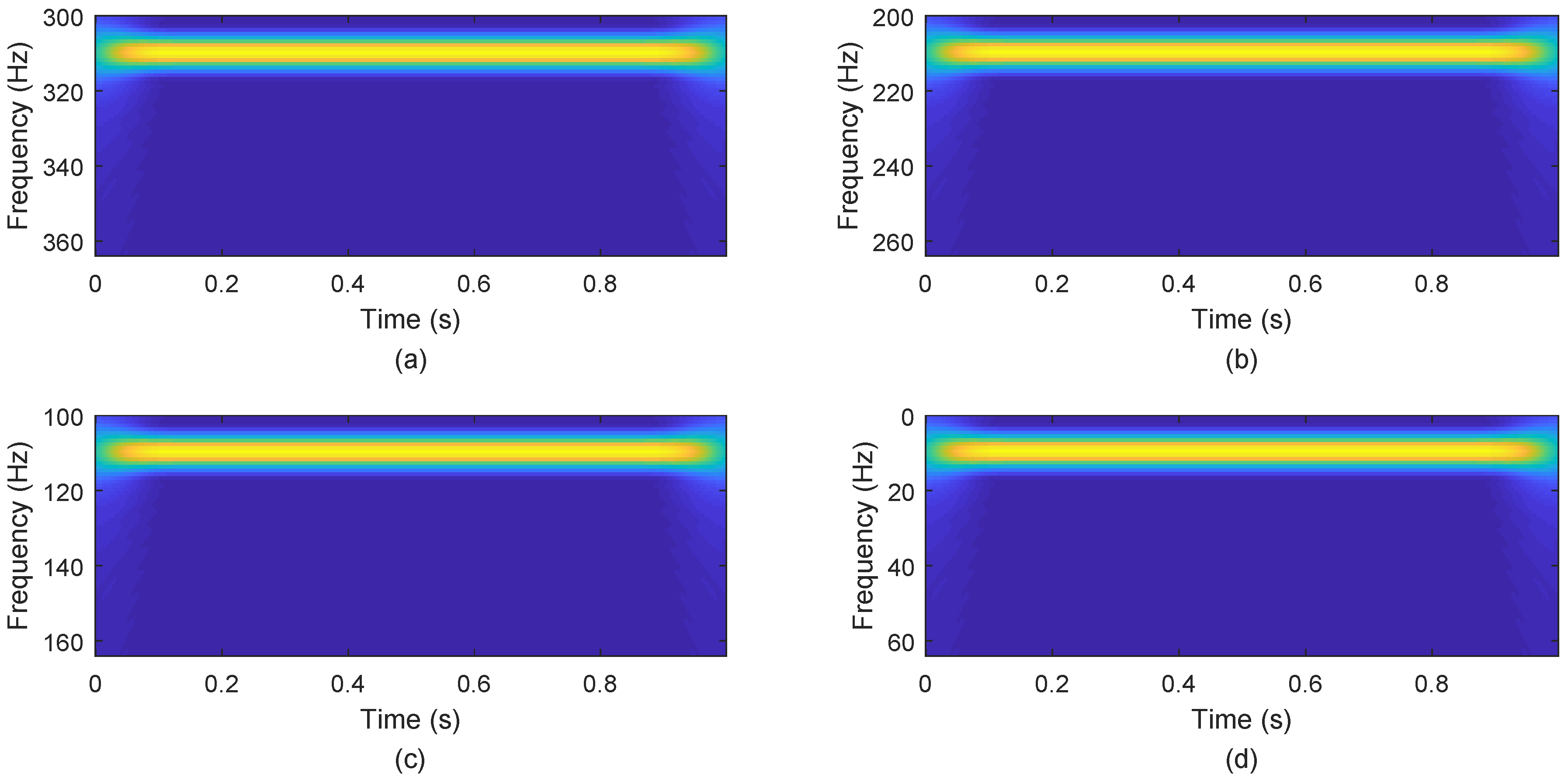

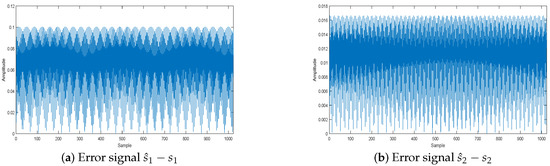

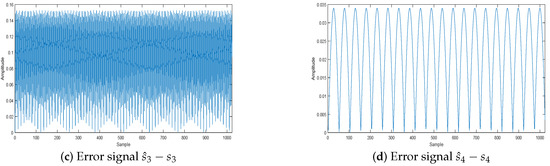

The effect of the separation of signals is shown in Figure 15: the source signals are well separated, and the separated signals and the original signals are very similar. Figure 16 displays the error between the original source signals and the recovered source signals. It indicates that this error is fairly small and that the WReSL0 algorithm can deal well with the problem of UBSS. In addition, we obtain the time-frequency diagram of the restored signals by the short-time Fourier transform, which is shown in Figure 17. From this figure, we find that each signal has the same frequency as the original signal, which also validates the rationality of the proposed algorithm for UBSS.

Figure 15.

Separation signal.

Figure 16.

Separation signal error analysis.

Figure 17.

Separation signals’ frequency spectrum. Subfigures (a–d) show the frequency spectrums of separation signals , , , and .

5.2.2. Performance Comparisons of the Selected Algorithms

Here, we use the SL0, NSL0, and L-RLS algorithms and the classical shortest path method (SPM) [38] to make a comparison in different noise cases. In order to analyze the signal recovery clearly, we use the average SNR (ASNR) (for the signal) and the average peak SNR (APSNR) (for the image) as evaluation metrics. Let the original source signal be and the recovered source signal be , so ASNR is defined as:

and PSNR is defined as:

where M and N are the width and height of the image.

The ASNR comparisons are shown in Table 5. From the table, we can see that ASNR attenuates sharply when increases from –. The reason is that the error of the estimated mixed matrix increases markedly, which leads the CS recovery algorithms to perform poorly. According to this table, our proposed WReSL0 algorithm performs well when is less than , and when is greater than , the L-RLS algorithm performs best, followed by our proposed WReSL0 algorithm.

Table 5.

Average SNR (ASNR) analysis for separated signals by SPM, SL0, NSL0, L-RLS, and the proposed WReSL0 with changing according to sequence [0,0.1,0.15,0.18,0.2] with 100 runs.

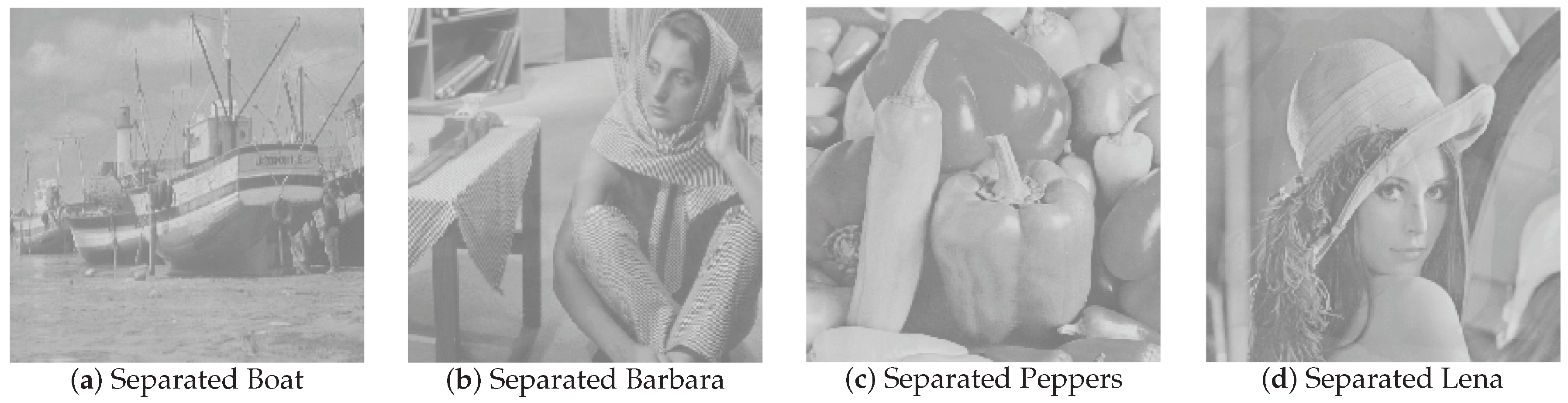

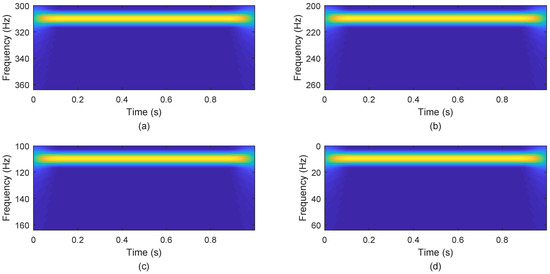

The APSNR comparisons are shown in Table 6. In this table, it is clear that APSNR is not high, and it drops greatly when increases from –. From Figure 18, we can see that these separated images seem to be enveloped in mist, which leads to a low APSNR. We plan to address this problem in future work.

Table 6.

APSNR analysis for separated images by SPM, SL0, NSL0, L-RLS, and the proposed WReSL0 with changing according to the sequence [0,0.1,0.15,0.18,0.2] with 100 runs.

Figure 18.

Separated images: (a) boat; (b) Barbara; (c) peppers; (d) Lena.

In summary, the CS technique can be used in UBSS and performs well, especially for signal recovery. Our proposed WReSL0 algorithm performs well in UBSS for signal recovery when the noise is small; regarding image recovery, we will develop this in the future.

6. Conclusions

In this paper, we propose the WReSL0 algorithm to recover the sparse signal from given in the noise case. The WReSL0 algorithm is constructed under the GD method, in which the update of x in the inner loop adopts a regularization mechanism to enhance the de-noising performance. As a key part of the WReSL0 algorithm, a weighted smoothed function is proposed to promote sparsity and to support robust and accurate signal recovery. Furthermore, we deduced the value of and the initial value to ensure the optimization performance of the algorithm. Performance simulation experiments on both real signals and real images show that the proposed WReSL0 algorithm performs better than the or regularization methods and the classical regularization methods. Finally, we apply the proposed WReSL0 algorithm to the UBSS problem and compare it with the classical SPM, SL0, NSL0, and Lp-RLS algorithms. The experiments show that the algorithm is competitive for signal recovery, particularly when the noise is small. In addition, we would like to apply the proposed algorithm to other CS applications such as RPCA [39], SAR imaging [40], and other de-noising methods [41].

Author Contributions

All authors have made great contributions to the work. L.W., X.Y., H.Y., and J.X. conceived of and designed the experiments; X.Y. and H.Y. performed the experiments and analyzed the data; X.Y. gave insightful suggestions for the work; X.Y. and H.Y. wrote the paper.

Funding

This research received no external funding.

Acknowledgments

This paper is supported by the National Key Laboratory of Communication Anti-jamming Technology.

Conflicts of Interest

The authors declare no conflict of interest.

References

- Zhang, C.Z.; Wang, Y.; Jing, F.L. Underdetermined Blind Source Separation of Synchronous Orthogonal Frequency Hopping Signals Based on Single Source Points Detection. Sensors 2017, 17, 2074.

- Zhen, L.; Peng, D.; Zhang, Y.; Xiang, Y.; Chen, P. Underdetermined blind source separation using sparse coding. IEEE Trans. Neural Netw. Learn. Syst. 2017, 99, 1–7.

- Donoho, D.L. Compressed sensing. IEEE Trans. Inf. Theory 2006, 52, 1289–1306.

- Candès, E.J.; Wakin, M.B. An introduction to compressive sampling. IEEE Signal Process. Mag. 2008, 2, 21–30.

- Badeńska, A.; Błaszczyk, Ł. Compressed sensing for real measurements of quaternion signals. J. Frankl. Inst. 2017, 354, 5753–5769.

- Candès, E.J. The restricted isometry property and its implications for compressed sensing. C. R. Math. 2008, 910, 589–592.

- Cahill, J.; Chen, X.; Wang, R. The gap between the null space property and the restricted isometry property. Linear Algebra Its Appl. 2016, 501, 363–375.

- Huang, S.; Tran, T.D. Sparse Signal Recovery via Generalized Entropy Functions Minimization. arXiv, 2017; arXiv:1703.10556.

- Tropp, J.A.; Gilbert, A.C. Signal recovery from random measurements via orthogonal matching pursuit. IEEE Trans. Inf. Theory 2007, 12, 4655–4666.

- Determe, J.F.; Louveaux, J.; Jacques, L.; Horlin, F. On the noise robustness of simultaneous orthogonal matching pursuit. IEEE Trans. Signal Process. 2016, 65, 864–875.

- Donoho, D.L.; Tsaig, Y.; Starck, J.L. Sparse solution of underdetermined linear equations by stagewise orthogonal matching pursuit. IEEE Trans. Inf. Theory 2012, 2, 1094–1121.

- Needell, D.; Vershynin, R. Signal recovery from incomplete and inaccurate measurements via regularized orthogonal matching pursuit. IEEE J. Sel. Top. Signal Process. 2010, 2, 310–316.

- Needell, D.; Tropp, J.A. CoSaMP: Iterative signal recovery from incomplete and inaccurate samples. Commun. ACM 2010, 12, 93–100.

- Jian, W.; Seokbeop, K.; Byonghyo, S. Generalized orthogonal matching pursuit. IEEE Trans. Signal Process. 2012, 12, 6202–6216.

- Wang, J.; Kwon, S.; Li, P.; Shim, B. Recovery of sparse signals via generalized orthogonal matching pursuit: A new analysis. IEEE Trans. Signal Process. 2016, 64, 1076–1089.

- Dai, W.; Milenkovic, O. Subspace pursuit for compressive sensing signal reconstruction. IEEE Trans. Inf. Theory 2009, 5, 2230–2249.

- Goyal, P.; Singh, B. Subspace pursuit for sparse signal reconstruction in wireless sensor networks. Procedia Comput. Sci. 2018, 125, 228–233.

- Liu, X.J.; Xia, S.T.; Fu, F.W. Reconstruction guarantee analysis of basis pursuit for binary measurement matrices in compressed sensing. IEEE Trans. Inf. Theory 2017, 63, 2922–2932.

- Mohimani, H.; Babaie-Zadeh, M.; Jutten, C. A Fast Approach for Overcomplete Sparse Decomposition Based on Smoothed L0 Norm. IEEE Trans. Signal Process. 2009, 57, 289–301.

- Zhao, R.; Lin, W.; Li, H.; Hu, S. Reconstruction algorithm for compressive sensing based on smoothed L0 norm and revised Newton method. J. Comput.-Aided Des. Comput. Graph. 2012, 24, 478–484.

- Ye, X.; Zhu, W.P. Sparse channel estimation of pulse-shaping multiple-input–multiple-output orthogonal frequency division multiplexing systems with an approximate gradient L2-SL0 reconstruction algorithm. IET Commun. 2014, 8, 1124–1131.

- Nowak, R.D.; Wright, S.J. Gradient projection for sparse reconstruction: Application to compressed sensing and other inverse problems. IEEE J. Sel. Top. Signal Process. 2007, 1, 586–597.

- Long, T.; Jiao, W.; He, G. RPC estimation via ℓ1-norm-regularized least squares (L1LS). IEEE Trans. Geosci. Remote Sens. 2015, 8, 4554–4567.

- Pant, J.K.; Lu, W.S.; Antoniou, A. New improved algorithms for compressive sensing based on ℓp norm. IEEE Trans. Circuits Syst. II Express Br. 2014, 3, 198–202.

- Wipf, D.; Nagarajan, S. Iterative Reweighted ℓ1 and ℓ2 Methods for Finding Sparse Solutions. IEEE J. Sel. Top. Signal Process. 2016, 2, 317–329.

- Zhang, C.; Hao, D.; Hou, C.; Yin, X. A New Approach for Sparse Signal Recovery in Compressed Sensing Based on Minimizing Composite Trigonometric Function. IEEE Access 2018, 6, 44894–44904.

- Candès, E.J.; Wakin, M.B.; Boyd, S.P. Enhancing sparsity by weighted L1 minimization. J. Fourier Anal. Appl. 2008, 14, 877–905.

- Pant, J.K.; Lu, W.S.; Antoniou, A. Reconstruction of sparse signals by minimizing a re-weighted approximate L0-norm in the null space of the measurement matrix. In Proceedings of the IEEE International Midwest Symposium on Circuits and Systems, Seattle, WA, USA, 1–4 August 2010; pp. 430–433.

- Aggarwal, P.; Gupta, A. Accelerated fMRI reconstruction using matrix completion with sparse recovery via split Bregman. Neurocomputing 2016, 216, 319–330.

- Chu, Y.J.; Mak, C.M. A new QR decomposition-based RLS algorithm using the split Bregman method for L1-regularized problems. Signal Process. 2016, 128, 303–308.

- Hu, Y.; Liu, J.; Leng, C.; An, Y.; Zhang, S.; Wang, K. Lp regularization for bioluminescence tomography based on the split Bregman method. Mol. Imaging Biol. 2016, 18, 1–8.

- Liu, Y.; Zhan, Z.; Cai, J.F.; Guo, D.; Chen, Z.; Qu, X. Projected iterative soft-thresholding algorithm for tight frames in compressed sensing magnetic resonance imaging. IEEE Trans. Med. Imaging 2016, 35, 2130–2140.

- Yang, L.; Pong, T.K.; Chen, X. Alternating direction method of multipliers for a class of nonconvex and nonsmooth problems with applications to background/foreground extraction. Mathematics 2016, 10, 74–110.

- Antoniou, A.; Lu, W.S. Practical Optimization: Algorithms and Engineering Applications; Springer: New York, NY, USA, 2007.

- Samora, I.; Franca, M.J.; Schleiss, A.J.; Ramos, H.M. Simulated annealing in optimization of energy production in a water supply network. Water Resour. Manag. 2016, 30, 1533–1547.

- Goldstein, T.; Studer, C. PhaseMax: Convex phase retrieval via basis pursuit. IEEE Trans. Inf. Theory 2018, 64, 2675–2689.

- Wei-Hong, F.U.; Ai-Li, L.I.; Li-Fen, M.A.; Huang, K.; Yan, X. Underdetermined blind separation based on potential function with estimated parameter’s decreasing sequence. Syst. Eng. Electron. 2014, 36, 619–623.

- Bofill, P.; Zibulevsky, M. Underdetermined blind source separation using sparse representations. Signal Process. 2001, 81, 2353–2362.

- Su, J.; Tao, H.; Tao, M.; Wang, L.; Xie, J. Narrow-band interference suppression via RPCA-based signal separation in time–frequency domain. IEEE J. Sel. Top. Appl. Earth Obs. Remote Sens. 2017, 99, 1–10.

- Ni, J.C.; Zhang, Q.; Luo, Y.; Sun, L. Compressed sensing SAR imaging based on centralized sparse representation. IEEE Sens. J. 2018, 18, 4920–4932.

- Li, G.; Xiao, X.; Tang, J.T.; Li, J.; Zhu, H.J.; Zhou, C.; Yan, F.B. Near-source noise suppression of AMT by compressive sensing and mathematical morphology filtering. Appl. Geophys. 2017, 4, 581–589.

© 2018 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).