1. Introduction

The rapid development of the global fruit and vegetable industry has contributed to agricultural upgrading and increased income for agricultural practitioners. According to statistics from the Food and Agriculture Organization of the United Nations, global vegetable and fruit production increased by 3.7 times and 4.3 times, respectively, over the past 50 years [1]. However, the fruit and vegetable industry is labor-intensive. In China alone, about 150 million people are engaged in the daily planting and management of fruits and vegetables, and harvesting accounts for 40–50% of the total work [2,3].

With the advancement of science and technology, the production of field crops around the world has been fully mechanized. Mechanical equipment has also been adopted for some operations in fruit and vegetable production, such as cultivation and field management. However, harvesting fresh fruits and vegetables still generally relies on manual labor; it consumes most of the labor and is the most difficult operation to mechanize [2,4]. Robotic harvesting has therefore become a hot topic in agricultural research. Although researchers have paid much attention to it, many challenges remain for efficient and reliable picking in real agricultural environments, and until now most fruit-harvesting robots have only been tested in laboratories. The key problem, whose solution could lead to a breakthrough for harvesting robots, is to improve the resolution, reliability and real-time applicability of fruit recognition and location.

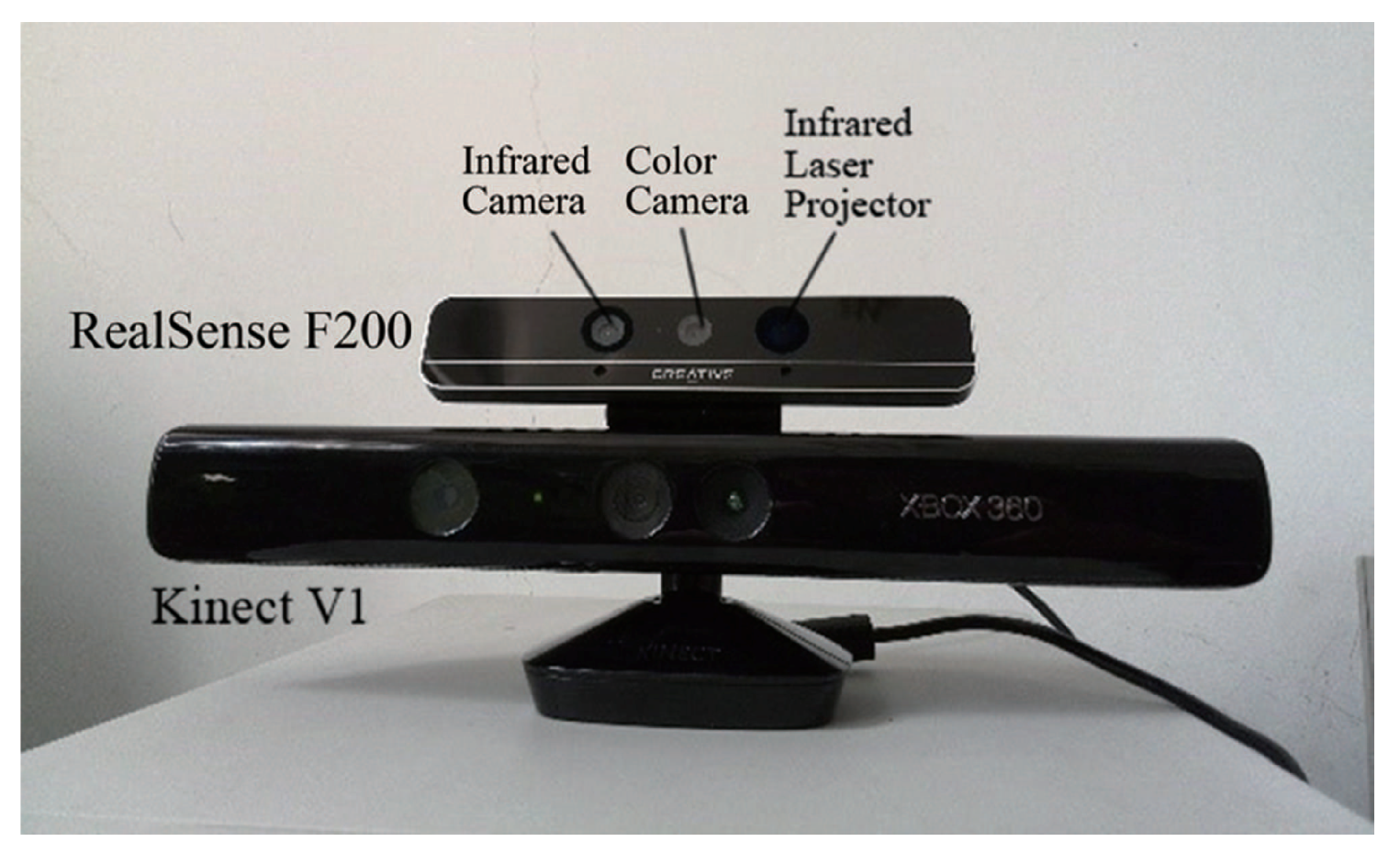

Recognizing and locating fruits is a difficult task for picking robots. Over the past few decades, most research has used charge-coupled device (CCD) cameras to identify and locate fruit. However, CCD cameras are too sensitive to light to provide reliable identification in natural scenes [5,6]. Real-time fruit recognition and location is hampered by several problems: too much redundant information, the significant amount of computation needed to identify fruit objects and the complexity of matching images to obtain the objects’ locations. Fruit recognition based on a color image often fails in an unstructured environment when fruits and leaves have a similar color, fruits overlap, fruit color is uneven or bright spots appear in the image. Various targeted studies have achieved only limited improvements on these problems and have not proposed a satisfactory solution [7,8,9]. In recent years, depth sensors have been used in fruit recognition and location, as they can obtain an object’s characteristic information without relying completely on color information. Low-cost consumer red-green-blue-depth (RGB-D) cameras, such as Microsoft Kinect and Intel RealSense [10,11], have revolutionized fruit recognition and location because they can obtain the color and three-dimensional (3D) depth information of an object synchronously in real time.

Existing RGB-D-based recognition and location algorithms differ in which information they use. Wang et al. [12] used Kinect to recognize on-tree mangoes with the histogram of oriented gradients (HOG) and an ellipse-fitting algorithm applied to the color image, and estimated fruit size from the depth information. García-L and Morales [13] used an RGB image acquired by Asus Xtion to find red spheres that emulate ripe tomatoes and used the depth information to locate the objects. As can be seen, these methods, which locate the fruit after removing the far-shot background and recognizing the fruit, build on the RGB-D camera’s ability to obtain depth and color information synchronously. However, they still rely on traditional simple color information to recognize fruits; they neither take advantage of the depth information for recognition nor avoid the deficiencies of conventional CCD-based machine vision for fruit recognition and location.

Nguyen et al. [14] used Asus Xtion to obtain data points of an apple tree. After removing the far-shot redundant information with a distance filter and filtering out the green leaf background with a red-green (R-G) color filter, they obtained the fruit regions by point-cloud clustering. Mai et al. [15] combined RGB-D information of an apple tree collected by Kinect V2 to reconstruct a color 3D model of the tree. They separated fruit and background based on an R-G color threshold, removed noise points with a point-cloud outlier filter and extracted the 3D shape of each fruit point cloud by mapping the color-depth points. Lehnert et al. [16] reconstructed the 3D shape of sweet peppers by fusing color and depth information, separated the red sweet peppers with a naive Bayes classifier in a rotated HSI color space and obtained the 3D fruit objects by Euclidean clustering. To recognize a single fruit, Tao et al. [17] separated the point clouds by color difference and depth data, extracted color features in the HSI and RGB color spaces and extracted 3D geometric features with a fast point feature histogram descriptor based on depth-point cloud data. Qiu et al. [18] proposed a strategy that recognizes fruit in RGB-D images of tomato plants obtained by Kinect V1 by selecting the foreground with a depth threshold and applying green enhancement with a color threshold. Chen et al. [19] used a head-mounted Asus Xtion to obtain RGB-D information, removed the green leaf background by HSI thresholding and detected clusters of tomatoes by depth-point cloud clustering, which guides the arm to the close field of the tomatoes; an in-hand PrimeSense Carmine then provided depth information to distinguish and locate the on-string fruit by its spherical shape. The above studies processed images using color differences between the fruit and the background and recognized the fruit objects by judging the remaining clustered points or their geometric characteristics with depth information. This approach makes good use of depth information in the process of fruit recognition.

Moreover, Choi et al. [20] used Kinect V2 to acquire synchronized RGB, near-infrared (NIR) and depth images of on-tree green citrus. They applied a 2D Hough transform algorithm to the RGB and NIR images and Choi’s circle estimation algorithm to the depth image to search for candidate fruit, then separated the fruit from the background with the AlexNet classification model. The results show that the success rate of fruit detection based on depth was the lowest. Yasukawa et al. [21] used Kinect V2 to distinguish ripe red tomatoes from the background by RGB-HSV conversion of the color information, and recognized and located the fruit by matching the gradient direction of the infrared reflection intensity data obtained by the depth sensor. The above methods made use of the infrared reflection intensity of the RGB-D sensor.

All of these studies effectively promoted new research on fruit recognition and localization based on RGB-D sensors. According to this research, Kinect, Xtion and other cameras can obtain multiple types of information only in the range of 500–800 mm, so they cannot be used for close-shot detection. The following problems therefore remain:

- (1) In the far-shot field of view, the large number of objects and the complex redundant information cause much interference, and the large amount of computation reduces the success rate and real-time applicability [18]. Limited by the resolution and accuracy of consumer RGB-D sensors, far-shot detection cannot obtain a clear depth image of the fruit and produces significant positioning errors [12].

- (2) Consumer RGB-D sensors acquire the depth and NIR intensity data actively with a low-power NIR emitter-receiver and capture the RGB image passively with a CCD detector. Both are difficult to adapt to outdoor natural light conditions, and thus the existing studies are mainly based on stable indoor light conditions [19] or an enclosed light environment [14].

- (3) According to many studies, the far-shot location of the “eye-in-hand” cannot meet the requirements of picking accuracy. Open-loop control degrades picking performance, affecting the picking cycle and success rate [22,23]. As a result, hand-eye coordination with the eye-in-hand configuration [24,25,26] and image-based “look and move” control [23,27] have become a trend for picking robots. However, Kinect, Xtion and other cameras cannot be applied to close-shot detection because of their limitations in detection range and size.

To break through the limitations of RGB-D sensors in robotic picking applications, Lehnert et al. [16] and Chen et al. [19] mounted RealSense and Carmine sensors, respectively, on their robots’ hands. However, they only discuss the feature extraction of the fruit and its corresponding peduncle in the close shot and do not address how to detect fruit against a close-shot canopy background. Nor do they offer an extended discussion of the application of in-hand RGB-D cameras, close-shot recognition and location, or the servo features.

In this paper, the close-shot detection of citrus based on a new RGB-D camera, RealSense, is discussed.

5. Conclusions

5.1. Reliability of RealSense Close-Shot Recognition

(1) Influence of leaf blade morphology

As shown in Figure 21, when the leaf blades are very curly and are cut by the depth-sphere at a coincident angle, the intersection with the blade can appear as a distinctly curved arc, so that the leaf is misjudged as fruit. However, in the natural environment with a canopy, misrecognition caused by this situation is rare. The rate of misrecognizing an isolated blade is only 2.5%. In the on-branch environments, the rate of misrecognition is 3.3% and 5.3% for little and severe adhesion, respectively.

To avoid misrecognition of extreme position-postures, the depth information can be cut after adjusting the position-posture of the leaf, changing the angle of view and the center of the sensor (the center of the depth-sphere).

(2) Influence of fruit size and shape

For isolated fruit, the success rate of detection based on the depth-sphere intersection curve was over 98%. However, in the complex on-branch environment, false negatives occur, and the size and shape of the fruit influence the success rate of detection. The size of the fruit has a more obvious influence on the success rate than its circularity. As shown in Figure 19, the recognition rate of Egyptian oranges, which are the largest, with average polar and equatorial diameters of 86.6 mm and 77.9 mm, respectively, reached 100% with little adhesion and 70% with serious adhesion. For Yunnan crystal sugar orange, which is nearly circular with average polar and equatorial diameters of only 49.9 mm and 54.3 mm, respectively, the success rate of detection was the lowest.

Different cutting depths were chosen for the different citrus varieties at the same depth of field so that a sufficient number of points on the depth-sphere intersection curve could be obtained. For large fruit, however, depth-sphere cutting with a small cutting depth can still be applied effectively in an environment with occlusion and adhesion, yielding arc curves that are independent and span a greater range, which ensures a high rate of fruit recognition.

(3) Impact of the degree of adhesion

For recognition based on visible light, it is hard to separate occluded fruits and obtain the outline of a single fruit in the complex on-branch environment. For detection with depth information, if there is no adhesion among the fruits and leaves, the point-cloud clusters are independent because they lie at different depths in the canopy. RealSense further improves the clarity and legibility of individual point-cloud clusters in the close-shot range.

Therefore, the method can obtain an independent intersection curve of the fruit and detect the fruit according to the double threshold of eccentricity and pixel number, except when the sensor is unable to obtain enough depth data points of the fruit because of serious occlusion. In addition, under little adhesion, where the point clouds of the fruits and leaves are only weakly connected, the method can detect the fruit easily by changing the cutting depth. Operating the “eye-in-hand” robot in the close-shot range can avoid having to deal with serious occlusion and adhesion, ensuring reliable recognition and harvesting.

However, in the case of serious adhesion, the rate of fruit recognition with this method is low. Further research on fruit recognition under serious adhesion is essential to solve this problem.
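The double threshold of eccentricity and pixel number described above can be illustrated with a minimal sketch. It assumes that an intersection curve is available as a list of 2D pixel coordinates and that eccentricity is taken from the ellipse with the same second moments as the curve; both the function names and the threshold values (`max_eccentricity`, `min_pixels`) are hypothetical placeholders, not the parameters used in this study.

```python
import math
from typing import List, Tuple

Pixel = Tuple[float, float]  # (column, row) of one point on an intersection curve


def eccentricity(pixels: List[Pixel]) -> float:
    """Eccentricity of the ellipse with the same second moments as the pixels.

    0 corresponds to a circle-like curve, 1 to a straight line.
    """
    n = len(pixels)
    mean_x = sum(x for x, _ in pixels) / n
    mean_y = sum(y for _, y in pixels) / n
    sxx = sum((x - mean_x) ** 2 for x, _ in pixels) / n
    syy = sum((y - mean_y) ** 2 for _, y in pixels) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in pixels) / n
    # Eigenvalues of the 2x2 covariance matrix [[sxx, sxy], [sxy, syy]].
    half_trace = (sxx + syy) / 2.0
    root = math.sqrt(((sxx - syy) / 2.0) ** 2 + sxy ** 2)
    lam_max = half_trace + root
    lam_min = half_trace - root
    if lam_max <= 0.0:
        return 0.0  # all pixels coincide; treat as degenerate
    return math.sqrt(max(0.0, 1.0 - lam_min / lam_max))


def passes_double_threshold(
    pixels: List[Pixel],
    max_eccentricity: float = 0.8,  # hypothetical value, for illustration only
    min_pixels: int = 50,           # hypothetical value, for illustration only
) -> bool:
    """Accept a curve as a fruit candidate only if it has enough pixels
    and is sufficiently close to circular."""
    return len(pixels) >= min_pixels and eccentricity(pixels) <= max_eccentricity


# A full circle (fruit-like curve) and a straight segment (leaf-like curve).
circle = [(math.cos(2 * math.pi * k / 100), math.sin(2 * math.pi * k / 100))
          for k in range(100)]
segment = [(float(k), 0.0) for k in range(100)]
print(passes_double_threshold(circle), passes_double_threshold(segment))
```

The pixel-number test rejects curves with too few depth points, which corresponds to the case of serious occlusion noted above; the eccentricity test rejects elongated curves.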

(4) Effects of light conditions

Light is a key factor in fruit recognition, and passive detection based on CCD is greatly restricted under natural light and low light. The active near-infrared detection of the depth sensor can achieve recognition without light, which enables the harvesting robot to work day and night in the natural environment. However, consumer-grade depth sensors such as Kinect and the front-facing RealSense are easily disturbed outdoors because of their low power. Thanks to its minimum close-shot detection distance of 160 mm, RealSense can receive enough infrared light to overcome natural light interference effectively and achieve reliable close-shot detection of fruit, which was confirmed in a study on grape detection.

The existing study shows that fruit recognition with this method performs well in a dark environment. However, its performance under natural light conditions needs to be verified in further research.

5.2. Calculation of RealSense Close-Shot Recognition

For the practical application of a harvesting robot, real-time close-shot recognition and servo control with RealSense matter more to performance and practical value than object visualization and 3D modeling. The computational cost of the positioning algorithm is therefore crucial. The depth-sphere intersection curve algorithm for RealSense close-shot recognition has the following advantages:

(1) Close-shot detection for fewer targets

In the far-shot field of view, problems such as too many objects, complex redundant information and objects that appear “far, small, and blurry” make it difficult to recognize fruit and increase the amount of calculation. In the close-shot field of view, there are few objects and little redundant information, and the object is “close, large, and clear” (shown in Figure 22). RealSense can obtain high-precision depth data on a limited number of fruits and leaves in the close-shot field. A large proportion of these data is usable, which makes the surface spatial features of each citrus fruit and leaf more prominent and greatly reduces the difficulty of recognition, the amount of calculation and the number of errors.

For robotic harvesting, far-shot detection of multiple objects in the canopy and rough detection of complex environments cannot be used directly for feedback control. CCD cameras are limited in the reliability and real-time performance of recognition and location, and Kinect and Xtion cannot be used because of their long detection range and large size. By contrast, RealSense can be integrated with the end-effector of the harvesting robot thanks to its small size and close depth detection range of 160 mm. In addition, its fast depth detection of “large and clear” close-shot objects meets the precision required by the robot hand-arm for locating and harvesting fruits one by one, which makes RealSense the best choice among these sensors for the real-time servo operation of harvesting robots.

(2) Rapid elimination of foreground and background

Based on a close-shot detection range of 160–700 mm, the redundant background, hole noise and unstable foreground can be eliminated by depth thresholding. This quickly yields the citrus point cloud, in which only a limited number of fruit and leaf objects remain, free of complex background interference, and it decreases the difficulty of recognition.
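As a minimal sketch of the depth thresholding described above, the following keeps only the points whose depth lies in the 160–700 mm close-shot range. It assumes that the point cloud is a list of (x, y, z) tuples in millimetres with the sensor at the origin and that hole noise is reported as a depth of zero; the function and constant names are illustrative and do not come from the RealSense SDK.

```python
from typing import List, Tuple

Point = Tuple[float, float, float]  # (x, y, z) in millimetres, sensor at the origin

NEAR_MM = 160.0  # minimum close-shot detection distance given in the text
FAR_MM = 700.0   # far limit of the close-shot detection range given in the text


def depth_threshold(points: List[Point],
                    near: float = NEAR_MM,
                    far: float = FAR_MM) -> List[Point]:
    """Keep points whose depth z lies within [near, far].

    Holes (assumed here to be encoded as z == 0), unstable foreground
    (z < near) and background (z > far) are all discarded in one pass.
    """
    return [p for p in points if near <= p[2] <= far]


sample = [
    (0.0, 0.0, 0.0),     # hole noise
    (5.0, 2.0, 100.0),   # foreground closer than the minimum range
    (10.0, 5.0, 300.0),  # inside the close-shot range
    (0.0, 0.0, 900.0),   # background
]
print(depth_threshold(sample))
```

A single comparison per point is all that is needed, which is consistent with the low computational cost that this step is meant to deliver.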

Counting the point clouds of 100 on-branch citrus fruits in the close-shot detection range shows that rapid elimination of the background decreases the size of the point cloud from 307,200 points (640 × 480) to 6000–50,000 points per frame, that is, 2–16% of the original data, as shown in Figure 23. The calculation needed to extract the fruit characteristics is thus reduced.
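The stated reduction can be checked with a short calculation. The sketch below assumes that the 307,200 points per frame correspond to a 640 × 480 depth frame; the point counts are the ones reported in the text, and the names are illustrative.

```python
FRAME_POINTS = 640 * 480  # 307,200 points; assumes a 640 x 480 depth frame


def retained_percent(points_after_threshold: int) -> float:
    """Share of the original frame that remains after background removal."""
    return 100.0 * points_after_threshold / FRAME_POINTS


low = retained_percent(6000)    # smallest reported point cloud
high = retained_percent(50000)  # largest reported point cloud
print(f"{low:.1f}% to {high:.1f}% of the original data")
```

Rounded to whole percentages, these values agree with the 2–16% range quoted above.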

(3) Advantages of depth-sphere cutting algorithms

In the existing methods of fruit recognition based on depth information, the fruit image is segmented either by 3D reconstruction from the depth-point cloud or by 2D image processing of the depth image. These methods do not use the depth data directly to recognize the fruit; they still depend on traditional edge contour extraction and on the complex analysis process of “obtain original depth data, visualize, calculate gray-scale values, extract the contour curve, identify fruit characteristics”.

By comparison, the depth-sphere cutting algorithm works directly on the original depth data obtained by the sensor and on the spherical coordinates of those data. The depth-sphere cutting of each object can be realized without any data conversion, which simplifies high-precision computing and helps ensure real-time detection and harvesting.
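One way to picture depth-sphere cutting is as the selection of the points that lie on a sphere centred on the sensor. The sketch below is an illustration under stated assumptions, not the implementation used in this study: points are (x, y, z) tuples in millimetres with the sensor centre at the origin, and the names `cut_radius` and `tolerance` as well as the tolerance value are hypothetical.

```python
import math
from typing import List, Tuple

Point = Tuple[float, float, float]  # (x, y, z) in millimetres, sensor centre at the origin


def radial_distance(p: Point) -> float:
    """Radial spherical coordinate of a point, i.e. its distance to the sensor centre."""
    x, y, z = p
    return math.sqrt(x * x + y * y + z * z)


def depth_sphere_cut(points: List[Point],
                     cut_radius: float,
                     tolerance: float = 1.0) -> List[Point]:
    """Return the points within `tolerance` mm of a sphere of radius
    `cut_radius` centred on the sensor. For an object surface, these
    points approximate its intersection curve with the depth-sphere."""
    return [p for p in points
            if abs(radial_distance(p) - cut_radius) <= tolerance]


cloud = [
    (0.0, 0.0, 300.0),    # on the 300 mm sphere
    (180.0, 0.0, 240.0),  # also 300 mm from the sensor
    (0.0, 0.0, 350.0),    # behind the cutting sphere
]
print(depth_sphere_cut(cloud, cut_radius=300.0))
```

Because the selection uses only the radial distance of each raw depth point, no image rendering or contour extraction is needed before the curve is obtained.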

5.3. Future Work

We aimed to explore a new technical route for on-branch fruit recognition. In this manuscript, the analysis is based directly on depth data instead of traditional image segmentation, and a new close-shot recognition method, depth-sphere cutting, is proposed. Using this method, we first identified the features of isolated fruits and leaves and then increased the complexity of the fruit-leaf collocation to achieve recognition of on-branch fruits.

In this study, the analysis of the recognition effect for multiple fruit-leaf position-postures and collocations was completed in an indoor environment, and the overall recognition strategy and process and the optimal parameters were obtained. In further work, it is necessary to verify the recognition effect in a natural field environment. Furthermore, fruit recognition in the on-branch environment should be extended to the on-tree environment. Finally, research on fruit recognition under serious adhesion and occlusion is essential to further improve the recognition effect. The related research and its practical application in robotic picking are ongoing.