Abstract

In this paper we present a simple stand-alone system that autonomously acquires multiple pictures all around large objects, i.e., objects that are too big to be photographed from every side with a hand-held camera. In this approach, a camera carried by a drone (an off-the-shelf quadcopter) acquires an image sequence that forms a valid dataset for the 3D reconstruction of the captured scene. Both the drone flight and the choice of the viewpoints from which pictures are taken are automatically controlled by the developed application, which runs on a tablet wirelessly connected to the drone and controls the entire process in real time. The system and the acquisition workflow have been conceived to keep user intervention minimal and as simple as possible, requiring no particular skill from the user. The system has been experimentally tested on several subjects of different shapes and sizes, showing the ability to follow the requested trajectory with good robustness against flight perturbations. The collected images are provided to scene reconstruction software, which generates a 3D model of the acquired subject. The quality of the obtained reconstructions, in terms of accuracy and richness of detail, has demonstrated the reliability and efficacy of the proposed system.

1. Introduction

The deployment of lightweight radio-controlled unmanned aerial vehicles (UAVs) is now widespread in a large variety of application fields, such as rescue or emergency operations in critical environments, professional video production, and precision agriculture. In particular, the use of UAVs to acquire images for photogrammetry and, more generally, for three-dimensional reconstruction from images is a rapidly growing application field [1,2,3,4,5]. The main reasons for this wide diffusion include the low cost and extreme versatility of modern micro-UAVs, which are able to fly along precise trajectories and to hold a fixed position steadily, together with the decreasing cost and increasing resolution of image sensors.

This paper describes the design and implementation of a technique for autonomous mission control of a quadcopter, which autonomously acquires the image sequence needed for 3D reconstruction while processing the acquired video in real time for self-localization and the consequent navigation, as dictated by the mission control. The technique has been tested on several different real objects, showing its robustness and effectiveness, as witnessed by the accuracy of the resulting 3D models.

1.1. Related Work

The research literature proposing drones for 3D reconstruction of objects and terrain considers several sensing techniques, such as high-resolution cameras [6] or a combination of cameras and laser scanners [7]. In several state-of-the-art works [3,6,8,9,10], a camera is mounted on a drone that carries out its mission autonomously, exploiting the images acquired in flight both for navigation (SLAM) and for 3D reconstruction. In particular, refs. [8,9] propose quite similar applications, in which no known-shape markers need to be placed in the scene beforehand. However, to perform such demanding tasks in real time, these systems rely on remote high-performance computing facilities, connected to the drone through a high-bandwidth, low-latency wireless link, which run the SLAM and 3D reconstruction algorithms on the image stream acquired by the drone.

Conversely, the purpose of this work was to develop a stand-alone system for the automatic acquisition of images of a subject to be reconstructed in 3D, where the only computing facility is the mobile device (an Android tablet or smartphone) that is normally connected to the drone's remote controller and used to display the drone camera view. Aiming at a low-cost and easy-to-use system, we designed the technique to keep the workflow required of the human operator as simple as possible. Therefore, the novelty of the proposed technique with respect to the state of the art lies mainly in two aspects:

- The system has been designed to keep the workflow simple and the practical setup of the scene as unobtrusive as possible;

- The algorithm performing UAV self-localization and navigation in real time has been designed for high computational efficiency, so that it can run on the same Android device used for UAV flight supervision.

1.2. Article Outline

The article is organized as follows: Section 2 discusses possible approaches and describes the proposed procedure workflow; Section 3 describes the developed algorithm; Section 4 presents significant experimental results; and Section 5 reports some final considerations and ideas for further research.

2. The Acquisition Procedure

2.1. Scene Setup

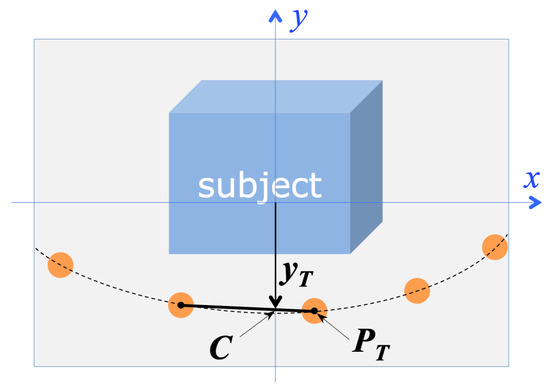

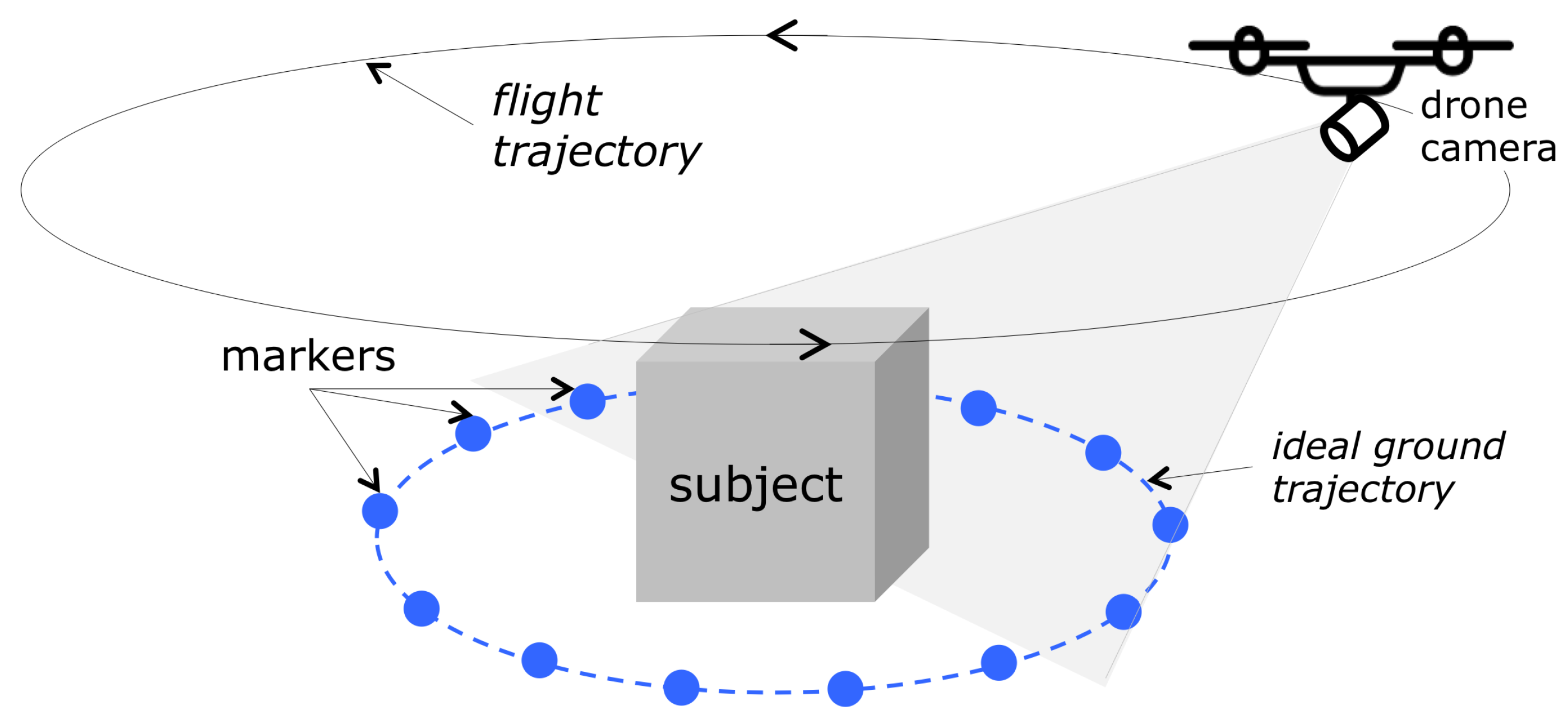

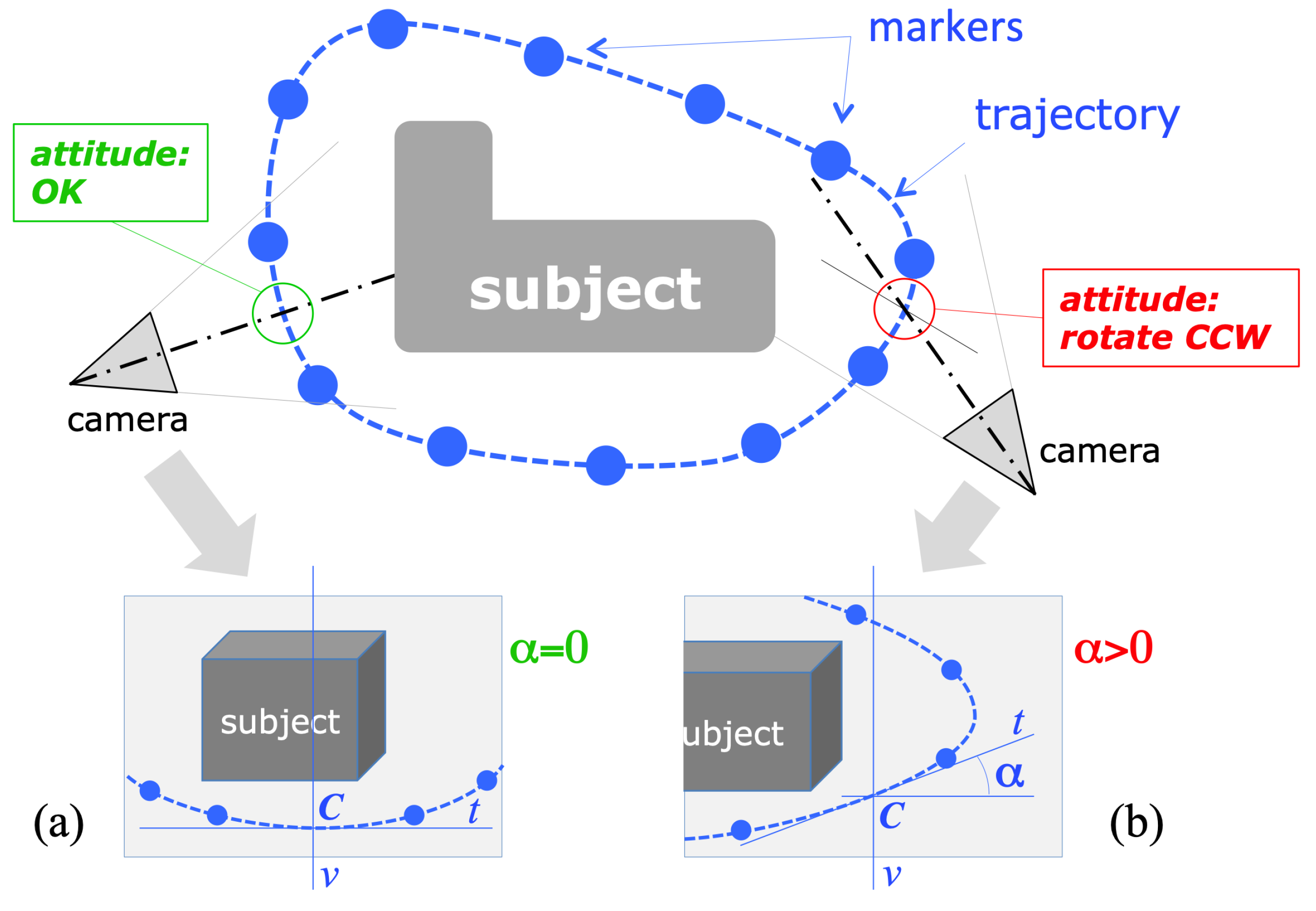

According to the principles of multiple-view geometry [11], reconstructing the 3D shape of a subject requires collecting images of it from all possible viewing directions. In general, this can be achieved by acquiring a video, or several still images (the number of images depending on the object properties and on the desired level of detail in the reconstruction), while moving around the subject approximately along a circle, as shown in Figure 1. For objects whose footprint is very different from a circle (for instance, irregular or long-and-thin footprints), a circular trajectory would be far from optimal.

Figure 1.

The acquisition setup. The drone has to fly at least one full loop along the trajectory surrounding the object to be reconstructed.
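To make the circular case concrete, the following Python sketch generates evenly spaced viewpoints on a circle around a subject and orients each camera toward the circle center. It only illustrates the geometry described above and is not the authors' implementation; the function name `circle_viewpoints`, the ground-plane coordinates and the yaw convention (radians, counterclockwise from the +x axis) are assumptions.

```python
import math


def circle_viewpoints(cx, cy, radius, n):
    """Return n viewpoints (x, y, yaw) evenly spaced on a circle around (cx, cy).

    Coordinates are on the ground plane; yaw is the heading of the camera axis
    in radians, counterclockwise from the +x axis, chosen so that the camera
    looks at the circle center.
    """
    if n <= 0:
        raise ValueError("n must be positive")
    views = []
    for k in range(n):
        theta = 2.0 * math.pi * k / n            # angular position on the circle
        x = cx + radius * math.cos(theta)
        y = cy + radius * math.sin(theta)
        yaw = math.atan2(cy - y, cx - x)          # aim the camera at the center
        views.append((x, y, yaw))
    return views
```

For example, `circle_viewpoints(0.0, 0.0, 4.0, 200)` yields 200 inward-looking viewpoints on a 4 m circle. For non-circular footprints the same idea applies to a different closed curve, which is what the marker-based trajectory described below provides.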

The definition of the optimal flight trajectory for image acquisition, given the approximate volumetric extent of the subject, is a well-studied problem in the literature [4,10]. However, the constraint on the optimal location of the image viewpoints is not very strict; in fact, a simple and safe rule of thumb for obtaining accurate 3D reconstructions is to keep approximately the same distance from the local front surface of the subject.

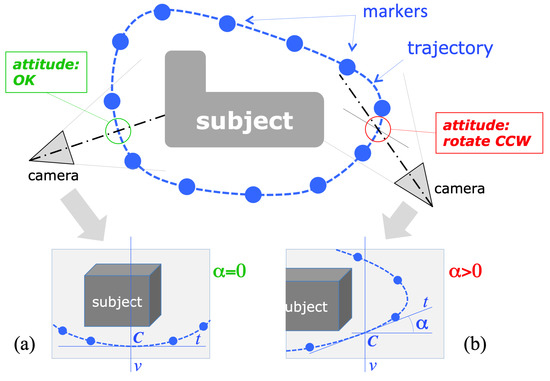

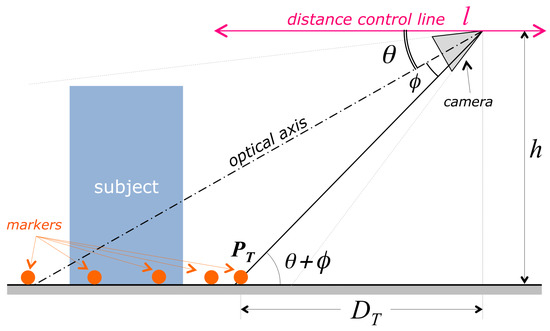

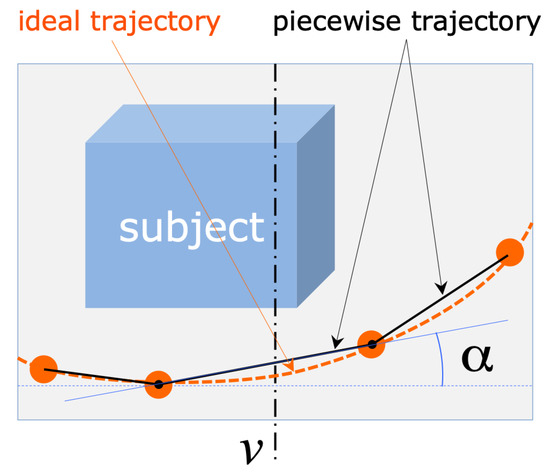

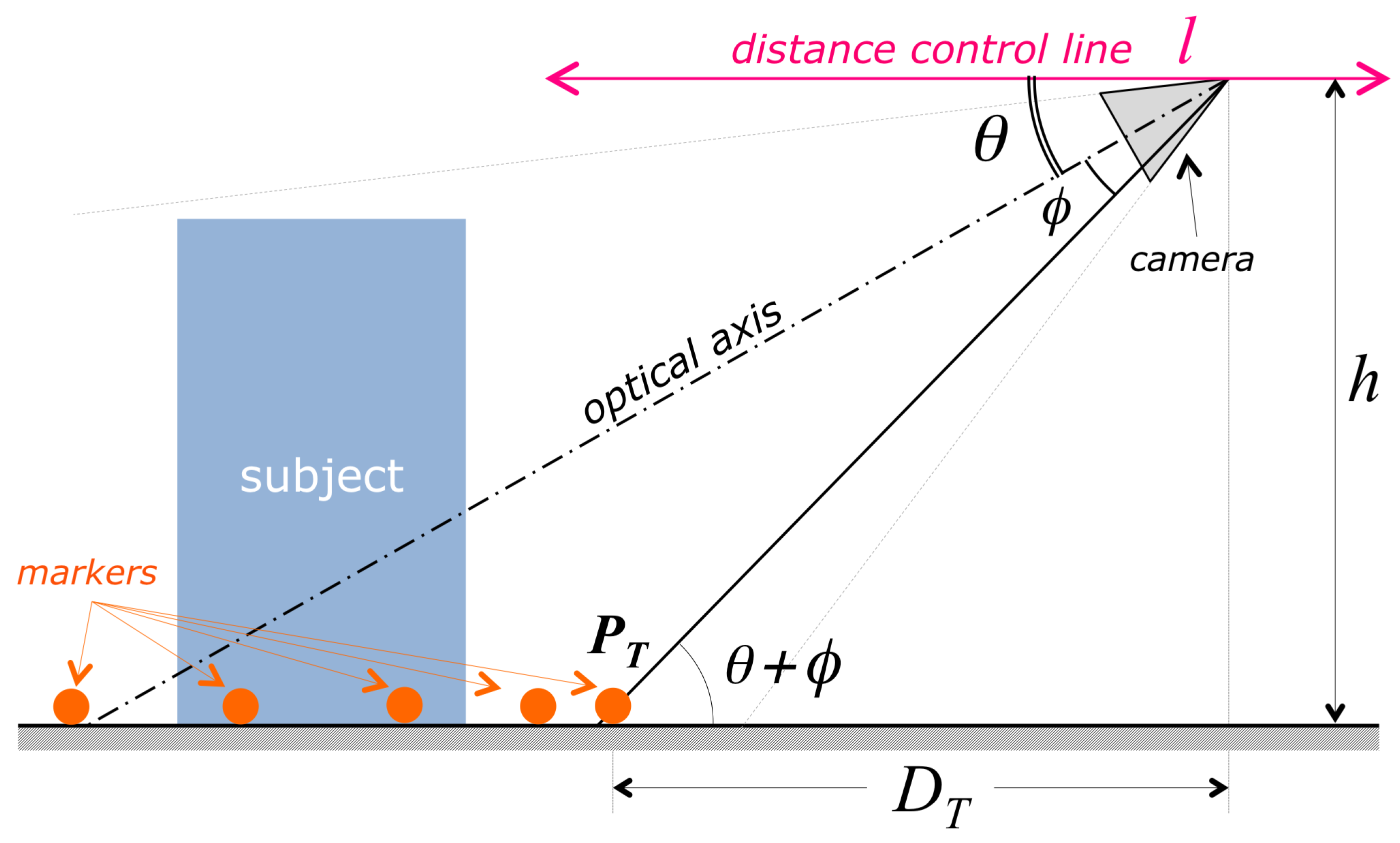

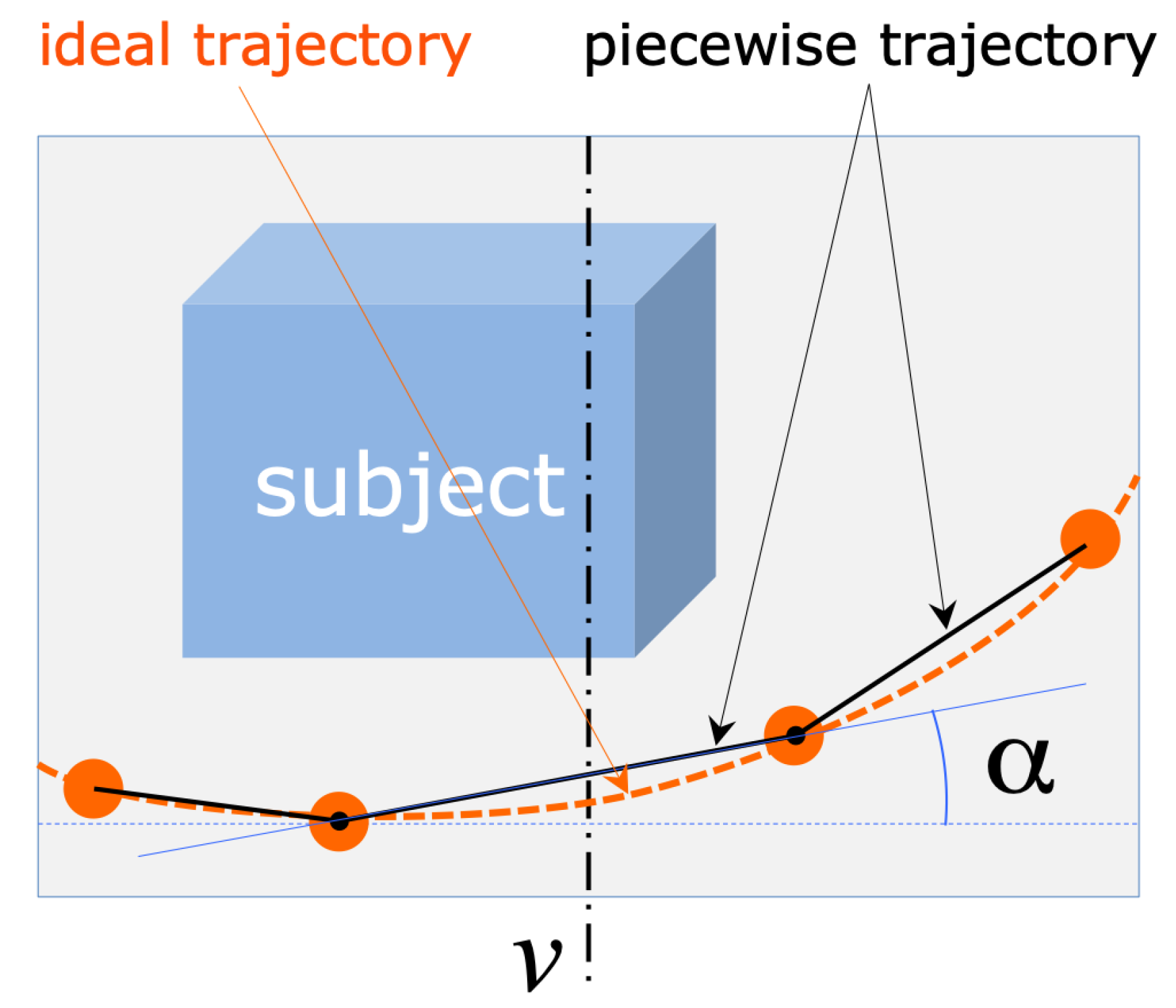

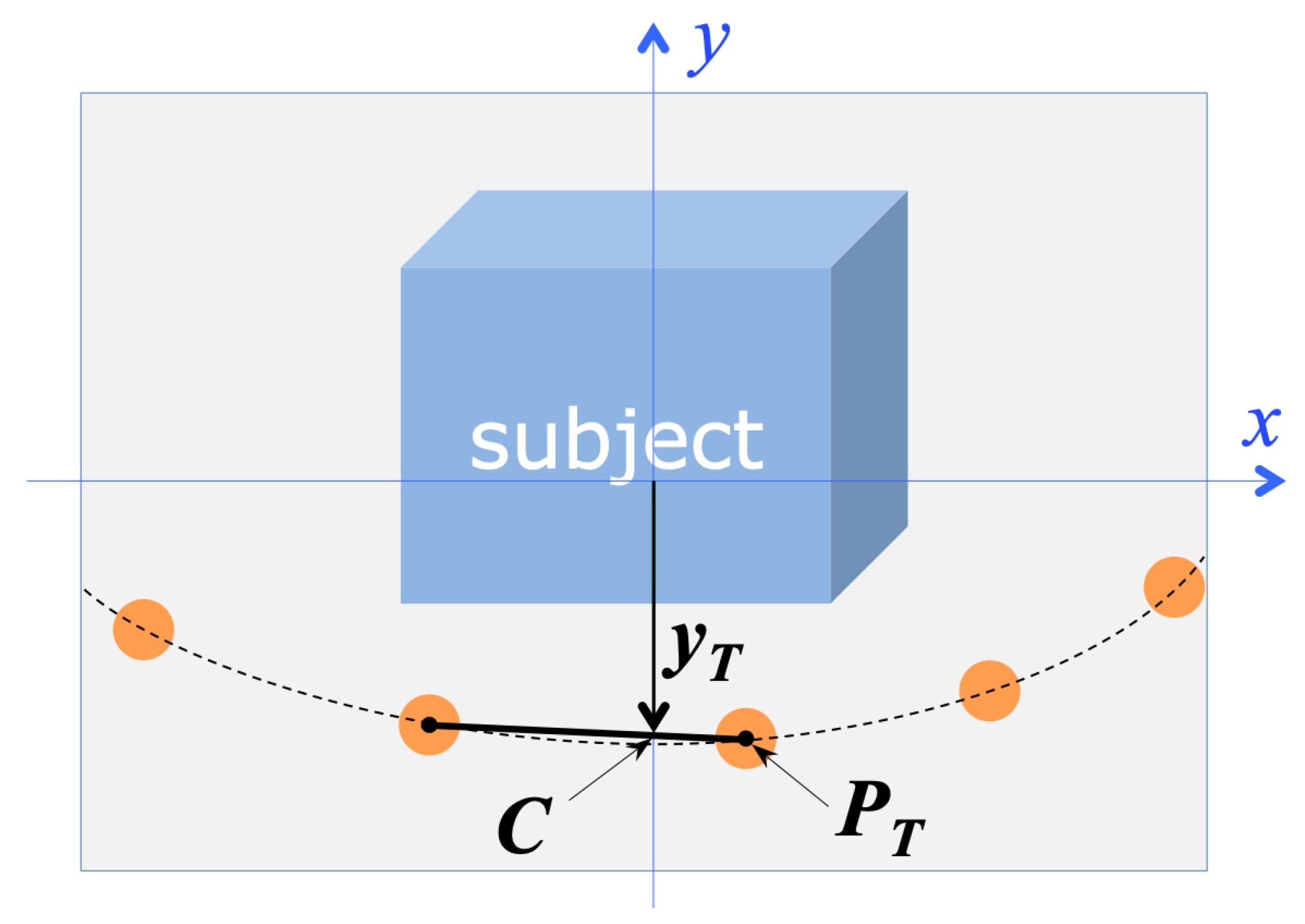

Our aim was therefore to have the drone automatically fly at least one full loop along this trajectory while (a) keeping an approximately constant distance from the ground and from the object, and (b) keeping the on-board camera always pointed toward the object. This navigation problem can actually be considered a typical “line following” problem [12], where the line to follow is the desired closed trajectory around the object, and the horizontal projection of the camera axis is kept orthogonal to the trajectory. Besides this necessary requirement, our goal in designing the acquisition procedure was to keep the practical setup of the scene as simple as possible (that is, with minimal geometric constraints on the scene).

A simple solution would be to trace a line on the ground around the object, which the drone could track and follow during the acquisition procedure. However, to keep the operative workflow maximally simple, we propose a scene setup in which the trajectory is defined by trajectory markers, in the form of uniform-color spheres, placed along the desired trajectory, as represented in Figure 1. The line to follow is therefore the ideal line connecting adjacent trajectory markers. This choice has some advantages compared to tracing a line: placing spheres (e.g., common plain-color balls) on the ground around an object, with no geometric constraint other than keeping approximately the same distance from the object itself, is significantly simpler and less invasive than tracing a line around it. Moreover, a proper choice of the marker color makes the image processing procedure for marker detection and localization highly robust against detection errors, thus improving the reliability of the whole procedure.

Finally, although spherical objects are imaged as ellipses, with an eccentricity that increases with their distance from the optical image center [13], in our case, given the size of the imaged markers and the angular width of the camera frustum, this perspective distortion can be neglected for the purpose of marker localization: we assume that the visual ray through the centroid of the imaged ellipse coincides with the visual ray through the corresponding sphere center. This assumption is essential for achieving the necessary accuracy in the localization of the spherical markers, which in turn determines the final accuracy of the reconstructed 3D model. Its validity is confirmed by the reconstruction quality of the models presented in the experimental results.
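Under this assumption, the image position of a marker reduces to the centroid of its segmented blob. A minimal Python sketch of that step, based on zeroth- and first-order image moments of a binary mask, is shown below. The mask representation (a list of rows of booleans) and the name `blob_centroid` are illustrative assumptions; the algorithm actually used is the one described in Section 3.

```python
def blob_centroid(mask):
    """Centroid (row, col) of the True pixels in a binary mask.

    mask: list of rows, each a list of booleans.
    Uses image moments: m00 = pixel count, m10 = sum of column indices,
    m01 = sum of row indices. Returns None if no pixel is set.
    """
    m00 = m10 = m01 = 0
    for r, row in enumerate(mask):
        for c, value in enumerate(row):
            if value:
                m00 += 1
                m10 += c
                m01 += r
    if m00 == 0:
        return None
    # The visual ray through this point is taken as the ray through the sphere center.
    return (m01 / m00, m10 / m00)
```

In practice the mask would come from a color segmentation step, and a library routine for image moments would replace the explicit loops.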

The total number of markers is arbitrary, as it depends on the particular trajectory around the subject. The size of the markers is not critical either, as the markers just need to be clearly visible in the acquired images. Consequently, the marker size should be adapted to the average subject-camera distance, which in turn depends on the size of the subject being reconstructed. In other words, arbitrarily large objects can be reconstructed, provided that correspondingly large markers are used, big enough to be seen by the camera. The only necessary constraint in this procedure is that at least two markers must be visible in each image, as explained in Section 3.
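Since two visible markers define a segment of the trajectory, the line-following geometry can be sketched on the ground plane as follows. This is a hypothetical illustration, not the algorithm of Section 3: it assumes that the ground positions of the two markers and the ground projection of the drone are already known in a common metric frame, and that the loop is flown counterclockwise, so that the object lies to the left of the segment. The name `line_following_setpoint` and its sign conventions are assumptions.

```python
import math


def line_following_setpoint(p, a, b):
    """Line-following quantities for the segment between two adjacent markers.

    p: (x, y) ground projection of the drone.
    a, b: (x, y) ground positions of two adjacent markers, ordered so that the
          object lies on the LEFT of the direction a -> b (counterclockwise loop).
    Returns (cross_track_error, desired_yaw):
      cross_track_error is the signed distance of p from the line through a and b,
      positive on the object side; desired_yaw (radians) is the heading of the
      normal to the segment that points toward the object.
    """
    dx, dy = b[0] - a[0], b[1] - a[1]
    length = math.hypot(dx, dy)
    if length == 0.0:
        raise ValueError("markers must be distinct")
    ux, uy = dx / length, dy / length        # unit direction along the segment
    # 2D cross product u x (p - a): signed distance from the line
    cross_track_error = ux * (p[1] - a[1]) - uy * (p[0] - a[0])
    # The left normal (-uy, ux) points toward the object; its heading angle:
    desired_yaw = math.atan2(ux, -uy)
    return cross_track_error, desired_yaw
```

A controller could then drive the cross-track error toward a constant set-point, to keep the distance from the object roughly constant, while rotating the drone toward `desired_yaw`, which keeps the horizontal projection of the camera axis orthogonal to the trajectory.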

2.2. Procedure Workflow

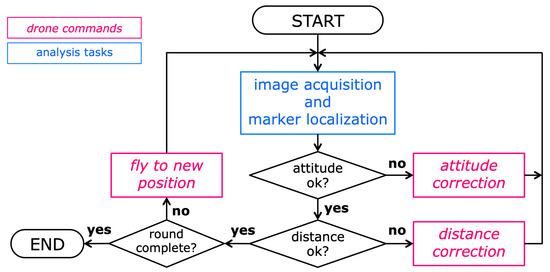

The idea behind this work is to conceive a procedure that is as simple to carry out as possible, with minimal user intervention. The proposed image acquisition workflow therefore consists of the following steps.

2.2.1. Scene Setup

The operator places a set of spheres all around the object or scene to be reconstructed. The size of the spheres should be roughly adapted to the overall size of the scene: the bigger the scene, the bigger the spheres. However, their size is not critical at all: they just have to appear in the images as uniform-color circles. The color of the spheres can be chosen arbitrarily, as long as it contrasts with the background, since the marker detection procedure adapts to the chosen color. The appropriate number of spheres depends on the size and shape of the object, but this choice is not critical either: there just need to be enough spheres to ensure that each view sees at least two of them. A greater number of visible spheres generally increases the robustness of the procedure but does not affect the final 3D accuracy.
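One simple way to let detection adapt to an arbitrarily chosen marker color is a hue test centered on that color. The Python sketch below is a hypothetical illustration, not the detector used in this work (see Section 3): the name `is_marker_pixel` and the thresholds `hue_tol_deg`, `min_sat` and `min_val` are placeholder assumptions, not values from this paper. It relies on the standard-library `colorsys.rgb_to_hsv` and measures hue distance around the color circle, so that red targets near 0°/360° are handled correctly.

```python
import colorsys


def is_marker_pixel(rgb, target_hue_deg, hue_tol_deg=15.0, min_sat=0.5, min_val=0.3):
    """Hypothetical test: does an RGB pixel match a uniform marker color?

    rgb: (r, g, b) with components in 0..255.
    target_hue_deg: hue of the chosen marker color, in degrees (0..360).
    Low-saturation (grayish) and very dark pixels are rejected.
    """
    r, g, b = (channel / 255.0 for channel in rgb)
    h, s, v = colorsys.rgb_to_hsv(r, g, b)      # h, s, v all in [0, 1]
    diff = abs(h * 360.0 - target_hue_deg) % 360.0
    hue_dist = min(diff, 360.0 - diff)          # wrap-around distance on the hue circle
    return hue_dist <= hue_tol_deg and s >= min_sat and v >= min_val
```

For instance, with a target hue of 30° (orange), the pixel (255, 128, 0) is accepted while (0, 0, 255) is rejected. Hue is unchanged when r, g and b are scaled by the same factor, which gives such a test some tolerance to shading.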

2.2.2. Drone Lift

The user, who can follow the flight in real time from the camera viewpoint (by means of the control application running on the device connected to the drone), lifts the drone to the starting position, which can be any point of the desired trajectory. With the camera pointing toward the object, the camera should “see” the spheres lying on the ground in front of the subject, which, due to perspective projection, normally appear in the image below the object to be reconstructed (as schematized in Figure 1).

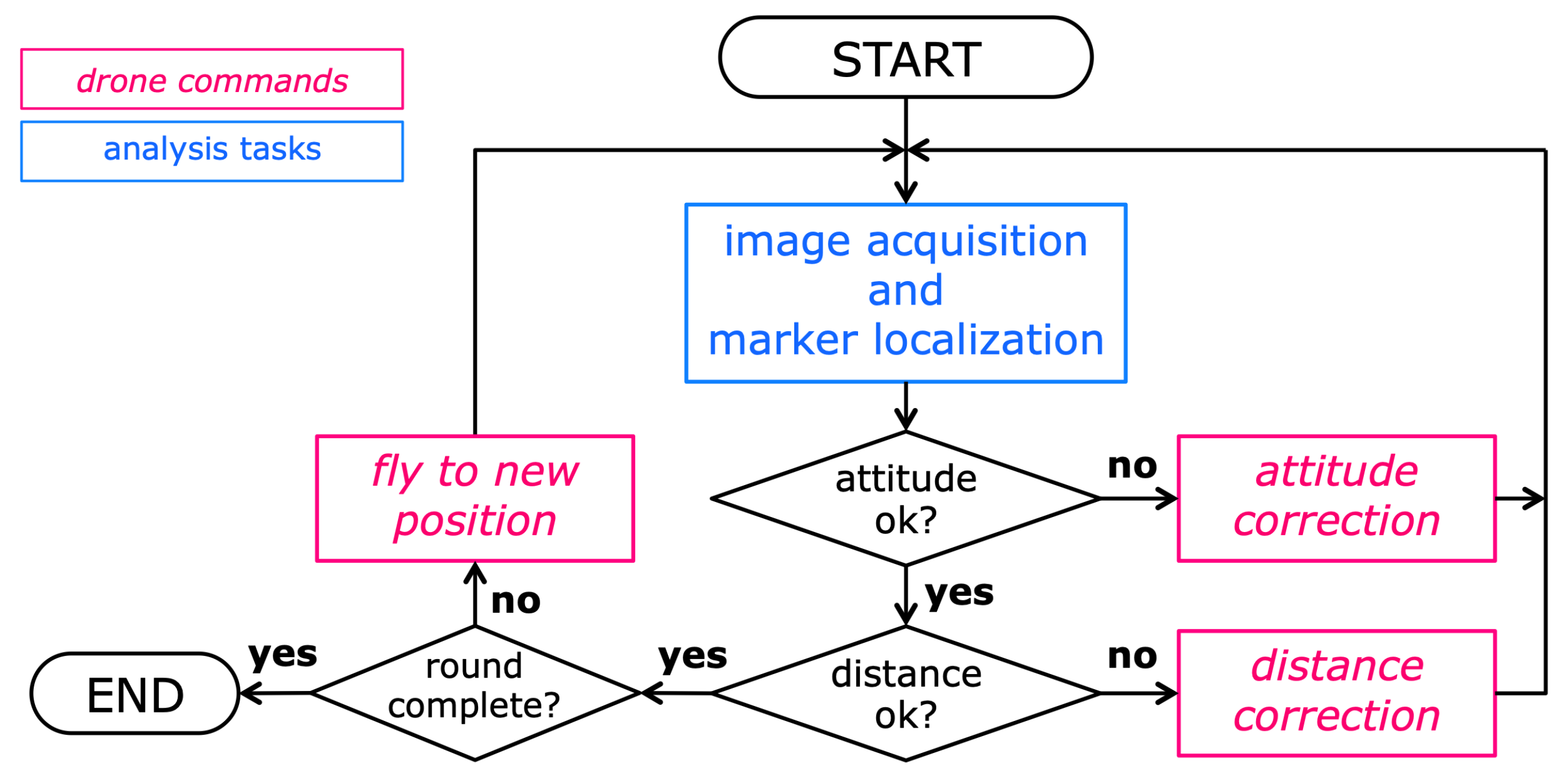

2.2.3. Automatic Acquisition Procedure

Once the drone is in a valid starting position, the user starts the automatic acquisition procedure by issuing a command to the Mission Control application running on the device, which then takes control of the whole flight and image acquisition process. The user can monitor the ongoing process through the application's user interface.

2.2.4. Termination

During the acquisition process, the application continuously updates an estimate of the camera attitude (the direction of the camera axis). When, according to this estimate, the drone has completed a full loop along the closed trajectory, the application automatically lands the drone, thus completing the procedure.
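The termination test can be illustrated by accumulating the changes of the estimated camera heading: when the camera is kept pointed inward along a closed loop, its heading turns by a full 2π. The Python sketch below assumes yaw estimates in radians and consecutive estimates differing by less than π; the class name `LoopCompletionMonitor` is hypothetical, and the application may decide termination differently.

```python
import math


class LoopCompletionMonitor:
    """Hypothetical loop-completion test based on accumulated camera yaw."""

    def __init__(self):
        self.total = 0.0        # signed accumulated heading change (radians)
        self.last_yaw = None

    def update(self, yaw):
        """Feed a new yaw estimate (radians); return True once a full loop is done."""
        if self.last_yaw is not None:
            diff = yaw - self.last_yaw
            # Wrap the increment into [-pi, pi] so that crossing the +/-pi seam
            # is not mistaken for a full turn.
            self.total += math.atan2(math.sin(diff), math.cos(diff))
        self.last_yaw = yaw
        # abs() makes the test work for both clockwise and counterclockwise loops.
        return abs(self.total) >= 2.0 * math.pi
```

Accumulating wrapped increments, rather than comparing the current yaw with the starting yaw, avoids stopping prematurely right after takeoff, when the two headings are still almost equal.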

4. Experimental Results

The purpose of our experiments and tests was to assess the performance obtained in terms of the following aspects: (a) the accuracy of the flown trajectory and of the set of acquired viewpoints, in terms of position, orientation and spacing regularity; (b) the overall duration of the acquisition process; and (c) the final accuracy of the 3D model reconstructed from the acquired images.

4.1. Experimental Environment

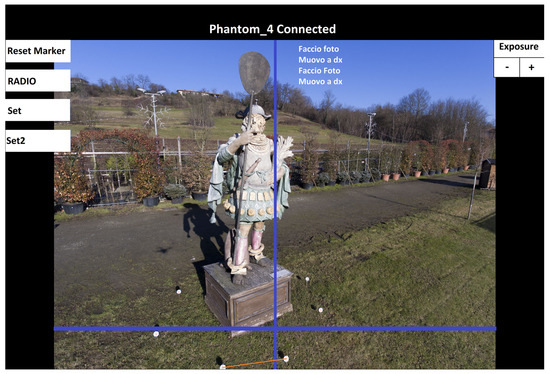

The system was developed around a DJI Phantom 4 quadcopter; the application controls the drone through the DJI Mobile SDK [14]. The on-board camera of this quadcopter is equipped with a 1/2.3″ CMOS sensor (… Mpixel) and a wide-angle lens (20 mm, 35 mm format equivalent, f/…). The camera provides still color images with a resolution of … pixels. The Android device used for the experiments is a Samsung Tab S4 tablet, equipped with eight ARM Cortex-A73 cores (running at 1.9 GHz), 4 GB of RAM and 64 GB of flash memory. The tablet running our application is connected to the USB port of the drone's remote controller, in the same way as the device that usually serves as visual interface. Through this connection, the application receives the video stream and the high-resolution images from the camera, and transmits the flight commands to the drone. During the flight, the progress of the acquisition process can be followed on the application dashboard (see Figure 7).

4.2. Flight and Acquisition Process

The acquisitions of the subjects presented as results (two sculptures, see Figure 8 and Figure 9) were carried out in sunny daylight, in order to assess the reliability of the marker detection algorithm in the presence of shadows. In the two cases, markers of two different colors were used, to assess the flexibility of the detection algorithm. For the considered subjects, which are up to 4 m tall and 2 to 3 m wide, we used eight markers, placed approximately on a circle (for the subject in Figure 8) and on an ellipse (for the subject in Figure 9).

Figure 8.

(Left) One of the acquired images of a sculpture by D. Ferretti (Expo 2015, Milan, Italy). Due to its complex shape, this was a particularly difficult subject to reconstruct with good accuracy. (Right) A view of the reconstructed 3D model of the sculpture.

Figure 9.

(Left) One of the acquired images of another sculpture (from the collection Warriors in the Wind, by S. Volpe, Milan, Italy). (Right) A view of the reconstructed 3D model.

Thanks to the efficiency of the algorithm, the system is able to acquire and analyze one frame every 3 s, on average. For both subjects, the system collected over 200 images in total, in approximately 20 min (fast enough to complete the process within the drone's battery life). The accuracy in following the trajectory can be estimated from how often a trajectory correction is needed. In all the experiments performed, the acquisition process proved highly robust and accurate: a trajectory correction after the execution of the computed motion was seldom necessary.
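As a back-of-the-envelope consistency check (derived from the totals above, not a separate measurement), the average interval between two collected images is

$$
\bar{t}_{\text{shot}} \approx \frac{20\ \text{min}}{200\ \text{images}} = \frac{1200\ \text{s}}{200} = 6\ \text{s}.
$$

Section 5 notes that marker localization takes roughly half of the time between two shots; if that share could be removed entirely, the same acquisition would ideally take about

$$
T_{\min} \approx 200 \times \left(6\ \text{s} - \tfrac{1}{2} \cdot 6\ \text{s}\right) = 600\ \text{s} = 10\ \text{min},
$$

i.e., at most half of the current duration.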

To evaluate the ability of the system to locate the markers, we examined the video of each whole acquisition process, available through the application dashboard. In all experiments, the detection algorithm detected all the visible markers (no false negatives occurred); in a few situations a false positive occurred (mainly in backlit images), but in all of them the correctly detected markers kept the trajectory estimate correct, with no significant consequence on flight accuracy.

4.3. Final 3D Subject Reconstruction

Although this work focuses on the automatic acquisition process, a qualitative evaluation of the 3D reconstructions obtained from the acquired images is meaningful as a final validation of the proposed procedure. Indeed, the quality of a 3D reconstruction is very sensitive to the quality of the acquired images and to the correct choice of their viewpoints [11]; for this reason, the result we are interested in here is the success and overall quality of the final 3D reconstruction, rather than a quantitative measurement of the dimensional accuracy of the resulting 3D shape, which essentially depends on the characteristics of the camera and of the 3D reconstruction software used. We adopted professional Structure-from-Motion software (Agisoft PhotoScan [18]) to compute these 3D reconstructions.

We selected subjects with complex shapes and irregular spatial extent, to test the procedure and the reconstruction on challenging cases. Figure 8 shows the first example. The sculpture belongs to the collection Popolo del cibo, by D. Ferretti, which was exhibited at the international “Expo 2015” in Milan. The particular shape of this subject (… height, compared to … width at the base), together with the presence of fine details on its surface, called for a high density of viewpoints. The drone flew a circular trajectory of approximately 4 m radius around the sculpture, acquiring over 200 views, with an average distance between consecutive viewpoints of approximately 10–15 cm.
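The reported viewpoint spacing agrees with a simple estimate: dividing the length of a circle of radius $r \approx 4$ m by $N \approx 200$ views gives

$$
\Delta s \approx \frac{2\pi r}{N} = \frac{2\pi \times 4\ \text{m}}{200} \approx 0.126\ \text{m} \approx 12.6\ \text{cm},
$$

which lies within the 10–15 cm range; since slightly more than 200 views were acquired, the actual average spacing is slightly smaller.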

The images were then processed with the Structure-from-Motion software [18]. The quality of the resulting 3D model, shown in Figure 8, proves the effectiveness of the proposed technique: the 3D metric reconstruction from the acquired views was successful. The planarity of the base faces and their mutual orthogonality were correctly reconstructed, as were the fine details of the complex shape of this subject.

An equally demanding subject is the sculpture shown in Figure 9. In this case we deployed orange markers, to test the reliability of the procedure with respect to the marker color. Due to the shape of this subject, we placed eight markers on the ground, approximately forming an ellipse around the sculpture. The drone therefore followed an elliptical trajectory, keeping the starting distance of approximately 3 m from the sculpture during the whole flight and acquiring approximately 200 views. As the 3D model in Figure 9 shows, the reconstruction was equally successful, despite the particularly complex 3D shape of this subject.

5. Conclusions

This paper describes the design and implementation of a simple stand-alone system (consisting of a drone and a mobile device, either a tablet or a smartphone) able to acquire large subjects by means of a camera aboard a UAV, where both the flight and the image shooting are automatically controlled by the proposed application running on the device, which is connected to the drone through the radio link provided by the remote controller. The experimental results have shown that the system is able to follow the planned trajectory accurately, with significant robustness against outdoor illumination and flight perturbations, and to yield an image sequence enabling good-quality 3D reconstructions of the acquired objects. The reconstruction results for the complex-shaped objects considered are sound evidence of the reliability of this technique. Compared to the state of the art [8,9,10], the distinctive feature of the proposed technique is its ability to produce a valid image dataset for 3D reconstruction with an extremely simple hardware/software system and maximally simple user operation.

Among the possible directions for further improving the proposed technique, we are considering in particular: (a) further optimization of the marker localization algorithm, aiming to reduce the total processing time; since the current solution takes approximately half of the time between two subsequent shots, reducing this time could ideally shorten the entire acquisition process by up to half; (b) the extension of this procedure to a “multi-level” version, able to follow multiple trajectories at different heights, to enable the image acquisition of particularly tall objects (a tower, for instance).

Author Contributions

Conceptualization, F.P. and S.M.; methodology, F.P. and S.M.; software, F.P. and S.M.; validation, F.P. and S.M.; investigation, F.P. and S.M.; resources, F.P.; writing, F.P. and S.M.

Funding

This research received no external funding.

Conflicts of Interest

The authors declare no conflict of interest.

References

- Muzzupappa, M.; Gallo, A.; Spadafora, F.; Manfredi, F.; Bruno, F.; Lamarca, A. 3D reconstruction of an outdoor archaeological site through a multi-view stereo technique. In Proceedings of the 2013 Digital Heritage International Congress, Marseille, France, 28 October–1 November 2013; Volume 1, pp. 169–176.

- Gongora, A.; Gonzalez-Jimenez, J. Enhancement of a commercial multicopter for research in autonomous navigation. In Proceedings of the 2015 23rd Mediterranean Conference on Control and Automation (MED), Torremolinos, Spain, 16–19 June 2015; pp. 1204–1209.

- Esrafilian, O.; Taghirad, H.D. Autonomous flight and obstacle avoidance of a quadrotor by monocular SLAM. In Proceedings of the 2016 4th International Conference on Robotics and Mechatronics (ICROM), Tehran, Iran, 26–28 October 2016; pp. 240–245.

- Daftry, S.; Hoppe, C.; Bischof, H. Building with drones: Accurate 3D facade reconstruction using MAVs. In Proceedings of the 2015 IEEE International Conference on Robotics and Automation (ICRA), Seattle, WA, USA, 26–30 May 2015; pp. 3487–3494.

- 3D Robotics—Site Scan, 2017. Available online: http://3dr.com/ (accessed on 8 May 2012).

- Meyer, D.; Fraijo, E.; Lo, E.K.C.; Rissolo, D.; Kuester, F. Optimizing UAV Systems for Rapid Survey and Reconstruction of Large Scale Cultural Heritage Sites. In Proceedings of the International Congress on Digital Heritage—Theme 1—Digitization And Acquisition, Granada, Spain, 28 September–2 October 2015; Guidi, G., Scopigno, R., Remondino, F., Eds.; IEEE: Piscataway, NJ, USA, 2015.

- Gee, T.; James, J.; Mark, W.V.D.; Delmas, P.; Gimel’farb, G. Lidar guided stereo simultaneous localization and mapping (SLAM) for UAV outdoor 3-D scene reconstruction. In Proceedings of the 2016 International Conference on Image and Vision Computing New Zealand (IVCNZ), Palmerston North, New Zealand, 21–22 November 2016; pp. 1–6.

- Mur-Artal, R.; Montiel, J.M.M.; Tardós, J.D. ORB-SLAM: A Versatile and Accurate Monocular SLAM System. IEEE Trans. Robot. 2015, 31, 1147–1163.

- Forster, C.; Pizzoli, M.; Scaramuzza, D. Appearance-based Active, Monocular, Dense Reconstruction for Micro Aerial Vehicles. Presented at Robotics: Science and Systems X, University of California, Berkeley, CA, USA, 12–16 July 2014.

- Roberts, M.; Dey, D.; Truong, A.; Sinha, S.; Shah, S.; Kapoor, A.; Hanrahan, P.; Joshi, N. Submodular Trajectory Optimization for Aerial 3D Scanning. In Proceedings of the International Conference on Computer Vision (ICCV), Venice, Italy, 22–29 October 2017; pp. 5334–5343.

- Hartley, R.I.; Zisserman, A. Multiple View Geometry in Computer Vision, 2nd ed.; Cambridge University Press: Cambridge, UK, 2004.

- Wang, X.; Shen, X.; Chang, X.; Chai, Y. Route Identification and Direction Control of Smart Car Based on CMOS Image Sensor. In Proceedings of the 2008 ISECS International Colloquium on Computing, Communication, Control, and Management, Guangzhou, China, 3–4 August 2008; Volume 2, pp. 176–179.

- Espiau, B.; Chaumette, F.; Rives, P. A new approach to visual servoing in robotics. IEEE Trans. Robot. Autom. 1992, 8, 313–326.

- DJI Developer—Mobile SDK, 2018. Available online: https://developer.dji.com/mobile-sdk/ (accessed on 8 May 2012).

- Pratt, W.K. Digital Image Processing, 4th ed.; John Wiley & Sons, Inc.: New York, NY, USA, 2006.

- Frosio, I.; Borghese, N. Real-time accurate circle fitting with occlusions. Pattern Recognit. 2008, 41, 1041–1055.

- Finlayson, G.D.; Hordley, S.D.; Drew, M.S. On the removal of shadows from images. IEEE Trans. Pattern Anal. Mach. Intell. 2006, 28, 59–68.

- Agisoft PhotoScan Professional (Version 1.2.6). 2018. Available online: http://www.agisoft.com/ (accessed on 8 May 2012).

© 2019 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).