Author Contributions

Conceptualization, Z.L. and S.A.; methodology, Z.L.; software, Z.L.; validation, Z.L.; formal analysis, Z.L.; investigation, Z.L. and S.A.; resources, S.A.; data curation, Z.L.; writing—original draft preparation, Z.L.; writing—review and editing, Z.L. and S.A.; visualization, Z.L.; supervision, S.A.; project administration, S.A.; funding acquisition, S.A.

Figure 1.

Illustration of the radiation detection task. The gray area denotes the searching area boundary and the walls inside the searching area. The green dashed line with an arrow denotes a searching path of the detector.

Figure 2.

Schematic illustration of the convolutional neural network (CNN). The input of this CNN consists of three images. The first image shows the mean radiation measurement at each visited position. The second image shows the number of measurements taken at each position. The third image shows the detector's current position in the searching area. The output layer is a fully connected layer with 4 nodes; each node represents the expected cumulative future reward of its corresponding action, given the input as the current state. For the convolution layers and the pooling layer, the 'size' parameter specifies the size of the convolution or pooling kernel; the 'filters' parameter specifies the number of convolution kernels (i.e., output channels); the 'strides' parameter specifies the stride of the sliding window along each dimension of the input; and the 'activation' parameter specifies the activation function applied to the convolution output. For the pooling layer, we applied max pooling. For the fully connected layers, the 'size' parameter specifies the number of nodes in the layer, and the 'activation' parameter specifies the activation function applied to the output of the layer. The 'Relu' activation function is defined as $\mathrm{ReLU}(x) = \max(0, x)$, and the 'Sigmoid' activation function is defined as $\sigma(x) = \frac{1}{1 + e^{-x}}$. These definitions and names are consistent with those used by the 'tf.layers.conv2d' function in TensorFlow [26].
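As a minimal sketch of the two activation functions and of how the four output nodes can be read, the snippet below implements ReLU and the sigmoid in plain Python and picks the action with the largest predicted cumulative future reward. The action labels (`up`, `down`, `left`, `right`) and the purely greedy selection rule are assumptions for illustration; the caption only states that the output layer has four nodes, one per action.

```python
import math
from typing import Sequence

# Hypothetical action labels; the caption only says there are 4 output nodes.
ACTIONS = ("up", "down", "left", "right")


def relu(x: float) -> float:
    """Rectified linear unit: max(0, x)."""
    return max(0.0, x)


def sigmoid(x: float) -> float:
    """Logistic sigmoid 1 / (1 + e^(-x)), written to avoid overflow for large |x|."""
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    z = math.exp(x)
    return z / (1.0 + z)


def greedy_action(q_values: Sequence[float]) -> str:
    """Return the action whose output node predicts the largest expected return."""
    if len(q_values) != len(ACTIONS):
        raise ValueError("expected one Q-value per action")
    best = max(range(len(q_values)), key=lambda i: q_values[i])
    return ACTIONS[best]


print(relu(-2.0), relu(3.5))                 # 0.0 3.5
print(sigmoid(0.0))                          # 0.5
print(greedy_action([0.1, 0.7, -0.3, 0.4]))  # down
```

During training, a Q-learning agent would typically mix this greedy choice with some exploration; that detail is not described in the caption and is left out here.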

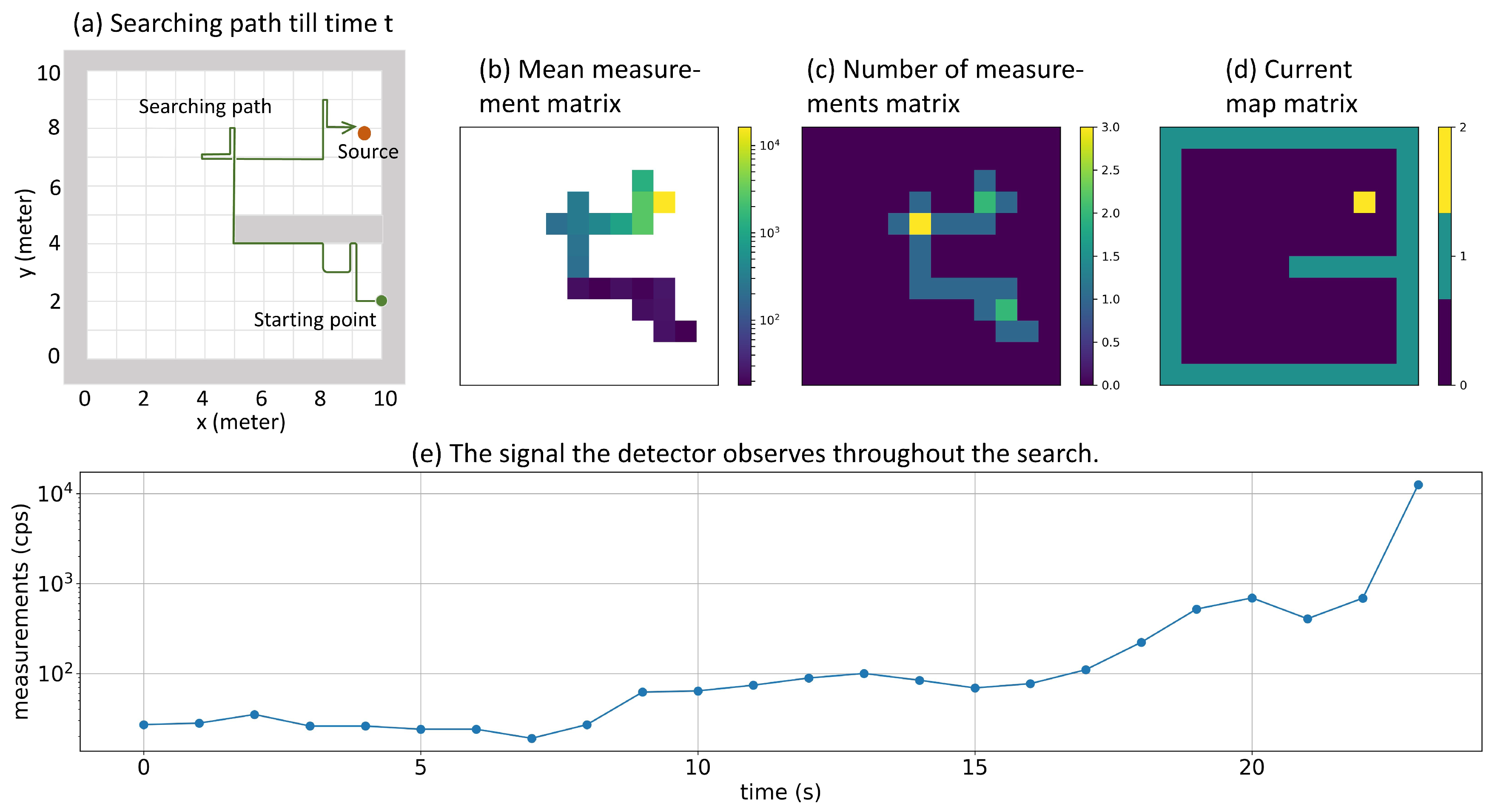

Figure 3.

Representation of the searching history using the Mean measurement matrix, the Number of measurements matrix, and the Current map matrix. Plot (a) shows an example of a searching history. In plot (b), color represents the intensity of the mean radiation measurement at each position. In plot (c), color represents the number of measurements taken so far at each position. In plot (d), yellow represents the detector's current position, green represents boundaries and walls, and blue represents accessible positions. Plot (e) shows the signal observed by the detector throughout the search.
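The three input matrices can be assembled from a logged search history roughly as follows. This is a sketch under assumptions: the grid is indexed by (row, col) cells, the history is a list of (cell, measurement) pairs, and the numeric codes for accessible cells, walls, and the detector in the current map matrix are hypothetical stand-ins for the blue, green, and yellow colors in plot (d).

```python
from typing import List, Sequence, Tuple

Position = Tuple[int, int]  # (row, col) grid cell
Matrix = List[List[float]]

# Hypothetical numeric encoding for the current map matrix.
ACCESSIBLE, WALL, DETECTOR = 0.0, 1.0, 2.0


def build_state(
    history: Sequence[Tuple[Position, float]],
    walls: Sequence[Position],
    current: Position,
    shape: Tuple[int, int],
) -> Tuple[Matrix, Matrix, Matrix]:
    """Return (mean measurement, number of measurements, current map) matrices."""
    rows, cols = shape
    sums = [[0.0] * cols for _ in range(rows)]
    counts = [[0.0] * cols for _ in range(rows)]
    for (r, c), value in history:
        sums[r][c] += value
        counts[r][c] += 1
    # Mean of all measurements taken at each cell; unvisited cells stay 0.
    means = [
        [sums[r][c] / counts[r][c] if counts[r][c] else 0.0 for c in range(cols)]
        for r in range(rows)
    ]
    # Walls and boundaries first, then mark the detector's current cell.
    current_map = [[ACCESSIBLE] * cols for _ in range(rows)]
    for r, c in walls:
        current_map[r][c] = WALL
    current_map[current[0]][current[1]] = DETECTOR
    return means, counts, current_map


history = [((0, 0), 3.0), ((0, 0), 5.0), ((0, 1), 2.0)]
means, counts, current_map = build_state(history, walls=[(1, 1)], current=(0, 1), shape=(2, 2))
print(means)        # [[4.0, 2.0], [0.0, 0.0]]
print(counts)       # [[2.0, 1.0], [0.0, 0.0]]
print(current_map)  # [[0.0, 2.0], [0.0, 1.0]]
```

Stacking the three matrices as channels gives an input of the kind shown for the CNN in Figure 2.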

Figure 4.

Illustration of the searching areas in the first experiment. (a) The searching area without internal walls. (b) The searching area with one internal wall. In both searching areas, the detector always starts searching from the lower-left corner.

Figure 5.

Illustration of the simulation areas in the detector trapping test. Green dots represent the initial position of the detector, and red dots represent the position of the radiation source. Gray areas represent walls, which block the source's radiation entirely. Plot (a) shows the first kind of simulation area, in which the wall is attached to the left edge of the simulation area and has different lengths (0 m, 2 m, 4 m, 6 m, 8 m). The longer the wall, the harder it is for the detector to move from the lower part to the upper part. All of these wall configurations were seen by the Q-learning algorithm during the training stage. Plot (b) shows the second kind of simulation area; this wall configuration was not seen by the Q-learning algorithm during the training stage.

Figure 6.

Experiment setup for the radiation source estimation test. The simulation area does not have walls inside. The green dot illustrates the starting point of the detector, and the red dots illustrate nine different testing source positions indexed from 1 to 9.

Figure 7.

Training curves of the episode reward. One episode is one round of the searching task, starting from the initialization of the simulation environment and ending when the detector triggers a termination condition (either finding the source or reaching the maximum number of time steps per episode). After every 100 training episodes, 30 randomly initialized episodes were evaluated with the CNN parameters fixed. Plot (a) shows the average testing reward over these 30 episodes, plot (b) the minimum testing reward, and plot (c) the maximum testing reward. In all three plots, dark blue lines represent the testing results averaged over a moving window of 100 episodes, and light blue shading represents the raw testing results.
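The evaluation protocol described above (average, minimum, and maximum reward over each batch of test episodes, then smoothing over a 100-episode window) could be sketched like this. The trailing window, and the use of a shorter window for the first points, are assumptions; the caption does not say how the start of each curve was handled.

```python
from typing import List, Sequence, Tuple


def summarize_evaluation(rewards: Sequence[float]) -> Tuple[float, float, float]:
    """Average, minimum, and maximum reward over one batch of test episodes."""
    return sum(rewards) / len(rewards), min(rewards), max(rewards)


def moving_average(values: Sequence[float], window: int = 100) -> List[float]:
    """Trailing moving average; early points average over the history available so far."""
    out: List[float] = []
    running = 0.0
    for i, v in enumerate(values):
        running += v
        if i >= window:
            running -= values[i - window]  # drop the value that left the window
        out.append(running / min(i + 1, window))
    return out


print(summarize_evaluation([1.0, 2.0, 3.0]))           # (2.0, 1.0, 3.0)
print(moving_average([1.0, 2.0, 3.0, 4.0], window=2))  # [1.0, 1.5, 2.5, 3.5]
```

In this sketch, one call to `summarize_evaluation` per evaluation round (30 episodes in Figure 7) yields one raw point for each of plots (a) to (c), and `moving_average` produces the smoothed dark blue line.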

Figure 8.

Average searching time under different source intensities. The shaded area is the 95% confidence interval estimated by bootstrapping. 'GS' stands for the gradient search method, and 'QL' stands for the Q-learning method. The Q-learning method required less searching time than the gradient search method in all simulation conditions.
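For readers unfamiliar with bootstrapped confidence intervals, the following sketch computes a percentile bootstrap interval for a mean searching time. The percentile method, the number of resamples, and the fixed seed are assumptions; the caption states only that the 95% interval was estimated by bootstrapping.

```python
import random
from typing import Sequence, Tuple


def bootstrap_ci(
    samples: Sequence[float],
    n_resamples: int = 10_000,
    confidence: float = 0.95,
    seed: int = 0,
) -> Tuple[float, float]:
    """Percentile bootstrap confidence interval for the mean of `samples`."""
    rng = random.Random(seed)
    data = list(samples)
    n = len(data)
    # Resample with replacement and record the mean of each resample.
    boot_means = sorted(sum(rng.choices(data, k=n)) / n for _ in range(n_resamples))
    alpha = 1.0 - confidence
    lo_idx = int((alpha / 2) * n_resamples)
    hi_idx = min(int((1 - alpha / 2) * n_resamples), n_resamples - 1)
    return boot_means[lo_idx], boot_means[hi_idx]


times = [10.0, 12.0, 11.0, 13.0, 9.0]  # illustrative values, not data from the paper
low, high = bootstrap_ci(times, n_resamples=2000)
print(low, high)
```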

Figure 9.

Failure rates of the Q-learning algorithm (QL) and the gradient search algorithm (GS) in the radiation source searching test, for simulation areas with and without walls. Without walls, the two algorithms had the same failure rate; with walls, the Q-learning algorithm achieved a much lower failure rate than the gradient search method.

Figure 10.

Detector trapping test 1. In this trapping test, simulation areas with different wall lengths were tested. The relative trapped time was defined as the percentage of time the detector stayed in the lower 40% of the simulation area during the whole searching process. The orange curve represents the mean relative trapped time of the gradient search method, and the blue curve represents that of the Q-learning method. Example searching paths of both algorithms for different wall lengths are also drawn in the figure. In all example searching paths, the detector started at the lower-left corner, the radiation source was at the upper-left corner, and the color of the path represents the mean radiation intensity (blue is low, yellow is high). The Q-learning algorithm was not trapped by the walls, whereas the gradient search method was.
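The relative trapped time can be computed from a recorded path as sketched below, assuming one position per time step (so each step counts equally), `y` measured upward from the bottom edge of the simulation area, and a strict comparison against the threshold height. The `lower_fraction` parameter is 0.4 for this test and would be 0.6 for trapping test 2.

```python
from typing import Sequence, Tuple


def relative_trapped_time(
    path: Sequence[Tuple[float, float]],
    area_height: float,
    lower_fraction: float = 0.4,
) -> float:
    """Percentage of time steps the detector spends in the lower part of the area.

    `path` holds one (x, y) position per time step, with y measured upward
    from the bottom edge of the simulation area.
    """
    if not path:
        raise ValueError("path must contain at least one position")
    threshold = lower_fraction * area_height
    trapped = sum(1 for _, y in path if y < threshold)
    return 100.0 * trapped / len(path)


path = [(0.0, 1.0), (0.0, 2.0), (0.0, 5.0), (0.0, 9.0)]
print(relative_trapped_time(path, area_height=10.0))                      # 50.0
print(relative_trapped_time(path, area_height=10.0, lower_fraction=0.6))  # 75.0
```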

Figure 11.

Detector trapping test 2. In this trapping test, a simulation area with two overlapping long walls was tested. The relative trapped time was defined as the percentage of time the detector stayed in the lower 60% of the simulation area during the whole searching process. In all example searching paths, the detector started at the lower-left corner, the radiation source was at the upper-left corner, and the color of the path represents the mean radiation intensity (blue is low, yellow is high). Plot (a) shows an example search path from the gradient search method that failed to find the source. Plot (b) shows an example search path from the Q-learning model taken at the 842,500th episode; this search also failed to find the source. Plot (c) shows an example search path from the Q-learning model after it was trained for an additional 6000 episodes on the new simulation area. After this additional training, the Q-learning model was able to search efficiently for sources in the new geometry.

Table 1.

Radiation source estimation test.

| Approach * | Mean Estimation Error | Mean Searching Time (s) ** |

|---|

| | | | | | | | | | | |

|---|

| QL | 0.057 | 0.064 | 6.3 | 7.85 | 16.70 | 22.35 | 6.90 | 15.70 | 17.75 | 15.65 | 16.75 | 22.70 |

| GS | 0.090 | 0.101 | 6.5 | 11.2 | 17.2 | 31.3 | 17.85 | 21.15 | 52.1 | 38.8 | 37.0 | 76.05 |

| U1 | 0.082 | 0.134 | 5.0 | 28 |

| U2 | 0.024 | 0.026 | 3.1 | 37 |

| U3 | 0.013 | 0.011 | 1.7 | 54 |