1. Introduction

Recently, the automotive market has been in the spotlight in various fields and has been growing rapidly. According to [1], the self-driving car market is expected to be worth $20 billion by 2024 and to grow at a compound annual growth rate of 25.7% from 2016 to 2024. As a result, established automakers such as Ford, GM, and Audi, as well as companies such as Google and Samsung, which are not currently automobile brands, have shown interest in investing in the development of autonomous driving. The complete development of autonomous vehicles requires several sensors, as well as their proper coordination, and the radar sensor is a major sensor for automobiles [2].

In fact, radar has long been used for self-driving vehicles, and its importance has been further emphasized recently. LiDAR, ultrasonic sensors, and video cameras are also considered as competing and complementary technologies in vehicular surround sensing and surveillance. Among the automotive sensors, radar offers robustness and reliability, especially under adverse weather conditions [3]. Through radar, self-driving cars can estimate the distance, relative speed, and angle of a detected target and, furthermore, classify the target by using features such as its radar cross-section (RCS) [4,5], phase information [6], and micro-Doppler signature [7,8,9]. In addition, the radar mounted on autonomous vehicles can recognize the driving environment [10,11].

With the development of autonomous driving using radar and deep learning, a few studies have been conducted on radar with artificial neural networks [6,12]. In [13], a fully connected neural network (FCN) was used to replace traditional radar signal processing: signals that had undergone windowing and a 2D fast Fourier transform (FFT) were used as training data for the FCN with the assistance of a camera. This study showed the feasibility of object detection and 3D target estimation with an FCN. In [14,15], the authors presented target classification using radar systems and a convolutional neural network (CNN). After extracting features with the CNN, they used these features to train a support vector machine (SVM) classifier [16]. However, to focus on processing time as well as classification accuracy, we choose the you only look once (YOLO) model among various CNN models. YOLO is a novel model that places more emphasis on processing time than other models do [17,18,19]. It trains a single network to predict bounding boxes and class probabilities directly from full images in one evaluation. As the entire detection pipeline is a single network, it takes less time to obtain the output once an input image is fed in [20].

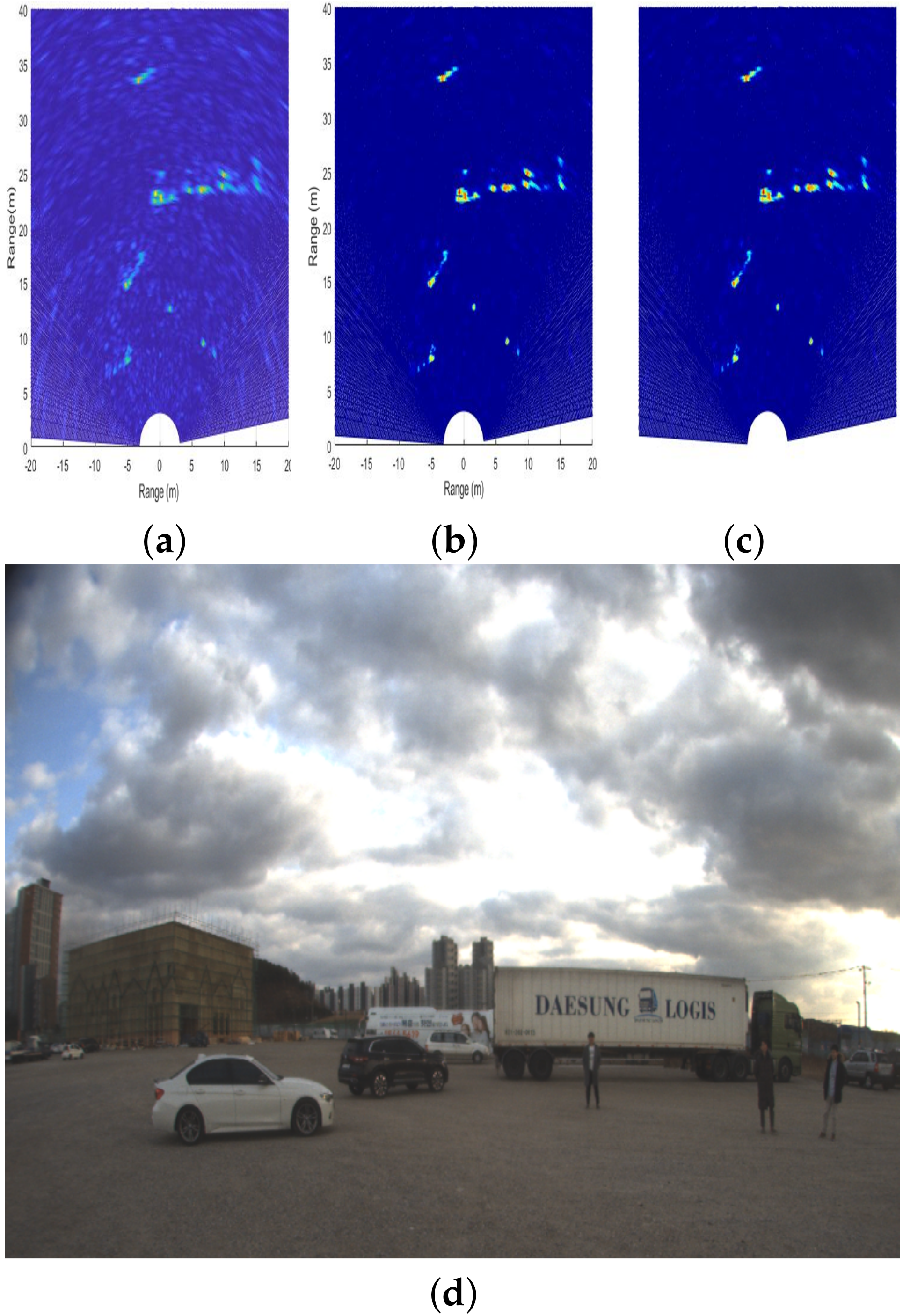

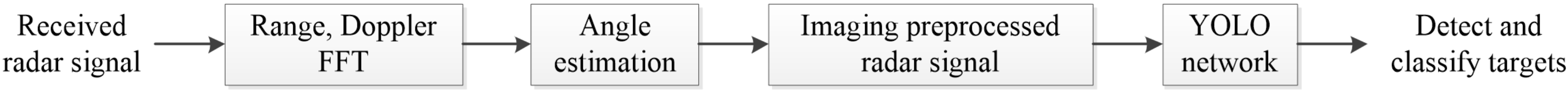

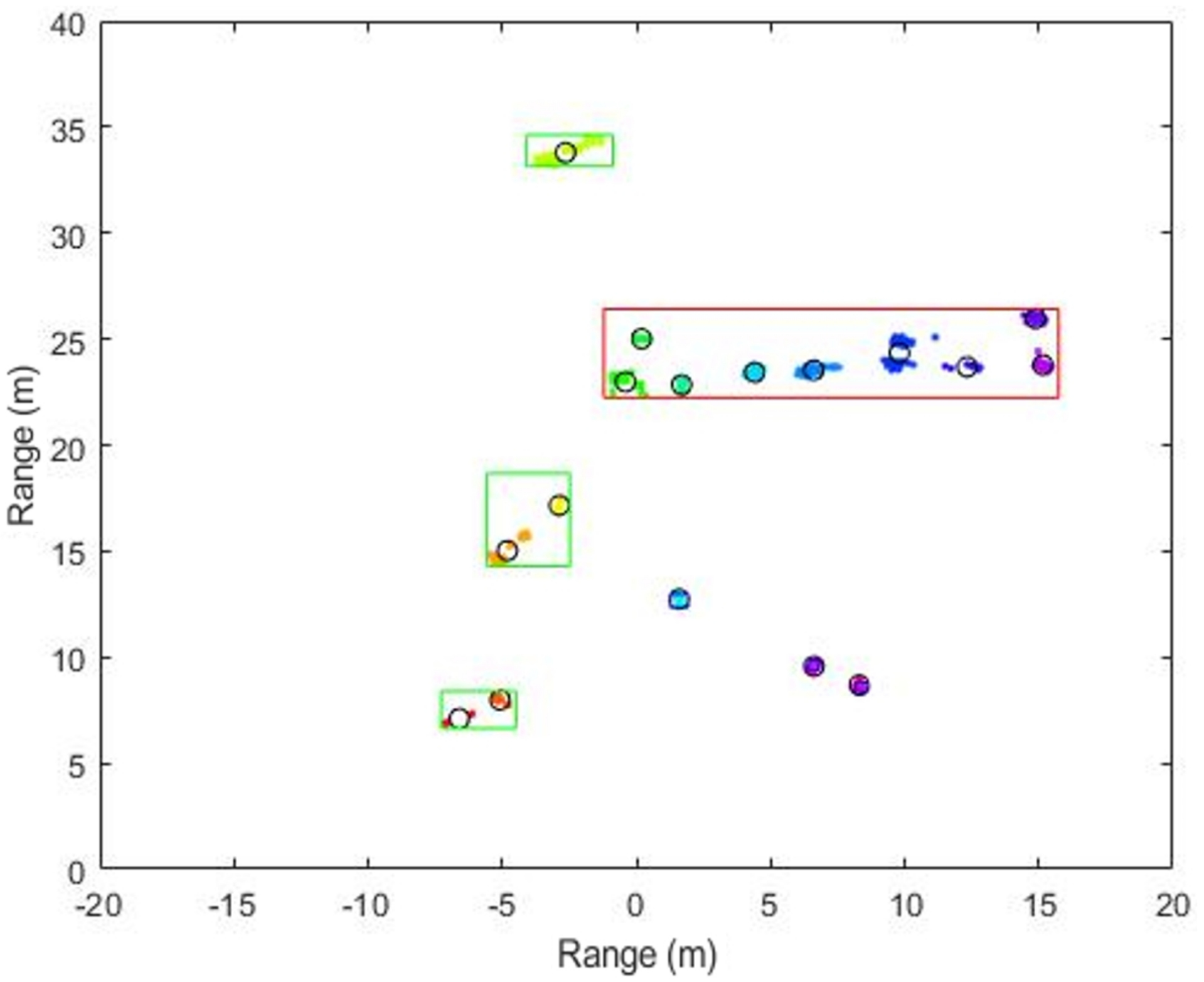

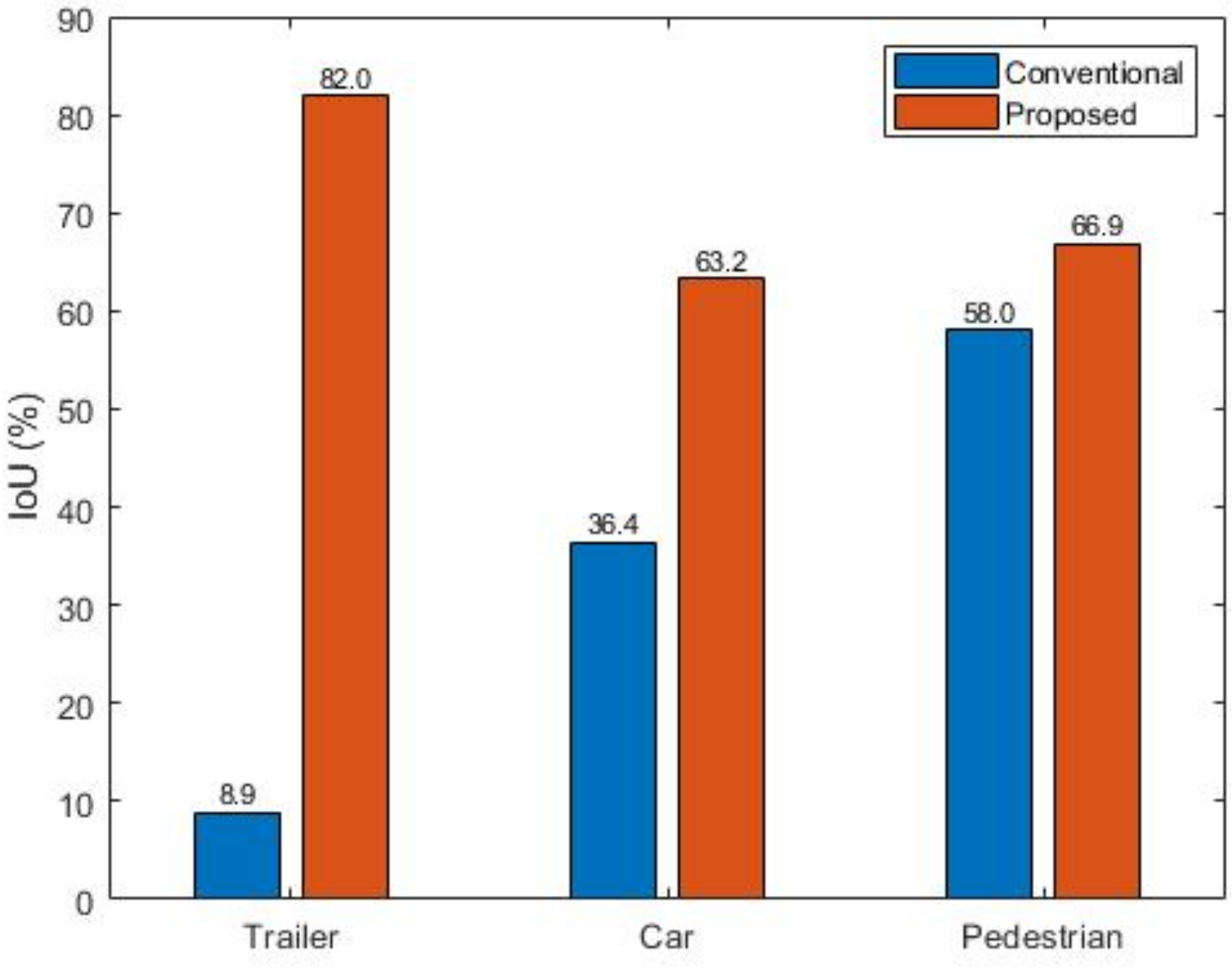

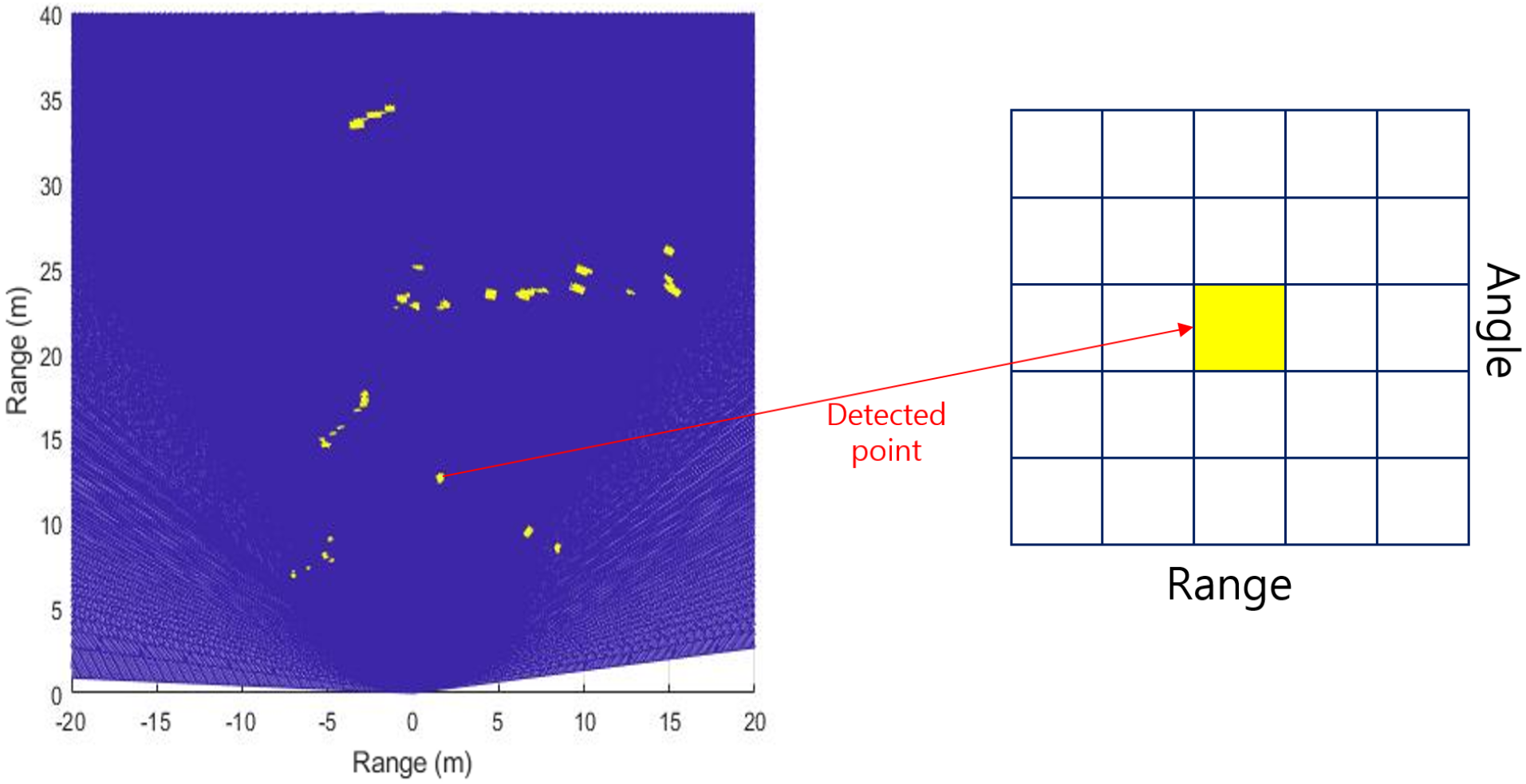

This paper proposes a simultaneous target detection and classification model that combines an automotive radar system with the YOLO network. First, the target detection results in the range-angle (RA) domain are obtained through radar signal processing, and then YOLO is trained using the transformed RA domain data. After training is completed, we verify the performance of the trained model on the validation data. Moreover, we compare the detection and classification performance of our proposed method with that of the conventional methods used in radar signal processing. Some previous studies have combined a radar system with the YOLO network. For example, the authors of [21] showed the performance of their proposed YOLO-range-Doppler (RD) structure, which comprises a stem block, dense block, and YOLO, by using mini-RD data. In addition, the classification performance of YOLO trained with radar data measured in the RD domain was reported in [22]. Both of these methods [21,22] dealt with radar data in the RD domain. However, RA data have the advantage of being more intuitive than RD data, since they express the target location more directly. In other words, RA data can be used to obtain the target’s position in a Cartesian coordinate system. Thus, we propose applying the YOLO network to radar data in the RA domain. In addition, whereas conventional detection and classification are conducted in two successive stages [5,6], our proposed method can detect the size of the target while classifying its type. Furthermore, the proposed method has the advantage of detecting and classifying larger objects, compared with the existing method, and can operate in real time.
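To illustrate why RA data map naturally onto target positions, the following minimal sketch converts a range-angle pair into Cartesian coordinates. The function name `ra_to_cartesian`, the axis convention (azimuth measured from the radar boresight, y along boresight, x to the right), and the sample detections are assumptions made for illustration only; they are not taken from the radar system used in this paper.

```python
import math


def ra_to_cartesian(range_m, azimuth_deg):
    """Convert a (range, azimuth) detection to (x, y) in meters.

    Assumed convention (hypothetical, not specified in the text):
    azimuth is measured from the radar boresight, y points along
    the boresight, and x points to the right of the radar.
    """
    theta = math.radians(azimuth_deg)
    x = range_m * math.sin(theta)  # lateral offset
    y = range_m * math.cos(theta)  # distance along boresight
    return x, y


# Hypothetical detections given as (range in m, azimuth in degrees).
detections = [(10.0, 0.0), (20.0, 30.0), (15.0, -45.0)]
for r, az in detections:
    x, y = ra_to_cartesian(r, az)
    print(f"range={r:5.1f} m, azimuth={az:6.1f} deg -> x={x:6.2f} m, y={y:6.2f} m")
```

An RD map, by contrast, provides range and radial velocity but no angle, so a target's lateral position cannot be recovered from it in this way.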

The remainder of this paper is organized as follows. First, in Section 2, fundamental radar signal processing for estimating the target information is introduced. In Section 3, we present our proposed simultaneous target detection and classification method using YOLO. Then, we evaluate the performance of our proposed method in Section 4. Here, we also introduce our radar system and measurement environments. Finally, we conclude this paper in Section 5.

5. Conclusions

In this paper, we propose a simultaneous detection and classification method that applies a deep learning model, specifically a YOLO network, to preprocessed automotive radar signals. The performance of the proposed method was verified through actual measurements with a four-chip cascaded automotive radar. Compared with conventional detection and classification methods such as DBSCAN and SVM, the proposed method showed improved performance. Unlike the conventional methods, in which detection and classification are conducted successively, our proposed method detects and classifies the targets simultaneously. In particular, our proposed method performs better for vehicles with a long body. While the conventional methods recognize one long object as multiple objects, our proposed method correctly recognizes it as one object. This study demonstrates the possibility of applying deep learning algorithms to high-resolution radar sensor data, particularly in the RA domain. To increase the reliability of the performance of our proposed method, it will be necessary to conduct experiments in various environments.