2.1. Multi-Scale Top-Hat

Against a sky background, an infrared target often lies on a cloud layer or is hidden in the background. To retain the target pixel information and reduce background interference, infrared images need background suppression to remove the large amount of background noise. The light-dark Top-hat transform suppresses the background well and retains the target, but targets differ in shape and size. To preserve targets of different sizes, this paper uses a multi-scale Top-hat transform to process infrared images.

The traditional Top-hat transform uses a structuring element template for morphological erosion and dilation to extract the target. The background of an infrared image is complex; the Top-hat transform removes this complex background noise while preserving the target well. Specifically, the original infrared gray-scale image is first eroded and then dilated to obtain the opening image. The opening image is subtracted from the original infrared gray image to extract the bright details of the image, which correspond mainly to the target. Then the original infrared gray-scale image is first dilated and then eroded to obtain the closing image. The difference between the closing image and the original infrared gray image extracts the dark details of the image, which correspond mainly to the background. These are calculated as follows:

$$I_W = f - (f \ominus b) \oplus b, \qquad I_B = (f \oplus b) \ominus b - f$$

where $\ominus$ is the erosion operator, $\oplus$ is the dilation operator, $b$ is the structuring element (template) used for erosion and dilation, $f$ is the original infrared gray image, $I_W$ is the extracted bright area of the image, and $I_B$ is the extracted dark area of the image. Finally, the dark area is subtracted from the bright area to obtain the final result of the Top-hat transform, calculated as follows:

$$I_{TH} = I_W - I_B$$

where $I_{TH}$ is the image after Top-hat processing. To make the Top-hat transform retain both small and large targets while suppressing the background, the multi-scale Top-hat transform applies square templates of different sizes to the image. Square templates of sizes 3, 5 and 7 are selected, and the images processed with the different template sizes are then fused. The fusion is calculated as follows:

where

is the number of templates,

is the serial number corresponding to the template,

is the image after Top-hat transform of the template with size

,

is the image gain control coefficient,

is the fusion coefficient of the multi-size image,

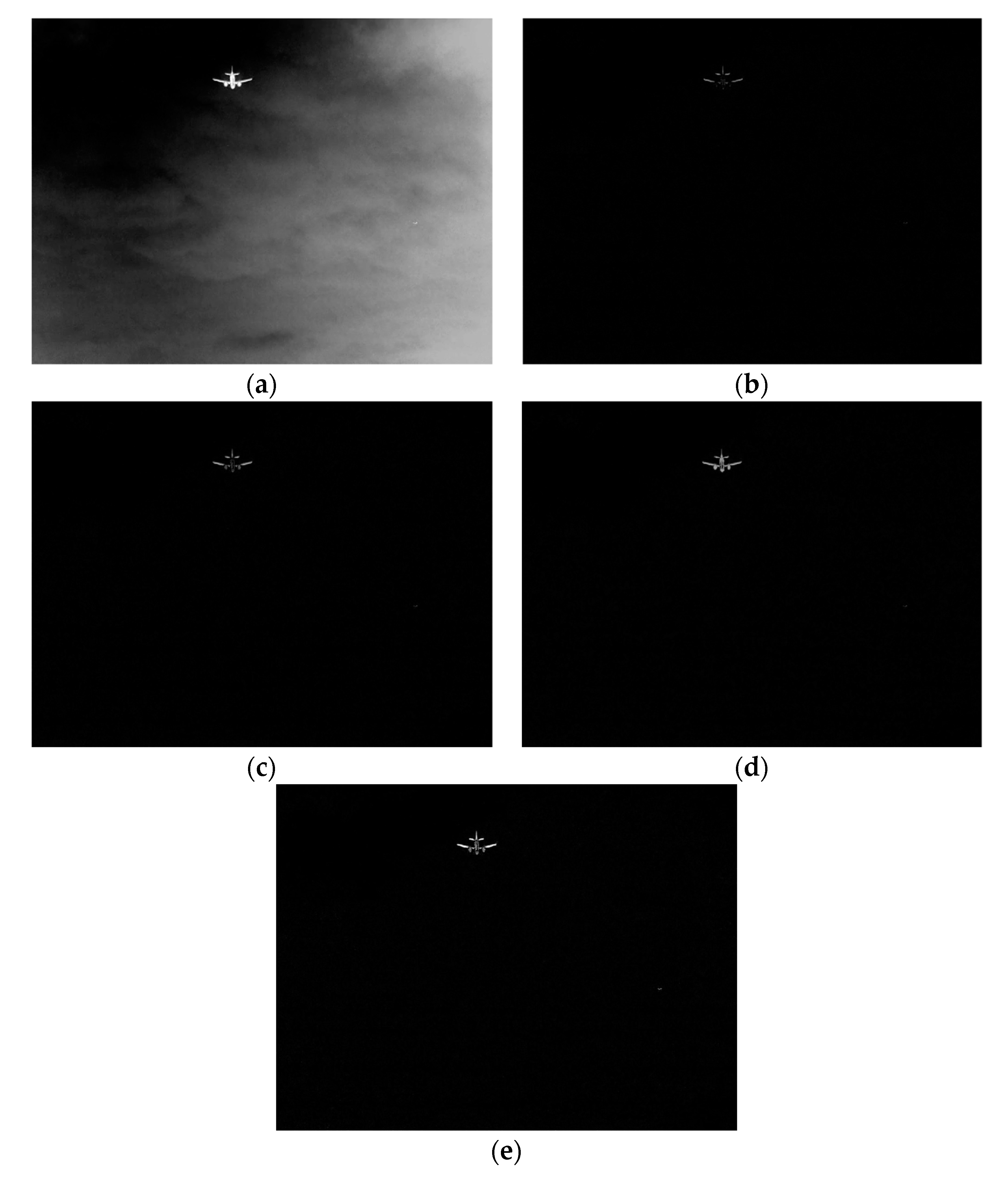

is the result of background suppression of multi-scale Top-hat transform. Through multi-scale Top-hat transformation, various objects of different sizes are retained, and the useless background information in the image is effectively suppressed, which reduces the background interference and is conducive to better detection of the target. The infrared image is suppressed by a multi-scale Top-hat change background as shown in

Figure 1.
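As an illustration of the procedure in this subsection, the following is a minimal NumPy sketch of a light-dark Top-hat transform applied with square templates of sizes 3, 5 and 7. The helper names (`erode`, `dilate`, `light_dark_top_hat`, `multi_scale_top_hat`) are hypothetical, and the fusion step is an assumption: the gain control and fusion coefficients of the paper's formula are not recoverable here, so the sketch simply averages the per-scale results.

```python
import numpy as np
from numpy.lib.stride_tricks import sliding_window_view


def _windows(img, size):
    """Return every size x size neighbourhood of img (edge-padded)."""
    pad = size // 2
    padded = np.pad(img, pad, mode="edge")
    return sliding_window_view(padded, (size, size))


def erode(img, size):
    """Gray-scale erosion with a flat square template."""
    return _windows(img, size).min(axis=(-2, -1))


def dilate(img, size):
    """Gray-scale dilation with a flat square template."""
    return _windows(img, size).max(axis=(-2, -1))


def light_dark_top_hat(img, size):
    """Bright details minus dark details for one square template."""
    opening = dilate(erode(img, size), size)
    closing = erode(dilate(img, size), size)
    bright = img - opening   # bright details (target)
    dark = closing - img     # dark details (background)
    return bright - dark


def multi_scale_top_hat(img, sizes=(3, 5, 7)):
    """Fuse the per-scale results; the plain mean is an assumption."""
    img = np.asarray(img, dtype=np.float64)
    return np.mean([light_dark_top_hat(img, s) for s in sizes], axis=0)


# Synthetic frame: flat background with one bright 2x2 target.
frame = np.full((32, 32), 50.0)
frame[10:12, 20:22] = 200.0
suppressed = multi_scale_top_hat(frame)
```

On this synthetic frame the flat background is removed at every scale and only the small target survives. A weighted fusion can be substituted for `np.mean` once the actual coefficients are known.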

2.2. Multiple Saliency Detection Fusion

Targets in the image differ in size, detected targets may be fragmented or incomplete, and small, weak targets are not highlighted well. To make targets fully prominent, small targets must be made more obvious and the weak parts of a target must be highlighted more completely. Saliency extraction highlights weak targets in the image, which benefits both small-target detection and the overall prominence of large targets.

To detect the saliency of an image comprehensively, three saliency detection methods are fused: saliency detection based on the spectral residual, saliency detection based on the phase spectrum, and saliency detection based on the quaternion Fourier transform. Spectral-residual saliency extraction transforms the image from the spatial domain to the frequency domain, calculates the amplitude spectrum and the spectral residual, and then reconstructs the image with filtering. The abnormal part of the image is salient. The algorithm estimates the background of the image by smoothing the frequency-domain amplitude spectrum with a smoothing filter, and then removes this background to highlight the abnormal part of the image. Specifically, the image obtained from the multi-scale Top-hat transform is transformed to the frequency domain by the Fourier transform, and the amplitude and phase in the frequency domain are calculated as follows:

$$A(u,v) = \left| \mathcal{F}\left[ I_{MT}(x,y) \right] \right|, \qquad P(u,v) = \varphi\left( \mathcal{F}\left[ I_{MT}(x,y) \right] \right)$$

where $\mathcal{F}$ is the Fourier transform, $\varphi$ is the phase extraction function, $I_{MT}(x,y)$ is the multi-scale Top-hat transform image, $A(u,v)$ is the amplitude, and $P(u,v)$ is the phase. Then, for ease of calculation and to expand small differences, the amplitude spectrum of the image is obtained by taking the logarithm of the amplitude $A(u,v)$, and the background spectrum is obtained by smoothing the amplitude spectrum with a local average filter template. These are calculated as follows:

$$L(u,v) = \log A(u,v), \qquad B(u,v) = h_n(u,v) * L(u,v)$$

where $L(u,v)$ is the amplitude spectrum, $B(u,v)$ is the background spectrum, $h_n(u,v)$ is the local average filter template, $*$ denotes convolution, and $n$ is the size of the template; $n = 3$ is appropriate because it smooths the details of the image better. Finally, the spectral residual is obtained by subtracting the background spectrum $B(u,v)$ from the amplitude spectrum $L(u,v)$; it represents the abnormal part of the image, including the target. The calculation is as follows:

$$R(u,v) = L(u,v) - B(u,v)$$

where $R(u,v)$ is the spectral residual. The spectral residual and the phase are then inverse Fourier transformed, and the saliency map $S(x,y)$ is reconstructed by Gauss filtering, calculated as follows:

$$S(x,y) = g(x,y) * \left| \mathcal{F}^{-1}\left[ \exp\left( R(u,v) + i \cdot P(u,v) \right) \right] \right|^2$$

where $i$ is the imaginary unit, $\exp$ is the exponential function, $\mathcal{F}^{-1}$ is the inverse Fourier transform, $g(x,y)$ is the Gauss filtering function, and $S(x,y)$ is the spectral-residual saliency detection map. However, targets differ in size. To capture targets of various sizes, the algorithm is improved with multi-scale saliency detection: several Gaussian filter templates of different sizes are used, and the saliency maps at the different scales are combined by maximum fusion. The scale fusion is expressed as follows:

$$S_{SR}(x,y) = \max_{k} S_k(x,y)$$

where $S_{SR}(x,y)$ is the multi-scale spectral-residual saliency detection map, $S_k(x,y)$ is the saliency map reconstructed with a Gauss filter template of size $k$, and the maximum value of $k$ is 10. The final saliency map adapts to targets of different sizes and shapes and highlights the target well.
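The spectral-residual steps above can be sketched in NumPy as follows. This is an illustrative sketch under stated assumptions, not the authors' implementation: the function names are hypothetical, the scale index is interpreted as a Gaussian template size from 1 to 10, the relation `sigma = size / 4` is an assumption, and a small `eps` is added before the logarithm to avoid `log(0)`.

```python
import numpy as np
from numpy.lib.stride_tricks import sliding_window_view


def box_mean(spec, n=3):
    """n x n local average; the spectrum is periodic, so wrap the borders."""
    pad = n // 2
    padded = np.pad(spec, pad, mode="wrap")
    return sliding_window_view(padded, (n, n)).mean(axis=(-2, -1))


def gaussian_blur(img, size):
    """Separable Gaussian blur with a `size`-tap kernel (sigma = size / 4 is assumed)."""
    x = np.arange(size) - (size - 1) / 2.0
    kernel = np.exp(-x ** 2 / (2.0 * (size / 4.0) ** 2))
    kernel /= kernel.sum()

    def blur_1d(v):
        return np.convolve(v, kernel, mode="same")

    return np.apply_along_axis(blur_1d, 1, np.apply_along_axis(blur_1d, 0, img))


def spectral_residual_saliency(img, sizes=range(1, 11), n=3, eps=1e-8):
    """Multi-scale spectral-residual saliency with maximum fusion over scales."""
    spectrum = np.fft.fft2(np.asarray(img, dtype=np.float64))
    log_amp = np.log(np.abs(spectrum) + eps)      # amplitude spectrum (log)
    phase = np.angle(spectrum)                    # phase
    residual = log_amp - box_mean(log_amp, n)     # spectral residual
    recon = np.abs(np.fft.ifft2(np.exp(residual + 1j * phase))) ** 2
    maps = [gaussian_blur(recon, k) for k in sizes]
    return np.max(maps, axis=0)                   # pixel-wise maximum fusion


# Synthetic input: flat background with a single bright pixel.
frame = np.full((32, 32), 50.0)
frame[10, 20] = 200.0
sr_map = spectral_residual_saliency(frame)
peak = np.unravel_index(np.argmax(sr_map), sr_map.shape)
```

Taking the pixel-wise maximum keeps the sharp response of the small templates for point-like targets while the larger templates fill in extended targets.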

Because the amplitude information sometimes cannot fully represent the saliency of the target, a complementary detection method is needed. Saliency detection based on the phase spectrum obtains the saliency information of the target mainly from the phase information. Specifically, the multi-scale Top-hat transformed gray image is first transformed to the frequency domain by the Fourier transform to obtain its phase spectrum; the phase spectrum is then inverse Fourier transformed, and the saliency map is obtained by Gauss filtering, calculated as follows:

$$S(x,y) = g(x,y) * \left| \mathcal{F}^{-1}\left[ \exp\left( i \cdot P(u,v) \right) \right] \right|^2$$

where $i$ is the imaginary unit, $\exp$ is the exponential function, $\mathcal{F}$ is the Fourier transform, $\mathcal{F}^{-1}$ is the inverse Fourier transform, $g(x,y)$ is the Gauss filter function, $P(u,v)$ is the phase spectrum of the image, taken from the Fourier transform $\mathcal{F}$ of the multi-scale Top-hat image, and $S(x,y)$ is the phase-spectrum saliency detection image. Similarly, multi-scale saliency detection is used to improve the algorithm and adapt it to targets of different sizes. The scale fusion is obtained as follows:

$$S_{PFT}(x,y) = \max_{k} S_k(x,y)$$

where $S_{PFT}(x,y)$ is the multi-scale phase-spectrum saliency detection map, $S_k(x,y)$ is the saliency map reconstructed with a Gauss filter template of size $k$, and the maximum value of $k$ is 10.
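A minimal NumPy sketch of the phase-spectrum variant follows. It differs from the spectral-residual method only in discarding the amplitude entirely. As before, the function names are hypothetical, the scales are interpreted as Gaussian template sizes 1 to 10, and `sigma = size / 4` is an assumption.

```python
import numpy as np


def gaussian_blur(img, size):
    """Separable Gaussian blur with a `size`-tap kernel (sigma = size / 4 is assumed)."""
    x = np.arange(size) - (size - 1) / 2.0
    kernel = np.exp(-x ** 2 / (2.0 * (size / 4.0) ** 2))
    kernel /= kernel.sum()

    def blur_1d(v):
        return np.convolve(v, kernel, mode="same")

    return np.apply_along_axis(blur_1d, 1, np.apply_along_axis(blur_1d, 0, img))


def phase_spectrum_saliency(img, sizes=range(1, 11)):
    """Multi-scale phase-spectrum saliency with maximum fusion over scales."""
    spectrum = np.fft.fft2(np.asarray(img, dtype=np.float64))
    phase_only = np.exp(1j * np.angle(spectrum))   # unit amplitude, original phase
    recon = np.abs(np.fft.ifft2(phase_only)) ** 2
    return np.max([gaussian_blur(recon, k) for k in sizes], axis=0)


# Synthetic input: flat background with a single bright pixel.
frame = np.full((32, 32), 50.0)
frame[10, 20] = 200.0
pft_map = phase_spectrum_saliency(frame)
pft_peak = np.unravel_index(np.argmax(pft_map), pft_map.shape)
```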

After the above two kinds of saliency detection, the edge of the target is usually too blurred. To highlight edge and detail information, the saliency map is improved with quaternion Fourier saliency detection. The quaternion Fourier transform is motivated by the characteristics of the human visual system: each image is represented by four independent features, and the quaternion features and their phase spectrum are then used to detect the saliency of the target, so that target saliency is expressed in detail according to the characteristics of the human eye. Because this method needs the color information of the image, the gray-scale image after the multi-scale Top-hat transform is first converted to a Red Green Blue (RGB) image by a 256-level pseudo-color mapping, and quaternion Fourier saliency detection is then performed. The algorithm first extracts the color features of the RGB image obtained from the mapping as follows:

$$R = r - \frac{g + b}{2}, \qquad G = g - \frac{r + b}{2}, \qquad B = b - \frac{r + g}{2}, \qquad Y = \frac{r + g}{2} - \frac{|r - g|}{2} - b$$

where $r$, $g$ and $b$ are the red, green and blue color features of the original RGB image obtained by pseudo-color mapping, and $R$, $G$, $B$ and $Y$ are the adjusted red, green, blue and yellow color features, respectively. Then the color-opponent features $RG$ and $BY$ are calculated, which correspond to the two types of neurons of human visual perception. The calculation is as follows:

$$RG = R - G, \qquad BY = B - Y$$

The other two features are the brightness feature $I$ and the motion feature $M$. The brightness feature is obtained from the red, green and blue color features of the original RGB image obtained by pseudo-color mapping. The motion feature is obtained by the frame difference method from the brightness difference between two images. They are calculated as follows:

$$I_t = \frac{r + g + b}{3}, \qquad M = \left| I_t - I_{t-1} \right|$$

where $I_t$ is the brightness feature of the current image, $I_{t-1}$ is the brightness feature of the previous image, and $M$ is the motion feature. Finally, a quaternion function is constructed from these four features. Its phase spectrum is obtained by the Fourier transform, and the phase spectrum is inverse Fourier transformed and Gauss filtered to obtain the saliency detection map based on the quaternion Fourier transform, calculated as follows:

The terms of this calculation are three feature weight adjustment coefficients, the Gauss filtering function, the quaternion feature function, and the result of taking the phase spectrum of the quaternion function by the Fourier transform and then applying the inverse Fourier transform.
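The quaternion step can be sketched in NumPy as below. This is a hedged illustration that follows the commonly used phase-quaternion formulation, not necessarily the exact construction of this paper: the color features use the standard broadly tuned opponent channels, the three feature weight coefficients are all taken as 1, and the quaternion transform is computed through two complex FFTs (a symplectic decomposition). The function names and the `size` parameter are hypothetical, and `sigma = size / 4` is an assumption.

```python
import numpy as np


def gaussian_blur(img, size):
    """Separable Gaussian blur with a `size`-tap kernel (sigma = size / 4 is assumed)."""
    x = np.arange(size) - (size - 1) / 2.0
    kernel = np.exp(-x ** 2 / (2.0 * (size / 4.0) ** 2))
    kernel /= kernel.sum()

    def blur_1d(v):
        return np.convolve(v, kernel, mode="same")

    return np.apply_along_axis(blur_1d, 1, np.apply_along_axis(blur_1d, 0, img))


def quaternion_phase_saliency(rgb, prev_rgb, size=5):
    """Phase-only quaternion Fourier saliency for one RGB frame (weights assumed 1)."""
    r, g, b = (rgb[..., c].astype(np.float64) for c in range(3))
    # Adjusted color features and the two color-opponent features.
    red = r - (g + b) / 2
    green = g - (r + b) / 2
    blue = b - (r + g) / 2
    yellow = (r + g) / 2 - np.abs(r - g) / 2 - b
    rg, by = red - green, blue - yellow
    # Brightness, and motion by frame differencing.
    intensity = (r + g + b) / 3
    motion = np.abs(intensity - prev_rgb.astype(np.float64).mean(axis=-1))
    # q = motion + rg*mu1 + by*mu2 + intensity*mu3, split into two complex planes.
    f1 = np.fft.fft2(motion + 1j * rg)
    f2 = np.fft.fft2(by + 1j * intensity)
    norm = np.sqrt(np.abs(f1) ** 2 + np.abs(f2) ** 2) + 1e-12
    q1 = np.fft.ifft2(f1 / norm)   # keep only the quaternion phase
    q2 = np.fft.ifft2(f2 / norm)
    return gaussian_blur(np.abs(q1) ** 2 + np.abs(q2) ** 2, size)


# Synthetic RGB frame: gray background with one red-tinted pixel, no motion.
rgb = np.full((32, 32, 3), 50.0)
rgb[10, 20, 0] = 200.0
qft_map = quaternion_phase_saliency(rgb, prev_rgb=rgb)
qft_peak = np.unravel_index(np.argmax(qft_map), qft_map.shape)
```

Passing the same frame as `prev_rgb` sets the motion feature to zero, which corresponds to processing a single still image.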

The saliency map based on the Fourier transform contains more detail information and edge information, and a more detailed saliency target, which makes up for the shortcomings of the two saliency detection methods mentioned above, but cannot highlight the saliency of the target as a whole. Finally, combining three saliency detection algorithms, a variety of saliency detection fusion is improved. Because the weights of the three detection algorithms are the same, they have their own shortcomings and advantages, so the method of equalization is used to fuse the three saliency detection results

,

and

. The fusion algorithm is calculated as follows:

where

is the multi-scale saliency detection map of spectrum residual,

is the multi-scale saliency detection map of the phase spectrum,

is the saliency detection map of the quaternion Fourier transform,

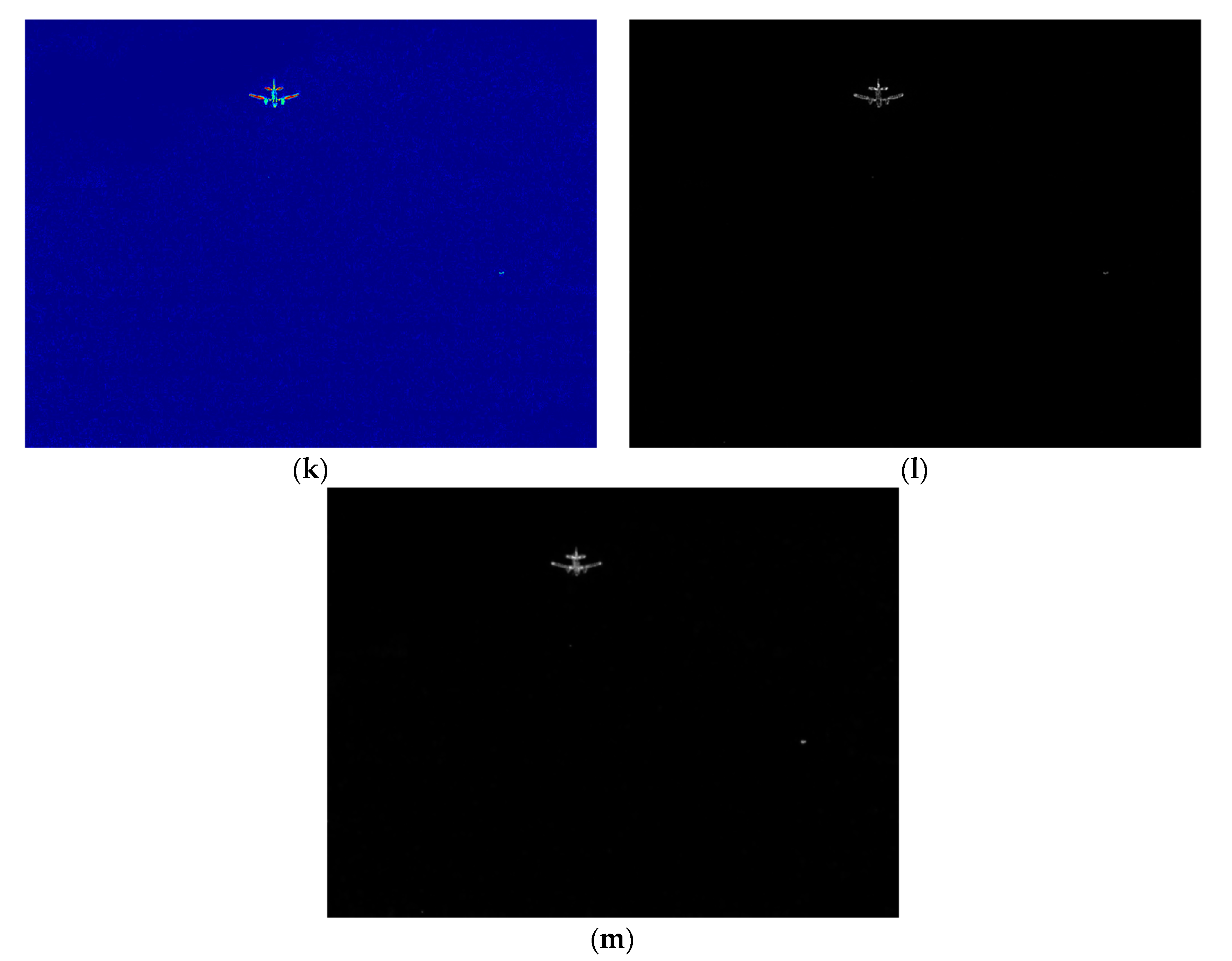

is the fusion saliency map of three saliency detection. The gray-scale image after multi-scale Top-hat transformation is processed by a multi-scale saliency fusion method as shown in

Figure 2. The final saliency map effect obtained by fusion is shown in

Figure 2m. From the four graphs of

Figure 2e,j,l,m, we can see that using the fusion algorithm in this paper, the saliency target is not only clear and the edge is obvious, but also the whole target is complete and undivided. The saliency effect of the infrared image is good.
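The equal-weight fusion of the three saliency maps can be sketched as follows. The function names are hypothetical, and the min-max normalization of each map to [0, 1] before averaging is an assumption added so that maps with different value ranges contribute comparably; the text itself only specifies equal weights.

```python
import numpy as np


def normalize(saliency):
    """Min-max scale a map to [0, 1]; a constant map becomes all zeros."""
    saliency = np.asarray(saliency, dtype=np.float64)
    span = saliency.max() - saliency.min()
    if span == 0:
        return np.zeros_like(saliency)
    return (saliency - saliency.min()) / span


def fuse_saliency(sr_map, pft_map, qft_map):
    """Equal-weight average of the three (normalized) saliency maps."""
    return (normalize(sr_map) + normalize(pft_map) + normalize(qft_map)) / 3.0


# Tiny example with three 1x2 maps of different ranges.
fused = fuse_saliency([[0.0, 2.0]], [[0.0, 4.0]], [[1.0, 1.0]])
```

If the maps are already on a common scale, `normalize` can be dropped and the function reduces to the plain mean of the three maps.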