LSTM and GRU Neural Networks as Models of Dynamical Processes Used in Predictive Control: A Comparison of Models Developed for Two Chemical Reactors

Abstract

:1. Introduction

- (a)

- What is the accuracy of the dynamical models based on the GRU networks, and how do they compare to the LSTM ones?

- (b)

- How do the GRU dynamical models perform in MPC, and how do they compare to the LSTM-based MPC approach?

- (a)

- A thorough comparison of LSTM and GRU neural networks as models of two dynamical processes, polymerisation and neutralisation (pH) reactors, is considered. An important question is whether or not the GRU network, although it has a simpler structure as the LSTM one, offers satisfying modelling accuracy;

- (b)

- The derivation of MPC prediction equations for the LSTM and GRU models;

- (c)

- The development of MPC algorithms for the two aforementioned processes with different LSTM and GRU models used for prediction. An important question is whether or not the GRU network offers control quality comparable to that possible when the more complex LSTM structure is applied.

2. LSTM and GRU Neural Networks

2.1. The LSTM Neural Network

- Two types of activation functions;

- A cell state that serves as the long-term memory of the neuron;

- The neuron is called a cell and has a complex structure consisting of four gates that regulate the information flow.

2.1.1. Activation Functions

2.1.2. Hidden State and Cell State

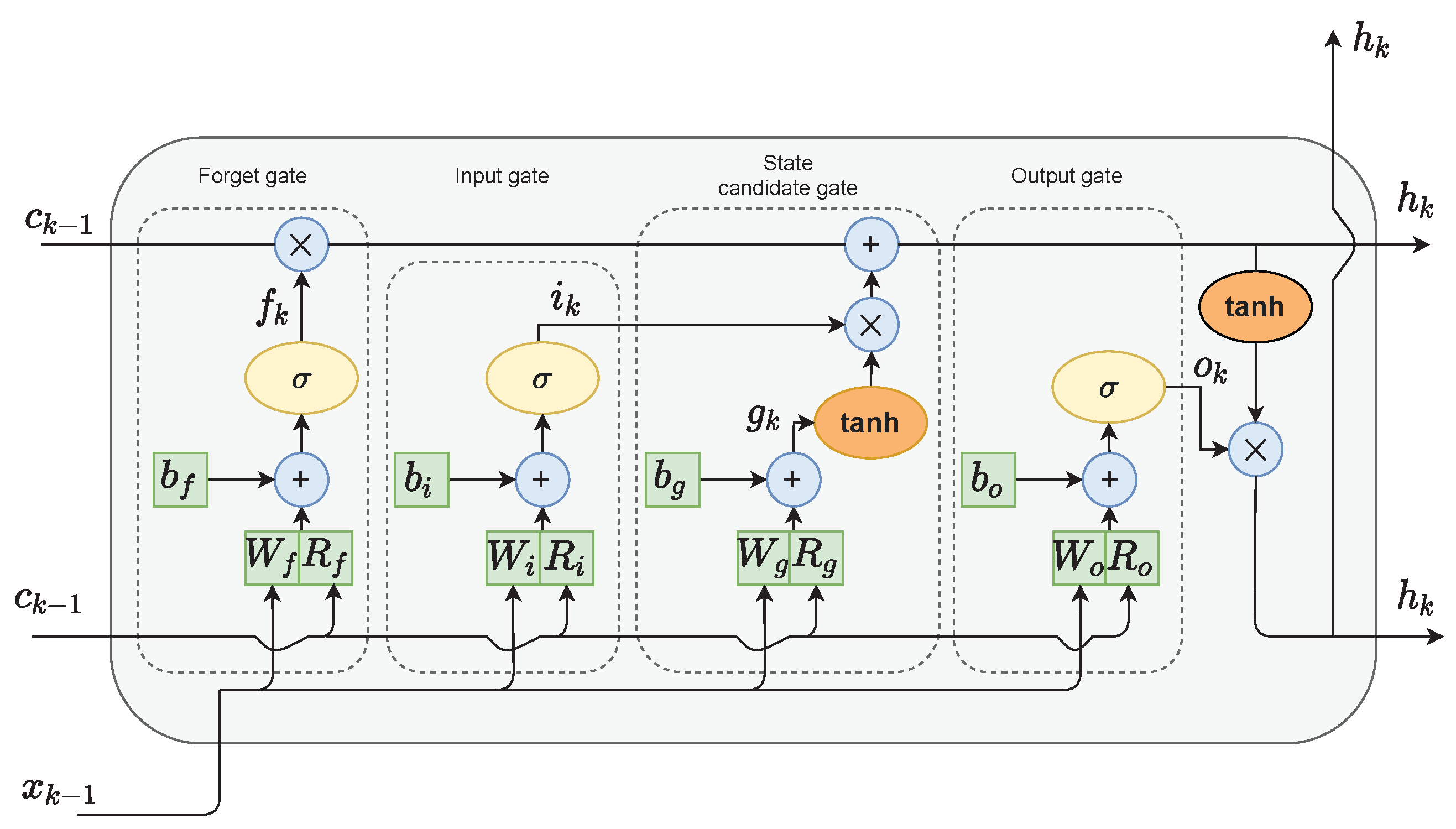

2.1.3. Gates

- The forget gate f decides which values of the previous cell state should be discarded and which should be kept;

- The input gate i selects values from the previous hidden state and the current input to update by passing them through the sigmoid function. The function product is then multiplied by the previous cell state;

- The cell state candidate gate g first regulates the information flow in the network by using the tanh function on the previous hidden state and the current input. The product of tanh is multiplied by the input gate output to calculate the candidate for the current cell state. The candidate is then added to the previous cell state;

- The output gate o first calculates the current hidden state by passing the previous hidden state and the current input through the sigmoid function to select which new information should be taken into account. Then, the current cell state value is passed through the tanh function. The products of both of those functions are finally multiplied.

2.1.4. LSTM Layer Architecture

2.2. The GRU Neural Network

- The GRU cell lacks the output gate; therefore, it has fewer parameters;

- The usage of the cell state is discarded. The hidden state serves both as the working and long-term memory of the network.

- The reset gate r is used to select which information to discard from the previous hidden state and input values;

- The role of the update gate z is to select which information from the previous hidden state should be kept and passed along to the next steps;

- Candidate state gate g calculates the candidate for the future hidden state. This is done by firstly multiplying the previous state with the reset gate’s output. This step can be interpreted as forgetting unimportant information from the past. Next, new data form the input are added to the remaining information. Finally, the tanh function is applied to the data to regulate the information flow.

3. LSTM and GRU Neural Networks in Model Predictive Control

3.1. The MPC Problem

- The magnitude constraints and are enforced on the manipulated variable over the control horizon ;

- The constraints and are imposed on the increments of the same variable over the control horizon ;

- The constraints put on the predicted output variable and over the prediction horizon N.

3.2. The LSTM Neural Network in MPC

3.3. The GRU Neural Network in MPC

- The estimated disturbance is calculated from Equation (22):

- a.

- b.

- The MPC optimisation task is then performed. To calculate the output prediction, the cell and hidden state prediction must be calculated first:

- a.

- b.

- The first element of the calculated decision vector (Equation (19)) is applied to the process, i.e., .

4. Results of the Simulations

4.1. Description of the Dynamical Systems

4.1.1. Benchmark 1: The Polymerisation Reactor

4.1.2. Benchmark 2: The Neutralisation Reactor

4.2. LSTM and GRU Neural Networks for Modelling of Polymerisation and Neutralisation Reactors

- 500 for the models with ;

- 750 for the models with ;

- 1000 for the models with .

- The order of the dynamics of the LSTM model was set to . The number of neurons in the hidden layer was set to . For the considered configuration, ten models were trained, and the best one was chosen;

- The number of neurons was increased to two. Ten models were trained, and the best was chosen. This procedure was repeated until the number of neurons reached ;

- The first two steps were repeated with the increased order of the dynamics , .

- is the the mean squared error for the training dataset in ARX mode;

- is the the mean squared error for the validation dataset in ARX mode;

- is the the mean squared error for the training dataset in recurrent mode;

- is the the mean squared error for the validation dataset in recurrent mode.

- In the case of the polymerisation reactor, the results achieved with the LSTM and GRU networks were comparable. As seen in Figure 4, the means squared errors were similar for every combination of , and ;

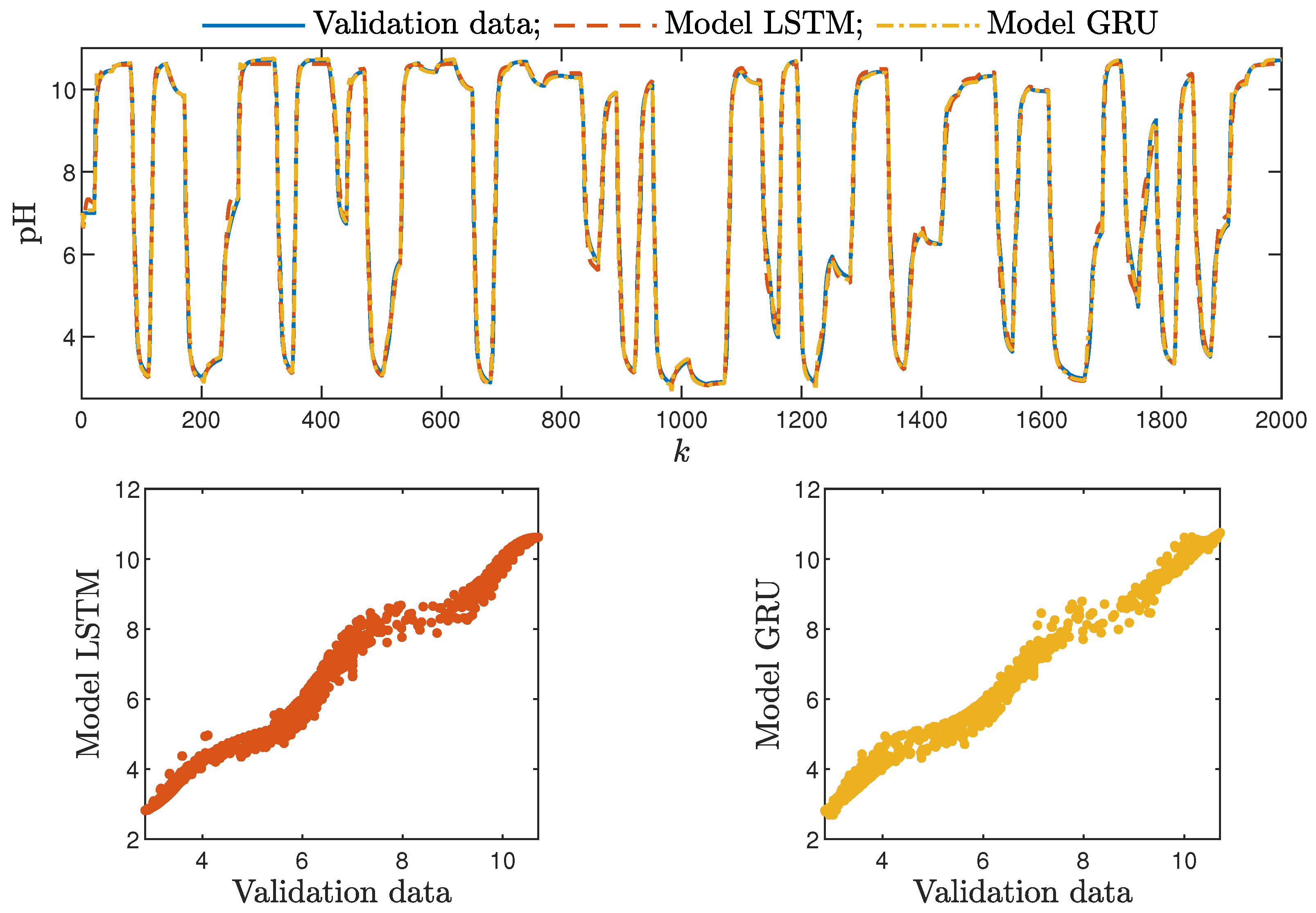

- In the case of the neutralisation reactor, the LSTM models ensured a better quality of modelling, especially for models with a low number of parameters. However, as seen in Figure 5, as the number of neurons increased, this difference became more and more negligible. This is again not surprising. GRU networks have less parameters than LSTM networks. Therefore, GRU models with a low number of neurons and a low order of the dynamics performed worse than their LSTM counterparts. As the models became bigger and more complex, the difference between their quality decreased.

- Models with a higher numbers of neurons (15–30) ensured the best and most consistent modelling quality. This is not surprising, as the number of model parameters is directly proportional to the capacity to reproduce the behaviour of more complex processes. However, this can also be a main drawback of complex models, because of the enormous number of parameters, as shown in Figure 6 and Figure 7, increases their computational cost significantly;These models had too few parameters to accurately represent the behaviour of the processes under study;

- For the models with a medium number of neurons (3–10), the modelling quality was not consistent. In some cases, it was quite poor; in others, it even outperformed models with a huge number of neurons (an example can be found in Table 4, the GRU network with ). One can conclude that this group of models has a structure complex enough to represent the behaviour of the systems under investigation. The training procedure must be, however, performed many times, as training may sometimes not be successful. In other words, if the goal is to find the model with the minimum number of parameters and good quality, the medium-sized models are the best option;

- Interestingly enough, the order of the dynamics of the model seemed not to greatly impact the modelling quality. Models with higher order were most commonly only slightly better than those with . Only in the case of the neutralisation reactor with in Table 3 could a noticeable improvement be observed when was set to two. The unique long-term memory quality of the networks under study may be a cause of this phenomenon. The information about the important previous input and output signals from the past can be kept inside the hidden and cell states, and therefore, the networks can perform very well with only the most recent input values (i.e., , );

4.3. LSTM and GRU Neural Network for the MPC of Polymerisation and Neutralisation Reactors

- The constant weighting coefficient was assumed;

- The prediction horizon N and the control horizon were set to have the same, arbitrarily chosen lengths. If the controller was not working properly, both horizons were lengthened;

- The prediction horizon was gradually shortened, and its minimal possible length was chosen (with the condition );

- The effect of changing the length of the control horizon on the resulting control quality was then assessed experimentally (e.g., assuming successively ). The shortest possible control horizon was chosen;

- Finally, after determining the horizon’s lengths, the weighting coefficient was adjusted.

- , , for the polymerisation process;

- , , for the neutralisation process.

- Optimisation algorithm—Sequential Quadratic Programming (SQP);

- Finite differences type—centred.

- The sum of squared errors (E);

- The Huber standard deviation () of the control error;

- The rational entropy () of the control error.

- Both types of neural networks allowed for a successful application of the MPC control scheme. All control performance indicators, i.e., E, and , showed that GRU network models, when applied for prediction in MPC, lead to very similar control quality when the rudimentary LSTM networks are used. What is more, as GRU models have fewer internal parameters, their computation cost and, therefore, the time of calculations are lower, as shown in Table 5 and Table 6;

- It is advisable to choose models with a relatively simple structure and a low number of parameters to implement in the MPC scheme. More complex models often provide comparable or even worse quality of control, and the computation cost rises with the number of parameters of the model;

- Minor model imperfections are reduced with great success by feedback in MPC. An example of this phenomenon can be observed in the bottom plots in Figure 12, where the model outputs differ slightly from the validation data in some areas. However, when the models are implemented in the MPC scheme, as shown in Figure 16, the quality of control is very satisfactory. However, the negative feedback is not sufficient to ensure satisfactory control if the model itself has poor quality. Example simulation results for the polymerisation process are presented in Figure 18. As a result of a very bad model, the MPC algorithm leads to unacceptable control quality, i.e., the set-point is never achieved, and strong oscillations are observed. Example simulations results when an inaccurate model is used in MPC for the neutralisation process are presented in Figure 19. In this case, the overshoot is larger and the setting time is longer when compared with the MPC algorithm based on a good model, e.g., as shown in Figure 15.

5. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest

References

- Maciejowski, J. Predictive Control with Constraints; Prentice Hall: Harlow, UK, 2002. [Google Scholar]

- Tatjewski, P. Advanced Control of Industrial Processes, Structures and Algorithms; Springer: London, UK, 2007. [Google Scholar]

- Nebeluk, R.; Marusak, P. Efficient MPC algorithms with variable trajectories of parameters weighting predicted control errors. Arch. Control Sci. 2020, 30, 325–363. [Google Scholar]

- Carli, R.; Cavone, G.; Ben Othman, S.; Dotoli, M. IoT Based Architecture for Model Predictive Control of HVAC Systems in Smart Buildings. Sensors 2020, 20, 781. [Google Scholar] [CrossRef] [Green Version]

- Rybus, T.; Seweryn, K.; Sąsiadek, J.Z. Application of predictive control for manipulator mounted on a satellite. Arch. Control Sci. 2018, 28, 105–118. [Google Scholar]

- Ogonowski, S.; Bismor, D.; Ogonowski, Z. Control of complex dynamic nonlinear loading process for electromagnetic mill. Arch. Control Sci. 2020, 30, 471–500. [Google Scholar]

- Horla, D. Experimental Results on Actuator/Sensor Failures in Adaptive GPC Position Control. Actuators 2021, 10, 43. [Google Scholar] [CrossRef]

- Zarzycki, K.; Ławryńczuk, M. Fast real-time model predictive control for a ball-on-plate process. Sensors 2021, 21, 3959. [Google Scholar] [CrossRef]

- Bania, P. An information based approach to stochastic control problems. Int. J. Appl. Math. Comput. Sci. 2020, 30, 47–59. [Google Scholar]

- Nelles, O. Nonlinear System Identification: From Classical Approaches to Neural Networks and Fuzzy Models; Springer: Berlin, Germany, 2001. [Google Scholar]

- Haykin, S. Neural Networks and Learning Machines; Pearson Education: Upper Saddle River, NJ, USA, 2009. [Google Scholar]

- Ławryńczuk, M. Computationally Efficient Model Predictive Control Algorithms: A Neural Network Approach; Studies in Systems, Decision and Control; Springer: Cham, Switzerland, 2014; Volume 3. [Google Scholar]

- Bianchi, F.M.; Maiorino, E.; Kampffmeyer, M.C.; Rizzi, A.; Jenssen, R. Recurrent Neural Networks for Short-Term Load Forecasting: An Overview and Comparative Analysis; Springer Briefs in Computer Science; Springer: Berlin, Germany, 2017. [Google Scholar]

- Hammer, B. Learning with Recurrent Neural Networks; Lecture Notes in Control and Information Sciences; Springer: Berlin, Germany, 2000; Volume 254. [Google Scholar]

- Mandic, D.P.; Chambers, J.A. Recurrent Neural Networks for Prediction: Learning Algorithms, Architectures and Stability; Wiley: Chichester, UK, 2001. [Google Scholar]

- Rovithakis, G.A.; Christodoulou, M.A. Adaptive Control with Recurrent High-Order Neural Networks; Springer: Berlin, Germany, 2000. [Google Scholar]

- Bengio, Y.; Simard, P.; Frasconi, P. Learning long-term dependencies with gradient descent is difficult. IEEE Trans. Neural Netw. 1994, 5, 157–166. [Google Scholar] [CrossRef] [PubMed]

- Hochreiter, S. Untersuchungen zu Dynamischen Neuronalen Netzen. Master’s Thesis, Technical University Munich, Munich, Germany, 1991. [Google Scholar]

- Hochreiter, S.; Schmidhuber, J. Long Short-term Memory. Neural Comput. 1997, 9, 1735–1780. [Google Scholar] [CrossRef] [PubMed]

- He, K.; Zhang, X.; Ren, S.; Sun, J. Deep Residual Learning for Image Recognition. In Proceedings of the 2016 IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Las Vegas, NV, USA, 27–30 June 2016; pp. 770–778. [Google Scholar]

- Chung, J.; Gulcehre, C.; Cho, K.; Bengio, Y. Empirical Evaluation of Gated Recurrent Neural Networks on Sequence Modeling. arXiv 2014, arXiv:1412.3555. [Google Scholar]

- Islam, A.; Chang, K.H. Real-time AI-based informational decision-making support system utilizing dynamic text sources. Appl. Sci. 2021, 11, 6237. [Google Scholar] [CrossRef]

- Graves, A.; Schmidhuber, J. Offline handwriting recognition with multidimensional recurrent neural networks. In Advances in Neural Information Processing Systems; Koller, D., Schuurmans, D., Bengio, Y., Bottou, L., Eds.; Curran Associates, Inc.: La Jolla, CA, USA, 2009; Volume 21, pp. 1–8. [Google Scholar]

- Sak, H.; Senior, A.; Beaufays, F. Long short-term memory recurrent neural network architectures for large scale acoustic modeling. In Proceedings of the Annual Conference of the International Speech Communication Association, Interspeech 2014, Singapore, 14–18 September 2014; pp. 338–342. [Google Scholar]

- Graves, A.; Abdel-Rahman, M.; Geoffrey, H. Speech recognition with deep recurrent neural networks. In Proceedings of the 2013 IEEE International Conference on Acoustics, Speech and Signal Processing, Vancouver, BC, Canada, 26–31 May 2013; pp. 6645–6649. [Google Scholar]

- Capes, T.; Coles, P.; Conkie, A.; Golipour, L.; Hadjitarkhani, A.; Hu, Q.; Huddleston, N.; Hunt, M.; Li, J.; Neeracher, M.; et al. Siri on-device deep learning-guided unit selection text-to-speech system. In Proceedings of the Interspeech 2017, Stockholm, Sweden, 20–24 August 2017; pp. 4011–4015. [Google Scholar]

- Telenyk, S.; Pogorilyy, S.; Kramov, A. Evaluation of the coherence of Polish texts using neural network models. Appl. Sci. 2021, 11, 3210. [Google Scholar] [CrossRef]

- Ackerson, J.M.; Dave, R.; Seliya, N. Applications of recurrent neural network for biometric authentication & anomaly detection. Information 2021, 12, 272. [Google Scholar]

- Gallardo-Antolín, A.; Montero, J.M. Detecting deception from gaze and speech using a multimodal attention LSTM-based framework. Appl. Sci. 2021, 11, 6393. [Google Scholar] [CrossRef]

- Kulanuwat, L.; Chantrapornchai, C.; Maleewong, M.; Wongchaisuwat, P.; Wimala, S.; Sarinnapakorn, K.; Boonya-Aroonnet, S. Anomaly detection using a sliding window technique and data imputation with machine learning for hydrological time series. Water 2021, 13, 1862. [Google Scholar] [CrossRef]

- Bursic, S.; Boccignone, G.; Ferrara, A.; D’Amelio, A.; Lanzarotti, R. Improving the accuracy of automatic facial expression recognition in speaking subjects with deep learning. Appl. Sci. 2020, 10, 4002. [Google Scholar] [CrossRef]

- Chen, J.; Huang, X.; Jiang, H.; Miao, X. Low-cost and device-free human activity recognition based on hierarchical learning model. Sensors 2021, 21, 2359. [Google Scholar] [CrossRef] [PubMed]

- Fang, Y.; Yang, S.; Zhao, B.; Huang, C. Cyberbullying detection in social networks using Bi-GRU with self-attention mechanism. Information 2021, 12, 171. [Google Scholar] [CrossRef]

- Knaak, C.; von Eßen, J.; Kröger, M.; Schulze, F.; Abels, P.; Gillner, A. A spatio-temporal ensemble deep learning architecture for real-time defect detection during laser welding on low power embedded computing boards. Sensors 2021, 21, 4205. [Google Scholar] [CrossRef]

- Ullah, A.; Muhammad, K.; Ding, W.; Palade, V.; Haq, I.U.; Baik, S.W. Efficient activity recognition using lightweight CNN and DS-GRU network for surveillance applications. Appl. Soft Comput. 2021, 103, 107102. [Google Scholar] [CrossRef]

- Varshney, A.; Ghosh, S.K.; Padhy, S.; Tripathy, R.K.; Acharya, U.R. Automated classification of mental arithmetic tasks using recurrent neural network and entropy features obtained from multi-channel EEG signals. Electronics 2021, 10, 1079. [Google Scholar] [CrossRef]

- Ye, F.; Yang, J. A Deep Neural Network Model for Speaker Identification. Appl. Sci. 2021, 11, 3603. [Google Scholar] [CrossRef]

- Gonzalez, J.; Yu, W. Non-linear system modeling using LSTM neural networks. IFAC-PapersOnLine 2018, 51, 485–489. [Google Scholar] [CrossRef]

- Schwedersky, B.B.; Flesch, R.C.C.; Dangui, H.A.S. Practical nonlinear model predictive control algorithm for long short-term memory networks. IFAC-PapersOnLine 2019, 52, 468–473. [Google Scholar] [CrossRef]

- Karimanzira, D.; Rauschenbach, T. Deep learning based model predictive control for a reverse osmosis desalination plant. J. Appl. Math. Phys. 2020, 8, 2713–2731. [Google Scholar] [CrossRef]

- Jeon, B.K.; Kim, E.J. LSTM-based model predictive control for optimal temperature set-point planning. Sustainability 2021, 13, 894. [Google Scholar] [CrossRef]

- Iglesias, R.; Rossi, F.; Wang, K.; Hallac, D.; Leskovec, J.; Pavone, M. Data-driven model predictive control of autonomous mobility-on-demand systems. In Proceedings of the 2018 IEEE International Conference on Robotics and Automation (ICRA), Brisbane, Australia, 21–25 May 2018; pp. 6019–6025. [Google Scholar]

- Okulski, M.; Ławryńczuk, M. A novel neural network model applied to modeling of a tandem-wing quadplane drone. IEEE Access 2021, 9, 14159–14178. [Google Scholar]

- Pascanu, R.; Mikolov, T.; Bengio, Y. On the difficulty of training recurrent neural networks. In Proceedings of the 30th International Conference on Machine Learning, Atlanta, GA, USA, 16–21 June 2013; Volume 28, pp. 1310–1318. [Google Scholar]

- Doyle, F.J.; Ogunnaike, B.A.; Pearson, R. Nonlinear model-based control using second-order Volterra models. Automatica 1995, 31, 697–714. [Google Scholar] [CrossRef]

- Ławryńczuk, M. Practical nonlinear predictive control algorithms for neural Wiener models. J. Process Control 2013, 23, 696–714. [Google Scholar] [CrossRef]

- Gómez, J.C.; Jutan, A.; Baeyens, E. Wiener model identification and predictive control of a pH neutralisation process. Proc. IEEE Part D Control Theory Appl. 2004, 151, 329–338. [Google Scholar] [CrossRef] [Green Version]

- Ławryńczuk, M. Modelling and predictive control of a neutralisation reactor using sparse Support Vector Machine Wiener models. Neurocomputing 2016, 205, 311–328. [Google Scholar] [CrossRef]

- Domański, P. Control Performance Assessment: Theoretical Analyses and Industrial Practice; Studies in Systems, Decision and Control; Springer: Cham, Switzerland, 2020; Volume 245. [Google Scholar]

| LSTM | GRU | ||||

|---|---|---|---|---|---|

| 1 | 1 | 10.22 | 12.17 | 6.58 | 11.08 |

| 2 | 2.51 | 4.06 | 1.58 | 2.26 | |

| 3 | 1.85 | 2.98 | 1.00 | 1.87 | |

| 4 | 1.21 | 1.85 | 0.58 | 0.99 | |

| 5 | 0.35 | 0.88 | 0.61 | 1.06 | |

| 10 | 0.08 | 0.19 | 0.29 | 0.65 | |

| 15 | 0.02 | 0.08 | 0.16 | 0.30 | |

| 20 | 0.06 | 0.13 | 0.09 | 0.16 | |

| 25 | 0.07 | 0.19 | 0.16 | 0.31 | |

| 2 | 1 | 5.21 | 7.33 | 9.25 | 14.10 |

| 2 | 0.83 | 1.46 | 2.56 | 4.58 | |

| 3 | 1.41 | 2.67 | 2.02 | 3.00 | |

| 4 | 0.30 | 0.59 | 0.57 | 1.19 | |

| 5 | 0.50 | 1.09 | 0.26 | 0.63 | |

| 10 | 0.06 | 0.19 | 0.19 | 0.39 | |

| 15 | 0.14 | 0.26 | 0.24 | 0.50 | |

| 20 | 0.13 | 0.23 | 0.11 | 0.24 | |

| 25 | 0.08 | 0.17 | 0.15 | 0.31 | |

| 3 | 1 | 7.68 | 11.05 | 2.96 | 5.24 |

| 2 | 1.37 | 2.42 | 1.09 | 1.76 | |

| 3 | 1.45 | 2.49 | 1.01 | 1.82 | |

| 4 | 0.55 | 1.01 | 0.66 | 1.27 | |

| 5 | 0.80 | 1.49 | 0.22 | 0.55 | |

| 10 | 0.08 | 0.18 | 0.10 | 0.21 | |

| 15 | 0.07 | 0.24 | 0.24 | 0.65 | |

| 20 | 0.07 | 0.16 | 0.14 | 0.27 | |

| 25 | 0.06 | 0.17 | 0.20 | 0.38 | |

| LSTM | GRU | |||||||||

|---|---|---|---|---|---|---|---|---|---|---|

| 1 | 1 | 1 | 2.67 | 3.26 | 6.83 | 7.86 | 3.73 | 5.64 | 8.06 | 11.07 |

| 2 | 1.51 | 2.84 | 2.64 | 4.75 | 1.39 | 2.53 | 3.09 | 5.11 | ||

| 3 | 0.23 | 0.37 | 0.59 | 0.95 | 0.33 | 0.55 | 0.66 | 1.08 | ||

| 4 | 0.25 | 0.54 | 0.37 | 0.84 | 0.40 | 0.83 | 0.97 | 1.89 | ||

| 5 | 0.10 | 0.19 | 0.21 | 0.41 | 0.19 | 0.50 | 0.53 | 1.18 | ||

| 10 | 0.08 | 0.17 | 0.12 | 0.27 | 0.10 | 0.21 | 0.26 | 0.52 | ||

| 15 | 0.06 | 0.10 | 0.10 | 0.18 | 0.19 | 0.40 | 0.44 | 0.89 | ||

| 20 | 0.06 | 0.12 | 0.09 | 0.18 | 0.03 | 0.07 | 0.09 | 0.19 | ||

| 30 | 0.02 | 0.04 | 0.04 | 0.08 | 0.07 | 0.12 | 0.17 | 0.31 | ||

| 2 | 2 | 1 | 3.27 | 4.50 | 8.13 | 10.29 | 4.07 | 6.24 | 6.53 | 9.78 |

| 2 | 1.81 | 3.19 | 2.88 | 4.90 | 0.53 | 0.94 | 1.29 | 2.10 | ||

| 3 | 0.99 | 1.84 | 1.60 | 2.83 | 0.82 | 1.42 | 1.54 | 2.61 | ||

| 4 | 0.34 | 0.69 | 0.47 | 1.03 | 0.45 | 0.96 | 1.03 | 2.08 | ||

| 5 | 0.18 | 0.37 | 0.26 | 0.59 | 0.22 | 0.42 | 0.55 | 0.97 | ||

| 10 | 0.09 | 0.18 | 0.13 | 0.27 | 0.33 | 0.68 | 0.83 | 1.61 | ||

| 15 | 0.13 | 0.31 | 0.18 | 0.46 | 0.04 | 0.14 | 0.10 | 0.30 | ||

| 20 | 0.06 | 0.13 | 0.09 | 0.23 | 0.04 | 0.08 | 0.10 | 0.19 | ||

| 30 | 0.03 | 0.06 | 0.05 | 0.10 | 0.08 | 0.14 | 0.19 | 0.33 | ||

| 3 | 3 | 1 | 1.93 | 3.18 | 5.72 | 8.26 | 1.48 | 2.81 | 2.64 | 4.59 |

| 2 | 1.09 | 2.20 | 1.58 | 3.13 | 0.24 | 0.55 | 0.51 | 1.07 | ||

| 3 | 1.05 | 1.89 | 1.39 | 2.58 | 0.86 | 1.95 | 1.29 | 2.79 | ||

| 4 | 0.70 | 1.18 | 0.89 | 1.73 | 0.15 | 0.39 | 0.30 | 0.80 | ||

| 5 | 0.17 | 0.34 | 0.31 | 0.62 | 0.27 | 0.64 | 0.41 | 1.00 | ||

| 10 | 0.05 | 0.13 | 0.07 | 0.20 | 0.18 | 0.31 | 0.35 | 0.64 | ||

| 15 | 0.10 | 0.20 | 0.14 | 0.32 | 0.08 | 0.21 | 0.16 | 0.39 | ||

| 20 | 0.08 | 0.21 | 0.11 | 0.31 | 0.09 | 0.24 | 0.19 | 0.48 | ||

| 30 | 0.11 | 0.17 | 0.15 | 0.24 | 0.10 | 0.18 | 0.22 | 0.36 | ||

| LSTM | GRU | ||||

|---|---|---|---|---|---|

| 1 | 1 | 6.56 | 5.14 | 13.07 | 13.03 |

| 2 | 3.95 | 4.18 | 7.93 | 9.22 | |

| 3 | 3.23 | 4.08 | 6.36 | 6.58 | |

| 4 | 4.43 | 4.28 | 4.98 | 5.18 | |

| 5 | 2.55 | 2.81 | 6.06 | 5.84 | |

| 10 | 2.33 | 3.10 | 3.45 | 3.87 | |

| 15 | 2.37 | 2.97 | 4.52 | 4.71 | |

| 20 | 2.38 | 2.80 | 4.73 | 4.70 | |

| 30 | 1.47 | 2.04 | 1.03 | 2.01 | |

| 2 | 1 | 6.33 | 5.05 | 11.57 | 10.80 |

| 2 | 3.99 | 4.16 | 7.32 | 7.52 | |

| 3 | 3.09 | 3.89 | 6.11 | 6.41 | |

| 4 | 2.94 | 3.43 | 6.66 | 6.49 | |

| 5 | 1.29 | 1.85 | 5.96 | 5.33 | |

| 10 | 1.48 | 2.14 | 1.48 | 2.27 | |

| 15 | 1.68 | 2.07 | 1.60 | 2.54 | |

| 20 | 1.28 | 1.54 | 1.91 | 2.32 | |

| 30 | 1.15 | 1.69 | 1.38 | 2.37 | |

| 3 | 1 | 7.79 | 6.88 | 12.27 | 12.10 |

| 2 | 4.07 | 4.51 | 7.31 | 7.40 | |

| 3 | 3.24 | 4.08 | 6.56 | 7.46 | |

| 4 | 3.82 | 4.70 | 4.85 | 5.81 | |

| 5 | 3.47 | 4.11 | 2.86 | 3.79 | |

| 10 | 2.63 | 3.46 | 1.16 | 1.77 | |

| 15 | 1.26 | 1.88 | 1.07 | 1.90 | |

| 20 | 1.08 | 1.60 | 1.22 | 1.92 | |

| 30 | 0.94 | 1.75 | 1.21 | 2.11 | |

| LSTM | GRU | |||||||||

|---|---|---|---|---|---|---|---|---|---|---|

| 1 | 1 | 1 | 2.46 | 2.92 | 5.00 | 5.17 | 2.39 | 3.25 | 5.98 | 7.79 |

| 2 | 4.22 | 3.91 | 5.11 | 4.50 | 1.62 | 2.28 | 4.38 | 5.73 | ||

| 3 | 2.22 | 2.74 | 3.89 | 4.44 | 1.58 | 2.31 | 3.80 | 5.30 | ||

| 4 | 3.02 | 3.20 | 4.70 | 4.61 | 1.98 | 2.72 | 3.91 | 4.77 | ||

| 5 | 2.56 | 2.97 | 3.81 | 3.98 | 0.77 | 1.32 | 1.55 | 2.33 | ||

| 10 | 1.55 | 1.96 | 2.36 | 2.72 | 1.62 | 2.26 | 3.42 | 4.50 | ||

| 15 | 2.19 | 2.76 | 3.64 | 4.05 | 2.19 | 2.76 | 3.64 | 4.05 | ||

| 20 | 1.44 | 2.13 | 2.50 | 3.58 | 1.44 | 2.13 | 2.50 | 3.58 | ||

| 30 | 1.11 | 1.68 | 2.13 | 2.86 | 1.11 | 1.68 | 2.13 | 2.86 | ||

| 2 | 2 | 1 | 2.19 | 2.72 | 3.80 | 4.50 | 2.36 | 3.25 | 5.51 | 7.25 |

| 2 | 2.63 | 3.02 | 5.15 | 5.38 | 1.99 | 2.78 | 4.34 | 5.44 | ||

| 3 | 2.01 | 2.77 | 3.16 | 3.99 | 1.97 | 2.84 | 3.88 | 5.17 | ||

| 4 | 2.74 | 3.43 | 4.14 | 4.61 | 2.36 | 3.19 | 4.32 | 5.28 | ||

| 5 | 2.60 | 3.15 | 3.21 | 3.53 | 2.22 | 2.93 | 3.64 | 4.68 | ||

| 10 | 1.14 | 1.67 | 1.64 | 2.40 | 1.93 | 2.45 | 3.51 | 3.99 | ||

| 15 | 1.55 | 2.03 | 2.28 | 2.67 | 1.55 | 2.03 | 2.28 | 2.67 | ||

| 20 | 0.93 | 1.29 | 1.45 | 1.82 | 0.93 | 1.29 | 1.45 | 1.82 | ||

| 30 | 1.30 | 1.68 | 1.85 | 2.19 | 1.30 | 1.68 | 1.85 | 2.19 | ||

| 3 | 3 | 1 | 2.05 | 2.50 | 3.78 | 4.25 | 2.79 | 3.39 | 6.15 | 6.66 |

| 2 | 2.87 | 3.34 | 4.11 | 4.22 | 3.91 | 4.37 | 7.75 | 7.13 | ||

| 3 | 1.99 | 2.70 | 2.82 | 3.56 | 1.76 | 2.40 | 4.14 | 5.31 | ||

| 4 | 2.57 | 3.12 | 3.69 | 3.98 | 1.84 | 2.50 | 3.63 | 4.57 | ||

| 5 | 2.59 | 2.99 | 3.73 | 3.72 | 2.16 | 2.82 | 3.98 | 4.68 | ||

| 10 | 0.76 | 1.22 | 1.36 | 2.12 | 1.69 | 2.42 | 3.47 | 4.50 | ||

| 15 | 0.78 | 1.22 | 1.15 | 1.65 | 0.78 | 1.22 | 1.15 | 1.65 | ||

| 20 | 1.48 | 2.00 | 2.03 | 2.51 | 1.48 | 2.00 | 2.03 | 2.51 | ||

| 30 | 1.29 | 1.77 | 1.82 | 2.14 | 1.29 | 1.77 | 1.82 | 2.14 | ||

| LSTM | GRU | |||||||||

|---|---|---|---|---|---|---|---|---|---|---|

| 5 | 0 | 1 | 2.67 × | 667.70 | 1782.41 | 6.67 | 2.76 × | 661.55 | 1608.18 | 7.64 |

| 6 | 0 | 1 | 2.71 × | 677.17 | 1758.72 | 6.64 | 2.72 × | 771.12 | 1653.33 | 7.68 |

| 7 | 0 | 1 | 2.80 × | 630.39 | 1665.62 | 6.50 | 2.73 × | 518.58 | 1568.32 | 7.62 |

| 8 | 0 | 1 | 2.75 × | 627.67 | 1758.21 | 6.49 | 2.80 × | 639.27 | 1742.63 | 7.89 |

| 9 | 0 | 1 | 2.74 × | 477.86 | 1701.53 | 6.52 | 2.75 × | 588.22 | 1603.82 | 8.15 |

| 10 | 0 | 1 | 2.79 × | 454.59 | 1659.05 | 6.71 | 2.78 × | 549.56 | 1623.56 | 8.05 |

| 5 | 1 | 1 | 2.63 × | 1562.43 | 2092.48 | 6.69 | 2.77 × | 509.75 | 1577.64 | 8.01 |

| 6 | 1 | 1 | 2.68 × | 1536.20 | 1962.02 | 6.64 | 2.77 × | 898.48 | 1743.70 | 7.62 |

| 7 | 1 | 1 | 2.73 × | 367.47 | 1587.79 | 6.65 | 2.77 × | 1209.39 | 1964.14 | 7.48 |

| 8 | 1 | 1 | 2.70 × | 617.22 | 1729.51 | 6.67 | 2.71 × | 759.49 | 1843.60 | 7.49 |

| 9 | 1 | 1 | 2.76 × | 455.08 | 1688.71 | 6.72 | 2.75 × | 686.34 | 1734.03 | 7.41 |

| 10 | 1 | 1 | 2.73 × | 463.27 | 1688.71 | 6.61 | 2.78 × | 469.35 | 1612.84 | 7.86 |

| 5 | 2 | 2 | 2.68 × | 839.53 | 1785.23 | 6.79 | 2.78 × | 591.74 | 1706.74 | 7.46 |

| 6 | 2 | 2 | 2.77 × | 528.51 | 1705.00 | 6.50 | 2.80 × | 860.32 | 1878.82 | 7.98 |

| 7 | 2 | 2 | 2.77 × | 826.31 | 1710.11 | 5.61 | 2.72 × | 413.74 | 1569.33 | 7.79 |

| 5 | 0 | 2 | 2.72 × | 573.75 | 1726.27 | 7.68 | 2.74 × | 824.28 | 1841.88 | 8.56 |

| 6 | 0 | 2 | 2.76 × | 458.17 | 1731.28 | 7.66 | 2.74 × | 611.42 | 1713.80 | 8.80 |

| 7 | 0 | 2 | 2.75 × | 449.80 | 1676.01 | 7.93 | 2.74 × | 499.09 | 1592.73 | 8.52 |

| LSTM | GRU | |||||||||

|---|---|---|---|---|---|---|---|---|---|---|

| 5 | 0 | 1 | 213.67 | 0.21 | 0.53 | 3.47 | 208.404 | 0.18 | 0.48 | 3.73 |

| 6 | 0 | 1 | 208.52 | 0.17 | 0.50 | 3.44 | 209.06 | 0.20 | 0.49 | 4.04 |

| 7 | 0 | 1 | 210.56 | 0.26 | 0.53 | 3.46 | 210.63 | 0.19 | 0.49 | 3.86 |

| 8 | 0 | 1 | 212.52 | 0.26 | 0.52 | 3.66 | 212.12 | 0.17 | 0.49 | 3.89 |

| 9 | 0 | 1 | 210.51 | 0.25 | 0.56 | 3.64 | 210.49 | 0.18 | 0.48 | 3.88 |

| 10 | 0 | 1 | 211.33 | 0.27 | 0.53 | 3.55 | 210.73 | 0.20 | 0.51 | 3.95 |

| 5 | 1 | 1 | 215.70 | 0.33 | 0.56 | 3.78 | 208.59 | 0.19 | 0.50 | 4.07 |

| 6 | 1 | 1 | 220.54 | 0.23 | 0.51 | 3.97 | 214.44 | 0.23 | 0.50 | 3.79 |

| 7 | 1 | 1 | 217.20 | 0.19 | 0.52 | 3.76 | 209.26 | 0.21 | 0.51 | 4.13 |

| 8 | 1 | 1 | 219.03 | 0.27 | 0.53 | 3.71 | 213.90 | 0.22 | 0.53 | 4.61 |

| 9 | 1 | 1 | 220.60 | 0.52 | 0.59 | 3.86 | 215.56 | 0.20 | 0.52 | 4.23 |

| 10 | 1 | 1 | 225.69 | 0.21 | 0.52 | 3.86 | 208.41 | 0.18 | 0.48 | 4.02 |

| 5 | 0 | 2 | 412.73 | 0.22 | 0.48 | 3.48 | 206.90 | 0.16 | 0.46 | 3.99 |

| 6 | 0 | 2 | 218.32 | 0.22 | 0.52 | 3.66 | 215.56 | 0.23 | 0.52 | 4.01 |

| 7 | 0 | 2 | 208.80 | 0.18 | 0.51 | 3.66 | 208.98 | 0.20 | 0.51 | 3.84 |

| 5 | 2 | 2 | 227.50 | 0.22 | 0.49 | 4.60 | 217.95 | 0.22 | 0.52 | 4.44 |

| 6 | 2 | 2 | 222.80 | 0.25 | 0.51 | 4.34 | 212.76 | 0.25 | 0.52 | 4.69 |

| 7 | 2 | 2 | 217.91 | 0.24 | 0.53 | 4.52 | 221.07 | 0.23 | 0.51 | 4.80 |

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations. |

© 2021 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Share and Cite

Zarzycki, K.; Ławryńczuk, M. LSTM and GRU Neural Networks as Models of Dynamical Processes Used in Predictive Control: A Comparison of Models Developed for Two Chemical Reactors. Sensors 2021, 21, 5625. https://doi.org/10.3390/s21165625

Zarzycki K, Ławryńczuk M. LSTM and GRU Neural Networks as Models of Dynamical Processes Used in Predictive Control: A Comparison of Models Developed for Two Chemical Reactors. Sensors. 2021; 21(16):5625. https://doi.org/10.3390/s21165625

Chicago/Turabian StyleZarzycki, Krzysztof, and Maciej Ławryńczuk. 2021. "LSTM and GRU Neural Networks as Models of Dynamical Processes Used in Predictive Control: A Comparison of Models Developed for Two Chemical Reactors" Sensors 21, no. 16: 5625. https://doi.org/10.3390/s21165625

APA StyleZarzycki, K., & Ławryńczuk, M. (2021). LSTM and GRU Neural Networks as Models of Dynamical Processes Used in Predictive Control: A Comparison of Models Developed for Two Chemical Reactors. Sensors, 21(16), 5625. https://doi.org/10.3390/s21165625