To verify the advantages of the improved model over other detection models, a large number of experiments are carried out to demonstrate its performance.

6.2. The Performance of Different Models in Training

In the training process, the model updates its parameters from the training set to achieve better performance. To verify the effect of the CSP1_X and CSP2_X modules on the improved model, this paper compares its training performance with that of other object detection models, as shown in Table 5.

It can be seen that the parameters of this model are 15.9 MB smaller than those of YOLO-v4 and 13.5 MB smaller than those of YOLO-v3. At the same time, the model size is 0.371 and 0.387 times that of YOLO-v4 and YOLO-v3, respectively. Under the same conditions, the training time of this model is 2.834 h, which is the lowest of all the models compared in the experiment.

In Faster R-CNN, the Region Proposal Network (RPN) generates W × H × K candidate regions, which increases the computational cost. Meanwhile, Faster R-CNN not only retains the ROI-Pooling layer but also uses a fully connected layer in the ROI-Pooling layer, which introduces many repeated operations into the network and thus reduces the training speed of the model.
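To give a sense of scale for the W × H × K candidate regions mentioned above, the following minimal sketch simply multiplies the three factors. The function name and the feature-map size and anchor count used in the example are hypothetical values chosen for illustration; they are not taken from the experiments in this paper.

```python
def rpn_candidate_count(feature_w: int, feature_h: int, anchors_per_location: int) -> int:
    """Number of candidate regions on a W x H feature map with K anchors per location."""
    return feature_w * feature_h * anchors_per_location


# Hypothetical sizes, chosen only to illustrate how quickly the count grows.
print(rpn_candidate_count(feature_w=50, feature_h=38, anchors_per_location=9))  # 17100
```

Every one of these candidates has to be scored and filtered, which is one reason the two-stage pipeline costs more than a single-stage detector.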

6.3. Comparison of Inference Time and Real-Time Performance

In this paper, video is used to verify the real-time performance of the algorithm. FPS (Frames Per Second) is often used to characterize the real-time performance of a model: the larger the FPS, the better the real-time performance. In addition, the adaptive image scaling method is used to verify the reliability of the algorithm in the inference stage, as shown in Table 6.
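As a rough illustration of how an FPS figure can be obtained, the sketch below divides the number of processed frames by the elapsed wall-clock time. The helper names `fps_from_timing` and `measure_fps`, and the stand-in detector that only sleeps, are assumptions made for this example; the paper does not describe its timing code.

```python
import time
from typing import Callable, Iterable


def fps_from_timing(num_frames: int, elapsed_seconds: float) -> float:
    """Frames per second given a frame count and the time spent processing them."""
    if num_frames <= 0 or elapsed_seconds <= 0:
        return 0.0
    return num_frames / elapsed_seconds


def measure_fps(frames: Iterable[object], detect: Callable[[object], object]) -> float:
    """Run `detect` on every frame and report the average FPS over the whole run."""
    start = time.perf_counter()
    num_frames = 0
    for frame in frames:
        detect(frame)  # hypothetical detector call; its result is ignored here
        num_frames += 1
    return fps_from_timing(num_frames, time.perf_counter() - start)


# Stand-in detector that only sleeps; a real model would run inference here.
print(measure_fps(range(20), lambda frame: time.sleep(0.005)))
```

Averaging over many frames, rather than timing a single frame, smooths out start-up costs and per-frame jitter.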

In the present work, we use the same picture to calculate the test time and compare the total inference time on the test set. It can be seen from the table that the adaptive image scaling algorithm effectively reduces the size of the red edges at both ends of the image, and the detection time consumed in the inference process is 144.7 s, which is 6.4 s less than that of YOLO-v4. However, thanks to its model structure, SSD has the shortest inference time. Faster R-CNN takes the longest time in inference, which is a common feature of two-stage algorithms. Meanwhile, our algorithm reaches 54.57 FPS, which is the highest among all the compared algorithms, while Faster R-CNN reaches the lowest.
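The idea of adaptive image scaling can be sketched as follows: the image is resized with its aspect ratio preserved, and only the minimum padding needed to make each side a multiple of the network stride is added, instead of padding all the way to a square. This is a minimal sketch of a common letterbox convention, not the paper's implementation; the function name, the target size of 416 and the stride of 32 are assumptions made for illustration.

```python
from typing import Tuple


def adaptive_scale(src_w: int, src_h: int, target: int = 416, stride: int = 32) -> Tuple[int, int, int, int]:
    """Return (new_w, new_h, pad_w, pad_h) for aspect-preserving resizing with minimal padding.

    `target` and `stride` are assumed values, not settings reported in the paper.
    """
    ratio = min(target / src_w, target / src_h)  # shrink factor that fits both sides
    new_w, new_h = round(src_w * ratio), round(src_h * ratio)
    # Pad only up to the next multiple of the stride rather than up to `target`.
    pad_w = (target - new_w) % stride
    pad_h = (target - new_h) % stride
    return new_w, new_h, pad_w, pad_h


# An 800 x 600 image becomes 416 x 312 and needs only 8 rows of padding (416 x 320),
# whereas padding to a full 416 x 416 square would add 104 rows.
print(adaptive_scale(800, 600))  # (416, 312, 0, 8)
```

Fewer padded pixels mean fewer wasted computations per image, which is consistent with the shorter inference time reported above.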

6.5. Model Testing

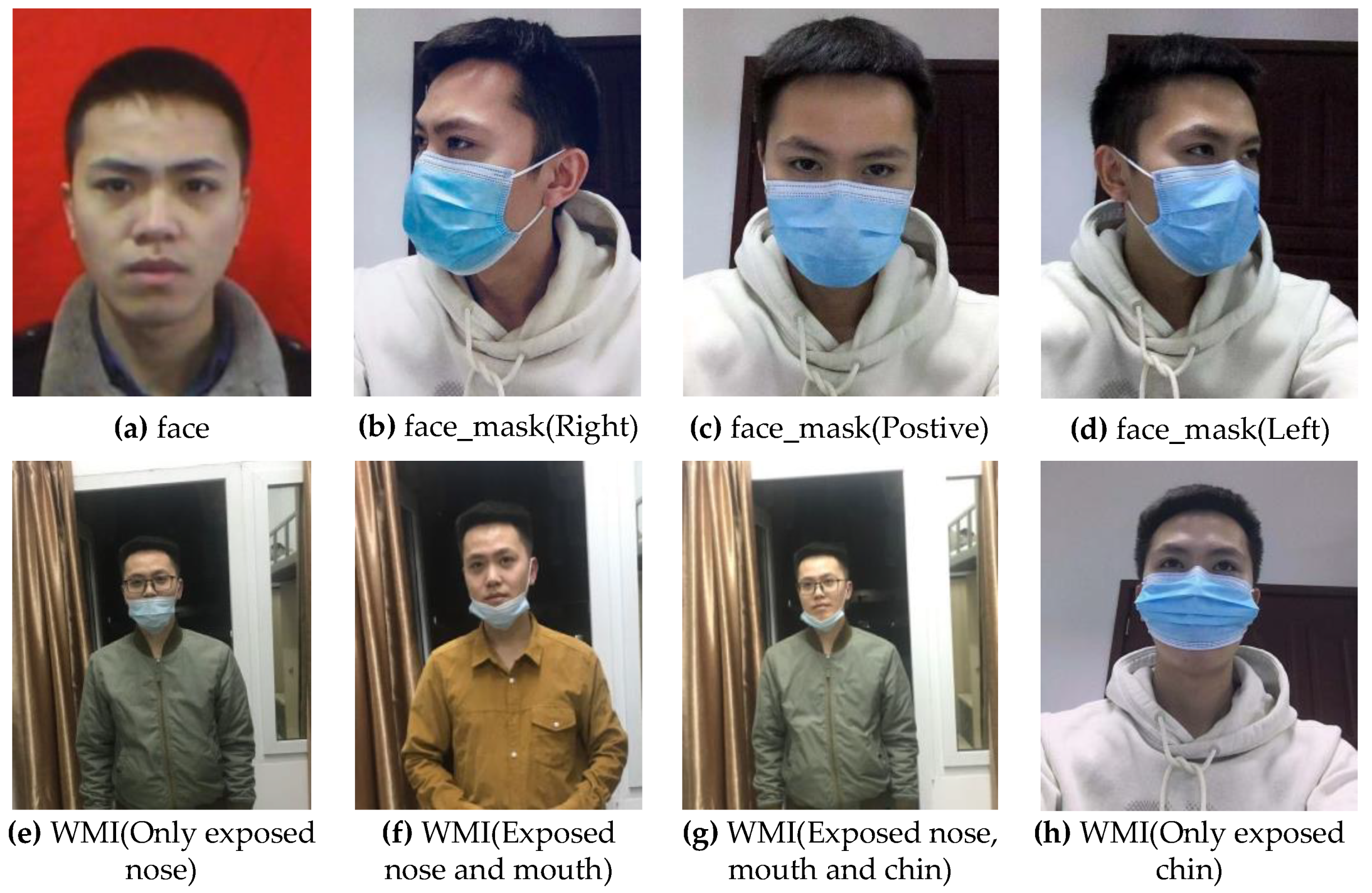

After the model training is completed, the trained weights are used to test the model, and the model is evaluated from many aspects. For our face mask data set, the test results can be classified into three categories: $TP$ (true positive) means that the categories in the test set are the same as the test results; $FP$ (false positive) means that the detected object category is inconsistent with the real object category; and $FN$ (false negative) indicates that the real sample is detected as the opposite result or is not detected. For all positive cases judged by the model, the number is $TP + FP$, so the proportion of real cases $TP$ is called the precision rate, which represents the proportion of real cases among the samples detected as positive by the model, as shown in Equation (7).

$$\mathrm{Precision} = \frac{TP}{TP + FP} \tag{7}$$

For all positive examples in the test set, the number is $TP + FN$. Therefore, the recall rate is used to measure the ability of the model to detect the real cases in the test set, as shown in Equation (8).

$$\mathrm{Recall} = \frac{TP}{TP + FN} \tag{8}$$
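The precision and recall rates described above can be computed directly from the three counts. The sketch below assumes the standard definitions (true positives divided by all detections, and true positives divided by all ground-truth objects); the function names and the counts in the example are hypothetical and are not results from this paper.

```python
def precision(tp: int, fp: int) -> float:
    """Share of the model's positive detections that are correct."""
    detected = tp + fp
    return tp / detected if detected else 0.0


def recall(tp: int, fn: int) -> float:
    """Share of the ground-truth objects that the model finds."""
    actual = tp + fn
    return tp / actual if actual else 0.0


# Hypothetical counts for illustration only.
print(precision(tp=90, fp=10))  # 0.9
print(recall(tp=90, fn=30))     # 0.75
```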

To characterize the precision of the model, this article introduces the $AP$ (Average Precision) and $mAP$ (mean Average Precision) indicators to evaluate the accuracy of the model, as shown in Equations (9) and (10).

$$AP = \int_{0}^{1} P(R)\,dR \tag{9}$$

$$mAP = \frac{1}{N}\sum_{i=1}^{N} AP_i \tag{10}$$

Among them, $P$, $R$ and $N$ represent the precision, the recall rate and the total number of object categories, respectively.
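One common way to evaluate Average Precision numerically is to take the area under the precision-recall curve after making precision monotonically non-increasing (all-point interpolation). The sketch below follows that convention as an assumption; the paper does not state which interpolation it uses, and the function names, class names and sample points are hypothetical.

```python
from typing import Dict, Sequence


def average_precision(recalls: Sequence[float], precisions: Sequence[float]) -> float:
    """Area under the precision-recall curve; points must be sorted by increasing recall."""
    r = [0.0] + list(recalls) + [1.0]
    p = [0.0] + list(precisions) + [0.0]
    # Replace each precision with the maximum precision at any higher recall.
    for i in range(len(p) - 2, -1, -1):
        p[i] = max(p[i], p[i + 1])
    # Sum the rectangles between consecutive recall values.
    return sum((r[i + 1] - r[i]) * p[i + 1] for i in range(len(r) - 1))


def mean_average_precision(ap_per_class: Dict[str, float]) -> float:
    """Mean of the per-category AP values."""
    return sum(ap_per_class.values()) / len(ap_per_class)


# Hypothetical curve: precision 1.0 up to recall 0.5, then 0.5 up to recall 1.0.
ap = average_precision(recalls=[0.5, 1.0], precisions=[1.0, 0.5])
print(ap)  # 0.75
print(mean_average_precision({"class_a": ap, "class_b": 0.25}))  # 0.5
```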

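From Equations (7) and (8), it can be seen that there is a trade-off between precision rate and recall rate. Therefore, the comprehensive evaluation index F-Measure is used to evaluate the detection ability of the model, as shown in Equation (11):

$$F_{\beta} = \frac{(1 + \beta^{2}) \cdot \mathrm{Precision} \cdot \mathrm{Recall}}{\beta^{2} \cdot \mathrm{Precision} + \mathrm{Recall}} \tag{11}$$

When $\beta$ = 1, $F_1$ represents the harmonic average of precision rate and recall rate, as shown in Equation (12):

$$F_1 = \frac{2 \cdot \mathrm{Precision} \cdot \mathrm{Recall}}{\mathrm{Precision} + \mathrm{Recall}} \tag{12}$$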
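The F-Measure can be sketched in a few lines, assuming the standard weighted harmonic mean of precision and recall with weight β. The function name and the input values are hypothetical and serve only to show that β = 1 reduces to the plain harmonic mean.

```python
def f_measure(precision: float, recall: float, beta: float = 1.0) -> float:
    """Weighted harmonic mean of precision and recall; beta = 1 gives the F1 score."""
    denominator = beta ** 2 * precision + recall
    if denominator == 0:
        return 0.0
    return (1 + beta ** 2) * precision * recall / denominator


# Hypothetical precision and recall values for illustration only.
print(round(f_measure(0.9, 0.75), 4))          # 0.8182
print(round(f_measure(0.9, 0.75, beta=2), 4))  # 0.7759
```

A β greater than 1 weights recall more heavily, which is why the second value moves toward the lower recall figure.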

If $F_1$ is higher, the test of the model will be more effective. We use 2161 images with a total of 2213 objects as the test set. The test results of the model with IOU = 0.5 are shown in Table 8.

It can be seen from Table 8 that the model in this paper reaches the maximum value in $TP$ and the minimum value in $FN$, and this means that the model itself has good detection ability for samples. At the same time, the model reaches the optimal value in the $F_1$ index compared with other models.

To further compare the detection effect of our model with YOLO-v4 and YOLO-v3 on each category in the test set, $AP$ value comparison experiments of several models are carried out under the same experimental environment, as shown in Table 9.

It can be seen from Table 9 that the $AP$ value of our model is higher than that of YOLO-v4, YOLO-v3, SSD and Faster R-CNN under different IOUs; thus, the average precision of our model is effectively verified. However, in the case of SSD in [email protected], its $AP$ in the category of “WMI” reaches the highest value.

In this paper, $mAP$ is introduced to measure the detection ability of the model for all categories, and the model is tested on IOU = 0.5, IOU = 0.75 and IOU = 0.5:0.05:0.95 to further evaluate the comprehensive detection ability of the model, as shown in Table 10.
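To make the IOU settings above concrete, the sketch below computes the IOU of two axis-aligned boxes and lists the thresholds implied by the range 0.5:0.05:0.95. Averaging a metric over those thresholds is the COCO-style convention and is assumed here; the paper does not spell out its procedure, and the function names, the box format `(x1, y1, x2, y2)` and the sample boxes are hypothetical.

```python
from typing import List, Sequence, Tuple

Box = Tuple[float, float, float, float]  # assumed format: (x1, y1, x2, y2)


def iou(box_a: Box, box_b: Box) -> float:
    """Intersection over union of two axis-aligned boxes."""
    inter_w = max(0.0, min(box_a[2], box_b[2]) - max(box_a[0], box_b[0]))
    inter_h = max(0.0, min(box_a[3], box_b[3]) - max(box_a[1], box_b[1]))
    intersection = inter_w * inter_h
    area_a = (box_a[2] - box_a[0]) * (box_a[3] - box_a[1])
    area_b = (box_b[2] - box_b[0]) * (box_b[3] - box_b[1])
    union = area_a + area_b - intersection
    return intersection / union if union > 0 else 0.0


def iou_thresholds() -> List[float]:
    """Thresholds from 0.5 to 0.95 in steps of 0.05 (ten values)."""
    return [round(0.5 + 0.05 * i, 2) for i in range(10)]


def mean_over_thresholds(values: Sequence[float]) -> float:
    """Average of a metric evaluated once per IOU threshold."""
    return sum(values) / len(values)


# Two 10 x 10 boxes that overlap on half their width: 50 / 150.
print(round(iou((0, 0, 10, 10), (5, 0, 15, 10)), 4))  # 0.3333
print(iou_thresholds())  # [0.5, 0.55, 0.6, 0.65, 0.7, 0.75, 0.8, 0.85, 0.9, 0.95]
```

A detection with an IOU of 0.6 against its ground-truth box counts as correct at the 0.5 threshold but not at 0.75, which is why results at stricter thresholds are lower.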

It can be seen that when IOU = 0.5, the $mAP$ of this model is 3.1% higher than that of YOLO-v4 and 1.1% higher than that of SSD. Under the more rigorous condition of IOU = 0.5:0.95, the experiment shows that mAP@0.5:0.95 is 16.7% and 15.6% higher than that of YOLO-v4 and SSD, respectively. This shows that the model is superior to YOLO-v4 and SSD in comprehensive performance. It is worth pointing out that the $mAP$ of Faster R-CNN is higher than that of YOLO-v4 and YOLO-v3, but its FPS is the lowest, which reflects the common characteristics of two-stage detection algorithms: high detection accuracy and low real-time performance. We also compare the test performance of the different models visually, as shown in Figure 12.

The pictures used in the comparative experiment in Figure 12 are from the test set of this paper. Each experiment is conducted in the same environment, and the visual analysis is carried out with a confidence level of 0.5. In the figure, the number of faces in the images increases from left to right, so the distribution of faces becomes denser, and the problems of occlusion, multiple scales and density in a complex environment are fully considered, which helps to demonstrate the robustness and generalization ability of the model. From the analysis of the figure, it can be found that the model used in this paper performs better than the other four in the test results, but all the models have poor detection results for severely occluded faces and half faces. We attribute this problem to the lack of images with seriously missing face features in the data set, which leads to less learning of these features and poor generalization ability of the model. Therefore, one of the main tasks in the future is to expand the data set and enrich the diversity of features.

6.7. Analysis of Ablation Experiment

We use ablation experiments to analyze the influence of the improved method on the performance of the model. The experiments are divided into five groups for comparison. The first group is YOLO-v4. In the second group, the CSP1_X module is introduced into the backbone feature extraction network of YOLO-v4. In the third group, the CSP2_X module is introduced into the Neck module of YOLO-v4. In the fourth group, both the CSP1_X and CSP2_X modules are added to YOLO-v4 at the same time. The last group is the model proposed in this article. The experimental results are shown in Table 12.

It can be seen from the table that the use of the CSP1_X module in the backbone feature extraction network enhances the $AP$ values of the three categories, and at the same time, the $mAP$ and FPS are increased by 2.7% and 19.64 FPS, respectively, thus demonstrating the effectiveness of CSP1_X. Different from YOLO-v4, this paper takes advantage of the CSPDarkNet53 module and introduces the CSP2_X module into Neck to further enhance the ability of the network to learn semantic information. Experiments show that CSP2_X also improves the $AP$ values of the three categories, and the $mAP$ and FPS are increased by 2.3% and 21.62 FPS, respectively, compared with YOLO-v4. From the comparative experiments of the fourth and the fifth groups, we find that the H-swish activation function significantly improves the detection accuracy of the model.

In summary, the improved strategies proposed in this paper based on YOLO-v4 are meaningful for promoting the recognition and detection of face masks in complex environments.