1. Introduction

The development of new technological tools to aid surgical navigation has attracted the interest of a considerable number of research groups in recent years. Waelkens et al. [1] compared surgical navigation to Global Positioning System (GPS)-based navigation. GPS-based navigation consists of detecting the user’s current position and then identifying the most suitable path to the target destination, whereas surgical navigation requires detecting the surgical tool’s current position and then identifying the optimal route to the surgical target (e.g., a tumor). To do that, the system uses clinical images of the area of interest taken before (pre-operative) or during (intra-operative) the intervention and guides the surgeon’s movements according to the detected positions of the surgical tool. As with GPS in GPS-based navigation, accurate intra-operative detection of the surgical tool’s current position is of paramount importance for correct guidance and tracking during surgical navigation. The subsystem devoted to tracking the surgical tool’s position is called the ‘tracker’. Several technological solutions have been proposed to implement such a system, the optical and electromagnetic approaches being the most common.

Optical trackers are widely used in current interventions. In these systems, a reference object (usually referred to as a ‘fiducial marker’) is attached to the tip of the tool (or to any other point of interest), so that it can be spatially tracked by a pair of stereoscopic cameras placed at a convenient position (usually attached to the ceiling of the room). By triangulation, the camera system computes the spatial coordinates of the detected markers, which are associated with the tool’s coordinates. The markers may be either passive (e.g., near-infrared reflectors) or active (e.g., LEDs). In recent years, these systems have been combined with augmented reality (AR) tools to provide more immersive handling during surgical navigation and training of medical procedures [2], and even for marker-less surgical guiding approaches [3]. Despite this progress, challenges remain, such as misalignments between the physical and the virtual objects [4] and inaccuracies during intra-operative AR-based navigation [5]. Optical systems can provide highly accurate surgical tool tracking [6,7], but they have the drawback of requiring a direct line of sight to the markers [8]. If a body (a member of the staff) or an object (another tool, a piece of equipment, etc.) blocks this line of sight, tracking is lost. Other specific limitations are associated with intra-operative imaging resolution [9] and with misalignments between pre-operative and intra-operative images and tracking [10]. Two examples of such systems currently available on the market are the Polaris® system from NDI [11] and custom systems based on OptiTrack Motion Capture [12].
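The triangulation step mentioned above can be illustrated with a minimal sketch. It uses linear (DLT) triangulation from two calibrated views, which is one common textbook approach and not necessarily what any commercial tracker implements. The projection matrices `P1` and `P2`, the 0.2 m baseline, and the marker position are hypothetical values chosen only for illustration.

```python
import numpy as np

def triangulate(P1, P2, x1, x2):
    """Linear (DLT) triangulation of one marker seen by two cameras.

    P1, P2: 3x4 projection matrices; x1, x2: 2D image coordinates.
    """
    # Each view contributes two linear constraints on the homogeneous 3D point.
    A = np.array([
        x1[0] * P1[2] - P1[0],
        x1[1] * P1[2] - P1[1],
        x2[0] * P2[2] - P2[0],
        x2[1] * P2[2] - P2[1],
    ])
    # The solution is the right singular vector with the smallest singular value.
    _, _, vt = np.linalg.svd(A)
    X = vt[-1]
    return X[:3] / X[3]

def project(P, X):
    """Project a 3D point with a 3x4 projection matrix (pinhole model)."""
    x = P @ np.append(X, 1.0)
    return x[:2] / x[2]

# Hypothetical stereo pair: identity intrinsics, second camera offset along x.
P1 = np.hstack([np.eye(3), np.zeros((3, 1))])
P2 = np.hstack([np.eye(3), np.array([[-0.2], [0.0], [0.0]])])
marker = np.array([0.05, -0.02, 1.5])  # assumed marker position, in meters

estimate = triangulate(P1, P2, project(P1, marker), project(P2, marker))
print(estimate)
```

If either camera loses sight of the marker, one pair of constraints disappears and the point can no longer be recovered, which is the line-of-sight limitation discussed above.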

Electromagnetic trackers remove the direct line-of-sight requirement. In this case, the markers are made of ensembles of small sensor coils, usually housed in a small case, again attached to the point to be tracked. The tracker is able to detect the spatial location of these markers from the variations in the electromagnetic field caused by their interaction with it. This detection can be made even when there is no direct line of sight between the tracker and the marker [13], thereby allowing free movement of the surgical team and making these trackers suitable for operations with minimal incision. However, these systems show two main drawbacks. Firstly, their effective action field is considerably smaller than that of the optical ones [14], so their application is limited to operations involving small areas, such as otorhinolaryngological ones. Secondly, due to the magnetic nature of the system, the measurements made by the tracker to detect the position of the markers can be altered by magnetic field distortion caused by metallic objects and electrically powered equipment in the surgical scenario [13,15], which poses a considerable limitation on the materials and equipment (including the tools themselves) that can be used for the intervention. An example of an electromagnetic system currently available on the market is the Aurora® system from NDI [16]. Hybrid optical and electromagnetic tracking systems have also been proposed [13], some including augmented reality tools as well [17], although they retain the above-mentioned limitations.

Given the limitations associated with each method, new technological solutions to overcome them are still being pursued. Among the available options, microwave imaging emerges as an interesting alternative that can provide continuous and non-invasive intra-operative surgical tracking while overcoming the line-of-sight and magnetic interaction problems [18]. This technique is based on the differences in dielectric constant and dielectric losses between the biological tissues involved. These variations in permittivity can be identified by analyzing the changes in the response of microwave antennas [19]. From these changes in the dielectric properties of the tissues, their boundaries can be identified and the biomedical image can be built [20]. These techniques are widely used in several fields, such as cancer detection and management [21,22]. Considering the usual contrast in dielectric properties, these techniques could also be applied to detect both surgical targets (such as tumors) and surgical tools [23]. In this work, we study the feasibility of a microwave antenna-based imaging system for intra-operative surgical navigation and validate it experimentally.

4. Discussion

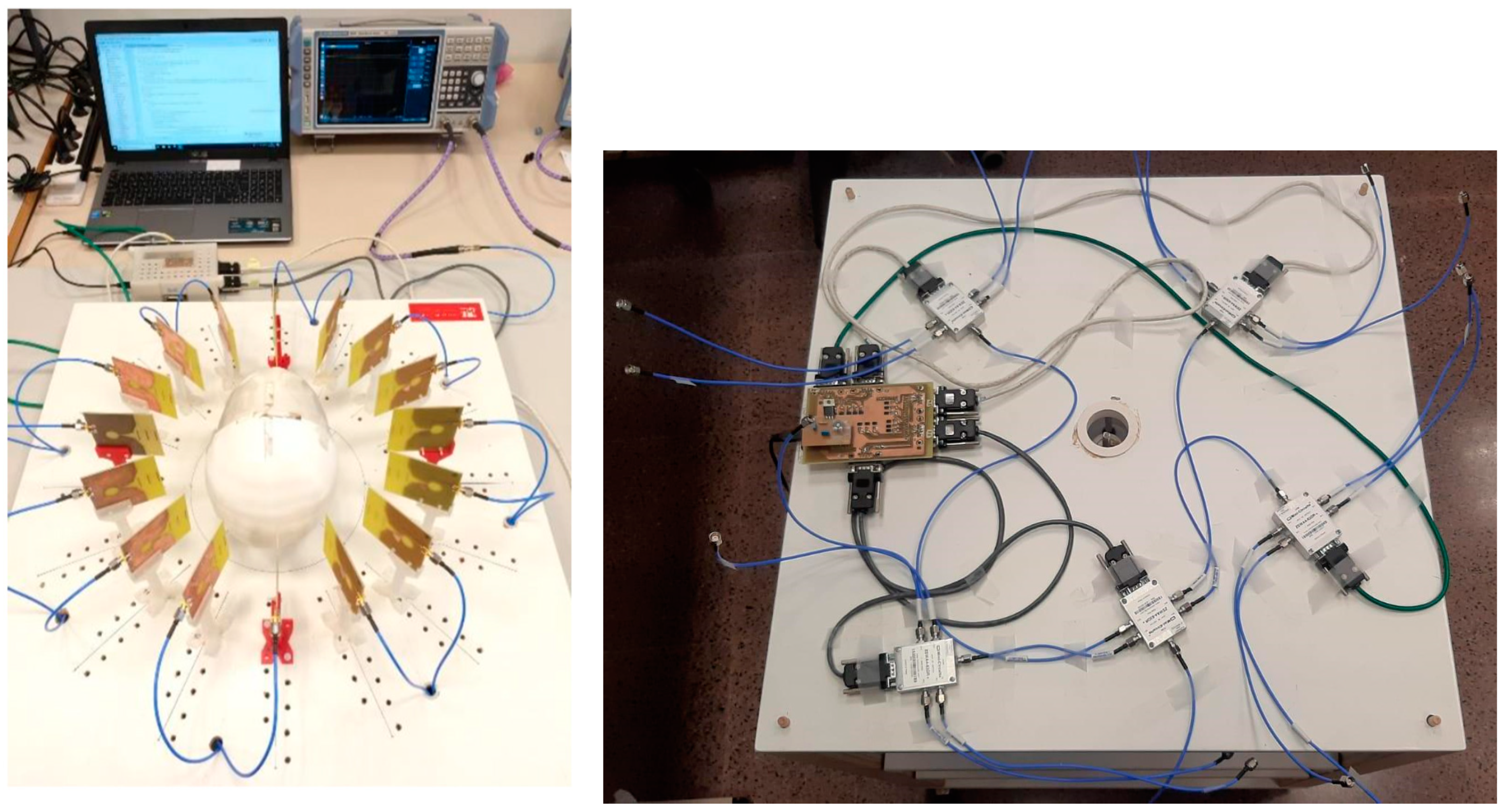

A microwave-based imaging system for cranial intraoperative tool navigation was proposed, and its performance was assessed. The system is composed of 16 twin Vivaldi-like antennas equally spaced around a circumference that surrounds the cranial surgery area, all pointing to its center. An automated electronic switching system is used to drive the antennas and make the corresponding reflection measurements. The responses of the antennas are affected by the reflections of the electromagnetic waves on the cranium and on foreign objects, such as tumors or surgical tools. These responses are further processed to locate the desired objects and provide surgical tool navigation. It should be noted that the maximum power emitted by the antennas is lower than 1 mW, which is less than the usual power involved in a cellphone call [29]. In terms of emitted power, the proposed system is therefore suitable for use in clinical scenarios.

Two methods were studied to process the responses of the antennas and build the medical image. These methods, viz. DAS and DMAS, model the surgical environment spatially by assigning a computed intensity to each pixel of the image, according to the corresponding formulation and the time-domain response of each antenna. The following paragraphs discuss the experimental validation and the results of the proposed system using these two methods.
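The pixel-wise intensity assignment described above can be sketched as follows. This is a minimal illustration that uses the common textbook forms of delay-and-sum (DAS) and delay-multiply-and-sum (DMAS), not necessarily the exact formulation used in this work. It assumes monostatic reflection measurements (each antenna samples its own echo), a uniform propagation speed, and a synthetic point scatterer. The ring radius, sampling rate, pulse width and grid are hypothetical values.

```python
import numpy as np

C0 = 299_792_458.0  # speed of light in vacuum, m/s

def delayed_samples(signals, fs, antennas, pixel, eps_r=1.0):
    """For each antenna, pick the sample at the round-trip delay to `pixel`."""
    v = C0 / np.sqrt(eps_r)                      # assumed uniform propagation speed
    dist = np.linalg.norm(antennas - pixel, axis=1)
    idx = np.rint(2.0 * dist / v * fs).astype(int)
    idx = np.clip(idx, 0, signals.shape[1] - 1)  # stay inside the recorded trace
    return signals[np.arange(len(antennas)), idx]

def das_pixel(s):
    """DAS: square of the coherent sum of the delayed samples."""
    return np.sum(s) ** 2

def dmas_pixel(s):
    """DMAS: square of the sum of all pairwise products s_i * s_j with i < j."""
    pair_sum = (np.sum(s) ** 2 - np.sum(s ** 2)) / 2.0
    return pair_sum ** 2

def build_image(signals, fs, antennas, grid_x, grid_y, pixel_fn, eps_r=1.0):
    """Evaluate `pixel_fn` on every grid point and normalize to the peak."""
    img = np.zeros((len(grid_y), len(grid_x)))
    for r, y in enumerate(grid_y):
        for c, x in enumerate(grid_x):
            s = delayed_samples(signals, fs, antennas, np.array([x, y]), eps_r)
            img[r, c] = pixel_fn(s)
    return img / img.max()

# Synthetic demo: 16 antennas on a ring (hypothetical 0.15 m radius), one scatterer.
angles = 2 * np.pi * np.arange(16) / 16
antennas = 0.15 * np.column_stack([np.cos(angles), np.sin(angles)])
target = np.array([0.02, -0.03])
fs, n_samples, sigma = 200e9, 512, 50e-12        # hypothetical acquisition values
t = np.arange(n_samples) / fs
tau = 2.0 * np.linalg.norm(antennas - target, axis=1) / C0
signals = np.exp(-0.5 * ((t[None, :] - tau[:, None]) / sigma) ** 2)  # Gaussian echoes

grid = np.linspace(-0.1, 0.1, 41)
das_img = build_image(signals, fs, antennas, grid, grid, das_pixel)
dmas_img = build_image(signals, fs, antennas, grid, grid, dmas_pixel)
```

Both images are built from the same set of measurements, so running the two formulations side by side only adds computation, not hardware.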

Figure 12 shows the capability of the proposed system to scan the cranium and detect the tumor within the experimental setup considered here. Both algorithms show acceptable detection capabilities in this regard. In the images in which only the cranium is involved (Figure 12 top), the DAS algorithm provides brighter images, which show a higher level of detail. This should be interpreted with caution, because it also implies the appearance of spurious details, such as the reflected beams captured by each antenna, which do not correspond to any physical object in the scenario. That being said, as long as the spurious information is static and previously known (such as these beams, which are directly related to the position of each antenna), it could be easily eliminated. The DMAS algorithm, however, provides a cleaner image, with almost no spurious details, but with a more poorly defined cranium. Conversely, when the tumor is involved (Figure 12 bottom), DMAS seems to show better detection capabilities, providing a clearer, better-defined location of the tumor. In this case, the high-intensity reflections from the tumor material (in comparison to those from the cranium material) hinder the detection of the cranium shape in both methods: it is less visible (but detectable) with the DAS algorithm and almost invisible with the DMAS algorithm. Considering these pictures, both algorithms seem to show strengths and weaknesses in different aspects, and therefore a detailed analysis of both is worthwhile. Ostensibly, the DAS image can be more suitable for calibration tasks (for example, taking reference measurements of the cranium’s dimensions) and for detection and tracking of events within the cranium area, which is better resolved with this algorithm, whereas DMAS seems to perform better at accurately locating foreign objects within the image frame, at the cost of losing information related to the cranium shape.

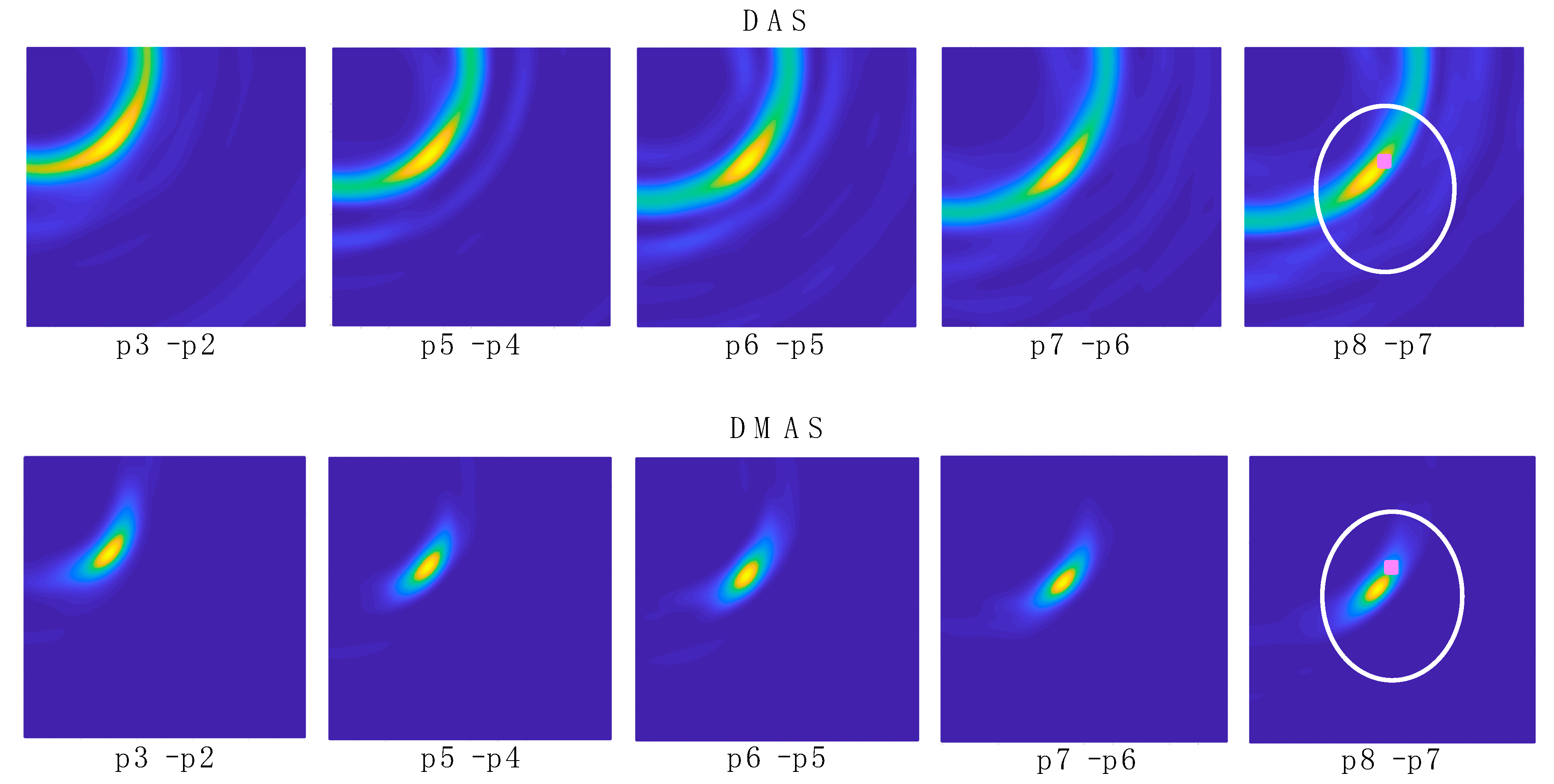

In the experimental results for the navigation task shown in Figure 13, it can be seen how the long shape of the tool hinders the clear and direct identification of the tool’s final-end position, especially for the last positions (with the tool entering the cranium, a longer portion of the tool’s body lies within the image). Indeed, the long-shaped tool causes different antennas, depending on their position, to detect many reflections along the tool’s body. This information could be useful for further processing of the images in the final system, so that the full shape of the tool can be depicted in the image shown in the user-oriented graphical interface. However, for the pursued navigation assistance, considering the binarization process proposed here, this phenomenon leads to the appearance in the binarized images of several areas with several associated centroids, which complicates the detection of the current position of the tool. In addition, objects other than the tool could be detected, leading to false positions for the tool. For example, it can be seen that the tumor is detected in positions p1 and p2 with both methods, since the tool had not yet arrived at the detection area at those moments. Therefore, for navigation purposes, we propose the differential method, in which the prior image is subtracted from the current one, as shown in Figure 14, so that the undesired, static details are eliminated and only the information related to the evolution of the tool’s trajectory is tracked.

Figure 14 shows the images obtained with the differential method for navigation purposes, for both algorithms. Here, the information obtained from each image is only related to the tool’s trajectory, i.e., the difference in the tool’s final-end position between the previous measurement and the current one. These images provide a clear view of the trajectory followed by the tool, starting outside the measurement area and following a straight line towards approximately the tumor’s position. This approach to the tumor can be seen by comparing the images “p8–p7” in Figure 14 with the bottom images in Figure 12. The proposed process, including the binarization of the resulting image and the computation of the centroid of the high-luminance region, allows the detection of the tool’s final-end coordinates in the current position, thereby tracking the tool’s navigation. The results of this position detection process again confirm the approach of the tool to the tumor’s location, as can be seen in Table 1. Considering these results, it should be noted that: (1) the tumor’s position coordinates refer to the tumor’s exact center, which cannot be physically reached by the tool in the proposed setup due to the physical dimensions of the solid object emulating the tumor; and (2) the tool in position p2 was out of the measurement range, and no information can be obtained from this position.
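The differential, binarization and centroid steps described above can be sketched as follows. This is a minimal illustration, not the authors’ implementation. It assumes that negative differences are clipped to zero so that only newly appearing reflections remain, and that the luminance threshold is applied relative to the peak of the difference image. The function name `tool_position` and the toy 21 × 21 images are hypothetical.

```python
import numpy as np

def tool_position(prev_img, curr_img, grid_x, grid_y, threshold=0.8):
    """Estimate the tool position from two consecutive images.

    Steps: subtract the prior image, binarize at a relative luminance
    threshold, and return the centroid of the resulting white area.
    """
    # Assumption: keep only what is new in the current image.
    diff = np.clip(curr_img - prev_img, 0.0, None)
    peak = diff.max()
    if peak == 0:
        return None                      # nothing moved between the two images
    mask = diff / peak >= threshold      # binarized image (True = white)
    rows, cols = np.nonzero(mask)
    # Centroid of the white pixels, expressed in physical coordinates.
    return float(grid_x[cols].mean()), float(grid_y[rows].mean())

# Toy example on a 1 mm grid: a static blob (e.g., the tumor) and a moving tool.
grid_x = np.arange(21, dtype=float)
grid_y = np.arange(21, dtype=float)
prev_img = np.zeros((21, 21))
prev_img[5, 5] = 1.0                     # static detail, present in both images
curr_img = prev_img.copy()
curr_img[10, 15] = 0.9                   # bright part of the tool response
curr_img[11, 15] = 0.9
curr_img[12, 15] = 0.5                   # dimmer part of the tool response

pos_high = tool_position(prev_img, curr_img, grid_x, grid_y, threshold=0.8)
pos_low = tool_position(prev_img, curr_img, grid_x, grid_y, threshold=0.5)
print(pos_high, pos_low)                 # (15.0, 10.5) (15.0, 11.0)
```

In this toy case the static blob cancels out in the subtraction, and lowering the threshold from 0.8 to 0.5 enlarges the white area and shifts the centroid by 0.5 mm.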

The comparison between the positions detected with both algorithms and the reference positions obtained from the robot’s coordinates (Table 1 and Table 2) shows good agreement, and it therefore confirms the potential of the proposed system for intraoperative navigation imaging. The detected positions and the error analysis yield similar results for both algorithms. The error analysis shows smaller errors and standard deviations for both algorithms in the innermost region. This is consistent with the detected positions, in which the closer the tool is to the tumor’s position, the smaller the difference between the detected position and the reference one. Since the tumor (and the innermost region) is close to the origin of coordinates, this means that the error becomes smaller as the detected positions approach the center, which is logical given the radial configuration of the antenna system. As a consequence, the highest accuracy will be achieved for the innermost positions of the tool, located within the cranial area, meaning that the system offers its highest accuracy and resolution in the region of most interest for cranial surgery.

In this regard, the system shows a mean error of roughly 1.26 mm in the best case and 3.02 mm in the worst case for the region of interest with respect to the reference coordinates. Relative to the total dimensions of the cranium, this means errors between 0.98% (best case) and 1.78% (worst case). It should be noted that, for magnetic-based tracking systems, mean detection errors of ~0.5 ± 0.5 mm have been reported [30], which can rise to 27 mm due to interference from metallic objects [8]. For optical tracking, mean errors of 0.24 ± 1.05 mm have been reported, which can rise to 1.65 ± 5.07 mm when some cameras are occluded [7]. The detection errors reported here are also consistent with the errors reported in other microwave imaging approaches. For example, a similar system, also with 16 antennas (operating at 1–4 GHz), was used in [31] to detect intraoperative cranial inner hemorrhages, with reported detection errors between 1 and 5 mm. Since the positioning error depends on the wavelength of the highest frequency in the system (which is linked to the resolution), the results reported here are consistent with those in [31].

Figure 14 also shows that, after the subtraction of the previous image, the DAS algorithm does not provide a graphical view of the tool’s final-end position as clear and well defined as the DMAS algorithm does. Nevertheless, in this case, given the simple shape of the tool, the position detection results after the binarization and centroid computation process are quite similar for both algorithms, as shown in Table 1. That being said, visual inspection of Figure 14 suggests that the DAS algorithm is more sensitive to the luminance threshold (kept constant at 0.8 throughout the whole analysis of the results). Indeed, lower thresholds would have resulted in a sort of half-moon-shaped white area in the binarized images, instead of the ellipsoid-shaped ones obtained for 0.8, as shown in Figure 15. With the centroids being computed as the center of mass of the white area, a lower threshold would displace the finally detected position, thus yielding a greater detection error. Figure 14 confirms that this phenomenon is considerably less noticeable for the DMAS algorithm. The analysis of the detection error as a function of the luminance threshold is shown in Figure 18, which confirms this behavior. These results highlight the dependence of DAS on the luminance threshold and allow us to conclude that DMAS is more robust than DAS to variations in this parameter. Consequently, DMAS is expected to show more reliable performance when tools with more complex shapes are considered, or when rotations of the tool are involved.

Apart from this criterion, no further reasons were found to claim that one algorithm outperforms the other. It should be noted that the setup considered in this study inherently has a certain instrumental error in the reference coordinates obtained from the position of the robot, due to the vibrations of the robot’s links during the movement as well as the oscillations of the tool’s final end caused by its long shape and the tip-based holding. Therefore, given the small differences in the performance of the two algorithms (see Table 1 and Table 2), both show acceptable performance for intraoperative tool navigation tracking, and neither can be singled out as the more advantageous in terms of detection accuracy for the experimental setup considered in this study, provided that the luminance threshold is properly selected.

As a matter of fact, the raw images (before applying the differential method) for both algorithms (Figure 13) show similar information and even similar shapes for the high-luminance areas, and therefore the above-mentioned higher robustness of DMAS to the luminance threshold seems to come from the differential stage. It should be noted that the DMAS formulation inherently implies noise filtering, which often means loss of detail. DMAS differential images (Figure 14) can provide robust tracking of the tool’s final end, but the information related to the tumor position is blurred. DAS raw images (Figure 13), however, show the tumor and even the cranium shape in addition to the tool, which would allow for intraoperative tracking of the tumor. This is a highly desirable feature, and therefore the combination of the information extracted from both algorithms could provide the surgical team with highly accurate intraoperative navigation and guidance for the approach of the surgical tools to the tumor position, even when changes in the tumor’s position are involved, such as those resulting from the brain-shift effect. It should be noted that this would come only at the expense of a slightly higher computational cost, with no extra hardware required, since both algorithms would independently process the same measurements. The reported system, combining both algorithms, is thereby proposed as a potential surgical navigation system to robustly address interventions prone to tumor displacements.

The discussion in this initial study is, however, limited by the restricted validity of the experimental setup, which is notably simplified. In a more realistic scenario, including real biological tissues or phantoms mimicking them, the dielectric properties of the materials involved would be different, and the measurements would be altered accordingly. The measurements made with each antenna, upon which the images are built, are based on the reflections of the emitted electromagnetic waves while travelling through the scenario. These reflections occur when the waves cross the boundaries between consecutive media with different dielectric properties. They mostly depend on the differences in dielectric constant and conductivity across the boundary, rather than on the specific values for each medium. Although the dielectric properties of real biological tissues are evidently not the same as those in the proposed experimental setup, the performance of the proposed system can be predicted from their differences.

Focusing on the tumor detection and tracking tasks, the average dielectric constant of healthy tissues in the brain is approximately 42, whereas for tumor tissues it rises to roughly 55 due to their high water content [32,33]. This means a relative increase of about 30%, which is a sufficient difference to allow tumor detection by means of microwave imaging techniques. It should be noted that these techniques have been reported to accurately detect contrasts as low as 4% [22]. As for the surgical tool, the evident differences in the materials (chiefly metals vs. biological tissues) and their properties suggest good detection with the proposed system. All these differences lead us to expect good detection capabilities for both the tumor and the tools when more realistic phantoms are involved, or even in real surgery scenarios, thereby potentially providing the pursued RF-based real-time surgical tool tracking.
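As a rough worked example of the contrast quoted above, the relative increase in dielectric constant and the resulting reflection at the boundary can be estimated as follows. The reflection coefficient is computed under simplifying assumptions that are not part of the original analysis: a plane wave at normal incidence on a planar boundary between lossless, non-magnetic media, with conductivity neglected.

```latex
% Relative contrast between healthy tissue (eps_1 = 42) and tumor tissue (eps_2 = 55)
\frac{\varepsilon_{r,2} - \varepsilon_{r,1}}{\varepsilon_{r,1}}
  = \frac{55 - 42}{42} \approx 0.31

% Reflection coefficient at the boundary (normal incidence, lossless media assumed)
\Gamma = \frac{\sqrt{\varepsilon_{r,1}} - \sqrt{\varepsilon_{r,2}}}
              {\sqrt{\varepsilon_{r,1}} + \sqrt{\varepsilon_{r,2}}}
       = \frac{6.481 - 7.416}{6.481 + 7.416} \approx -0.067
```

Under these assumptions, roughly 7% of the incident field amplitude would be reflected at the boundary between healthy and tumor tissue. Real tissues are lossy and the boundaries are not planar, so this figure is only indicative.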

In addition, several strategies could be applied to mitigate a hypothetical loss of performance in a more realistic scenario, if required. More specifically, the detection of tumors in a real brain relies on differences in the propagation speed of the electromagnetic waves travelling through the tissues, which is notably affected by their dielectric constant (εr), as seen in (4). In this sense, some strategies could be applied for more accurate detection. For example, the εr of the materials could be characterized or estimated by means of initial measurements of the S21 parameter of active face-to-face antenna pairs. Additionally, already-known average values for the dielectric properties of biological tissues could be assumed. Another approach could be the use of filters and further processing techniques for the measured signals, so that the properties of the materials the waves travel through could be detected more accurately, and therefore a suitable propagation speed could be assigned in each case. Such techniques could include, but are not limited to, adaptive beamforming algorithms [34] and hybrid methods [35]. It should be noted that these strategies are independent of one another, and nothing prevents their simultaneous use. Consequently, all of them could be combined appropriately, so that the accuracy and detection capabilities are enhanced as much as possible, attaining solutions adapted to each specific case.
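The first strategy above, estimating εr from a transmission measurement between two facing antennas, can be sketched as follows. This is a minimal illustration that assumes the relation referred to as (4) is the usual v = c/√εr for a non-magnetic, low-loss medium, and that the one-way propagation delay has already been extracted from the time-domain S21 response. The function names, the 0.3 m antenna separation and the reference value of 42 are hypothetical, and antenna and cable delays are ignored.

```python
C0 = 299_792_458.0  # speed of light in vacuum, m/s

def estimate_eps_r(delay_s, distance_m):
    """Estimate the effective dielectric constant from a one-way transit time.

    Assumes v = C0 / sqrt(eps_r), i.e. a non-magnetic, low-loss medium that
    fills the whole path between the two facing antennas.
    """
    v = distance_m / delay_s
    return (C0 / v) ** 2

def propagation_speed(eps_r):
    """Propagation speed to use in the imaging algorithm for a given eps_r."""
    return C0 / eps_r ** 0.5

# Synthetic check: a medium with eps_r = 42 between antennas 0.3 m apart.
distance = 0.3
true_eps_r = 42.0
delay = distance / propagation_speed(true_eps_r)  # simulated S21 transit time

eps_r_est = estimate_eps_r(delay, distance)
print(eps_r_est, propagation_speed(eps_r_est))
```

Because the path between facing antennas crosses several tissues, a value obtained this way would be an effective average over the path rather than the dielectric constant of any single tissue.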