1. Introduction

As the demand for mobile and wireless communications grows, a large amount of spectrum is needed to accommodate the increasing number of mobile and wireless users. Since the available spectrum is limited, modern wireless communication systems face severe spectrum shortages. Consequently, cellular networks must support a large number of mobile users with limited spectrum resources while maintaining a high quality of service (QoS). Device-to-device (D2D) communication was introduced as a promising solution to the spectrum shortage problem [1]. With D2D technology, mobile users in proximity can communicate with each other directly without imposing heavy loads on the cellular network. The scope of D2D communications has been extended to vehicle-to-vehicle (V2V) and vehicle-to-everything (V2X) systems, and D2D is considered a key technology in fifth-generation (5G) wireless communications. Accordingly, D2D communications have attracted extensive research attention.

Since D2D communications use spectrum channels that are already occupied by cellular users, communication resources must be allocated to D2D devices in a way that improves the performance of D2D links without destroying the QoS of cellular links. Thus, resource allocation (RA) for D2D devices in the presence of cellular devices is an inherent issue of D2D communications in both theoretical and practical aspects. A large number of studies have addressed RA for D2D communications, especially in underlay cellular networks [2,3,4,5,6,7,8,9,10,11,12,13]. When different D2D links occupy distinct cellular channels, efficient one-to-one mapping techniques may be applied to RA [2]. On the other hand, when multiple D2D links are allowed to share a cellular channel, the optimal RA problem becomes NP-hard and cannot be solved analytically, especially when a large number of wireless devices are densely distributed in a cell. Hence, sub-optimal approaches with reduced complexity have been investigated to implement RA in practical D2D communication systems [14,15,16]. In [14], a two-step resource allocation scheme was introduced to maximize the sum capacity of D2D communications. In [15], a centralized resource allocation method based on difference-of-convex (DC) programming was proposed to solve a weighted sum rate maximization problem. In [16], an alternating channel assignment–power allocation scheme was proposed to maximize the sum rate of the cellular and D2D links.

As the scope of D2D is extended to mobile D2D, a dynamic RA scheme is needed for networks whose devices change their locations continuously. A dynamic RA requires a much higher system overhead and computational complexity than a static one because the resource allocation must be updated whenever the network setup, including device locations, changes. Moreover, data transmissions often need to be conducted over multiple time steps, e.g., when long data frames are segmented into multiple short ones because of limited channel bandwidth. The number of time steps may be determined by the data size, channel bandwidth, battery life of UEs, etc. RA becomes more complex and harder to implement if a sequence of resources must be allocated over multiple time steps. Thus, sub-optimal RA schemes with low complexity are in high demand in modern communication networks.

Recently, deep learning (DL) and reinforcement learning (RL) have received attention from a wide range of fields. DL has been actively adopted for optimization, system identification, recognition, and classification in many applications, including wireless communications. RL is a mechanism by which agents learn what to do, i.e., how to map situations to actions, in order to maximize a reward through trial-and-error search. Deep RL (DRL) incorporates DL into RL so that agents can make decisions from unstructured input data; the deep Q-network (DQN) is a well-known example of DRL [17]. DRL has also been widely applied to various optimization and policy determination problems, including those in wireless communication systems. Because DRL is an efficient mechanism for sequential decision-making, it is a natural approach for RA over multiple time steps. DRL is therefore considered a good approach for determining the resources of D2D devices in a sub-optimal manner with lower complexity in practical communication networks. In the training phase, the artificial neural networks (ANNs) of the agents are intensively trained for as many situations as possible. Then, in the actual operational phase, the agents simply observe situations and draw sub-optimal solutions to the RA problem using the trained ANNs.

Many research works have applied learning techniques to RA for D2D communications [18,19,20,21,22,23,24,25,26,27,28,29,30,31,32,33,34]. As learning principles for training RA units, DL [18,19,20,21,22], RL [23,24,25], and DRL [26,27,28,29,30,31,32,33,34] have been widely utilized. Depending on which entity determines the resource allocation for D2D devices, two types of RA schemes have been proposed: centralized RA [18,19,20,23,26,27,28,31] and decentralized RA [20,21,22,23,25,29,30,32,33,34]. In the case of DRL-based RA schemes, a single-agent framework is used for centralized RA [26,27,28,31], while a multi-agent framework is used for decentralized RA [21,22,23,24,25,29,30,32,33,34]. In essence, centralized single-agent RA schemes attain a high QoS in the communication network at the cost of high computational complexity. Decentralized multi-agent RA schemes, on the other hand, require less computation but yield degraded QoS. To obtain a high QoS with low computational complexity, we adopt a multi-agent structure within a centralized RA framework, which is the main distinction from preceding works.

Various forms of DL-based RA schemes have been proposed. A hybrid power allocation scheme was proposed to maximize the sum rate of D2D users while mitigating QoS constraint violations [18], and a channel and power allocation scheme for overlay D2D networks was proposed to maximize the sum rate of D2D pairs under a minimum rate constraint [19]. A DL framework for optimal RA in multichannel cellular systems with D2D communications was proposed to maximize the overall spectral efficiency [20], and a random graph-based sparse long short-term memory (LSTM) network for joint resource management was proposed to maximize the determinacy of latency in cellular machine-to-machine communications [21]. In [22], an RA scheme for unmanned aerial vehicle (UAV)-assisted cellular V2X (C-V2X) communications was proposed to maximize the bandwidth efficiency while satisfying the rate and latency requirements of users.

RA schemes using RL in the training phase have also been proposed. An energy optimization technique was proposed for 5G wireless vehicular social networks [23], and a Q-learning-based joint power allocation and relay selection scheme was proposed to improve energy efficiency in relay-aided D2D communications underlay cellular networks [24]. A content-caching strategy based on multi-agent RL with a reduced action space was introduced to maximize the expected total caching reward in mobile D2D networks [25].

An increasing number of studies are devising RA schemes based on DRL. A centralized double-DQN-based RA scheme was proposed for dynamic spectrum access in D2D communications underlay cellular networks [26], and a centralized hierarchical DRL-based method was proposed to find the optimal relay selection and power allocation strategy for 5G mmWave D2D links [27]. In [28], a DRL-based algorithm was proposed to determine the transmit power of D2D and cellular links so as to maximize the overall sum rate. In [29], each V2V link selects resources with the aid of a DQN to satisfy a latency constraint and minimize the mutual interference between the infrastructure and vehicles in unicast and broadcast scenarios. A deep deterministic policy gradient (DDPG) algorithm was used for energy-efficient power control in D2D-based V2V communications [30], and an adaptive RL framework was used for channel selection in a non-orthogonal multiple access unmanned aerial vehicle (NOMA-UAV) network [31]. In [32], a distributed frequency RA framework based on multi-agent actor–critic (MAAC) learning was proposed. In [33], a multi-agent DRL-based distributed power control and RA algorithm was introduced to maximize the throughput of D2D and cellular users. In [34], a DRL-based joint mode selection and channel allocation algorithm was proposed for D2D-enabled heterogeneous cellular networks to maximize the system sum rate in the mmWave and cellular bands.

In centralized RA, a central coordinator collects information from all devices in a cell and determines the resources for all participating devices. In decentralized RA, on the other hand, participating devices determine their own resources using locally obtained information. Centralized RA achieves better performance than decentralized RA at the cost of high system overhead and high computational complexity concentrated on a central unit. Conversely, decentralized RA lowers the system overhead and distributes the computational burden at the cost of degraded communication performance. As the number of devices participating in D2D networks grows, the system overhead required to collect data from devices and deliver RA results to individual devices also increases. This has raised interest in decentralized RA, and various decentralized RA schemes adopting DRL have recently been proposed. The basic requirement for implementing a decentralized DRL-based RA scheme is sufficient computing capability at the participating D2D devices, because each device needs to operate its own learning mechanism, such as an ANN. Vehicles and roadside infrastructure can supply sufficient computing power and enough space to mount high-performance devices, so V2X networks can utilize decentralized DRL-based RA. Personal hand-held devices, however, lack the power supply and computational capability to run their own learning units, so decentralized DRL is not considered a viable RA solution for them. Consequently, centralized RA schemes are still in high demand, and an approach that reduces the computational complexity of DRL-based RA at a central coordinator is highly desirable.

In this paper, we propose a practically efficient centralized RA scheme based on multi-agent DRL for D2D communications underlay cellular networks. We aim to retain the good performance of centralized RA while reducing the computational complexity through a multi-agent structure. The transmit power and spectrum channel of D2D links are considered the resources of D2D communications, and the objective of RA is to maximize the sum of the average effective throughputs of all cellular and D2D links in a cell accumulated over multiple time steps. We obtain the outage probabilities of cellular and D2D links in terms of the spectrum channels and transmit powers of the devices. Then, we define an effective throughput and formulate the optimization problem for RA. We introduce a multi-agent DRL framework in which agents reside in the central coordinator of the cell and conduct constituent learning processes in a staggered and cyclic manner. Thanks to the segmentation of the ANN into smaller ones, the proposed multi-agent DRL requires lower computational complexity than joint DRL for RA in both the training and testing phases. The proposed RA scheme promptly allocates resources according to the locations of participating devices, which vary dynamically. Simulations show that the proposed DRL-based RA scheme performs well in various aspects of D2D communications underlay cellular networks. The benefit of the proposed scheme is more pronounced when D2D devices are distributed more densely in a cell, which results in a higher level of mutual interference among devices. Consequently, the proposed RA scheme is considered practically efficient for next-generation communication networks in which a large number of highly mobile D2D devices coexist with cellular networks.

This paper is organized as follows. In Section 2, the system model of D2D communications underlay cellular networks is presented, and the optimal resource allocation is formulated to maximize the sum of the average effective throughputs of cellular and D2D links accumulated over multiple time steps. In Section 3, we provide a short introduction to deep reinforcement learning. In Section 4, we propose a multi-agent DRL-based RA scheme in which, during the training phase, multiple agents conduct constituent learning in a staggered, cyclic manner with a timing offset. In Section 5, we analyze the performance of the proposed scheme in various aspects and compare it with other RA schemes. Finally, we conclude this paper in Section 6.

2. System Model

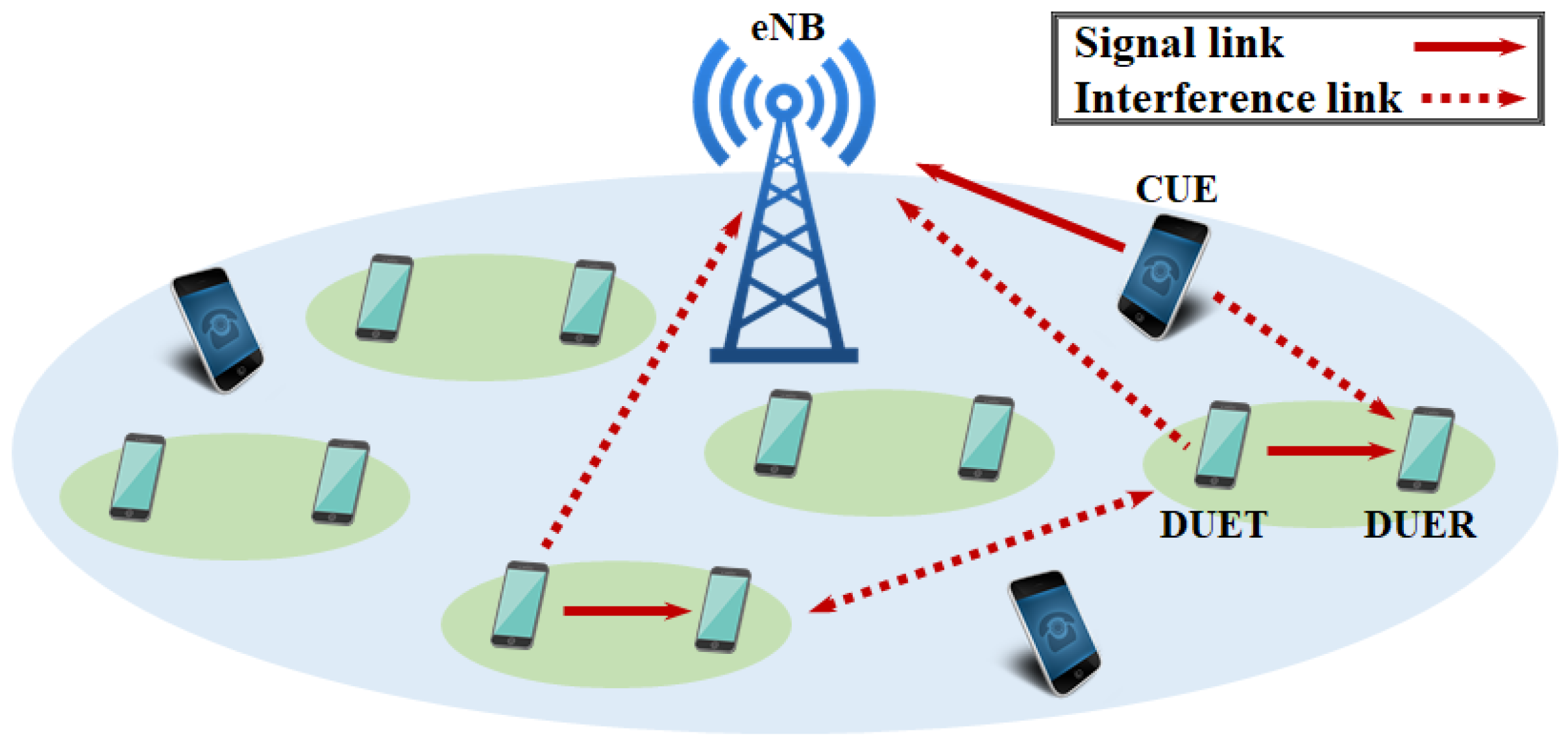

We consider a single cell in which an evolved Node B (eNB), $K$ cellular user equipments (CUEs), and $M$ D2D pairs exist, as shown in Figure 1. A D2D pair is formed by a transmitting D2D user equipment (DUET) and a receiving D2D user equipment (DUER), and D2D communications occur during the cellular uplink period. Note that RA decisions are made centrally by the eNB and delivered to the corresponding DUETs during the cellular downlink period. Each CUE occupies a dedicated channel, while D2D links use channels already occupied by CUEs, and multiple D2D links are allowed to share a channel. We index a CUE and the channel it occupies by $k \in \mathcal{K} = \{0, 1, \ldots, K-1\}$. We also index a D2D pair, together with its DUET and DUER, by $m \in \mathcal{M} = \{0, 1, \ldots, M-1\}$.

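Let $x_m^{D}$ and $x_k^{C}$ denote the transmit symbols of DUET $m$ and CUE $k$, with powers $P_m^{D}$ and $P_k^{C}$, respectively. The transmit power of each DUET is chosen out of $L$ discrete values, i.e., $P_m^{D} \in \{P_0, P_1, \ldots, P_{L-1}\}$ for each $m$. We let $d_{x,y}$ denote the distance between user equipments (UEs) $x$ and $y$, where $d_{x,B}$ denotes the distance between UE $x$ and the eNB, and we write $C_k$, $T_m$, and $R_m$ for CUE $k$, DUET $m$, and DUER $m$, respectively. We let $h_{k,n}$ denote the small-scale fading gain of the channel between CUE $k$ and DUER $n$, and $g_{m,n}^{k}$ denote the small-scale fading gain of the channel between DUET $m$ and DUER $n$ over channel $k$; the gains $h_{k,B}$ and $g_{m,B}^{k}$ toward the eNB are defined in the same way. We suppose that all small-scale fading gains are independent and identically distributed (i.i.d.) zero-mean circularly symmetric complex Gaussians with unit variance. We use a log-distance model for the large-scale fading of the channel between UEs $x$ and $y$, given by $d_{x,y}^{-\alpha}$ with path loss exponent $\alpha$. We define a D2D channel access indicator as $\rho_{m,k} = 1$ if D2D pair $m$ uses cellular channel $k$, and $\rho_{m,k} = 0$ otherwise.

The received signal at DUER $m$ over channel $k$ is written as

$$
y_m^{k} = \sqrt{P_m^{D} d_{T_m,R_m}^{-\alpha}}\, g_{m,m}^{k} x_m^{D} + \sqrt{P_k^{C} d_{C_k,R_m}^{-\alpha}}\, h_{k,m} x_k^{C} + \sum_{n \neq m} \rho_{n,k} \sqrt{P_n^{D} d_{T_n,R_m}^{-\alpha}}\, g_{n,m}^{k} x_n^{D} + z_m, \tag{1}
$$

where $z_m$ denotes the additive noise at DUER $m$, which is zero-mean circularly symmetric complex white Gaussian with variance $\sigma^2$. The signal-to-interference-and-noise ratio (SINR) of $y_m^{k}$ is determined by

$$
\gamma_m^{k} = \frac{P_m^{D} d_{T_m,R_m}^{-\alpha} |g_{m,m}^{k}|^2}{P_k^{C} d_{C_k,R_m}^{-\alpha} |h_{k,m}|^2 + \sum_{n \neq m} \rho_{n,k} P_n^{D} d_{T_n,R_m}^{-\alpha} |g_{n,m}^{k}|^2 + \sigma^2}. \tag{2}
$$

Similarly, the SINR of the received signal at the eNB over channel $k$, denoted by $\gamma_k^{C}$, is defined as

$$
\gamma_k^{C} = \frac{P_k^{C} d_{C_k,B}^{-\alpha} |h_{k,B}|^2}{\sum_{m} \rho_{m,k} P_m^{D} d_{T_m,B}^{-\alpha} |g_{m,B}^{k}|^2 + \sigma^2}, \tag{3}
$$

where the noise variance at the eNB is also taken to be $\sigma^2$.

We declare a link outage when the achievable data rate does not meet a target rate. Let $R_C$ and $R_D$ denote the target rates (in bit/s/Hz) of the cellular link and D2D link, respectively. We also let $\bar{\gamma}_C$ and $\bar{\gamma}_D$ denote the SINR values at which the corresponding target rates are achieved. Note that $R_C = \log_2(1 + \bar{\gamma}_C)$ and $R_D = \log_2(1 + \bar{\gamma}_D)$, i.e., $\bar{\gamma}_C = 2^{R_C} - 1$ and $\bar{\gamma}_D = 2^{R_D} - 1$. Then, $\bar{\gamma}_C$ and $\bar{\gamma}_D$ represent the SINR thresholds for declaring outages of the cellular link and D2D link, respectively. We also let $P_k^{C,\mathrm{out}} = \Pr\{\gamma_k^{C} < \bar{\gamma}_C\}$ and $P_{m,k}^{D,\mathrm{out}} = \Pr\{\gamma_m^{k} < \bar{\gamma}_D\}$ denote the outage probabilities of cellular link $k$ and D2D link $m$ over channel $k$, respectively. Then, we obtain

$$
P_k^{C,\mathrm{out}} = 1 - \exp\!\left(-\frac{\bar{\gamma}_C \sigma^2}{P_k^{C} d_{C_k,B}^{-\alpha}}\right) \prod_{m} \left(1 + \frac{\rho_{m,k}\, \bar{\gamma}_C P_m^{D} d_{T_m,B}^{-\alpha}}{P_k^{C} d_{C_k,B}^{-\alpha}}\right)^{-1} \tag{4}
$$

and

$$
P_{m,k}^{D,\mathrm{out}} = 1 - \exp\!\left(-\frac{\bar{\gamma}_D \sigma^2}{P_m^{D} d_{T_m,R_m}^{-\alpha}}\right) \left(1 + \frac{\bar{\gamma}_D P_k^{C} d_{C_k,R_m}^{-\alpha}}{P_m^{D} d_{T_m,R_m}^{-\alpha}}\right)^{-1} \prod_{n \neq m} \left(1 + \frac{\rho_{n,k}\, \bar{\gamma}_D P_n^{D} d_{T_n,R_m}^{-\alpha}}{P_m^{D} d_{T_m,R_m}^{-\alpha}}\right)^{-1}, \tag{5}
$$

whose derivations are provided in Appendix A. Note that $P_{m,k}^{D,\mathrm{out}}$ is defined only when $\rho_{m,k} = 1$.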
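Closed-form outage expressions of this type can be sanity-checked numerically. The sketch below compares a product-form outage expression with a Monte Carlo estimate. It is an illustrative sketch, not the authors' simulator. The function names are hypothetical, and the arguments `sig` and `interf` are assumed to be precomputed mean received powers (e.g., $P_m^{D} d_{T_m,R_m}^{-\alpha}$). Only active interferers ($\rho_{n,k} = 1$) are passed, and every unit-variance Rayleigh gain is drawn as $|g|^2 \sim \mathrm{Exp}(1)$.

```python
import math
import random


def outage_closed_form(sig, interf, noise, threshold):
    """Pr{SINR < threshold} when every link power gain is |CN(0,1)|^2 ~ Exp(1).

    sig:       mean received power of the desired link (assumed precomputed)
    interf:    mean received powers of the active interferers
    noise:     noise variance sigma^2
    threshold: SINR threshold, e.g. 2**R_D - 1
    """
    p_success = math.exp(-threshold * noise / sig)
    for i in interf:
        # each exponentially faded interferer contributes a factor 1 / (1 + threshold * I / S)
        p_success /= 1.0 + threshold * i / sig
    return 1.0 - p_success


def outage_monte_carlo(sig, interf, noise, threshold, trials=100_000, seed=1):
    """Empirical outage frequency with fresh Rayleigh fading drawn in every trial."""
    rng = random.Random(seed)
    outages = 0
    for _ in range(trials):
        desired = sig * rng.expovariate(1.0)
        interference = sum(i * rng.expovariate(1.0) for i in interf)
        if desired / (interference + noise) < threshold:
            outages += 1
    return outages / trials


if __name__ == "__main__":
    args = (1.0, [0.3, 0.1], 0.1, 2 ** 1 - 1)   # normalized toy values, R_D = 1 bit/s/Hz
    print(outage_closed_form(*args), outage_monte_carlo(*args))
```

With these toy values, the closed form evaluates to roughly 0.367, and the Monte Carlo estimate should agree to within sampling error (about ±0.002 at 100,000 trials).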

We define the effective throughput of D2D link $m$ over channel $k$ as the target rate multiplied by the probability of successful transmission, i.e., $\eta_{m,k}^{D} = R_D\left(1 - P_{m,k}^{D,\mathrm{out}}\right)$. In the same manner, the effective throughput of cellular link $k$ is defined by $\eta_k^{C} = R_C\left(1 - P_k^{C,\mathrm{out}}\right)$. The goal of RA is to determine the channel and transmit power of all D2D links at each time step so as to maximize the cumulative sum of the average effective throughputs of the cellular and D2D links over $T$ time steps. Multiple D2D links are allowed to share an identical cellular channel, and a D2D link occupies a single cellular channel during data transmission. Then, RA can be expressed as

$$
\begin{aligned}
\max_{\{\rho_{m,k,t}\},\, \{P_{m,t}^{D}\}} \quad & \sum_{t=0}^{T-1} \left[ \sum_{k} \eta_{k,t}^{C} + \sum_{m} \sum_{k} \rho_{m,k,t}\, \eta_{m,k,t}^{D} \right] \\
\text{s.t.} \quad & \rho_{m,k,t} \in \{0, 1\}, \quad \sum_{k} \rho_{m,k,t} = 1, \quad P_{m,t}^{D} \in \{P_0, \ldots, P_{L-1}\}, \quad \forall m, t,
\end{aligned} \tag{6}
$$

where the time step $t$ is specified in $\rho_{m,k}$, $P_m^{D}$, $\eta_k^{C}$, and $\eta_{m,k}^{D}$ as $\rho_{m,k,t}$, $P_{m,t}^{D}$, $\eta_{k,t}^{C}$, and $\eta_{m,k,t}^{D}$, respectively, with a slight abuse of notation. The constraints in (6) imply that each D2D link utilizes only one cellular channel, while a cellular channel can be used by multiple D2D links. Since no analytical technique to solve (6) is available, we must rely on a brute-force search to obtain the optimal solutions of (6), which are $\rho_{m,k,t}^{*}$ and $P_{m,t}^{D*}$ for all $m$, $k$, and $t$. Since there exist $KL$ possible pairs of $\{\rho_{m,k,t}\}_{k}$ and $P_{m,t}^{D}$ for given $m$ and $t$, the overall number of possible combinations of $\{\rho_{m,k,t}\}$ and $\{P_{m,t}^{D}\}$ is $(KL)^{MT}$. Thus, in a brute-force search, the objective function must be evaluated for each of the $(KL)^{MT}$ candidates to obtain an optimal solution. As a result, the optimal RA is too complex to be implemented in a practical system, especially when the number $M$ of participating D2D links is high. If distinct cellular channels are assigned to different D2D links, the channel allocation can be performed by a low-complexity one-to-one mapping algorithm, e.g., the Hungarian algorithm [2]. However, when multiple D2D links are allowed to use an identical cellular channel, the high computational complexity becomes a major obstacle to using a brute-force-search-based RA scheme in a practical communication system. Thus, in this paper, we devise a low-complexity RA scheme based on DRL that can be utilized in practice.
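The growth of the search space can be made concrete with a small exhaustive search. The sketch below enumerates every joint (channel, power-level) choice for one time step and counts the objective evaluations. The names are hypothetical, and `toy_objective` is an assumed stand-in that rewards power levels and penalizes channel sharing. It is not the effective-throughput objective in (6).

```python
import itertools


def joint_allocations(K, L, M):
    """All joint (channel, power-level) choices of M D2D links in one time step."""
    per_link = list(itertools.product(range(K), range(L)))   # K*L choices per link
    return itertools.product(per_link, repeat=M)               # (K*L)**M joint choices


def brute_force(K, L, M, objective):
    """Exhaustive search: evaluate the objective once for every candidate."""
    best, best_val, evaluated = None, float("-inf"), 0
    for alloc in joint_allocations(K, L, M):
        evaluated += 1
        val = objective(alloc)
        if val > best_val:
            best, best_val = alloc, val
    return best, best_val, evaluated


def toy_objective(alloc):
    """Hypothetical stand-in for (6): reward power levels, penalize channel sharing."""
    power = sum(p for _, p in alloc)
    sharing = sum(1 for a, b in itertools.combinations(alloc, 2) if a[0] == b[0])
    return power - 2 * sharing


if __name__ == "__main__":
    best, val, n = brute_force(K=2, L=3, M=3, objective=toy_objective)
    print(n, best, val)              # n == (2 * 3) ** 3 == 216
    print((2 * 3) ** (3 * 10))       # joint candidates over T = 10 time steps
```

Even this toy case with $K = 2$, $L = 3$, $M = 3$ needs 216 evaluations per time step, and a joint search over $T = 10$ steps would need $6^{30}$.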

3. Deep Reinforcement Learning Preliminaries

Reinforcement learning (RL) is a mechanism for learning what to do, i.e., how to map situations to actions, in order to maximize a reward through trial-and-error search. The Markov decision process (MDP) is known to be a useful framework for studying optimization problems solved by RL, including by model-free learning schemes. An MDP formalizes sequential decision-making, in which agents interact with the environment, observe states, and take actions that affect not only immediate rewards but also subsequent situations. An MDP is represented by a tuple $(\mathcal{S}, \mathcal{A}, \mathcal{T}, \mathcal{R})$, where $\mathcal{S}$ is a set of states, $\mathcal{A}$ is a set of actions that the agent can take in a given state, $\mathcal{T}$ is a transition function characterizing the probability that a given state and action are mapped to the next state, and $\mathcal{R}$ is a set of possible rewards obtained by the agent. If the cardinalities of $\mathcal{S}$, $\mathcal{A}$, and $\mathcal{R}$ are finite, the MDP is called a finite MDP.

In RL, at a certain time step $t$, an agent observes a state $s_t \in \mathcal{S}$ of the environment and accordingly takes an action $a_t \in \mathcal{A}$ based on a policy $\pi$. The policy is a mapping from states to probabilities of selecting each possible action, i.e., $\pi(a \mid s) = \Pr\{a_t = a \mid s_t = s\}$. Following the action $a_t$, the state transits to a new state $s_{t+1}$, and the agent obtains a reward $r_{t+1}$ and computes a return as $G_t = \sum_{i=0}^{\infty} \beta^{i} r_{t+i+1}$, where $\beta \in [0, 1]$ is a discount factor adjusting the impact of future rewards. The agent evaluates the expected return obtained by starting from a state $s$ and following a policy $\pi$ thereafter as $v_\pi(s) = \mathbb{E}_\pi[G_t \mid s_t = s]$, and the expected return obtained by starting from a state $s$, taking an action $a$, and following $\pi$ thereafter as $q_\pi(s, a) = \mathbb{E}_\pi[G_t \mid s_t = s, a_t = a]$. Note that $v_\pi$ and $q_\pi$ are called the state-value function and the action-value function, respectively, under policy $\pi$. Then, the agent determines the optimal policy for a given state $s$ by $\pi^{*} = \arg\max_{\pi} v_\pi(s)$, through which the optimal state-value function and action-value function are defined by $v_{*}(s) = \max_{\pi} v_\pi(s)$ and $q_{*}(s, a) = \max_{\pi} q_\pi(s, a)$, respectively.
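For a finite episode, the return $G_t$ can be computed backward with the recursion $G_t = r_{t+1} + \beta G_{t+1}$. The sketch below assumes that `rewards[i]` holds $r_{t+i+1}$ and that the episode ends after the last entry. The function names are illustrative.

```python
def discounted_return(rewards, beta):
    """G_t = sum_i beta**i * r_{t+i+1}, where rewards[i] holds r_{t+i+1} (finite episode)."""
    g = 0.0
    for r in reversed(rewards):
        g = r + beta * g   # backward accumulation of the discounted sum
    return g


def returns_per_step(rewards, beta):
    """G_t for every step of one episode, reusing G_t = r_{t+1} + beta * G_{t+1}."""
    out, g = [], 0.0
    for r in reversed(rewards):
        g = r + beta * g
        out.append(g)
    return out[::-1]
```

For example, three unit rewards with $\beta = 0.5$ give $G_0 = 1 + 0.5 + 0.25 = 1.75$.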

Q-learning was developed as an off-policy RL algorithm for temporal-difference control of a finite MDP. It handles problems with stochastic transitions and rewards without requiring a model of the environment. At each time step $t$, the agent in state $s_t$ selects an action $a_t$ based on an action selection rule, which is designed to balance exploration and exploitation. Greedy, $\epsilon$-greedy, and soft-max methods are widely used action selection rules. The quality of the pair of state $s_t$ and action $a_t$ is evaluated by a function $Q: \mathcal{S} \times \mathcal{A} \to \mathbb{R}$, whose result $Q(s_t, a_t)$ is called a state–action value. After taking an action, the agent observes the resulting reward $r_{t+1}$ and the next state $s_{t+1}$, and then updates the state–action value by

$$
Q(s_t, a_t) \leftarrow Q(s_t, a_t) + \mu \left[ r_{t+1} + \beta \max_{a \in \mathcal{A}} Q(s_{t+1}, a) - Q(s_t, a_t) \right], \tag{7}
$$

where $\mu \in (0, 1]$ is the learning rate. This procedure is repeated from the initial time step to the final time step, and this series of steps is called an episode. At the beginning of each episode, the environment is set to an initial state and the agent's reward is reset to zero. The state–action value $Q$ directly approximates the optimal action–value function $q_{*}$, independent of the policy being followed. In a finite MDP, the state–action value is known to converge with probability 1 to the optimal action–value function if every action is executed in every state infinitely often and the learning rate $\mu$ decays appropriately. The optimal policy $\pi^{*}(s) = \arg\max_{a} q_{*}(s, a)$ can be found once the optimal action–value function $q_{*}$ is determined. After a sufficient number of updates, the state–action values for all states and actions converge.
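The update rule (7) and an $\epsilon$-greedy selection rule can be sketched for a tabular $Q$ as follows. The table layout `Q[state][action]` and the function names are assumptions made for illustration. This exploration variant draws uniformly among *all* actions, which differs slightly from the "other candidates" variant used in Section 4.

```python
import random


def epsilon_greedy(q_row, epsilon, rng):
    """With probability epsilon explore uniformly at random, otherwise exploit argmax_a Q(s, a)."""
    if rng.random() < epsilon:
        return rng.randrange(len(q_row))
    return max(range(len(q_row)), key=q_row.__getitem__)


def q_learning_update(Q, s, a, r, s_next, mu, beta):
    """Tabular update (7): Q(s,a) += mu * [r + beta * max_a' Q(s',a') - Q(s,a)]."""
    td_target = r + beta * max(Q[s_next])
    Q[s][a] += mu * (td_target - Q[s][a])
    return Q[s][a]


if __name__ == "__main__":
    Q = [[0.0, 0.0], [2.0, 1.0]]          # Q[state][action], toy 2-state, 2-action table
    rng = random.Random(0)
    a = epsilon_greedy(Q[0], epsilon=0.1, rng=rng)
    print(a, q_learning_update(Q, 0, a, r=1.0, s_next=1, mu=0.5, beta=0.9))
```

Starting from $Q(0, 1) = 0$ with $r = 1$, $\beta = 0.9$, $\mu = 0.5$, and $\max_a Q(1, a) = 2$, one update yields $0.5 \times (1 + 1.8) = 1.4$.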

For a large state space $\mathcal{S}$, evaluating the state–action values $Q(s, a)$ for all states requires high computational complexity. The learning process can be sped up by using a function approximator, trained on earlier experiences, to compute the state–action values. DeepMind introduced deep Q-learning, or the deep Q-network (DQN), which uses a convolutional neural network (CNN), or more generally an artificial neural network (ANN), as a function approximator [17]. DQN has two phases: (i) a training phase and (ii) a testing phase. In the training phase, an agent trains its state–action value approximator through a sufficient number of learning iterations. Then, the system enters the testing phase, in which the trained state–action value approximator is used to draw the best actions for a given set of observations.

In the training phase of DQN, the agent utilizes two ANNs, called Q-networks: a prediction network and a target network. In the Q-networks, states are defined by the observations obtained by the agent and are fed to the input nodes. Given a state $s$, the prediction network approximately computes $Q(s, a; \theta)$ for each realization of action $a$ at the corresponding output node, where $\theta$ denotes the weights of the prediction network. The action of the agent is chosen by the action selection rule and applied to the environment or emulator. Then, a reward $r$ and a new state $s'$ are obtained, and the transition vector $(s, a, r, s')$ is stored in the experience replay memory. Since observations at consecutive iterations are highly correlated, small updates of state–action values may significantly change the policy and the data distribution, which may make RL unstable. To overcome this problem, deep Q-learning utilizes experience replay: random samples of prior transition vectors, possibly in batches, are drawn from the experience replay memory and used to evaluate a loss function through the prediction network and the target network. This removes correlation in the observation sequence and smooths changes in the data distribution. The prediction network is updated at every time step using the obtained loss function, while the target network is updated either periodically or softly at every time step. This process constitutes one learning iteration, which is summarized in Figure 2. After the training phase is completed by a sufficient number of iterations, the testing phase begins. In this phase, the agent takes the action $a$ corresponding to the output node of the ANN with the greatest $Q(s, a; \theta)$ for the given state $s$ fed to the input nodes.
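The experience replay memory and the target-network targets can be sketched with standard-library containers. `ReplayMemory` and `td_targets` are hypothetical names. The target network is abstracted as any callable `target_q(s)` that returns the list of $Q(s, a; \theta')$ values over actions. That callable is a stand-in, not a real ANN.

```python
import random
from collections import deque


class ReplayMemory:
    """Fixed-capacity store of transition vectors (s, a, r, s')."""

    def __init__(self, capacity, seed=0):
        self.buffer = deque(maxlen=capacity)  # oldest transitions are dropped first
        self.rng = random.Random(seed)

    def push(self, s, a, r, s_next):
        self.buffer.append((s, a, r, s_next))

    def sample(self, batch_size):
        # uniform sampling without replacement breaks the temporal correlation
        return self.rng.sample(list(self.buffer), batch_size)

    def __len__(self):
        return len(self.buffer)


def td_targets(batch, target_q, beta):
    """y = r + beta * max_a' Q(s', a'; theta') evaluated with the target network."""
    return [r + beta * max(target_q(s_next)) for (_, _, r, s_next) in batch]
```

Sampling from the bounded deque rather than replaying the most recent transitions is what decorrelates consecutive observations, as described above.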

4. DRL-Based Resource Allocation for D2D Communications

We propose a DQN-based RA scheme for D2D communications underlay cellular networks. First, we consider a joint RA scheme by which the resources for all D2D links are determined simultaneously. A central coordinator at the eNB is considered a single DQN agent, which conducts RA for all DUEs. We define an episode as the time duration $T$ over which a sequence of data transmissions from DUET to DUER is completed. We count the time steps inside each episode, i.e., the time step $t$ ranges from $0$ to $T-1$. At time step $t$, the state $s_t$ is defined by the locations of all UEs in the cell, the channel indices, and the transmit power levels of the D2D links at time step $t$. Let $\mathbf{l}_t$ denote the vector of locations of all UEs, i.e., CUEs, DUETs, and DUERs, and let $\mathbf{c}_t = (c_{0,t}, \ldots, c_{M-1,t})$ and $\mathbf{p}_t = (p_{0,t}, \ldots, p_{M-1,t})$ denote the vectors of allocated channel indices and transmit power levels of all D2D links at time step $t$, respectively. Then, the state is expressed as

$$
s_t = (\mathbf{l}_t, \mathbf{c}_t, \mathbf{p}_t). \tag{8}
$$

We define the action $a_t$ at time step $t$ as the determination of the transmit power levels and channel indices of all D2D links at time step $t$. The instantaneous reward at time step $t$, denoted by $r_t$, is defined as the sum of the average effective throughputs of the D2D and cellular links in the cell at time step $t$, i.e.,

$$
r_t = \sum_{k} \eta_{k,t}^{C} + \sum_{m} \sum_{k} \rho_{m,k,t}\, \eta_{m,k,t}^{D}, \tag{9}
$$

and the accumulated reward up to time step $t'$, denoted by $U_{t'}$, is obtained as

$$
U_{t'} = \sum_{t=0}^{t'} r_t. \tag{10}
$$

The reward accumulated over the time steps in an episode will be called a benefit and expressed as

$$
B = U_{T-1} = \sum_{t=0}^{T-1} r_t. \tag{11}
$$

The goal of RA is to maximize the benefit under the constraints introduced in (6).
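The quantities in (8)–(11) reduce to simple vector and sum operations. The sketch below assumes that each UE location is a 2-D $(x, y)$ pair, which the text does not specify. It also assumes that the per-link effective throughputs have already been computed, and the function names are illustrative.

```python
from itertools import accumulate


def build_state(locations, channels, powers):
    """Flatten s_t = (l_t, c_t, p_t) into one ANN input vector.

    Assumes each UE location is a 2-D (x, y) pair; channels and powers hold
    one index per D2D link, i.e. c_{m,t} and p_{m,t}.
    """
    flat_locations = [coord for xy in locations for coord in xy]
    return flat_locations + list(channels) + list(powers)


def effective_throughput(target_rate, p_out):
    """eta = R * (1 - P_out): target rate times the success probability."""
    return target_rate * (1.0 - p_out)


def reward(cue_etas, d2d_etas):
    """r_t as in (9); d2d_etas holds each D2D link's eta on its allocated channel."""
    return sum(cue_etas) + sum(d2d_etas)


def accumulated_rewards(rewards):
    """U_{t'} = sum_{t=0}^{t'} r_t for every t' (running sum), as in (10)."""
    return list(accumulate(rewards))


def benefit(rewards):
    """B = sum_{t=0}^{T-1} r_t, the reward accumulated over one episode, as in (11)."""
    return sum(rewards)
```

Because only the allocated channel contributes for each D2D link ($\rho_{m,k,t} = 1$ for exactly one $k$), `d2d_etas` needs one entry per link rather than a $M \times K$ table.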

In DQN, the state $s_t$ is fed to the input nodes of the ANN, and each output node of the ANN is dedicated to one action. As introduced in (8), a state is defined by a $(\dim \mathbf{l}_t + 2M)$-tuple vector, consisting of the $\dim \mathbf{l}_t$ entries of $\mathbf{l}_t$ and $M$ entries for each of $\mathbf{c}_t$ and $\mathbf{p}_t$. Each of the $M$ D2D links can be allocated a channel index and a power level out of $K$ and $L$ possible values, respectively, so there exist $(KL)^M$ possible realizations of the action at each time step. It follows that the ANNs in the prediction network and target network have $N_I = \dim \mathbf{l}_t + 2M$ input nodes and $(KL)^M$ output nodes, as shown in Figure 3a. Suppose the ANN has $N_L$ hidden layers, each of which has $N_H$ nodes, and neighboring layers are fully connected. In the testing phase, the ANN performs forward propagation from the input layer to the output layer. We consider the computational complexity of the forward propagation of the ANN in terms of floating point operations (FLOPs) [35]. A forward propagation from a layer with $N_1$ nodes to a layer with $N_2$ nodes requires approximately $2 N_1 N_2$ FLOPs, where the computational complexity of the activation functions is negligible. Then, the number of FLOPs required for forward propagation over the ANN is approximately $2\left[N_I N_H + (N_L - 1) N_H^2 + N_H (KL)^M\right]$. In the training phase, the ANN is updated through multiple pairs of forward and backward propagations. The FLOPs of backward propagation are known to be typically 2–3 times those of forward propagation [35]. Thus, it is sufficient to focus on forward propagation when comparing the computational complexities of ANNs. When $L$, $K$, and $M$ are high, $(KL)^M$ is much higher than $N_I$ and $N_H$, so the computational complexity of the ANN is dominated by $2 N_H (KL)^M$. Since $2 N_H (KL)^M$ FLOPs are too many to be executed in real time, an RA scheme based on a single-agent DQN is practically infeasible for high $L$, $K$, and $M$.

To resolve this problem, we utilize a multi-agent DQN structure in which each agent corresponds to one D2D link and operates its own DQN. Note that the agents physically exist in the central coordinator at the eNB. The agents have ANNs in the prediction and target networks with the segmented structures depicted in Figure 3b. The agents share a state, which is identical to the state of the single-agent DQN defined in (8). The action of an agent is reduced to the allocation of the transmit power and spectrum channel of the corresponding D2D link only. Thus, the action chosen by agent $m$ at time step $t$, denoted by $a_{m,t}$, is defined by

$$
a_{m,t} = (c_{m,t}, p_{m,t}), \tag{12}
$$

where $c_{m,t}$ and $p_{m,t}$ denote the channel index and transmit power level of D2D link $m$ at time step $t$, respectively. Since each D2D link has $KL$ possible realizations of action, the ANN in each DQN has $KL$ output nodes, as shown in Figure 3b, while the number of input nodes remains $N_I$. Then, the overall number of FLOPs required for forward propagation over the $M$ segmented ANNs is approximately $2M\left[N_I N_H + (N_L - 1) N_H^2 + N_H KL\right]$. If $KL \gg N_I, N_H$, the computational complexity of the segmented ANNs is dominated by $2 M N_H KL$, which is $M/(KL)^{M-1}$ of the single-agent complexity $2 N_H (KL)^M$. On the other hand, if $KL \ll N_I, N_H$, the complexity is dominated by $2M\left[N_I N_H + (N_L - 1) N_H^2\right]$, which is $M\left[N_I + (N_L - 1) N_H\right]/(KL)^M$ of the single-agent complexity. In both cases, a significant reduction in complexity is obtained by using the multi-agent DQN.
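The FLOP estimates above can be tabulated directly. The sketch below uses the $2 N_1 N_2$-per-layer approximation and ignores activation functions. The function names and the example sizes in the `__main__` block are hypothetical. The input size there also assumes 2-D UE coordinates, so $\dim \mathbf{l}_t = 2(K + 2M)$.

```python
def forward_flops(n_in, n_hidden, n_layers, n_out):
    """Approximate FLOPs of one forward pass, 2*N1*N2 per fully connected layer pair."""
    flops = 2 * n_in * n_hidden                          # input -> first hidden layer
    flops += 2 * (n_layers - 1) * n_hidden * n_hidden    # hidden -> hidden
    flops += 2 * n_hidden * n_out                        # last hidden -> output
    return flops


def single_agent_flops(n_in, n_hidden, n_layers, K, L, M):
    """One joint ANN with (K*L)**M output nodes."""
    return forward_flops(n_in, n_hidden, n_layers, (K * L) ** M)


def multi_agent_flops(n_in, n_hidden, n_layers, K, L, M):
    """M segmented ANNs, each with K*L output nodes and the same shared input."""
    return M * forward_flops(n_in, n_hidden, n_layers, K * L)


if __name__ == "__main__":
    K, L, M, n_hidden, n_layers = 4, 4, 6, 128, 3        # hypothetical sizes
    n_in = 2 * (K + 2 * M) + 2 * M                       # assumes 2-D UE coordinates
    print(single_agent_flops(n_in, n_hidden, n_layers, K, L, M))
    print(multi_agent_flops(n_in, n_hidden, n_layers, K, L, M))
```

Even with these modest sizes, the single-agent output layer alone contributes $2 \cdot 128 \cdot 16^6 \approx 4.3 \times 10^9$ FLOPs, while the six segmented ANNs together stay in the order of $10^6$.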

The learning process of the multi-agent RL is executed as described below. Let us define constituent learning as a sequence of operations by a single agent, i.e., observing a state $s$, taking an action $a$, observing a reward $r$ and a new state $s'$, and updating the weights $\theta$ and $\theta'$ of the prediction and target networks. If all agents conduct constituent learning simultaneously, the influence of an individual agent's action on the change of the environment cannot be evaluated explicitly. Since the reward and the next state fed back to each agent would not explicitly reflect that agent's contribution to the environment, the multi-agent RL may not converge well or may not improve performance through iterations. Thus, a reasonable approach is to devise a sequential operation of constituent learning by multiple agents.

We let agents conduct constituent learning in a cyclic manner with a timing offset, as depicted in Figure 4. Without loss of generality, agents are labeled in the order in which they perform constituent learning. This order is selected randomly at the beginning of the training phase and then maintained. After completing one constituent learning procedure, each agent remains idle until its turn comes around again, so that each agent performs constituent learning periodically. We define a time step as the time interval corresponding to one learning period of agent 0, as depicted in Figure 4. All UEs may change their locations at the beginning of every time step. After agent $m$ takes an action, a new state $s'_{m,t}$ is observed by this agent as a result of the environmental change. This newly observed state is also used as the state initiating constituent learning by the next agent, i.e., $s_{m+1,t} = s'_{m,t}$ if $m < M-1$. On the other hand, agent 0 does not use the newly observed state $s'_{M-1,t-1}$ of agent $M-1$ as its initial state $s_{0,t}$, because UEs may change their locations at the beginning of the time step. All agents start their constituent learning processes within a time step. Note that, in general, $s_{m,t+1} \neq s'_{m,t}$ because of the idle period between adjacent constituent learning procedures of each agent, which is a modification of the conventional DQN. In this manner, the overall cyclic learning procedure is operated during the training phase. The collection of constituent learning procedures of all agents composes a learning iteration. We let $s_{m,t}$, $a_{m,t}$, $r_{m,t}$, and $s'_{m,t}$ denote the state, action, reward, and next state of agent $m$ at time step $t$. Even at the same time step, different agents have different values of these variables.
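The staggered, cyclic ordering and the state hand-off between agents can be written out as a schedule. The sketch below only generates the order of constituent learning and records where each agent's initial state $s_{m,t}$ comes from. It does not train anything, and both function names are hypothetical.

```python
import random


def learning_order(num_links, seed=0):
    """Random but fixed assignment of D2D links to agent labels (label 0 learns first)."""
    rng = random.Random(seed)
    return rng.sample(range(num_links), num_links)   # order[m] = D2D link served by agent m


def staggered_schedule(num_agents, num_steps):
    """(t, m, source of s_{m,t}) in the order constituent learning is performed.

    Agent 0 starts every time step from a fresh observation because UEs may move
    at the start of the step; agent m > 0 starts from s'_{m-1,t} of agent m-1.
    """
    schedule = []
    for t in range(num_steps):
        for m in range(num_agents):
            source = ("fresh",) if m == 0 else ("next_state", m - 1, t)
            schedule.append((t, m, source))
    return schedule
```

Each time step therefore contains exactly $M$ constituent learning procedures, and the chain of hand-offs restarts with a fresh observation at agent 0.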

The learning procedure of each agent over multiple time steps and episodes is described as follows and is also summarized in Algorithm 1. For simplicity, we focus on the operation of a specific agent $m$, where $m \in \{0, 1, \ldots, M-1\}$. This corresponds to a single timeline of a single agent in Figure 4. First, we initialize the weights $\theta_m$ of the prediction network with small random numbers and set the weights of the target network as $\theta_m^- = \theta_m$. The experience replay memory $\mathcal{D}_m$ is initialized by running a random policy. Agent $m$ observes a state $s^{t}_{m}$ and takes an action $a^{t}_{m}$ based on the $\epsilon$-greedy policy as the action selection rule to affect the environment. That is, agent $m$ selects the action $a^{t}_{m} = \arg\max_{a} Q(s^{t}_{m}, a; \theta_m)$ resulting in the maximum state–action value with probability $1-\epsilon$, or selects an action randomly from the other candidates with probability $\epsilon$. Note that we use the expression $Q(s, a; \theta_m)$ to indicate that the state–action value is obtained by the ANN with weights $\theta_m$. Affected by action $a^{t}_{m}$, the environment changes, and agent $m$ obtains a reward $r^{t}_{m}$ by (9) and observes a next state $s'^{t}_{m}$. The transition vector $(s^{t}_{m}, a^{t}_{m}, r^{t}_{m}, s'^{t}_{m})$ is stored in the experience replay memory $\mathcal{D}_m$. A batch of transition vectors previously stored in $\mathcal{D}_m$ is sampled randomly and used to evaluate a loss function as follows. Suppose $(s_j, a_j, r_j, s'_j)$ is one sample included in a batch $\mathcal{B}$ drawn from $\mathcal{D}_m$, where, with a slight abuse of notation, the subscript $j$ represents the index at which the transition vector is stored in $\mathcal{D}_m$. The predicted state–action value $Q(s_j, a_j; \theta_m)$ is obtained from the output node corresponding to action $a_j$ in the ANN of the prediction network with weights $\theta_m$. The target state–action value $y_j$ is obtained by the target network with weights $\theta_m^-$ as

$$y_j = r_j + \gamma \max_{a'} Q(s'_j, a'; \theta_m^-), \tag{13}$$

where $\gamma$ is the discount factor.

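Then, the loss function $L(\theta_m)$ is computed as the mean squared error between the target and predicted state–action values by

$$L(\theta_m) = \frac{1}{|\mathcal{B}|} \sum_{j \in \mathcal{B}} \left( y_j - Q(s_j, a_j; \theta_m) \right)^2, \tag{14}$$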

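where $|\mathcal{B}|$ denotes the size of batch $\mathcal{B}$. We update the weights $\theta_m$ of the prediction network by using a stochastic gradient descent algorithm as

$$\theta_m \leftarrow \theta_m - \alpha \nabla_{\theta_m} L(\theta_m), \tag{15}$$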

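where $\alpha$ is the learning rate, and update the weights $\theta_m^-$ of the target network softly as

$$\theta_m^- \leftarrow \tau \theta_m + (1 - \tau)\, \theta_m^-, \tag{16}$$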

where $0 < \tau < 1$. We repeat the above process over the time steps in each episode and repeat the whole process over $E$ episodes.
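One update of a single agent, following the steps behind (13)–(16), can be sketched in plain Python. This is an illustrative sketch under stated assumptions: a linear Q-function replaces the five-layer ANN so that no deep-learning library is needed, the target follows the standard DQN form with a discount factor `gamma`, and the values of `gamma`, `alpha`, and `tau` in the usage below are hypothetical.

```python
import random
from collections import deque
from typing import Deque, List, Sequence, Tuple

Weights = List[List[float]]                               # one weight row per action
Transition = Tuple[List[float], int, float, List[float]]  # (s_j, a_j, r_j, s'_j)


def q_values(w: Weights, state: Sequence[float]) -> List[float]:
    """Linear stand-in for the ANN (an assumption): Q(s, a; w) = w[a] . s."""
    return [sum(wi * si for wi, si in zip(row, state)) for row in w]


def sample_batch(memory: Deque[Transition], size: int, rng: random.Random) -> List[Transition]:
    """Uniformly sample a batch B from the experience replay memory D_m."""
    return rng.sample(list(memory), min(size, len(memory)))


def dqn_update(theta: Weights, theta_target: Weights, batch: List[Transition],
               gamma: float, alpha: float, tau: float) -> float:
    """One SGD step on the MSE loss plus a soft target update; returns the loss."""
    n = len(batch)
    grad = [[0.0] * len(row) for row in theta]
    loss = 0.0
    for s, a, r, s_next in batch:
        y = r + gamma * max(q_values(theta_target, s_next))  # target from target network
        err = y - q_values(theta, s)[a]                      # y_j - Q(s_j, a_j; theta)
        loss += err * err / n                                # mean squared error
        for i, s_i in enumerate(s):
            grad[a][i] -= 2.0 * err * s_i / n                # dL/dtheta[a][i]
    for a, row in enumerate(grad):
        for i, g in enumerate(row):
            theta[a][i] -= alpha * g                         # gradient descent step
    for a, row in enumerate(theta):
        for i, w in enumerate(row):
            # Soft update: theta^- <- tau * theta + (1 - tau) * theta^-
            theta_target[a][i] = tau * w + (1.0 - tau) * theta_target[a][i]
    return loss


if __name__ == "__main__":
    rng = random.Random(0)
    memory: Deque[Transition] = deque(maxlen=1000)
    memory.append(([1.0, 0.0], 0, 1.0, [0.0, 1.0]))
    theta = [[0.0, 0.0], [0.0, 0.0]]
    theta_target = [row[:] for row in theta]
    for _ in range(3):
        batch = sample_batch(memory, 32, rng)
        print(dqn_update(theta, theta_target, batch, gamma=0.9, alpha=0.1, tau=0.5))
```

The returned loss is evaluated before the gradient step, so repeated calls on the same batch show it shrinking as the prediction weights move toward the target.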

After the training phase is completed over multiple episodes as described above, the RA scheme enters a testing phase, which corresponds to the actual operation of DUEs in underlay cellular networks. At every time step, the observation obtained by the eNB is used by each agent as a state, which is fed to the trained prediction network. Then, for each agent, the action yielding the maximum state–action value at the output nodes of the prediction network is chosen. The chosen action is reported to the DUET and determines the resources used for the corresponding D2D communication. In the testing phase, resource allocation for all agents may be executed simultaneously at each time step rather than in a staggered manner. The environment is influenced by the D2D communications performed in this manner, and a new observation is obtained by the eNB. This procedure repeats over all time steps of the testing phase.
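The testing-phase rule, in which every agent simultaneously picks the action with the largest output of its trained prediction network, can be sketched as below. The trained networks are represented by hypothetical callables, and `decode_action` assumes, purely for illustration, that action indices enumerate (channel, power level) pairs in channel-major order; the text does not specify this mapping.

```python
from typing import Callable, List, Sequence, Tuple

QNetwork = Callable[[Sequence[float]], List[float]]  # state -> Q-values, one per action


def greedy_action(q: List[float]) -> int:
    """Index of the maximum state-action value (first index on ties)."""
    return max(range(len(q)), key=q.__getitem__)


def decode_action(index: int, num_power_levels: int) -> Tuple[int, int]:
    """Hypothetical mapping from an action index to (channel, power level)."""
    return divmod(index, num_power_levels)


def allocate_step(nets: List[QNetwork], states: List[Sequence[float]],
                  num_power_levels: int) -> List[Tuple[int, int]]:
    """All agents decide at once from the current observations (no staggering)."""
    return [decode_action(greedy_action(net(s)), num_power_levels)
            for net, s in zip(nets, states)]


if __name__ == "__main__":
    nets = [lambda s: [0.1, 0.9, 0.3, 0.2], lambda s: [s[0], -s[0], 0.0, 0.5]]
    print(allocate_step(nets, [[0.0], [2.0]], num_power_levels=2))
```

No exploration is involved here: unlike training, each agent applies its pre-trained rule greedily, which is what makes the online allocation fast.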

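Algorithm 1 Training Phase of Agent $m$ in a Multi-Agent DRL-Based RA

Initialization: Randomly initialize the weights $\theta_m$ of the prediction network. Initialize the weights of the target network by $\theta_m^- \leftarrow \theta_m$. Initialize the experience replay memory $\mathcal{D}_m$.
for episode $e = 1, \ldots, E$ do
  for each time step $t$ in episode $e$ do
    Observe state $s^{t}_{m}$.
    Determine action $a^{t}_{m}$ based on the action selection rule.
    Report the chosen action to the DUET; the DUET takes the action accordingly.
    Observe reward $r^{t}_{m}$ and next state $s'^{t}_{m}$.
    Store the transition vector $(s^{t}_{m}, a^{t}_{m}, r^{t}_{m}, s'^{t}_{m})$ in $\mathcal{D}_m$.
    Randomly sample a batch $\mathcal{B}$ of transition vectors from $\mathcal{D}_m$.
    Obtain $Q(s_j, a_j; \theta_m)$ from the prediction network.
    Obtain $y_j$ by (13) in the target network.
    Compute the loss function $L(\theta_m)$ by (14).
    Update $\theta_m$ and $\theta_m^-$ by (15) and (16), respectively.
  end for
end for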

5. Numerical Results

We consider a single circular cell with the eNB located at the center, in which $K$ CUEs and $M$ DUE pairs exist, where each DUER is placed around its corresponding DUET within a distance of 5 m. The locations of CUEs and DUETs in the cell and the locations of DUERs around their DUETs follow the binomial point process (BPP) model [36]. All UEs change their locations at every time step. The simulation parameters used in the numerical experiments are listed in Table 1, and the hyperparameters used for DRL are listed in Table 2. The numerical experiments were implemented in Python 3.6.12 with PyTorch 1.4.0. The transmit power of each CUE was determined such that the corresponding SNR at the eNB resulted in an outage probability of

%. We consider various values for

and

for the analysis of RA schemes in various aspects.
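Dropping UEs according to a BPP, i.e., a fixed number of points placed independently and uniformly in the region, can be sketched as follows. The cell radius and the numbers of CUEs and D2D pairs are placeholders rather than the values in Table 1, and redrawing all positions independently at each time step is an assumption about how UE locations change.

```python
import math
import random
from typing import List, Tuple

Point = Tuple[float, float]


def uniform_in_disk(rng: random.Random, radius: float, center: Point = (0.0, 0.0)) -> Point:
    """Uniform point in a disk; the sqrt on the radius keeps the areal density constant."""
    r = radius * math.sqrt(rng.random())
    phi = 2.0 * math.pi * rng.random()
    return (center[0] + r * math.cos(phi), center[1] + r * math.sin(phi))


def drop_ues(rng: random.Random, cell_radius: float, num_cues: int, num_pairs: int,
             d2d_max_dist: float = 5.0) -> Tuple[List[Point], List[Point], List[Point]]:
    """BPP drop: K CUEs and M DUETs in the cell (eNB at the origin),
    each DUER uniformly within d2d_max_dist metres of its DUET."""
    cues = [uniform_in_disk(rng, cell_radius) for _ in range(num_cues)]
    duets = [uniform_in_disk(rng, cell_radius) for _ in range(num_pairs)]
    duers = [uniform_in_disk(rng, d2d_max_dist, center=t) for t in duets]
    return cues, duets, duers


if __name__ == "__main__":
    rng = random.Random(42)
    for t in range(2):  # fresh drop at every time step (assumed mobility model)
        cues, duets, duers = drop_ues(rng, cell_radius=100.0, num_cues=4, num_pairs=3)
        dists = [math.hypot(a[0] - b[0], a[1] - b[1]) for a, b in zip(duets, duers)]
        print(t, [round(d, 2) for d in dists])
```

Sampling the radius as `radius * sqrt(u)` rather than `radius * u` avoids crowding points near the eNB, which would otherwise distort the interference geometry.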

Each ANN in the prediction and target networks has five fully connected layers, the middle three of which are hidden layers. Each hidden layer has 300 neurons with the ReLU activation function, and a stochastic gradient descent optimizer is used to update the ANN weights. The experience replay memories are initially filled with experiences obtained by running random policies. The training phase is completed in 5000 iterations (500 episodes with 10 iterations per episode), and the $\epsilon$-greedy policy with linear annealing is applied as the action selection rule. It is observed from Figure 5 that the DQNs are trained properly during the training phase.
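The $\epsilon$-greedy rule with linear annealing over the 5000 training iterations can be sketched as below. The start and end values of $\epsilon$ are hypothetical placeholders, not values taken from Table 2, and, following the description of the action selection rule, the exploratory action is drawn from the candidates other than the greedy one.

```python
import random
from typing import List


def linear_epsilon(iteration: int, total_iterations: int = 5000,
                   eps_start: float = 1.0, eps_end: float = 0.01) -> float:
    """Linearly anneal epsilon from eps_start to eps_end, then hold it (placeholder values)."""
    frac = min(max(iteration / max(total_iterations - 1, 1), 0.0), 1.0)
    return eps_start + frac * (eps_end - eps_start)


def epsilon_greedy(q: List[float], epsilon: float, rng: random.Random) -> int:
    """Greedy action with probability 1 - epsilon; otherwise a random non-greedy action."""
    best = max(range(len(q)), key=q.__getitem__)
    if len(q) == 1 or rng.random() >= epsilon:
        return best
    return rng.choice([a for a in range(len(q)) if a != best])


if __name__ == "__main__":
    rng = random.Random(0)
    for it in (0, 2500, 4999):
        eps = linear_epsilon(it)
        print(it, round(eps, 4), epsilon_greedy([0.1, 0.5, 0.2], eps, rng))
```

Clamping the annealing fraction keeps $\epsilon$ at its final value if training runs past the nominal number of iterations.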

We evaluate the RA schemes in terms of the average benefit and compare the proposed DRL-based RA scheme with random and greedy RA schemes. A random RA scheme allocates the spectrum channel and transmit power of each D2D link randomly. In a greedy RA scheme, the channel and transmit power of the D2D links are determined through a greedy search that maximizes the sum of the average effective throughputs at each time step. We consider a scenario in which the locations of UEs change at every time step and the resources of all D2D pairs are allocated simultaneously.
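The two baselines can be sketched as follows under explicit assumptions. The random RA is assumed to draw channels and power levels uniformly; the objective passed to `greedy_ra` stands in for the sum of the average effective throughputs, whose computation is not reproduced here; and the greedy search is assumed to be a sequential per-link search over all (channel, power) pairs with the other links held fixed. The text does not detail the search order, so this is one plausible instance rather than the exact baseline, and `toy_objective` is purely hypothetical.

```python
import itertools
import random
from typing import Callable, List, Sequence, Tuple

Allocation = List[Tuple[int, float]]  # (channel index, transmit power) per D2D link


def random_ra(num_links: int, num_channels: int, powers: Sequence[float],
              rng: random.Random) -> Allocation:
    """Random RA: channel and transmit power of each D2D link drawn uniformly."""
    return [(rng.randrange(num_channels), rng.choice(powers)) for _ in range(num_links)]


def greedy_ra(num_links: int, num_channels: int, powers: Sequence[float],
              objective: Callable[[Allocation], float]) -> Allocation:
    """Sequential greedy search: each link, in turn, takes the (channel, power)
    pair that maximizes the objective with the other links held fixed."""
    alloc: Allocation = [(0, powers[0])] * num_links  # arbitrary starting point
    for m in range(num_links):
        alloc[m] = max(itertools.product(range(num_channels), powers),
                       key=lambda cp: objective(alloc[:m] + [cp] + alloc[m + 1:]))
    return alloc


def toy_objective(alloc: Allocation) -> float:
    """Hypothetical objective: reward power, penalize links sharing a channel."""
    channels = [c for c, _ in alloc]
    return sum(p for _, p in alloc) - 10.0 * (len(channels) - len(set(channels)))


if __name__ == "__main__":
    print(random_ra(3, 2, [0.1, 0.2], random.Random(0)))
    print(greedy_ra(2, 2, [0.1, 0.2], toy_objective))
```

Because each link optimizes only the current snapshot, such a greedy search cannot anticipate how the other links will react, which is the contrast drawn with the trained agents below.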

In Figure 6, we plot the average benefits obtained by the RA schemes under comparison versus the number $M$ of D2D pairs in the cell, where various cell radii and target rates of the cellular link are considered. It is observed from Figure 6 that the proposed DRL-based RA scheme outperforms the others in all considered cases. As the number $M$ of D2D links in the cell grows, all RA schemes show lower average benefits due to the resulting severer mutual interference among UEs. However, the performance of the proposed DRL-based RA scheme degrades less with growing $M$ than that of the other RA schemes. Thus, the performance gain of the proposed RA scheme over the others becomes significant as the number of D2D pairs in the cell increases. It is also observed that the performance gain of the proposed DRL-based RA scheme over the others increases as the cell radius decreases. From these observations, it is inferred that the proposed RA scheme is especially useful when DUEs are distributed densely in a cell and suffer from a high level of mutual interference from other UEs. Since the demand for D2D communications is continuously growing, the proposed RA scheme could be a meaningful solution to the spectrum shortage problem in next-generation wireless communication systems.

It is additionally observed that the proposed DRL-based RA scheme attains a considerably improved average benefit for higher

compared with the other RA schemes, as is clear from Figure 7. This is explained by the fact that the proposed RA scheme balances the effective throughputs of both D2D links and cellular links well, whereas the greedy RA scheme gives priority to maintaining the QoS of D2D links at the required level. Consequently, the proposed DRL-based RA scheme obtains a significant performance gain over the others when DUEs are distributed densely in a cell and the CUE has a higher target rate than the DUEs.

In Figures 8, 9, and 10, we plot the average transmit power of DUETs, the average outage probability of D2D links, and the average outage probability of cellular links, respectively, versus $M$, where various

and

are considered. It is observed that the proposed DRL-based RA scheme sensitively adapts the transmit power of DUETs with respect to $M$ and

to achieve high benefits while maintaining the QoS of the cellular links. For larger $M$ and smaller

, the proposed RA scheme prevents the benefit from decreasing by using a lower DUET transmit power at the cost of a higher outage probability of the D2D links. The overall performance is maintained at the expense of the D2D links because the cellular link contributes more to the overall performance than the D2D link when

is high. Although the greedy RA scheme also adapts the DUET transmit power depending on $M$, it allocates higher transmit power to D2D links as $M$ grows; thus, the average outage probability of D2D links is maintained at the cost of an increasing average outage probability of cellular links. For higher

, this way of power allocation results in a larger degradation of the average benefit, so the proposed RA scheme outperforms the greedy RA scheme by an even wider margin.

Figure 11 shows clearly that a higher ratio of

results in a lower DUET transmit power and, thus, a higher outage probability of D2D links under the proposed DRL-based RA scheme, unlike the greedy RA scheme. The performance gain of the proposed RA scheme over the others comes from the fact that each agent is trained to implicitly learn how the other agents will act at the next time step for a given set of observations. In contrast, in the other RA schemes, D2D links determine their resources based only on the current observation. Since each agent in the proposed RA scheme takes an action based on an implicit prediction of the other agents' future actions, the proposed RA scheme works much better than the others, especially when a high level of mutual interference among UEs exists.

The proposed DRL-based RA scheme adaptively allocates communication resources depending on the locations of UEs, the cell size, and the target rate of the cellular link by using the pre-trained allocation rule, which enables fast RA in the actual operation of communication networks.