1. Introduction

Low back disorders (LBDs) are a leading occupational health issue and are commonly due to overexertion. LBDs account for almost 40% of work-related musculoskeletal disorders in the U.S. [1], resulting in financial and personal burdens due to healthcare costs, time off work, lost productivity, physical pain, and psychological distress. Individuals working in manual material handling jobs are at an elevated risk of LBDs due to the overexertion experienced by the back during repetitive lifting and bending [2,3]. Overexertion injuries result from the accumulation of microdamage caused by repeated loading of musculoskeletal tissues (e.g., muscles, tendons, ligaments, discs) and are consistent with a mechanical fatigue failure process [4,5,6].

Ergonomic interventions have reduced work-related musculoskeletal disorder prevalence [7,8,9] by reducing physical strain on workers [10,11]. The hierarchy of controls is a useful framework for implementing interventions after risks are identified [9].

Ergonomic assessments identify injury risk and play a critical role in informing and prioritizing where ergonomic interventions are needed. Several ergonomic assessment tools have been developed to identify LBD risk resulting from manual lifting tasks. The Lifting Fatigue Failure Tool (LiFFT) is noteworthy because it is based on fatigue failure principles [12] and estimates cumulative damage to the low back. LiFFT works by counting the number of lifting repetitions and using the peak load moment (an indicator of back strain due to the object lifted) to estimate the damage inflicted on the person’s back during each lift [12]. Gallagher et al. used mechanical fatigue failure principles to develop a relationship between lumbar moment and tissue damage per cycle, which underlies LiFFT [12]. LiFFT was validated against existing epidemiological databases [13,14] containing the peak load moment for each lift, the number of lifts performed per day, and the incidence of LBD for workers in diverse occupations [12,13].

However, ergonomic assessments can be time-consuming, costly, and inconsistent in practice. For instance, approximately one in three assessments has been found to contain errors, and in 13% of the assessments, the errors were substantial enough to invalidate the evaluation [15]. To perform an ergonomic assessment with LiFFT, a trained safety professional observes an individual worker during a shift, manually recording the weight of each object lifted, the horizontal distance from the object to the lumbar spine (or hip), and the number of repetitions of this task [12]. Due to the time-intensive nature of these observations, continuous and personalized monitoring is often impractical or cost prohibitive. As a result, rather than spanning long durations and a variety of tasks across multiple workers, assessments draw conclusions from limited observations that may not generalize across a wide range of workers or tasks.

There is an opportunity to develop more efficient and consistent ways to assess ergonomic injury risk over a range of workers and workplace environments by providing automated monitoring of overexertion risk (e.g., to the low back). Wearable sensors are one type of emerging technology that could improve ergonomic assessments by monitoring individuals remotely and for long durations, allowing for personalized injury risk assessment to inform ergonomic interventions. This could reduce the time and effort burden on safety professionals, while simultaneously enabling more widespread risk assessment.

Wearable sensor systems using inertial measurement units (IMUs) are already being explored and adopted in industry to complement or expedite ergonomic assessment [16,17]. IMU systems provide kinematic information [18,19,20] and are beneficial for ergonomic assessments that seek to capture data related to high-risk postures and bending frequency. Some industry reports indicate that IMU systems are useful in identifying and reducing musculoskeletal injury risks [16,17], and device manufacturers are now making quantitative claims about the efficacy and capabilities of their IMU systems for injury reduction. However, there is a dearth of formal, independent, or peer-reviewed studies that have evaluated these IMU systems or the scientific basis underlying their risk assessment algorithms.

One known limitation of IMU systems is that they do not measure the weight of an object lifted, which may limit their ability to identify injury risk due to physical overloading or overexertion [21]. While IMU systems can identify when an individual has performed a forward bend, they are unable to determine the weight of the object lifted or whether an object has been lifted at all. This limitation is significant because musculoskeletal loading depends on forces and moments (kinetics), which require knowledge of the weight of the object lifted. Manufacturers of these IMU systems have tried to implement workarounds, such as manually inputting object weight, assuming a nominal object weight, or developing algorithms that attempt to estimate object weight indirectly based on movement patterns or other heuristics. However, the validity and efficacy of these workarounds are unknown. Fundamentally, it remains unclear to what degree we should expect IMU systems to be capable of assessing overexertion injury risk to the low back.

Combining pressure-sensing insoles with IMU sensors has the potential to overcome this limitation and to provide more accurate ergonomic assessment of lifting tasks. We previously found that lumbar moment, an indicator of low back loading, can be estimated 33% more accurately with combined signals from a trunk IMU and pressure insoles than with trunk IMU signals only, based on an analysis using idealized wearable sensor signals [21]. However, material (e.g., tissue) damage is exponentially related to the peak load applied [4,5,6], meaning that modest errors in lumbar moment estimates [21,22,23] can result in much larger errors in damage estimates and, in turn, in estimates of injury risk. These prior findings [21] suggest that the improved accuracy from combining pressure-sensing insoles and an IMU might meaningfully improve LBD risk assessment in the workplace. However, this proposition requires further research and validation, and important open questions remain, including:

The objective of this study was to address the two open questions above. To maximize the generalizability of our results and study contributions, we used a data-driven simulation approach to characterize and explore how accurately we could classify LBD risk using only trunk motion (e.g., from a single trunk IMU) versus using trunk motion and forces under the feet (e.g., from a single IMU combined with pressure insoles).

The first question is important because prior literature [21,22,23] only quantified the accuracy of a biomechanical indicator of loading on the lower back (i.e., lumbar moment). Ultimately, what safety professionals care about is how accurately they can classify injury risk (not just estimate time-series biomechanical variables such as lumbar moment). LBD risk assessment accuracy has not yet been studied for trunk IMU and pressure insole systems. Furthermore, it is currently unclear what level of biomechanical load accuracy is good enough for ergonomic assessment in practice, particularly given the nonlinear relationships between musculoskeletal load, damage, and injury risk [6,12,24].

The second question is important because it is currently unclear whether improved accuracy—which we expect using additional information from the pressure insoles—is substantial enough to justify the extra cost and complexity of adding pressure sensors into a user’s shoes. It is possible that processing IMU data alone may be sufficient for obtaining reasonably accurate estimates of LBD risk over a full workday (e.g., hundreds of lifts). This may be true even if the IMU-estimated accuracy is worse than the combined trunk IMU and pressure insole accuracy on a lift-by-lift basis.

There were three possible conclusions from our study:

Possibility 1: Capturing under-the-foot force data (e.g., from pressure insoles) is unnecessary because trunk motion alone (e.g., from an IMU) can provide reasonably good accuracy in estimating LBD risk over the course of a workday.

Possibility 2: Trunk motion data alone are insufficient to accurately estimate LBD risk, but adding under-the-foot force data results in a substantial improvement and reasonably good accuracy in LBD risk estimation.

Possibility 3: Neither trunk motion nor trunk motion combined with under-the-foot force data seems adequate to accurately assess LBD risk over a workday, suggesting that alternative risk assessment tools may be needed.

Any one of these conclusions would be interesting, insightful, and actionable with respect to the potential application of wearable sensors to automate ergonomic risk assessment for the low back.

2. Materials and Methods

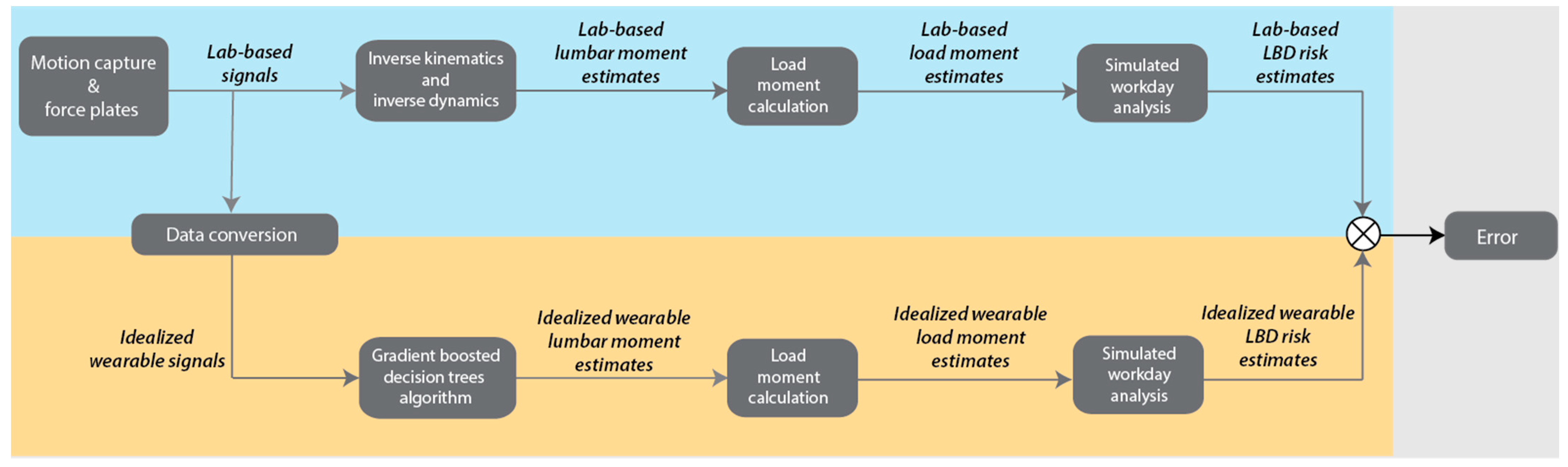

The study was accomplished in five stages. First, we analyzed an existing dataset [21] containing full-body 3D (three-dimensional) motion capture and bilateral ground reaction force data from 10 individuals performing a range of lifting tasks, to obtain lab-based estimates of lumbar moment (an indicator of low back loading). Second, we trained a machine learning algorithm to estimate peak lumbar moment during each lift, first using only trunk motion signals (which we refer to as the Trunk signals and algorithm) and then using trunk motion and force under the feet (Trunk+Force signals and algorithm). For this analysis, we used idealized wearable sensor signals, meaning that data from laboratory instrumentation were converted into signals that could reasonably be obtained from wearable sensors (rationale detailed below). Third, we converted the peak lumbar moments into peak load moments to use as inputs to the ergonomic assessment tool, LiFFT. Fourth, we simulated 10,000 material handling workdays (1000 per participant) based on randomly selected lifts and used LiFFT to compute LBD risk. Fifth, we evaluated the accuracy of the Trunk and Trunk+Force approaches by comparing their LBD risk estimates for each simulated workday with lab-based (ground truth) estimates of LBD risk.

2.1. Data Collection and Processing

We reanalyzed a subset of an existing dataset [21] in which 10 participants performed manual material handling tasks. For details of the experimental protocol and reasoning, see Matijevich et al. [21]. We selected the 50 tasks involving symmetrical lifting of objects of different weights (5–23 kg) from different heights (40–160 cm above the ground) to simulate jobs of varying physical demands. Ground reaction forces and the center of pressure under each foot were collected with force plates (Advanced Mechanical Technology Inc., Watertown, MA, USA). Full-body kinematics were collected simultaneously with a 3D motion capture system (Vicon, Oxford, UK). Body segment and joint kinematics were estimated from the motion capture data using rigid-body inverse kinematics in Visual3D software (C-Motion, Germantown, MD, USA). Lab-based lumbar moment was estimated using bottom–up inverse dynamics, which prior studies have found to be in good agreement with top–down inverse dynamics during lifting [22,25]. We refer to these lumbar moments as lab-based (or ground truth) estimates because they were derived from comprehensive 3D kinetic and kinematic data from laboratory instrumentation. In contrast, the Trunk and Trunk+Force lumbar moment estimates (detailed below) used only a small subset of signals, reflecting wearable sensor measurement capabilities.

All participants gave written informed consent to the original protocol, which was approved by the Institutional Review Board at Vanderbilt University (IRB #141697).

2.2. Algorithm Development

We trained a gradient boosted decision trees machine learning algorithm to estimate lumbar extension moment using methods similar to those of Matijevich et al. [21]. This algorithm estimates the target metric by building a series of decision trees in stages. Each stage seeks to improve the overall prediction by estimating the residual error of the predictions from the previous stages. The final algorithms produced lumbar moment estimates from a series of approximately 100 trees. We used the scikit-learn library and Amazon SageMaker, a cloud-based machine learning platform, for algorithm development, model training, and evaluation.
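The staged residual fitting described above can be illustrated with a minimal Python sketch for a squared-error loss. This is not the authors' training code: the feature matrix and target are synthetic stand-ins, and `fit_boosted_trees` and `predict_boosted_trees` are hypothetical helper names. In practice, scikit-learn's `GradientBoostingRegressor(n_estimators=100)` packages the same idea.

```python
import numpy as np
from sklearn.tree import DecisionTreeRegressor


def fit_boosted_trees(X, y, n_trees=100, learning_rate=0.1, max_depth=3):
    """Fit a gradient boosted tree ensemble for squared-error loss (illustrative sketch)."""
    base = float(np.mean(y))            # stage 0: constant prediction
    pred = np.full(len(y), base)
    trees, train_mse = [], []
    for _ in range(n_trees):
        residual = y - pred             # errors left by the previous stages
        tree = DecisionTreeRegressor(max_depth=max_depth, random_state=0)
        tree.fit(X, residual)           # each new tree estimates those residuals
        pred = pred + learning_rate * tree.predict(X)
        trees.append(tree)
        train_mse.append(float(np.mean((y - pred) ** 2)))
    return {"base": base, "trees": trees, "lr": learning_rate, "train_mse": train_mse}


def predict_boosted_trees(model, X):
    """Sum the constant base and the shrunken contribution of every tree."""
    pred = np.full(X.shape[0], model["base"])
    for tree in model["trees"]:
        pred = pred + model["lr"] * tree.predict(X)
    return pred


# Synthetic stand-in data: rows are time samples of idealized wearable signals,
# and the target is a made-up lumbar extension moment in Nm.
rng = np.random.default_rng(0)
X = rng.normal(size=(500, 9))
y = 150 + 40 * X[:, 0] + 20 * X[:, 1] * X[:, 2] + rng.normal(scale=5, size=500)

model = fit_boosted_trees(X, y)
y_hat = predict_boosted_trees(model, X)
```

Because each tree's leaves are residual means, every stage can only lower (or keep) the training error, which is why a modest learning rate with about 100 trees is a common trade-off between fit and overfitting.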

The lumbar extension moment during lifting was chosen as the target metric because it is an indicator of loading on the lower back and can be adapted to be an input to an established ergonomics assessment tool, LiFFT (see Section 2.3), to estimate cumulative damage and injury risk to the low back [6,12]. We estimated the time-series target lumbar moment from lab-based data [21], as detailed in Section 2.1. This resulted in a lookup table for each participant containing a lab-based peak lumbar moment estimate for each lifting task completed.

We used idealized wearable signals as inputs to train each algorithm. Idealized wearable signals refer to motion lab data converted into time series signals that can be feasibly obtained from wearable sensors [21,26]. Using idealized wearable signals enabled us to address the questions outlined in the Introduction in the most fundamental and generalizable way, without being limited by the quality of existing wearable sensor hardware or their calibration methods, and without narrowly evaluating a single product. When using actual wearable sensors (specifically pressure insoles), task- and hardware-specific calibration methods are typically required to obtain accurate and reliable force estimates [27]. However, developing these calibrations is complex and time-consuming. Therefore, our approach was to first evaluate and understand the expected necessity of pressure insole data in this current study using idealized wearable signals. The results of this study would inform which types of wearable sensors are needed and provide the basis for deciding whether to invest time into developing custom pressure insole calibrations.

We trained two separate algorithms using two different sets of signals: the first algorithm used Trunk signals as inputs, and the second algorithm used Trunk+Force signals as inputs. IMU sensors estimate orientation in the global coordinate system, and IMU angle estimates are strongly correlated with angles obtained from 3D motion capture [28,29]. Therefore, Trunk input signals were composed of 3D orientation angles, accelerations, and angular velocities in the global coordinate system, which were approximated using 3D motion capture. Pressure insoles estimate ground reaction force and the center of pressure in the foot’s coordinate system, and these estimates have been found to be correlated with force plate data [30,31]. Thus, Force input signals were composed of a 1D force vector and the center of pressure in the mediolateral and anteroposterior directions, approximating signals that can be obtained from pressure insoles. The center of pressure from the force plate was transformed into the foot’s coordinate system, and the 1D force vector under each foot was obtained by projecting the 3D ground reaction force from the force plate onto a vector normal to the foot.
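The conversion of force plate data into insole-like signals can be sketched as follows. This is a minimal sketch, assuming the foot coordinate system is given as a rotation matrix whose columns are the foot's anteroposterior, mediolateral, and normal axes expressed in the global frame; the function names and axis ordering are hypothetical and are not taken from the original processing pipeline.

```python
import numpy as np


def grf_normal_to_foot(grf_global, foot_normal_global):
    """Project the 3D ground reaction force onto the unit vector normal to the foot.

    grf_global, foot_normal_global: arrays of shape (..., 3) in the global frame.
    Returns the 1D (normal) force with shape (...,).
    """
    n = foot_normal_global / np.linalg.norm(foot_normal_global, axis=-1, keepdims=True)
    return np.sum(grf_global * n, axis=-1)


def cop_in_foot_frame(cop_global, foot_origin_global, rot_foot_to_global):
    """Express the center of pressure in the foot's coordinate system.

    rot_foot_to_global: (..., 3, 3) rotation whose columns are the foot axes
    (assumed order: anteroposterior, mediolateral, normal) in the global frame.
    Returns (anteroposterior, mediolateral) coordinates.
    """
    rel = cop_global - foot_origin_global
    # Multiply by the transpose (the inverse of a rotation) to go global -> foot.
    local = np.einsum("...ji,...j->...i", rot_foot_to_global, rel)
    return local[..., 0], local[..., 1]


# Usage: foot rotated 90 degrees about the vertical axis (foot AP axis = global y).
rot = np.array([[0.0, -1.0, 0.0], [1.0, 0.0, 0.0], [0.0, 0.0, 1.0]])
ap, ml = cop_in_foot_frame(np.array([1.0, 2.0, 0.0]), np.zeros(3), rot)
# ap == 2.0, ml == -1.0
```

Both functions broadcast over leading dimensions, so whole time series (shape `(T, 3)` and `(T, 3, 3)`) can be converted in one call.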

To train each algorithm, we used cross validation by participant (leave-one-participant-out) [32]. In other words, the data from 9 participants were used to train the algorithm, and the trained algorithm was then applied to the 10th participant’s data to estimate lumbar moment [32]. This process was repeated for all 10 participants, resulting in two lookup tables per participant, one for each wearable signal condition (Trunk, Trunk+Force), containing the lumbar moment estimates for each lifting task. Cumulative damage and injury risk estimated from fatigue failure models are driven by high load magnitudes [4,5,12]. Therefore, to improve estimates of the larger lumbar moments and avoid overfitting to lower lumbar moments (e.g., during upright standing), we applied a minimum threshold of 100 Nm on the target metric when training each algorithm. As such, only data points with lumbar moments above 100 Nm were included in the training dataset, prioritizing algorithm performance at the high lumbar moment magnitudes that occur during lifting.
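Leave-one-participant-out training with the 100 Nm target threshold might look like the sketch below. It assumes the per-sample feature matrix, lumbar moment target, and participant labels are already assembled as NumPy arrays; `loso_predictions` is a hypothetical helper. The threshold is applied only to the training folds, so every sample of the held-out participant still receives an estimate.

```python
import numpy as np
from sklearn.ensemble import GradientBoostingRegressor
from sklearn.model_selection import LeaveOneGroupOut


def loso_predictions(X, y, participant_ids, threshold_nm=100.0, n_trees=100):
    """Leave-one-participant-out estimates of lumbar moment (Nm)."""
    preds = np.full(len(y), np.nan)
    for train_idx, test_idx in LeaveOneGroupOut().split(X, y, groups=participant_ids):
        # Keep only training samples above the threshold so the model focuses on
        # the high moments that dominate fatigue damage.
        keep = train_idx[y[train_idx] > threshold_nm]
        model = GradientBoostingRegressor(n_estimators=n_trees, random_state=0)
        model.fit(X[keep], y[keep])
        # The held-out participant never contributes to its own model.
        preds[test_idx] = model.predict(X[test_idx])
    return preds
```

Peak values per lifting task could then be taken from these held-out estimates to populate the Trunk or Trunk+Force lookup tables.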

Peak lumbar moment lookup tables were then converted into peak load moment lookup tables. Peak load moment is another low back load metric and the specific input required by LiFFT [12,24]. This conversion from lumbar moment to load moment was achieved by subtracting out moment contributions from the upper body orientation and acceleration; the calculations are explained in detail below.

2.3. Converting Lumbar Moment into Load Moment

The peak load moment ($LM_{peak,i}$) was calculated for each $i$th lift [12,24] to assess LBD risk using LiFFT. Load moment ($LM$) is defined as the time-series moment created about the lumbar spine by the lifted object; it is calculated by multiplying the weight of the object by the horizontal distance from the object to the lumbar spine (L5/S1). Peak load moment refers to the maximum load moment during a lift. However, the machine learning algorithm from Matijevich et al. [21], summarized in Section 2.2, was trained to estimate lumbar moment ($M_{L5/S1}$). Lumbar moment includes moment contributions due to the weight of the lifted object ($LM$), the linear acceleration of the upper body and object ($(m_{HAT} + m_{obj})\,a\,L$), and the rotational acceleration of the upper body ($I_{HAT}\,\alpha$). Thus, to estimate load moment, we performed the following calculation:

$$LM = M_{L5/S1} - (m_{HAT} + m_{obj})\,a\,L - I_{HAT}\,\alpha$$

where:

$L$ is the horizontal distance from the lumbar spine to the center of mass of the head–arms–trunk (HAT), estimated using anthropometric tables [33]. The dataset did not include markers on the box; thus, we assumed that the lifted object was accelerating at the same rate as the upper body and was being held close to the center of mass of the upper body.

$m_{HAT}$ is the mass of the HAT, estimated using anthropometric tables [32].

$m_{obj}$ is the mass of the object being lifted in the analyzed trial.

$a$ is the acceleration of the upper body, estimated from the idealized trunk IMU signals.

$I_{HAT}$ is the moment of inertia of the HAT, estimated using anthropometric tables [32] and assuming that the HAT is a rectangular prism.

$\alpha$ is the angular acceleration of the upper body, estimated from the idealized trunk IMU signals.

These assumptions seemed reasonable for the lifting tasks performed because the mass of the upper body is much larger than the mass of the box, meaning that the contribution of the box to the acceleration-related moment terms is expected to be small by comparison. See Section 4.4 for further discussion of this topic, including post hoc analyses that provided additional support for these assumptions.
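A minimal sketch of the lumbar-to-load moment conversion, assuming the subtraction takes the form LM = M_L5/S1 - (m_HAT + m_obj) * a * L - I_HAT * alpha implied by the contributions listed above, with all inputs in SI units and time series sampled on a common clock. The function names are hypothetical; the anthropometric inputs would come from the tables cited above.

```python
import numpy as np


def load_moment(lumbar_moment, m_hat, m_obj, accel, lever_arm, i_hat, ang_accel):
    """Load moment time series (Nm) from lumbar moment (Nm).

    Assumed model: LM = M_L5/S1 - (m_HAT + m_obj) * a * L - I_HAT * alpha
    lumbar_moment (Nm), accel (m/s^2), ang_accel (rad/s^2): arrays over time
    m_hat, m_obj (kg), lever_arm L (m), i_hat (kg*m^2): scalars
    """
    lumbar_moment = np.asarray(lumbar_moment, dtype=float)
    # Moment from linearly accelerating the upper body and the object held near it.
    linear_term = (m_hat + m_obj) * np.asarray(accel, dtype=float) * lever_arm
    # Moment from angularly accelerating the upper body.
    rotational_term = i_hat * np.asarray(ang_accel, dtype=float)
    return lumbar_moment - linear_term - rotational_term


def peak_load_moment(*args, **kwargs):
    """Peak (maximum) load moment during one lift."""
    return float(np.max(load_moment(*args, **kwargs)))


# Usage: three samples of one lift; only the middle sample has nonzero accelerations.
plm = peak_load_moment([150.0, 210.0, 180.0], 40.0, 10.0, [0.0, 0.5, 0.0], 0.2, 2.0, [0.0, 1.5, 0.0])
# plm == 202.0 (210 - 50 * 0.5 * 0.2 - 2.0 * 1.5)
```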

2.4. Simulated Workdays

We explored 1000 simulated workdays per participant using LiFFT and empirical data from the peak load moment lookup tables. A simulated workday consisted of 800 to 2000 lifts randomly selected from the lookup table. For each lift ($i$), we extracted the peak load moment ($LM_{peak,i}$) from the lookup table (lab-based, Trunk, or Trunk+Force) and used it as an input to LiFFT [12,24]. The cumulative damage over all lifts ($CD$) was computed and then used to estimate the LBD risk for a simulated workday [12,24].

LBD risk was computed using a binary logistic regression equation previously developed using epidemiological databases with injury prevalence categories [13,14]. The term LBD risk refers to the probability of being in a high-risk job (not the probability of someone being injured). This definition originates from the epidemiological databases used to validate LiFFT [13,14].

For each simulated workday, LBD risk was computed separately from the lab-based, Trunk, and Trunk+Force lookup tables. In total, this simulated workday analysis resulted in a 1000 × 3 table of LBD risk results for each participant, where each row corresponded to one simulated workday and the three columns corresponded to the three conditions (lab-based, Trunk, and Trunk+Force). An overview of the lab-based data analysis, algorithm development, and LBD risk assessment is provided in Figure 1.
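The workday simulation can be sketched in Python as below. The LiFFT constants used here (damage per cycle = 1 / (902,416 * e^(-0.162 * PLM)) with PLM in Nm, and a logistic model on log10 cumulative damage with intercept 1.72 and slope 1.03) are those commonly quoted for LiFFT and should be checked against [12,24] before any real use. We also assume, as a design choice not stated in the text, that lifts are drawn with replacement and that each simulated workday reuses one set of task indices across the lab-based, Trunk, and Trunk+Force lookup tables, so the three risk estimates for a day are paired. All function and constant names are hypothetical.

```python
import numpy as np

# LiFFT constants as commonly quoted (assumption: verify against [12,24]).
DPC_SCALE = 902416.0
DPC_RATE = 0.162          # per Nm of peak load moment
LOGIT_INTERCEPT = 1.72
LOGIT_SLOPE = 1.03


def damage_per_cycle(peak_load_moment_nm):
    """LiFFT damage per lift from peak load moment (Nm)."""
    plm = np.asarray(peak_load_moment_nm, dtype=float)
    return 1.0 / (DPC_SCALE * np.exp(-DPC_RATE * plm))


def high_risk_probability(cumulative_damage):
    """Probability of being in a high-risk job, from cumulative damage."""
    logit = LOGIT_INTERCEPT + LOGIT_SLOPE * np.log10(cumulative_damage)
    return 1.0 / (1.0 + np.exp(-logit))


def simulate_workdays(lookup, n_days=1000, min_lifts=800, max_lifts=2000, seed=0):
    """Simulate workdays from per-task peak load moment lookup tables.

    lookup: dict mapping condition name -> 1D array of peak load moments (Nm),
    where index k refers to the same lifting task in every condition.
    Returns (condition names, n_days x n_conditions array of LBD risk).
    """
    rng = np.random.default_rng(seed)
    names = list(lookup)
    n_tasks = len(lookup[names[0]])
    risk = np.empty((n_days, len(names)))
    for day in range(n_days):
        n_lifts = rng.integers(min_lifts, max_lifts + 1)   # 800 to 2000 lifts
        tasks = rng.integers(0, n_tasks, size=n_lifts)     # random lifts, with replacement
        for col, name in enumerate(names):
            cd = damage_per_cycle(np.asarray(lookup[name])[tasks]).sum()
            risk[day, col] = high_risk_probability(cd)
    return names, risk
```

Because damage grows exponentially with peak load moment, a few Nm of systematic error per lift compounds over hundreds of lifts, which is exactly the sensitivity the comparison between conditions is meant to expose.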

2.5. Evaluation

To evaluate the accuracy of LBD risk estimates from the Trunk and Trunk+Force algorithms relative to lab-based LBD risk estimates (Figure 1), we calculated the root mean square error (RMSE) for each participant across all workdays. We then calculated the between-participant average RMSE.
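Per-participant RMSE and its between-participant average could be computed as in the sketch below. It assumes each participant's 1000 × 3 result table is a NumPy array with columns ordered lab-based, Trunk, Trunk+Force; the column constants and helper names are hypothetical.

```python
import numpy as np

LAB, TRUNK, TRUNK_FORCE = 0, 1, 2  # assumed column order of each 1000 x 3 table


def rmse(estimate, reference):
    """Root mean square error between two equally long risk series."""
    estimate = np.asarray(estimate, dtype=float)
    reference = np.asarray(reference, dtype=float)
    return float(np.sqrt(np.mean((estimate - reference) ** 2)))


def rmse_by_participant(tables, column):
    """RMSE of one condition vs. lab-based risk, one value per participant."""
    return np.array([rmse(t[:, column], t[:, LAB]) for t in tables])


# Usage: average over participants, e.g.
# mean_trunk_rmse = rmse_by_participant(tables, TRUNK).mean()
```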

To help answer the question of whether Trunk+Force signals improve injury risk assessment accuracy relative to Trunk signals alone, we performed a statistical analysis to compare RMSE results between conditions. First, a Kolmogorov–Smirnov test was used to confirm that the RMSE results were normally distributed. Subsequently, a dependent (paired-samples) t-test was performed to compare RMSE from the Trunk vs. Trunk+Force algorithms. The p-value and effect size (Cohen’s d) were calculated. The Pearson correlation coefficients between the lab-based and Trunk LBD risks and between the lab-based and Trunk+Force LBD risks were also computed.
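The statistical comparison could be sketched with SciPy as follows. Two choices are assumptions, since the text does not specify them: the RMSEs are standardized with their sample mean and SD before the one-sample Kolmogorov–Smirnov test (estimating these parameters from the data makes the test conservative), and Cohen's d is computed for paired data as the mean difference divided by the SD of the differences (often written d_z). The helper names are hypothetical.

```python
import numpy as np
from scipy import stats


def ks_normality_p(values):
    """One-sample KS test of standardized values against a standard normal."""
    values = np.asarray(values, dtype=float)
    z = (values - values.mean()) / values.std(ddof=1)
    return float(stats.kstest(z, "norm").pvalue)


def paired_comparison(rmse_trunk, rmse_trunk_force):
    """Paired t-test and Cohen's d_z for Trunk vs. Trunk+Force RMSE."""
    a = np.asarray(rmse_trunk, dtype=float)
    b = np.asarray(rmse_trunk_force, dtype=float)
    diff = a - b
    t = stats.ttest_rel(a, b)
    d_z = diff.mean() / diff.std(ddof=1)
    return {"t": float(t.statistic), "p": float(t.pvalue), "cohens_d": float(d_z)}


def risk_correlation(lab_risk, estimated_risk):
    """Pearson correlation between lab-based and estimated LBD risk."""
    r, _ = stats.pearsonr(lab_risk, estimated_risk)
    return float(r)
```

With this definition of d, the paired t statistic equals d_z multiplied by the square root of the number of participants, which is a quick consistency check on reported values.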

To gain insight into the practical significance of different levels of accuracy, we computed an additional summary metric: the percentage of simulated workdays on which Trunk or Trunk+Force LBD risk estimates were within ±10% of the lab-based LBD risk on the full 0–100% risk scale (i.e., within ±10 percentage points). For instance, if lab-based (ground truth) LBD risk was 65%, then Trunk or Trunk+Force estimates between 55% and 75% would be within ±10% based on this metric.
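The ±10% metric reduces to a simple count. A minimal sketch, assuming risks are expressed in percent (0 to 100) so that the tolerance is 10 percentage points; `percent_within` is a hypothetical helper name.

```python
import numpy as np


def percent_within(estimated_risk_pct, lab_risk_pct, tolerance_pct=10.0):
    """Percentage of workdays whose estimated risk is within +/- tolerance of lab-based risk."""
    est = np.asarray(estimated_risk_pct, dtype=float)
    lab = np.asarray(lab_risk_pct, dtype=float)
    return float(100.0 * np.mean(np.abs(est - lab) <= tolerance_pct))


# Example from the text: a lab-based risk of 65% accepts estimates from 55% to 75%.
print(percent_within([55.0, 75.0, 76.0, 64.0], [65.0] * 4))  # 75.0
```

Working in percentage points rather than fractions keeps the boundary cases exact; with fractions, 0.75 - 0.65 evaluates to slightly more than 0.10 in floating point and would be wrongly excluded.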