1. Introduction

The research work presented in this paper is mainly related to the wideband spectrum sensing problem, which consists of detecting the occupied or active frequency bands, at a given moment and in a given place, over a very large frequency domain (e.g., larger than 1 GHz). This information is necessary for cognitive radio systems [1], but also for some civil and military spectrum monitoring applications [2,3].

In this framework, standard spectral analysis methods lead to heavy or impractical spectrum sensing architectures because of the very high sampling frequency required and the huge quantity of data to be processed. A finite-dimensional signal with a sparse representation can be recovered exactly, and one with a compressible representation accurately, from a small set of linear, non-adaptive measurements [4]. Consequently, the compressed sensing approach [5,6,7,8] allows the input sampling constraint to be relaxed by taking advantage of spectrum sparsity [9]. The new requirement is then that, at a given time and in a given location, only a small part of the whole monitored frequency band is actually occupied. Hence, instead of first sampling at a high rate and then compressing the sampled data before processing, the data can be directly acquired in compressed form at a lower sampling rate.

A recent survey of wideband spectrum sensing approaches, with special attention paid to those that use sub-Nyquist sampling techniques, can be found in [10], and ref. [11] provides an overview of recent advances in this domain.

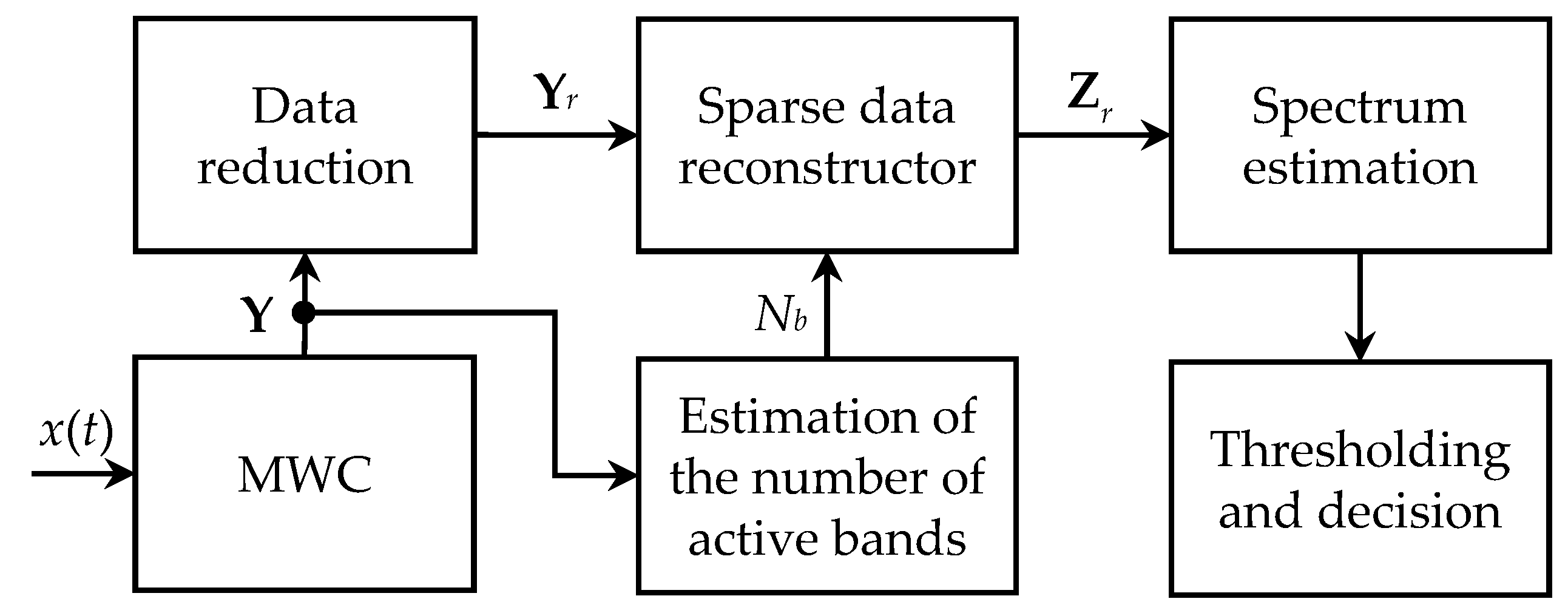

The general wideband spectrum sensing scheme considered in this paper is given in Figure 1. The first stage of the processing chain is the MWC (modulated wideband converter) [12,13]. It can sample the received signal at a rate much lower than the Nyquist rate without any information loss, provided that its frequency content is sparse enough. This specific Xsampling method is considered here simply because it was actually used to obtain the experimental results discussed in Section 5. It is worth noting, however, that other competing techniques have also been proposed over the last few years.

For instance, ref. [14] describes and discusses an Xsampling architecture named the analog-to-information converter (AIC), which aims at efficiently acquiring wideband signals. A blind sub-Nyquist sampling approach, referred to as the quadrature analog-to-information converter (QAIC), is proposed in [15]. Compared to the MWC, it relaxes the analog front-end bandwidth requirements at the cost of some added complexity, for an overall improvement in sensitivity and energy consumption. A random triggering-based modulated wideband compressive sampling (RT-MWCS) method is also proposed in [16] to facilitate the efficient realization of sub-Nyquist rate compressive sampling systems for sparse wideband signals. Compared to the MWC, RT-MWCS has a simpler system architecture and can be implemented with a single channel, at the cost of a longer sampling time. As a last example, a single-channel modulated wideband converter (SCMWC) scheme for the spectrum sensing of band-limited wide-sense stationary (WSS) signals was introduced in [17]. With a single antenna or sensor, this scheme reduces not only the sampling rate but also the hardware complexity.

Since the contribution presented in this paper is independent of the type of Xsampling scheme, the MWC architecture was selected for the reason mentioned above. It consists of M identical parallel signal processing paths. On each path, the wideband input signal x(t) is multiplied by a different $T_p$-periodic binary random signal, low-pass filtered, sampled at $f_s$, and converted from analog to digital. Note that $f_s$, which is most often equal to $f_p = 1/T_p$, is also twice the cut-off frequency of the low-pass filter.

The MWC output then consists of an M × N matrix Y, where N is the number of samples required to ensure a given spectral resolution and M ≤ N.

The matrix Y could be used directly as the input of the sparse data reconstructor, which solves the following equation:

$$\mathbf{Y} = \mathbf{W}\mathbf{Z} \qquad (1)$$

under the sparsity hypothesis for the expected solution, i.e., the L × N matrix Z. The M × L matrix W involved in Equation (1) is known, its elements being calculated directly from the periodic binary random signals (scramblers) used by the MWC.
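The text does not detail how the entries of W follow from the scramblers. As a hedged illustration, the sketch below computes the Fourier-series coefficients of a $T_p$-periodic ±1 waveform made of $M_p$ equal-width chips per period, using the closed form of the standard MWC formulation (Mishali and Eldar), in which the entries of W are built from such coefficients. The chip count, the harmonic range, and all function names are assumptions. The way harmonic indices map onto the L columns of W, as well as the sign and scaling conventions, may differ from those used in this paper.

```python
import numpy as np


def scrambler_fourier_coeffs(alpha, harmonics):
    """Fourier-series coefficients c_l, for each l in `harmonics`, of a
    Tp-periodic waveform equal to alpha[k] (+1 or -1) on the k-th of Mp
    equal-width chips of each period:
        c_l = (1/Tp) * integral over one period of p(t) exp(-j 2 pi l t / Tp) dt.
    The integral is evaluated in closed form chip by chip (Tp cancels out)."""
    alpha = np.asarray(alpha, dtype=float)
    mp = alpha.size
    theta = np.exp(-2j * np.pi / mp)
    k = np.arange(mp)
    coeffs = np.empty(len(harmonics), dtype=complex)
    for idx, l in enumerate(harmonics):
        # Integral over chip 0 divided by Tp; chip k adds the factor theta**(l*k)
        d = 1.0 / mp if l == 0 else (1.0 - theta**l) / (2j * np.pi * l)
        coeffs[idx] = d * np.sum(alpha * theta ** (l * k))
    return coeffs


def scrambler_matrix(signs, harmonics):
    """Hypothetical helper: stacks one coefficient row per MWC channel."""
    return np.vstack([scrambler_fourier_coeffs(a, harmonics) for a in signs])


def numerical_coeffs(alpha, harmonics, oversample=1000):
    """Midpoint-rule approximation of the same integrals (with Tp = 1)."""
    alpha = np.asarray(alpha, dtype=float)
    n = alpha.size * oversample
    t = (np.arange(n) + 0.5) / n               # midpoints of a fine time grid
    p = np.repeat(alpha, oversample)           # piecewise-constant waveform
    return np.array([np.mean(p * np.exp(-2j * np.pi * l * t)) for l in harmonics])


rng = np.random.default_rng(0)
signs = rng.choice([-1.0, 1.0], size=(4, 16))  # 4 channels, 16 chips each
harmonics = list(range(-5, 6))                 # 11 harmonic indices
W = scrambler_matrix(signs, harmonics)         # 4 x 11 complex matrix
```

The numerical helper is included only as a sanity check: on a fine grid it should agree with the closed form, and the l = 0 coefficient reduces to the mean of the chip signs.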

In the compressed sensing approach, the price to pay for the reduction in the input sampling rate is the additional processing required for signal recovery. The dimension of the input matrix Y used for reconstruction is therefore of particular importance. Since typical values of N may be very large, it is proposed to reduce the data matrix Y before sparse data reconstruction. Compared to state-of-the-art published research [18,19,20], our approach directly exploits the intrinsic sparsity of the matrix Y. Hence, rather than using Y as the input of the sparse data reconstructor, the following matrix is considered instead:

$$\mathbf{Y}_r = \mathbf{Y}\mathbf{V} \qquad (2)$$

where V is the N × M matrix provided by the "economy size" singular value decomposition (SVD) of the matrix Y, i.e.:

$$\mathbf{Y} = \mathbf{U}\boldsymbol{\Sigma}\mathbf{V}^H \qquad (3)$$

In this way, the sparse data reconstructor works with an M × M instead of an M × N input matrix, which considerably reduces the computational burden for large values of N. Indeed, problem (1) can be rewritten as follows:

$$\mathbf{Y}_r = \mathbf{W}\mathbf{Z}_r \qquad (4)$$

where $\mathbf{Z}_r = \mathbf{Z}\mathbf{V}$.
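The reduction step can be sketched in a few lines of NumPy. This is a minimal illustration under assumptions: a random complex Gaussian W stands in for the scrambler-derived matrix, the data are noise-free, and the sizes are borrowed from the simulation section (M = 21, N = 1024, L = 32, eight non-zero rows). The sketch checks that the reduced model holds, that Y is recovered as $\mathbf{Y}_r\mathbf{V}^H$, and that Z is recovered as $\mathbf{Z}_r\mathbf{V}^H$ because its rows lie in the row space of Y here.

```python
import numpy as np


def reduce_data(Y):
    """Economy-size SVD data reduction: returns Y_r = Y V (M x M) and V^H."""
    U, s, Vh = np.linalg.svd(Y, full_matrices=False)  # Y = U diag(s) Vh
    Y_r = Y @ Vh.conj().T                              # equals U diag(s)
    return Y_r, Vh


rng = np.random.default_rng(0)
M, N, L, K = 21, 1024, 32, 8     # sizes borrowed from the simulation section
W = rng.standard_normal((M, L)) + 1j * rng.standard_normal((M, L))
Z = np.zeros((L, N), dtype=complex)
rows = rng.choice(L, size=K, replace=False)        # K non-zero rows (sparse Z)
Z[rows] = rng.standard_normal((K, N)) + 1j * rng.standard_normal((K, N))
Y = W @ Z                                          # noise-free model (1)

Y_r, Vh = reduce_data(Y)
Z_r = Z @ Vh.conj().T                              # Z_r = Z V, model (4)
print(Y.shape, "->", Y_r.shape)                    # (21, 1024) -> (21, 21)
```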

Note that the sparse data reconstructor also requires an estimate of the number of active frequency bands $K$. This task can be carried out by different algorithms, such as information-theoretic criteria [21], which use the M singular values of Y given by the diagonal of the $\boldsymbol{\Sigma}$ matrix.
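The text does not specify which information-theoretic criterion is used. As one hedged example, the sketch below applies the minimum description length (MDL) criterion of Wax and Kailath to the eigenvalues $s_m^2/N$ of the sample covariance, which are obtained directly from the singular values of Y. The sketch assumes circular complex white noise and more samples than channels. The function name and the synthetic test data (three components, eight channels) are hypothetical.

```python
import numpy as np


def mdl_order(singular_values, n_snapshots):
    """Estimate the number of signal components with the MDL criterion
    (Wax-Kailath form for complex data), from the singular values of Y."""
    lam = np.sort(np.asarray(singular_values, dtype=float) ** 2 / n_snapshots)[::-1]
    lam = np.maximum(lam, np.finfo(float).tiny)   # guard against log(0)
    m, n = lam.size, n_snapshots
    scores = []
    for k in range(m):
        tail = lam[k:]                            # presumed noise eigenvalues
        geo = np.exp(np.mean(np.log(tail)))       # geometric mean
        ari = np.mean(tail)                       # arithmetic mean
        fit = -n * tail.size * np.log(geo / ari)  # log-likelihood term
        penalty = 0.5 * k * (2 * m - k) * np.log(n)
        scores.append(fit + penalty)
    return int(np.argmin(scores))


rng = np.random.default_rng(1)
M, N, K = 8, 1000, 3
A = rng.standard_normal((M, K)) + 1j * rng.standard_normal((M, K))
S = rng.standard_normal((K, N)) + 1j * rng.standard_normal((K, N))
noise = 0.1 * (rng.standard_normal((M, N)) + 1j * rng.standard_normal((M, N)))
Y = A @ S + noise
s = np.linalg.svd(Y, compute_uv=False)            # only the singular values
K_hat = mdl_order(s, N)
```

Only the singular values are needed, which is consistent with reusing the diagonal of $\boldsymbol{\Sigma}$ already computed for the data reduction.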

The reduced data matrix $\mathbf{Y}_r$ and the estimated number of active frequency bands $\hat{K}$ are then used in the next stage to find the sparse problem solution $\mathbf{Z}_r$. This is done either with a greedy algorithm, such as OMP (orthogonal matching pursuit) [22,23], or with an optimization-based one, such as LASSO (least absolute shrinkage and selection operator) [24,25]. In [26], the authors showed that LASSO is a suitable choice for compressive spectrum sensing and recovery in wideband 5G cognitive radio networks.

As will be demonstrated in this paper, greedy algorithms are already data reduction invariant and do not require any modification when used in this framework. However, the standard LASSO algorithm does not have this useful property because of the standard $\ell_1$ norm involved in the optimization process.

In order to overcome this drawback of the standard LASSO algorithm, a new data reduction invariant norm is first introduced to replace the standard $\ell_1$ norm in the optimization process. It is then demonstrated that the resulting version of the LASSO algorithm is data reduction invariant. To the best of our knowledge, this is the only data reduction invariant version of the LASSO algorithm proposed so far in the literature.

Finally, once the matrix $\mathbf{Z}_r$ is provided by the sparse data reconstructor, the input signal spectrum is estimated, and a threshold is determined in order to decide which frequency bands are active.

The rest of the paper is organized as follows. Section 2 demonstrates that greedy algorithms for sparse signal reconstruction are already data reduction invariant. The new version of the LASSO algorithm is introduced in Section 3, where its invariance with respect to data reduction is also demonstrated. The performance of the proposed algorithm is then evaluated using simulated data in Section 4 and measured data in Section 5. Section 6 summarizes the research work presented in this paper and draws some conclusions. Some mathematical preliminaries are provided in Appendix A, the standard OMP algorithm is briefly recalled in Appendix B, and the data reduction invariance of the newly defined norm is demonstrated in Appendix C.

The general notations used in this paper are as follows. Matrices and vectors are denoted by boldface symbols, uppercase for matrices and lowercase for vectors. $(\cdot)^T$ and $(\cdot)^H$ denote the transpose and the Hermitian (conjugate transpose) operators, respectively. $\mathbf{I}_M$ denotes the M × M identity matrix. $\|\cdot\|_1$ and $\|\cdot\|_F$ stand for the $\ell_1$ norm and the Frobenius norm, respectively. Other specific notations are defined in the next sections.

2. Data Reduction Invariance of Greedy Algorithms

In this section, it is shown that greedy reconstruction algorithms are invariant with respect to data reduction. Although the invariance property is demonstrated for the OMP algorithm only, this result can be extended by analogy to the other algorithms of this class, such as compressive sampling matching pursuit (CoSaMP) [27,28] or iterative hard thresholding (IHT) [8,29]. An extensive comparison between the proposed algorithm and OMP, in terms of mean square error and detection probability, is carried out in Section 4. In addition, some results obtained with the CoSaMP and IHT algorithms on measured data are provided in Section 5.

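Let us consider the two problems corresponding to the original and reduced data matrices, respectively:

$$\mathbf{Y} = \mathbf{W}\mathbf{Z}, \qquad \mathbf{Y}_r = \mathbf{W}\mathbf{Z}_r \qquad (5)$$

where $\mathbf{Y}_r = \mathbf{Y}\mathbf{V}$ and $\mathbf{Z}_r = \mathbf{Z}\mathbf{V}$, as already mentioned above.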

Let us also define the following notations:

: a subset of with formed by the indices of non-zero rows of the solution , at the kth iteration;

: the matrix formed with the columns of whose indices belong to ;

: the optimized matrix;

.

It can be readily noticed that the matrix is a projector, since .

Let us finally denote by

the set of all matrices

A invariant with respect to

(fixed points of the projector), so that:

For the first problem in Equation (5), the residual can be written as follows:

where:

Since

, according to Lemma A1 and Lemma A3 (see Appendix A), Equations (7) and (8) result in:

For the second problem in Equation (5), the residual can be written as follows:

where:

Since it has already been shown that

, right-multiplying Equation (11) by

results in:

and finally:

Hence, reducing the data matrix does not modify the residual norm. The OMP algorithm aims at minimizing the residual norm, and the two optimized matrices are linked by the bijective relationship (11). Consequently, the algorithm can operate on the reduced data matrix without changing the final result.
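The invariance of the residual norm can be checked numerically. The sketch below uses hypothetical synthetic data (random complex W and Y) and a fixed, arbitrarily chosen set of column indices that stands in for the support of the current OMP iteration. It computes the least-squares residual on the original and on the reduced data and compares the two Frobenius norms.

```python
import numpy as np


def ls_residual(Y, W, support):
    """Residual of the least-squares fit of Y on the columns W[:, support]."""
    Ws = W[:, support]
    Zs, *_ = np.linalg.lstsq(Ws, Y, rcond=None)
    return Y - Ws @ Zs


rng = np.random.default_rng(2)
M, N, L = 21, 1024, 32
W = rng.standard_normal((M, L)) + 1j * rng.standard_normal((M, L))
Y = rng.standard_normal((M, N)) + 1j * rng.standard_normal((M, N))
_, _, Vh = np.linalg.svd(Y, full_matrices=False)
Y_r = Y @ Vh.conj().T                        # reduced data, M x M

support = [3, 7, 19]                         # hypothetical index set
R = ls_residual(Y, W, support)               # M x N residual
R_r = ls_residual(Y_r, W, support)           # M x M residual, equals R V

norm_full = np.linalg.norm(R, "fro")
norm_red = np.linalg.norm(R_r, "fro")
```

The two norms agree because the rows of R lie in the row space of Y, which V spans, so right-multiplying by V preserves their lengths.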

Furthermore, the key elements involved in the OMP algorithm are the scalar products between the columns of the matrix $\mathbf{W}$ and the residual. More precisely, the relevant information is contained in the diagonal of the matrix below:

Hence, this matrix does not change when a reduced data matrix is used instead of the original one. Consequently, there exists an isomorphism between the intermediate calculations performed by the OMP algorithm on the two data matrices. All the intermediate variables are linked by bijective relationships, and all the elements involved in the decision-making steps (i.e., the residual norm and the scalar products) are invariant.
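To illustrate the isomorphism argument end to end, the sketch below runs simultaneous OMP on both the original and the reduced data. Simultaneous OMP is the multiple-measurement variant of OMP: it selects columns by the row energies of $\mathbf{W}^H\mathbf{R}$, i.e., by the diagonal discussed above, normalized here by the column energies. It is a generic textbook variant, not necessarily identical to the OMP algorithm recalled in Appendix B. The data, sizes, and function name are illustrative assumptions.

```python
import numpy as np


def somp(Y, W, k):
    """Simultaneous OMP: greedily selects k columns of W to explain all
    columns of Y, then returns the support and the row-sparse solution."""
    col_energy = np.sum(np.abs(W) ** 2, axis=0)
    support, R = [], Y.copy()
    for _ in range(k):
        # Diagonal of W^H R R^H W, normalized by the column energies
        scores = np.sum(np.abs(W.conj().T @ R) ** 2, axis=1) / col_energy
        scores[support] = -np.inf            # never pick a column twice
        support.append(int(np.argmax(scores)))
        Zs, *_ = np.linalg.lstsq(W[:, support], Y, rcond=None)
        R = Y - W[:, support] @ Zs           # updated residual
    Z = np.zeros((W.shape[1], Y.shape[1]), dtype=np.result_type(W, Y))
    Z[support] = Zs                          # rows in selection order
    return sorted(support), Z


rng = np.random.default_rng(3)
M, N, L, K = 21, 1024, 32, 8
W = rng.standard_normal((M, L)) + 1j * rng.standard_normal((M, L))
Z_true = np.zeros((L, N), dtype=complex)
Z_true[rng.choice(L, size=K, replace=False)] = (
    rng.standard_normal((K, N)) + 1j * rng.standard_normal((K, N)))
Y = W @ Z_true + 0.01 * rng.standard_normal((M, N))

_, _, Vh = np.linalg.svd(Y, full_matrices=False)
supp_full, Z_full = somp(Y, W, K)               # runs on M x N data
supp_red, Z_red = somp(Y @ Vh.conj().T, W, K)   # runs on M x M data
```

Both runs select the same columns, and mapping the reduced solution back with $\mathbf{V}^H$ reproduces the full-data solution.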

3. Data Reduction Invariant Version of LASSO Algorithm

A new version of the LASSO algorithm, invariant to data reduction, is introduced in this section. A key point to keep in mind is that it operates on the reduced data matrix, as explained previously. It therefore optimizes $\mathbf{Z}_r$ instead of $\mathbf{Z}$, which results in a significant complexity reduction. Since $\mathbf{Z}_r = \mathbf{Z}\mathbf{V}$, the sparse solution can then easily be recovered from the optimized matrix as $\mathbf{Z} = \mathbf{Z}_r\mathbf{V}^H$, provided that the rows of $\mathbf{Z}$ lie in the row space of $\mathbf{Y}$.

In the case of the standard LASSO algorithm, $\mathbf{Z}_r$ is obtained as the solution of the following optimization problem:

The objective function is not invariant with respect to data reduction because of the $\ell_1$ norm term. Indeed, $\|\mathbf{Z}_r\|_1 = \|\mathbf{Z}\mathbf{V}\|_1$, which is in general not equal to $\|\mathbf{Z}\|_1$. Hence, it is proposed to replace it with a modified $\ell_1$ norm, defined as follows:

This newly defined norm differs from the Frobenius norm because of the square root under the trace operator. According to Equations (A4) and (16), it is also data reduction invariant (see Appendix C for further details), hence its name, the "invariant $\ell_1$ norm".
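A quick numerical check illustrates why the entry-wise $\ell_1$ penalty of LASSO does not survive the reduction while a suitably defined norm can. The mixed $\ell_{2,1}$ norm used below (the sum of the $\ell_2$ norms of the rows) is only an illustrative stand-in. It was chosen because row norms are preserved when the rows of Z lie in the row space of $\mathbf{V}^H$. It is not claimed to be the exact definition given in Equation (16). The sizes and data are hypothetical.

```python
import numpy as np


def l1_norm(A):
    """Entry-wise l1 norm: sum of the moduli of all entries."""
    return np.sum(np.abs(A))


def l21_norm(A):
    """Mixed l2,1 norm: sum over rows of the row l2 norms."""
    return np.sum(np.linalg.norm(A, axis=1))


rng = np.random.default_rng(4)
M, N, L, K = 21, 1024, 32, 8
W = rng.standard_normal((M, L)) + 1j * rng.standard_normal((M, L))
Z = np.zeros((L, N), dtype=complex)
Z[rng.choice(L, size=K, replace=False)] = (
    rng.standard_normal((K, N)) + 1j * rng.standard_normal((K, N)))
_, _, Vh = np.linalg.svd(W @ Z, full_matrices=False)
Z_r = Z @ Vh.conj().T            # rows of Z lie in the row space of Vh here

print(l1_norm(Z), l1_norm(Z_r))                # differ
print(l21_norm(Z), l21_norm(Z_r))              # coincide up to rounding
print(np.linalg.norm(Z), np.linalg.norm(Z_r))  # Frobenius: coincide as well
```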

Consequently, if the initial solution (LASSO starting point) belongs to the set of matrices invariant with respect to the projector defined in Section 2, the final solution of the data reduction invariant LASSO algorithm can be obtained from:

In order to properly describe the LASSO algorithm in its new invariant form, let us consider the following notations:

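$\mathbf{Z}_{r,-i}$: the matrix $\mathbf{Z}_r$ without its ith row;

$\mathbf{W}_{-i}$: the matrix $\mathbf{W}$ without its ith column;

$\mathbf{z}_{r,i}$: the ith row of the matrix $\mathbf{Z}_r$;

$\mathbf{w}_i$: the ith column of the matrix $\mathbf{W}$.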

One of the basic ideas of the LASSO algorithm is to transform the multidimensional optimization problem (17) into a set of one-dimensional optimization problems.

This is done by expressing the objective function as a sum of two terms: the first depends on only one component (row) of $\mathbf{Z}_r$, and the second depends on all its other components. The objective function can thus be optimized successively with respect to each component of $\mathbf{Z}_r$. Iterated until convergence, this amounts to optimizing it jointly with respect to all its components.

The difficulty introduced by the new invariant norm is that a given component of $\mathbf{Z}_r$ can no longer be separated directly, because of the square root function.

By denoting

, the following expression holds:

According to the definition of the invariant

norm, it can also be written as:

then becomes:

Let us now focus only on the second term of

since the first one does not depend on

. Let us also define the following notations:

Hence, the objective function becomes:

The value of µ minimizing the objective function can then be obtained from:

If Equation (24) yields a negative value for µ, take µ = 0, since µ must be non-negative according to Equation (22).

For a fixed value of

µ, by keeping only the terms depending on

, the objective function to be minimized with respect to

can be written as:

The Lagrange multiplier method leads to the following objective function:

where θ stands for the Lagrange multiplier.

Developing Equation (26) to make the components of

appear results in:

The phase

is involved only in the product

, and it can be readily seen that

F is minimized when:

so that:

The value of

that minimizes

F is then obtained from:

From Equations (28) and (30), it can be inferred that:

and because

is a unit vector, it can be finally expressed as:

In practice, the unit vector is first calculated using Equation (32). Then µ is evaluated from Equation (24), λ having been estimated beforehand using the cross-validation method [30]. Finally, the ith row of $\mathbf{Z}_r$ is computed from Equation (22).

Algorithm 1 below summarizes the processing flow associated with the proposed data reduction invariant LASSO technique.

| Algorithm 1 Processing flow for the proposed data reduction invariant LASSO technique |
|---|
| Input: M × N matrix $\mathbf{Y}$ at the output of the MWC scheme, M × L Xsampling-related matrix $\mathbf{W}$, and N × M matrix $\mathbf{V}$, obtained from the SVD of the matrix $\mathbf{Y}$ using Equation (3). |
| Initialization: Compute $\mathbf{Y}_r$ according to Equation (2). Take an initial solution for the L × M matrix $\mathbf{Z}_r$ belonging to the set of fixed points of the projector (see Section 2). Find the optimal λ value using the cross-validation method [30]. |
| For i ← 1 to L, do: |
| Obtain the matrices $\mathbf{W}_{-i}$ and $\mathbf{Z}_{r,-i}$ by removing the ith column from the matrix $\mathbf{W}$ and the ith row from the matrix $\mathbf{Z}_r$, respectively. In addition, obtain the ith column of the matrix $\mathbf{W}$ and denote it by $\mathbf{w}_i$. |
| Calculate the intermediate quantity of Equation (20). |
| Calculate the intermediate quantities of Equation (22). |
| Calculate the unit vector according to Equation (32) and then µ according to Equation (24). |
| Calculate the new ith row of $\mathbf{Z}_r$ according to Equation (22). |
| Update the estimated solution by replacing its ith row with the new one. |
| End for |
| Output: Calculate the final estimated solution, i.e., the L × N matrix $\hat{\mathbf{Z}} = \hat{\mathbf{Z}}_r\mathbf{V}^H$. |
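The row-by-row structure of Algorithm 1 can be illustrated with a hedged sketch. It does not reproduce Equations (20) to (32). Instead, it assumes the objective $\frac{1}{2}\|\mathbf{Y}_r - \mathbf{W}\mathbf{Z}_r\|_F^2 + \lambda\sum_i \|\mathbf{z}_{r,i}\|_2$, i.e., a group LASSO with one group per row, solved by cyclic block coordinate descent. For this objective, each row update splits in the same way as above: a non-negative magnitude µ, clipped to zero when negative, times a unit-norm direction. Here λ is fixed by hand rather than by cross-validation, and all names and data are hypothetical.

```python
import numpy as np


def row_lasso(Y, W, lam, n_sweeps=100):
    """Cyclic block coordinate descent for
        0.5 * ||Y - W Z||_F^2 + lam * sum_i ||Z[i]||_2,
    updating one row Z[i] at a time, starting from Z = 0."""
    L = W.shape[1]
    Z = np.zeros((L, Y.shape[1]), dtype=np.result_type(W, Y))
    col_energy = np.sum(np.abs(W) ** 2, axis=0)   # ||w_i||^2
    R = Y.copy()                                   # residual Y - W Z (Z = 0)
    for _ in range(n_sweeps):
        for i in range(L):
            w = W[:, i]
            R_i = R + np.outer(w, Z[i])            # residual without row i
            c = w.conj() @ R_i                     # w_i^H R_i, one row vector
            c_norm = np.linalg.norm(c)
            mu = (c_norm - lam) / col_energy[i]    # magnitude of the new row
            # Negative magnitude: the whole row is set to zero; otherwise the
            # row is mu times the unit-norm direction c / ||c||.
            Z[i] = mu * c / c_norm if mu > 0 else 0.0
            R = R_i - np.outer(w, Z[i])            # restore the full residual
    return Z


rng = np.random.default_rng(5)
M, N, L, K = 21, 1024, 32, 8
W = rng.standard_normal((M, L)) + 1j * rng.standard_normal((M, L))
Z_true = np.zeros((L, N), dtype=complex)
Z_true[rng.choice(L, size=K, replace=False)] = (
    rng.standard_normal((K, N)) + 1j * rng.standard_normal((K, N)))
Y = W @ Z_true + 0.05 * (rng.standard_normal((M, N)) + 1j * rng.standard_normal((M, N)))

_, _, Vh = np.linalg.svd(Y, full_matrices=False)
lam = 0.2 * np.max(np.linalg.norm(W.conj().T @ Y, axis=1))  # hand-picked
Z_full = row_lasso(Y, W, lam)                 # works on M x N data
Z_red = row_lasso(Y @ Vh.conj().T, W, lam)    # works on M x M data
```

Because the starting point Z = 0 is a fixed point of the projector, every iterate on the reduced data equals the corresponding full-data iterate multiplied by V. Mapping `Z_red` back with $\mathbf{V}^H$ therefore reproduces `Z_full`, while each inner update touches M instead of N columns.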

A comparison of complexity can be finally performed between the proposed algorithm and the standard one. Thus, based on Algorithm 1 presented above, it can be readily established that the complexity is reduced from for the standard LASSO to for the data invariant LASSO algorithm. It can be noticed that the complexity gain increases with the value of L since the proposed algorithm reduces its quadratic dependence on this parameter to a linear one. An additional complexity result is provided in the next section in terms of the number of multiplications for a given set of simulation parameters.

4. Simulation Results

This section aims to illustrate the performance of the proposed invariant LASSO algorithm in a simulated wideband spectrum sensing scenario characterized by the following parameters:

Monitored frequency band: ;

The number of active frequency bands: 8;

Bandwidth of each active frequency band: ;

Spectral resolution: ;

The number of MWC parallel processing paths: M = 21;

Sampling frequency on each path: ;

The number of samples acquired on each path: N = 1024.

Note that for this set of parameters, the sizes of the matrices Y and Z are reduced from 21 × 1024 and 32 × 1024 to 21 × 21 and 32 × 21, respectively. Given the real nature of the analyzed signal, the eight active frequency bands also have to be considered in pairs, so that they actually correspond to four transmitters.

Figure 2 shows the variation in the cost function during the cross-validation process. Its minimum value is obtained for

. This value of

λ does not depend on the noise level and is used by the invariant LASSO algorithm, as explained in the previous section.

Figure 3 illustrates the performance of the new algorithm for two signal-to-noise ratios (SNRs), 30 dB and 10 dB. Note that these two values are in-band SNRs, since they are measured only within the active bands. Calculated over the whole monitored band, the same SNRs correspond to 19 dB and −1 dB, respectively.
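The constant 11 dB gap between the two SNR definitions can be related to band occupancy. The following relation assumes white noise with a flat spectral density $N_0$ over the monitored band $B_{\mathrm{mon}}$ and all signal power $P_s$ contained in the occupied bandwidth $B_{\mathrm{occ}}$. Neither assumption is stated in the text. Under them, the two SNRs differ only by the occupancy ratio:

```latex
\mathrm{SNR}_{\mathrm{whole}} = \frac{P_s}{N_0 B_{\mathrm{mon}}}
  = \mathrm{SNR}_{\mathrm{in}}\,\frac{B_{\mathrm{occ}}}{B_{\mathrm{mon}}}
\quad\Longrightarrow\quad
\mathrm{SNR}_{\mathrm{whole}}\,[\mathrm{dB}] = \mathrm{SNR}_{\mathrm{in}}\,[\mathrm{dB}]
  + 10\log_{10}\frac{B_{\mathrm{occ}}}{B_{\mathrm{mon}}}
```

With 19 − 30 = −1 − 10 = −11 dB, this corresponds to an occupancy ratio of about $10^{-1.1} \approx 0.08$, i.e., roughly 8% of the monitored band, under the stated assumptions.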

As can be readily seen, the active bands are very well reconstructed for an in-band SNR of 30 dB. They can be perfectly detected using an appropriate threshold, which is iteratively and blindly updated, as already proposed in [31].

For an in-band SNR of 10 dB, the results are still exploitable, but the algorithm reaches its limits. This is because, in this configuration, the LASSO algorithm introduces an SNR loss of about 11 dB in the reconstructed bands. Indeed, as can be noticed from Figure 3b, this SNR loss makes the detection of active bands increasingly challenging and leads to higher false alarm rates and bandwidth estimation errors.

In order to evaluate the complexity gain for the considered set of simulation parameters (M = 21, L = 32), the number of multiplications is also shown in Figure 4 as a function of N. The proposed algorithm yields a significant complexity reduction, which becomes slightly larger as N increases.

The performance of the new algorithm was finally evaluated over a wide range of in-band SNRs (5–30 dB) and false alarm probabilities, in terms of the normalized mean error and the detection rate (Figure 5). The same metrics obtained with the OMP algorithm are also plotted for comparison purposes.

These two performance metrics were obtained at the output of a "threshold and detect" scheme, using Monte Carlo simulations with 1000 independent noise realizations and random positions of the active frequency bands. The threshold is calculated to keep the false alarm rate constant at the output of this scheme. The normalized error is then obtained as the complement to one of the relative number of threshold overruns inside the active frequency bands. The detection rate is calculated as the relative number of detected frequency bands. A frequency band is considered detected if there is at least one threshold overrun inside it.
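The two metrics can be sketched as follows, under stated assumptions. The spectrum is a vector of per-bin values, and the active bands are given as half-open ranges of bin indices. The normalized error is read as one minus the fraction of in-band bins that exceed the threshold. The constant false alarm threshold is taken as an empirical quantile of noise-only spectra. That quantile rule is an assumption for illustration, not the iterative blind threshold of [31], and all function names are hypothetical.

```python
import numpy as np


def cfar_threshold(noise_only_spectra, pfa):
    """Threshold exceeded by a fraction pfa of noise-only spectral bins
    (empirical quantile over Monte Carlo noise-only realizations)."""
    return np.quantile(np.asarray(noise_only_spectra).ravel(), 1.0 - pfa)


def detection_metrics(spectrum, threshold, bands):
    """bands: list of half-open (start, stop) bin ranges of the active bands.

    Returns (detection rate, normalized error). A band counts as detected if
    at least one of its bins exceeds the threshold; the normalized error is
    one minus the fraction of in-band bins exceeding the threshold."""
    over = np.asarray(spectrum) > threshold
    detected = [over[a:b].any() for a, b in bands]
    in_band = np.concatenate([over[a:b] for a, b in bands])
    return float(np.mean(detected)), float(1.0 - np.mean(in_band))


spectrum = np.zeros(100)
spectrum[10:20] = 2.0          # band 1: every bin above the threshold
spectrum[55] = 2.0             # band 2: a single overrun
rate, err = detection_metrics(spectrum, 1.0, [(10, 20), (50, 60), (80, 90)])
```

In this toy case, two of the three bands are detected, and 11 of the 30 in-band bins exceed the threshold. The detection rate is therefore 2/3, and the normalized error is 19/30.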

The results depicted here were obtained for a single false alarm probability within this range, but they are similar for the other values. The performances of the two algorithms are close. However, the proposed algorithm appears to be more robust to noise, while OMP provides a slightly better detection rate at high SNRs.

5. Experimental Results

The proposed data reduction invariant LASSO algorithm was also evaluated using measured data. Our experimental testbed is shown in Figure 6, and its block diagram, including the external instruments, is provided in Figure 7. It is based on a 4-channel MWC analog board, which is described in more detail in a previously published paper [13] and is able to monitor wideband spectral domains up to 1 GHz.

Table 1 provides the main parameters of our experimental testbed.

Our analog front-end board for compressed sampling, with its four physical channels, is shown in Figure 8, while Figure 9 illustrates its operating principle. At the input, an SCA-4-10+ splitter from Mini-Circuits©, with less than 7 dB loss, is used to feed the input signal to the four channels.

Like channel 2 depicted in Figure 9, each channel includes an M1-0008 mixer from Marki©. The mixer receives the amplified radio-frequency signal to be analyzed on its RF input and a pseudo-random modulating waveform on its LO input.

The mixer output (IF) goes through a low-pass filter, an SXLP-36+ from Mini-Circuits©, with a 3 dB cut-off frequency of 40 MHz. This filter was chosen because it has a very flat response (variations lower than 1 dB) over the 0–36 MHz band and a sharp cut-off above this band.

For our experiments, the radio-frequency signal was provided by a Keysight 81180A arbitrary waveform generator. An Avnet ML605 DSP Kit, shown in Figure 10, was used to generate the pseudo-random modulating waveforms. It includes a Xilinx Virtex-6 FPGA, as well as digital-to-analog and analog-to-digital capabilities. Moreover, it enables the selection of each channel sequence from a compiled list. If necessary, recompilation allows new sequences to be added or some parameters, such as the bit rate, to be changed. The embedded Gigabit Transceiver X (GTX) high-speed serializer-deserializer transceivers 0 to 3 were connected to channels 1–4 of the analog front-end board.

A DSO90404A Agilent Infiniium 4-channel oscilloscope was used to acquire and save the output signals. To synchronize the acquisition with the modulating waveforms, a pulse signal was generated by GTX 7 of the ML605 board and connected to the external trigger input of the oscilloscope.

The acquisition system was calibrated using the approach described in [32].

Figure 11 shows the relative error between the observed and predicted system outputs, as a function of output frequency, for both the calibrated and the uncalibrated system, which clearly demonstrates the benefit of the calibration stage. The relative error was evaluated using a formula similar to the criterion considered in [33]:

Here, o(f) denotes the observed output signal corresponding to the subband centered on f (the whole frequency band of the signal at the output of the acquisition system was divided into 28 subbands). Similarly, p(f) denotes the output signal corresponding to the subband centered on f, as predicted by either the calibrated model or the theoretical model. In either case, a calibrated low-pass filter is always included, even for the theoretical model: the true frequency response of the filter is taken into account in the related equations.

The wideband spectrum sensing results provided by the proposed reconstruction algorithm, using original and reduced data, are shown in Figure 12 and Figure 13 for 2 and 6 active transmitters, respectively.

For the scenario with two active transmitters, i.e., four active frequency bands (Figure 12), both transmitters are well detected. The amplitude of the upper-frequency bands is lower than expected because the corresponding transmitter carrier is close to the upper limit of the monitored frequency band. We have observed that the reconstruction is usually less reliable in this area, probably due to stronger non-linear effects in the analog front-end at very high frequencies. It is therefore interesting to see that the transmitter is detected even under these difficult conditions.

For the scenario with six active transmitters, i.e., 12 active frequency bands (Figure 13), the first five transmitters are well detected, while the last one seems to be lost. As in the previous case, this transmitter is close to the upper limit of the monitored frequency band, but a second factor also explains this result. With six transmitters to be detected instead of two, the expected solution is significantly less sparse than in the previous case, which leads to some loss in reconstruction quality.

However, as shown in Figure 13b, the reconstruction results are slightly better, in terms of SNR and MSE, when the proposed algorithm runs on reduced data. The execution time is also about 20 times shorter than when it runs on original data, which confirms the results presented in Figure 4.

Finally, as already mentioned in Section 2, the reconstruction results obtained with two greedy algorithms, CoSaMP and IHT, are also illustrated in Figure 14 for the same measured data scenario with six transmitters. There seems to be less noise in these images only because, unlike LASSO, greedy algorithms reconstruct the spectrum only inside the detected active bands. However, as illustrated in Figure 14, they are more likely to miss some transmitters and to generate false alarms. They can also be subject to bandwidth estimation errors if further processing is carried out to extract more information on the detected bands. This kind of post-processing is outside the scope of the paper and is mentioned here only to illustrate the limitations of the CoSaMP and IHT algorithms.