1. Introduction

With the rapid development of unmanned and mobile positioning, the demand for high-precision and high-robustness localization techniques is increasing [

1]. Among modern navigation and positioning systems, the global navigation satellite system (GNSS) is widely used on a variety of occasions due to its global coverage and high-precision positioning capability [

2]. However, in complex urban road environments such as tunnels, canyons, tall buildings, overpasses, or other situations where satellite signals are obstructed, GNSS systems are challenged with severe signal attenuation or complete loss of lock [

3]. Such signal loss is a serious challenge for unmanned vehicles that rely on the GNSS for precise navigation and positioning, as well as intelligent transportation systems and other positioning systems that require high reliability and accuracy. In order to solve the problem of GNSS loss of lock, researchers have proposed a combined positioning method using the inertial navigation system (INS) and the GNSS [

4,

5]. The INS can provide relatively accurate position and velocity in a short period of time, but the acceleration and angular velocity information output from the inertial measurement unit needs to be integrated, and the error will keep accumulating with the increase of time, and it cannot provide high-precision positioning information independently for a long time. Therefore, by combining the INS with the GNSS [

6,

7], the global positioning information provided by the GNSS can be used to correct the accumulated errors of an INS and achieve more accurate and robust navigation and positioning.

In the field of GNSS/INS integrated positioning, the Kalman filter (KF) and its derivative algorithms, such as the extended Kalman filter (EKF) [

8,

9] and the unscented Kalman filter (UKF) [

10,

11], have long been the mainstream techniques for handling state estimation of both linear and nonlinear systems. By fusing the global positioning of the GNSS and the autonomous positioning of the INS, these filtering methods have successfully improved the positioning accuracy and system robustness in a variety of environments. However, as the complexity of application scenarios increases, KF and its variants exhibit limitations in dealing with highly nonlinear problems, error accumulation, and occasions where global optimization solutions are required [

12,

13]. Especially in urban canyon or tunnel environments, where GNSS signals are susceptible to interference or occlusion, the problem of error accumulation of these algorithms under prolonged operation is particularly significant. To overcome these challenges, factor graphs are proposed as a new solution [

14,

15,

16,

17,

18]. By constructing a global optimization framework that allows for a comprehensive consideration of the entire system state, rather than relying solely on sequential state updates, factor graphs exhibit higher accuracy and stability when dealing with nonlinear systems and performing long-term estimation. Compared to Kalman filtering and its variants, factor graphs not only handle highly nonlinear problems more naturally but also reduce the effect of error accumulation through global optimization, which significantly improves the performance of positioning systems in complex environments. The authors in [

19,

20] compared the combined navigation based on factor graphs and the EKF, and the factor graphs make the navigation and localization performance better than the EKF by constructing constraints with more historical data and multiple iterations. The authors in [

21] compared the multi-source information fusion by using factor graphs and compared it with the federated filtering method. The localization error, stability, and data fusion time of the factor graphs is better than that of the federated filtering. The factor graph method shows a wide range of application prospects and advantages in the field of combinatorial localization [

22,

23,

24], which opens up a new way to improve localization accuracy and system robustness.

In recent years, with the rapid development of deep learning technology, neural network-based methods have provided new perspectives for solving complex navigation and positioning problems [

25,

26,

27]. A variety of neural network-based theoretical models and algorithms have been introduced in combined positioning and information fusion [

28] to improve the continuity of the combined positioning system and the positioning accuracy in the GNSS out-of-lock environment. The authors in [

29,

30] used a multilayer perceptron (MLP) to assist in combined GNSS/INS localization and utilized the velocity, angular rate, and specificity information of the INS to predict pseudo-GNSS information in the event of GNSS interruption. However, when the model complexity is too high or the amount of training data is insufficient, the MLP is prone to overfitting the training data and falling into local optimization. The authors in [

31] utilized the recurrent neural network (RNN) assisted localization to predict the current error when the GNSS is unavailable by inputting the amount of change in velocity and attitude with respect to the position and velocity error, which is used to compensate and correct the INS error. However, it still suffers from overfitting risk, gradient vanishing, or explosion problems. While long short-term memory (LSTM) networks can solve the common gradient vanishing problem of the RNN in long sequence learning, LSTM is able to deal with the time dependence of data compared to an MLP. The previously mentioned scholars have used various neural networks combined with Kalman and its variants to solve the combinatorial localization problem in the case of GNSS interruptions, whereas combinatorial localization methods using neural networks combined with factor graphs are less common.

In summary, to achieve robust and continuous localization in the absence of the GNSS, this paper proposes a combined localization method using an LSTM-assisted GNSS/INS pre-integrated factor graph in scenarios where GNSS signals are interrupted. This method first utilizes the pre-integrated factor graph algorithm to fuse GNSS and INS data, enabling high-precision positioning in environments with good GNSS signal availability. In the event of a GNSS lockout, the method uses a pre-trained LSTM model to predict the GNSS position increments, which are then used to estimate the position information during the GNSS outage. This prediction effectively compensates for the absence of GNSS position information during interruptions, ensuring the continuity and robustness of the localization system. The main contributions of this paper are as follows:

The paper describes an integrated GNSS/INS positioning framework based on factor graphs. It involves pre-integrating the IMU to reduce error propagation and enhance the overall accuracy of the factor graph-based combined positioning system.

By training an LSTM neural network prediction model, GNSS position increments are predicted in the event of GNSS interruptions, generating GNSS information that is integrated with INS positioning results to improve the accuracy and robustness of the GNSS/INS combined positioning system.

The remainder of the paper is organized as follows:

Section 2 introduces the factor graph theory, IMU pre-integration factors, GNSS factors, and the factor graph combined positioning model.

Section 3 details the training and prediction methods of the proposed LSTM neural network prediction model. Road tests and result analysis are conducted in

Section 4, and conclusions are presented in

Section 5.

2. Information Fusion Methods Based on Factor Graphs

A factor graph is a graphical structure used to represent probabilistic graphical models, which illustrates the decomposition of multivariable functions into a product of local functions. Factor graphs are composed of variable nodes and factor nodes connected by undirected edges. Variable nodes represent system states, while factor nodes represent constraints or measurements between variables. In navigation positioning systems, the optimal navigation solution corresponds to the maximum a posteriori estimate of the system state. According to Bayesian theory, the posterior probability can be expressed as the product of a series of probability models [

32].

where

represents the system state variable at time

,

is the measurement value from the sensor at time

,

is the likelihood probability density of the initial state variables,

is the posterior probability density of the measurements, and

is the probability density of the measurements at time

. Since the initial state is known and each positioning measurement is independent, the posterior probability is the product of the probability densities of state transitions and measurement predictions at each moment. The global conditional probability density function is proportional to the likelihood probability density and the state transition prior probability in the numerator mentioned above.

According to the maximum a posteriori probability criterion, the optimal estimation of the state variable can be represented by the maximum a posteriori probability density:

According to the principles of factor graphs, each probability density corresponds to a factor node within the graph. Thus, the above expression can be rewritten as follows:

where the factor node

can be represented as follows:

where

is the observation value,

is the noise covariance matrix of the corresponding sensor, and

is the sensor measurement residual. For nonlinear optimization within the factor graph, the maximum a posteriori can be transformed into minimizing the sum of the nonlinear least squares [

33], expressed as follows:

By combining the sensor factors derived from observations and prior information, the solution for maximum a posteriori probability is found, thus enabling the fusion of data from different sensors. Therefore, for the GNSS/INS integrated positioning factor graph model, the optimal estimate of the system state

can be represented as follows:

where

are the covariance matrices for the IMU preintegration and GNSS, respectively, and

are the residuals of the IMU preintegration factors and GNSS positioning factors, which are further derived and explained later in the text.

is the prior information and

is the prior covariance matrix. The prior factor is used in navigation positioning problems to introduce prior information or measurement information about the state variables, helping the positioning system to estimate the state more accurately.

2.1. IMU Pre-Integration Factor Node

Typically, IMUs output data at a high frequency, and directly processing these high-frequency data can lead to a substantial computational burden. Raw IMU data consists of angular velocity and acceleration. Integration of angular velocity results in orientation, while acceleration is integrated to obtain velocity and further integration of velocity results in displacement. In this process, errors accumulate progressively. To address this issue, pre-integration is employed to preprocess IMU data [

34]. Through pre-integration, high-frequency IMU data can be compressed into a single update over a period, reducing the error accumulation caused by multiple integrations. Essentially, the raw IMU data are integrated to compute the carrier’s relative displacement and rotational changes over a period. To ensure data synchronization and alignment, the IMU preintegration time interval is kept consistent with the GNSS sampling interval, and an IMU preintegration factor is added within each GNSS sliding window. The schematic diagram of IMU preintegration is shown in

Figure 1.

In the integrated positioning system, the acceleration

and angular velocity

measured by the IMU reflect the dynamic changes of the carrier [

35]. The IMU measurement model can be represented as follows:

where

are the measured true values for the gyroscope and accelerometer,

are the biases for the gyroscope and accelerometer, and

are the noises for the gyroscope and accelerometer. According to the kinematic model of the INS, the differential equations for position, velocity, and attitude with respect to time can be obtained as follows:

where

represents the navigation coordinate system (East-North-Up),

denotes the body coordinate system (Right-Front-Up),

is the position in the n-frame,

is the velocity in the n-frame,

is the quaternion from the b-frame to the n-frame,

is the attitude rotation matrix from the b-frame to the n-frame, and

is the local earth gravity in the n-frame. By integrating the differential equations for position, velocity, and attitude over the time interval

, the kinematic equations of the INS are obtained as follows:

The IMU pre-integration from time

to

can be expressed as follows:

where

represents the position pre-integration, velocity pre-integration, and attitude pre-integration. In factor graph optimization, a noise covariance matrix is required to weight the IMU factor, with the error state vector defined as follows:

where

represent the pre-integrated measurement errors for position, velocity, and attitude, respectively, and

are the bias errors for the gyroscope and accelerometer. The continuous-time dynamics model for pre-integrated error states is represented as follows:

where the state transition matrix

, the process noise matrix

, and the noise vector

are respectively represented as follows:

The pre-integration covariance matrix can be expressed as follows:

where the initial covariance matrices

,

,

.

The first-order Jacobian propagation equation can be expressed as follows:

where the initial Jacobian matrix

is the identity matrix. Based on the Jacobian and covariance matrices, a first-order expansion can be used to update the pre-integrated measurements of Equation (11), and the bias-updated pre-integrated measurements are calculated as follows:

where

is a submatrix of

, located according to

. This definition also applies to

. Hence, the residual of the IMU pre-integration factor can be represented as follows:

2.2. GNSS Factor Nodes

The GNSS is highly valuable in open and unobstructed environments for providing absolute and long-duration positional information. However, in environments with obstructions such as urban high-rises, tunnels, and overpasses, GNSS signals may be blocked or interfered with. Thus, it becomes necessary to fuse data from other sensors to enhance navigation accuracy and robustness. The raw GNSS pseudorange and carrier phase observations can be expressed as follows:

where

represents the pseudorange and carrier phase observations from receiver ‘

r’ to satellite ‘

s’;

denotes the geometric distance from the satellite antenna phase center to the receiver antenna phase center;

refers to the receiver and satellite clock biases;

are the ionospheric and tropospheric delays;

is the wavelength;

is the carrier phase integer ambiguity;

are the pseudorange hardware delays at the receiver and satellite ends;

are the phase delays at the receiver and satellite ends; and

includes residual errors on the pseudorange and carrier phase observations, encompassing the sum of observation noise and multipath errors.

To enhance GNSS positioning accuracy and interference resistance, a dual-frequency ionosphere-free (

IF) combination is used to eliminate the first-order ionospheric delay:

where

represents different GNSS signal frequencies, and the resulting ionosphere-free combination observation equation is as follows:

where

are the noise-inclusive observation values of the pseudorange and carrier phase after ionosphere-free combination;

are the satellite coordinates;

is the receiver clock bias, obtainable from precise orbit and clock data;

are the tropospheric wet delays and their projection functions, respectively, and the tropospheric delays are usually divided into a kilo component and a wet component, where the kilo component is usually calibrated directly by a priori models, such as the Saastamoinen model [

36], while the wet component usually needs to be estimated as a parameter due to its large uncertainty;

is the position of the GNSS receiver measurement center in the n-frame; and

are the wavelength and integer ambiguity of the ionosphere-free combination. Since the GNSS positioning solution provides the coordinates of the receiver center and the INS mechanical alignment provides the navigation results of the IMU measurement center, which physically do not coincide, a lever arm correction is required during combined navigation solution calculation [

37].

where

is the position of the IMU measurement center in the n-frame and

is the GNSS antenna lever arm. The GNSS factor residual can be expressed as follows:

2.3. Factor Graph Model

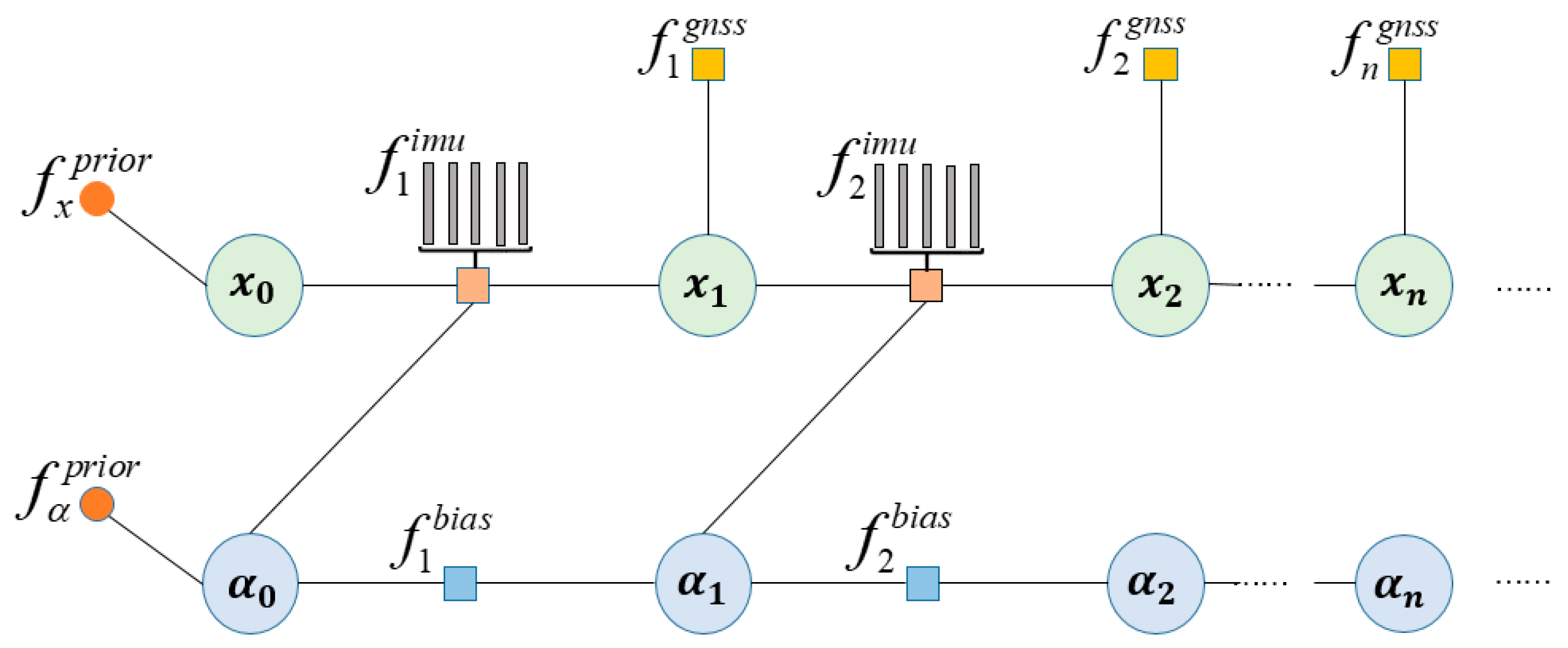

According to the factor graph theory and the previous analysis on factor nodes [

38], the factor graph model of combined GNSS/INS positioning can be derived as shown in

Figure 2.

is the system state variable and

is the system deviation variable.

is the priori factor, which provides the initial estimation for the system state variable and deviation variable.

is the GNSS factor, and

is the deviation factor.

is the IMU pre-integration factor, which pre-integrates the IMU data with the sampling frequency of GNSS to ensure that the pre-integrated IMU factor and the GNSS factor frequency are consistent. The factor graph combination localization model estimates the state at the initial moment by the a priori factors, while the state variables at the later moment need to be jointly estimated by the state variables and deviation variables at the current moment, and the GNSS factors are also needed to make auxiliary corrections to the state variables.

In the combined GNSS/INS positioning factor graph, the connections between the factor nodes indicate the flow of information. The IMU and GNSS factor nodes are usually independent of each other as they represent different types of sensor measurements, while the state variable nodes are connected to the state variable nodes of the previous moment in order to build a dynamic model of the positioning state. This model is designed to take full advantage of their complementary properties, which can effectively improve the positioning performance and robustness of the positioning system.

3. LSTM Neural Network Prediction Assisted Positioning Method

3.1. Neural Network Prediction Model Description

In the GNSS/INS integrated systems, numerous neural network-assisted models have already been established. The common idea is to build a relationship between the outputs of the INS (angular velocity, specific force, velocity, position, etc.) and GNSS information. When GNSS signals are available, GNSS information, along with INS outputs, can be used to train a neural network model. In the event of GNSS interruption, pseudo-GNSS information can be obtained from the trained neural network model. Before constructing the neural network model, it is necessary to establish a system model and select appropriate parameters as inputs and outputs to enhance training efficiency and prediction accuracy. Common models include the

model [

39].

where

is the position measurement of the GNSS and INS, respectively;

is the position error of the GNSS and INS, respectively;

is the true value of the position of the GNSS and INS, respectively; and

is the difference between the position measurement of the INS and GNSS. From Equation (26), it can be seen that the established

model is always affected by both GNSS and INS errors. To avoid this problem, the

model is proposed.

where

is the GNSS position increment in the

time period, and

is the GNSS position measurement and position error at the

moment, respectively. From Equation (27), it can be seen that the

model is only related to the GNSS position error, avoiding the mixed error of GNSS and INS, which improves the prediction accuracy of the LSTM neural network-assisted model. The specific structure of the LSTM neural network-assisted model is shown in

Figure 3.

Where represents angular velocity, represents specific force, represents the velocity and position of the INS, is the GNSS position, and represents the position error. The LSTM prediction assistance model is divided into two parts: the training phase and the prediction phase. During the training phase, inputs include angular velocity, specific force, and velocity from the previous and current moments of the INS, and the output is the position increment of the GNSS . During the training phase, when GNSS signals are strong, the combined positioning accuracy is high and good training data is used to determine the LSTM model parameters in preparation for the prediction phase. During the prediction phase, inputs include the current and next moment’s angular velocity, specific force, and velocity from the INS. Using the LSTM model, the position increment of the GNSS is predicted, and this prediction is added to the initial position information to generate a pseudo-GNSS position , which is used to suppress the divergence of inertial navigation errors.

The accuracy of LSTM training is closely related to network parameters, such as the number of hidden units, learning rate, and optimizer. The accuracy of model training improves with the decrease in the learning rate; however, a too-low learning rate can result in longer training times. A higher number of hidden units can enhance the model’s learning capability but may also lead to overfitting, especially when there is limited training data. The Adam optimizer, utilizing moment estimation, offers advantages such as adaptability, fast computation, and low memory usage, making it a good choice to accelerate training speeds. To mitigate potential overfitting issues, a dropout layer is added after each LSTM layer, enhancing model stability. The final layer of the model is a fully connected layer containing two neurons that output position increments. Specific LSTM parameter settings are shown in

Table 1.

3.2. LSTM Model

LSTM is a type of recurrent neural network (RNN) [

40]. Traditional neural networks or deep neural networks, where nodes within each layer are not interconnected, are suitable for training models that take single sample inputs and produce single sample outputs but are not well-suited for training with sequential data as input. LSTM introduces a complex gating mechanism that can address various shortcomings of traditional and deep neural networks when dealing with sequential data.

The basic unit of an LSTM includes input gates, forget gates, and output gates [

41]. These three main gating structures interact to decide how information is stored, updated, and forgotten, with the basic structure depicted in

Figure 4. The forget gate determines which information should be discarded from the cell state, the input gate decides which information from the current input should update the cell state, and the output gate determines which part of the cell state will be used for output. The specific formulas are as follows:

Where represents the forget gate, input gate, and output gate, respectively; is the input vector; represents the hidden unit state; is the memory cell state; is the candidate memory value for the current time step; represents the Hadamard product; are the weights and biases of the forget gate; are the weights and biases of the input gate; are the weights and biases of the output gate; and and are activation functions that help avoid problems like exploding or vanishing gradients.