A Knowledge Transfer Framework for General Alloy Materials Properties Prediction

Abstract

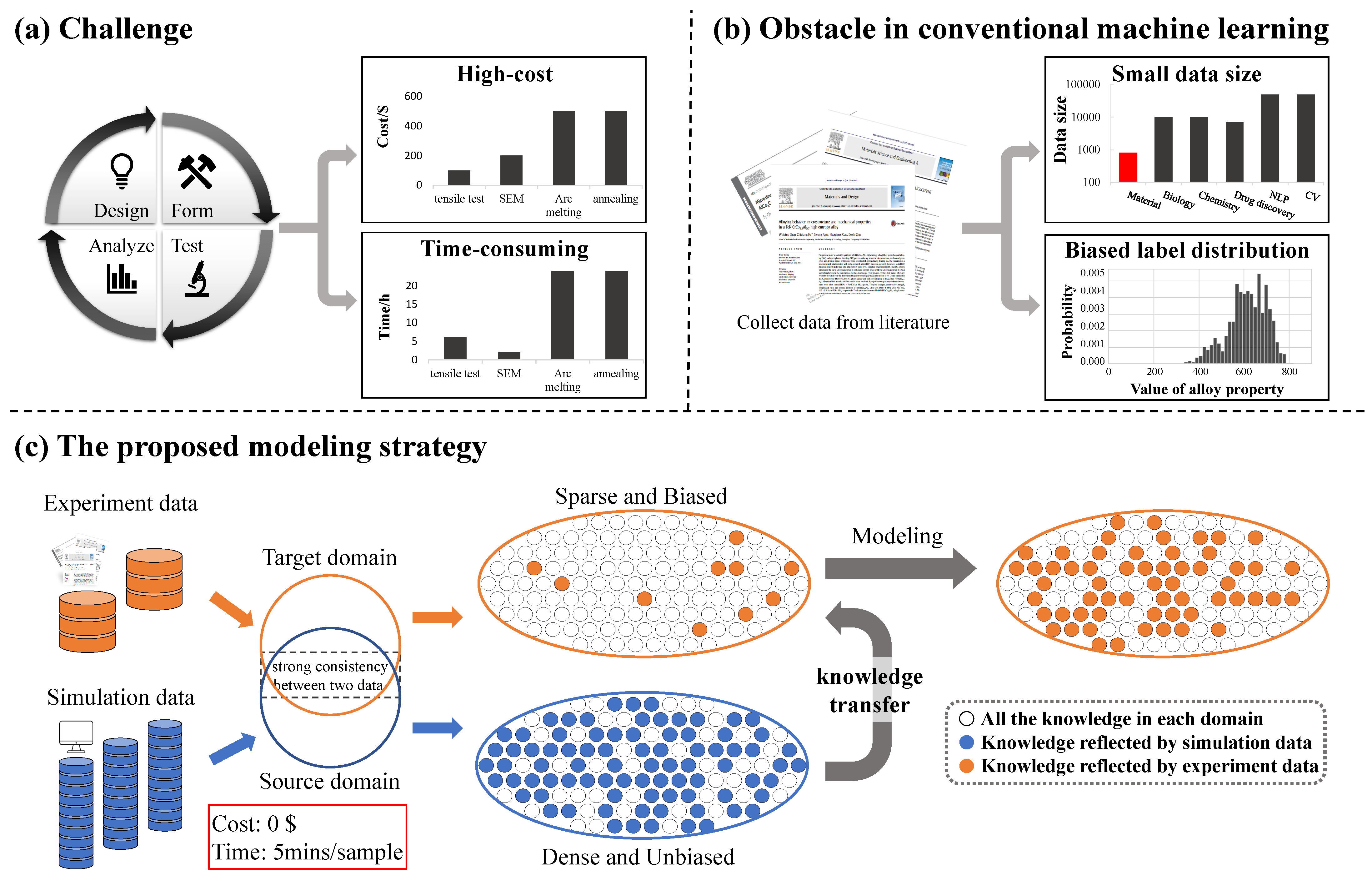

1. Introduction

2. Materials and Methods

2.1. Datasets

2.1.1. TTT

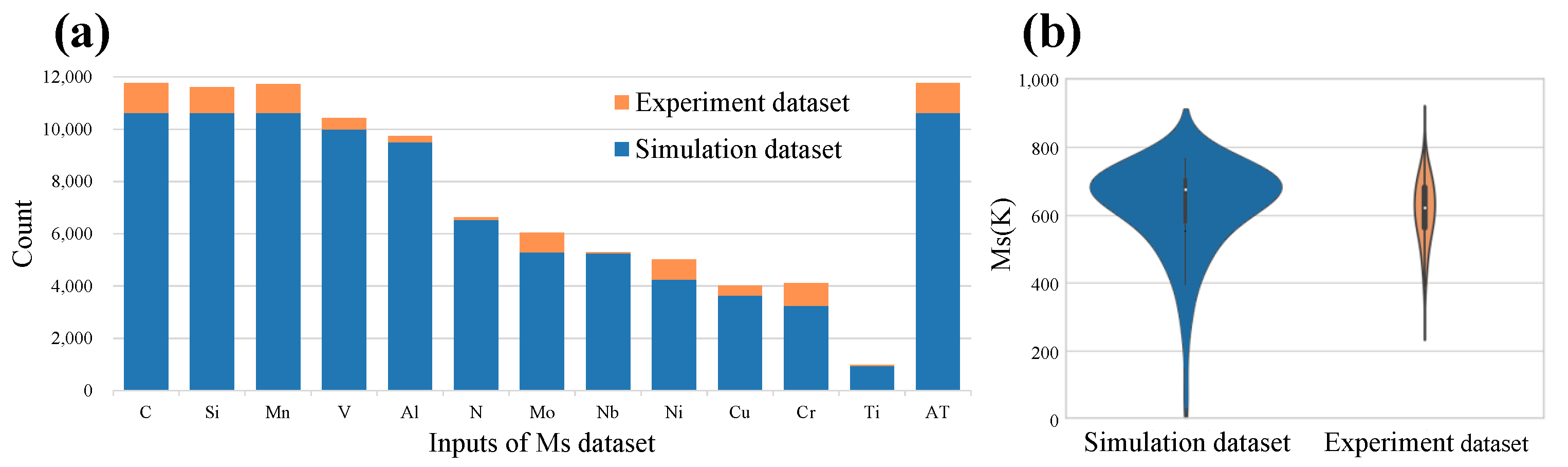

2.1.2. Ms

2.1.3. E

2.1.4. CCT

2.1.5. Phase

2.2. Simulation Software

2.2.1. JMatPro

2.2.2. Thermo-Calc

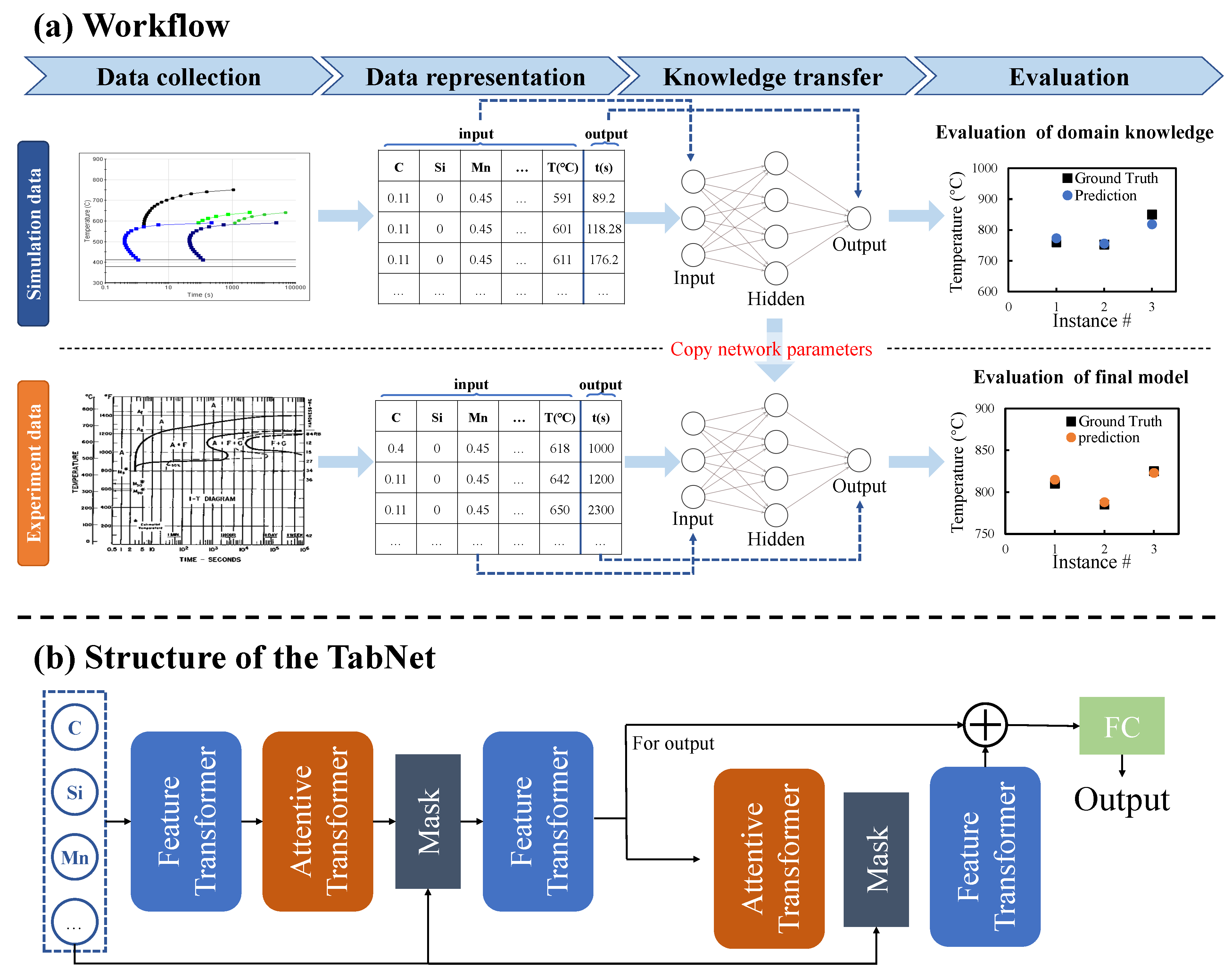

2.3. Framework

- (1) Feature transformer layer: computes the features for the current step and splits the result into two parts, the output of the decision step and the information passed to the subsequent step. The layer consists of two halves. The parameters of the first half are shared, meaning that they are trained jointly across all steps; the parameters of the second half are not shared and are trained separately for each step. Because every step receives the same input features, a shared layer can perform the common part of the feature calculation, and step-specific layers then perform the part that is particular to each step. Each block uses a gated linear unit (GLU), which adds a gating mechanism to an ordinary fully connected (FC) layer. Residual connections are used within the layer, and the result is multiplied by $\sqrt{0.5}$ to keep the network stable. In this way, the feature transformer layer performs the calculation on the features selected in the current step.

- (2) Attentive transformer layer: performs feature selection by computing the mask of the current step from the result of the previous step. A learnable mask selects the salient features, and because the selection is sparse, the model learns more effectively in each step. A feature that has already been used many times in previous steps should no longer be selected, so the model uses a prior scales term to reduce the weight of such features. The attentive transformer layer therefore derives the mask matrix of the current step from the results of the previous step and tries to make it sparse and non-repetitive. The mask vectors of different samples can differ, which means that TabNet lets each sample choose its own features (instance-wise selection). Tree models do not offer this. In an additive model such as XGBoost, a step corresponds to a tree, and the features used in that tree are selected over all samples, so the selection cannot be instance-wise.
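The two layers described above can be illustrated with a minimal pure-Python sketch. It is not the authors' implementation: batch normalization is omitted, every block is assumed to keep the feature width unchanged, and the function names (`glu_block`, `residual_glu_block`, `feature_transformer`, `sparsemax`, `attentive_step`) as well as the relaxation parameter `gamma` are names chosen here for illustration. The mask is computed with sparsemax, the sparse normalization used in the TabNet paper.

```python
import math


def linear(weights, x):
    """Fully connected layer without bias: one output per weight row."""
    return [sum(w * v for w, v in zip(row, x)) for row in weights]


def glu_block(weights, x):
    """FC layer with 2*d outputs, split into a value half and a gate half."""
    h = linear(weights, x)
    d = len(h) // 2
    # GLU: value * sigmoid(gate), elementwise
    return [a / (1.0 + math.exp(-b)) for a, b in zip(h[:d], h[d:])]


def residual_glu_block(weights, x):
    """Residual connection around a GLU block, scaled by sqrt(0.5)."""
    out = glu_block(weights, x)
    return [(xi + oi) * math.sqrt(0.5) for xi, oi in zip(x, out)]


def feature_transformer(shared, step_specific, x):
    """Apply the shared blocks first, then the blocks owned by this step."""
    h = x
    for weights in shared + step_specific:
        h = residual_glu_block(weights, h)
    return h


def sparsemax(z):
    """Project z onto the probability simplex; many entries become exactly 0."""
    cumsum, tau = 0.0, 0.0
    for k, v in enumerate(sorted(z, reverse=True), start=1):
        cumsum += v
        if 1 + k * v > cumsum:  # holds for a prefix of k; keep the last one
            tau = (cumsum - 1) / k
    return [max(v - tau, 0.0) for v in z]


def attentive_step(scores, prior, gamma=1.3):
    """Return the mask of this step and the updated prior scales."""
    mask = sparsemax([p * s for p, s in zip(prior, scores)])
    # Features used in this step get a smaller prior in later steps.
    new_prior = [p * (gamma - m) for p, m in zip(prior, mask)]
    return mask, new_prior


if __name__ == "__main__":
    scores = [2.0, 1.0, 0.1]   # hypothetical per-feature scores of one sample
    prior = [1.0, 1.0, 1.0]    # no feature has been used yet
    for step in range(2):
        mask, prior = attentive_step(scores, prior, gamma=1.0)
        print(step, mask, prior)
```

With `gamma=1.0`, a feature that receives the full mask weight in one step has its prior scale set to zero and cannot be selected again; larger values of `gamma` relax this constraint. Because `scores` and `prior` belong to a single sample, two samples with different scores obtain different masks, which is the instance-wise behavior described above.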

2.4. Experimental Tools and Setup

3. Results

3.1. Data Analysis

3.2. Extract Thermodynamics Knowledge from Simulation Data

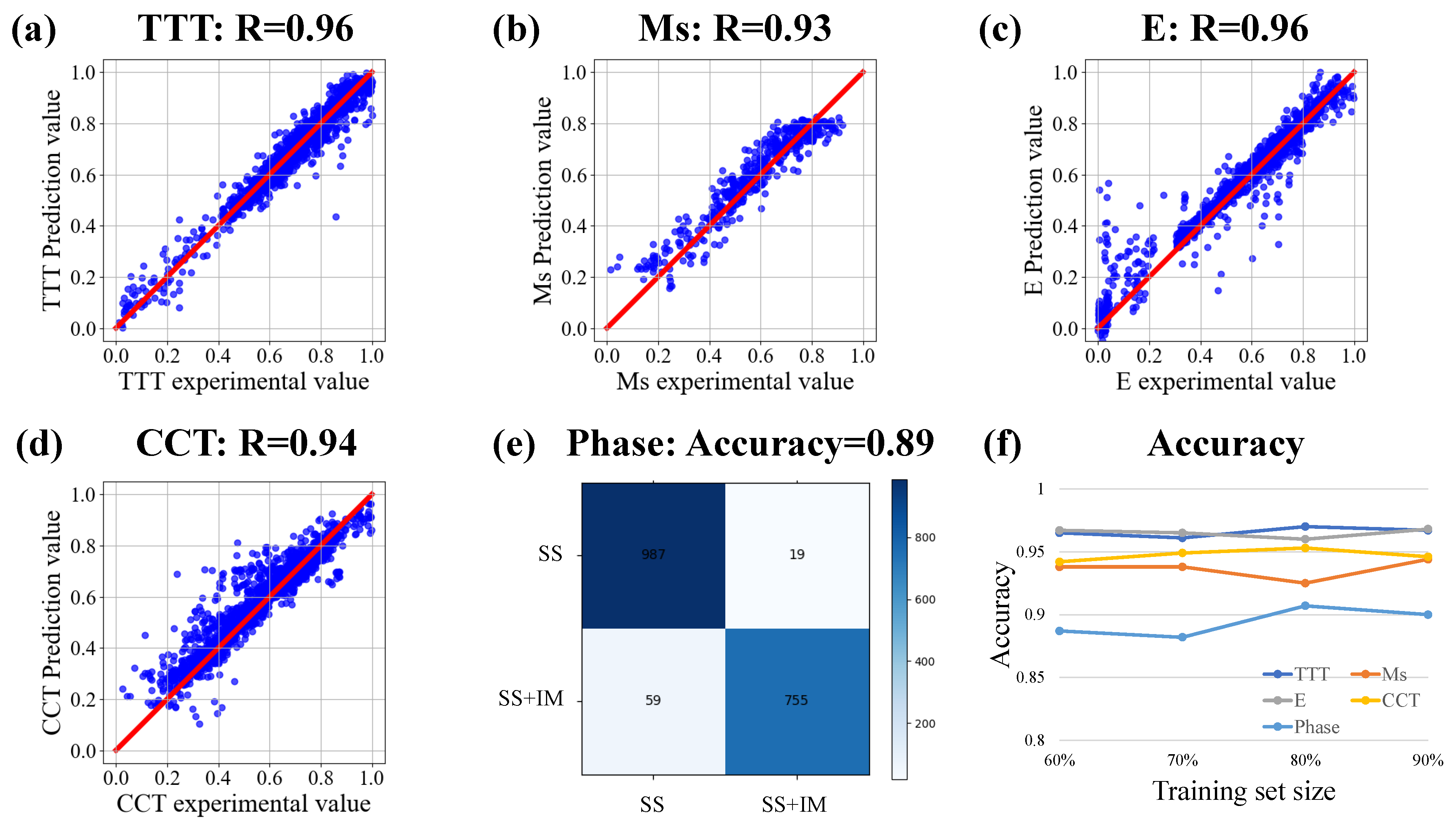

3.3. Transfer Learning of Experiment Data

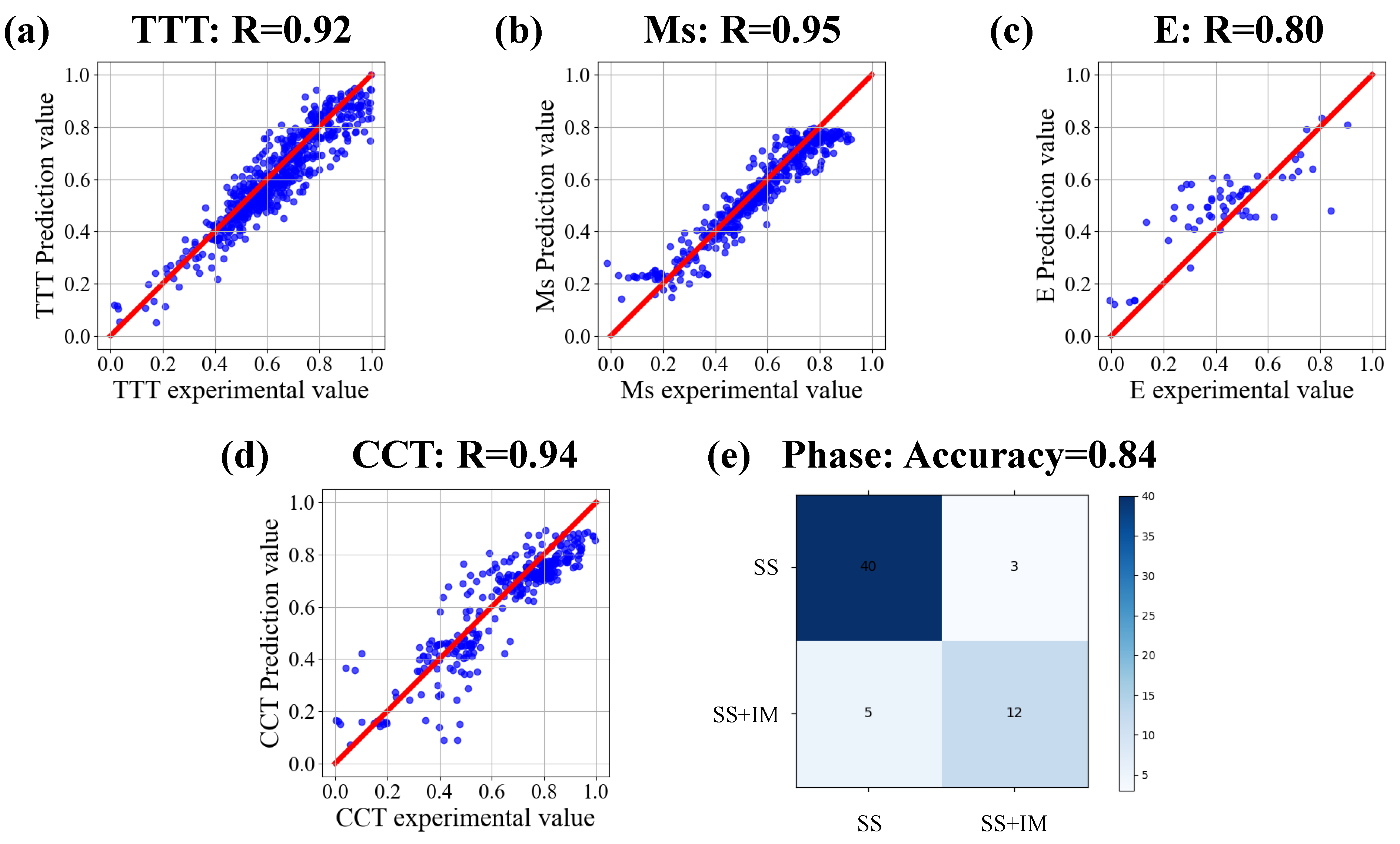

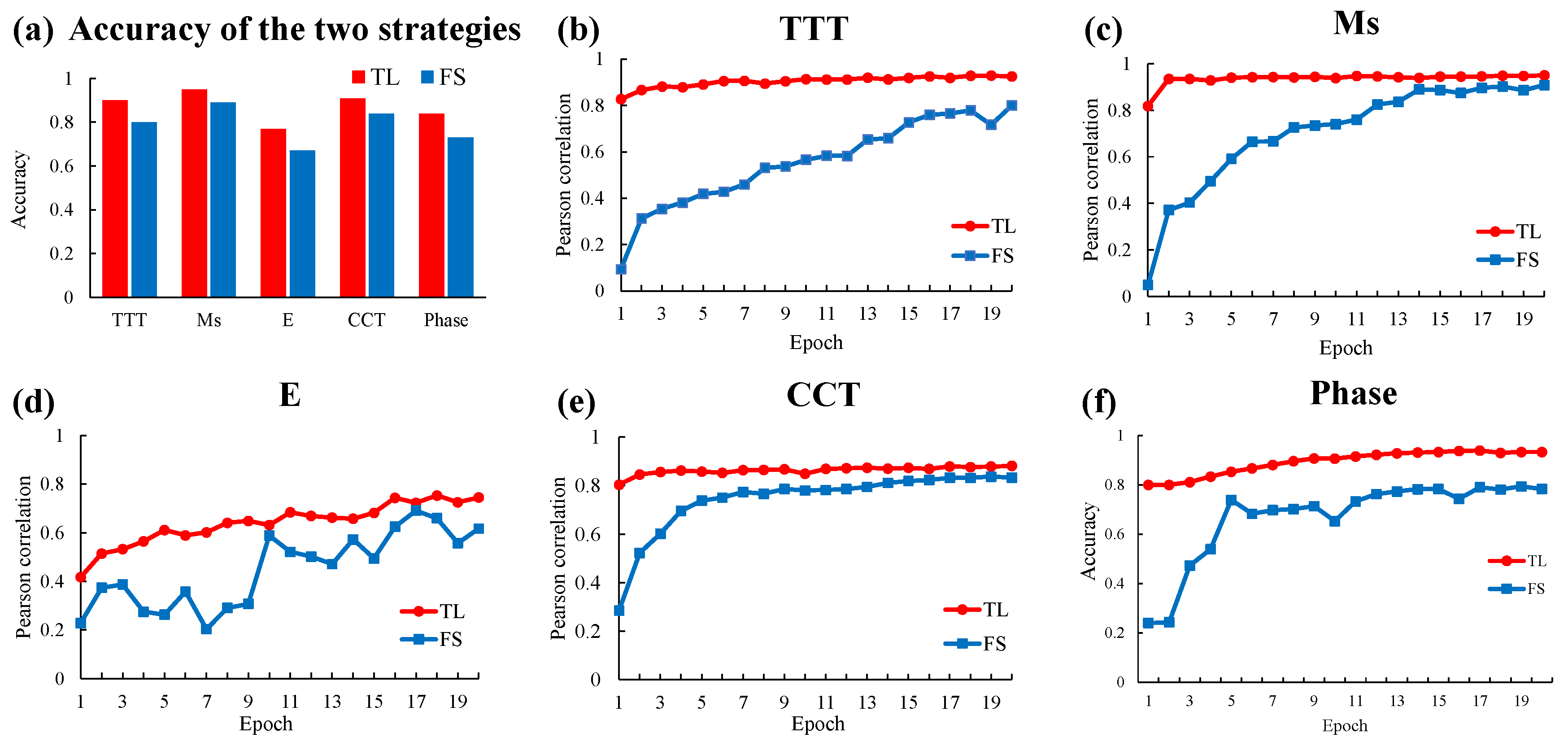

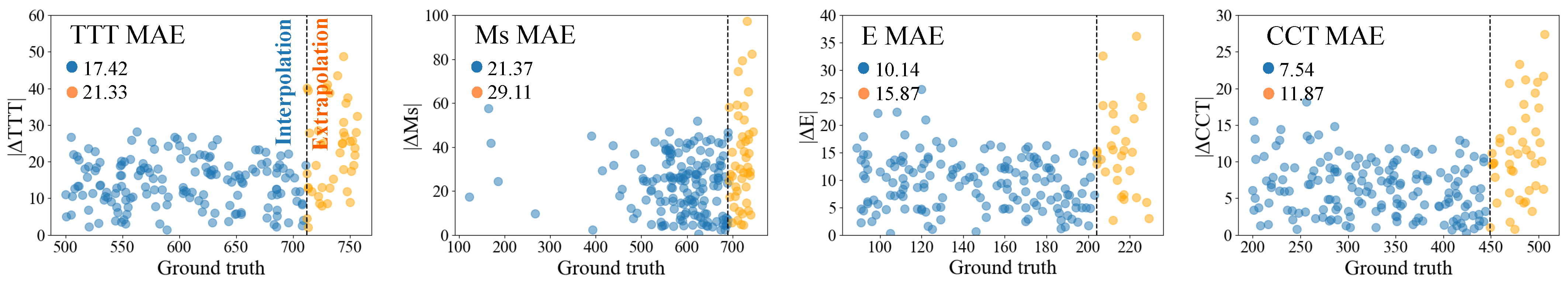

3.4. Model Validation

3.5. Transfer Learning of Unseen Properties

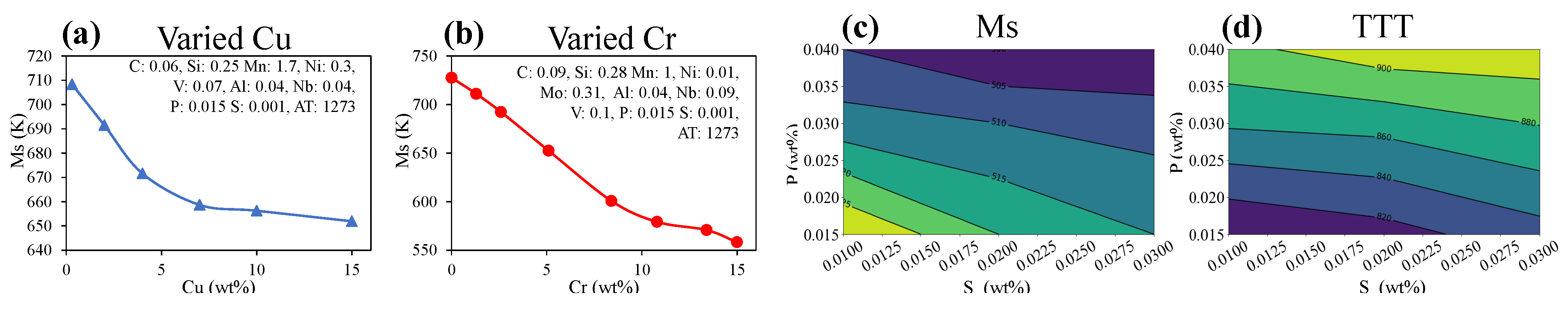

4. Discussion

5. Conclusions

Supplementary Materials

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest


| Hyperparameter | TTT | Ms | E | CCT | Phase |
|---|---|---|---|---|---|
| $N_d$ | 8 | 8 | 16 | 8 | 16 |
| $N_a$ | 8 | 8 | 16 | 8 | 16 |
| steps | 2 | 2 | 2 | 2 | 2 |
| lr | 0.01 | 0.01 | 0.01 | 0.1 | 0.1 |
| optimizer | Adam | Adam | Adam | Adam | Adam |

| Task | TTT | Ms | E | CCT | Phase |
|---|---|---|---|---|---|
| MMD | 0.0199 | 0.4793 | 2.1783 | 0.5038 | 2.1739 |
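
For readers unfamiliar with the maximum mean discrepancy (MMD), the following sketch shows how a squared MMD between two samples can be estimated. It assumes a Gaussian (RBF) kernel with a hypothetical bandwidth parameter `gamma` and uses the simple biased estimator; the kernel, the bandwidth, and the pairs of datasets behind the values in the table are not stated here, so the sketch is not expected to reproduce those numbers.

```python
import math


def rbf_kernel(x, y, gamma=1.0):
    """Gaussian kernel exp(-gamma * ||x - y||^2) between two feature vectors."""
    return math.exp(-gamma * sum((a - b) ** 2 for a, b in zip(x, y)))


def mmd_squared(xs, ys, gamma=1.0):
    """Biased estimate of the squared MMD between samples xs and ys."""
    def mean_kernel(a, b):
        total = sum(rbf_kernel(u, v, gamma) for u in a for v in b)
        return total / (len(a) * len(b))

    return mean_kernel(xs, xs) + mean_kernel(ys, ys) - 2.0 * mean_kernel(xs, ys)


if __name__ == "__main__":
    source = [[0.0], [1.0]]   # hypothetical source-domain samples
    target = [[5.0], [6.0]]   # hypothetical target-domain samples
    print(mmd_squared(source, source))  # identical samples
    print(mmd_squared(source, target))  # shifted samples
```

A value near zero indicates that the two samples are hard to distinguish under the chosen kernel, while a larger value indicates a larger gap between the two distributions.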

| Prediction Model | TTT | Ms | E | CCT | Phase |
|---|---|---|---|---|---|
| JMatPro | 0.85 | 0.72 | 0.65 | 0.71 | - |
| Thermo-Calc | 0.81 | - | - | 0.74 | 64% |
| Ours | 0.92 | 0.95 | 0.80 | 0.94 | 84% |

| No. | Co/at% | Cr/at% | Fe/at% | Ni/at% | Si/at% | Nb/at% | Prediction Result | Literature Result |
|---|---|---|---|---|---|---|---|---|
| 1 | 25.23 | 20.23 | 30.21 | 24.32 | 0 | 0 | SS | SS |
| 2 | 24.68 | 21.30 | 28.46 | 23.84 | 0 | 1.73 | SS + IM | SS + IM |
| 3 | 25.11 | 21.11 | 27.49 | 24.47 | 0 | 1.82 | SS + IM | SS + IM |
| 4 | 25.10 | 20.46 | 26.39 | 24.94 | 0 | 3.10 | SS + IM | SS + IM |
| 5 | 23.34 | 21.68 | 27.68 | 24.15 | 0 | 3.16 | SS + IM | SS + IM |
| 6 | 23.07 | 22.05 | 28.43 | 22.60 | 0 | 3.85 | SS + IM | SS + IM |
| 7 | 31.81 | 0 | 35.51 | 32.68 | 0 | 0 | SS | SS |
| 8 | 30.52 | 0 | 30.89 | 30.27 | 8.31 | 0 | SS | SS |
| 9 | 28.16 | 0 | 27.73 | 27.28 | 16.83 | 0 | SS | SS + IM |
| 10 | 25.72 | 0 | 26.31 | 26.06 | 21.91 | 0 | SS + IM | SS + IM |

| Knowledge Source | Accuracy | Precision | Recall | F1 Score |
|---|---|---|---|---|
| TTT | 0.807 | - | - | - |
| Ms | 0.752 | - | - | - |
| CCT | 0.812 | - | - | - |
| E | 0.738 | - | - | - |
| Phase | 0.821 | - | - | - |
| Ensemble | 0.872 | 0.878 | 0.871 | 0.881 |
| None | 0.684 | 0.679 | 0.682 | 0.660 |

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2022 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).


Sun, H.; Zhang, H.; Ren, G.; Zhang, C. A Knowledge Transfer Framework for General Alloy Materials Properties Prediction. Materials 2022, 15, 7442. https://doi.org/10.3390/ma15217442
