Abstract

Additive manufacturing technologies such as directed energy deposition use powder as their raw material, and it must be deposited in a precise and controlled manner. Venturi injectors could be a solution for the highly precise transport of particulate material. They have been studied from different perspectives, but they are always under high-pressure conditions and mostly fed by gravity. In the present study, an optimization of the different dimensional parameters needed for the manufacturing of a Venturi injector in relation to a particle has been carried out to maximize the amount of powder capable of being sucked and transported for a specific flow in a low-pressure system with high precision in transport. For this optimization, simulations of Venturi usage were performed using the discrete element method, generating different variations proposed by a genetic algorithm based on a preliminary design of experiments. Statistical analysis was also performed to determine the most influential design variables on the objective, with these being the suction diameter (D3), the throat diameter (d2), and the nozzle diameter (d1). The optimal dimensional relationships were as follows: a D3 34 times the particle diameter, a d2 26.5 times the particle diameter, a d1 40% the d2, a contraction angle alpha of 18.73°, and an expansion angle beta of 8.28°. With these proportions, an 85% improvement in powder suction compared to the initial attempts was achieved, with a maximum 2% loss of load.

1. Introduction

Additive manufacturing has been considered an important and growing form of manufacturing for many years. The standard ISO/ASTM 52900:2021 [1] defines it as the process by which, starting from the data of a 3D model, a part is manufactured layer by layer. However, the way to comply with this definition is extremely varied and depends on different technologies. This standard divides these technologies into seven categories, but only four of them can directly use their raw material in powder form: binder jetting, directed energy deposition, material extrusion, and powder bed fusion.

The materials used in these technologies can be metallic, polymeric, or ceramic, but all have in common their high quality and consequent cost.

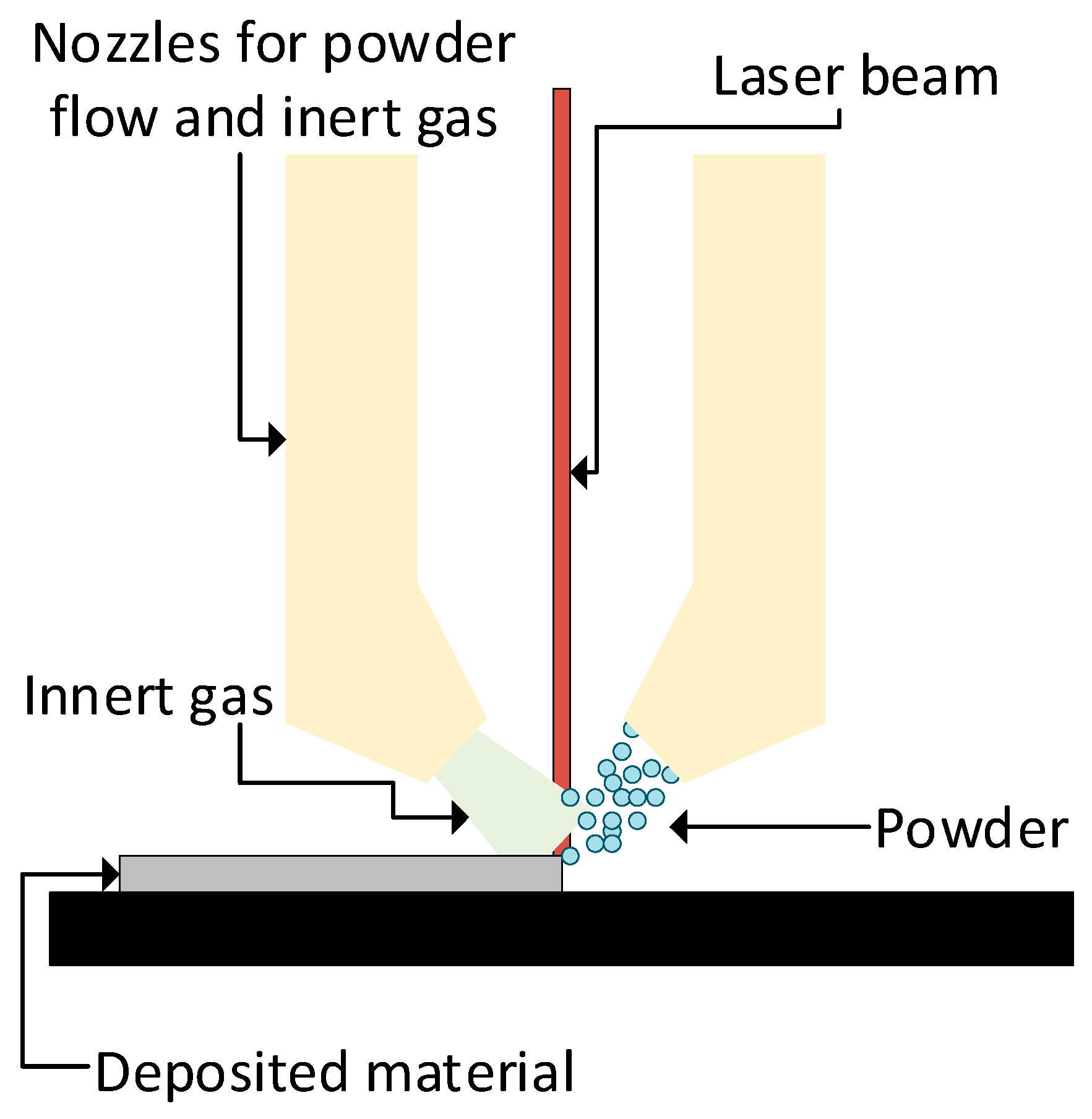

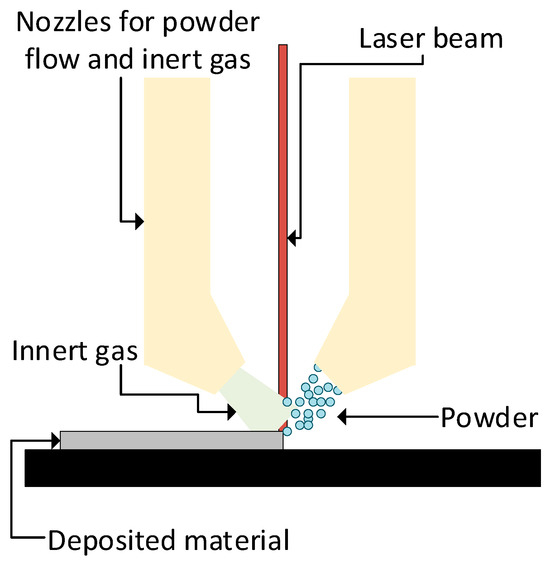

Taking directed energy deposition as an example, this technology uses a combination of inner gas (normally argon) as a clean atmosphere to avoid oxidation, feeding material (metallic or polymeric powder [2]), and a power source to melt the powder in a controlled manner (a laser or electron beam). A schematic of a DED powder system is shown in Figure 1.

Figure 1.

A schematic diagram of a DED system with a laser and powder feedstock.

This technology divides the powder feed into three moments [3]: metering, conveyance, and delivery. Each moment has different possible methods (mechanical, pneumatic, vibration-assisted, or gravitational methods, for example), all of them with different control necessities. However, a high accurate spray control is needed because part of the sprayed powder is not used [4]. This manufacturing system could have multiple nozzles, being able to mix different materials to obtain Functionally Graded Materials (FGMs) [5,6].

Analyzing the three moments in the powder feed, a novel solution could be the use of Venturi injectors, which allow the powder to be sucked from one point and pushed to where it is needed in a controlled manner, unifying the metering and conveyance systems.

From analyzing the solutions on the market that use these technologies, it can be seen that the raw materials in powder format tend to have very small particle sizes and are available in all densities. These characteristics can greatly influence the design of Venturi injectors and must therefore be taken into account when sizing them.

The most studied point in the literature is the net efficiency of the Venturi injector, its mean, and the lowest possible pressure drops. In this direction, Zerpa et al. [7] studied the geometry effects of the divergent and convergent sections in classical injectors, as did also Almeida [8], but this one with the aim of improving the propulsion of the fluid at the injector outlet. Zhang [9] focused on studying how the dimensional parameters affect the pressure distribution inside the injector, concluding that the throat length does not affect the pressure distribution inside the injector. Also analyzing these variables, in [10], aspect ratios were established that sought the maximum allowable differential pressure for the injector to operate at the maximum flow rate of the injector. However, Park [11] established a maximum differential pressure criterion as a function of Reynolds number.

A typical effect sought in the use of Venturi injectors is the generation of vacuum, but at a certain point the depression can produce cavitation, and given the importance of this effect, Shi et al. and Bermejo et al. [12,13] studied how this cavitation affects and its possible uses. One of these uses is fluid mixing; thus, Shi and Nikrityuk [14,15] studied how this cavitation affects the mixing process.

O’Hern et al. [16] established a method for the design of these injectors, while Sobenko et al. [17] proposed a model for predicting the performance of a Venturi injector, in addition to analyzing the flow pressure ratios that generated the highest level of instability in the Venturi injector.

On the other hand, there are studies focused on powder transport or injection. This use of Venturi injectors has been well established for many years [18], but progress is still being made in understanding the internal phenomena of the injector. When powder is introduced both in virtual tests [19] as well as in real tests [20], it should be noted that the requirements for fluid transport are different from those for powder transport, and so the design of injector geometry continues to be studied and advanced, as did by Xu et al. [21]. Of course, it is necessary to study how the sprayed powder behaves depending on its use [22,23].

In the case of powder transport, it must also be taken into account how particle morphology affects injector performance [24].

In terms of injector optimization, Expósito et al. [25] established a procedure applied to classical injectors focused on fluid mixing by using genetic algorithms, and Wang et al. [26] performed another optimization, also applying it to fluid mixing, but using a somewhat different Venturi injector design and machine learning algorithms as the optimization method.

In all the above studies, no work can be found that focuses on the design of an optimal Venturi with a view to maximizing powder transport as a priority. It is also noted that the powder transport studies focus on Venturi injectors that feed powder by gravity, not by suction.

In addition, if the DED manufacturing process requires an inert gas (normally at high pressure), the powder feed needs to be at lower pressure to guarantee the fast cleaning of the atmosphere. Thus, the transport of powder at atmospheric pressure is a good option, but no studies have been found that focus on the design of low-pressure Venturi injectors (less than 100 kPa) [18].

Based on these detected shortcomings, this study optimized the different dimensional parameters of a Venturi injector to maximize the amount of powder capable of suction and transport for a specific flow in a range of low pressures and with high transport precision for the development of a mixing powder device for Functionally Graded Additive Manufacturing.

2. Materials and Methods

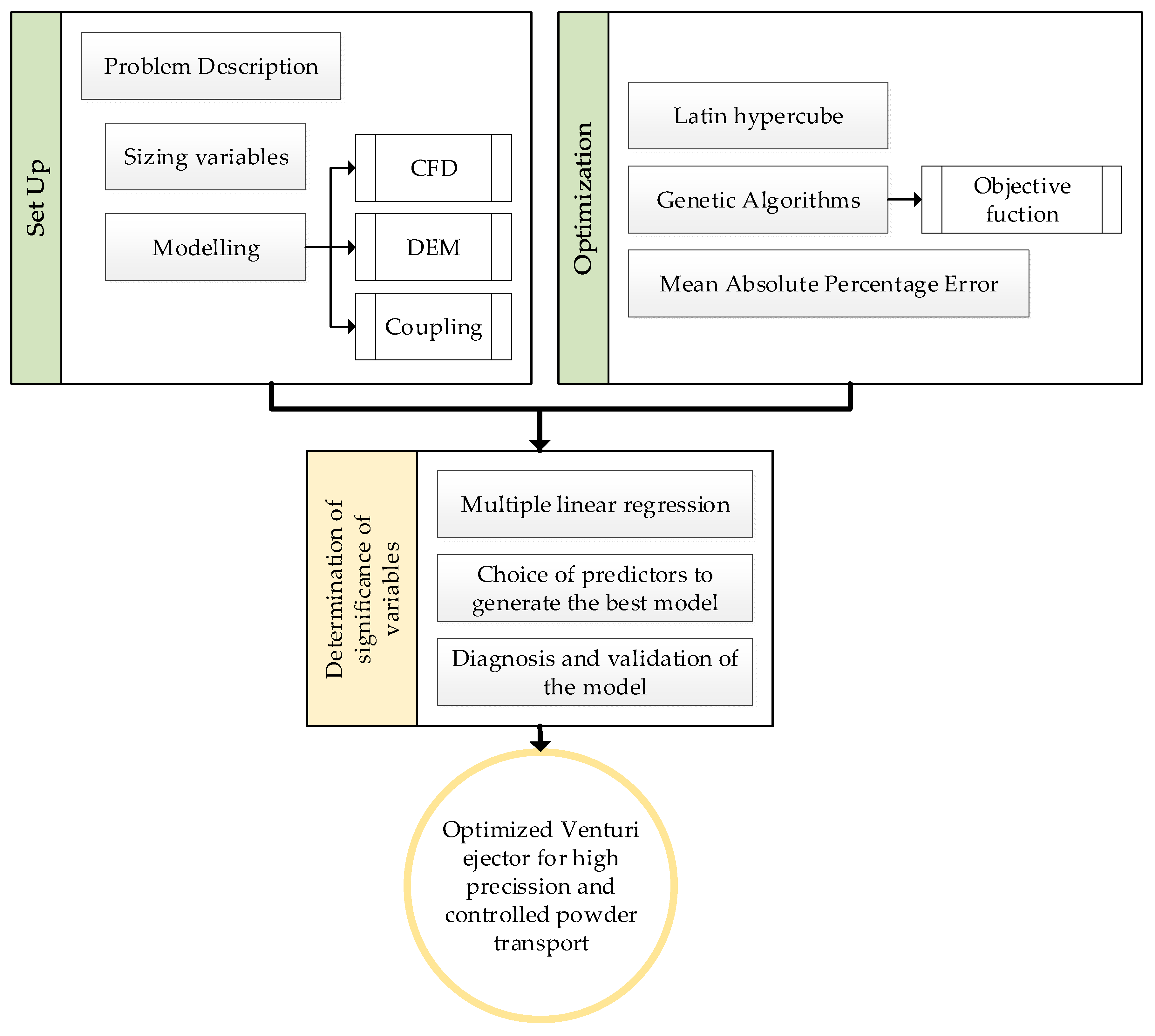

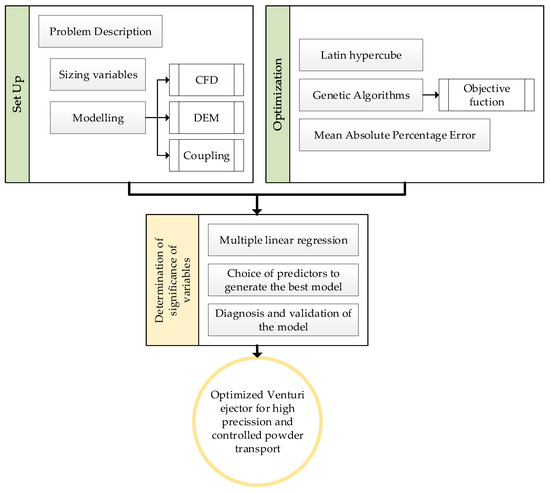

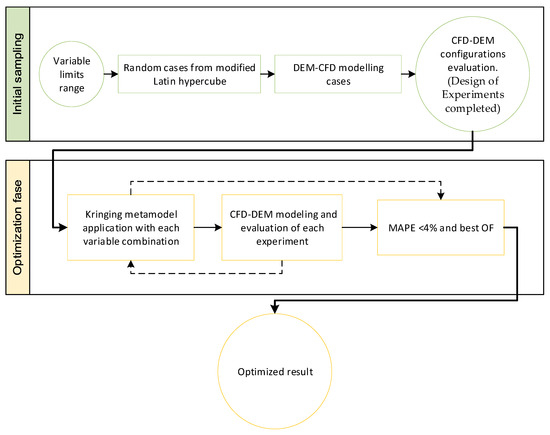

This work presents a methodology for optimizing the dimensions of Venturi injectors with low pressure for the transportation of powder with high accuracy. To achieve this, this section presents the description of the problem and the main dimensions of Venturi injectors; then, the modeling of the problem (both from the point of view of the flow, CFD, particle interaction, DEM, and coupling between them); and finally, the optimization tools used to obtain the optimal design. Alongside this, the final section explains the statistical tools used to determine the significance of variables with the results obtained. This process follows the flowchart shown in Figure 2.

Figure 2.

Flowchart of the research process.

2.1. Problem Description

The objective of this work is to establish a sizing methodology for optimized Venturi injectors, using particle and flow characteristics as a reference.

The mean particle diameter of the powder to be conveyed and the flow velocity were used as reference constants.

In this case, a powder named Powder-ID256-Scale_1.00 was used, which can be found in the database of representative but not real calibrated powders in Altair Edem software® (2022 version) and has the characteristics presented in Table 1. For the flow rate, a volumetric flow rate of 0.01 was considered.

Table 1.

Particle data.

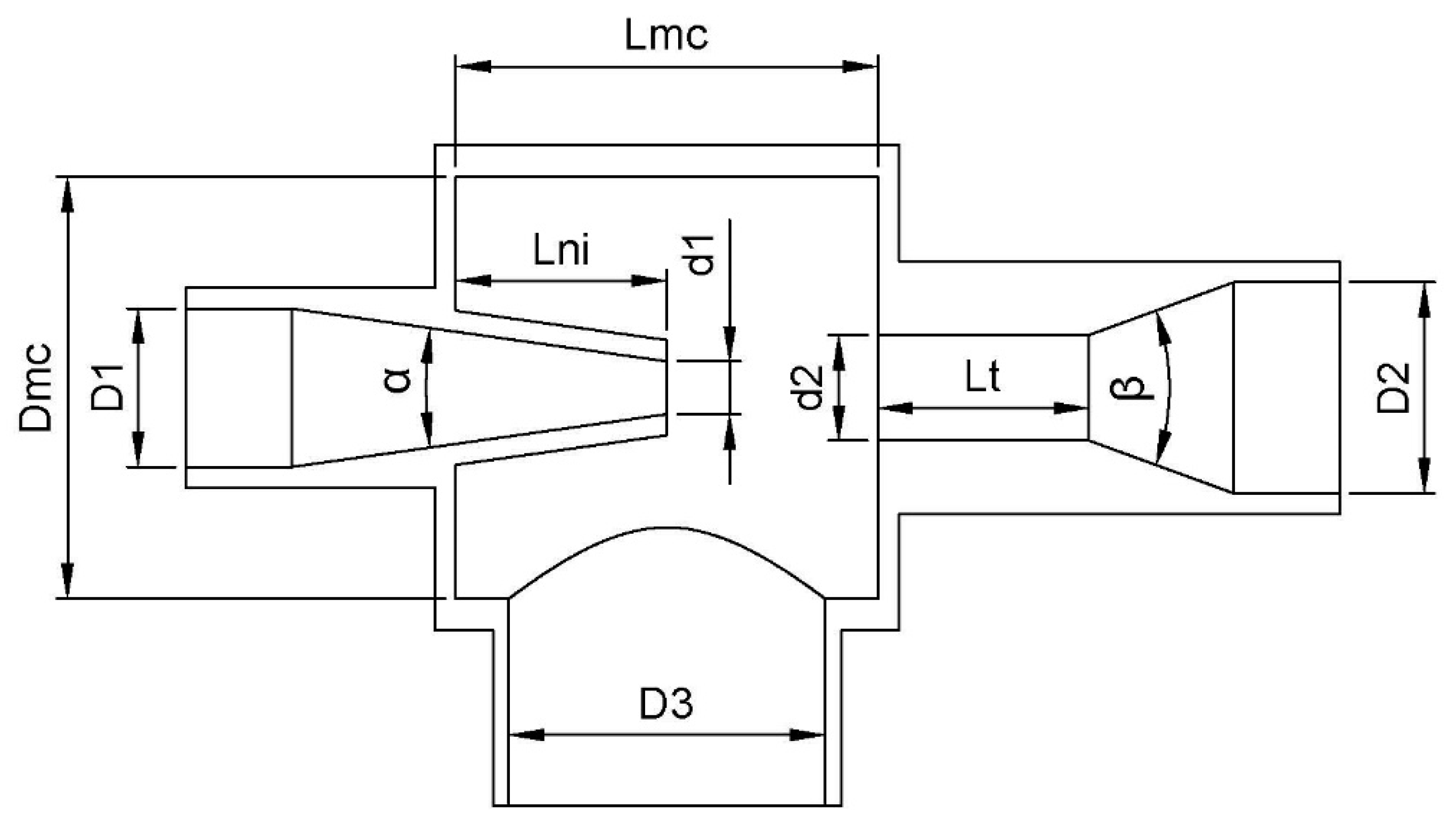

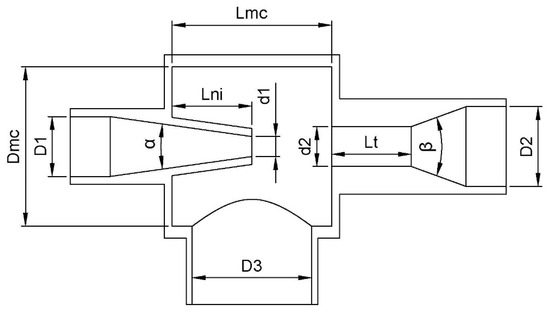

Since it is not entirely clear which factors influence powder suction, the dimensional variables shown in Table 2 were taken as the objects of our study. These variables are depicted in Figure 3.

Table 2.

Venturi injector design variables and their constraints.

Figure 3.

Venturi injector design section and its variables.

Due to the physical constraints of possible laboratory manufacture, although the variables D1, d1, and D2 have a percentage relationship with other variables, if the result of this relation is greater than 34 mm, this value will be taken as the dimension.

2.2. Modeling the Problem

To carry out this study, a simulated environment was developed. For these simulations, 3 interactions must be taken into account: the fluid, the particle–particle interaction, and the interaction between the fluid and the particles. In addition, the simulation interval time must be the minimum possible with the objective of reducing the computational time, but enough to guarantee powder ejection from the Venturi. In this study, that time was 2 s.

2.2.1. Modeling the Fluid Motion

For fluid modeling, the fluid was taken as a continuous element and was governed by the Navier–Stokes continuity equations. In general, applying the conservation of mass to a control volume, the equation in differential form corresponds to Equation (1):

where ρ is the density of the fluid; is the time; and is the flow velocity vector.

In turn, the conservation of motion quantity equation is obtained by applying Newton’s second law to the same control volume, where Equation (2) governs it, and its components can be viscous, pressure, gravity, centrifugal forces, Coriolis forces, etc.:

where is the pressure; is the gravity; and is the viscous stress vector corresponding to Equation (3), where the vector is decomposed in the , , and directions with the following subscript:

where is the dynamic viscosity of the fluid.

In addition, the Spalart–Allmaras turbulent flow model [27] was added, which is governed by Equation (4):

where and . Also, and are the production and destruction terms of the modified turbulent viscosity, corresponding to Equations (5) and (9), respectively:

with

where is the rotation tensor; is the distance from the nearest wall; is the Von Karman’s constant (0.41); and .

with

Moreover, for the modeling of the turbulent viscosity, Equation (13) was used:

with

This model was completed with the coefficients , , , and .

2.2.2. Modeling of Particle Interaction

Different mathematical models can be used for particle modeling, depending on the particle characteristics.

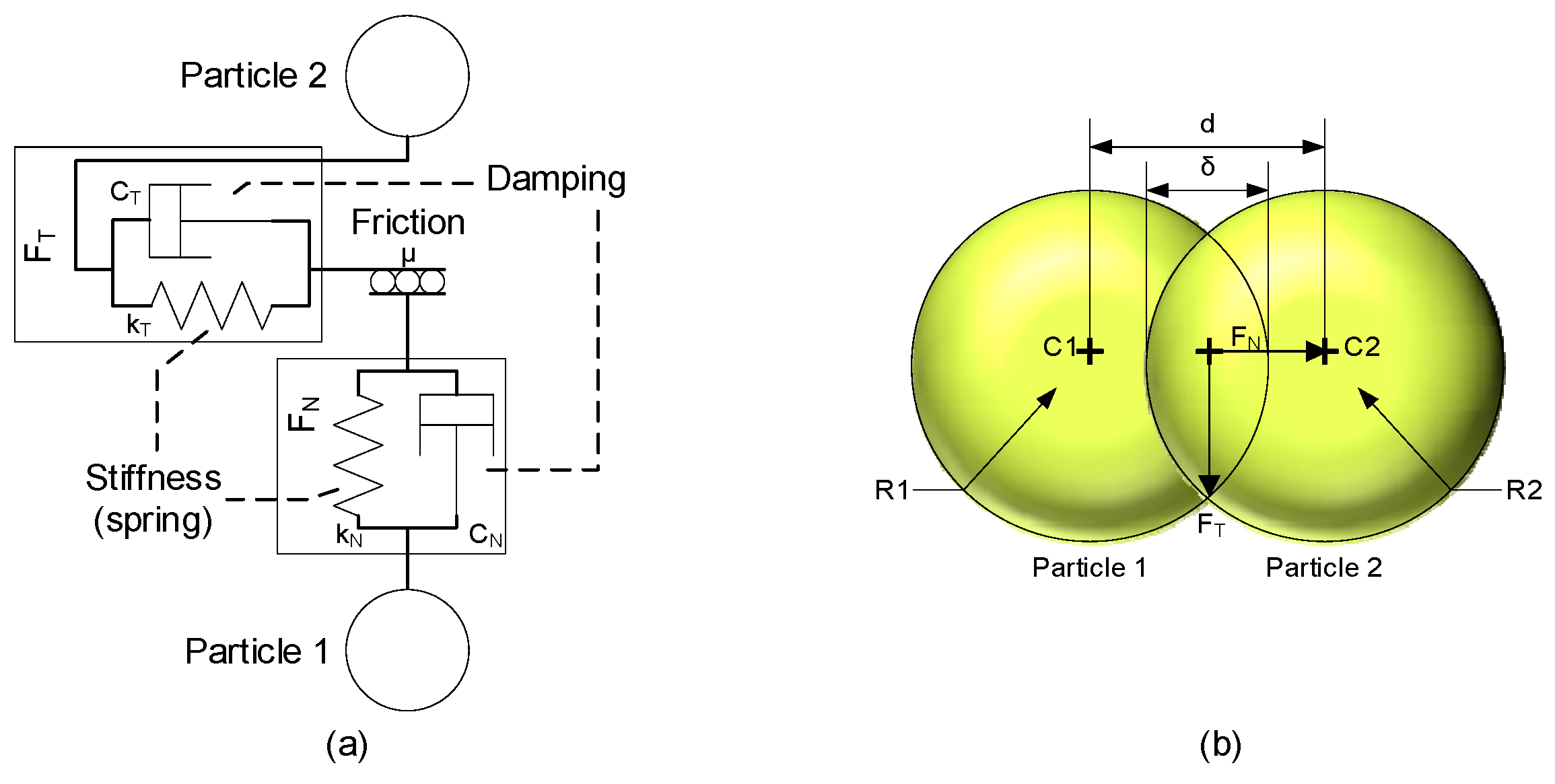

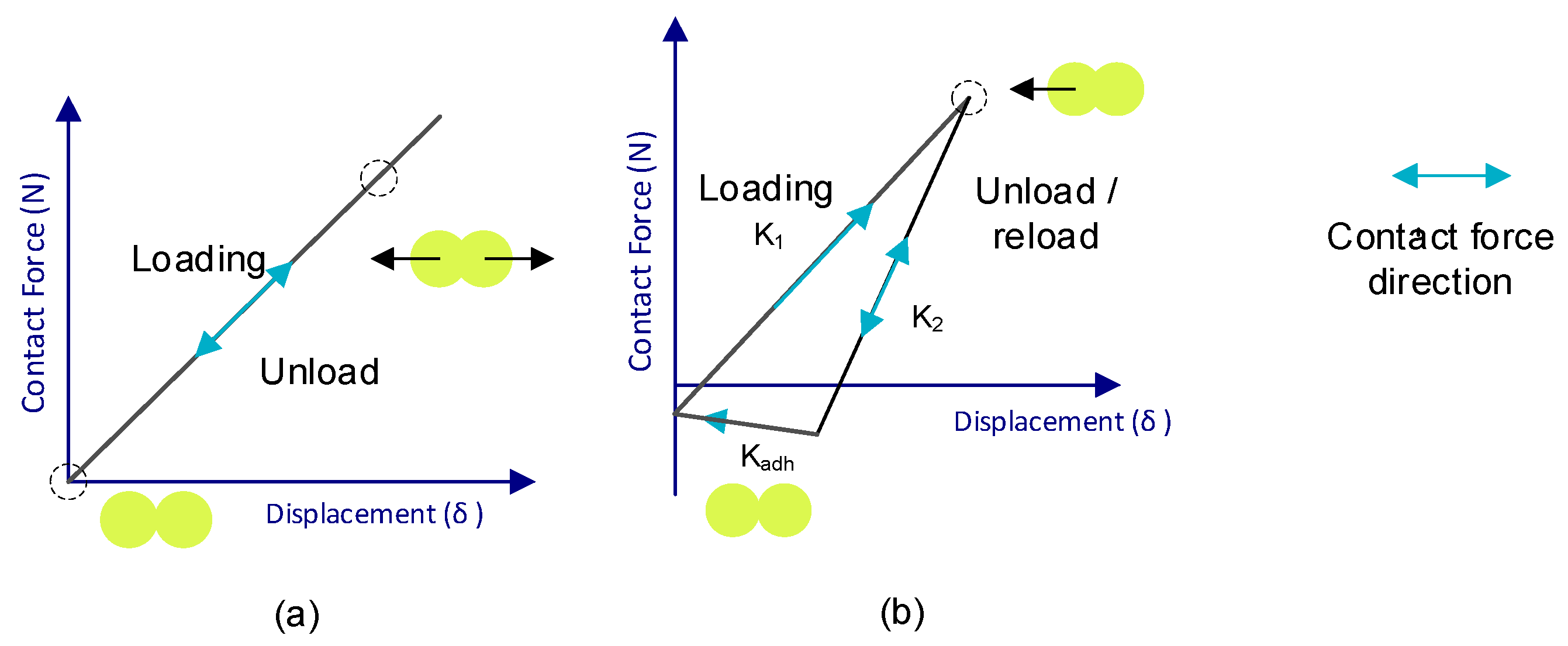

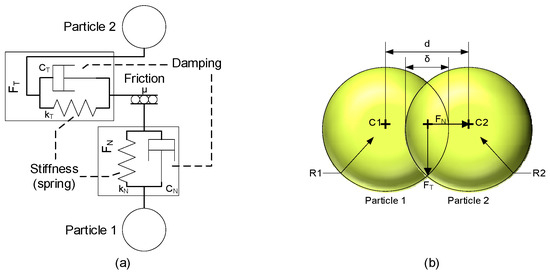

When talking about polymeric particles, it is assumed that they have a certain level of elasticity. This means that when the particles interact with the walls, they undergo some form of deformation; in the case of particle-to-particle interactions, this deformation causes the distance between their centers to be reduced due to what is considered an overlap between the two particles Figure 4b.

Figure 4.

Representation of the interaction between particles (a) and of the overlap at the point of contact (b).

In Figure 4a, these interactions are represented at the point of contact of two particles by a parallel connection between a spring and a damper.

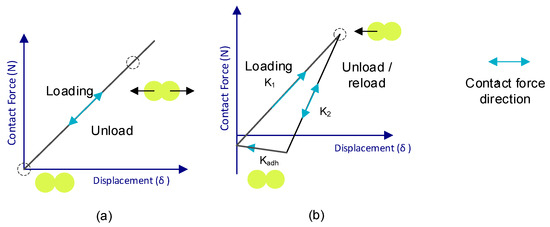

Depending on the mathematical model used, the energy transferred by these interactions is represented to a greater or lesser extent in the equations of motion. An example of this is shown in Figure 5, where the contact force–motion functions are depicted: Figure 5a is the representation of the most basic contact model (Hert–Mindlin contact model) [28,29,30,31,32], and Figure 5b depicts the Edinburgh Elasto-Plastic Adhesion contact model.

Figure 5.

Schematics of the contact–displacement force function of the Hert–Mindlin (a) and Edinburgh Elasto-Plastic Adhesion (b) models.

The equations governing the Hert–Mindlin (no slip) model are summarized in Table 3 [33].

Table 3.

Hert–Mindlin equation (no-slip) model.

Although each of these models is more complete than the previous one, the complexity of these models results in a high computational cost, so it is not always more effective to choose the most complete model.

In the case of the present work, the Hert–Mindlin (no-slip) model was used for the particle–wall interactions, and the Edinburgh Elasto-Plastic Adhesion model was employed for the interaction between particles.

When two particles come into contact, the force is distributed into two components called the normal force () and tangential force (), as can be seen in Figure 4b. The definition of each of these forces depends on the model.

In the case of the Edinburgh Elasto-Plastic Adhesion model, some factors are added to account for particle plasticity. The governing equations are summarized in Table 4 [34].

Table 4.

The Edinburgh Elasto-Plastic Adhesion model.

2.2.3. The Particle Flow Coupling Model

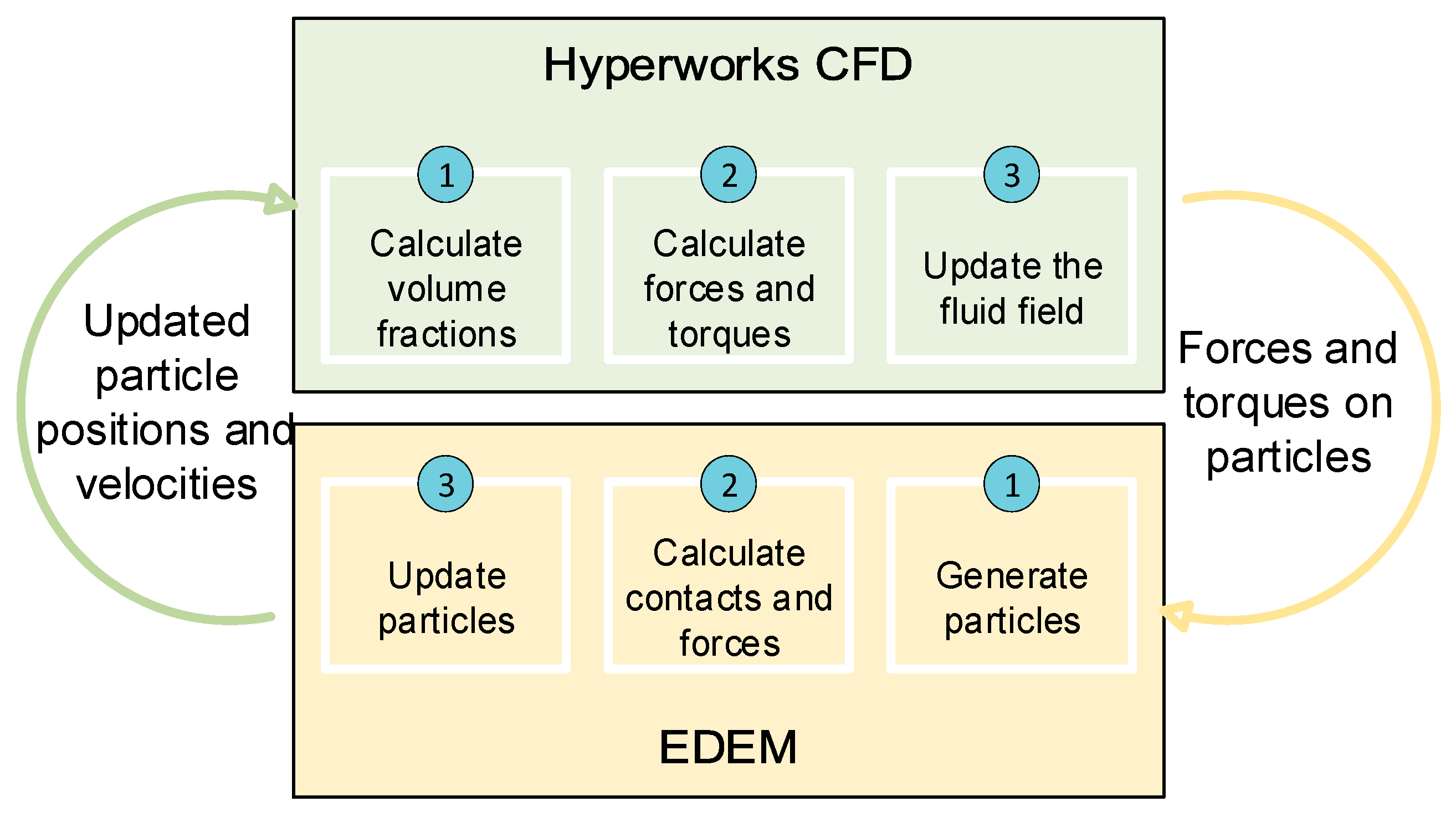

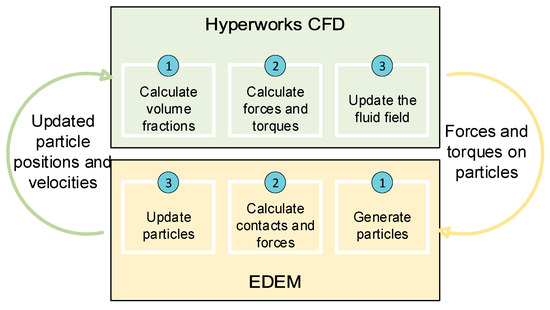

To study the behavior of the particles in the fluid in motion, a joint computational fluid dynamics (CFD) and discrete element method (DEM) simulation was carried out, using the models explained above, in each simulation time step. For this purpose, the software tools Altair Edem® (2022 version) and Altair Hyperworks CFD® (2022 version) were run together, following the workflow shown in Figure 6.

Figure 6.

Workflow between Hyperworks CFD and EDEM.

In this way, the workflow starts in the Hyperworks CFD tool, which calculates the volume fractions, forces, and moments of the fluid; it then updates the information for that time step and transfers it to the EDEM tool.

In the EDEM tool, the forces and contacts of the particles are calculated; the position and velocity of the particles are updated; and this information is transferred to the Hyperworks CFD tool.

This cycle was repeated with each time step until the end of the set test time.

To obtain an accurate calculation of the trajectory of these particles, it is necessary to use a mathematical entrainment model that transfers the information between the two phases of the test (fluid and powder). In this study, a model [35,36] that combines the Ergun [37] and Wen-Yu [38] models was used to evaluate the tensile force, according to Equation (16):

where

where is the gas velocity vector; is the volume of particle I; corresponds to the solid velocity vector; and is the free volume fraction.

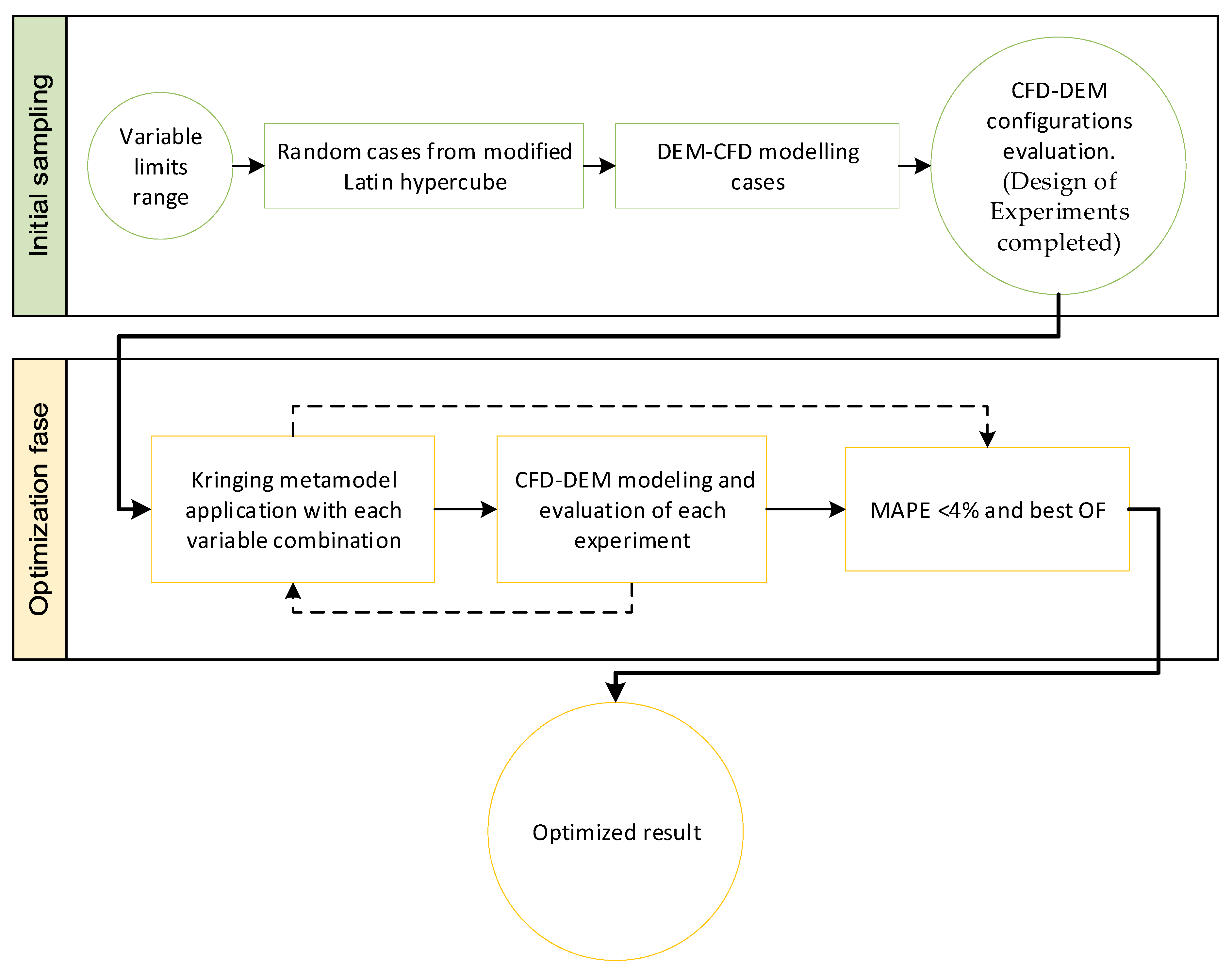

2.3. Optimization Using Genetic Algorithms

A process for choosing what variable is modified and the measure of this change is needed. In this study, the process selected is the use of genetic algorithms.

Genetic algorithms are a mathematical assimilation of the law of natural selection in nature [39,40]. In this case, 100 generations with 100 individuals each were applied. A tournament selection was applied by selecting two random individuals and comparing the fitness function value (using the Kriging metamodel interpolation method to estimate the possible responses [41,42]) to store the best one in the intermediate population (100 tournaments until obtaining an intermediate population of 100 individuals). Half of the intermediate population (50% crossover rate) was randomly selected and combined to obtain offspring (arithmetic crossover), while the other half of the intermediate population was maintained. Additionally, a 60% mutation rate was applied, as well as elitism (the worst individual of the new populations was always replaced by the best one of the previous populations). To create these metamodels, the results previously obtained in a design of experiments were used as a reference.

The Kriging metamodel uses an exponential correlation model and a polynomial regression model. However, polynomial regression is able to change the order from 0 to 2, depending on the recorded data. When you have a lower-order polynomial, it requires fewer reference values and is easier to calculate, but it has less precision. For this, the algorithm starts by trying to make use of a second-order regression model to predict each response; if this fails, it will automatically use a first-order regression model, and if this fails, it will go back down using a zero-order regression model. This automatic order change ensures that you are always using the best possible regression model with the data you have.

The modified Latin hypercube algorithm is used to generate a set of initial cases for initial interpolations [39]. First, a combination is generated with all the variables at the minimum of their range, another combination with all variables at the midpoint of their range, and another combination with all the variables at their maximum point, which implies 3 designs. Subsequently, the Latin hypercube algorithm is applied to add “n” points, where “n” is the number of design variables. Therefore, this algorithm divides the range of each variable into equal parts, selects a random value for each variable, and eliminates the corresponding range for each of the variables from the list of possible values to be used in the next combination of variables. This process is repeated until “n” points are added. This means that the optimization will start with a total of 3+n combinations, which in this study means a total of 12 initial combinations.

Since the Kriging metamodel interpolation method gives better results interpolating than extrapolating, the Latin hypercube algorithm was modified so that all generated values corresponding to the first or last rank of each variable are modified and set to the minimum or maximum value, respectively. In this way, these data are shifted to the contour of the search space, promoting data interpolation over extrapolation.

In this study, the Venturi design variables explained in Section 2.1 were used as the variables to be optimized, while the mass of powder discharged in two seconds (Obj1) and the pressure drop produced between the inlet and the outlet of the Venturi injector (Obj2) are the response variables to maximize and minimize, respectively. To achieve this in a mono-objective approach, the objective function was chosen to transform the scale of the powder discharge and pressure drop results by normalizing them. For this purpose, Equation (20) transforms the minimum value of each variable into 0 and the maximum value into 1, scaling the rest of the results. This transformation is called normalization.

Thus, the objective function will be the maximization of Equation (21):

The mean absolute percentage error (also known as MAPE) was used as a stopping criterion in each iteration [41], which measures, in percentage terms, the absolute error committed by the response variables as a whole. This indicator responds to Equation (22):

where is the number of response variables to optimize; is the reference value (those obtained in the simulations to check); and is the value to be compared (those given by the algorithm as an estimated value according to the data available for training the Kriging metamodel).

When this criterion is below 4%, the optimization will be considered good since the subsequent numerical simulation of the solution proposed by the genetic algorithm approximately matches (MAPE < 4%) the estimated results of the algorithm. However, if the iteration reaches 4% but better results have been obtained in previous iterations, it continues iterating until the objective function is the best of all cases studied while the maximum MAPE criterion allowed is met. Sometimes the stopping criterion is not reached after many iterations. In these cases, every 10 iterations without reaching the stopping criterion, the results of the MAPE are analyzed. If the trend of these results is towards 4%, 10 more iterations are performed. In case the results start to fluctuate with no improving trend, the best result of the objective function in the last 10 iterations will be assumed as the optimum.

The duty cycle for each iteration can be seen in Figure 7.

Figure 7.

Optimization workflow.

2.4. Determination of Significance of Variables

In order to study the relative importance, from a statistical point of view, of the different design variables considered, a multiple linear regression analysis with standardized coefficients was carried out to model the behavior of powder discharge.

2.4.1. Multiple Linear Regression

The objective of regression analysis is to mathematically model the behavior of a response variable () as a function of one or more independent or predictor variables ().

The multiple linear regression model can be written in matrix notation as follows:

where

The vector contains the coefficients of the model, where is the ordinate at the origin and , is the average effect that a one-unit increase in the predictor variable has on the dependent variable , when all the other regressor variables are held fixed or constant. The vector contains the errors or residuals, where is the difference between the observed value and the value estimated by the model. In addition, .

Since the magnitude of each regression coefficient depends on the units in which the predictor variable to which it corresponds is measured, in order to determine the impact of each variable on the model, standardized coefficients were used, which were obtained by standardizing (subtracting the mean and dividing by the standard deviation) the predictor variables prior to the model’s fitting.

Multiple linear correlation models require the following conditions:

- Linearity between the independent variables of the model and the response variable.

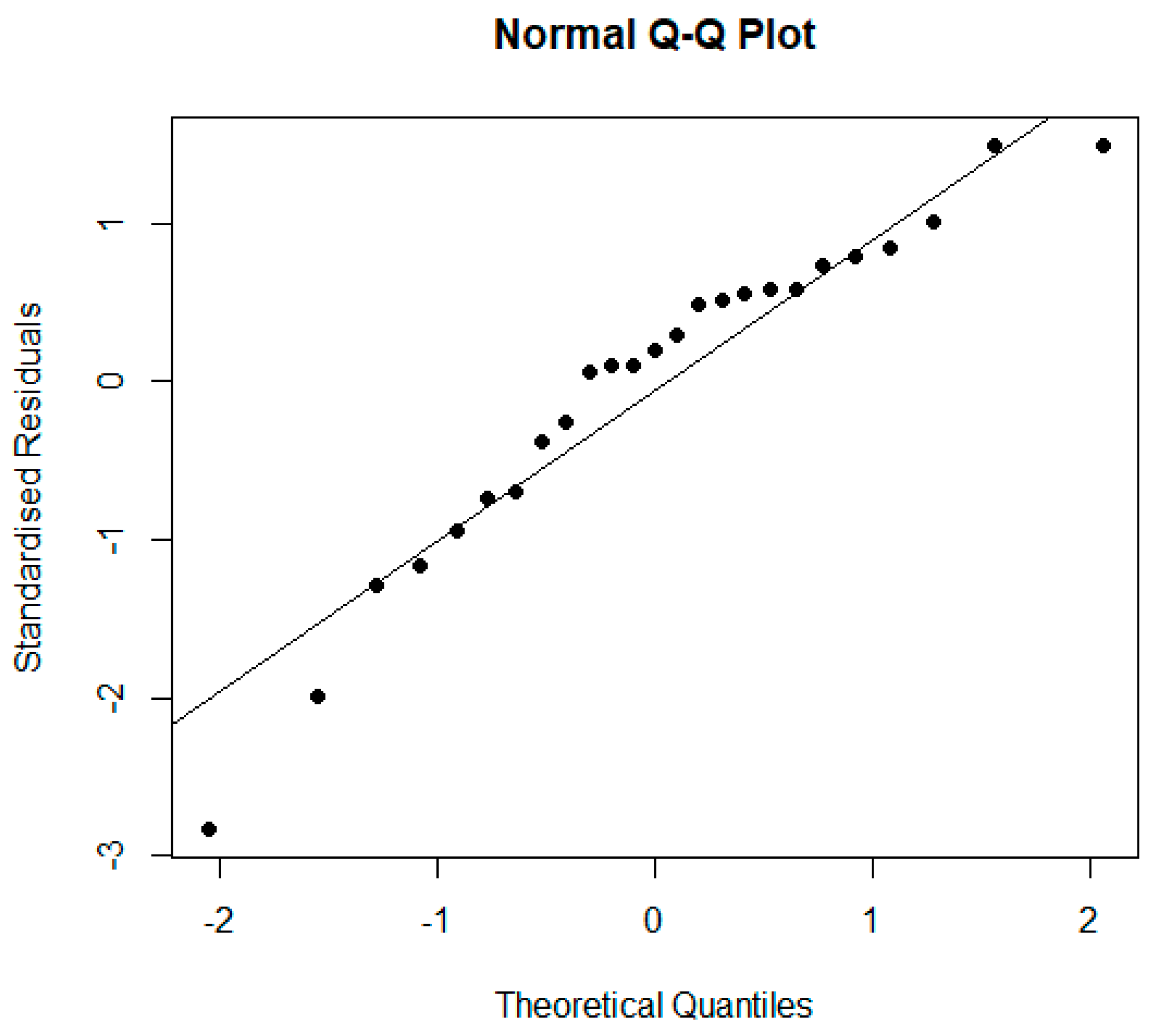

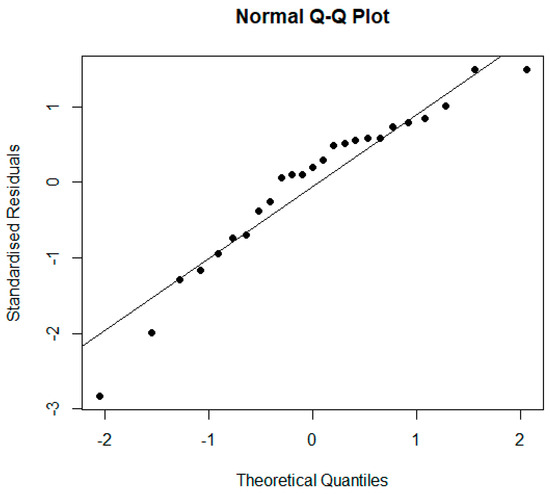

- Normality of the residuals: It is assumed that the residuals are normally distributed with a zero mean. This is usually checked by means of graphical methods (histograms, box-and-whisker plots, or quantile–quantile (Q–Q) plots) as well as normality hypothesis tests such as, for example, the Shapiro–Wilk test, which is applicable when analyzing small samples (composed of less than 50 elements) or the Anderson–Darling test, which is a non-parametric test on sample data (with more than 7 elements) coming from a specific distribution.

- Homogeneity of the variance of the residuals (homoscedasticity): To check this, the residuals are plotted. If the variance is constant, they are randomly distributed with the same dispersion and without any specific pattern. One can also resort to homoscedasticity tests such as the Breusch–Pagan test, which only detects linear forms of heteroscedasticity, or the Goldfeld–Quandt test, which compares the variances of two sub-models separated by a specified break point and rejects if the variances differ.

- The residuals are independent of each other: The Durbin–Watson test allows for the diagnosis of the presence of a correlation between consecutive residuals ordered in time, which is a possible manifestation of a lack of independence.

- There are no outliers with a high influence. That is, the regression model is not strongly influenced by one or more outlier data points because this would raise doubts about the adequacy of the model and the reliability of the data in some cases. Cook’s distance allows for detecting outliers with a high influence. For a sample of elements and a model of independent variables, a Cook’s distance greater than the median of an F-distribution with and degrees of freedom, with , is considered of concern.

- Uncorrelated predictors: In multiple linear models, the predictors must be independent, meaning there must be no collinearity between them. Tolerance and the variance inflation factor () are two parameters that quantify the same thing (one is the inverse of the other). The reference limits that are usually used are: : absence of collinearity; : regression may be affected by some collinearity; : cause for concern; and : serious collinearity.

- Parsimony: This term refers to the fact that the best model is the one that can most accurately explain the variability observed in the response variable using the least number of predictors.

Good models are those that meet the most criteria for goodness-of-fit. There will always be circumstances where failure to meet any of the criteria will not necessarily make the model unfeasible from a practical point of view [42].

2.4.2. Choice of Predictors to Generate the Best Model

When selecting the predictors to be part of the model, several methods can be followed, among them the so-called stepwise methods, which consist of iteratively adding and/or removing predictors in the regression model in order to find the subset of variables in the dataset that results in a model that reduces the prediction error. There are three stepwise regression strategies:

- Forward selection, which starts with no predictors in the model, iteratively adds the most contributing predictors, and stops when the improvement is no longer statistically significant.

- Backward selection (or backward elimination), which starts with all predictors in the model (full model), iteratively eliminates the least contributing predictors, and stops when you have a model in which all predictors are statistically significant.

- Stepwise selection (or sequential substitution), which is a combination of the forward and backward selections. You start with no predictors and sequentially add the most contributing predictors (as in forward selection). After each new variable is added, any variable that no longer provides an improvement in model fit (as in backward selection) is removed.

The step-by-step method requires some mathematical criteria to determine whether the model gets better or worse with each addition or removal. There are several parameters or metrics that can be used, the most important of which are: , Mallows’ , the Akaike Information Criterion (AIC), and the Bayesian Information Criterion (BIC) [43].

When there are terms in the model that do not contribute significantly to the model, tends to be smaller than . Therefore, it is desirable to refine the model. In general, to speak of a model that has a satisfactory fit, it is necessary that the coefficients and have values greater than 0.7. However, when comparing different models for the same dataset, a lower AIC/BIC score is better.

2.4.3. The Lack-of-Fit Test

The F-test is useful for checking that the model as a whole performs better than chance. If a regression model does not produce a significant F-test result, then you probably do not have a very good regression model (or, quite possibly, you do not have very good data). However, while failing this test is a fairly strong indicator that the model has problems, passing the test (i.e., rejecting the null hypothesis) does not imply that the model is good.

Most computer programs specialized in statistics include procedures to perform both simple and multiple regression analyses and usually include variable selection techniques. In this work, we used the free software Jamovi [44] based on the statistical language R [45,46].

3. Results

3.1. Optimization of Variables

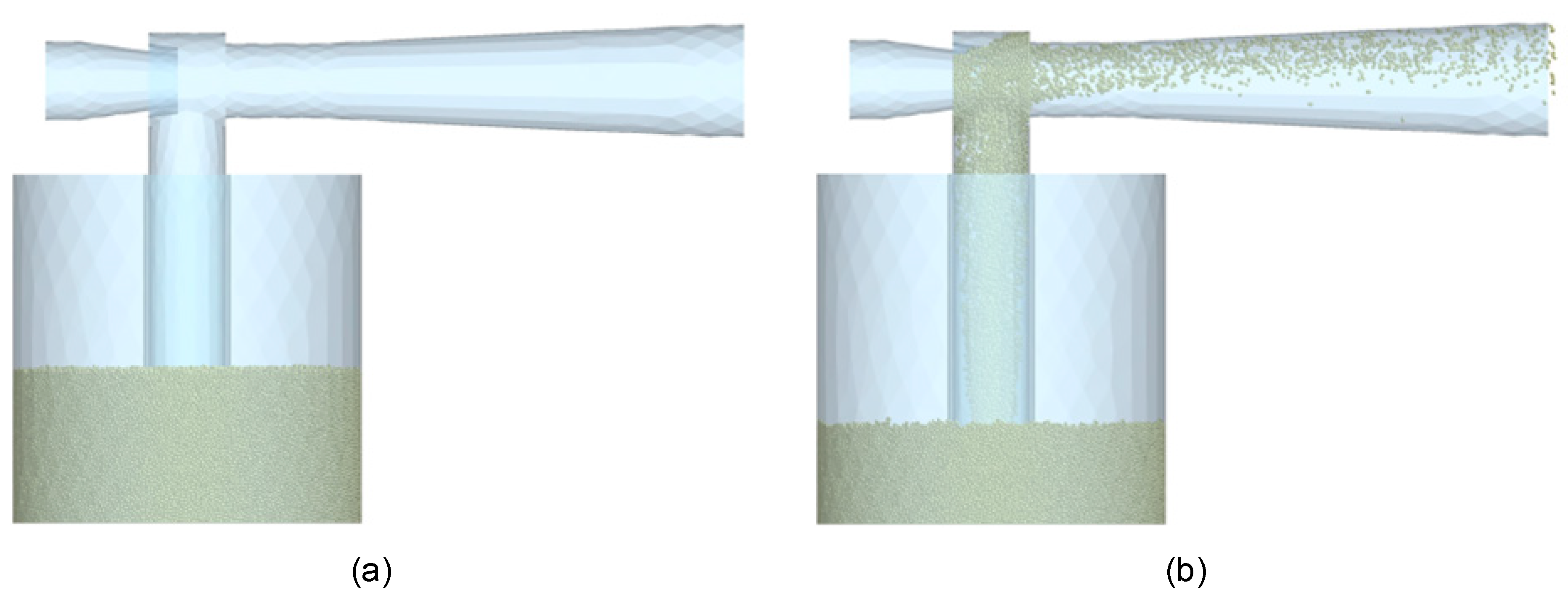

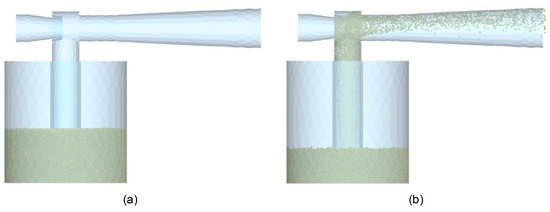

Given the limits for each variable explained in Section 2.1 and applying the modified Latin hypercube algorithm, a total of 12 initial cases were obtained, which can be seen in Table 5 (design variables). One example of the simulation is depicted in Figure 8, which corresponds to the beginning (Figure 8a) and the end (Figure 8b) of the TSV2 simulation.

Table 5.

List of initial cases.

Figure 8.

TSV2 simulation starting (a) and ending (b).

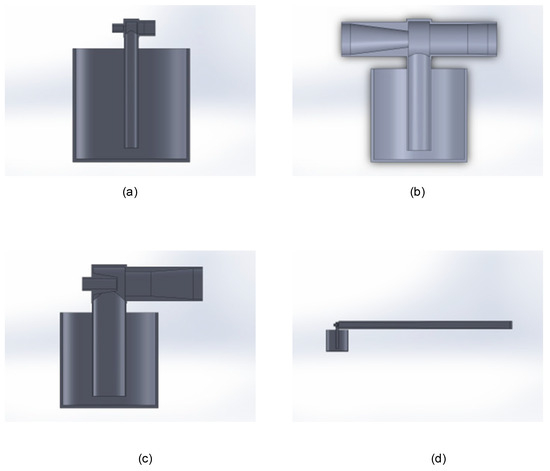

Each of these cases was drawn in CAD, and joint CFD-DEM tests were carried out. These gave the results shown in Table 6. As a reference, some examples of these design configurations are shown in Figure 9.

Table 6.

Results of cases generated by the modified Latin hypercube.

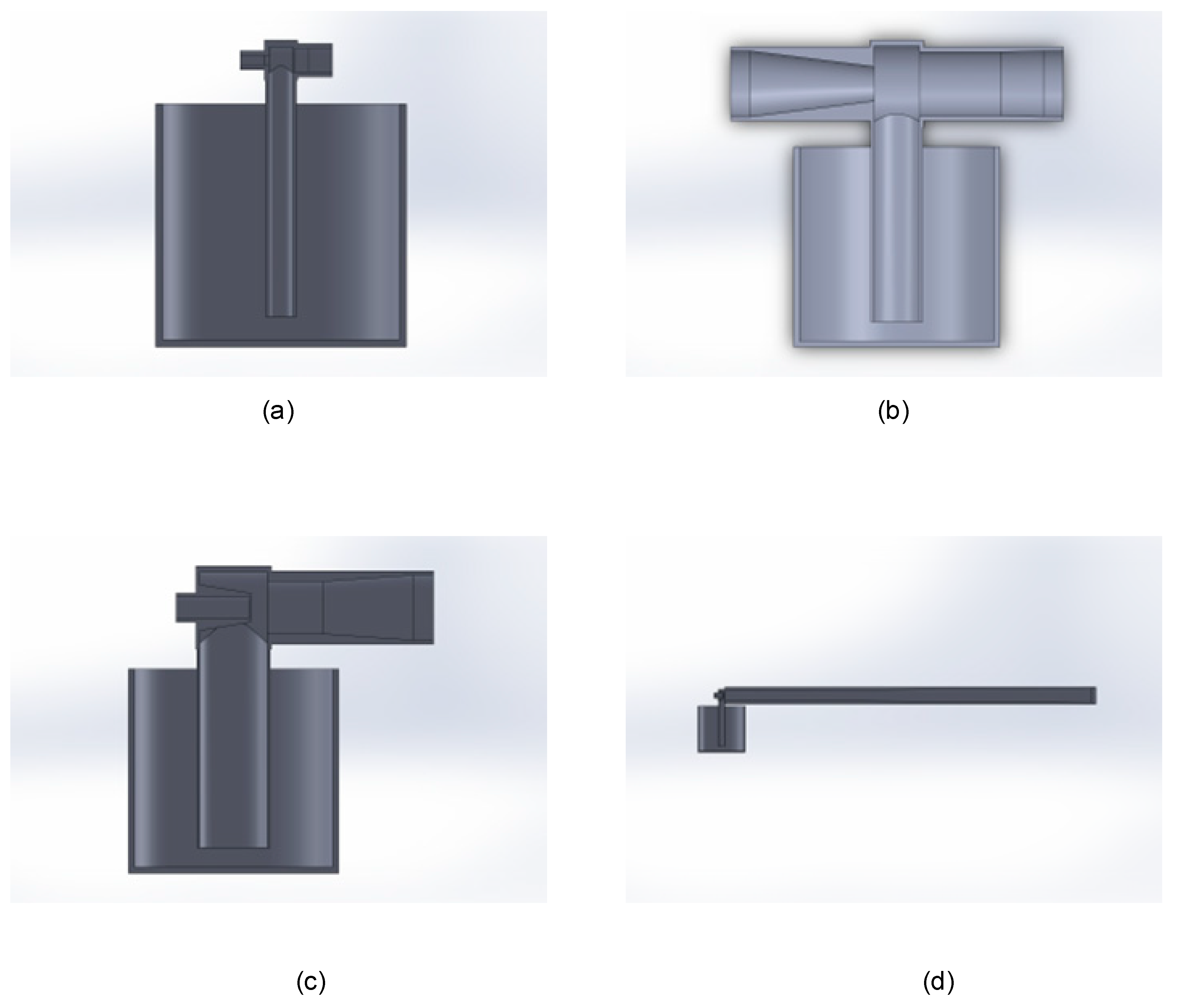

Figure 9.

Examples of CAD designs of different configurations: TSV1 (a), TSV5 (b), TSV7 (c), and TSV11 (d).

The direct measurements from the tests can be seen in columns 2 and 3, along with the normalized values in columns 4 and 5.

These data were fed into the optimization algorithm, as explained in Section 2.3, following the cycle of optimization iterations up to a total of 13 times.

For each combination, the genetic algorithm shows, as a result, a possible optimal variable combination and the predicted objective function result. Those optimal design variables obtained by the algorithm after each execution are shown in Table 7.

Table 7.

Combinations of variables in the optimization process.

Table 8 shows the simulation results (both measured and normalized) obtained for every combination in Table 7 (optimal designs). It can be seen how the value of the objective function, given by Equation (21), increases as the optimal point is approached. A deeper analysis of these results is made in Section 3.2.

Table 8.

Results of the optimization process.

With each iteration, the predictions of the algorithm results were noted down to apply the stopping criterion. Table 9 shows, for each optimal design, the normalized response variables (powder discharge and pressure drop) according to the simulations (first column) and the same response variables predicted by the genetic algorithm (second column). The MAPE between the estimated and simulated results is depicted in the last sub-column (M). As the number of data points increases, the estimates of the genetic algorithm are more accurate, consequently reducing the MAPE (compared to the simulation results).

Table 9.

MAPE stop criterion results.

After a total of 13 iterations, the results of the proposed optimization algorithm can be considered good since the results estimated by it and those resulting from evaluating this combination of variables through the simulations are less than 4% apart, fulfilling the condition of having the best result of the objective function up to that iteration. It is noteworthy that the optimal result discharges 85% more powder (Obj 1normalized) with only a 2% pressure drop (Obj 2normalized) compared to all cases studied during the optimization process.

In this iteration 13, the variables obtained were those depicted in Table 10.

Table 10.

Final optimized dimensions result.

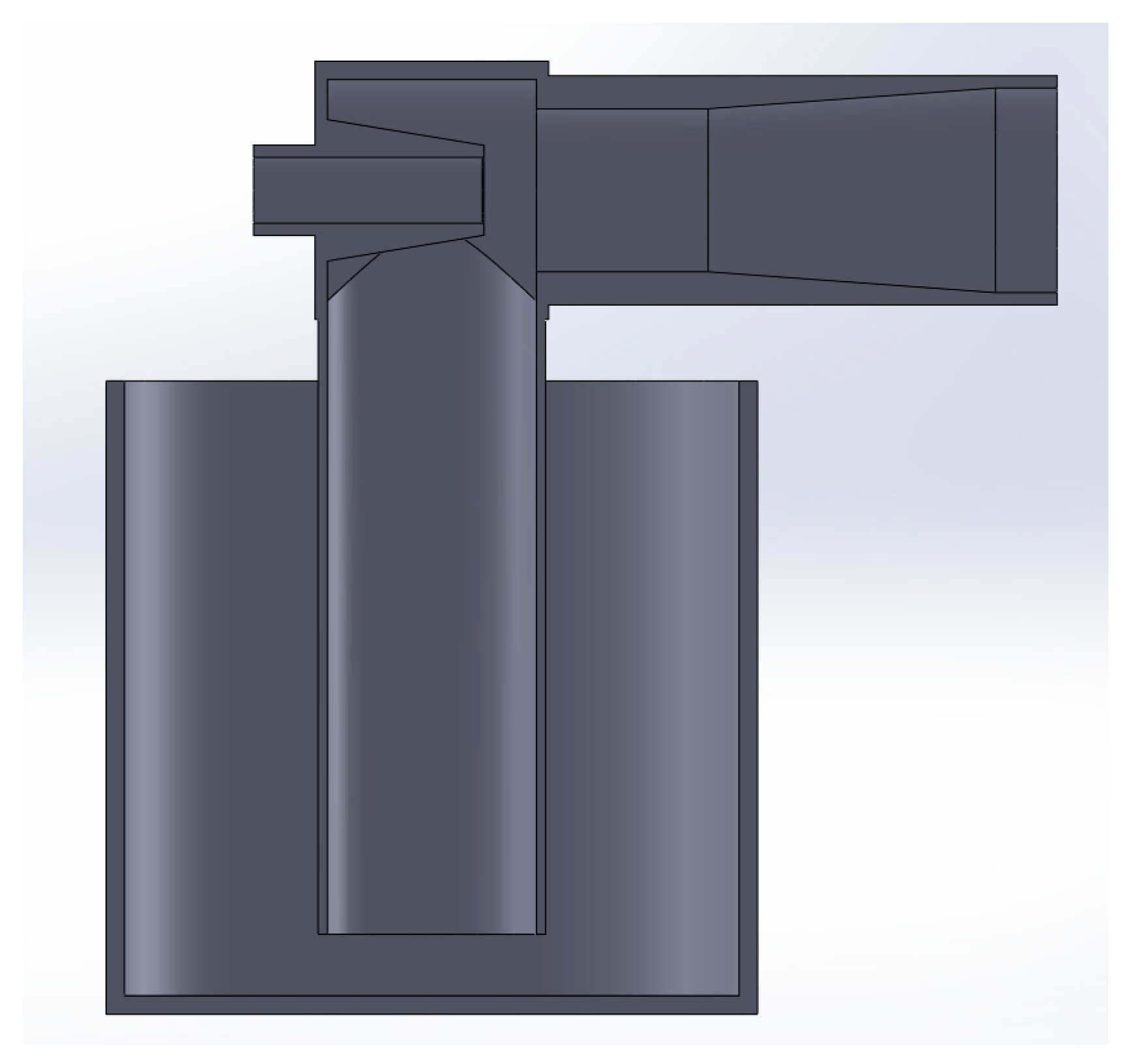

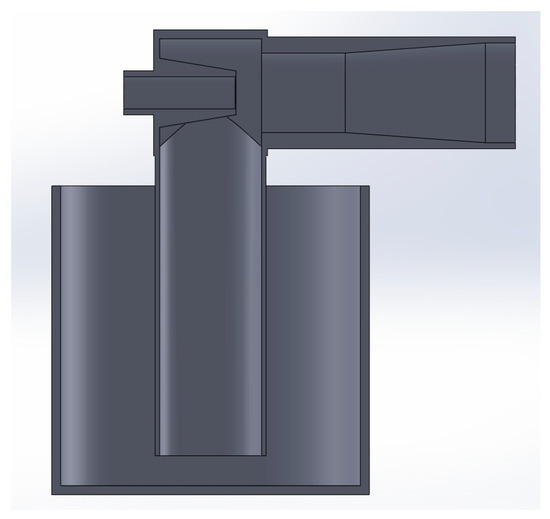

A CAD representation of the optimal Venturi proportions is shown in Figure 10.

Figure 10.

Optimal Venturi (representation in section view).

It is worth mentioning that the dimensions of Table 10 are the optimized ones for a 1 mm particle diameter, but they have a proportional relationship with the particle diameter. Therefore, the optimized dimensions could be explained as follows: D3 and d2 are 34 and 26.5 times the particle diameter, respectively; d1 is 40% of d2; D1 is 101% of d1; D2 is 126% of d2; and PRi is 75% of D3. The Lt, α, and β are fixed. Nevertheless, the statistical analysis depicted in Section 3.2 reveals that the most significant design variables of a Venturi injector to maximize the powder discharge are D3, d1, and d2.

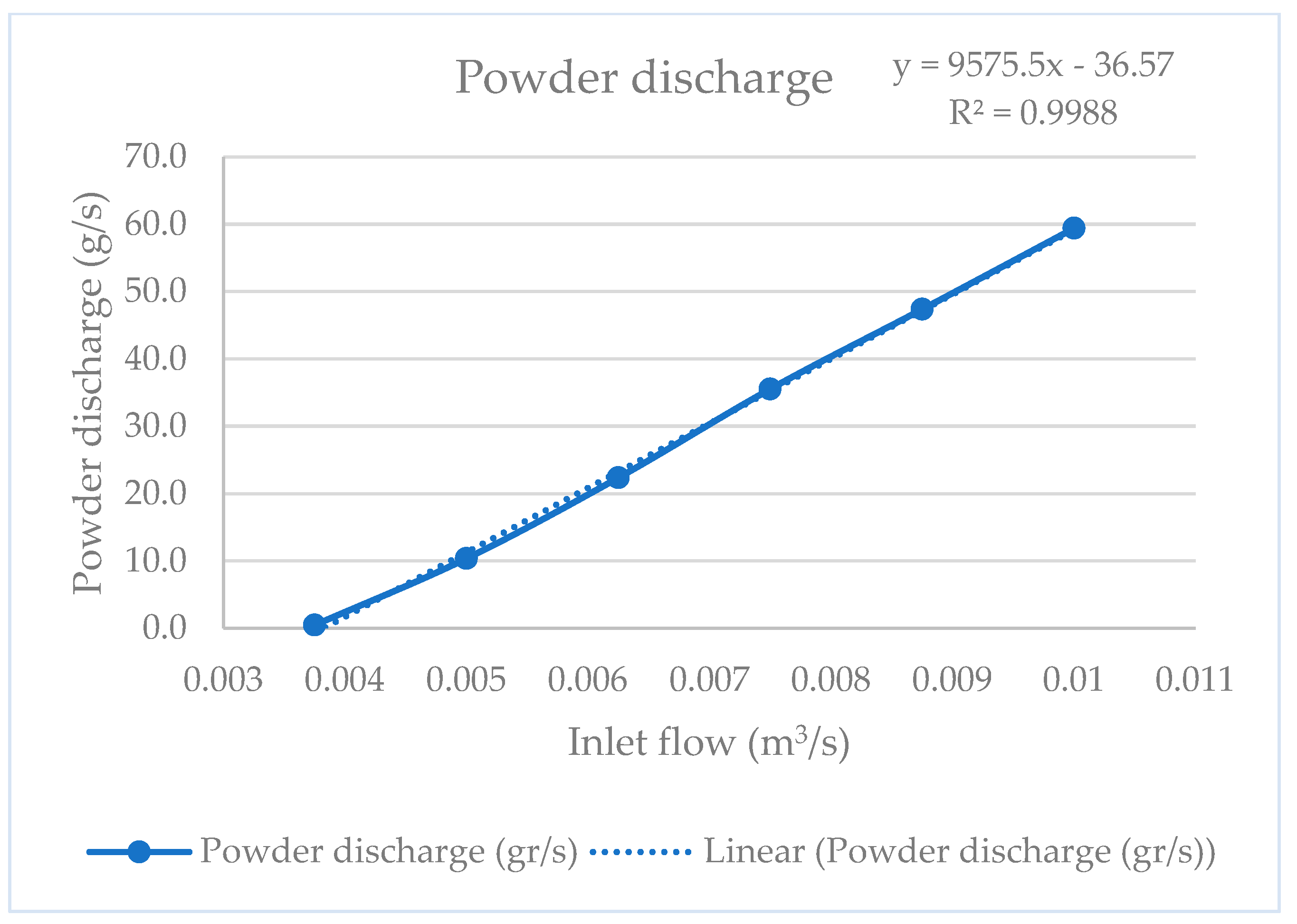

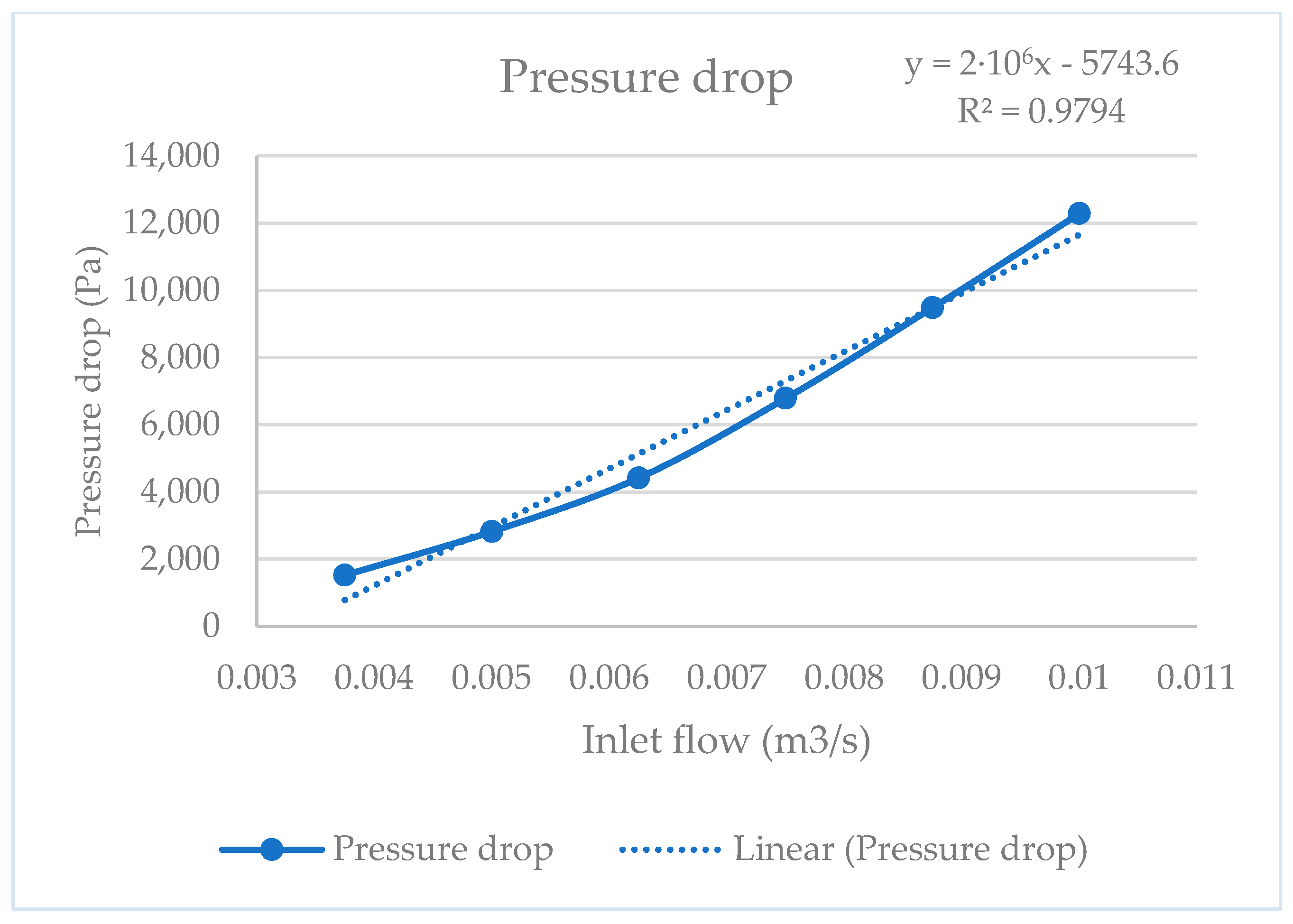

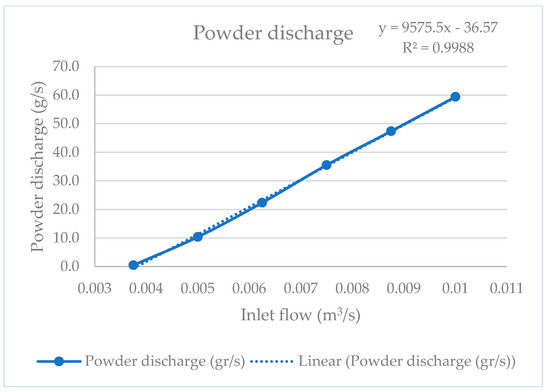

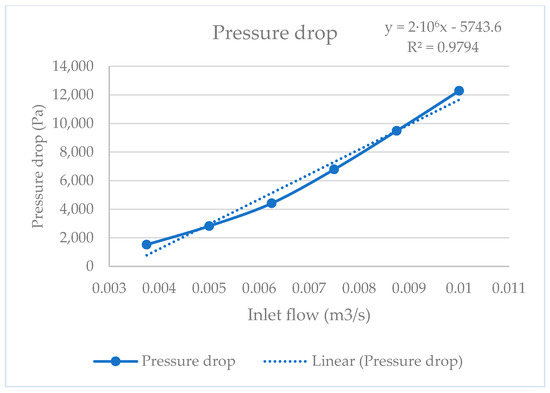

On the other hand, since the final goal of the optimization of the Venturi injector is to obtain an accurate powder transport system for directed energy deposition, the optimal Venturi injector with a 40 mm D1 and D2 configuration was analyzed using different inlet flow values. This 40 mm D1 and D2 configuration was selected to facilitate standardization and future experimental implementation. Figure 11 and Figure 12 show the results obtained, where a linear relationship between powder discharge and the pressure drop with inlet flow can be observed, respectively.

Figure 11.

Discharge curve.

Figure 12.

Pressure drop curve.

3.2. Determination of the Significance of Variables

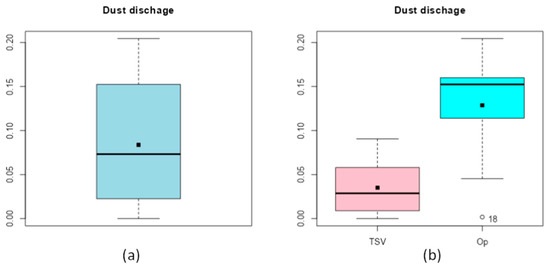

A statistical analysis of the powder discharge results is now carried out in order to find out to what extent each of the variables used in the design of the Venturi injector affects the results.

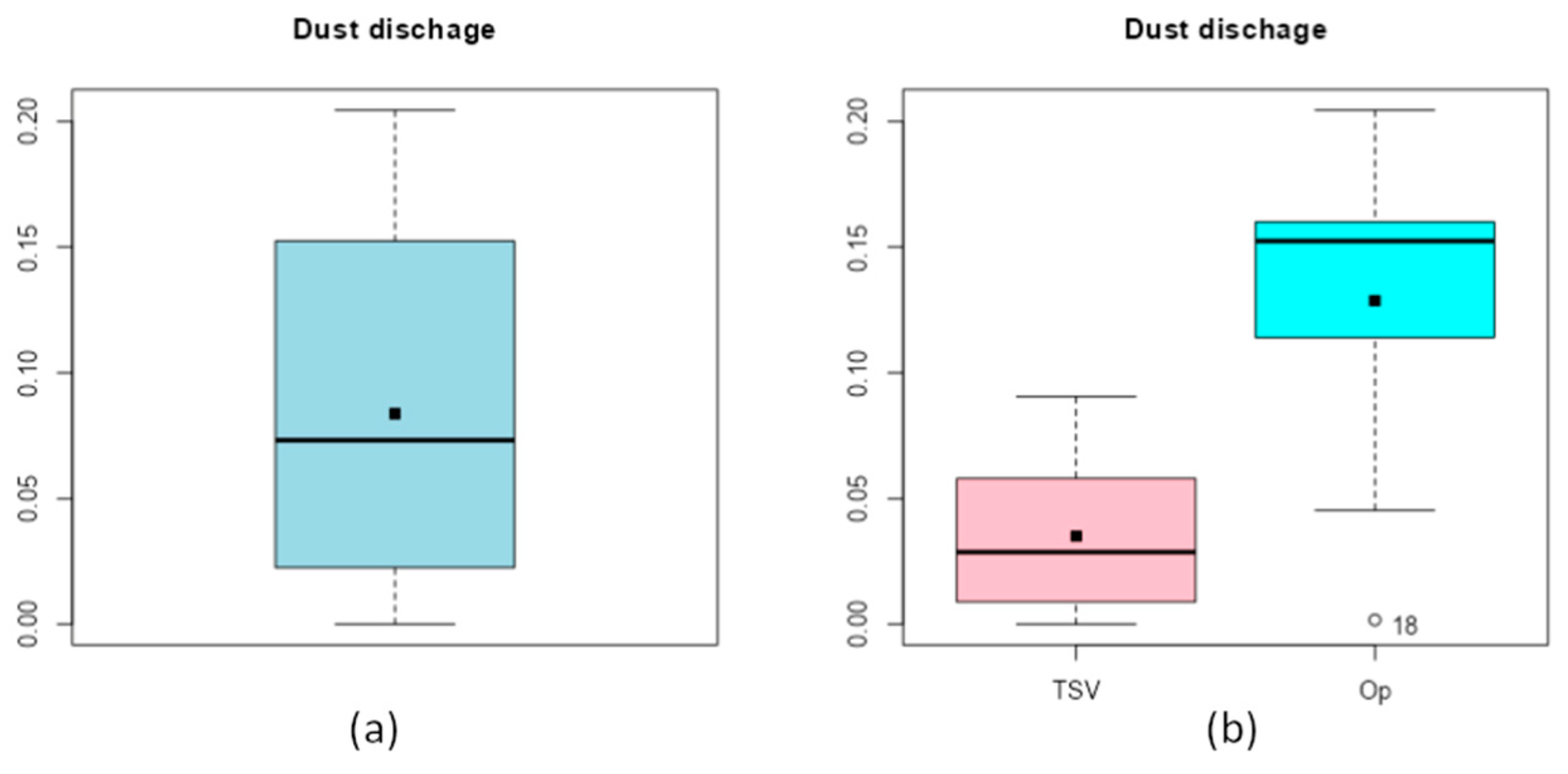

The first thing to carry out is to group all the results together to see the dispersion of the results. Figure 13a shows that the results are not very dispersed. However, when the results of the randomly generated variations (TSV) are separated from the results of the optimizations (Op), it is clear that the optimizations tend to be upward (Figure 13b).

Figure 13.

Grouping of the results all together (a) and separated by origin (b).

3.2.1. Multiple Linear Regression

For the construction of the models, a backward selection based on the p-value was first used to establish an order of inclusion of the variables by blocks (one by one) in the Model Builder of the Linear Regression library of the jmv Module in Jamovi software (2.3.28 version) [46].

Bearing in mind that it is of interest to have and coefficients greater than 0.7 and low AIC and BIC values, with a p-value for the overall model test as small as possible, it was decided to choose Model 5 to study the relative importance, from a statistical point of view, of the different design variables considered as it fulfills more criteria of the quality of fit (see Table 11).

Table 11.

Statistical results for each regression model.

In Model 5, the most significant variables are D3, d1, and d2 (in that order), all of them with a p-value of less than 0.05 (see Table 12). Furthermore, the values of the standard estimators and the corresponding 95% confidence interval show that, if the other variables of the model are kept constant, the higher the value of D3, the higher the powder discharge increases, and the higher the values of d1 and d2, the powder discharge decreases in both cases.

Table 12.

Results of standard estimators and confidence intervals at 95%.

Regarding the Cook’s distance (see Table 13), as the number of sample data is and the number of independent variables is (), we obtain qf(0.50, p, n-p) = 0.9238, where qf(p, df1, df2) is a quantile function for the F distribution with df1 and df2 degrees of freedom of the R Stats Package [45].

Table 13.

Cook’s distance of Model 5.

Consequently, as the maximum Cook’s distance does not exceed this value, no outliers with high influence are detected, as can be seen in Figure 12.

3.2.2. Checking Assumptions

The verification tests explained in Section 2.4 were carried out, and the results that were obtained are shown in Table 14 and Table 15.

Table 14.

Normality and heteroscedasticity tests.

Table 15.

Autocorrelation test.

Since in all the tests the p-value is greater than 0.05, there is no reason to assume that the assumptions of normality, homogeneity of variance, and independence of residuals are violated.

In the Q-Q plot (Figure 14), the points are quite close to the line, thus corroborating the normality of the residuals.

Figure 14.

Q-Q plot.

Finally, a collinearity study of the variables was carried out, the results of which can be seen in Table 16.

Table 16.

Study of the collinearity of the variables.

In this case, as the VIF values are closer to one than to five, it can be concluded that although the regression may be affected by some level of collinearity, this is not a cause for concern, as indicated in Section 2.4.1.

4. Conclusions

After evaluating the different combinations of the dimensional variables required for the manufacture of low-velocity Venturi injectors using combined CFD and DEM simulations, a genetic algorithm was used to achieve an optimal combination of variables for sizing a Venturi injector based on mean particle diameter and flow rate.

From analyzing these results, it can be seen that, by aiming for the lowest possible pressure drop, the Venturi convergence and divergence angles (α and β) are within the ranges of the standard for the manufacture of classic Venturi injectors. Therefore, they can be considered valid.

Furthermore, from the statistical study, it can be concluded that the variables that most significantly affect powder suction are D3, d1, and d2 (in that order), which makes it clear that d1 and d2 are important because they are the two variables on whose size the vacuum generated in the mixing chamber depends, and at equal pressure, the larger the suction diameter (D3), the more physical space there is for the particles to rise.

This suggests that the switching variables D1 and D2 can act independently, allowing them to be adapted to the system where the injector is to be installed.

It is also confirmed that throat length does not affect powder deposition [9], thus making it possible to reduce the size of the injector. Additionally, the position of the nozzle inside the mixing chamber is similar to that demonstrated by Xu et al. [21].

On the other hand, the simulations of the optimal Venturi configuration with different inlet flow demonstrated a good linearity between inlet flow and powder discharge, which means that it is possible to control the mass flow and, consequently, the powder discharge. With this approach, different Venturi injectors could be integrated for the development of accurate mixing powder devices intended for Functionally Graded Additive Manufacturing. However, this linearity was only demonstrated for flow rates lower than the one used for optimization (0.01 m3⁄s), so the optimal parameters should be recalculated for higher flow rates. Since the objective of this study is the use of this optimized injector for a DED system, the recommendation is to optimize the injector for the maximum flow rate required in each case. In this optimization process, we found better ranges of pressure drop than observed by Zerpa et al. [7], and better linearity than observed by Almeida et al. [8] about the press drop–flow relationship. We also demonstrated that, in the case of powder transportation, the relation between the nozzle and throat diameters is above the found in the bibliography. Comparing our results with those obtained by Wang et al. [26] using machine learning, we can see how, while the input and outlet angles are similar, the proportional dimensions of the nozzle and the throat are different, confirming that the optimization is not the same when the feed process is by gravity or suction.

It should be noted that the mathematical models used in this work are fully validated according to the literature. However, future research will be carried out to experimentally validate these results. On the other hand, this optimization procedure is based on the particle diameter, so, in future research, it remains to be seen its effectiveness when applied to particles with different properties such as density, cohesivity, or diameters, even opening the possibility to study a range of optimal dimensions for more than one powder in the same injector.

Having demonstrated that it is possible to use Venturi injectors to unify the metering and conveyance in DEM systems, it has the following advantages over traditional systems: being a unified system whose implementation should be easier and cheaper; easy installation of more nozzles, thus increasing the possibilities of Functionally Graded Materials; and easy change of materials (cleaning and feeding). In view of the results obtained, the use of Venturi injectors could be studied in other technologies such as material extrusion or powder bed fusion, changing the feeding method, and giving the possibility to work with Functionally Graded Materials in different ways.

Author Contributions

Conceptualization, J.G.-M.; methodology, J.G.-M., R.P. and B.G.; software, J.G.-M., R.P. and B.G.; validation, J.G.-M., R.P., M.M. and B.G.; formal analysis, J.G.-M., R.P. and B.G.; investigation, J.G.-M., R.P., M.M. and B.G.; resources, R.P. and M.M.; data curation, J.G.-M. and B.G.; writing—original draft preparation, J.G.-M.; writing—review and editing, J.G.-M., R.P. and B.G.; visualization, J.G.-M.; supervision, R.P. and M.M.; project administration, M.M.; funding acquisition, M.M. All authors have read and agreed to the published version of the manuscript.

Funding

This work was co-financed by the Agencia Canaria de Investigación, Innovación y Sociedad de la Información de la Consejería de Economía, Conocimiento y Empleo, and Fondo Social Europeo (FSE) Programa Operativo Integrado de Canarias 2014–2020, Eje 3 Tema Prioritario 74 (85\%).

Institutional Review Board Statement

Not applicable.

Informed Consent Statement

Not applicable.

Data Availability Statement

The data presented in this study are available within this article.

Conflicts of Interest

The authors declare no conflicts of interest.

References

- ISO/ASTM 52900:2021; ISO Additive Manufacturing. General Principles. Fundamentals and Vocabulary. ISO: Geneva, Switzerland, 2021.

- Wittmann, A.; Bader, T.; Chamandani, S.A.; Hentschel, O.; Schmidt, M.; Gold, G. Feasibility of Manufacturing Dielectric Image Lines by Using Laser-Based Directed Energy Deposition of Polymers. IEEE Access 2023, 11, 120585–120592. [Google Scholar] [CrossRef]

- Singh, A.; Kapil, S.; Das, M. A Comprehensive Review of the Methods and Mechanisms for Powder Feedstock Handling in Directed Energy Deposition. Addit. Manuf. 2020, 35, 101388. [Google Scholar] [CrossRef]

- Lee, H.-K. Effects of the Cladding Parameters on the Deposition Efficiency in Pulsed Nd:YAG Laser Cladding. J. Mater. Process. Technol. 2008, 202, 321–327. [Google Scholar] [CrossRef]

- Liu, W.; DuPont, J.N. Fabrication of Functionally Graded TiC/Ti Composites by Laser Engineered Net Shaping. Scr. Mater. 2003, 48, 1337–1342. [Google Scholar] [CrossRef]

- Li, W.; Karnati, S.; Kriewall, C.; Liou, F.; Newkirk, J.; Brown Taminger, K.M.; Seufzer, W.J. Fabrication and Characterization of a Functionally Graded Material from Ti-6Al-4V to SS316 by Laser Metal Deposition. Addit. Manuf. 2017, 14, 95–104. [Google Scholar] [CrossRef]

- Singh, J.; Zerpa, L.E.; Partington, B.; Gamboa, J. Effect of Nozzle Geometry on Critical-Subcritical Flow Transitions. Heliyon 2019, 5, e01273. [Google Scholar] [CrossRef] [PubMed]

- Almeida, A.R. Some Design Aspects for Venturi Gas Lift Valves. SPE Prod. Oper. 2015, 30, 321–328. [Google Scholar] [CrossRef]

- Zhang, J.X. Analysis on the Effect of Venturi Tube Structural Parameters on Fluid Flow. AIP Adv. 2017, 7, 065315. [Google Scholar] [CrossRef]

- Yan, H.J.; Chen, Y.; Chu, X.Y.; Xu, Y.C.; Wang, Z.P. Effect of Structural Optimization on Performance of Venturi Injector. IOP Conf. Ser. Earth Environ. Sci. 2012, 15, 072014. [Google Scholar] [CrossRef]

- Park, K.A.; Choi, Y.M.; Choi, H.M.; Cha, T.S.; Yoon, B.H. The Evaluation of Critical Pressure Ratios of Sonic Nozzles at Low Reynolds Numbers. Flow Meas. Instrum. 2001, 12, 37–41. [Google Scholar] [CrossRef]

- Shi, H.; Li, M.; Liu, Q.; Nikrityuk, P. Experimental and Numerical Study of Cavitating Particulate Flows in a Venturi Tube. Chem. Eng. Sci. 2020, 219, 115598. [Google Scholar] [CrossRef]

- Bermejo, D.; Escaler, X.; Ruíz-Mansilla, R. Experimental Investigation of a Cavitating Venturi and Its Application to Flow Metering. Flow Meas. Instrum. 2021, 78, 101868. [Google Scholar] [CrossRef]

- Shi, H.; Nikrityuk, P. The Influence of Inflow Swirl on Cavitating and Mixing Processes in a Venturi Tube. Fluids 2020, 5, 170. [Google Scholar] [CrossRef]

- Shi, H.; Liu, Q.; Nikrityuk, P. Numerical Study of Mixing of Cavitating Flows in a Venturi Tube. Can. J. Chem. Eng. 2021, 99, 813–828. [Google Scholar] [CrossRef]

- O’Hern, H.; Murphy, T.; Zhang, X.; Liburdy, J.; Abbasi, B. A Design Method for Low-Pressure Venturi Nozzles. Appl. Mech. 2022, 3, 390–411. [Google Scholar] [CrossRef]

- Sobenko, L.R.; Frizzone, J.A.; de Camargo, A.P.; Saretta, E.; Rocha, H.S.d. Characterization of Venturi Injector Using Dimensional Analysis. Rev. Bras. Eng. Agríc. Ambient. 2019, 23, 484–491. [Google Scholar] [CrossRef]

- Klinzing, G.E.; Rizk, F.; Marcus, R.; Leung, L.S. Pneumatic Conveying of Solids: A Theoretical and Practical Approach; Particle Technology Series; Springer: Dordrecht, The Netherlands, 2010; Volume 8, ISBN 978-90-481-3608-7. [Google Scholar]

- Yang, D.; Xing, B.; Li, J.; Wang, Y.; Gao, K.; Zhou, F.; Xia, Y.; Wang, C. Experimental Study on the Injection Performance of the Gas-Solid Injector for Large Coal Particles. Powder Technol. 2020, 364, 879–888. [Google Scholar] [CrossRef]

- Xiong, Y.; Zhang, M.; Yuan, Z. Three-Dimensional Numerical Simulation Method for Gas–Solid Injector. Powder Technol. 2005, 160, 180–189. [Google Scholar] [CrossRef]

- Xu, J.; Liu, X.; Pang, M. Numerical and Experimental Studies on Transport Properties of Powder Ejector Based on Double Venturi Effect. Vacuum 2016, 134, 92–98. [Google Scholar] [CrossRef]

- Tang, P.; Fletcher, D.F.; Chan, H.-K.; Raper, J.A. Simple and Cost-Effective Powder Disperser for Aerosol Particle Size Measurement. Powder Technol. 2008, 187, 27–36. [Google Scholar] [CrossRef]

- Wang, Z.; Li, T.; Wang, F.; Guan, L.; Zhang, R. Numerical Simulation of Polymer Dispersion Systems for Polymer Injection on Offshore Platforms. ACS Omega 2020, 5, 20343–20352. [Google Scholar] [CrossRef]

- Liu, W.; Xu, J.; Liu, X. Numerical Study on Collision Characteristics for Non-Spherical Particles in Venturi Powder Ejector. Vacuum 2016, 131, 285–292. [Google Scholar] [CrossRef]

- Expósito Carrillo, J.A.; Sánchez de La Flor, F.J.; Salmerón Lissén, J.M. Single-Phase Ejector Geometry Optimisation by Means of a Multi-Objective Evolutionary Algorithm and a Surrogate CFD Model. Energy 2018, 164, 46–64. [Google Scholar] [CrossRef]

- Wang, H.; Wang, J.; Yang, B.; Mo, Y.; Zhang, Y.; Ma, X. Simulation and Optimization of Venturi Injector by Machine Learning Algorithms. J. Irrig. Drain Eng. 2020, 146, 04020021. [Google Scholar] [CrossRef]

- Spalart, P.; Allmaras, S. A One-Equation Turbulence Model for Aerodynamic Flows. In Proceedings of the 30th Aerospace Sciences Meeting and Exhibit, Reno, NV, USA, 6 January 1992. [Google Scholar]

- Hertz, H. On the Contact of Elastic Solids. J. Reine Angew. Math. 1882, 92, 156–171. [Google Scholar] [CrossRef]

- Mindlin, R. Compliance of Elastic Bodies in Contact. J. Appl. Mech. 1949, 16, 259–268. [Google Scholar] [CrossRef]

- Mindlin, R.D.; Deresiewicz, H. Elastic Spheres in Contact Under Varying Oblique Forces. J. Appl. Mech. 1953, 20, 327–344. [Google Scholar] [CrossRef]

- Tsuji, Y.; Tanaka, T.; Ishida, T. Lagrangian Numerical Simulation of Plug Flow of Cohesionless Particles in a Horizontal Pipe. Powder Technol. 1992, 71, 239–250. [Google Scholar] [CrossRef]

- Nassauer, B.; Kuna, M. Contact Forces of Polyhedral Particles in Discrete Element Method. Granul. Matter 2013, 15, 349–355. [Google Scholar] [CrossRef]

- Ai, J.; Chen, J.-F.; Rotter, J.M.; Ooi, J.Y. Assessment of Rolling Resistance Models in Discrete Element Simulations. Powder Technol. 2011, 206, 269–282. [Google Scholar] [CrossRef]

- Morrissey, J.P.; Thakur, S.C.; Ooi, J.Y. EDEM Contact Model: Adhesive Elasto-Plastic Model. Granul. Matter 2014, 16, 383–400. [Google Scholar] [CrossRef]

- Gidaspow, D.; Bezburuah, R.; Ding, J. Hydrodynamics of Circulating Fluidized Beds: Kinetic Theory Approach; Illinois Institute of Technology: Chicago, IL, USA, 1991. [Google Scholar]

- Dong, Z.; Xue, Q.; Zuo, H.; She, X.; Li, J.; Wang, J. Gas-Solid Flow and Shaft Injected Gas Penetration in an Oxygen Blast Furnace Analyzed Using a Three-Dimensional DEM-CFD Coupling Mathematical Model. ISIJ Int. 2016, 56, 1588–1597. [Google Scholar] [CrossRef]

- Ergun, S. Fluid Flow through Packed Columns. Chem. Eng. Prog. 1952, 48, 89–94. [Google Scholar]

- Wen, C.Y.; Yu, Y.H. Mechanics of Fluidization. Chem. Eng. Prog. Symp. Ser. 1966, 162, 100–111. [Google Scholar]

- Paz, R.; Pei, E.; Monzón, M.; Ortega, F.; Suárez, L. Lightweight Parametric Design Optimization for 4D Printed Parts. Integr. Comput. Eng. 2017, 24, 225–240. [Google Scholar] [CrossRef]

- Paz, R.; Monzón, M.D. Optimization Methodology for the Material Assignation in Bioprinted Scaffolds to Achieve the Desired Stiffness over Time. Int. J. Numer. Methods Biomed. Eng. 2019, 35, e3248. [Google Scholar] [CrossRef]

- De Myttenaere, A.; Golden, B.; Grand, B.L.; Rossi, F. Mean Absolute Percentage Error for Regression Models. Neurocomputing 2016, 192, 38–48. [Google Scholar] [CrossRef]

- Gutiérrez Pulido, H.; de la Vara Salazar, R. Análisis y Diseño de Experimentos, 3rd ed.; McGraw-Hill: Mexico City, Mexico, 2012. [Google Scholar]

- Ugarte, M.D.; Militino, A.F.; Arnholt, A.T. Probability and Statistics with R; CRC Press: Boca Raton, FL, USA, 2008; ISBN 978-1-58488-891-8. [Google Scholar]

- Love, J.; Dropmann, D.; Selker, R. The Jamovi Project 2022. Available online: https://www.jamovi.org/ (accessed on 1 March 2022).

- R Core Team. A Language and Environment for Statistical Computing. Suppl. Inf. Ref. S 2021, 1, 371–378. [Google Scholar]

- Navarro, D.J.; Foxcroft, D.R. Learning Statistics with Jamovi: A Tutorial for Psychology Students and Other Beginners. 2019. Available online: http://learnstatswithjamovi.com (accessed on 1 March 2022). [CrossRef]

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content. |

© 2024 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).