In recent decades, the number of digital images has grown continuously due to the spread of smartphones and social media. Because of the huge number of imaging sensors, a massive amount of visual data is produced each day. However, digital images may suffer various distortions during acquisition, transmission, or compression, which can result in unsatisfactory perceived visual quality or a certain level of annoyance. Consequently, predicting the perceptual quality of images is essential in many applications, such as display technology, communication, image compression, image restoration, image retrieval, object detection, and image registration. Broadly speaking, image quality assessment (IQA) algorithms can be classified into three classes based on the availability of the reference (undistorted) image. Full-reference (FR) and reduced-reference (RR) IQA algorithms have full and partial information about the reference image, respectively. In contrast, no-reference (NR) IQA methods do not possess any information about the reference image.

Convolutional neural networks (CNNs), introduced by LeCun et al. [1] in 1988, are used in many applications, from image classification [2] to audio synthesis [3]. In 2012, Krizhevsky et al. [4] won the ImageNet [5] challenge by training a deep CNN on graphical processing units (GPUs). Due to the huge number of parameters in a CNN, the training set has to contain sufficient data to avoid over-fitting. However, the number of human-annotated images in many databases is too limited to train a CNN from scratch. On the other hand, a CNN trained on the ImageNet database [5] provides powerful features for a wide range of image processing tasks [6,7,8], owing to the comprehensive set of features it has learned. In this paper, we propose a combined FR-IQA metric based on the comparison of feature maps extracted from pretrained CNNs. The rest of this section is organized as follows. In Section 1.1, previous and related work is summarized and reviewed. Next, Section 1.2 outlines the main contributions of this study.

1.1. Related Work

Over the past few decades, many FR-IQA algorithms have been proposed in the literature. The earliest algorithms, such as mean squared error (MSE) and peak signal-to-noise ratio (PSNR), measure perceptual image quality through the energy of image distortions. Later, methods appeared that exploit certain characteristics of the human visual system (HVS). These FR-IQA algorithms can be classified into two groups: bottom-up and top-down ones. Bottom-up approaches directly build on properties of the HVS, such as luminance adaptation [9], contrast sensitivity [10], or contrast masking [11], to create a model that enables the prediction of perceptual quality. In contrast, top-down methods try to incorporate the general characteristics of the HVS into a metric to devise effective algorithms. Probably the most famous top-down approach is the structural similarity index (SSIM) proposed by Wang et al. [12]. The main idea behind SSIM [12] is to distinguish between structural and non-structural image distortions, because the HVS is mainly sensitive to the former. Specifically, SSIM is computed at each coordinate within local windows of the distorted and the reference images, and the overall quality of the distorted image is the arithmetic mean of these local values. Later, advanced forms of SSIM were proposed. For example, edge-based structural similarity (ESSIM) [13] compares the edge information of the reference image block with that of the distorted one, claiming that edge information is the most important image structure information for the HVS. MS-SSIM [14] incorporates multi-scale information into SSIM, while 3-SSIM [15] is a weighted average of separate SSIMs for edges, textures, and smooth regions. Furthermore, saliency-weighted [16] and information content-weighted [17] SSIMs have also been introduced in the literature. The feature similarity index (FSIM) [18] relies on the fact that the HVS uses low-level features, such as edges and zero crossings, in the early stage of visual information processing to interpret images. Accordingly, FSIM utilizes two features: (1) phase congruency, a contrast-invariant, dimensionless measure of local structure, and (2) image gradient magnitude. The gradient magnitude similarity deviation (GMSD) [19] method exploits the sensitivity of image gradients to image distortions: it computes a pixel-wise gradient magnitude similarity and combines it with a standard-deviation pooling strategy to predict perceptual image quality. In contrast, the Haar wavelet-based perceptual similarity index (HaarPSI) [20] uses coefficients obtained from a Haar wavelet decomposition to compile an IQA metric. Specifically, the magnitudes of high-frequency coefficients are used to define local similarities, while the low-frequency ones are used to weight the importance of image regions. Quaternion image processing provides a truly vectorial approach to image quality assessment. Wang et al. [21] gave a quaternion description of the structural information of color images: the local variance of the luminance was taken as the real part of a quaternion, while the three RGB channels were taken as its imaginary parts. The perceptual quality was then characterized by the angle between the singular value feature vectors of the quaternion matrices derived from the distorted and the reference image. In contrast, Kolaman and Pecht [22] created a quaternion-based structural similarity index (QSSIM) to assess the quality of RGB images. A study on the effect of image features, such as contrast, blur, granularity, geometric distortion, noise, and color, on perceived image quality can be found in [23].
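To make the classical metrics above concrete, the following Python/NumPy sketch implements MSE, PSNR, a simplified SSIM, and GMSD for grayscale images. It is an illustration under stated assumptions, not a reference implementation of [12] or [19]: SSIM here uses uniform 8 × 8 windows instead of a Gaussian window, any preprocessing used in [19] (such as downsampling) is omitted, and the stability constants (0.01 and 0.03 of the peak value for SSIM, `c = 170` for GMSD on 8-bit data) are assumed values.

```python
import numpy as np

def mse(ref, dist):
    """Mean squared error between two equally sized images."""
    ref = np.asarray(ref, dtype=np.float64)
    dist = np.asarray(dist, dtype=np.float64)
    return float(np.mean((ref - dist) ** 2))

def psnr(ref, dist, peak=255.0):
    """Peak signal-to-noise ratio in dB (infinite for identical images)."""
    err = mse(ref, dist)
    if err == 0.0:
        return float("inf")
    return float(10.0 * np.log10(peak ** 2 / err))

def _box_mean(img, win):
    """Mean of every win x win window (valid positions only), computed with an integral image."""
    s = np.pad(img, ((1, 0), (1, 0))).cumsum(axis=0).cumsum(axis=1)
    total = s[win:, win:] - s[:-win, win:] - s[win:, :-win] + s[:-win, :-win]
    return total / (win * win)

def ssim(ref, dist, win=8, peak=255.0):
    """Simplified SSIM: local statistics in uniform windows, pooled by the arithmetic mean."""
    x = np.asarray(ref, dtype=np.float64)
    y = np.asarray(dist, dtype=np.float64)
    c1, c2 = (0.01 * peak) ** 2, (0.03 * peak) ** 2  # assumed stabilizing constants
    mu_x, mu_y = _box_mean(x, win), _box_mean(y, win)
    var_x = _box_mean(x * x, win) - mu_x ** 2
    var_y = _box_mean(y * y, win) - mu_y ** 2
    cov_xy = _box_mean(x * y, win) - mu_x * mu_y
    ssim_map = ((2 * mu_x * mu_y + c1) * (2 * cov_xy + c2)) / (
        (mu_x ** 2 + mu_y ** 2 + c1) * (var_x + var_y + c2))
    return float(ssim_map.mean())

def _prewitt_magnitude(img):
    """Gradient magnitude from 3x3 Prewitt derivatives (edge-replicated borders)."""
    p = np.pad(np.asarray(img, dtype=np.float64), 1, mode="edge")
    gx = (p[:-2, 2:] + p[1:-1, 2:] + p[2:, 2:] - p[:-2, :-2] - p[1:-1, :-2] - p[2:, :-2]) / 3.0
    gy = (p[2:, :-2] + p[2:, 1:-1] + p[2:, 2:] - p[:-2, :-2] - p[:-2, 1:-1] - p[:-2, 2:]) / 3.0
    return np.sqrt(gx ** 2 + gy ** 2)

def gmsd(ref, dist, c=170.0):
    """Gradient magnitude similarity deviation: std of the pixel-wise similarity map (0 = identical)."""
    m_r, m_d = _prewitt_magnitude(ref), _prewitt_magnitude(dist)
    gms_map = (2 * m_r * m_d + c) / (m_r ** 2 + m_d ** 2 + c)
    return float(gms_map.std())

if __name__ == "__main__":
    rng = np.random.default_rng(0)
    ref = rng.integers(0, 256, size=(64, 64)).astype(np.float64)
    dist = np.clip(ref + rng.normal(0.0, 10.0, size=ref.shape), 0, 255)
    print(mse(ref, dist), psnr(ref, dist), ssim(ref, dist), gmsd(ref, dist))
```

Note the opposite orientations: SSIM grows toward 1 for better quality, whereas GMSD is a deviation and falls toward 0.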

Following the success in image classification [4], deep learning has become extremely popular in the field of image processing. Liang et al. [24] first introduced a dual-path CNN with two input channels: one dedicated to the reference image and the other to the distorted image. The network had a single output that predicted the image quality score. First, the input distorted and reference images were decomposed into

-sized image patches, and the quality of each patch pair was predicted independently. Finally, the overall image quality was determined by averaging the scores of the patch pairs. Kim and Lee [25] introduced a similar dual-path CNN, but their model accepts as inputs a distorted image and an error map calculated from the reference and the distorted image. Furthermore, it generates a visual sensitivity map, which is multiplied by the error map to predict perceptual image quality. As in the previous algorithm, the inputs are decomposed into smaller image patches, and the overall image quality is determined by averaging the scores of the distorted patch/error map pairs.
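The patch-based protocol shared by [24] and [25] (decompose, score each pair independently, average) can be sketched as follows. This is a minimal illustration, not either authors' code: `patch_score` is a hypothetical toy stand-in for the trained dual-path CNN, and `size=32` is an arbitrary, hypothetical default rather than the patch size used in those works.

```python
import numpy as np

def extract_patches(img, size):
    """Split a 2-D image into non-overlapping size x size patches; the border remainder is dropped."""
    h, w = img.shape[:2]
    return [img[r:r + size, c:c + size]
            for r in range(0, h - size + 1, size)
            for c in range(0, w - size + 1, size)]

def patch_score(ref_patch, dist_patch):
    """Hypothetical stand-in for the trained dual-path CNN (higher = better quality)."""
    return -float(np.mean((ref_patch - dist_patch) ** 2))

def image_quality(ref, dist, size=32, score_fn=patch_score):
    """Score each reference/distorted patch pair independently, then average the patch scores."""
    ref_patches = extract_patches(np.asarray(ref, dtype=np.float64), size)
    dist_patches = extract_patches(np.asarray(dist, dtype=np.float64), size)
    scores = [score_fn(r, d) for r, d in zip(ref_patches, dist_patches)]
    return float(np.mean(scores))

if __name__ == "__main__":
    rng = np.random.default_rng(0)
    ref = rng.random((96, 128)) * 255.0
    print(image_quality(ref, ref + rng.normal(0.0, 5.0, ref.shape)))
```

Because the patch size is fixed by the network input, this design ties the model to a crop size; pixels in the border remainder are simply never scored in this sketch.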

Recently, generic features extracted from different pretrained CNNs, such as AlexNet [4] or GoogLeNet [2], have proven very powerful for a wide range of image processing tasks. Razavian et al. [6] applied feature vectors extracted from the OverFeat [26] network, which was trained for object classification on ImageNet ILSVRC 2013 [5], to carry out image classification, scene recognition, fine-grained recognition, attribute detection, and content-based image retrieval. The authors reported superior results compared to those of traditional algorithms. Later, Zhang et al. [27] pointed out that feature vectors extracted from pretrained CNNs outperform traditional image quality metrics. Motivated by these results, a number of FR-IQA algorithms relying on different deep features and pretrained CNNs have been proposed. Amirshahi et al. [28] compared different activation maps of the reference and the distorted image extracted from an AlexNet [4] CNN. Specifically, the similarity of the activation maps was measured to produce quality sub-scores, which were then aggregated into an overall quality value for the distorted image. In contrast, Bosse et al. [29] extracted deep features from reference and distorted image patches with the help of a VGG16 [30] network. Subsequently, the distorted and the reference deep feature vectors were fused and mapped onto patch-wise quality scores. Finally, the patch-wise scores were pooled, supplemented by a patch weight estimation procedure, to obtain the overall perceptual quality. In our previous work [31], we introduced a composition-preserving deep architecture for FR-IQA relying on a Siamese layout of pretrained CNNs, feature pooling, and a feedforward neural network.
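The activation-map comparison attributed to [28] (per-layer similarity sub-scores, then aggregation) can be illustrated with the sketch below. Several choices are assumptions, not details taken from [28]: random arrays of shape (channels, height, width) stand in for CNN activations, histogram intersection serves as one example of a histogram-based similarity, and the layer sub-scores are pooled with a geometric mean.

```python
import numpy as np

def histogram_intersection(a, b, bins=32):
    """Histogram intersection in [0, 1] between the value distributions of two activation maps."""
    lo, hi = min(a.min(), b.min()), max(a.max(), b.max())
    if hi == lo:  # both maps are constant with the same value
        return 1.0
    ha, _ = np.histogram(a, bins=bins, range=(lo, hi))
    hb, _ = np.histogram(b, bins=bins, range=(lo, hi))
    return float(np.minimum(ha / ha.sum(), hb / hb.sum()).sum())

def activation_map_quality(ref_maps, dist_maps, bins=32):
    """Per-layer sub-scores (mean channel similarity) pooled by a geometric mean."""
    sub_scores = []
    for ref_layer, dist_layer in zip(ref_maps, dist_maps):  # each: (channels, height, width)
        sims = [histogram_intersection(r, d, bins) for r, d in zip(ref_layer, dist_layer)]
        sub_scores.append(np.mean(sims))
    sub_scores = np.clip(np.asarray(sub_scores), 1e-12, None)  # guard against log(0)
    return float(np.exp(np.mean(np.log(sub_scores))))

if __name__ == "__main__":
    rng = np.random.default_rng(0)
    ref_maps = [rng.random((16, 32, 32)), rng.random((32, 16, 16))]  # stand-ins for two layers
    dist_maps = [m + rng.normal(0.0, 0.2, m.shape) for m in ref_maps]
    print(activation_map_quality(ref_maps, dist_maps))
```

The geometric mean makes a single badly matching layer pull the overall score down strongly, which is one reason such hand-picked pooling rules are called ad hoc.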

Another line of work focuses on creating combined metrics, in which existing FR-IQA algorithms are combined to achieve strong correlation with the subjective ground-truth scores. In [32], Okarma examined the properties of three FR-IQA metrics (MS-SSIM [14], VIF [33], and R-SVD [34]) and proposed a combined quality metric based on the product of powers of these metrics. Later, this approach was further developed using optimization techniques [35,36]. Similarly, Oszust [37] selected 16 FR-IQA metrics and used their scores as predictor variables in a lasso regression model to obtain a combined metric. Yuan et al. [38] took a similar approach, but utilized kernel ridge regression to fuse the scores of the IQA metrics. In contrast, Lukin et al. [39] fused the results of six metrics with the help of a neural network. Oszust [40] carried out a decision fusion based on 16 FR-IQA measures, using a genetic algorithm to minimize the root mean square error of prediction.
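Two of the fusion styles above can be sketched in a few lines of Python/NumPy. `product_of_powers` follows the product-of-powers form described for [32], with the exponents left as free, hypothetical parameters; `KernelRidgeFusion` is a minimal kernel ridge regressor with a Gaussian kernel in the spirit of [38]. The kernel choice, `gamma`, `lam`, and the synthetic data are all assumptions, not values from those works.

```python
import numpy as np

def product_of_powers(scores, exponents):
    """Combined score prod_i scores[i] ** exponents[i]; the exponents are free parameters to be tuned."""
    scores = np.asarray(scores, dtype=np.float64)
    return float(np.prod(scores ** np.asarray(exponents, dtype=np.float64)))

def rbf_kernel(a, b, gamma):
    """Gaussian (RBF) kernel matrix between the rows of a and the rows of b."""
    sq_dist = (a ** 2).sum(axis=1)[:, None] + (b ** 2).sum(axis=1)[None, :] - 2.0 * a @ b.T
    return np.exp(-gamma * np.maximum(sq_dist, 0.0))

class KernelRidgeFusion:
    """Maps a vector of metric scores (one row per image) to a single quality prediction."""

    def __init__(self, gamma=1.0, lam=1e-3):
        self.gamma, self.lam = gamma, lam

    def fit(self, X, y):
        self.X_train = np.asarray(X, dtype=np.float64)
        K = rbf_kernel(self.X_train, self.X_train, self.gamma)
        # Dual coefficients alpha = (K + lam * I)^(-1) y
        self.alpha = np.linalg.solve(K + self.lam * np.eye(len(K)), np.asarray(y, dtype=np.float64))
        return self

    def predict(self, X):
        K_test = rbf_kernel(np.asarray(X, dtype=np.float64), self.X_train, self.gamma)
        return K_test @ self.alpha

if __name__ == "__main__":
    rng = np.random.default_rng(0)
    X = rng.random((30, 3))  # synthetic scores of three hypothetical metrics
    y = X.sum(axis=1)        # synthetic "subjective" scores
    model = KernelRidgeFusion(gamma=1.0, lam=1e-3).fit(X, y)
    print(product_of_powers(X[0], [1.0, 0.5, 2.0]), model.predict(X[:3]))
```

The product form has only as many parameters as metrics, whereas the kernel model learns one coefficient per training image and can capture non-multiplicative interactions between the metrics.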

1.2. Contributions

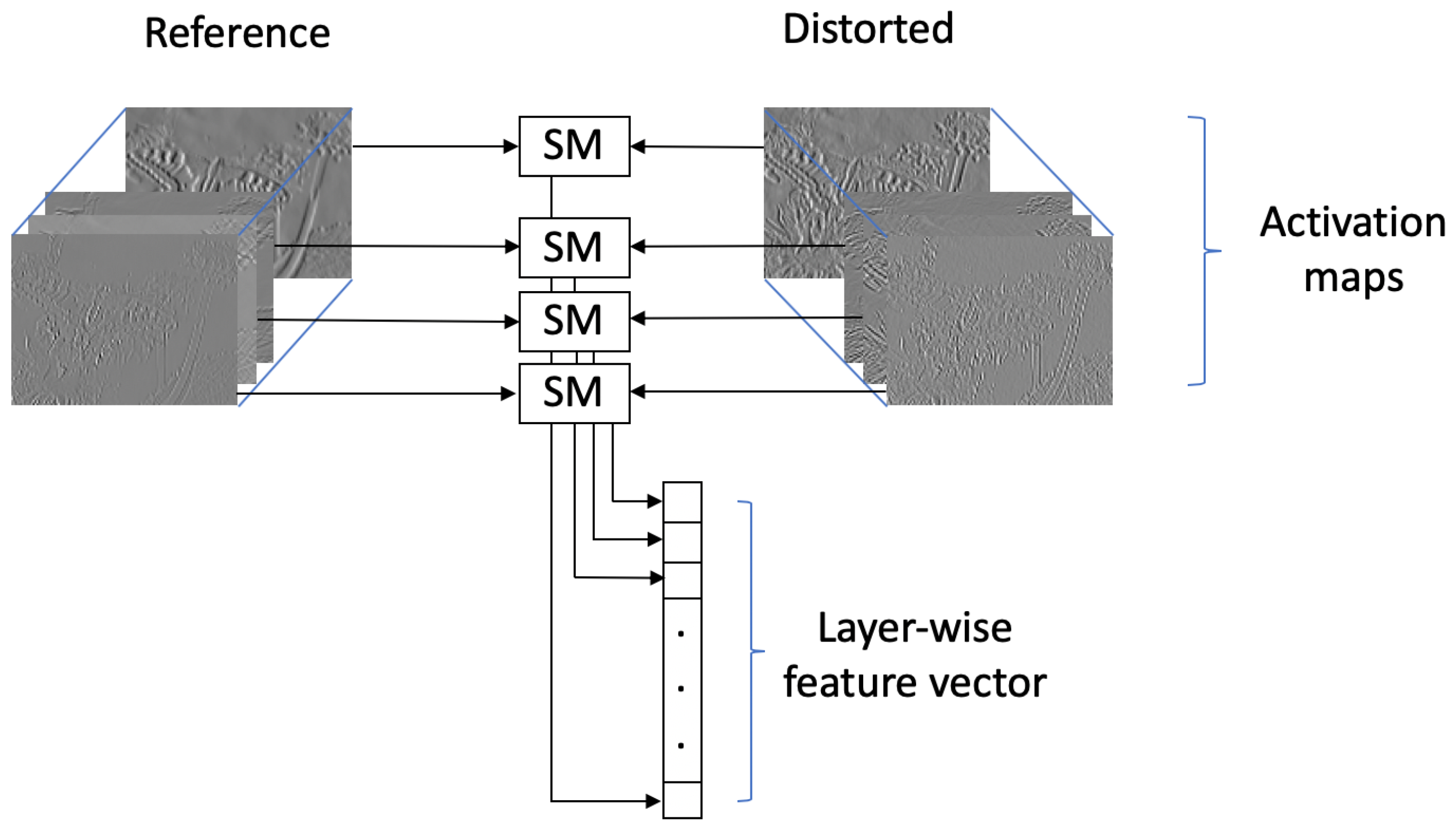

Motivated by recent convolutional activation map-based metrics [28,41], we make the following contributions in our study. Previous activation map-based approaches directly compared reference and distorted activation maps using histogram-based similarity metrics. Subsequently, the resulting sub-scores were pooled using ad hoc solutions, such as the geometric mean. In contrast, we take a machine learning approach. Specifically, we compile a feature vector for each distorted-reference image pair by comparing the distorted and reference activation maps with traditional image similarity metrics. These feature vectors are then mapped to perceptual quality scores using machine learning techniques. Unlike previous combined methods [35,36,39,40], we do not directly apply optimization or machine learning techniques to the outputs of traditional metrics; instead, traditional metrics are used to compare convolutional activation maps and to compile a feature vector.
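The pipeline described above can be sketched as follows, under several assumptions that are not the method's actual components: random arrays stand in for the activation maps of a pretrained CNN, MSE and a single-window (global) SSIM stand in for the traditional similarity metrics, and closed-form linear ridge regression stands in for the machine learning model. Because each layer contributes a fixed number of pooled similarity values, the feature vector length depends only on the number of layers and metrics, not on the spatial size of the maps.

```python
import numpy as np

def global_ssim(a, b, c1=1e-4, c2=9e-4):
    """SSIM from the global statistics of two maps (one window); c1, c2 assume values roughly in [0, 1]."""
    mu_a, mu_b = a.mean(), b.mean()
    cov = ((a - mu_a) * (b - mu_b)).mean()
    return float(((2 * mu_a * mu_b + c1) * (2 * cov + c2))
                 / ((mu_a ** 2 + mu_b ** 2 + c1) * (a.var() + b.var() + c2)))

def pair_features(ref_maps, dist_maps):
    """Fixed-length feature vector per image pair: [mean MSE, mean SSIM] over each layer's channels."""
    feats = []
    for ref_layer, dist_layer in zip(ref_maps, dist_maps):  # each: (channels, height, width)
        mses = [np.mean((r - d) ** 2) for r, d in zip(ref_layer, dist_layer)]
        ssims = [global_ssim(r, d) for r, d in zip(ref_layer, dist_layer)]
        feats += [np.mean(mses), np.mean(ssims)]
    return np.array(feats)

def fit_ridge(F, y, lam=1e-2):
    """Closed-form ridge regression with an appended bias column (the bias is penalized too, for brevity)."""
    A = np.hstack([F, np.ones((len(F), 1))])
    return np.linalg.solve(A.T @ A + lam * np.eye(A.shape[1]), A.T @ np.asarray(y, dtype=np.float64))

def predict(w, F):
    """Apply the fitted linear mapping to a batch of feature vectors."""
    return np.hstack([F, np.ones((len(F), 1))]) @ w

if __name__ == "__main__":
    rng = np.random.default_rng(0)
    ref_maps = [rng.random((8, 56, 56)), rng.random((16, 28, 28))]  # stand-ins for two CNN layers
    levels = np.linspace(0.0, 1.0, 20)  # synthetic distortion strengths used as targets
    F = np.array([pair_features(ref_maps, [m + s * rng.normal(size=m.shape) for m in ref_maps])
                  for s in levels])
    w = fit_ridge(F, levels)
    print(F.shape, predict(w, F[:3]))
```

Swapping the regressor or adding further similarity metrics only changes `fit_ridge` or the per-layer list in `pair_features`; the feature extraction itself never crops patches.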

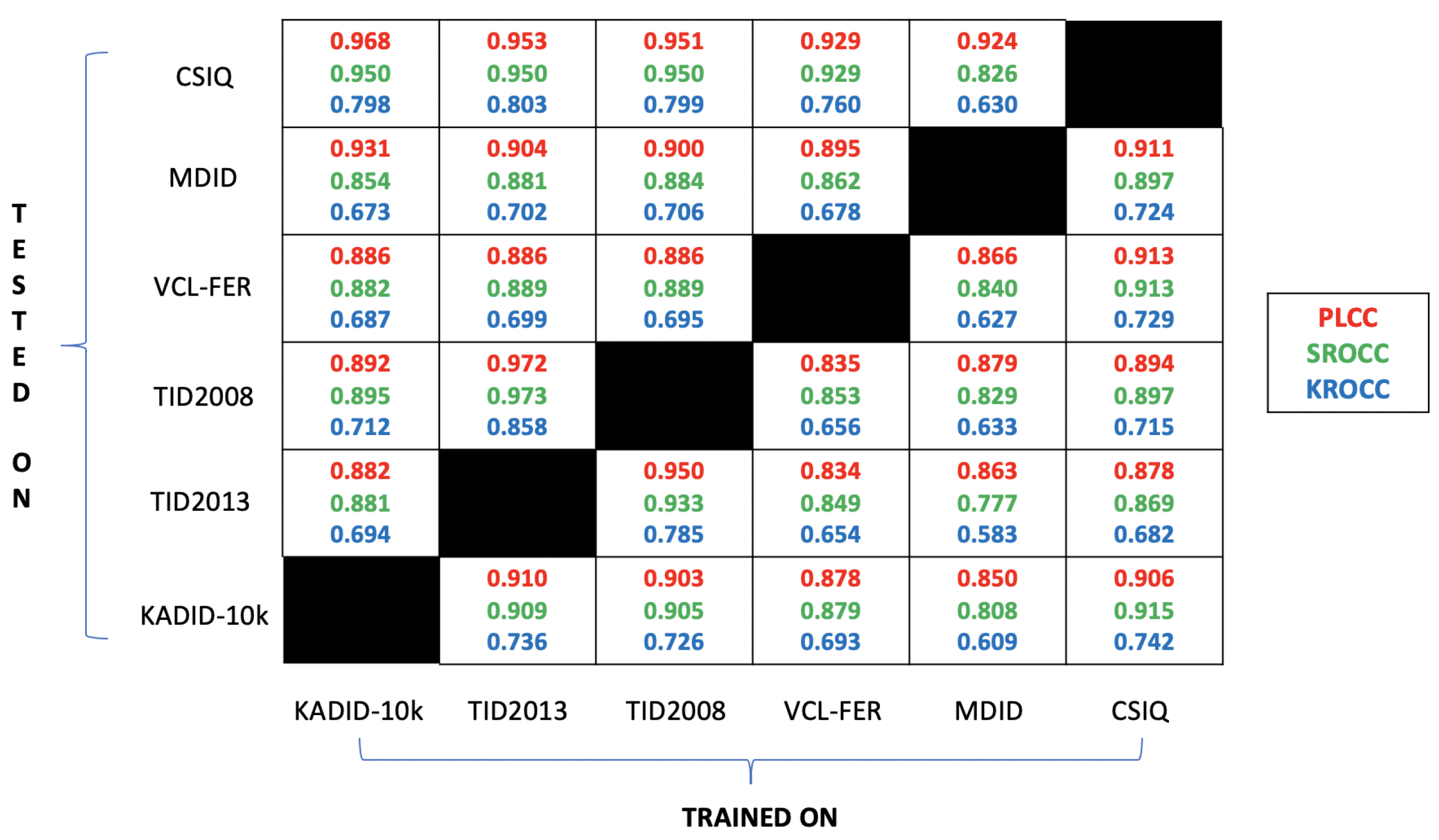

We demonstrate that our approach has several advantages. First, the proposed FR-IQA algorithm can be easily generalized to any input image resolution or base CNN architecture since, unlike several previous CNN-based approaches [24,25,29], it does not require cropping image patches from the input images. In this regard, it is similar to recently published NR-IQA algorithms, such as DeepFL-IQA [42] and BLINDER [43]. Second, the proposed feature extraction method is highly effective: the proposed method reaches state-of-the-art results even if only 5% of the KADID-10k [44] database is used for training. In contrast, the performance of state-of-the-art deep learning-based approaches strongly depends on the size of the training database [45]. Third, the proposed approach matches the performance of traditional FR-IQA metrics even in cross-database tests. Our method is compared against the state-of-the-art on six publicly available IQA benchmark databases, namely KADID-10k [44], TID2013 [46], VCL-FER [47], MDID [48], CSIQ [49], and TID2008 [46]. We show that our method significantly outperforms the state-of-the-art on these benchmark databases.