An Algorithmic Framework for Cocoa Ripeness Classification: A Comparative Analysis of Modern Deep Learning Architectures on Drone Imagery

Abstract

1. Introduction

1.1. The Challenge of the Chocolate Bean

1.2. The Promise of an Eye in the Sky

1.3. The Evolution of Machine Perception

1.4. Our Contribution

- A rigorous evaluation of architectural robustness, demonstrating that while modern architectures (ConvNeXt, Swin) thrive under aggressive augmentation pipelines, classic deep architectures (ResNet-101) suffer catastrophic performance collapse.

- A statistically validated comparison of three distinct deep learning paradigms evaluated on the specific challenges of the Cocoa Ghana dataset, supported by paired t-tests and confidence intervals.

- The definition of a reproducible, algorithmic training standard (Algorithm 1) that serves as a baseline for future agricultural deep learning research.

Algorithm 1. Unified Two-Stage Training and Evaluation Pipeline

2. Related Work

2.1. The Challenge of Cocoa Harvesting and the Rise of Automation

2.2. Deep Learning for Fruit Ripeness Classification

2.3. Drone-Based Applications and Architectural Considerations

3. Methodology

3.1. Dataset and Preparation

3.2. Model Selection and Preliminary Screening

- ResNet-50 and ResNet-101: Selected as the standard benchmarks for residual CNNs to test whether increased depth correlates with robustness in this domain.

- DenseNet-169: Selected to evaluate feature reuse via dense connections.

- EfficientNet-B4: Chosen over B0 or B7 as it offers a favorable trade-off between parameter count and input resolution scaling for imagery.

- MobileNetV3-Large: Selected to represent the upper bound of lightweight, edge-deployable performance compared to the smaller variants.

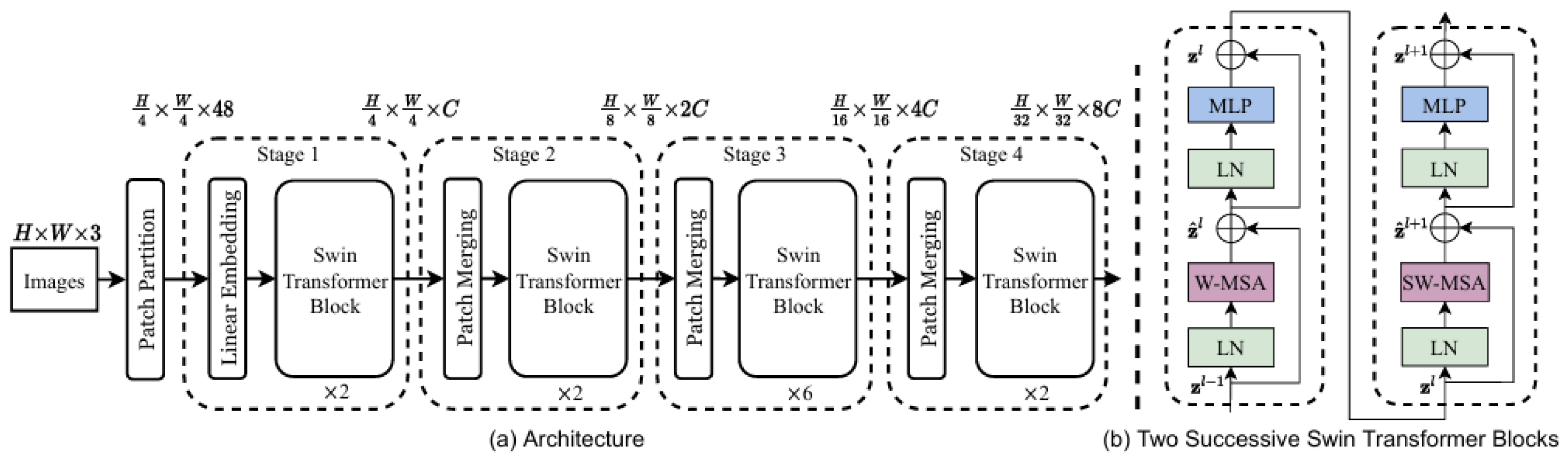

- Swin-Base and ConvNeXt-Base: Selected as state-of-the-art representatives of Vision Transformers and modern hybrid CNNs, respectively. The overall structure of the Swin Transformer, characterized by its hierarchical design and patch-merging capabilities, is depicted in Figure 2.

3.3. Experimental Design and Training Framework

- Image Resolution and Augmentation: Images were resized to pixels. This resolution was selected as a trade-off: it preserves fine-grained texture details of the cocoa pods better than standard inputs, without incurring the prohibitive computational cost of . The training set was processed using TrivialAugmentWide [19], an automated policy that randomly selects aggressive augmentations (e.g., rotation, color jitter, solarize) to maximize generalization.

- Test Time Augmentation (TTA): For evaluation, we employed a stochastic TTA strategy to further assess model robustness. Rather than relying on fixed crops, the model performed inference on five distinct, randomly augmented views of each test image. Unlike the training phase, TTA utilized a conservative augmentation pipeline consisting of random horizontal flips (), random rotations (), and subtle color jittering (brightness and contrast ). The final prediction was derived by averaging the softmax probabilities of these five stochastic views, reducing the impact of outliers and simulating varying viewing conditions.

- Loss Function: To address the class imbalance, a Weighted Cross-Entropy Loss function was employed. Class weights were computed using the inverse frequency formula $w_c = N / (C \cdot n_c)$, where $N$ is the total number of samples, $C$ is the number of classes (5), and $n_c$ is the number of samples in class $c$. We also applied label smoothing (factor 0.1) to reduce overfitting.

- Unified Two-Stage Fine-Tuning: All seven models were trained using the same two-stage strategy to ensure a fair comparison.


- Stage 1: Only the final classifier head was trained for 15 epochs with a high learning rate ( or ), allowing the new layers to adapt.


- Stage 2: The entire network was unfrozen and fine-tuned end-to-end for 50 epochs with a lower learning rate ( or ) and early stopping patience of 10.

- Optimization: The AdamW optimizer was used with a Cosine Annealing Scheduler to systematically adjust the learning rate.

- Hardware Environment: All experiments were conducted on a workstation equipped with an NVIDIA Quadro P5000 GPU (16 GB VRAM). The batch size was set to 12 to accommodate the resolution and gradient requirements. Total training time for the two-stage pipeline averaged approximately 4 h per model per seed.
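
The Test Time Augmentation step described in the list above (averaging softmax probabilities over five stochastic views) can be sketched in plain Python. This is a minimal illustration, not the authors' implementation: the function names `softmax` and `tta_predict` are hypothetical, and the logits are made-up values standing in for the outputs of a trained model on five augmented copies of one image.

```python
import math


def softmax(logits):
    """Numerically stable softmax over one list of class logits."""
    peak = max(logits)
    exps = [math.exp(x - peak) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def tta_predict(view_logits):
    """Average per-view softmax probabilities and pick the top class.

    view_logits: one list of logits per augmented view of the same image.
    Returns (mean_probabilities, predicted_class_index).
    """
    per_view = [softmax(logits) for logits in view_logits]
    num_views = len(per_view)
    num_classes = len(per_view[0])
    mean_probs = [
        sum(view[c] for view in per_view) / num_views
        for c in range(num_classes)
    ]
    predicted = max(range(num_classes), key=mean_probs.__getitem__)
    return mean_probs, predicted


# Hypothetical logits for five augmented views of one image (5 classes).
views = [
    [2.0, 0.5, 0.1, 0.3, 0.0],
    [1.5, 0.2, 0.4, 1.8, 0.1],
    [2.2, 0.1, 0.0, 0.6, 0.2],
    [0.9, 0.3, 0.2, 1.1, 0.0],
    [1.9, 0.4, 0.3, 0.5, 0.1],
]
mean_probs, predicted = tta_predict(views)
print("averaged probabilities:", [round(p, 3) for p in mean_probs])
print("predicted class index:", predicted)
```

In this toy input two of the five views individually favor class 3, but the averaged distribution still selects class 0, which illustrates how averaging dampens the effect of outlier views.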

3.4. Statistical Validation
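
As an illustration of the kind of paired t-test and confidence interval mentioned in the contributions, the following sketch compares two models trained on the same seeds. It is only a sketch under stated assumptions: the per-seed accuracies are hypothetical (the per-seed values and the number of seeds are not given here), the function name `paired_t_test` is invented for this example, and the critical value 2.776 is the standard two-sided 95% Student's t value for 4 degrees of freedom, which applies only when five paired runs are used.

```python
import math
import statistics


def paired_t_test(scores_a, scores_b, t_critical):
    """Paired t-test on matched runs (same seed for both models).

    Returns (t_statistic, degrees_of_freedom, confidence_interval), where
    the interval covers the mean difference scores_a - scores_b.
    """
    if len(scores_a) != len(scores_b):
        raise ValueError("paired samples must have the same length")
    diffs = [a - b for a, b in zip(scores_a, scores_b)]
    n = len(diffs)
    mean_diff = statistics.mean(diffs)
    std_err = statistics.stdev(diffs) / math.sqrt(n)  # sample std, n - 1
    t_stat = mean_diff / std_err
    interval = (mean_diff - t_critical * std_err,
                mean_diff + t_critical * std_err)
    return t_stat, n - 1, interval


# Hypothetical per-seed test accuracies (%), one entry per shared seed.
model_a = [79.1, 79.3, 79.2, 79.4, 79.0]
model_b = [76.2, 76.9, 76.5, 77.0, 76.1]

T_CRIT_95_DF4 = 2.776  # two-sided 95% critical value, 4 degrees of freedom

t_stat, dof, ci = paired_t_test(model_a, model_b, T_CRIT_95_DF4)
print(f"t = {t_stat:.2f} with {dof} degrees of freedom")
print(f"95% CI for the mean difference: ({ci[0]:.2f}, {ci[1]:.2f})")
```

If the absolute t statistic exceeds the critical value, or equivalently the interval excludes zero, the difference between the two models is significant at the 5% level for this toy data.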

3.5. Evaluation Protocol

- Accuracy: This metric measures the overall correctness of the model across all classes. It is calculated as the ratio of all correct predictions to the total number of predictions made.

- Precision: Precision quantifies the reliability of a positive prediction. For a given class, it answers the question “Of all the instances the model predicted to be this class, what fraction was actually correct?”

- Recall (Sensitivity): Recall measures the model’s ability to find all relevant instances of a class. It answers the question “Of all the actual instances of this class in the dataset, what fraction did the model correctly identify?”

- F1-Score: The F1-score is the harmonic mean of precision and recall, providing a single score that balances both metrics. It is particularly useful when the class distribution is imbalanced.

- Confusion Matrix: A confusion matrix is a C × C grid, where C is the number of classes. It provides a detailed breakdown of classification performance by showing the relationship between the true labels and the predicted labels. The diagonal elements represent the number of correctly classified instances for each class, while off-diagonal elements reveal the specific misclassifications, indicating which classes are most often confused with one another.

- Precision–Recall (PR) Curve: A PR curve is a two-dimensional plot that illustrates the trade-off between precision (y-axis) and recall (x-axis) for a given class across a range of decision thresholds. A curve that bows out toward the top-right corner indicates a model with both high precision and high recall. The Area Under the PR Curve (AUC-PR) serves as a single, aggregate measure of performance, with a higher value indicating a more skillful classifier.
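
The relationship between the confusion matrix and the scalar metrics listed above can be made concrete with a short sketch. The matrix below is a hypothetical 3-class example rather than data from this study, and the helper names are illustrative; rows are true labels and columns are predicted labels.

```python
def per_class_metrics(cm):
    """Return a (precision, recall, f1) tuple for every class.

    cm[i][j] is the number of samples with true class i predicted as j.
    """
    num_classes = len(cm)
    metrics = []
    for c in range(num_classes):
        tp = cm[c][c]
        fp = sum(cm[r][c] for r in range(num_classes)) - tp  # column minus TP
        fn = sum(cm[c]) - tp                                 # row minus TP
        precision = tp / (tp + fp) if tp + fp else 0.0
        recall = tp / (tp + fn) if tp + fn else 0.0
        denom = precision + recall
        f1 = 2 * precision * recall / denom if denom else 0.0
        metrics.append((precision, recall, f1))
    return metrics


def accuracy(cm):
    """Correct predictions (the diagonal) divided by all predictions."""
    total = sum(sum(row) for row in cm)
    return sum(cm[i][i] for i in range(len(cm))) / total


def macro_f1(cm):
    """Unweighted mean of the per-class F1 scores."""
    metrics = per_class_metrics(cm)
    return sum(f1 for _, _, f1 in metrics) / len(metrics)


# Hypothetical confusion matrix for three classes.
cm = [
    [8, 1, 1],
    [2, 6, 2],
    [0, 1, 9],
]
metrics = per_class_metrics(cm)
for idx, (p, r, f1) in enumerate(metrics):
    print(f"class {idx}: P={p:.2f} R={r:.2f} F1={f1:.2f}")
print(f"accuracy={accuracy(cm):.3f} macro-F1={macro_f1(cm):.3f}")
```

Because the macro F1-score weights every class equally regardless of its size, it is less dominated by the majority class than plain accuracy.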

3.6. Computational Analysis

4. Results

4.1. Statistical Performance Analysis

4.2. Visual Performance Analysis

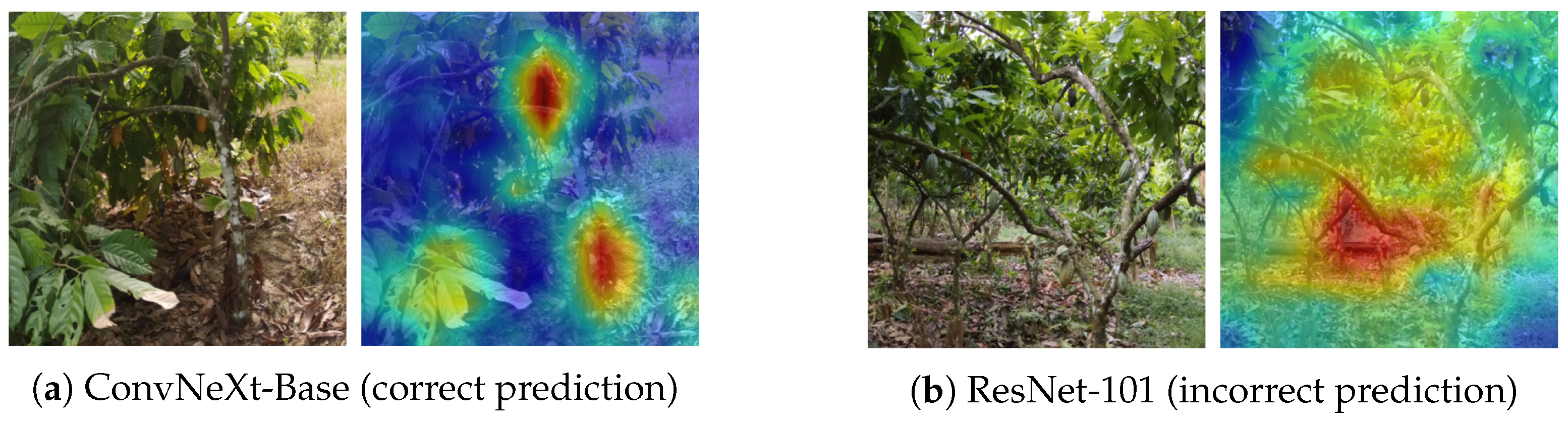

4.3. Model Interpretability

4.4. Ablation Study Discussion

5. Conclusions

5.1. Principal Findings

5.2. Limitations and Future Horizons

- Ablation Analysis: Our advanced pipeline integrated multiple techniques (weighted loss, TTA, aggressive augmentation). A granular ablation study to isolate the individual contribution of each component was not conducted due to computational constraints but remains a necessary step to optimize the training recipe further.

- Domain Shift and Geographic Generalization: A significant limitation of this study is the geographic specificity of the dataset. All evaluation imagery was acquired from a single region in Ghana. Consequently, the models have not been validated against the visual variations found in other major cocoa-producing regions, such as Côte d’Ivoire, Indonesia, or Ecuador. Factors such as differing soil coloration (background noise), varying solar angles, and regional differences in cocoa pod varieties could introduce domain shift that degrades model performance. Future work must prioritize cross-regional validation to ensure the algorithm’s robustness for global deployment.

- Task Granularity: This study focused on image-level classification. The immediate next step is to adapt the top-performing ConvNeXt backbone into an object detection framework (such as Faster R-CNN or YOLO) to provide farmers with precise pod counts and localization.

5.3. Implications for Precision Agriculture

Author Contributions

Funding

Data Availability Statement

Acknowledgments

Conflicts of Interest

Appendix A

| Phase | Model | Mature-Unripe P | Mature-Unripe R | Mature-Unripe F1 | Tree P | Tree R | Tree F1 | Immature P | Immature R | Immature F1 | Riped P | Riped R | Riped F1 | Spoilt P | Spoilt R | Spoilt F1 |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| Preliminary Screening | ResNet101 | 0.83 | 0.82 | 0.82 | 0.75 | 0.69 | 0.72 | 0.78 | 0.77 | 0.77 | 0.77 | 0.83 | 0.80 | 0.84 | 0.92 | 0.88 |
| Preliminary Screening | ResNet50 | 0.81 | 0.82 | 0.82 | 0.78 | 0.66 | 0.71 | 0.74 | 0.75 | 0.74 | 0.75 | 0.88 | 0.81 | 0.87 | 0.89 | 0.88 |
| Preliminary Screening | DenseNet169 | 0.85 | 0.78 | 0.81 | 0.69 | 0.72 | 0.71 | 0.77 | 0.70 | 0.74 | 0.71 | 0.85 | 0.78 | 0.87 | 0.89 | 0.88 |
| Preliminary Screening | ResNet152 | 0.79 | 0.83 | 0.81 | 0.76 | 0.62 | 0.68 | 0.73 | 0.76 | 0.74 | 0.76 | 0.81 | 0.78 | 0.84 | 0.88 | 0.86 |
| Preliminary Screening | EfficientNet B6 | 0.78 | 0.83 | 0.80 | 0.73 | 0.64 | 0.68 | 0.77 | 0.71 | 0.74 | 0.74 | 0.78 | 0.76 | 0.84 | 0.89 | 0.87 |
| Preliminary Screening | EfficientNet B3 | 0.79 | 0.80 | 0.79 | 0.71 | 0.66 | 0.69 | 0.78 | 0.72 | 0.75 | 0.73 | 0.78 | 0.76 | 0.83 | 0.92 | 0.87 |
| Preliminary Screening | ResNet34 | 0.76 | 0.83 | 0.79 | 0.76 | 0.62 | 0.68 | 0.77 | 0.74 | 0.75 | 0.71 | 0.76 | 0.73 | 0.85 | 0.89 | 0.87 |
| Preliminary Screening | MobileNet_v2 | 0.78 | 0.78 | 0.78 | 0.71 | 0.63 | 0.67 | 0.74 | 0.75 | 0.74 | 0.68 | 0.82 | 0.74 | 0.86 | 0.83 | 0.85 |
| Preliminary Screening | DenseNet121 | 0.75 | 0.81 | 0.78 | 0.72 | 0.60 | 0.65 | 0.73 | 0.71 | 0.72 | 0.71 | 0.80 | 0.75 | 0.87 | 0.87 | 0.87 |
| Preliminary Screening | ResNet18 | 0.74 | 0.76 | 0.75 | 0.72 | 0.62 | 0.67 | 0.74 | 0.73 | 0.74 | 0.72 | 0.83 | 0.77 | 0.84 | 0.86 | 0.85 |
| Preliminary Screening | EfficientNet B5 | 0.78 | 0.81 | 0.79 | 0.75 | 0.56 | 0.64 | 0.67 | 0.70 | 0.69 | 0.68 | 0.74 | 0.71 | 0.78 | 0.88 | 0.83 |
| Preliminary Screening | SqueezeNet1_0 | 0.76 | 0.80 | 0.78 | 0.65 | 0.68 | 0.67 | 0.74 | 0.73 | 0.74 | 0.72 | 0.53 | 0.61 | 0.76 | 0.85 | 0.80 |
| Preliminary Screening | AlexNet | 0.47 | 0.71 | 0.57 | 0.52 | 0.32 | 0.40 | 0.53 | 0.56 | 0.54 | 0.44 | 0.20 | 0.28 | 0.67 | 0.68 | 0.67 |
| Preliminary Screening | VGG19 | 0.44 | 0.72 | 0.55 | 0.25 | 0.11 | 0.15 | 0.00 | 0.00 | 0.00 | 0.17 | 0.28 | 0.21 | 0.42 | 0.49 | 0.45 |
| Preliminary Screening | VGG16 | 0.39 | 0.84 | 0.53 | 0.00 | 0.00 | 0.00 | 0.09 | 0.01 | 0.02 | 0.24 | 0.05 | 0.08 | 0.27 | 0.54 | 0.36 |
| Main Study (Adv.) | ConvNeXt-Base | 0.84 | 0.79 | 0.81 | 0.73 | 0.67 | 0.70 | 0.73 | 0.81 | 0.77 | 0.77 | 0.85 | 0.81 | 0.88 | 0.90 | 0.89 |
| Main Study (Adv.) | Swin-Base | 0.83 | 0.83 | 0.83 | 0.74 | 0.70 | 0.72 | 0.78 | 0.73 | 0.75 | 0.77 | 0.83 | 0.80 | 0.87 | 0.95 | 0.91 |
| Main Study (Adv.) | DenseNet169 | 0.84 | 0.73 | 0.78 | 0.70 | 0.71 | 0.70 | 0.76 | 0.74 | 0.75 | 0.69 | 0.82 | 0.75 | 0.82 | 0.89 | 0.85 |
| Main Study (Adv.) | EfficientNet-B4 | 0.81 | 0.78 | 0.79 | 0.71 | 0.66 | 0.68 | 0.73 | 0.76 | 0.74 | 0.73 | 0.72 | 0.72 | 0.78 | 0.92 | 0.84 |
| Main Study (Adv.) | MobileNetV3-L | 0.79 | 0.83 | 0.81 | 0.76 | 0.49 | 0.60 | 0.68 | 0.78 | 0.73 | 0.67 | 0.84 | 0.75 | 0.85 | 0.85 | 0.85 |
| Main Study (Adv.) | ResNet50 | 0.78 | 0.64 | 0.70 | 0.60 | 0.46 | 0.52 | 0.59 | 0.75 | 0.66 | 0.56 | 0.60 | 0.58 | 0.67 | 0.89 | 0.77 |
| Main Study (Adv.) | ResNet101 | 0.77 | 0.45 | 0.57 | 0.53 | 0.36 | 0.43 | 0.55 | 0.63 | 0.59 | 0.40 | 0.62 | 0.49 | 0.49 | 0.83 | 0.62 |

References

1. Food and Agriculture Organization (FAO). Cocoa’s Contribution to Ghana’s Economy; Food and Agriculture Organization (FAO): Rome, Italy, 2018.
2. Amoa-Awua, W.K.; Madsen, M.; Olaiya, A.; Ban-Kofi, L.; Jakobsen, M. Quality Manual for Production and Primary Processing of Cocoa; Cocoa Research Institute of Ghana (CRIG): Accra, Ghana, 2007.
3. Abbas, A.; Zhang, Z.; Zheng, H.; Alami, M.M.; Alrefaei, A.F.; Abbas, Q.; Naqvi, S.A.H. Drones in Plant Disease Assessment, Efficient Monitoring, and Detection: A Way Forward to Smart Agriculture. Agronomy 2023, 13, 1524.
4. Liu, Z.; Lin, Y.; Cao, Y.; Hu, H.; Wei, Y.; Zhang, Z.; Lin, S.; Guo, B. Swin transformer: Hierarchical vision transformer using shifted windows. In Proceedings of the IEEE/CVF International Conference on Computer Vision, Montreal, QC, Canada, 11–17 October 2021; pp. 10012–10022.
5. Liu, Z.; Mao, H.; Wu, C.; Feichtenhofer, C.; Darrell, T.; Xie, S. A convnet for the 2020s. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, New Orleans, LA, USA, 18–24 June 2022; pp. 11976–11986.
6. Saranya, N.; Srinivasan, K.; Kumar, S.P. Banana ripeness stage identification: A deep learning approach. J. Ambient Intell. Humanized Comput. 2022, 13, 4033–4039.
7. Chen, L.; Li, S.; Bai, Q.; Yang, J.; Jiang, S.; Miao, Y. Review of Image Classification Algorithms Based on convolutional neural networks. Remote Sens. 2021, 13, 4712.
8. Rizzo, M.; Marcuzzo, M.; Zangari, A.; Gasparetto, A.; Albarelli, A. Fruit Ripeness Classification: A Survey. Artif. Intell. Agric. 2023, 6, 144–163.
9. Moya, V.; Quito, A.; Pilco, A.; Vásconez, J.P.; Vargas, C. Crop Detection and Maturity Classification Using a YOLOv5-Based Image Analysis. Emerg. Sci. J. 2024, 8, 496–512.
10. Ayikpa, K.J.; Mamadou, D.; Gouton, P.; Adou, K.J. Classification of Cocoa Pod Maturity Using Similarity Tools. Data 2023, 8, 99.
11. Ayikpa, K.J.; Ballo, A.B.; Mamadou, D.; Gouton, P. Optimization of Cocoa Pods Maturity Classification Using Stacking. J. Imaging 2024, 10, 327.
12. Koirala, A.; Walsh, K.B.; Wang, Z.; McCarthy, C. Deep learning for real-time fruit detection and orchard fruit load estimation: MangoYOLO. Precis. Agric. 2019, 20, 1107–1135.
13. Ekawaty, Y.; Indrabayu; Areni, I.S. Automatic Cacao Pod Detection Under Outdoor Condition Using Computer Vision. In Proceedings of the 2019 4th International Conference on Information Technology, Information Systems and Electrical Engineering (ICITISEE), Yogyakarta, Indonesia, 20–21 November 2019; pp. 31–34.
14. Suyuti, J.; Indrabayu; Zainuddin, Z.; Basri, B. Detection and Counting of the Number of Cocoa Fruits on Trees Using UAV. In Proceedings of the 2023 IEEE International Conference on Industry 4.0, Artificial Intelligence, and Communications Technology (IAICT), Bali, Indonesia, 13–15 July 2023; pp. 257–262.
15. Ferraris, S.; Meo, R.; Pinardi, S.; Salis, M.; Sartor, G. Machine Learning as a Strategic Tool for Helping Cocoa Farmers in Côte D’Ivoire. Sensors 2023, 23, 7632.
16. Rajeena P. P., F.; S. U., A.; Moustafa, M.A.; Ali, M.A.S. Detecting Plant Disease in Corn Leaf Using Efficientnet architecture—An analytical approach. Electronics 2023, 12, 1938.
17. Halstead, M.; McCool, C.; Denman, S.; Perez, T.; Fookes, C. Fruit Quantity and Ripeness Estimation Using a Robotic Vision System. IEEE Robot. Autom. Lett. 2018, 3, 2995–3002.
18. KaraAgro AI Foundation. Drone-Based Agricultural Dataset for Crop Yield Estimation; Hugging Face: New York, NY, USA, 2023.
19. Müller, S.G.; Hutter, F. TrivialAugment: Tuning-free Yet State-of-the-Art Data Augmentation. arXiv 2021, arXiv:2103.10158.

| Area/Study | Method/Model Used | Key Findings/Remarks |

|---|---|---|

| Crop Ripeness (Survey) | Various CNN approaches vs. traditional vision | CNNs outperform classical methods and are robust under diverse conditions [8]. |

| Cocoa Pod Ripeness | MobileNet, texture features, ensemble learning | Achieved up to 99.6% accuracy with texture features; ensembles reached ~98.7% accuracy, mainly on ground-level images [10,11]. |

| Banana Ripeness | Custom lightweight CNN; transfer learning with VGG16/ResNet-50 | Approximately 95% accuracy; specialized lightweight models are effective and efficient [6]. |

| Mango Ripeness | YOLO-based detector (“MangoYOLO”) | Achieved an F1-score of 0.89; effectively distinguishes ripe from unripe fruit [12]. |

| Sweet Pepper Ripeness | Two-stage CNN (Faster R-CNN + classification branch) | Multi-branch approach outperforms single-stage detectors (F1: 77.3% vs. 72.5%) [17]. |

| Drone-Based Cocoa Imaging | K-means clustering; YOLO/Faster R-CNN variants | Traditional methods work well at close range; CNN-based detectors provide accurate counts and scalability [13,14]. |

| CNN Architecture Comparison | VGG, ResNet, DenseNet, MobileNet, EfficientNet, YOLO | Trade-offs: VGG is heavy; ResNet is deep but resource-intensive; DenseNet is efficient; MobileNet is lightweight; EfficientNet offers excellent accuracy-to-size; YOLO enables real-time detection [12,15,16]. |

| Class Name | Train | Validation | Test |

|---|---|---|---|

| cocoa-pod-mature-unripe | 857 | 175 | 187 |

| cocoa-tree | 578 | 121 | 137 |

| cocoa-pod-immature | 531 | 118 | 115 |

| cocoa-pod-riped | 409 | 80 | 88 |

| cocoa-pod-spoilt | 473 | 116 | 84 |

| Total | 2848 | 610 | 611 |
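
To make the class imbalance in the training split concrete, the sketch below turns the Train column of the table above into inverse-frequency class weights of the kind described for the weighted cross-entropy loss. It assumes the common form w_c = N / (C * n_c), with N the total number of training samples, C the number of classes, and n_c the count for class c; the function name is hypothetical and the resulting values are illustrative rather than the exact weights used in the study.

```python
# Training-split counts copied from the Train column of the table above.
TRAIN_COUNTS = {
    "cocoa-pod-mature-unripe": 857,
    "cocoa-tree": 578,
    "cocoa-pod-immature": 531,
    "cocoa-pod-riped": 409,
    "cocoa-pod-spoilt": 473,
}


def inverse_frequency_weights(counts):
    """Return w_c = N / (C * n_c) for every class c (assumed formula)."""
    total = sum(counts.values())   # N: all training samples
    num_classes = len(counts)      # C: number of classes
    return {
        name: total / (num_classes * n_c)
        for name, n_c in counts.items()
    }


weights = inverse_frequency_weights(TRAIN_COUNTS)
for name, weight in weights.items():
    print(f"{name}: {weight:.3f}")
```

Under this formula a perfectly balanced dataset would give every class a weight of 1, so the rarest class here (cocoa-pod-riped) receives the largest weight and the most frequent class (cocoa-pod-mature-unripe) the smallest.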

| Model | Architectural Family | Parameters (M) | GFLOPs | Inference Time (ms/image) |

|---|---|---|---|---|

| MobileNetV3-Large | Classic CNN (Lightweight) | 5.5 | 1.3 | 5 |

| EfficientNet-B4 | Classic CNN (Efficient) | 19.3 | 9.0 | 13 |

| DenseNet-169 | Classic CNN (Dense) | 14.1 | 19.9 | 17 |

| ResNet-50 | Classic CNN (Residual) | 25.6 | 24.1 | 9 |

| ResNet-101 | Classic CNN (Residual) | 44.5 | 45.9 | 16 |

| Swin-Base | Vision Transformer | 87.9 | 94.2 | 37 |

| ConvNeXt-Base | Modern CNN | 88.6 | 90.3 | 34 |

| Architectural Family | Model | Accuracy (%) | Macro F1-Score (%) | Test Loss |

|---|---|---|---|---|

| Modern CNN | ConvNeXt-Base | 79.21 ± 0.13 | 79.55 ± 0.11 | 0.6207 ± 0.0163 |

| Vision Transformer | Swin-Base | 79.27 ± 0.56 | 79.75 ± 0.63 | 0.6048 ± 0.0166 |

| Classic CNN (Dense) | DenseNet-169 | 76.54 ± 0.41 | 76.96 ± 0.38 | 0.7465 ± 0.0260 |

| Classic CNN (Lightweight) | MobileNetV3-Large | 76.00 ± 0.41 | 76.14 ± 0.59 | 0.6915 ± 0.0273 |

| Classic CNN (Efficient) | EfficientNet-B4 | 75.29 ± 0.71 | 75.39 ± 0.98 | 0.7242 ± 0.0177 |

| Classic CNN (Residual) | ResNet-50 | 64.32 ± 0.94 | 63.43 ± 1.24 | 0.9520 ± 0.0274 |

| Classic CNN (Residual) | ResNet-101 | 55.86 ± 4.01 | 54.70 ± 4.46 | 1.1282 ± 0.0946 |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.


Momenpour, T.; AbuMallouh, A. An Algorithmic Framework for Cocoa Ripeness Classification: A Comparative Analysis of Modern Deep Learning Architectures on Drone Imagery. Algorithms 2026, 19, 55. https://doi.org/10.3390/a19010055
