1. Introduction

AI (artificial intelligence), a key element of the Fourth Industrial Revolution, has begun to affect the general media and journalism significantly under the name of “robot journalism” [1]. Robot journalism has been known to the general public since 2010 as the concept of generating news articles automatically with AI-based algorithms, without the involvement of human journalists [2]. As AI automatically extracts information from various data sets and renders it as text through natural language processing (NLP), the traditional news industry has been transformed [3]. In terms of promptness and accuracy, which are regarded as critical in news articles, robot journalists now surpass human journalists.

Forbes, a U.S. business magazine, was the first news outlet to introduce AI-generated articles, in 2012. In 2013, the Associated Press introduced Wordsmith and promptly reported large amounts of data on the business performance of enterprises. The LA Times reported on earthquakes in real time by using “Quakebot”. In 2016, the Washington Post began using “Heliograf” at the Rio Olympic Games to report sports results promptly and simply. In Korea, AI-generated news began to be used in 2015. In January 2016, the Financial News introduced IamFNBOT, an AI robot journalist. The Herald Business introduced HeRo, an AI robot journalist for English articles. eToday and Electronic Times used e2BOT and @NEWS, respectively, in their news production. While these robot journalists handled routine news reports on corporate information and stocks, such as current stock conditions and fluctuations and corporate performance, Yonhap News used SoccerBot to collect and analyze data from every English Premier League soccer game and provide news reports.

At present, robot journalism based on AI-generated news articles is expected to become established across the general media market [4]. Accordingly, media agencies are now introducing AI-based news generation systems as an efficient means of media management [5]. As demand for AI-generated news among news consumers increases, the introduction and commercialization of AI-generated articles are regarded no longer as a matter of choice but as an inevitability.

In its initial stage, research on AI-generated articles or robot journalism in the media industry focused on technology development, and specifically on whether AI-generated articles could replace, and be as significant as, human-written articles. In this process, one of the main subjects of research was the dynamic conflict between robot journalists and human journalists [6]. Since AI-generated articles began to be commercialized, the scope of research has expanded to cover feedback from news consumers [2], the ethics of algorithms, and legal issues such as article copyright [7,8].

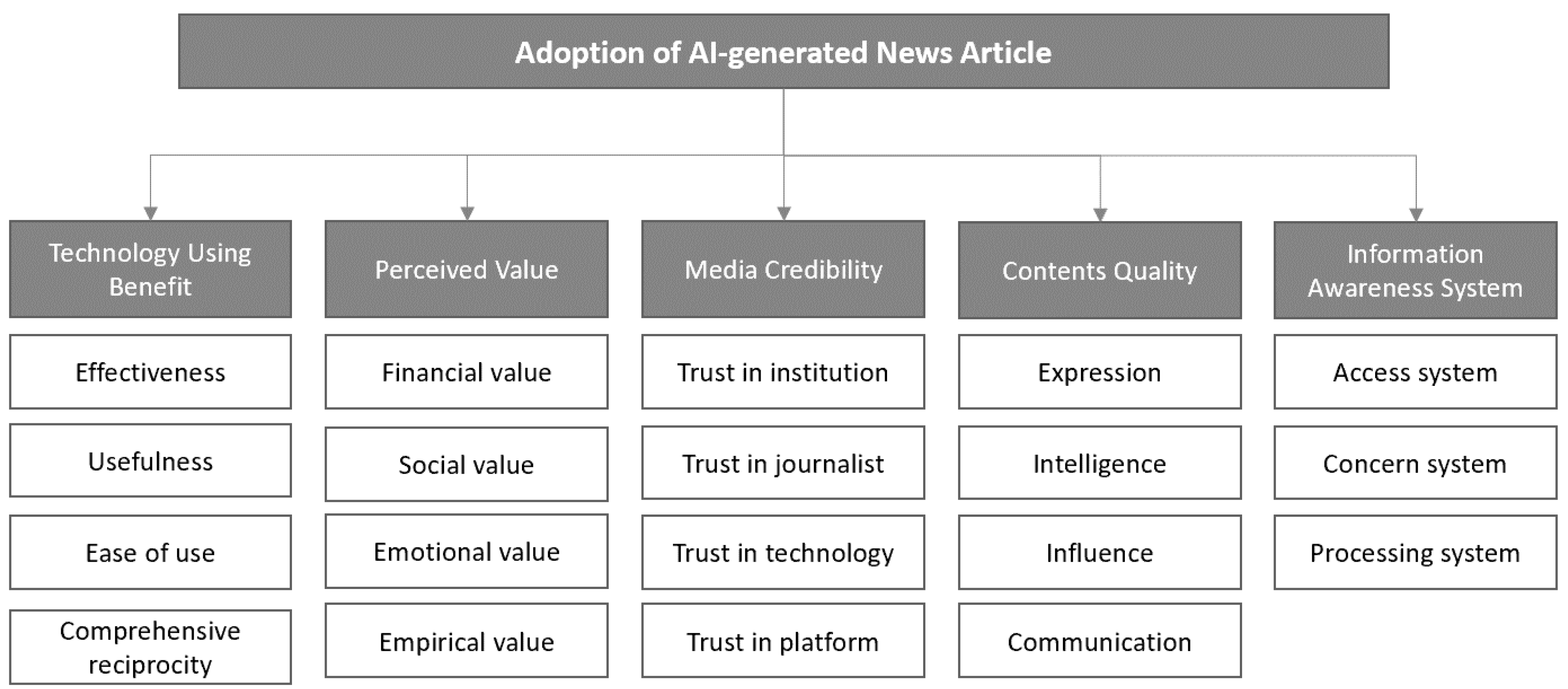

However, while AI-generated articles are being commercialized, there has been little research on the market’s perception of, or response to, AI, or on receptive attitudes toward news consumption and consumption values from the market’s perspective. Moreover, in a news media environment shaped by advanced technology and innovative businesses, news outlets must decide how to create AI-generated news articles and use them to communicate with the public under uncertainty and information asymmetry. Accordingly, this study identifies the determinants of the intention to accept AI-generated news articles and, by analyzing which of these factors affect acceptance attitudes most significantly, presents a model of consumers’ decisions to accept AI-generated news. To this end, the analytic hierarchy process (AHP), a multi-criteria decision-making method, was applied to a survey of an expert group in the media industry in order to present a model consisting of objective acceptance factors and their strategic significance for the popularization of AI-generated news.
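For readers unfamiliar with AHP, its core computation can be sketched briefly. Experts compare factors pairwise on Saaty's 1 to 9 scale, the comparisons form a reciprocal matrix, and a priority (weight) vector is derived from that matrix together with a consistency ratio (CR) that flags incoherent judgments. This sketch assumes the row geometric-mean approximation of the priority vector and Saaty's standard random index values; the study may instead have used the principal-eigenvector method or dedicated AHP software, and the example matrix is hypothetical, not taken from the survey.

```python
import math

# Saaty's random consistency index (RI) by matrix size n
RANDOM_INDEX = {1: 0.0, 2: 0.0, 3: 0.58, 4: 0.90, 5: 1.12,
                6: 1.24, 7: 1.32, 8: 1.41, 9: 1.45, 10: 1.49}


def ahp_priorities(matrix):
    """Approximate the AHP priority vector by normalized row geometric means."""
    n = len(matrix)
    geo_means = [math.prod(row) ** (1.0 / n) for row in matrix]
    total = sum(geo_means)
    return [g / total for g in geo_means]


def consistency_ratio(matrix, weights):
    """Estimate lambda_max from the product A*w and return CR = CI / RI."""
    n = len(matrix)
    if n < 3:
        return 0.0  # 1x1 and 2x2 reciprocal matrices are always consistent
    aw = [sum(a * w for a, w in zip(row, weights)) for row in matrix]
    lambda_max = sum(x / w for x, w in zip(aw, weights)) / n
    ci = (lambda_max - n) / (n - 1)
    return ci / RANDOM_INDEX[n]


# Hypothetical judgments for three criteria A, B, C (not the study's data):
# A is 2x as important as B and 4x as important as C; B is 2x as important as C.
example = [
    [1.0, 2.0, 4.0],
    [1 / 2, 1.0, 2.0],
    [1 / 4, 1 / 2, 1.0],
]
weights = ahp_priorities(example)
cr = consistency_ratio(example, weights)
print([round(w, 3) for w in weights], cr < 0.1)
```

Because the example matrix is perfectly consistent, the weights come out to about 0.571, 0.286, and 0.143 and the CR is effectively zero; in practice, a CR below 0.1 is the conventional threshold for accepting a respondent's judgments.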

4. Results

4.1. Comparison of Evaluation Variables

As shown in Table 4, media reliability (0.373) was the most significant of the five key factors, followed by content level (0.197), benefits of technology utilization (0.184), perceived values (0.136), and information perception (0.110). In other words, for media articles, including AI-generated ones, the basic reliability and content level of the articles affected the acceptance of AI-generated articles more than technical factors did.
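The level-1 weights in Table 4 form a normalized AHP priority vector, so a quick sanity check is that they sum to 1 up to rounding and that sorting them reproduces the ranking reported above. A minimal sketch using only the values quoted in the text:

```python
# Level-1 (criterion) weights quoted from Table 4
criteria = {
    "media reliability": 0.373,
    "content level": 0.197,
    "benefits of technology utilization": 0.184,
    "perceived values": 0.136,
    "information perception": 0.110,
}

# An AHP priority vector is normalized, so the weights should sum to 1 up to rounding
total = sum(criteria.values())
assert abs(total - 1.0) < 0.005, f"weights sum to {total:.3f}"

# Rank criteria from most to least important
ranking = sorted(criteria, key=criteria.get, reverse=True)
for rank, name in enumerate(ranking, start=1):
    print(f"{rank}. {name} ({criteria[name]:.3f})")
```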

Within each area, among the benefits of technology utilization factors, usefulness (0.364) and effectiveness (0.327) affected the acceptance decision most significantly. Among the perceived value factors, financial value (0.551) was of greatest importance, followed by empirical value (0.195) and social value (0.157); the factor with the least effect was emotional value (0.098). Among media reliability factors, trust in institution (0.385) was the most important factor affecting the acceptance decision, followed by trust in technology (0.286), trust in platform (0.205), and trust in journalist (0.123). For content level, communication (0.333) was viewed as the most important factor, followed by intelligence (0.245), influence (0.233), and expression (0.190). Finally, among the information perception factors, the access system (0.424) was the most important, followed by the processing system (0.356) and the concern system (0.219).

Across all 19 specific factors, those affecting acceptance of AI-generated news articles most significantly were trust in institution (0.144), trust in technology (0.107), and trust in platform (0.077), all of which are related to reliability. These were followed by financial value (0.075), usefulness (0.067), communication (0.065), effectiveness (0.060), intelligence (0.048), and access system (0.046).
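The global weights in the 19-factor list follow from the hierarchy: in standard AHP, a factor's global weight is its local weight within its area multiplied by the weight of that area. This sketch recomputes the top nine global weights from the local and criterion weights quoted in Section 4.1. It assumes that the 0.424 weight listed under information perception belongs to the access system factor named in the global list. Because the inputs are themselves rounded to three decimals, the recomputed values can differ from the reported ones by up to about 0.001 (for example, 0.205 × 0.373 ≈ 0.076 versus the reported 0.077).

```python
# Criterion (level-1) weights from Table 4
criterion_weight = {
    "media reliability": 0.373,
    "content level": 0.197,
    "benefits of technology utilization": 0.184,
    "perceived values": 0.136,
    "information perception": 0.110,
}

# Local (within-area) weights quoted in Section 4.1, keyed by (area, factor).
# Assumption: the 0.424 information-perception weight is the "access system" factor.
local_weight = {
    ("media reliability", "trust in institution"): 0.385,
    ("media reliability", "trust in technology"): 0.286,
    ("media reliability", "trust in platform"): 0.205,
    ("perceived values", "financial value"): 0.551,
    ("benefits of technology utilization", "usefulness"): 0.364,
    ("content level", "communication"): 0.333,
    ("benefits of technology utilization", "effectiveness"): 0.327,
    ("content level", "intelligence"): 0.245,
    ("information perception", "access system"): 0.424,
}


def global_weights(criterion_weight, local_weight):
    """Global weight = local weight within an area x weight of that area."""
    return {
        factor: criterion_weight[area] * w
        for (area, factor), w in local_weight.items()
    }


gw = global_weights(criterion_weight, local_weight)
for factor, w in sorted(gw.items(), key=lambda kv: kv[1], reverse=True):
    print(f"{factor:<22} {w:.3f}")
```

Sorting the recomputed global weights reproduces the reported order of the top nine factors, which supports reading the 19-factor list as the product of the two hierarchy levels.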

4.2. Comparison of Evaluation Areas between Journalist and Media Engineering Groups

In the comparative analysis of evaluation areas between journalists and media engineers, both groups viewed media reliability as the most important factor. Next, the journalist group selected content level (0.250) and benefits of technology utilization (0.130), whereas the media engineering group selected benefits of technology utilization (0.247) and perceived values (0.205) as important factors. This result shows that the two groups differ in the factors they consider critical to the acceptance of AI-generated news articles (see Table 5).
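Group-level weights such as those compared in Table 5 require combining individual experts' judgments. The text does not state how this was done; a common AHP choice, assumed here, is aggregation of individual judgments (AIJ) by the element-wise geometric mean, which keeps the combined matrix reciprocal. The two-expert matrices are hypothetical.

```python
import math


def aggregate_judgments(matrices):
    """Element-wise geometric mean of several experts' pairwise comparison matrices (AIJ)."""
    k = len(matrices)
    n = len(matrices[0])
    return [
        [math.prod(m[i][j] for m in matrices) ** (1.0 / k) for j in range(n)]
        for i in range(n)
    ]


def ahp_priorities(matrix):
    """Normalized row geometric means (approximate AHP priority vector)."""
    n = len(matrix)
    geo_means = [math.prod(row) ** (1.0 / n) for row in matrix]
    total = sum(geo_means)
    return [g / total for g in geo_means]


# Hypothetical experts comparing two criteria, e.g. media reliability vs. content level
expert_a = [[1.0, 3.0], [1 / 3, 1.0]]  # reliability judged 3x as important
expert_b = [[1.0, 1 / 3], [3.0, 1.0]]  # content level judged 3x as important
group = aggregate_judgments([expert_a, expert_b])
print(group, ahp_priorities(group))
```

Two experts with exactly opposite judgments (3 versus 1/3) cancel out to 1, giving equal weights. An arithmetic mean would instead put about 1.67 in both off-diagonal cells, so the aggregated matrix would no longer be reciprocal.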

4.3. Comparison of Evaluation Factors between Journalist and Media Engineering Groups

As shown in Table 6, the comparative analysis of each evaluation factor indicates that both groups viewed trust in institution as the most important factor. Comparing the two groups’ top five factors, the journalist group ranked the following reliability and content factors next in importance for its acceptance of AI-generated news articles: trust in technology (0.116), communication (0.092), trust in platform (0.087), and intelligence (0.063). The media engineering group ranked the following factors next: financial value (0.102), effectiveness (0.093), trust in technology (0.091), and usefulness (0.081). Apart from trust in institution and trust in technology, the two groups’ top factors therefore differ. In particular, the media engineering group was keenly aware of the importance of benefits of technology utilization and financial value.
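The overlap between the two groups' top-five lists can be made explicit with simple set operations. This sketch uses only the rank order stated in Section 4.3; trust in institution is included as rank 1 for both groups, and since its group-level weights are not quoted in the text, no weights are used.

```python
# Top-five factors per group in rank order, as listed in Section 4.3 (Table 6)
journalists = ["trust in institution", "trust in technology", "communication",
               "trust in platform", "intelligence"]
engineers = ["trust in institution", "financial value", "effectiveness",
             "trust in technology", "usefulness"]

shared = [f for f in journalists if f in engineers]
only_journalists = [f for f in journalists if f not in engineers]
only_engineers = [f for f in engineers if f not in journalists]

# Jaccard index: size of the intersection divided by size of the union
jaccard = len(set(journalists) & set(engineers)) / len(set(journalists) | set(engineers))

for f in shared:
    # 1-based rank of each shared factor in each group's list
    print(f, journalists.index(f) + 1, engineers.index(f) + 1)
print("journalists only:", only_journalists)
print("engineers only:", only_engineers)
print(f"Jaccard overlap: {jaccard:.2f}")
```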

5. Discussion and Conclusions

This study defined acceptance models based on important factors affecting the acceptance of AI-generated news and comparatively examined the importance of these factors. The main findings are as follows. First, in the evaluation of the five key factors, media reliability affected the acceptance of AI-generated news most significantly. This is consistent with previous studies [69,70], in which reliability was viewed as the most important element in both journalism and AI-generated news. In AI-generated news articles, the accuracy of data and information is ensured. However, AI algorithms may still produce different outputs depending on the organization’s training method and scope, which limits how far one can rely on their objectivity in generating news articles. Accordingly, although AI-generated weather forecasts or other one-dimensional informational articles may be more accurate and reliable, reliability and objectivity always remain important factors in deciding whether an article should be produced by humans or by trained AI.

Second, among the specific factors of media reliability, trust in institution was the most important. This suggests that the reliability of the institution that manages the AI and supplies its inputs may be a more sensitive factor than the reliability of robot journalists, AI technology, or the relevant platforms. Previous studies likewise pointed out the significant relationship between news articles and the tendency or philosophy of media outlets [71,72,73]. After all, whether the journalist is a human or a robot, the organization that supervises and decides news content can significantly affect robot journalism.

Third, previous studies have generally suggested that the acceptance of technology-related products or services relies heavily on technological factors, such as ease of use and effectiveness [74,75]. For news articles, however, the present study found the level of content to be more significant than the benefits of technology utilization. In particular, the level of expertise reflected in articles and the extent of their communication were viewed as more important than the expression and influence of articles. Unlike conventional news articles, AI-generated articles may suffer from communication problems arising from the robot journalist’s limited knowledge or lack of communication skills, and this may lead readers to reject AI news.

In terms of practical implications, media outlets need to emphasize organizational reliability in order to promote robot journalism and popularize AI-generated news articles. They need to share relevant information more actively and to promote the excellence and reliability of the AI robot journalists that generate news articles. In addition, given that reliability matters not only for robot journalists but also for media outlets, continued efforts should be made to share information transparently and to promote communication that strengthens media outlets’ brand power and their reliability as perceived by the general public.

In terms of information perception, access systems were rated as more important than interest in AI-generated news articles (the concern system) and the relevant processing systems. It is therefore time to emphasize how readers access AI-generated news articles. Although AI-generated news is currently limited to objective facts, mostly delivering information on weather, stock prices, sports results, and the like, robot journalism is likely to become popular faster if attention is paid to the ease and variety of access as AI-generated news expands into new areas such as society, politics, and economics.

Finally, the results show that, for the acceptance of AI-generated news articles, reliability and content level are viewed as more important than benefits of technology and information perception. For this reason, rather than reorganizing the entire newsroom around robot journalism for the sake of financial efficiency, media outlets need to consider establishing a combined system for both articles written by human journalists and AI-generated articles produced by robot journalists. Because robot journalists are limited in geographical coverage and in conducting interviews, human journalists need to focus on writing articles that reflect their insight into the actual context, drawing on comprehensive thinking, visits to many different places, and an expanding network of news sources. Humans and robots can complement each other efficiently, with the former providing insight and the latter providing customized news services [31].

This study is of academic significance in that it defines factors related to the acceptance of AI-generated news and presents an acceptance model. However, the model has limited generalizability, since the subject group included only 30 related experts in Korea. Currently, the state of the media industry and the level of robot journalism development and commercialization differ among countries. Thus, further research needs to be conducted with expert groups in other countries in order to develop an acceptance model that can be properly generalized. In addition, this study derived its acceptance factors from previous studies on the general acceptance of technology and of news articles. However, unlike common products and services, AI-generated news is content with distinctive reception and consumption characteristics, so AI-generated news articles are likely to have unique factors of acceptance or consumption value. Accordingly, future studies need to clarify the distinctive selection and stimulus factors involved in the acceptance of AI-generated news articles. Finally, this study’s acceptance model is based on the opinions of an expert group, whereas an acceptance model should also reflect the acceptance intention factors of the general public, the end users of articles. A future study needs to collect the opinions of general consumers on AI-generated news articles, for example by analyzing big data from social networking services or by surveying customers who have experienced AI-generated news articles, and to analyze them empirically.