Can Low-Cost Unmanned Aerial Systems Describe the Forage Quality Heterogeneity? Insight from a Timothy Pasture Case Study in Southern Belgium

Abstract

1. Introduction

- (1) compare low-cost off-the-shelf UAS approaches (between 1000 and 5000 €) to monitor essential components of grassland heterogeneity in a precision livestock context;

- (2) provide practical recommendations for researchers and practitioners wishing to develop precision grazing applications based on low-cost UASs.

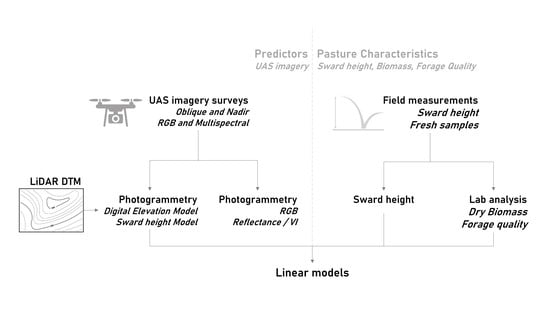

2. Materials and Methods

2.1. Study Area

2.2. Acquisition of Unmanned Aerial System Imagery

2.3. Processing of Unmanned Aerial System Imagery

2.4. Sward Height Reference Data

2.5. Biomass and Forage Quality Data

2.6. Modeling the Height, Biomass, and Forage Quality

3. Results

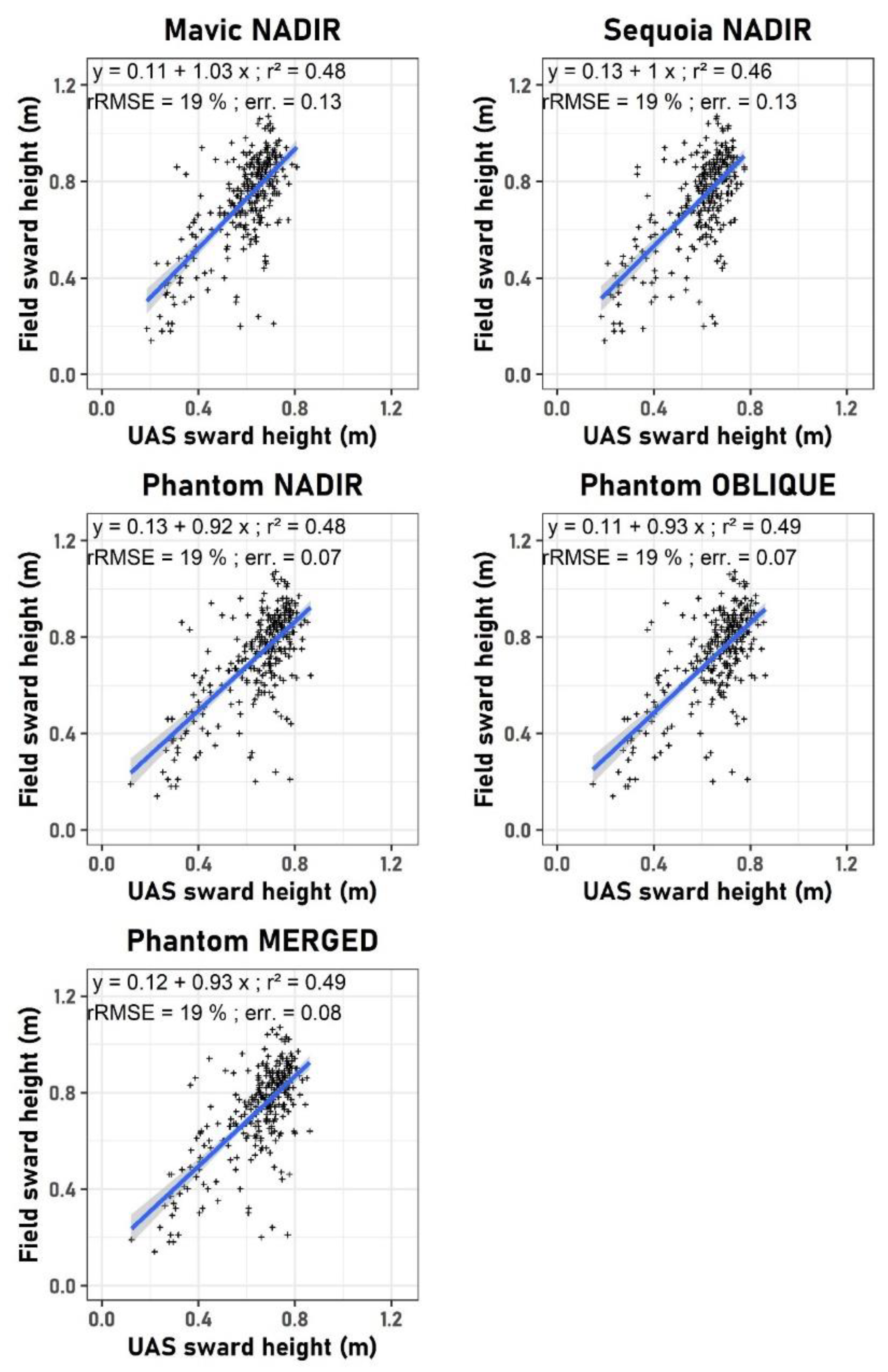

3.1. Sward Height Modeling with UAS

3.2. Above-Ground Biomass Modeling with UAS

3.3. Forage Quality Modeling with UAS

4. Discussion

5. Conclusions

Author Contributions

Funding

Acknowledgments

Conflicts of Interest

References

1. Andriamandroso, A.L.H.; Bindelle, J.; Mercatoris, B.; Lebeau, F. A review on the use of sensors to monitor cattle jaw movements and behavior when grazing. Biotechnol. Agron. Soc. Environ. 2016, 20, 273–286.

2. Debauche, O.; Mahmoudi, S.; Andriamandroso, A.L.H.; Manneback, P.; Bindelle, J.; Lebeau, F. Web-based cattle behavior service for researchers based on the smartphone inertial central. Procedia Comput. Sci. 2017, 110, 110–116.

3. Laca, E.A. Precision livestock production: Tools and concepts. Rev. Bras. Zootec. 2009, 38, 123–132.

4. Larson-Praplan, S.; George, M.; Buckhouse, J.; Laca, E. Spatial and temporal domains of scale of grazing cattle. Anim. Prod. Sci. 2015, 55, 284–297.

5. Andriamandroso, A.; Lebeau, F.; Bindelle, J. Changes in biting characteristics recorded using the inertial measurement unit of a smartphone reflect differences in sward attributes. Precis. Livest. Farming 2015, 15, 283–289.

6. French, P.; O’Brien, B.; Shalloo, L. Development and adoption of new technologies to increase the efficiency and sustainability of pasture-based systems. Anim. Prod. Sci. 2015, 55, 931–935.

7. Michez, A.; Bauwens, S.; Brostaux, Y.; Hiel, M.-P.; Garré, S.; Lejeune, P.; Dumont, B. How Far Can Consumer-Grade UAV RGB Imagery Describe Crop Production? A 3D and Multitemporal Modeling Approach Applied to Zea mays. Remote Sens. 2018, 10, 1798.

8. Lopez-Granados, F. Weed detection for site-specific weed management: Mapping and real-time approaches. Weed Res. 2011, 51, 1–11.

9. Yang, G.; Liu, J.; Zhao, C.; Li, Z.; Huang, Y.; Yu, H.; Xu, B.; Yang, X.; Zhu, D.; Zhang, X.; et al. Unmanned Aerial Vehicle Remote Sensing for Field-Based Crop Phenotyping: Current Status and Perspectives. Front. Plant Sci. 2017, 8, 8.

10. Bebronne, R.; Michez, A.; Leemans, V.; Vermeulen, P.; Dumont, B.; Mercatoris, B. Characterisation of fungal diseases on winter wheat crop using proximal and remote multispectral imaging. In Precision Agriculture′19; Wageningen Academic Publishers: Cambridge, MA, USA, 2019; pp. 415–421.

11. Lee, H.; Lee, H.-J.; Jung, J.-S.; Ko, H.-J. Mapping Herbage Biomass on a Hill Pasture using a Digital Camera with an Unmanned Aerial Vehicle System. J. Korean Soc. Grassl. Forage Sci. 2015, 35, 225–231.

12. Lee, H.; Lee, H.; Go, H. Estimating the spatial distribution of Rumex acetosella L. on hill pasture using UAV monitoring system and digital camera. J. Korean Soc. Grassl. Forage Sci. 2016, 36, 365–369.

13. Michez, A.; Piégay, H.; Lisein, J.; Claessens, H.; Lejeune, P. Classification of riparian forest species and health condition using multi-temporal and hyperspatial imagery from unmanned aerial system. Environ. Monit. Assess. 2016, 188, 188.

14. Lisein, J.; Michez, A.; Claessens, H.; Lejeune, P. Discrimination of Deciduous Tree Species from Time Series of Unmanned Aerial System Imagery. PLoS ONE 2015, 10, e0141006.

15. Klosterman, S.; Richardson, A.D. Observing spring and fall phenology in a deciduous forest with aerial drone imagery. Sensors 2017, 17, 2852.

16. Herrmann, I.; Bdolach, E.; Montekyo, Y.; Rachmilevitch, S.; Townsend, P.A.; Karnieli, A. Assessment of maize yield and phenology by drone-mounted superspectral camera. Precis. Agric. 2020, 21, 51–76.

17. Klosterman, S.; Melaas, E.; Wang, J.A.; Martinez, A.; Frederick, S.; O’Keefe, J.; Orwig, D.A.; Wang, Z.; Sun, Q.; Schaaf, C.; et al. Fine-scale perspectives on landscape phenology from unmanned aerial vehicle (UAV) photography. Agric. For. Meteorol. 2018, 248, 397–407.

18. Manfreda, S.; McCabe, M.F.; Miller, P.E.; Lucas, R.; Pajuelo Madrigal, V.; Mallinis, G.; Ben Dor, E.; Helman, D.; Estes, L.; Ciraolo, G.; et al. On the use of unmanned aerial systems for environmental monitoring. Remote Sens. 2018, 10, 641.

19. Savian, J.V.; Schons, R.M.T.; Marchi, D.E.; de Freitas, T.S.; da Silva Neto, G.F.; Mezzalira, J.C.; Berndt, A.; Bayer, C.; de Faccio Carvalho, P.C. Rotatinuous stocking: A grazing management innovation that has high potential to mitigate methane emissions by sheep. J. Clean. Prod. 2018, 186, 602–608.

20. Andriamandroso, A.; Castro Muñoz, E.; Blaise, Y.; Bindelle, J.; Lebeau, F. Differentiating pre- and post-grazing pasture heights using a 3D camera: A prospective approach. Precis. Livest. Farming 2017, 17, 238–246. Available online: https://orbi.uliege.be/handle/2268/215034 (accessed on 21 May 2020).

21. Grüner, E.; Astor, T.; Wachendorf, M. Biomass Prediction of Heterogeneous Temperate Grasslands Using an SfM Approach Based on UAV Imaging. Agronomy 2019, 9, 54.

22. Forsmoo, J.; Anderson, K.; Macleod, C.J.; Wilkinson, M.E.; Brazier, R. Drone-based structure-from-motion photogrammetry captures grassland sward height variability. J. Appl. Ecol. 2018, 55, 2587–2599.

23. Insua, J.R.; Utsumi, S.A.; Basso, B. Estimation of spatial and temporal variability of pasture growth and digestibility in grazing rotations coupling unmanned aerial vehicle (UAV) with crop simulation models. PLoS ONE 2019, 14, e0212773.

24. Bareth, G.; Schellberg, J. Replacing manual rising plate meter measurements with low-cost UAV-derived sward height data in grasslands for spatial monitoring. PFG–J. Photogramm. Remote Sens. Geoinf. Sci. 2018, 86, 157–168.

25. Bendig, J.; Yu, K.; Aasen, H.; Bolten, A.; Bennertz, S.; Broscheit, J.; Gnyp, M.L.; Bareth, G. Combining UAV-based plant height from crop surface models, visible, and near infrared vegetation indices for biomass monitoring in barley. Int. J. Appl. Earth Obs. Geoinf. 2015, 39, 79–87.

26. Michez, A.; Lejeune, P.; Bauwens, S.; Herinaina, A.A.L.; Blaise, Y.; Castro Muñoz, E.; Lebeau, F.; Bindelle, J. Mapping and monitoring of biomass and grazing in pasture with an unmanned aerial system. Remote Sens. 2019, 11, 473.

27. Yuan, W.; Li, J.; Bhatta, M.; Shi, Y.; Baenziger, P.S.; Ge, Y. Wheat height estimation using LiDAR in comparison to ultrasonic sensor and UAS. Sensors 2018, 18, 3731.

28. Lisein, J.; Pierrot-Deseilligny, M.; Bonnet, S.; Lejeune, P. A Photogrammetric Workflow for the Creation of a Forest Canopy Height Model from Small Unmanned Aerial System Imagery. Forests 2013, 4, 922–944.

29. Gil-Docampo, M.; Arza-García, M.; Ortiz-Sanz, J.; Martínez-Rodríguez, S.; Marcos-Robles, J.L.; Sánchez-Sastre, L.F. Above-ground biomass estimation of arable crops using UAV-based SfM photogrammetry. Geocarto Int. 2019, 35, 687–699.

30. Batistoti, J.; Marcato Junior, J.; Ítavo, L.; Matsubara, E.; Gomes, E.; Oliveira, B.; Souza, M.; Siqueira, H.; Salgado Filho, G.; Akiyama, T.; et al. Estimating Pasture Biomass and Canopy Height in Brazilian Savanna Using UAV Photogrammetry. Remote Sens. 2019, 11, 2447.

31. Capolupo, A.; Kooistra, L.; Berendonk, C.; Boccia, L.; Suomalainen, J. Estimating plant traits of grasslands from UAV-acquired hyperspectral images: A comparison of statistical approaches. ISPRS Int. J. Geo-Inf. 2015, 4, 2792–2820.

32. Wijesingha, J.; Astor, T.; Schulze-Brüninghoff, D.; Wengert, M.; Wachendorf, M. Predicting Forage Quality of Grasslands Using UAV-Borne Imaging Spectroscopy. Remote Sens. 2020, 12, 126.

33. Näsi, R.; Viljanen, N.; Oliveira, R.; Kaivosoja, J.; Niemeläinen, O.; Hakala, T.; Markelin, L.; Nezami, S.; Suomalainen, J.; Honkavaara, E. Optimizing Radiometric Processing and Feature Extraction of Drone Based Hyperspectral Frame Format Imagery for Estimation of Yield Quantity and Quality of A Grass Sward. Int. Arch. Photogramm. Remote Sens. Spat. Inf. Sci. 2018, 42, 1305–1330.

34. Vong, C.N.; Zhou, J.; Tooley, J.A.; Naumann, H.D.; Lory, J.A. Estimating Forage Dry Matter and Nutritive Value Using UAV- and Ground-Based Sensors—A Preliminary Study; ASABE: St. Joseph, MI, USA, 2019; p. 1.

35. Na, S.-I.; Kim, Y.-J.; Park, C.-W.; So, K.-H.; Park, J.-M.; Lee, K.-D. Evaluation of feed value of IRG in middle region using UAV. Korean Soc. Soil Sci. Fertil. 2017, 50, 391–400.

36. Barnes, R.F.; Nelson, C.; Collins, M.; Moore, K.J. Forages. In Volume 1: An Introduction to Grassland Agriculture; John Wiley & Sons: Hoboken, NJ, USA, 2003.

37. James, M.R.; Robson, S.; d’Oleire-Oltmanns, S.; Niethammer, U. Optimising UAV topographic surveys processed with structure-from-motion: Ground control quality, quantity and bundle adjustment. Geomorphology 2017, 280, 51–66.

38. Javernick, L.; Brasington, J.; Caruso, B. Modeling the topography of shallow braided rivers using Structure-from-Motion photogrammetry. Geomorphology 2014, 213, 166–182.

39. Gonçalves, J.; Henriques, R. UAV photogrammetry for topographic monitoring of coastal areas. ISPRS J. Photogramm. Remote Sens. 2015, 104, 101–111.

40. Vautherin, J.; Rutishauser, S.; Schneider-Zapp, K.; Choi, H.F.; Chovancova, V.; Glass, A.; Strecha, C. Photogrammetric accuracy and modeling of rolling shutter cameras. ISPRS Ann. Photogramm. Remote Sens. Spat. Inf. Sci. 2016, 3, 139–146.

41. Tucker, C.J. Red and photographic infrared linear combinations for monitoring vegetation. Remote Sens. Environ. 1978, 8, 127–150.

42. Metternicht, G. Vegetation indices derived from high-resolution airborne videography for precision crop management. Int. J. Remote Sens. 2003, 24, 2855–2877.

43. Gitelson, A.A.; Viña, A.; Arkebauer, T.J.; Rundquist, D.C.; Keydan, G.; Leavitt, B. Remote estimation of leaf area index and green leaf biomass in maize canopies. Geophys. Res. Lett. 2003, 30, 30.

44. Gitelson, A.A.; Kaufman, Y.J.; Stark, R.; Rundquist, D. Novel algorithms for remote estimation of vegetation fraction. Remote Sens. Environ. 2002, 80, 76–87.

45. Rouse, J., Jr.; Haas, R.; Schell, J.; Deering, D. Monitoring vegetation systems in the Great Plains with ERTS. NASA Spec. Publ. 1974, 351, 309.

46. Barnes, E.; Clarke, T.; Richards, S.; Colaizzi, P.; Haberland, J.; Kostrzewski, M.; Waller, P.; Choi, C.; Riley, E.; Thompson, T.; et al. Coincident detection of crop water stress, nitrogen status and canopy density using ground based multispectral data. In Proceedings of the Fifth International Conference on Precision Agriculture, Bloomington, MN, USA, 16–19 July 2000; Volume 1619.

47. Moges, S.; Raun, W.; Mullen, R.; Freeman, K.; Johnson, G.; Solie, J. Evaluation of green, red, and near infrared bands for predicting winter wheat biomass, nitrogen uptake, and final grain yield. J. Plant Nutr. 2005, 27, 1431–1441.

48. Sripada, R.P.; Heiniger, R.W.; White, J.G.; Meijer, A.D. Aerial Color Infrared Photography for Determining Early In-Season Nitrogen Requirements in Corn. Agron. J. 2006, 98, 968–977.

49. Gitelson, A.A.; Gritz, Y.; Merzlyak, M.N. Relationships between leaf chlorophyll content and spectral reflectance and algorithms for non-destructive chlorophyll assessment in higher plant leaves. J. Plant Physiol. 2003, 160, 271–282.

50. Serrano, L.; Filella, I.; Penuelas, J. Remote sensing of biomass and yield of winter wheat under different nitrogen supplies. Crop Sci. 2000, 40, 723–731.

51. Rueda-Ayala, V.P.; Peña, J.M.; Höglind, M.; Bengochea-Guevara, J.M.; Andújar, D. Comparing UAV-Based Technologies and RGB-D Reconstruction Methods for Plant Height and Biomass Monitoring on Grass Ley. Sensors 2019, 19, 535.

52. Estornell, J.; Ruiz, L.; Velázquez-Martí, B.; Hermosilla, T. Analysis of the factors affecting LiDAR DTM accuracy in a steep shrub area. Int. J. Digit. Earth 2011, 4, 521–538.

53. Albl, C.; Sugimoto, A.; Pajdla, T. Degeneracies in rolling shutter SfM. In Proceedings of the European Conference on Computer Vision, Amsterdam, The Netherlands, 8–16 October 2016; Springer: Berlin/Heidelberg, Germany, 2016; pp. 36–51.

54. Strecha, C.; Zoller, R.; Rutishauser, S.; Brot, B.; Schneider-Zapp, K.; Chovancova, V.; Krull, M.; Glassey, L. Quality assessment of 3D reconstruction using fisheye and perspective sensors. ISPRS Ann. Photogramm. Remote Sens. Spat. Inf. Sci. 2015, 2, 215–222.

55. Pagliari, D.; Pinto, L. Use of fisheye Parrot Bebop 2 images for 3D modelling using commercial photogrammetric software. Int. Arch. Photogramm. Remote Sens. Spat. Inf. Sci. 2018, 42, 813–820.

56. Viljanen, N.; Honkavaara, E.; Näsi, R.; Hakala, T.; Niemeläinen, O.; Kaivosoja, J. A Novel Machine Learning Method for Estimating Biomass of Grass Swards Using a Photogrammetric Canopy Height Model, Images and Vegetation Indices Captured by a Drone. Agriculture 2018, 8, 70.

57. Lussem, U.; Bolten, A.; Menne, J.; Gnyp, M.; Bareth, G. Ultra-high spatial resolution UAV-based imagery to predict biomass in temperate grasslands. Int. Arch. Photogramm. Remote Sens. Spat. Inf. Sci. 2019, 42-2/W13, 443–447.

58. Näsi, R.; Viljanen, N.; Kaivosoja, J.; Alhonoja, K.; Hakala, T.; Markelin, L.; Honkavaara, E. Estimating biomass and nitrogen amount of barley and grass using UAV and aircraft based spectral and photogrammetric 3D features. Remote Sens. 2018, 10, 1082.

59. Hakl, J.; Hrevušová, Z.; Hejcman, M.; Fuksa, P. The use of a rising plate meter to evaluate lucerne (Medicago sativa L.) height as an important agronomic trait enabling yield estimation. Grass Forage Sci. 2012, 67, 589–596.

60. Sanderson, M.A.; Rotz, C.A.; Fultz, S.W.; Rayburn, E.B. Estimating forage mass with a commercial capacitance meter, rising plate meter, and pasture ruler. Agron. J. 2001, 93, 1281–1286.

61. Aasen, H.; Honkavaara, E.; Lucieer, A.; Zarco-Tejada, P.J. Quantitative remote sensing at ultra-high resolution with UAV spectroscopy: A review of sensor technology, measurement procedures, and data correction workflows. Remote Sens. 2018, 10, 1091.

62. Schneider-Zapp, K.; Cubero-Castan, M.; Shi, D.; Strecha, C. A new method to determine multi-angular reflectance factor from lightweight multispectral cameras with sky sensor in a target-less workflow applicable to UAV. Remote Sens. Environ. 2019, 229, 60–68.

| Dataset | Sensor | UAS | View Angle | UAS Mapping Products (GSD) |
|---|---|---|---|---|
| Mavic NADIR | FC220 | Mavic Pro Platinum | Nadir | Sward Height Model (0.02 m); RGB orthophotomosaic (0.01 m) |
| Sequoia NADIR | Sequoia multispectral | Phantom 4 Pro | Nadir | Sward Height Model (0.05 m); R, G, NIR, RE orthophotomosaic (0.025 m) |
| Phantom NADIR | FC6310 | Phantom 4 Pro | Nadir | Sward Height Model (0.02 m); RGB orthophotomosaic (0.01 m) |
| Phantom OBLIQUE | FC6310 | Phantom 4 Pro | Oblique (70°) | Sward Height Model (0.02 m); RGB orthophotomosaic (0.01 m) |
| Phantom MERGED | FC6310 | Phantom 4 Pro | Nadir | Sward Height Model (0.02 m); RGB orthophotomosaic (0.01 m) |

| Vegetation Index | Formula | Reference |
|---|---|---|
| Green Red Difference Index (GRDI) | (G − R)/(G + R) | [41] |
| Modified Green Red Vegetation Index (MGRVI) | (G² − R²)/(G² + R²) | [25] |
| Red Green Blue Vegetation Index (RGBVI) | (G² − B × R)/(G² + B × R) | [25] |
| Normalized Green Blue Index (NGBI) or Plant Pigment Ratio Index (PPR) | (G − B)/(G + B) | [42] |
| Normalized Red Blue Index (NRBI) | (R − B)/(R + B) | [13] |
| Visible Atmospherically Resistant Index (VARI) | (G − R)/(G + R − B) | [43,44] |
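The RGB indices listed above are simple per-pixel band combinations. The sketch below computes them from red, green and blue values using their standard published definitions; we assume these are the forms used in the study, and the function names are hypothetical, not part of any library or of the authors' processing chain. Because only arithmetic operators are used, the same functions also work element-wise on equally shaped NumPy arrays.

```python
"""Sketch of the RGB vegetation indices (hypothetical helper names).

Formulas follow the standard published definitions; this is an assumption
about the exact form used in the study. Inputs are band values for one pixel
or equally shaped arrays.
"""


def grdi(r, g, b):
    """Green Red Difference Index: (G - R) / (G + R)."""
    return (g - r) / (g + r)


def mgrvi(r, g, b):
    """Modified Green Red Vegetation Index: (G^2 - R^2) / (G^2 + R^2)."""
    return (g**2 - r**2) / (g**2 + r**2)


def rgbvi(r, g, b):
    """Red Green Blue Vegetation Index: (G^2 - B*R) / (G^2 + B*R)."""
    return (g**2 - b * r) / (g**2 + b * r)


def ngbi(r, g, b):
    """Normalized Green Blue Index / Plant Pigment Ratio: (G - B) / (G + B)."""
    return (g - b) / (g + b)


def nrbi(r, g, b):
    """Normalized Red Blue Index: (R - B) / (R + B)."""
    return (r - b) / (r + b)


def vari(r, g, b):
    """Visible Atmospherically Resistant Index: (G - R) / (G + R - B)."""
    return (g - r) / (g + r - b)


# Registry so every index can be computed in one pass.
RGB_INDICES = {
    "GRDI": grdi,
    "MGRVI": mgrvi,
    "RGBVI": rgbvi,
    "NGBI": ngbi,
    "NRBI": nrbi,
    "VARI": vari,
}


def compute_rgb_indices(r, g, b):
    """Return a dict mapping each index name to its value."""
    return {name: fn(r, g, b) for name, fn in RGB_INDICES.items()}


if __name__ == "__main__":
    # Hypothetical band values, not data from the study.
    for name, value in compute_rgb_indices(0.2, 0.4, 0.1).items():
        print(f"{name}: {value:.3f}")
```

Denominators can approach zero for very dark or saturated pixels (VARI in particular), so an operational pipeline would mask or clip such pixels before aggregating index values over a sampling plot.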

| Vegetation Index | Formula | Reference |
|---|---|---|
| Normalized Difference Vegetation Index (NDVI) | (NIR − R)/(NIR + R) | [45] |
| Normalized Difference Red Edge (NDRE) | (NIR − RE)/(NIR + RE) | [46] |
| Green NDVI (GNDVI) | (NIR − G)/(NIR + G) | [47] |
| Green Ratio Vegetation Index (GRVI) | NIR/G | [48] |
| Chlorophyll Vegetation Index (CVI) | (NIR × R)/G² | [49] |
| Chlorophyll Index Red-edge (CIR) | NIR/RE − 1 | [49] |
| Normalized Green–Red Difference Index | (G − R)/(G + R) | [41] |
| Red Ratio Vegetation Index (RVI) | NIR/R | [50] |
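The multispectral indices listed above combine the four Sequoia bands (green, red, red edge and near infrared). As a hedged illustration, the sketch below computes them for one pixel from reflectance values, assuming radiometrically calibrated inputs and the standard published definitions of each index; the function names and the `NGRDI` key are hypothetical labels introduced here, not the authors' code.

```python
"""Sketch of the multispectral vegetation indices (hypothetical helper names).

Bands follow the four Sequoia channels: green (G), red (R), red edge (RE)
and near infrared (NIR). Formulas are the standard published definitions,
assumed here rather than taken from the study's processing code.
"""


def normalized_difference(a, b):
    """Generic normalized difference (a - b) / (a + b)."""
    return (a - b) / (a + b)


def multispectral_indices(g, r, re, nir):
    """Return the multispectral indices for one pixel (or equally shaped arrays)."""
    return {
        "NDVI": normalized_difference(nir, r),
        "NDRE": normalized_difference(nir, re),
        "GNDVI": normalized_difference(nir, g),
        "GRVI": nir / g,                       # green ratio vegetation index
        "CVI": nir * r / g**2,                 # chlorophyll vegetation index
        "CIR": nir / re - 1,                   # chlorophyll index red edge
        "NGRDI": normalized_difference(g, r),  # normalized green-red difference
        "RVI": nir / r,                        # red ratio vegetation index
    }


if __name__ == "__main__":
    # Hypothetical reflectances for a dense green sward pixel.
    for name, value in multispectral_indices(g=0.1, r=0.05, re=0.2, nir=0.4).items():
        print(f"{name}: {value:.3f}")
```

Factoring out `normalized_difference` keeps the four normalized indices visibly consistent with each other, while the ratio indices (GRVI, CVI, CIR, RVI) are unbounded and therefore more sensitive to low green or red reflectance.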

| Parameter | Sequoia Nadir R² | Sequoia Nadir RMSE | Mavic Nadir R² | Mavic Nadir RMSE | P4 Merged R² | P4 Merged RMSE | P4 Nadir R² | P4 Nadir RMSE | P4 Oblique R² | P4 Oblique RMSE |
|---|---|---|---|---|---|---|---|---|---|---|
| Chemical composition | | | | | | | | | | |
| Dry matter (%) | 0.07 | 1.19 | 0.17 | 1.13 | 0.33 | 1.01 | 0.47 | 0.9 | 0.25 | 1.07 |
| Crude ash (%) | 0.23 | 0.53 | 0.3 | 0.5 | 0.34 | 0.49 | 0.48 | 0.43 | 0.29 | 0.5 |
| Crude protein (%) | 0.24 | 1.06 | 0.18 | 1.1 | 0.42 | 0.93 | 0.54 | 0.82 | 0.4 | 0.93 |
| Crude cellulose (%) | 0.84 | 1.25 | 0.8 | 1.43 | 0.82 | 1.33 | 0.84 | 1.27 | 0.84 | 1.27 |
| Neutral detergent fiber (%) | 0.83 | 1.77 | 0.73 | 2.24 | 0.83 | 1.81 | 0.82 | 1.83 | 0.84 | 1.75 |
| Acid detergent fiber (%) | 0.79 | 1.34 | 0.68 | 1.66 | 0.8 | 1.31 | 0.77 | 1.4 | 0.82 | 1.24 |
| Acid detergent lignin (%) | 0.51 | 0.41 | 0.36 | 0.47 | 0.53 | 0.4 | 0.33 | 0.48 | 0.6 | 0.37 |
| Nutritive value | | | | | | | | | | |
| In vitro DM digestibility (%) | 0.82 | 2.33 | 0.75 | 2.72 | 0.81 | 2.39 | 0.83 | 2.24 | 0.8 | 2.43 |
| Fermentable organic matter (g/kg) | 0.82 | 12.51 | 0.71 | 15.93 | 0.82 | 12.44 | 0.85 | 11.6 | 0.82 | 12.46 |
| Digestible organic matter (g/kg) | 0.82 | 12.81 | 0.74 | 15.52 | 0.81 | 13.06 | 0.84 | 12.19 | 0.81 | 13.1 |
| Digestible protein (%) | 0.24 | 10.31 | 0.18 | 10.67 | 0.42 | 9.01 | 0.54 | 7.99 | 0.41 | 9.1 |
| PDI (g/kg) | 0.67 | 3.77 | 0.68 | 3.73 | 0.68 | 3.7 | 0.74 | 3.39 | 0.68 | 3.74 |
| PDI-E (g/kg) | 0.42 | 3.91 | 0.44 | 3.86 | 0.51 | 3.59 | 0.61 | 3.22 | 0.51 | 3.62 |
| PDI-N (g/kg) | 0.24 | 8.58 | 0.18 | 8.89 | 0.42 | 7.51 | 0.54 | 6.67 | 0.4 | 7.58 |
| OEB (g/kg) | 0.29 | 7.59 | 0.12 | 8.45 | 0.49 | 6.4 | 0.6 | 5.65 | 0.48 | 6.46 |
| Fodder units for dairy cattle (UFL/kg) | 0.83 | 0.02 | 0.74 | 0.03 | 0.82 | 0.02 | 0.85 | 0.02 | 0.82 | 0.02 |
| Fodder units for beef cattle (UFV/kg) | 0.83 | 0.02 | 0.74 | 0.03 | 0.83 | 0.03 | 0.85 | 0.02 | 0.82 | 0.03 |
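The table reports R² and RMSE for each forage parameter and UAS dataset. As a hedged illustration of how such figures are obtained, the sketch below computes both metrics from paired observed and predicted values and, using two rows copied from the table, picks the dataset with the highest R². It assumes R² is the coefficient of determination (some studies report the squared Pearson correlation instead); the helper names are hypothetical, the example observations are invented, and the sketch does not reproduce the authors' modeling or validation scheme.

```python
"""Sketch: goodness-of-fit metrics of the kind reported in the forage table.

r_squared uses 1 - SS_res / SS_tot; this definition is an assumption.
Helper names are hypothetical.
"""
from math import sqrt


def _check(observed, predicted):
    """Reject empty or mismatched inputs instead of silently truncating."""
    if len(observed) != len(predicted) or not observed:
        raise ValueError("observed and predicted must be non-empty and equally long")


def rmse(observed, predicted):
    """Root mean square error, in the unit of the forage parameter."""
    _check(observed, predicted)
    sq_err = sum((o - p) ** 2 for o, p in zip(observed, predicted))
    return sqrt(sq_err / len(observed))


def r_squared(observed, predicted):
    """Coefficient of determination (undefined if all observations are equal)."""
    _check(observed, predicted)
    mean_obs = sum(observed) / len(observed)
    ss_res = sum((o - p) ** 2 for o, p in zip(observed, predicted))
    ss_tot = sum((o - mean_obs) ** 2 for o in observed)
    return 1.0 - ss_res / ss_tot


# Column order of the table and two of its rows, as (R², RMSE) pairs.
DATASETS = ("Sequoia Nadir", "Mavic Nadir", "P4 Merged", "P4 Nadir", "P4 Oblique")
TABLE_ROWS = {
    "Crude protein (%)": [(0.24, 1.06), (0.18, 1.1), (0.42, 0.93), (0.54, 0.82), (0.4, 0.93)],
    "Acid detergent lignin (%)": [(0.51, 0.41), (0.36, 0.47), (0.53, 0.4), (0.33, 0.48), (0.6, 0.37)],
}


def best_dataset(row):
    """Name of the dataset with the highest R² in one table row."""
    best = max(range(len(row)), key=lambda i: row[i][0])
    return DATASETS[best]


if __name__ == "__main__":
    obs = [1.0, 2.0, 3.0, 4.0]   # hypothetical reference values
    pred = [1.1, 1.9, 3.2, 3.8]  # hypothetical model predictions
    print(f"R2 = {r_squared(obs, pred):.3f}, RMSE = {rmse(obs, pred):.3f}")
    for parameter, row in TABLE_ROWS.items():
        print(parameter, "->", best_dataset(row))
```

Read this way, the table shows that no single dataset dominates: for crude protein the P4 Nadir dataset gives the highest R² (0.54), whereas for acid detergent lignin the P4 Oblique dataset does (0.6).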

© 2020 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).


Michez, A.; Lejeune, P.; Knoden, D.; Cremer, S.; Decamps, C.; Bindelle, J. Can Low-Cost Unmanned Aerial Systems Describe the Forage Quality Heterogeneity? Insight from a Timothy Pasture Case Study in Southern Belgium. Remote Sens. 2020, 12, 1650. https://doi.org/10.3390/rs12101650
