1. Introduction

As an essential material carrier of cultural heritage, traditional villages provide significant resources for exploring the historical stories and folk customs of different times and regions [1,2]. They are rural settlements formed spontaneously through long-term interaction between people and nature [3], and they are also a non-renewable cultural resource of Chinese civilization [4]. However, with rapid urbanization, the conflict between people’s pursuit of a better living environment and the preservation of traditional villages has become increasingly prominent. Many traditional villages have experienced severe problems such as hollowing out, land abuse, and the demolition of old buildings to make way for new ones, leading to the decay of village buildings and putting a large amount of cultural heritage at risk of extinction [5,6]. Strengthening the protection and development of traditional villages is essential to China’s “rural revitalization strategy” [7,8]. However, because traditional Chinese villages are numerous and widely distributed and their built environment is highly complex, existing methods struggle to acquire building information in these villages rapidly and with high precision. Therefore, there is an urgent need for a technique that can automatically and accurately extract the buildings of traditional Chinese villages.

In the past, scholars mainly evaluated traditional village buildings through manual surveys by experts, for example by combining historical image maps [9], field observation and recording [10,11], and in-depth interviews with residents [12,13]. Although such surveys have yielded much architectural information, they consume considerable labor and material resources, rely on subjective judgment, and are susceptible to environmental interference. In recent years, deep learning models have made breakthroughs in computer vision owing to their robust data fitting and feature representation capabilities. They are widely used in remote sensing image information extraction [14,15,16,17,18,19,20], bringing a new research perspective for efficiently evaluating traditional village buildings. Compared with traditional methods such as feature detection [21,22,23,24], region segmentation [25,26,27,28,29,30], and auxiliary information combination [31,32,33,34,35,36], deep-learning-based remote sensing information extraction can spatially model adjacent pixels and obtains higher-quality results in numerous vision tasks. Xiong et al. [14] proposed a detection model for a type of traditional Chinese house, the Hakka Weirong Houses (HWHs), based on ResNet50 and YOLO v2, and showed through multi-metric evaluation that the model has high accuracy and excellent performance. Liu et al. [15] drew on the advantages of U-Net and ResNet to propose SSNet, a deep residual learning sequence semantic segmentation model, and demonstrated its superiority experimentally. Compared with semantic segmentation and object detection models, instance segmentation models can simultaneously perform detection, localization, and segmentation and extract richer feature information. Several scholars have adopted the Mask R-CNN instance segmentation model [37]. Chen et al. [16] combined Mask R-CNN with transfer learning to achieve high-precision building area estimation; Li, Wang et al. [17,18] combined Mask R-CNN with data augmentation to extract new and old buildings in the Chinese countryside and Chinese rural building roofs with high accuracy; and Zhan et al. [19] achieved high-accuracy extraction of residential buildings with a Mask R-CNN model whose feature pyramid network they improved. Tejeswari et al. [20] achieved high-precision urban building extraction by combining Mask R-CNN with a large dataset generated automatically through the Google API, demonstrating that sufficient training samples are a key factor limiting the accuracy of contemporary deep learning models.

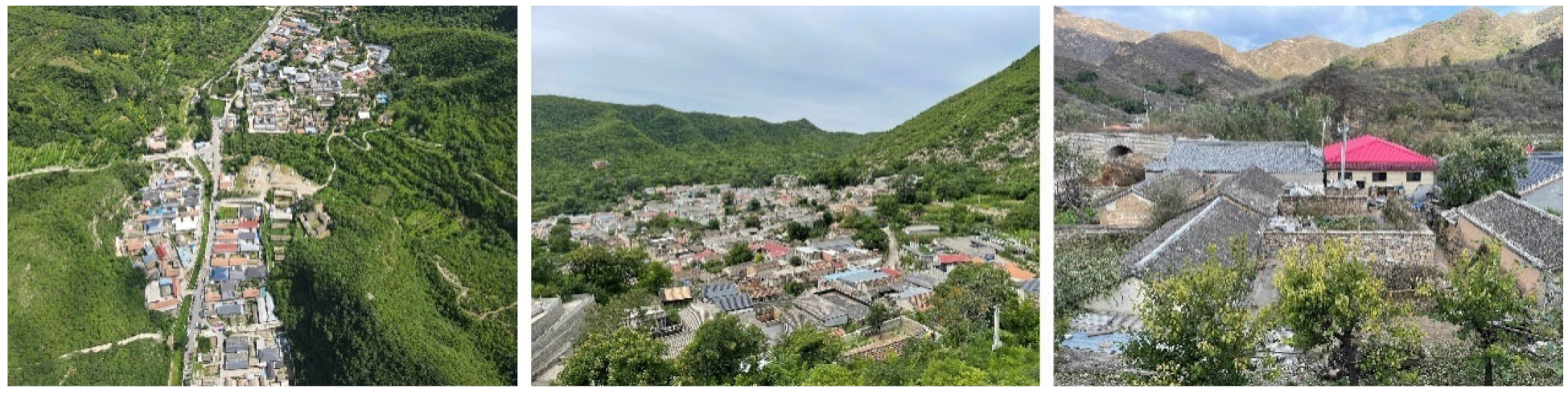

In summary, several studies have shown the excellent performance of instance segmentation models in extracting specific objects. However, these objects often have clear boundaries, similar shapes and sizes, and few classes, and they stand out clearly from the background in remote sensing images. Traditional villages have long histories, and geographic, economic, cultural, and human-made factors have together produced a diverse and complex built environment. Roofs are an important carrier that shapes the image of Chinese architecture and expresses its cultural connotations. As shown in Figure 1, they include small green tile roofs inherited from ancient times, red tile roofs, small green tile roofs with cement roofs built after the founding of the People’s Republic of China, multi-colored plastic steel roofs and resin roofs from the early twenty-first century, and many other types [38]. At the same time, multi-functional needs and the sizes of house lots lead to high heterogeneity of roof form and size within building groups. These multi-scale, multi-type, and multi-temporal roofs add to the complexity of the built environment and pose a significant challenge to existing building extraction models.

In recent studies, multi-scale feature extraction and fusion have effectively coped with complex environments [

39,

40,

41,

42,

43,

44,

45,

46,

47,

48,

49,

50,

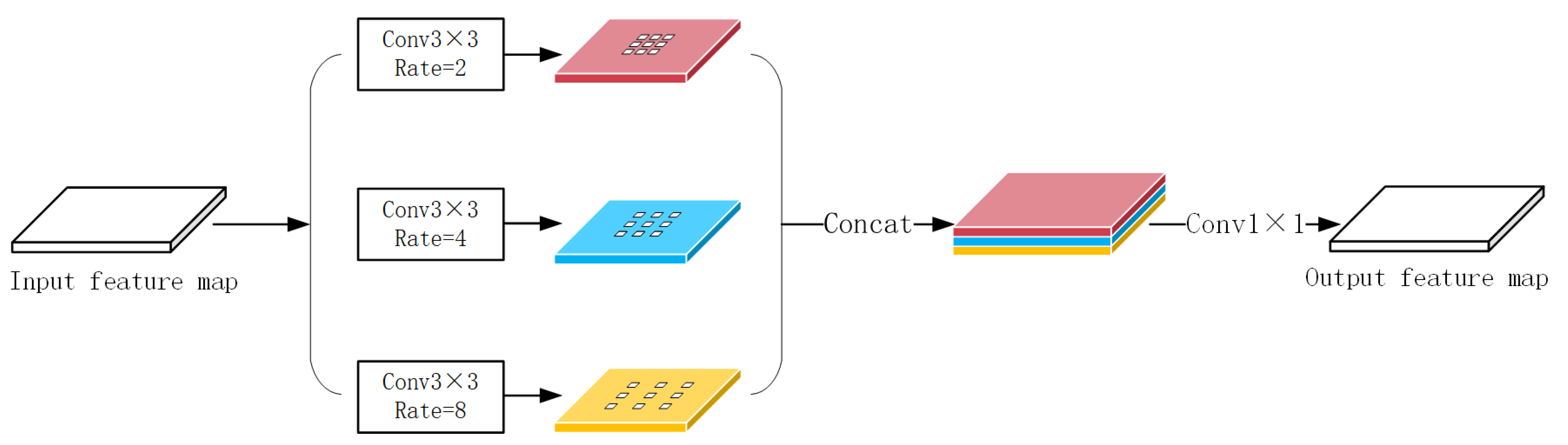

51]. Chen et al., incorporated “dilated convolution” into the DeepLabv2 [

39] model to increase the perceptual field by inserting “holes” in the filter to achieve accurate recognition of targets in complex environments; The method was later further improved by several researchers in DeepLabV3 [

40], ResUNet++ [

41], and PSPNet [

42], which proposed the Atrous Spatial Pyramid Pooling (ASPP) module to further obtain multi-scale information and enhance the model’s recognition and detection of objects in the context of complex environments. Additionally, Li et al., demonstrated the effectiveness of the ASPP module for the building extraction task [

43]. Furthermore, multi-scale feature fusion based on Feature Pyramid Networks (FPN) [

44] is widely used to improve recognition performance in complex environments. This model is based on a top-down architecture to construct high-level semantic feature maps at all scales and predict feature maps at different scales. However, Liu et al., found that the unidirectional information propagation mechanism in FPN led to the underutilization of the underlying features and proposed the Path Aggregation Feature Pyramid Network (PAFPN) [

45], which enhances the feature pyramid by shortening the information path using the precise localization signal at the bottom and establishes a bottom-up path enhancement that strengthens the contextual relationship between the multilayer features. The superior performance of the model has been proven in numerous other detection tasks, such as ground aircraft [

46], tunnel surface defects [

47], traffic obstacles [

48], and other complex environments for target extraction. Still, its potential for building extraction tasks has yet to be effectively explored. The bottom features have been applied in some early models [

49,

50,

51], but it is needed to sufficiently extract and fuse the multi-scale elements to enhance the whole feature hierarchy.
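To make the dilated (“atrous”) convolution and ASPP ideas above concrete, the following is a minimal pure-Python sketch on a single-channel feature map. It is illustrative only and is not the module used in this paper: real ASPP modules use separate learned weights per branch, many channels, an image-level pooling branch, and a 1 × 1 fusion convolution, whereas here one toy kernel is shared by all branches and fusion is omitted. The default rates (1, 6, 12, 18) are an assumption modeled on common DeepLab-style settings.

```python
from typing import List, Sequence

Grid = List[List[float]]


def effective_kernel_size(k: int, rate: int) -> int:
    """Spatial extent covered by a k x k kernel dilated by `rate`."""
    return k + (k - 1) * (rate - 1)


def dilated_conv2d(x: Grid, kernel: Grid, rate: int) -> Grid:
    """Single-channel 2D cross-correlation (the 'convolution' of deep learning
    frameworks) with dilation `rate` and zero padding that keeps the output
    the same size as the input. Assumes a square kernel of odd size."""
    h, w, k = len(x), len(x[0]), len(kernel)
    pad = (k // 2) * rate  # half of the effective kernel extent
    out = [[0.0] * w for _ in range(h)]
    for i in range(h):
        for j in range(w):
            acc = 0.0
            for a in range(k):
                for b in range(k):
                    # Taps are `rate` pixels apart: the "holes" in the filter.
                    r, c = i - pad + a * rate, j - pad + b * rate
                    if 0 <= r < h and 0 <= c < w:  # zero padding outside
                        acc += kernel[a][b] * x[r][c]
            out[i][j] = acc
    return out


def toy_aspp(x: Grid, kernel: Grid,
             rates: Sequence[int] = (1, 6, 12, 18)) -> List[Grid]:
    """Toy ASPP: parallel dilated convolutions over the same input, with the
    branch outputs stacked as channels (the fusion step is omitted)."""
    return [dilated_conv2d(x, kernel, r) for r in rates]


if __name__ == "__main__":
    ones3 = [[1.0] * 3 for _ in range(3)]
    impulse = [[0.0] * 7 for _ in range(7)]
    impulse[3][3] = 1.0
    # With rate 2, a single pixel's response spans a 5 x 5 window but
    # touches only 9 positions spaced 2 pixels apart.
    y = dilated_conv2d(impulse, ones3, rate=2)
    print([effective_kernel_size(3, r) for r in (1, 6, 12, 18)])  # [3, 13, 25, 37]
    print(sum(map(sum, y)))  # 9.0
```

The sketch also shows a known caveat: when the rate is large relative to the map (rate 6 on a 7 × 7 map), almost every tap falls into the padding and the branch degenerates toward a 1 × 1 filter, which is why DeepLabv3 adds an image-level pooling branch.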

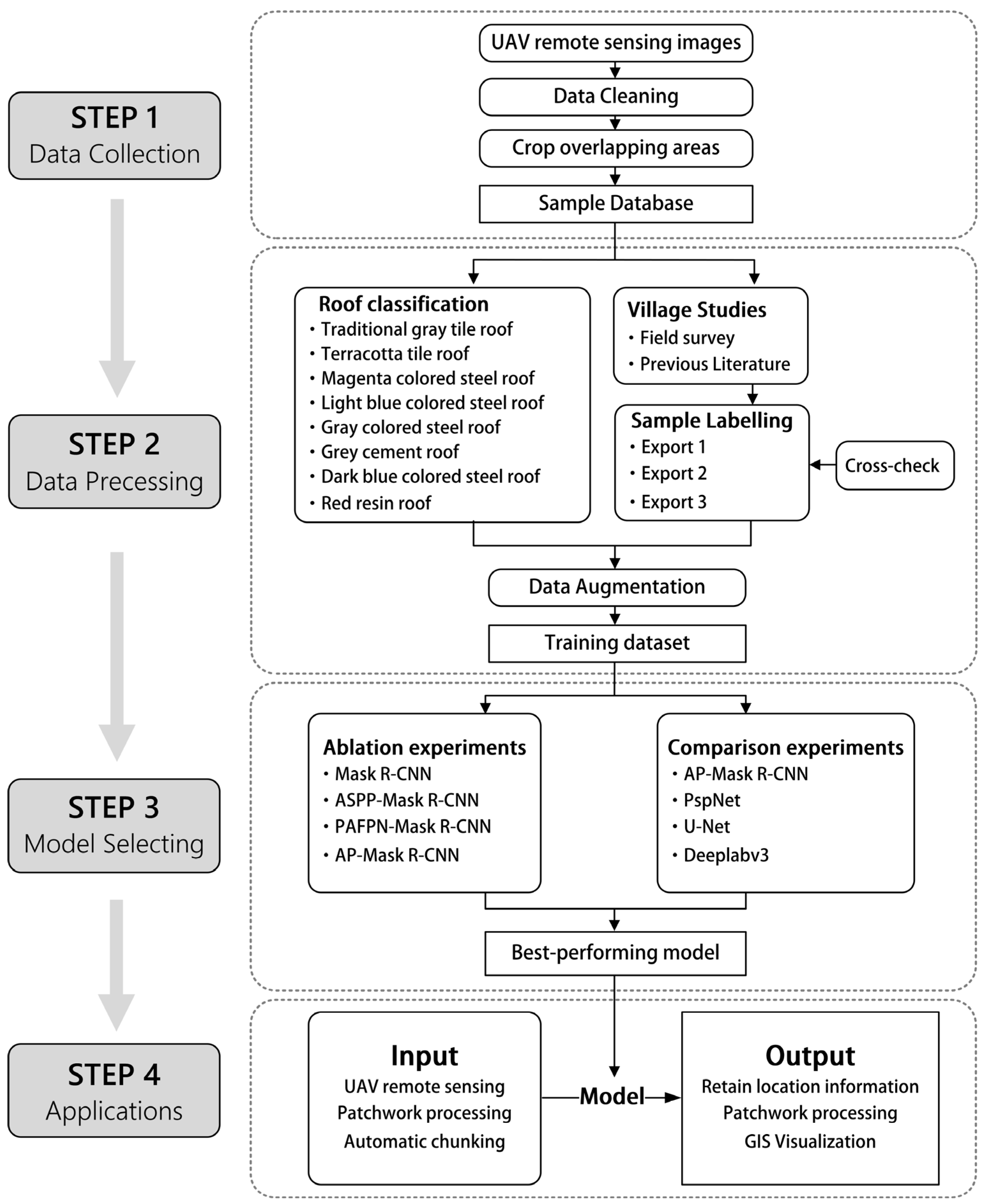

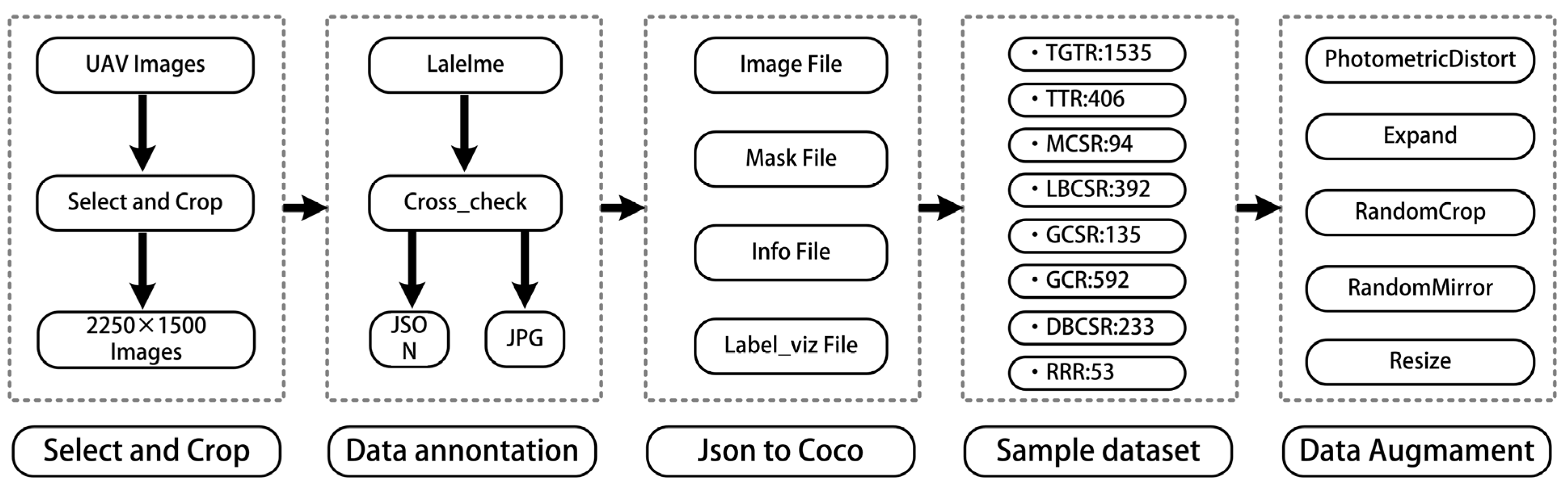

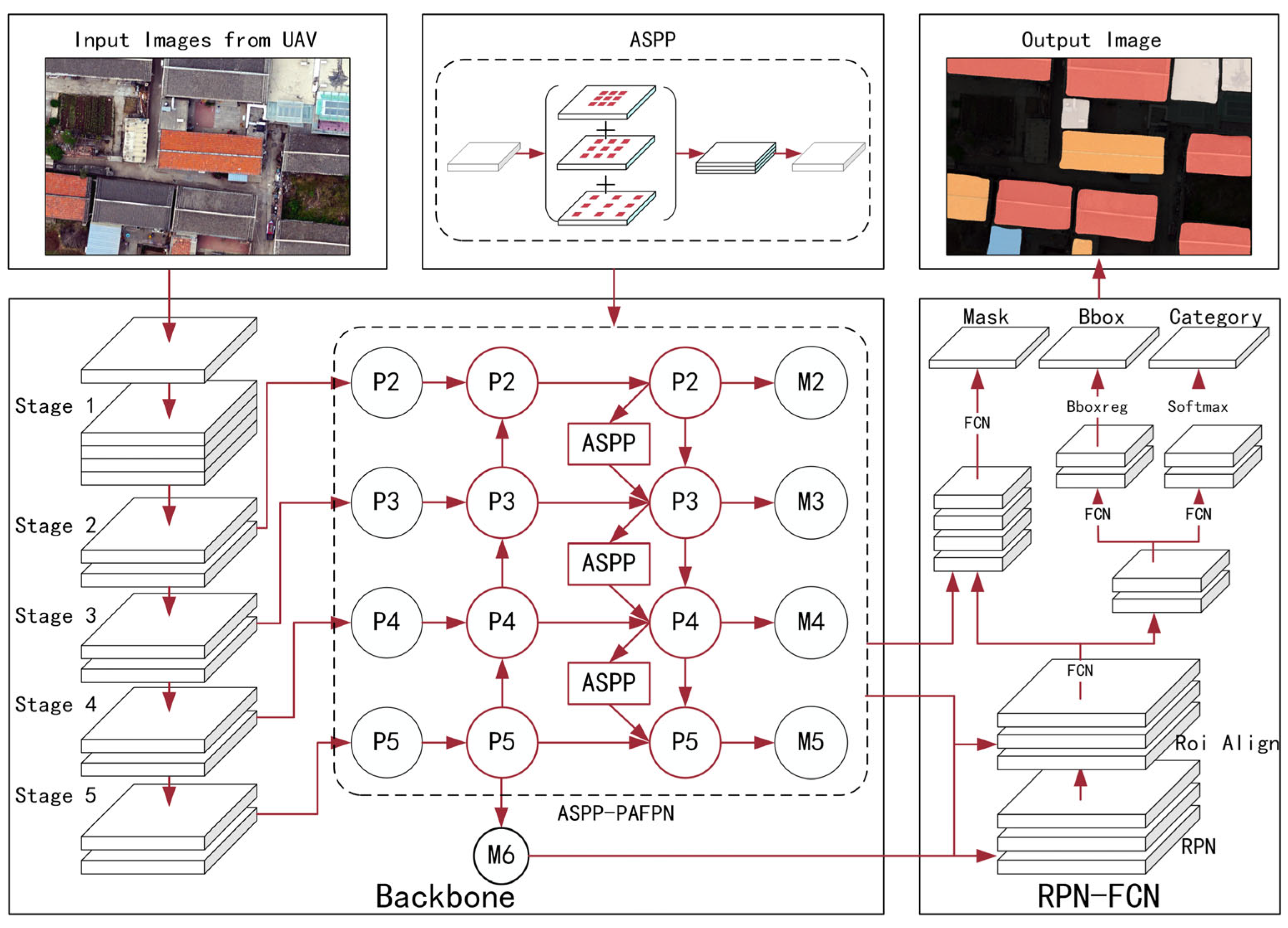

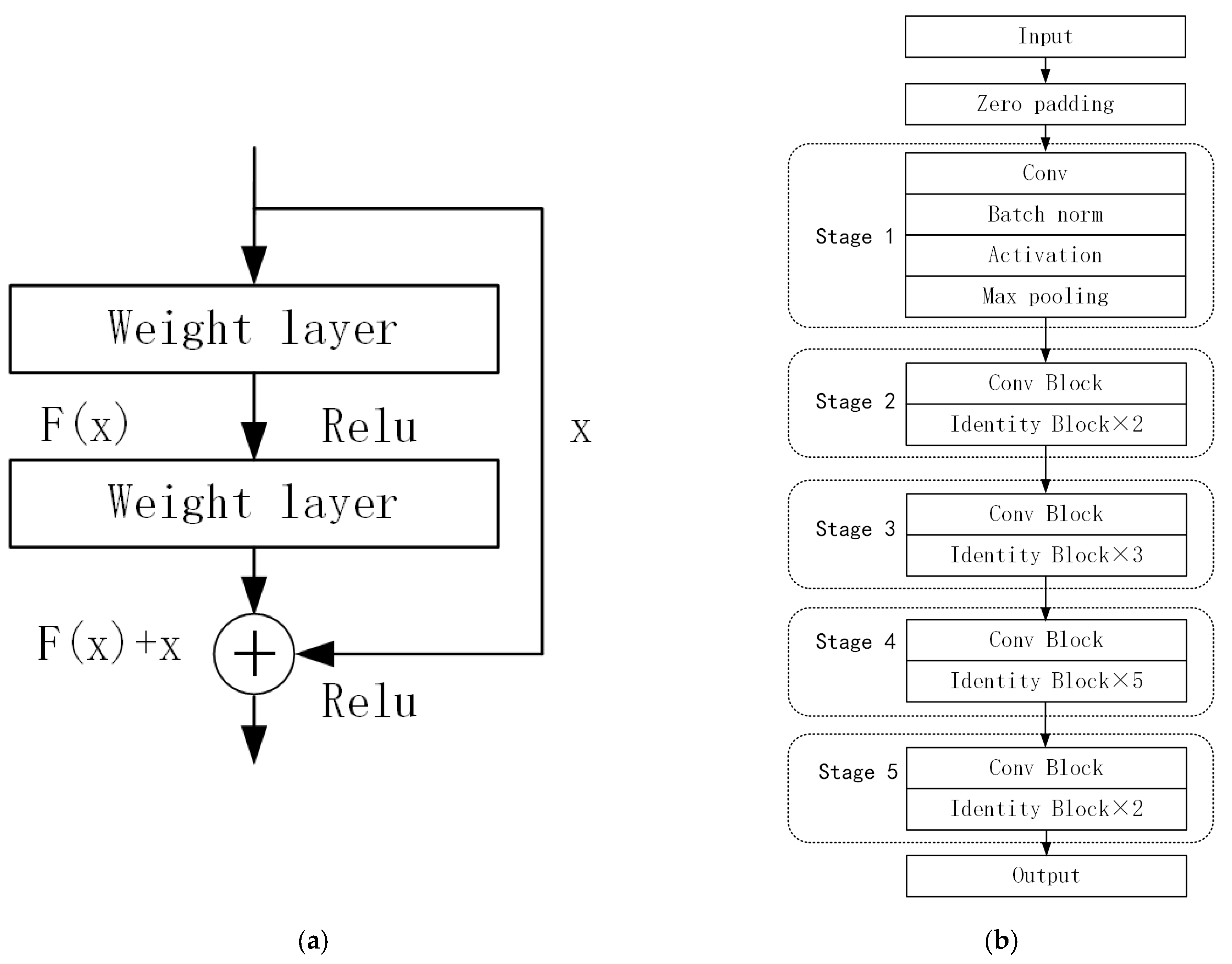

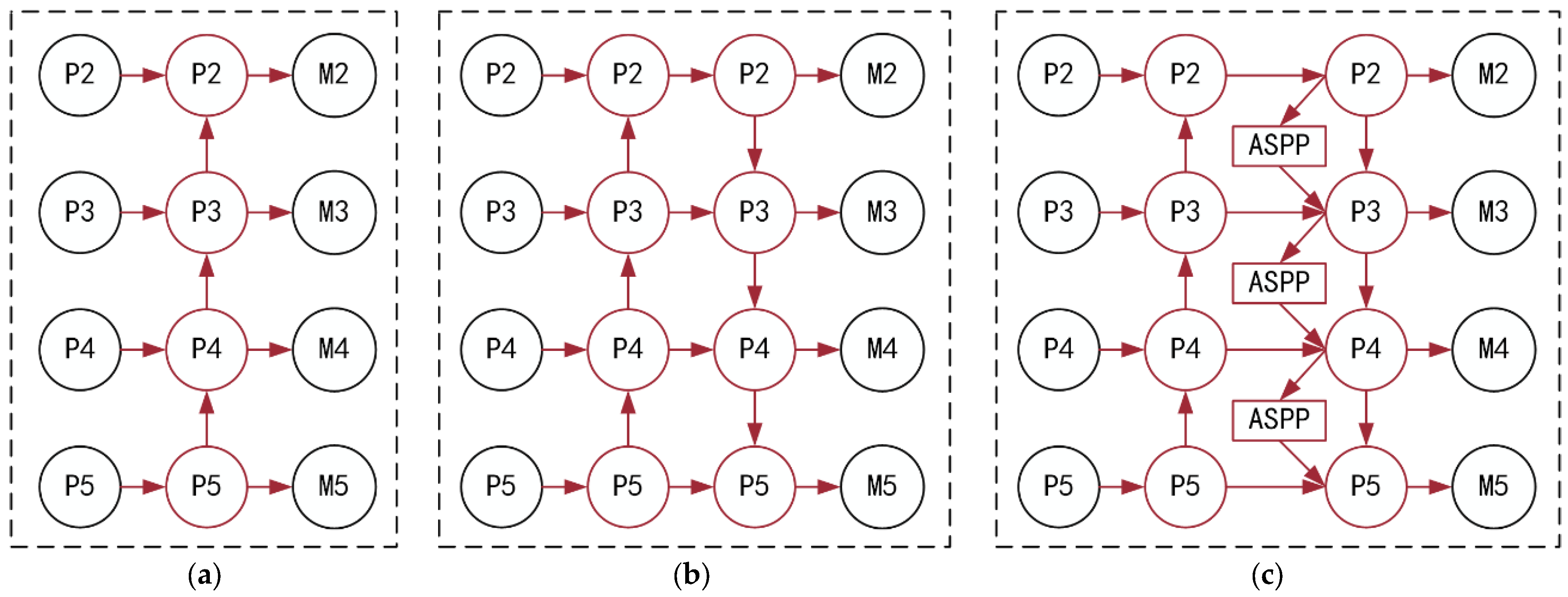

To address these challenges, this paper proposes a high-precision method for automatically extracting traditional village buildings that combines UAV remote sensing orthophotos with an improved Mask R-CNN. First, we construct the first orthophoto dataset of traditional Chinese village building roofs, which consists of fine-grained labels of building roofs in eight historical villages in Beijing. Second, we use the Mask R-CNN instance segmentation model as the basis, with the Path Aggregation Feature Pyramid Network (PAFPN) and Atrous Spatial Pyramid Pooling (ASPP) as the main strategies for enhancing the backbone model’s multi-scale feature extraction and fusion, and with data augmentation and transfer learning as auxiliary means to overcome the shortage of labeled data. Comparison and ablation experiments, evaluated with common computer vision metrics, verify the superior performance of this model. Finally, Hexi village is selected for validation: the extraction results are visualized in GIS, and the complete workflow demonstrates the robustness and generalization ability of our method. To our knowledge, this is the first fast extraction method developed in China for traditional village buildings.
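The difference between FPN’s top-down fusion and the extra bottom-up path that PAFPN adds can be illustrated with a toy pure-Python sketch on single-channel maps at four pyramid levels, finest first. The simplifications are assumptions of this sketch, not details of the model in this paper: lateral 1 × 1 convolutions are identities, upsampling is nearest-neighbour, the stride-2 3 × 3 convolution of the bottom-up path is replaced by 2 × 2 average pooling, and post-fusion convolutions are omitted. In a real backbone, C3 to C5 are themselves computed from C2 through many layers; the example zeroes them only to isolate the contribution of the short bottom-up path.

```python
from typing import List

Grid = List[List[float]]


def upsample2x(x: Grid) -> Grid:
    """Nearest-neighbour 2x upsampling."""
    return [[v for v in row for _ in range(2)] for row in x for _ in range(2)]


def downsample2x(x: Grid) -> Grid:
    """2 x 2 average pooling with stride 2 (stand-in for a stride-2 conv)."""
    return [
        [(x[i][j] + x[i][j + 1] + x[i + 1][j] + x[i + 1][j + 1]) / 4.0
         for j in range(0, len(x[0]), 2)]
        for i in range(0, len(x), 2)
    ]


def add(a: Grid, b: Grid) -> Grid:
    """Element-wise sum of two maps of equal size."""
    return [[p + q for p, q in zip(ra, rb)] for ra, rb in zip(a, b)]


def fpn_top_down(c: List[Grid]) -> List[Grid]:
    """FPN: start at the coarsest map and add each upsampled coarser output
    to the next finer level (c = [C2, C3, C4, C5], finest first)."""
    p: List[Grid] = [[] for _ in c]
    p[-1] = c[-1]
    for i in range(len(c) - 2, -1, -1):
        p[i] = add(c[i], upsample2x(p[i + 1]))
    return p


def pafpn_bottom_up(p: List[Grid]) -> List[Grid]:
    """PAFPN's extra path: start at the finest FPN map and add each
    downsampled finer output to the next coarser level, so low-level
    localization signals reach the top of the pyramid in a few steps."""
    n: List[Grid] = [[] for _ in p]
    n[0] = p[0]
    for i in range(1, len(p)):
        n[i] = add(p[i], downsample2x(n[i - 1]))
    return n


if __name__ == "__main__":
    # Hypothetical backbone maps: only the finest level C2 carries signal.
    sizes = [8, 4, 2, 1]
    c = [[[1.0 if s == 8 else 0.0] * s for _ in range(s)] for s in sizes]
    p = fpn_top_down(c)
    n = pafpn_bottom_up(p)
    print(p[-1], n[-1])  # [[0.0]] [[1.0]]
```

In this toy setting the top FPN level receives nothing from C2, while the PAFPN top level does after three fusion steps, which mirrors the “shortened information path” argument for PAFPN.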

This paper is organized as follows:

Section 2 describes the study area;

Section 3 describes in detail the traditional village building extraction method proposed in this paper, including data acquisition and preprocessing and the improved Mask R-CNN model architecture;

Section 4 conducts detailed experiments to verify the performance of the model;

Section 5 discusses various aspects of the research results and identifies limitations and directions for improvement; finally, Section 6 concludes the paper.

2. Study Area

As the capital of five major dynasties (Liao, Jin, Yuan, Ming, and Qing) and China’s political center, Beijing has many traditional villages of unparalleled historical, cultural, and social value. Most of these villages have long histories dating back to the Ming Dynasty (1368 to 1644 A.D.). As the early homes of the Great Wall’s defenders, they made remarkable contributions to the defense of the ancient capital, and they are essential for transmitting ancient military culture.

However, along with rapid urbanization, the contradiction between people’s pursuit of a better living environment and the preservation of traditional villages has become increasingly prominent, with many traditional villages experiencing severe problems such as hollowing out, land abuse, demolition of the old and construction of the new, and the destruction of a large number of traditional buildings, directly leading to the disappearance of cultural heritage. In recent years, Beijing has successively issued policy documents such as the Beijing Urban Master Plan (2016–2035) and the Technical Guidelines for Repairing Traditional Villages in Beijing, emphasizing the importance of protecting the architectural style of traditional villages.

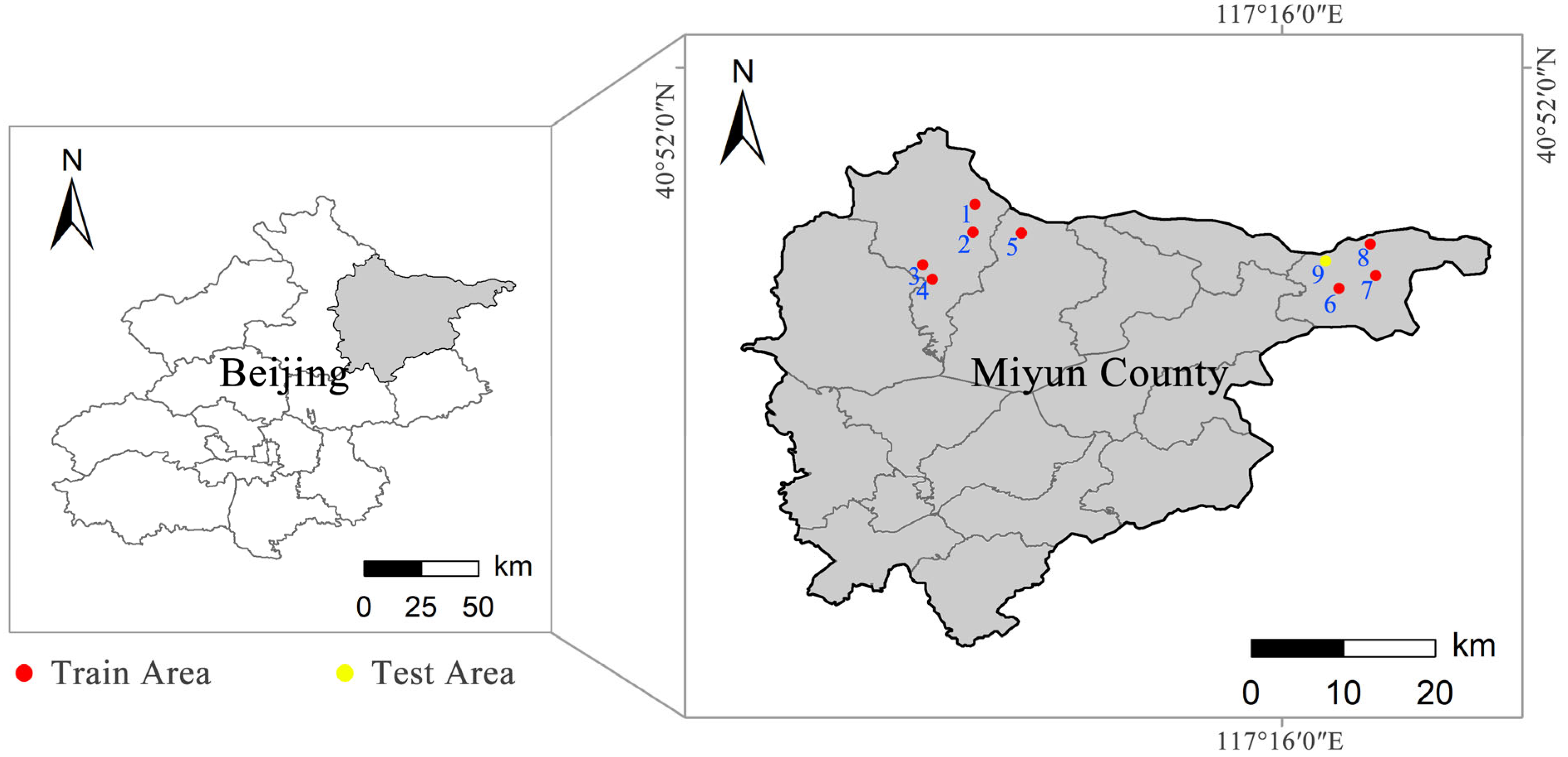

In this study, nine representative historical villages in Beijing (shown in Figure 2) were selected as data sources, comprising five traditional villages and four non-traditional villages. Eight of them, namely Baimaguan, Fengjiayu, Jijiaying, Shangyu, Xiaokou, Xiaying, Xituogu, and Yaoqiaoyu, were used for training, and Hexi village was used for application practice. These villages contain many traditional buildings with small gray tile roofs as well as modern facilities with various roof forms, which is typical of the classic village style in North China.

4. Results

To verify the precision and practicality of the AP_Mask R-CNN model, we designed three sets of experiments in this section: an ablation experiment, a comparison experiment, and application practice. The ablation and comparison experiments are assessed with evaluation metrics, whereas the application practice uses UAV remote sensing images of a traditional village as the test object and is analyzed qualitatively on the basis of the extraction results. The software environment used for all experiments is shown in Table 3.

4.1. Comparison Experiments

The comparison experiments investigate whether this model has advantages in the traditional village building extraction task. This section compares it with three advanced semantic segmentation models, DeepLabv3 [40], PSPNet [42], and U-Net [57], through quantitative comparison and comparative analysis of recognition results. For these semantic segmentation models, pixel accuracy is used as a metric. All three comparison models were built on the open-source U-Net, PSPNet, and DeepLabv3 code by bubbliiiing (https://github.com/bubbliiiing, accessed on 25 October 2022).

All comparison models were trained with SGD (stochastic gradient descent) at an initial learning rate of 0.01, which was adjusted during training by cosine annealing. In addition, because the number of pixels is imbalanced across categories in the dataset, all models use the focal loss function instead of the original cross-entropy loss function. The overall evaluation metrics of the models after 150 epochs of training are shown in Table 4. First, AP_Mask R-CNN outperforms the other models on the overall metrics. Regarding category-specific metrics, the accuracy curves across categories are similar for all models, suggesting that the accuracy for each category depends on the quality of that category’s data. Second, the F1-score and IoU of AP_Mask R-CNN are higher than those of the other models for terracotta tile roofs, light blue-colored steel roofs, gray-colored steel roofs, gray cement roofs, and dark blue-colored steel roofs, indicating a more significant advantage in recognizing different roof types. For dark blue-colored steel roofs in particular, U-Net, DeepLabv3, and PSPNet show very low recognition accuracy, which indicates that semantic segmentation models alone remain limited in recognizing multi-scale, multi-type, and multi-temporal roofs.
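The training setup described above (SGD with a cosine-annealed learning rate starting at 0.01, and focal loss in place of cross-entropy) can be sketched as follows. The minimum learning rate of 0, the absence of warm restarts, the focusing parameter γ = 2 (a common default), and the optional per-class weights α are assumptions of this sketch, not values reported in this paper; the loss is written for a single pixel for clarity.

```python
import math
from typing import Optional, Sequence


def cosine_annealing_lr(epoch: int, total_epochs: int,
                        lr_max: float = 0.01, lr_min: float = 0.0) -> float:
    """Learning rate at `epoch` (0-based) under cosine annealing from lr_max
    down to lr_min over `total_epochs` (no warm restarts)."""
    t = min(epoch, total_epochs) / total_epochs
    return lr_min + 0.5 * (lr_max - lr_min) * (1.0 + math.cos(math.pi * t))


def focal_loss(probs: Sequence[float], target: int, gamma: float = 2.0,
               alpha: Optional[Sequence[float]] = None) -> float:
    """Multi-class focal loss for one pixel.

    probs: softmax probabilities over classes; target: true class index.
    FL = -alpha_t * (1 - p_t) ** gamma * log(p_t). With gamma = 0 and no
    alpha it reduces to ordinary cross-entropy; gamma > 0 down-weights
    well-classified (easy, often majority-class) pixels.
    """
    p_t = max(probs[target], 1e-12)  # clamp to avoid log(0)
    a_t = 1.0 if alpha is None else alpha[target]
    return -a_t * (1.0 - p_t) ** gamma * math.log(p_t)


if __name__ == "__main__":
    # Schedule over the 150 training epochs mentioned in the text.
    print([round(cosine_annealing_lr(e, 150), 5) for e in (0, 75, 150)])
    # -> [0.01, 0.005, 0.0]
    # An easy pixel (p_t = 0.9) contributes far less than a hard one (p_t = 0.2).
    print(focal_loss([0.9, 0.1], 0) < focal_loss([0.2, 0.8], 0))  # True
```

With γ = 2, a pixel predicted at p_t = 0.9 has its cross-entropy scaled by (1 − 0.9)² ≈ 0.01, which is how focal loss reduces the dominance of abundant, easily classified pixels in an imbalanced dataset.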

4.2. Ablation Experiments

In this study, ablation experiments were conducted to verify the effectiveness of each module. The models used were (1) the baseline Mask R-CNN; (2) P_Mask R-CNN (with PAFPN); (3) A_Mask R-CNN (with ASPP); and (4) AP_Mask R-CNN (with ASPP-PAFPN). The overall evaluation metrics of all models were obtained after 150 epochs of training, and the changes in accuracy and the training loss were calculated.

The overall evaluation results of each model, the changes in the metrics under different module combinations, and the training loss curves are shown in Table 5, Table 6, and Figure 11. In terms of overall metrics, all three module combinations improve on the baseline to some extent. Mask R-CNN with ASPP-PAFPN improves average precision by 7.8%, recall by 4.6%, F1-score by 6.0%, and IoU by 8.5%; Mask R-CNN with only ASPP improves precision by 2.1%, recall by 3.4%, F1-score by 2.7%, and IoU by 3.1%; and Mask R-CNN with only PAFPN improves precision by 4.9%, recall by 3.1%, F1-score by 4.2%, and IoU by 2.3%. In summary, ASPP-PAFPN yields better results than PAFPN or ASPP alone. Regarding category-specific metrics, the accuracy changes are concentrated mainly in the low-accuracy categories, while categories with precision above 90%, such as traditional gray tile roofs and terracotta tile roofs, improve only slightly. As for the individual strategies, incorporating only the ASPP module gives the most significant accuracy improvements for dark blue-colored steel roofs (+9.0%) and magenta-colored steel roofs (+7.7%). Using only PAFPN gives substantial gains for red resin roofs (+15.7%) and dark blue-colored steel roofs (+10.9%), followed by gray cement roofs (+4.2%) and light blue-colored steel roofs (+3.6%). Incorporating both ASPP and PAFPN improves the F1-score, in decreasing order of gain, for red resin roofs (+15.7%), dark blue-colored steel roofs (+13.9%), magenta-colored steel roofs (+6.9%), light blue-colored steel roofs (+5.3%), gray cement roofs (+4.6%), gray-colored steel roofs (+3.2%), terracotta tile roofs (+2.1%), and traditional gray tile roofs (+1.2%). The training loss curves show that adding the ASPP and PAFPN modules does not noticeably speed up convergence, although AP_Mask R-CNN still shows an advantage in loss convergence.

The above results show that the ASPP and PAFPN modules improve the model’s performance. ASPP mainly benefits dark blue and magenta-colored steel roofs, whose scales and forms are more diverse. PAFPN is more sensitive to red resin roofs, light blue-colored steel roofs, and gray cement roofs, which are easily confused with the background and have smaller sample sizes. Combining the two improvements therefore retains more of their respective accuracy gains.
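The metrics reported in the comparison and ablation experiments (precision, recall, F1-score, IoU, and pixel accuracy for the semantic segmentation baselines) follow standard definitions. A minimal sketch computing them for one roof category from a pair of flattened label masks is given below. Counting at the pixel level is an assumption of this sketch; instance-level evaluation, as is usual for Mask R-CNN, would first match predicted and ground-truth instances, and the paper’s exact protocol is not restated here.

```python
from typing import Dict, Sequence


def class_metrics(pred: Sequence[int], truth: Sequence[int],
                  cls: int) -> Dict[str, float]:
    """Precision, recall, F1 and IoU for class `cls` from flattened masks."""
    tp = sum(1 for p, t in zip(pred, truth) if p == cls and t == cls)
    fp = sum(1 for p, t in zip(pred, truth) if p == cls and t != cls)
    fn = sum(1 for p, t in zip(pred, truth) if p != cls and t == cls)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = (2 * precision * recall / (precision + recall)
          if precision + recall else 0.0)
    iou = tp / (tp + fp + fn) if tp + fp + fn else 0.0
    return {"precision": precision, "recall": recall, "f1": f1, "iou": iou}


def pixel_accuracy(pred: Sequence[int], truth: Sequence[int]) -> float:
    """Fraction of pixels whose predicted label equals the ground truth."""
    return sum(p == t for p, t in zip(pred, truth)) / len(truth)


if __name__ == "__main__":
    # Hypothetical labels: 0 = background, 1 = one roof category.
    truth = [0, 0, 1, 1, 1, 1, 0, 0]
    pred = [0, 1, 1, 1, 1, 0, 0, 0]
    print(class_metrics(pred, truth, 1))
    # -> {'precision': 0.75, 'recall': 0.75, 'f1': 0.75, 'iou': 0.6}
    print(pixel_accuracy(pred, truth))  # 0.75
```

At the pixel level the two overlap metrics are linked by F1 = 2·IoU / (1 + IoU) (here 2 × 0.6 / 1.6 = 0.75), so for a single category they rank models identically; pixel accuracy, by contrast, is dominated by the majority class and can stay high even when a rare roof type is missed.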

To illustrate the impact of each module on detection, eight representative samples were selected from the test set according to category combination, target scale, roof density, and roof orientation, and predictions were made with each of the above models. The prediction results are shown alongside the original images and the ground truth annotations for comparison and analysis in Figure 12a–f. To highlight the differences between the ground truth and the extraction results, colored boxes are drawn: red boxes in the extraction results indicate incorrect extractions, and green boxes indicate entirely or partially missed extractions. By analyzing how these boxes vary, the effect of the different modules on different types of data can be derived.

The detection results show that the baseline model is affected by the complex village environment: the features of some categories are not modeled distinctly enough, resulting in more false detections, more missed detections, and severely jagged detection edges, mainly for small- and medium-scale gray cement roofs, red resin roofs, magenta-colored steel roofs, and gray-colored steel roofs. After ASPP is incorporated, the detection rate increases for small-scale gray cement roofs but decreases for red resin roofs; after PAFPN is incorporated, false detections of red resin roofs and magenta-colored steel roofs are reduced, and the detected roof shapes are more regular. Therefore, this study incorporates path aggregation feature fusion (PAFPN) and atrous spatial pyramid pooling (ASPP) into the Mask R-CNN model to reduce the loss of low-level feature information, strengthen the contextual relationships between multilayer features, and improve the feature extraction capability of the Mask R-CNN backbone, so that the model copes better with the complex multi-scale, multi-type, and multi-temporal building roof environment.

4.3. Application Practices

In this section, the traditional village of Hexi is used for application practice to test the generalization ability and transferability of the method. The village has a long history, covers an area of 4.7 square kilometers, and contains buildings of various types and scales. Selecting this village is significant in two respects: it verifies the performance of the AP_Mask R-CNN model, and it supports the preservation of the village’s buildings.

The workflow includes six steps: UAV image acquisition, orthophoto reconstruction, chunking, model detection, stitching, and visualization. After the UAV data for the selected area have been acquired, a high-resolution orthophoto is generated with ContextCapture software. Because the deep learning vision model limits the size of a single input image, the orthophoto must be chunked into tiles that retain the original projected coordinate system and geographic location. The tiles are then processed by the trained AP_Mask R-CNN model, and the model outputs are stitched together with Python and visualized in GIS.
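The chunking and stitching steps depend on each tile keeping its georeference. A minimal sketch is given below, assuming a north-up orthophoto whose georeference is stored as a GDAL-style six-term geotransform (x = GT0 + col·GT1 + row·GT2, y = GT3 + col·GT4 + row·GT5). The tile size (1024 px), the overlap (128 px), and the example coordinates are hypothetical values rather than those used in this study, and merging duplicate detections in overlap zones is left out.

```python
from typing import List, Tuple

GeoTransform = Tuple[float, float, float, float, float, float]


def axis_offsets(length: int, tile: int, overlap: int) -> List[int]:
    """Start offsets of tiles along one axis; the last tile is shifted so it
    ends exactly at the image edge instead of running past it."""
    if length <= tile:
        return [0]
    step = tile - overlap
    offsets = list(range(0, length - tile + 1, step))
    if offsets[-1] + tile < length:
        offsets.append(length - tile)
    return offsets


def tile_windows(width: int, height: int, tile: int = 1024,
                 overlap: int = 128) -> List[Tuple[int, int, int, int]]:
    """(col_off, row_off, tile_width, tile_height) for every tile, row-major."""
    return [
        (c, r, min(tile, width), min(tile, height))
        for r in axis_offsets(height, tile, overlap)
        for c in axis_offsets(width, tile, overlap)
    ]


def pixel_to_map(gt: GeoTransform, col: float, row: float) -> Tuple[float, float]:
    """GDAL geotransform convention: pixel (col, row) -> map (x, y)."""
    return (gt[0] + col * gt[1] + row * gt[2],
            gt[3] + col * gt[4] + row * gt[5])


def tile_geotransform(gt: GeoTransform, col_off: int, row_off: int) -> GeoTransform:
    """Geotransform of a tile: same pixel size, origin moved to its corner."""
    x0, y0 = pixel_to_map(gt, col_off, row_off)
    return (x0, gt[1], gt[2], y0, gt[4], gt[5])


if __name__ == "__main__":
    # Hypothetical orthophoto: 2500 x 2000 px, 0.25 m pixels, projected CRS.
    gt = (500000.0, 0.25, 0.0, 4480000.0, 0.0, -0.25)
    windows = tile_windows(2500, 2000)
    print(len(windows))  # 9
    col_off, row_off, _, _ = windows[4]
    tgt = tile_geotransform(gt, col_off, row_off)
    # A roof-outline vertex detected at tile pixel (10, 20), in map coordinates.
    print(pixel_to_map(tgt, 10, 20))  # (500226.5, 4479771.0)
```

Because each tile carries its own geotransform, a detection can be converted to map coordinates independently of the other tiles, and stitching reduces to collecting the converted polygons into one GIS layer.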

The visualization results for Hexi village and the original images are shown in Figure 13 and Figure 14. Compared with the comparison and ablation experiments, the overall detection performance on Hexi village is better. Although detection precision decreases slightly for terracotta tile roofs, red resin roofs, and gray cement roofs, the detection rate and accuracy for the other types, such as traditional gray tile roofs and dark blue-colored steel roofs, improve considerably, which indicates that the proposed method has good generalization ability and transferability.

6. Conclusions

In this study, an innovative workflow for automatically extracting traditional village buildings from UAV images is established to promote the conservation and development of traditional Chinese villages. The method combines deep learning and UAV remote sensing to automatically classify, locate, and segment different building roofs in traditional villages. First, we collected an orthophoto dataset of the roofs of typical traditional village buildings in Beijing and annotated it finely according to a consistent classification. Second, despite the complex multi-scale, multi-type, and multi-temporal architectural environment of traditional villages, the improved Mask R-CNN model performs well in extracting roof features from this self-built dataset and accurately extracts traditional village architectural information. Given the architectural survey tasks currently facing many villages in China, efficient and accurate building extraction is an effective means of addressing this problem.

Although the current framework is not yet mature, it has shown considerable potential for the automatic extraction of traditional village buildings in terms of both precision and practicality. Accuracy is the first focus of this study. The primary strategy is to combine ASPP and PAFPN to improve the backbone model’s complex feature extraction and fusion, while transfer learning and data augmentation serve as auxiliary strategies to enhance the model’s performance in automatic building segmentation, localization, and classification. The ablation experiments show significant gains in precision, recall, and F1-score, indicating that the improved method improves both the stability of the training process and the effectiveness of the final model for building extraction. Practicality, the other focus of this study, is ensured mainly through the choice of study area and strict classification criteria. To this end, first, eight historical villages in Beijing with different geographical features, village scales, and building types were selected as the study area, and imagery was acquired under good weather conditions with suitable flight techniques to ensure a reliable source for the building roof dataset. Second, methods such as cross-validation support the classification of building roofs, minimizing classification and labeling errors in the dataset input to the model. Finally, we chose the traditional Chinese village of Hexi for application practice. Its extraction results verify the robustness and generalization ability of the proposed model, indicating that the model can transfer to some extent to the evaluation of other traditional villages in North China.