1. Introduction

Overgrazing destroys grassland ecological functions, so surveying grazing animals is of great significance for maintaining the balance between grass and livestock. Investigating the geographical and temporal distribution of different grazing livestock (sheep, cattle, horses, etc.) provides basic and indispensable information for grassland ecological management [1].

Satellites and manned aircraft were commonly used in early animal surveys. Spaceborne remote sensing data with low and medium spatial resolution (1–60 m) have been used for indirect animal surveys since the early 1980s [2], mainly by detecting signs that indicate the presence of animals in an area, such as fecal counts [3,4,5], food removal, and burrow counts [6,7]. Submeter very-high-resolution (VHR) spaceborne imagery has potential for modeling the population dynamics of large (>0.6 m) wild animals at large spatial and temporal scales, but it has difficulty discerning small (<0.6 m) animals at the species level, even though high-resolution commercial satellites such as WorldView-3 and WorldView-4 have reached a ground resolution of up to 0.31 m in panchromatic mode [2]. Although satellites offer wide coverage and do not disturb animals, they are limited by the weather, and their resolution is still not high enough to finely distinguish animal objects. Manned aircraft have also been widely used for wild animal surveys, such as kangaroo censuses in New South Wales, Australia [8] and polar bear censuses in the seasonally ice-free Foxe Basin, Canada [9]. Although manned aircraft are flexible in terms of survey time and area, they are relatively expensive [10], require qualified pilots, and may be subject to individual observer biases when used in real-time censuses [8].
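To make the resolution limit concrete, the number of pixels an animal spans can be estimated by dividing its body length by the ground resolution. The sketch below is a back-of-the-envelope illustration only: the function name `pixels_across` is hypothetical, and the calculation ignores viewing angle, blur, and animal posture.

```python
def pixels_across(object_length_m: float, ground_resolution_m: float) -> float:
    """Approximate number of pixels spanned by an object of the given length."""
    if ground_resolution_m <= 0:
        raise ValueError("ground resolution must be positive")
    return object_length_m / ground_resolution_m


# A 0.6 m animal at the 0.31 m panchromatic resolution cited above.
vhr_pixels = pixels_across(0.6, 0.31)
# The same animal at the coarsest medium-resolution figure cited above (60 m).
coarse_pixels = pixels_across(0.6, 60.0)

print(f"{vhr_pixels:.2f} px at 0.31 m, {coarse_pixels:.3f} px at 60 m")
```

Under these assumptions, even at 0.31 m resolution a 0.6 m animal spans only about two pixels, which is consistent with the difficulty of species-level discrimination noted above.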

In recent years, unmanned aerial vehicles (UAVs), a convenient and low-cost remote sensing platform, have been widely used in various fields, including wild animal surveys. Compared with manned helicopters, UAVs are more flexible and quieter; they keep a distance between the observer and the animals, which protects field investigators in dangerous environments and avoids human interference with animal habitats. Previous surveys relied on manually observing and counting animals in large numbers of images, so researchers developed a series of automatic and semiautomatic object detection methods to improve efficiency. Moreover, some scholars [11] compared the factors affecting the detection probability of ground observation, manual inspection, and automatic detection from UAV images. They concluded that the combination of drone-captured imagery and machine learning does not suffer from the same biases that affect conventional ground surveys and could better provide information for managing the ecological population [11].

Studies have shown that simple threshold-based methods are still sufficient for detecting and counting animals that have similar grayscale values and differ significantly from the background. For example, using threshold segmentation and template matching techniques, Gonzalez et al. [12] developed an algorithm to count and track koalas and deer in UAV RGB and thermal imaging videos. However, the accuracy of these methods usually drops sharply against complex backgrounds. As higher-resolution images became available, researchers developed various machine learning algorithms to extract more complex features. Xue et al. [13] developed a semi-supervised object-based method that combined a wavelet algorithm with an adaptive-network-based fuzzy inference system (ANFIS) to detect and count wildebeests and zebras in a single VHR GeoEye-1 panchromatic image of open savanna. The accuracy of this method was significantly higher than that of the traditional threshold-based method (0.79 vs. 0.58). Torney et al. [14] developed a method that combined rotation-invariant object descriptors with machine learning algorithms to detect and count wildebeests in aerial images collected in the Serengeti National Park, Tanzania. The algorithm was more accurate for the total count than both manual counts, although its per-image error rates were greater than those of the manual counts, and its recognition accuracy was 74.15%. Rey et al. [10] proposed a semiautomated, data-driven active learning system that combined an object proposal strategy with an ensemble of exemplar support vector machine (EESVM) models to detect large mammals, including common elands, greater kudus, and gemsboks, in the semiarid African savanna from 6500 RGB UAS images, achieving a recall of 75% at a precision of 10%. The authors argued that recall is much more important than precision in this application. Although machine learning methods that are not based on deep neural networks can still produce good detection results in simple cases, they usually cannot fully mine complex animal features.
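The threshold-based counting mentioned at the start of this paragraph can be sketched as follows. This is a minimal illustration under stated assumptions, not the algorithm of Gonzalez et al. [12]: the function `count_bright_blobs`, the toy image, and the threshold and size values are all hypothetical, and the sketch covers only thresholding plus connected-region counting (no template matching or tracking).

```python
from collections import deque


def count_bright_blobs(image, threshold, min_pixels=1):
    """Count 4-connected regions whose pixel values exceed `threshold`."""
    rows, cols = len(image), len(image[0])
    seen = [[False] * cols for _ in range(rows)]
    count = 0
    for r in range(rows):
        for c in range(cols):
            if seen[r][c] or image[r][c] <= threshold:
                continue
            # Flood-fill one bright region and measure its size.
            size = 0
            queue = deque([(r, c)])
            seen[r][c] = True
            while queue:
                y, x = queue.popleft()
                size += 1
                for ny, nx in ((y - 1, x), (y + 1, x), (y, x - 1), (y, x + 1)):
                    inside = 0 <= ny < rows and 0 <= nx < cols
                    if inside and not seen[ny][nx] and image[ny][nx] > threshold:
                        seen[ny][nx] = True
                        queue.append((ny, nx))
            # Discard regions too small to be an animal (e.g. sensor noise).
            if size >= min_pixels:
                count += 1
    return count


# Hypothetical grayscale patch: dark background (10) with three bright regions.
toy = [
    [10, 10, 10, 10, 10, 10],
    [10, 200, 210, 10, 10, 10],
    [10, 205, 10, 10, 190, 10],
    [10, 10, 10, 10, 195, 10],
    [10, 10, 220, 10, 10, 10],
]
all_blobs = count_bright_blobs(toy, threshold=128)
large_blobs = count_bright_blobs(toy, threshold=128, min_pixels=2)
print(all_blobs, large_blobs)
```

The sketch works only because the bright regions differ strongly from a uniform background. Bright rocks or uneven illumination would pass the same threshold, which is the weakness against complex backgrounds described above.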

Deep learning technology, such as convolutional neural networks (CNNs), has developed rapidly in recent years and achieved great success in computer vision. Compared with traditional methods, which extract only shallow image features, CNNs can automatically learn much richer semantic information and high-level image features with higher learning efficiency, and thus describe the differences between object types more comprehensively. CNN-based object detection algorithms include anchor-based and anchor-free models. Anchor-based models include Faster R-CNN [15], RetinaNet [16], YOLOv3 [17], YOLOv7 [18], etc. These models require the anchor hyperparameters to be tuned for training so that the anchors better match the sizes of the objects in the dataset. Anchor-free models are more convenient because they need no such process; representative models include FCOS [19], CenterNet [20], YOLOX [21], etc.
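The difference between the two families can be illustrated with a small sketch. It is a simplified illustration, not the implementation of any cited model: the helper names, the 12 × 8 pixel "sheep" box, and the anchor sizes and ratios are hypothetical. The anchor-free target follows the FCOS-style idea of regressing the distances from a location to the four box sides.

```python
def make_anchors(cx, cy, sizes, ratios):
    """Anchor-based: predefined boxes (x1, y1, x2, y2) centred on one location."""
    anchors = []
    for size in sizes:
        for ratio in ratios:  # ratio = width / height, area stays size * size
            w = size * ratio ** 0.5
            h = size / ratio ** 0.5
            anchors.append((cx - w / 2, cy - h / 2, cx + w / 2, cy + h / 2))
    return anchors


def iou(a, b):
    """Intersection over union of two (x1, y1, x2, y2) boxes."""
    iw = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    ih = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = iw * ih
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    return inter / (area_a + area_b - inter)


def best_iou(box, anchors):
    """Highest overlap any anchor achieves with the ground-truth box."""
    return max(iou(box, anchor) for anchor in anchors)


def anchor_free_target(px, py, box):
    """Anchor-free: distances (left, top, right, bottom) from a point to the box sides."""
    x1, y1, x2, y2 = box
    if not (x1 <= px <= x2 and y1 <= py <= y2):
        return None  # the point lies outside the object
    return (px - x1, py - y1, x2 - px, y2 - py)


sheep = (100, 100, 112, 108)   # hypothetical 12 x 8 pixel object
cx, cy = 106, 104              # location at the object centre
ratios = (0.5, 1.0, 2.0)

large = best_iou(sheep, make_anchors(cx, cy, (32, 64), ratios))
small = best_iou(sheep, make_anchors(cx, cy, (8, 16), ratios))
target = anchor_free_target(cx, cy, sheep)
print(round(large, 3), round(small, 3), target)
```

In this toy setting, anchors sized for larger objects overlap the small box poorly (IoU below 0.1), while anchors retuned to the object scale reach an IoU of about 0.67. The anchor-free target is defined directly from the box, with no size hyperparameters to adjust.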

Some researchers have also introduced deep learning methods to detect animal objects in UAV remote sensing images. For example, in 2017, Kellenberger et al. [22] used a two-branch CNN structure to detect wild animals in the Kuzikus Wildlife Conservation Park in Namibia, and the precision and speed of the model were greatly improved compared with Fast R-CNN. In 2018, Kellenberger et al. [23] studied how to extend CNNs to large-scale wildlife census tasks. When the recall was set to 90%, the false positives of the CNN were reduced by an order of magnitude, but the precision of the model was still low. In short, these two methods do not consider the overall performance of the model and cannot guarantee good performance in both recall and precision. In 2020, Roosjen et al. [24] used the ResNet-18 neural network to automatically detect and count spotted wing drosophila, including sex prediction and discrimination. The results showed that UAV images have potential for research on and application in integrated pest management (IPM) strategies. In 2020, Peng et al. [25] developed an automatic detection model for kiangs in Tibet, based on an improved Faster R-CNN aimed at small object detection in UAV images, and improved its reported detection score from 0.85 to 0.94. However, the dataset in this study was relatively small and contained only one type of animal object, so the ability to classify similar types of objects has not been verified.
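The trade-off between recall and precision discussed above can be made concrete with the standard definitions. In the sketch below, the function name and the detection counts are hypothetical; the counts are invented solely to reproduce the 75% recall and 10% precision quoted earlier for Rey et al. [10]. The F1 score (the harmonic mean of precision and recall) is used here only as one common way to summarize both quantities, not as the metric of any cited study.

```python
def precision_recall_f1(true_pos: int, false_pos: int, false_neg: int):
    """Return (precision, recall, F1) from raw detection counts."""
    detected = true_pos + false_pos
    actual = true_pos + false_neg
    precision = true_pos / detected if detected else 0.0
    recall = true_pos / actual if actual else 0.0
    total = precision + recall
    f1 = 2 * precision * recall / total if total else 0.0
    return precision, recall, f1


# Hypothetical census: 100 animals present, 75 found, 675 false alarms.
precision, recall, f1 = precision_recall_f1(true_pos=75, false_pos=675, false_neg=25)
print(f"precision={precision:.2f} recall={recall:.2f} F1={f1:.3f}")
```

With these illustrative counts, a detector that finds three quarters of the animals still produces nine false alarms for every correct detection, and the combined score stays below 0.2. This is why a model needs to be evaluated on both measures together.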

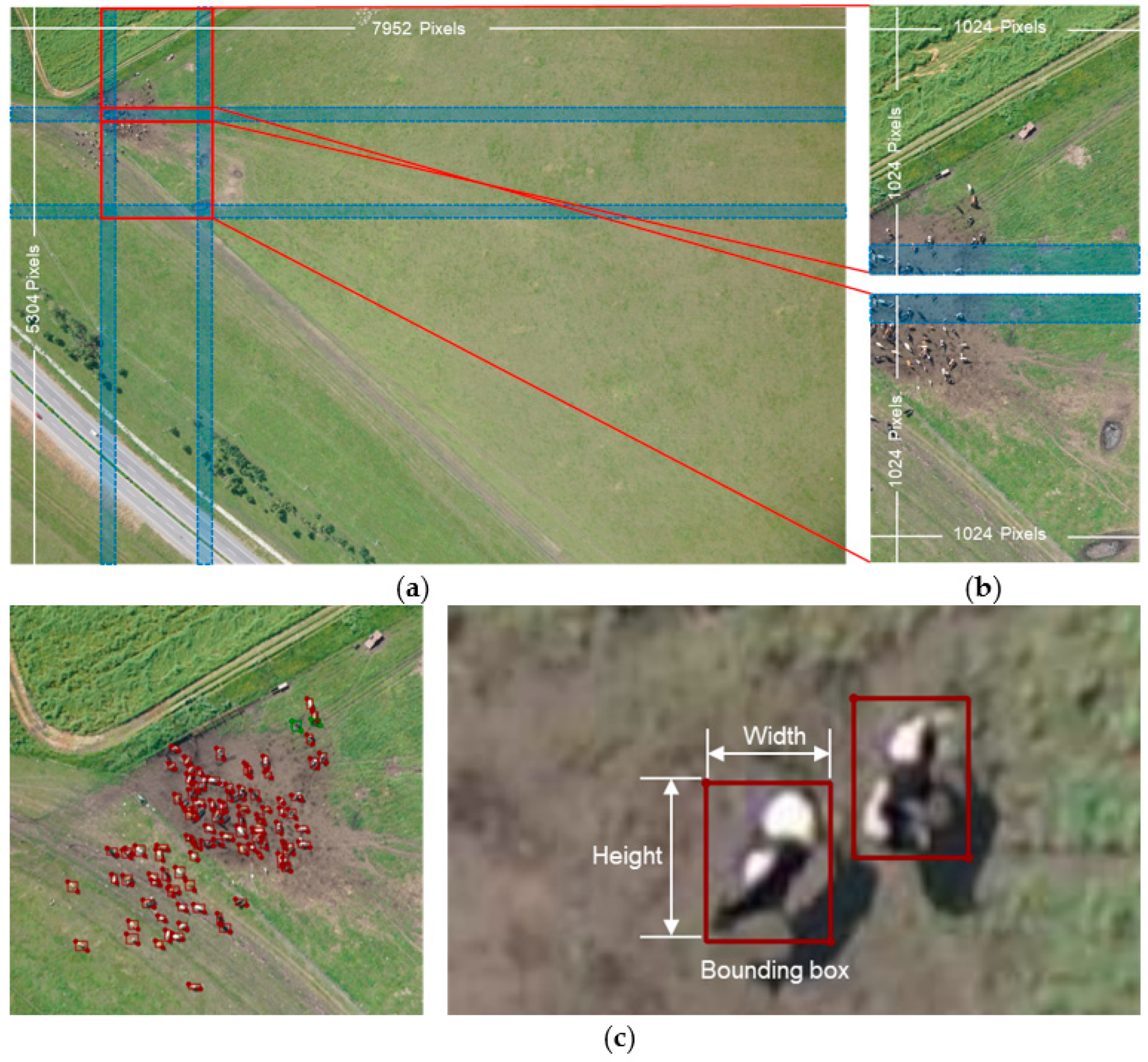

Detecting grazing livestock in UAV images of grassland differs both from object detection in natural images taken on the ground and from the detection of other objects in remote sensing images, which poses the following challenges for algorithm design.

First, for the sake of survey efficiency, the field of view tends to be large and the image resolution low. Animal objects therefore occupy only a few pixels, which makes it difficult to extract useful and distinguishable features for detection. Moreover, grassland grazing livestock such as cattle, horses, and sheep share similar characteristics and are much harder to distinguish than the objects of classic remote sensing applications, such as vehicles, aircraft, and ships.

Second, UAV images contain large areas of irrelevant, complex background with changeable illumination conditions, and many false objects exist, such as rocks, haystacks, and woods. Moreover, because animals change posture, animals of the same category may look different in the imagery.

In addition, as deep learning network models have developed, deeper high-precision networks have come to consume large amounts of computing resources, which makes them hard to deploy on portable, miniaturized platforms and to use for real-time processing. Model size is therefore an important criterion in model construction, alongside precision.
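As a rough illustration of why model size grows quickly, the parameter count of a standard convolutional layer can be computed directly. The sketch below is a generic calculation, not a description of the proposed model: the function names and layer shapes are hypothetical, and weights are assumed to be stored as 32-bit floats.

```python
def conv_params(kernel: int, in_channels: int, out_channels: int, bias: bool = True) -> int:
    """Number of learnable parameters in a standard 2-D convolution layer."""
    weights = kernel * kernel * in_channels * out_channels
    return weights + (out_channels if bias else 0)


def size_megabytes(num_params: int, bytes_per_param: int = 4) -> float:
    """Storage needed for the parameters, assuming 4 bytes each (float32)."""
    return num_params * bytes_per_param / 1e6


wide = conv_params(3, 256, 256)     # hypothetical 3 x 3 layer with 256 channels
narrow = conv_params(3, 128, 128)   # same layer with the channel width halved

print(wide, f"{size_megabytes(wide):.2f} MB")
print(narrow, f"{size_megabytes(narrow):.2f} MB")
```

Halving the channel width cuts the parameters of this single layer by roughly a factor of four, which is one reason lightweight detectors rely on narrower layers.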

To address these difficulties, we propose an effective grazing livestock detection model (GLDM) based on YOLOX-Nano [21]. The rest of the paper is organized as follows.

A grazing livestock dataset was established from UAV imagery of the Hulunbuir grassland; Section 2 describes the dataset in detail and shows some examples. Section 3 elaborates the proposed model. Comparison, ablation, and multi-scale adaptation experiments were conducted on the model; their details and results are presented in Section 4. Finally, Section 5 summarizes and concludes the paper.