1. Introduction

Parallel machine scheduling only has an important role not in manufacturing (e.g., electronic and chemical industries), but in many scheduling processes, including transport scheduling like train schedules, airport management like landing scheduling, and hospital management, such as surgery scheduling. Symmetry also has a presence in different scheduling problems [

1,

2]. Parallel machine scheduling can be considered as complex artificial systems that have the characteristics of randomness, multi constraint, complexity, and multi-objective, etc. Job scheduling problems are known as NP-hard problems, due to their complexity, randomness, multi-objectives, and multi-variables [

3]. Therefore, machine job scheduling problems are very important and they have gained a huge influence to increase manufacturing productivity and to improve service operations. More so, they designate limited resources to jobs with reference to operational constraints (i.e., to obtain the typical usage of available resources) [

4,

5]. Such problems are complex to solve with a specific algorithm during an exact time. Therefore, many studies had been presented while using various heuristic algorithms to propose solutions that may solve machine scheduling problems [

6,

7,

8].

In traditional parallel machine scheduling problems (PMSPs), independent

n jobs are to be processed on a number of identical

m machines. Accordingly, each job must be executed on one of the

m machines within a fixed

t time. There are three well-known types of PMSPs, uniform, identical, and unrelated machines. The identical machines process jobs within the same time in all machines. In uniform machines, job processing implemented jobs at a regular rate at different speeds. Where UPMSPs different jobs are processed in machines at various rates, and a machine can implement different jobs at various rates [

9].

UPMSPs are employed in various applications, such as various manufacturing industries, including food processing plants, and car factories [

10], semiconductor [

11,

12], tobacco [

13], textile [

14], petroleum [

15], and tire [

16]. Additionally, they have been applied in multiprocessor computer [

17,

18,

19], for multithreading [

20], in hospital operating rooms [

21], human resources management [

22], mail facilities [

23], printing [

24], pharmacy automation [

25], vehicular networks [

26], and heterogeneous systems that include GPU and CPU [

27].

In previous literature, numerous meta-heuristic methods were utilized to address scheduling problems in unrelated parallel machines such as ant colony optimization (ACO) [

28,

29], genetic algorithm (GA) [

15], simulated annealing (SA) [

30], tabu search (TS) algorithm [

31], firefly algorithm (FA) [

32], particle swarm optimization (PSO) [

33], artificial bee colony (ABC) [

34], or their combinations, such as hybridization of GA and SA [

35].

In this paper, we design a new model to solve the problems of unrelated parallel machine scheduling that us based on improved harris hawks optimizer (HHO) by salp swarm algorithm (SSA). HHO is a new nature-inspired optimizer presented by Heidari et al. [

36]. It is influenced by the cooperative behavior of Harris’ Hawks that hunt escaping rabbits. Heidari et al. [

36] demonstrated that HHO outperforms nature-inspired techniques in 29 engineering problems. It had been used in various applications, such as feature selection [

37], engineering problems [

38,

39,

40,

41,

42,

43], satellite image processing [

44], prediction models [

45], and scheduling tasks in cloud computing [

46]. SSA is also a nature-inspired method that simulates the behavior of Salpidae’s family. It was employed in different fields, such as node localization in wireless sensor network [

47], feature selection [

48,

49,

50], prediction models [

51], cloud computing [

52], and image segmentation [

53,

54]. From these applications for HHO and SSA, it has been observed the high ability of both of them to find the optimal solution for different optimization problems. As well as, in most cases, they provided results better than the comparative algorithms. However, each of them still has its limitations that motivated us to benefit from the advantages of both of them.

The modified version of HHO is called MHHO, in which the SSA is used to enhance the performance of HHO to be applied for solving different UPMSPs problems. SSA works as a local search for HHO to improve its performance and decrease its computational time.

This paper provides the following contributions:

The improved HHO with SSA is adopted to solve the UPMSPS problem for small and large jobs on different machines with setup time.

The results of numeric experiments is presented to validate the proposed method with different conditions.

We compare the MHHO method with known methods in order to demonstrate the superiority of the MHHO over existing methods.

The rest parts of this study are arranged, as follows.

Section 2 presents a review of previous works on UPMSPs.

Section 3 presents UPMSPS preliminaries. Next, the proposed algorithm is described in

Section 4, while in

Section 5, experiments and results are given to assess the performance of the proposed algorithm. Finally, we conclude our paper and remark future research in

Section 6.

2. Literature Review

In this section, a number of previous published literature on UPMSPs is described. We encourage the reader to see the comprehensive surveys [

6,

7,

8] to obtain more knowledge about the earlier works on this research direction. Arnaout et al. [

28] applied the ACO algorithm to address UPMSPs. The performance of the introduced algorithm outperforms the TS algorithm and other meta-heuristic methods. In [

17], another metaheuristic method used to solve UPMSPs based on simple-iterated greedy local search. The proposed method produced efficient solutions. In [

55], the authors improved the GA by integrating the dominant propensities to solve UPMSPs. The proposed hybrid GADP algorithm reached the optimal solution and compared with previous heuristics. In [

56], another algorithm, called GRASP, is presented to deal with the scheduling problems of unrelated parallel machine. They compared GRASP with existed methods and approved its superiority over existed UPMSPs methods. Rodriguez et al. [

57] proposed an effective algorithm, namely iterative greedy (IG), to solve a large-scale UPMSPs. They compared their with several previous UMPSPs methods, and showed that IG outperforms previous methods. In [

29], a two-stage ACO scheme is presented to solve the UPSMPs and minimize the scheduling makespan on UPM with sequence-dependent set-up time of 120 jobs and 10 machines.

Bitar et al. [

58] presented a memetic algorithm to deal with the large-scale of UPMSPs in semiconductor-manufacturing. The proposed method aims to minimize the flow time and maximize the number of processed products. Moreover, a memetic algorithm was employed to solve various scheduling problems, such as [

59,

60,

61,

62,

63]. In [

31], a minimization of the makespan of a number of

j jobs and

m machines of UPMSPs is considered. A hybridization of two methods, namely truncated branch-and-bound and TS, is proposed and compared to some of the metaheuristic algorithms from the exited approaches. The authors of [

64] addressed UPMSPs by employing the power-down scheme in order to minimize total tardiness and total energy consumption. A mathematical model is formulated, then, Ant Optimization algorithm is proposed with Apparent Tardiness Cost (ATC) heuristic rule.

Pacheco et al. [

65] presented a metaheuristic algorithm, called Variable Neighborhood Search, to solve the problems of UPMS. They addressed the problem of job scheduling in one machine and regarded two problems, called sequence-dependent setup times and preventive maintenance. Li et al. [

66] suggested a polynomial-time approximation algorithm to solve UPMSPs of green manufacturing companies by including the sum of energy cost. In [

67], the authors presented a TS algorithm to solve parallel machines scheduling problems. They developed two mathematical models for the problems of a number of parallel machines scheduling of operations that operated together and to minimize the makespan. In [

68], an efficient mathematical model and a number of heuristic algorithms are presented in order to minimize the energy consumption and the tardiness of the unrelated parallel machine scheduling problems.

Fanjul-Peyro et al. [

69] addressed UPMSPs and presents two models called a mathematical programming and a mixed integer linear program algorithm. The proposed models reached optimal solutions for UPMSPs of 400 jobs and four machines. Wang el al. [

70] addressed the large-scale UPMSPs and presented a solution model by applying the TS algorithm components that embedded with multiple-jump strategy. This model solves the on-preemptive scheduling problems and reached the local optimal solution. In [

33], the authors presented a hybrid GA and PSO method, called HPSOGA, to address the UPMSPs. They used the Taguchi method to determine and calibrate the optimal parameters. The proposed method outperformed previous solution methods. Ding et al. [

71] combined mixed integer linear programming (MILP) with a column generation heuristic to address the UPMSPs under the time-of-use pricing. The proposed scheme was used in order to minimize the total electricity cost with a bounded makespan restriction.

Wu et al. [

72] presented an effective method to minimize and reduce the energy consumption and the makespan to resolve unrelated parallel machine scheduling problems. They proposed a memetic differential evolution algorithm. Additionally, they used a meta-Lamarckian learning strategy to enhance their method, which, when compared to two existed methods, demonstrated better performance. Bektur and Tugba [

72] considered UPMS problems with a common server. They presented a MILP scheme to solve UPMSPs. More so, they proposed both SA and TS to address the problem. Arroyo et al. [

73] proposed an IG algorithm and a MILP model for unrelated parallel machine scheduling. The proposed solution model had been tested on different size of benchmark and compared to some of the existed models, such as ACO, SA, and discrete differential evolution. The IG algorithm outperformed the other three methods in all test benchmarks.

4. Proposed Method

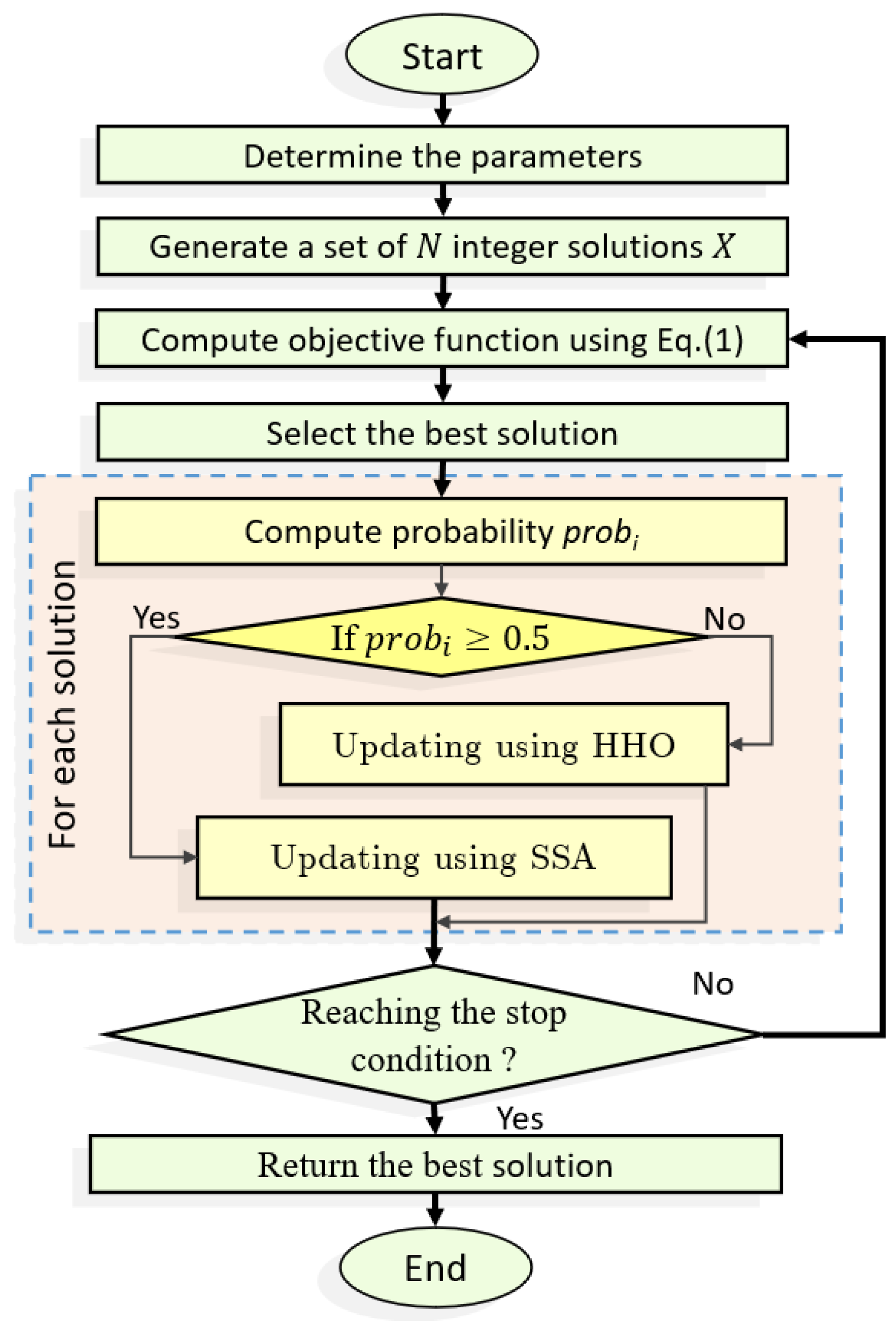

The proposed method, called Modified HHO (MHHO), to solve UPMSPs with setup times is presented in this section. It applies the SSA as a local search for the HHO algorithm, as shown in

Figure 1.

The MHHO algorithm begins by creating random integer numbers to represent the initial solution of UPMSPs solutions (X), whereas the solution includes a set of individuals (N) for a set of n jobs that listed to be processed over m machines. The initial makespan value is calculated for each individual by the fitness function and the best solution is selected based on the smallest fitness value.

Subsequently, the solution is updated using either the HHO or the SSA based on a probability. This probability is computed while using the fitness value for each solution. These steps are repeated until the stop condition is met. Here, it is set to the max number of fitness evaluations, which is set to 100,000.

The description of the initial and updating solutions are explained as follows.

4.1. Solution Representations

The UPMSP solution contains dimension with values in the range (i.e., ). For example, if there are three machines and five jobs, the representation of one individual can be like this: , which means the first and third jobs will be scheduled on machine number 2, then the second and fourth jobs will be scheduled on machine number 1, etc.

Thereafter, the sequence of the jobs for each machine is determined. For example, if there is a

T matrix with

n jobs and

m machines, the

indicates the sequence-dependent setup times that are needed to set up for

j-th job after

i-th job on the

m-th machine.

Subsequently, for machine number 1, the sequence-dependent setup times are represented by the first row as following (5, 2, 6, 4, and 13) jobs. The other rows represent the jobs for the remaining machines. Zero values in the machine’s row indicate no jobs will be processed by this machine. In this study, the makespan value is determined using the Adjusted Processing Times Matrix

[

30] to linear combine the previous

P and

S. It is formulated as in the following equation:

where

S is

and represents the processing times.

P is

and represents the setup times for each machine. Therefore, this matrix represents the initial solution for the optimization process.

4.2. Updating Stage

The MHHO evaluates the makespan for each solution (

) based on the fitness value for each one, as in Equation (

1). The smallest makespan

determines the best solution. After that, the MHHO updates the solution by switching between HHO and SSA algorithms based on a computed probability (

), as in Equation (

3).

where

is the current fitness. If

, then the individual

is updated by HHO, or else the SSA is used. These steps are repeated until meeting the stop condition. Finally, the best scheduling based on the minimum

value is outputted.

5. Experiments and Results

A set of the UPMSP benchmark dataset is used to assess the performance of the MHHO. In addition, its results are compared with the traditional HHO and SSA methods, and state-of-the-art methods with different numbers of machines and job sequences.

5.1. Dataset Description

In general, this UPMSP dataset has different number of instance problems, and each of them has its processing time and setup time. In addition, these instances are randomly constructed according to the discrete uniform distribution from U.

In this study, the number of machines is set to 2, 4, 6, 8, 10, and 12. While, the number of jobs are 6, 7, 8, 9, 10, 11, 20, 40, 60, 80, 100, and 120. Whereas, the jobs of is classified into two groups, the small group which contains 6, 7, 8, 9, 10, and 11 jobs. The second group (i.e., large group) contains 20, 40, 60, 80, 100, and 120 jobs. The dataset used in this paper is publicly available in the following website

http://www.schedulingresearch.com.

For each problem, the algorithm is performed 30 times to enable fair comparison and further statistical analysis of the results.

5.2. Performance Measure

In this section, the metrics used to evaluate the performance of the algorithm are discussed. These metrics, including the relative percentage deviation and improvement ratio measure and the definition of each of them, is given, as follows:

Relative percentage deviation (

): It is defined in Equation (

4) to compare the results of all methods.

where

represents the average of makespan.

is the lower boundary, which represents the average of the processing time for each job and it is defined in Equation (

5).

where

and

are the minimum and maximum time of processing, respectively, for each job among the machines and they are defined as:

where

is the adjusted processing time,

and

are the numbers of machines and jobs, respectively.

Improvement ratio (

): It measures the ratio of enhancement of the proposed algorithm against the others and it is defined as:

where

is the average of the makespan values of the compared method.

5.3. Parameter Settings

The proposed MHHO is compared with the traditional HHO and SSA and

Table 1 shows the parameters for each algorithm. These parameters were tuned and selected experimentally.

The experiments were performed using MATLAB R2014b (MathWorks Inc., Natick, MA, USA) run over a computer with CPU Core i7 and 8 GB RAM working with Windows 10 (64 bit). The average of of different 15 instances was computed for each job in each problem. The common parameter, such as the maximum number of fitness evaluations, is set to 100,000 for all algorithms, whereas the population size depends on the problem size.

5.4. Comparison with Basic HHO and SSA

In this experiment, we compare the performance of the proposed MHHO with the traditional SSA and HHO algorithm, as given in

Table 2. It can be noticed from this table that the high performance of the proposed MHHO according to different metrics. For example, the proposed MHHO has the smallest

among all of the tested instances (either in small or large datasets). Moreover, it can be observed the high improvement of the MHHO than two SSA, and HHO with average overall instances nearly 36.890 % and 21.611%, respectively.

Figure 2 depicts the average of the improvement along with each machine and job. Note that high improvement of MHHO over SSA is achieved especially at the number of jobs 2, 10, and 12, whereas, at the machines 40 then 100. By comparing the results of HHO and MHHO, it can be noticed the high difference in the improvement is achieved at the number of jobs 2, while, when the number of machines becomes high, like 100 and 120, the proposed MHHO outperforms HHO with high improvements.

According to the value of , note that the proposed MHHO has the smallest value among all of the tested instances, followed by the SSA, then the HHO. This indicates the high stability of the proposed MHHO algorithm.

In terms of CPU time, the traditional SSA algorithm is the faster algorithm, followed by the MHHO; however, HHO takes a long time until it reached the stop conditions. The main reason that MHHO takes that time results from the operator of traditional HHO that needs more CPU time.

Figure 3 depicts the CPU time that is required by the proposed MHHO and the other two methods among each tested number of machines. From these results, it can be observed that HHO consumes more time than the other two methods, while the faster algorithm is SSA, followed by MHHO. The mean reason for the longer time of MHHO results is because of the drawbacks of the traditional HHO that depends on more operators.

5.5. Results of over Small-Size Problems

In this experiment, we compare the results of

of MHHO with other methods using small-size problems as in

Table 3. These methods including the traditional HHO, and SSA, SA [

75], T9 [

74], T8 [

74]. From these results, it can be observed that the MHHO outperforms other methods, especially the SA, T9, and T8. However, by comparing the proposed MHHO with SSA, it can be noticed the high performance of the MHHO overall the small-size problems except at machine 2 and job 9, there are small improvements. Additionally, by comparing the proposed MHHO with HHO, the MHHO stills provides better results than the HHO among all the tested problems.

5.6. Comparison with Other MH Methods Using Large-Size Problems

We compare the performance of MHHO with a set of method, including invasive weeds optimization (IWO) [

32], Artificial Bee Colony (ABC) [

77], metaheuristic for randomized priority search (Meta-RaPS) [

32], improved firefly (IFA) [

32], Hybrid ABC (HABC) [

77], Tabu Search (TS) [

32], RSA [

78], partition heuristic (PH) [

32], Genetic Algorithm (GA) [

32], Ant Colony Optimization (ACO) [

32], extended ACO (ACOII) [

32], GADP [

55], basic Simulated Annealing (SA) [

78], FA [

32], SOS with the longest processing time first (LPT) rule (SOS-LPT) [

79], symbiotic organisms search (SOS) [

79], HSOSSA [

30], ESA [

30], and ESOS [

30].

The comparison is performed based on the value of

and

as given in

Table 4 and

Table 5.

Table 6 lists the parameter settings for these algorithms.

From

Table 4, one can observe that MHHO has the best

values at 28 instances from 36 instances (as in boldface), followed by HSOSSA, which achieves the best

value, at eight instances.

Moreover,

Table 5 shows the performance of the algorithms in terms of

. Note that MHHO has high improvements over the other approaches in most of the tested instances except at some jobs. For example, in machine 2 and job 20, the HSOSSA provides better results than MHHO. The same observation is noticed at machine 4 and jobs 20 and jobs 40. While, at the machine 8 and job 40, it can be seen that HSOSSA and ESOS have better value than MHHO. As well as, the FA, ESA, and ESOS provide better results than MHHO at machine 10 and job 20, in addition, at machine 10 and job 40, the HSOSSA and ESOS are better than MHHO. Finally, at the machine 20 and job 20, the performance of the MHHO is less than FAm, SOS-LPT, and HSOSSA.

Because there are several state-of-the-art methods that have been developed but used different measures to assess their performance. Accordingly, we compared our proposed MHHO with those methods at machines 2, 6, and 12 with jobs 20, 40, 60, and 80, the results are given in

Table 7, which uses the minimum, average, and maximum of the

. From this table, it can be concluded that the proposed MHHO has better performance than others in terms of average (Avg.) overall the instances, except at the machine 12 and job 20, where the ESOS is better. In addition, in terms of the best

value, the proposed MHHO provides the smallest value overall the instances and jobs followed by FA. Moreover, it can be seen that the

value for the proposed MHHO is better than the

for others.

6. Conclusions

We presented a new hybrid meta-heuristic approach to address unrelated parallel machine scheduling problems (UPMSPs) with sequence-dependent setup times. The proposed MHHO approach is a hybrid of Harris Hawks optimizer (HHO) and the salp swarm algorithm (SSA). MHHO allows enhancing the performance of HHO based on SSA, and also avoid premature convergence. Therefore, the SSA is applied as a local operator to improve the performance of HHO to find optimal solutions and avoid local optima. Moreover, we considered the minimization of makespan as a performance evaluation of the proposed approach. The proposed MHHO had been evaluated while using a set of instances, which contains small and large job sizes over six machines during a series of experiments. Furthermore, to approve the superiority of the MHHO, we compared MHHO with the original SSA, HHO, and other well-known methods that had been applied to solve UPMSPs. The comparison results showed that MHHO outperforms both SSA and HHO. Additionally, MHHO outperforms other methods, additionally, it showed better convergence to the optimal solution.

For future work, the MHHO could be employed in various optimization applications, such as data clustering, cloud computing scheduling, image processing, forecasting applications, and others.